Abstract

The research aimed to examine the factors affecting educators’ acceptance and use of artificial intelligence (AI) software based on the Unified Theory of Acceptance and Use of Technology 2 (UTAUT2). Research data were collected from 280 educators (teachers and education faculty lecturers) working at schools or universities across seven regions of Türkiye. The data were gathered using a self-report questionnaire comprising demographic questions and the Artificial Intelligence Acceptance Scale for Education (AIASE), which was developed within the scope of this study to determine educators’ acceptance of AI technology. In the scale, the performance expectancy (PE) subscale included seven items, while the effort expectancy (EE), social influence (SI), facilitating conditions (FC), hedonic motivation (HM), and habit (H) subscales each comprised five items. The price value (PV) subscale contained three items, and the behavioral intention (BI) subscale six items. The developed scale proved to be a valid and reliable instrument for educators. According to the goodness-of-fit values calculated in the structural equation modeling (SEM) analysis, the research model showed an adequate fit. The results showed that PE, HM, and H were significant determinants of educators’ BI to use AI software, whereas only H was a significant determinant of use behavior (UB). Together, the model variables explained 68% of the variance in educators’ BI and 34% of the variance in UB. Accordingly, incentives and practices that reinforce HM, PE, and H, the factors found to drive AI acceptance, should be implemented.

Plain Language Summary

This study explored the factors affecting teachers’ and university lecturers’ acceptance and use of artificial intelligence (AI) software in education in Türkiye. A total of 280 educators participated. To measure acceptance, a new questionnaire was developed including several key factors: performance expectancy (PE): how much educators believe using AI will improve their teaching performance; effort expectancy (EE): how easy it is for educators to use AI for teaching; social influence (SI): how much educators feel that important people (e.g., colleagues) expect them to use AI; facilitating conditions (FC): availability of support and resources to help educators use AI; hedonic motivation (HM): the fun or enjoyment educators experience when using AI; habit (H): the degree to which educators automatically use AI in teaching; price value (PV): educators’ assessment of the benefits of AI compared to its cost; behavioral intention (BI): educators’ intention or willingness to use AI; use behavior (UB): actual use of AI in teaching. The results showed that PE, HM, and H were important factors influencing educators’ intention to use AI, while only H (Habit) significantly affected actual use. Together, these factors explained 68% of educators’ intention to use AI and 34% of their actual use. These findings suggest that schools and universities can promote AI adoption by making AI tools useful, enjoyable, and easy to integrate into daily teaching, and by encouraging routines that support regular use. Understanding these factors can help educators adopt AI more effectively in their teaching.

Introduction

Artificial intelligence emerged in the 20th century as the forerunner of all innovative technologies with the potential to revolutionize our lives (Bhagat et al., 2023; Hinojo-Lucena et al., 2019). Its human-like ability to solve complex problems in every field, including education, has had a profound impact worldwide (Bhagat et al., 2023; Duong et al., 2023). AI-enabled technologies are believed to have the potential to bring about a significant paradigm shift in education (Hidayat-ur-Rehman & Ibrahim, 2023). AI offers instructors new possibilities to enhance their instruction by assisting them in their roles as facilitators and evaluators (Al Darayseh, 2023). AI tools such as ChatGPT enable students to acquire the skills required by the competitive business world of the 21st century (Adarkwah et al., 2023).

The efficient use of AI in education brings new responsibilities to educators (Ayanwale et al., 2022; Bower et al., 2024; Sanusi et al., 2024; Tan et al., 2025; Zhai et al., 2021). Although educators are expected to review and improve their AI capabilities (Zhai et al., 2021), many are either unaware of the scope and components of AI technology (Hinojo-Lucena et al., 2019) or are only beginning to explore the pedagogical opportunities of AI software (Zawacki-Richter et al., 2019). Indeed, research has shown that the majority of educators do not incorporate AI-based tools into their teaching (Adarkwah et al., 2023; Alwaqdani, 2025; Cheah et al., 2025; Galindo-Domínguez et al., 2024; Iqbal et al., 2022) and that they are quite concerned about how AI will affect their work (Alwaqdani, 2025; Zhai et al., 2021). This may be because they find it difficult to integrate AI into their courses (Ayanwale et al., 2022), hold negative perceptions about implementing AI in their courses (Iqbal et al., 2022), and lack awareness, experience, conceptual understanding (Adarkwah et al., 2023), and knowledge (Alwaqdani, 2025). Teachers also experience difficulties in adopting AI technology owing to resistance to change, lack of resources, and limited training (Darmawan et al., 2024). However, users’ willingness to accept these advancements is as important to the success of AI-supported educational systems as the technological advancements themselves (Algerafi et al., 2023). According to Yao and Abd Halim (2023), teachers’ actual use of AI technology is directly affected by their willingness to accept AI. Guo et al. (2025) pointed out that teachers’ reluctance to adopt AI technology will prevent it from realizing its potential in learning and teaching. 
It is therefore important to investigate the characteristics that may encourage or discourage the adoption of AI tools (Venkatesh, 2022). As AI is integrated into education, it is necessary to examine teachers’ level of acceptance of AI and the internal and external factors that influence it (An et al., 2023). By investigating the variables that affect educators’ acceptance of AI, this study aims to help identify the factors that facilitate the successful application of AI technology in education.

Theoretical Framework

Artificial Intelligence Technology

In 1956, John McCarthy, Claude Shannon, Nathaniel Rochester, and Marvin Minsky worked to define and advance artificial intelligence technology, as part of the Dartmouth Summer Research Project (McCarthy et al., 2006). Therefore, the 1956 Dartmouth project is often considered the first event that launched artificial intelligence as a research discipline, and McCarthy is recognized as the inventor of the term “artificial intelligence” (Moor, 2006).

Since 1956, advances in mathematics, chemistry, biology, linguistics, and artificial intelligence solutions have influenced a number of theoretical definitions of artificial intelligence (Popenici & Kerr, 2017). Kaplan and Haenlein (2019) define AI as “a system’s ability to interpret external data correctly, to learn from such data, and to use those learnings to achieve specific goals and tasks through flexible adaptation” (p. 17). Tredinnick (2017) defined AI as “a general term that currently refers to a cluster of technologies and approaches to computing focussed on the ability of computers to make flexible rational decisions in response to often unpredictable environmental conditions” (p. 37). Drawing on earlier definitions in the literature, Oladapo and Gbadamosi (2018) defined AI, often referred to as machine intelligence, “as any devise, machine, computer, computer controlled robot, system or software that can reason, decide and perform specific task in a manner that is similar to human brains” (p. 113).

A notable advancement in artificial intelligence is the development of Chat Generative Pre-Trained Transformer (ChatGPT), a system that can produce text resembling human language and converse with people (Habibi et al., 2023). The AI-driven chatbot, ChatGPT, can serve as a digital assistant that can provide explanations, answer questions and develop learning materials (Foroughi et al., 2023). One of the most important application areas of AI tools like ChatGPT is education, and such innovative technologies have the potential to transform education (Adarkwah et al., 2023; Al Darayseh, 2023).

Artificial Intelligence Technology in Education

The wide range of features and functionalities of artificial intelligence technology has attracted the attention of researchers and practitioners in the field of education (Niu et al., 2024; K. Zhang & Aslan, 2021). Many educators see AI technology (such as ChatGPT) as an opportunity to shape the future of education (Ahmad et al., 2022; Foroughi et al., 2023). AI helps teachers transfer knowledge more effectively, reduces their workload by handling time-consuming routine tasks, and frees up time for them to give students quick feedback (Ahmad et al., 2022; Alhwaiti, 2023; Habibi et al., 2023). Beyond delivering ready-made and tangible experiences, AI tools can assess and interact with users, thus delivering rich and complex forms of output and experience (Bazelais et al., 2024). AI technologies such as ChatGPT can be used to develop virtual tutors and create virtual educational environments, enabling students to learn anytime and anywhere and increasing the flexibility and convenience of learning (Yu, 2023). AI can also provide enriched learning environments, create adaptive learning materials, and offer metacognitive guidance to students (K. Zhang & Aslan, 2021).

AI systems can tailor learning content to each student’s needs (Ahmad et al., 2022), learning abilities, and interests (Yu, 2023). In this way, AI applications support personalized learning (Alhwaiti, 2023; Habibi et al., 2023; Niu et al., 2024). AI technologies can also give students the opportunity to explore and access information without limits (Algerafi et al., 2023; Habibi et al., 2023). AI technology can actively track students’ learning progress by automatically monitoring their learning performance (Niu et al., 2024), and it enables large numbers of students to be assessed rapidly and objectively (Ahmad et al., 2022).

Despite the abovementioned benefits, it is also stated that the development of artificial intelligence will make learners more dependent on computers for information and research (Tiwari et al., 2023). It is also noted that it will bring challenges in terms of evaluation and ethical considerations (Adeshola & Adepoju, 2023; Firat, 2023). There are also current challenges such as cost, lack of AI expertise among trainers, and lack of guidelines for trainers (K. Zhang & Aslan, 2021).

UTAUT 2

UTAUT was proposed by Venkatesh et al. (2003) through the empirical validation of a synthesis of eight prominent technology acceptance models: the theory of planned behavior, the social cognitive theory, the innovation diffusion theory, the technology acceptance model, the motivational model, the theory of reasoned action, the model of PC utilization, and a model combining the technology acceptance model and the theory of planned behavior. In UTAUT, the variables that directly affect use behavior are behavioral intention and facilitating conditions, while the exogenous variables affecting behavioral intention are performance expectancy, effort expectancy, and social influence (Venkatesh et al., 2003). Although the UTAUT model adequately explains technology acceptance in organizational settings, Venkatesh et al. (2012) extended and revised it as UTAUT2 to explain consumers’ technology acceptance. UTAUT2 added hedonic motivation, habit, and price value to the exogenous variables, together with a direct path from facilitating conditions to behavioral intention (Venkatesh et al., 2012). In addition, UTAUT2 proposed that users’ individual characteristics, such as age, experience, and gender, moderate some of the relationships between the exogenous and endogenous variables (Venkatesh et al., 2012). The UTAUT2 model yielded strong results, explaining 74% of the variance in consumers’ BI to use a technology and 52% of the variance in their UB (Venkatesh et al., 2012, 2016).

Previous research demonstrates that the UTAUT2 model offers a theoretically consistent and empirically supported framework for explaining the adoption of AI and other digital technologies across different disciplines. Studies in a wide range of fields, such as business (Foroughi et al., 2025; Mohamad et al., 2026), telecommunications (Usiña-Báscones et al., 2025), economics (Sadiq et al., 2025), healthcare (Naik et al., 2025), management (Bagnato et al., 2025), design and creativity studies (Wang & Zhang, 2023), and social sciences (Gansser & Reich, 2021), provide strong empirical evidence for the interdisciplinary validity of UTAUT2, particularly for the adoption of AI technologies. As one of the most commonly used theories for explaining the adoption of various technologies (Venkatesh, 2022), the UTAUT model has also been frequently used to study AI adoption in education, reflecting researchers’ and educators’ interest in AI technology (e.g., An et al., 2023; Chatterjee & Bhattacharjee, 2020; Foroughi et al., 2023; Guggemos et al., 2020). Therefore, the current study draws on the conceptual foundation of UTAUT2, empirically validated across diverse disciplines, and applies it to explaining the determinants of AI adoption in the educational context.

Current Study and Research Hypotheses

UTAUT is one of the most frequently applied models for assessing behavioral intentions in AI acceptance (Kelly et al., 2023). The relevant literature includes UTAUT studies (e.g., Duong et al., 2023; Guggemos et al., 2020; Strzelecki & ElArabawy, 2024) and UTAUT2 studies (e.g., Foroughi et al., 2023; Habibi et al., 2023; Strzelecki, 2024) investigating university students’ acceptance of AI technology in education. However, educators’ behavioral intentions to use AI software and the factors affecting their use behavior have not been sufficiently investigated. Only a limited number of studies have examined educators’ acceptance of AI technology within the framework of UTAUT models, and almost all of them (An et al., 2023; Chatterjee & Bhattacharjee, 2020; Hidayat-ur-Rehman & Ibrahim, 2023; Yao & Abd Halim, 2023; X. Zhang & Wareewanich, 2024) were conducted in the context of the original UTAUT model. Although a few studies have investigated educators’ views on the use of AI within the UTAUT2 framework (Bissessar, 2023; Bower et al., 2024), no structural equation modeling study has examined the factors influencing educators’ use of AI applications in their courses within the UTAUT2 framework. In the current study, the factors affecting educators’ behavioral intentions to use AI software in their courses and their use behavior were examined within the framework of UTAUT2 (Venkatesh et al., 2012). To contextualize the study within the UTAUT2 framework, the key constructs of the model are briefly outlined below.

Performance expectancy refers to the degree to which an educator believes that using AI software will improve his/her teaching performance (Venkatesh et al., 2003). Effort expectancy is the degree of ease associated with an educator’s use of AI software for teaching purposes (Venkatesh et al., 2003). Social influence is the degree to which an educator perceives that important others (e.g., colleagues, school principals) believe he/she should use AI software for teaching purposes (Venkatesh et al., 2003). Facilitating conditions refer to the degree to which an educator believes that an organizational and technical infrastructure exists to support the use of AI software for teaching purposes (Venkatesh et al., 2003). Hedonic motivation is the fun or pleasure an educator derives from using AI software for teaching purposes (Venkatesh et al., 2012). Price value is an educator’s cognitive tradeoff between the perceived benefits of AI software and the monetary cost of using it (Dodds et al., 1991; Venkatesh et al., 2012). Habit is the extent to which an educator tends to use AI software automatically for teaching purposes (Limayem et al., 2007; Venkatesh et al., 2012). Behavioral intention is an educator’s intention to use AI software for teaching purposes (Ajzen, 1991; Venkatesh et al., 2003, 2012).

From an AI pedagogy perspective, the UTAUT2 constructs operate as an interconnected set of perceptions that collectively guide educators’ adoption of AI tools in teaching–learning processes. Educators’ judgments about whether AI can enhance instructional quality, support content development, increase interactivity, and improve feedback mechanisms (PE) draw on evidence showing AI’s contribution to pedagogical effectiveness (An et al., 2023; Chatterjee & Bhattacharjee, 2020; Ezekiel & Akinyemi, 2022; Rahiman & Kodikal, 2024). Simultaneously, their evaluations of how easy it is to learn, operate, and integrate AI tools (EE) shape their willingness to incorporate such technologies into routine classroom practices (An et al., 2023; Chatterjee & Bhattacharjee, 2020; Rahiman & Kodikal, 2024; Suzianti et al., 2023). Adoption tendencies are also structured by perceived professional expectations and the influence of colleagues or institutional actors (SI), which have been shown to guide educators’ technology uptake (An et al., 2023; Suzianti et al., 2023). Moreover, educators’ perceptions of infrastructural readiness, technical assistance, and organizational support (FC) determine the practical feasibility of using AI tools within educational settings (An et al., 2023; Chatterjee & Bhattacharjee, 2020; Strzelecki, 2023; Suzianti et al., 2023). Affective responses such as enjoyment and intrinsic motivation (HM) further reinforce adoption behavior (Suzianti et al., 2023), while cost–benefit evaluations regarding AI accessibility (PV) influence acceptance decisions (Strzelecki, 2023). Over time, repeated interaction with AI tools fosters habitual and automatic use patterns (H), which shape both future intention and actual practice (Strzelecki, 2023; Suzianti et al., 2023). 
Finally, educators’ planned, intentional, and future-oriented decisions to integrate AI into instruction (BI) act as a central driver of future engagement (An et al., 2023; Ayanwale et al., 2022; Chatterjee & Bhattacharjee, 2020; Hidayat-ur-Rehman & Ibrahim, 2023; Strzelecki, 2023).

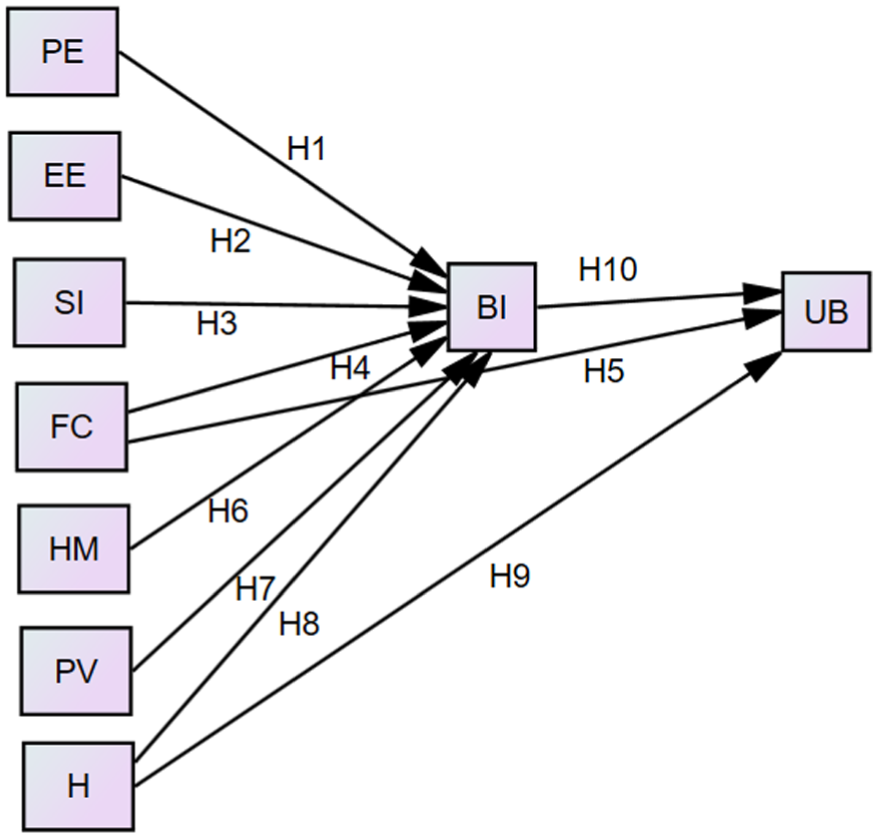

The variables described above form an interconnected whole within the UTAUT2 framework. In the model, PE, EE, SI, FC, HM, H, and PV collectively shape educators’ behavioral intention toward AI use, and BI functions as the primary determinant of actual usage behavior. In addition, FC and H directly influence UB, underscoring the combined impact of environmental support and routinized technology use on adoption processes (Venkatesh et al., 2012). Taken together, UTAUT2 provides a comprehensive theoretical framework explaining educators’ adoption of AI tools through the holistic interaction of cognitive, affective, social, and structural factors, rather than the isolated effects of individual constructs.

Empirical studies conducted across various educational contexts consistently support the relationships posited in UTAUT2. PE has been found to positively influence BI (Hypothesis 1), as individuals who perceive AI technologies as enhancing instructional quality, interactivity, and content preparation tend to show higher intention to adopt them (An et al., 2023; Bazelais et al., 2024; Duong et al., 2023; Foroughi et al., 2023; Guggemos et al., 2020; Habibi et al., 2023; Strzelecki, 2023; Strzelecki & ElArabawy, 2024; Yao & Abd Halim, 2023). Similarly, EE exerts a positive effect on BI (Hypothesis 2), as ease of learning and integrating AI tools encourages teachers, students, and other educational stakeholders to use them (Al Darayseh, 2023; Foroughi et al., 2023; Guggemos et al., 2020; Hidayat-ur-Rehman & Ibrahim, 2023; Strzelecki, 2023). Social dynamics also play a key role: SI strengthens BI (Hypothesis 3), reflecting the influence of colleagues, administrators, and peers in encouraging AI adoption across educational settings (An et al., 2023; Guggemos et al., 2020; Habibi et al., 2023; Strzelecki, 2023; Strzelecki & ElArabawy, 2024; Yao & Abd Halim, 2023). Furthermore, FC influences both BI (Hypothesis 4) (Chatterjee & Bhattacharjee, 2020; Habibi et al., 2023) and UB (Hypothesis 5) (Habibi et al., 2023; Strzelecki, 2023; Strzelecki & ElArabawy, 2024), emphasizing that organizational support, adequate infrastructure, and technical assistance are essential for effective AI integration. Affective and experiential factors further reinforce technology adoption. HM positively influences BI (Hypothesis 6), as the enjoyment derived from AI use motivates individuals to incorporate such tools into educational activities (Foroughi et al., 2023; Habibi et al., 2023; Strzelecki, 2023). 
PV also shapes BI (Hypothesis 7): studies conducted within the UTAUT2 framework with university students (Strzelecki, 2023), adopters (Cabrera-Sánchez et al., 2021), and general users (García de Blanes Sebastián et al., 2022) revealed that PV affects BI in individuals’ acceptance of AI. Habit further reinforces technology acceptance by positively affecting both BI (Hypothesis 8) and UB (Hypothesis 9), showing that routine use and familiarity promote the translation of intention into actual AI-supported practices (Habibi et al., 2023; Strzelecki, 2023). Finally, consistent with core technology acceptance theories, BI strongly predicts UB (Hypothesis 10) across educational settings (Bazelais et al., 2024; Habibi et al., 2023; Strzelecki, 2023; Strzelecki & ElArabawy, 2024).
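For reference, Hypotheses 1 to 10 can be summarized as two structural equations among the latent constructs. This is a sketch: the coefficient labels β_k (with k matching the hypothesis number) and the disturbance terms ζ are notation introduced here rather than taken from the original model specification, and no moderators are included, consistent with the decision not to test moderation.

```latex
\begin{aligned}
\mathrm{BI} &= \beta_{1}\,\mathrm{PE} + \beta_{2}\,\mathrm{EE} + \beta_{3}\,\mathrm{SI} + \beta_{4}\,\mathrm{FC}
             + \beta_{6}\,\mathrm{HM} + \beta_{7}\,\mathrm{PV} + \beta_{8}\,\mathrm{H} + \zeta_{\mathrm{BI}} \\
\mathrm{UB} &= \beta_{5}\,\mathrm{FC} + \beta_{9}\,\mathrm{H} + \beta_{10}\,\mathrm{BI} + \zeta_{\mathrm{UB}} \\
&\text{Hypothesis } k:\quad \beta_{k} > 0, \qquad k = 1, \dots, 10
\end{aligned}
```

Under this notation, the indirect effect of a BI predictor on UB, for example PE → BI → UB, is the product β1β10, which is the kind of quantity whose significance a bootstrapping procedure evaluates.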

Together, the definitions of the UTAUT2 components adapted to educators’ acceptance of AI technology and the previous findings on the effects of these components on BI and UB, presented above, form the basis for each of the research hypotheses.

Since previous studies on AI acceptance based on UTAUT models have not found significant moderating effects of experience, gender, and age (e.g., Bazelais et al., 2024; Cabrera-Sánchez et al., 2021; Strzelecki, 2023), moderator analysis was not conducted in this study.

Method

Participants and Data Collection

Participants of the study were 280 teachers and education faculty lecturers (age: M = 36.83, SD = 8.23, range = 23–64) working in Türkiye during the 2023–2024 academic year (see Table 1). Prior to data collection, the necessary permissions were obtained from local authorities and the study was approved by the ethics committee. Participants were selected using snowball sampling. The researchers first contacted educators (teachers and teaching staff working in education faculties) within their professional and personal networks via email, social media (Instagram), and WhatsApp. Participants were clearly informed of the study’s purpose and scope and that participation was voluntary, and they were provided with a Google Form survey link. The initial participants were asked to refer other individuals who met the research criteria (teachers and university lecturers working in education faculties) to the study. Through this process, a total of 280 educators were reached. Although snowball sampling may limit the representativeness of the sample, the participants were diverse, including educators from different educational institutions and disciplines, which provided valuable insights into the acceptance of artificial intelligence software.

Table 1. Descriptive Information About the Participants.

Instruments

The data were collected using an online questionnaire (Google Forms) during the spring semester of the 2023–2024 academic year. In the first part of the questionnaire, participants were informed about the purpose and scope of the research and the confidentiality of the information to be collected. This part also explained how artificial intelligence software can be used for different purposes in education, with reference to example programs such as ChatGPT. In the second part, participants were asked about their gender, age, province, professional seniority, the level at which they teach (preschool, primary school, secondary school, high school, higher education), main teaching branch/field of expertise, and frequency of using artificial intelligence in their lessons (never, rarely, sometimes, usually, always). The third part of the instrument consisted of the Artificial Intelligence Acceptance Scale for Education (AIASE).

Artificial Intelligence Acceptance Scale for Education

AIASE was developed to measure educators’ acceptance of artificial intelligence software. The scale was based on the UTAUT2 theoretical framework (Venkatesh et al., 2012). Before the scale items were written, the theoretical frameworks of UTAUT (Venkatesh et al., 2003) and UTAUT2 (Venkatesh et al., 2012) were examined in detail. In line with the UTAUT2 framework, the scale was planned to include PE, EE, SI, FC, HM, H, PV, and BI subscales. Next, UTAUT-based scales of artificial intelligence acceptance in the literature were reviewed (An et al., 2023; Ayanwale et al., 2022; Chatterjee & Bhattacharjee, 2020; Ezekiel & Akinyemi, 2022; Hidayat-ur-Rehman & Ibrahim, 2023; Rahiman & Kodikal, 2024; Suzianti et al., 2023). Based on this review, the researchers created an original item pool reflecting the theoretical and pedagogical dimensions of each UTAUT2 construct in the educational context, and a 41-item draft scale was constructed. The number of items in each subscale was chosen to adequately capture the pedagogical and behavioral dimensions of the corresponding factor in an educational context. Responses were recorded on a five-point scale ranging from 1 (strongly disagree) to 5 (strongly agree).

Below, the operational definitions and measurement of the subscales used in the study are presented.

Performance expectancy: A 7-item scale was prepared to measure educators’ belief that they can improve their lesson planning, teaching, and evaluation processes by using artificial intelligence software; prepare course materials more effectively; better monitor their students’ learning and development processes; and positively support their professional development.

Sample Item: “Artificial intelligence software can help me plan my lessons effectively.”

Effort expectancy: A 5-item scale was prepared to measure educators’ perceptions of the ease of learning and implementing artificial intelligence software in their courses.

Sample Item: “It is easy for me to learn to use artificial intelligence software in my classes.”

Social influence: A 5-item scale was prepared to measure the perception levels of educators regarding the expectations and support of important people (e.g., colleagues, school administrators).

Sample Item: “Educators in my close circle support me in using artificial intelligence software.”

Facilitating conditions: A 5-item scale was used to measure educators’ perceptions of the existence of the technical and organizational infrastructure necessary to use artificial intelligence software in their courses.

Sample Item: “I have the necessary opportunities to use artificial intelligence software in my lessons.”

Hedonic motivation: A 5-item scale was prepared to measure the level of enjoyment and pleasure that educators receive from using artificial intelligence software in their classes.

Sample Item: “Artificial intelligence software makes my lessons more enjoyable.”

Habit: A 5-item scale was prepared to measure whether educators’ use of artificial intelligence software in their classes has become routine.

Sample Item: “It is a habit for me to use artificial intelligence software in my lessons.”

Price value: A 3-item scale was prepared to measure educators’ evaluation of the appropriateness of the cost of using artificial intelligence software in their classes.

Sample Item: “The cost of the technological tools (camera, computer, smartphone, tablet, etc.) required to use artificial intelligence technology in my lessons is reasonable.”

Behavioral intention: A 6-item scale was prepared to measure educators’ intentions to use artificial intelligence software in their classes.

Sample Item: “I plan to increase my use of artificial intelligence technology in my classes in the future.”
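The subscale structure described above can be encoded as a small configuration, which also makes the item total easy to check (7 + 5 × 5 + 3 + 6 = 41). This is a sketch only: the item codes (e.g., `PE_1`) are hypothetical labels, and scoring each subscale as the mean of its items is an assumed convention; the article does not state how subscale scores were computed outside the SEM analysis.

```python
from statistics import mean

# Items per AIASE subscale, as described in the text.
SUBSCALE_SIZES = {
    "PE": 7,  # performance expectancy
    "EE": 5,  # effort expectancy
    "SI": 5,  # social influence
    "FC": 5,  # facilitating conditions
    "HM": 5,  # hedonic motivation
    "H": 5,   # habit
    "PV": 3,  # price value
    "BI": 6,  # behavioral intention
}

# Hypothetical item codes such as "PE_1" ... "PE_7" (not the published labels).
ITEMS = {name: [f"{name}_{i}" for i in range(1, k + 1)]
         for name, k in SUBSCALE_SIZES.items()}


def subscale_means(responses: dict[str, int]) -> dict[str, float]:
    """Average one respondent's 1-5 answers within each subscale (assumed scoring rule)."""
    for item, value in responses.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{item}: response {value} is outside the 1-5 range")
    return {name: mean(responses[item] for item in items)
            for name, items in ITEMS.items()}
```

A respondent who answers 4 to every item would receive a mean of 4 on all eight subscales; a missing item raises a `KeyError` rather than being silently skipped.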

To evaluate content validity, expert opinions were solicited from four educators specializing in educational technology. These experts reviewed the 41-item draft, assessing each item’s appropriateness for its subdimension, its clarity, and its relevance to the target participants. Using Davis’s (1992) technique, all items achieved a content validity index (CVI) of 1.0. Based on the experts’ suggestions, minor wording adjustments were made to three items; no items were removed. Two additional educators, who did not take part in the study as participants, then reviewed the items for clarity, comprehensibility, and suitability. Based on their feedback, minor linguistic improvements were made to increase readability, and the items were finalized. Scale items are presented in the Appendix.
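Davis’s (1992) index is usually computed per item as the proportion of experts who rate the item as relevant. The sketch below assumes a 4-point relevance scale on which ratings of 3 or 4 count as relevant; the article does not report the rating scale, so this assumption, the item codes, and the example ratings are all hypothetical. With four experts, a single rating below 3 lowers an item’s CVI to .75, so a CVI of 1.0 for every item means that all four experts judged every item relevant.

```python
def item_cvi(ratings: list[int]) -> float:
    """Item-level CVI: share of experts rating the item 3 or 4 on an assumed 4-point scale."""
    if not ratings:
        raise ValueError("at least one expert rating is required")
    if any(r not in (1, 2, 3, 4) for r in ratings):
        raise ValueError("ratings must be integers from 1 to 4")
    return sum(r >= 3 for r in ratings) / len(ratings)


# Hypothetical relevance ratings from four experts (not the study's data)
ratings = {"PE_1": [4, 4, 3, 4], "EE_1": [3, 4, 4, 4], "SI_1": [4, 2, 4, 4]}
cvis = {item: item_cvi(r) for item, r in ratings.items()}
print(cvis)  # {'PE_1': 1.0, 'EE_1': 1.0, 'SI_1': 0.75}
```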

Data Analysis

The theoretical model presented in Figure 1 was tested in two stages of structural equation modeling (SEM): testing the measurement model and testing the structural model (Kline, 2011). In the first stage, the measurement model, which specifies the relationships between responses to the AIASE items (observed variables) and the eight latent constructs of UTAUT2, was tested using confirmatory factor analysis (CFA). Because CFA tests an a priori structure, no items were eliminated, and all items were retained in accordance with the theoretical framework. Factor loadings were examined against a minimum cutoff of .50 to ensure that each item adequately represented its latent construct (Hair et al., 2014). In addition, the discriminant, convergent, and nomological validity of the instrument were examined using the CFA results and the relationships between the factors. Cronbach’s alpha and composite reliability (CR) coefficients were calculated to assess the reliability of the scale scores. In the second stage, the structural model (research model) was tested in AMOS, and the bootstrapping method was used to determine the statistical significance of indirect effects (Koopman et al., 2015). Critical values for the goodness-of-fit indices are presented in Table 2 (see Brown, 2015; Hair et al., 2014; Hu & Bentler, 1999; Tabachnick & Fidell, 2013). In addition, the susceptibility of the measurements to common method variance was examined with Harman’s single-factor test (Podsakoff et al., 2003, p. 879). The one-factor structure of the scale was not supported (χ2/df = 14.98, RMSEA = 0.224, RMR = 0.16, GFI = 0.33, AGFI = 0.26, CFI = 0.86, NFI = 0.84, IFI = 0.86, RFI = 0.84), suggesting that common method variance is not a serious problem for the measurements in this research.
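The reliability and convergent-validity quantities named above follow standard formulas: Cronbach’s alpha from item and total-score variances, and CR and average variance extracted (AVE) from standardized loadings, assuming uncorrelated measurement errors. The sketch below is illustrative rather than the authors’ code (the analysis itself was run in AMOS); the function names are hypothetical, and the Fornell–Larcker comparison is included as one common discriminant-validity criterion, not necessarily the one used in this study.

```python
import numpy as np


def cronbach_alpha(items) -> float:
    """Cronbach's alpha for one subscale; items is a respondents x k score matrix."""
    x = np.asarray(items, dtype=float)
    k = x.shape[1]
    sum_item_var = x.var(axis=0, ddof=1).sum()  # sum of the k item variances
    total_var = x.sum(axis=1).var(ddof=1)       # variance of the summed score
    return float(k / (k - 1) * (1 - sum_item_var / total_var))


def composite_reliability(loadings) -> float:
    """CR from standardized loadings, assuming uncorrelated error terms."""
    lam = np.asarray(loadings, dtype=float)
    explained = lam.sum() ** 2
    error = (1 - lam ** 2).sum()                # standardized error variances
    return float(explained / (explained + error))


def average_variance_extracted(loadings) -> float:
    """AVE: mean of the squared standardized loadings."""
    lam = np.asarray(loadings, dtype=float)
    return float((lam ** 2).mean())


def fornell_larcker_ok(ave_a: float, ave_b: float, r_ab: float) -> bool:
    """Discriminant check: sqrt(AVE) of each construct exceeds |r| between them."""
    return bool(np.sqrt(min(ave_a, ave_b)) > abs(r_ab))
```

For example, five hypothetical loadings of .70 give CR = 12.25 / 14.8 ≈ .83 and AVE = .49, which would fall just below the commonly used .50 guideline for AVE even though CR looks comfortable.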

Research model.

CFA Results for the 8-Factor Structure of the AIASE.

Results

Model Fit Measures (Measurement Model)

The relationships between the 8 latent and 41 observed variables in the measurement model derived from UTAUT2 were tested with CFA. Before the analysis, the suitability of the data set (n = 280) for CFA was examined. Because the z-values fell within ±3.29 and the skewness and kurtosis values fell within ±1.5 (kurtosis = −0.939 to 0.833; skewness = −1.084 to 0.946), univariate normality can be assumed (Tabachnick & Fidell, 2013). The data can also be considered to meet multivariate normality, since the Mardia kurtosis value of 357.825 is below the critical threshold of 1,763 (p[p + 2] = 41 × 43) (Raykov & Marcoulides, 2008). Furthermore, most Pearson correlations in the item correlation matrix exceed .30. This suggests that factor analysis is appropriate and that there is no singularity problem among the variables (Tabachnick & Fidell, 2013). Additionally, because all correlations remain below the .90 cutoff, there is no indication of multicollinearity between the variables (Field, 2009). Bartlett’s (1954) test of sphericity was significant (χ2 = 11,987.877, df = 820, p < .001). This indicates significant correlations between the variables and confirms that the data set was appropriate for factor analysis (Pallant, 2011). The Kaiser–Meyer–Olkin (KMO) measure of sampling adequacy was .939, indicating that the sample size was adequate (Field, 2009). Given that multivariate normality was satisfied, the Maximum Likelihood (ML) estimator was used for CFA (Hair et al., 2014). Table 2 presents the CFA results.
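The two numeric thresholds in this paragraph follow from the number of items, p = 41. Bartlett’s test has p(p − 1)/2 = 820 degrees of freedom, and the Mardia cutoff used here is p(p + 2) = 1,763. The Python sketch below shows how both statistics can be computed from raw data. The function names are hypothetical. Software packages also differ in whether they report Mardia’s raw coefficient or a centered or normalized version of it, so the values from this sketch may not match a given program’s output directly.

```python
import numpy as np


def bartlett_sphericity(data: np.ndarray) -> tuple[float, int]:
    """Bartlett's test that the correlation matrix is an identity matrix.

    chi2 = -(n - 1 - (2p + 5) / 6) * ln|R|,  df = p(p - 1) / 2
    """
    data = np.asarray(data, dtype=float)
    n, p = data.shape
    R = np.corrcoef(data, rowvar=False)
    _, logdet = np.linalg.slogdet(R)             # numerically stable log-determinant
    chi2 = -(n - 1 - (2 * p + 5) / 6.0) * logdet
    return chi2, p * (p - 1) // 2


def mardia_kurtosis(data: np.ndarray) -> float:
    """Mardia's multivariate kurtosis b2,p; its expected value under normality is p(p + 2)."""
    data = np.asarray(data, dtype=float)
    centered = data - data.mean(axis=0)
    S = np.cov(data, rowvar=False, bias=True)    # ML covariance (divides by n)
    d2 = np.einsum("ij,jk,ik->i", centered, np.linalg.inv(S), centered)
    return float(np.mean(d2 ** 2))


p = 41
print(p * (p - 1) // 2)   # Bartlett df for 41 items: 820
print(p * (p + 2))        # Mardia threshold p(p + 2): 1763
```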

The chi-square test of the CFA model was significant (χ2 = 1,897.75, df = 751, p < .001). Because the chi-square test is sensitive to sample size, model fit was further evaluated with other goodness-of-fit indices. Table 2 shows that the χ2/df, RMSEA, RMR, and SRMR values are acceptable, and that the CFI, NFI, NNFI, IFI, and RFI values indicate excellent fit. However, the GFI and AGFI values were below the acceptable level. GFI and AGFI are strongly influenced by sample size and tend to reach the desired values only in large samples (Anderson & Gerbing, 1988; Hooper et al., 2008). The literature suggests 300 as the ideal sample size for confirmatory factor analysis (Hair et al., 2014), whereas the CFA in this study was conducted with data from n = 280 participants. In conclusion, all goodness-of-fit indices of the 8-factor model except GFI and AGFI are at acceptable or excellent levels, so the measurement model can be said to demonstrate an acceptable fit. The standardized factor loadings, their squares, and the t-values are presented in Table 3.
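The reported degrees of freedom (df = 751) can be checked by counting. The count rests on four assumptions that the text does not state explicitly. First, the CFA has simple structure, so each of the 41 items loads on exactly one factor. Second, one loading per factor is fixed to 1 for scaling. Third, all 8 factors covary. Fourth, no error covariances are freed. Under these assumptions the count reproduces 751. The function name is hypothetical.

```python
def cfa_degrees_of_freedom(items_per_factor: list[int]) -> int:
    """df = unique (co)variances minus free parameters for a simple-structure CFA."""
    p = sum(items_per_factor)              # observed indicators
    m = len(items_per_factor)              # latent factors
    moments = p * (p + 1) // 2             # unique variances and covariances
    free_loadings = p - m                  # one loading per factor fixed to 1
    factor_variances = m
    factor_covariances = m * (m - 1) // 2  # all factors allowed to correlate
    error_variances = p                    # one residual variance per item
    free = free_loadings + factor_variances + factor_covariances + error_variances
    return moments - free


# AIASE subscales: PE, EE, SI, FC, HM, H, PV, BI
aiase = [7, 5, 5, 5, 5, 5, 3, 6]
df = cfa_degrees_of_freedom(aiase)
chi2 = 1897.75
print(df)                    # 751
print(round(chi2 / df, 2))   # chi2/df of the measurement model: 2.53
```

The χ²/df of about 2.53 is the ratio that underlies the χ²/df index discussed above.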

t(CR) Values, Standardized Factor Loadings, and Explained Variance Values (R2).

p < .01.

As a result of CFA, the standardized factor loadings ranged from .72 to .92 for the PE factor, .77 to .90 for EE, .71 to .88 for SI, .56 to .79 for FC, .87 to .94 for HM, .83 to .91 for H, .79 to .92 for PV, and .86 to .97 for BI. All factor loadings exceeded the minimum cutoff of .50, and all t-values (critical ratios) were statistically significant (p < .01). This indicates that the observed variables adequately represented their respective latent constructs.

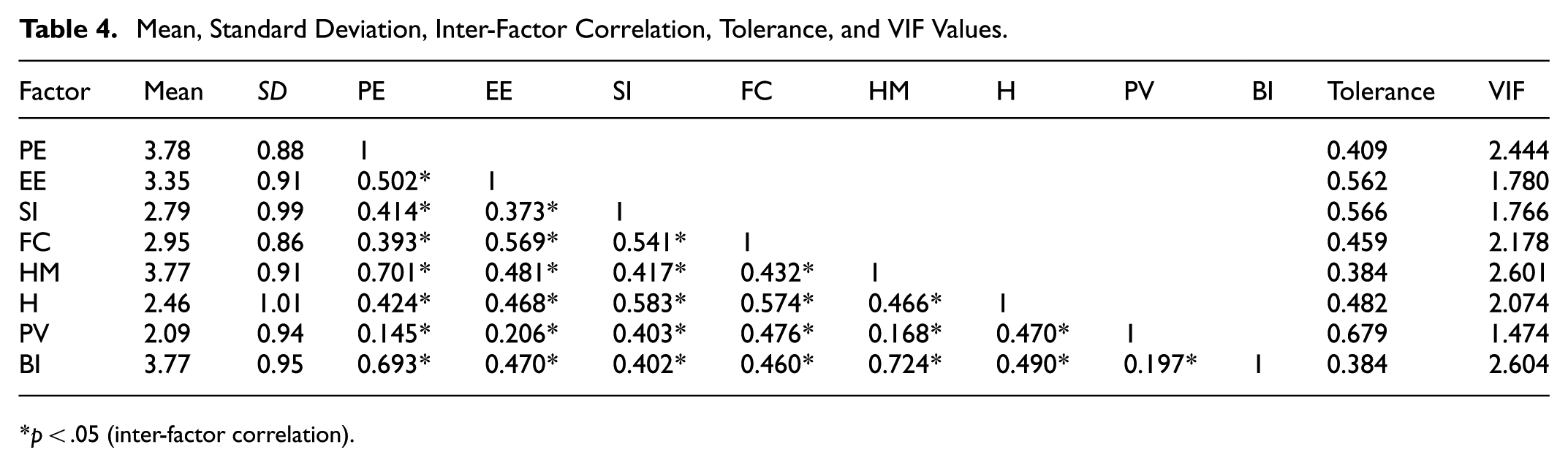

The means, standard deviations (SD), inter-factor correlations, tolerance values, and VIF values are presented in Table 4.

Mean, Standard Deviation, Inter-Factor Correlation, Tolerance, and VIF Values.

p < .05 (inter-factor correlation).

The correlation coefficients between the variables are below .80, the tolerance values are above 0.10, and the VIF values are below 10. There is therefore no multicollinearity problem among the variables (see Table 4; Hair et al., 2014).
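Tolerance and VIF are two views of the same quantity. For predictor j, tolerance is 1 − R²ⱼ from regressing j on the remaining predictors, and VIF = 1 / tolerance. The minimal NumPy sketch below assumes a hypothetical matrix of factor scores with one column per predictor. The function name and the data are illustrative, not the study’s.

```python
import numpy as np


def tolerance_and_vif(X: np.ndarray) -> list[tuple[float, float]]:
    """Return (tolerance, VIF) for each column of X.

    Each column is regressed (with an intercept) on all other columns;
    tolerance = 1 - R^2 and VIF = 1 / tolerance.
    """
    X = np.asarray(X, dtype=float)
    n, k = X.shape
    results = []
    for j in range(k):
        y = X[:, j]
        design = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        beta, *_ = np.linalg.lstsq(design, y, rcond=None)
        residuals = y - design @ beta
        centered = y - y.mean()
        r2 = 1.0 - (residuals @ residuals) / (centered @ centered)
        tolerance = 1.0 - r2
        results.append((tolerance, 1.0 / tolerance))
    return results


rng = np.random.default_rng(42)
scores = rng.standard_normal((280, 4))   # hypothetical, uncorrelated predictor scores
for tol, vif in tolerance_and_vif(scores):
    # Rule of thumb used in the text: tolerance > 0.10 and VIF < 10
    print(f"tolerance={tol:.3f}  VIF={vif:.3f}  ok={tol > 0.10 and vif < 10}")
```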

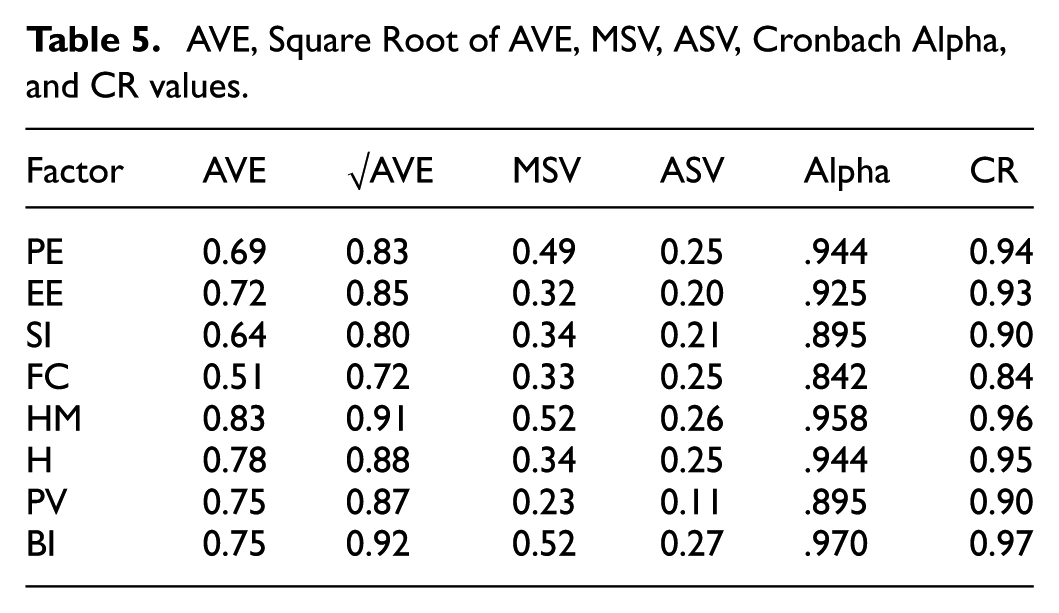

The Average Variance Extracted (AVE), square root of AVE, Maximum Shared Variance (MSV), Average Shared Variance (ASV), Cronbach’s alpha, and CR values are presented in Table 5.

AVE, Square Root of AVE, MSV, ASV, Cronbach Alpha, and CR values.

Tables 3–5 indicate that discriminant validity is established. For every factor, the square root of AVE exceeds the factor’s correlations with the other factors, and AVE exceeds both ASV and MSV. Convergent validity is also established, since the factor loadings (>0.50), CR values (>0.70), and AVE values (>0.50) are all above their thresholds. In addition, the significant positive relationships between the factors indicate that the instrument is consistent with the initial theoretical structure, which supports its nomological validity. The high Cronbach’s alpha and CR coefficients indicate that the data obtained from the measurement tool are sufficiently reliable (Kline, 2011).
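The validity criteria in this paragraph can be expressed as simple computations on standardized loadings and on the inter-factor correlation matrix:

- AVE is the mean squared loading.
- CR is (Σλ)² / ((Σλ)² + Σ(1 − λ²)).
- MSV and ASV are the maximum and average squared correlations of a factor with the other factors.
- The Fornell–Larcker check compares √AVE with those correlations.

The minimal NumPy sketch below uses hypothetical function names and made-up values for three factors, not the study’s estimates.

```python
import numpy as np


def ave(loadings) -> float:
    """Average Variance Extracted: mean of squared standardized loadings."""
    lam = np.asarray(loadings, dtype=float)
    return float(np.mean(lam ** 2))


def composite_reliability(loadings) -> float:
    """CR = (sum lambda)^2 / ((sum lambda)^2 + sum(1 - lambda^2))."""
    lam = np.asarray(loadings, dtype=float)
    s = lam.sum()
    return float(s ** 2 / (s ** 2 + np.sum(1.0 - lam ** 2)))


def msv_asv(phi) -> tuple[np.ndarray, np.ndarray]:
    """Max and average squared correlation of each factor with the other factors."""
    sq = np.asarray(phi, dtype=float) ** 2
    off_diag = ~np.eye(sq.shape[0], dtype=bool)
    msv = np.array([row[mask].max() for row, mask in zip(sq, off_diag)])
    asv = np.array([row[mask].mean() for row, mask in zip(sq, off_diag)])
    return msv, asv


def fornell_larcker_holds(aves, phi) -> bool:
    """True if sqrt(AVE) of every factor exceeds its correlations with all other factors."""
    root = np.sqrt(np.asarray(aves, dtype=float))
    r = np.abs(np.asarray(phi, dtype=float))
    k = len(root)
    return all(root[i] > r[i, j] for i in range(k) for j in range(k) if i != j)


# Hypothetical three-factor example (not the AIASE estimates)
loadings = {"F1": [0.80, 0.85, 0.75], "F2": [0.70, 0.78, 0.82], "F3": [0.88, 0.90, 0.86]}
phi = np.array([[1.00, 0.55, 0.40],
                [0.55, 1.00, 0.35],
                [0.40, 0.35, 1.00]])
aves = [ave(l) for l in loadings.values()]
crs = [composite_reliability(l) for l in loadings.values()]
msv, asv = msv_asv(phi)
print(all(a > 0.50 for a in aves), all(c > 0.70 for c in crs))    # convergent validity checks
print(bool(np.all(np.array(aves) > msv)), bool(np.all(np.array(aves) > asv)))  # AVE > MSV, ASV
print(fornell_larcker_holds(aves, phi))                            # discriminant validity check
```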

Hypothesis Testing Results (Structural Model)

Using SEM analysis, we examined the structural relationships between the exogenous and endogenous variables in the research model. Before the analysis, univariate and multivariate normality were examined for the distributions of the model’s variables. The z-values for the variables in the data set (n = 280) fell within ±3.29, showing that there were no univariate outliers (Tabachnick & Fidell, 2013). In addition, the skewness and kurtosis values for all variables in the model fell within ±1, showing that univariate normality was achieved. Based on the Mahalanobis distance values calculated to detect multivariate outliers, 20 multivariate outliers at the p < .05 significance level were removed from the data set, leaving 260 cases. Moreover, a critical ratio below 5 for multivariate kurtosis (kurtosis = −0.235, CR = −0.135) provided evidence of multivariate normality (Bentler, 2006). The Maximum Likelihood estimation method, which assumes multivariate normality and is widely preferred in SEM analyses, was used to test the structural model (Kline, 2011).
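Multivariate outlier screening of the kind described above compares each case’s squared Mahalanobis distance with a chi-square critical value. The degrees of freedom of that critical value equal the number of variables screened. The minimal sketch below assumes a hypothetical NumPy array of cases by variables, and the function names are illustrative. The critical value is passed in rather than computed. If SciPy is available, `scipy.stats.chi2.ppf(0.95, df)` is one way to obtain it. For example, if the nine model variables were screened jointly, the p < .05 cutoff would be χ²(9) ≈ 16.919. This is an assumption, since the text does not state which variables were used.

```python
import numpy as np


def squared_mahalanobis(data: np.ndarray) -> np.ndarray:
    """Squared Mahalanobis distance of every row from the sample centroid."""
    data = np.asarray(data, dtype=float)
    centered = data - data.mean(axis=0)
    inv_cov = np.linalg.inv(np.cov(data, rowvar=False))   # sample covariance, ddof=1
    return np.einsum("ij,jk,ik->i", centered, inv_cov, centered)


def drop_multivariate_outliers(data: np.ndarray, critical_value: float):
    """Split rows into (kept, removed) using D^2 > critical_value as the outlier rule."""
    data = np.asarray(data, dtype=float)
    is_outlier = squared_mahalanobis(data) > critical_value
    return data[~is_outlier], data[is_outlier]


rng = np.random.default_rng(7)
cases = rng.standard_normal((280, 3))      # hypothetical data: 280 cases, 3 variables
cases[0] = [6.0, -6.0, 6.0]                # plant one obvious outlier
kept, removed = drop_multivariate_outliers(cases, critical_value=7.815)  # chi2(3), p < .05
print(len(kept), len(removed))
```

At p < .05, about 5% of cases from a truly multivariate normal sample are expected to exceed the cutoff. A stricter level such as p < .001 flags fewer cases.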

The goodness-of-fit values from the path analysis (χ2/df = 10.457/5 = 2.091, p = .063, RMSEA = 0.065, RMR = 0.015, GFI = 0.991, AGFI = 0.922, CFI = 0.996, NFI = 0.992, IFI = 0.996, RFI = 0.941, TLI = 0.968) indicated that the model fit the data well. Figure 2 and Table 6 show the path analysis results for the research model.
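Two of the indices above can be recomputed from χ², df, and sample size. The sketch uses the common formula RMSEA = √(max(χ² − df, 0) / (df(N − 1))). Some programs use N instead of N − 1, which here changes only the fourth decimal. The sketch assumes N = 260, that is, the 280 cases less the 20 multivariate outliers removed before the structural analysis. Under that assumption it reproduces the reported χ²/df of 2.091 and RMSEA of 0.065. The function names are hypothetical.

```python
import math


def chi2_df_ratio(chi2: float, df: int) -> float:
    """Normed chi-square (chi2 / df)."""
    return chi2 / df


def rmsea(chi2: float, df: int, n: int) -> float:
    """Root Mean Square Error of Approximation, using the N - 1 convention."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


chi2, df = 10.457, 5   # structural (path) model reported above
n = 280 - 20           # assumption: cases remaining after outlier removal
print(round(chi2_df_ratio(chi2, df), 3))   # 2.091
print(round(rmsea(chi2, df, n), 3))        # 0.065
```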

Estimated path coefficients of the research model as a result of path analysis.

Path Coefficients, t Values and Hypothesis Test Results for the Effects in the Research Model.

Note. χ2 = 10.457; df = 5.

*p < .05. ***p < .001.

The analysis showed that PE (β = .324; t = 5.973; p < .05), HM (β = .443; t = 7.834; p < .05), and H (β = .102; t = 1.991; p < .05) had significant positive effects on educators’ BI. In contrast, the effects of EE, SI, FC, and PV on BI were not significant (p > .05). Together, the exogenous variables in the structural model explain 68% of the variance in educators’ BI. In addition, educators’ use of AI software for educational purposes was strongly predicted by habit (β = .519; t = 7.897; p < .05), while the effects of FC and BI on UB were not significant (p > .05). Together, the model variables account for 34% of the variance in educators’ use of AI software for educational purposes.
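The t-values in this paragraph are critical ratios, that is, each estimate divided by its standard error. The sketch below assumes the usual large-sample convention of a standard normal reference distribution. Under that assumption, a two-tailed p-value follows from the complementary error function in Python’s standard library. The function name is hypothetical.

```python
import math


def two_tailed_p(critical_ratio: float) -> float:
    """Two-tailed p for a z-type critical ratio: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2))."""
    return math.erfc(abs(critical_ratio) / math.sqrt(2.0))


# Critical ratios reported above for the significant paths
paths = {"PE -> BI": 5.973, "HM -> BI": 7.834, "H -> BI": 1.991, "H -> UB": 7.897}
for name, cr in paths.items():
    p = two_tailed_p(cr)
    print(f"{name}: CR = {cr:.3f}, p = {p:.4f}, significant at .05: {p < 0.05}")
```

For H → BI, the sketch gives p ≈ .046, just under .05. This matches the observation that t = 1.991 lies only slightly past the 1.96 cutoff.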

Discussion

In the current research, the factors influencing Turkish educators’ acceptance and use of artificial intelligence software for educational purposes were investigated based on UTAUT2, using a two-stage SEM analysis. In the first stage, the data obtained from the AIASE were shown to be valid and reliable. The researchers developed this scale to measure educators’ perceptions of their acceptance of artificial intelligence software. In the second stage, the structural model was tested. According to the SEM results, four of the 10 research hypotheses were supported (H1, H6, H8, and H9) and six (H2, H3, H4, H5, H7, and H10) were not supported. The findings are discussed below in relation to related studies in the literature.

The study found that PE had a significant effect on BI. This suggests that teachers’ intention to use AI software may strengthen as they recognize its potential for lesson planning and implementation, for enhancing student learning, and for assessment. This result is consistent with studies finding that educators’ behavioral intention to use AI in their teaching may increase as they become aware of its advantages for their instruction (Ali et al., 2025; An et al., 2023; Ayanwale et al., 2022; Yao & Abd Halim, 2023; X. Zhang & Wareewanich, 2024). An et al. (2023), who found PE to be the strongest predictor of BI, stated that teachers with high performance expectancy believed that the quality of teaching would improve with the use of artificial intelligence. Alhwaiti (2023) pointed out that faculty members tend to accept AI provided that it helps them improve both their performance at work and their relationships with people. According to Yao and Abd Halim (2023), teachers’ willingness to accept AI technology will rise as they become more aware of how AI can enhance teaching activities, increase job efficiency, and support professional development. The PE result extends earlier findings that PE predicts BI in teachers’ technology acceptance (e.g., Du & Liang, 2024; Kim & Lee, 2022; Nikolopoulou et al., 2021; Šumak & Šorgo, 2016) to the acceptance of AI technology.

Another result of the study is that EE did not have a significant effect on BI. That is, the ease of use of artificial intelligence software does not affect educators’ behavioral intention to use it for educational purposes. This result is consistent with studies finding that teachers’ perceptions of EE do not affect their behavioral intention to use AI (An et al., 2023), their willingness to adopt it (Yao & Abd Halim, 2023), or their willingness to use it (X. Zhang & Wareewanich, 2024). Similarly, earlier research on teachers’ technology acceptance suggests that EE does not significantly affect BI (Nikolopoulou et al., 2021; Šumak & Šorgo, 2016). On the other hand, Hidayat-ur-Rehman and Ibrahim (2023) found that educators’ EE affects their intention to use chatbots, and Al Darayseh (2023) found that science teachers’ perceived ease of use has a significant effect on BI.

The effect of SI on BI was also found to be non-significant. This finding indicates that encouragement and support from colleagues and institutions do not affect educators’ intention to use AI for educational purposes. This may be because the use of AI technology in education in Türkiye is still in its infancy and social incentives and support are insufficient. In contrast, other studies have found that SI has a significant effect on educators’ behavioral intention to use artificial intelligence (An et al., 2023; Yao & Abd Halim, 2023; X. Zhang & Wareewanich, 2024). Similar results have not been reported for teachers’ acceptance of AI. However, studies of university students’ acceptance of AI in education have found that SI does not have a significant effect on BI (e.g., Bazelais et al., 2024; Foroughi et al., 2023). Nikolopoulou et al. (2021) also found that the relationship between SI and BI was not significant in teachers’ acceptance of mobile internet.

Another finding was that FC had no significant effect on BI or UB. This suggests that educators’ technological knowledge and skills, and the financial and technical support provided to them, do not affect their intention to use artificial intelligence software for educational purposes, nor their actual use of it. Similarly, several studies indicate that FC has no effect on BI in AI acceptance (Cabrera-Sánchez et al., 2021; Foroughi et al., 2023; García de Blanes Sebastián et al., 2022; Strzelecki, 2023; Yao & Abd Halim, 2023). For example, Yao and Abd Halim (2023) found that FC has no effect on primary school teachers’ willingness to adopt AI technology in China. This result corroborates earlier findings that FC is not a significant predictor of BI and UB in teachers’ technology acceptance (e.g., Nikolopoulou et al., 2021). In contrast, some studies have found significant effects of FC on BI (Chatterjee & Bhattacharjee, 2020; X. Zhang & Wareewanich, 2024) and on UB (Habibi et al., 2023; Strzelecki, 2023; Strzelecki & ElArabawy, 2024) in AI acceptance.

One of the notable results of the study is that HM was the strongest significant predictor of BI. This implies that as educators’ enjoyment increases, so does their intention to use AI software in their lessons. Studies investigating teachers’ technology acceptance have also found a significant effect of HM on BI (e.g., Du & Liang, 2024; Nikolopoulou et al., 2021). Likewise, Adarkwah et al. (2023) reported that academics perceived ChatGPT’s interface as fun to use. In the AI acceptance literature, some studies have found that HM predicts the intention to use (Cabrera-Sánchez et al., 2021; Foroughi et al., 2023; Habibi et al., 2023; Strzelecki, 2023). Others have found the relationship between HM and BI to be non-significant (e.g., García de Blanes Sebastián et al., 2022).

Habit also significantly affected BI, and it was the only significant predictor of UB in the research model. These results suggest that as educators make using artificial intelligence software in their courses a habit, their behavioral intention and usage behavior may increase. That habit alone predicted UB further suggests that educators’ actual usage depends more on routines and prior habits than on their intentions, even when those intentions are strong. This result supports studies showing that H affects BI (Cabrera-Sánchez et al., 2021; García de Blanes Sebastián et al., 2022; Habibi et al., 2023; Strzelecki, 2023) and UB (Cabrera-Sánchez et al., 2021; Habibi et al., 2023; Strzelecki, 2023) in AI acceptance. It is also consistent with technology acceptance studies of teachers that identified a significant effect of habit on BI and UB (e.g., Kim & Lee, 2022; Nikolopoulou et al., 2021).

In the current research, PV was also among the variables shown to have no significant effect on BI. Similarly, García de Blanes Sebastián et al. (2022) found that PV has no significant effect on BI in users’ acceptance of artificial intelligence. On the other hand, many studies conducted within the UTAUT framework (Cabrera-Sánchez et al., 2021; García de Blanes Sebastián et al., 2022; Strzelecki, 2023) found that PV has a significant effect on BI in AI acceptance.

The predictive effect of BI on UB was also found to be non-significant. Among studies of teachers’ technology acceptance, some found a significant relationship between BI and UB (Kim & Lee, 2022; Nikolopoulou et al., 2021), whereas others did not (Šumak & Šorgo, 2016). However, most studies of AI acceptance using the UTAUT model found BI to be a significant predictor of UB (Bazelais et al., 2024; Cabrera-Sánchez et al., 2021; Habibi et al., 2023; Strzelecki, 2023; Strzelecki & ElArabawy, 2024).

The results obtained from this study may have been influenced by contextual and cultural factors. In their meta-analysis of UTAUT2, Tamilmani et al. (2021) emphasized that results obtained from a single user group cannot be universally generalized to all types of technology users. They also showed that the predictive power of technology acceptance models may vary with cultural and contextual differences. Similarly, in their study examining the cross-cultural validity of the UTAUT model, Gogus et al. (2012) stated that cultural factors are a significant determinant of technology acceptance. They added that the availability of technological infrastructure is also critical for the model’s functioning. Addressing the cross-cultural validity of UTAUT, Oshlyansky et al. (2007) concluded that the model can be applied in diverse cultural contexts. They also noted that the UTAUT instrument offers valuable insights into cross-cultural differences in technology acceptance.

The results of this study gain particular significance when considered within the structural and cultural context of Türkiye. AI initiatives in education in Türkiye began with the National Artificial Intelligence Strategy Document published in 2021 (Presidency of the Republic of Turkey Digital Transformation Office & Ministry of Industry and Technology, 2021). Two further documents aim to develop AI applications in education. The first is the 2024–2028 Strategic Plan published by the Ministry of National Education, Strategy Development Department (2024). The second is the Council of Higher Education’s (2025) Roadmap of Turkish Higher Education Towards 2030. The Ministry of National Education’s (2025) Artificial Intelligence in Education Policy Document and Action Plan (2025–2029) states that AI policies in education aim to improve teachers’ digital skills and align curricula with AI. However, although AI integration in education has accelerated in Türkiye, implementation is still in its infancy. Applications remain in the policy development and pilot phases and have not been fully integrated into the education system, and educators lack sufficient technical and pedagogical support. This study examined educators’ acceptance and use of AI-based software within the Turkish educational context, highlighting both the theoretical and the contextual dynamics of technology adoption.

The results demonstrate that PE, HM, and H were significant predictors of behavioral intention (BI). This highlights perceived personal benefit, intrinsic enjoyment, and habit formed through prior experience as key drivers of AI adoption, particularly in contexts where technological infrastructure is still developing. In contrast, EE, FC, SI, and PV did not significantly predict BI. This may stem from the early phase of AI adoption in Turkish education. Even where educators express strong behavioral intention, several obstacles may weaken the influence of these external factors. These obstacles include inadequate technological infrastructure, limited technical support, incomplete institutional policies, unclear ethical standards, and insufficient pedagogical resources. Additionally, because AI use remains rare and unfamiliar in Turkish schools, neither colleagues, administrators, nor organizational culture currently exerts substantial encouragement or pressure to adopt it. As a result, social and institutional support systems are not yet robust enough to serve as effective predictors.

The non-significant relationship between behavioral intention and use behavior can be considered one of the most critical findings of this study. While the model variables explained 68% of the variance in educators’ BI, they explained only 34% of the variance in UB. Factors such as performance expectancy, hedonic motivation, and habit may therefore be strong determinants of intention, yet intention translates only partially into actual behavior. The finding also suggests that even a sufficiently strong intention may not be acted upon because of external constraints. The UTAUT2 model’s assumption that intention is the main internal driver of use should therefore be questioned under certain contextual conditions. The gap between BI and UB may be explained in part by teachers’ need for training and guidance to integrate AI tools effectively into their pedagogical strategies. Concerns about the potential negative impacts of AI in education, such as data privacy and ethical issues, may also play a role (Alwaqdani, 2025; Cheah et al., 2025). External factors may also limit educators’ actual use, including a lack of institutional policies, inadequate technology infrastructure, lack of technical support, funding constraints, and resistance to change (Cheah et al., 2025; Darmawan et al., 2024). The actual use of AI in education may therefore depend on contextual factors such as infrastructure, institutional support, and pedagogical guidance, and these factors should be taken into account. Indeed, Watted (2025) emphasizes that sustainable AI applications in education require targeted investments in infrastructure, culturally responsive AI tools, and systemic support mechanisms.

Therefore, even if teachers’ intentions are high, external factors can prevent this intention from translating into actual use. These factors include a lack of technological infrastructure, limited technical support, institutional policy gaps, ethical concerns, and a lack of guidance on AI. In line with UTAUT2 assumptions (Venkatesh et al., 2012), these results indicate that behavioral intention alone may be insufficient to guarantee adoption under constrained systemic conditions. This situation may be attributed to the still-developing AI infrastructure in education in Türkiye and to the limited institutional resources and guidance available to support teachers’ use of technology. Economic factors may also play a role. Because AI software is often priced in US dollars and teachers’ salaries in Türkiye are relatively low, educators may be reluctant to purchase and regularly use these technologies. The lack of economic access, together with gaps in technical infrastructure and institutional support, can therefore be considered a significant contextual factor hindering the translation of intention into actual behavior.

In addition, Qi et al. (2025) identified AI literacy as a potential determinant of AI adoption and noted that AI literacy and its interaction with UTAUT have been studied only to a limited extent. Ng et al. (2021) define AI literacy in four dimensions: “Know & Understand AI,” “Apply AI,” “Evaluate & Create AI,” and “AI Ethics.” The AI Ethics dimension in particular reflects the critical role that ethical concerns play in teachers’ adoption of AI tools (Brandhofer & Tengler, 2024). However, UTAUT2 does not structurally incorporate ethical and data privacy concerns, which are increasingly important in the context of educational AI. Indeed, Xue et al. (2025) emphasize that ethical considerations are rarely addressed in UTAUT/UTAUT2 extensions. They argue that ethical dimensions should be integrated into the model to understand AI adoption, particularly in education. As AI applications in education in Türkiye are still developing, teachers’ ability to use AI tools effectively, both pedagogically and ethically, may depend not only on technical infrastructure but also on their AI literacy and ethical awareness.

Taken together, these results indicate that, while the UTAUT2 model is applicable in the Turkish context, certain path relationships are influenced by cultural and structural factors. Universal generalizations of technology acceptance models may require reconsideration in developing contexts. Incorporating cultural or institutional moderators into future research could strengthen both the theoretical rigor and contextual sensitivity of adoption models.

Theoretical and Practical Implications

Theoretical Implications

This study makes several contributions to the literature on the use of AI technology in education. First, its results extend UTAUT2 research on educators’ acceptance and use of technology in their courses to artificial intelligence technology, and they can guide future research. The results show that UTAUT2 explains 68% of the variance in educators’ BI and 34% of the variance in UB, indicating that it can predict educators’ use of AI software in their courses. The adequate fit of the UTAUT2 model in this research supports its applicability to educators’ acceptance of artificial intelligence technology. The research also provides insights for practitioners who want to promote the adoption and implementation of AI in education.

Practical Implications

Practical recommendations are offered to four groups who may be affected by these results and can put them into practice: educational administrators and decision-makers, teachers and university lecturers, technology developers, and educational technology researchers.

Existing training programs and processes can influence teachers’ tendency to accept and use AI software. In this study, PE, HM, and H had significant effects on BI, and H had a significant effect on UB. Based on these results, educational institutions can organize targeted training and development programs. Such programs could give instructors concrete examples of how AI technologies can increase lesson efficiency and emphasize the advantages of using AI in their lessons. Educational institutions can also review existing curricula and teaching/learning processes to facilitate the integration of AI technologies. In particular, training programs that offer guidance and support on using AI technologies to improve performance can help teachers adopt these technologies more comfortably.

Moreover, providing user-friendly interfaces, personalized experiences, and ongoing support can also make teachers more likely to enjoy using these technologies.

Limitations and Future Directions

The results of this study are limited to data obtained through snowball sampling from educators working in Türkiye. Snowball sampling may have increased the likelihood of including individuals who are interested in AI technology and hold more positive attitudes toward it. Additionally, participants were selected from people the researchers could reach through their social networks, which increases the risk of selection bias. This methodological limitation restricts the generalizability of the findings and suggests that the results should be interpreted in context. Randomly selecting educators from different regions, subject areas, and educational levels across Türkiye would help enhance the generalizability of these results.

In this study, educators’ use of artificial intelligence software was analyzed within the framework of UTAUT2, the extended version of UTAUT. The UTAUT model developed by Venkatesh et al. (2003) synthesized the components of eight fundamental technology acceptance models. On the original data, it showed higher explanatory power than any of the eight models evaluated individually. Because UTAUT synthesizes the statistically significant variables of many technology acceptance models, it is to be expected that it does not include every relevant variable. Future studies could broaden the theoretical scope of the model by adding variables from other technology acceptance models, such as attitude from TAM or self-efficacy from Social Cognitive Theory. Including variables such as AI literacy and ethical awareness in the UTAUT2 model could also increase its contextual explanatory power and allow a more comprehensive assessment of AI acceptance in education.

This cross-sectional study measured educators’ perceptions of their acceptance of artificial intelligence software in their courses. Most participants made only limited use of AI software in their courses. Their perceptions may change as educational environments are adapted to the use of AI, as teachers receive the necessary training, and as they gain experience using AI technology in their lessons. Longitudinal studies with repeated measurements are therefore recommended to observe how changes in educators’ perceptions over time affect the relationships between variables.

The non-significance of the BI → UB relationship may reflect the fact that educators’ use of AI software is still in its infancy. External structural conditions (lack of organizational support, barriers to technical access, lack of resources) may also limit their ability to translate intention into action. Future research could explore the intention–behavior relationship more comprehensively and assess contextual differences. One way to do so is to add external variables such as organizational support and access to technical infrastructure as mediators or moderators in the UTAUT2 model.

This study examined the acceptance of AI software for teaching in general. Future research could conduct comparative studies of AI technology acceptance across educational disciplines (mathematics, science, social sciences, etc.). This study also reflects only the acceptance of AI by educators working in Türkiye, so future studies could compare the acceptance of educators in different countries.

Conclusion

In Türkiye, the use of AI technology in education is still in its infancy, and many educators are either unaware of AI applications or have limited experience with them. This research is therefore a pioneering study of the factors affecting educators’ behavioral intention to use, and actual use of, artificial intelligence software in their courses in Türkiye. The use of UTAUT2 also adds value to this research, since the model is frequently used in technology acceptance research and has shown strong power in explaining technology acceptance and use. According to the research results,

BI is significantly positively impacted by the variables PE, HM, and H, but not significantly by the variables EE, SI, FC, or PV.

UB is significantly positively impacted by the variable H, but not significantly by the variables FC or BI.

This research paves the way for evaluating whether educators in Türkiye and similar countries adopt AI applications in education. It also provides results that can contribute to the design of educational settings that improve educators’ acceptance and use of AI software for educational purposes.


Appendix

| Performance expectancy (PE) |

| PE1. Artificial intelligence software can help me plan my lessons effectively. |

| PE2. Thanks to artificial intelligence software, I can teach my lessons more effectively. |

| PE3. Thanks to artificial intelligence software, I can prepare more effective course materials. |

| PE4. Thanks to artificial intelligence software, my students can learn lessons better. |

| PE5. Thanks to artificial intelligence software, I can monitor my students’ progress more effectively. |

| PE6. Thanks to artificial intelligence software, I can assess my students objectively. |

| PE7. Artificial intelligence software positively impacts my professional development. |

| Effort expectancy (EE) |

| EE8. It is easy for me to learn to use artificial intelligence software in my classes. |

| EE9. I can easily become proficient in using artificial intelligence software in my lessons. |

| EE10. It is not difficult to learn the logic of using artificial intelligence software in my lessons. |

| EE11. I don’t need to put in much effort to learn how to use artificial intelligence software in my lessons. |

| EE12. It is not difficult to learn about artificial intelligence software that educators can use. |

| Social influence (SI) |
|---|
| SI13. The number of teachers I know who use artificial intelligence software in their classes is increasing. |
| SI14. More educators are now encouraging the use of AI software in classrooms. |
| SI15. Educators in my close circle support me in using artificial intelligence software. |
| SI16. My institution encourages me to use artificial intelligence software in my courses. |
| SI17. Very soon, there will be no educators left who do not use artificial intelligence software in their classes. |

| Facilitating conditions (FC) |
|---|
| FC18. I have the necessary opportunities to use artificial intelligence software in my lessons. |
| FC19. Artificial intelligence technology is compatible with other technologies (smartphones, tablets, computers, etc.) that I use in my lessons. |
| FC20. I have the skills necessary to use artificial intelligence software in my classes. |
| FC21. My institution provides the necessary technical support for using artificial intelligence software in my courses. |
| FC22. My institution provides the necessary financial support for using artificial intelligence software in my courses. |

| Hedonic motivation (HM) |
|---|
| HM23. Artificial intelligence software makes my lessons more enjoyable. |
| HM24. It is fun to use artificial intelligence software in my classes. |
| HM25. Students enjoy my classes where I use artificial intelligence software. |
| HM26. I enjoy using artificial intelligence software in my lessons. |
| HM27. Students enjoy attending classes that use artificial intelligence software. |

| Habit (H) |
|---|
| H28. It is a habit for me to use artificial intelligence software in my lessons. |
| H29. I always prefer to use artificial intelligence software in my lessons. |
| H30. When creating an instructional design for my courses, I first use artificial intelligence software. |
| H31. I constantly follow artificial intelligence applications used in education. |
| H32. I can’t stop using artificial intelligence software in my lessons. |

| Price value (PV) |
|---|
| PV33. The cost of the technological tools (camera, computer, smartphone, tablet, etc.) required to use artificial intelligence technology in my lessons is reasonable. |
| PV34. Full (paid) versions of artificial intelligence software that I can use in my lessons are within my budget. |
| PV35. Training on the use of artificial intelligence technology in education is cost-effective. |

| Behavioral intention (BI) |
|---|
| BI36. I want to make more use of artificial intelligence software in my lessons. |
| BI37. I predict that I will use artificial intelligence software more in my classes in the future. |
| BI38. I will look for opportunities to use artificial intelligence software in my classes in the future. |
| BI39. I stay updated on AI developments to enhance my teaching. |
| BI40. I plan to increase my use of artificial intelligence technology in my classes in the future. |
| BI41. I will always try to use artificial intelligence technology in my lessons. |

Acknowledgements

The authors would like to thank the Scientific Research Projects Coordination Unit of İnönü University, Türkiye, for funding the open access publication fees of this article [Project Number: SBA-2025-4251].

Ethical Considerations

The manuscript has not been published elsewhere and has not been submitted simultaneously for publication elsewhere. Ethics committee approval and all necessary permissions were obtained from the relevant local authorities prior to data collection.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The open access publication fees of this article were funded by the Scientific Research Projects Coordination Unit of İnönü University, Türkiye [Project Number: SBA-2025-4251].

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Materials and/or code availability: No additional materials or code are associated with this study. The datasets generated and/or analyzed during the current study are available from the corresponding author on reasonable request.