Abstract

Instructional design (ID) plays a critical role in enhancing learning by systematically developing educational experiences based on established standards and competencies. The International Board of Standards for Training, Performance, and Instruction (IBSTPI) provides a comprehensive framework of competencies that guide professionals in the ID field across multiple domains. This study aims to assess how effectively an undergraduate educational technology program incorporates ID competencies and to explore the impact of a reconstructed project-based evaluation course on students’ development of competencies within the Evaluation & Implementation domain. Using pre- and post-course IBSTPI competency surveys, open-ended questions, and semi-structured interviews, this mixed-methods study analyzed both quantitative and qualitative evidence. Results indicated that students generally perceived themselves as competent across all domains, with the highest self-ratings in Planning & Analysis and Design & Development. Statistical analysis revealed significant improvements in all domains, including Evaluation & Implementation, underscoring the integrative role of the reconstructed project-based course in reinforcing and applying prior knowledge. Interviews further highlighted the importance of iterative learning processes fostered by sequential coursework and practical experiences, although some gaps were identified in the Management domain proficiency.

Plain Language Summary

This study explores how well an undergraduate educational technology program prepares students for professional roles in instructional design (ID), focusing on the alignment between course content and the essential competencies outlined by the International Board of Standards for Training, Performance, and Instruction (IBSTPI). Instructional design is a field that aims to improve learning experiences by carefully designing educational materials and experiences. To succeed, professionals must develop a wide range of competencies across areas such as planning, analysis, design, development, evaluation, and implementation. Using a combination of surveys and interviews, this research examined students’ perceptions of their competency levels before and after completing a key last-semester course, which focuses on evaluation and implementation strategies. Findings show that students generally felt confident in their abilities, especially in the areas of planning, analysis, design, and development. The CET 472 course notably played an important role in strengthening students’ competencies, with significant improvements observed in the evaluation and implementation domain, the primary focus of the course. Additionally, students’ job experiences contributed meaningfully to their development, particularly in professional foundations, planning and analysis, and management competencies. Interviews revealed that both the sequence of courses and practical experiences helped students build their skills over time, though some students still reported gaps in their management abilities. These results suggest that while the program effectively supports student growth in many areas, there is an opportunity to place greater emphasis on developing management-related competencies. Overall, this study highlights the value of integrating targeted coursework with hands-on experiences to better prepare future instructional design professionals for the demands of the workplace.

Keywords

Introduction

Instructional design (ID) plays a crucial role in learning by improving the effectiveness of instruction and supporting the acquisition of knowledge and skills (Andreea, 2023). Since the beginning of the 1940s, it has gained importance in various fields including education, health, government, military, industry, business, and non-profit organizations (Reiser, 2001). Guided by learning and instructional theories, and structured taxonomies, ID includes the creation, development, and delivery of educational content to learners (Bose, 2012). Richey et al. (2001) described ID as “a collection of scientific principles pertaining to instructional methods, learner characteristics, learning environments, and outcomes” (p. 181).

Instructional designers must understand how students learn and identify the materials and methods that will best help individuals reach their academic goals (Parker, 2020). In doing so, they follow established professional standards. The field of ID encompasses numerous professional organizations, many of which set the standards and competencies for the profession (Martin & Ritzhaupt, 2021). Prominent standard-setting organizations include IBSTPI, the International Society for Performance Improvement (ISPI), the Association for Talent Development (ATD), the Association for Educational Communications and Technology (AECT), the Online Learning Consortium (OLC), the International Society for Technology in Education (ISTE), and the University Professional and Continuing Education Association (UPCEA; Martin & Ritzhaupt, 2021). Each organization prioritizes different areas and offers specific standards and competencies tailored to its respective programs.

ID focuses on developing, assessing, and evaluating instruction (Hart, 2020). By utilizing the ID process, which involves assessing learner needs, setting measurable objectives, evaluating outcomes, and employing continuous formative evaluation, instructional designers can ensure that interventions meet the needs of all stakeholders and achieve desired outcomes. Because the ID field acknowledges the significance of validating the effectiveness of instruction, evaluation is an essential ID competency (DeVaughn, 2022; DeVaughn & Stefaniak, 2020). In particular, implementing both formative and summative evaluation plans is regarded as a core part of professional practice (Koszalka et al., 2013). However, there remains a notable gap between theory and application in ID education (DeVaughn, 2022). Research has identified a discrepancy between the skills employers expect of ID graduates and the competencies these graduates actually possess, particularly in the area of evaluation (Klein & Kelly, 2018; Larson & Lockee, 2009).

ID graduates often report that while their education emphasizes theory, there is insufficient emphasis on bridging this theory with real-world practice. This gap suggests that instructional programs may not adequately prepare students for the demands of the workforce, particularly in mastering industry standards and competencies. Faculty may recognize the importance of evaluation, but postgraduates often feel that their programs fall short of providing authentic, situated learning experiences that foster the practical application of these skills (DeVaughn & Stefaniak, 2020). It is critical to better align ID education with the competencies required by employers and to ensure that graduates are well-equipped for professional practice. Over the past three decades, the ID field has evolved to remain relevant with advancements in educational technology and the learning sciences (Tracey & Boling, 2014). This evolution brings unique challenges, requiring ID programs to adapt. Such programs prepare instructional designers through courses reflecting current trends and innovations, ensuring graduates can meet the evolving needs of education and training in the digital age (West et al., 2017). Analyzing the courses offered in these departments can provide insights into the preparation of future instructional designers.

In light of these changes, aligning ID education with recognized competency frameworks such as IBSTPI has become increasingly critical. As ID programs continue to evolve, integrating such perspectives into competency-based curricula is important for ensuring that students are not only theoretically informed but also practically prepared. Calls for instructional design to engage learners in authentic, project-based tasks and ill-structured problems are not new (Herrington et al., 2009; Jonassen, 1999). However, while these perspectives have shaped the discourse on what ID should achieve, empirical research that explicitly examines their implementation through systematic approaches, such as project-based learning, remains limited, indicating a need for further exploration in contemporary contexts.

To address these gaps, the authors reconstructed a last-semester, compulsory, project-based course focusing on courseware evaluation in an undergraduate ID program, aligned with IBSTPI standards. In this research, IBSTPI competencies were also used for program evaluation and student reflection. The study aims to contribute to the literature on competency-based education by examining how a project-based reconstructed capstone course fosters evaluation and implementation competencies in undergraduate ID students.

Specifically, the purpose of this study is twofold: first, to evaluate the degree to which an undergraduate program (hereafter referred to as the program), namely Computer Education and Educational Technology, integrates ID competencies and standards in its courses; second, to explore how a reconstructed project-based course on courseware evaluation enables higher education students to develop competencies regarding the evaluation and implementation domain.

To achieve the research purposes, the following research questions were examined:

How do students in the program perceive their proficiency in ID competencies defined by the IBSTPI survey?

To what extent were IBSTPI competencies addressed by the courses in the program as perceived by students?

Is there a significant difference in students’ perception of evaluation and implementation competencies after they attend the reconstructed course?

Theoretical Framework

This study’s theoretical framework integrates two foundations: adult learning principles operationalized through project-based learning (PBL) and competency-based standards defined by IBSTPI. The combination of these perspectives allows for an ID approach that not only engages learners in authentic, practice-oriented tasks but also measures and develops the competencies necessary for professional success in the field. In ID education, embedding PBL within competency-based frameworks such as the IBSTPI standards enables learners to bridge theory and practice while developing skills across multiple domains, including evaluation and implementation. By aligning course activities with adult learning principles and the authentic, iterative nature of PBL, higher education programs can foster deeper mastery of competencies and prepare graduates for the complex demands of the workplace (Ramírez de Dampierre et al., 2024).

Project-Based Learning (PBL)

In a recent systematic literature review, some of the most often used theories in ID are listed as the connectivity theory, the self-directed learning theory, the cognitive load theory, the interest-driven creator theory, and the adult learning theory (Abuhassna & Alnawajha, 2023). Adult learning theory emphasizes that mature learners are self-directed, bring prior experiences to learning contexts, and are motivated by the relevance and applicability of content to real-world challenges (Knowles et al., 2014). PBL operationalizes these principles by engaging learners in authentic, sustained tasks that mirror professional practice, allowing them to construct knowledge through collaboration and reflection (Bell, 2010; Blumenfeld et al., 1991).

The theoretical framework of this study is grounded in PBL, which posits that learners construct knowledge more effectively when engaged in meaningful and authentic tasks that resemble professional practice (Krajcik & Shin, 2014). Within the scope of this study, PBL has been framed as a powerful pedagogical approach for ID education because this approach engages students in complex, real-world tasks that require collaboration, critical thinking, and iterative problem solving (Bell, 2010; Blumenfeld et al., 1991; Krajcik & Blumenfeld, 2006), all of which mirror the demands of professional ID practice. By centering learning around authentic projects, ID programs can foster deeper understanding and practical competence in areas such as design, development, evaluation, and implementation.

By grounding ID education in adult learning principles and PBL, it becomes essential to identify the specific competencies these approaches aim to develop and assess, particularly through established frameworks like the IBSTPI standards. The following subsection elaborates on the IBSTPI framework and explains why it was selected as the benchmark for evaluating competency development in this study.

IBSTPI

For many years, researchers have been interested in the competencies that constitute the ID profession and how these competencies should be evaluated (Yalçın et al., 2021). Sims and Koszalka (2008) describe competency as the combination of knowledge, skills, and attitudes that allow individuals to successfully carry out the tasks associated with a specific profession, meeting the expected standards of performance. Similarly, Rethans et al. (2002) explain competency-based assessments as tools that evaluate how practitioners perform in simulated professional environments, contrasting these evaluations with their actions in real-world practice.

IBSTPI is at the forefront of establishing and verifying standards for professionals in the domains of learning, development, and performance improvement. The organization focuses on identifying and articulating the competencies required by instructional professionals across various sectors such as industry, academia, and government agencies. IBSTPI holds a distinguished position in the ID field (Byun, 2001). The significance of IBSTPI’s ID competencies lies in their comprehensive coverage of all aspects of ID and development roles, encompassing the segments outlined by the extensively adopted ADDIE model (Byun, 2001). According to DeVaughn and Stefaniak (2020), numerous research efforts have been undertaken to investigate the competencies that are important and utilized by instructional designers, with a high proportion of these studies employing the IBSTPI competency standards as a benchmark.

Initially, IBSTPI crafted a set of ID competencies in 1986, which were revised in 2000 and again in 2012 to expand and deepen their scope, reflecting the field’s growing complexity and breadth. This revision resulted in the competencies being organized into five domains, comprising 22 ID competencies (see Table 1) and accompanied by 105 performance statements, highlighting areas as follows: Professional Foundations (5 competency items), Planning & Analysis (4 competency items), Design & Development (7 competency items), Evaluation & Implementation (3 competency items), and Management (3 competency items). The IBSTPI competencies were empirically validated through the pioneering studies of Atchison (1996), Song (1998), and Richey et al. (2001). Based on qualitative interviews with 15 experienced instructional designers working in higher education, vocational training, business and industry, and government, Atchison (1996) aimed to identify the essential competencies of expert instructional designers. His findings revealed two distinct levels of competencies: those required for novice instructional designers and those specific to expert instructional designers (Campbell, 2007).

IBSTPI Competency Model.

Source. Summarized from Koszalka et al. (2013). Copyright 2012 by IBSTPI, www.ibstpi.org.

Competencies and standards are increasingly being used in higher education to guide program development, evaluate curricula, and gather assessment data on student performance in specific areas (Dragoo & Barrows, 2016; Spector et al., 2006). In addition, competencies enable students to reflect on their perceived strengths and weaknesses (Dabbagh & English, 2015).

Given this alignment, the present study employs the IBSTPI standards both as a framework for program evaluation and as a lens for measuring changes in students’ perceived competencies, providing a direct connection between the theoretical foundation and the methodological approach outlined in the next section.

Method

Research Design

This study employed an integrated mixed-methods design, specifically a blended design, for purposes of complementarity (Greene, 2007). In blended designs, multiple methods are used to examine different aspects of a complex phenomenon, fulfilling the mixed-methods objectives of complementarity or initiation. Within the blended research design adopted in this study, both quantitative and qualitative data collection methods were employed concurrently to provide a comprehensive understanding of ID competency development.

Quantitative data were gathered through pre- and post-course IBSTPI competency surveys, allowing for statistical analysis of students’ self-perceived proficiency across five domains. To enrich and contextualize these findings, qualitative data were collected via open-ended question responses and semi-structured interviews. Grounded in Greene’s (2007) principle of complementarity, these qualitative components offered deeper insights into students’ learning experiences, course contributions, and perceived competency growth. The open-ended survey items captured students’ reflections on where and how they developed specific competencies, while the interviews offered detailed insights into the iterative nature of learning across the program, particularly in connection with the reconstructed course. By triangulating data from surveys, open-ended responses, and interviews, the study aims to provide a multidimensional interpretation of the phenomenon. The integration occurred during the analysis phase, where data from different sources were connected to form a cohesive interpretation.

Participants and Context

The participants in the study were students in the Computer Education and Educational Technology undergraduate program at a public university in Turkey during the Spring semester of the 2023–2024 academic year. The sample consisted of 26 participants, 38.5% of whom were female and 61.5% male, with an age range of 23 to 24 years. The undergraduate program targets the integration of both the domains of education and technology by focusing on the analysis, design, development, implementation, and evaluation of the processes and tools for learning and instruction. Toward this goal, the undergraduate program involves educational software development, programming languages, e-learning, principles and methods in instruction, research methods, and teaching practicum.

The study was conducted during a last-semester compulsory course, CET 472: Courseware Design, Development, and Evaluation (hereafter referred to as the course or CET 472), designed to evaluate educational software in light of human-computer interaction research and contemporary learning theories. Before CET 472, the students had enrolled in several core program courses related to different competency domains. For example, in their fifth semester, they took an ID course (CET 341) focusing on the Planning & Analysis and Design & Development domains. In this course, the students designed an educational courseware scenario based on a chosen topic from the middle school mathematics or science curriculum. Within the scope of this course, they engaged in activities such as analyzing the subject and age group, writing learning objectives, and designing a storyboard for the educational courseware, which was later evaluated in CET 472.

Following CET 341, the students enrolled in a design and development of multimedia course (CET 372) in their sixth semester. They revised their designs and developed screens of the courseware using multimedia design principles that they had learned during the courses in their third and fourth semesters (e.g., CET 215, CET 224). After these learning experiences, they enrolled in CET 472 in their last semester of the undergraduate program. All participants completed the third-year prerequisite courses before attending CET 472. See Figure 1 for the details of the third year of the program.

Third-year curriculum of the undergraduate program.

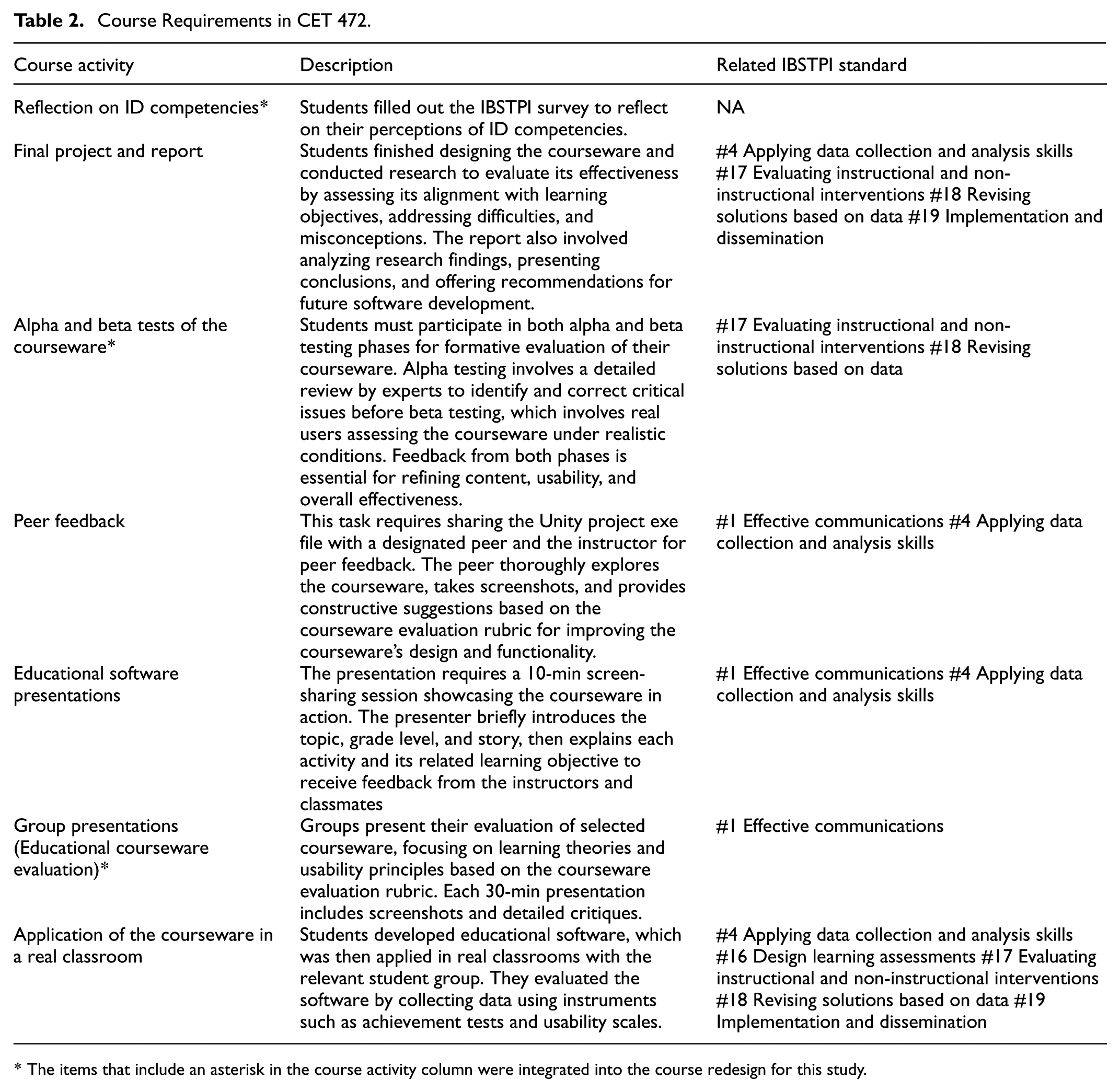

CET 472 (the course) is offered in students’ last semester of the program and mainly focuses on activities related to the Design & Development and Evaluation & Implementation domains of the IBSTPI competencies. The authors reconstructed the course for better alignment with the IBSTPI competencies. The items in Table 2 that include an asterisk in the course activity column were integrated into the course redesign for this study. During the study, students participated in different course activities and submitted their work through the University’s Learning Management System (e.g., Moodle). Through project-based activities integrated in the course, such as formative evaluation, peer feedback, and classroom implementation, students engage in iterative cycles of design and evaluation that reflect real-world ID workflows. Thus, it was hypothesized that there would be a significant increase in students’ scores in the Evaluation & Implementation domain after they attended the course. See Table 2 for the details of the course requirements.

Course Requirements in CET 472.

The items that include an asterisk in the course activity column were integrated into the course redesign for this study.

Each course activity was explicitly mapped to the IBSTPI ID competencies, ensuring that project-based tasks directly supported the development of targeted professional skills. In line with the gaps identified in the literature, the authors noticed the need for more authentic learning experiences for evaluation-related practices in the program. For example, an assignment on implementing alpha and beta tests was integrated into the course design to provide opportunities for conducting formative evaluation. Alpha testing involved a detailed review by experts to identify and correct critical issues before beta testing, in which two real users (e.g., fifth-grade students) assessed the courseware under realistic conditions. Feedback from both phases was essential for refining the content, usability, and overall effectiveness of the educational software.

This deliberate alignment reinforced the Evaluation & Implementation domain while also integrating skills from other domains such as Design & Development. Integrating authentic, project-based tasks within a competency-based framework allowed the course to provide a supportive environment where learners could connect theory and practice to strengthen their competencies.

Data Collection Tools and Procedure

For this study, the quantitative data were collected using the IBSTPI survey at the beginning and end of the course. Participants were asked to rate their level of competency in each domain of the IBSTPI. The IBSTPI competency set can be used as a self-report instrument to measure ID competencies, providing a robust method for assessing and improving skills (Yalçın et al., 2021). A five-point Likert-type scale was used to measure participants’ perceived competence: fully competent (5), quite competent (4), somewhat competent (3), a little competent (2), and noncompetent (1). In the study, the original version of the IBSTPI competency set was used, with no changes made to the items or the language. The survey was distributed online via Google Forms. The survey itself was untimed, and participants had 1 week to complete it.

The qualitative data were collected through open-ended questions and semi-structured interviews. To explore how students perceived the contribution of their course-related experiences to their ID competencies, participants’ responses to the open-ended question “Why this rating and where did you develop this level of competence?” were collected for each competency item (22 items in total) in the post-IBSTPI survey.

Additionally, the authors designed a semi-structured interview protocol (see Appendix A) to explore participants’ overall learning experiences and self-assessed competencies across five domains. For each domain, participants were asked both to evaluate their level of competence and to identify which course contributed most to their development in that area, including descriptions of relevant learning activities. To enhance its clarity and relevance, the protocol was reviewed and revised in consultation with a researcher experienced in qualitative methods.

At the beginning of the semester, the purpose of the study was announced to the students, and the required permissions were obtained to use their course GPAs and answers to the IBSTPI survey as the data for the study. Participants gave written consent to participate at the beginning of the study. The survey data of the students who did not want to participate in the study were removed from the dataset.

Shortly after the course activities and the post-survey were completed, the second author emailed six selected participants, inviting them to participate in the interviews. To gain a deeper understanding, participants were selected for interviews based on their academic achievement levels, categorized as low, medium, and high according to their GPAs. Three participants agreed to take part in the interview voluntarily. Semi-structured interviews were then conducted using a web-conferencing tool (e.g., Zoom), with each interview lasting approximately 30 to 45 minutes. Additional written informed consent forms for the interview were obtained from volunteer participants.

Data Analysis

Before the data analysis, the data underwent a verification process to ensure completeness for all measurements: pre- and post-IBSTPI survey scores and answers to the open-ended questions in the post-survey. Participants with any missing data points were excluded, resulting in the removal of six participants. All statistical analyses were performed using IBM SPSS software (Version 29).

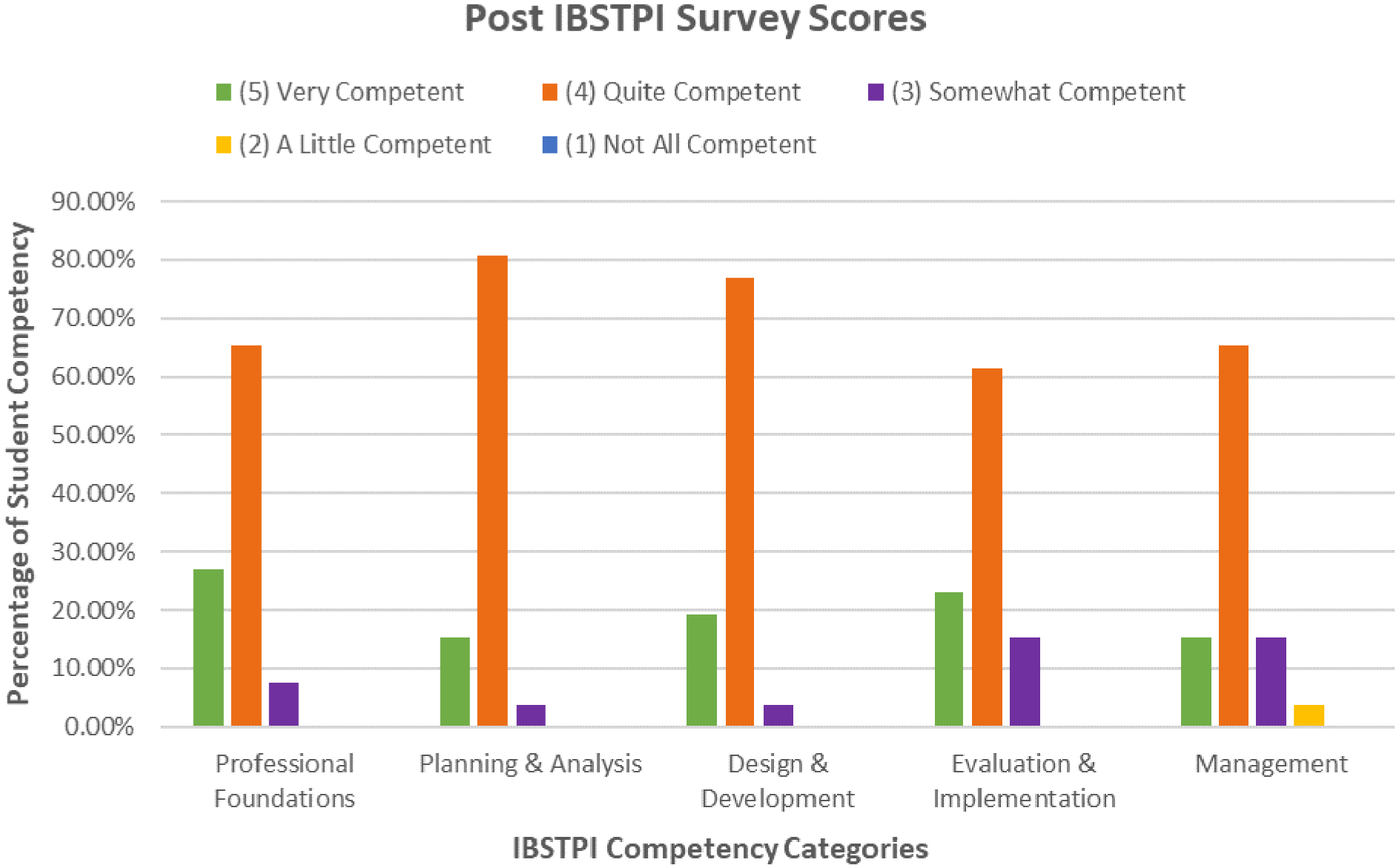

For the first and second research questions, frequencies were calculated for every sub-competency within each domain. For every domain, percentages were computed based on the total frequencies. To address the first research question, the average competency level for each domain was calculated for every participant. The distribution of these averages was analyzed, and percentile ranks were computed to provide insights into the overall competency levels among the participants. These data were organized into a graph (Figure 2) using Microsoft Excel (Version 2016) for clarity and further analysis.
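The averaging and distribution steps described above can be illustrated with a minimal sketch. It is not the authors' Excel workbook: the ratings are hypothetical, and the half-up rounding used to map an average back onto the five-point scale is an assumption, since the binning rule is not stated in the text.

```python
from collections import Counter
from statistics import mean

# Labels of the five-point scale as described in the data collection section.
LABELS = {
    5: "Fully competent",
    4: "Quite competent",
    3: "Somewhat competent",
    2: "A little competent",
    1: "Noncompetent",
}


def to_category(average):
    """Map a domain average onto the 1-5 scale.

    Assumption: half-up rounding (4.5 -> 5); the study does not state its rule.
    """
    return min(5, max(1, int(average + 0.5)))


def category_percentages(averages):
    """Return the percentage of participants falling into each category."""
    counts = Counter(to_category(a) for a in averages)
    n = len(averages)
    return {LABELS[k]: round(100 * counts[k] / n, 2)
            for k in sorted(counts, reverse=True)}


# Hypothetical ratings of four participants on the three Management items.
management_ratings = [[5, 5, 4], [4, 4, 4], [3, 4, 3], [2, 2, 3]]
averages = [mean(items) for items in management_ratings]
result = category_percentages(averages)
print(result)
```

Repeating the same call for each of the five domains would give the kind of per-domain distribution that Figure 2 summarizes.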

Percentage of student competency ratings by IBSTPI competency categories.

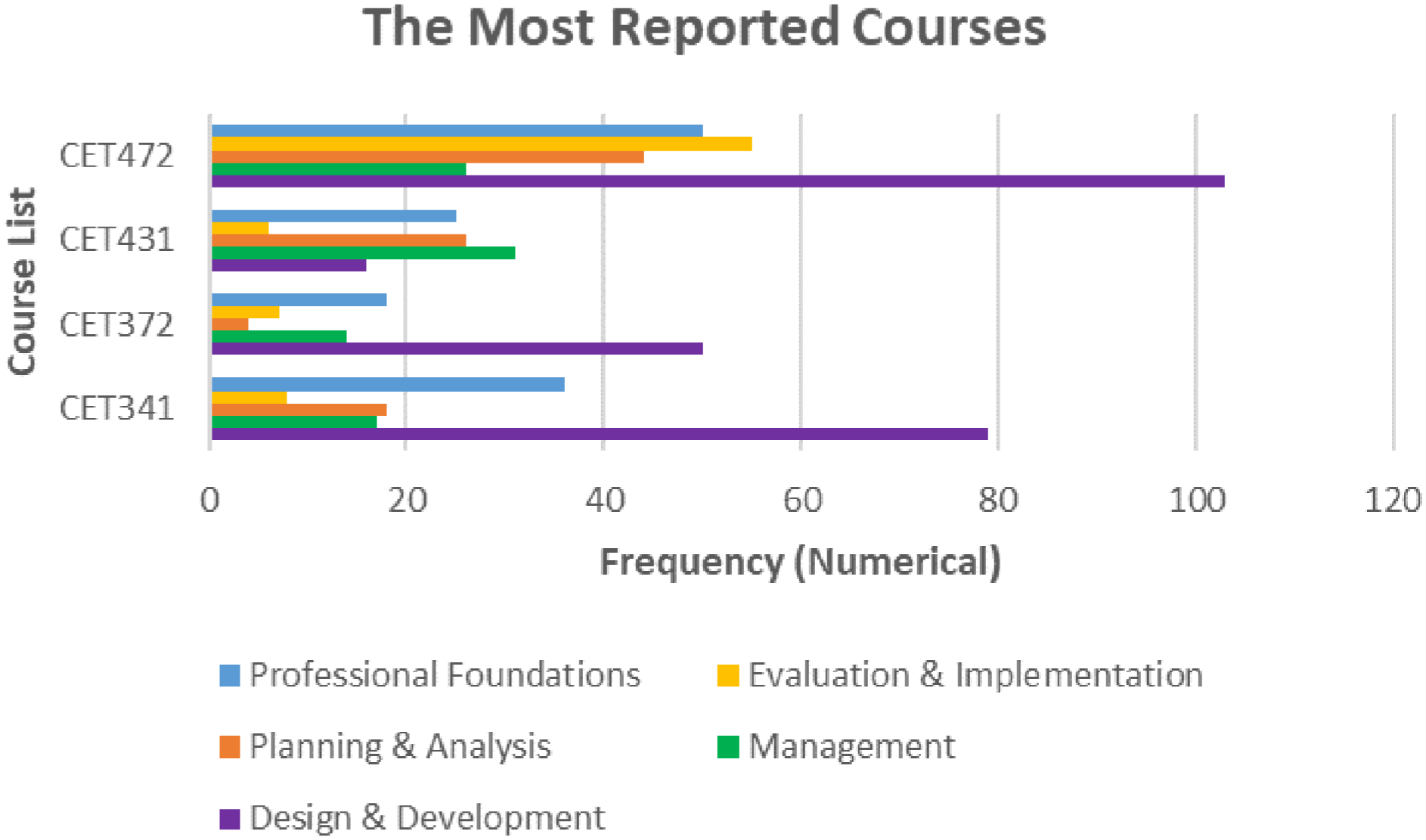

To address the second research question, the survey included open-ended questions (22 in total) to indicate the courses in which the competencies regarding each domain were met. Participants were instructed to write relevant course names or any related experience briefly. Responses were converted into frequency counts in Microsoft Excel (Version 2016; see Figure 3). Analysis of participants’ responses to these open-ended questions revealed that job experiences significantly facilitated competency development across domains. Consequently, the category named “Job” emerged from the qualitative analysis and was included in the frequency count for each domain.
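The conversion of open-ended responses into frequency counts can be sketched as follows. This is only an illustration of the tallying idea, not the procedure the authors ran in Excel: the responses are invented, and the keyword rule used to detect the "Job" category (`JOB_PATTERN`) is a hypothetical simplification of what was a qualitative coding decision.

```python
import re
from collections import Counter

# Matches course codes written as "CET472" or "CET 472" (case-insensitive).
COURSE_PATTERN = re.compile(r"\bCET\s?(\d{3})\b", re.IGNORECASE)
# Hypothetical keyword rule standing in for the qualitatively derived "Job" category.
JOB_PATTERN = re.compile(r"\b(job|work)\b", re.IGNORECASE)


def tally_mentions(responses):
    """Count how many responses mention each course or a job experience."""
    tally = Counter()
    for text in responses:
        # Normalize every code to the "CET 472" form; count once per response.
        courses = {f"CET {code}" for code in COURSE_PATTERN.findall(text)}
        tally.update(courses)
        if JOB_PATTERN.search(text):
            tally["Job"] += 1
    return tally


# Invented responses for a single competency item.
responses = [
    "I learned this in CET472 and CET 341.",
    "Mostly from my part-time job.",
    "cet 472 project",
    "CET 341",
]
tally = tally_mentions(responses)
for source, count in tally.most_common():
    print(source, count)
```

Summing such tallies over the competency items of a domain would yield per-domain frequencies of the kind plotted in Figure 3.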

Frequencies of the most reported courses.

To further explore participants’ perceptions of how IBSTPI competencies are addressed by the courses in the ID program, the audio recordings of the online interviews were transcribed verbatim for analysis. An open coding approach was employed to identify initial codes and categories within the data (Saldaña, 2021). First, three authors independently coded one interview; they then met to review and reconcile their codes, enhancing intercoder reliability (Creswell & Poth, 2016). Subsequently, the first author conducted open coding on all three interviews, yielding 2 categories and 11 codes (see Table 3). The second and third authors reviewed and confirmed the coding framework.

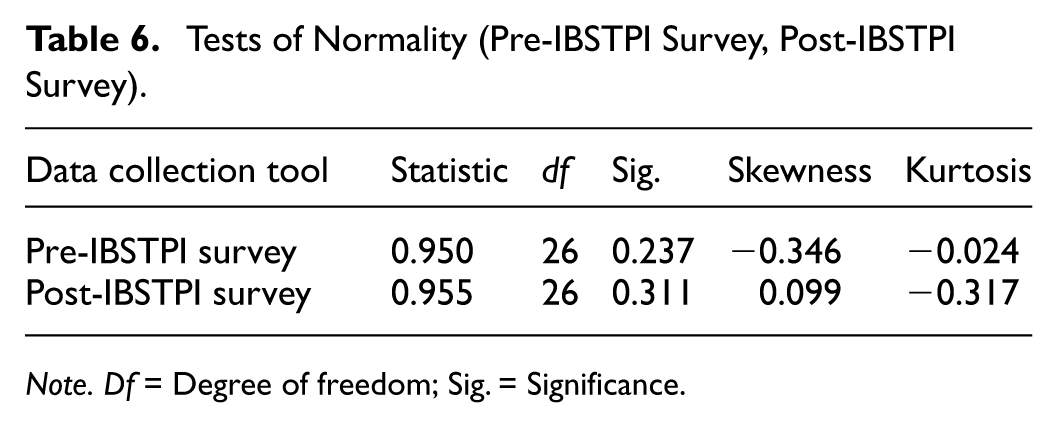

Categories and Codes Derived From the Interviews.

To address the third research question, several statistical tests were conducted. Initially, the data were assessed for normality using the Shapiro-Wilk test together with skewness and kurtosis values, which were expected to fall within ±1 (Hair et al., 2010). A paired-samples test was then employed to compare the participants’ pre-survey and post-survey scores for each domain. A significance level of p < .05 was accepted. Finally, the researchers triangulated the results of quantitative (IBSTPI survey) and qualitative (answers to open-ended questions in the survey and interviews) data for each research question.
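For readers less familiar with this procedure, the sketch below shows the arithmetic behind the skewness and kurtosis screening and a paired comparison. It rests on several assumptions: the scores are hypothetical, the paired-samples test is taken to be a paired t-test, and the skewness and kurtosis use plain moment-based formulas, which differ slightly from the bias-adjusted values SPSS reports. The Shapiro-Wilk test and the p-value lookup are omitted because they require a statistics package.

```python
from math import sqrt
from statistics import mean, stdev


def _central_moment(values, order):
    """Population central moment of the given order."""
    m = mean(values)
    return sum((v - m) ** order for v in values) / len(values)


def skewness(values):
    """Moment-based skewness (not the bias-adjusted SPSS variant)."""
    return _central_moment(values, 3) / _central_moment(values, 2) ** 1.5


def excess_kurtosis(values):
    """Moment-based excess kurtosis (0 for a normal distribution)."""
    return _central_moment(values, 4) / _central_moment(values, 2) ** 2 - 3


def paired_t(pre, post):
    """Return the paired t statistic and its degrees of freedom."""
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    t = mean(diffs) / (stdev(diffs) / sqrt(n))
    return t, n - 1


# Hypothetical domain averages for five participants before and after a course.
pre_scores = [3.0, 3.5, 4.0, 3.0, 3.5]
post_scores = [4.0, 4.0, 4.5, 3.5, 4.5]

t_value, df = paired_t(pre_scores, post_scores)
print(f"t({df}) = {t_value:.2f}")
print(f"skewness of pre scores = {skewness(pre_scores):.2f}")
print(f"excess kurtosis of pre scores = {excess_kurtosis(pre_scores):.2f}")
```

The resulting t value would then be compared against the t distribution with the given degrees of freedom at the chosen significance level.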

Trustworthiness

To ensure the trustworthiness of the study, we adopted Lincoln and Guba’s (1985) criteria of credibility, transferability, dependability, and confirmability. Credibility was supported through prolonged engagement with participants and methodological transparency. Students were informed that participation in the study would not affect their grades, and they completed the survey as part of a course-related reflection activity. During interviews, participants were encouraged to respond honestly. Participants’ anonymity was protected by assigning pseudonyms before the data analysis process. To reduce researcher bias, all three authors collaboratively coded the data and engaged in peer debriefing throughout the analysis process. Transferability was addressed by providing thick descriptions of the research context and participants, allowing readers to assess the applicability of findings to other settings. Dependability was ensured by maintaining a detailed audit trail of research decisions, coding procedures, and data collection protocols. The collaborative coding process also contributed to consistency in interpretation.

In this study, the researchers held dual roles as both instructors and researchers, which shaped their engagement with the participants and the instructional context. The first author served as the primary instructor for CET 472 and has taught it for 6 semesters. With extensive experience in ID and educational technology, the first author was responsible for designing and delivering the course content, including the integration of authentic evaluation activities such as alpha and beta testing. The second author acted as the teaching assistant and was actively involved in course implementation, student support, and data collection and analysis. The second author also graded course assignments and final projects, which provided close familiarity with students’ work and progress. While this dual involvement offered valuable insights into students’ learning processes, both researchers made conscious efforts to maintain neutrality during instruction, data collection, and analysis. To minimize potential bias, the authors adhered strictly to participants’ own words during qualitative analysis and reporting. Consequently, the researchers critically examined their positionality, acknowledging their dual roles as instructors and investigators, and took steps to minimize their influence on participants’ responses.

Findings

Research Question 1. Students’ Perceived Competency Levels

The post-IBSTPI survey results showed that most students participating in the study (N = 26) rated themselves as competent across all domains. In the domain of Professional Foundations, 26.92% of students rated themselves as “Very Competent,” while 65.38% felt “Quite Competent,” and a smaller percentage (7.69%) perceived themselves as “Somewhat Competent.” For Planning & Analysis, 15.38% of students identified as “Very Competent,” with a large majority (80.77%) rating themselves as “Quite Competent” and 3.85% as “Somewhat Competent.” In the Design & Development domain, 19.23% of students considered themselves “Very Competent,” while 76.92% felt “Quite Competent,” and 3.85% rated themselves as “Somewhat Competent.” Regarding Evaluation & Implementation, 23.08% of students perceived themselves as “Very Competent,” 61.54% as “Quite Competent,” and 15.38% as “Somewhat Competent.” Lastly, in the Management domain, 15.38% of students rated themselves as “Very Competent,” 65.38% as “Quite Competent,” 15.38% as “Somewhat Competent,” and 3.85% as “A Little Competent.” These results suggest that students generally rate themselves as quite proficient in most ID competencies, with a notable percentage perceiving themselves as very competent in key areas. Figure 2 presents the distribution of these averages and percentile ranks to provide insights into the overall competency levels among the participants.

Analysis of self-reported competency levels, based on the combined percentages of “Very Competent” (5) and “Quite Competent” (4) ratings, revealed that participants perceived the highest competence in the Planning & Analysis and Design & Development domains (96.15% each). Professional Foundations followed at 92.30%, and Evaluation & Implementation at 84.62%. The Management domain received the lowest combined competency rating at 80.76%.
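The combined ratings reported above can be reproduced with a short calculation. The following is a minimal sketch, assuming only the rounded percentages given in the text as input; the variable and function names are illustrative and are not part of the study's analysis procedure.

```python
# Reported post-survey percentages per domain, taken from the text.
# Order: Very Competent, Quite Competent, Somewhat Competent, A Little Competent.
ratings = {
    "Professional Foundations": [26.92, 65.38, 7.69, 0.0],
    "Planning & Analysis": [15.38, 80.77, 3.85, 0.0],
    "Design & Development": [19.23, 76.92, 3.85, 0.0],
    "Evaluation & Implementation": [23.08, 61.54, 15.38, 0.0],
    "Management": [15.38, 65.38, 15.38, 3.85],
}


def top_two_share(percentages):
    """Sum the 'Very Competent' and 'Quite Competent' shares (illustrative helper)."""
    return round(percentages[0] + percentages[1], 2)


combined = {domain: top_two_share(p) for domain, p in ratings.items()}

# Rank domains from highest to lowest combined self-rated competence.
ranked = sorted(combined.items(), key=lambda item: item[1], reverse=True)

for domain, share in ranked:
    print(f"{domain}: {share:.2f}%")
```

Because the inputs are already rounded to two decimals, a sum computed this way can differ by 0.01 from a value computed on the raw response counts.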

The qualitative analyses of the semi-structured interviews with three participants supported the quantitative results. All interview participants perceived themselves as competent in all aspects of IBSTPI domains. However, two participants reported having fewer opportunities in the Management domain than in other domains and rated themselves as ‘somewhat competent’. These qualitative findings will be further explored in the following sub-section.

Research Question 2. Alignment Between the Competency Levels and Courses

The extent to which IBSTPI domains were addressed by the courses in the undergraduate program, as perceived by students, varied across domains. In the domain of Professional Foundations, CET 472 was mentioned most frequently, with 50 mentions, followed by CET 341, with 36 mentions, indicating that both courses significantly addressed professional foundations. For Planning & Analysis, CET 472 led with 44 mentions, and CET 431 was the second most mentioned course with 26 mentions, demonstrating a strong emphasis on planning and analysis in both courses. In the domain of Design & Development, CET 472 was again the most cited course, receiving 103 mentions, while CET 341 followed with 79 mentions, showing that both courses had a substantial focus on design and development. In Evaluation & Implementation, CET 472 remained the most recognized course, with 55 mentions, followed by CET 462 with 10 mentions, highlighting their contributions to students’ competencies in evaluation and implementation. Lastly, in the domain of Management, CET 431 was the most frequently mentioned course, with 31 mentions, followed closely by CET 472 with 26 mentions, underscoring their role in addressing management competencies. Figure 3 illustrates that CET 472 was by far the most frequently mentioned course across domains, highlighting its central role in students’ competency development and supporting its identification as a key integrative experience.

One interesting finding was that CET 472 was the most reported course in all domains except Management. These results may be attributed to the fact that while CET 472 primarily emphasizes Evaluation & Implementation, it is intentionally designed to highlight the continuous alignment between evaluation and other ID domains. In the preceding CET 341 course, students employed the ADDIE model for their instructional designs. Since the ADDIE model consists of five interconnected and iterative stages, during the CET 472 course, students working on software development in the Evaluation & Implementation stages also engaged in activities related to Analysis, Design, and Development, reflecting the model’s cyclical nature.
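The pattern described above can be checked with a simple tally. The sketch below assumes only the two most frequently mentioned courses per domain, as reported in the text; counts for other courses are not included, and the variable names are illustrative.

```python
# Mention counts for the two most frequently cited courses in each domain,
# as reported in the text (other courses are omitted here).
mentions = {
    "Professional Foundations": {"CET 472": 50, "CET 341": 36},
    "Planning & Analysis": {"CET 472": 44, "CET 431": 26},
    "Design & Development": {"CET 472": 103, "CET 341": 79},
    "Evaluation & Implementation": {"CET 472": 55, "CET 462": 10},
    "Management": {"CET 431": 31, "CET 472": 26},
}

# Most mentioned course in each domain.
top_course = {domain: max(counts, key=counts.get) for domain, counts in mentions.items()}

# Domains in which CET 472 was not the most mentioned course.
domains_not_led_by_472 = [d for d, course in top_course.items() if course != "CET 472"]

# Total CET 472 mentions across the five domains.
cet472_total = sum(counts.get("CET 472", 0) for counts in mentions.values())

print(top_course)
print(domains_not_led_by_472)
print(cet472_total)
```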

The findings from the semi-structured interviews with three participants also highlighted the value of CET 472 in their educational journey, particularly because it allowed them to connect prior knowledge from other core courses. Participants emphasized the iterative nature of their learning experiences, where course activities and assignments required continuous revision, fostering deeper understanding and skill refinement.

One key category that emerged related to specific course experiences was the connection between prior learning across courses, from foundational courses like CET 341 to CET 472. For example, Victoria described the learning experience as “constantly revising,” indicating that the iterative process of designing, revising, and receiving feedback was crucial to competency development. The following quotation from the interview sheds light on this experience:

In CET 341, since aspects like user interactions and multimedia principles were important in the design process, we were constantly receiving feedback accordingly. We both learned these principles and designed based on them. So, in that sense, I do remember receiving a lot of design-related feedback from my instructor. I clearly remember that … (Interview with Victoria)

Victoria also emphasized how CET 472 helped them develop their skills, especially in evaluation and implementation, through activities such as alpha and beta testing. These iterative steps allowed students to refine their instructional designs based on real feedback, bridging theory and practice. Notably, she shared this example in direct response to a question about the Evaluation & Implementation domain competency: “For example, do you think that you can revise instructional and non-instructional solutions based on data?” The fact that this specific learning experience immediately came to mind suggests that the course design effectively supported the practical application of this competency. The following excerpt from the interview illustrates this experience: “Yes, in CET 472, during the alpha and beta testing, for instance, one of the students who tried my material was able to complete it in about 15 minutes. That’s why I decided to make one of the activities more difficult” (Interview with Victoria).

Participants also noted that CET 472 built on prior courses by reinforcing professional foundations and integrating planning and analysis competencies. As Victoria noted, the sequential structure of the courses allowed them to apply knowledge from earlier lessons while progressively enhancing competencies. This demonstrates the role of CET 472 in synthesizing and applying skills from earlier courses, making it a cornerstone of their learning experience.

The analysis of Matt’s experience, particularly in the context of CET 472, revealed key insights into the intersection of theory and practice in students’ academic development. One notable aspect of CET 472 is its iterative learning process, which involves continuous cycles of project revision informed by instructor and peer feedback, as all interview participants noted. This iterative approach fostered a deeper engagement with both the theoretical and practical dimensions of the subject matter.

In terms of competencies, Matt reported considerable growth in design, development, evaluation, and implementation skills closely associated with the iterative, feedback-driven structure of the course. A particularly significant element of CET 472, as Matt described, was its focus on practical application. Matt was able to directly observe the impact of the developed educational courseware on student learning. This exposure to real-world implementation was instrumental in academic and professional growth, facilitating the transition from foundational courses such as CET 341 and CET 372 to more integrative and advanced learning experiences. The experience also reflects the Evaluation & Implementation competency of revising instructional and non-instructional solutions based on data, as the learning activity required analyzing outcomes and making adjustments accordingly. This process aligns with the iterative cycles central to PBL, where feedback from authentic contexts informs continuous refinement of solutions.

Actually being able to apply something that was completed with real students and then seeing the results, such as “this worked, the scores improved,” was a memorable moment. It was both satisfying and validating, creating a sense that all those years of study had led to producing something that enabled a class of students to learn. The results may not have been perfect, but it was still possible to observe tangible progress. (Interview with Matt)

Matt’s responses regarding the Design & Development question highlighted how multiple courses across the program, including CET 341, CET 472, practicum courses (CET 451 and 462), and others, collectively contributed to competency development. The following excerpt illustrates this reflection:

Maybe again a mix of 341 and 472…maybe the practicum courses…all of them started to come to mind—maybe even 282. In that one, a lesson was planned. Certain things had to be explained and shown, and the level of the students at the school was known. Interventions could be adjusted accordingly, and topics that would interest them could be chosen. So, they actually helped a bit, too. (Interview with Matt)

During the interview, Matt also noted areas where they feel less confident, particularly in management and planning/analysis, suggesting that additional practice and real-world exposure would be beneficial in these domains. For example, one interview question explored participants’ perceived readiness to perform specific competencies in the Planning & Analysis domain, such as “Conduct a needs assessment in order to recommend appropriate design solutions and strategies” and “Identify and describe target population and environmental characteristics.” In response, Matt reflected on how prior project-based experiences had provided opportunities to design and receive feedback, yet also revealed limitations in applying these skills in fully authentic contexts. The following excerpt from the interview illustrates this perspective:

As I mentioned before, we produced and produced things on the other side, received feedback. But when it came to seeing students’ needs and analyzing them, I didn’t have much real practice in thinking about what a second—or maybe even a third—party might look for when creating something for them. So yes, in theory, I know I should do these things. But when I want to put it into practice, I’m not sure if I can get the expected results—or rather, whether I can manage the process correctly. (Interview with Matt)

Overall, qualitative findings indicated that CET 472 played a pivotal role in participants’ academic journey. These findings suggested that the project-based, iterative nature of the course design enabled learners to integrate prior experiences into complex, authentic tasks, reflecting the principles of adult learning theory and supporting the transfer of competencies across contexts.

When we explored the other responses to the open-ended question on the post-survey, several core program courses stood out in different domains. The most interesting finding was that teaching practicum courses, namely CET 451 and CET 462, were leading courses in the Design & Development and Evaluation & Implementation domains. In the Design & Development domain, CET 462 ranked fourth in frequency of citation, with 14 mentions. Figure 4 shows additional courses and experiences that participants linked to competency growth, highlighting the complementary role of diverse courses alongside CET 472.

Frequencies of the other responses to the open-ended question.

In Evaluation & Implementation, CET 462 again stood out with 10 mentions, and CET 451 followed with 8 mentions. An analysis of one of the interviews provided insight related to this finding. Matt indicated that the teaching practicum courses were critical learning opportunities, offering hands-on experience in real classroom settings where theoretical knowledge could be applied. These internships created opportunities to test skills and knowledge with actual student groups, contributing significantly to professional development. As Matt explained:

Practicum courses can be mentioned. In those, as a faculty of education student, observations were made about the situation of the students, what they were doing, what needed to be taught, and what had been learned about the topic to be taught. Theory tells one thing, but reality is another—there’s that side of it as well. (Interview with Matt)

The qualitative findings also indicated that participants’ jobs significantly contributed to developing their Professional Foundations, Planning & Analysis, and Management competencies. For these domains, Table 4 shows the distribution of responses for the most frequently mentioned course, the second most frequently mentioned course, and job experiences.

Distribution of Responses Regarding the Competency and Course Alignments.

Analysis of one of the interviews provided insight into the influence of job-related experiences on ID competency development. Barry reported that working in various roles since the beginning of university had significantly contributed to competencies in the Management domain. Although management-related skills were not extensively applied during course activities, the highest sense of competence in this domain was attributed to responsibilities handled in the workplace. While recognizing the foundational value of university education, particularly in ID, Barry considered job experience to be more impactful for professional growth, stating: “Working develops a person about ten times more than school does in terms of professional competence. Without working and gaining experience, it’s not possible to improve in this regard” (Interview with Barry).

Despite this emphasis on job experience, Barry acknowledged that educational background had influenced job-related practices. For example, Barry mentioned applying multimedia design principles, learned in earlier courses as follows: “When preparing a presentation or a weekly newsletter, attention was paid to which colors to use or which fonts were appropriate—things that had been emphasized many times in class. That means they stayed in mind and added something professionally” (Interview with Barry).

This illustrates that while job experiences may play a primary role in shaping professional competencies, theoretical knowledge acquired through coursework can complement and reinforce professional practices in authentic contexts. In relation to the IBSTPI framework, these experiences demonstrate how competencies in the Management domain can be cultivated through responsibilities outside the academic environment.

Research Question 3. Differences Between Pre-Survey and Post-Survey in Evaluation

Table 5 displays the descriptive statistics of the participants’ pre-survey and post-survey results in the Evaluation and Implementation domain. The Shapiro-Wilk test was used to determine whether the pre-survey and post-survey scores followed a normal distribution. Additionally, the Skewness and Kurtosis values were checked to see if they fell within acceptable limits. The findings revealed that the data for both the pre-survey and post-survey scores (p > .05) followed a normal distribution. The Skewness and Kurtosis values were also within acceptable limits, as presented in Table 6.
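The skewness and kurtosis screening described above can be illustrated as follows. This is a minimal sketch using moment-based estimators; statistical packages typically apply small-sample corrections, so their values can differ slightly. The ±2 cutoff and the example scores are assumptions for illustration only, as the acceptable limits and raw data are not stated here. The Shapiro-Wilk test itself is not reimplemented; in practice it would typically be run with a statistics library such as SciPy (`scipy.stats.shapiro`).

```python
import statistics


def skewness_and_kurtosis(scores):
    """Return moment-based skewness and excess kurtosis for a list of scores."""
    n = len(scores)
    mean = statistics.fmean(scores)
    # Central moments of order 2, 3, and 4 (population form, divided by n).
    m2 = sum((x - mean) ** 2 for x in scores) / n
    m3 = sum((x - mean) ** 3 for x in scores) / n
    m4 = sum((x - mean) ** 4 for x in scores) / n
    skew = m3 / m2 ** 1.5
    excess_kurtosis = m4 / m2 ** 2 - 3
    return skew, excess_kurtosis


def within_limits(scores, limit=2.0):
    """Check both statistics against a symmetric cutoff (hypothetical default of 2)."""
    skew, kurt = skewness_and_kurtosis(scores)
    return abs(skew) <= limit and abs(kurt) <= limit


# Hypothetical domain scores, not data from the study.
example_scores = [1, 2, 3, 4, 5]
skew, kurt = skewness_and_kurtosis(example_scores)
print(skew, kurt, within_limits(example_scores))
```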

Descriptive Statistics of Pre- and Post-IBSTPI Survey.

Note. N = Number; SD = Standard Deviation.

Tests of Normality (Pre-IBSTPI Survey, Post-IBSTPI Survey).

Note. Df = Degree of freedom; Sig. = Significance.

A paired-samples t-test was conducted to compare the means of the participants’ pre-survey and post-survey scores in the Evaluation & Implementation domain. The test results showed a statistically significant difference between the pre-survey and post-survey scores for participants who attended the course (t(25) = 5.413, p < .001). The effect size for this analysis (d = 1.0) exceeded Cohen’s (1988) convention for a large effect. Detailed test results are provided in Table 7.
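The logic of the paired comparison can be sketched as below. This is an illustration under stated assumptions rather than the study's actual procedure: the effect size is computed as the mean difference divided by the standard deviation of the differences (often labeled d_z), which may not be the variant used in the analysis, and the example scores are hypothetical.

```python
import math
import statistics


def paired_t_and_effect_size(pre, post):
    """Return (t, degrees of freedom, d_z) for paired pre/post scores."""
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample SD of the paired differences
    t = mean_diff / (sd_diff / math.sqrt(n))
    d_z = mean_diff / sd_diff
    return t, n - 1, d_z


def d_z_from_t(t, n):
    """Recover d_z from a reported paired t statistic and sample size."""
    return t / math.sqrt(n)


# Hypothetical scores for five students, not data from the study.
pre_scores = [3, 3, 4, 2, 3]
post_scores = [4, 4, 4, 3, 5]
t, df, d_z = paired_t_and_effect_size(pre_scores, post_scores)
print(t, df, d_z)

# Under the d_z assumption, the reported t(25) = 5.413 with N = 26 implies:
print(d_z_from_t(5.413, 26))
```

Under this formulation, t and d_z differ only by a factor of the square root of the sample size, so either can be recovered from the other when N is known.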

Statistical Differences Between Pre- and Post-IBSTPI Survey Scores in the Evaluation & Implementation Domain.

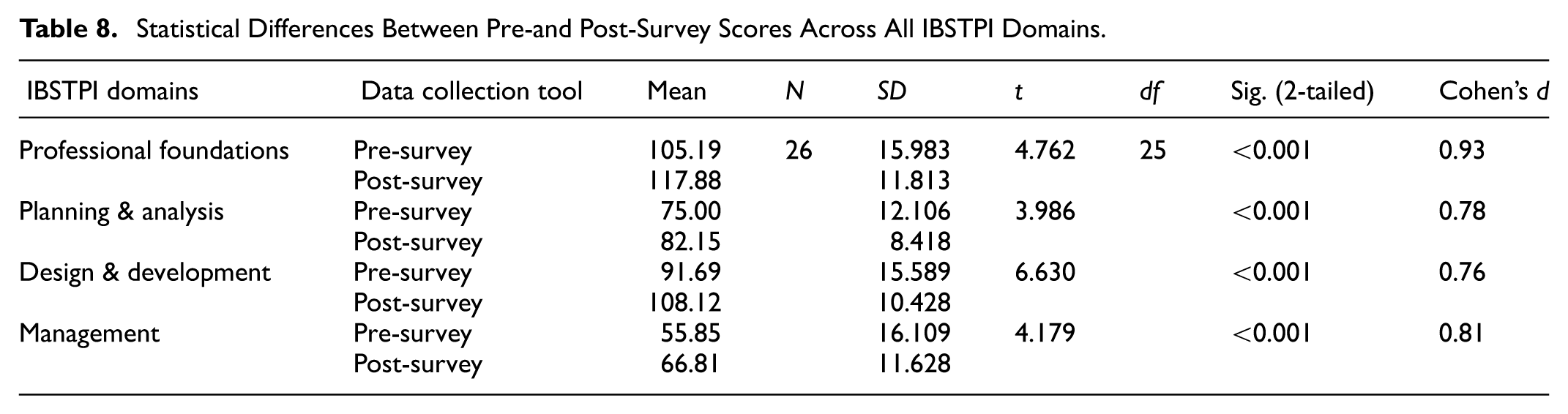

Based on our initial assumptions, we anticipated finding differences only in the Evaluation & Implementation domain, as this was the primary focus of the CET 472 course. However, as Research Question 2 revealed, CET 472 emerged as the most mentioned course in nearly all IBSTPI domains. Consequently, we expanded our analysis to include other domains, examining pre- and post-survey results in each. We first assessed the normality of the data by reviewing the Skewness and Kurtosis values for both the pre- and post-survey scores across all domains, which confirmed that the data were normally distributed. Following this, paired-samples t-tests were conducted for each domain.

The results demonstrated statistically significant differences between the pre- and post-survey scores across all domains, with notable improvements in post-survey scores. The effect sizes for the Professional Foundations domain (d = 0.93) and the Management domain (d = 0.81) exceeded Cohen’s (1988) convention for a large effect, whereas those for the Planning & Analysis domain (d = 0.78) and the Design & Development domain (d = 0.76) exceeded Cohen’s (1988) convention for a medium effect. Detailed test results are provided in Table 8.
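The effect size interpretation above follows Cohen's conventional benchmarks of 0.2 (small), 0.5 (medium), and 0.8 (large). A minimal sketch of that classification, applied to the d values reported in the text, is given below; the function name and the "negligible" label are illustrative choices.

```python
def classify_cohens_d(d):
    """Label an effect size using Cohen's conventional benchmarks."""
    magnitude = abs(d)
    if magnitude >= 0.8:
        return "large"
    if magnitude >= 0.5:
        return "medium"
    if magnitude >= 0.2:
        return "small"
    return "negligible"  # illustrative label for values below 0.2


# Effect sizes as reported in the text for each IBSTPI domain.
reported_d = {
    "Professional Foundations": 0.93,
    "Planning & Analysis": 0.78,
    "Design & Development": 0.76,
    "Evaluation & Implementation": 1.0,
    "Management": 0.81,
}

labels = {domain: classify_cohens_d(d) for domain, d in reported_d.items()}

for domain, label in labels.items():
    print(f"{domain}: d = {reported_d[domain]:.2f} ({label})")
```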

Statistical Differences Between Pre- and Post-Survey Scores Across All IBSTPI Domains.

These results may be attributed to the fact that while the CET 472 course primarily emphasizes Evaluation and Implementation, it is intentionally designed to highlight the continuous alignment between evaluation and other ID domains. In the preceding CET 341 course, students employed the ADDIE model to design interactive learning environments. As Maddison and Kumaran (2017) note, the ADDIE model is not linear but consists of five interconnected and iterative stages. Consequently, during the CET 472 course, students working on courseware evaluation also engaged in activities related to analysis, design, and development, reflecting the cyclical nature of the model.

Discussion and Conclusions

This study’s findings provide critical insights into the undergraduate students’ competencies within the ID field, evaluated through the framework of the IBSTPI competencies. Beyond confirming general proficiency across domains, the results also revealed unexpected patterns and gaps, particularly regarding the extensive influence of CET 472. A striking and somewhat unexpected finding was that CET 472, while reconstructed to target Evaluation & Implementation, emerged as the most frequently mentioned course across nearly all domains. This suggests that its project-based, iterative design had integrative effects, enabling students to consolidate prior knowledge in analysis, design, and professional foundations as well. Such results reflect adult learning principles (Knowles et al., 2014), in which learners draw upon accumulated experiences in meaningful contexts, and align with project-based learning research showing that authentic, complex tasks foster cross-cutting competencies (Bell, 2010; Blumenfeld et al., 1991; Krajcik & Blumenfeld, 2006).

The IBSTPI post-survey results indicate that students generally perceive themselves as competent, particularly in Planning & Analysis and Design & Development. This aligns with broader literature emphasizing the importance of these competencies in ID education (Martin & Ritzhaupt, 2021). ID models like ADDIE underscore the iterative processes of planning, analysis, design, and development and help explain why students feel confident in these areas (Maddison & Kumaran, 2017). Qualitative findings from the study suggested that participants experienced competency growth in these areas during their journey through CET 472, where they engaged in multiple hands-on projects that reinforced the application of theory to practice.

Although the pre- to post-survey gains in the Evaluation & Implementation domain were statistically significant, participants reported lower confidence in this domain than in most other domains. This reflects a persistent gap between theoretical knowledge and real-world application in ID (Klein & Kelly, 2018; Larson & Lockee, 2009). Despite improvements in evaluation skills following the course, challenges persisted, particularly in transferring theoretical concepts into practical application. While CET 472 provided opportunities for feedback and iteration, implementing the instructional designs in real classrooms was optional. Hence, only a few students, such as Matt, completed that step and were able to refine their evaluation skills in an authentic setting; for the others, these competencies remain areas where more exposure to real-world contexts would be beneficial. These findings suggest that authentic and situated learning experiences must be embedded earlier and more systematically. Internships, external collaborations, and structured leadership roles in projects could provide students with repeated opportunities to strengthen these competencies.

Qualitative findings indicated that the holistic nature of the courses in the program, blending theoretical and practical elements, enabled participants to develop competencies across domains such as Professional Foundations and Design & Development through iterative learning and project revisions. Similarly, Kolodner’s (1997) findings highlight the importance of iterative learning in ID. On the other hand, one of the most significant findings of this study is the gap between theory and practice, particularly in the domains of Evaluation & Implementation and Management. Although CET 472 aimed to address this gap through project-based learning, qualitative findings indicated that participants faced challenges in applying their theoretical knowledge to real-world scenarios (Larson & Lockee, 2009).

The findings suggest that ID programs should offer more situated learning experiences, such as internships or project-based assignments, to better bridge the gap between theory and practice. One interview participant’s reflections highlighted the value of these real-world experiences: directly observing the impact of the designs on student learning outcomes provided a practical experience that boosted confidence in Evaluation & Implementation. In addition to coursework, job-related experiences significantly supported competency development. Qualitative findings indicated that professional work contexts reinforced skills in Professional Foundations, Planning & Analysis, and Management, areas that are sometimes underrepresented in formal courses. This finding suggests that ID programs should place greater emphasis on providing students with opportunities to practice project management, resource allocation, and leadership skills, competencies that are increasingly important in the professional world (Yalçın et al., 2021).

It is essential to acknowledge that these findings are context-bound. Unlike many instructional design programs worldwide that are offered at the graduate level, the program explored here is an undergraduate program. Graduate students often enter with prior professional experience, which shapes their competency development differently. In contrast, in this undergraduate context, many participants began working part-time in educational or related fields during their third year. This helps explain why job-related experiences were particularly salient in shaping competencies across domains. While such experiences supported growth in Professional Foundations and Planning & Analysis, the Management domain remained relatively underdeveloped, indicating that informal job experiences alone may not substitute for structured curricular integration.

Limitations and Future Directions

A limitation of the study is the small sample size (N = 26), which may affect the generalizability of the findings. The reliance on self-reported survey data also introduces potential bias, as students’ perceptions may not always reflect their actual competency levels. Additionally, the focus on a single course, CET 472, highlights its importance but may overlook contributions from other courses in developing competencies. Participants’ reflections indicate that while CET 472 played a significant role in the learning process, other courses also contributed to the development of foundational knowledge and skills.

Future research should incorporate more diverse measures of competency development, such as performance-based assessments or structured reflection tasks using IBSTPI. It would also be valuable to examine how other courses in the ID curriculum contribute to competency development, particularly in areas like management, where students reported lower confidence. Comparative studies between undergraduate and graduate ID programs could further illuminate how program level and participants’ prior job experiences interact with competency development. Such studies would help clarify the extent to which findings from this context generalize to broader ID education.

Overall, this study demonstrates that CET 472 effectively served as a capstone course. Participants identified the final project as the primary context for competency growth, and paired-samples t-tests confirmed significant pre–post improvements across all IBSTPI domains. Together, these results highlight how systematically scaffolded, iterative, and cumulative authentic projects drive competency development throughout the program. ID programs should: (a) embed smaller-scale evaluation and implementation projects earlier in the program; (b) integrate structured leadership and management roles into different tasks throughout the courses; and (c) strengthen connections between practicum, workplace learning, and IBSTPI standards.

In conclusion, this study emphasizes the need for ID programs to maintain strong alignment with industry standards, particularly the IBSTPI competencies, and to provide students with opportunities to engage in project-based learning. By doing so, ID programs can better prepare students to meet workforce demands and ensure they are equipped with the necessary competencies for professional success.

Footnotes

Appendix A

Acknowledgements

We extend our gratitude to the participants who generously contributed their time and insights to this study. During the preparation of this work, the authors utilized Generative AI tools to enhance the language.

Ethical Considerations

This study received ethical approval from the Ethics Committee in Social Sciences and Humanities of Bogazici University (Code: E-84391427-050.01.04-174745) on March 14, 2024. Written informed consent was obtained from all participants in the study. Instructional Designer Standards: Competencies and Performance Statements were developed by IBSTPI® (Copyright © 2013 International Board of Standards for Training, Performance, and Instruction [ibstpi®]. All rights reserved. Used with permission).

Consent to Participate

Participants gave written consent to participate at the beginning of the study. Additional written informed consent forms for the interview were obtained from volunteer participants.

Authors’ Contributions

Mutlu Şen-Akbulut: Conceptualization, Research design, Data collection, Data analysis, Writing – original draft, Writing – review and editing. Ekrem Kutbay: Research design, Data collection, Data analysis, Writing – original draft, Writing – review and editing. Bersu Ansen: Data organization, Data analysis, Writing – review and editing. All authors have read and approved the final manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data supporting the findings of this study are available from the corresponding author upon request.