Abstract

As employees are important stakeholders within the organization, it is crucial for enterprises to value employee voice behavior (VB). However, with the rise of algorithmic management, whether and how this AI-driven paradigm affects VB remains poorly understood. This study aims to uncover the dark side of algorithmic management by investigating the inhibiting effect of employees’ negative algorithmic experiences on their VB and its underlying mechanism. Applying Conservation of Resources (COR) theory as our framework, we leverage a big data text analysis approach, conducting text mining and Structural Topic Modeling (STM) on a large corpus of employee reviews for online delivery platforms sourced from Glassdoor. The findings show that employees’ negative algorithmic experiences derive mainly from AI algorithm matching and AI algorithm control. These negative algorithmic experiences diminish employees’ recognition of organizational culture, which, in turn, suppresses their VB. Furthermore, the presence of work-life balance does not alleviate the inhibitory effect that negative algorithmic experiences have on employee VB. Through empirical analysis, this study reveals a negative relationship between algorithmic management and VB. These findings offer important theoretical and practical implications for gig platform enterprises seeking to optimize their algorithmic design and strengthen cultural identification, thereby encouraging employee voice.

Introduction

With the rise of the gig economy, an increasing number of individuals are opting to enter the online delivery industry (Wang et al., 2021; H. Zhao et al., 2025). Online platforms exemplify a quintessential form of organization that manages employees through algorithms (Vallas & Schor, 2020; Won et al., 2023), leveraging real-time data collection to continuously refine and iterate these algorithms for monitoring employee behavior and optimizing work processes (Kellogg et al., 2020). While algorithms have significantly enhanced the efficiency of platform companies, they also continue to exert ongoing effects on employee behavior (Tan et al., 2021). By providing algorithmic applications that streamline customer service and ensure prompt order access, the platform enables employees to save time and work more efficiently (Kellogg et al., 2020; P. Liu et al., 2025). However, scholars also acknowledge the dark side of algorithmic management. Real-time control imposed by algorithmic management poses certain threats to employee privacy, and the opacity of algorithms impedes employees’ understanding of internal mechanisms, leading to skepticism regarding the fairness of platform allocation mechanisms (Gal et al., 2020). Although some studies have explored the impact of algorithms on employees’ psychological and behavioral outcomes—such as well-being (Deng et al., 2025), employee performance (M. Liu et al., 2024), creativity (D. Li et al., 2024), and employee engagement (N. Liu et al., 2025)—a significant gap remains in understanding how algorithmic experiences influence employee organizational citizenship behavior.

Employee voice behavior (VB) is a typical organizational citizenship behavior in which employees take the initiative to voice their opinions in order to propose solutions to organizational problems and improve overall performance (Morrison, 2023; Van Dyne et al., 2003). Employees are vital sources of feedback on organizational problems and of information for proposing viable solutions (Huang et al., 2024). Employee voice also benefits organizational decision-making (Wei et al., 2015). Therefore, organizations need to identify the factors that encourage employee voice. Previous scholars have examined the influencing factors of VB in detail from different perspectives. From the employee perspective, researchers have analyzed the impact of variables such as gender, self-efficacy beliefs (Eibl et al., 2020), perceived overqualification (Xiang et al., 2025), and AI awareness (He et al., 2025) on VB. From the organizational perspective, studies have examined the role of cultural elements (Lu & Gursoy, 2024; Zhou & Sun, 2025); leadership traits such as manager psychological ownership (Duan et al., 2024), transformational leadership (Jianu et al., 2025), and digital leadership (C. Yang et al., 2024); and other factors such as performance work systems (Ashiru et al., 2022) and social media use (G. Zhao, 2024). These studies map the known predictors of VB. However, to our knowledge, no study has examined the impact of employees’ algorithmic experiences on VB.

As previously mentioned, although existing research has shed light on the impact of algorithmic management on employees in platform enterprises, we still lack a clear understanding of how platform workers perceive algorithms and how these experiences, especially the negative ones, influence their organizational citizenship behavior. Employee-generated information, such as employee reviews, contains a wealth of valuable information, yet our understanding of employees’ experiences with algorithms remains limited. What specific dimensions constitute employees’ algorithmic experiences? Do these dimensions affect employees, and if so, do they differ in the strength of their impact? Organizational citizenship behavior is what enterprises expect employees to engage in to promote organizational development. Against the backdrop of algorithmic management, is platform employees’ organizational citizenship behavior related to their algorithmic experiences? Previous studies on employee algorithmic experiences have mostly relied on questionnaires or interviews to obtain data (W. Li et al., 2024; Perez et al., 2022; Wu et al., 2023). Our study takes a different approach, starting from employee-generated information, and explores the aforementioned questions through text mining and topic modeling techniques:

We further explore the boundary conditions and process mechanisms of the relationship between employees’ negative algorithm experiences and VB. Algorithmic management is changing the way companies are run, and in doing so is affecting the culture of the company (Bititci et al., 2006). Previous studies have indicated that employees’ acceptance of organizational culture influences their behavior (Ortega-Parra & Ángel Sastre-Castillo, 2013). Therefore, could employees’ cultural recognition mediate the relationship between their negative algorithm experiences and VB? According to the COR theory, employees’ cultural recognition can be seen as a social resource provided by the organization. A high level of cultural recognition indicates that employees perceive the company as offering richer resources, and they are likely to invest in ways that achieve resource conservation. This investment approach includes taking actions that positively benefit the organization, such as engaging in VB. Thus, we hypothesize that employees’ cultural recognition may mediate the relationship between their negative algorithm experiences and VB.

Furthermore, given the flexibility inherent in platform gig economies, employees likely enjoy greater autonomy in time allocation. Work-life balance (WLB), as a crucial personal resource, is theoretically posited to buffer the impact of resource depletion (Clark, 2000; Hobfoll et al., 2018). However, this proposition has yet to be tested in the context of algorithmic management. Therefore, we aim to investigate whether WLB moderates the relationship between employees’ negative algorithmic experiences and their VB. Given this research gap, we propose the following research question:

Based on its typicality and representativeness in algorithmic management practices, this study selects the online delivery industry as its research context. Online delivery platforms are among the domains where algorithmic management is most profoundly and thoroughly implemented in the gig economy (Kellogg et al., 2020). From order matching and route navigation to performance evaluation and reward-punishment systems, algorithmic systems govern nearly the entire workflow of delivery employees. This intensive datafication of management makes employees’ algorithmic experiences, particularly negative ones, clearly and intensely observable, providing an ideal setting for this study to investigate the consequences of algorithmic management. Because the psychological and behavioral responses of employees in this industry to algorithmic management are more pronounced, delivery employees become a key population for studying the effects of negative algorithmic experiences on employee behaviors, such as VB. From the perspective of COR theory, algorithmic management influences employees’ resources—for instance, causing resource loss via unreasonable algorithmic matching—and such resource changes further shape subsequent employee behavior (Hobfoll et al., 2018). Thus, exploring how this group copes with algorithmic management holds significant theoretical and practical implications.

This study makes several contributions to the research on employee algorithmic experiences and organizational citizenship behavior, with a particular focus on negative algorithmic experiences. First, the study fills a gap in the literature by examining the impact of employee algorithmic experiences on VB from a micro perspective. Second, this paper employs text mining techniques to extract a large amount of data and examine the effect of employee algorithmic experiences on VB. This is the first time such a data collection method has been used in this niche field, expanding the scope of data sources for research. Third, it examines the mediating mechanisms and boundary conditions between employees’ negative algorithmic experiences and VB from the perspectives of employees’ cultural recognition and work-life balance. This approach broadens the understanding of employees’ negative algorithmic experiences and enriches the literature on algorithmic management and VB.

The structure of this paper is as follows: Section 2 elaborates on the theoretical foundation and research hypotheses. Section 3 introduces the data acquisition, processing, and research methodology based on text mining. Section 4 presents the results of the topic modeling and empirical analysis. Section 5 provides an in-depth discussion of the findings, elucidating their theoretical and practical implications, and also points out the research limitations and future directions. Finally, the Conclusion section summarizes the entire paper.

Theoretical Background and Hypotheses

Conservation of Resources Theory

The Conservation of Resources (COR) theory was initially developed to explain the generation of stress (Hobfoll, 1989). It later became widely applied in the field of management to elucidate employee behavior. The theory fundamentally posits that individuals strive to acquire, retain, and protect the resources they consider important. Stress occurs when these resources are lost or are under threat. People respond to stress by making various efforts to maintain their resources (Hobfoll et al., 2018).

Platform workers often experience work pressure from algorithmic management because of algorithmic bias and numerous work instructions (Petriglieri et al., 2019). When employees perceive that algorithmic management negatively affects their work or life (e.g., invasion of privacy, or incorrect instructions that result in wasted time or missed orders), according to the COR theory, they perceive that their resources are being threatened, or even that some of their resources are being usurped. In response to this perceived threat, employees may act in various ways to minimize the loss of their resources. For example, they may reduce their favorable behavior toward the organization, thus preserving their personal resources. VB, as a typical category of behavior beneficial to the organization, is at risk of being undermined in such situations. Thus, we believe that employees’ negative algorithmic experiences may be negatively associated with their VB.

Additionally, according to the COR theory, social relationships are valuable resources (Hobfoll, 1989). Employees’ cultural recognition and work-life balance can be seen as such resources that foster high-quality relationships between employees and the company. These relational resources enhance an individual’s ability to work and motivate employees to engage in behaviors that benefit the organization, including voice (Huertas-Valdivia et al., 2021; Owens et al., 2016). Consequently, based on the COR theory, we further hypothesize that organizational cultural recognition and work-life balance may influence the relationship between employees’ negative algorithmic experiences and VB.

Algorithmic Management

Algorithmic management, first proposed by Lee et al. (2015), refers to the practice of using algorithmic systems for management, replacing traditional management methods to achieve automation (Danaher et al., 2017; Jarrahi et al., 2021). Algorithms coordinate customer demands and employee activities through real-time feedback and data utilization (Meijerink et al., 2021) and are often associated with human resource management practices (Duggan et al., 2020). Initially applied to study employee behaviors in the gig economy, algorithmic management is sometimes referred to as “Platformic management” due to its widespread use in platform-based gig economies (Jarrahi et al., 2020). It represents a novel, rational, real-time management approach that expands employee choices with the assistance of algorithms (Griesbach et al., 2019). For instance, algorithm-based matching can quickly deliver more suitable orders to employees, thereby enhancing efficiency (Schildt, 2017). Platform workers managed by algorithms also enjoy greater autonomy and flexibility (Rosenblat & Stark, 2016). Furthermore, companies can effectively manage their workforce by promptly capturing employee statuses through algorithms.

Although algorithms provide numerous benefits to both employees and companies, it is essential to recognize the dark side of algorithmic management. At the organizational level, algorithmic systems are not flawless and may encounter technical issues. Due to the dynamic nature of environmental factors, algorithmic decisions may sometimes be unfair or inaccurate (Newlands, 2021). From the perspective of employees, algorithm-based real-time monitoring may induce stress during work (Wiener et al., 2023). Due to algorithmic biases, employees may experience work overload (Petriglieri et al., 2019). Algorithmic flaws can result in employees receiving contradictory instructions, which may lead to task conflicts and decrease job satisfaction. Additionally, algorithmic tracking to some extent violates workers’ privacy (Park et al., 2021).

Employees’ Negative Algorithmic Experiences and Voice Behavior

Employee voice behavior encompasses employees’ suggestions and ideas regarding the development of the organization (Morrison, 2011) as well as their concerns and assessments of problems within the enterprise, aiming to facilitate problem-solving for the organization (Liang et al., 2012). This behavior can foster organizational development and enhance a company’s competitive advantage (Islam et al., 2019). From the perspective of the Conservation of Resources (COR) theory, employee voice is a resource that benefits organizations. However, engaging in VB requires employees to invest energy and resources, so it can be seen as a resource-depleting behavior. We hypothesize that employees’ negative algorithmic experiences negatively predict their VB, for the following reasons:

First, the regulatory approach of algorithmic management, such as punishing employees through real-time monitoring (Newell & Marabelli, 2015) and reducing order quotas for failure to complete tasks (Möhlmann et al., 2023; Rosenblat & Stark, 2016), places employees in a state of stress, thereby depleting their psychological resources (Gal et al., 2020). According to the COR theory, when employees perceive that their resources are being depleted, they will take action to safeguard existing resources or to eliminate threatening factors (Hobfoll, 1989, 2002). Consequently, when employees sense that an organization’s algorithmic management is encroaching upon their time or infringing upon their privacy, this negative algorithmic experience can lead them to feel that the company is harming their resources. The depletion of these resources prompts behavior aimed at preventing or minimizing further loss. In such cases, employees may choose to curtail their contributions to the organization, that is, to exhibit reduced VB, because VB itself requires the expenditure of their energy and other precious resources.

Second, algorithmic management may impair employees’ experiences of equality, safety, or dignity, resulting in negative emotions and a pessimistic work status (Park et al., 2021; Petriglieri et al., 2019; Wiener et al., 2023). Emotional experiences and emotional state at work significantly impact work motivation and behavior (X. Y. Liu et al., 2019; Seo et al., 2004). A negative experience with algorithms greatly affects how employees perceive their treatment, both psychologically and materially. According to Organizational Justice Theory, injustices embedded in algorithmic control within organizations (specifically, non-transparent algorithmic penalty practices, which reflect procedural injustice, and biased handling of disputes, which represents interactional injustice) constitute a direct infringement on employees’ dignity and perceptions of fairness (Newman et al., 2020). Such infringements translate to a profound depletion of employees’ psychological resources. According to the COR theory, employees must exert effort to regulate their negative emotions, which may lead to the depletion of resources and, consequently, affect their subsequent behaviors, including VB (Ng & Feldman, 2012; Xu et al., 2015). Liang et al. (2012) suggest that employees can provide valuable contributions to their organizations by expressing opinions and ideas as a form of communication and by engaging in activities that consume their resources. A negative algorithmic experience will likely have the opposite effect. Employees may counteract what they perceive as the company’s treatment by reducing their VB, which alleviates their sense of resource depletion. Previous studies have also confirmed the negative impact of negative emotions on VB (De Clercq & Pereira, 2023; Gabriel et al., 2024). Therefore, when employees experience negative emotions due to negative algorithmic experiences, they are more likely to reduce their VB.

Third, from the perspective of management style, employees generally believe that human managers are better at social-emotional communication than algorithmic management (Castelo et al., 2019). Consequently, employees managed by algorithms may feel that their suggestions and ideas, which consume resources for the enterprise, are not being well listened to or accepted. Therefore, from the perspective of the COR theory, employees perceive their resources as wasted and their contributions as undervalued, leading to an exacerbation of the resources lost. In particular, this negative algorithmic experience can make employees feel victimized by the algorithms, heightening their aversion to algorithmic management (Choudhury et al., 2020). COR theory posits that when employees’ resources are exhausted, they enter a defensive mode (Hobfoll et al., 2018), and as a result, they may offer fewer resources in response, thereby further exacerbating the negative impact on VB. Thus, we propose:

Cultural Recognition Within Organizations

Organizational culture refers to the shared values, meanings, symbols, and beliefs within a collective organization (Maher, 2000; Sriramesh et al., 2013). Organizational culture provides essential organizational information for employees’ work and innovation. A recognized organizational culture improves employee engagement, which, in turn, enhances productivity (Eidizadeh et al., 2017). When employees identify strongly with the organizational culture, a potentially strong connection is established between employees and the organization. They internalize the values and goals of the organization as their own, which further influences their actions (Brammer et al., 2015; Edwards, 2005). Employees’ recognition of the organizational culture is akin to the organization providing them with intangible cultural resources. In addition, employees who recognize and value the organization’s culture often feel a greater sense of belonging and ownership (Freiling & Fichtner, 2010). This sense of belonging serves as a typical psychological resource. According to the COR theory, employees must invest resources to prevent resource loss (Hobfoll et al., 2018). Consequently, employees may engage in behaviors that are investments in the organization’s benefit. The purpose of such investments is to facilitate organizational development, preserve these resources, and reduce their loss. VB serves as one channel through which employees provide feedback to the enterprise and can thus be considered an investment in resources. Employees’ recognition of organizational culture also reflects their emotional orientation. Barsade and O'Neill (2016) argue that employees’ recognition of organizational culture generates positive emotions, which in turn lead to behaviors that are beneficial to organizational development. As reviewed, we suggest that employees’ recognition of organizational culture may positively correlate with their engagement in VB; conversely, a lack of such recognition may hinder VB.

Previous studies suggest that algorithmic management can dehumanize work processes and diminish the intimacy of interpersonal relationships (Beane, 2019; Graham et al., 2017; Shestakofsky, 2017). The application of algorithms alters the way employees and managers interact with each other and the power structure, potentially fostering a toxic organizational culture (Beunza, 2019; Danaher et al., 2017). Negative experiences with algorithmic management can lead to increased negative emotions among employees and greater dissatisfaction with the enterprise (Won et al., 2023). The management style of a company is closely related to its organizational culture (Ortega-Parra & Ángel Sastre-Castillo, 2013). According to the COR theory, employees’ negative experiences with algorithm-centric management styles employed by companies can damage their psychological resources. This can result in their dissatisfaction with the current work environment and organizational culture, thereby diminishing their recognition of the organization’s established culture. From the lens of Social Exchange Theory, the exchange relationship between employees and organizations extends beyond economic resources to encompass socio-emotional resources (Blau, 1964). When organizations, via algorithmic systems, impose negative experiences—such as perceptions of injustice and operational inefficiency—on employees, they breach the principle of reciprocity. In turn, employees rebalance this exchange relationship by diminishing their emotional commitment to, and identification with, the organization’s culture. This reduced identification, in turn, naturally discourages employees from engaging in extra-role behaviors, particularly VB. Consequently, we propose

Work-Life Balance

Work-life balance (WLB) refers to the rational arrangement of time allocation between work and family life by employees, focusing primarily on the conflict between work time and leisure time (Clark, 2000). Compared to traditional employment, gig work facilitated by algorithmic matching on delivery platforms enables employees to rapidly locate individual tasks, providing them with considerable flexibility (Lin et al., 2023). This relaxation of restrictions on work hours enables employees to have more control over scheduling their work and leisure time, which contributes to the increasing number of gig platform workers (Wang et al., 2021). According to the COR theory, the work-life balance facilitated by the work style is also a form of energy resource provided by the organization to its employees (Hobfoll, 1989). According to the previous discussion, when employees have a negative experience with the algorithm, their VB tends to decrease. If, due to algorithmic management, employees have a better work-life balance (WLB), they will have more energy resources compared to those with a lower WLB. The COR theory suggests that individuals with greater initial resources are less vulnerable to resource loss (Hobfoll et al., 2018). Consequently, employees with a higher level of WLB are less likely to experience resource loss from negative algorithmic experiences, which, in turn, weakens the impact on subsequent behaviors, including VB. Therefore, in line with COR theory, we propose

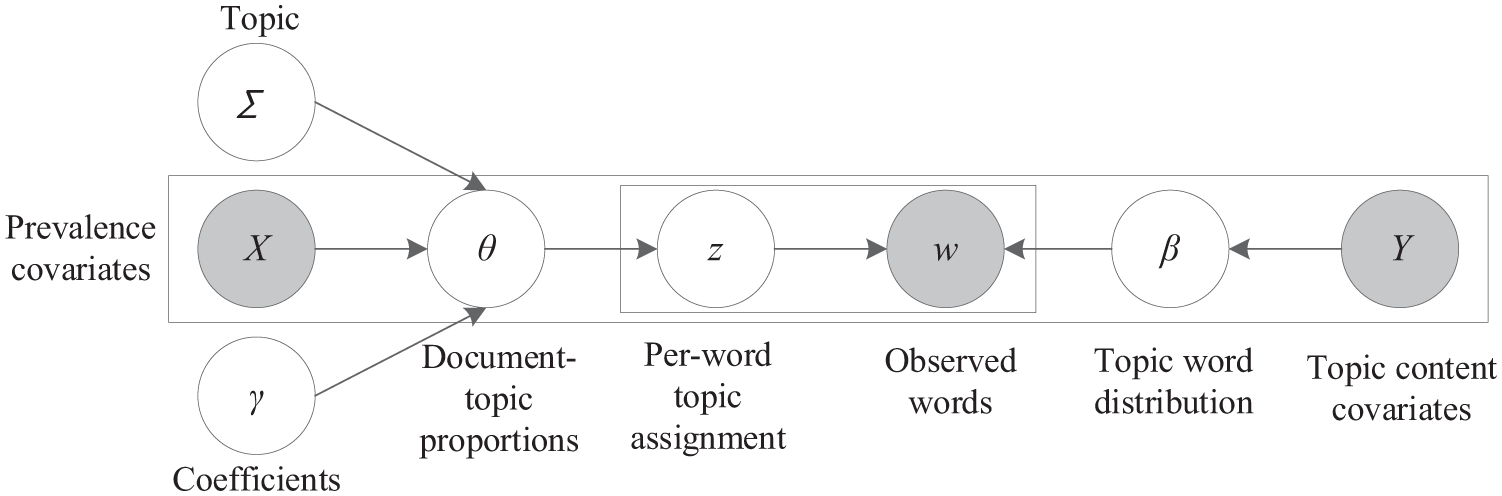

Figure 1 illustrates the theoretical model.

Figure 1. Theoretical model.
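One conventional way to write the model in Figure 1 is as a set of regression equations. The notation below is an assumption introduced here for illustration and is not taken from the paper: NAE denotes negative algorithmic experience, CR cultural recognition, WLB work-life balance, VB voice behavior, and i indexes reviews. The specification actually estimated may differ (for example, in its control variables).

```latex
\begin{align}
\mathrm{VB}_i &= \beta_0 + \beta_1\,\mathrm{NAE}_i + \varepsilon_{1i} \\
\mathrm{CR}_i &= \alpha_0 + \alpha_1\,\mathrm{NAE}_i + \varepsilon_{2i} \\
\mathrm{VB}_i &= \gamma_0 + \gamma_1\,\mathrm{NAE}_i + \gamma_2\,\mathrm{CR}_i + \varepsilon_{3i} \\
\mathrm{VB}_i &= \delta_0 + \delta_1\,\mathrm{NAE}_i + \delta_2\,\mathrm{WLB}_i
                + \delta_3\,(\mathrm{NAE}_i \times \mathrm{WLB}_i) + \varepsilon_{4i}
\end{align}
```

Under this sketch, an inhibiting main effect corresponds to \beta_1 < 0, mediation through cultural recognition to a negative indirect effect \alpha_1\gamma_2 (with \alpha_1 < 0 and \gamma_2 > 0), and a buffering role of WLB to \delta_3 > 0.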

Methodology

Based on user activity and data availability, we first selected employee review data from 10 major online delivery platforms worldwide on Glassdoor, including DoorDash, Grubhub, foodpanda, Uber, Gopuff, Deliveroo, Grab, Delivery Hero, Zomato, and Instacart. Secondly, through structural topic modeling (STM), we can obtain labels for each topic based on high-frequency words and representative comments. Thirdly, we obtain employee comment topics related to algorithms, and through regression analysis, examine the relationship between negative algorithmic experiences and VB among employees, as well as the mediating effect of cultural recognition and the moderating effect of WLB. The specific implementation process is as follows:

Data Availability and Processing

Data Source and Collection

First, the data source platform was selected. The Glassdoor platform was chosen as the data source based on its status as a globally leading employer review platform. Its structured review format, which categorizes content into “Pros,” “Cons,” and “Advice to Management,” provides an ideal framework for systematically analyzing employee experience. The platform permits both current and former employees to post anonymous company reviews that are not subject to corporate vetting. Prior research in organizational behavior and human resource management has established the reliability of Glassdoor data (Abdulsalam et al., 2025; Chang et al., 2024; Hope et al., 2021).

Second, we identified the sample firms. Based on review quantity and data availability on Glassdoor, we selected 10 major online delivery platforms: DoorDash, Grubhub, foodpanda, Uber, Gopuff, Deliveroo, Grab, Delivery Hero, Zomato, and Instacart, which ensured data richness.

Third, we determined the data collection method. Data collection was performed using Python-based tools, including the requests library. The collection period spanned from the inception of each platform’s review section until February 2024, resulting in a total of 61,217 raw employee reviews. Python has been widely recognized in academic research for its robust data processing capabilities and has been effectively employed in previous studies (Do et al., 2023; Orea-Giner et al., 2022). The web crawler program was subjected to ethical review and collected only publicly available review text and rating data, without accessing any personal user information such as names or contact details. Each data record contained the following metadata: review date, employee job title, employment status (current or former), length of service, textual content from the “Pros,” “Cons,” and “Advice to Management” sections, and ratings on various metrics such as Work-Life Balance and Culture & Values.

Data Screening and Cleaning

First, we conducted position screening. The initial dataset contained reviews from employees in various roles, including delivery personnel, customer service representatives, and operations staff. To focus the analysis on delivery workers, a position-matching approach was implemented. This process began with keyword-based screening using terms such as “delivery driver,” “shopper,” and “dasher,” followed by manual verification to account for platform-specific job titles. For instance, Instacart uses “Shopper” while DoorDash uses “Dasher” to refer to delivery personnel. After this screening process, 12,134 reviews specifically associated with delivery positions were retained.
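The keyword-based part of this screening can be sketched as follows. This is a minimal illustration, not the authors' script: the field name `job_title`, the helper names, and the sample records are hypothetical, and only the three keywords quoted in the text are used. The manual verification step is not represented.

```python
# Keywords quoted in the text; the authors' full list is not reported.
DELIVERY_KEYWORDS = ("delivery driver", "shopper", "dasher")


def is_delivery_role(job_title):
    """Return True when the job title contains a delivery keyword (case-insensitive)."""
    title = (job_title or "").lower()
    return any(keyword in title for keyword in DELIVERY_KEYWORDS)


def screen_positions(reviews):
    """Keep only the reviews whose (hypothetical) 'job_title' field matches a keyword."""
    return [review for review in reviews if is_delivery_role(review.get("job_title"))]


# Made-up records, for illustration only.
sample_reviews = [
    {"job_title": "Dasher"},
    {"job_title": "Full Service Shopper"},
    {"job_title": "Customer Service Representative"},
    {"job_title": None},
]
delivery_reviews = screen_positions(sample_reviews)
```

Substring matching is deliberately permissive, which is why a manual check of the retained titles remains necessary.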

Second, further validity screening was conducted to align with the study’s focus on negative algorithmic experiences. Reviews were retained only if their “Cons” section contained a minimum of five words and substantive content relevant to negative work perceptions. This step led to the removal of blank reviews and those lacking meaningful content, yielding a final dataset of 3,134 valid reviews, the distribution of which by company is shown in Table 1.

Table 1. Number of Valid Reviews.
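The length criterion of the validity screening (a “Cons” section of at least five words) can be expressed as a short filter. This is a sketch under assumptions: the function name and the whitespace-based word count are choices made here, and the judgment of whether content is substantive is not automated.

```python
def has_minimum_cons_length(cons_text, min_words=5):
    """Return True when the Cons text has at least `min_words` whitespace-separated words."""
    return len((cons_text or "").split()) >= min_words


# Made-up examples, for illustration only.
examples = ["", "Low pay", "The app keeps sending orders far away"]
kept = [text for text in examples if has_minimum_cons_length(text)]
```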

Third, we performed text extraction and preprocessing, following established natural language processing (NLP) procedures (M. Yang & Han, 2021). Consistent with the research objective of examining negative algorithmic experiences, only the textual content from the “Cons” section of reviews was extracted for analysis. The NLP cleaning pipeline included several key steps: removing punctuation, numbers, and stop words (e.g., “the,” “and”), tokenization, and word frequency counting. These preparatory steps were essential for structuring the data for subsequent topic modeling analysis (J. Liu et al., 2022).
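The cleaning steps listed above can be illustrated with the Python standard library. This is a minimal sketch, not the authors' pipeline: the stop-word list is a tiny placeholder, the function names are hypothetical, and tokenization is a plain whitespace split.

```python
import re
from collections import Counter

# Tiny placeholder stop-word list (assumption); a real pipeline would use a fuller one.
STOP_WORDS = {"the", "and", "a", "an", "to", "of", "is", "in", "for", "it"}


def preprocess(text):
    """Lowercase, replace punctuation and digits with spaces, tokenize, drop stop words."""
    letters_only = re.sub(r"[^a-z\s]", " ", text.lower())
    return [token for token in letters_only.split() if token not in STOP_WORDS]


def word_frequencies(documents):
    """Count token occurrences across all documents after preprocessing."""
    counts = Counter()
    for document in documents:
        counts.update(preprocess(document))
    return counts


tokens = preprocess("The app crashed 3 times, and support ignored it!")
# tokens == ["app", "crashed", "times", "support", "ignored"]
```

Because every non-letter character becomes a space, contractions such as "don't" are split into two tokens; a production pipeline would likely handle these more carefully.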

Measurement

Previous studies that utilize employee-generated information to examine the impact of algorithms on employees are scarce. These studies have primarily been conducted by filtering out textual comments specifically related to algorithms (Saydam et al., 2024). This method is relatively accurate; however, it runs the risk of mistakenly discarding valuable information because of incomplete keywords, which could impact the total number of samples. Therefore, given the unique attribute that the work of delivery workers is closely tied to algorithms, after preliminary screening, we first incorporate all relevant information generated by employees into the topic text, aiming to capture as much effective information from the comments as possible. Subsequently, we apply topic modeling to extract the themes. Finally, we analyze the themes to identify those related to AI algorithms for empirical research.

Model Set-Up and Topic Number Estimation

We adopted the Structural Topic Model (STM) as used by M. Yang and Han (2021). The basic schematic diagram of STM is shown in Figure 2. The data are preprocessed before topic clustering, and the optimal number of topics is then determined by selecting the value of K.

Figure 2. A graphical illustration of STM using plate notation.

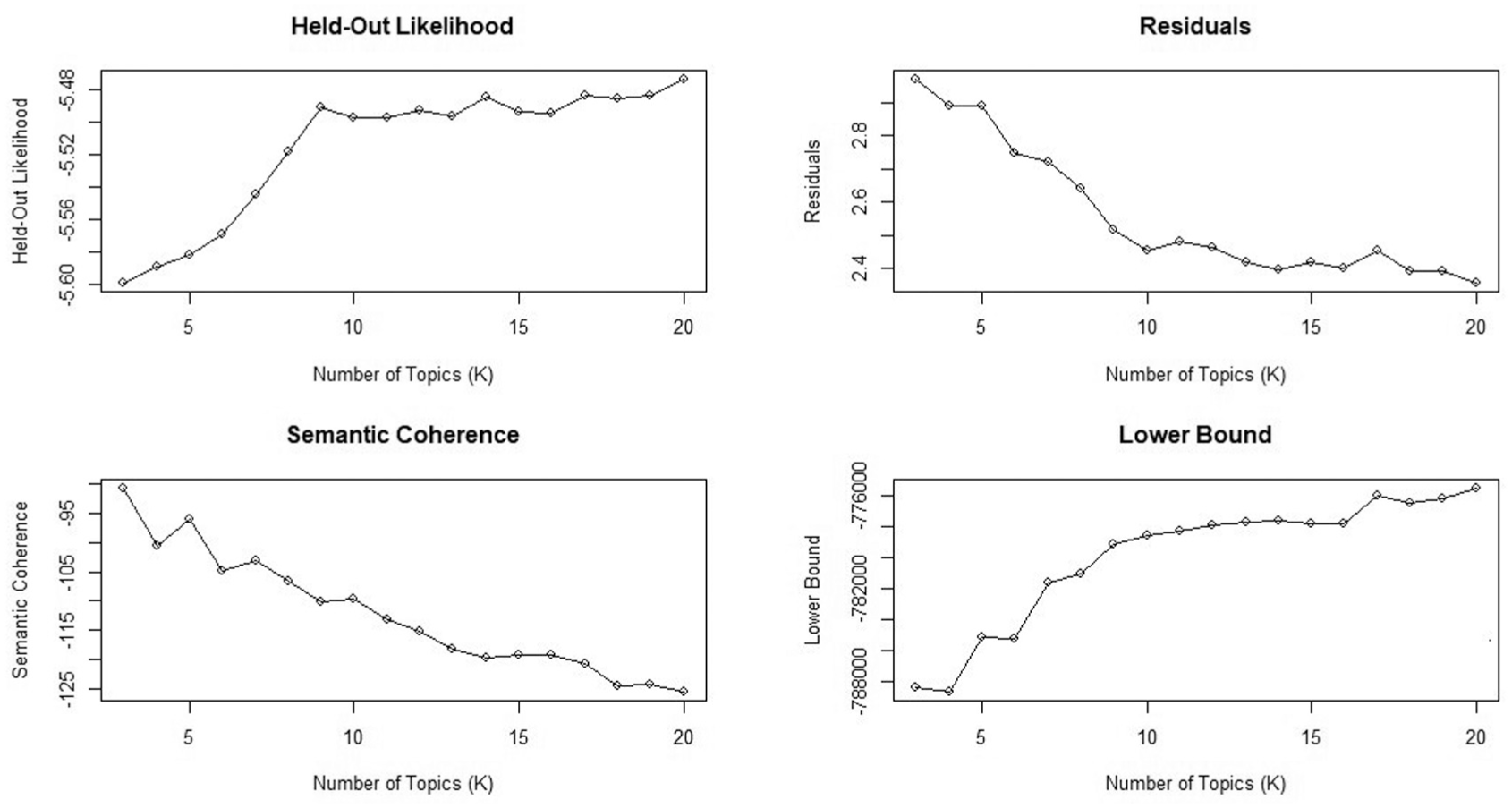

We use the model search function (Roberts et al., 2014; M. Yang & Han, 2021) in the stm package, together with the furrr package in R, to determine the optimal number of topics by evaluating the fit indices of models trained on the sparse document-term matrix for K in the range of 3 to 15. Figure 3 compares the changes in the fit indices across the different K values. Considering the local maxima of held-out likelihood and semantic coherence, we conclude that the topic clustering reaches a local optimum at K = 9. Therefore, a nine-topic model solution is chosen in this study.

The selective topic model solution.
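One way to read the selection rule described above is to shortlist the K values at which both diagnostics peak locally. The sketch below follows that reading only; the exact decision rule applied in this study is not specified beyond the description given, the function names are hypothetical, and the diagnostic values are invented on a smaller grid, so they do not reproduce Figure 3.

```python
def local_maxima(scores):
    """Return the K values whose score exceeds the scores of both neighbouring K values."""
    ks = sorted(scores)
    peaks = []
    for prev_k, k, next_k in zip(ks, ks[1:], ks[2:]):
        if scores[k] > scores[prev_k] and scores[k] > scores[next_k]:
            peaks.append(k)
    return peaks


def shortlist_k(heldout, coherence):
    """K values that are local maxima on both diagnostics (one possible rule, an assumption)."""
    return sorted(set(local_maxima(heldout)) & set(local_maxima(coherence)))


# Invented diagnostic values keyed by K, for illustration only.
heldout = {3: -7.40, 4: -7.31, 5: -7.25, 6: -7.28, 7: -7.22, 8: -7.26}
coherence = {3: -95.0, 4: -101.0, 5: -98.0, 6: -104.0, 7: -107.0, 8: -110.0}

candidates = shortlist_k(heldout, coherence)
```

When several K values survive such a shortlist, interpretability of the resulting topics would normally break the tie.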

Topic Labeling

We determined the topic labels from the top words of the nine topics and from the most representative comments, as shown in Table 2. Top words are those that appear most frequently in a given topic and least frequently in the other topics, which helps to distinguish topics effectively and to avoid full or partial overlap between them.

Topic Labeling and Summary.
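The idea of ranking words that are frequent within a topic and rare elsewhere can be sketched with a simple score. This is a simplified stand-in, not the word-ranking metric computed by the stm package: the score below (within-topic probability multiplied by the word's share across topics), the function names, and the two toy topics are all assumptions made for illustration.

```python
def exclusivity_scores(topic_word, topic):
    """Score each word by its within-topic probability times its share across all topics."""
    scores = {}
    for word, prob in topic_word[topic].items():
        total = sum(dist.get(word, 0.0) for dist in topic_word.values())
        if total > 0:
            scores[word] = prob * (prob / total)
    return scores


def top_words(topic_word, topic, n=3):
    """Return the n highest-scoring words, breaking ties alphabetically."""
    scores = exclusivity_scores(topic_word, topic)
    return sorted(scores, key=lambda word: (-scores[word], word))[:n]


# Invented topic-word probabilities, for illustration only.
topic_word = {
    "A": {"wait": 0.40, "order": 0.35, "pay": 0.25},
    "B": {"pay": 0.50, "order": 0.35, "tax": 0.15},
}
```

In the toy example, "order" is equally probable in both topics, so it ranks below the words that are concentrated in a single topic.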

Results

This section employs topic modeling to analyze the content of each theme and utilizes regression analysis to explore their impact on VB.

Analysis of Topic Results

Table 1 presents the results of topic modeling for the “Cons” section of Glassdoor delivery worker reviews, which yielded nine themes. Before the in-depth analysis, it is necessary to clarify the analytical focus of this study. The core of this research is to explore employees’ experiences with “algorithmic management” systems. Therefore, we screened all themes against the algorithmic management framework proposed by Kellogg et al. (2020) and selected those directly related to algorithmic management functions for subsequent empirical testing, namely Topics 1, 3, 7, 8, and 9. In line with the discussions by Kellogg et al. (2020), algorithmic management primarily manifests in task allocation matching and performance management through monitoring for rewards and penalties. We therefore group these topics into two major themes: “AI matching” perception and “AI control” perception. Specifically, we assign Topic 1 (Unreasonable time matching), Topic 3 (Lack of technical response), and Topic 9 (Unreasonable order schedules) to the “AI matching” perception category, and Topic 7 (Unreasonable algorithmic constraints and penalties) and Topic 8 (Algorithmic unfairness in dispute handling) to the “AI control” perception category.

The remaining themes—including Theme 2 (High taxes), Theme 4 (Low salary), Theme 5 (High cost of driving), and Theme 6 (Inconsistent income)—are indeed significant challenges faced by delivery workers. However, these issues are more closely related to the industry’s compensation models, economic policies, and inherent occupational costs, rather than employees’ direct experiential interactions with algorithms as the management agent. Therefore, to maintain the focus of the research question and theoretical framework, this study will primarily analyze the five themes directly related to algorithmic management (Themes 1, 3, 7, 8, and 9), while the remaining themes will not be treated as core analytical objects.

For the “AI matching” perception, Topics 1, 3, and 9 reflect employees’ negative experiences regarding the precision of algorithmic task allocation. Generally, algorithmic control can enhance the efficiency of the matching process by scheduling tasks promptly in response to customer needs (Kellogg et al., 2020; Rosenblat & Stark, 2016). However, platform delivery employees, who interact directly and continuously with the algorithm, may intuitively perceive its shortcomings (Delfanti, 2021). For instance, Topic 1 addresses excessively long wait times for food delivery caused by inaccurate algorithms, which directly affect employee productivity and customer satisfaction. Topic 3 concerns the potential bias of the algorithm in task assignment, which leaves employees with mismatched tasks that waste their time and energy and lower the quality of their work. Topic 9 focuses on unreasonable duty schedules formulated by the algorithm, which disrupt employees’ working-time arrangements and may lead to overwork or unstable work. Representative comments on these topics are the direct experiences and feedback of employees on the algorithm matching process. These negative experiences could undermine employees’ trust in and acceptance of the algorithm, consequently impacting their motivation and commitment to their work. Additionally, these problems may lead to dissatisfaction and resistance among employees, which could diminish their loyalty to and sense of identity with the platform enterprises (Möhlmann et al., 2023).

Algorithmic systems are capable of evaluating the quality of employees’ work in real time by monitoring and collecting information (Duggan et al., 2020; Evans & Kitchin, 2018). However, employees frequently harbor concerns regarding the fairness of these evaluation outcomes. Two primary reasons underpin this skepticism. First, the opacity of algorithmic decisions is a significant factor contributing to employees’ skepticism about the fairness of the outcomes (Duggan et al., 2020). The lack of clarity regarding the operational mechanism and criteria of the algorithmic rating system can lead employees to question both its reliability and persuasiveness (Griesbach et al., 2019). As a result, employees may question the fairness of differences in treatment and worry that they are being treated unfairly. Second, results change so rapidly that employees have little time to accept and digest them, which produces a sense of lost control and doubt about the fairness and rationality of the outcomes (Stark & Pais, 2020). In Topic 7, employees expressed dissatisfaction with the privacy invasions and restrictions enforced by the algorithms. In Topic 8, employees voiced skepticism about the reliability of the algorithmic evaluation system, including its ability to handle relationships with customers. Below are representative comments for Topics 7 and 8.

Empirical Analysis and Findings

Subsequently, we will analyze the direct effects, mediating mechanisms, and boundary conditions of the negative algorithmic experiences of delivery employees on their VB. Two control variables are used in this study: “Employment” represents whether the employee is currently employed (with a value of 1 for current employees and 0 for former employees), and “Tenure” indicates the length of the employee’s service. We describe these variables and the measurement methods in Table 3.

Variable Descriptions.

Main Effects

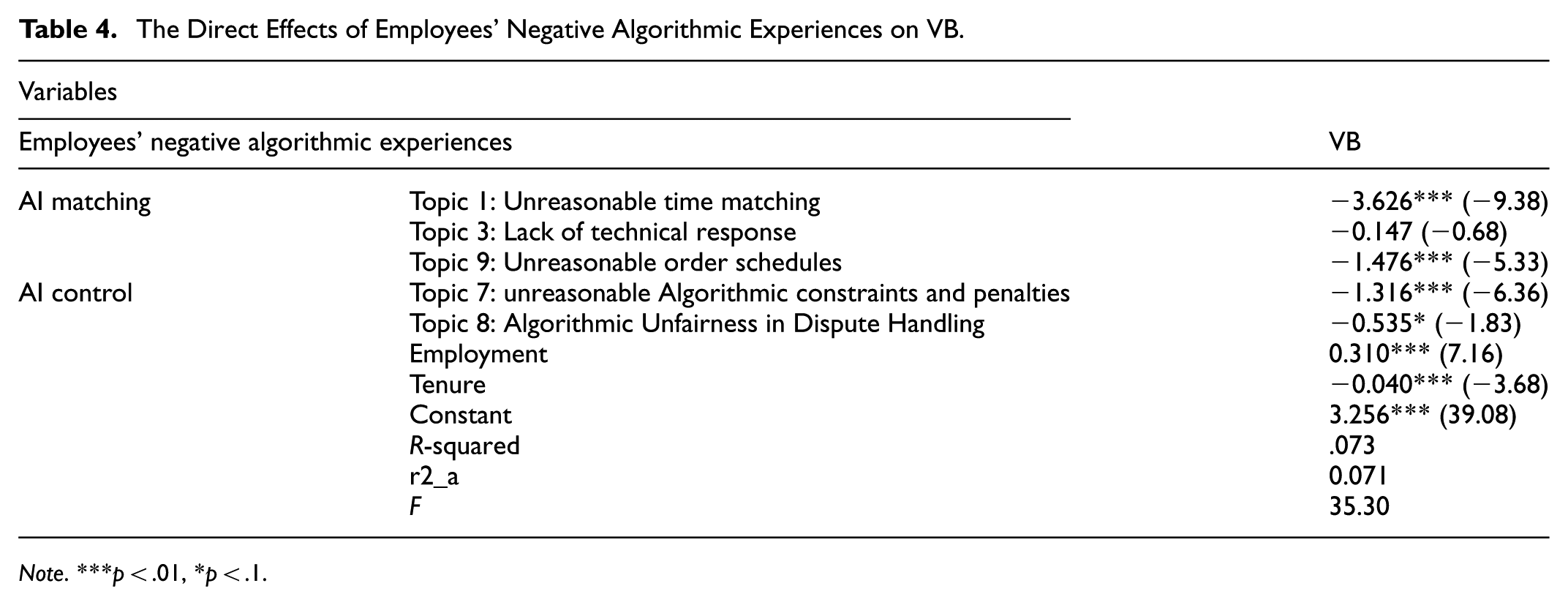

We first test the direct effects of the five topics of employees’ negative algorithmic experiences on their voice behavior, as shown in Table 4. Except for Topic 3 (“Lack of technical response”), all topics were significantly negatively associated with VB, which is consistent with the inhibiting effect of negative algorithmic experiences on VB that this study set out to test.

The Direct Effects of Employees’ Negative Algorithmic Experiences on VB.

Note. ***p < .01, *p < .1.

The impact of Topics 7 and 8 in AI control perception on VB is significant, albeit relatively small. Topic 8, in particular, stands out with the highest share of reviews at 0.117, suggesting that employees hold the most negative perception of algorithmic constraints and penalties. However, the inhibitory effect of this topic on VB is the smallest among the significant topics (Topic 3 is not significant). This suggests that, compared to AI control perception, AI matching perception resulting from the accuracy of algorithms has a greater impact on employees’ VB.

The reason is that AI matching, unlike AI control, directly shapes the work content of employees, affecting their time costs, income, and other resources. The perception of direct resource loss is more profound and intense than that of the psychological resource loss caused by AI control. According to COR theory, employees are more inclined to take defensive actions in response to greater resource losses in order to prevent further loss. Therefore, even though the proportion of comments expressing negative emotions from employees under AI control was similar, the impact of their AI matching perception on VB was more significant. We believe that the non-significant result for Topic 3 may be explained, from the perspective of COR theory, by the fact that technical problems, unlike other matching problems, belong to the overall algorithm system and are shared by all employees managed by algorithms. This sense of relative fairness mitigates employees’ experience of resource deprivation to a certain extent. As a result, it reduces the negative impact on subsequent employee behaviors, including VB.

Mediation Effects

We conducted separate mediation analyses for each of the five themes, with the results detailed in Tables 5 to 9. This analysis aimed to uncover the unique mechanism of action for each theme, and its results should be interpreted in conjunction with the main effects from the multiple regression analysis in Table 4. Overall, cultural recognition plays a key mediating role in the relationship between employees’ negative algorithmic experiences and VB, although its pattern of influence varies depending on the nature of the algorithmic issue. For Themes 1, 7, 8, and 9, the findings were consistent. In both the multiple regression models in Table 4 and the mediation tests, they exhibited a significant direct suppressive effect on VB. More importantly, cultural recognition demonstrated a significant negative mediating effect in all these cases. This provides strong support for our theoretical framework: when employees encounter these negative experiences, which involve core resources and fundamental fairness, their recognition of the organizational culture is weakened, thereby inhibiting their VB.

Test of Mediating Effect 1.

Note. ***p < .01.

Test of Mediating Effect 2.

Note. ***p < .01, **p < .05.

Test of Mediating Effect 3.

Note. ***p < .01.

Test of Mediating Effect 4.

Note. ***p < .01.

Test of Mediating Effect 5.

Note. ***p < .01, **p < .05, *p < .1.

Theme 3 (Lack of technical response), however, revealed a more complex pattern. In the multiple regression model in Table 4, after controlling for all other types of negative experiences, the direct effect of Theme 3 on VB was negative but non-significant. This suggests that in real-world scenarios, when “Lack of technical response” co-occurs with other issues like “Unreasonable time matching,” its net effect aligns with other themes, collectively contributing to an atmosphere that suppresses VB. However, in the separate mediation analysis (Table 6), Theme 3 exhibited a distinctive pattern: it had a significant positive mediating effect on VB through cultural recognition. This seemingly contradictory result suggests that Theme 3 may be perceived as a fixable operational issue rather than a principled issue. Employees with a higher degree of cultural recognition are more likely to provide constructive feedback on such problems, thus leading to a positive association when analyzed in isolation. Next, we will interpret the mediation results for each theme separately.

As shown in Table 5, cultural recognition played a significant partial mediating role in the relationship between Topic 1 and VB. This implies that the time waste and inefficiency caused by algorithms indirectly reduce VB by weakening employees’ cultural recognition. This finding provides strong support for our theoretical framework. The mediating effect of Topic 1 was the most prominent among all themes, highlighting the detrimental impact of direct resource loss on employees’ psychological contract.

The findings regarding Topic 3 reveal the complexity of the impact of negative algorithmic experiences. As shown in Table 6, when analyzed in isolation, this theme was positively correlated with VB, suggesting that employees may view technical issues as correctable operational flaws and are willing to provide constructive feedback on them. However, when considered alongside other more invasive negative experiences in the multiple regression model (Table 4), this positive relationship disappeared. This indicates that among the various algorithmic problems employees face, those that touch upon fairness and core resources are dominant; their strong negative effects mask the VB that technical issues might otherwise elicit. This finding underscores the importance of treating negative algorithmic experiences as a multidimensional construct and examining the interactions within it.

The results in Table 7 show that cultural recognition plays a significant partial mediating role in the relationship between Topic 7 and VB. This reveals that unreasonable algorithmic constraints and penalties are not merely a procedural issue; rather, they are interpreted by employees as a controlling culture lacking trust, respect, and flexibility. Employees respond by reducing their cultural recognition and, consequently, withdrawing their VB.

The mediating effect for Topic 8 in Table 8 is also significant. This finding emphasizes that when employees feel presumed guilty by the algorithmic system and lack proper channels for appeal in a dispute, they perceive profound procedural and interactional injustice. This experience directly undermines employees’ cultural recognition and their belief in organizational justice. This constitutes the key psychological mechanism that leads them to choose silence and to cease VB aimed at organizational improvement.

Lastly, concerning Topic 9, as shown in Table 9, cultural recognition also served as a significant partial mediator. Unreasonable algorithmic scheduling throws off employees’ work-life rhythm, an action similarly interpreted as the organization’s disregard for employee well-being. This process, by depleting the social resource of cultural recognition, culminates in the suppression of extra-role behaviors like VB.
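The logic of a single-mediator test (negative experience, then cultural recognition, then VB) can be sketched with the product-of-coefficients decomposition, in which the total effect equals the direct effect plus the indirect effect a × b. The sketch assumes linear models and uses six invented observations; it omits the control variables and the significance tests reported in Tables 5 to 9, and the function and variable names are hypothetical rather than taken from the study's analysis.

```python
def centered_cross_sum(u, v):
    """Sum of products of deviations from the mean (proportional to the covariance)."""
    mean_u = sum(u) / len(u)
    mean_v = sum(v) / len(v)
    return sum((a - mean_u) * (b - mean_v) for a, b in zip(u, v))


def mediation_paths(x, m, y):
    """Return (a, b, direct, total) for the models m ~ x and y ~ x + m."""
    sxx = centered_cross_sum(x, x)
    smm = centered_cross_sum(m, m)
    sxm = centered_cross_sum(x, m)
    sxy = centered_cross_sum(x, y)
    smy = centered_cross_sum(m, y)
    det = sxx * smm - sxm ** 2          # must be non-zero (x and m not collinear)
    a = sxm / sxx                       # effect of x on the mediator
    b = (sxx * smy - sxm * sxy) / det   # effect of the mediator on y, holding x fixed
    direct = (smm * sxy - sxm * smy) / det
    total = sxy / sxx                   # effect of x on y without the mediator
    return a, b, direct, total


# Invented data: topic prevalence, culture rating, and a voice score (illustration only).
topic_share = [0.05, 0.10, 0.15, 0.20, 0.25, 0.30]
culture = [4.5, 4.0, 4.2, 3.1, 3.0, 2.4]
voice = [0.90, 0.80, 0.85, 0.50, 0.55, 0.30]

a, b, direct, total = mediation_paths(topic_share, culture, voice)
indirect = a * b
```

A negative indirect effect alongside a non-zero direct effect corresponds to the partial mediation pattern described above; judging significance would additionally require a procedure such as bootstrapping, which this sketch does not include.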

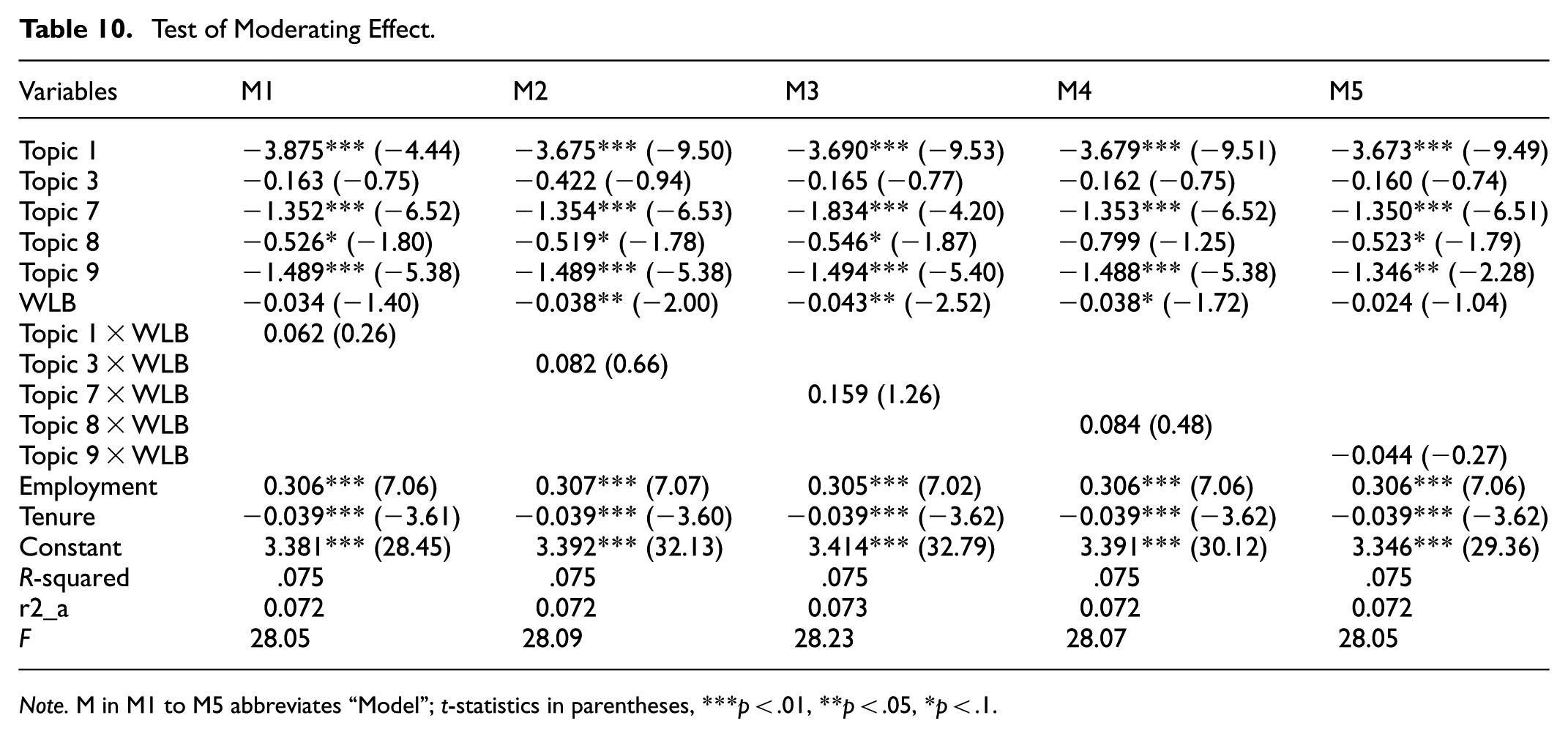

Moderation Effects

In this section, we introduce the moderating variable work-life balance (WLB), as shown in Table 10. The results indicate that the moderation coefficients of WLB on the main effects are mostly positive but not significant. In other words, the work-life balance facilitated by algorithms among platform employees does not mitigate the negative impact of their negative algorithmic experiences on their VB. The scheduling flexibility of the job, which helps employees balance work and life, neither lessens their negative experiences nor leads them to offer more suggestions to the company. This may be because flexibility in time allocation is considered essential for platform work, and employees engage in platform work precisely because of this flexibility (Jarrahi, 2018; Wood et al., 2018). Therefore, WLB is not perceived as offsetting negative experiences.

Test of Moderating Effect.

Note. M in M1 to M5 abbreviates “Model”; t-statistics in parentheses, ***p < .01, **p < .05, *p < .1.
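A moderation test of this kind is typically specified by adding an interaction term, so that the coefficient on experience × WLB captures whether WLB changes the slope of negative experience on VB. The sketch below assumes a linear specification, which may differ from the models behind Table 10. It uses synthetic, noise-free data generated from coefficients chosen purely for illustration, and the helper names `solve_linear_system` and `ols` are hypothetical.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [aug[i][n] / aug[i][i] for i in range(n)]


def ols(rows, y):
    """OLS coefficients [intercept, b1, ...] from the normal equations X'X b = X'y."""
    x = [[1.0] + list(r) for r in rows]
    k = len(x[0])
    xtx = [[sum(xi[p] * xi[q] for xi in x) for q in range(k)] for p in range(k)]
    xty = [sum(xi[p] * yi for xi, yi in zip(x, y)) for p in range(k)]
    return solve_linear_system(xtx, xty)


# Illustrative coefficients (assumption): intercept, experience, WLB, experience x WLB.
TRUE = [1.0, -2.0, 0.5, 0.3]
rows, voice = [], []
for neg_exp in (0.1, 0.2, 0.3):
    for wlb in (1.0, 2.0, 3.0, 4.0):
        rows.append((neg_exp, wlb, neg_exp * wlb))
        voice.append(TRUE[0] + TRUE[1] * neg_exp + TRUE[2] * wlb + TRUE[3] * neg_exp * wlb)

coefs = ols(rows, voice)
interaction = coefs[3]
```

A positive but statistically non-significant interaction coefficient, as reported above, means the data do not support the claim that WLB weakens the negative slope.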

Discussion

Drawing on COR theory, this study empirically examined the mechanism through which negative algorithmic experiences of online delivery couriers affect their VB, using large-scale employee review data from the Glassdoor platform. The results of text mining and STM revealed two core categories of negative experiences: AI Matching and AI Control. Further regression analysis indicated that these experiences generally have a suppressive effect on VB, and this impact is realized through the mediating path of cultural recognition. The following section will discuss the core findings of this study in the context of the online delivery work environment.

Theoretical Significance

First, this study deepens our understanding of the negative consequences of algorithmic management and establishes a negative association between negative algorithmic experiences and VB of online delivery employees through a big data approach. Our findings resonate with prior research indicating that algorithmic opacity and control can trigger employee strain and negative behavioral responses (Gal et al., 2020; Kellogg et al., 2020; Rosenblat & Stark, 2016). More importantly, through topic modeling, we specified the abstract concept of negative experiences into two major dimensions: inaccurate algorithmic matching and unfair algorithmic control. The empirical results show that the inhibitory effect of algorithmic matching problems on VB is stronger than that of algorithmic control problems. This finding provides new evidence for Demetis and Lee’s (2018) assertion: when algorithmic decisions involve more complex, human skill-like coordination and dispatching (i.e., the matching function), their mistakes have a more direct and severe impact on employees because they directly deprive employees of their time and financial resources (Hobfoll et al., 2018). In contrast, while algorithmic control may raise questions of fairness, its impact is likely to be more psychological and emotional, with a relatively weaker immediate suppressive effect on VB.

Second, this study reveals and validates the key mediating role of cultural recognition in the relationship between negative algorithmic experiences and VB. This finding expands the research on the mechanisms through which algorithmic management affects online delivery employee behavior. According to COR theory, negative algorithmic experiences erode employees’ recognition of the organizational culture, which is a crucial social and psychological resource (Hobfoll et al., 2018). When employees feel disrespected due to algorithmic injustice or errors, their trust in the values and culture proclaimed by the platform collapses (Brougham & Haar, 2018). This aligns with the perspective of Algorithm Sensemaking, wherein employees interpret algorithmic actions to understand their work environment (Möhlmann et al., 2023). Persistent negative experiences lead to a negative interpretation of the organizational culture, thereby weakening their willingness to contribute to the organization through voice. Concurrently, this mediating mechanism is also linked to psychological contract theory. Negative experiences with algorithmic management can be perceived as the organization’s breach of its promise to provide a fair and supportive work environment, thus violating the psychological contract between the employee and the organization, and reducing their organizational identification and subsequent VB (Tomprou & Lee, 2022). This mediation extends the theoretical application of organizational culture to the realm of algorithmic management.

Third, we introduced text mining and topic modeling techniques, for the first time, into the fine-grained study of the relationship between online delivery employees’ negative algorithmic experiences and VB. This innovation expands the data sources of empirical research. Most empirical studies on employee VB have traditionally relied on questionnaire surveys and interviews (Wen & Chi, 2023). We apply text data from Glassdoor employee reviews to the study of platform employees’ negative perceptions of algorithms, utilizing text mining and topic modeling techniques. This approach overcomes the limitations of traditional methods (Bai, He et al., 2024; Bai, Yu et al., 2024; Joshi et al., 2024). By obtaining objective online delivery employee review data, we ensured the validity of the data and thereby expanded the range of research methods in this field.

Practical Applications

First, platform firms must prioritize algorithmic optimization, particularly concerning the precision and fairness of task matching. The finding that algorithmic matching problems have the strongest inhibitory effect on employee VB indicates that this is a key pain point in current management practices. Platform firms should invest in technical resources to enhance the accuracy and rationality of their algorithms in order dispatch, route planning, and time scheduling, thereby reducing unnecessary waiting times and resource waste for employees. Concurrently, the transparency and explainability of algorithmic control should be increased (Gal et al., 2020). For instance, clearly communicating penalty rules and establishing user-friendly appeal channels can give employees recourse when they feel they have been treated unfairly. This helps to mitigate the perceived lack of fairness and the negative emotions arising from algorithmic opacity.

Furthermore, cultural recognition as a process mechanism between employees’ algorithmic experiences and VB significantly mediates the relationship between the two. Therefore, in management practices, efforts should be made to enhance employees’ cultural identification from the perspective of organizational culture construction. Although the cultural connection between online platform organizations and employees is relatively loose compared to other types of organizations (Nilsen et al., 2022), our study offers new insights for managing online platform employees by focusing on organizational culture construction. Platform enterprises cannot rely solely on technological management; instead, they must proactively shape and maintain an organizational culture that respects employees, emphasizes fairness, and encourages communication. By means of offline activities, online communities, and regular communication meetings, it is possible to strengthen employees’ sense of belonging and identification with organizational values, which is crucial for sustaining positive employee behaviors in a highly digitalized environment. Approaching from the angle of organizational culture construction, enhancing organizational cohesion can better stimulate employee behaviors beneficial to the organization.

Finally, platform managers should re-examine the role of WLB in the gig economy. Our finding that the moderating effect of WLB is non-significant is an important practical signal. It suggests to platforms that merely providing scheduling flexibility may be insufficient to compensate for the resource depletion and emotional harm caused by flaws in the algorithm’s core functions. Companies need to move beyond the surface-level benefit of flexibility and commit to fundamentally improving the quality of the employee work experience in order to effectively motivate VB.

Limitations and Future Research

This study has several limitations, which also point to directions for future research:

First, this study is based on cross-sectional data, making it difficult to make strict causal inferences. Future research could employ experimental methods to further validate the causal relationship between negative algorithmic experiences and VB. Second, although we revealed the mediating role of cultural recognition, the boundary conditions between these two variables and their more complex underlying mechanisms remain to be explored. For example, future studies could use continuous text mining techniques to dynamically track negative algorithmic experiences expressed by employees on social media or internal forums, and investigate how the intensity and frequency of such experiences affect their VB, as well as whether contextual factors such as leadership style (C. Yang et al., 2024) or coworker support act as important boundary conditions. Third, this study focused on cultural recognition as a mediating mechanism; future research could explore other explanatory pathways. For instance, from the perspective of Self-Determination Theory, researchers could examine whether negative algorithmic experiences inhibit intrinsic motivation and VB by thwarting employees’ basic psychological needs for autonomy, competence, and relatedness (Deci & Ryan, 2000). Finally, the data for this study were sourced from a public, third-party review platform. While this approach avoids common method bias, it cannot capture all dimensions of the employee experience. Future research could adopt mixed-methods approaches to gain a more comprehensive and in-depth understanding of employee experiences under algorithmic management.

Conclusion

Through an analysis of extensive employee reviews from the Glassdoor platform, this study draws the following main conclusions. First, we identified the core conceptual dimensions of negative algorithmic experiences, namely AI Matching and AI Control. We found that the inhibitory effect of AI Matching problems on VB is significantly stronger than that of AI Control problems. This finding highlights that in the gig economy, algorithmic efficiency, which directly affects employees’ immediate economic and temporal resources, is the primary factor influencing their work experience and citizenship behaviors. Second, cultural recognition is a key mediating mechanism in the process through which algorithmic management affects VB. The study confirms that negative algorithmic experiences indirectly lead to a reduction in VB by weakening employees’ cultural recognition. This mechanism reveals that algorithmic management is not merely a technical process but also a social and psychological one: poor algorithmic performance is interpreted by employees as a deficiency in organizational values, thereby damaging the social exchange relationship and psychological contract between employees and the platform. Third, WLB does not buffer the negative impact of negative algorithmic experiences on VB. This indicates that the fundamental predicament created by algorithms is more destructive than the superficial advantage of flexibility can offset. Platform enterprises cannot rely solely on work flexibility to compensate for the losses caused by their algorithms.

Footnotes

Acknowledgements

We are grateful for the financial support provided by the Fundamental Research Funds for the Heilongjiang Provincial Universities.

Ethical Considerations

This article does not contain any studies with human participants performed by any of the authors.

Consent to Participate

This article does not contain any studies with human or animal participants.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was financially supported by the Fundamental Research Funds for the Heilongjiang Provincial Universities (Grant No. 2024-KYYWF-0912).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are available from the corresponding author upon reasonable request.