Abstract

This study investigates how digital mindset, AI sensemaking, trust in AI, digital teaching competence, and institutional support influence AI integration among open education teachers in China. Drawing on Sensemaking Theory, the Digital Mindset Framework, and Algorithm Aversion Theory, the study employs a three-wave survey of 366 instructors in Guangdong Province and analyzes the data using Partial Least Squares Structural Equation Modeling (PLS-SEM). Findings reveal that digital mindset and AI sensemaking significantly predict AI integration, while trust in AI and digital teaching competence do not have direct or consistent mediating effects. Surprisingly, perceived institutional support negatively moderates the relationship between digital mindset and both AI sensemaking and trust, suggesting that overly directive support may undermine autonomy in open education settings. The study contributes theoretically by extending digital mindset and sensemaking frameworks to the AI-in-education context and offering a context-sensitive interpretation of trust theory. Practically, it highlights the need for autonomy-respecting, interpretive support structures and mindset-based training programs. These insights inform more effective AI adoption strategies in decentralized learning environments.

Plain Language Summary

This study shows that a teacher’s mindset—being open to change and willing to experiment—and their ability to make sense of how AI fits with teaching values are the strongest drivers of adoption. Surprisingly, technical skills and trust in AI systems do not guarantee use, and too much institutional pressure can even backfire by making teachers feel less autonomous. The results suggest that training should focus on building teachers’ confidence and interpretive skills, while institutional leaders should create flexible, supportive environments rather than imposing rigid mandates. These lessons can help education systems design AI strategies that respect teacher autonomy and encourage more effective, ethical use of AI in teaching.

Introduction

Artificial intelligence (AI) is rapidly reshaping education worldwide, promising to enhance personalization, automate routine tasks, and provide data-driven insights into student learning. By 2024, the global market for AI in education was valued at over USD 5.8 billion and is projected to exceed USD 32.27 billion by 2030 (Grand View Research, 2023). Governments are embedding AI into national strategies, and universities are redesigning digital infrastructures to integrate intelligent tutoring systems, predictive analytics, and adaptive platforms (Holmes & Tuomi, 2022; Luckin & Cukurova, 2019; OECD, 2021). In China, more than 50 billion RMB was invested between 2018 and 2022 under initiatives such as the New Generation Artificial Intelligence Development Plan and Education Informatization 2.0 Action Plan (Bhutoria, 2022; Zhu et al., 2023). Open and distance education (ODE) has been a major beneficiary of this investment: with more than 5 million active learners across provincial and city-level digital campuses, ODE institutions are expected to lead in the experimentation and scaling of AI-enabled instruction (Bozkurt & Stracke, 2023).

Despite these developments, a paradox persists. While Chinese ODE institutions report high levels of infrastructural readiness and policy support, the pedagogical use of AI remains limited. National surveys indicate that although over 70% of universities have introduced AI-supported systems, fewer than 30% of instructors report using them meaningfully in teaching and assessment (Chen et al., 2022; Lucas et al., 2024). Even among adopters, AI is most often applied to peripheral administrative tasks such as grading, attendance tracking, or analytics dashboards, rather than being integrated into lesson design or student engagement (Bozkurt, 2024; Register & Spiro, 2024). This gap between technological availability and pedagogical integration raises pressing questions for both policymakers and educators: why does investment not translate into widespread instructional adoption, especially in autonomy-rich ODE environments where teachers are expected to innovate?

Existing literature has provided only partial answers. Research on technology adoption in education remains dominated by competence-based and utilitarian models, particularly the Technology Acceptance Model (TAM) and the Unified Theory of Acceptance and Use of Technology (UTAUT; Ifenthaler & Yau, 2020; Venkatesh et al., 2012). These frameworks emphasize ease of use and perceived usefulness, offering valuable insights into the conditions that make adoption possible. Yet they do not sufficiently explain why instructors with comparable digital skills and infrastructural access differ so significantly in their willingness to embed AI in teaching (Dwivedi et al., 2023). The persistence of the adoption paradox suggests that deeper psychological, cognitive, and contextual dynamics may be at play.

One overlooked dimension is dispositional readiness, especially the digital mindset. Defined as a future-oriented, innovation-accepting orientation (Leonardi, 2021; Westerman et al., 2015), digital mindset has been shown in management and organizational research to influence openness to technological change (Khare et al., 2024). In the context of Chinese ODE, where instructors enjoy high pedagogical autonomy but limited structured support, such dispositions may be the decisive factor that separates proactive innovators from passive users. Yet empirical studies have rarely examined digital mindset in education, leaving its role in AI adoption under-theorized and untested.

Closely connected is the cognitive process of sensemaking. Teaching with AI requires educators to interpret ambiguous technologies, assess their alignment with pedagogical values, and reconcile ethical concerns such as bias and fairness. Sensemaking theory (Weick, 1995) suggests that behavior under uncertainty is shaped by the meanings individuals construct, rather than by technical affordances alone. Instructors in ODE—often working in isolation and with fewer opportunities for peer consultation—are particularly reliant on their interpretive capacities (Mouta et al., 2025; Wolf & Paine, 2020). Yet existing AI adoption research has largely overlooked sensemaking as an explanatory lens, treating adoption as a rational choice rather than an interpretive act (Siemens et al., 2022).

A further constraint is the issue of trust in AI systems. Studies in organizational behavior and decision sciences consistently highlight algorithm aversion—users’ reluctance to rely on automated systems, especially when errors are visible or explanations are opaque (Dietvorst et al., 2015; Glikson & Woolley, 2020). For educators, whose professional legitimacy depends on safeguarding student learning, trust is not merely a technical consideration but a pedagogical necessity. Trust can be defined as the willingness to accept vulnerability to algorithmic recommendations in consequential tasks (Mcknight et al., 2011), and it is conceptually distinct from its antecedents such as perceived competence, reliability, or ethical alignment (Osman et al., 2024). However, empirical evidence on how instructors develop or withhold trust in AI within classrooms remains scarce. Without this affective dimension, models of adoption risk overlooking a critical mediator of behavioral intention and action.

Finally, adoption cannot be separated from the institutional context. Most studies assume that institutional support universally facilitates adoption, but in ODE contexts characterized by high instructor autonomy, this assumption may not hold. Excessive or prescriptive institutional interventions can unintentionally constrain innovation by undermining autonomy (Galindo-Domínguez et al., 2024). This dynamic is particularly relevant in China, where ODE instructors balance top-down policy imperatives with local teaching discretion (C. Li et al., 2025). Yet little is known about when institutional support enables adoption and when it suppresses it.

Taken together, these gaps highlight the need for an integrative framework that considers dispositional (digital mindset), cognitive (sensemaking), affective (trust), and contextual (institutional support) factors (Bozkurt, 2024; Dwivedi et al., 2023). Addressing these gaps is critical to understanding why infrastructural readiness does not automatically produce pedagogical adoption, and how to design support structures that respect instructor autonomy while encouraging meaningful innovation.

This study responds to these challenges in three ways: (a) proposing a Dispositional–Cognitive–Contextual (DCC) framework that integrates digital mindset, AI sensemaking, and trust in AI as mechanisms shaping instructional integration; (b) examining the moderating role of perceived institutional support, theorized as a boundary condition that can either enable or inhibit adoption in autonomy-driven ODE environments; and (c) testing the framework empirically using a multi-wave survey of 366 instructors in Guangdong Province, one of China’s most digitally advanced but paradoxically under-integrated ODE systems.

In doing so, the study contributes to both theory and practice. Theoretically, it extends technology adoption research by moving beyond competence- and utility-driven models to highlight interpretive, dispositional, and contextual dynamics. Practically, it offers evidence-based guidance to policymakers and ODE leaders seeking to close the gap between infrastructure investment and pedagogical adoption, ensuring that the billions invested in AI-enabled education translate into authentic, scalable improvements in teaching and learning.

Literature Review

Drivers of AI Integration in Open Education

While prior studies on technology adoption in education have largely applied competence- and utility-based frameworks such as the Technology Acceptance Model (TAM) and the Unified Theory of Acceptance and Use of Technology (UTAUT), these approaches tend to reduce adoption to a linear outcome of perceived usefulness and ease of use (Alam et al., 2024; Osman et al., 2024). Such models explain conditions for usability but neglect the broader psychological, interpretive, and contextual dynamics that shape whether educators meaningfully embed AI into their teaching. This limitation is especially pronounced in open and distance education (ODE), where instructors exercise greater autonomy and must rely more heavily on their own orientations and judgments.

A growing body of research suggests that individual dispositions may play a decisive role in shaping adoption. The concept of a digital mindset—a future-oriented, innovation-accepting orientation (Leonardi, 2020)—has been shown in organizational contexts to foster openness to experimentation and proactive use of digital tools (Westerman et al., 2015). Yet in education, empirical work continues to focus on skills and competencies (Gerli et al., 2022), leaving the role of digital mindset underexplored. In ODE, where instructors must independently decide whether to experiment with AI, mindset may provide the psychological foundation that explains differences in uptake among equally competent teachers.

Dispositions alone, however, cannot account for adoption without attention to the cognitive process of sensemaking. Teaching with AI requires educators to interpret how technologies align with pedagogical goals, ethical standards, and professional values. Sensemaking theory (Weick, 1995) highlights that individuals act on the meanings they construct under conditions of ambiguity, not merely on technical affordances. Yet AI education studies seldom incorporate this perspective, continuing to privilege utilitarian drivers over interpretive work (Mouta et al., 2025). For ODE instructors—who often operate with minimal centralized guidance—the ability to make sense of AI’s role may determine whether they restrict its use to administrative tasks or adopt it for substantive pedagogical purposes.

The affective dimension of trust further conditions adoption. Research on algorithm aversion demonstrates that users are reluctant to rely on automated systems when errors are visible or when system operations are opaque (Dietvorst et al., 2015). For educators, whose legitimacy depends on safeguarding student learning, trust in AI represents more than confidence in reliability: it reflects ethical alignment, fairness, and professional responsibility (Osman et al., 2024). Despite its centrality, few studies have examined trust as a mediator between instructors’ orientations and their actual use of AI in teaching, leaving a gap in understanding how affective confidence shapes adoption decisions.

Finally, these processes are embedded within institutional contexts. Prior research often treats institutional support as an unquestioned facilitator, emphasizing training, technical assistance, and policy incentives (C. Li et al., 2025). Yet in autonomy-rich ODE environments, institutional signals may have unintended consequences. Prescriptive or heavy-handed support can be perceived as constraining, dampening rather than encouraging innovation (Galindo-Domínguez et al., 2024). This possibility has rarely been theorized in education research, despite its relevance to understanding when institutional support enables adoption and when it undermines instructor agency.

Taken together, these insights indicate that AI integration in ODE is shaped not only by technical competence or infrastructure but by the interaction of dispositional (digital mindset), cognitive (sensemaking), affective (trust), and contextual (institutional support) factors. Prior literature has treated these elements in isolation or neglected them altogether. Integrating them into a coherent model provides a stronger basis for explaining why ODE instructors vary in their adoption of AI and under what conditions institutional strategies succeed or fail. This recognition motivates the Dispositional–Cognitive–Contextual (DCC) framework advanced in this study.

Cognitive and Affective Drivers: Sensemaking and Trust in AI

Dispositions, however, are insufficient without processes that translate openness into behavior. Sensemaking theory (Weick, 1995) emphasizes how individuals construct meaning under ambiguity. In AI-mediated teaching, opacity is common: adaptive platforms flag “at-risk” students without rationale, dashboards generate predictions without context, and essay scorers apply criteria that instructors cannot audit. Instructors must interpret these signals—are they augmentative cues or unreliable artifacts? Studies show that educators’ frames strongly shape usage: some adopt AI alerts as formative feedback, others dismiss them as surveillance or error-prone noise (Mouta et al., 2025). In ODE, where collegial consultation is limited, this interpretive burden is heightened. A digital mindset can facilitate deeper sensemaking by encouraging experimentation, cue-seeking, and reframing, but sensemaking remains the mechanism that determines whether AI is seen as pedagogically legitimate.

Even constructive interpretations will not translate into integration without trust. Trust in AI should be defined as the willingness to be vulnerable to algorithmic outputs in consequential tasks (Mcknight et al., 2011), not conflated with competence or reliability beliefs. Prior work often mixes these dimensions, obscuring the distinct role of trust as a gatekeeping mechanism. Algorithm Aversion Theory (Dietvorst et al., 2015) provides nuance: users disengage when (a) errors are observable, (b) processes are opaque, or (c) systems lack repairability. In classrooms, these conditions are routine—adaptive quizzes may misclassify students, grading systems may penalize non-standard English, and chatbots may provide factually incorrect explanations. In such cases, trust collapses even when overall accuracy is high. Conversely, human-in-the-loop designs with transparent rationales and override functions foster reliance. For ODE instructors, whose legitimacy rests on protecting student outcomes, trust therefore acts as the affective hinge: without it, sensemaking leads only to informed rejection.

Contextual Moderators: Institutional Support as Enabler or Constraint

Finally, adoption occurs within institutional contexts that can either enable or inhibit these dispositional and interpretive processes. Prior literature treats perceived institutional support (PIS) as a straightforward facilitator, highlighting resources, training, and technical assistance (Gbobaniyi et al., 2023). Yet in autonomy-rich ODE environments, the effects of support are more nuanced. Support that is perceived as enabling (such as voluntary workshops, opt-in pilots, or protected experimentation spaces) signals trust in instructor autonomy and strengthens the links from digital mindset to sensemaking and from sensemaking to trust. In contrast, prescriptive support, such as mandated use of centralized dashboards or quotas for AI-based feedback, can trigger resistance. Galindo-Domínguez et al. (2024) found that instructors often comply superficially with mandated systems while continuing to rely on traditional practices for high-stakes decisions, producing a use–depth gap.

This paradox explains why institutions can report high “AI usage” while adoption remains shallow. Instructors strategically position AI at the administrative periphery to meet compliance requirements while insulating pedagogy from perceived external control. Such dynamics are rarely theorized in mainstream adoption models, yet they are essential to understanding ODE. Institutional support is therefore best conceptualized as a boundary condition: it amplifies adoption when aligned with autonomy but constrains it when experienced as coercive.

Theoretical Foundations

The theoretical basis of this study rests on the integration of three perspectives—Digital Mindset (DM), Sensemaking Theory, and Trust Theory, particularly Algorithm Aversion Theory—to explain how educators in open education environments engage with artificial intelligence (AI). While these frameworks have been applied independently in domains such as organizational change, digital transformation, and human–algorithm interaction, they have rarely been synthesized in educational contexts where autonomy, uncertainty, and minimal oversight dominate. This integration allows us to conceptualize AI adoption not merely as a matter of perceived usefulness and ease of use, as emphasized by TAM and UTAUT, but as a dispositional–cognitive–affective process shaped by contextual boundaries.

At the dispositional level, DM provides a psychological orientation that underpins educators’ openness to digital innovation. A digital mindset reflects future orientation, comfort with uncertainty, and a proactive approach to experimentation with emerging technologies (Leonardi, 2020). Unlike digital teaching competence, which emphasizes measurable skills, DM highlights educators’ interpretive flexibility and willingness to explore new practices. This distinction is particularly salient in open and distance education (ODE), where instructors often have the autonomy to decide whether, how, and to what extent they use AI. For example, instructors with similar technical competence may diverge significantly in adoption because their orientations toward experimentation differ. Hence, DM serves as the initial trigger that influences how educators approach AI, setting the stage for subsequent interpretive and affective processes.

Sensemaking provides the cognitive mechanism through which these orientations are translated into action. According to Weick (1995), individuals faced with ambiguity construct meaning through interpretation, framing, and reconstruction of experiences. Teaching with AI involves navigating opaque systems, such as adaptive learning platforms or predictive analytics dashboards, that often lack transparency. Instructors must therefore decide whether to frame AI as a supportive partner in pedagogy or as a threat to professional autonomy. Prior studies have shown that sensemaking mediates the relationship between exposure to new technologies and behavioral action under uncertainty (Wolf & Paine, 2020). In ODE contexts, where collegial scripts are limited and teachers often work in isolation, sensemaking becomes especially critical. A digital mindset shapes this interpretive process by encouraging educators to search for cues, test applications, and reframe challenges as opportunities. Thus, sensemaking is positioned in this study as a mediator that links DM to meaningful AI integration.

While cognitive interpretation is necessary, it is insufficient without the affective dimension of trust. Trust in AI represents the willingness to be vulnerable to algorithmic systems; it rests on, but remains distinct from, beliefs about their competence, reliability across tasks, and ethical alignment with educational values (Mcknight et al., 2011). Algorithm Aversion Theory (Dietvorst et al., 2015) highlights that users often reject algorithms after witnessing even minor errors, particularly when outputs are opaque or when systems provide no feedback loops for correction. In classrooms, these risks are amplified: an adaptive quiz that misclassifies a student or a grading tool that penalizes non-standard language can directly affect learners’ outcomes and undermine instructors’ legitimacy. Thus, trust functions as the gatekeeper between openness and action. Even educators with high DM and strong sensemaking capabilities may refuse to adopt AI if they perceive its errors as unrepairable or its operations as opaque. Conversely, when systems provide transparent rationales and allow human-in-the-loop corrections, trust can grow over time, enabling deeper integration.

The interplay of these mechanisms unfolds within an institutional context that acts as a boundary condition. Perceived institutional support (PIS) is often assumed to unilaterally facilitate adoption through training, resources, and policy incentives (Eisenberger & Stinglhamber, 2011). However, in autonomy-rich ODE environments, institutional interventions can have paradoxical effects. Support that is enabling—such as voluntary workshops, sandbox experimentation, or opt-in pilots—reinforces DM and strengthens sensemaking and trust. In contrast, prescriptive interventions, such as mandated dashboards or usage quotas, may undermine autonomy and erode trust, leading to superficial or resistant adoption. This contextual sensitivity is often overlooked in the adoption literature, yet it is essential to explain why infrastructure investment does not automatically translate into pedagogical innovation in Chinese ODE.

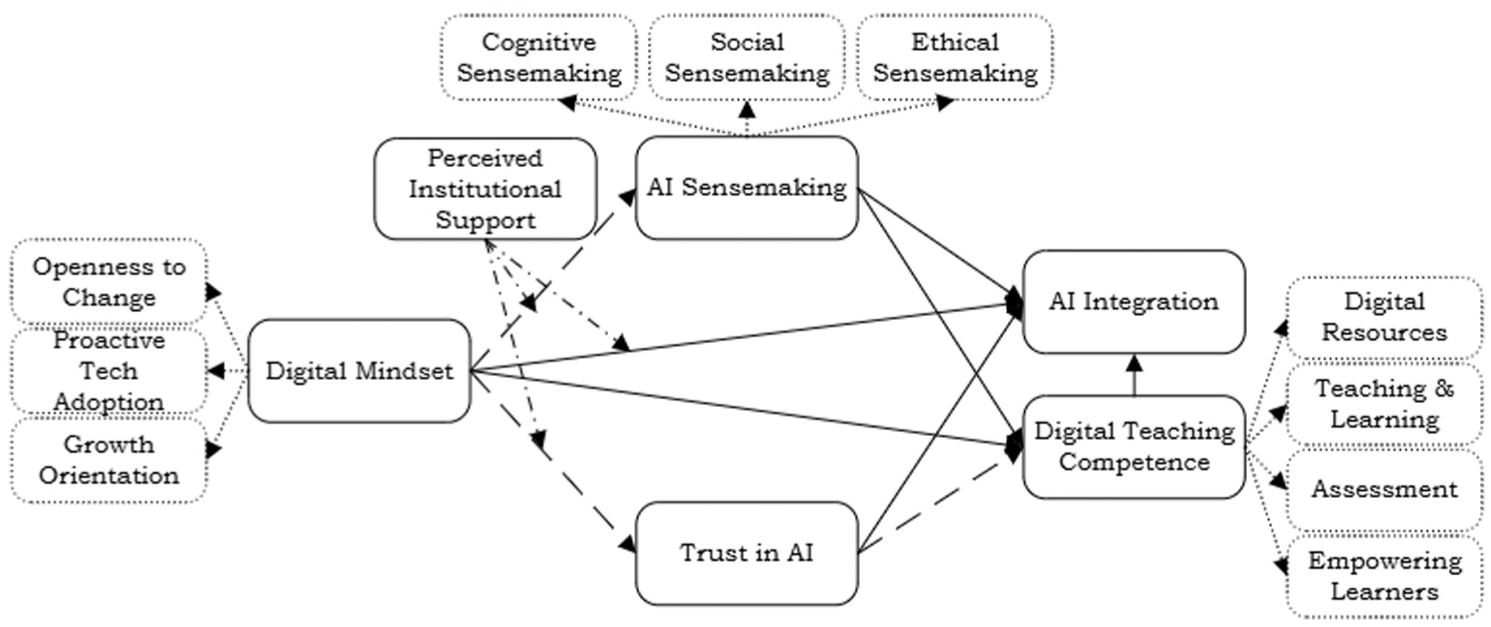

Taken together (Figure 1), these perspectives form a coherent explanatory chain. DM establishes dispositional readiness, which influences the depth and direction of sensemaking, while trust determines whether interpretation becomes action. Institutional support moderates each link, amplifying or constraining the pathways through which dispositions and cognitions translate into behavior. This integration provides a more nuanced alternative to TAM and UTAUT by conceptualizing AI adoption as a function of interpretive depth, dispositional agility, and trust calibration, situated within institutional contexts. By weaving these theories into a single framework, this study addresses the adoption paradox in ODE and advances a richer understanding of why educators with similar competencies and resources arrive at divergent decisions about AI integration.

Research framework.

Hypothesis Development

Digital Mindset and AI Integration

The successful integration of artificial intelligence (AI) in educational contexts hinges not only on infrastructural readiness but also on teachers’ psychological orientation toward technology. The concept of a DM—characterized by openness to change, innovation acceptance, and a belief in the transformative power of digital tools—has emerged as a key determinant of technology-driven pedagogical reform (Leonardi, 2021; Pietsch & Mah, 2024). In particular, open education teachers, who often operate autonomously in digitally saturated environments, rely heavily on self-directed decision-making. For example, instructors in China’s Open University system often independently select AI-based analytics dashboards to track student performance, without real-time institutional guidance or peer support. For these educators, DM functions as a critical enabler, shaping how AI is perceived—not as a threat, but as an opportunity for instructional innovation (Sulistiyani et al., 2025; Zhang & Zhang, 2024).

Empirical studies indicate that teachers with strong digital orientations are more inclined to experiment with AI, although personal experience with such tools often remains limited (Galindo-Domínguez et al., 2024; Lucas et al., 2024). Because this inclination appears before extensive hands-on experience, it is posited that DM acts as a precursor to behavioral adoption in open educational contexts.

AI Sensemaking and AI Integration

AI integration entails more than technical competence; it requires educators to interpret the purpose, value, and implications of AI systems within their pedagogical frameworks. Sensemaking Theory (Weick, 1995) provides a powerful lens for examining how teachers respond to uncertainty and ambiguity introduced by AI, particularly in its potential to alter instructional roles and ethical responsibilities.

AI tools often lack transparency and pedagogical alignment, necessitating cognitive and social processes of interpretation. Teachers must not only assess how AI supports learning objectives but also reconcile its use with broader educational values. For instance, instructors may struggle to accept AI-generated student risk alerts when the decision-making criteria are not transparent, potentially conflicting with their commitment to inclusive pedagogy. Studies confirm that AI adoption is mediated by how its utility is interpreted through organizational and professional lenses (X. Li et al., 2024; Madan & Ashok, 2024). In the open education context, where peer collaboration and structured guidance may be minimal, sensemaking becomes a vital mechanism for bridging the gap between interest and actual use (Siemens et al., 2022; Sudeeptha et al., 2024).

Digital Teaching Competence and AI Integration

Although attitudinal readiness and interpretive capacity are important, they must be coupled with Digital Teaching Competence (DTC) to yield effective AI integration. DTC encompasses a teacher’s ability to select, adapt, and apply digital tools in pedagogically meaningful ways, including lesson planning, content curation, assessment, and learner support (Pujeda, 2023; Simut et al., 2024).

Teachers with higher digital proficiency are more likely to trust and effectively use AI, as they possess both the confidence and capability to evaluate its outputs and align them with instructional goals (Lucas et al., 2024). For example, an instructor who receives AI-generated insights on student misconceptions can translate them into personalized feedback or restructured content delivery. Moreover, as AI systems increasingly incorporate adaptive algorithms and learning analytics, competencies such as data literacy and ethical digital judgment become central to meaningful adoption (Scarci et al., 2024).

Moderating Effects: Role of Perceived Institutional Support

While DM provides the psychological orientation for educators to explore and adopt technological innovations, its translation into meaningful AI integration is neither automatic nor consistent. This variability is shaped, in part, by the institutional environment, which acts as a contextual moderator that either enables or constrains the enactment of individual dispositions (Weick, 1995). In high-autonomy settings such as open education, institutional scaffolding—if misaligned—may suppress rather than support initiative, making the role of support systems a critical boundary condition.

According to Sensemaking Theory, individuals do not interpret new technologies in isolation; instead, their interpretations are influenced by social and organizational structures that provide cues about appropriateness, safety, and pedagogical alignment (Bani-Hani et al., 2021). In this context, Perceived Institutional Support (PIS)—defined as educators’ perceptions of the extent to which their institution provides resources, training, policy clarity, and leadership encouragement for AI use—shapes how a DM is cognitively and affectively enacted.

For example, when institutions introduce AI-supported teaching tools in tandem with structured faculty workshops or create dedicated spaces for experimentation and co-design, educators are better positioned to interpret AI technologies as pedagogically viable rather than externally imposed (Biccard & Sibisi, 2024). These supportive cues serve as meaning-making anchors, facilitating deeper engagement with AI through enhanced AI Sensemaking (AIS). Therefore, PIS is expected to moderate the relationship between DM and Sensemaking, amplifying the impact of mindset when institutional signals are empowering and coherent.

Similarly, trust in AI is not purely dispositional; it is socially constructed and often emerges from organizational signaling about fairness, transparency, and ethical use. Institutions that invest in AI explainability training, data governance protocols, and faculty co-creation of AI guidelines foster a climate of psychological safety that strengthens educators’ confidence in algorithmic tools (Lai-Wan et al., 2023; J. Li et al., 2021). These signals mitigate algorithm aversion and enable even initially skeptical teachers to develop trust in AI systems. Thus, PIS is theorized to moderate the relationship between DM and Trust in AI, particularly in environments where AI-related risks are salient.

Furthermore, PIS directly shapes the extent to which a DM results in actual behavioral integration of AI. Teachers who are dispositionally open but face institutional barriers—such as vague policies, insufficient support, or lack of autonomy-respecting infrastructure—may become disengaged or revert to conventional pedagogies. Conversely, institutions that offer innovation grants, professional communities of practice, or inclusive AI policy frameworks can empower digitally motivated teachers to translate intention into action (H. Ahmed, 2024; Madan & Ashok, 2024). Thus, PIS is proposed as a moderator of the direct link between DM and AI Integration.
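The moderation logic described above can be illustrated with a minimal sketch. It assumes an ordinary least squares regression with a mean-centered product term, which is a simplification and not the PLS-SEM procedure used in this study. The data are synthetic, the negative interaction is an arbitrary choice for illustration, and the names `fit_moderation`, `simple_slope`, `dm`, `pis`, and `ais` are hypothetical.

```python
import numpy as np


def fit_moderation(x, m, y):
    """Fit y = b0 + b1*x + b2*m + b3*(x*m) by OLS, with x and m mean-centered."""
    xc = x - x.mean()
    mc = m - m.mean()
    design = np.column_stack([np.ones_like(xc), xc, mc, xc * mc])
    coefs, *_ = np.linalg.lstsq(design, y, rcond=None)
    return dict(zip(["intercept", "dm", "pis", "dm_x_pis"], coefs))


def simple_slope(coefs, pis_centered_value):
    """Slope of the outcome on DM at a given (centered) level of PIS."""
    return coefs["dm"] + coefs["dm_x_pis"] * pis_centered_value


# Synthetic data only (assumption): not the study's survey responses.
rng = np.random.default_rng(0)
n = 2000
dm = rng.normal(size=n)    # digital mindset score
pis = rng.normal(size=n)   # perceived institutional support score
ais = 0.5 * dm + 0.1 * pis - 0.2 * dm * pis + rng.normal(scale=0.5, size=n)

coefs = fit_moderation(dm, pis, ais)

# Compare the DM slope one standard deviation below and above mean PIS.
sd = pis.std()
slope_low = simple_slope(coefs, -sd)
slope_high = simple_slope(coefs, sd)
print(coefs["dm_x_pis"], slope_low, slope_high)
```

A positive product-term coefficient would indicate that support amplifies the effect of DM, whereas a negative coefficient would indicate that it dampens the effect. Comparing the simple slopes at low and high PIS makes the direction of moderation easier to interpret than the coefficient alone.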

Trust in AI as a Mediator

Teachers may possess a DM, yet refrain from using AI tools unless they trust the system’s fairness, transparency, and pedagogical value. Trust in AI thus acts as a psychological enabler, translating attitudinal readiness into practical behavior (S. Ahmed & Aziz, 2025). Obenza et al. (2023) demonstrated that trust mediates the link between self-efficacy and AI attitudes, while Lucas et al. (2024) confirmed that trust significantly affects AI use among educators. For instance, teachers may avoid AI-based formative assessment tools if they suspect bias in question generation—even if they are technologically confident. Trust mediates this tension by reducing psychological distance and enhancing user confidence (Meenal & Amit, 2024).

AI Sensemaking as a Mediator

Even with a strong DM, educators must cognitively interpret how AI technologies align—or conflict—with their teaching philosophies and goals. Sensemaking enables this by guiding meaning attribution, allowing teachers to negotiate AI’s role amid ambiguity (Weick, 1995). In highly autonomous teaching environments, such as open universities, this interpretive process becomes a gateway from disposition to behavior. For example, an educator unsure about AI-generated course pacing suggestions must reconcile those with learner engagement patterns and curriculum flow. When this reconciliation is successful, integration follows. Studies confirm that sensemaking mediates the link between openness to AI and its actual adoption (Sudeeptha et al., 2024).

Digital Teaching Competence as a Mediator

Trust in AI reflects confidence in the system; however, teachers also require operational competence to apply it effectively. Even with trust, implementation is unlikely without digital fluency. Teachers with high DTC can, for example, critically assess AI-generated student insights, interpret dashboards, and adjust instruction accordingly. This conversion of trust into action is increasingly essential as AI becomes embedded in instructional systems. Empirical findings affirm that DTC is a critical mediator between psychological readiness and practical implementation (Ayanwale et al., 2024; Galindo-Domínguez et al., 2024; Hava & Babayiğit, 2024).

Methodology

Research Context

This study examines AI integration in China’s open and distance education (ODE) system, focusing on Guangdong Province, a nationally recognized locus of educational digitalization. Guangdong has been central to pilots of the

Sampling Frame and Participants

The sampling frame comprised instructors at recognized open universities and digital campuses across Guangdong. Institutions were identified through the Guangdong Department of Education and cross-verified with national sources (Open University of China; National Online Education Resource Center) to ensure coverage and institutional diversity. Eligibility required (a) active teaching responsibilities in 2024 to 2025, (b) access to at least one AI-supported platform (e.g., automated grading, analytics dashboards), and (c) experience with asynchronous or flexible formats characteristic of ODE. A multi-wave purposive design aligned measurement with the theorized temporal ordering of antecedents, mediators, and outcomes.

Data Collection Procedure

Data were collected in three waves over 5 months (November 2024 to March 2025), so that predictors, mediators, and outcomes were measured at different points in time. Each wave was administered via institutional email and official academic WeChat channels using identical consent procedures.

Attrition diagnostics (dropout models and T1 mean comparisons) indicated no differences by DM, PIS, gender, age, or experience (all

Non-Response and Bias Control

Procedural safeguards included temporal separation of constructs across waves, randomized item order, neutral wording, and assurances of anonymity and confidentiality. As a diagnostic, early–late response comparisons revealed no significant differences (

Measurement Instrument

All constructs were measured as reflective latent variables using multi-item scales adapted to the ODE–AI context (Appendix A). Digital Mindset (DM) was defined as educators’ orientation toward digital change, reflected in future orientation, tolerance for uncertainty, and proactive experimentation (Agarwal & Prasad, 1998; Dweck, 2006; Leonardi, 2020; Oreg & Katz-Gerro, 2006; Parasuraman, 2000; Pietsch & Mah, 2024). AI Sensemaking (AIS) captured the interpretive work that confers pedagogical meaning to AI outputs, measured across content sense, explanatory sense, and social sense (Bani-Hani et al., 2021; Siemens et al., 2022; Sudeeptha et al., 2024).

Trust in AI (TAI) was conceptualized as the

Digital Teaching Competence (DTC) represented instructors’ capability to enact AI-supported pedagogy, spanning assessment design, analytics literacy, learner orchestration, and empowerment (Kohtamäki et al., 2016; Madan & Ashok, 2024; Mishra & Koehler, 2006; Redecker, 2017; Tondeur et al., 2017). Perceived Institutional Support (PIS) was measured through four items adapted from Eisenberger and Cameron (1996) and Gbobaniyi et al. (2023), focusing on the autonomy-aligned usefulness of institutional resources for AI integration. AI Integration (AII) was expanded to eight items capturing the breadth and depth of adoption, including design, formative and summative feedback, learning analytics, personalization, orchestration, resource generation, and iterative refinement (Venkatesh et al., 2003; Yu & Li, 2022).

All items were adapted through a two-stage validation process: (a) expert review by three domain specialists and two instructional-design researchers to ensure content validity and contextual fit, and (b) a pilot with eight ODE instructors to assess clarity, terminology alignment, and cognitive interpretability. Minor revisions were made to match common AI-related vocabulary in Chinese ODE institutions. All scales were anchored on a five-point Likert response format (1 = strongly disagree, 5 = strongly agree).

Higher-order specification. DM and DTC were modeled as reflective–reflective hierarchical component models, consistent with their construct ontology: dimensions (e.g., DM → future orientation/uncertainty/proactivity; DTC → design/analytics/orchestration) are manifestations of a broader underlying domain. Treating them as formative would imply causal independence among facets, which is conceptually inconsistent with dispositional and capability-based constructs.

Data Analysis Strategy

Descriptive statistics were generated in SPSS 26, and structural relationships were estimated using PLS-SEM in SmartPLS 4. PLS was chosen over CB-SEM because it prioritizes variance explanation and prediction, accommodates hierarchical component models, and is robust to non-normal data with modest samples (Alam et al., 2025). CB-SEM, by contrast, emphasizes global model fit and assumes multivariate normality, which were less aligned with the goals and data characteristics of this study.

Indirect effects were tested using bias-corrected bootstrapping with 5,000 resamples (95% confidence intervals). Predictive validity was assessed using PLSpredict with k-fold cross-validation; RMSE and MAE were decomposed at both indicator and construct levels (Shmueli et al., 2019), enabling robust assessment of out-of-sample prediction. It is acknowledged that instructors in this study were nested within institutions, which could introduce shared variance due to contextual factors such as institutional policies, culture, or infrastructure.
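To make the bootstrapping step concrete, the following is a minimal sketch of a bias-corrected bootstrap confidence interval for a single indirect effect (a × b). It is not the SmartPLS 4 procedure used in the study: it substitutes ordinary least squares on simulated observed scores for the PLS path estimates, and the function names, the simulated coefficients, and the reduced number of resamples in the demonstration are assumptions made purely for illustration.

```python
import random
from statistics import NormalDist


def _cov(u, v):
    """Sample covariance of two equal-length sequences."""
    mu, mv = sum(u) / len(u), sum(v) / len(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / (len(u) - 1)


def indirect_effect(x, m, y):
    """a*b, where a is the slope of M on X and b is the partial slope of Y on M given X."""
    sxx, smm = _cov(x, x), _cov(m, m)
    sxm, sxy, smy = _cov(x, m), _cov(x, y), _cov(m, y)
    a = sxm / sxx
    b = (smy * sxx - sxy * sxm) / (smm * sxx - sxm ** 2)
    return a * b


def bc_bootstrap_ci(x, m, y, n_boot=5000, level=0.95, seed=0):
    """Bias-corrected percentile bootstrap interval for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    estimate = indirect_effect(x, m, y)
    boots = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        boots.append(indirect_effect([x[i] for i in idx],
                                     [m[i] for i in idx],
                                     [y[i] for i in idx]))
    boots.sort()
    nd = NormalDist()
    # z0 measures how far the bootstrap distribution is shifted from the estimate.
    share_below = sum(b < estimate for b in boots) / n_boot
    share_below = min(max(share_below, 1 / (n_boot + 1)), n_boot / (n_boot + 1))
    z0 = nd.inv_cdf(share_below)
    z_crit = nd.inv_cdf(1 - (1 - level) / 2)
    lower_p = nd.cdf(2 * z0 - z_crit)
    upper_p = nd.cdf(2 * z0 + z_crit)

    def pick(p):
        return boots[min(n_boot - 1, max(0, int(p * n_boot)))]

    return estimate, pick(lower_p), pick(upper_p)


# Simulated data (hypothetical coefficients; n mirrors the reported sample size).
rng = random.Random(42)
x = [rng.gauss(0, 1) for _ in range(366)]
m = [0.5 * xi + rng.gauss(0, 1) for xi in x]
y = [0.4 * mi + 0.2 * xi + rng.gauss(0, 1) for xi, mi in zip(x, m)]

# 1,000 resamples keep the demo fast; pass n_boot=5000 to match the study's setting.
estimate, ci_low, ci_high = bc_bootstrap_ci(x, m, y, n_boot=1000)
print(f"indirect effect = {estimate:.3f}, 95% BC CI = [{ci_low:.3f}, {ci_high:.3f}]")
```

An interval that excludes zero is conventionally read as support for mediation.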

Data Analysis

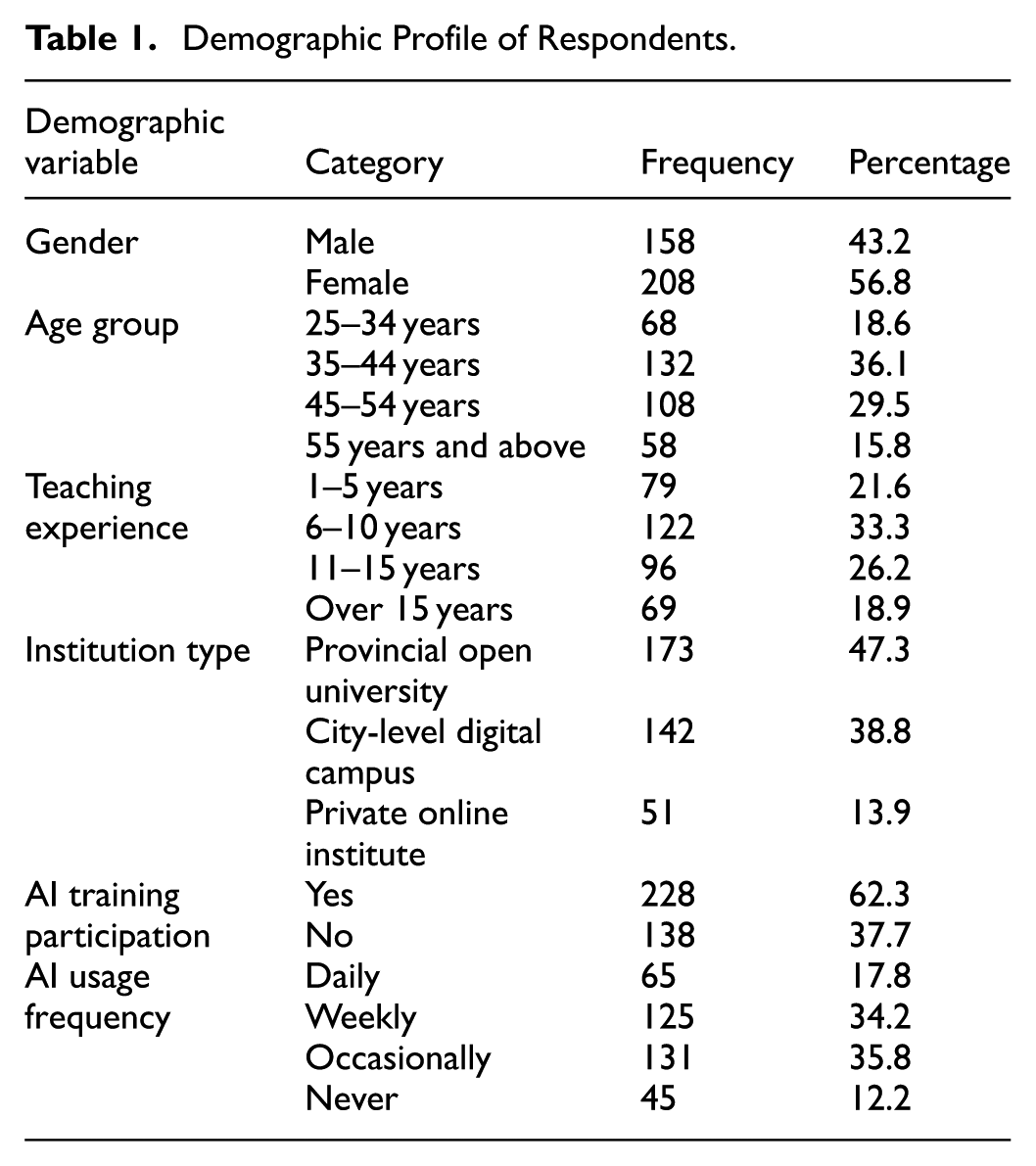

Out of the 520 distributed questionnaires, a total of 366 open education teachers successfully completed and submitted valid responses across all three waves of data collection, yielding a final response rate of 70.4%. Table 1 shows the demographic characteristics of the respondents. A total of 56.8% of the respondents in this study were female, while 43.2% were male. In terms of age, the largest group of respondents was aged between 35 and 44 years (36.1%), followed by those in the 45 to 54 age group (29.5%). Respondents aged 25 to 34 accounted for 18.6% of the sample, while those 55 years and above made up 15.8%. Regarding teaching experience, 33.3% of participants had been teaching for 6 to 10 years, followed by 26.2% with 11 to 15 years of experience, and 21.6% with 1 to 5 years. The remaining 18.9% reported over 15 years of teaching experience. With respect to institutional affiliation, 47.3% of the respondents worked at provincial open universities, followed by 38.8% from city-level digital campuses and 13.9% from private online institutes. As for AI training participation, 62.3% of respondents indicated that they had undergone formal AI-related training, while the remaining 37.7% had not received such training. In terms of AI usage frequency, 35.8% of the teachers reported occasional use of AI in their teaching, followed by 34.2% who used AI on a weekly basis. A smaller proportion (17.8%) indicated daily usage, while 12.2% reported that they never used AI in their instructional practice.

Table 1. Demographic Profile of Respondents.

Measurement Model Statistics

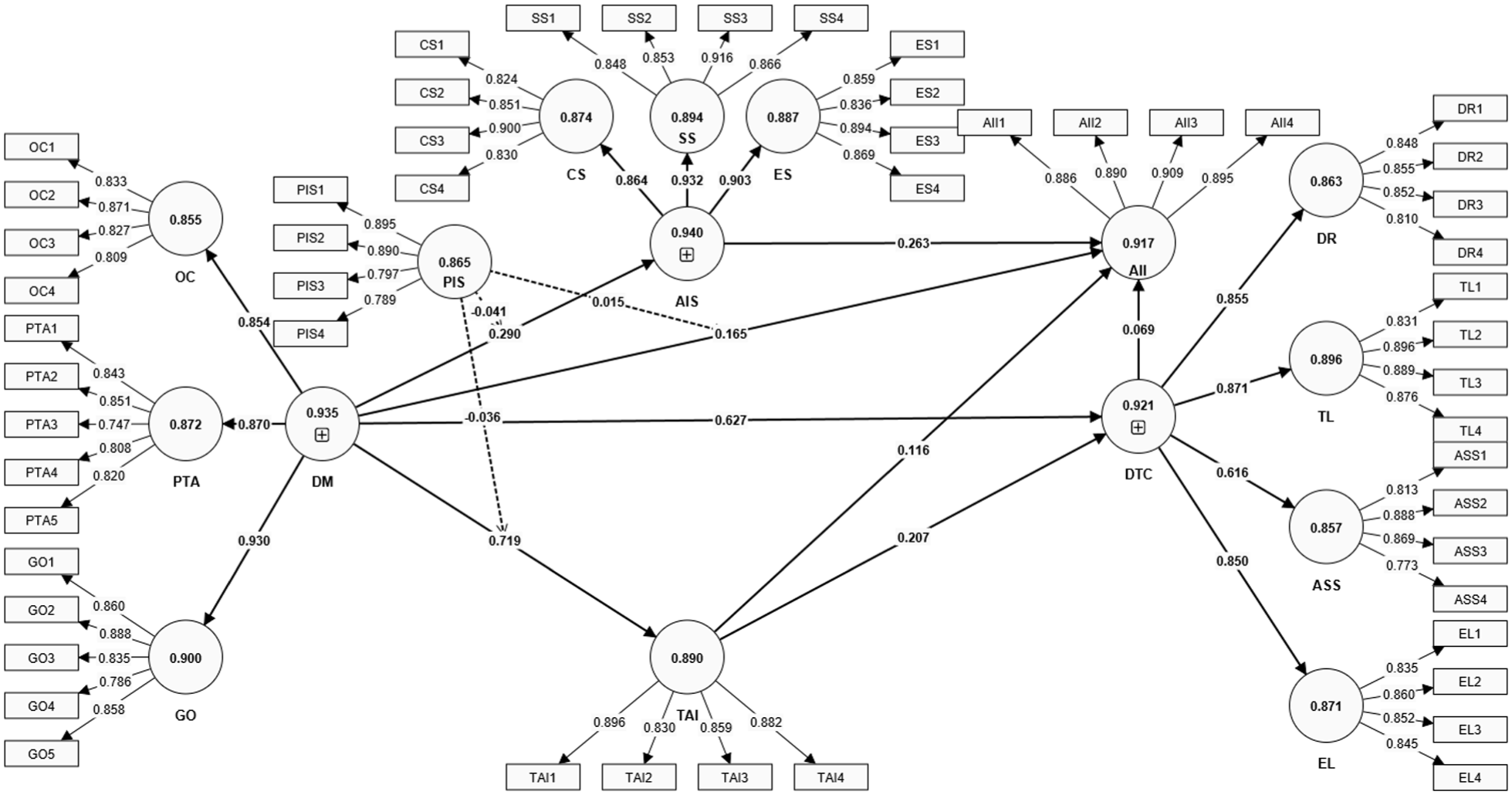

The measurement model (Figure 2) was evaluated to ensure the reliability and validity of all reflective constructs. The assessment included indicator reliability (outer loadings), internal consistency reliability (Cronbach’s Alpha and Composite Reliability), convergent validity (Average Variance Extracted), and discriminant validity (HTMT and Fornell–Larcker criteria).

Figure 2. Measurement model.

Table 2 presents the outer loadings, VIF values, Cronbach’s Alpha (CA), Composite Reliability (CR), and Average Variance Extracted (AVE) for each construct. All outer loadings exceeded the recommended threshold of 0.70 (Hair et al., 2022), confirming acceptable indicator reliability. The CA values ranged from .855 to .917 and CR values from 0.902 to 0.941, all surpassing the 0.70 benchmark, indicating strong internal consistency. The AVE for all constructs exceeded the 0.50 threshold (Fornell & Larcker, 1981), thereby confirming convergent validity.
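The reliability and convergent validity indices reported above follow standard formulas based on standardized outer loadings. The sketch below computes composite reliability and AVE for a single reflective construct and checks them against the thresholds cited in the text. The four loadings are hypothetical values chosen for illustration, not figures from Table 2.

```python
def composite_reliability(loadings):
    """Composite reliability from standardized outer loadings of one reflective construct."""
    total = sum(loadings)
    error_variance = sum(1 - l ** 2 for l in loadings)
    return total ** 2 / (total ** 2 + error_variance)


def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


# Hypothetical loadings for a four-item construct (not taken from the study).
hypothetical_loadings = [0.82, 0.85, 0.88, 0.80]

cr = composite_reliability(hypothetical_loadings)
ave = average_variance_extracted(hypothetical_loadings)

# Thresholds as cited in the text: loadings and CR above 0.70, AVE above 0.50.
indicator_reliability_ok = all(l > 0.70 for l in hypothetical_loadings)
print(f"CR = {cr:.3f}, AVE = {ave:.3f}, loadings ok = {indicator_reliability_ok}")
```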

Table 2. Measurement Model Statistics.

Notably, AII demonstrated excellent internal reliability with CR = 0.941 and AVE = 0.801. Constructs like DR, EL, and AI also reflected high internal consistency and convergent validity (CRs above 0.90 and AVEs > 0.70). All VIF values were below 3.5, indicating no multicollinearity concerns.

Discriminant validity was assessed using both the Fornell–Larcker criterion (FLC) and the heterotrait–monotrait ratio (HTMT). As shown in Table 3, the square root of each construct’s AVE (diagonal) was greater than the inter-construct correlations (off-diagonals), fulfilling the Fornell–Larcker condition. Furthermore, all HTMT values were below the 0.90 threshold, indicating discriminant validity across constructs (Henseler, 2018).
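As an illustration of the two discriminant validity checks, the sketch below computes the HTMT ratio for one pair of constructs from an item correlation matrix and applies the Fornell–Larcker comparison. The correlation matrix, the AVE values, and the construct correlation are hypothetical and are not drawn from Table 3.

```python
from math import sqrt


def _mean(values):
    return sum(values) / len(values)


def htmt(corr, items_a, items_b):
    """Mean between-construct item correlation divided by the geometric mean
    of the average within-construct item correlations."""
    hetero = [corr[i][j] for i in items_a for j in items_b]
    mono_a = [corr[i][j] for i in items_a for j in items_a if i < j]
    mono_b = [corr[i][j] for i in items_b for j in items_b if i < j]
    return _mean(hetero) / sqrt(_mean(mono_a) * _mean(mono_b))


def fornell_larcker_holds(ave_a, ave_b, construct_corr):
    """True if the square root of each AVE exceeds the inter-construct correlation."""
    return min(sqrt(ave_a), sqrt(ave_b)) > abs(construct_corr)


# Hypothetical correlations among six items: rows 0-2 belong to construct A, rows 3-5 to B.
R = [
    [1.00, 0.70, 0.65, 0.40, 0.38, 0.42],
    [0.70, 1.00, 0.72, 0.41, 0.39, 0.40],
    [0.65, 0.72, 1.00, 0.37, 0.43, 0.40],
    [0.40, 0.41, 0.37, 1.00, 0.68, 0.66],
    [0.38, 0.39, 0.43, 0.68, 1.00, 0.71],
    [0.42, 0.40, 0.40, 0.66, 0.71, 1.00],
]

htmt_ab = htmt(R, items_a=[0, 1, 2], items_b=[3, 4, 5])
fl_ok = fornell_larcker_holds(ave_a=0.70, ave_b=0.65, construct_corr=0.55)
print(f"HTMT = {htmt_ab:.3f} (below 0.90: {htmt_ab < 0.90}), Fornell-Larcker holds: {fl_ok}")
```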

Table 3. Discriminant Validity.

The second-order reflective constructs—DTC, AIS, and DM—were validated through repeated indicator modeling. As shown in Table 4, all dimensions significantly contributed to their respective higher-order constructs. For DTC, the dimension TL had the highest loading (β = .871,

Table 4. Outer Loading Composition of Higher Order Constructs.

Model Fit and Predictive Relevance

The structural model was evaluated using key indicators of explanatory and predictive performance, including

Table 5. Model Fit and Predictive Statistics.

Predictive relevance was assessed using Stone-Geisser’s

Prediction error metrics further support this conclusion. RMSE values ranged from 0.482 to 0.600, while MAE ranged from 0.352 to 0.442—within acceptable thresholds for behavioral prediction models. These findings validate the model’s theoretical structure and underscore its utility in forecasting AI-related instructional behavior among open education teachers in China.
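The logic behind out-of-sample error metrics of this kind can be sketched as follows. This is not PLSpredict itself: a one-predictor least-squares line stands in for the PLS path model, the data are simulated, and the function names and fold count are assumptions. The sketch shows how k-fold cross-validation yields RMSE and MAE on held-out cases, and how a prediction-based Q² compares the model's squared error with that of a naive benchmark that always predicts the training-sample mean.

```python
import math
import random


def fit_line(x, y):
    """Least-squares intercept and slope for a single predictor."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    return my - slope * mx, slope


def kfold_predict_metrics(x, y, k=10, seed=0):
    """Out-of-sample RMSE, MAE, and Q2 relative to a training-mean benchmark."""
    idx = list(range(len(x)))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    sq_err = abs_err = sq_err_naive = 0.0
    for holdout in folds:
        held = set(holdout)
        train = [i for i in idx if i not in held]
        x_train = [x[i] for i in train]
        y_train = [y[i] for i in train]
        intercept, slope = fit_line(x_train, y_train)
        y_bar = sum(y_train) / len(y_train)  # naive benchmark prediction
        for i in holdout:
            error = y[i] - (intercept + slope * x[i])
            sq_err += error ** 2
            abs_err += abs(error)
            sq_err_naive += (y[i] - y_bar) ** 2
    n = len(x)
    return {
        "rmse": math.sqrt(sq_err / n),
        "mae": abs_err / n,
        "q2_predict": 1 - sq_err / sq_err_naive,
    }


# Simulated scores (hypothetical effect size; n mirrors the reported sample size).
rng = random.Random(7)
x = [rng.gauss(0, 1) for _ in range(366)]
y = [0.5 * xi + rng.gauss(0, 0.6) for xi in x]

metrics = kfold_predict_metrics(x, y, k=10)
print({name: round(value, 3) for name, value in metrics.items()})
```

A positive Q² means the model predicts held-out cases better than the naive mean. MAE can never exceed RMSE, so a wide gap between the two points to a few large prediction errors.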

Hypothesis Testing and Discussion

The structural model results (Figure 3, Table 6) shed light on the complex and sometimes paradoxical dynamics of AI integration in open and distance education (ODE). The direct effect of Digital Mindset (DM) on AI integration (β = .248,

Figure 3. Structural model.

Table 6. Structural Model Statistics.

AI Sensemaking (AIS) provides this interpretive bridge. Its effect on integration (β = .288,

By contrast, Digital Teaching Competence (DTC) did not significantly predict AI integration (β = .069,

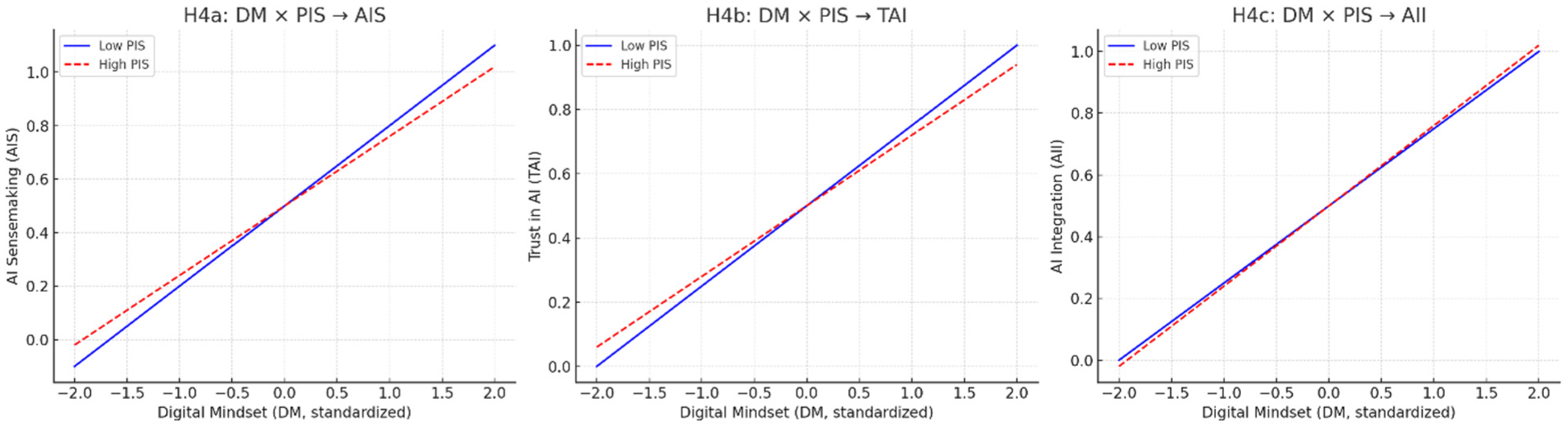

The moderating effects of Perceived Institutional Support (PIS) further complicate the picture (Figure 4). PIS significantly dampened the influence of DM on both AIS (β = −.040,

Figure 4. Moderation interaction plots.
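A negative interaction coefficient of this kind is usually read through simple slopes: the effect of DM on AIS evaluated at low, average, and high levels of PIS. The sketch below uses the reported interaction coefficient (β = −.040) together with a hypothetical main effect of DM on AIS, since that coefficient is not restated in this passage. It assumes standardized predictors, so PIS values of −1, 0, and +1 correspond to one standard deviation below the mean, the mean, and one standard deviation above.

```python
def conditional_slope(b_dm, b_interaction, pis_z):
    """Effect of DM on the outcome at a given standardized level of PIS."""
    return b_dm + b_interaction * pis_z


B_INTERACTION = -0.040       # DM x PIS -> AIS, as reported in the text
B_DM_HYPOTHETICAL = 0.50     # hypothetical main effect, for illustration only

slopes = {
    z: conditional_slope(B_DM_HYPOTHETICAL, B_INTERACTION, z)
    for z in (-1.0, 0.0, 1.0)
}

for z, slope in slopes.items():
    print(f"PIS at {z:+.0f} SD: slope of DM on AIS = {slope:.3f}")
```

Under these assumptions the DM slope shrinks as PIS rises, which is the dampening pattern that the interaction plots depict.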

Trust in AI (TAI) presented another unexpected result. Despite being modeled as a mediator, it did not significantly explain adoption (β = .081,

Finally, the absence of mediation through DTC (β = .012,

Together, these results advance a richer account of the adoption paradox in ODE. DM provides readiness, AIS mediates through interpretive legitimacy, trust acts as a threshold rather than a sequential mediator, and competence operates as an enabler or moderator rather than a direct driver. Institutional support, meanwhile, functions as a double-edged sword—facilitating adoption when autonomy is respected but suppressing it when experienced as control. This interplay underscores the need to move beyond competence-utility models and toward frameworks that capture the dispositional, cognitive, affective, and contextual contingencies of AI integration in education.

Implications of This Study

Theoretical Implications

This study offers several critical theoretical contributions by empirically integrating and extending three influential frameworks—Sensemaking Theory, the DM Framework (Leonardi, 2020), and Trust Theory, particularly Algorithm Aversion Theory—into the context of AI integration in open and distance education.

First, this study advances Sensemaking Theory by demonstrating that teachers’ AI integration is not merely a matter of technical readiness or institutional mandates but a cognitively mediated process. Specifically, AI adoption is shaped by how educators interpret the pedagogical value, ethical considerations, and contextual alignment of AI tools. This aligns with Weick’s (1995) proposition that individuals must construct meaning around ambiguous events before acting. In open education environments—characterized by decentralized structures and high instructional autonomy—such interpretive processes become indispensable. The study’s finding that sensemaking significantly mediates the link between DM and AI integration supports a dynamic view of sensemaking that incorporates not only internal beliefs but also external cues like institutional signaling. Thus, the sensemaking process in digitally autonomous contexts is more socially embedded and dynamic than previously theorized.

Second, this study expands the DM Framework beyond its traditional application in corporate or managerial contexts. While prior work has linked DM to openness toward innovation and comfort with technological change, this research demonstrates its foundational role in enabling downstream mechanisms like sensemaking and trust formation. Teachers with strong DMs are not only more inclined to adopt AI tools directly but also more likely to engage interpretively and affectively with these tools. This extension positions DM as a precursor to higher-order psychological processes, suggesting it is both a motivational and cognitive resource. Moreover, the observed interaction between DM and perceived institutional support indicates that the impact of mindset is context-sensitive. Support structures that fail to align with autonomy-centered values may undermine the activation of mindset into behavior. As such, DM should be reconceptualized as a relational construct—activated or inhibited by institutional scaffolding—rather than a static personal trait.

Third, the findings provide a nuanced critique of Trust Theory and Algorithm Aversion Theory. While prior studies (Obenza et al., 2023) have emphasized trust as a prerequisite for AI adoption, this study finds that trust does not significantly mediate the relationship between DM and integration. This suggests that in open education settings, trust may serve more as a threshold condition—necessary but not sufficient. Once basic trust is established, its additional influence may plateau, especially in high-autonomy contexts where educators rely more on self-guided evaluation than institutional reassurance. Moreover, the absence of a mediating role for digital teaching competence in the trust pathway further challenges traditional linear models, indicating a disconnect between affective confidence and operational capability. This highlights the need for future theoretical models to view trust and competence as potential moderators or conditional enablers rather than fixed mediators.

Collectively, these findings call for an integrated theoretical model that bridges disposition (DM), cognition (sensemaking), affect (trust), and behavior (integration), moderated by institutional context. We propose a novel Dispositional–Cognitive–Contextual (DCC) Framework for understanding AI integration in education. This framework positions DM as a foundational enabler, activated through sensemaking and conditioned by institutional signals, thereby offering a more holistic lens for examining educator-AI interactions in decentralized, evolving educational ecosystems.

Practical Implications

The findings provide a set of actionable insights for institutions, policymakers, and professional development stakeholders seeking to promote meaningful AI integration in open and distance education (ODE).

First, the study highlights that technical readiness and competence are necessary but not sufficient for adoption. Institutions must deliberately cultivate a

Second, effective adoption depends on interpretive sensemaking rather than procedural compliance. Institutions should therefore create collaborative interpretive spaces where educators can critically examine AI’s pedagogical role, share experiences, and interrogate risks. Practical mechanisms include cross-functional AI literacy groups, peer-led case discussions, and guided walkthroughs of algorithmic operations. These forums not only support sensemaking but also foreground ethical considerations—such as privacy, surveillance, and algorithmic bias—that are often overlooked. For example, educators should be supported in evaluating whether predictive dashboards inadvertently profile students or whether automated grading tools reproduce linguistic and cultural bias. Embedding ethical reflection into training ensures adoption aligns with both professional responsibility and student rights.

Third, the results reveal that institutional support can backfire when it is experienced as intrusive or prescriptive. In autonomy-rich settings like ODE, institutions should shift from top-down directives to autonomy-respecting support models. Co-construction is key: involving teachers in the selection of AI tools, in the drafting of usage guidelines, and in the development of assessment protocols enhances ownership and reduces resistance. Flexible support structures—such as opt-in mentorships, voluntary sandbox environments, or micro-support teams—are more effective than universal mandates. These approaches preserve educator agency while providing scaffolding for innovation.

Fourth, the non-significant findings on digital competence and trust suggest that barriers to integration lie less in technical skill deficits than in motivational and interpretive gaps. Institutions should therefore design professional development around pedagogical integration pathways rather than isolated tool training. For instance, micro-credentials in “AI-Augmented Instructional Design” or “Ethical AI Pedagogy” could help educators translate competence and trust into practice. These programs can bridge the knowing–doing gap by emphasizing how AI can be aligned with pedagogical goals, rather than focusing narrowly on functional proficiency.

Finally, policy-level interventions must acknowledge the boundary conditions of these findings. The insights are most applicable to higher education and ODE systems where instructors exercise significant autonomy; they may not transfer to highly centralized or K–12 contexts where curricula are standardized and discretion is limited. Policymakers should support scalable yet adaptable infrastructure, such as open-source AI evaluation rubrics, cross-provincial pilot programs, and repositories of vetted tools and use cases. Such initiatives democratize access while allowing local adaptation to institutional culture and readiness.

In sum, meaningful AI integration requires institutions and policymakers to design ecosystems that scaffold not just technical skills but also the psychological, ethical, and interpretive dimensions of adoption. Respecting autonomy, embedding ethics, and clarifying contextual boundaries are essential for ensuring that AI strengthens rather than undermines the pedagogical mission of open education.

Limitations and Future Research

Several limitations temper the interpretation of this study. First, the research is geographically bounded to Guangdong Province—a digitally advanced yet culturally specific region of China. The province’s high digital infrastructure and alignment with national AI initiatives may not represent conditions in less resourced or more centralized education systems. Generalization to other contexts, including K–12 or tightly standardized systems, should therefore be made with caution and tested through cross-national or cross-institutional comparisons.

Second, although multi-wave data collection reduced common method variance by temporally separating antecedents, mediators, and outcomes, reliance on self-reported surveys introduces potential biases. Social desirability, perceptual inflation, and shared method variance cannot be entirely ruled out. Future studies should triangulate self-reports with behavioral usage data, classroom observations, or institutional records to provide more robust evidence of adoption behaviors.

Third, the purposive sampling frame, while ensuring participants had relevant AI exposure, may introduce sampling bias. Instructors who opted into the survey may be more motivated or digitally inclined than non-respondents. Probability-based or stratified sampling across institutions and disciplines would strengthen representativeness and enhance external validity.

Fourth, omitted variables limit the explanatory power of the model. Prior AI experience, disciplinary orientation, and institutional mandate strength likely shape adoption dynamics but were not measured here. For example, instructors in STEM fields may perceive AI-enabled analytics as directly relevant, while those in humanities disciplines may resist due to epistemological concerns. Likewise, mandatory AI policies could alter how institutional support interacts with professional autonomy. Future research should explicitly incorporate these factors as control variables or moderators to refine explanatory precision.

Fifth, the nested structure of the data poses additional considerations. Instructors were embedded within institutions, which may create shared variance due to institutional culture, policy environments, or infrastructure. Multilevel modeling was not feasible in this study because of insufficient numbers of respondents per institution and the analytic focus on individual-level psychological and cognitive processes. Nonetheless, future research should adopt multilevel SEM or hierarchical linear modeling to capture cross-level influences, particularly the ways institutional policy interacts with individual dispositions to shape AI integration.

Finally, while the three-wave design improved temporal ordering, the study is not a true longitudinal panel. Causal inferences about how dispositions, sensemaking, and trust evolve over time remain tentative. Longitudinal panel studies, experimental interventions, or naturalistic field experiments would better capture adoption trajectories and clarify causal mechanisms.

Conclusion

This study examined the psychological, cognitive, and institutional antecedents of AI integration among ODE instructors in Guangdong Province. Grounded in sensemaking theory, digital mindset research, and algorithm aversion theory, the findings show that digital mindset and AI sensemaking consistently drive adoption, while competence and trust play more conditional roles. Importantly, institutional support, typically assumed to facilitate integration, emerged as a double-edged moderator—sometimes weakening the constructive influence of mindset when perceived as controlling.

Together, these insights advance understanding of the “adoption paradox” in autonomy-rich educational settings. Rather than viewing AI integration as a linear function of competence and infrastructure, the findings highlight a dynamic interplay of dispositional readiness, interpretive cognition, affective thresholds, and contextual boundaries. This multidimensional perspective contributes to ongoing debates about how educators negotiate the promises and perils of AI in teaching and offers a foundation for designing more autonomy-sensitive strategies to support meaningful integration.

Footnotes

Appendix

Ethical Considerations

All study procedures were conducted in accordance with the ethical standards outlined in the Declaration of Helsinki and approved by the Human Resource Ethics Committee, Department of Information Technology, Guangdong Open University, Guangzhou City, Guangdong Province, P. R. China (Reference No.: 202410). Written informed consent was obtained from all participants prior to their involvement in the study, ensuring their full understanding of the research objectives, potential risks and benefits, and their right to withdraw from participation at any time without penalty.

Author Contributions

Conceptualization: Na Meng; Methodology: Nan Jiang and Na Meng; Formal Analysis: Nan Jiang; Investigation: Nan Jiang; Writing—Original Draft Preparation: Nan Jiang; Writing—Review & Editing: Nan Jiang and Na Meng; Supervision: Na Meng; Funding Acquisition: Nan Jiang.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research received grants from Guangzhou Philosophy and Social Science 2023 Planning Project (Project number: 2023GZGJ176).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data supporting this study’s findings will be made available upon reasonable request to the corresponding author.