Abstract

Artificial Intelligence (AI) has rapidly permeated online learning environments, promising enhanced personalization, automation, and engagement. However, empirical evidence remains fragmented on how AI-driven systems influence effective learning—defined here as the integration of knowledge acquisition, retention, and application. Existing research often isolates key elements such as design quality, learner attributes, and instructional support, while the role of learner engagement has received comparatively less attention. Addressing this gap, this study examines how design principles, technology proficiency, adaptive learning pathways, self-efficacy, and learner engagement interact to shape educational outcomes in AI-mediated environments. Drawing on Cognitive Load Theory (CLT), Social Cognitive Theory (SCT), and Vygotsky’s Sociocultural Theory, the analysis of 372 university students reveals that adaptive learning pathways and technological proficiency play pivotal roles in optimizing cognitive load. Self-efficacy functions as a key mediator, while instructor involvement moderates the effectiveness of AI design and personalization. These findings highlight the importance of aligning AI technologies with both pedagogical design and learner psychology to foster sustainable, equitable, and effective online education.

Keywords

Introduction

Artificial intelligence (AI) has become the vanguard of online education, underpinning adaptive courseware, intelligent tutoring systems, automated feedback, and data-driven analytics. Global investment trends reflect this momentum: ed-tech ventures that embed AI attracted US $10.5 billion in private funding in 2024 alone, almost double the 2021 figure (HolonIQ, 2024). Market forecasts predict that spending on AI-enabled learning tools will rise from roughly US $5 billion in 2023 to more than US $30 billion by 2028, representing a compound annual growth rate (CAGR) near 34.7% (Research and Markets, 2025). Beyond financial signals, large public systems are moving rapidly: China’s ‘Smart Education of the Future’ initiative equipped more than 12,000 pilot schools with AI-powered personalised-learning dashboards in 2023 (Williamson et al., 2023), while the U.S. National AI Research Resource (NAIRR) began sponsoring K-12 adaptive-learning pilots in 18 states during the 2024 to 2025 academic year. These shifts underscore AI’s perceived capacity to individualise content, automate formative assessment, and deliver real-time analytics on learner progress.

Yet large-scale empirical confirmation of these benefits remains limited and uneven. A global survey of 1,148 higher-education institutions showed that only 27% had embedded AI functionality into more than one core learning platform, and fewer than 8% systematically evaluated learning gains attributable to AI (Adeline, 2024). Even among early adopters, implementation gaps are visible. For instance, Duolingo’s openly released telemetry reveals that the predictive accuracy of its adaptive placement engine fell by 18% after six months without retraining, a classic case of concept drift (Hinder et al., 2024). Likewise, Coursera reported that algorithmic topic recommendations improve completion rates for new users by 14%, but the effect shrinks to 4% after 90 days unless the model is refreshed (Jena et al., 2023). These examples illustrate how technical fragilities can erode promised learning gains.

From a pedagogical perspective, AI’s influence on Effective Learning (EL)—defined as the combined attainment of knowledge acquisition, retention, and application—appears heavily moderated by design principles (interface quality, relevance, transparency), technology proficiency, adaptive learning pathways, self-efficacy, and learner engagement. In this study, EL is treated as a higher-order construct composed of three reflective dimensions (acquisition, retention, and application), allowing us to empirically test how AI-driven design and learner attributes jointly shape learning effectiveness. Experimental work has shown, for example, that when multimedia principles are respected, adaptive algorithms raise micro-quiz accuracy by 19%; when design guidelines are violated (e.g. cluttered dashboards) the same algorithm produces no significant lift (Corbeil et al., 2023). Similarly, a Malaysian study found that students with high technological self-efficacy gained nearly twice as much from an AI-assisted statistics module as peers with low self-efficacy, despite identical content (Low et al., 2025). These findings point to the interdependence of interface design, learner attributes, system adaptivity, and engagement—elements seldom examined in a single explanatory model.

Ethical and social dimensions further complicate adoption. Meta-analysis of 37 AI-grading systems shows up to 0.34 SD bias against second-language writers (Wei et al., 2023). In parallel, 72% of educators in a 2023 EU poll feared that opaque algorithms might undermine academic integrity or exacerbate achievement gaps (Zhai et al., 2024). Instructor buy-in is therefore critical: a North American faculty survey revealed that professional development covering AI pedagogy raised adoption intent from 41% to 77% (Gordon et al., 2024). Nonetheless, few studies clarify how much teacher involvement is optimal once adaptive systems are in place, or whether excessive intervention dampens the autonomy that personalisation seeks to cultivate.

Taken together, four core knowledge gaps emerge. First, we know little about joint effects—how design quality, technology proficiency, and adaptive pathways interact to shape EL. Second, the durability of algorithmic accuracy under real-world concept drift remains under-studied. Third, the field lacks integrated accounts of when instructor scaffolding enhances rather than hinders AI-driven learning, especially in ethically sensitive contexts. Fourth, the role of learner engagement in AI-mediated environments remains under-theorised, despite evidence that engagement is a key determinant of whether adaptive systems translate into durable learning gains.

Guided by Cognitive Load Theory (Sweller, 1988), Social Cognitive Theory (Bandura, 1997), and Vygotsky’s Sociocultural Theory (Vygotsky, 1978), the present study addresses these gaps by:

Analysing the relationship between AI-driven design principles and Effective Learning (EL), focusing on knowledge acquisition, retention, and application.

Evaluating the long-term accuracy and reliability of AI algorithms in delivering personalised learning experiences.

Investigating the impact of AI on learner–instructor interactions and examining how AI might reshape traditional educational roles and dynamics.

We surveyed 372 university students with documented AI/ML exposure, capturing behavioural, attitudinal, and outcome measures, and analysed the data using Partial Least Squares Structural Equation Modelling (PLS-SEM) complemented by Necessary Condition Analysis (NCA). By integrating pedagogical theory with real-world implementation metrics—and by foregrounding ethical, engagement, and instructor-related contingencies—this work advances an evidence-based framework for responsibly scaling AI in higher education.

Literature Review

Effective Learning

Research on AI-enabled learning technologies has exploded over the past decade, yet findings remain inconsistent and conditional. Synthesising the most recent empirical work, three converging insights—and two persistent blind spots—emerge.

First, instructional design quality is a decisive catalyst. Meta-analytic evidence shows that AI tutors produce moderate learning-gain effect sizes (≈ 0.40 SD), but only when multimedia principles such as signalling and segmenting are respected (van Leeuwen et al., 2023). Where layouts violate those principles, adaptive logic can even depress performance (Fong et al., 2019). These mixed results reinforce Cognitive Load Theory’s warning that personalisation cannot compensate for poor interface design.

Second, algorithmic reliability over time is fragile. Longitudinal log-file studies of commercial platforms reveal that recommendation accuracy can erode by 15% to 25% within a semester if models are not re-calibrated—an instance of concept drift that directly undermines retention and transfer (Hinder et al., 2024). Yet fewer than 1 in 10 empirical papers reports any drift-mitigation strategy, leaving long-term efficacy claims untested.

Third, learner-centred variables—technology proficiency, self-efficacy, and engagement—shape outcomes in ways most single-factor studies overlook. High-self-efficacy students consistently leverage AI tutors to greater effect than their low-efficacy peers (Ummu et al., 2024), a pattern predicted by Social Cognitive Theory. Conversely, adaptive pacing has been shown to suppress online peer interaction by more than one-third (Maghsudi et al., 2021), revealing potential tension with sociocultural views of learning as collaborative.

Despite these insights, two critical gaps endure. First, most studies still treat design quality, algorithmic accuracy, and learner attributes in isolation; very few examine whether, for example, self-efficacy mediates the impact of design principles or whether instructor scaffolding moderates adaptive pathways. Second, while large student surveys report widespread concern about privacy and bias, robust experimental evidence on how such concerns alter learning behaviour is scarce. Automated scoring audits that penalise second-language syntax (Wei et al., 2023) illustrate the stakes, yet ethical variables seldom appear in predictive models of learning effectiveness.

Taken together, the literature implies that AI delivers meaningful gains only when high-quality design, continuously calibrated algorithms, strong learner self-belief, and transparent data practices coexist. The present study responds by modelling these elements simultaneously, testing design principles, technology proficiency, adaptive pathways, self-efficacy, engagement, and instructor involvement against all three dimensions of effective learning (acquisition, retention, and application), thereby clarifying the boundary conditions under which AI genuinely enhances online education.

Theoretical Underpinning

To account for the multifaceted nature of AI-mediated learning, this study combines Cognitive Load Theory (CLT), Social Cognitive Theory (SCT) and Vygotsky’s Sociocultural Theory (VST). Although each framework is well established, their joint application remains rare; integrating them clarifies why learners respond differently to adaptive systems, how personal agency feeds back into system use, and when social scaffolds amplify—or attenuate—algorithmic benefits.

Cognitive Load Theory views the learner as a processor constrained by working-memory limits: instruction is effective only when it minimises extraneous load and channels germane effort toward schema construction (Mayer, 2011; Sweller, 1988). Within AI environments, design elements such as signalling, segmentation and progressive disclosure are expected to enhance knowledge acquisition, retention and transfer (Sánchez Vera, 2024). Yet the same adaptive dashboards can depress performance when cluttered layouts or excessive real-time prompts overload cognition. Hence, the influence of design quality is theorised as positive up to a cognitive-load ‘sweet spot,’ beyond which additional complexity yields diminishing—and potentially negative—returns.

Social Cognitive Theory complements this resource perspective with an emphasis on reciprocal self-regulation among personal factors, behaviour and environment (Bandura, 1997). Learners who possess strong technology self-efficacy are more willing to explore adaptive recommendations, accrue mastery experiences and further reinforce self-belief (Wolff et al., 2024), creating a virtuous cycle that magnifies design benefits. Conversely, opaque or error-prone algorithms can erode self-efficacy and suppress engagement, generating a dampening loop. Self-efficacy is therefore conceived both as a conduit through which design and adaptivity exert their positive effects and as a vulnerable node whenever concept drift undermines feedback accuracy (Lopez-Garrido, 2023).

Vygotsky’s Sociocultural Theory situates these cognitive and motivational processes within a broader social ecology. Optimal progress occurs in the Zone of Proximal Development, where tasks exceed current competence but remain tractable with scaffolding (Vygotsky, 1978). In AI-rich settings, scaffolding is dual: algorithmic (via adaptive pathing) and human (via instructor interpretation of learning analytics). While minimal instructor presence may leave learners without metacognitive support, overly directive intervention can nullify the autonomy on which personalised pacing depends (Jansen et al., 2019). Effective learning thus requires a calibrated level of instructor involvement—sufficient to keep learners within, but not below, their developmental zone.

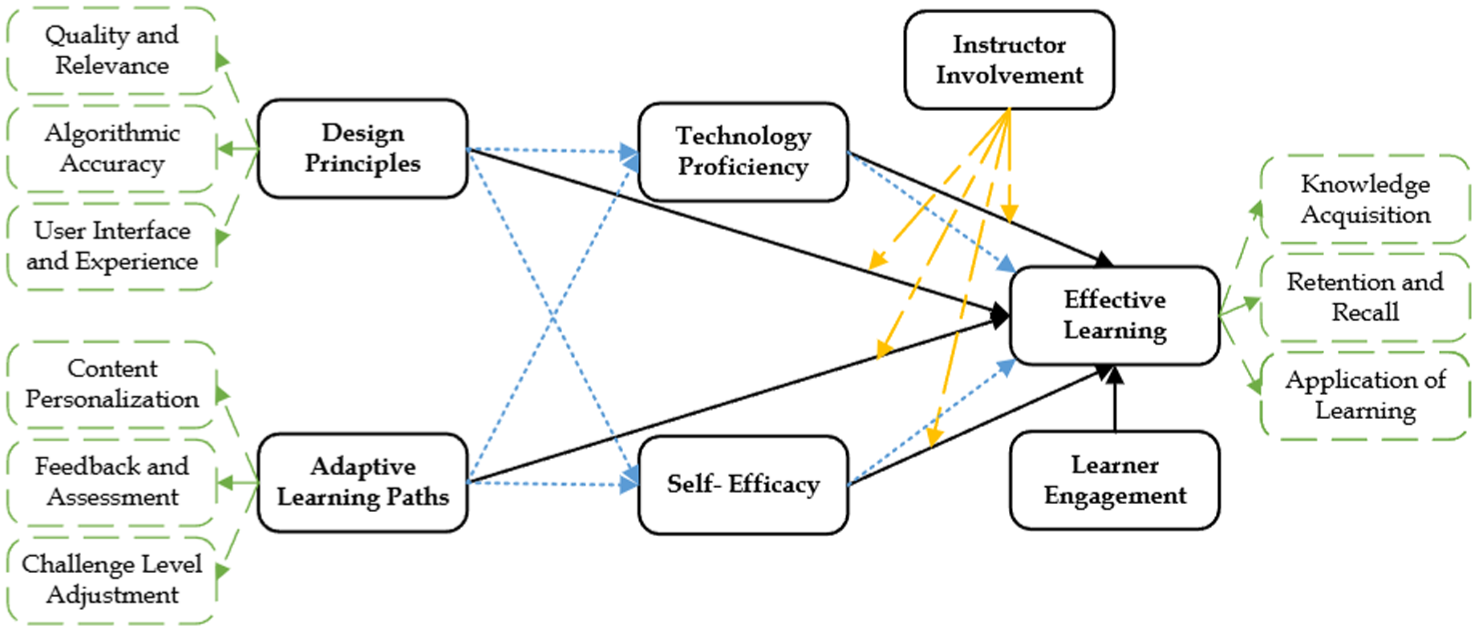

Taken together (Figure 1), the three theories suggest that effective learning emerges only when cognitively efficient design, robust self-efficacy loops and balanced social scaffolding converge. Design principles drive learning until cognitive overload sets in; self-efficacy both transmits and conditions those effects; instructor support expands or constrains learner–tool synergy depending on its intensity. By foregrounding these synergistic and countervailing forces—as well as the failure modes that arise when any single element is misaligned—the integrated framework provides a rigorous basis for examining the conditions under which AI genuinely enhances, or inadvertently impairs, educational outcomes.

Figure 1. Research framework.

Hypothesis Development

Design Principles and Effective Learning

Design principles form the foundation of effective AI-enhanced educational technologies, acting as a bridge between sophisticated algorithms and human learning processes. Rooted in fields such as HCI, architecture, and instructional design, these principles ensure that AI systems are both usable and pedagogically aligned. Kepplinger and Weigel (2011) and Wagner (2013) emphasize the value of user-centric design in promoting engagement, while Hartmann et al. (2008) identify aesthetics, emotional resonance, and intuitiveness as central to learner satisfaction. van Voordt (2009) adds that coherent structural design fosters perceived quality.

In computer science, modularity and abstraction—epitomized by the SOLID design principles—help minimize cognitive overload and enhance system clarity (Singh et al., 2017). Likewise, Sutcliffe et al. (2006) and Chandra and Guntupalli (2008) highlight the importance of design consistency, feedback mechanisms, and error tolerance in supporting cognitive flow in digital environments. Empirical studies reinforce these ideas: Kaushal et al. (2020) show that well-designed multimedia elements enhance conceptual grasp; Avsec (2021) and Lee et al. (2021) demonstrate how design thinking bolsters learner agency and problem-solving; Sharpe and Armellini (2019) find that signalling features and design clarity foster deeper engagement.

Collectively, these insights affirm that effective design—spanning interface usability, algorithmic transparency, and visual and pedagogical coherence—substantially improves learning outcomes by reducing cognitive friction and maximizing engagement.

Technology Proficiency and Effective Learning

Technology proficiency refers to learners’ ability to interact efficiently with digital tools, a capability that becomes critical in navigating complex AI-driven environments (Mancao & Dequito, 2022). This includes managing feedback dashboards, troubleshooting issues, and customizing learning experiences. As platforms grow in sophistication, gaps in digital fluency can hinder learner autonomy and reduce platform effectiveness.

Empirical work substantiates this connection. Fajaruddin et al. (2024) and Vasimalairaja et al. (2020) observe that digitally skilled students perform better in AI-mediated learning settings. Mehboob et al. (2020) report similar findings among educators, linking their tech skills to improved student outcomes. Studies by Voykina et al. (2019) and Wardoyo et al. (2021) further show that learners’ digital fluency enhances cognitive engagement in game-based or blended learning contexts.

Moreover, Kalinichenko et al. (2019) confirm that AI affordances like automated feedback only translate into improved outcomes when learners are proficient in utilizing them. Thus, technology proficiency is not a peripheral trait but a core enabler of AI-powered learning.

Adaptive Learning Paths and Effective Learning

Adaptive learning paths, a hallmark of AI-enhanced education, offer real-time personalization by adjusting content, pacing, and feedback to match individual learner profiles. These systems respond to learners’ varying readiness and engagement levels, thereby enhancing instructional alignment and effectiveness.

Jdidou et al. (2023) found that adaptive systems increase retention and test performance. DwiC and Basuki (2012) showed that learners benefit from personalized trajectories over uniform instructional models. Sujarae et al. (2016) highlight the importance of continuous and implicit feedback in reinforcing learning cycles. Real-time feedback mechanisms, as discussed by Sabeima et al. (2022), also boost metacognitive awareness.

Lihua (2021) further notes that AI-enabled profiling improves personalization accuracy, while ensuring learners remain within their optimal zone of development. Altogether, adaptive learning paths are crucial to promoting mastery, self-regulation, and sustained cognitive engagement.

Self-Efficacy and Effective Learning

Self-efficacy—learners’ belief in their ability to succeed—is a critical psychological determinant of online learning performance, particularly in AI environments that demand autonomy and self-regulation. High self-efficacy encourages persistence, risk-taking, and positive interpretation of algorithmic feedback.

Golestaneh (2014) links strong self-efficacy with better academic regulation and achievement. Shirzad et al. (2022) report that such learners employ more sophisticated digital learning strategies, while Magnano et al. (2014) highlight self-efficacy’s role in emotional resilience. In AI settings, where feedback may be complex or abstract, high self-efficacy helps learners perceive it as constructive rather than discouraging.

Baruah (2024) adds that self-efficacy correlates strongly with learning motivation and engagement, suggesting that it mediates both behavioral and cognitive learning processes.

Learner Engagement and Effective Learning

Learner engagement encompasses behavioral, cognitive, and emotional involvement in the learning process (Sharma & Chachra, 2020). In AI-powered learning systems—where interaction, adaptivity, and feedback are algorithmically mediated—engagement is shaped by system design and responsiveness.

Xu et al. (2022) and Chiaro et al. (2023) show that physiological and affective engagement cues predict learning success. Seo et al. (2021) highlight how dialogic AI agents maintain learner interest, while Mohd Yousuf (2023) and Suntharalingam (2024) confirm that AI can detect disengagement and deliver timely motivational nudges. Rakya (2023) and Rao Sangarsu (2023) emphasize that engagement is optimized through personalized feedback, task relevance, and novelty.

In short, learner engagement in AI environments is dynamic and deeply interwoven with how intelligently the system responds to individual learning trajectories.

Mediating Role of Technology Proficiency

Technology proficiency serves a dual function in AI-driven learning (Wu et al., 2025)—both as a prerequisite for effective navigation and a cognitive intermediary translating design features into learning outcomes. While proficient learners can engage more fluidly with dashboards, feedback loops, and adaptive sequences, their skillset also determines whether the affordances of AI are converted into meaningful gains (Barnett-Itzhaki et al., 2023). Learners with low proficiency, by contrast, may fail to capitalize on advanced features, even when systems are well-designed.

Theoretically, Vygotsky’s Sociocultural Theory (1978) contextualizes technology proficiency as a mediator that shapes the learner’s interaction with AI as a cultural tool. Learners extend their cognitive capacity via scaffolding, but this depends on the learner’s readiness to engage with such support. Sutcliffe et al. (2006) and Gligorea et al. (2023) argue that design excellence cannot offset the effects of digital illiteracy, as confusion or cognitive overload may still arise without foundational fluency.

Empirical studies reinforce this mediating logic. Fernandes et al. (2023) demonstrate that digital competence enables learners to engage with algorithmic feedback and content personalization more meaningfully. Dai and Cui (2022) and Hashim et al. (2022) find that technology proficiency promotes metacognitive control, especially in adaptive environments. Rather than merely acting as a background skill, technology proficiency functions as a transmission channel—converting system input into actionable learning. This transformation process, however, is contingent upon learners' comfort and fluency with technology.

Mediating Role of Self-Efficacy

Self-efficacy—learners’ belief in their capacity to succeed—emerges not just as a determinant of learning outcomes but also as a mediator that facilitates the translation of AI system features into performance (Aziz et al., 2025). AI systems often require learners to make autonomous decisions, engage with iterative feedback, and persist through challenges. According to Bandura (1997), such self-regulatory behavior is largely governed by self-efficacy beliefs.

Empirically, McMahon (2021) shows that learners with strong self-efficacy derive greater benefit from AI tools, including improved comprehension. Geng et al. (2022) suggest that high self-efficacy promotes active experimentation with AI systems: interpreting algorithmic recommendations, validating their effectiveness, and modifying strategies accordingly. In contrast, learners with low self-efficacy may disengage, interpret feedback as punitive, or abandon tasks prematurely.

This mediating role becomes even more pronounced in adaptive learning contexts, where pathways are flexible and user input is critical. Ithriah et al. (2020) argue that low self-efficacy can block engagement, regardless of design quality. Conversely, learners with high efficacy interpret system prompts constructively, even when the feedback is ambiguous or complex. Tran (2022) adds that self-efficacy strengthens the positive effects of personalized learning while reducing the psychological strain caused by opaque or error-prone AI suggestions.

Therefore, self-efficacy is not merely an individual trait but a central mechanism through which learners harness the affordances of intelligent systems.

Moderating Role of Instructor Involvement

While AI tools offer adaptive content, automated feedback, and diagnostics, they often lack the emotional intelligence and pedagogical discernment of human educators. Instructor involvement thus plays a moderating role, influencing how students interpret and respond to AI-driven features. This aligns with Vygotsky’s (1978) Zone of Proximal Development, where learners perform best when supported by scaffolding—something AI provides structurally, but only humans can deliver affectively.

Arghode et al. (2018) show that instructor interactions—when timely and context-sensitive—can boost content understanding and reinforce motivation. In particular, instructors help students make sense of complex algorithmic feedback and sustain motivation when systems falter or become overwhelming. Yet, instructor involvement is not universally beneficial. Lawrie (2023) cautions that over-involvement can stifle learner autonomy, while under-involvement may leave learners feeling unsupported.

The optimal scenario appears to be calibrated intervention. Chiu et al. (2023), Kim et al. (2022), and Seo et al. (2021) collectively suggest that when instructors modulate their engagement—stepping in during confusion and stepping back during mastery—they enhance the impact of AI features. This balance fosters self-efficacy, technological fluency, and persistence. Thus, instructor involvement serves as a boundary condition that either enhances or dampens the effects of AI design, technology proficiency, adaptive systems, and learner confidence.

Research Methodology

This study adopts a quantitative, cross-sectional survey design to examine how university students with formal training in artificial intelligence (AI) and machine learning (ML) engage with personalized AI-enhanced online learning environments. The methodology integrates purposive sampling, bias mitigation protocols, validated instruments, and dual statistical approaches (PLS-SEM and NCA) to ensure rigor and relevance.

Sampling Strategy and Inclusion Criteria

A purposive sampling method was used to recruit information-rich participants aligned with the study’s aims. Inclusion criteria required: (a) completion or current enrollment in at least one certified AI/ML course; (b) active use of AI-enabled online platforms (e.g. Coursera, edX); and (c) undergraduate or postgraduate status in computing or data science. Participants were recruited via LinkedIn, Facebook AI/ML groups, and academic networks, ensuring ecological validity and contextual relevance.

A target sample size of 400 was determined through power analysis for PLS-SEM (Hair et al., 2022), accounting for mediation and moderation effects. Of the 554 individuals invited, 372 provided valid responses that were retained for analysis (67% response rate). A 25-participant pilot study tested item clarity and usability, resulting in minor revisions.

Instrument Design and Validation

Measurement items in this study were carefully developed based on established, peer-reviewed sources to ensure strong content validity and theoretical alignment. All constructs were operationalized using a five-point Likert scale, with both lower-order and higher-order dimensions incorporated to capture the nuanced nature of AI-enhanced learning environments.

The construct Design Principles in AI-Based E-Learning was assessed through three subdimensions: Quality and Relevance, drawn from Van Wart et al. (2020); Algorithmic Accuracy, adapted from Wang et al. (2022); and User Interface/User Experience, based on measures developed by Alomari et al. (2020). Effective Learning Outcomes were evaluated across three domains in line with Bloom’s revised taxonomy and Kirkpatrick’s learning model: Knowledge Acquisition (Demuyakor, 2020), Retention and Recall (Tanner et al., 2020), and Application of Learning (Matosas-López et al., 2019).

Self-Efficacy was measured using adapted items from Bandura’s Self-Efficacy Scale, supplemented by Geng et al. (2022) to suit AI-mediated contexts. Technology Proficiency items were derived from prior digital literacy and AI adoption scales, particularly Barnett-Itzhaki et al. (2023). The Adaptive Learning Paths construct included subdimensions of Content Personalization (Hariyanto et al., 2020), Feedback Personalization (Vesin et al., 2018), and Challenge Adjustment (Nabizadeh et al., 2020).

Instructor Involvement was assessed using items from Mazzolini and Maddison (2003), focusing on the frequency and perceived helpfulness of instructional interactions. Lastly, Learner Engagement captured both behavioral and emotional facets, drawing from the validated scales of Heaslip et al. (2014) and Deng et al. (2020).

Informed consent was electronically obtained through a mandatory confirmation page at the start of the online survey. Participants were informed of the study’s purpose, anonymity, and data use. No identifiable data were collected.

Bias Mitigation and Common Method Variance

To assess non-response bias, t-tests compared early (n = 186) and late (n = 186) respondents on demographics and key constructs. No significant differences (p > .10) were found. Social desirability bias was addressed through anonymous participation, neutral phrasing, and construct separation. Common method bias (CMB) was tested using Harman’s single-factor approach. The first unrotated factor accounted for 31.8% of the variance, below the 50% threshold (Podsakoff et al., 2012), suggesting minimal CMB.
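The single-factor check described above can be illustrated with a minimal sketch. It assumes the common approximation in which the first unrotated factor is taken as the largest eigenvalue of the item correlation matrix; the study's own extraction settings are not reported here, and the data below are simulated purely for illustration (the function name `first_factor_share` and the 12-item layout are hypothetical).

```python
import numpy as np

def first_factor_share(items: np.ndarray) -> float:
    """Share of total variance captured by the first unrotated component.

    `items` is an (n_respondents, n_items) array of survey responses.
    Assumption: principal-component extraction on the correlation matrix.
    """
    corr = np.corrcoef(items, rowvar=False)   # item-by-item correlation matrix
    eigenvalues = np.linalg.eigvalsh(corr)    # real eigenvalues, ascending order
    return float(eigenvalues[-1] / eigenvalues.sum())

# Simulated (hypothetical) data: 372 respondents, 12 five-point Likert items
# that share a weak common component.
rng = np.random.default_rng(0)
shared = rng.normal(size=(372, 1))
raw = 0.5 * shared + rng.normal(size=(372, 12))
likert = np.clip(np.round(3 + raw), 1, 5)

share = first_factor_share(likert)
print(f"First unrotated factor explains {share:.1%} of the variance")
print("Below the 50% threshold:", share < 0.50)
```

A share well below 50% on real data would be read in the same way as the 31.8% reported above, although Harman's test is generally regarded as a weak diagnostic on its own.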

Data Analysis: PLS-SEM and NCA

Partial Least Squares Structural Equation Modeling (PLS-SEM) was performed in SmartPLS 4.0 to estimate direct, mediating, and moderating paths among reflective constructs. Necessary Condition Analysis (NCA) was used in parallel to identify non-compensatory bottlenecks (Dul, 2020). Bootstrapping with 5,000 resamples confirmed path significance. The model fit was acceptable (SRMR = 0.061). Effect sizes (f²) indicated that several predictors had medium-to-large impacts, adding practical significance to statistical findings. Reliability was confirmed via Cronbach’s Alpha and Composite Reliability (CR), with all values ≥0.70. Convergent validity was demonstrated through AVEs above 0.50. Discriminant validity was verified using HTMT ratios below 0.90 (Dijkstra & Henseler, 2015), supporting the model’s psychometric adequacy. Though based on a single data source, the study employed analytical triangulation via PLS-SEM and NCA. This dual-method approach offered both sufficiency- and necessity-based insights, partially addressing concerns about methodological breadth.
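The logic of bootstrapped path significance can be sketched as follows. This is not the SmartPLS procedure: as a simplifying assumption, the path is replaced by the standardized slope between two composite scores (which, with a single predictor, equals Pearson's r), and the scores are simulated. The names `bootstrap_ci`, `se_score`, and `el_score` are hypothetical.

```python
import numpy as np

def bootstrap_ci(x, y, n_resamples=5000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the standardized slope of y on x."""
    rng = np.random.default_rng(seed)
    n = len(x)
    estimates = np.empty(n_resamples)
    for b in range(n_resamples):
        idx = rng.integers(0, n, size=n)      # resample respondents with replacement
        estimates[b] = np.corrcoef(x[idx], y[idx])[0, 1]
    lower, upper = np.quantile(estimates, [alpha / 2, 1 - alpha / 2])
    return float(lower), float(upper)

# Simulated (hypothetical) composite scores for 372 respondents.
rng = np.random.default_rng(1)
se_score = rng.normal(size=372)                    # stand-in for self-efficacy
el_score = 0.4 * se_score + rng.normal(size=372)   # stand-in for effective learning

lower, upper = bootstrap_ci(se_score, el_score)
significant = lower > 0 or upper < 0               # interval excludes zero
print(f"95% CI: [{lower:.3f}, {upper:.3f}]  significant: {significant}")
```

A path is treated as significant when the interval excludes zero; in a full PLS-SEM analysis the entire model is re-estimated on every resample rather than a single correlation.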

Findings

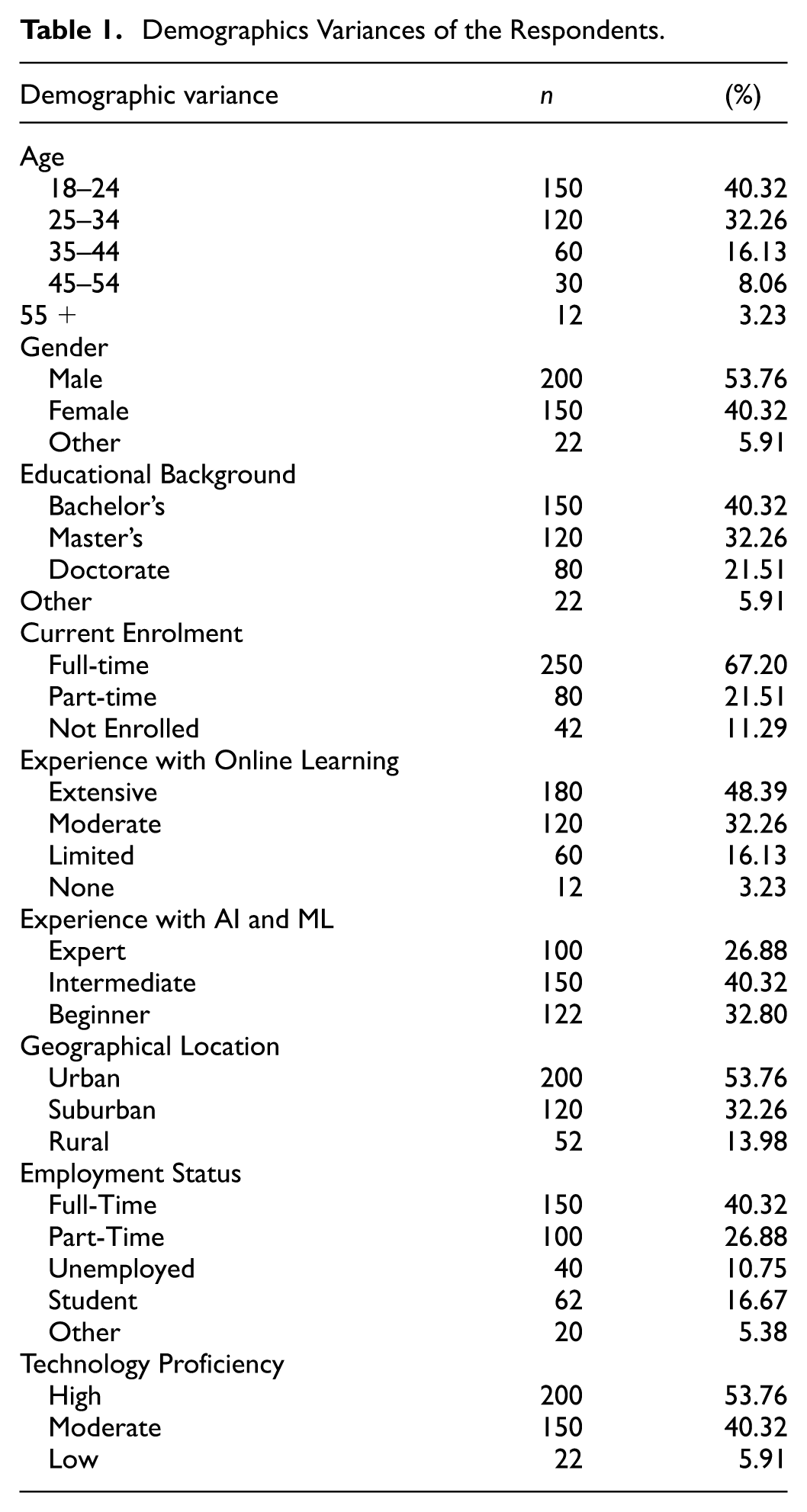

The demographic profile of the respondents (Table 1) covers age, gender, educational background, and experience with online learning, AI, and ML. The age distribution skews young, with 40.32% of respondents aged 18 to 24 and 32.26% aged 25 to 34, indicating that the study primarily captured the perceptions of younger university students who are likely to be more familiar with and receptive to online learning technologies. Male respondents form a slightly larger share (53.76%), followed by female respondents (40.32%) and other genders (5.91%), giving a reasonably balanced gender perspective. Educational backgrounds are varied: most participants hold Bachelor’s (40.32%) or Master’s degrees (32.26%), and a substantial portion hold Doctorates (21.51%), which allows the effectiveness of AI personalization to be considered across academic levels. Most respondents (67.20%) are enrolled full-time, so the findings primarily reflect individuals currently engaged in intensive learning activities. Nearly half of the respondents (48.39%) report extensive experience with online learning and 32.26% report moderate experience, indicating the general familiarity with online modalities needed to evaluate AI-driven personalization. Expertise in AI and ML also varies, with 40.32% at an intermediate level and 26.88% identifying as experts, which makes it possible to explore how familiarity with these technologies influences perceptions of personalization.

Over half of the respondents (53.76%) are from urban areas, with the rest from suburban (32.26%) and rural (13.98%) areas. In terms of employment, 40.32% work full-time, 26.88% work part-time, and 16.67% report being students, offering both professional and academic perspectives. Finally, a majority of respondents (53.76%) rate their technology proficiency as high, which matters for engaging effectively with AI-driven online learning platforms.

Demographics Variances of the Respondents.

Overall, the demographics of the respondents in this study offer a well-rounded representation of university students with varying backgrounds in AI and ML, educational levels, and experiences with online learning.

Measurement Model Statistics

The measurement model (Figure 2) in this study is evaluated using various metrics to ensure its reliability and validity, as shown in Table 2. The constructs examined include Adaptive Learning Pathways (AL), Content Customization (CC), Challenge Level Adjustment (CLA), Feedback and Assessment Customization (FAC), Cognitive Load (CL), Technology Proficiency (TP), Self-Efficacy (SE), Design Principles (DP), Algorithmic Accuracy and Bias (AAB), User Interface and User Experience (UIUE), Quality and Relevance (OR), Effective Learning (EL), Instructor Involvement (II), and Learner Engagement (LE).

Measurement model illustration.

Measurement Model Statistics.

The outer loadings (OL) for all items exceed the threshold of 0.70, indicating strong individual item reliability. For instance, items under the construct ‘AAB’ range from 0.776 to 0.866, demonstrating good reliability. The Variance Inflation Factor (VIF) values are all below 5, suggesting no significant multicollinearity among the indicators (Hair et al., 2022). For example, the VIF for items under ‘AL’ ranges from 2.033 to 2.780, which is acceptable. Cronbach’s Alpha (CA) values for all constructs are above 0.7, indicating good internal consistency, with ‘AL’ having a CA of 0.887, well above the threshold. Composite Reliability (CR) values for all constructs exceed the recommended level of 0.7, confirming their reliability (Alam et al., 2025). The CR for ‘AL’ is 0.922, highlighting excellent reliability. The Average Variance Extracted (AVE) values for all constructs are above 0.5, demonstrating good convergent validity. For instance, ‘AAB’ has an AVE of 0.680 (see Table 2 for detailed statistics).
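As a rough illustration of how the reliability and convergent validity figures above relate to the outer loadings, the sketch below computes AVE and composite reliability (rho_c) from standardized loadings using the conventional formulas. The loadings are hypothetical and are not taken from Table 2; the function names are likewise illustrative.

```python
def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized outer loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """Composite reliability (rho_c) from standardized outer loadings.

    The error variance of each indicator is taken as 1 - loading**2.
    """
    total = sum(loadings)
    error = sum(1 - l ** 2 for l in loadings)
    return total ** 2 / (total ** 2 + error)


# Hypothetical loadings for a four-item construct (NOT values from Table 2).
example_loadings = [0.78, 0.82, 0.85, 0.86]

ave = average_variance_extracted(example_loadings)
cr = composite_reliability(example_loadings)
print(f"AVE = {ave:.3f}, CR = {cr:.3f}")
```

With loadings in the range reported for this model, both statistics clear the 0.5 and 0.7 thresholds comfortably, which is the pattern the paragraph above describes.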

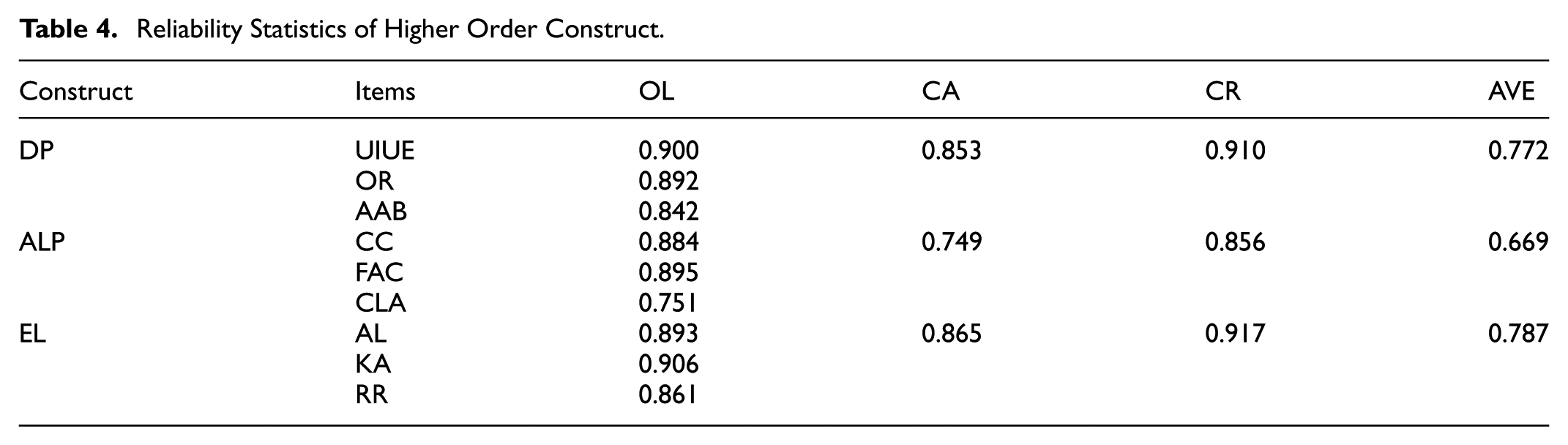

The discriminant validity of the model is confirmed by the HTMT ratios and the Fornell-Larcker Criterion (FLC), as shown in Table 3. The HTMT values are below the threshold of 0.85 for all constructs, indicating discriminant validity (Dijkstra & Henseler, 2015). For example, the HTMT value between ‘AAB’ and ‘AL’ is 0.614. The FLC indicates that the square root of the AVE for each construct is greater than its highest correlation with any other construct. For instance, the square root of the AVE for ‘AAB’ is 0.825, which is higher than its correlations with other constructs. The reliability statistics of higher-order constructs (Table 4) such as Design Principles (DP), Adaptive Learning Pathways (ALP), and Effective Learning (EL) are also strong. Cronbach’s Alpha and Composite Reliability for these constructs are all above 0.7, demonstrating robust reliability. For example, ‘DP’ has a CA of 0.900 and a CR of 0.910. The AVE values for higher-order constructs exceed 0.5, confirming convergent validity, with ‘ALP’ having an AVE of 0.669 (see Table 4).
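The Fornell-Larcker comparison described above can be sketched in a few lines. The AVE of 0.680 for ‘AAB’ comes from the text; the inter-construct correlations below are hypothetical placeholders, since the correlation matrix is not reproduced in this section.

```python
from math import sqrt


def fornell_larcker_holds(ave, correlations):
    """True if sqrt(AVE) exceeds every absolute correlation
    between the construct and the other constructs."""
    root_ave = sqrt(ave)
    return all(root_ave > abs(r) for r in correlations)


# AVE for 'AAB' as reported in the text.
aab_ave = 0.680
# Hypothetical correlations with other constructs (NOT values from Table 3).
hypothetical_correlations = [0.55, 0.61, 0.48]

print(round(sqrt(aab_ave), 3))
print(fornell_larcker_holds(aab_ave, hypothetical_correlations))
```

The first printed value reproduces the 0.825 quoted for ‘AAB’; the second shows the criterion being met for the assumed correlations.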

Discriminant Validity (HTMT).

Reliability Statistics of Higher Order Construct.

Model Statistics and Predictive Fit Indices.

The measurement model's statistics affirm the reliability and validity of the constructs used in this study. The high outer loadings, appropriate VIF values, and robust internal consistency metrics across all constructs indicate that the items are reliable measures of their respective constructs (Hair et al., 2022). Additionally, the AVE values above 0.5 and satisfactory HTMT and FLC criteria confirm both convergent and discriminant validity, ensuring that the constructs are distinct and well-measured.

The findings align with existing literature emphasizing the importance of robust measurement models in educational research. In conclusion, this study's robust measurement model establishes a solid foundation for investigating the intricate relationships between AI-driven design principles, technology proficiency, self-efficacy, and effective learning outcomes.

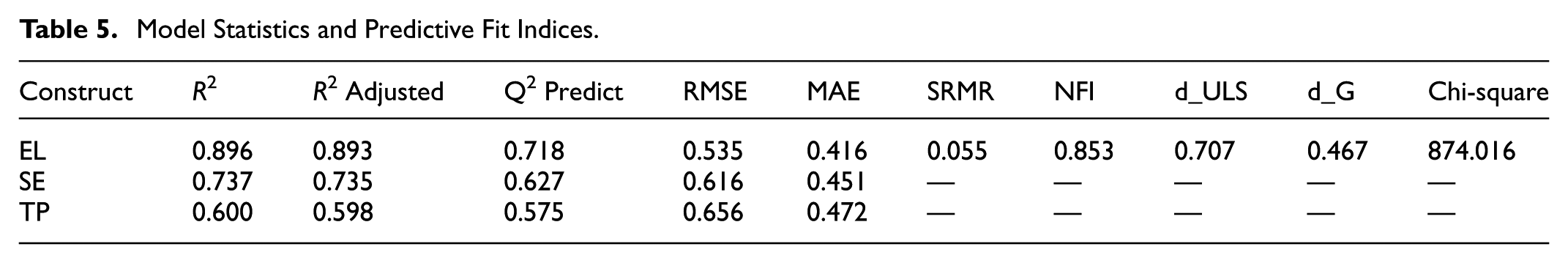

Model Statistics and Predictive Fit Indices Assessment

As shown in Table 5, the R² for Effective Learning (EL) stands at 0.896 (adjusted R² = 0.893), indicating that the integrated constructs, namely Design Principles (DP), Adaptive Learning Pathways (ALP), Technology Proficiency (TP), Self-Efficacy (SE), Learner Engagement (LE), and Instructor Involvement (II), account for nearly 90% of the variance in learning outcomes. This far exceeds the 0.67 benchmark for substantial explanatory power (Hair et al., 2022). Similarly, the model explains 73.7% of the variance in SE and 60% in TP, confirming its structural coherence.
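For readers who want to see why the adjusted figure sits so close to the raw one, the following sketch applies the standard adjusted R² formula. The R² of 0.896 is from the text and n = 372 is the sample size given in the abstract; the number of predictors k is not stated in this section, so the value used here is an assumption for illustration only.

```python
def adjusted_r2(r2, n, k):
    """Adjusted R-squared for n observations and k predictors."""
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)


# r2 and n come from the paper; k = 10 is an ASSUMED predictor count.
print(round(adjusted_r2(0.896, 372, 10), 3))
```

With a sample of this size the penalty for additional predictors is small, so any plausible k leaves the adjusted value within a few thousandths of the raw R².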

Predictive relevance is evidenced through Q²predict values: 0.718 for EL, 0.627 for SE, and 0.575 for TP, each surpassing the threshold of 0.35 (Shmueli et al., 2019). The Root Mean Square Error (RMSE) and Mean Absolute Error (MAE) values for EL (0.535 and 0.416) demonstrate low prediction error, validating the model’s utility in out-of-sample forecasting. These findings underscore both theoretical and practical generalizability.
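The three out-of-sample statistics named above can be illustrated with a minimal sketch. It assumes the usual definition in which the prediction error of the model is compared with a naive benchmark that predicts the training-sample mean; the holdout scores and predictions are hypothetical and unrelated to the study’s data.

```python
from math import sqrt


def rmse(actual, predicted):
    """Root mean square error of the predictions."""
    return sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def mae(actual, predicted):
    """Mean absolute error of the predictions."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def q2_predict(actual, predicted, training_mean):
    """1 - SSE(model) / SSE(naive benchmark predicting the training mean)."""
    sse_model = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    sse_naive = sum((a - training_mean) ** 2 for a in actual)
    return 1 - sse_model / sse_naive


# Hypothetical holdout scores and model predictions (NOT the study's data).
holdout = [3.0, 4.0, 5.0, 2.0]
predictions = [3.5, 3.5, 4.5, 2.5]
train_mean = 3.5

print(rmse(holdout, predictions), mae(holdout, predictions))
print(q2_predict(holdout, predictions, train_mean))
```

A positive value from `q2_predict` means the model predicts held-out cases better than the naive mean benchmark, which is the sense in which the reported values indicate predictive relevance.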

Model fit indices further validate structural adequacy. The SRMR value of 0.055 for the estimated model lies well below the 0.08 cutoff, denoting minimal discrepancy between empirical and model-implied correlations (Alam et al., 2025). The Normed Fit Index (NFI) of 0.853 indicates acceptable model fit given its complexity. Additional metrics, namely d_ULS (0.707), d_G (0.467), and chi-square (874.016), affirm the statistical alignment of the measurement and structural models. Together, these indicators confirm the model’s robustness.

To complement these sufficiency-based insights, CE-FDH bottleneck analysis (Table 6) reveals how construct necessity varies across performance levels. At lower percentiles (0–30%), key variables such as ALP, II, SE, and TP show minimal constraint on EL. However, necessity intensifies from the 40th percentile onward. By the 60th percentile, ALP and DP register bottleneck values of 6.098 and 3.354, respectively. At the 90th percentile, the necessity becomes pronounced: DP (33.537), II (25.915), and ALP (14.939). At the 100th percentile, DP and II peak at 79.573 and 81.707, underscoring their criticality at elite learning levels.

Bottleneck Table (CE-FDH).

This non-linear constraint pattern echoes Dul’s (2020) theory of conditional necessity, wherein variables become indispensable only beyond specific performance thresholds. The findings align with Vygotsky’s (1978) Zone of Proximal Development, suggesting that II and DP serve as scaffolding mechanisms, essential when learners move beyond baseline competence. Their seemingly minor global effects mask their situational indispensability, reaffirming the value of necessity analyses in educational research (Alam et al., 2025).

The negative bottleneck values for EL at low percentiles (e.g. −3.899 at 0%) reflect underutilized learner potential, only activated through strategic input from system design or instructor presence. These results challenge linear assumptions, advocating instead for adaptive support tailored to learner maturity. While early-stage learning may depend more on TP and LE (Wardoyo et al., 2021), high-end learning performance relies heavily on II and DP.
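The bottleneck logic discussed above can be sketched as follows. Under a CE-FDH ceiling, the ceiling at a given level of the condition is the highest outcome observed at or below that level, so the condition level required for a target outcome is the smallest observed x among cases that reach the target. The sketch expresses both axes as percentages of their observed ranges; the data points are hypothetical, and dedicated NCA software may apply reporting conventions that differ from this simplified version, so it is not intended to reproduce Table 6.

```python
def ce_fdh_required_x(points, y_target):
    """Smallest observed x whose CE-FDH ceiling reaches y_target.

    Returns None when no observed case reaches y_target.
    """
    candidates = [x for x, y in points if y >= y_target]
    return min(candidates) if candidates else None


def bottleneck_table(points, percentiles):
    """Required x (% of x range) for each outcome level (% of y range)."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    x_min, x_max = min(xs), max(xs)
    y_min, y_max = min(ys), max(ys)
    table = {}
    for pct in percentiles:
        # Clamp so that rounding never pushes the 100% target above y_max.
        y_target = min(y_min + (y_max - y_min) * pct / 100, y_max)
        x_required = ce_fdh_required_x(points, y_target)
        table[pct] = 100 * (x_required - x_min) / (x_max - x_min)
    return table


# Hypothetical (condition, outcome) pairs, NOT the study's data.
points = [(1, 1), (2, 3), (3, 2), (4, 5), (5, 4)]
table = bottleneck_table(points, [0, 50, 100])
print(table)
```

In this toy data set no minimum level of the condition is needed for the lowest outcomes, while the highest outcome is only observed once the condition reaches three quarters of its range, mirroring the pattern of necessity that intensifies at upper percentiles.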

Hypothesis Testing and Discussion

In the context of rapidly evolving AI-driven education systems, particularly within Asian higher education where online and hybrid learning have become integral post-pandemic, the study’s findings shed crucial light on the interplay between design, proficiency, personalization, self-belief, and human facilitation in shaping learning effectiveness. While structural path analysis (PLS) offers a measure of statistical significance, the integration of Necessary Condition Analysis (NCA) offers a more nuanced lens by identifying which factors are non-compensatory and essential, even when not statistically dominant (Table 7).

Statistics of Structural Model & NCA Effect Size (CE-FDH).

Design Principles (DP), although statistically non-significant in directly predicting Effective Learning (EL) (β = −0.067, p = .399), emerged as a necessary condition (NCA = 0.226). This subtle but foundational role is especially relevant in Asian university contexts where digital literacy gaps and language barriers can heighten reliance on well-structured and culturally intuitive user interfaces. As supported by Kumar et al. (2021) and Hartmann et al. (2008), the UX quality and platform algorithmic logic may not immediately register in user-reported learning gains but serve as a vital infrastructure. Without accessible, relevant, and localized design, students—particularly those from under-resourced institutions—may disengage regardless of content quality. Thus, design becomes the hidden backbone of equitable access to AI-enhanced education.
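To make the NCA effect sizes quoted in this section more concrete, the sketch below computes a CE-FDH effect size as the share of the scope (the rectangle spanned by the observed ranges of condition and outcome) that lies empty above the step-shaped ceiling. The data are hypothetical and the function name is illustrative; it is a simplified reading of the method, not the authors’ software pipeline.

```python
def ce_fdh_effect_size(points):
    """Ceiling zone divided by scope for a CE-FDH (step) ceiling."""
    xs = sorted({x for x, _ in points})
    y_min = min(y for _, y in points)
    y_max = max(y for _, y in points)
    scope = (xs[-1] - xs[0]) * (y_max - y_min)
    ceiling_zone = 0.0
    running_max = float("-inf")
    for left, right in zip(xs, xs[1:]):
        # The highest outcome observed at or below `left` sets the step height.
        running_max = max(running_max, max(y for x, y in points if x == left))
        ceiling_zone += (right - left) * (y_max - running_max)
    return ceiling_zone / scope


# Hypothetical (condition, outcome) pairs, NOT the study's data.
constrained = [(1, 1), (2, 3), (3, 2), (4, 5), (5, 4)]
unconstrained = [(1, 5), (5, 1)]

print(ce_fdh_effect_size(constrained))
print(ce_fdh_effect_size(unconstrained))
```

An effect size of zero means high outcomes already occur at the lowest level of the condition, so the condition is not necessary; larger values indicate that a growing part of the upper-left region is empty, which is how a construct such as DP can be necessary without having a significant average effect.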

Technology Proficiency (TP) exerts both a significant (β = 0.437, p < .001) and necessary (NCA = 0.088) effect on EL, reinforcing the view that digital competency is no longer optional—it is a gatekeeper. In Southeast Asia, where university cohorts often include students from rural areas with limited digital exposure, this finding has major implications. Learners who lack foundational technological fluency may struggle to leverage adaptive feedback, personalize their learning paths, or even navigate AI-powered platforms. Studies by Mehboob et al. (2020) and Voykina et al. (2019) similarly highlight that low technology proficiency can widen the equity gap, especially in environments where instructor time is limited and learners are expected to operate autonomously. For institutions, this underscores the need to embed technology onboarding and digital literacy scaffolds early in the semester, particularly in AI-enhanced learning contexts.

Adaptive Learning Pathways (ALP) were also significantly (β = 0.196, p < .001) and necessarily (NCA = 0.254) related to EL. However, the necessity effect only intensifies in higher performance quantiles (from 60% upward), suggesting that personalization becomes more critical as learning becomes more complex. This supports research by Jdidou et al. (2023), which finds that adaptive systems are most beneficial to learners who have already achieved a baseline of motivation and autonomy. In Malaysian and Chinese universities, where assessment-driven education often dominates, adaptive feedback may initially clash with norm-based learning habits. Therefore, to fully capitalize on ALP, institutions must shift students’ mindsets from passive reception to active navigation, a transition that often requires both digital coaching and metacognitive support.

Self-Efficacy (SE) showed a modest yet significant (β = 0.112, p = .020) and necessary (NCA = 0.103) influence on EL. Although this aligns with existing psychological research (Golestaneh, 2014; Magnano et al., 2014), the relatively lower effect size raises questions about whether over-reliance on algorithmic support diminishes students’ self-belief. In AI-enhanced environments, where much of the learning path is structured for the student, opportunities for mastery-based self-assurance may be fewer, especially if learners simply follow recommendations without reflection. This raises a pedagogical challenge: how can systems be designed to build, rather than bypass, learners’ self-regulatory capacities? Universities adopting AI platforms must ensure that autonomy and challenge are not sacrificed for convenience.

Learner Engagement (LE) yielded a strong and necessary influence (β = 0.276, p < .001; NCA = 0.295), reinforcing the idea that no amount of personalization or AI-driven scaffolding can replace intrinsic investment. The critical role of engagement is especially pertinent in online learning, where distractions abound and isolation is common. This holds particular relevance for Chinese and Malaysian institutions where cultural expectations of academic discipline are high, but digital attention spans are declining. Studies by Xu et al. (2022) and Rao Sangarsu (2023) demonstrate that AI-powered platforms must incorporate emotional and social affordances—such as gamification, real-time feedback, or peer visibility—to sustain behavioral and emotional engagement over time.

Mediation effects further unpack how these mechanisms interact. For instance, TP significantly mediates the effect of DP and ALP on EL (β = 0.130 and 0.227 respectively, p < .001), highlighting that the efficacy of design and personalization is contingent upon user fluency. This is particularly important in institutions rolling out digital platforms without accompanying skill development programs. Similarly, SE mediates the DP → EL path (β = 0.089, p = .020), suggesting that good design bolsters learners’ belief in their capability to succeed—but this effect does not hold in the ALP → EL path. In other words, self-efficacy is influenced more by structural clarity than personalization. Students appear to trust systems that are logically designed and intuitively organized, rather than ones that adapt ‘invisibly’ without transparent logic. This has design implications for AI systems in education, which must balance personalization with user clarity.
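The mediation logic above rests on an indirect effect, that is, the product of the path from predictor to mediator and the path from mediator to outcome. The sketch below illustrates this with ordinary least squares and a percentile bootstrap on synthetic data. It is a stand-in for, not a reproduction of, the PLS-SEM estimation used in the study; the variable names, sample size, and true path values (0.5 and 0.4) are all assumptions chosen for the example.

```python
import random


def _cov(u, v):
    """Sample covariance of two equally long sequences."""
    mu, mv = sum(u) / len(u), sum(v) / len(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / (len(u) - 1)


def indirect_effect(x, m, y):
    """a * b, where a is the slope of m on x and b the slope of y on m given x."""
    var_x, var_m = _cov(x, x), _cov(m, m)
    cov_xm, cov_xy, cov_my = _cov(x, m), _cov(x, y), _cov(m, y)
    a = cov_xm / var_x
    b = (cov_my * var_x - cov_xm * cov_xy) / (var_x * var_m - cov_xm ** 2)
    return a * b


def bootstrap_ci(x, m, y, n_boot=500, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        estimates.append(indirect_effect([x[i] for i in idx],
                                         [m[i] for i in idx],
                                         [y[i] for i in idx]))
    estimates.sort()
    lower = estimates[int(n_boot * alpha / 2)]
    upper = estimates[int(n_boot * (1 - alpha / 2)) - 1]
    return lower, upper


# Synthetic data with a true indirect effect of 0.5 * 0.4 = 0.2 (illustrative only).
rng = random.Random(42)
x = [rng.gauss(0, 1) for _ in range(500)]
m = [0.5 * xi + rng.gauss(0, 0.5) for xi in x]
y = [0.4 * mi + 0.1 * xi + rng.gauss(0, 0.5) for xi, mi in zip(x, m)]

estimate = indirect_effect(x, m, y)
low, high = bootstrap_ci(x, m, y)
print(f"indirect effect = {estimate:.3f}, 95% CI = [{low:.3f}, {high:.3f}]")
```

A confidence interval that excludes zero is the usual basis for calling a mediated path significant, which is the sense in which TP and SE are described as mediators here.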

The role of Instructor Involvement (II) reveals even more contextual nuance. II positively moderates the DP → EL link (β = 0.116, p = .027), confirming that instructor support enhances the pedagogical utility of well-designed platforms. Especially in collectivist cultures where instructor authority carries weight, such as China and Malaysia, educator mediation can translate abstract design features into actionable learning strategies. Conversely, the non-significance of II × TP (β = 0.031, p = .352) suggests that digital fluency is largely self-driven and not easily cultivated through instructor facilitation alone—perhaps due to generational gaps or instructors' own unfamiliarity with AI systems.

Strikingly, II negatively moderates ALP → EL (β = −0.111, p = .004), suggesting that excessive instructor involvement may interfere with learners’ self-navigation in personalized systems. In traditional Asian classrooms, where teacher direction is dominant, this finding signals a culture shift. As adaptive AI seeks to foster student autonomy, teachers must shift from being content deliverers to learning facilitators. The lack of significant moderation between II and SE (β = −0.053, p = .210) further supports the idea that self-efficacy is internally cultivated, and excessive external regulation may dilute personal growth.
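One way to read the moderation coefficients above is through simple slopes: the effect of a predictor on EL at low, average, and high levels of instructor involvement. The sketch below uses the coefficients reported in the text and assumes a standardized, mean-centered moderator and a linear interaction. The study does not report simple slopes, so these values are illustrative arithmetic rather than published results.

```python
def simple_slope(main_effect, interaction, moderator_z):
    """Slope of the predictor at a given standardized moderator level."""
    return main_effect + interaction * moderator_z


# Coefficients reported in the text.
paths = {
    "ALP -> EL": (0.196, -0.111),  # main effect, II x ALP interaction
    "DP -> EL": (-0.067, 0.116),   # main effect, II x DP interaction
}

for label, (beta, beta_int) in paths.items():
    slopes = {z: round(simple_slope(beta, beta_int, z), 3) for z in (-1, 0, 1)}
    print(label, slopes)
```

Under these assumptions, the ALP slope shrinks from about 0.307 at low instructor involvement to about 0.085 at high involvement, while the DP slope moves from negative to positive, consistent with the opposing moderation effects described above.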

In sum, the structural model (Figure 3) and necessity analyses together demonstrate that while AI systems offer powerful affordances, their success in producing effective learning depends heavily on learner readiness, contextual fit, and balanced human-AI collaboration. For Asian universities undergoing digital transformation, the challenge is not merely to adopt AI tools, but to adapt pedagogy, instructor roles, and learner training around them. Institutions must foster ecosystems where learners are digitally literate, psychologically empowered, and supported by instructors who understand when to intervene—and when to step back.

Higher order construct model.

Implications of This Study

Theoretical Implications

This study contributes to the theoretical advancement of Cognitive Load Theory (CLT), Social Cognitive Theory (SCT), and Vygotsky’s Sociocultural Theory by empirically illustrating how AI-enhanced educational environments affect learner-system interactions and learning outcomes. First, in extending CLT, the significant and necessary influence of Adaptive Learning Pathways (ALP) affirms their role in managing cognitive load by reducing extraneous and enhancing germane load. AI’s ability to align instructional content with learners’ cognitive schemas supports Sweller’s (2011) emphasis on cognitive efficiency. Notably, the negative moderation by Instructor Involvement (II) on ALP suggests a boundary condition: excessive human guidance may conflict with AI personalization, leading to cognitive redundancy rather than support.

Second, within SCT, this study reinforces the centrality of Self-Efficacy (SE) in promoting effective learning. The significant direct effect of SE supports Bandura’s (1997) view that belief in one’s abilities enhances goal pursuit. Yet, the non-significant mediation of SE between ALP and Effective Learning (EL) complicates this relationship. In system-led adaptive environments, confidence may be more influenced by perceived system usability and responsiveness than by internal efficacy. This shift supports updated SCT interpretations that incorporate the technological environment as a co-agent in shaping behaviors (Schunk & DiBenedetto, 2020), challenging older assumptions about learner agency in digital contexts.

Third, Vygotsky’s Sociocultural Theory is refined through findings on II. While positive moderation of II on the DP–EL relationship validates the Zone of Proximal Development (ZPD) as a framework for scaffolding, the inverse effect on ALP reveals a mismatch when human involvement overrides AI-driven autonomy. This necessitates a reconceptualization of ZPD as a dynamic triad of learner, instructor, and AI system, where instructor input must be contextually timed and adaptively minimal. Rather than reverting to didactic roles, educators must shift toward strategic facilitation that complements—not replaces—AI personalization.

Together, these theoretical refinements move beyond digitization to signal a paradigmatic evolution. AI reshapes relational dynamics among learner agency, instructional support, and system scaffolding. The findings call for integrated models where personalization, engagement, and socio-technical harmony are viewed as co-constitutive drivers of learning effectiveness in the digital age.

Practical Implications

This study offers strategically grounded, actionable insights for educators, designers, and policymakers seeking to integrate AI in higher education—particularly in emerging Asian and global contexts. First, the demonstrated value of ALP underscores the need for platforms that personalize pace, content, and feedback based on learner analytics. Institutions should adopt AI systems capable of multi-modal, adaptive delivery that accommodates diverse proficiency levels. Developers must ensure that such systems are transparent and give users control over personalization mechanisms.

Second, Technology Proficiency (TP) emerged as a foundational mediator, indicating that infrastructural investment must be accompanied by capacity-building. Higher education institutions should embed AI literacy into digital skills curricula for students and faculty. Instructor training must move beyond LMS usage to include co-teaching strategies with AI systems, empowering educators to discern when to scaffold and when to let adaptive systems operate autonomously.

Third, user-centric design is non-negotiable. AI tools should be intuitive, accessible, and culturally responsive. Iterative user experience testing—with multilingual and diverse learner groups—should be standard practice. Interface simplicity and functionality must accommodate a broad spectrum of digital readiness.

Fourth, the findings warn against over-involvement by instructors in adaptive environments. While support remains crucial, excessive control may undermine autonomy. Instructors should adopt metacognitive and emotional support roles, rather than dominating content delivery. Institutions should guide this shift by integrating AI dashboards that flag student distress or disengagement without prompting unnecessary intervention.

Fifth, SE should be embedded within platform design. Micro-achievements, visual progress indicators, and supportive feedback mechanisms can reinforce learners’ self-belief. The integration of affective computing—such as empathetic chatbots or peer-support features—can further cultivate confidence and belonging.

Sixth, ethical governance of AI in education is imperative. Institutions must actively audit for algorithmic bias and data privacy issues. Oversight bodies should include learners and ethicists to ensure that personalization remains equitable and transparent. Policies must explicitly inform students how their data is used, particularly in predictive feedback systems.

Finally, continuous evaluation is critical. AI tools should be assessed against learning outcomes, dropout rates, and engagement metrics through both quantitative and qualitative methods. Feedback loops must be institutionalized, allowing students and educators to co-shape the evolution of AI platforms based on lived experience rather than technological determinism.

In sum, these implications provide more than recommendations—they offer a roadmap for operationalizing AI in higher education. By aligning digital sophistication with pedagogical intention, and by calibrating the evolving roles of instructors and learners, this study paves the way for inclusive, effective, and ethically grounded AI-enhanced learning systems.

Limitations and Future Research Directions

Despite offering important insights into AI-enhanced learning, this study is not without limitations. First, its cross-sectional design restricts the ability to infer causality. Longitudinal or experimental studies would provide stronger evidence of how AI-driven educational dynamics unfold over time, particularly as systems adapt and learners progress.

Second, the sample—comprising primarily digitally proficient university students with prior exposure to AI/ML—limits generalisability. Learners from under-resourced institutions, rural settings, or with lower levels of digital literacy may experience AI differently. Future research should therefore adopt more heterogeneous sampling strategies to capture diverse learner profiles and contexts.

Third, the study relies on self-reported measures of constructs such as learner engagement and effective learning (EL). While these indicators align with established scales and procedural remedies were implemented to mitigate common method bias, self-reporting is inherently subject to social desirability and recall effects. Future studies should triangulate self-reports with behavioural traces (e.g. clickstream data, system log files), instructor ratings, and independent performance assessments to enhance validity.

Fourth, while the use of Necessary Condition Analysis (NCA) complements PLS-SEM by identifying essential but non-compensatory conditions, NCA remains relatively novel in educational technology research. Replication with longitudinal, multi-level, or mixed-method designs is needed to validate the robustness of these findings and to examine how necessity relationships shift across learner subgroups.

Fifth, the theoretical framing—anchored in Cognitive Load Theory (CLT), Social Cognitive Theory (SCT), and Vygotsky’s Sociocultural Theory (VST)—helped explain the interplay between cognitive and social mechanisms. However, motivational and affective perspectives remain underexplored. Integrating frameworks such as the Technology Acceptance Model (TAM) or Self-Determination Theory (SDT) could clarify how perceptions of usefulness, autonomy, and intrinsic motivation shape engagement with AI-mediated learning.

Sixth, construct coverage was deliberately focused on effective learning (acquisition, retention, and application) and engagement. While this scope aligned with our study’s objectives, it excluded experiential outcomes such as learner satisfaction. Future research should integrate both cognitive and affective dimensions to provide a more holistic picture of AI’s educational impact.

Finally, the ethical and practical implications of AI adoption warrant deeper investigation. Issues of algorithmic bias, learner surveillance, and data privacy remain pressing, especially as AI systems gain greater autonomy. Moreover, the moderating role of instructor involvement emerged as a boundary condition: at times enhancing learning, but at other times undermining the autonomy that AI personalisation seeks to promote. Further inquiry should clarify how to balance human guidance with AI autonomy to maximise equity, transparency, and learner agency in digital education.

Conclusion

This study contributes to the theoretical and practical understanding of AI-enhanced learning by examining how design principles, adaptive learning pathways, technology proficiency, self-efficacy, and learner engagement shape effective learning outcomes. By combining Partial Least Squares Structural Equation Modeling (PLS-SEM) and Necessary Condition Analysis (NCA), the findings reveal that several constructs, while not statistically dominant, are nonetheless essential for enabling high-functioning AI-driven learning environments.

Adaptive learning pathways and technology proficiency emerge as critical enablers of personalized education. Learner engagement and self-efficacy further enhance learning, confirming the importance of both internal and contextual factors. However, the findings also caution that instructor involvement, if misaligned with AI’s adaptive mechanisms, may reduce learner autonomy and effectiveness.

These insights underscore the need for a balanced, hybrid educational approach—one that integrates human and machine intelligence to support autonomy, motivation, and adaptive learning. By advancing theory, informing pedagogical design, and outlining future directions, this study lays the groundwork for more equitable, intelligent, and learner-centered digital education systems.

Footnotes

Acknowledgements

The researchers would like to express their gratitude to the anonymous reviewers for their efforts to improve the quality of this paper.

Ethical Considerations

Formal ethical approval was waived because participation was anonymous, confidential, and voluntary, with informed consent obtained from all participants. The study nonetheless adhered to the principles of the Declaration of Helsinki and followed strict ethical standards. No biomarkers or tissue samples were collected for analysis. Participants were free to withdraw from the study at any point.

Consent to Participate

Oral consent was obtained from all individuals involved in this study.

Institutional Review Board Statement

Ethical review and approval were waived for this study, due to the fact that the study was conducted as per the guidelines of the Declaration of Helsinki. The research questionnaire was anonymous, and no personal information was gathered.

Author Contributions

Conceptualization, Mazzlida Mat Deli; Data curation, Ummu Ajirah Abdul Rauf; Formal analysis, Ummu Ajirah Abdul Rauf and Na Meng; Investigation, Na Meng; Software, Ummu Ajirah Abdul Rauf and Na Meng; Supervision, Mazzlida Mat Deli; Visualization, Mazzlida Mat Deli; Writing – original draft, Na Meng; Writing – review and editing, Na Meng. All authors have read and agreed to the published version of the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This article is supported by the Graduate School of Business, Universiti Kebangsaan Malaysia, Research grant UKM-GSB (GSB-2024-017 and GSB-2024-019).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data will be made available on reasonable request to the corresponding author.

Code Availability

The data that support the findings of this study are available from the corresponding authors upon reasonable request.