Abstract

Recent advances in large language models (LLMs) have introduced new possibilities for computer-assisted language learning. However, empirical studies on integrating ChatGPT or other LLMs into language learning platforms remain limited. In response to this gap, the present study examines the acceptance of an LLM-assisted reading platform. In this platform, an LLM is used to generate glossaries, translations, and assessment questions, and to provide instant assistance through an embedded Chatbot. A post-usage survey based on the extended Unified Theory of Acceptance and Use of Technology, with the additional constructs of perceived intelligence and task-technology fit, was administered to 175 undergraduates in China following 1 month of platform use. PLS-SEM analysis indicated that usability-related constructs, specifically effort expectancy and facilitating conditions, did not significantly influence undergraduates’ behavioral intention to use the platform. In contrast, given LLMs’ flexible alignment with diverse reading tasks, perceived intelligence and task-technology fit emerged as crucial drivers of sustained engagement, alongside other significant performance-oriented and affective factors, such as performance expectancy and hedonic motivation. Furthermore, social influence also had a considerable effect on undergraduates’ behavioral intention to use the platform. These findings offer important implications for the design and application of LLM-assisted educational technologies, highlighting the importance of learners’ performance objectives, playful features, and social drivers.

Plain Language Summary

This study explored Chinese university students’ intention to continue using a reading platform supported by large language models (LLMs) like ChatGPT. After four weeks of use, survey data from 175 students showed that performance-related and affective factors, such as performance expectations, enjoyment, perceived intelligence, and task-technology fit, strongly influenced their intention to keep using the platform. In contrast, usability factors like effort expectations and access to resources were not significant. Social influence also played a notable role. These findings suggest that aligning AI tools with learners’ goals, engagement needs, and social contexts is important for sustained adoption in educational settings.

Keywords

Introduction

Reading is one of the most essential skills for language learners and is critical to personal and academic success, as most learning materials remain text-based even in the digital age (Schleicher, 2023). However, reading is not an innate process (Gough & Hillinger, 1980), and recent reports from the Program for International Student Assessment have shown a global decline in the average reading proficiency of 15-year-old students since 2012 (Schleicher, 2023). This decline underscores the need for innovative solutions to support and enhance reading skills in education. In this context, emerging technologies such as ChatGPT and other large language models (LLMs) offer transformative potential. These technologies can reshape learning practices by facilitating personalized learning experiences and content generation, offering new ways to address the challenges in improving reading proficiency (Li et al., 2024; Mohebi, 2024; Yang & Li, 2024).

Previous studies have demonstrated that ChatGPT could serve multiple functions in reading, including explaining the contextual meanings of words, correcting grammatical errors, generating texts in diverse styles, producing questions, and offering translations, among other capabilities (Kohnke et al., 2023). Further research also showed that ChatGPT positively influenced students’ learning, notably in areas such as vocabulary, grammar and writing, thereby fostering greater engagement and motivation (Karataş et al., 2024).

However, technology must first be accepted before it can effectively enhance language learners’ capabilities. To achieve meaningful learning outcomes, curriculum designers and educators should fully grasp how learners perceive computer-assisted language learning (Albirini, 2006). In this regard, studies have applied the technology acceptance model to explore the determinants of emerging educational technology adoption, including language MOOCs (Hsu, 2023), virtual reality (Du & Liang, 2024), automated speech evaluation (Zou et al., 2023), generative artificial intelligence (GAI; Cai et al., 2023; Liu & Ma, 2023), and large language models. However, existing technology acceptance research concerning large language models (LLMs) in language learning has primarily focused on ChatGPT or other LLMs as a standalone tool (e.g., García-Alonso et al., 2024; Hu & Gong, 2025; Kılıç & Çelik, 2025; Mustofa et al., 2025; Peng & Liang, 2025). As more platforms, such as Khanmigo, CRAFT, and Learn About, begin incorporating LLMs into their learning products, and universities are trying to integrate LLMs into their learning platforms, investigating the acceptance of the systems with embedded LLMs will offer critical insights for educators and platform designers who seek to enhance instructional design and learners’ sustained engagement.

This study explored the acceptance of an LLM-assisted online reading platform in which an LLM, accessed via the OpenAI API, supports several features: generating glossary lists, performing translations, generating assessment questions, and providing instant feedback via an embedded Chatbot. Given the significant advancements in LLM capabilities, the Unified Theory of Acceptance and Use of Technology (UTAUT) was extended by incorporating task-technology fit and perceived intelligence as additional constructs. Conducted in China, the study examined undergraduate students’ acceptance of the platform through a post-usage survey administered after 4 weeks of engagement. The study aims to address the following two research questions:

Literature Review and Research Hypotheses

LLM-Assisted Language Learning

Large language models (LLMs), particularly ChatGPT, have created new opportunities in the field of language learning. Researchers have explored the potential of LLMs for a variety of pedagogical applications, including explaining terminology, generating sample essays and questions, adapting text difficulty, and providing translations (Kohnke et al., 2023). Furthermore, ChatGPT could also be used as a dictionary (Lew et al., 2024), a reading comprehension question generator (Lin & Chen, 2024), or a personalized learning assistant (Yu et al., 2025).

For writing tasks, ChatGPT could help learners prepare outlines, revise content, proofread their essays, and reflect on their writing (Su et al., 2023). In addition, it could also provide lexical and grammatical feedback (Guo, 2025) and assess the quality of the writings (Bucol & Sangkawong, 2025; Gjorevski et al., 2025), but the quality of its feedback was still not on par with that of well-trained evaluators (Steiss et al., 2024). Empirical studies have shown that ChatGPT improved students’ writing skills, boosted teachers’ efficacy (Ghafouri et al., 2024), and enhanced learners’ engagement (Zare et al., 2025).

Realizing the potential of LLMs in language learning depends on learners’ continued use. As LLMs are increasingly integrated into various applications, driven by their flexibility and capacity for advanced learning analytics, a more comprehensive understanding of LLM acceptance may also require a shift in focus. Research may need to move beyond standalone tools like ChatGPT and examine user acceptance within these LLM-assisted platforms.

UTAUT2

One of the central challenges in information system research is identifying the factors that drive users to accept or reject the system (Swanson, 1988). To tackle this challenge, Davis (1989) developed the Technology Acceptance Model (TAM) to explore the mechanisms underlying users’ acceptance and effective utilization of emerging technologies, based on the Theory of Reasoned Action (Fishbein & Ajzen, 1975). This model has been extensively employed by scholars to understand the challenges encountered by organizations in promoting new information systems (e.g., Liu et al., 2015), and the acceptance of learning technologies, such as mobile-assisted learning (Hoi & Mu, 2021), Chatbots (Chen et al., 2020), Web2.0 (Mei et al., 2018), Blackboard (Alhumsi & Alshaye, 2021) and ChatGPT (Liu & Ma, 2023; Sun et al., 2025).

To increase the explanatory power of TAM, Venkatesh et al. (2003) proposed the Unified Theory of Acceptance and Use of Technology (UTAUT) by consolidating the constructs from TAM and seven other related theoretical models; UTAUT was later expanded into UTAUT2 (Rondan-Cataluña et al., 2015; Venkatesh et al., 2012). Compared with TAM, UTAUT2 incorporates a broader range of constructs, including “performance expectancy, effort expectancy, social influence, facilitating conditions, hedonic motivation, price value, and habit,” along with moderating factors such as “age, gender, and experience” (Venkatesh et al., 2012). In the context of UTAUT2, performance expectancy is the belief that using a system will enhance an individual’s performance (Ahmed et al., 2024; Venkatesh et al., 2003), which is foundational to behavioral intention. Effort expectancy refers to the effort a user expects to expend in using a system (Venkatesh et al., 2003); its influence is anticipated to be most prominent initially and to be overshadowed later by other factors (Davis, 1989). Beyond these, facilitating conditions, which are the available organizational and technical supports, also directly affect behavioral intention (Venkatesh et al., 2003; Wang et al., 2020). The model was also extended to include hedonic motivation, or the perceived enjoyment of using the technology (Brown & Venkatesh, 2005; Venkatesh et al., 2012). Social influence, defined as the perception that important individuals believe one should adopt the new system, is another key factor shaping user behavior (Ma & Huo, 2023; Venkatesh et al., 2003). All these factors converge to predict behavioral intention, which is the crucial precursor to actual technology use, reflecting the individual’s commitment to performing the behavior (Ajzen, 1985, 1991).

Recent studies show that the UTAUT2 model is effective for investigating the acceptance of innovative educational technologies like virtual reality (Du & Liang, 2024), metaverse technologies (Kalınkara & Özdemir, 2024), and generative AI (Strzelecki & ElArabawy, 2024). With the rise of ChatGPT, scholars have applied this model to understand the acceptance of ChatGPT in different scenarios, such as the adoption of ChatGPT among business students (Al-Okaily et al., 2025), and the use of ChatGPT for assessments (Lai et al., 2024).

These studies underscore the model’s continued explanatory power in elucidating the acceptance of emerging technologies among learners and educators within a rapidly evolving academic environment. Their findings also demonstrate the model’s extensibility and effectiveness in capturing the complex dynamics of user acceptance in emerging digital learning contexts.

Based on the UTAUT model and the previous studies, the following hypotheses are proposed:

Perceived Intelligence

In contrast to traditional artificial intelligence tools, LLMs can capture the complexity and diversity of language and communicate with human beings in a more natural and human-like way. Given this capability, the construct of perceived intelligence is introduced into the research. The definition of perceived intelligence has evolved alongside technological advancements. Initially, it was defined as the user’s perception of a technology’s intelligence, knowledge, and purpose (Balakrishnan & Dwivedi, 2024; Johnson et al., 2008). It later came to refer to an AI assistant’s ability to process and generate natural language for effective output (Mirnig et al., 2017). Currently, perceived intelligence emphasizes an assistant’s capacity to automatically process and generate natural language for efficient outcomes (Moussawi et al., 2021; Seeber et al., 2020).

Perceived intelligence has been extensively utilized in research on human-robot interaction to examine the adoption of technologies such as consumer robotics and personal agents. Empirical evidence demonstrates its significant impact on the continuous usage of robots or agents in diverse contexts, such as intelligent agents (Moussawi et al., 2023), mobile banking apps (Lee et al., 2023) and hotel robots (Song et al., 2024). Furthermore, a study from China indicates that the long-term adoption of generative AI among university students was, in part, influenced by their perceived intelligence of the technology (Liu et al., 2025). Despite these findings, there is limited attention in the literature regarding the impact of perceived intelligence on the usage intention of LLM-assisted language learning platforms.

Drawing upon these insights, we formulate the following hypothesis:

Task-Technology Fit

While the technology acceptance model examines technology adoption through the lens of perceived usefulness and perceived ease of use, task-technology fit assesses technology from a task perspective. As one of the most important developments in information systems theory (Melchor-Ferrer, 2014), task-technology fit refers to the degree to which a technology supports an individual in accomplishing their tasks, evaluating the interplay between task requirements, individual capabilities, and the functionalities offered by the technology (Goodhue, 1998; Goodhue & Thompson, 1995; Howard & Rose, 2019). In particular, it measures the degree of alignment among task requirements, individual capabilities, and the functionality of the technology (Huang et al., 2017).

The task-technology fit (TTF) model was originally applied to analyze technology adoption in consumer-focused industries, such as data services (Pagani, 2006). Its strong explanatory power, however, has led to its subsequent application within the educational sector to understand the acceptance and integration of various technologies. For instance, TTF has been shown to positively impact the continued usage intention of MOOCs (Wu & Chen, 2017) and accounted for a significant portion of the variance in the motivation to adopt digital textbook services (Rai & Selnes, 2019). Expanding on this, more recent investigations have highlighted the significant role of TTF in the adoption of machine translation for second language learning (Sha et al., 2025) and the integration of generative artificial intelligence in elementary education (Du & Lv, 2024).

Building on this theoretical foundation and the previous studies, we propose the following hypotheses:

Proposed Research Model

Grounded in UTAUT2, task-technology fit, perceived intelligence, and relevant literature, this study modified the original UTAUT2 model. The constructs of “price value” and “habit” were removed because the LLM-assisted reading platform provided in this study was offered free of charge to the EFL learners in China and most learners lacked prior experience with LLM-assisted reading platforms when the research was conducted. In addition, perceived intelligence and task-technology fit were added. Figure 1 illustrates the research model and the proposed hypotheses.

Figure 1. Research model.

Methodology

Research Procedure and Method

Because generative artificial intelligence boomed in 2023 and a standalone Chatbot is inconvenient for reading practice, a customized LLM-assisted reading platform named LinguaPilot was developed. As shown in Figure 2, the researchers first designed a poster that illustrated the characteristics and benefits of the LLM-assisted reading platform to recruit students from four universities in China, with the assistance of their English teachers. Tutorial videos were then given to these students, who joined the program on a voluntary basis. Following 4 weeks of online reading, the participating students received the questionnaire through Tencent Questionnaire, an online platform widely utilized in China. The survey was set up to prevent multiple submissions from a single device or WeChat account. Before starting, participants were briefed about the study’s goals and how their data would be used, and they were required to give their consent before proceeding. To ensure data quality, they were also informed that questionnaires with unusually short or prolonged completion durations would be flagged and excluded from the analysis.

Figure 2. Research procedure.

The questionnaire data were analyzed using Partial Least Squares Structural Equation Modeling (PLS-SEM). This methodology was employed to rigorously test the validity, reliability, and discriminant validity of the measurement model, alongside evaluating the proposed hypotheses. All statistical analyses were conducted using Smart PLS 4.1.0. Consistent with the established two-step analytical procedure proposed by Anderson and Gerbing (1988), the process involved an initial measurement model evaluation, which assessed the quality of the constructs, followed by a structural model assessment, which examined the hypothesized paths among the latent constructs.

Participants

Participants were recruited from four Chinese universities using a convenience sampling approach. A total of 175 students participated in the study, a sample size deemed sufficient for the analysis. This determination was based on the widely accepted guideline that the sample size should be at least ten times the maximum number of direct paths (inner or outer model links) leading to any single latent variable within the structural equation model (Goodhue et al., 2012; Kock & Hadaya, 2018).
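As a rough check of the ten-times rule, the sketch below counts only the structural (inner-model) paths implied by the hypotheses tested in this study: seven predictors pointing at behavioral intention (H1 to H7) and one pointing at performance expectancy (H8). The function name and the dictionary are illustrative, and outer-model links are left out because the number of items per construct is not given in this section.

```python
def min_sample_ten_times(paths_into_construct: dict[str, int]) -> int:
    """Minimum sample size under the ten-times rule.

    The rule takes ten times the largest number of paths pointing at
    any single latent variable in the model.
    """
    return 10 * max(paths_into_construct.values())


# Assumption: inner-model paths only, read from the hypotheses
# (PE, EE, FC, HM, SI, PI, TTF -> BI; TTF -> PE).
incoming_paths = {"BI": 7, "PE": 1}

required = min_sample_ten_times(incoming_paths)
sample_size = 175  # number of participants reported in the study

print(f"required >= {required}, observed = {sample_size}, "
      f"sufficient = {sample_size >= required}")
```

Under these assumptions the rule asks for at least 70 respondents, which the sample of 175 exceeds.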

Table 1 provides a comprehensive overview of the demographic characteristics of the participants in the study. Among the participants, 65 were first-year students, representing 37.1% of the total sample; 21 were second-year students, accounting for 12.0%; and 89 were third-year students, making up 50.9%. In terms of gender, there were 137 female students, comprising 78.3% of the total, and 38 male students, making up 21.7%. Regarding academic disciplines, 92 students (52.6%) were majoring in English-related fields, while 83 students (47.4%) were in non-English-related fields.

Table 1. Demographic Statistics of Participants (N = 175).

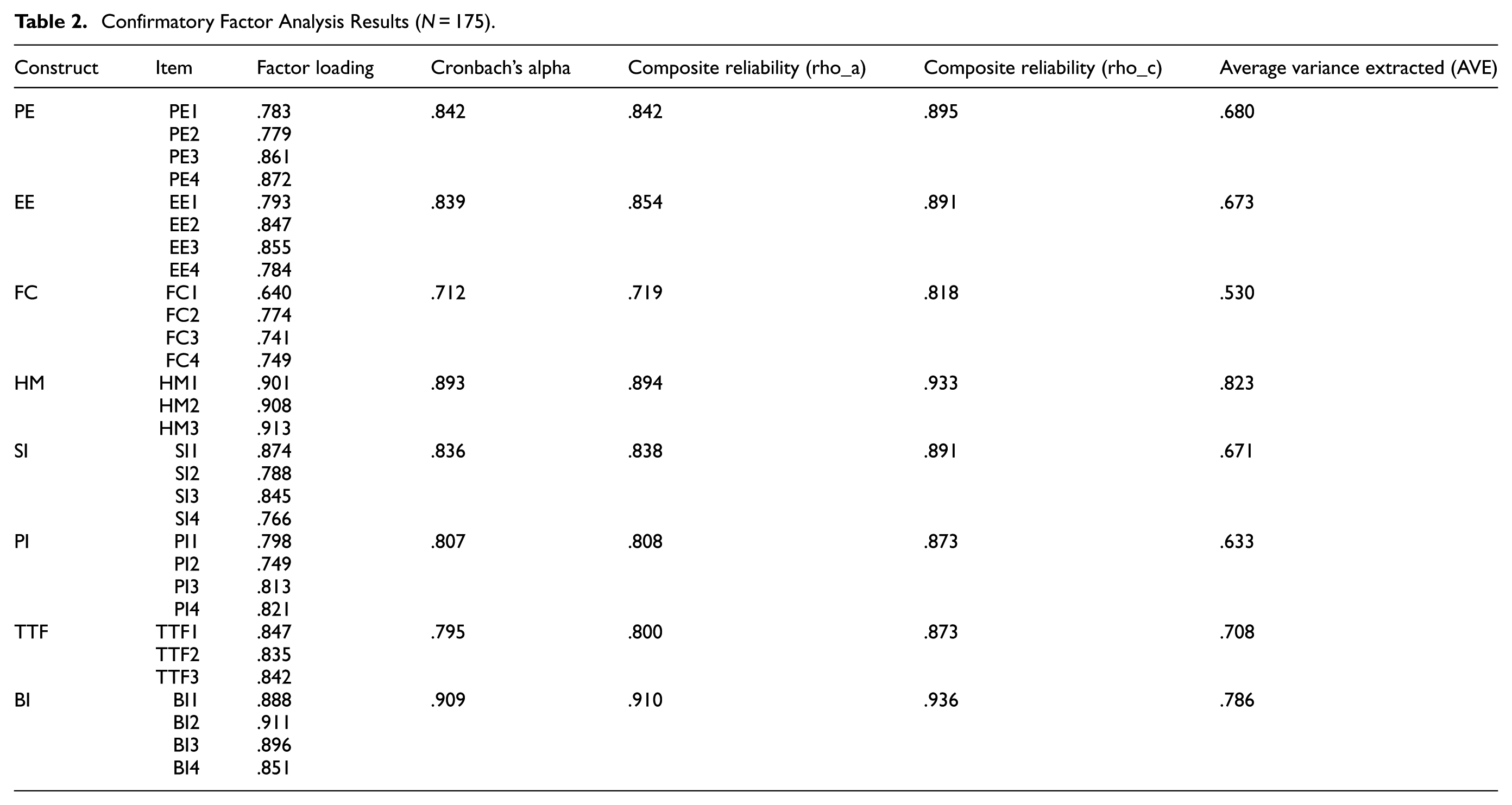

Questionnaire

The questionnaire was structured into two parts. Part 1 was designed to gather essential demographic information from participants, including their gender, academic grade, and major. Additionally, it included a screening question to ascertain whether students had prior experience with any LLM-assisted reading platform, specifically mentioning LinguaPilot as an example. Part 2 focused on measuring various constructs related to the acceptance of LLM-assisted reading platforms. All items within this section were rated on a 7-point Likert scale, with response options ranging from “strongly disagree” (1) to “strongly agree” (7). To ensure their suitability and cultural relevance, all items in Part 2 were adapted from established measures in previous related studies and translated. The translated items subsequently underwent a rigorous review process by two Chinese experts in English as a Foreign Language (EFL) teaching, ensuring their accuracy and appropriateness for the target population. The instrument is presented in Appendix I.

LinguaPilot Platform

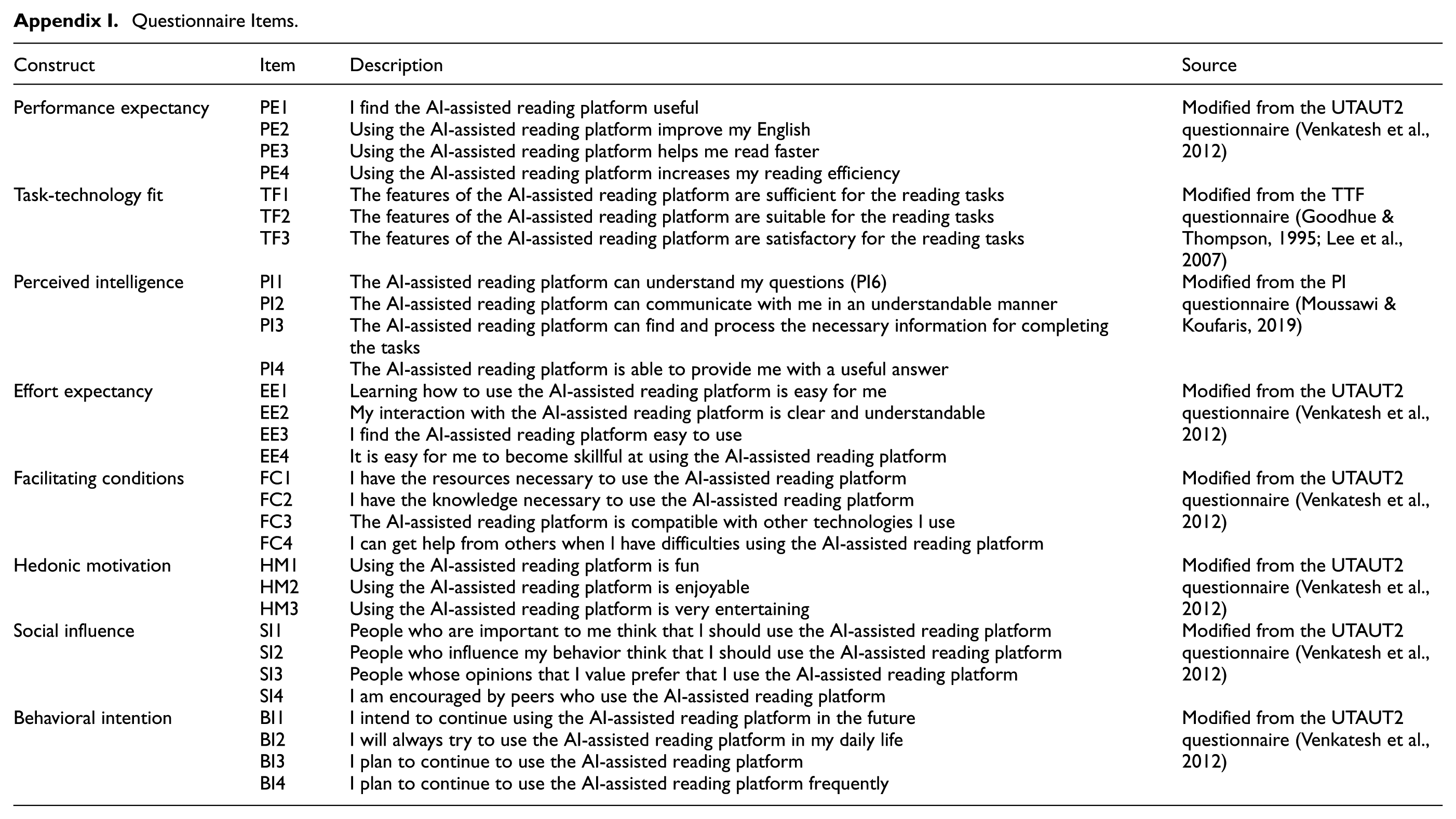

LinguaPilot is an LLM-assisted reading platform designed to help users practice English reading skills. The platform integrates the LLM via the OpenAI API to provide immediate Chatbot-based feedback. It also uses the API to generate glossaries, translations, and multiple-choice assessment questions. The practice materials on LinguaPilot are drawn from authentic reading passages in standardized English tests in China (including College English Test Band 4 and 6, Test for English Majors Band 4 and 8) and from English newspapers such as China Daily and Beijing Review. After searching by difficulty level, learners can select an article and start their reading practice. When they encounter difficulties in reading, they can ask the LLM Chatbot to translate or explain long and difficult sentences (see Figure 3). In addition, the glossary is generated via the OpenAI API, with each word accompanied by definitions, a Chinese translation, sample sentences, etymology, synonyms, and antonyms (see Figure 4).

Figure 3. Article reading page.

Figure 4. Glossary page.
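To make the glossary feature more concrete, the following is a minimal sketch of how such a request could be structured with the OpenAI Python client. It is not the LinguaPilot implementation: the prompt wording, the JSON field names, and the helper functions are all hypothetical, chosen only to mirror the glossary fields described for the platform, and the model name is left as a parameter.

```python
import json

# Hypothetical field names mirroring the glossary contents described
# for the platform (definitions, Chinese translation, and so on).
GLOSSARY_FIELDS = ["definition", "chinese_translation", "sample_sentence",
                   "etymology", "synonyms", "antonyms"]


def build_glossary_messages(word: str, context: str) -> list[dict]:
    """Build chat messages that ask for one glossary entry as JSON."""
    system = ("You write glossary entries for learners of English. "
              "Reply with a single JSON object containing the keys: "
              + ", ".join(GLOSSARY_FIELDS) + ".")
    user = f"Word: {word}\nSentence it appears in: {context}"
    return [{"role": "system", "content": system},
            {"role": "user", "content": user}]


def parse_glossary_entry(raw: str) -> dict:
    """Parse the model reply and reject entries with missing fields."""
    entry = json.loads(raw)
    missing = [key for key in GLOSSARY_FIELDS if key not in entry]
    if missing:
        raise ValueError(f"glossary entry is missing fields: {missing}")
    return entry


def request_glossary_entry(word: str, context: str, model: str) -> dict:
    """Send the request; needs the `openai` package and an API key."""
    from openai import OpenAI  # lazy import keeps the helpers usable offline

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(
        model=model,
        messages=build_glossary_messages(word, context),
    )
    return parse_glossary_entry(response.choices[0].message.content)
```

Validating the reply before display is one way to keep incomplete entries out of the glossary page; a production system would likely add retries and caching as well.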

Data Analysis and Results

The data analysis began with confirmatory factor analysis, followed by discriminant validity analysis and a collinearity check. The path coefficients were then analyzed to test the hypotheses, followed by an assessment of model fit and predictive power.

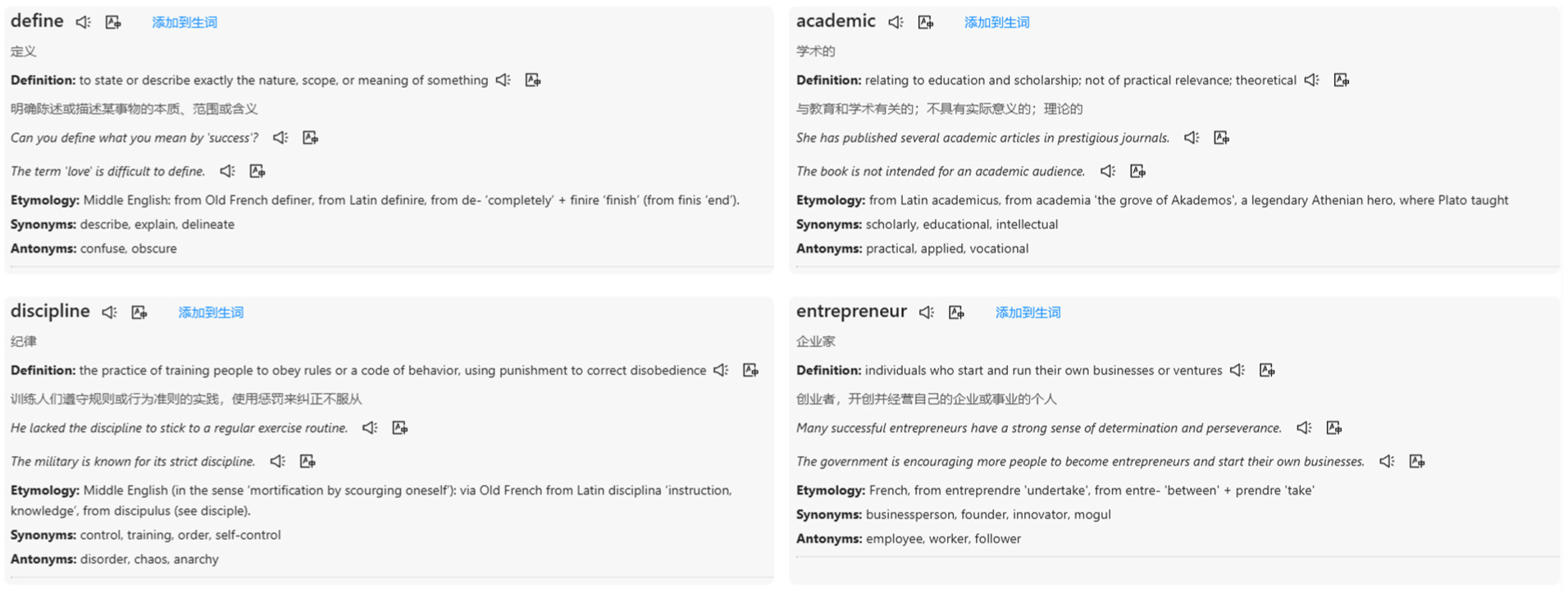

Confirmatory Factor Analysis

A confirmatory factor analysis (CFA) was performed to rigorously evaluate the psychometric properties of each latent construct within the proposed measurement model. Specifically, the reliability and convergent validity of each construct were examined using three distinct, yet complementary, measures: Cronbach’s Alpha, Composite Reliability, and average variance extracted (AVE). Cronbach’s Alpha provided an estimate of internal consistency, indicating the degree to which items within a scale were intercorrelated (Cronbach, 1951). Convergent validity was assessed through the AVE, which quantifies the proportion of variance in the observed indicators explained by their underlying latent construct (Fornell & Larcker, 1981). The comprehensive results of this confirmatory factor analysis, including all relevant reliability and validity coefficients, are presented in Table 2.

Table 2. Confirmatory Factor Analysis Results (N = 175).

As detailed in Table 2, the validity of the constructs was supported by various measures. The factor loadings for all items are above the conventional threshold of .6, ranging from .640 to .913, which indicates that the indicators are reliable measures of their respective constructs. With AVE values ranging from .530 to .823, all constructs demonstrate good convergent validity, as these values consistently exceed the threshold of .5 recommended by Fornell and Larcker (1981).

Furthermore, the reliability of the measurement model was comprehensively supported by both Composite Reliability and Cronbach’s Alpha. The values of composite reliability (rho_a and rho_c) range from .719 to .936, surpassing the commonly accepted benchmark of .7 (Bagozzi & Yi, 1988). Similarly, Cronbach’s Alpha values, which range from .712 to .910, are all above the frequently cited threshold of .7 (Fornell & Larcker, 1981; Lai et al., 2023; Nunnally & Bernstein, 1994). These consistent results across multiple reliability metrics indicate high internal consistency among the manifest indicators for each latent construct, suggesting that they are largely free from random measurement error.
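The three statistics discussed above can be computed from standardized loadings and raw item scores with the textbook formulas. The sketch below is illustrative only: the function names, the loadings, and the item scores are made up, and statistical packages such as SmartPLS may differ in detail (for example, rho_a is not covered here).

```python
from statistics import pvariance


def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings."""
    return sum(l * l for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """rho_c: (sum of loadings)^2 over itself plus the error variances."""
    total = sum(loadings)
    error = sum(1 - l * l for l in loadings)
    return total * total / (total * total + error)


def cronbach_alpha(items):
    """Cronbach's alpha; `items` holds one list of scores per item."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]  # per-respondent sums
    item_variance = sum(pvariance(column) for column in items)
    return k / (k - 1) * (1 - item_variance / pvariance(totals))


# Hypothetical loadings and 7-point Likert responses for one construct.
loadings = [0.70, 0.80, 0.90]
scores = [[5, 6, 7, 4, 6],
          [5, 7, 6, 4, 6],
          [4, 6, 7, 5, 6]]

print(round(average_variance_extracted(loadings), 3))
print(round(composite_reliability(loadings), 3))
print(round(cronbach_alpha(scores), 3))
```

With these made-up inputs all three values clear the .5 and .7 benchmarks cited in the text, which is the pattern the reported results follow.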

Discriminant Validity and Collinearity

Discriminant validity was thoroughly assessed using two established criteria to ensure that each construct was distinct. First, Fornell and Larcker’s (1981) criterion was applied, which stipulates that the square root of the AVE for each construct must be greater than its correlations with all other constructs in the model (Castro-Lopez et al., 2024). Our analysis confirmed this condition was met across all constructs, providing strong evidence for their discriminant validity. Second, the Heterotrait–Monotrait Ratio of Correlations (HTMT) was employed as a complementary indicator of discriminant validity. All calculated HTMT values are below the conservative threshold of .90 (Henseler et al., 2015; Ringle et al., 2023). This further corroborates the distinctiveness of the latent constructs, thereby abating potential issues of multicollinearity in the subsequent structural model analysis.
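Both criteria can be expressed in a few lines. The following sketch assumes positive item correlations and uses invented AVE values and correlation matrices; the function names are illustrative, and the construct labels PE and BI are borrowed from the model only to make the example readable.

```python
from math import sqrt


def fornell_larcker_ok(ave, latent_corr):
    """True if sqrt(AVE) of each construct exceeds its correlations."""
    for (a, b), r in latent_corr.items():
        if abs(r) >= min(sqrt(ave[a]), sqrt(ave[b])):
            return False
    return True


def mean(values):
    return sum(values) / len(values)


def htmt(item_corr, items_a, items_b):
    """HTMT ratio for two constructs from an item correlation matrix.

    Numerator: mean correlation between items of different constructs.
    Denominator: geometric mean of the average within-construct
    correlations of the two constructs.
    """
    hetero = [item_corr[i][j] for i in items_a for j in items_b]
    mono_a = [item_corr[i][j] for i in items_a for j in items_a if i < j]
    mono_b = [item_corr[i][j] for i in items_b for j in items_b if i < j]
    return mean(hetero) / sqrt(mean(mono_a) * mean(mono_b))


# Hypothetical values: two constructs with two items each.
ave = {"PE": 0.64, "BI": 0.70}
latent_corr = {("PE", "BI"): 0.62}
item_corr = [[1.0, 0.8, 0.4, 0.5],
             [0.8, 1.0, 0.5, 0.4],
             [0.4, 0.5, 1.0, 0.8],
             [0.5, 0.4, 0.8, 1.0]]

print(fornell_larcker_ok(ave, latent_corr))
print(round(htmt(item_corr, [0, 1], [2, 3]), 4))
```

In this toy case the Fornell and Larcker condition holds and the HTMT value sits below the .90 threshold referenced in the text.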

In PLS-SEM, the Variance Inflation Factor (VIF; Kock & Lynn, 2012) is used to detect collinearity, with values below 5 taken to indicate that collinearity, and the common method bias it can signal, is not a concern (James et al., 2013). In our analysis, the VIF values for all constructs range from 1.000 to 3.366, falling below this threshold and indicating that the model is largely free from collinearity issues.
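The VIF of a predictor is defined as 1 / (1 - R²), where R² comes from regressing that predictor on the remaining predictors. The short sketch below, with illustrative function names, converts between the two quantities so that the reported range and the threshold can be read as shares of explained variance.

```python
def vif_from_r_squared(r_squared: float) -> float:
    """VIF of a predictor given the R^2 from regressing it on the others."""
    if not 0.0 <= r_squared < 1.0:
        raise ValueError("r_squared must lie in [0, 1)")
    return 1.0 / (1.0 - r_squared)


def r_squared_from_vif(vif: float) -> float:
    """Inverse mapping: share of a predictor's variance explained by the rest."""
    if vif < 1.0:
        raise ValueError("a VIF cannot be smaller than 1")
    return 1.0 - 1.0 / vif


# Largest VIF reported in the study and the threshold used in the text.
print(round(r_squared_from_vif(3.366), 3))
print(round(r_squared_from_vif(5.0), 3))
```

Read this way, the largest reported VIF of 3.366 corresponds to roughly 70% of a predictor's variance being shared with the other predictors, compared with 80% at the threshold of 5.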

Path Coefficients

This study evaluated nine hypotheses, as summarized in Table 3. Seven of these hypotheses received significant support from the data. Specifically, H1 (PE → BI), H4 (HM → BI), H5 (SI → BI), H6 (PI → BI), and H7 (TTF → BI) all demonstrate significant positive effects. The T-values for these direct paths ranged from 1.669 to 2.561, with corresponding p-values consistently below .05, indicating statistical significance. Furthermore, the mediating hypothesis H9 (TTF → PE → BI) also exhibits a significant positive indirect effect, suggesting that PE mediates the relationship between TTF and BI. In addition, H8 (TTF → PE) shows a particularly strong and direct positive effect, with a high T-value of 5.925 and a p-value less than .001. This finding highlights the significant role of TTF in directly enhancing PE. Conversely, H2 (EE → BI) and H3 (FC → BI) are not supported, as indicated by their low T-values and non-significant p-values.

Table 3. Results of Hypothesis Testing.

*p < .10. **p < .05. ***p < .01.
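For readers who want to relate the reported t-values to p-values, the sketch below uses a standard normal approximation, a common simplification for bootstrap t-statistics in large samples. The function name is illustrative, and because this section does not state whether one-tailed or two-tailed tests were used, both are shown.

```python
from math import erfc, sqrt


def p_from_t(t: float, two_tailed: bool = True) -> float:
    """Approximate p-value for a t statistic via the standard normal."""
    one_tailed = 0.5 * erfc(abs(t) / sqrt(2))  # upper-tail probability
    return 2 * one_tailed if two_tailed else one_tailed


# t-values quoted in the text for the direct paths and for H8.
for t in (1.669, 2.561, 5.925):
    print(t,
          round(p_from_t(t, two_tailed=False), 4),
          round(p_from_t(t, two_tailed=True), 4))
```

Under this approximation, t = 1.669 falls below .05 in a one-tailed test and below .10 in a two-tailed test, while t = 5.925 is far below .001 either way.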

In total, seven of the nine hypothesized relationships are supported, as depicted in Figure 5. The model demonstrated substantial explanatory power for Behavioral Intentions (BI), with an R² value of .554 (Hair & Alamer, 2022).

Figure 5. Results of PLS analysis.

Model Fit and Predictive Power

Model fit was evaluated using the standardized root mean square residual (SRMR). Following the guidelines of Chen (2007), the SRMR was derived based on the covariance of the predicted matrices. An SRMR value of .10 or below is considered acceptable (Hair et al., 2011; Hu & Bentler, 1999). In this study, the SRMR was .086, indicating a reasonable model fit (Table 4).

Table 4. Model Fit.
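In its usual definition, the SRMR is the square root of the mean squared difference between the observed and the model-implied correlations. The sketch below follows that definition over the lower triangle including the diagonal; the function name and both matrices are invented for illustration, and specific software may compute the statistic slightly differently.

```python
from math import sqrt


def srmr(observed, implied):
    """SRMR between two correlation matrices given as square lists."""
    size = len(observed)
    squared_residuals = [
        (observed[i][j] - implied[i][j]) ** 2
        for i in range(size)
        for j in range(i + 1)  # lower triangle, diagonal included
    ]
    return sqrt(sum(squared_residuals) / len(squared_residuals))


# Hypothetical observed and model-implied correlations for three indicators.
observed = [[1.00, 0.50, 0.40],
            [0.50, 1.00, 0.30],
            [0.40, 0.30, 1.00]]
implied = [[1.00, 0.45, 0.50],
           [0.45, 1.00, 0.30],
           [0.50, 0.30, 1.00]]

value = srmr(observed, implied)
print(round(value, 4), value <= 0.10)
```

A value near zero means the model reproduces the observed correlations closely; the study's reported .086 is judged against the .10 cutoff cited in the text.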

Predictive power refers to a model’s ability to accurately predict new data (Shmueli et al., 2019). The predictive power of the model was analyzed using the PLSpredict/CVPAT procedure (Hair et al., 2019). This analysis yielded Q² values of .481 for Behavioral Intention (BI) and .176 for Performance Expectancy (PE). As both Q² values are above zero, the model shows predictive relevance for these constructs.
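The Q² statistic compares the model's out-of-sample prediction error with that of a naive benchmark that always predicts the training-sample mean. The sketch below shows only this final ratio for a single holdout set; the cross-validation folds used by PLSpredict are omitted, and the function name and numbers are hypothetical.

```python
def q2_predict(actual, predicted, training_mean):
    """Q^2 = 1 - SSE(model) / SSE(naive mean benchmark)."""
    sse_model = sum((y - y_hat) ** 2 for y, y_hat in zip(actual, predicted))
    sse_naive = sum((y - training_mean) ** 2 for y in actual)
    return 1 - sse_model / sse_naive


# Hypothetical holdout responses on a 7-point scale and model predictions.
actual = [5, 6, 4, 7]
predicted = [5.5, 5.5, 4.5, 6.0]
training_mean = 5.5  # mean of the indicator in the training folds

print(round(q2_predict(actual, predicted, training_mean), 3))
```

A positive value means the model predicts holdout cases better than the mean benchmark, and a value at or below zero means it does not.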

Discussions

UTAUT2

The findings of this study revealed that several key factors exerted a significant positive influence on behavioral intention. Specifically, performance expectancy, hedonic motivation, and social influence all demonstrate statistically significant positive effects, which aligns with previous studies regarding the drivers of technology adoption in various contexts (e.g., Castro-Lopez et al., 2024). However, in contrast to the initial hypotheses, neither effort expectancy nor facilitating conditions significantly affect the behavioral intention in this study.

Consistent with previous findings, learners who hold high expectations regarding a system’s effectiveness are more likely to take sustained action toward their learning goals (H1; e.g., Cai et al., 2023; Hoi, 2020; Polyportis & Pahos, 2024). In the context of English reading, one key factor influencing such expectations is the system’s ability to address learners’ actual difficulties. Vocabulary remains a major obstacle for university students in understanding texts in their reading practice. To cope with this challenge, learners often rely on translation to improve their reading comprehension (Boustani, 2019; Emirmustafaoğlu & Gökmen, 2015; Ramachandran & Rahim, 2004; Wei & Macaro, 2024). The LLM-assisted reading platform effectively supports language learning by providing rapid and accurate translations and explanations, with a particular benefit coming from the provision of word meanings (Wang, 2024). This capability, combined with LLM-generated grammatical feedback, helps learners internalize language patterns more effectively (Monaghan et al., 2021; O’Neill & Russell, 2019). This alignment with learners’ needs reinforces their performance expectancy, which in turn increases their intention to use the platform.

However, diverging from previous research that has shown effort expectancy to significantly influence subsequent usage behavior (e.g., Du & Liang, 2024; Liu & Ma, 2023; Venkatesh et al., 2012; Wan et al., 2020), the current study revealed no significant effect of effort expectancy on prospective use (H2 was not supported). This result aligns with findings from studies on Chatbot-based language learning (e.g., Chen et al., 2020). In an era where usability is a paramount consideration in software development, most applications undergo rigorous usability testing before release. Consequently, users are likely to encounter minimal barriers during use. It is plausible, therefore, that language learners perceive ease of use as a baseline expectation rather than a significant factor influencing their continued engagement with the LLM-assisted reading platform. Furthermore, with millions of applications vying for users’ limited time, a poorly designed platform may deter users, but a well-designed and user-friendly platform does not guarantee sustained usage.

A similar rationale applies to facilitating conditions (H3 was not supported). Facilitating conditions are typically well provided for university students, particularly in China. Institutions often offer free access to computer facilities, and most students possess personal devices such as smartphones and laptops. In addition, the construction of digital campuses in China has resulted in widespread availability of wired, wireless, 5G, and virtual private networks, with free Internet access on campus. Given this context, the non-significant influence of facilitating conditions became more comprehensible. Much like effort expectancy, facilitating conditions functioned as foundational prerequisites rather than decisive motivators for using the LLM-assisted reading platform. This suggests that when resources and support are already abundant and accessible, they cease to be significant motivators for use.

Beyond the previously discussed factors, social influence was a significant predictor of learners’ behavioral intention to use the LLM-assisted reading platform (H5). Consistent with prior research on technology adoption in collectivist cultures, learners were more likely to persist with the platform when they felt encouraged by important people in their social environment (Mustofa et al., 2025; Sawang et al., 2014). While China’s culture has become more individualistic (Steele & Lynch, 2013), it remains rooted in collectivism, where the opinions of peers and other important individuals strongly influence technology adoption. Hedonic motivation also contributed meaningfully to users’ behavioral intention to continue using the platform (H4). The positive path coefficient (β = .170) and significant p-value (p = .017) indicate that intrinsic satisfaction enhances a learner’s willingness to engage with the platform. This is likely because the LLM provided students with greater control over the reading process and reduced their anxiety by offering instant, useful feedback, thereby elevating their enjoyment. Collectively, these findings underscore that both external encouragement and internal enjoyment are key drivers of sustained usage behavior.

Perceived Intelligence and Task-Technology Fit

Perceived intelligence, that is, the extent to which learners view the system as capable of mimicking human-like understanding and responsiveness, also has a considerable influence in determining the undergraduates’ behavioral intention (H6). The perceived intelligence of the LLM-assisted reading platform was enhanced by its ability to engage in smooth, conversational interactions. Furthermore, this intelligence enabled the system to break down complex sentences and provide clear, interactive, and real-time explanations, which fostered the development of grammatical competence. This, in turn, boosted learners’ confidence and sustained their engagement, a finding consistent with existing research on conversational agents (Xu et al., 2022).

Our study further examined the influence of task-technology fit (TTF) on Chinese undergraduates’ behavioral intention (BI) to use the LLM-assisted reading platform. TTF was the strongest predictor of BI, with a path coefficient of .232. It not only shaped BI directly (H7) but also significantly influenced performance expectancy (PE; H8). When a system’s functionality aligns with the specific task requirements of language learners, their confidence in completing those tasks is enhanced, thereby boosting their PE. The LLM-assisted reading platform achieves this by offering features that traditional tools lack, such as instant analysis, simplification, and decomposition of complex sentences. This alignment between the platform’s capabilities and user needs ultimately shapes learners’ BI, a relationship that is partially mediated by their PE. In other words, TTF does not affect BI only directly; part of its influence is channeled through users’ belief that the system will improve their performance (H9), a finding consistent with previous research (Wan et al., 2020; Zhou et al., 2010).
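
The partial mediation described above can be expressed as a simple effect decomposition. The following is a sketch in conventional path-model notation rather than the computation reported by the authors; the symbols $a$, $b$, $c'$, and $c$ are introduced here for illustration only, and the direct coefficient is the only value stated in this section.

```latex
\begin{align}
\text{direct effect (H7):}\quad & c' = \beta_{\mathrm{TTF}\rightarrow\mathrm{BI}} = .232 \\
\text{indirect effect (H9):}\quad & a \cdot b = \beta_{\mathrm{TTF}\rightarrow\mathrm{PE}} \cdot \beta_{\mathrm{PE}\rightarrow\mathrm{BI}} \\
\text{total effect:}\quad & c = c' + a \cdot b
\end{align}
```

Under this notation, partial mediation corresponds to the case in which both the direct effect $c'$ and the indirect effect $a \cdot b$ are significant, so that PE carries part, but not all, of the influence of TTF on BI.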

Implications

This study extends the Unified Theory of Acceptance and Use of Technology 2 (UTAUT2) model by incorporating perceived intelligence and task-technology fit to identify the factors influencing undergraduates’ continued intention to use LLM-assisted reading platforms. It enriches our understanding of the acceptance of LLM-assisted platforms by focusing on key factors attributed to LLMs, that is, their increased intelligence and their suitability for a broad range of educational tasks, which contribute to a higher task-technology fit.

In technology-assisted language learning, the importance of perceived intelligence for sustained use of LLM-assisted platforms calls for a focus on improving the intelligence of these models. Technical interventions are important for mitigating the inherent “hallucination” problem that can erode user trust (Huang et al., 2025). These include advanced LLM features such as retrieval-augmented generation (RAG; Gao et al., 2024), agentic AI (Acharya et al., 2025), or self-evolving agents for generating adaptive learning materials and creating personalized learning paths (Gao et al., 2025). Additionally, the design and deployment of LLM-assisted platforms should prioritize task-technology fit. This requires a comprehensive understanding of the specific requirements of educational tasks. Preliminary studies, informed by established educational theories, may be conducted to ascertain students’ evolving needs. This proactive approach helps platforms remain relevant and effective, thereby promoting their continued adoption in response to rapid societal and economic changes.

Given the significant influence of hedonic motivation on user adoption, LLM-assisted platforms should be designed to enhance learner enjoyment. As play is an essential experience for learning (Chen et al., 2024), incorporating playful elements, such as gamification or competition in the learning activities, can make learning more engaging (Costantini et al., 2025). For example, platforms could use LLMs to generate language-learning games or design activities where students compete against an LLM-powered peer, similar to non-playable characters (NPCs) in video games. Furthermore, successful technology adoption depends on the involvement of key stakeholders. Efforts should be made to gain the support of teachers and student leaders, as their opinions can be a powerful driver of continued platform use among students.

Conclusion, Limitations, and Future Directions

The popularity of LLM-assisted learning platforms and their increasing adoption by users highlight a growing area of research interest. In this study, we investigated two constructs critical to understanding the intelligence of large language models, namely, perceived intelligence and task-technology fit, and explored their relationship with learners’ acceptance in the post-usage context. Our results showed that usability factors (e.g., effort expectancy, facilitating conditions) did not significantly influence continued usage intention. In contrast, constructs related to performance and engagement (including performance expectancy, perceived intelligence, task-technology fit, and hedonic motivation), as well as social influence, were crucial drivers of continued usage intention.

Theoretically, this study advances technology acceptance research by providing empirical support for the extended UTAUT2 model within the novel context of LLM-assisted language learning. It highlights the critical role of integrating factors like user perception of the LLM’s cognitive capabilities and the alignment of technology with learning requirements when evaluating educational technologies. Practically, the findings suggest that educators should prioritize the design of intelligent, context-aware and playful features based on a deep understanding of actual learning situations to enhance perceived intelligence and enjoyment.

However, this study has limitations. The sample was limited to Chinese undergraduate students from a few universities, which may restrict cross-cultural generalizability. The cultural context of China, where there is a strong emphasis on collectivism and social influence, may not be representative of learners in more individualistic societies. Additionally, the study did not account for other factors that could influence technology adoption, such as learner characteristics (e.g., prior exposure to AI tools, self-efficacy, AI literacy, and language proficiency) or the nature of the task (e.g., reading, writing, or speaking). Finally, the short-term, self-reported nature of the data may have introduced social desirability bias.

Recognizing the need for broader generalizability, future research may pursue cross-cultural and cross-institutional comparisons. This would offer a deeper understanding of how the acceptance of LLM-assisted learning is influenced by diverse cultural contexts and varying institutional environments. Furthermore, studies may employ longitudinal designs or triangulate self-reported data with behavioral data (e.g., learning logs) to obtain comprehensive insights into the acceptance of LLM-assisted language learning platforms over time.

Appendix

Questionnaire Items.

| Construct | Item | Description | Source |
|---|---|---|---|
| Performance expectancy | PE1 | I find the AI-assisted reading platform useful | Modified from the UTAUT2 questionnaire (Venkatesh et al., 2012) |
| | PE2 | Using the AI-assisted reading platform improves my English | |
| | PE3 | Using the AI-assisted reading platform helps me read faster | |
| | PE4 | Using the AI-assisted reading platform increases my reading efficiency | |
| Task-technology fit | TF1 | The features of the AI-assisted reading platform are sufficient for the reading tasks | Modified from the TTF questionnaire (Goodhue & Thompson, 1995; Lee et al., 2007) |
| | TF2 | The features of the AI-assisted reading platform are suitable for the reading tasks | |
| | TF3 | The features of the AI-assisted reading platform are satisfactory for the reading tasks | |
| Perceived intelligence | PI1 | The AI-assisted reading platform can understand my questions (PI6) | Modified from the PI questionnaire (Moussawi & Koufaris, 2019) |
| | PI2 | The AI-assisted reading platform can communicate with me in an understandable manner | |
| | PI3 | The AI-assisted reading platform can find and process the necessary information for completing the tasks | |
| | PI4 | The AI-assisted reading platform is able to provide me with a useful answer | |
| Effort expectancy | EE1 | Learning how to use the AI-assisted reading platform is easy for me | Modified from the UTAUT2 questionnaire (Venkatesh et al., 2012) |
| | EE2 | My interaction with the AI-assisted reading platform is clear and understandable | |
| | EE3 | I find the AI-assisted reading platform easy to use | |
| | EE4 | It is easy for me to become skillful at using the AI-assisted reading platform | |
| Facilitating conditions | FC1 | I have the resources necessary to use the AI-assisted reading platform | Modified from the UTAUT2 questionnaire (Venkatesh et al., 2012) |
| | FC2 | I have the knowledge necessary to use the AI-assisted reading platform | |
| | FC3 | The AI-assisted reading platform is compatible with other technologies I use | |
| | FC4 | I can get help from others when I have difficulties using the AI-assisted reading platform | |
| Hedonic motivation | HM1 | Using the AI-assisted reading platform is fun | Modified from the UTAUT2 questionnaire (Venkatesh et al., 2012) |
| | HM2 | Using the AI-assisted reading platform is enjoyable | |
| | HM3 | Using the AI-assisted reading platform is very entertaining | |
| Social influence | SI1 | People who are important to me think that I should use the AI-assisted reading platform | Modified from the UTAUT2 questionnaire (Venkatesh et al., 2012) |
| | SI2 | People who influence my behavior think that I should use the AI-assisted reading platform | |
| | SI3 | People whose opinions that I value prefer that I use the AI-assisted reading platform | |
| | SI4 | I am encouraged by peers who use the AI-assisted reading platform | |
| Behavioral intention | BI1 | I intend to continue using the AI-assisted reading platform in the future | Modified from the UTAUT2 questionnaire (Venkatesh et al., 2012) |
| | BI2 | I will always try to use the AI-assisted reading platform in my daily life | |
| | BI3 | I plan to continue to use the AI-assisted reading platform | |
| | BI4 | I plan to continue to use the AI-assisted reading platform frequently | |

Acknowledgements

The authors acknowledge the use of ChatGPT to edit the text to improve its form, and they take full responsibility for the content of this article.

Ethical Considerations

This study was approved by the Institutional Review Board (IRB) of the Institute of Language Sciences, Shanghai International Studies University, China (Approval Number 20250117001).

Consent to Participate

Informed consent was obtained from all participants involved in this study.

Author Contributions

Conceptualization, Methodology, Formal Analysis, Writing – original draft: Baorong Huang. Data curation, Writing – review and editing: Zhihao Dong and Juhua Dou.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data will be made available on request.