Abstract

As Artificial Intelligence (AI) becomes increasingly integrated into educational settings, students’ attitudes toward AI significantly influence their willingness to adopt and engage with these emerging technologies. Although interest in AI education is on the rise, empirical research exploring students’ attitudes, especially from a gender perspective, remains scarce. To bridge this gap, the present study developed and validated a scale grounded in UNESCO’s AI education guidelines to measure high school students’ attitudes toward AI. A total of 553 students from grades 10, 11, and 12 participated in a sequential, three-phase study that included exploratory and confirmatory factor analyses, followed by gender-based comparisons. The analysis confirmed a robust three-factor structure encompassing Determination, Exploration, and Collaboration. Results revealed notable gender differences: male students scored significantly higher in the Determination and Exploration dimensions, while no significant gender gap was observed in Collaboration. Effect size estimates indicated small to moderate practical significance, underscoring the subtle yet meaningful nature of these differences. These findings emphasize the importance of fostering inclusive, gender-responsive AI education practices that ensure equitable engagement for all learners. The validated scale offers a reliable tool for assessing students’ attitudes toward AI, while the proposed framework provides a foundation for designing targeted pedagogical interventions. By identifying specific areas of disparity and offering strategies to address them, this study contributes to both theoretical advancements and practical improvements in AI education, ultimately supporting the creation of equitable learning environments that empower every student to thrive in an AI-driven future.

Plain language summary

Customer satisfaction depends on a number of factors and affects the financial performance of an enterprise. It is not entirely clear how the system of customer satisfaction factors affects enterprise performance. The relationship between customer satisfaction and customer expectations appears to be crucial. The customer satisfaction model is constructed from the resulting customer expectations and from a questionnaire survey of customers of the selected enterprises. The financial performance of these enterprises was evaluated using accounting data as inputs to financial ratios, which were then evaluated jointly to rank each enterprise by a single number. The joint evaluation was carried out in two ways: by comparing the results of selected indicators with those of the other enterprises studied, and by using a standard index into which the results of the required financial indicators were entered. The financial performance of an enterprise (measured jointly by profitability, liquidity, and activity indicators) was found to be directly influenced by customer expectations and indirectly by customer satisfaction, product quality (as perceived by the customer), and customer knowledge of the product. Customer loyalty and product competitiveness (as perceived by the customer) have a smaller and more indirect effect on financial performance. Comparing the results of selected indicators with those of the other enterprises studied also gave better results for evaluating financial performance. This research is limited by the sample size, which is on the borderline of a small sample (102 enterprises), and by its narrow focus on the food industry.

Keywords

Introduction

Artificial Intelligence (AI) is rapidly transforming the global educational landscape, reshaping how students learn, communicate, and prepare for the future (Eguchi et al., 2021; K. Kim & Kwon, 2024). Increasingly, K-12 classrooms are integrating AI-powered systems such as intelligent tutoring systems (Mousavinasab et al., 2021), virtual assistants (Forman et al., 2023), and personalized learning platforms (Kem, 2022) to support dynamic, adaptive, and personalized instruction. These technologies offer real-time feedback, content customization, and learning analytics tailored to individual needs, positioning students not only as users but also as future contributors to an AI-driven world (Buentello-Montoya, 2023; Ng et al., 2021; Sto Tomas et al., 2019).

As AI systems become increasingly embedded in K-12 education, students’ attitudes toward AI, including their perceptions of its usefulness, risk, and value, play a critical role in shaping both their engagement with AI and their future aspirations (B. Zhang & Dafoe, 2019). Positive attitudes can foster curiosity and persistent engagement, while negative attitudes may lead to anxiety, avoidance, or passive use of AI technologies. These attitudes are not formed in isolation; rather, they are shaped by a constellation of individual, contextual, and sociocultural factors, such as prior exposure to technology, digital self-efficacy, socioeconomic background, and cultural beliefs (Schepman & Rodway, 2020; Suh & Ahn, 2022). Importantly, the motivational climate of the school, including teacher attitudes, instructional practices, and peer dynamics, can either support or hinder students’ openness to AI. In this regard, Self-Determination Theory (Deci & Ryan, 2000) provides a valuable framework, emphasizing that students are more likely to adopt positive learning dispositions when their psychological needs for autonomy, competence, and relatedness are fulfilled (Xia et al., 2022). Schools that support student choice, mastery, and collaboration can thus play a powerful role in shaping AI-related attitudes.

One prominent sociocultural factor influencing students’ AI engagement is gender (Ezeoguine & Eteng-Uket, 2024). Research suggests that boys and girls often have different experiences in AI education, shaped by differential exposure, access, and societal expectations (Armutat et al., 2024; Stöhr et al., 2024). While boys frequently report higher confidence and interest in using AI for problem-solving and innovation, girls may express greater apprehension, driven by lower self-efficacy, fewer role models, and concerns about the ethical implications of AI (Antonio & Tuffley, 2014; Young et al., 2023). However, much of this research remains adult-centric, focusing on university students or professionals, and fails to examine how gendered differences in AI attitudes emerge during the formative high school years (Chaudhry & Kazim, 2021).

Despite the growing recognition of the importance of student attitudes in shaping AI learning, the field lacks valid, developmentally appropriate instruments tailored to adolescents. Most existing scales (e.g., Schepman & Rodway, 2023; Sindermann et al., 2021) were designed for adults and rooted in general technology acceptance models, which may not capture the educational, motivational, and ethical nuances relevant to younger learners. Even recent efforts to design student-focused tools, such as Suh and Ahn’s (2022) scale, were validated in populations without formal AI instruction, limiting their utility in classroom contexts where AI education is actively being implemented. Moreover, many of these instruments fail to incorporate the core values emphasized in UNESCO’s K-12 AI education framework (UNESCO, 2022), including personal, social, human, and ethical dimensions that are essential for cultivating responsible AI engagement.

The urgency of this study is underscored by the accelerated rollout of AI technologies in school settings and the growing international push for AI literacy at the K-12 level. Recent developments in generative AI tools and their growing presence in classrooms have heightened the need to understand how students perceive and interact with these technologies. Without timely and developmentally appropriate assessment tools, educators and policymakers risk overlooking students’ motivational and emotional engagement with AI, particularly during formative years when attitudes are still malleable. As AI becomes embedded not only in curricula but also in students’ daily learning experiences, the need to measure and address their attitudes has never been more critical.

To address these critical gaps, the present study introduces a novel and validated AI Attitude Scale specifically developed for high school students. Grounded in Self-Determination Theory and aligned with UNESCO’s AI education guidelines, this scale captures three core dimensions of AI attitude: Exploration (curiosity and openness toward AI), Determination (persistence in learning despite challenges), and Collaboration (peer engagement in AI-related tasks). This developmentally sensitive and theoretically grounded instrument provides a robust tool for understanding how adolescents perceive and engage with AI. Additionally, the study examines whether gender disparities exist in these attitudes, offering new insights into how inclusive and equitable AI education strategies can be designed and implemented.

Literature Review

The Evolving Role of AI in Education

Artificial Intelligence (AI) has rapidly evolved from a niche technological innovation to a transformative force reshaping global education systems (Qin et al., 2023). In efforts to prepare students for future careers, AI technologies are increasingly embedded within teaching and learning processes, moving beyond static computing tools to enable personalized, adaptive, and data-driven instruction (Ng et al., 2021). In contemporary K-12 classrooms, AI-powered applications such as intelligent tutoring systems (Mousavinasab et al., 2021), virtual assistants (Forman et al., 2023), and adaptive learning platforms (Kem, 2022) are now used to deliver differentiated instruction by offering real-time feedback and predictive analytics tailored to students’ individual profiles (Farhood et al., 2024; Yim & Su, 2024).

While these technologies offer promising pathways for educational innovation, they also pose distinct challenges and opportunities for learners and educators. For instance, effective AI integration requires a baseline of digital literacy that many students have not yet attained, particularly when it comes to evaluating AI-generated content or understanding algorithmic processes (Ng et al., 2021; Pinski & Benlian, 2024). Simultaneously, AI presents opportunities to foster critical thinking and metacognitive awareness, especially when students are encouraged to question AI outputs, analyze ethical dilemmas, and examine potential biases. Nevertheless, the ethical dimensions of AI, including data privacy, algorithmic fairness, and accountability, remain largely unaddressed in most K-12 curricula (Kooli, 2023; UNESCO, 2022). These realities call for pedagogically sound strategies and evaluative tools that can help students navigate both the cognitive and moral complexities of AI in the classroom.

Despite AI’s potential to enhance learning outcomes, student adoption remains inconsistent. Barriers include fear or skepticism of the technology (Wang et al., 2022), limited understanding of its utility (Chai et al., 2020), and concerns about unethical use (Kooli, 2023; Pinski & Benlian, 2024). For example, the introduction of generative AI tools like ChatGPT has sparked academic integrity concerns, particularly regarding plagiarism, leading to uncertainty among both students and educators about its responsible use (Cotton et al., 2023; Stone, 2022). This hesitation reflects broader concerns about the readiness of educational systems to manage and guide AI use effectively. A further challenge is the way students often perceive AI narrowly as a tool for completing assignments rather than as a meaningful subject of inquiry or potential career domain (Chookaew et al., 2024; H. Zhang et al., 2023). This instrumental perspective may inhibit intrinsic motivation to explore, experiment, or innovate using AI. Therefore, while the infrastructure for AI adoption in education is expanding, student attitudes remain a central determinant of how effectively its potential is realized.

Factors Shaping Students’ Attitudes Toward AI

Student attitudes toward AI are influenced by a combination of individual characteristics and environmental factors. Attitudes encompass affective (emotional), cognitive (belief-based), and behavioral (intentional) components (Marx et al., 2023; Park & Kwon, 2023). Research emphasizes the role of prior exposure, digital confidence, and perceived relevance as individual-level determinants of these attitudes (Mertala et al., 2022). However, the school context plays a critical mediating role in shaping how these individual factors translate into actual engagement with AI.

Pedagogical strategies, teacher encouragement, peer interaction, and access to exploratory learning tasks collectively shape a school’s motivational climate. Self-Determination Theory (SDT; Deci & Ryan, 2000) offers a comprehensive lens for examining these dynamics. According to SDT, when students’ psychological needs for autonomy (a sense of control), competence (feeling effective), and relatedness (a sense of belonging) are met, they are more likely to develop intrinsic motivation to engage with learning technologies like AI (Kilday & Ryan, 2022).

Empirical findings support this theoretical alignment. For example, autonomy-supportive environments that allow students to make choices in their AI learning, along with competence-enhancing feedback and socially engaging, peer-based activities, significantly contribute to more positive attitudes toward AI (Chiu et al., 2024; Sellami et al., 2023). These outcomes suggest that student motivation is not fixed but shaped by dynamic interactions between learners and their environments (Barretto et al., 2021). Therefore, the integration of SDT-informed instructional practices may serve as a foundation for improving student receptivity to AI technologies.

Gender Disparities in AI Engagement

Numerous empirical studies show that gender-based disparities continue to emerge in AI learning contexts across educational settings. Research consistently shows that boys are more likely than girls to have early exposure to AI-relevant tools, often through informal, exploratory activities such as gaming or robotics clubs (Campos & Scherer, 2024; Zhou & Xu, 2007). These early experiences help cultivate technological confidence and interest, which persist through secondary and postsecondary education. In contrast, girls often face barriers including limited exposure, lack of relatable role models, and prevailing stereotypes that depict AI and technology fields as male-dominated (Antonio & Tuffley, 2014; Armutat et al., 2024; Sobieraj & Krämer, 2020).

However, these disparities are not immutable. Research demonstrates that inclusive instructional practices such as cooperative AI learning tasks, mentorship by diverse role models, and socially relevant design projects can significantly narrow gender gaps (Hsu et al., 2022; Ouyang et al., 2023). For example, Alvarez et al. (2022) found that when female students engaged with technology in socially meaningful contexts, their perceived competence improved, aligning closely with that of male peers (Li & Kirkup, 2007). These findings emphasize the socially constructed nature of gender disparities and point to school culture and curriculum design as key levers for promoting equity in AI education.

As students begin forming stable academic identities during high school, addressing gender-based disparities becomes even more critical (Master et al., 2016; Young et al., 2023). Efforts to increase AI participation among underrepresented groups must therefore attend to both systemic and psychological barriers that shape long-term engagement and identity development in the field.

Gaps in Existing Measurement Tools

Despite the surge in interest around AI education, there is a notable absence of developmentally appropriate, psychometrically validated instruments for assessing students’ attitudes toward AI. Existing tools such as the General Attitudes Toward Artificial Intelligence Scale (Schepman & Rodway, 2023) and the Attitudes Toward Artificial Intelligence Scale (Sindermann et al., 2021) were designed for adult populations and emphasize constructs like fear, utility, and societal risk. These tools are grounded in broad technology acceptance frameworks and fail to capture the unique motivational, ethical, and developmental considerations relevant to adolescents.

Recent efforts have moved toward student-focused tools. Persson et al. (2021) adapted a robotics-related scale to account for gender and ethnicity, while Suh and Ahn (2022) developed an AI Attitude Scale for youth. However, the latter was validated in populations with minimal AI exposure, limiting its utility in real-world school environments where AI instruction is actively implemented. Furthermore, these tools seldom incorporate core components outlined in the UNESCO K-12 AI education framework (UNESCO, 2022), which emphasizes ethical responsibility, collaboration, social inclusion, and human-centered design.

As AI becomes an integral component of classroom learning, there is a growing need for instruments that reflect the complex interplay of psychological, ethical, and contextual factors influencing how students engage with AI. A valid and developmentally appropriate scale can guide educators and policymakers in designing interventions that support not only digital readiness but also ethical and inclusive engagement with AI technologies.

Theoretical Framework and Scale Dimensions

To address these gaps, this study draws on Self-Determination Theory (Deci & Ryan, 2000) as a foundational framework to reconceptualize students’ AI attitudes across three core dimensions: Exploration (reflecting students’ curiosity and proactive willingness to engage with AI tools and resources, thus operationalizing autonomy by capturing students’ intrinsic motivation and self-directedness); Determination (capturing students’ persistent commitment to learning and mastering AI-related skills despite challenges, directly aligning with the competence construct in SDT, which emphasizes perceived effectiveness and capability in achieving desired outcomes); and Collaboration (assessing students’ ability and inclination to work with peers in AI-related tasks, clearly operationalizing relatedness by emphasizing social connectedness, peer support, and a sense of community in AI learning environments). These dimensions explicitly align with SDT’s psychological needs for autonomy, competence, and relatedness, making them both developmentally appropriate and theoretically robust. UNESCO’s guidelines further support these domains by promoting hands-on, socially inclusive, and ethically informed AI learning.

Additionally, this study examines whether students’ attitudes toward AI are associated with the motivational climate of their school environment. By assessing this relationship, the study tests the nomological validity of the proposed AI Attitude Scale, anchoring it not only in psychometric structure but also in its relevance to real-world educational dynamics. Finally, the study investigates whether these attitudes differ across genders, thereby contributing to ongoing discussions about equity and inclusivity in AI education. Accordingly, the present study is guided by the following research questions:

Drawing on the theoretical framework and prior research discussed above, the following hypotheses were derived from the research questions to guide the empirical validation:

Methodology

This study employed a sequential three-phase data collection process to ensure rigorous testing and validation of the developed scale and hypotheses, as recommended in the literature (DeVellis, 2003; Hinkin, 2005). Each phase was designed to contribute progressively to the research questions, incorporating both exploratory and confirmatory statistical analyses. This methodology supports the robustness of the scale by exploring, validating, and empirically testing its underlying structure, reliability, and validity, thereby supporting its theoretical and practical applicability. Further details of the methodology are provided in the following subsections.

Instrument Design

The survey was developed to measure students’ attitudes toward AI and the supportive learning environment influencing these attitudes (Appendix 1). Following established scale development guidelines recommended by DeVellis (2003) and Churchill (1979; see Figure 1), the questionnaire was structured into two distinct sections: Section A assessed students’ attitudes toward AI, while Section B evaluated the supportive learning environment motivating students’ AI engagement.

Figure 1. Scale development procedure followed in the study.

To generate initial survey items, the research team conducted an extensive literature review of existing AI attitude scales (Schepman & Rodway, 2023; Sindermann et al., 2021; Suh & Ahn, 2022). However, these existing scales predominantly targeted general technology acceptance among adults and were thus insufficient for assessing the AI attitudes of high school students actively learning about AI in educational settings. Consequently, new, developmentally appropriate items were specifically created to effectively capture students’ AI attitudes. Multiple rounds of iterative discussions and revisions among the research team members were undertaken until consensus was reached.

Section A of the questionnaire was specifically grounded in Self-Determination Theory (Deci & Ryan, 2000), emphasizing autonomy, competence, and relatedness as core factors shaping students’ attitudes toward learning. Additionally, scale items were aligned with UNESCO’s AI education guidelines, ensuring a globally recognized theoretical foundation for evaluating students’ attitudes toward AI (UNESCO, 2022). Section B was designed to highlight the significance of supportive learning environments in fostering favorable attitudes toward AI (Kilday & Ryan, 2022; Sellami et al., 2023).

The initial draft of the questionnaire, which included Sections A and B, comprised 30 items assessed using a 5-point Likert scale ranging from 1 (Strongly Disagree) to 5 (Strongly Agree). Section A, designed to measure students’ attitudes toward AI, initially consisted of 20 items, while Section B, focused on the supportive learning environment, included 10 items. To ensure content validity, the questionnaire was reviewed by a panel of 10 experts specializing in AI education and educational psychology. Each expert rated the relevance and clarity of the items using a 4-point Likert scale (1 = Not Relevant to 4 = Highly Relevant), in accordance with the guidelines of Polit and Beck (2006). Experts also provided qualitative feedback on item wording, conceptual alignment with the targeted constructs, and developmental appropriateness for high school learners. Based on these evaluations, the research team calculated the Item-Level Content Validity Index (I-CVI) for each item, applying a minimum threshold of 0.78. Items that fell below this threshold or were conceptually redundant were revised or removed. Guided by expert recommendations and established psychometric standards, the refinement process resulted in a final version of the questionnaire comprising 25 items: 17 in Section A and 8 in Section B, each demonstrating strong content validity, theoretical coherence, and suitability for high school students. Detailed CVI results are provided in Supplemental Tables 1 and 2.
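The I-CVI screening described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' procedure: it assumes that ratings of 3 or 4 on the 4-point scale count as "relevant" (the usual convention following Polit and Beck, 2006), and the item names and ratings are hypothetical. With a 10-expert panel, an I-CVI of at least 0.78 means that at least 8 of the 10 experts must rate an item as relevant, because 7/10 = 0.70 falls short.

```python
# Sketch: item-level content validity index (I-CVI) screening.
# Assumption: ratings of 3 or 4 on the 4-point relevance scale count as "relevant".
# Item names and ratings are hypothetical.
from statistics import mean

I_CVI_THRESHOLD = 0.78  # minimum acceptable I-CVI used in the study


def item_cvi(ratings: list[int]) -> float:
    """Proportion of experts who rated the item 3 or 4."""
    if not ratings:
        raise ValueError("at least one expert rating is required")
    return sum(1 for r in ratings if r >= 3) / len(ratings)


def screen_items(panel: dict[str, list[int]]) -> dict[str, tuple[float, bool]]:
    """Map each item to (I-CVI, meets_threshold)."""
    screened = {}
    for item, ratings in panel.items():
        cvi = item_cvi(ratings)
        screened[item] = (cvi, cvi >= I_CVI_THRESHOLD)
    return screened


def scale_cvi_ave(panel: dict[str, list[int]]) -> float:
    """Scale-level CVI (averaging method): mean of the item-level CVIs."""
    return mean(item_cvi(r) for r in panel.values())


if __name__ == "__main__":
    panel = {  # hypothetical ratings from a 10-expert panel
        "A1": [4, 4, 3, 4, 3, 4, 4, 3, 4, 4],  # 10/10 relevant
        "A2": [4, 2, 3, 2, 4, 3, 1, 4, 3, 2],  # 6/10 relevant
        "A3": [3, 4, 4, 2, 3, 4, 4, 1, 3, 4],  # 8/10 relevant
    }
    for item, (cvi, keep) in screen_items(panel).items():
        print(f"{item}: I-CVI = {cvi:.2f}, {'retain' if keep else 'revise/remove'}")
    print(f"S-CVI/Ave = {scale_cvi_ave(panel):.2f}")
```

Reporting the averaged scale-level index alongside the item-level values is a common companion statistic; whether the supplemental tables use it is not stated here.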

Sample and Procedure

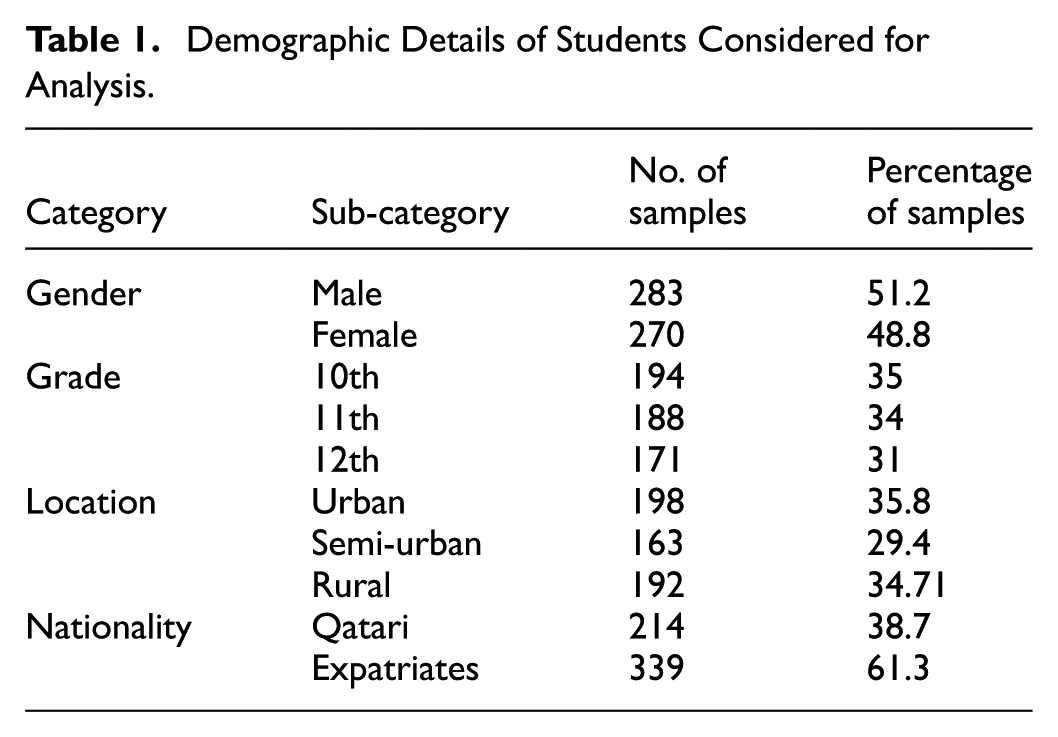

The study included a sample of 553 students, in line with Hinkin’s (2005) recommendation of an item-to-respondent ratio between 1:4 and 1:10 for scale validation. In this study, a 1:5 item-to-respondent ratio (five respondents per item) was applied to ensure an adequate sample size for statistical analysis. Participants were high school students in grades 10, 11, and 12 (194 from grade 10, 188 from grade 11, and 171 from grade 12), comprising 283 male and 270 female students. A purposive sampling method was employed to select students enrolled in the Computing and Information Technology course, a core curriculum subject designed to promote AI literacy. This sampling approach ensured that participants had prior formal exposure to AI, in line with the research objectives. The sample represented a diverse group of students in Qatar, including Qatari nationals and expatriate peers from various Asian and African countries. Students were drawn from government schools across urban, semi-urban, and rural areas, ensuring representation of varied sociocultural and educational contexts (see Table 1).

Table 1. Demographic Details of Students Considered for Analysis.

Data collection was carried out in three phases after receiving ethical clearance from the University Institutional Review Board (XX-IRB 222-EA/24). In Phase 1, 364 questionnaires were distributed, yielding 243 responses (66.8% response rate), of which 223 were retained after data cleaning. In Phase 2, 223 questionnaires were distributed, resulting in 211 responses (94.6% response rate), with 200 retained. In Phase 3, 200 questionnaires were distributed, resulting in 183 responses (91.5% response rate), with 130 retained after data screening. The questionnaire was administered in a pen-and-paper format, and research team members supervised each data collection session to support comprehension and ensure completion. Each session lasted approximately 30 min and was conducted in designated classrooms with school approval.
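The reported response rates and the item-to-respondent requirement can be checked with simple arithmetic. The sketch below uses the phase counts stated above and assumes, for illustration, that the 1:5 ratio is applied to the 25-item expert-validated questionnaire (at least 125 respondents per dataset); the helper names are hypothetical.

```python
# Phase-wise counts as reported in the text.
PHASES = {
    "Phase 1": {"distributed": 364, "returned": 243, "retained": 223},
    "Phase 2": {"distributed": 223, "returned": 211, "retained": 200},
    "Phase 3": {"distributed": 200, "returned": 183, "retained": 130},
}
N_ITEMS = 25               # items after expert validation (assumed basis for the ratio)
RESPONDENTS_PER_ITEM = 5   # 1:5 item-to-respondent ratio


def response_rate(distributed: int, returned: int) -> float:
    """Percentage of distributed questionnaires that were returned."""
    return 100 * returned / distributed


def meets_ratio(retained: int, n_items: int = N_ITEMS,
                per_item: int = RESPONDENTS_PER_ITEM) -> bool:
    """True if the retained sample provides at least `per_item` respondents per item."""
    return retained >= n_items * per_item


if __name__ == "__main__":
    for name, c in PHASES.items():
        rate = response_rate(c["distributed"], c["returned"])
        print(f"{name}: {rate:.1f}% returned, {c['retained']} retained, "
              f"meets 1:{RESPONDENTS_PER_ITEM} ratio: {meets_ratio(c['retained'])}")
    print("Total retained:", sum(c["retained"] for c in PHASES.values()))
```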

The study design minimized risk by ensuring that all responses were anonymous, with no personally identifiable information collected at any stage. Participation was entirely voluntary; students were informed about the purpose of the study, the confidentiality of their responses, and their right to withdraw at any time without penalty. Informed consent was obtained from parents or legal guardians prior to data collection, and student assent was also secured at the time of participation. The potential benefits of the study, including advancing knowledge about equitable and inclusive AI education, were considered to far outweigh any minimal risks to participants.

Data Analysis

To ensure a rigorous and systematic validation of the constructs, the data collected in each of the three phases were analyzed separately. Using separate datasets for EFA and CFA aligns with best practices in psychometric research and reduces the risk of overfitting (Hair et al., 2010; Kline, 2015). Likewise, the third dataset was reserved for t-tests and correlation analyses to examine group differences and factor interrelations. These analyses provide a broader understanding of the construct’s applicability and relational dynamics, as recommended by Field (2018). This phased approach enhances the reliability and validity of the findings and supports the generalizability of the scale across samples. Data analysis began after confirming that all student entries were complete and free of missing data. The analyses conducted in each phase are detailed below:

Phase 1: The data collected from 223 students in Phase 1 were assessed for their suitability for Exploratory Factor Analysis (EFA). This analysis aimed to identify latent factor structures and refine scale items by examining patterns within the data. Sampling adequacy was assessed with the Kaiser-Meyer-Olkin (KMO) measure (Kaiser, 1974), and Bartlett’s test of sphericity was used to confirm that the correlation matrix differed significantly from an identity matrix, that is, that the items were sufficiently intercorrelated to be factored. EFA was conducted using Principal Component Analysis with Varimax rotation to extract and consolidate the variables into fewer factors. This analysis was performed using SPSS Statistics 29. Items with factor loadings greater than 0.40 were considered significant (Stevens, 2009) and formed the basis for the subsequent analysis, which examined the relationships between each variable and its corresponding factor. Additionally, the reliability of each factor was assessed using Cronbach’s alpha to verify internal consistency.
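The two suitability checks can be sketched with NumPy and SciPy. This is an illustrative re-implementation of the standard formulas, not the SPSS routine used in the study: Bartlett's statistic is -(n - 1 - (2p + 5)/6) * ln|R| with p(p - 1)/2 degrees of freedom, where R is the item correlation matrix, and the overall KMO compares squared correlations with squared partial correlations derived from the inverse of R. The simulated data and function names are hypothetical.

```python
import numpy as np
from scipy import stats


def bartlett_sphericity(data: np.ndarray) -> tuple[float, float, float]:
    """Bartlett's test that the item correlation matrix is an identity matrix.

    `data` is an (n_respondents, n_items) array. Returns (chi2, df, p_value).
    """
    n, p = data.shape
    corr = np.corrcoef(data, rowvar=False)
    _, log_det = np.linalg.slogdet(corr)  # log|R|, computed stably
    chi2 = -(n - 1 - (2 * p + 5) / 6) * log_det
    df = p * (p - 1) / 2
    return chi2, df, stats.chi2.sf(chi2, df)


def kmo(data: np.ndarray) -> float:
    """Overall Kaiser-Meyer-Olkin measure of sampling adequacy."""
    corr = np.corrcoef(data, rowvar=False)
    inv = np.linalg.inv(corr)
    scale = np.sqrt(np.diag(inv))
    partial = -inv / np.outer(scale, scale)  # partial correlations
    off_diag = ~np.eye(corr.shape[0], dtype=bool)
    r2 = np.sum(corr[off_diag] ** 2)
    p2 = np.sum(partial[off_diag] ** 2)
    return r2 / (r2 + p2)


if __name__ == "__main__":
    # Hypothetical data: 223 respondents, 6 items driven by one latent trait.
    rng = np.random.default_rng(0)
    trait = rng.normal(size=(223, 1))
    items = 0.8 * trait + 0.6 * rng.normal(size=(223, 6))
    chi2, df, p_value = bartlett_sphericity(items)
    print(f"KMO = {kmo(items):.3f}")
    print(f"Bartlett chi2({df:.0f}) = {chi2:.1f}, p = {p_value:.3g}")
```

A high KMO with a significant Bartlett test is the pattern usually taken to justify factoring; the exact KMO cut-off adopted by the authors is not stated in this section.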

Phase 2: Confirmatory Factor Analysis (CFA) and the assessment of nomological validity were conducted using AMOS version 29 on a separate Phase 2 dataset of 200 students, including both male and female participants. The CFA was performed to validate the factor structure identified in the EFA, ensuring that the hypothesized model adequately represented the underlying variables (Kline, 2015). Using a separate dataset reduced the risk of overfitting and enhanced the model’s generalizability (Byrne, 2013). Goodness of fit was assessed using several indices: the chi-square to degrees-of-freedom ratio (χ2/df), Root Mean Square Error of Approximation (RMSEA), Standardized Root Mean Square Residual (SRMR), Comparative Fit Index (CFI), Tucker-Lewis Index (TLI), and Incremental Fit Index (IFI). Convergent validity was evaluated using Average Variance Extracted (AVE), which reflects the average proportion of variance in a factor’s indicators that is explained by the factor; values were required to exceed 0.50 (Fornell & Larcker, 1981). The reliability of each factor was measured using Composite Reliability (CR), with a threshold of 0.70 or higher indicating adequate internal consistency. Discriminant validity was assessed by checking that the square root of the AVE for each factor exceeded its correlations with the other factors (Sultana & Farooq, 2024).
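The convergent and discriminant validity criteria reduce to short formulas. The sketch below assumes standardized loadings and uncorrelated measurement errors, so that AVE is the mean squared loading and CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of (1 - loading^2)). The loadings and inter-factor correlations shown are hypothetical, not the study's estimates.

```python
import math


def ave(loadings: list[float]) -> float:
    """Average Variance Extracted: mean of the squared standardized loadings."""
    return sum(lam ** 2 for lam in loadings) / len(loadings)


def composite_reliability(loadings: list[float]) -> float:
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    total = sum(loadings)
    error = sum(1 - lam ** 2 for lam in loadings)  # error variance of each standardized item
    return total ** 2 / (total ** 2 + error)


def fornell_larcker_ok(aves: dict[str, float],
                       correlations: dict[tuple[str, str], float]) -> bool:
    """True if sqrt(AVE) of every factor exceeds its correlation with each other factor."""
    return all(
        abs(r) < math.sqrt(aves[a]) and abs(r) < math.sqrt(aves[b])
        for (a, b), r in correlations.items()
    )


if __name__ == "__main__":
    loadings = {  # hypothetical standardized loadings
        "Determination": [0.78, 0.81, 0.74, 0.70, 0.76],
        "Exploration": [0.72, 0.80, 0.77, 0.69],
        "Collaboration": [0.75, 0.71, 0.79],
    }
    aves = {f: ave(lams) for f, lams in loadings.items()}
    for f, lams in loadings.items():
        cr = composite_reliability(lams)
        print(f"{f}: AVE = {aves[f]:.3f} (> 0.50: {aves[f] > 0.50}), "
              f"CR = {cr:.3f} (>= 0.70: {cr >= 0.70})")
    correlations = {  # hypothetical inter-factor correlations
        ("Determination", "Exploration"): 0.55,
        ("Determination", "Collaboration"): 0.42,
        ("Exploration", "Collaboration"): 0.48,
    }
    print("Fornell-Larcker criterion met:", fornell_larcker_ok(aves, correlations))
```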

Phase 3: After the dimensions of students’ AI attitudes were confirmed, the Phase 3 dataset (N = 130) was used to examine whether gender disparities exist in students’ attitudes toward AI. Descriptive statistics were computed, and an independent-samples t-test was used to determine whether scores on the factors comprising students’ AI attitudes differed significantly between male and female students. In addition, Pearson correlation coefficients were computed between the factors of students’ AI attitudes to assess the strength and direction of the relationships among them.
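The Phase 3 comparisons can be sketched with SciPy. This is an illustration, not the authors' SPSS output: `scipy.stats.ttest_ind` runs Student's independent-samples t-test (passing `equal_var=False` gives Welch's test if group variances differ), Cohen's d is computed here with a pooled standard deviation as one common effect-size choice, and `scipy.stats.pearsonr` gives the correlation between two factor scores. The score arrays and helper names are hypothetical.

```python
import numpy as np
from scipy import stats


def cohens_d(a: np.ndarray, b: np.ndarray) -> float:
    """Standardized mean difference using the pooled sample standard deviation."""
    n1, n2 = len(a), len(b)
    pooled_var = ((n1 - 1) * np.var(a, ddof=1) + (n2 - 1) * np.var(b, ddof=1)) / (n1 + n2 - 2)
    return (np.mean(a) - np.mean(b)) / np.sqrt(pooled_var)


def compare_by_gender(male: np.ndarray, female: np.ndarray, equal_var: bool = True):
    """Independent-samples t-test plus Cohen's d for one attitude factor."""
    result = stats.ttest_ind(male, female, equal_var=equal_var)
    return result.statistic, result.pvalue, cohens_d(male, female)


if __name__ == "__main__":
    # Hypothetical mean factor scores on the 1-5 Likert metric.
    male = np.array([4.0, 5.0, 4.0, 3.0, 5.0, 4.0])
    female = np.array([3.0, 4.0, 3.0, 3.0, 4.0, 2.0])
    t, p, d = compare_by_gender(male, female)
    print(f"t = {t:.3f}, p = {p:.3f}, d = {d:.3f}")

    # Hypothetical factor scores for the same respondents on two factors.
    determination = np.array([3.2, 4.1, 3.8, 2.9, 4.5, 3.6])
    exploration = np.array([3.0, 4.3, 3.5, 3.1, 4.4, 3.9])
    r, p_r = stats.pearsonr(determination, exploration)
    print(f"r = {r:.3f}, p = {p_r:.3f}")
```

With equal group sizes, Student's t equals d multiplied by sqrt(n/2) for per-group size n, which is a quick consistency check between the test statistic and the effect size.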

Results

A total of 553 student responses were gathered across the three phases of data collection. Table 1 provides the demographic profile of the overall sample to offer contextual understanding of the participants’ backgrounds and to support the generalizability of the study’s findings.

Because the survey comprised two distinct sections, Section A (attitudes toward AI) and Section B (supportive learning environment), separate EFAs were performed for each section. For Section A, Principal Component Analysis (PCA) with Varimax rotation identified three distinct dimensions representing students’ AI attitudes: Determination, Exploration, and Collaboration. These dimensions were identified based on factor loadings, theoretical alignment, and conceptual coherence with prior literature and UNESCO’s AI education framework (UNESCO, 2022; see Table 2). The factors were named following established psychometric practice, deriving labels from item content, thematic consistency, and theoretical grounding (Hinkin, 2005). Factor loadings ranged from 0.544 to 0.859, indicating strong item-factor relationships. Cronbach’s alpha coefficients ranged from .772 to .915, exceeding the recommended threshold of 0.70 (Ten Berge, 1995) and confirming satisfactory reliability (see Table 3).
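Cronbach's alpha for a factor can be computed from the item variances and the variance of the total score: alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores), where k is the number of items. The pure-Python sketch below is illustrative only; it assumes sample (n - 1) variances, and the response matrix is hypothetical.

```python
from statistics import variance


def cronbach_alpha(responses: list[list[float]]) -> float:
    """Cronbach's alpha for one factor.

    `responses` holds one row per respondent and one column per item.
    """
    items = list(zip(*responses))  # transpose: one tuple of scores per item
    k = len(items)
    if k < 2:
        raise ValueError("alpha requires at least two items")
    item_var_sum = sum(variance(item) for item in items)
    total_var = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - item_var_sum / total_var)


if __name__ == "__main__":
    # Hypothetical 1-5 Likert responses: 6 students x 4 items of one factor.
    responses = [
        [4, 5, 4, 4],
        [3, 3, 4, 3],
        [5, 5, 5, 4],
        [2, 3, 2, 2],
        [4, 4, 3, 4],
        [3, 2, 3, 3],
    ]
    print(f"alpha = {cronbach_alpha(responses):.3f}")
```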

Definitions of the Extracted Dimensions of Student Attitudes Toward AI as per UNESCO (2022).

Illustration of EFA Results of AI Attitude and Motivation.

For Section B, the items developed to measure the supportive learning environment loaded on a single factor, named “Motivation.” This factor represents the consistent support students perceive from teachers and the school environment, which contributes to their engagement and interest in technology-based learning (Sellami et al., 2023). After expert validation, Section B contained eight items; following the EFA, three of these were removed because of insufficient factor loadings (below 0.40) or substantial cross-loadings. The final Motivation construct therefore comprised five items, with factor loadings ranging from 0.660 to 0.859 and a Cronbach’s alpha of .843, indicating strong reliability. The final questionnaire used in subsequent analyses thus contained 22 items.
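The item-retention rule described above can be sketched as a simple screen over rotated loadings. The 0.40 minimum primary loading comes from the text; the text does not define a “significant cross-loading,” so this hypothetical helper assumes an item cross-loads when its second-largest absolute loading also reaches 0.40. Item labels such as B1 are placeholders.

```python
def screen_items(loadings, min_primary=0.40, max_secondary=0.40):
    """Split items into kept and dropped lists using two simple rules.

    `loadings` maps an item label to its rotated loadings on each extracted
    factor. An item is dropped if its largest absolute loading is below
    `min_primary`, or if its second-largest absolute loading reaches
    `max_secondary` (treated here as a cross-loading). The cross-loading
    cut-off is an assumption for illustration.
    """
    kept, dropped = [], []
    for item, row in loadings.items():
        mags = sorted((abs(x) for x in row), reverse=True)
        primary = mags[0]
        secondary = mags[1] if len(mags) > 1 else 0.0
        if primary < min_primary or secondary >= max_secondary:
            dropped.append(item)
        else:
            kept.append(item)
    return kept, dropped


# Hypothetical two-factor loadings for three candidate items.
example = {"B1": [0.72, 0.10], "B2": [0.35, 0.05], "B3": [0.55, 0.48]}
print(screen_items(example))  # (['B1'], ['B2', 'B3'])
```

Other common cross-loading rules compare the gap between the primary and secondary loadings (for example, requiring a minimum difference) rather than applying a fixed ceiling; either variant fits the same structure.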

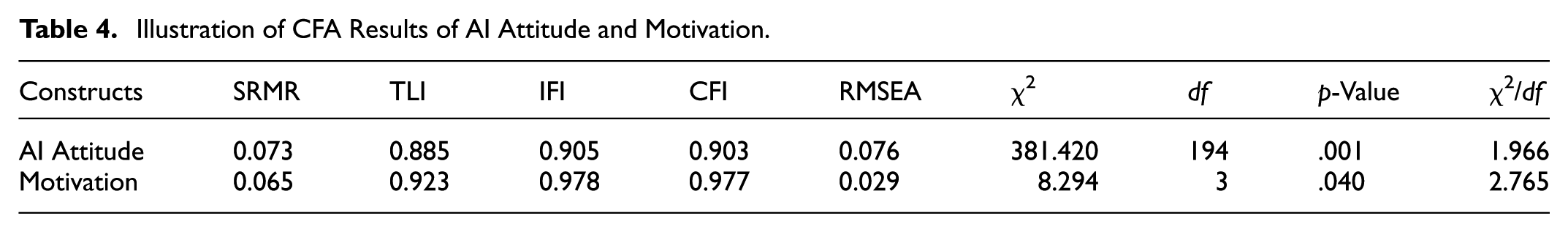

Confirmatory factor analysis (CFA) was then used to test the factor structure, with model fit evaluated using several indices in accordance with best practices in structural equation modeling (Byrne, 2013; Kline, 2015). The CFA model for AI Attitude showed an acceptable fit overall (SRMR = 0.073, TLI = 0.885, IFI = 0.905, CFI = 0.903, and RMSEA = 0.076), although the TLI fell slightly below the conventional .90 benchmark. The chi-square statistic was significant (χ2 = 381.420, df = 194, p < .001), but the χ2/df ratio of 1.966 falls below the recommended cut-off of 3.0, indicating acceptable fit. Similarly, the Motivation construct showed good fit as a unidimensional factor: SRMR = 0.065, TLI = 0.923, IFI = 0.978, CFI = 0.977, and RMSEA = 0.029. Its chi-square was also statistically significant (χ2 = 8.294, df = 3, p = .040), and the χ2/df ratio of 2.765 supports the adequacy of the model fit. These results indicate that both the multidimensional structure of AI Attitude and the unidimensional structure of Motivation fit the empirical data well; the overall CFA findings are presented in Table 4. This validation supports the structural integrity of the scale and its use in further analyses of students’ attitudes toward AI and the motivating educational context.
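As a quick arithmetic check, the normed chi-square is simply χ2 divided by its degrees of freedom; the sketch below reproduces the two ratios reported above. It also includes the standard point-estimate formula RMSEA = √(max(χ2 − df, 0) / (df(N − 1))) as a hypothetical helper; because the CFA sample size is not restated in this section, the helper is not applied to the reported values.

```python
from math import sqrt


def chi2_df_ratio(chi2, df):
    """Normed chi-square; values below 3.0 are commonly read as acceptable."""
    return chi2 / df


def rmsea(chi2, df, n):
    """Point estimate of RMSEA from a model chi-square.

    Uses the common n - 1 form; some programs use n instead, so small
    discrepancies with software output are possible.
    """
    return sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


# Ratios reported in the text.
print(round(chi2_df_ratio(381.420, 194), 3))  # 1.966 (AI Attitude)
print(round(chi2_df_ratio(8.294, 3), 3))      # 2.765 (Motivation)
```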

Illustration of CFA Results of AI Attitude and Motivation.

To evaluate the internal consistency and convergent validity of the AI Attitude and Motivation constructs, Composite Reliability (CR) and Average Variance Extracted (AVE) were calculated. The CR values for all factors exceeded the recommended threshold of 0.70, indicating strong internal consistency (Table 5). AVE values were assessed to determine the average proportion of item variance explained by each factor, that is, how well the items represent the intended construct. Although the AVE for Collaboration was only close to the suggested threshold of 0.50, its CR exceeded 0.70 and all of its items loaded above 0.50. Under Fornell and Larcker’s (1981) criteria, this pattern supports acceptable convergent validity (Table 5). These results affirm the robustness of the measurement model and provide a reliable foundation for subsequent analyses.
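CR and AVE are computed from standardized loadings λ: CR = (Σλ)² / ((Σλ)² + Σ(1 − λ²)) and AVE = Σλ²/k. The sketch below assumes standardized loadings with uncorrelated error terms. The loadings in the usage example are hypothetical, chosen to show how a factor can have an AVE just under 0.50 while its CR still exceeds 0.70, the situation described for Collaboration.

```python
def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances),
    where each error variance is 1 - loading^2 for a standardized loading."""
    s = sum(loadings)
    error = sum(1 - lam * lam for lam in loadings)
    return s * s / (s * s + error)


def average_variance_extracted(loadings):
    """AVE = mean of the squared standardized loadings."""
    return sum(lam * lam for lam in loadings) / len(loadings)


# Hypothetical factor with four items, each loading 0.70.
lams = [0.70, 0.70, 0.70, 0.70]
print(round(composite_reliability(lams), 3))       # 0.794
print(round(average_variance_extracted(lams), 3))  # 0.49
```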

Composite Reliability and Average Variance Extracted (AVE) Values for AI Attitude and Motivation Construct.

The discriminant validity of the factors contributing to students’ AI attitudes was evaluated using the Fornell-Larcker criterion, which requires the square root of each factor’s average variance extracted (AVE) to exceed that factor’s correlations with every other factor. All factors met this criterion, supporting the distinctiveness of each factor in the model (Table 6).
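The Fornell-Larcker check can be expressed as a pairwise comparison of √AVE against each inter-factor correlation. The following minimal sketch returns the (factor, other factor) pairs that fail the check. The AVEs and correlations in the example are invented for illustration and are not the values in Table 6.

```python
from itertools import combinations
from math import sqrt


def fornell_larcker_violations(ave, corr):
    """Return the (factor, other) pairs that fail the Fornell-Larcker criterion.

    `ave` maps factor name -> AVE; `corr` maps frozenset({a, b}) -> the
    inter-factor correlation. Factor f fails against `other` when sqrt(AVE_f)
    does not exceed the absolute correlation between the two factors.
    """
    violations = []
    for a, b in combinations(ave, 2):
        r = abs(corr[frozenset((a, b))])
        for f, other in ((a, b), (b, a)):
            if sqrt(ave[f]) <= r:
                violations.append((f, other))
    return violations


# Hypothetical AVEs and correlations (not the values in Table 6).
ave = {"Determination": 0.56, "Exploration": 0.52, "Collaboration": 0.49}
corr = {
    frozenset(("Determination", "Exploration")): 0.62,
    frozenset(("Determination", "Collaboration")): 0.48,
    frozenset(("Exploration", "Collaboration")): 0.55,
}
print(fornell_larcker_violations(ave, corr))  # []
```

An empty list means every factor shares more variance with its own items than with any other factor.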

Discriminant Validity Assessment: AVE, Its Square Root (in Italics), and Inter-Dimension Correlations.

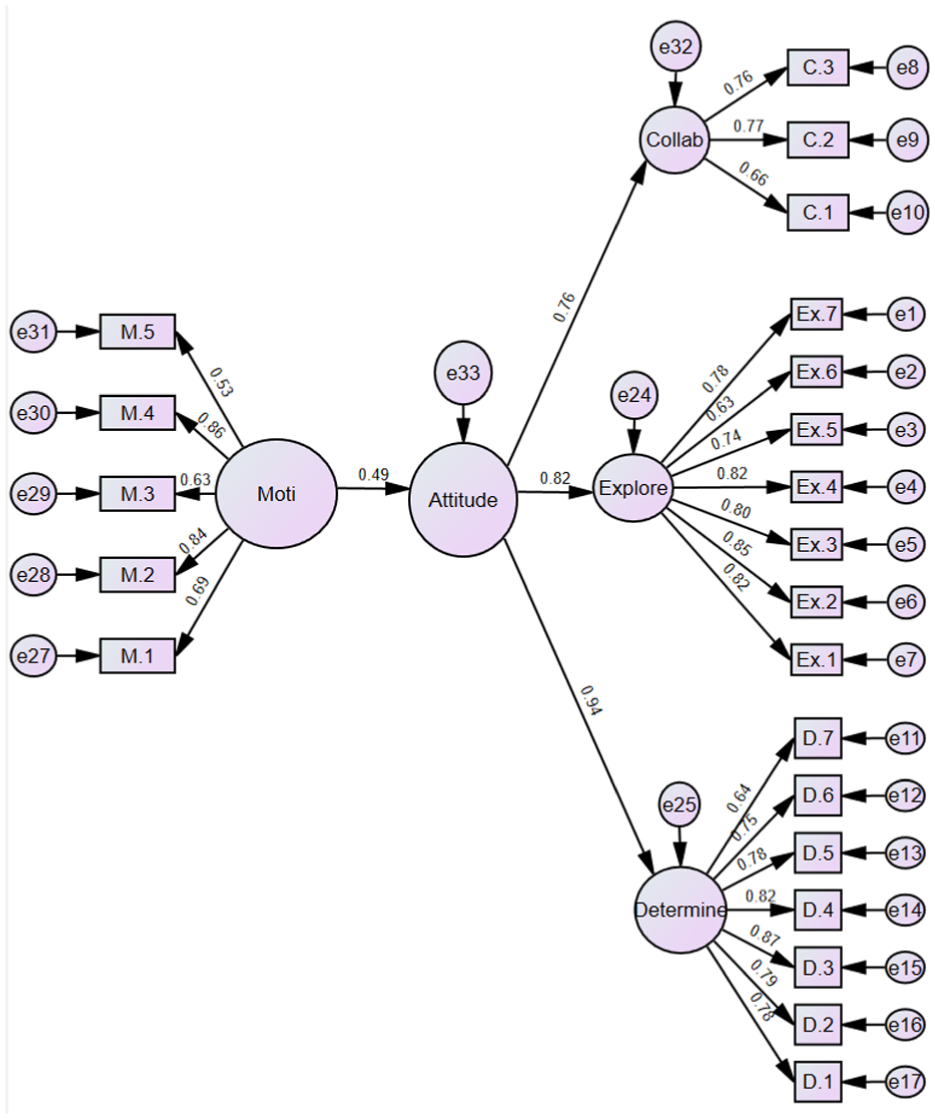

Nomological validity of the proposed scale was assessed using the Motivation construct (a perceived supportive learning environment) as an external criterion, to test whether students’ AI attitudes relate to their perception of external academic support as theory would predict. Motivation was measured with a five-item scale developed from the conclusions of Sellami et al. (2023) regarding the influence of support on students’ effective engagement and interest in technology learning. This assessment evaluated the extent to which students perceive their teachers and school environment as facilitators of AI learning.

The relationship between AI Attitudes and Motivation from a Supportive Learning Environment was tested through structural equation modeling in AMOS 29 to confirm the strength of the nomological network. The findings indicate a positive relationship between students’ AI attitudes and their perception of a supportive learning environment (Figure 2), suggesting that students who feel encouraged and supported in their AI learning also tend to show greater engagement, motivation, and persistence in AI-related activities. This result aligns with Self-Determination Theory (Deci & Ryan, 2000), which holds that autonomy-supportive and socially supportive educational settings enhance intrinsic motivation and can positively influence students’ attitudes toward AI learning. The structural model thus reinforces the theoretical foundation of the proposed scale by showing that students’ AI attitudes are significantly associated with the quality of their learning environment, providing empirical support for the scale’s nomological validity within AI education research.

Structural equation model to assess the nomological validity of AI Attitude with motivation.

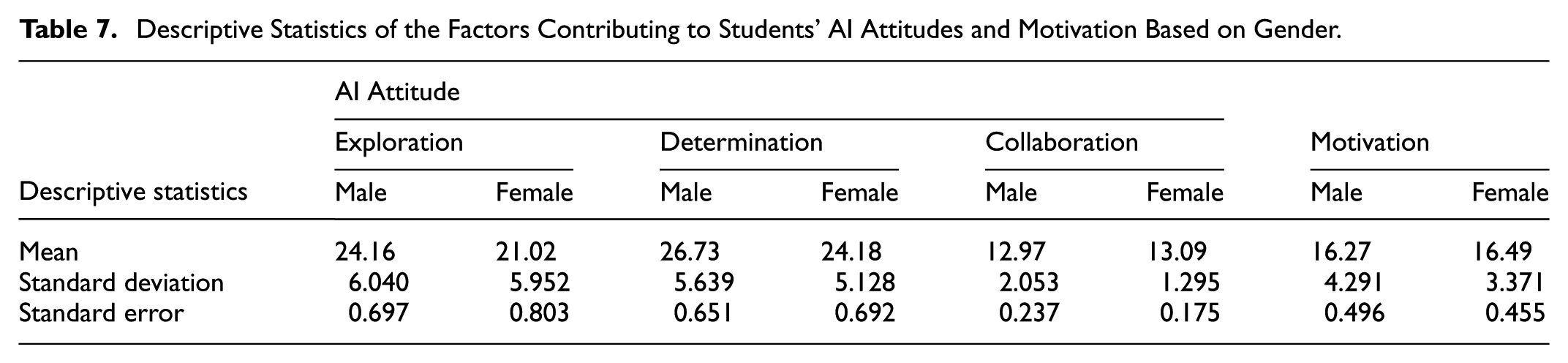

Descriptive Statistics of the Factors Contributing to Students’ AI Attitudes and Motivation Based on Gender.

Illustration of the Results of the Independent Samples t-Test.

As shown in Table 8, Levene’s test indicated equal variances for all dimensions except Collaboration (p = .004), for which equal variances were not assumed; the corresponding t-test results (equal variances assumed or not assumed) were interpreted for each dimension. The results revealed statistically significant gender differences in Exploration (t = 2.948, p = .004) and Determination (t = 2.647, p = .009), with male students scoring higher than female students. In contrast, Collaboration (p = .690) and Motivation (p = .748) showed no statistically significant differences between male and female students.
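The decision rule above (Levene’s test first, then the pooled or Welch t-test) can be sketched with the Python standard library. This is an illustration under assumptions: the mean-centred Levene variant is used, the scores are hypothetical, and p-values are left to a t or F distribution function (for example, from SciPy) rather than computed here.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t(a, b):
    """Student's t statistic assuming equal variances; returns (t, df)."""
    na, nb = len(a), len(b)
    sp2 = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    t = (mean(a) - mean(b)) / sqrt(sp2 * (1 / na + 1 / nb))
    return t, na + nb - 2


def welch_t(a, b):
    """Welch's t statistic (equal variances not assumed); returns (t, df)."""
    va, vb = variance(a) / len(a), variance(b) / len(b)
    t = (mean(a) - mean(b)) / sqrt(va + vb)
    # Welch-Satterthwaite approximation to the degrees of freedom.
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


def levene_f(a, b):
    """Mean-centred Levene F for two groups; returns (F, (df1, df2))."""
    ma, mb = mean(a), mean(b)
    t, df = pooled_t([abs(x - ma) for x in a], [abs(x - mb) for x in b])
    return t * t, (1, df)


def compare_means(a, b, levene_p, alpha=0.05):
    """Use Welch's t when Levene's p-value (computed elsewhere) is below alpha."""
    return welch_t(a, b) if levene_p < alpha else pooled_t(a, b)


# Hypothetical scores on one dimension for six male and six female students.
male = [4, 5, 3, 4, 5, 4]
female = [3, 4, 3, 2, 4, 3]
print(levene_f(male, female))
print(pooled_t(male, female), welch_t(male, female))
```

For two groups, the mean-centred Levene F equals the squared pooled t statistic computed on absolute deviations from each group mean, which is why `levene_f` reuses `pooled_t`.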

To assess the magnitude of these differences, Cohen’s d was calculated as a measure of effect size. The effect size for Exploration (d = 0.523) indicated a moderate effect, while that for Determination (d = 0.470) indicated a small effect, just below the conventional 0.50 benchmark for a moderate effect. For Collaboration and Motivation, the effect sizes were negligible (d = −0.066 and −0.057, respectively), consistent with the non-significant findings. Figure 3 summarizes the mean differences (Male − Female), p-values, and Cohen’s d across the four dimensions (the three attitude dimensions and Motivation), highlighting where meaningful gender differences were found and reinforcing the results in Table 8. Collectively, these findings suggest that gender disparities in AI attitudes are most pronounced in self-regulatory or agentic dimensions, such as Exploration and Determination, where males tend to report higher levels. In contrast, male and female students appear to share comparable perceptions of motivation and collaborative engagement with AI, as indicated by the lack of significant differences in those dimensions.
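Cohen’s d divides the mean difference by a standard deviation. The section does not state which standard deviation was used, so the sketch below assumes the common pooled-SD form. It then applies Cohen’s conventional 0.2/0.5/0.8 benchmarks, which match the labels given above for the reported d values.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(a, b):
    """Cohen's d: mean difference (a - b) divided by the pooled standard deviation."""
    na, nb = len(a), len(b)
    sp = sqrt(((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2))
    return (mean(a) - mean(b)) / sp


def cohen_label(d):
    """Conventional rule-of-thumb labels: 0.2 small, 0.5 medium, 0.8 large."""
    m = abs(d)
    if m < 0.2:
        return "negligible"
    if m < 0.5:
        return "small"
    if m < 0.8:
        return "medium"
    return "large"


# Labels for the effect sizes reported in the text.
for name, d in [("Exploration", 0.523), ("Determination", 0.470),
                ("Collaboration", -0.066), ("Motivation", -0.057)]:
    print(name, cohen_label(d))
```

The benchmarks are rough conventions; a value such as 0.470 sits near the small/medium boundary, which is why it is described as small to moderate in practical terms.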

Cohen’s d effect sizes for gender differences in AI Attitude dimensions.

To determine whether the factors of students’ AI attitudes were correlated with one another for male and female students, a correlation analysis was performed separately by gender (Table 9). For both male and female students, the three factors were significantly correlated with each other, indicating a notable association among the dimensions of students’ AI attitudes.

Correlation Analysis of the Factors Contributing to Students’ AI Attitudes Based on Gender.

Correlation is significant at the .05 level (two-tailed).

Correlation is significant at the .01 level (two-tailed).

Discussion

Students’ attitudes toward AI or any form of technology are shaped by their exposure, expertise, and perceived significance of these tools in their daily lives and future careers (Milicevic et al., 2024). Students tend to exhibit favorable attitudes toward AI when they view it as an essential component of modern education (Su & Yang, 2024) and as a tool for personal growth (K. Kim & Kwon, 2024). Building on this understanding of how technological attitudes are formed, the present study empirically identified key dimensions that structure students’ attitudes toward AI. The current study confirmed that students’ AI attitudes constitute a multidimensional construct encompassing the dimensions of Determination, Exploration, and Collaboration.

These three empirically derived dimensions (Exploration, Determination, and Collaboration) align clearly with the core psychological needs proposed in Self-Determination Theory (SDT): autonomy, competence, and relatedness. Exploration reflects autonomy, as it captures students’ intrinsic motivation, curiosity, and voluntary engagement with AI tools. Students who actively seek out AI experiences inside and outside the classroom (K. Kim & Kwon, 2024) do so from a sense of self-direction, indicating a high degree of autonomy in their learning. Determination aligns with competence, reflecting students’ persistence, mastery orientation, and confidence in overcoming AI-related challenges. This was evident in students’ career aspirations and their inclination to pursue AI-related higher education, particularly among those who viewed AI as a means to solve real-world problems and enhance daily life. In contrast, those with less engagement exhibited lower levels of determination. Finally, Collaboration maps onto the need for relatedness, as it underscores the role of peer support, teamwork, and social connectedness in shaping positive attitudes toward AI. Collaborative learning experiences provide students with opportunities to build meaningful interpersonal relationships while navigating AI content, thereby fulfilling their need to feel connected and supported (Chiu et al., 2023; Ouyang et al., 2023). Together, these findings affirm that students’ engagement with AI is deeply rooted in the satisfaction of their psychological needs, offering a theoretically grounded understanding of their attitudes toward emerging technologies.

Gender-based analysis yielded further insights (see Tables 8 and 9). Significant differences were observed in the Determination and Exploration dimensions, with male students scoring higher. These findings are consistent with literature suggesting that gendered disparities in access, confidence, and social expectations contribute to differences in engagement with technology (Horta & Tang, 2023). For example, girls often report fewer early opportunities to explore emerging technologies (Master et al., 2016), which may result in lower self-efficacy and confidence in pursuing AI careers (Asio & Sardina, 2025). Societal perceptions of technological competence and internalized stereotypes can also shape students’ beliefs about AI (Sultana et al., 2025). However, such disparities are not fixed. Inclusive instructional strategies, visible role models, and hands-on learning experiences can significantly mitigate gender gaps in AI attitudes (Pérez-González & García-González, 2025).

Interestingly, no significant gender differences were observed in the Collaboration dimension. This may be attributed to the inherently inclusive and socially engaging nature of collaborative AI learning tasks. The items in this dimension, such as enjoying teamwork, learning more through group work, and addressing ethical concerns while programming, reflect a collective learning experience that transcends individual differences. Collaborative environments tend to create egalitarian spaces where students, regardless of gender, contribute ideas, share responsibilities, and support one another. Moreover, peer interaction in AI projects provides both male and female students with opportunities to learn socially, which can buffer the effects of confidence gaps often seen in individual learning contexts. These findings align with previous literature suggesting that team-based tasks encourage equitable participation and foster a sense of relatedness, which may naturally mitigate gender-based disparities (Chiu et al., 2023; J. Kim et al., 2022). Therefore, it is possible that the relational dynamics in group-based AI learning promote inclusive attitudes, resulting in negligible gender differences in this domain.

Correlation analysis further demonstrated that Exploration, Determination, and Collaboration are significantly interrelated (Table 9). This suggests that enhancing one domain, such as increasing opportunities for AI exploration, may have positive ripple effects on students’ persistence and peer collaboration. Such interconnectedness highlights the value of holistic interventions that support multiple aspects of students’ AI engagement.

Although the gender-based analysis revealed no differences in the motivation that male and female students perceived from teachers and schools, the importance of a supportive learning environment cannot be overstated. Such an environment remains indispensable for students’ growth and persistence in the field of AI (Chiu et al., 2024). Teachers, through interactive and experiential teaching methods, can enable students to explore the pros and cons of AI technologies (Hsu et al., 2021). Giving students opportunities to experience an AI tool develops their interest and curiosity, enabling a better understanding of the tool and its potential benefits. Additionally, explaining the real-world applications of AI tools through field trips, animations, and interactive videos can help deepen students’ understanding (Aung et al., 2022). Along with teachers, peer interaction can boost students’ confidence in using AI (J. Kim et al., 2022). Male and female peers can exchange information, instructions, and experiences while using a particular AI tool, making it easier to understand. Since some students view AI as fascinating, while others perceive it as complicated and challenging (Marrone et al., 2025), hands-on activities such as programming chatbots or training machine learning models can simplify concepts and foster positive attitudes (Sanusi et al., 2023).

Thus, students’ attitudes toward AI depend on diverse factors and can be viewed as a multidimensional construct (Katsantonis & Katsantonis, 2024). Multiple approaches and methods can be implemented to enhance these attitudes. In terms of pedagogy, teachers can tailor AI learning approaches and activities to enhance students’ engagement. Such an approach can be applied equally to male and female students, combining hands-on exploratory tasks with collaborative reflection (Akhigbe & Adeyemi, 2020). Alternatively, differentiated strategies can be employed. Male students may pay less attention to detail and prefer learning by doing, making exploratory, hands-on AI learning most effective for them, whereas female students may value a deeper understanding before application and prefer a reflective, structured learning approach (Ai, 1999). Additionally, informal learning opportunities can enhance students’ confidence in pursuing AI beyond the classroom. Such experiences allow students to develop autonomy and relatedness with peers in different environments by exchanging knowledge and skills, fostering collaboration and team spirit. Parental or family support also plays a crucial role in shaping students’ positive AI attitudes by providing resources, encouragement, and opportunities for learning (Sha et al., 2016). Such support should be equitably accessible to both male and female learners (Figure 4).

A proposed framework for enhancing students’ AI attitudes and reducing possible gender disparities.

Limitations and Future Research

This study offers a valuable contribution by developing and validating a multidimensional instrument to assess high school students’ attitudes toward artificial intelligence (AI). Nevertheless, certain limitations should be acknowledged to inform future research directions. The sample consisted of 553 students enrolled in a Computing & IT course, purposively selected to ensure exposure to AI-related content. While appropriate for initial validation, this sampling approach may restrict the generalizability of findings to students from other academic backgrounds who may have less familiarity with AI. To broaden applicability, future studies should consider more diverse student populations across academic streams, geographic regions, and cultural contexts.

The use of a cross-sectional, self-report survey design also limits the ability to capture how attitudes toward AI develop over time or in response to specific educational interventions. Longitudinal research or experimental designs could provide valuable insights into the dynamics of attitude formation and change. Moreover, the instrument was designed specifically for high school students; its suitability for younger learners or those at early stages of AI exposure remains to be explored. Further validation using shorter or age-adapted versions of the scale would help ensure developmental appropriateness and measurement equivalence across demographic groups.

In addition, exclusive reliance on surveys may not fully capture the complexity of students’ experiences and motivations. Supplementing quantitative data with qualitative approaches, such as interviews, focus groups, or classroom observations, could offer richer interpretations. Lastly, exploring the alignment between students’ attitudes and actual behaviors, such as elective course choices, AI project participation, or career interests, would enhance the scale’s predictive utility and practical relevance.

Furthermore, the scale used a conventional Likert format beginning with “Strongly Agree,” a choice based on established psychometric practice and consistency with validated attitude measures (DeVellis, 2017). This ordering may, however, introduce a minor risk of acquiescence bias among respondents. Although standard ordering supports response consistency and ease of understanding, particularly for younger participants, future research could explore alternative formats or include reverse-coded items to examine potential effects on data quality and response behavior. Together, these directions can help refine the AI-Attitude Scale, strengthen its theoretical foundation, and support the development of more inclusive and effective AI education strategies.

Conclusion

Students’ attitudes toward AI are a crucial determinant of the success of any AI curriculum. These attitudes vary depending on several factors, including AI learning content, learning resources, and the learning environment. Providing students with the knowledge and skills to use AI tools can, in turn, help foster a positive attitude toward AI. Since AI is a relatively new subject for young learners, it is essential to explain AI concepts and tools to them in an engaging, interactive manner. Drawing on Self-Determination Theory and the guidelines proposed by UNESCO, this study developed and validated a scale to measure students’ attitudes toward AI. The confirmed dimensions of students’ AI attitudes were Exploration, Determination, and Collaboration. The study also evaluated whether students’ attitudes toward AI differ by gender.

The results revealed that students’ Determination and Exploration scores differed between male and female students, whereas Collaboration showed no such gender difference. This highlights the importance of revising existing AI curricula to foster students’ interest in and intention to explore AI in their current and future learning. Because this study is, to the authors’ knowledge, the first to evaluate gender disparity in AI attitudes among high school students, a similar evaluation could be conducted with students in lower grades who are receiving AI education, informing revisions to AI curricula at the K-12 level. This study therefore recommends that future research focus more closely on gender disparity at all levels of K-12 education to develop more equitable and sustainable AI education for both male and female students.

Supplemental Material

sj-docx-1-sgo-10.1177_21582440251378375 – Supplemental material for Exploring Students’ Attitudes Toward Artificial Intelligence (AI): Psychometric Validation of AI-Attitude Scale

Supplemental material, sj-docx-1-sgo-10.1177_21582440251378375 for Exploring Students’ Attitudes Toward Artificial Intelligence (AI): Psychometric Validation of AI-Attitude Scale by Almaas Sultana, Nafilah Abdul Latheef, Nitha Siby and Zubair Ahmad in SAGE Open

Footnotes

Appendix 1

Ethical Considerations

This study was approved by the Qatar University Institutional Review Board (QU-IRB 222-EA/24) prior to data collection. All procedures followed ethical guidelines as outlined by Qatar University and the APA Ethical Principles (Section 8.05). Written informed consent was obtained from the parents or legal guardians of all participants, and students’ assent was obtained at the time of data collection. Participation was voluntary, and all responses were anonymized to ensure confidentiality. Participants were informed of their right to withdraw at any time without penalty.

Author Contributions

Almaas Sultana: Writing - Original Draft, Methodology, Review & Editing; Nafilah Abdul Latheef: Writing - Original Draft; Nitha Siby: Data Curation; Zubair Ahmad: Conceptualization, Funding acquisition, & Editing.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The work was funded by the Qatar Research, Development, and Innovation Council (QRDI; Grant ARG01-0502-230058).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The raw data supporting the conclusion of this article will be made available by the authors without undue reservation.


References
