Abstract

Recent research on chatbots has revealed consumers’ skeptical attitudes toward them, reflected in limited interaction and inconsistent use intentions. This study investigates how three social attributes of chatbots (perceived warmth, perceived competence, and social presence) affect consumers’ perceived trust in chatbots and their intention to use them. Furthermore, the moderating effect of implicit self-theory on the links among perceived trust, willingness to use, and interaction satisfaction was tested. An empirical analysis of a questionnaire survey of 303 consumers indicated that the perceived warmth, competence, and social presence of chatbots positively influence consumers’ trust in chatbots. Moreover, perceived trust in chatbots mediates the relationship between these three attributes (the independent variables) and consumers’ continuous usage intention and interaction satisfaction (the dependent variables). Implicit theories are beliefs about the malleability of human characteristics (such as intelligence and personality) that individuals gradually form through interpersonal interactions, and they can affect individuals’ cognitive processing and behavioral decision-making. Implicit self-theory can be divided into entity theory and incremental theory based on different beliefs. This article examines the applicability of implicit self-theory in the field of trust and explores its moderating role. Whether consumers are entity theorists or incremental theorists, once they trust a chatbot, their willingness to use it and their interaction satisfaction increase. The article also discusses ways to improve the design of chatbots and maximize their utility, providing theoretical and managerial implications for scholars and practitioners.

Introduction

In the 1950s, Turing (1950) first conceptualized chatbots, and his description of intelligent machine behavior prompted a widespread understanding of chatbots. In recent years, chatbots have emerged as a prominent research area in artificial intelligence and natural language processing and have found extensive applications across various domains. An increasing number of companies are leveraging chatbots to augment or even replace human customer service in client support (Caldarini et al., 2022; Gelbrich et al., 2021; Roy & Naidoo, 2021; Sands et al., 2021). Chatbots are autonomous programs that converse with users through natural language interfaces, enabling users to pose queries or issue commands in everyday language and obtain the desired products or services through interactive dialogue (Brandtzaeg & Følstad, 2018; De Cicco, da Costa e Silva, & Alparone, 2021; De Cicco, da Costa e Silva, & Palumbo, 2021). These intelligent conversational programs simulate human-like conversation in a natural manner, employing techniques such as natural language processing, machine learning, and artificial intelligence to automate online human-machine dialogue, and they serve as virtual assistants for customer service tasks (Luo et al., 2019; Rapp et al., 2021; Sojasingarayar, 2020).

Chatbots are increasingly assuming the role of service providers as an emerging interface between enterprises and consumers. The integration of chatbots into service experiences is driven by the cost-saving potential of artificial intelligence compared to human customer service (De Keyser et al., 2019; Huang & Rust, 2018; Zhu et al., 2022). The use of AI-enabled chatbots promises benefits in operational cost savings, service efficiency, and more positive user experiences (X. Cheng et al., 2022). For example, many e-commerce providers leverage chatbots in their endeavors to provide consumers with immediate response and 24/7 assistance (Chen & Lin, 2019; X. Cheng et al., 2022). In the finance industry, a chatbot named “Leo” is employed by the United Bank for Africa Plc to provide financial services for customers and enhance their engagement (Mogaji et al., 2021). In the hotel industry, a robotic concierge named “Connie” is used by Hilton Worldwide to provide information to guests and enhance their personalized experience (Tavakoli & Mura, 2018). During the COVID-19 pandemic, many people relied on chatbots to provide them with basic mental health support (Brown & Halpern, 2021), and a social chatbot named XiaoIce has been designed for long-term engagement with users to provide them access to empathetic conversations for emotional support (Skjuve et al., 2021). Chatbot assistance is more efficient when interacting with consumers during service and provides an enhanced user experience (Roy & Naidoo, 2021). Chatbots have evolved beyond mere interactive tools to powerful instruments that foster customer engagement and cultivate strong relationships between enterprises and customers (X. Cheng et al., 2022; De Cicco et al., 2020; Lin et al., 2023).

In recent years, with the rapid advancement of artificial intelligence technology, chatbots have evolved from mere information providers into complex social partners capable of simulating human communication (Adamopoulou & Moussiades, 2020a; Guingrich & Graziano, 2023; Rapp et al., 2021). This transformation represents not only a technological advancement but also a profound understanding of human emotional needs. In this process, perceived warmth, perceived competence, and social presence have emerged as key factors influencing user acceptance and satisfaction. Perceived warmth refers to users’ feelings of friendliness, care, and understanding exhibited by the chatbot (Liu et al., 2022; McLean et al., 2021). Research has shown that when chatbots display warm behaviors, users are more likely to establish trust and engage in deeper interactions. This perception of warmth can be manifested through the chatbot’s linguistic style, the timeliness of responses, and its sensitivity to user emotions (Caldarini et al., 2022; Han, 2021; Pelau et al., 2021).

Perceived competence pertains to users’ evaluation of a chatbot’s ability to perform tasks. This perception of competence can be realized through the chatbot’s expertise, problem-solving strategies, and accurate understanding of user needs (Caldarini et al., 2022; Nuruzzaman & Hussain, 2018; Wollny et al., 2021; Zheng et al., 2023). In the context of this study, competence is identified as one of the two fundamental dimensions influencing individuals’ social judgments of other entities (e.g., chatbots), referring to the perceived abilities or expertise of the target individual, which are typically associated with traits such as intelligence, knowledge, skills, and efficiency (Fiske et al., 2007; Gilad et al., 2021). Research has indicated that when consumers perceive higher levels of competence in their interactions with chatbots, they tend to develop greater trust in these systems (X. Cheng et al., 2022). Moreover, perceived competence in chatbots significantly impacts customer satisfaction, thereby influencing their willingness to recommend and continue using these systems (Kallel et al., 2023).

Social presence describes users’ perception of the chatbot as a social entity. When chatbots are able to exhibit human-like social behaviors and modes of communication, users may perceive them as genuine social partners, thereby enhancing the naturalness and enjoyment of interactions (Caldarini et al., 2022; Skjuve et al., 2021). Recent research has further explored how these factors interact to influence users’ acceptance and experience with chatbots. For instance, Caldarini et al. (2022) noted in their literature review that chatbot design should take into account users’ perceived warmth, perceived competence, and social presence to enhance overall user satisfaction and intention to use. Additionally, Okonkwo and Ade-Ibijola (2021) emphasized the potential applications of chatbots in the educational domain in their systematic review, highlighting how these social attributes can enhance the learning experience. Furthermore, studies have found that as users’ trust in chatbots and their self-disclosure increase, the human-machine relationship undergoes significant emotional exploration and engagement, indicating that the social presence of chatbots can positively impact users’ well-being (Chaves & Gerosa, 2021; Ciechanowski et al., 2019; Skjuve et al., 2021).

Despite its potential advantages over traditional human customer service, chatbot technology is still in its early stages, and limited research is available. Consequently, its promotion and application in the market have not been as smooth as anticipated (Pereira et al., 2022; Pizzi et al., 2023; Roy & Naidoo, 2021). Existing studies indicate that consumer trust in chatbots and willingness to use them are relatively low. Consumers prefer to interact with humans rather than chatbots (Adam et al., 2021; Ashfaq et al., 2020; Van Pinxteren et al., 2020), and their satisfaction levels vary (Shumanov & Johnson, 2021). Furthermore, according to previous research, consumers expect chatbots to possess human-like interaction capabilities when performing tasks because they may eventually replace human roles (Go & Sundar, 2019). However, current literature on cultivating consumers’ perceived trust in chatbots remains limited. Consequently, there is a relative scarcity of studies exploring the relationship between consumer trust in chatbots, their willingness to use them, and their satisfaction with their interactions. Scholars have found that the social attributes of chatbots not only influence consumers’ social interaction goals but also impact their reactions toward chatbot behaviors (X. Cheng et al., 2022; Go & Sundar, 2019; Konya-Baumbach et al., 2023; Schanke et al., 2021; Sheehan et al., 2020). Therefore, designing chatbots’ social properties to enhance consumer trust has become an important focus of current research. Considering these research gaps, this study aims to address the following three questions:

RQ1: How do consumer perceptions of chatbots’ social attributes (perceived warmth, perceived competence, and social presence) affect their willingness to use and satisfaction with their interactions? What are the mechanisms underlying this phenomenon?

RQ2: What role does consumers’ trust in chatbots play in shaping their intention to use and satisfaction with their interactions?

RQ3: Does implicit self-theory influence consumers’ continuance intention to use chatbots and satisfaction with their interactions?

To address these questions, this study establishes a theoretical framework. The framework focuses on the intrinsic social attributes of chatbots and draws on relevant literature from consumer psychology and social presence theory. From the perspectives of social relationships and consumer perception, it explores the interrelationships among perceived warmth, perceived competence, social presence, satisfaction, and intention to use during the decision-making process of consumer-chatbot interactions. Furthermore, this study examines the application of implicit self-theory in consumer behavior research to better understand, predict, and guide consumer behavior. In this context, we consider chatbots to be intelligent technologies capable of replacing human customer service representatives while interacting with individuals. We aim to explore whether chatbots can effectively address the queries consumers encounter during usage and to ascertain their potential for enhancing consumer satisfaction and eliciting positive emotional responses during shopping experiences.

Literature Review and Hypothesis Development

Perceived Warmth and Trust

In the digital age, chatbots, as a significant application of artificial intelligence, have been widely utilized in areas such as customer service, online shopping, and social media (Adam et al., 2021; Doherty & Curran, 2019; Følstad & Skjuve, 2019; Sheehan et al., 2020; Torous et al., 2021). These intelligent systems interact with users through natural language processing, providing information, solving problems, and enhancing the user experience (De Keyser et al., 2019; Huang & Rust, 2018; Zhu et al., 2022). Consumer trust in chatbots not only determines the quality of the user experience but also affects the intention for continued use (Hsiao & Chen, 2022; Kasilingam, 2020; Yen & Chiang, 2021). However, the formation of trust is a complex process that involves multidimensional psychological factors. Among these factors, perceived warmth is regarded as a key determinant of trust development. Perceived warmth refers to the emotional characteristics perceived by consumers during their interactions with chatbots, such as friendliness, care, and understanding (Ang et al., 2018; Liu et al., 2022; McLean et al., 2021). This perception of warmth can evoke positive emotional and behavioral responses, significantly enhancing consumer trust in the chatbot, which in turn influences their usage behavior and satisfaction (Bufquin et al., 2017; Choi et al., 2016; Ehrke et al., 2020; Gao & Mattila, 2014; Ivens et al., 2015; Kolbl et al., 2020; Möller & Herm, 2013; Shuqair et al., 2021).

Perceived warmth is one of the critical dimensions in Cuddy et al.’s (2007) dual-dimension theory, with the other dimension being perceived competence. According to this theory, individuals assess both warmth and competence when evaluating others or technology (Cuddy et al., 2007; Fiske et al., 2007). In human-computer interactions, perceived warmth can foster an emotional connection between consumers and chatbots, leading to the formation of trust. This theory is equally applicable in interactions between consumers and chatbots; when consumers perceive chatbots as exhibiting traits of care and emotional support, they are more likely to trust the chatbot and even regard it as a social partner.

Research by Ltifi (2023) indicates that when chatbots display human-like social behaviors and modes of communication, users are more likely to perceive them as genuine social partners, thereby enhancing the naturalness and enjoyment of interactions. This perception of warmth and competence is crucial for establishing user trust (Ltifi, 2023). Huang and Rust (2021) found in their study that the empathy and friendliness of chatbots are major predictors of consumer trust (Huang & Rust, 2021). In the customer service sector, the anthropomorphic design elements of chatbots, such as human-like appearance and socially oriented communication styles, have been shown to significantly influence users’ sense of social presence, satisfaction, trust, and empathy (Go & Sundar, 2019; Roy & Naidoo, 2021; Schanke et al., 2021; Sheehan et al., 2020). Experimental research by Janson (2023) pointed out that these anthropomorphic design elements enhance user trust and satisfaction by increasing social presence. In service delivery dialogue scenarios, perceived warmth has the potential to enhance the social proximity between humans and chatbots, which is vital for building trust (Ehrke et al., 2020). Finally, research by X. Cheng et al. (2022) examined the impact of anthropomorphism on consumer responses to chatbots from the perspectives of trust and relational norms. They found that the anthropomorphic attributes of perceived warmth and perceived competence positively influence consumers’ perceived trust in chatbots, while communication delays have a negative impact. Relational norms play a moderating role in the relationship between anthropomorphic attributes and chatbot trust (X. Cheng et al., 2022).

Although existing research has explored the relationship between perceived warmth and trust, the mechanisms of this relationship in the specific context of consumer interactions with chatbots have yet to be fully explained. This study therefore investigates how perceived warmth influences consumers’ intention to use and satisfaction with chatbots through trust. Based on the above theoretical analysis, the following hypothesis is proposed:

H1: Perceived warmth positively impacts consumers’ trust in chatbots.

Perceived Competence and Trust

Since the mid-1980s, many organizations have formulated and redesigned their management development strategies around competency-based systems. In this process, the term “competence” has been assigned various meanings (Epstein & Hundert, 2002; Le Deist & Winterton, 2005; McAuley, 1994; Roy & Naidoo, 2021). Perceived competence can be defined as an individual’s subjective judgment of the abilities of others or technologies, particularly in contexts of decision support or problem-solving. This concept originates from the competence-based trust model, which posits that competence is one of the fundamental dimensions in the process of trust formation (H. J. Lee, 2004). In digital interaction contexts, perceived competence encompasses not only the chatbot’s accurate understanding of user inquiries and the provision of effective solutions but also the timeliness and professionalism of its information delivery (X. Cheng et al., 2022; Y. Jiang et al., 2023; Lei et al., 2021). When users perceive the chatbot as having high competence, they are more likely to rely on the technology and develop a higher level of trust (Fiske et al., 2007; Gilad et al., 2021; McKnight et al., 2011).

Trust is a significant factor in consumer interactions with chatbots, as it not only determines consumers’ acceptance of the information provided by the chatbot but also influences their willingness to continue using it. Consumers tend to trust chatbots that they believe are capable of understanding and effectively responding to their needs. This is because perceived competence reflects the chatbot’s ability to handle user needs and problems effectively. Mayer et al. (1995) proposed in their research that ability, benevolence, and integrity are core factors of perceived credibility, with ability being one of the key dimensions of trust. Nordheim et al. (2019) explored the key factors influencing trust in customer service chatbots and proposed an initial model for enhancing trust in chatbots used in customer service.

Research indicates that when consumers perceive chatbots as exhibiting expertise and skilled competencies while providing services, they are more willing to trust the system and exhibit positive emotional feedback (Hollebeek et al., 2021). The study by Følstad et al. (2018) shows that correctly responding to user inquiries and providing practical recommendations are crucial elements for chatbots to gain customer trust. Consumers expect chatbots to address user issues smoothly, accurately, comprehensively, and with high quality, while providing valuable information during interactions to assist users in achieving high precision and success rates in problem-solving (Adamopoulou & Moussiades, 2020b; Følstad et al., 2019; Misischia et al., 2022). Consequently, chatbots with strong problem-solving capabilities and personalized customization abilities are more likely to be perceived as having superior competence levels (Chung et al., 2020). Existing research emphasizes the importance of competence in enhancing consumer trust in chatbots (Liew & Tan, 2018; Yen & Chiang, 2021). Adam et al. (2021) demonstrated that the perceived competence of chatbots, including their accurate understanding of user queries and provision of effective solutions, is a significant factor influencing user satisfaction. When chatbots display high levels of skills and expertise, consumers are more likely to trust the information and services they provide (Adam et al., 2021).

Although prior studies have examined the relationship between perceived competence and trust, the mechanisms underlying this relationship in the specific context of consumer interactions with chatbots have yet to be fully elucidated. This study therefore explores how perceived competence influences consumers’ intention to use and satisfaction with chatbots through trust. Based on the above theoretical analysis, the following hypothesis is proposed:

H2: Perceived competence positively impacts consumers’ trust in chatbots.

Social Presence and Trust

Social Presence Theory was originally proposed by Short et al. (1976) to explain the degree to which individuals perceive the “presence” of others when communicating through different media (Short et al., 1976). With advancements in technology, this theory has been applied to study trust mechanisms in human-computer interaction. Araujo (2018) posits that social presence is the perception of another life form or artificial entity by humans, which elicits a response from them; this characteristic of chatbots is a key factor in human interaction with these systems. The ability of chatbots to engage with humans fosters a sense of social presence among consumers, guiding them to view AI chatbots as a form of interaction akin to their interactions with other individuals (Qiao et al., 2022). When users establish a strong sense of connection with a chatbot, they experience closer emotional and social ties (Go & Sundar, 2019), leading to a positive attitude toward the chatbot and the development of trust (Schuetzler et al., 2020).

Research indicates that consumers tend to trust systems with high social presence when interacting with chatbots. This may be due to the fact that when chatbots exhibit human-like behaviors, users are more inclined to view them as intentional interaction partners, thereby ascribing a higher level of trust (Ng et al., 2020; Schuetzler et al., 2020; Toader et al., 2019). For instance, Ng et al. (2020) empirically demonstrated that enhancing a chatbot’s social presence, such as through the use of natural language generation techniques to improve the fluency and emotional expression of conversations, significantly increases users’ trust. This phenomenon can be further explained by Media Richness Theory, which posits that the richer the medium, the easier it is for users to perceive social cues through that medium, thus enhancing trust in the interaction partner (Suh, 1999; Sun & Cheng, 2007). In the context of chatbots, a rich medium is characterized by the chatbot’s ability to interact with users through various modes of expression (e.g., text, voice, and emojis). These expressions augment the chatbot’s social presence, thereby increasing user trust.

Toader et al. (2019) identified social presence as a key factor influencing consumer trust in chatbots. They found that when chatbots exhibit higher social presence through design and interaction methods, consumer trust in these systems increases (Toader et al., 2019). Ng et al. (2020) discovered in their experimental study that chatbots with social emotional characteristics can enhance user trust levels, and even in situations where the chatbot makes mistakes, users are more likely to trust chatbots with high social presence. The research by Go and Sundar (2019) explored how human-like characteristics of chatbots influence user trust and acceptance. They proposed that when chatbots exhibit more human traits, users perceive a higher social presence, which in turn increases their trust in these systems (Go & Sundar, 2019).

Although existing research has explored the relationship between social presence and trust, the mechanisms of this relationship in the specific context of consumer interactions with chatbots have yet to be fully elucidated. This study therefore investigates how the social presence of chatbots influences consumers’ intention to use and satisfaction through trust. Based on the above theoretical analysis, the following hypothesis is proposed:

H3: Social presence positively impacts consumers’ trust in chatbots.

The Mediating Role of Trust

Morgan and Hunt (1994) define “trust” as one party having confidence in the reliability and integrity of the exchange partner. Mayer et al. (1995) propose that trust is commonly defined as a consumer’s positive expectation of a technological system or service provider, particularly in situations where uncertainty and risk are present. Trust is crucial in highly uncertain environments, especially in online contexts, and has been a focal point in various technology-based chatbot studies (De Visser et al., 2016). Despite academic debates regarding consumer trust, it is considered an essential factor that cannot be overlooked in the interaction process between humans and chatbots. Følstad et al. (2018) confirmed the view that consumer interaction with chatbots has a positive effect on trust enhancement. Nordheim et al. (2019) explored the key factors of trust in customer service chatbots and proposed an initial model for enhancing trust in customer service chatbots.

As the intimacy and trust between consumers and chatbots increase, they will be willing to learn more about the functionalities of chatbots so that they can utilize and explore them (Qiao et al., 2022). Trust is a prerequisite for user acceptance and continued use of chatbot services (Pavlou, 2003). Huang and Rust (2021) explored the relationship between users and chatbots, including how perceived warmth affects the user’s intention to use chatbots through trust. The study found that users’ perceived warmth toward chatbots enhances trust, which in turn increases the intention to use (Huang & Rust, 2021). Følstad et al. (2018) conducted interviews to study how trust in chatbots is formed among users. The research emphasized the importance of trust in user-chatbot interactions and explored how trust affects users’ intention to use and satisfaction (Følstad et al., 2018). In addition, previous studies have revealed that perceived warmth can enhance consumer satisfaction with chatbot interactions (Chandra et al., 2022; Nguyen et al., 2022). On one hand, consumers’ inferences about chatbots’ good intentions rely on perceived warmth (Y. Xu et al., 2023). The higher the benevolence attributed to chatbots, the higher the trust that consumers will not be deceived, thereby increasing satisfaction with human-computer interaction (Gelbrich et al., 2021). On the other hand, perceived warmth is associated with considering others’ needs (Kull et al., 2021). The more warmth consumers perceive in chatbots, the more likely they are to feel cared for and understood, which in turn increases satisfaction with the chatbot (Packard et al., 2018). Based on the above theoretical analysis, this paper proposes the following hypotheses:

H4: Trust mediates the relationship between perceived warmth and continuance intention to use.

H5: Trust mediates the relationship between perceived warmth and interaction satisfaction.

According to Self-Determination Theory (SDT), competence is viewed as a fundamental psychological need that, when satisfied, becomes a necessary prerequisite for the motivation required to engage in and complete tasks (Ryan & Deci, 2002). In this study, competence serves as one of the two essential dimensions influencing individuals’ social judgments of other entities, such as chatbots. It refers to the perceived ability or expertise of the target, typically associated with traits such as intelligence, knowledge, skills, and efficiency (Fiske et al., 2007; Gilad et al., 2021). Existing research has focused on the critical role of perceived competence in enhancing consumer trust in chatbots and on the behavioral outcomes resulting from this trust: perceived competence influences users’ trust in chatbots, thereby affecting their intention to continue using these services and their satisfaction with them (Liew & Tan, 2018; Tan & Liew, 2022; Toader et al., 2019; Yen & Chiang, 2021). Drawing on SDT and the HCI literature, Nguyen et al. (2022) found that perceived competence positively impacts performance satisfaction and system satisfaction. Individuals who believe they can successfully complete tasks are more likely to feel satisfied with their performance and the systems they use (Nguyen et al., 2022). X. Cheng et al. (2022) demonstrated that the anthropomorphic features of chatbots, particularly perceived competence, are among the key factors in building consumer trust in chatbots, which in turn influences consumers’ willingness to continue using chatbot services (X. Cheng et al., 2022; Shin et al., 2023; Yen & Chiang, 2021). To enhance consumer acceptance and satisfaction with chatbots, designers and managers need to focus on improving the perceived competence of chatbots, making them more efficient and reliable in interactions (Følstad et al., 2018; Mostafa & Kasamani, 2022; Nordheim et al., 2019). Additionally, the research by X. Cheng et al. (2022) highlights the potential impact of cultural differences on study outcomes, providing directions for future cross-cultural research. Based on the above theoretical analysis, this paper proposes the following hypotheses:

H6: Trust mediates the relationship between perceived competence and continuance intention to use.

H7: Trust mediates the relationship between perceived competence and interaction satisfaction.

The theory of social presence provides evidence for how humans apply social cues to anthropomorphic design in intelligent technologies. Through interaction with anthropomorphized chatbots, users can perceive a sense of social presence (Roy & Naidoo, 2021), which is the feeling of coexistence with others during interaction (Short et al., 1976). The social attributes conveyed through social presence are considered both a facilitator of trust and a prerequisite for trust (Gefen & Straub, 2003). A series of studies have been conducted to explain the impact of chatbots on consumer behavioral intentions. Scholars have interpreted the influence of chatbot external representations (visual presentation, identity image, and conversational cues) on consumer behavior (consumer attitudes, trust, satisfaction, loyalty, and purchase intentions) from various perspectives, including the theory of anthropomorphism, social response theory, psychological ownership theory, and social presence theory (Go & Sundar, 2019). Moreover, research indicates that the intrinsic attributes of chatbots are also significant; social presence has a positive effect on consumer attitudes and purchase intentions. It is necessary to design chatbots anthropomorphically and create a sense of social presence (Araujo, 2018; Y. Cheng & Jiang, 2020; De Cicco et al., 2020).

In fact, a recent meta-analysis by Blut et al. (2021) supports the idea that humanizing chatbots leads to higher social presence perceptions, which can lead to higher intentions to use the bot, largely disproving previously proposed negative consequences (e.g., the uncanny valley effect; Mori et al., 2012). Moreover, social presence theory suggests that social presence influences the choice of a communication medium. The question of whether social presence mediates the relationship between chatbot or robot characteristics and positive user responses has been the focus of a variety of other studies, for example, studies on bots as caregivers (Kim et al., 2013), bots as companions (Heerink et al., 2008), and bots with different personalities (K. M. Lee et al., 2006). These studies agree that once social presence is created, usage will follow. This is because social presence is associated with higher consumer trust (Gefen & Straub, 2004; Toader et al., 2020) as well as higher socialness (Van Doorn et al., 2017). Therefore, high social presence should be positively associated with usage intentions, while low social presence should negatively affect the intention to use. Based on the above theoretical analysis, this study proposes the following hypotheses:

H8: Trust mediates the relationship between social presence and continuance intention to use.

H9: Trust mediates the relationship between social presence and interaction satisfaction.
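
As an illustration only, the mediation structure shared by H4 through H9 can be written in the standard simple-mediation form. The symbols and the linear specification below are assumptions introduced here for clarity; they are not the estimation procedure reported by this study.

```latex
% Assumed notation (not from the original text):
% X: a social attribute (perceived warmth, perceived competence, or social presence)
% M: trust in the chatbot (the mediator)
% Y: an outcome (continuance intention to use or interaction satisfaction)
\begin{align}
M &= i_M + aX + \varepsilon_M \\
Y &= i_Y + c'X + bM + \varepsilon_Y \\
\text{indirect effect} &= ab, \qquad \text{total effect } c = c' + ab
\end{align}
```

Under this sketch, each of H4 through H9 corresponds to a nonzero indirect effect $ab$ for one attribute-outcome pair.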

The Moderating Role of Implicit Self-Theory

Implicit theories are defined as individuals’ beliefs about the nature of human attributes, characteristics, and phenomena more generally (Chiu et al., 1997; Dweck et al., 1995). Dweck (2013) developed implicit theory into implicit self-theory, which describes the views ordinary people hold about the basic characteristics of people (such as intelligence, morality, and personality traits) and the variability of things around them, and which comprises two dimensions: entity theory and gradient theory (Dweck et al., 1995; Dweck, 2013). Entity theorists believe that human characteristics are relatively fixed and that a person cannot change his or her behavior through hard work, whereas gradient theorists believe that human characteristics can change. Gradient theorists hold that individuals can change through continuous effort, learning, and experience, which can significantly change and develop the self (Levy et al., 1998; Murphy & Dweck, 2016; Plaks et al., 2005).

In human-computer interaction research, trust is considered a fundamental prerequisite for facilitating interaction between robots and humans (Simon et al., 2020). Trust plays a significant role in consumer interactions with chatbots, reflecting consumers’ perceptions of the reliability and integrity of these systems (X. Cheng et al., 2022; Guo et al., 2022). Research on implicit theories has shown that people’s fundamental beliefs about human traits vary, and these beliefs subsequently influence their understanding of and reactions to human behavior (Chiu et al., 1997). Although no studies to date have directly examined the moderating role of consumers’ implicit personality views on their willingness to use and satisfaction with chatbots, existing research indicates that individuals’ implicit personality views can affect their trust in others or groups (Akdim et al., 2023; Zierau et al., 2021). Zierau et al. (2021) compared the differences in trust between users interacting with chatbots and those using traditional web interfaces, finding that the design of chatbots, especially their conversational and anthropomorphic features, plays a crucial role in establishing user trust. The interface design of chatbots can enhance users’ perceptions of trust, thereby increasing their willingness to share personal information. Additionally, the study revealed that users’ trust in chatbot interfaces is more important than their trust in the service provider (Zierau et al., 2021). Heyselaar and Bosse (2019) explored how implicit social behaviors can be used to assess users’ perceptions of chatbots’ autonomy. They proposed a task based on implicit psychological theories to measure users’ implicit social behavior toward text-based chatbots. Preliminary findings indicated that participants adapted significantly more to chatbots when completing tasks together, compared to completing tasks alone (p < .017) (Heyselaar & Bosse, 2019). Allan et al. (2022) investigated the influence of implicit self-theory on people’s perceptions and responses to social robots, highlighting the importance of implicit self-theory in the field of human-robot interaction, particularly in the processes of social robot acceptance and trust-building. Differences in acceptance levels between entity theorists and gradient theorists may affect their interaction satisfaction and willingness to continue using social robots (Allan et al., 2022).

H10: Implicit self-theory moderates the relationship between trust and consumers’ continuance intention to use chatbots.

H11: Implicit self-theory moderates the relationship between trust and consumers’ interaction satisfaction.

Based on these hypotheses, this study proposes the theoretical research model shown in Figure 1.

Figure 1. Research model.

Research Design

Measurement

This study used a questionnaire-based survey method. The variables were measured using well-established scales from existing literature, and all scale items had previously been used to study behavioral intention outcomes between consumers and chatbots. All scales were measured on a 7-point Likert scale ranging from 1 = “strongly disagree” to 7 = “strongly agree.” The measurement of perceived warmth was derived and adapted from a 4-item scale by Cuddy et al. (2007), Kervyn et al. (2012), and Y. Xu et al. (2023); the measurement of perceived competence utilized a 5-item scale from Bastiansen et al. (2022); the measurement of social presence employed a 5-item scale from Adam et al. (2021); trust was measured using a 4-item scale from H. Jiang et al. (2022); the measurement of implicit self-theory was based on the classic 8-item scale by Levy et al. (1998); the measurement of continuance intention to use adopted a 5-item scale from Qiao et al. (2022); and the measurement of interaction satisfaction employed a 5-item scale from Chung et al. (2020). To build the implicit self-theory index, this study averaged the eight items after reverse-coding the entity items. The higher the final score, the more the respondent leans toward gradient theory; conversely, the lower the score, the more the respondent leans toward entity theory. All measurement items were modified and revised to fit the current research.
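The index construction described above can be sketched as follows. This is a minimal illustration, not the authors’ scoring script: the text does not state which of the eight items are entity-worded, so the positions in `ENTITY_ITEM_POSITIONS` are a hypothetical choice, and the function names are ours.

```python
from statistics import mean

SCALE_MIN, SCALE_MAX = 1, 7  # 7-point Likert scale, as described in the text

# Assumption: the text does not say which items are entity-worded;
# positions 0-3 are used here purely for illustration.
ENTITY_ITEM_POSITIONS = (0, 1, 2, 3)


def reverse_code(score):
    """Flip a Likert score so that 1 becomes 7, 2 becomes 6, and so on."""
    return SCALE_MIN + SCALE_MAX - score


def implicit_theory_index(responses):
    """Average eight item scores after reverse-coding the entity items.

    Higher values lean toward gradient theory, lower values toward entity theory.
    """
    if len(responses) != 8:
        raise ValueError("expected eight item scores")
    adjusted = [
        reverse_code(score) if position in ENTITY_ITEM_POSITIONS else score
        for position, score in enumerate(responses)
    ]
    return mean(adjusted)
```

Under this coding, a respondent who strongly disagrees with every entity item and strongly agrees with every gradient item receives the maximum index value.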

Data Collection

This study focuses on chatbots in the customer service domain, designed to simulate human conversation and provide instant customer support and problem resolution. The survey sample targets consumers who have interacted with chatbots, primarily emphasizing their general query handling capabilities, including FAQs, product information inquiries, and basic customer service tasks. Data were collected via an online questionnaire distributed and managed using the “Wenjuanxing” platform (https://www.wjx.cn/), a well-known online survey platform in China with a large user base, effectively reaching diverse audiences across different age groups, professions, and regions. The questionnaire design was based on established scales from existing literature, modified to meet the specific needs of this research. Prior to the official release, two rounds of pilot testing were conducted. The first round involved 10 doctoral students, aiming to verify the clarity and rationale of the questionnaire items. Based on their feedback, some item wording was revised, and the order of the scales was adjusted. The second round included 15 actual users, focusing on testing the questionnaire’s operability and response time to ensure that each participant could complete it within 10 min.

This study employed a snowball sampling method to investigate consumer behaviors regarding chatbot usage. After completing the questionnaire, each respondent was asked to recommend at least two acquaintances who met the criteria (i.e., consumers who had used chatbots at least three times in the past 6 months), thereby gradually expanding the sample through the referral network. Additionally, to avoid over-reliance on a single audience group, we specifically focused on consumers with varying educational backgrounds and levels of technological familiarity during the data collection period, so that the research findings could be applicable to a broader population. After the data collection concluded, we screened the questionnaires, eliminating incomplete responses or those with apparent anomalies (e.g., excessively short response times or repeated selections of the same option), which left 324 responses.

A total of 324 questionnaires were collected. Following a thorough screening process to identify missing values and outliers, 21 responses were excluded, resulting in a final dataset comprising 303 valid questionnaires. The demographic characteristics of the 303 respondents were summarized as frequency distributions, as shown in Table 1. The table shows that the male-to-female ratio of the respondents was reasonably balanced, and most respondents were aged between 18 and 45. Respondents with an undergraduate degree accounted for the majority, followed by 38.9% (118) with a junior college degree or below. Furthermore, as can be seen from the table, most respondents used chatbots 6 to 20 times per month.

Table 1. Demographics of the Respondents.

Reliability and Validity

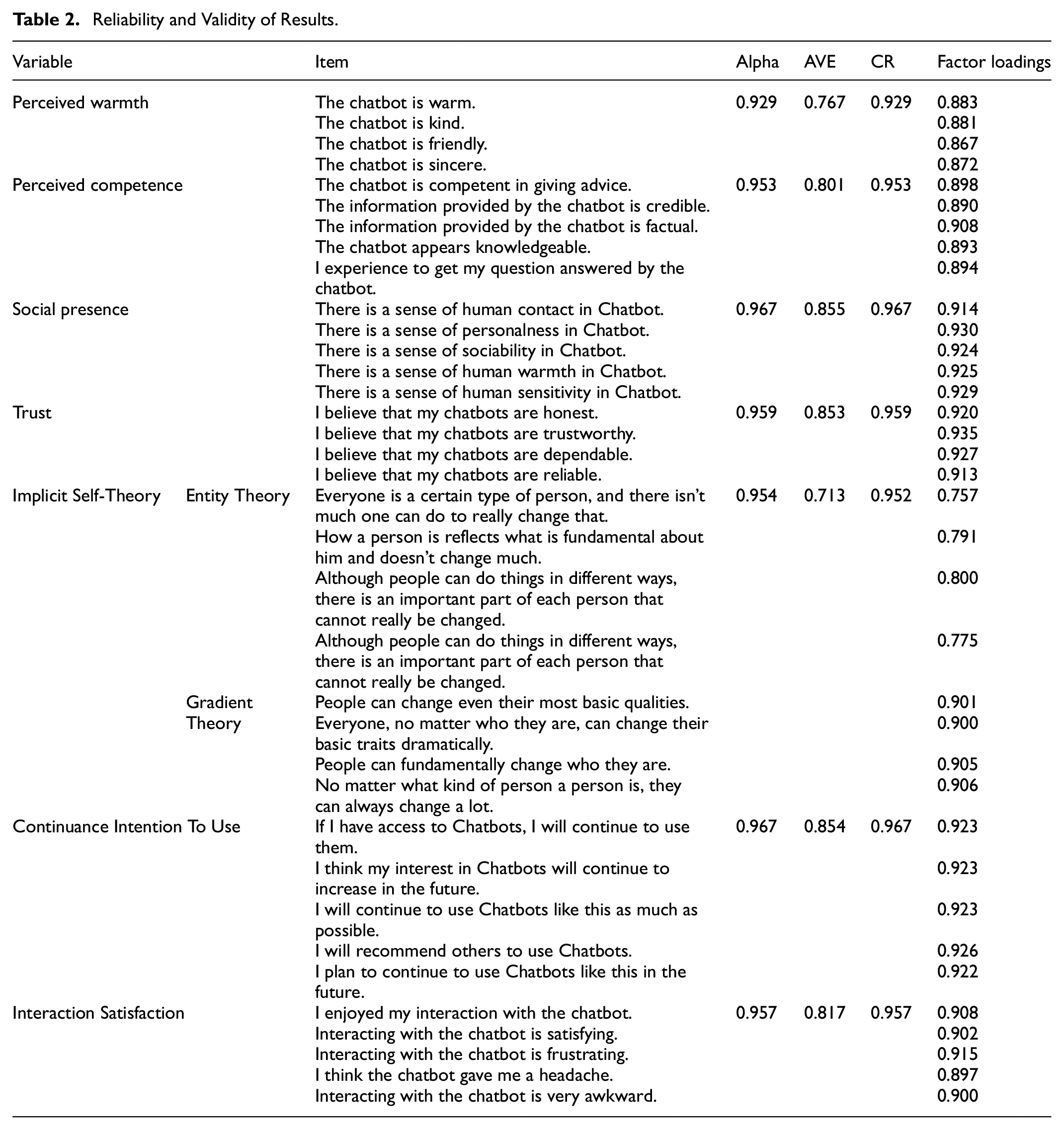

Internal consistency, convergent validity, and discriminant validity tests were conducted to test the reliability and validity of the model’s structure (Hair, 2009). Reliability and validity were evaluated by analyzing Cronbach’s alpha (α), factor loadings, composite reliability (CR), and average variance extracted (AVE), as shown in Table 2. The reliability coefficients of all variables were greater than 0.7, indicating that the reliability of the questionnaire data in this study was high. For the “alpha coefficient of deleted item,” the reliability coefficient does not increase significantly after any item is deleted, which means that no item needs to be deleted. Convergent validity is generally considered adequate when the AVE is greater than 0.5 and the CR value is greater than 0.7. The confirmatory factor analysis (CFA) results confirmed that the AVE values corresponding to a total of eight factors were all greater than 0.5, and the CR values were all higher than 0.7, which means that the data have good convergent validity. In addition, the table shows that the absolute values of the standardized loadings between factors and their measurement items are greater than 0.6 and significant, which means that the items are strongly related to their respective factors.
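For readers who wish to reproduce these indicators, the standard formulas can be sketched as below. This is an illustrative sketch rather than the SPSS or AMOS computation used in the study; it assumes standardized loadings with uncorrelated errors, and the function names are ours.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """item_scores: one list per item, each holding every respondent's score."""
    k = len(item_scores)
    # Total scale score for each respondent.
    totals = [sum(person) for person in zip(*item_scores)]
    item_variance_sum = sum(variance(item) for item in item_scores)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


def composite_reliability(loadings):
    """CR from standardized loadings; each error variance is taken as 1 - loading^2."""
    squared_sum = sum(loadings) ** 2
    error_sum = sum(1 - loading ** 2 for loading in loadings)
    return squared_sum / (squared_sum + error_sum)


def average_variance_extracted(loadings):
    """AVE is the mean of the squared standardized loadings."""
    return sum(loading ** 2 for loading in loadings) / len(loadings)


# Hypothetical loadings for one construct (not taken from Table 2).
example_loadings = [0.70, 0.80, 0.75, 0.85]
meets_thresholds = (
    average_variance_extracted(example_loadings) > 0.5
    and composite_reliability(example_loadings) > 0.7
)
```

A construct passes the convergent validity check described above when both conditions in `meets_thresholds` hold.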

Table 2. Reliability and Validity of Results.

Validity was analyzed using SPSS 26 and confirmatory factor analysis. To assess discriminant validity, we adopted the criterion proposed by Fornell and Larcker (1981) and also considered factor loadings, latent variable correlations, and cross-loadings. Table 3 presents the discriminant validity test results. The square root of the AVE of each variable was higher than its correlations with the other variables, which supports discriminant validity. Table 4 provides the model fit of the CFA, and all index values met the recommended criteria (root mean square error of approximation (RMSEA) = 0.048; comparative fit index (CFI) = 0.967; normalized fit index (NFI) = 0.920; standardized root mean square residual (SRMR) = 0.031).
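The Fornell and Larcker comparison can be expressed as a short check. The sketch below is illustrative only: the construct names and values are hypothetical rather than taken from Table 3, and the function name is ours.

```python
from math import sqrt


def fornell_larcker_violations(ave, correlations):
    """Return construct pairs whose correlation is not below both square roots of AVE.

    ave: {construct: AVE}; correlations: {(construct_a, construct_b): r}.
    An empty list means the criterion is satisfied for every pair supplied.
    """
    violations = []
    for (a, b), r in correlations.items():
        if abs(r) >= min(sqrt(ave[a]), sqrt(ave[b])):
            violations.append((a, b))
    return violations


# Hypothetical inputs for illustration only.
example_ave = {"trust": 0.64, "warmth": 0.49}
example_correlations = {("trust", "warmth"): 0.60}
example_result = fornell_larcker_violations(example_ave, example_correlations)
```

Returning the offending pairs, rather than a single true/false value, makes it easier to see which constructs overlap when the criterion fails.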

Table 3. Discriminant Validity Results.

Note. The square root value of AVE is in the diagonal.

Table 4. Model Fit Summary.

Note. NFI = normalized fit index; CFI = comparative fit index; RMSEA = root mean square error of approximation; SRMR = standardized root mean square residual.

Common Method Bias

To assess common method bias, we employed Harman’s single-factor test. The exploratory factor analysis showed that the first unrotated factor alone explained 39.44% of the variance. If a single unrotated factor extracted through exploratory factor analysis accounts for less than 50% of the variance, common method bias is not considered severe (Podsakoff & Organ, 1986). Similarly, Hair (2009) suggested that if a single factor accounts for less than 50% of the variance, a severe common method bias does not exist. Consequently, common method variance does not appear to be a significant issue in the data used in this study.
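The logic of Harman’s test can be illustrated with a small sketch. It assumes principal component extraction, in which case the share of variance explained by the first unrotated factor equals the largest eigenvalue of the item correlation matrix divided by the number of items; the extraction method actually used in the study is not stated, and the function names are ours. The largest eigenvalue is approximated by power iteration started from an all-ones vector, which suits correlation matrices dominated by positive correlations.

```python
from math import sqrt
from statistics import mean, pstdev


def correlation_matrix(columns):
    """columns: one list of scores per questionnaire item."""
    n = len(columns[0])
    standardized = []
    for column in columns:
        center, spread = mean(column), pstdev(column)
        standardized.append([(value - center) / spread for value in column])
    return [
        [sum(a * b for a, b in zip(zi, zj)) / n for zj in standardized]
        for zi in standardized
    ]


def largest_eigenvalue(matrix, iterations=1000):
    """Approximate the dominant eigenvalue of a symmetric matrix by power iteration."""
    size = len(matrix)
    vector = [1.0 / sqrt(size)] * size
    eigenvalue = 0.0
    for _ in range(iterations):
        product = [sum(m * v for m, v in zip(row, vector)) for row in matrix]
        eigenvalue = sqrt(sum(p * p for p in product))
        vector = [p / eigenvalue for p in product]
    return eigenvalue


def first_factor_share(matrix):
    """Share of total variance carried by the first principal component."""
    return largest_eigenvalue(matrix) / len(matrix)


def common_method_bias_flag(matrix, threshold=0.5):
    """True when the first factor reaches the 50% threshold cited in the text."""
    return first_factor_share(matrix) >= threshold
```

With the value reported above (39.44%), such a check would not flag severe common method bias.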

Empirical Results

To verify H1 to H3, this study conducted a structural equation model (SEM) analysis using AMOS 28.0. The path analysis results demonstrated that perceived warmth is positively associated with trust (β = 0.184, p < 0.05; see Table 5), consistent with X. Cheng et al.’s (2022) findings on the effect of perceived warmth on trust (β = 0.179, p < .001), both supporting H1, which posits that perceived warmth positively influences consumer trust in chatbots. Additionally, perceived competence of the chatbot is positively associated with trust (β = 0.428, p < 0.001), aligning with X. Cheng et al.’s (2022) findings on the influence of perceived competence on trust (β = 0.581, p < 0.001), supporting H2, which posits that perceived competence positively affects consumer trust in chatbots. Finally, the social presence of the chatbot positively influences trust in the chatbot (β = 0.252, p < .001), consistent with Ng et al.’s (2020) research, which found that “Perception of social presence significantly predicted trust in chatbot, F(1, 409) = 91.539, p < 0.001, accounting for 18.3% of the variability in trust. An increase in perception of social presence was associated with a 0.348 unit increase in chatbot trust (β = 0.348),” and De Cicco et al.’s (2020) findings that social presence was positively associated with trust (β = 0.50, p < .001). These results support H3, which posits that social presence positively influences consumer trust in chatbots.

Table 5. The Path Results.

To test the mediating mechanism of trust between the independent and dependent variables, this study adopted the method proposed by Hayes (2013), using the PROCESS macro with Model 5 and 5,000 bootstrap samples. The mediation test results (see Table 6) confirmed that trust mediates the relationship between perceived warmth and use intention (β = 0.133, 95% BootCI = [0.059, 0.158], excluding zero), the relationship between perceived competence and use intention (β = 0.125, 95% BootCI = [0.045, 0.169], excluding zero), and the relationship between social presence and use intention (β = 0.121, 95% BootCI = [0.066, 0.182], excluding zero), supporting H4, H6, and H8. The mediation test results also confirmed that trust mediates the relationship between perceived warmth and interaction satisfaction (β = 0.106, 95% BootCI = [0.052, 0.149], excluding zero), the relationship between perceived competence and interaction satisfaction (β = 0.109, 95% BootCI = [0.048, 0.169], excluding zero), and the relationship between social presence and interaction satisfaction (β = 0.103, 95% BootCI = [0.063, 0.179], excluding zero), supporting H5, H7, and H9.
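The idea behind a bootstrapped indirect effect can be sketched as follows. This is a simplified percentile bootstrap for a single mediator without covariates or a moderated direct path, not a reimplementation of the PROCESS macro; the function names and the default seed are ours.

```python
import random


def indirect_effect(x, m, y):
    """Product of the a path (M on X) and the b path (Y on M, controlling for X)."""
    n = len(x)
    mean_x, mean_m, mean_y = sum(x) / n, sum(m) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    smm = sum((mi - mean_m) ** 2 for mi in m)
    sxm = sum((xi - mean_x) * (mi - mean_m) for xi, mi in zip(x, m))
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    smy = sum((mi - mean_m) * (yi - mean_y) for mi, yi in zip(m, y))
    a_path = sxm / sxx
    b_path = (sxx * smy - sxm * sxy) / (sxx * smm - sxm ** 2)
    return a_path * b_path


def bootstrap_ci(x, m, y, draws=5000, seed=0):
    """Percentile 95% confidence interval for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(draws):
        # Resample respondents with replacement, keeping each row intact.
        rows = [rng.randrange(n) for _ in range(n)]
        estimates.append(
            indirect_effect(
                [x[i] for i in rows], [m[i] for i in rows], [y[i] for i in rows]
            )
        )
    estimates.sort()
    lower = estimates[int(0.025 * draws)]
    upper = estimates[int(0.975 * draws) - 1]
    return lower, upper
```

Mediation is inferred when the interval returned by `bootstrap_ci` excludes zero, which is the criterion applied to the intervals reported above.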

Table 6. Mediation Results.

The moderation analysis indicated that implicit self-theory positively moderates the relationship between trust and the continuance intention to use (β = 0.100, p < 0.01; see Table 7), supporting H10. In other words, the positive association between trust and use intention is stronger among individuals who lean more strongly toward gradient theory. The moderation analysis also indicated that implicit self-theory positively moderates the relationship between trust and interaction satisfaction (β = 0.148, p < 0.01; see Table 7), supporting H11. In other words, the positive association between trust and interaction satisfaction is stronger among individuals who lean more strongly toward gradient theory.
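A positive interaction coefficient means that the slope of trust grows as the moderator increases. The sketch below illustrates this with simple slopes. The interaction coefficient is the value reported for H10, whereas the main effect of trust and the moderator values are hypothetical placeholders, since they are not given in this section; the moderator is assumed to be mean-centered.

```python
B_INTERACTION = 0.100  # reported trust x implicit self-theory coefficient (H10)
B_TRUST = 0.40         # hypothetical main effect of trust, for illustration only


def simple_slope(b_trust, b_interaction, moderator_value):
    """Slope of trust on the outcome at a given (mean-centered) moderator value."""
    return b_trust + b_interaction * moderator_value


# Hypothetical moderator values one unit below and above the mean.
slope_entity_leaning = simple_slope(B_TRUST, B_INTERACTION, -1.0)
slope_gradient_leaning = simple_slope(B_TRUST, B_INTERACTION, 1.0)
```

Under these assumptions the trust slope is steeper for respondents leaning toward gradient theory than for those leaning toward entity theory, which is what a positive moderation effect implies.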

Table 7. Moderation Analysis Results.

*p < .05. **p < .01.

Discussion, Implications, Limitations, and Further Research Opportunities

Discussion

This study examined the evolution of consumer trust in chatbots and established a link between the three major social attributes of chatbots (perceived warmth, perceived competence, and social presence), consumer intention to use, and interaction satisfaction. In addition, research on implicit personality theory has shown that people have different essential beliefs about human traits, which, in turn, affect their understanding of and response to human behavior (Chiu et al., 1997). Therefore, this study further examined the moderating effect of implicit personality disposition on the relationship between consumer trust and willingness to use as well as between trust and interaction satisfaction. Quantitative research was conducted using a questionnaire survey, and our hypotheses were empirically verified using survey data from 303 consumers. The empirical test results supported most of the hypotheses.

First, the empirical results show that the social attributes of chatbots significantly impact consumers’ trust in chatbots, thus verifying hypotheses H1 to H3. More specifically, when consumers infer that a chatbot is warmer or more capable based on their interactions with it and when they can perceive its social presence, they tend to have more positive feelings and higher levels of trust toward it. Among the three social attributes, perceived competence has the largest effect size, explaining 22.6% of the variation in trust, and is therefore considered to have the highest priority among consumers. Chatbots should be able to intelligently answer complex questions to build trust with consumers. This finding is reasonable because consumers primarily interact with service providers (human agents or chatbots) to solve the problems encountered in the current consumption environment. Therefore, consumers are more inclined to emphasize the chatbots’ ability to solve problems.

Second, the findings suggest that trust mediates the relationships between the three social attributes and both usage intention and interaction satisfaction. As a key factor influencing consumers’ intention to use chatbots, trust is closely tied to their social attributes. This study found that trust plays an important mediating role between perceived warmth, perceived competence, and social presence on the one hand and interaction satisfaction on the other. Perceived warmth, perceived competence, and social presence enhance user trust in chatbots, which in turn affects user satisfaction with the interaction process. This mediating mechanism underscores the critical role of trust in building a positive user experience. Therefore, cultivating users’ trust in chatbots will help improve their interaction satisfaction, thereby increasing user loyalty and continued use intention. When consumers trust chatbots, they are more likely to rely on them to resolve issues and are less likely to turn to human agents for service assistance.

Finally, the findings validate the moderating effect of implicit personality disposition. Consumers’ implicit personality tendencies (entity vs. gradient) significantly moderate the relationships between trust and usage intention and between trust and interaction satisfaction. This shows that with the continuous updating of Internet technology, people are increasingly accepting chatbot services on e-commerce platforms, and consumers’ intention to use chatbots shows diversity. Entity theorists are more likely to focus on chatbots’ fixed characteristics and capabilities, whereas gradient theorists focus on their underlying developmental potential. Therefore, chatbot providers should offer corresponding design and customization for consumers with different personality tendencies in order to better meet their needs and expectations during interaction.

Theoretical Implications

This study responds to the call for academic research on consumer perceptions and acceptance of smart services from the dual perspective of consumer willingness to accept chatbots and interaction satisfaction. The results can help enterprises improve smart services more effectively and meet consumer needs. First, the study extends the literature on human-AI interaction to e-commerce. By exploring how the three social attributes of perceived warmth, perceived competence, and social presence act on consumer behavior, the study analyzed in depth the relationship and mediating mechanism between chatbot trust and consumers’ intention to use and interaction satisfaction. This helps clarify and explain existing contradictory conclusions, enriching the literature on the three social attributes of chatbots, intelligent services, and the human-likeness of chatbots. Second, this study combines the SOR framework with consumer behavior theory and examines consumers’ intentions to use chatbots and their interaction satisfaction. Through an empirical analysis of chatbot usage intentions, we found that these social attributes significantly impact consumers’ usage intentions, which is consistent with previous research results. These insights provide a theoretical basis for the development of chatbot products that better meet the needs of consumers, and provide new ideas for optimizing the interactive interface of chatbots and improving the interactive experience. Finally, from the perspective of consumer behavior and psychology, this study introduces the moderating effect of implicit personality tendencies (entity vs. gradient), which not only deepens the contextualized understanding of the behavioral effects of chatbots on consumers but also expands the relevant literature on implicit personality tendencies in the field of intelligent services.
Simultaneously, it strongly supplements the literature on consumer behavior and psychology. Based on the literature on consumer behavior and psychology, this study further elaborates on the application of implicit personality tendencies in intelligent services. This application focuses on how implicit personality tendencies affect consumer perceptions and attitudes toward chatbots by modulating chatbot behavior.

Managerial Implications

First, firms should optimize the design and promotional strategy of chatbots. Managers should focus on strengthening a robot’s emotional communication ability to improve problem-solving efficiency when designing and promoting chatbots. Simultaneously, it is crucial to consider social presence factors in order to create more attractive and efficient chatbot products, thereby increasing users’ willingness to use them. When analyzing the emotional communication ability of chatbots, managers also need to consider the reliability of their data collection technology. Only in this manner can a comprehensive and in-depth analysis of chatbots be ensured to meet the diverse needs of users. Cutting-edge technologies, such as machine learning, can be used to mine chatbot data and discover potential information, thereby continuously optimizing the functional design of chatbots to meet the changing needs of consumers. Second, firms should strengthen trust-building and maintenance strategies. Building and maintaining user trust in chatbots is crucial in practical management. Managers can take steps to enhance robots’ emotional communication capabilities, increase the transparency of their technical capabilities, and actively encourage positive evaluations of their social presence. Through these efforts, consumers’ trust in chatbots can be enhanced, improving their willingness to use them and their interaction satisfaction. In addition, firms should continue optimizing the personalization function of chatbots to ensure the continuous growth of trust. In-depth research on user needs can provide a more personalized chat experience, thereby deepening the emotional connection between users and chatbots. Simultaneously, attention should be paid to user satisfaction: user feedback should be collected promptly, targeted optimization should be carried out to meet consumer needs, and a warmer and safer use environment should be created.
Third, firms should customize personalized services to meet the needs of people with different implicit personality traits. Managers should consider providing customized services and interactive experiences based on users’ implicit personality traits to meet their specific needs and improve their satisfaction. Customized services need to pay attention to users’ implicit personality traits and consider their interests, living habits, values, and other factors to provide more diverse services and experiences. Merchants should adopt targeted marketing strategies based on different implicit personality tendencies to meet consumer needs, improve consumer satisfaction and trust, and create greater commercial value for enterprises.

Limitations and Future Research

This study conducted a comprehensive questionnaire survey across China to assess the level of awareness and usage patterns of chatbots among Chinese consumers, aiming to gather robust and broadly applicable data. However, considering that consumers from diverse cultural backgrounds may exhibit markedly different attitudes and behaviors toward chatbots, future research should aim to broaden the sample range to encompass a more varied demographic. This approach will enhance the universality and external validity of the findings, enabling the conclusions to better address the needs of consumers in various regions worldwide.

In this study, we primarily analyzed how factors like perceived warmth, perceived competence, and social presence influence consumers’ intention to use chatbots and their satisfaction with interactions. Nevertheless, due to the complexity of causal relationships, we cannot simply infer the direct impact of independent variables on the dependent variable. Other variables, such as users’ personal traits and emotional states, may also influence consumers’ intention to use and their satisfaction. Therefore, future research needs to explore the interactions between these potential factors and the research variables, in order to gain a more comprehensive understanding of the complex mechanisms of consumer behavior.

In addition, this study employs trust as a mediating variable to explore the issue of trust between chatbots and users. However, this approach may have limitations in terms of explanatory power and depth of understanding. Future research can delve into the formation process of trust and how users build trust in chatbots based on different information, adopting psychological and sociological perspectives. Simultaneously, research can further analyze the constituent elements and formation mechanisms of trust from three dimensions (cognition, emotion, and behavior), providing a deeper explanation for the mediating role of trust between perceived factors and interaction satisfaction.

Furthermore, this study has considered implicit personality tendencies (entity theorists and gradient theorists) as moderating variables. However, additional research is warranted to operationalize and investigate the impact mechanisms of these implicit personality tendencies on the dependent variable. To gain a more comprehensive understanding of how implicit personality traits influence consumers’ intention to use chatbots, future studies could focus on operationalizing the underlying mechanisms of these traits, exploring their dynamic patterns across different usage contexts, and examining their effects on consumers’ attitudes and behaviors toward chatbots.

Footnotes

Ethical Considerations

This study guaranteed the anonymity of the participants, and their participation was voluntary. The results of the study were also thoroughly analyzed. No additional ethical approval was necessary as per national legal requirements since this study did not involve the collection of personal identification information or private data. It adheres to the guidelines set forth in the Declaration of Helsinki.

Consent for Publication

All participants were required to provide informed consent online.

Author Contributions

All authors listed have made substantial, direct, and intellectual contributions to the research. In addition, all authors have read and agreed to the published version of the manuscript and approved it for publication.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are available from the corresponding author upon reasonable request.