Abstract

The National Matriculation English Test (NMET), also known as the Gaokao English Examination, is a high-stakes, large-scale selection test for tertiary education in China, and numerous provinces and regions have adopted its standardized national test papers. The validity of the NMET has attracted extensive attention. To verify it, it is essential to determine whether students’ actual English performance after university admission is consistent with their NMET scores. This study compares the NMET scores of non-English majors at a local university in central China with their results on a listening proficiency test taken at entry and on English achievement tests during their first year, supplemented by observations of the students’ learning processes and selective post-class interviews. The findings indicate that the NMET’s validity is relatively low with respect to students’ listening proficiency, whereas freshmen’s English achievement test results are more consistent with their NMET scores. However, the predictive power of NMET scores for students’ college English performance diminishes over time. The consequences of the NMET, shaped by factors such as the test format and the way scores are used in local contexts, also affect students’ academic performance in college. This paper aims to contribute further empirical evidence to NMET validation research and to highlight the importance of a smooth transition in English teaching and learning from the secondary to the tertiary level, advocating assessments that facilitate learning and support students’ sustainable development.

Introduction

The National College Entrance Examination (commonly known as the “Gaokao”) is a pivotal examination for qualified high school graduates and equivalent candidates seeking university admission. Universities select and admit students based on their examination scores, in accordance with admission regulations and plans. Given its nature and purpose, the Gaokao must take into account both the moral qualities and the scientific and cultural literacy that colleges and universities expect of incoming students and the requirements of the National Curriculum Standards for General High Schools (Ministry of Education Examination Center, 2019).

In China’s current foreign language examinations, summative assessments such as the National Matriculation English Test (NMET) hold significant importance. Because of its large scale and high stakes, the NMET carries considerable social weight in talent selection and therefore requires high validity. Does the NMET meet this expectation? How does students’ English proficiency, as measured by the NMET, relate to their academic performance at university, and is this relationship consistent with the Gaokao’s selection criteria? Moreover, as more provinces and cities adopt the unified national papers for the Gaokao English test, the interpretation and use of scores vary across regions. In Henan and Shanxi, for instance, the listening section is not included in the total score. This raises the question of whether such inconsistency affects English teaching and learning from secondary school to university. These issues deserve attention in research on NMET validity, particularly with regard to evidence based on relations to other variables and evidence based on the consequences of score interpretation and use.

This study compares the English listening proficiency test results of 520 first-year students at a provincial university in central China with their Gaokao English scores, and further analyzes the English academic performance of 193 students in four classes taught by the author. Classroom observation of students’ learning processes, individual interviews and informal communication, and monitoring of changes in students’ achievement were also used. The aim is to provide empirical evidence for NMET validation research, to stimulate reflection on the transition from secondary to university English teaching, and to enhance the developmental and pedagogical effects of assessment while attending closely to students’ learning needs and sustainable development.

Current Status of Research

The Theoretical Study of Validity

Validity is regarded as the cornerstone of designing, developing, and evaluating assessments; it primarily concerns whether an assessment measures the intended competencies (e.g., Bachman & Palmer, 1996; Messick, 1995; Weir, 2005). Validity is a complex concept. Despite ongoing debates and evolving interpretations in educational measurement and language testing, the most widely accepted definition is the one presented in the Standards for Educational and Psychological Testing (American Educational Research Association et al., 2014; hereafter, the 2014 Standards), which frames validity as the degree to which evidence and theory support the interpretations of test scores for their proposed uses (e.g., Gafni, 2016; Geisinger, 2016; Kane, 2016; Shepard, 2016). Although some scholars criticize this definition for conflating score meaning with score use (e.g., Cizek, 2012, 2016; Twing, 2016), this paper adopts the more widely accepted validity framework of the 2014 Standards.

The traditional perspective treats validity as an intrinsic attribute of a test, with distinct types such as content validity, construct validity, and criterion-related validity (Bachman, 1990; Hughes, 2003); under this view, different types of tests require different types of validity evidence. As understanding of validity has deepened, researchers and theorists have increasingly viewed it as an evaluative judgment about score interpretations and uses, proposing a unified concept of validity. The evidence collected to support validity can therefore cover various aspects (Messick, 1989, 1995, 1996; Weir, 2005). Weir (2005) suggests that the primary sources of validation evidence are theory-based, context-based, and scoring validity, complemented by criterion-related and consequential validity. The Standards (American Educational Research Association et al., 2014) enumerate five sources of validity evidence: evidence based on test content, on response processes, on internal structure, on relations to other variables, and on the consequences of testing. The consequential aspect addresses the actual and potential impacts of test score use. This framework aligns with the core views of Messick (1996) and Weir (2005), and the last two sources of evidence have attracted increasing scholarly interest (H. Z. Yang, 2015). Whatever their form, validation processes seek to answer the same key question: whether test scores can be reasonably interpreted and appropriately used for specific purposes. Validity is not dichotomous (i.e., scores are either valid or invalid) but a matter of degree, demonstrated through the aspects above. Validity is also an empirical issue, particularly for large-scale assessments, whose level of validity must be verified empirically, with evidence collected continuously and disclosed to the public (Cizek, 2016; H. Z. Yang, 2015). The validation process is iterative and requires evidence from as many sources as possible (Y. Chen, 2008).

The Empirical Study of NMET Validity

Because of the NMET’s considerable social weight and its substantial practical significance as a high-stakes examination, its validity is a focal point of educational assessment research. Current empirical studies of NMET validity concentrate mainly on the content validity, cognitive validity, or constructs of particular test papers and task types (e.g., Cai, 2023; Y. B. Chen, 2024; Dong, 2008, 2010; Gu, 2008; Huang et al., 2023; Wang & Yang, 2009; Z. Q. Yang et al., 2018; C. Q. Zhang, 2015). Other studies examine test design techniques or conduct comparative validity studies (e.g., K. Chen, 2019a; Cheng & Wang, 2021; Deng, 2012; Li et al., 2014; Li & Xiu, 2009; Xu & Zou, 2013). Still others evaluate the quality of test design and offer interpretations of or reflections on it (e.g., Cheng, 2022; Han & Zhang, 2015; Lv, 2017; F. Zhang, 2012; Y. Zhang & Gao, 2015). Researchers have also explored the application of the Gaokao assessment framework to the NMET (e.g., Gao & Gao, 2021; Qiao & Li, 2021; Yu et al., 2018; Zhao et al., 2023) and assessed the rating quality or effectiveness of NMET task types (e.g., K. Chen, 2019b; K. Chen & Zhang, 2020; He et al., 2023; C. Q. Zhang et al., 2020).

Numerous studies have assessed the NMET’s validity, examining the extent to which the performances elicited by test tasks meet the expectations of test developers and proponents, or identifying problems and suggesting improvements to content design; however, most focus on the validity of specific test papers or task types (Pan et al., 2022). Research on the relationship between NMET results and other variables, and on the actual and potential consequences of score use, remains notably scarce, although both should be integral components of NMET validation. Validity should also be gauged against external, independent criteria, for example by comparing students’ test outcomes with their subsequent academic performance, comparing students’ performance with the judgments of experienced teachers, and confirming test validity through long-term observation of candidates’ linguistic competence (H. Z. Yang, 2015). To help fill this gap and enrich NMET validation research, this paper investigates the following questions: How consistent are college freshmen’s academic performance and their Gaokao English results? Do these results meet the expectations of selection? What are the implications for testing and assessment practice and research, and for an effective transition in English teaching from high school to university?

Research Design

Research Subjects

The subjects of the study were first-year English learners of the Class of 2020 at a university in central China. The university is a provincial application-oriented institution, and most of its students come from within the province; students from other provinces, including Hebei, Anhui, Shanxi, Hunan, Inner Mongolia, Hainan, and Gansu, make up approximately one-third of the student body. The NMET is administered nationally; however, in certain provinces such as Henan and Shanxi, listening test results are not counted toward the final score.

Data Collection and Analysis

In line with the objectives of the college English curriculum and its emphasis on cultivating students’ competence in using language, the Academic Affairs Office organized a listening test for non-English majors immediately after enrollment. Its purpose was to gauge students’ general listening proficiency and their readiness for college English courses. The test items, mainly multiple-choice questions on short dialogs, conversations, and monologs, were drawn primarily from a College English Listening and Speaking textbook¹. A total of 565 first-year non-English majors (learners of English as a foreign language) volunteered to participate, yielding 520 valid records containing both a listening test score and an NMET score.

The study also draws on the NMET scores of 193 students in four classes taught by the author, together with their English achievement test scores during the first academic year: the mid-term and final exams of the first semester and the final exam of the second semester (the mid-term exam was abolished from the second semester onward). The achievement tests were compiled from items of the Public English Test System (PETS) Level 3 and the College English Test (CET) Band 4, adjusted to a lower difficulty level to match the proficiency of first-year students. They comprised listening, reading, cloze, translation, and writing sections and were designed collaboratively by two experienced college English teachers with more than 10 years of teaching experience; the test papers were thoroughly checked for errors. Correlation analysis and linear regression analysis were performed in SPSS 20.0 to examine the relationship between students’ NMET results and their post-enrollment English achievement scores and to test its statistical significance.

Throughout the teaching period, the author observed students’ English performance in class and held post-class conversations and online interviews with individual students whose performance fluctuated markedly. Six students were selected through convenience and judgment sampling to uncover the factors behind these variations. Questions included “How was your English listening practice during secondary school?” and “How do you view your results on the listening proficiency test?” The interview data were analyzed through thematic analysis, and the author discussed points of divergence with another researcher until consensus was reached.

Research Results and Analysis

Students’ English Listening Proficiency Test Results and NMET Results

The listening proficiency test was administered to non-English majors upon university entry. The students were highly motivated, and most had scored above 100 on the NMET. Tables 1 and 2 present the students’ scores on the two tests and the correlation between them, revealing a marked discrepancy between the two sets of scores.

Tables 1 and 2 show that although the students’ average NMET score was 111.38 out of 150 (about 74% of the maximum), their average listening test score was only 39.14 out of 100, suggesting a substantial gap in listening proficiency. The Pearson correlation coefficient between NMET scores and listening test scores was .239, a statistically significant (p < .01) but weak positive correlation. Under Grimm’s (1993) classification, in which a coefficient below .39 indicates a low correlation, this suggests that the NMET may not be a robust predictor of listening skills.
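The statistics above can be reproduced from raw paired scores. The Python sketch below is illustrative only and is not the study's procedure (the analysis itself was run in SPSS 20.0): it computes Pearson's r from two equal-length score lists, the standard t statistic for testing whether a correlation differs from zero, t = r·√(n − 2)/√(1 − r²) with df = n − 2, and applies the single .39 cutoff quoted from Grimm (1993). The function names are hypothetical, and no other Grimm thresholds are assumed.

```python
import math


def pearson_r(x, y):
    """Pearson product-moment correlation between two paired score lists."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need two equal-length lists with at least 3 pairs")
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    # Sum of cross-products and sums of squares about the means
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def t_for_r(r, n):
    """t statistic (df = n - 2) for testing H0: population correlation = 0."""
    return r * math.sqrt(n - 2) / math.sqrt(1 - r * r)


def is_low_correlation(r, cutoff=0.39):
    """Apply only the cutoff cited in the text: |r| below .39 counts as low."""
    return abs(r) < cutoff


# Values reported for the listening study: r = .239, n = 520 valid records
t = t_for_r(0.239, 520)
print(f"t = {t:.2f}, df = {520 - 2}, low correlation: {is_low_correlation(0.239)}")
```

With n = 520, t is about 5.60, well above the two-tailed critical value of roughly 2.59 at the .01 level for df = 518. This illustrates how a correlation can be clearly significant in a large sample while still being weak in strength.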

Table 1. Results of NMET and Listening Proficiency Test.

Table 2. Correlation of NMET and Listening Proficiency Test.

Note. Correlation is significant at the .01 level (two-tailed).

The author’s class observation and post-hoc interviews with individual students indicate that the question format and score use of the Gaokao listening section had a profound impact on students’ listening and speaking skills throughout high school. As students reported, “In high school, we were used to seeing the listening questions in writing before the audio was played. Now, however, the questions are not disclosed until the audio has finished, which we are not used to. Listening practice was not a central focus in high school, and our textbooks included few listening exercises. Usually, we would just finish a listening workbook before the final exam, and most of the test questions were chosen directly from it.”

These remarks point to students’ question-solving habits and listening strategies, and indicate that the secondary school listening curriculum revolves mainly around textbook exercises, with focused training on exam-type questions before the NMET. In provinces such as Henan and Shanxi, where English listening scores are not included in the Gaokao total, both teachers and students pay little attention to listening. Another student said, “Listening received little attention in general, and our basic English skills were weak. Listening was not part of our daily study routine, and there was not enough class time to explain the questions. So we just gave up on listening, especially since it was not tested in the third year of high school.”

In high school, students’ listening and speaking practice was largely exam-oriented, shaped by the NMET question format and by how scores were used. As a result, students struggle to meet the requirements of college English courses, particularly in listening and speaking, which they find challenging and which undermines their confidence. Students reported difficulty adjusting to college listening tests in which the questions are delivered in the audio, having previously been accustomed to reading printed questions before listening. This highlights the need to reevaluate high school English curricula so that they better prepare students for the demands of university-level English assessments.

Students’ English Achievement Test Results and NMET Scores

The first-year English achievement tests were scored by machine for selected-response items and through peer review (double marking by two graders) for constructed-response items. To ensure the reliability of scoring for these subjective items, the graders underwent a rigorous training phase in which they were calibrated against a set of predefined criteria to minimize inter-rater variability. When the two graders’ scores differed substantially, a third grader was brought in to provide the deciding score. Reliability checks were also conducted at regular intervals to further strengthen marking consistency.
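The double-marking rule described above can be expressed as a small decision procedure. The Python sketch below assumes, hypothetically, that the two graders' scores are averaged when they differ by no more than a fixed gap (3 points here, an illustrative value not reported in the study) and that the third grader's score is final otherwise. It also computes a simple agreement rate of the kind a periodic reliability check might track. All names and the threshold are assumptions.

```python
from typing import Iterable, Optional, Tuple

# Hypothetical maximum acceptable gap between two graders (not from the study)
MAX_GAP = 3.0


def final_score(grader_a: float, grader_b: float,
                grader_c: Optional[float] = None,
                max_gap: float = MAX_GAP) -> float:
    """Resolve a double-marked constructed-response score."""
    if abs(grader_a - grader_b) <= max_gap:
        # Scores agree closely enough: average the two graders
        return (grader_a + grader_b) / 2
    if grader_c is None:
        raise ValueError("scores differ by more than max_gap; a third grader is needed")
    # Substantial discrepancy: the third grader's score is decisive
    return grader_c


def agreement_rate(pairs: Iterable[Tuple[float, float]],
                   max_gap: float = MAX_GAP) -> float:
    """Share of scripts on which the two graders fall within max_gap."""
    pairs = list(pairs)
    if not pairs:
        raise ValueError("no score pairs supplied")
    within = sum(1 for a, b in pairs if abs(a - b) <= max_gap)
    return within / len(pairs)


print(final_score(12, 14))       # graders agree: scores are averaged
print(final_score(8, 14, 11))    # discrepancy: third grader decides
print(agreement_rate([(12, 14), (8, 14), (10, 10)]))
```

In practice, the gap threshold and the rule for combining scores would follow whatever marking scheme is actually in force; the sketch only shows the shape of the decision.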

As Table 3 shows, the Pearson correlation coefficients between students’ NMET scores and their first-year college English achievement scores are .625, .635, and .479 for the first-semester mid-term exam, the first-semester final exam, and the second-semester final exam, respectively, indicating moderate correlations of varying strength. Figures 1 and 2 plot NMET scores against first-semester exam scores and show a consistent positive trend, supporting the predictive value of NMET scores for university English performance. Figure 3 shows greater dispersion in the relationship between NMET results and second-semester final exam scores, suggesting that the predictive power of NMET scores weakens as students progress through university.

Table 3. Correlation of NMET and English Achievement Test Results.

Note. Correlation is significant at the .01 level (two-tailed).

Figure 1. Relationship of NMET results and mid-term exam scores in the first semester.

Figure 2. Relationship of NMET results and final exam scores in the first semester.

Figure 3. Relationship of NMET results and final exam scores in the second semester.

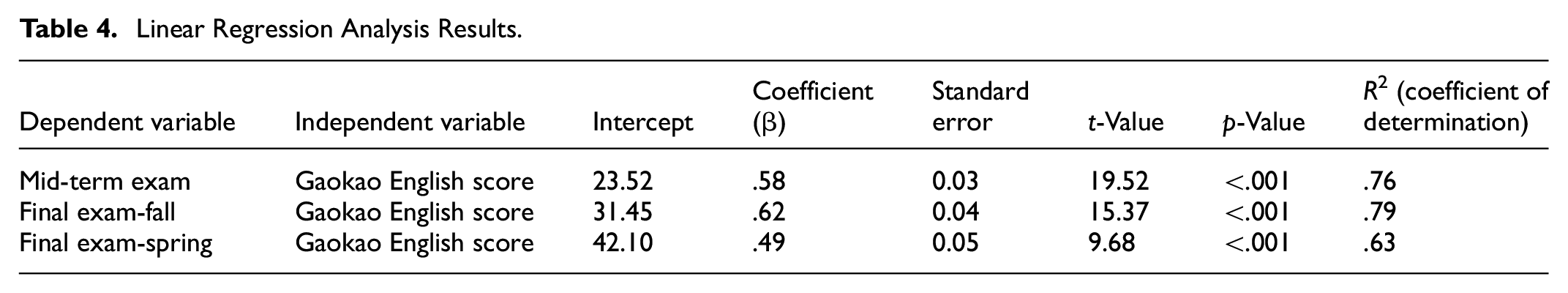

A linear regression analysis was conducted to further explore the relationship between Gaokao English results and students’ university academic performance.

Table 4 shows a significant positive relationship between students’ Gaokao English scores and their university English exam results in the first year, together with the scores predicted from Gaokao performance. The coefficients (β) indicate the expected change in university exam scores for each one-point increase in the Gaokao English score, and each model is statistically significant (p < .001). The R² value of .76 for the mid-term exam model implies that Gaokao English scores explain 76% of the variability in mid-term exam scores; the R² of .79 for the first-semester final exam model implies that they account for 79% of the variability in final exam scores; and the R² of .63 for the second-semester final exam model implies that they explain 63% of the variability in spring-semester final exam scores. These results support the view that Gaokao English scores can serve as a predictor of university English performance: higher Gaokao English scores tend to predict higher university English exam results. However, the predictive power of Gaokao scores diminishes over time, suggesting that other factors in the university setting increasingly influence students’ academic performance. Educators and policymakers should therefore consider these factors to understand and support students’ learning development more fully.
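To show how such a model is estimated, the Python sketch below fits an ordinary least-squares regression with a single predictor (the NMET score) and returns the slope, intercept, and R². The sample scores are hypothetical and are not the study's data, and the function names are illustrative. One property is worth noting: when a model has only one predictor, R² equals the square of Pearson's r. Assuming each model in Table 4 uses the NMET score as its sole predictor, its R² can therefore be cross-checked against the corresponding correlation in Table 3.

```python
import math


def pearson_r(x, y):
    """Pearson correlation, used here only to check the R-squared identity."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def simple_ols(x, y):
    """Fit y = intercept + slope * x by least squares; return (slope, intercept, r2)."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need two equal-length lists with at least 3 pairs")
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx                # expected change in y per one-point increase in x
    intercept = my - slope * mx
    ss_res = sum((b - (intercept + slope * a)) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - my) ** 2 for b in y)
    r2 = 1 - ss_res / ss_tot         # share of variance in y explained by x
    return slope, intercept, r2


# Hypothetical NMET scores and first-year exam scores (illustration only)
nmet = [95, 102, 110, 118, 125, 131]
exam = [62, 70, 68, 79, 83, 80]

slope, intercept, r2 = simple_ols(nmet, exam)
r = pearson_r(nmet, exam)
print(f"slope = {slope:.3f}, intercept = {intercept:.2f}, R2 = {r2:.3f}, r^2 = {r * r:.3f}")
print(f"predicted exam score for NMET = 120: {intercept + slope * 120:.1f}")
```

The slope plays the role of the unstandardized coefficient described above, and the printed R² and r² agree for any single-predictor model.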

Table 4. Linear Regression Analysis Results.

It should be noted that the students come from diverse regions across the country with varying levels of educational resources. The difficulty of the national examination papers varies slightly, and policies on the use of NMET scores differ. Pre-admission NMET scores alone therefore cannot fully capture students’ actual proficiency.

Teaching observations, individual interviews, and communication with students reveal that the influence of many factors on academic outcomes, including adaptation to university, motivation, self-discipline, and learning strategies, cannot be overlooked. For instance, two students who had scored above 120 on the NMET lacked motivation after enrollment, which resulted in mediocre academic achievement. Some students with relatively good Gaokao English scores struggled to adapt to university curricula and teaching approaches, showing limited capacity for autonomous learning and an inability to make effective use of supplementary resources to complete tasks. Students with lower Gaokao scores or weaker foundational knowledge wanted the instructor to concentrate on explaining grammar and to provide more in-class exercises, as in high school. Nonetheless, some students with unremarkable Gaokao English scores but strong motivation and self-discipline excelled academically: they showed greater learning motivation, better classroom performance, and persistent extracurricular effort, and their academic standing improved markedly. By contrast, a few students entered university without clear academic goals, which led to stagnation and a sense of limited learning gains. If such students fail to maintain sound study and reading habits, their performance may decline. They often focus solely on meeting the minimum exam requirements, neglecting the learning process and the pursuit of educational goals.

As for test preparation, students commonly cram in the final weeks before an exam; if they fail, they rely on subsequent makeup exams or on retaking the course. As one student remarked, “If I fail once, I’ll try again; eventually I’ll pass the exam.” Summative assessments continue to occupy a significant position in the university’s evaluation framework. Three groups of students can be distinguished. The first group keeps learning regardless of assessments, showing high self-discipline, diligence, and well-defined goals; however, this group is a minority. The second group studies only in the period immediately before an exam or when aiming to pass a specific assessment. The third group does not study even when facing exams, a pattern associated with low self-awareness and low confidence, and engages only minimally in academic work. Most students, including those with good academic performance, belong to the second group. Once they pass an exam, whether a final exam or a proficiency test such as CET Band 4 or Band 6, their motivation wanes. It is therefore imperative for students to set clear learning plans and goals, and for a unified and consistent proficiency benchmark to guide their language learning, so that teaching and learning remain integrated over time and the dominance of summative assessments is reduced.

The analysis underscores the complexity of academic success and the multifaceted factors impacting student performance, advocating for a holistic educational approach that emphasizes the process of learning, encouraging students to develop a deep understanding and mastery of the subject matter that extends beyond mere exam results.

Discussion and Implications

About English Testing and Assessment

To ensure fairness and rigor, an increasing number of provinces and regions in China are adopting the unified national papers for the NMET. However, regional disparities persist, particularly in the types of exam papers adopted and in score-use policies. Gaokao results are pivotal for university admission, yet the scores are also put to diverse other uses, including evaluating and selecting students and evaluating teachers and schools. Clear and precise testing objectives increase the likelihood of well-constructed tests (O’Sullivan, 2015), which is fundamental to achieving educational equity and validity. According to Bachman and Palmer’s (2010) classification of target language use domains, the content of Gaokao English belongs to the instructional domain, and its purpose is clear: selection for university entrance. The scope of the test content should therefore be based on both the content of high school English teaching and the English course requirements of universities. The positive impact of Gaokao English can be fully realized only through an approach that integrates high school and university English teaching requirements (Han & Zhang, 2015).

Scores must therefore be used with caution, as any change in how they are used can affect teaching and learning. Policies on whether listening scores are included in the Gaokao English total have significantly influenced high school English instruction, learning, and assessment, even though proficiency in English listening and speaking is essential for a smooth transition to university study and for the long-term development of language ability. Every policy adjustment should be rigorously evaluated and piloted, then refined and promptly disclosed to the public to enhance the transparency and credibility of the scores (H. Z. Yang, 2015; Zhao et al., 2023). Educational policymakers, administrators, and educators must collaboratively consider the implications of these policies for students’ language proficiency development.

Given that summative assessments remain paramount and that students’ prospects for further education or employment depend heavily on academic performance, secondary and tertiary institutions should adopt a diverse array of school-based testing and evaluation methods, integrating summative and formative assessment to appraise students’ learning achievements and competencies holistically. Summative assessments must be scientifically rigorous, aligned with the curriculum, and structured to reflect accurately students’ language competence and their application of learned content.

About English Teaching From Secondary to Tertiary Level

The “English Curriculum Standards for General High School” (Ministry of Education of the People’s Republic of China, 2017 Edition, Revised 2020) underscores the comprehensive development of students’ English proficiency, cultural awareness, cognitive abilities, and learning skills. It aims to build a strong foundation in English that facilitates the acquisition of interdisciplinary knowledge and an appreciation of global cultural diversity and that broadens opportunities for advanced English study and professional development, and it advocates a pedagogy that cultivates core competencies through autonomous, collaborative, and inquiry-based learning. As O’Sullivan (2015) remarked, “what does not get tested usually does not get taught.” If sociocultural pressures and score policies narrow teaching and learning to exam preparation, these broader educational goals may be undermined, high school instruction adversely affected, and the development of students’ lifelong learning capabilities impeded. Despite the Gaokao’s significant influence on the direction of secondary education, the goals and structure of the English curriculum should not be aligned exclusively with NMET content; rather, the curriculum should foster a broad range of language skills that extend beyond the examination, preparing students for the complexities of real-world language use rather than solely for the test.

Likewise, tertiary English instruction should not be organized solely around proficiency tests such as CET-4 and CET-6, nor should these exams serve as the sole criteria for evaluating and ranking teachers and institutions or as the benchmark of pedagogical success. Clearly defined learning objectives at each stage of English learning are crucial for raising students’ awareness of, and engagement with, the competencies targeted by the college English curriculum (O’Sullivan, 2015). Uniform language proficiency scales make students’ academic performance comparable and coherent across stages, and the descriptors in China’s Standards of English (CSE) can serve as a scientific reference for this purpose (Liu & Han, 2018). Such alignment not only nurtures a proactive learning environment that encourages continuous improvement and self-confidence beyond the classroom but also ensures that students are well prepared for the demands of higher education and the workplace.

Teachers should integrate practical assessment methods into the English learning process, such as oral presentations, formative classroom assessment, peer evaluation, and e-portfolios. As designers of instruction, teachers are well placed to evaluate students’ attainment of learning objectives, track their progress, and provide targeted support where needed. When students encounter difficulties, teachers should diagnose the underlying causes and implement targeted interventions to keep students’ momentum positive. Feedback is most effective when delivered at the right moment, preferably during the course of study rather than afterward, to maximize student engagement and learning outcomes (Hu, 2014). Teachers should reflect continually on their instructional effectiveness, refining course design, pedagogical strategies, and assessment techniques in line with students’ proficiency levels and targeted learning outcomes. Learning content, objectives, and proficiency benchmarks should be aligned with the expected student achievement, fostering an environment conducive to teaching, learning, and the sustained academic growth of each student. This approach not only enhances the immediate educational experience but also lays the foundation for students’ lifelong learning.

In conclusion, the educational community must navigate the complex interplay between assessment and teaching. Assessment should serve learning rather than be treated as the ultimate goal. By aligning teaching practices with a comprehensive set of learning objectives and employing a variety of assessment methods, educators can create an environment conducive to the long-term development of students’ English language proficiency.

Conclusion

This study provides further empirical evidence for NMET validation. Overall, there is a moderate correlation between students’ Gaokao English scores and their academic performance in college English courses, consistent with the emphasis placed by Weir (2005) and H. Z. Yang (2015) on external validity evidence for high-stakes exams, that is, evidence based on relations to other variables. However, the predictive power of Gaokao English scores for university academic performance decreases over time, echoing concerns raised by Messick (1995) about the temporal stability of test scores. The correlation between students’ Gaokao English scores and their listening proficiency at university is weak, which warrants further investigation into the predictive validity of the components of high-stakes exams, as highlighted by O’Sullivan (2015). The exam format and score-use policies of Gaokao English have had certain potential negative effects on the connection between high school and university English learning, a phenomenon also noted by Y. Zhang and Gao (2015) and Cheng and Wang (2021) in their discussions of the impact of test design on pedagogical practice. Students’ university academic performance is influenced by various factors, such as high-stakes summative exams, motivation, and cognition.

The issues identified here are likely shared by colleges and universities of the same type, for which the findings may offer useful insights. Educational policymakers, administrators, and teachers should consider the broader implications of testing policies for teaching and learning, as suggested by Zumbo and Hubley (2016), and strive for a balanced approach that values both the assessment of language proficiency and the promotion of effective teaching. Teaching should not be driven solely by exams, nor should its purpose be to pass various assessments, which erroneously turns “teaching” into “teaching for exams.” Instead, the focus should be on the educational value of assessment, allowing assessment to genuinely promote student learning, a stance also advocated by O’Sullivan (2015) and H. Z. Yang (2015).

Zumbo and Hubley (2016) argue that gathering more evidence suited to the test and its context is preferable. By analogy, although consulting several clocks to determine the current time may yield conflicting readings, people still prefer the richer and more comprehensive picture that multiple sources provide.

This study has a significant limitation in scope: the survey was limited in scale, and the sample did not include students from top-tier institutions, which may limit the representativeness of the findings. Future research should extend to a diverse range of higher education institutions differing in type and region and examine the long-term effects of high-stakes testing on students’ language development. In addition, the comparison between students’ Gaokao English results and their post-enrollment English achievement requires further inquiry to strengthen the validation approach and refine its operational procedures.