Abstract

In online learning, many teachers have carried traditional offline assessment methods into online assessment without adequately testing and assessing the consequences. Therefore, this descriptive study aims to identify teachers’ and students’ perceptions of online assessments in evaluating English as a Foreign Language (EFL) students’ learning. A convenience sample of teachers and students was recruited to respond to a questionnaire on their beliefs about and reported use of online assessment methods through learning management systems. Additionally, semi-structured interviews were conducted to explore participants’ experiences with online assessment. The results demonstrated that teachers’ and students’ beliefs regarding online assessments for EFL learning were moderate, indicating a shared but cautious acceptance of these methods. The analysis revealed no significant differences in perceptions based on participants’ roles, suggesting a common understanding of the challenges and benefits of online assessments among educators and learners alike. Qualitative responses highlighted six key themes: the nature of online assessments, time and effort required, performance outcomes, psychological pressure experienced, and overall user experience, reflecting concerns regarding technological readiness, academic integrity, and the need for more diverse assessment methods. To enhance the effectiveness of online assessments in the EFL context, the study recommends ensuring a robust technological infrastructure, offering comprehensive training for teachers on various online assessment methods, diversifying assessment techniques to capture a broader range of student abilities, and fostering a culture of ethical assessment practices through close monitoring of student work.

Plain language summary

Many teachers have started using methods for online tests similar to those used for tests in classrooms, but they haven’t fully checked the results. According to teachers and students, this study wants to see how effective online tests are for EFL students. The research used a type of study where they describe things and ask questions to achieve its goals. They asked teachers and students to fill out a questionnaire they made to find out what they think about and how they use online tests. They also talked to some of them in more detail to learn about their experiences with online tests. The results showed that both teachers and students think online tests are okay for helping EFL students learn. They also found that how much people use online tests is just okay. Also, whether someone is a teacher or student or their gender doesn’t seem to change what they think. The interviews showed six main things: what online tests are, the time and effort they take, how well students do, how stressful they are, and what the overall experience is like. To make online tests better for EFL students, the study suggests a few things like making sure the technology works well, teaching teachers how to use online tests, using different kinds of tests, and making sure teachers pay close attention to their students. They also say it’s important to think about what’s fair and right when giving tests online.

Introduction

As education increasingly shifts toward online learning and assessment, understanding teachers’ and students’ perceptions of online assessment methods becomes crucial. This transformation, accelerated by global health crises, rising educational costs, and rapid technological advancements, necessitates a reevaluation of assessment strategies to effectively measure student performance and learning outcomes. In addition, traditional assessment methods face considerable challenges in the online learning environment. Issues such as cheating, dishonesty, technology competency, and internet connectivity have emerged as significant obstacles (Bilen & Matros, 2020; Elsalem et al., 2021; Guangul et al., 2020; Lee et al., 2022). For instance, online assessments can be more susceptible to dishonest practices due to the lack of direct supervision, while technology-related issues can hinder students’ ability to complete assessments effectively. Previous research has extensively explored the effectiveness of online teaching methods and their impact on students’ learning success (Akram et al., 2021; Elzainy et al., 2020; Erarslan & Topkaya, 2017). Studies have also assessed online evaluation methods in terms of student and teacher motivation and success in higher education more broadly (Almossa, 2021; Elsalem et al., 2021; Hazaymeh, 2021; Hidalgo-Camacho et al., 2021; Lee et al., 2022; Thambusamy & Singh, 2021; Zulfa & Ratri, 2022). However, there remains a notable gap in research specifically addressing the perceptions of online assessments among teachers and students within the EFL context in Saudi higher education institutions (Almossa, 2021). Understanding the perceptions of both teachers and students is essential for improving online assessment practices. Teachers’ and students’ beliefs about the effectiveness and fairness of online assessments can significantly impact their engagement with and success in these assessments. 
Furthermore, insights into these perceptions can help identify areas for improvement and guide the development of more effective assessment strategies. This study aims to address this gap by investigating how teachers and students perceive online assessment methods, particularly through learning management systems such as Blackboard, in evaluating EFL students’ learning. By focusing on these perceptions and reported use, this research seeks to provide insights that can inform the design and implementation of online assessments, ultimately enhancing the effectiveness of EFL education in Saudi higher education institutions. The problem is addressed through the following research questions:

(1) What are teachers’ and students’ perceptions of online assessment methods in assessing EFL students’ learning?

(2) What online assessment methods do respondents report in assessing EFL students’ learning?

(3) Are there any differences between the respondents’ answers attributed to role?

Review of Literature

EFL Online Assessment

Online assessment refers to any method used to evaluate student achievement or provide feedback in fully online courses. This encompasses various forms, from entirely online exams to online submissions of assignments like essays (Weleschuk et al., 2019). Successful online formative assessment aligns with standards such as authenticity, individualized instruction, instant feedback, and student involvement (Gikandi, 2010). The advantages of online assessment include flexible timing, allowing students to complete assessments at their convenience, and opportunities for multiple attempts, which can enhance learning outcomes (Gikandi, 2010). Moreover, prompt feedback enables students to identify and address weaknesses, ultimately reducing anxiety levels prior to summative assessments (Cassady & Gridley, 2005; Wang et al., 2006).

Despite the recognized potential of online assessment to enhance educational practices, a significant gap exists in the literature regarding the perceptions and practices of both teachers and students. Understanding these perspectives is crucial, as they can significantly impact the effectiveness of online assessments. Teachers’ perceptions often emphasize pedagogical effectiveness, reliability, and the role of online assessments in monitoring student progress (DeLuca & Klinger, 2010; Gaytan & McEwen, 2007). In contrast, students typically assess online assessments based on their impact on grades and learning experiences, raising concerns about fairness and clarity (Hargreaves, 2007; Nicol & Macfarlane-Dick, 2006).

This divergence in focus suggests that investigating both teachers’ and students’ perceptions in a single study can provide a comprehensive understanding of online assessment practices. The literature indicates that while teachers acknowledge the importance of online assessments, their adoption remains inconsistent, often due to barriers such as limited technological proficiency and resistance to change (Rolim & Isaias, 2019). Exploring these factors together can reveal insights into the challenges faced by educators and the expectations of students, thereby enhancing the design and implementation of online assessments.

Previous Studies

Many studies have explored factors contributing to students’ satisfaction with online assessments. However, the initial focus on satisfaction overlooks the need for a more integrated understanding of how both teachers’ and students’ perceptions interact. For instance, while some research highlights student satisfaction with project-based e-assessments (Romeu Fontanillas et al., 2016), others note challenges, such as technical issues during online assessments (Almossa, 2021; Lee et al., 2022). These contrasting findings underscore the importance of examining how teachers’ strategies and students’ experiences influence the effectiveness and acceptance of online assessments.

Furthermore, studies have indicated that academic dishonesty and technical difficulties are significant challenges in online assessments (Bilen & Matros, 2020; Elsalem et al., 2021; Guangul et al., 2020). Addressing these challenges requires an understanding of both teachers’ perspectives on assessment integrity and students’ experiences with potential misconduct. A comprehensive investigation that includes both viewpoints can lead to more effective strategies for minimizing dishonesty and enhancing the credibility of online assessments.

While the existing literature acknowledges the benefits of online assessments, it often fails to critically analyze their impact on language proficiency and academic achievement. By exploring teachers’ and students’ perceptions together, future research can fill this gap, examining how these assessments align with learning objectives and whether they genuinely support language development. The integration of their insights can provide a more nuanced understanding of the effectiveness of online assessments in the EFL context, ultimately informing better practices and policies.

Importance of Exploring Teacher and Student Perceptions

A focused examination of both teachers’ and students’ perceptions is essential for understanding the challenges and effectiveness of online assessment in EFL contexts. This integrated approach can illuminate the interplay between pedagogical strategies and student experiences, revealing how each perspective can inform the other. For instance, teachers may benefit from understanding student concerns regarding assessment fairness, which can lead to adjustments in their assessment design. Conversely, students can gain insights into the pedagogical intentions behind assessments, fostering a deeper appreciation for their role in the learning process.

Additionally, an integrated perspective can facilitate the development of targeted professional development programs for teachers, addressing identified gaps in technological proficiency and pedagogical strategies. Understanding both perspectives can help in crafting assessments that not only meet educational goals but also resonate with students’ learning preferences and needs.

In summary, exploring the perceptions and practices of both teachers and students in a unified study is critical for enhancing online assessment practices in EFL contexts. This comprehensive understanding can contribute to identifying strategies that align online assessments with educational objectives, ultimately leading to improved student learning outcomes. By bridging the gap between teachers’ and students’ views, future research can advance the field of EFL education, ensuring that online assessments serve as effective tools for language development and academic success.

Methodology

This study examines teachers’ and students’ perceptions of online assessments in evaluating EFL students’ learning using a descriptive-survey design. Descriptive research aims to observe, document, and categorize current phenomena, highlighting relationships between variables for a clearer understanding of the subject (Dulock, 1993). A self-developed questionnaire was used to assess teachers’ and students’ beliefs and reported use of online assessments via Blackboard. Additionally, semi-structured interviews gathered in-depth insights into their views on online assessments and explored suggestions for enhancing their effectiveness.

Population and Sample of the Study

The current study was conducted among EFL teachers and undergraduates at Saudi higher education institutions, specifically targeting individuals at the College of Languages and Translation at Najran University, comprising a study population of approximately 82 faculty members and 1,000 students. The researchers employed a convenience sampling technique to form the study sample. While acknowledging that this approach limits the generalizability of the findings, the researchers chose it because of the practical difficulty of reaching the entire population. The link to the questionnaire was shared with the population via email and WhatsApp and remained accessible for 4 weeks, with reminders sent at different times. A total of 56 faculty members and 237 students responded to the questionnaire. In addition, a purposive sampling technique was applied to select interviewees (

Distribution of the Study Sample.

Instrumentation

The study utilized a questionnaire developed by the researchers, drawing on their teaching experience in online learning and assessment, as well as insights from existing research (Almossa, 2021; Elsalem et al., 2021; Elzainy et al., 2020; Lee et al., 2022; Ogange et al., 2018; Weleschuk et al., 2019). To construct the primary section of the questionnaire, which is divided into two domains (beliefs about and reported use of online assessment methods via Blackboard), several foundational studies were referenced to ensure the comprehensiveness and relevance of the items included.

The section of the questionnaire on beliefs about online assessment methods was primarily shaped by insights from several key studies. Almossa’s (2021) research was instrumental in formulating item 21, which addresses potential technological hindrances encountered during the online assessment process. In addition, the study by Elsalem et al. (2021) contributed to several items in this section: item 5 examines student comfort with online exams, item 12 addresses concerns about remote assessment potentially facilitating cheating, and item 20 evaluates whether high levels of student achievement in online settings are indicative of the effectiveness of the educational process. Elzainy et al. (2020) also provided valuable input, particularly for item 6, which explores the capacity of online assessments to reflect students’ true abilities, and item 10, which questions the efficacy of objective questions in remote assessments. Furthermore, Lee et al. (2022) contributed a range of items, including item 1, which assesses whether online assessment reduces student anxiety, item 3, which investigates the effectiveness of essay questions, and item 5, which considers student comfort with online exams in terms of location and timing. Additional items from Lee et al. (2022) explore the role of online discussion forums (item 7), alternative assessment methods like portfolios (item 8), and the reliability of online assessments (items 9, 18, and 19).

For the reported use of online assessment methods section, the adaptation of items was guided by several studies. Elsalem et al. (2021) provided items such as item 1, which examines the practice of recording students for remote assessment, item 7, focusing on the creation of electronic tests, item 9, which looks at educational and research assignments, item 13, concerning the use of multiple-choice questions, and item 15, related to open book projects. Similarly, Lee et al. (2022) contributed items like item 4, which explores the use of discussion forums for topic discussions and assessments, item 6, involving student comments and suggestions, and item 10, related to electronic portfolios. Moreover, Ogange et al. (2018) provided items such as item 11, which investigates the use of individual interviews, and item 14, focusing on the use of essay questions to enhance assessment. Finally, Weleschuk et al. (2019) contributed to the questionnaire with items like item 2, which addresses the use of recordings in remote assessments, item 3, which explores various methods of remote assessment, and item 5, which investigates the use of audio and video recordings for assessment purposes. By integrating insights from these foundational studies, the questionnaire was designed to encompass a broad spectrum of beliefs and practices related to online assessment methods, ensuring a well-rounded and robust evaluation tool.

The questionnaire comprised two sections: background information (participants’ role and gender) and the main section (questionnaire items). The closed-item section consisted of 37 items, focusing on two main areas: beliefs about online assessment methods (22 items) and the reported use of online assessment methods via a learning management system (Blackboard) (15 items). To determine scores for responses in the questionnaire, numerical values were assigned based on a Likert scale. Respondents indicated their degree of agreement with each statement, ranging from 1 for “Strongly Disagree” to 5 for “Strongly Agree.” The scores for each question were then summed to calculate the overall score. The degree of participants’ agreement was categorized using the following scale: 1 to 1.80 = a very low degree, >1.80 to 2.60 = a low degree, >2.60 to 3.40 = a medium degree, >3.40 to 4.20 = a high degree, >4.20 to 5 = a very high degree.
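The five categories above are equal-width bands of 0.80, which is what results from dividing the 1 to 5 range into five intervals. The following Python sketch illustrates that mapping; the function name and the sample responses are hypothetical and are not taken from the study's data or analysis scripts.

```python
def agreement_level(mean_score: float) -> str:
    """Map a mean Likert score (1-5) to the five-level scale in the text."""
    if not 1.0 <= mean_score <= 5.0:
        raise ValueError("mean score must lie between 1 and 5")
    # Upper bounds are inclusive: '1 to 1.80', '>1.80 to 2.60', and so on.
    bands = [
        (1.80, "very low"),
        (2.60, "low"),
        (3.40, "medium"),
        (4.20, "high"),
        (5.00, "very high"),
    ]
    for upper, label in bands:
        if mean_score <= upper:
            return label
    return "very high"


# Band width: the 1-5 range split into five equal intervals.
band_width = (5 - 1) / 5

# Hypothetical responses of five respondents to a single item.
sample_responses = [3, 4, 2, 3, 4]
sample_mean = sum(sample_responses) / len(sample_responses)
sample_level = agreement_level(sample_mean)
```

Under this mapping, a "moderate" result corresponds to a mean above 2.60 and no higher than 3.40.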

In addition, semi-structured interviews were conducted to gather teachers’ and students’ views on online assessment methods and steps for improving their efficacy. The interviews included two main questions: “Could you tell us about your experience in online assessment (tests, assignments, and other assessment tools) via Blackboard?” and “In your opinion, what does online assessment need to raise its efficiency?” One researcher conducted the interviews, meeting male participants in their offices, while female participants were interviewed by telephone in scheduled sessions. All interviews were recorded and later transcribed, with an average interview time of 10 minutes.

Blackboard is widely utilized as a learning management system in global educational institutions. Serving as an online platform, it simplifies collaborative, communicative, evaluative, and administrative aspects of course content. Teachers can easily share resources, engage in class discussions, conduct assessments, and manage grades, establishing a comprehensive online learning environment. Enhancing communication through features like attendance tracking and collaboration tools, Blackboard fosters a more connected teacher-student relationship. Its broad appeal stems from its adaptability, seamlessly integrating with other technologies (Almelhi, 2021).

Validity

The questionnaire underwent validation through face validity and internal consistency methods. Face validity refers to the extent to which a research instrument, such as a questionnaire or measurement tool, appears to measure its intended construct or trait upon initial inspection. This subjective assessment relies on experts in the subject matter, even if they may not possess expertise in research methodology (Gaber & Gaber, 2010). Ten experts in EFL learning and education were enlisted to assess the face validity of the questionnaire. They were provided with the questionnaire and study objectives to determine whether the instrument accurately measured the research objectives, while also evaluating qualities such as wordiness, clarity, and language. The experts’ feedback resulted in modifications to some items. For example, they suggested revising statements like “Remote assessment of students reduces students’ creativity and innovation” and “The teacher asks students to make electronic portfolios for collecting work and present it to the teacher.” Additionally, they proposed adding new items, such as “Online assessment is not reliable and cannot reflect students’ real academic level,” “Technology hindrance in the online assessment process may affect students’ achievement level,” “Applying assessment methods online that reflect students’ actual level may overburden teachers with time, cost, and effort,” and “The teacher allows students to comment and suggest about their peers’ work.” Furthermore, the experts recommended merging two items to avoid redundancy: “Time allocated for exams is more than enough.”

The internal consistency of the questionnaire was assessed using the Pearson correlation coefficient. A pilot study involving 20 individuals, later excluded from the main study, was conducted to process the data and check whether the items corresponded to their related domains, and domains to the overall scale. The results indicated that the Pearson correlation coefficients between the items and the total score of the domain they belonged to were statistically significant at the levels of (.01) and (.05). The correlation coefficients between the items and the total score for the domain ranged between (.454* and .859**), and the correlation coefficients between the domain and the total score ranged between (.985** and .993**). These results affirm that the tool is valid and capable of achieving the study objectives.
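As an illustration of the item-to-domain check described above, the sketch below computes the Pearson correlation between each item and the total score of its domain. It assumes the total includes the item itself (an uncorrected item-total correlation), which the text does not specify; the function names and the pilot data are hypothetical.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    # Undefined (division by zero) if either sequence is constant.
    return cov / (spread_x * spread_y)


def item_total_correlations(responses):
    """Correlate each item with the domain total.

    responses: one row per respondent, one Likert score per item.
    Assumption: the total includes the item being correlated.
    """
    totals = [sum(row) for row in responses]
    n_items = len(responses[0])
    return [
        pearson_r([row[j] for row in responses], totals)
        for j in range(n_items)
    ]


# Hypothetical pilot data: 5 respondents, 3 items in one domain.
pilot = [[4, 5, 3], [2, 3, 2], [5, 4, 4], [3, 3, 3], [1, 2, 2]]
correlations = item_total_correlations(pilot)
```

Significance levels such as those marked with asterisks in the reported coefficients would come from a separate test on each coefficient, which this sketch does not perform.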

Reliability

The questionnaire’s reliability was checked using Cronbach’s alpha. The scale was applied to an exploratory sample of 20 participants, and the data were then analyzed and reported as shown in Table 2.

Reliability Coefficients.

The Cronbach’s alpha coefficients presented in Table 2 indicate the reliability of the study tool. Cronbach’s alpha is an indicator of internal consistency, assessing how closely the items in a test or scale are correlated. The overall alpha for the entire tool is .95, and the individual domain coefficients range from .91 to .93. A value approaching 1.0 signifies high internal consistency, meaning that the items correlate strongly and consistently measure the same underlying construct. Generally, a Cronbach’s alpha exceeding .70 is deemed acceptable for research, so the values observed here indicate robust internal consistency. In practical terms, these results affirm that the study tool is reliable and consistent in measuring the intended construct.
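For readers unfamiliar with the coefficient, a minimal Python sketch of the standard Cronbach's alpha formula follows. It is not the authors' procedure, which was carried out in SPSS; the function name and the example data are hypothetical.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a respondents-by-items table of scores.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    where k is the number of items. Sample variances are used throughout.
    """
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least 2 items and 2 respondents")
    item_variances = [
        variance([row[j] for row in responses]) for j in range(k)
    ]
    total_variance = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical data: 5 respondents answering 3 items on a 1-5 scale.
example = [[4, 5, 4], [2, 3, 2], [5, 4, 5], [3, 3, 3], [1, 2, 1]]
alpha = cronbach_alpha(example)
```

When every item ranks respondents identically, the item variances account for as little of the total variance as possible and alpha reaches 1.0; weakly related items pull it toward 0.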

Data Analysis

The quantitative data collected from teachers and students were analyzed using SPSS version 25 to address the research questions, aiming to provide insights into the perceptions and practices regarding online assessment methods in evaluating EFL students’ learning. Descriptive statistics, including means, standard deviations, ranks, and levels, were computed to answer Research Questions 1 and 2. For Research Question 1, which investigates teachers’ and students’ perceptions of online assessment methods, the analysis summarizes the overall beliefs regarding the effectiveness and suitability of these methods, identifying areas of agreement and divergence. For Research Question 2, focusing on the online assessment methods reported by respondents, the analysis sheds light on the actual practices and preferences of teachers and students, informing future improvements in assessment strategies. To explore Research Question 3, which examines whether there are differences between respondents’ answers based on their roles as teachers or students, the Mann-Whitney U test was used.

Study Results

The research questions were instrumental in organizing the presentation of the findings. Each section of the findings corresponds directly to a specific research question, allowing for a structured and coherent data exploration. This approach not only enhances clarity but also ensures that the presentation systematically addresses each aspect of the research focus. By aligning the findings with the research questions, we can effectively highlight how the data supports our overall conclusions and insights.

Perceptions of Online Assessment Methods in EFL Students’ Learning (Beliefs)

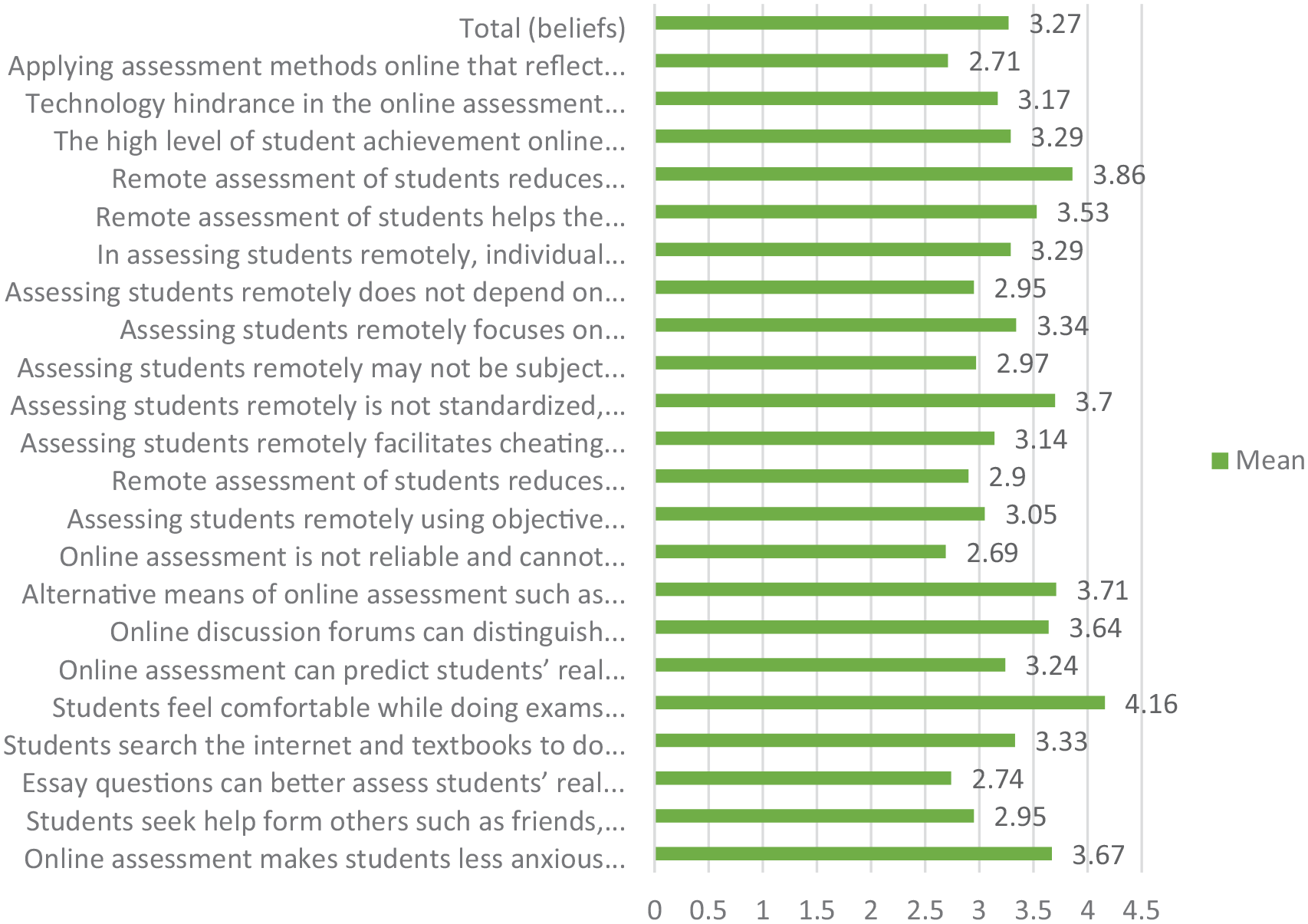

Figure 1 displays the responses of the study sample to their beliefs about online assessment methods in assessing EFL students’ learning level.

Teachers’ and students’ beliefs (means).

Figure 1 reveals that the total score of teachers’ and students’ beliefs about online assessment methods in assessing EFL students’ learning was moderate (

Reported Use of Online Assessment Methods in EFL Students’ Learning

Figure 2 displays teachers’ and students’ perceptions of the reported use of online assessment methods in EFL students’ learning.

Reported use of online assessment methods (means).

Figure 2 presents the total scores reflecting the responses of the study sample concerning teachers’ reported use of online assessment methods for evaluating the academic performance of EFL students through online means, as perceived by both teachers and students. The results indicate a moderate level (

Respondents’ Role on Perceptions of Online Assessment Methods

The Mann-Whitney

Mann-Whitney

The results presented in Table 3 indicate that there were no significant differences in the responses of the study sample based on their roles (teacher or student). This result suggests that participants’ roles did not influence their responses. In other words, both teachers and students share similar opinions regarding online assessment methods in evaluating students’ learning.
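To make the role comparison concrete, the sketch below computes the Mann-Whitney U statistic for two independent groups, using average ranks for tied values. It only illustrates the statistic itself: it does not reproduce the SPSS output in Table 3 and does not compute a p-value, and the function name and scores are hypothetical.

```python
def mann_whitney_u(group_a, group_b):
    """Return the Mann-Whitney U statistic (the smaller of U_a and U_b)."""
    # Pool the observations, remembering which group each came from.
    pooled = sorted(
        [(value, 0) for value in group_a] + [(value, 1) for value in group_b]
    )
    rank_sum_a = 0.0
    i = 0
    n = len(pooled)
    while i < n:
        # Find the run of tied values starting at position i.
        j = i
        while j < n and pooled[j][0] == pooled[i][0]:
            j += 1
        # Positions i..j-1 hold ranks i+1..j; ties share the average rank.
        average_rank = (i + 1 + j) / 2
        for k in range(i, j):
            if pooled[k][1] == 0:
                rank_sum_a += average_rank
        i = j
    n_a, n_b = len(group_a), len(group_b)
    u_a = rank_sum_a - n_a * (n_a + 1) / 2
    u_b = n_a * n_b - u_a
    return min(u_a, u_b)


# Hypothetical mean belief scores for a few teachers and students.
teacher_scores = [3.1, 3.4, 2.9, 3.6]
student_scores = [3.0, 3.3, 3.5, 2.8, 3.2]
u_statistic = mann_whitney_u(teacher_scores, student_scores)
```

A U value close to half the product of the two group sizes, as in this made-up example, is what one would expect when the two groups' scores overlap heavily; a value near 0 indicates almost complete separation.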

Perceptions of Online Assessment Methods in EFL Students’ Learning (Views)

The interview questions gathered data on participants’ views on online assessment and their suggestions for improvement. Through qualitative analysis of the responses to the first interview question, three main themes emerged: advantages and disadvantages, concerns, and suggestions. These themes covered aspects such as time and effort, academic performance, psychological pressure, and overall experiences related to online assessment.

Advantages and Disadvantages of Online Assessment

Participants’ feedback on EFL online assessment reveals a strong consensus regarding its advantages, as well as some challenges. The predominant benefits cited include convenience and comfort. Many participants appreciate the ease with which online assessments can be conducted. For instance, one participant remarked on how the online format respects time management and simplifies the process, stating, “It is easy to go because everything is so easy as instructed and time pace is respected” (P4). Another participant noted that online assessments are comfortable and reduce physical strain and distance issues, highlighting how they save effort and eliminate the need for daily travel to the university (P5, P9). This sentiment is echoed by others who find the online format reduces mental pressure and increases focus, contributing to a more relaxed and effective learning environment (P6, P7).

In addition to comfort, the time and cost efficiency of online assessments are frequently mentioned. Participants noted that these assessments save significant time and effort for both students and teachers, which is crucial for optimizing the learning experience (P11, P9). This efficiency also translates into financial savings by removing the need for daily commuting, thus alleviating some of the logistical burdens associated with traditional in-person assessments.

The positive learning experiences afforded by online assessments are another highlight. Participants indicated that online platforms facilitate better understanding and advancement in studies, while also providing a more engaging and motivating environment for students. For example, one participant described the online assessment experience as “great” for helping students understand their studies better and advancing their learning (P12). Others emphasized the ease and fairness of online assessments for both students and teachers (P8) and noted how such assessments enhance communication between students and teachers (P16).

Despite these advantages, some participants pointed out significant challenges, primarily related to technical issues. Problems such as weak internet connections or device malfunctions can create confusion and disrupt the assessment process. One participant specifically mentioned how these technical glitches can affect the experience, saying, “Slightly confusing when the Internet connection is weak or when there is a defect in the device while solving an electronic test or homework” (P13). This highlights the need for reliable technology to fully leverage the benefits of online assessment.

Concerns and Suggestions

Participants’ feedback reveals a range of concerns about the limitations and potential drawbacks of remote assessment in education. A primary theme that emerged is the need for diversification in assessment methods. Several participants emphasized that relying solely on traditional approaches might not effectively capture students’ true understanding. For example, P1 highlighted the importance of varying assessment tools by incorporating open questions and take-home exams, arguing that such methods encourage deeper thinking and more accurately reflect students’ learning levels. This perspective underscores a broader concern that conventional remote assessment methods might fall short in measuring students’ comprehensive understanding and learning outcomes.

Another major theme is the transparency and accuracy of remote assessments. Participants expressed reservations about how well remote assessments reflect students’ true abilities. P6 pointed out that remote assessments can lack transparency unless technological tools are used to monitor student activity. They noted that impressive results from electronic tests might not align with students’ actual levels when compared to in-person evaluations. This observation suggests a potential gap between remote assessment results and students’ real performance. Similarly, P5 remarked on the ease of preparing remote assessments but questioned their effectiveness in accurately evaluating students’ abilities. These concerns highlight the need for improved methods to ensure that remote assessments are reliable and accurately represent students’ academic levels.

In response to these concerns, participants proposed several strategies for enhancing remote assessment practices. They recommended leveraging technological tools, such as artificial intelligence, to improve monitoring and ensure assessment transparency. P6’s comments on using technology for monitoring underscore the importance of addressing transparency issues. Additionally, participants suggested incorporating a range of assessment tools, including oral questions and varied types of assignments, to better capture students’ performance and understanding. P15 specifically advocated for oral questions to assess students’ real grasp of the material. These recommendations aim to address the limitations of current remote assessment practices by improving their authenticity, accuracy, and overall effectiveness.

Methods of Raising the Efficiency of Online Assessment

Participants’ insights into enhancing the efficacy of online assessment highlight several key themes: technological infrastructure, teachers’ training, variation of assessment methods, follow-up, and morality.

Technological infrastructure is viewed as a cornerstone for effective online assessment. Participants emphasized the necessity of robust technology and reliable internet connectivity to ensure seamless assessment processes. P1 underscored the importance of having “good infrastructure of technology and internet,” while P8 highlighted the need for “immediate maintenance whenever a fault occurs” to address any technical issues swiftly. Additionally, P14 suggested that using cameras and other scientific devices could enhance the reliability of online assessments. These points reflect a consensus on the crucial role that solid technological support plays in maintaining the integrity and effectiveness of online assessment systems.

Teachers’ training emerged as another critical theme. Participants stressed that educators need comprehensive training to effectively use online assessment technologies and diverse assessment methods. P1 noted the importance of “good training for teachers and students” alongside robust technological infrastructure. This need for training is further supported by P11, who proposed tailoring questions to assess each student’s talents and abilities, implying that teachers must be adept at implementing varied and individualized assessment strategies. This emphasis on training suggests that equipping teachers with the necessary skills and knowledge is vital for optimizing the effectiveness of online assessments.

Variation in assessment methods also featured prominently. Participants highlighted the importance of using a range of assessment tools to better capture students’ understanding and to mitigate issues such as cheating. P7 stressed the need for a “diversity of remote assessment methods,” and P13 called for a “variety of Qs to reduce the chance of copying.” P13 also recommended incorporating “time-bound tests” and “video recording of test sessions” to ensure assessment integrity and accuracy. Additionally, P4 proposed “creating electronic quizzes continuously without grades or a specific end time,” allowing students to self-assess and receive feedback. These suggestions underscore the value of diverse assessment approaches to provide a more accurate and comprehensive evaluation of student performance.

Follow-up is another crucial area identified by participants. Effective follow-up involves checking students’ academic levels with appropriate methods, applying consistent rules similar to those in traditional classrooms, and coordinating efforts between teachers and students. P11 suggested setting individualized questions to reveal students’ talents and mental abilities, providing ample time for students to express themselves through various means. P13 emphasized employing “multiple assessment methods to verify students’ levels” and conducting assessments during virtual classes. These recommendations aim to ensure that online assessments accurately reflect students’ true capabilities and learning outcomes.

The morality theme highlights the importance of ethical considerations in online assessments. Participants emphasized that instilling trust and promoting a sense of responsibility among students are crucial for successful online assessment practices. P15 noted the need to “raise the student’s sense of responsibility, monitoring God, and then, after God, total dependence on oneself.” This perspective suggests that fostering ethical values and self-dependence can significantly impact the effectiveness and integrity of online assessments. Promoting transparency and encouraging students to take responsibility for their own learning are seen as essential for maintaining the credibility of online assessments.

Discussion

The study shows that while online assessments in EFL education are perceived as moderately effective, the challenges of technological readiness, academic integrity, and the need for more diverse assessment methods are key areas for improvement. The findings from the quantitative and qualitative analyses of teachers’ and students’ beliefs about online assessments in evaluating EFL students’ learning reveal several similarities and differences. Both analyses indicate that these beliefs are generally average, suggesting neutral or moderate perceptions of the effectiveness of online assessments. In both approaches, participants expressed concerns about the reliability of online assessments, citing issues like cheating, technical problems, and lack of sufficient feedback. The quantitative results highlighted that while online assessments like multiple-choice questions were rated relatively higher, methods such as electronic portfolios and discussion forums were rated lower, though still considered moderately useful. The qualitative analysis supported these findings, emphasizing that participants felt online assessments offered certain advantages, such as convenience and reduced anxiety, but also highlighted concerns about academic integrity and technological challenges. These findings align with studies like those by Elsalem et al. (2021) and Bilen and Matros (2020), which similarly pointed out concerns over cheating and the reliability of online assessments. Other studies, such as Lee et al. (2022), reported lower satisfaction rates due to technical difficulties, which is consistent with the current study’s moderate perceptions, especially in areas related to internet connectivity.

A key similarity between the two analyses is the emphasis on the challenges posed by technical issues. Both teachers and students noted that poor internet connectivity, limited access to devices, and low computer literacy were significant barriers to the effective implementation of online assessments. This was consistently mentioned in the qualitative feedback, where participants expressed frustration over these obstacles, and it was corroborated by the average ratings in the quantitative results. The concern about cheating and maintaining academic integrity during online assessments also emerged strongly in both analyses. Participants from the qualitative analysis mentioned doubts about the transparency and accuracy of online assessment results, highlighting a gap between impressive test outcomes and students’ actual performance. This issue of trust in the online assessment process reflects the moderate ratings given in the quantitative data, where the perceived effectiveness of these assessments remained average. These concerns align with previous studies such as those by Elsalem et al. (2021), which also found dishonesty to be a major challenge in online assessments.

While both analyses highlighted concerns, there were differences in how certain advantages were perceived. The qualitative data showed that participants recognized some benefits of online assessments, such as reduced test anxiety and the flexibility they offer. This emotional benefit of online exams, as noted by Stowell and Bennett (2010), contrasts with the more neutral quantitative data, which did not particularly highlight these advantages. Additionally, the qualitative findings revealed a strong call for diversifying online assessment methods to include open-ended questions and take-home exams to better assess comprehension levels. This was less emphasized in the quantitative findings, which focused more on rating existing methods rather than proposing alternatives. Furthermore, some qualitative participants advocated for the use of AI and technological monitoring to improve the security and authenticity of assessments, a suggestion not directly reflected in the quantitative analysis but discussed in detail during the qualitative feedback.

The findings of the current study both align with and diverge from previous research. For instance, the average perception of online assessments found in this study mirrors that of Lee et al. (2022) and Ogange et al. (2018), where participants did not identify a significant advantage or disadvantage of online assessments compared to traditional methods. However, the positive perceptions of certain online assessment practices found in studies such as Hidalgo-Camacho et al. (2021) and Romeu Fontanillas et al. (2016), where high satisfaction was reported, differ from the current study’s average rating. This difference suggests that the type of online assessments used and the context in which they are implemented can significantly influence perceptions. Additionally, while previous studies, such as those by Rolim and Isaias (2019), indicated that teachers were aware of the importance of online assessments but had not adopted them ambitiously, the current study found that teachers viewed their implementation as moderately effective, suggesting a slight shift in awareness and application.

Moreover, the results revealed no significant differences between the responses of the study sample based on their roles (teacher, student). This indicates that teachers and students share similar opinions regarding the perceived efficacy of online assessment methods in evaluating students’ academic levels. The consistent perceptions across teachers and students may be attributed to shared educational backgrounds, exposure to technology, training programs, technological infrastructure, cultural attitudes, learning environments, common challenges, uniform communication, and alignment with educational goals in the specific EFL context.

Comparing the current study’s results with previous research offers valuable insights into the perceptions of online assessment methods across different educational contexts and demographics. A notable similarity across studies, including Elzainy et al. (2020) and Morsi and Assem (2022), is the absence of significant differences between teachers’ and students’ perceptions of online assessment efficacy. This consistency suggests a general consensus among educators and students regarding the utility of online assessments in evaluating learning, regardless of their roles within the educational setting. At the same time, these studies examine online assessment through diverse lenses, ranging from its practical impact on academic success to its perceived effectiveness in facilitating learning and evaluation processes.

Conclusion

The study examined EFL teachers’ and students’ perceptions (beliefs and reported use) of online assessment methods in assessing students’ learning. The results indicated that both teachers’ and students’ beliefs regarding the effectiveness of online assessment for EFL students’ learning were average. The utilization of online assessment methods was also perceived at an average level. In addition, the participants’ roles (whether they were teachers or students) did not have a significant impact on their responses to the questionnaire. Moreover, a statistically significant correlation was observed between the respondents’ beliefs and their use of online assessments. The interviewees’ responses revealed six themes, including online assessment means, time and effort, performance, psychological pressure, and overall experience.

Limitations

The current study employed a convenience sampling method due to challenges in reaching the population, potentially limiting the generalizability of findings. The sample size may pose a limitation, impacting the ability to draw robust conclusions. The study instruments were developed with a focus on validity and reliability; however, results are bound by these factors. This research specifically explored the application of online assessment methods in the EFL context of Saudi higher education, making generalization to other contexts challenging. Additionally, the examination of EFL online assessment was confined to the use of Blackboard as the learning management system, limiting the extension of results to other platforms.

Pedagogical Implications

The study provides valuable insights into the perceptions and implementation of online assessment in EFL instruction. The findings underscore the importance of addressing technical challenges, as improving access to reliable internet and devices, along with enhancing computer literacy among both teachers and students, is crucial for fostering a positive perception of online assessments. Institutions should invest in robust technological infrastructure and offer comprehensive training to ensure educators and students can navigate online assessment platforms.

In addition, the study emphasizes the need for customized training programs aimed at improving the proficiency and comfort of both instructors and students with online assessment technologies. These programs should also address nuanced concerns revealed through qualitative analyses. An important focus should be on elucidating the advantages of online assessment, particularly aspects such as individualized learning, prompt feedback mechanisms, and increased scholarly engagement, while also mitigating psychological strain. To enhance the overall online assessment environment, the study recommends initiatives such as seminars on technology integration and advocating for the adoption of diverse assessment modalities. Moving away from a reliance on traditional methods like multiple-choice questions, educators should consider incorporating tools such as open-ended questions, oral assessments, and project-based tasks. This diversification could provide a more accurate reflection of students’ abilities and help mitigate concerns over academic dishonesty.

Moreover, the findings indicate no significant differences in perceptions based on roles (teacher vs. student), suggesting a common understanding and acceptance of online assessment practices across different educational stakeholders. This uniformity implies that efforts to enhance online assessment effectiveness can be broadly applied without significant disparities in acceptance or resistance between teachers and students.

Furthermore, the implementation of psychological stress-reduction strategies, the cultivation of collaborative learning environments, and continuous scholarly inquiry are recommended. Institutional support should include both tools and avenues for professional advancement. Establishing professional learning communities is also advocated, as these platforms can facilitate sharing exemplary practices and foster collective learning among instructors. This collective approach aims to enhance the judicious utilization of online assessment in the EFL context.

Future Research

The current study’s findings offer a basis for several future research directions. One promising area is investigating how different online assessment techniques affect EFL students’ learning. Another direction involves a comparative analysis of EFL students’ learning in offline versus online modes. Such a study could compare the learning outcomes of EFL students assessed online with those of students assessed through traditional offline methods. Understanding the performance differences between these two modes can help educators design more effective assessment strategies tailored to EFL students’ needs. Additionally, further evaluation of traditional versus alternative assessment methods for EFL students could provide valuable insights. This research would compare traditional assessment methods, such as written exams, with alternative methods, like project-based assessments and peer assessments, in terms of their effects on EFL students’ learning. This comparison can help identify which methods are more effective in measuring and promoting students’ language proficiency and overall academic achievement.

Footnotes

Acknowledgements

The authors are thankful to the Deanship of Graduate Studies and Scientific Research at Najran University for funding this work under the Elite Funding Program grant code (NU/EP/SEHRC/13/178-1).

Author Contribution

The authors confirm contribution to the paper as follows: study conception and design: AAFA, MN; data collection: AAFA; analysis and interpretation of results: AAFA, MN; draft manuscript preparation: AAFA, MN. AAFA, MN reviewed, revised, and approved the final version of the manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded by the Deanship of Graduate Studies and Scientific Research at Najran University under the Elite Funding Program grant code (NU/EP/SEHRC/13/178-1).

Availability of Data and Materials

The datasets used or analyzed during the current study are available from the corresponding author on reasonable request.