Abstract

Social media has become a popular means for users to receive and share news. At the same time, however, it has enabled the wide spread of fake news, and the negative impact of fake news on society has increased rapidly. To help mitigate this problem, this study examines how the way social media users perceive and process information affects their acceptance of fake news and their intention to spread it. We conducted an online experiment with 743 social media users and found the following. First, users respond differently to the same fake news depending on the type of message provider. Second, users who relied more on the experiential information processing system were more likely to accept fake news regardless of their perceived social conformity.

Keywords

Introduction

“Repeat a lie often enough and it becomes the truth,” is a law of propaganda often attributed to Joseph Goebbels (Stafford, 2016). This is related to the “illusory truth effect” which describes the tendency to believe false information to be correct after repeated exposure (Pennycook et al., 2018). If people are repeatedly exposed to false information, they will become more familiar with that information and will eventually believe it to be true (Lewandowsky et al., 2012).

Recently, it has become very easy for anyone with a mobile device to create and share information with the world (Huda, 2021). The rapid development of such technologies has created a new wave of fake news. Because users can access information at any time on their mobile devices, it has become increasingly difficult for them to distinguish fake or misleading information from fact amid a vast amount of data (Torabi Asr & Taboada, 2019).

Social media recommendation algorithms are now designed to continuously recommend content similar to what users have previously encountered (Geschke et al., 2019). Therefore, once users encounter fake news on social media, these recommendation algorithms, also called curation algorithms, can expose them to it repeatedly, thereby increasing the probability that they believe it to be true (Nechushtai & Lewis, 2019). Users who encounter false information often do not verify it before sharing it with others, which creates a vicious cycle. Consequently, even if fake news is created by a small number of users, it can spread around the world once a certain number of users start sharing it. An analysis by BuzzFeed found that during the 2016 US presidential election, people shared more fake news on social media than real news (Silverman & Alexander, 2016).

Fake news generally refers to content in which false information is disseminated in the form of news (Allcott & Gentzkow, 2017). As the spread of fake news has become a serious global issue, a new term, “infodemic,” a portmanteau of “information” and “epidemic,” was coined to describe the phenomenon. The term reflects the fact that fake news spreads rapidly through media such as the Internet, much like an epidemic (Cinelli et al., 2020; Zarocostas, 2020).

Because fake news related to disease endangers people’s lives, it has emerged as a serious problem in today’s society. During the recent COVID-19 pandemic, many users tended to rely on information from the Internet to prevent or treat COVID-19 infection. This is essentially a defense mechanism for avoiding risk (Hovick et al., 2014). However, not all information on the Internet is accurate, and unverified or incorrect health information can threaten people’s lives. For instance, one piece of misinformation circulating on social media falsely suggested that consuming methanol protected against and cured COVID-19 infection. This resulted in the deaths of about 300 people in Iran who believed the claim was true (Associated Press, 2020).

According to a recent survey, about 88% of users responded that they have experienced confusion and fatigue due to the spread of fake news on social media (Pew Research Center, 2018). Therefore, much effort is being made to detect and prevent the spread of fake news online. Various studies have sought to improve the accuracy of fake news detection by applying machine learning techniques to the linguistic and compositional characteristics of fake news (Jiang et al., 2021). However, with the advent of AI bots, fake news that looks exactly like real news can be generated automatically and very quickly, so distinguishing fake news from real news based on appearance and linguistic characteristics alone has become far less effective. In other words, as fake news has become more sophisticated at mimicking real news, the limitations of existing algorithmic methods that identify fake news by analyzing the text itself have become evident (Shu et al., 2019).

Therefore, this study focuses on the user characteristics and socio-psychological factors that could affect users’ acceptance and sharing of fake news, in addition to the characteristics of the news itself. We hypothesize that a user’s information processing system, which is formed by the interactions among his or her cognitive, psychological, and social factors, will make a difference in the acceptance and sharing of fake news. This study also examines whether social conformity, a type of social influence in which individuals align their attitudes and behaviors with those of group members (W. Zhang et al., 2019), has an effect on the acceptance and sharing of fake news. We also examine the role of information spreaders, since everyone, including the information creator, can easily share and spread information on social media. Specifically, this study investigates the effects of the user’s information processing system, social conformity, and type of message source on the user’s acceptance and sharing of fake news through an online experiment. To examine the main effects of information processing tendency, social conformity, and type of message source independently, this study hypothesizes that each factor will affect users’ acceptance of and intent to spread fake news. When a user decides whether to accept and spread fake news, the three factors will inevitably influence one another. Nevertheless, few studies have examined the interactions among the various factors that affect users’ acceptance and spread of fake news. Therefore, this study also examines users’ attitudes under the interaction effects of these factors, considering the possible combinations of factors. It differs from previous studies by examining users’ attitudes toward fake news in terms of the interaction effects among users’ socio-psychological factors.

Literature Review

Fake News

Fake news has become an issue affecting society by spreading misinformation quickly and widely through social media (Gupta et al., 2022; Rodrigo et al., 2024). It spreads rapidly due to the characteristics of social media, in which information spreads quickly and easily. Social media includes functions such as “share” or “retweet” that could spread information simply with a single click. Therefore, regardless of the content and type of information, users can repeatedly share or re-share without verifying the authenticity of the information, aggravating the spread of fake news at an exponential rate (Budak et al., 2011). The spread of fake news was found to be about six times faster than that of real news (Vosoughi et al., 2018).

As fake news has become a global social problem, relevant research has been actively conducted. However, most prior studies focused on improving the performance of algorithms for detecting fake news on social media, and little attention was given to the perspective of the information receivers who accept and spread fake news. Most studies that did take the receivers’ perspective examined attitudes toward accepting fake news based on an individual’s demographic characteristics, such as age, educational background, and nationality. People tend to share information without verifying its authenticity in order to show off their ability to retrieve information, build relationships with others, and communicate with friends (Rampersad & Althiyabi, 2020). Prior research found that individual characteristics such as age, education level, gender, and cultural background, as well as the degree of exposure to fake news, influenced the acceptance of fake news. Furthermore, the results differed by nationality, indicating that the level of acceptance of fake news depended on an individual’s culture and social environment (Rampersad & Althiyabi, 2020). In addition, social media users who were younger, male, more highly educated, higher in income, more politically progressive, and more extroverted and open-minded were more vulnerable to fake news (Yum & Jeong, 2019). Age and cultural environment were also found to have a greater influence on an individual’s acceptance attitude than education level or gender (Rampersad & Althiyabi, 2020).

Recently, various studies have examined the capabilities individuals need to identify fake news themselves. As fake news on various health topics, including COVID-19, spreads widely on social media, health literacy and digital literacy, which enable individuals to select and use appropriate information sharing channels, have been found to be commonly required (Balakrishnan et al., 2022; Bukhari et al., 2022; Cheng & Nishikawa, 2022; Htay et al., 2022). Educating the public on digital resilience and raising individuals’ awareness of source credibility have also been considered important for identifying news authenticity (Rodrigo et al., 2024). Digital resilience is the ability to recognize and manage the risks one faces on social media, and it has recently been seen as a core ability for identifying meaningful information among the multitude of social media content (Humprecht et al., 2020).

As fake news related to the COVID-19 vaccine increased on social media in a short period of time, various consultations and publicity efforts were undertaken to prevent the spread of fake news and improve acceptance of correct information. However, people could not break away from the fake news that they had previously accepted (Gupta et al., 2022; X. Wang et al., 2022). The group exposed to COVID-19-related fake news showed no significant change in their will to recover compared with the group not exposed to fake news, despite sufficient counseling about COVID-19 (Talabi et al., 2022). The major motivators of sharing fake news include anxiety, deficient self-regulation, information-seeking, socialization, and entertainment (Malik et al., 2023). Furthermore, about 17% of the users who shared fake news intentionally diffused it even after being aware that it was fake (Carson et al., 2003; Pennycook et al., 2018). This indicates that users’ willingness to accept and share fake news even after recognizing it to be false departs from the view of information processing in which users process only information that they perceive as useful. Therefore, unlike previous studies that looked into factual information only, this study focuses on users’ information processing systems when they are exposed to fake news. Furthermore, because the information processing tendency toward fake news is a complex system of a user’s cognitive social unit (S. Epstein, 2003), this study investigates the characteristics of fake news receivers from a more diverse perspective than previous studies that focused only on general individual attributes (e.g., age, academic background, and gender).

Most previous studies on detecting fake news in social media take one of two perspectives: “news content” and “social context” (Shu et al., 2017). The “news content” approach uses natural language processing (NLP) to examine linguistic characteristics, including the title, in order to distinguish fake news from real news. It presumes that the texts of fake news and real news have linguistically distinct features. On this basis, models have been built to automatically classify fake news in certain fields by looking at how frequently certain terms from the Linguistic Inquiry and Word Count (LIWC) are used (Rashkin et al., 2017). In addition, there have been efforts to distinguish fake from real news by using LIWC, 11 punctuation marks including periods, commas, question marks, and exclamation marks, and words related to positive and negative vocabulary as predictor variables (Pérez-Rosas et al., 2017). Building on such features, a method was presented to identify fake news by looking at the design of the news, such as the title, author, and source indication format (Kim & Dennis, 2019). Conroy et al. (2015) proposed a hybrid fake news detection method that combined machine learning and network analysis based on the linguistic cues of fake news. Using n-grams, they distinguished fake news from real news based on the frequency of consecutive words. More recently, a hybrid fake news detection system using a propagation tree and a stance network was introduced; it analyzes the sentiment of replies to news articles by extracting various graph-based features, and its performance improved on that of previous studies using similar algorithms (Davoudi et al., 2022).
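To make the idea of n-gram frequency concrete, the following minimal sketch counts runs of consecutive words in a short text. It is an illustration only and is not the method of any study cited above; the function name `ngram_counts` and the sample sentence are assumptions introduced here.

```python
from collections import Counter


def ngram_counts(text, n=2):
    """Count word n-grams (runs of n consecutive words) in a text."""
    words = text.lower().split()
    # zip over n shifted copies of the word list to form the n-grams
    grams = zip(*(words[i:] for i in range(n)))
    return Counter(grams)


# Hypothetical sample sentence, used only for illustration.
counts = ngram_counts("the vaccine is safe and the vaccine is free", n=2)
```

A text-based classifier would typically use such counts, computed over many labeled articles, as input features.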

The prevalence of automatic news generators (i.e., AI bots) facilitates the creation of fake news that is identical to real news, and the prediction accuracy of text-based methods also varies depending on the language in which the news is written. Both factors reduce the effectiveness of methods that focus on text and composition. Therefore, there is a growing need for research that goes beyond identifying fake news from its visible features.

The “social context” approach detects fake news by paying attention to the behavior of social media users. This approach judges whether news is real by using functional indicators that indirectly reflect users’ behavior, such as the number of “likes” on Facebook and the number of “retweets” on Twitter, as explanatory variables (Della Vedova et al., 2018). Specifically, the way author and source ratings are displayed within the news was found to affect readers’ level of trust, which in turn influenced their overall “degree of participation” with the news, as indicated by the number of hits, likes, comments, and shares. In effect, this method judges news with high user participation in the form of “likes” or comments to be reliable. The approach lacks accuracy in identifying fake news because user participation can be arbitrarily inflated or manipulated by a small group of people who intentionally and maliciously deceive the majority through fake news.

As outlined above, almost all studies to date propose models for detecting fake news that focus mainly on the compositional and linguistic characteristics of fake news and on users’ engagement with it. Little consideration has been given to the characteristics of the users who actually generate, accept, and spread fake news. A better understanding of these users is essential for preventing the generation and spread of fake news in advance. To help improve the prediction accuracy of fake news detection algorithms and establish strategies to deter its spread, this study examines how users’ attitudes toward fake news change according to their characteristics, taking a user-centered perspective that has not been covered in previous studies.

Hypotheses Development

Information-Processing Systems

Cognitive Experiential Self Theory (CEST) is a theory that integrates the user’s information processing system from the viewpoint of cognition (S. Epstein, 1998, 2003; W. M. Epstein, 1990). CEST is based around the idea that people operate using two separate systems for information processing: the rational system and the experiential system (S. Epstein, 2003; W. M. Epstein, 1990). The rational system is based on analytical principles, whereas the experiential system is driven by emotions (S. Epstein, 1998). Therefore, the experiential system adapts by learning through experience and intuition rather than logical reasoning (Hafner-Burton et al., 2013). The rational system mainly uses cognitive information based on process orientation, whereas the experiential system is mainly outcome-oriented (S. Epstein, 2003).

When users do not know anything about a rumor, they tend to believe it more easily (Cass, 2009). For example, if evaluating a rumor requires expertise in science, finance, or medicine, the general public easily believes it because it lacks the knowledge to determine whether the rumor is true or false. That is, users’ knowledge related to a rumor affects their attitude toward accepting it (Evans, 2008). The information processing system, which influences the user’s decision to accept rumors, works together with the user’s beliefs, stereotypes, experiences, and logical thinking. Users with higher analytical and logical thinking abilities tend to process information more rationally and are less likely to trust rumors (Pacini & Epstein, 1999). The intention to accept online rumors is also higher among those who rely on the experiential system than among those who rely on the rational system (Ahn & Lee, 2011). Users who are more emotionally involved with online rumors tend to spread the rumors more (Kweon & Cho, 2017). The results of previous studies show that, regardless of whether the information is true, it is mainly the users’ information processing system that affects their information-related activities, such as accepting and sharing information. Therefore, this study assumes that individuals’ information processing tendency will affect their attitude toward fake news.

H1a. The users’ type of information processing system is significantly associated with the acceptance of fake news.

H1b. The users’ type of information processing system is significantly associated with the intent to spread fake news.

Social Conformity

Social media users nowadays can easily experience “filter bubbles” and “echo chambers.” Filter bubbles refer to a phenomenon in which social media algorithms providing filtered information based on users’ preferences isolate certain users with similar tendencies into a bubble; similarly, echo chamber refers to a situation in which certain beliefs are amplified and reinforced by communication between users with similar opinions and thinking (Cinelli et al., 2021). By participating in an echo chamber or filter bubble, users can seek only information that reinforces their existing views without encountering opposing views. This is due to social conformity in which users tend to accept and present their opinions in limited spaces such as social media based on social reactions and moods rather than expressing their own thoughts (Pilditch, 2017). Thus, social conformity refers to the assimilation of an individual’s thoughts and behaviors with social norms or opinions of the majority (Mallinson & Hatemi, 2018).

Social conformity, like the information processing system, gives individuals a reason to believe information by complying with the opinion of the majority. During information processing, social conformity mainly plays a role when deciding whether to accept the information (Bikhchandani et al., 1992). When a user has to make a judgment on information and finds out that his or her view is different from that of the majority, the user tends to follow the majority’s decision out of concern for his or her relationship with the majority (Rosnow et al., 1986). Accordingly, social conformity is expressed more strongly in individuals in collectivistic cultures than in individuals in individualistic cultures (Kongsompong et al., 2009). In collectivistic cultures, emphasis and priority are given to group consent rather than to an individual’s beliefs or opinions.

Social conformity within a group is a significant factor that influences users’ acceptance of online rumors. Users in online communities with high social conformity were more likely to accept online rumors than users in communities with lower social conformity (Ahn & Lee, 2011). When social conformity forms around a malicious rumor about a company on social media, users become more confident that the rumor is true, while their trust in the company and their intention to purchase its products decrease (Jang & Jo, 2013).

If a certain number of people believe in a rumor, a rational person will also rely on that rumor due to social conformity, regardless of whether the rumor is true (Bikhchandani et al., 1992). Comments and “Likes” on social media promote users’ awareness of social conformity because these functions express users’ thoughts to others in an online community. That is, an opinion expressed commonly by the majority or a common social implication leads users to follow the opinion or accept the information (Wijenayake et al., 2020). Accordingly, people who read comments supporting a rumor are more likely to believe it than those who do not (Q. Wang et al., 2018). Therefore, this study investigates the effect of users’ perceived social conformity on their acceptance of and intent to spread fake news.

H2a. The users’ perceived social conformity is significantly associated with the acceptance of fake news.

H2b. The users’ perceived social conformity is significantly associated with the intent to spread fake news.

Type of Message Source

Information on social media can be shared and replayed repeatedly by anyone, not only by the content creator or the initial provider of the information. Because information can be transmitted anonymously on social media, the credibility of an information source is an essential factor when deciding whether to accept the information (Sussman & Siegal, 2003; Watts & Zhang, 2008). In other words, the source of information, “who speaks the information,” matters to an individual deciding whether to accept it (Xiang & Gretzel, 2010). In contact-free environments where people do not have direct control over communications, receivers of information are affected by the reliability of the information source when deciding whether to accept the information (McCroskey et al., 1974). The perceived credibility of social media influencers is generally categorized into three types: trustworthiness, attractiveness, and expertise (Chekima et al., 2020).

A message provider’s trustworthiness can be defined as the belief in the provider’s professionalism and the authenticity of a persuasive message (Sussman & Siegal, 2003). When a user provides information through an unverified identity or anonymously, it is difficult to verify the identity of the source on social media. Therefore, it is widely believed that the amount of information a message provider discloses, and thus the trust placed in the provider, influences a user’s decision to accept the information. When messages come from a provider whose profile is more public, receivers’ confidence in the information source increases, which increases their willingness to accept the information (W. Zhang & Watts, 2003). When more of a message provider’s profile is available, information receivers feel a stronger social presence of that provider, which reduces their uncertainty toward the provider. However, on Twitter, the amount of personal information disclosed has not always shown a significant positive correlation with trust in information sources (Morris, 2018; Stam, 2010).

Attractiveness is formed based on the familiarity, similarity, and likability of the information provider. Familiarity refers to how much the information receiver knows about the information provider, and likability is based on emotional intimacy (Osei-Frimpong et al., 2019). Similarity refers to how similar the information provider is to the information receiver in various ways. In other words, it is the cognitive and emotional breadth of interaction that information receivers can expect to have with information providers. The attractiveness of information providers is thus formed comprehensively through familiarity, similarity, and likability (Osei-Frimpong et al., 2019), and it includes their intellectual ability, personality, and lifestyle. Based on this attractiveness, information receivers become more willing to accept the information by identifying themselves with the information providers (Lim et al., 2021). This is why many advertisements are presented to the public through celebrities or influencers.

The expertise of information providers refers to the relevant skills, competencies, and knowledge they possess (Reichelt et al., 2014; Till & Busler, 2000). More specifically, expertise is an attribute perceived by information receivers in relation to the education level, experience, ability, intelligence, achievement, and status of the information source, as well as knowledge or insight on the subject or issue (Kelly, 1992). Therefore, the more strongly information receivers believe that the information provider is sufficiently professional, the higher their intention to accept the information (Tien et al., 2019). In an online environment, the word-of-mouth effect of the information provider increases when the information receiver recognizes the information provider’s expertise (Lim et al., 2021).

Among the various characteristics of information providers (attractiveness, expertise, and trustworthiness), only expertise was found to affect purchase intention (Ilicic & Webster, 2013). Similarly, the expertise rather than the attractiveness of information providers had a significant effect on product advertising effectiveness (S. W. Wang & Scheinbaum, 2018). However, only a few studies focus on the expertise of information providers, rather than the attractiveness formed between information providers and receivers, to examine the effectiveness of the information they deliver. Therefore, it is necessary to categorize information providers into experts and the general public and to examine the effect of information providers’ expertise on receivers through message delivery. Based on the aforementioned findings, this study proposes the following hypotheses.

H3a. The type of message source is significantly associated with the acceptance of fake news.

H3b. The type of message source is significantly associated with the intent to spread fake news.

Information Processing, Social Conformity, and Type of Message Source

Individuals make decisions according to their main information processing systems, and at the same time, their decision-making is affected by interactions with other factors. This is confirmed by the information cascade phenomenon, in which even individuals with strong rational information processing systems imitate the words and actions of others under the influence of social conformity (Bikhchandani et al., 1992). In addition, users with a strong experiential system show a weaker correlation with transformational leadership than users with a strong rational system, but their transformational leadership increases when they interact with others who have experience in constructive thinking (Cerni et al., 2008). Hence, perceived social conformity regarding fake news from an uncertain source is expected to interfere with an individual’s rational judgment. Also, the gender of the information source affects the level of information acceptance for a given message, even if the information processing system remains the same (Bukhari et al., 2022). This shows that there is an interaction effect between information processing tendency and information source type on an individual’s information acceptance attitude. Therefore, this study aims to verify the interaction effect of the information processing system and social conformity, that is, whether individuals still handle fake news according to their primary information processing systems even when social conformity, which reflects the actions and thoughts of a large number of people, is manifest. In addition, the interaction effect between the type of information source and an individual’s information processing tendency will be examined.

Users are more willing to accept rumors when they hear them repeatedly, trust the source of the rumors, and confirm that the rumors are in line with the thoughts of many people (Rosnow, 1991). In addition, when users encounter comments supporting a rumor through multiple media use, their intent to spread the rumor is higher than for a rumor encountered through single media use. In other words, in a user’s intent to spread rumors, there is an interaction effect between the user’s social conformity (perceived by encountering comments supporting the rumor) and repeated confirmation of the source through multiple media use (Kim & Yoon, 2015). This study hypothesizes that a user’s information processing system, social conformity, and the type of message source will jointly influence the user’s intention to accept and spread fake news, and hence proposes the following additional hypotheses.

H4. There will be a two-way interaction effect between the users’ type of information processing system and social conformity on (H4a) the acceptance of and (H4b) the intent to spread fake news.

H5. There will be a two-way interaction effect between the users’ type of information processing system and the type of message source on (H5a) the acceptance of and (H5b) the intent to spread fake news.

H6. There will be a two-way interaction effect between the users’ social conformity and the type of message source on (H6a) the acceptance of and (H6b) the intent to spread fake news.

H7. There will be a three-way interaction effect among the users’ type of information processing system, social conformity, and the type of message source on (H7a) the acceptance of and (H7b) the intent to spread fake news.
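One way to read H1 through H7 together is as the terms of a full three-factor factorial model. The sketch below is an illustration only: all symbols are assumptions introduced here, and the text up to this point does not state which statistical model is fitted.

```latex
Y_{ijkl} = \mu + \alpha_i + \beta_j + \gamma_k
         + (\alpha\beta)_{ij} + (\alpha\gamma)_{ik} + (\beta\gamma)_{jk}
         + (\alpha\beta\gamma)_{ijk} + \varepsilon_{ijkl}
```

Here $Y_{ijkl}$ would be either the acceptance of fake news (the "a" hypotheses) or the intent to spread it (the "b" hypotheses) for participant $l$. The main effects $\alpha_i$ (information processing system), $\beta_j$ (social conformity), and $\gamma_k$ (type of message source) correspond to H1, H2, and H3. The two-way terms $(\alpha\beta)_{ij}$, $(\alpha\gamma)_{ik}$, and $(\beta\gamma)_{jk}$ correspond to H4, H5, and H6, and the three-way term $(\alpha\beta\gamma)_{ijk}$ corresponds to H7.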

Methods

Participants and Procedures

In this study, an online experiment was conducted with 743 participants (N = 743), each of whom had a Twitter account. The participants were recruited through Amazon Mechanical Turk (MTurk), an online crowdsourcing marketplace where a requester posts a task in a virtual space and workers complete the task for a reward. MTurk represents a technological advancement that is already being adopted by psychological and behavioral researchers (Paolacci & Chandler, 2014). Many social science fields have continued to use MTurk for research, including behavioral science (Chandler & Kapelner, 2013; Horton et al., 2011), psychology (Buhrmester et al., 2011; Gomez et al., 2022; Rinderknecht, 2019), and political science (Berinsky et al., 2012; Healy & Lenz, 2014). The vast majority of these studies support the use of MTurk for a variety of academic purposes and are overwhelmingly supportive of the reliability and high quality of data collected through MTurk (Bentley et al., 2017). Respondents in MTurk are generally divided into Master and non-Master Workers depending on whether they possess expertise in a specific field. A number of previous studies verified, based on Cronbach’s alpha, that non-Master Workers more readily yield reliable data than Master Workers (Rouse, 2019). Therefore, MTurk is considered more suitable when the collected data need to be diverse in income, education, and employment status (Berinsky et al., 2012; B. Zhang & Gearhart, 2020), as with the sample population of this study. It has also been confirmed that online surveys on crowdsourced platforms, including MTurk, can yield better results than in-person data collection in some behavioral studies (Casler et al., 2013).
MTurk participants are more demographically diverse than standard Internet samples and collected data is as reliable as that collected through traditional methods (Buhrmester et al., 2011; Chen et al., 2022). Recent studies identified the validity of interactive experiments operated by MTurk by replicating a few of classic experiments that involved randomly assigning participants to each group (Summerville & Chartier, 2013). The results confirmed that manipulations that require direct explanation and training given by a researcher also seem to be equally effective with MTurk samples, despite the lack of direct personal interactions employed in the lab (Casler et al., 2013). Therefore, this study determined that Mturk was suitable for this study and, experiment participants were recruited, and an online experiment was conducted by Mturk. A total of 743 respondents participated in this survey, with ages ranging from 18 to 59 years old. Most of the respondents (63.5%) possess bachelor or diploma degree. Details of the respondents’ demographic characteristics are presented in Table 1.

Demographic Information of the Participants.

The experiment used a 2 (information processing tendency: rational system vs. experiential system) × 2 (social conformity: high vs. low) × 2 (type of message source: expert vs. public) between-subjects factorial design. The participants were randomly assigned to one of four experimental stimuli and received a tweet message containing unconfirmed COVID-19 vaccine-related information. After reading the assigned tweet, participants were asked to respond to a questionnaire designed to find out whether they thought the tweet message was manipulated and to measure their intent to accept and spread the message. The participants were also asked to indicate their information processing system and demographic information. After the questionnaire, the experiment concluded with a debriefing that informed the participants that the tweet messages had been fabricated for this study.

Experimental Stimulus Materials and Manipulation Check

The stimuli for the experiment were tweet messages related to COVID-19 vaccines. COVID-19 has been a topic of great interest across the globe regardless of gender, nationality, or age. As media reports on the development of COVID-19 vaccines surged, fake news about the vaccines also circulated rapidly. Therefore, the experimental stimuli in this study were tweet messages containing fake news about the development of a COVID-19 vaccine. The tweet messages were designed to look like real tweets in order to simulate a real Twitter environment for the participants.

Based on a 2 (social conformity: high vs. low) × 2 (information source type: expert vs. public) factorial design, the tweet messages for this experiment consisted of four conditions. First, the numbers of retweets and likes shown in the tweet were varied so that the participants could recognize social conformity through the tweet messages. One tweet message showed 1,810 retweets and 50,996 likes, and another showed four retweets and four likes. These two versions were used to divide the participants into two groups upon receiving the message: a group recognizing high social conformity and a group recognizing low social conformity.
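The four stimulus conditions described above can be enumerated in a short Python sketch. The engagement counts come from the text; the dictionary layout, the function names, and the balanced shuffle-based assignment are assumptions made for illustration, since the study reports only that assignment was random.

```python
import random
from itertools import product

# Engagement counts reported for the stimuli; the data layout is an assumption.
CONFORMITY_CUES = {
    "high": {"retweets": 1810, "likes": 50996},
    "low": {"retweets": 4, "likes": 4},
}
SOURCE_TYPES = ("expert", "public")


def build_conditions():
    """Cross the two manipulated factors into the four stimulus conditions."""
    return [
        {"conformity": level, "source": source, **CONFORMITY_CUES[level]}
        for level, source in product(CONFORMITY_CUES, SOURCE_TYPES)
    ]


def assign_participants(participant_ids, seed=0):
    """Shuffle participants, then deal them out so cell sizes differ by at most one.

    Balanced dealing is a hypothetical choice, not the procedure reported in the study.
    """
    conditions = build_conditions()
    rng = random.Random(seed)
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    return {pid: conditions[i % len(conditions)] for i, pid in enumerate(shuffled)}
```

The third factor, information processing tendency, is measured rather than manipulated, so it does not appear among the stimulus conditions in this sketch.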

To examine users’ attitudes toward fake news according to the type of message speaker, this study created a fictional character, Anton Mitchell, MD, Ph.D., a director affiliated with the FDA’s Center for Biologics Evaluation who can be considered to have high expertise in COVID-19. “91% of Twitter users” was selected as the speaker representing the general public as a message provider. Fake news has recently tended to rely on tricks such as extremely large or small numbers, or provocative and negative words that evoke fear, anxiety, and urgency, to appeal to readers. Accordingly, guidelines for identifying fake news commonly include a question such as “isn’t the news you are reading now presenting an extreme (very large or small) statistics or ratio?”

When the majority increases to a certain level, the change in people’s attitudes can become more pronounced (Clark & Maass, 1990). Therefore, this study assumed that once the majority of users who conform to a piece of information reaches a certain level, more people would accept the information.

About 10% of Twitter users, who generate 92% of tweets worldwide, are classified as heavy Twitter users who create a ripple effect that can shape public opinion within Twitter (Popa, 2023). Twitter has adopted a blue badge system, in which a badge is attached next to user account names to distinguish genuine notable account holders, such as celebrities and politicians. Users who have received a blue badge can be considered verified by Twitter. In contrast, the 91% of general users use Twitter only to maintain their profiles and to communicate or read tweets, without specific purposes such as shaping public opinion or advertising (Mislove et al., 2011). This study reflected these Twitter statistics and the recent trends of fake news to create stimuli that represent general Twitter users. The tweet messages used in the experiment are shown in Figure 1.

Twitter message stimuli.

After reading the designated tweet, participants answered several manipulation check questions in multiple-choice format. To check whether social conformity had been manipulated properly, participants rated the statement “This tweet is being actively retweeted” on a 7-point Likert scale. The rating for the tweet with high social conformity (M = 5.52, SD = 0.83) was higher than that for the tweet with low social conformity (M = 2.27, SD = 0.86), indicating that the messages were properly manipulated (t = 16.50, p < .001). Participants were also asked to identify the message provider of the tweet they received by rating the following statements: “This message was written by an expert” (M = 6.78, SD = 0.34) or “This message was written by popularity” (M = 6.23, SD = 0.46). The results confirmed that the manipulation of the stimuli was successful (t = 13.24, p < .001).

Measurement Development

The present study measured five main variables: acceptance of fake news, intention to share fake news, and information processing system (rational/experiential). All the variables were measured on a 7-point Likert scale (1 = strongly disagree, 7 = strongly agree). We adopted the measurement scales from previous studies and modified them slightly to reflect the purpose of this study (see Table 2).

Questionnaire Items.

Acceptance of fake news was measured using three items (Stieglitz & Dang-Xuan, 2013): (1) I will comply with this news and change my behavior, (2) I am likely to accept this news that I receive on Twitter, and (3) I am influenced by this news that I receive on Twitter (M = 4.87, SD = 0.97).

Intention to share fake news was measured using three items from Schultz et al. (2011): (1) I will share this information by retweeting it, (2) I will share this information with others through my other SNS network, and (3) I will share this information with others who are important to me (M = 4.86, SD = 1.09).

The rational and experiential information processing systems were each measured using seven items from Pacini and Epstein (1999). Sample items were “I enjoy intellectual challenges” and “I prefer complex problems to simple problems” for the rational information processing system (M = 4.89, SD = 0.70) and “I believe in trusting my hunches” and “I trust my initial feelings about people” for the experiential information processing system (M = 5.39, SD = 0.76).

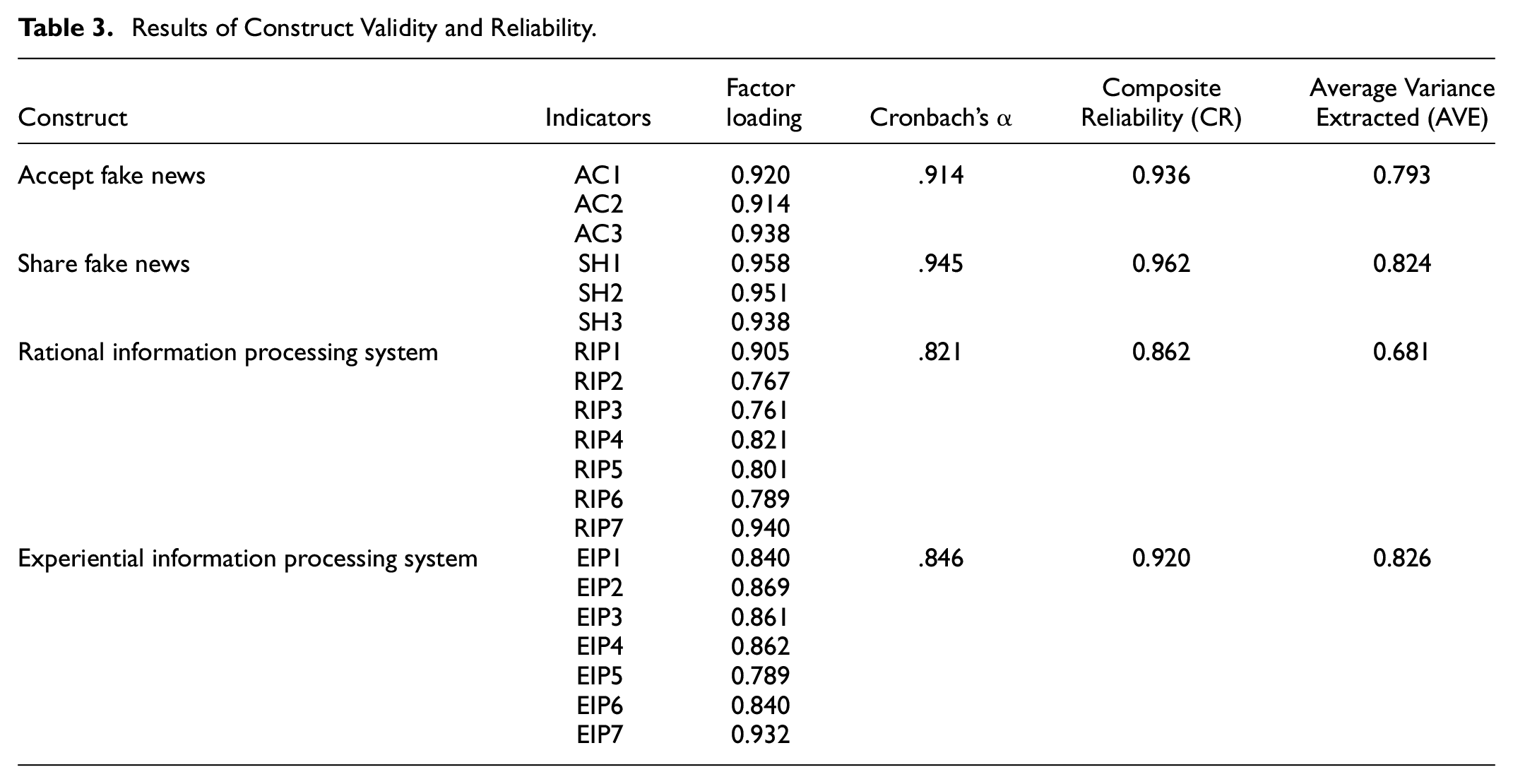

Convergent Validities

To assess the reliability of our constructs, we examined Cronbach’s α and factor loadings. The values of Cronbach’s α and the factor loadings of all constructs exceed the recommended minimum value of 0.70 (Chin, 1998). Therefore, all questionnaire items showed strong reliability. To assess convergent validity, we evaluated the composite reliability (CR) and average variance extracted (AVE). The CR values of all the constructs in this study are higher than the criterion value of 0.80 suggested by Fornell and Larcker (1981). The AVE of each construct is higher than 0.60, as shown in Table 3, indicating that all the constructs have good convergent validity (Fornell & Larcker, 1981). Table 4 demonstrates that the square root of the AVE for each construct is larger than all of its related inter-construct correlations (Fornell & Larcker, 1981). We can therefore conclude that all the questionnaire items in this study have suitable discriminant validity.
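The reliability and validity indices named above follow standard formulas, which can be sketched in Python as follows. This is a minimal illustration and not the authors’ analysis script: it assumes standardized factor loadings, uses sample variances for Cronbach’s α, and all function names are hypothetical.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha; `responses` holds one list of item scores per respondent."""
    k = len(responses[0])
    items = list(zip(*responses))  # transpose to one tuple of scores per item
    item_variance_sum = sum(variance(item) for item in items)
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


def composite_reliability(loadings):
    """CR from standardized loadings: (sum l)^2 / ((sum l)^2 + sum(1 - l^2))."""
    squared_sum = sum(loadings) ** 2
    error_sum = sum(1 - loading ** 2 for loading in loadings)
    return squared_sum / (squared_sum + error_sum)


def average_variance_extracted(loadings):
    """AVE: the mean of the squared standardized loadings."""
    return sum(loading ** 2 for loading in loadings) / len(loadings)


def passes_fornell_larcker(ave, correlations_with_other_constructs):
    """Discriminant validity check: sqrt(AVE) must exceed every inter-construct correlation."""
    return all(ave ** 0.5 > abs(r) for r in correlations_with_other_constructs)
```

For example, three items that each load at 0.80 give an AVE of 0.64 and a CR of about 0.84, which would clear the 0.60 and 0.80 thresholds cited above.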

Results of Construct Validity and Reliability.

Result of Discriminant Validity.

Results

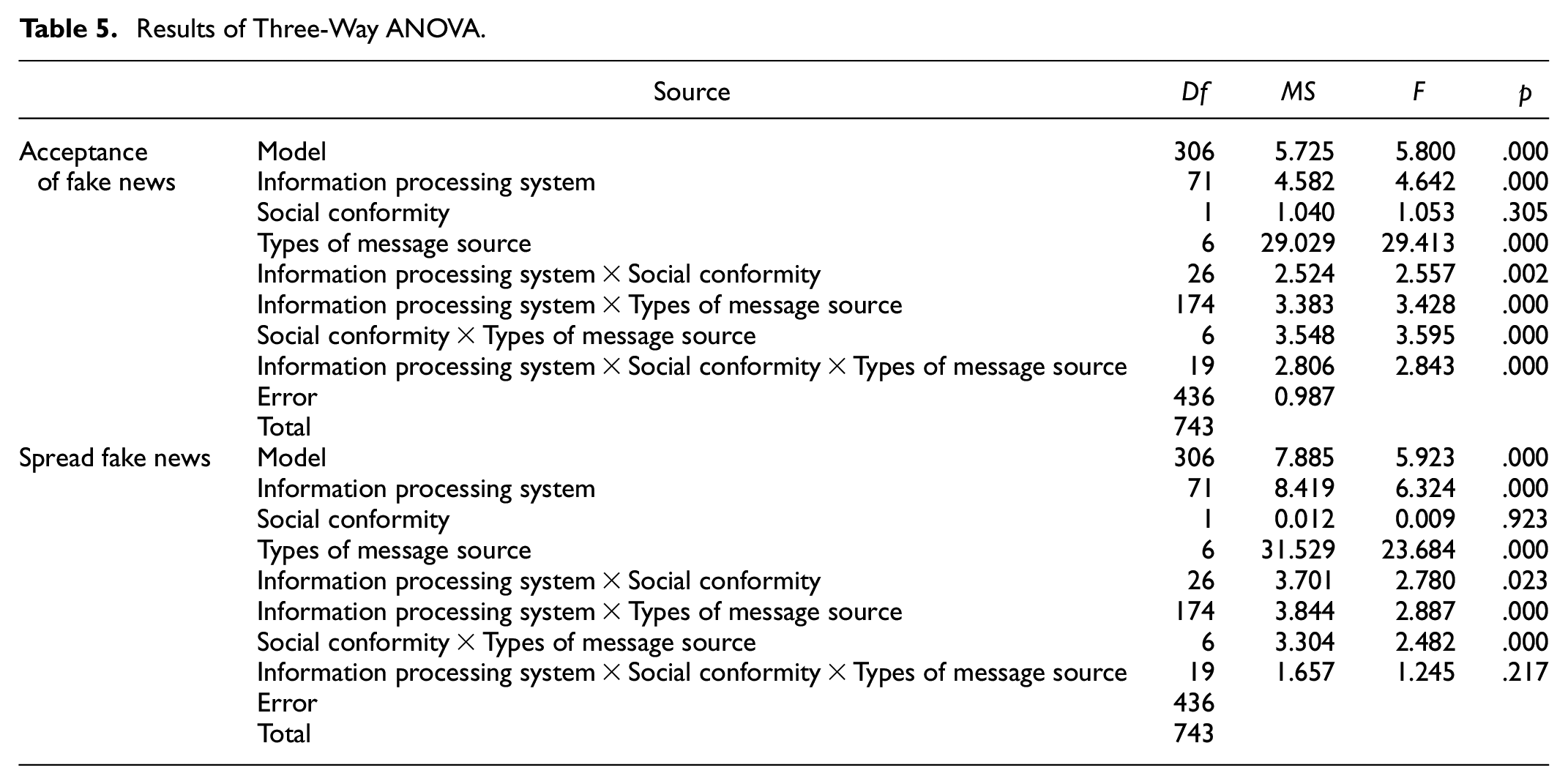

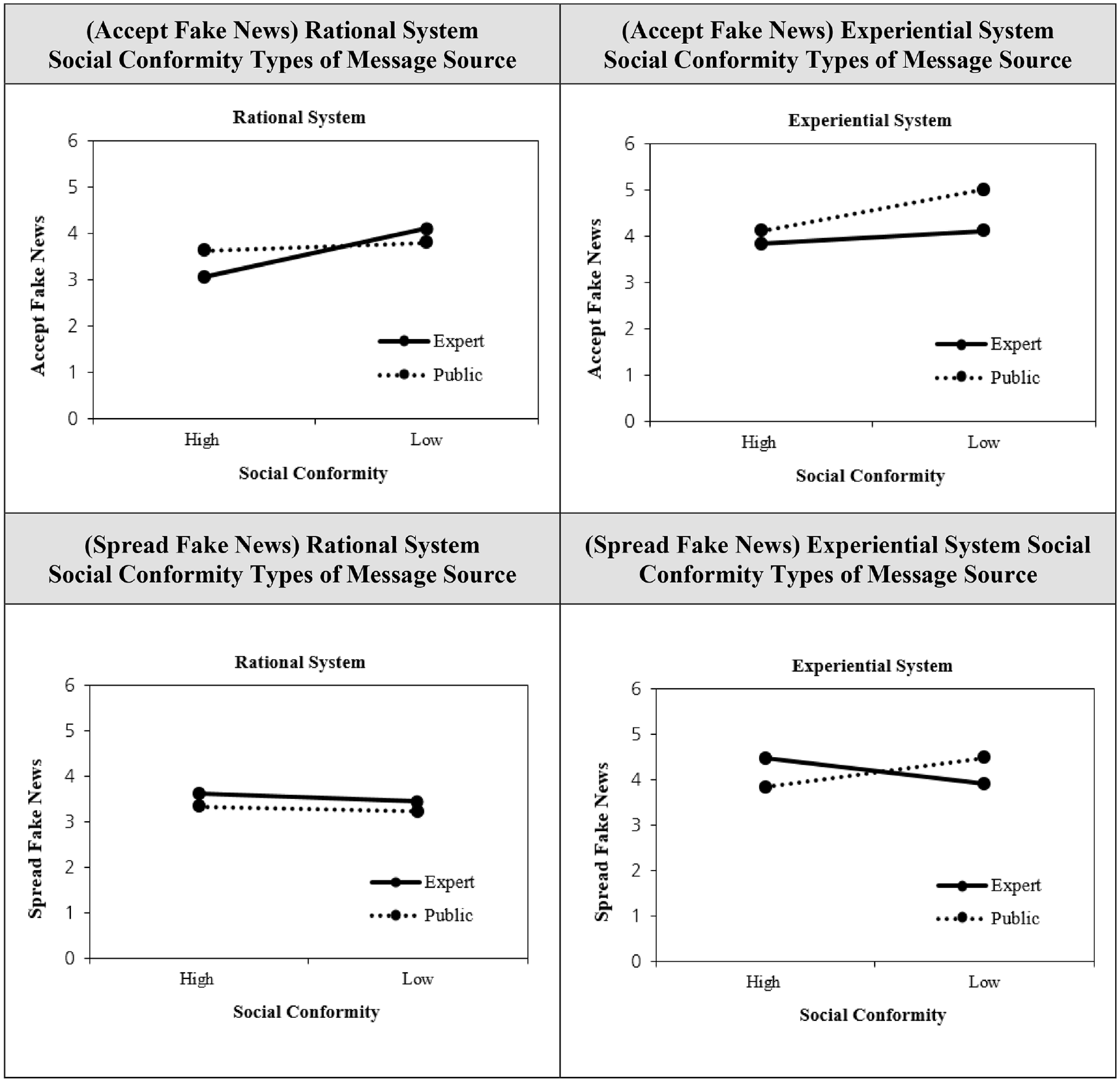

A three-way ANOVA was conducted to examine the main effects of users’ information processing systems, social conformity, and the type of message source on fake news acceptance and spread intention, respectively, as well as the interaction effects among these variables. The results showed that the main effect of users’ information processing systems was significant for both the acceptance of fake news (p < .001) and the intention to spread it (p < .001). The interaction effect between social conformity and the type of message source was significant for both the acceptance of fake news (p = .002) and the intention to spread it (p = .023). However, for social conformity, corresponding to H2a and H2b, no main effect was observed for either the acceptance or the spread of fake news. On the other hand, the type of message source had a main effect on both dependent variables, and its interaction effect with social conformity was also significant for both fake news acceptance (p < .001) and intention to spread (p < .001). To sum up, only two independent variables, information processing systems and the type of message source, had main effects, supporting H1a-b and H3a-b. The two-way interaction effects between the independent variables were significant for both dependent variables, fake news acceptance and spread intention, supporting H4, H5, and H6. However, only H7a was supported, as the three-way interaction effect was observed only for the acceptance of fake news (p < .001) and not for the intention to spread it. Further details on the results are presented in Table 5 and Figure 2.
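To make the structure of a 2 × 2 × 2 analysis concrete, the following Python sketch decomposes the seven effects (three main effects, three two-way interactions, and one three-way interaction) using contrast coding. It is an illustration under a strong assumption, namely a balanced design with equal cell sizes, and it is not the procedure used in this study; unequal cells would normally be handled with Type II or Type III sums of squares in a statistics package. The factor labels and function name are hypothetical.

```python
from itertools import product
from statistics import mean

# Hypothetical factor labels, in the order of the cell keys.
FACTORS = ("processing", "conformity", "source")


def balanced_2x2x2_anova(cells):
    """Return ({effect: {"ss", "F"}}, df_error) for a balanced 2x2x2 design.

    `cells` maps (a, b, c), each level coded -1 or +1, to a list of scores.
    Every effect has 1 degree of freedom; each F is on (1, df_error).
    """
    sizes = {len(scores) for scores in cells.values()}
    if len(cells) != 8 or len(sizes) != 1:
        raise ValueError("this sketch requires 8 cells of equal size")
    n = sizes.pop()
    if n < 2:
        raise ValueError("at least two observations per cell are required")

    cell_means = {key: mean(scores) for key, scores in cells.items()}
    ss_error = sum(
        (x - cell_means[key]) ** 2 for key, scores in cells.items() for x in scores
    )
    df_error = 8 * (n - 1)
    mse = ss_error / df_error

    results = {}
    for mask in product((0, 1), repeat=3):
        if not any(mask):
            continue  # skip the grand mean
        name = " x ".join(f for f, used in zip(FACTORS, mask) if used)
        contrast = 0.0
        for levels, cell_mean in cell_means.items():
            sign = 1
            for level, used in zip(levels, mask):
                if used:
                    sign *= level
            contrast += sign * cell_mean
        ss = n * contrast ** 2 / 8
        f_ratio = ss / mse if mse > 0 else float("inf")
        results[name] = {"ss": ss, "F": f_ratio}
    return results, df_error
```

Each F ratio would then be compared against the F distribution with 1 and `df_error` degrees of freedom to obtain p values of the kind reported in Table 5.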

Results of Three-Way ANOVA.

Interaction effects of information processing system, social conformity, and the type of message source on the acceptance and spread of fake news.

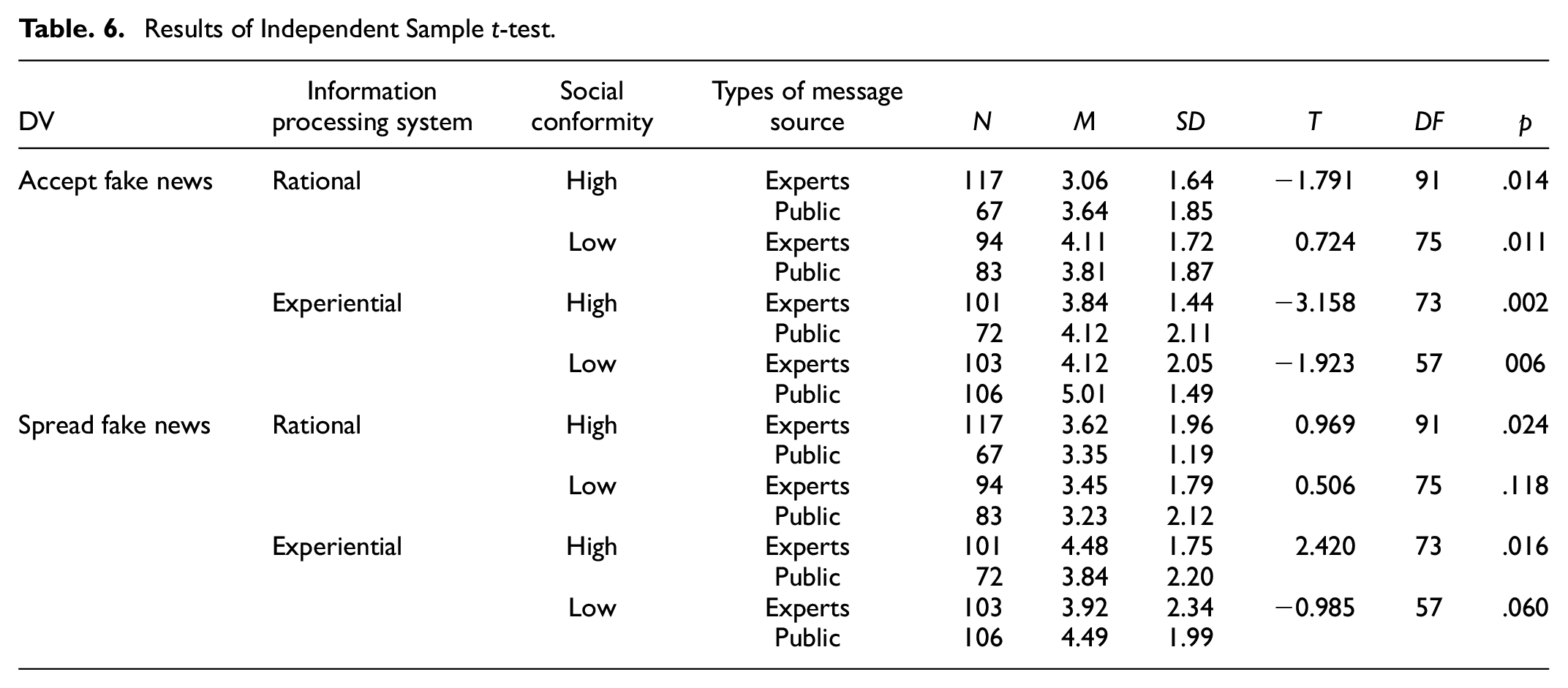

Additionally, independent-samples t-tests were conducted as post-hoc analyses of the interaction effects. When users with rational information processing systems were exposed to fake news in the high social conformity condition, they tended to accept news sourced from the general public (M = 3.64, SD = 1.85) more than news sourced from an expert (M = 3.06, SD = 1.85) (t = −1.791, df = 91, p = .014). On the other hand, in the low social conformity condition, sources from an expert (M = 3.84, SD = 1.44) had greater influence than sources from the general public (M = 3.81, SD = 1.87) (t = .724, df = 75, p = .011). However, when users with strong rational information processing systems were exposed to fake news in the high social conformity condition, their intention to spread the news was higher for news sourced from an expert (M = 3.62, SD = 1.96) than for news sourced from the general public (M = 3.35, SD = 1.19) (t = .969, df = 91, p = .024). In addition, for users with experiential information processing systems, information sourced from the general public had a greater influence on the acceptance of fake news than information sourced from an expert, regardless of perceived social conformity. However, as for the intention to spread fake news, information sourced from an expert (M = 4.48, SD = 1.75) had greater influence than information sourced from the public (M = 3.84, SD = 2.20) (t = 2.420, df = 73, p = .016), but only in the groups perceiving high social conformity. Table 6 summarizes the results of the independent-samples t-tests.
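The form of the post-hoc comparisons can be sketched as a pooled-variance (Student’s) independent-samples t-test. The Python function below is illustrative only: the study does not state whether equal variances were assumed, and the function name is hypothetical. Under the pooled form, the degrees of freedom equal n1 + n2 − 2, so a reported df of 91 would correspond to 93 participants across the two cells being compared.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t_test(group_a, group_b):
    """Return (t, df) for a pooled-variance independent-samples t-test."""
    n1, n2 = len(group_a), len(group_b)
    df = n1 + n2 - 2
    # Pool the two sample variances, weighting each by its degrees of freedom.
    pooled_variance = (
        (n1 - 1) * variance(group_a) + (n2 - 1) * variance(group_b)
    ) / df
    standard_error = sqrt(pooled_variance * (1 / n1 + 1 / n2))
    t = (mean(group_a) - mean(group_b)) / standard_error
    return t, df
```

The sign of t depends only on the order in which the two groups are passed, so a negative value indicates that the first group had the lower mean.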

Results of Independent Sample t-test.

Conclusions

Summary of Key Findings

This study showed that users’ acceptance of and intent to spread fake news on Twitter, where fake news spreads rapidly, are affected by two user-centered factors: information processing tendency and social conformity. In addition, this study attempted to verify the effect of the type of message source on the acceptance of and intent to spread news. The main results of this study can be summarized as follows.

First, users’ intentions to accept and spread fake news depended on their information processing systems and the type of message source but were not influenced by their perceived social conformity. In other words, the study confirmed that a user’s information processing system and the type of message source significantly influenced the acceptance and spread of fake news. Second, users’ information processing systems affected the acceptance of and intent to spread fake news through interaction effects with the type of message source and the users’ recognition of social conformity. In other words, the study found that users’ information processing systems have a fixed effect on the acceptance of and intent to spread fake news, and that this effect can also change depending on the users’ perceived degree of social conformity and the type of message source. In addition, depending on the type of message source, the degree of users’ social conformity had different effects on the acceptance of and intent to spread fake news. When users have a rational information processing system and perceive higher social conformity, message sources from the general public have a greater effect on the acceptance of fake news. Still, message sources from an expert had a greater impact than the general public on their intent to spread fake news. In addition, regardless of users’ information processing systems, perceived low social conformity did not significantly affect the intent to spread fake news. Therefore, it can be inferred that high recognition of social conformity has a major effect on generating motivation to spread fake news. Finally, users’ perceived high social conformity was found to have a greater impact for message sources from an expert than for those from the general public, regardless of their information processing systems.
Therefore, this study showed that users’ information processing system, social conformity, and type of message source have an interactive effect on the acceptance of and intent to spread fake news, and it confirms that users’ attitudes toward fake news are affected by the interactions among these variables. It suggests that, when examining a user’s attitude toward fake news, one should not consider a single factor alone but must consider various factors and the interactions among them.

Theoretical Implications

Based on the results presented above, this study makes the following theoretical contributions. First, unlike previous studies, this study examined users’ attitudes toward fake news by reflecting various user-centered factors and raised the need for research from the perspective of users who generate and accept fake news. Most previous studies related to fake news used the components and format of fake news as the main explanatory variables to develop fake news detection algorithms and systems; this study is meaningful in that it examines fake news from a user-centered perspective. Most previous studies tried to accurately identify fake news from the vast content on social media based solely on the length of the news, the level of trust in the speaker and source, and whether the news title was written in the headline. However, it is becoming increasingly difficult to accurately identify fake news by the appearance of content alone, as misused AI-based Twitterbot technology automatically creates and manipulates fake news that closely resembles real news. Therefore, to improve the performance of existing fake news detection algorithms and identify fake news more accurately, research should go beyond superficial features and be conducted from various perspectives. Accordingly, this study examined social-psychological factors of users who generate and spread fake news: information processing systems and social conformity. By finding that users’ social-psychological factors affect their acceptance of and intent to spread fake news, this study suggests that future research needs to reflect various user factors to improve the accuracy of fake news detection models.

Second, unlike previous studies that examined personal information processing systems based on factual information, this study examined them based on fake news. In an era in which the spread of false information and fake news has become a major problem, this study expands the scope of research on users’ information processing systems by examining their effect on the acceptance of and intent to spread fake news. In other words, unlike previous studies that focused on the manifestation and operation of users’ information processing systems based only on factual information, this study showed that users process fake news like facts even when that information has not been verified to be true. This raises the need for future research on the scope and characteristics of the information that users perceive. In addition, the results of this study provide a basis for understanding how individuals accept fake news as information through their information processing systems.

Third, the results of this study contribute to the expansion of information diffusion theory by examining information acceptance and diffusion patterns in social media, as well as fake news, from a user-centered perspective. Information diffusion in social media describes the process by which information spreads to users through user interaction, and the rate of this diffusion depends on the network topology of the interaction and the number of users (Al-Taie & Kadry, 2017; Iribarren & Moro, 2011). This study showed that, in addition to the previously identified factors affecting the spread of information, there was a significant interaction effect among users’ information processing systems, social conformity, and the type of message source on the acceptance of fake news. When users with rational information processing systems perceived high social conformity, they tended to accept information from the general public more than information from experts, whereas users with strong experiential systems tended to accept information from the general public more than information from experts regardless of their level of perceived social conformity. These results suggest that users’ attitudes toward the type of message source delivering the information depend on their information processing systems. Therefore, even factual information could be delivered more effectively by identifying the user’s information processing system and then choosing the type of message source accordingly. This study differentiates itself from previous studies, which examined the effectiveness of social media-based marketing by focusing on the frequency, amount, and type of information delivered to users. Even with the same information, establishing a target marketing strategy based on users’ information processing systems and perceived social conformity can improve the advertising effect.
Finally, this study contributes to the expansion of existing theories by identifying differences in information acceptance and diffusion patterns across users.

Practical Implications

This study’s practical contributions are as follows. First, this study suggests that information service providers and media companies need to accurately indicate information sources, including the types of information sources, when generating or providing information, to enhance the delivery of the information. This study showed that users use the type of message source as a cue when deciding whether to accept and spread information. When reading a tweet message, users focused more on who provided the information than on verifying its authenticity when deciding whether to accept it or share it with others. On social media, content can be shared and spread by anyone in real time without restrictions, which makes it difficult to ascertain the original creator or source of specific information. As a result, the importance of information sources and their identification on social media is increasingly overlooked. In particular, fake news misleads readers by making itself look like real news, often by citing statements from fictional experts (Flostrand et al., 2019). In the past, only a handful of media companies created and delivered information such as news, but the emergence of information-providing platforms has allowed content to be created and provided directly online. As these platforms secure revenue by generating and providing information, it is important for them to continuously attract users by delivering information efficiently. It would be in a platform’s best interest to provide accurate information, increase user satisfaction, and protect itself from damage caused by fake news. Therefore, information-providing platforms must come up with measures to prevent user confusion caused by the spread of fake news and, at the same time, secure users’ trust in the platform.
This study shows that information-providing platforms should devise ways to continuously distribute information while ensuring the accurate display of information sources, creators, and providers, and to prevent users from accepting fake news. In addition, previous studies proposed fake news detection algorithms that adopted the information source as the only explanatory variable to identify fake news. This study considered only two types of message sources (experts and the public); for detecting fake news, message sources need to be classified into more diverse groups and reflected accordingly.

Second, this study showed that the numbers of retweets and likes, which are expressions of social conformity on Twitter, indirectly influence the intent to accept and spread fake news. This suggests the need to prevent the spread of fake news based on social conformity at an early stage. On social media, certain information, such as fake news, loses its value and disappears when it no longer attracts interest or after a certain amount of time has elapsed. This is one reason why companies often do not actively investigate facts even when fake news or rumors about them are generated and spread. In some cases, companies do not actively refute false information because the cost of making a correction is greater than the loss caused by its spread. They wait, expecting that the information will disappear naturally (Kimmel & Audrain-Pontevia, 2010). However, this study showed that users’ perceived social conformity had a significant interaction effect with individuals’ information processing tendency and the type of message source, and thus had an effect on the acceptance of and intent to spread fake news. In addition, the results showed that a low degree of social conformity did not have a significant effect on individuals’ intent to spread fake news, whereas a high degree of social conformity had a significant effect on the decision to spread fake news. This suggests that users’ perceived social conformity can be a factor that encourages the spread of fake news when it interacts with the user’s information processing system and the type of message source. For instance, when a message is repeatedly retweeted and then receives “Likes,” individuals’ perceived social conformity can increase, which could further encourage the spread of that message. Therefore, this study suggests the need to stop fake news early, before social conformity drives its further spread.

Third, based on the results of this study, fake news detection systems and strategies that comprehensively reflect the characteristics of users, content, and message providers can be proposed as follows. To prevent the spread of fake news, it is important to raise awareness and provide education so that users can preemptively identify fake news. Users’ psychological factors, such as the information processing system and perceived social conformity identified in our study, have an impact on the attitude toward adopting fake news. Since the media industry has been negatively affected by the spread of fake news, many efforts have been made to construct fake news detection systems. Our results indicate that such a system must reflect users’ awareness and social-psychological attitudes in order to prevent fake news from spreading. Because efforts to improve detection cannot reach 100% accuracy and cannot completely prevent users from spreading fake news, social media services need to develop automated messages that inform users of the likelihood that they are spreading fake news whenever they try to retweet it. For example, a tweet with too many “Retweets” and few “Likes” has a high probability of being fake news that a specific group is trying to spread by creating social conformity among users. If a user tries to retweet such a tweet, the system can alert the user that the tweet could be fake news.
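The alert proposed above can be sketched as a simple rule in Python. This is a hypothetical illustration of the idea only: the function name and both thresholds (`min_retweets` and `max_likes_per_retweet`) are assumptions chosen for the example and were not estimated or validated in this study.

```python
def should_warn_before_retweet(retweets, likes,
                               min_retweets=100, max_likes_per_retweet=0.5):
    """Flag tweets with many retweets but comparatively few likes.

    Both thresholds are hypothetical placeholders that a platform would
    need to calibrate against labeled data.
    """
    if retweets < min_retweets:
        return False  # too little engagement to judge the pattern
    return likes < max_likes_per_retweet * retweets


def warning_message(retweets, likes):
    """Return an alert string for suspicious tweets, otherwise None."""
    if should_warn_before_retweet(retweets, likes):
        return "This tweet shows an unusual sharing pattern and could be fake news."
    return None
```

A rule of this kind would complement, not replace, a content-based detection model, since engagement patterns alone cannot establish whether a message is false.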

Limitations and Future Research

This study has the following limitations. First, there was a limitation in the content of fake news. In this study, fake news was constructed based on COVID-19, which has been a topic of great interest worldwide regardless of age and gender. COVID-19 was selected as a research subject as it has become a topic of global attention with relevant fake news spreading rapidly. As COVID-19 is a topic receiving worldwide attention, it was expected that the participants in the experiment would be able to respond without difficulty to the questionnaire based on the content of the tweet messages provided. However, it is believed that the respondents’ sense of crisis and anxiety about COVID-19 was somewhat reflected in the results. That is, the result of the research could vary depending on the topic and scope of fake news. Hence, it is necessary to expand the scope of fake news to various topics that have indirect impacts on individuals’ lives, such as economics and social fields, as well as ones that directly affect individuals’ lives, such as COVID-19.

Second, in this study, information processing systems and social conformity were selected as the only two characteristics for examining users’ acceptance of and intent to spread fake news. However, future research needs to consider users’ cultural orientation and their prior understanding of information as factors affecting the acceptance of and intent to spread fake news. Users with a relatively sufficient understanding of certain subjects will be less prone to accepting and spreading fake news, as they will be able to identify it more easily. It is also known that users’ risk aversion is mainly caused by their cultural orientation (Van de Werfhorst & Hofstede, 2007). Therefore, future research needs to reflect users’ cultural characteristics, including their risk aversion. Furthermore, the effect of social conformity is expected to be greater in strongly collectivist cultures than in strongly individualistic cultures. In this study, users’ perceived social conformity did not show a main effect on either the acceptance of or the intent to spread fake news. However, if the study were conducted with users from strongly collectivist cultures, social conformity might show a main effect on the acceptance of and intent to spread fake news.

Third, this study selected Twitter as the social media platform that delivered fake news and developed experimental manipulations that looked identical to actual tweet messages. As Twitter had about 300 million users worldwide as of July 2020, according to a survey published by Statista, a global statistical research institute, it was deemed relatively safe to generalize the research results from Twitter to social media platforms as a whole. Nevertheless, the type of social media platform could affect the acceptance of and intent to spread fake news even if the message comes from the same individual. To reduce differences in research results stemming from the use of different social media platforms, this study limited its subjects to individuals with Twitter accounts. However, the results may differ from those of this study when instant messaging platforms such as WeChat and WhatsApp, which focus on real-time message sharing, and other social media such as Facebook, Instagram, and YouTube are targeted. Including Instagram and YouTube could produce different results, as these platforms, unlike Twitter, transmit messages centered on images and videos. Therefore, additional research is required to find out whether the type of social media platform affects individuals’ acceptance of and intent to spread fake news.

Finally, although a two-step manipulation check was performed in this study, the following limitations exist. This study started with the assumption that users’ attitudes would differ depending on the type of message provider shown in the stimulus. Therefore, in composing the stimuli, the type of message provider and the numbers of “Likes” and “Retweets” were fabricated. However, users may interpret tweets with relatively few “Likes” and “Retweets” as tweets that have only just been posted on the timeline, compared with tweets that have many. To prevent this from biasing participants or lowering the reliability of the experimental results, the fictitious posting date of “Oct. 24, 2020” was marked on all stimuli. Thus, regardless of the number of “Likes” and “Retweets,” users could confirm that all stimuli had been uploaded well before the experiment was conducted. This helps users understand that the content was created some time ago rather than just posted, even if it has very few “Likes” and “Retweets.” Nevertheless, depending on participants’ concentration and immersion in the experiment, they may differ in whether they check the posting date of the tweet. Therefore, future studies should add a question that checks whether the participant has confirmed the posting date of the tweet, to be answered before the other questions. In the past, when verifying the effect of a message variation on an outcome, a message manipulation check was generally not required in experimental research (O’Keefe, 2003). Today, by contrast, it is necessary to establish an experimental design and perform manipulation checks from a more systematic point of view, considering the diversity and the large number of participants.

This study examined the effects of type of message source, social conformity, and personal information processing system on the users’ acceptance and intent to spread fake news. It showed that users’ information processing system, social conformity, and type of message source had a significant effect on their attitudes toward fake news. Based on the results, it also proposed the need to consider the characteristics of information receivers to prevent the spread of fake news on social media in the future. Overall, this study suggested a way to prevent the generation and spread of fake news from the users’ point of view and provided a basis for understanding fake news by focusing on the social environment and characteristics of information receivers, which have been overlooked in previous studies.

Footnotes

Acknowledgements

Not applicable.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Ministry of Education of the Republic of Korea and the National Research Foundation of Korea (NRF-2020S1A5A2A01046634). The usual disclaimer applies.

Ethical Approval

An ethics statement (including the committee approval number) for animal and human studies. 146–9

Data Availability Statement

The data that support the findings of this study are not publicly available due to privacy restrictions. However, they are available from the corresponding author upon reasonable request.