Abstract

This study examined the impact of online peer assessment based on descriptive questions on student learning in Korea. The validity of the scores that middle and high school students assigned to their peers’ written descriptive responses was evaluated by comparing them with teacher grading. The study also investigated the impact of peer assessment activities based on descriptive questions on student learning, as well as students’ perceptions of peer assessment. First-year middle and high school students studying science were recruited. The results revealed a high level of agreement between the peer assessment scores of middle and high school students and teacher grades. The peer assessment activity based on descriptive questions enhanced students’ academic achievement, and students reported that it was effective for conceptual learning. This study confirmed the effectiveness of peer assessment activities based on descriptive questions for students’ conceptual learning.

Plain Language Summary

This research examines the efficiency, educational effectiveness, and student experience of an online descriptive peer evaluation process for middle and high school students. The results show that online peer evaluation is efficient for these students: they scored descriptive questions accurately using a designated scoring rubric, which made their evaluations highly reliable. The study also confirms that the process benefits students’ learning, particularly through writing sincere feedback. Students showed notable improvements in conceptual understanding, self-directedness, and writing skills through their engagement in the evaluation process. The approach was especially effective for building students’ comprehension of scientific concepts in middle school. Students also developed greater learning motivation and a stronger sense of community as a result of participating in the online peer evaluation process. While evaluating their peers’ responses, students became aware of their own areas for improvement, which led to observable gains in metacognition, particularly among high school students. These findings have significant implications for policies on online teaching, learning methods, and evaluation systems, especially in countries with highly competitive college entrance examinations.

Keywords

South Korea (henceforth, Korea) has a college enrollment rate of 72.5%, the highest among Organization for Economic Co-operation and Development (OECD) countries, and has consistently ranked at the top of the Program for International Student Assessment (PISA). These rankings reflect a high level of public interest in education. Widespread interest in higher education also drives fierce competition among students to perform well on college admission examinations. Eligibility for admission to Korean universities is determined by College Scholastic Ability Test (CSAT) scores and the results of school tests. Because school-level assessment scores significantly affect the admissions process, the reliability of school-level assessment is crucial. As most students and teachers are highly sensitive to these scores, teacher-centered assessment based on multiple-choice questions predominates in Korea.

In recent years, however, there has been a push to move away from multiple-choice questions, which test memorized knowledge, toward descriptive assessments that evaluate students’ critical-thinking skills. Yet many teachers avoid descriptive assessments because they are often responsible for more than 100 students at a time, which makes feedback and grading difficult. Moreover, the scoring rubrics of descriptive assessments attract more objections than multiple-choice questions do (Kim et al., 2019). Peer assessment can help overcome these obstacles by reducing teachers’ burden of assessment and feedback (Topping, 2003). Furthermore, advances in computer technology make it easier to conduct large numbers of peer assessments (Chen, 2016; Li et al., 2020).

Among the several benefits of peer assessment, its contribution to academic achievement is particularly significant (Double et al., 2020; Li et al., 2020; Sanchez et al., 2017). However, it cannot be claimed that peer assessment improves the academic achievement of all K–12 students. In general, learning gains are more pronounced in elementary and middle school (Double et al., 2020; Sanchez et al., 2017). In high school, gains are either absent for some students (Sanchez et al., 2017) or not significant (Double et al., 2020). However, because high school students are highly “grade-oriented” and consider peer assessment and grades crucial (Double et al., 2020), including a grading component may significantly improve their learning outcomes. That is, if peer review helps students improve their grades and their learning in general, high school students may experience similarly significant learning gains. Specifically, as peer review is primarily essay based (Zheng et al., 2020), formative assessments in the form of descriptive questions, such as those testing the understanding of scientific concepts, may be more effective for high school students. It would also be worth examining whether such assessment benefits middle school students, who are less grade oriented.

Peer review also has a positive impact on non-cognitive skills. In terms of the affective aspect, students enjoy learning through assessment-based activities in which they can participate directly (Tsivitanidou et al., 2011). Moreover, when students provide feedback while identifying areas for improvement, their self-efficacy, patience, and confidence are enhanced (Kovac & Sirkovic, 2012; Panadero et al., 2016). However, because the non-cognitive benefits of peer assessment stem from a sense of psychological security, it is unclear whether they hold in countries such as Korea, where the education system is intensely competitive (Panadero et al., 2016). In particular, the specific characteristics of Korean classrooms may produce results different from those of previous studies, such as more positive attitudes toward peer assessment and higher assessment validity (Cho et al., 2006). This is because prior studies on peer assessment did not consider cultural factors or classroom contexts (Li et al., 2020). Cachero et al. (2023) investigated the effect of students’ personalities on peer evaluation, but their participants were college students, and the study did not consider classroom atmosphere. Several studies have shown that students believe peer assessment to be occasionally unreliable and unfair (Kaufman & Schunn, 2011; Papinczak et al., 2007), and that the higher a student’s academic performance, the less they trust the scores their peers assign in descriptive evaluations (Paik & Ryu, 2014).

Thus, this study investigates the validity, learning outcomes, and cognitive and non-cognitive effects of online peer assessment based on descriptive questions that test conceptual understanding, for middle and high school students in Korea’s highly competitive educational environment. It aims to answer the following research questions:

Is online descriptive peer assessment valid for middle and high school students?

Is online descriptive peer assessment for middle and high school students effective for learning achievement?

What are students’ perceptions of peer review in the Korean classroom culture?

Theoretical Background

Impact of Peer Assessment on Academic Performance

The impact of peer assessment on cognitive learning is well researched. According to Panadero and Alqassab (2019), peer assessment encourages students’ autonomous learning and boosts their learning efficacy by giving them opportunities to focus on the learning content. Sanchez et al. (2017) showed that peer grading has a significant positive effect on the academic performance of primary and secondary school students, but not on that of students in tertiary education. In a meta-analysis of 54 papers, Double et al. (2020) showed that peer assessment had a positive impact on academic achievement in a variety of contexts from elementary to high school. Specifically, peer assessment benefits learning because evaluating others’ learning processes and outcomes prompts students to reflect on their own (Cheng & Warren, 1999; Chen & Tsai, 2009). Indeed, it can substitute for feedback from instructors who give feedback infrequently or only according to students’ level (Topping & Ehly, 2001). Thus, the value of peer assessment depends heavily on the quality of the feedback (Boud & Molloy, 2013).

Peer assessment is an effective way for students to offer feedback in a participatory manner (Topping, 2003). Students reflect on their learning while providing feedback to others and simultaneously improve their learning by incorporating feedback from peer evaluators (Patchan et al., 2016). However, concerns remain, such as whether young pupils can provide quality feedback on descriptive questions, and the substantial variation in feedback quality depending on the reviewer’s subjectivity and literacy (Alqassab et al., 2018; Wanner & Palmer, 2018).

To offset the disadvantages of peer assessment caused by evaluators’ limited ability, multiple evaluators can be engaged; because they review the responses from different perspectives, this benefits the feedback process (Cho & Schunn, 2007; Li et al., 2020). Moreover, rubrics can increase the positive effects of peer evaluation and make it more accurate (Panadero et al., 2013).
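The benefit of engaging multiple evaluators can be made concrete with a simple classical measurement model. This model is an assumption added for illustration, not part of the cited studies: each of the k peer ratings X_j of a response is taken to be the response’s true score T plus an independent rating error e_j with variance σ_e². Averaging the ratings then divides the error variance by k.

```latex
\begin{aligned}
X_j &= T + e_j, \qquad \mathbb{E}[e_j] = 0, \quad \operatorname{Var}(e_j) = \sigma_e^2, \quad j = 1, \dots, k \\
\bar{X} &= \frac{1}{k}\sum_{j=1}^{k} X_j = T + \frac{1}{k}\sum_{j=1}^{k} e_j \\
\operatorname{Var}\!\left(\bar{X} \mid T\right) &= \frac{1}{k^{2}}\sum_{j=1}^{k} \operatorname{Var}(e_j) = \frac{\sigma_e^2}{k}
\end{aligned}
```

With k = 5 ratings per response, the error variance of the averaged score would be one fifth of a single rater’s under these assumptions. In practice, rating errors are not fully independent (for example, several peers may share the same misconception), so the actual gain is likely smaller.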

Validity of Peer Assessment for Descriptive Questions

Li et al. (2016) evaluated the agreement between peer and teacher evaluations using data from studies published between 1999 and 2015 and found a correlation coefficient of .61. Sanchez et al. (2017) conducted a meta-analysis of peer assessment from elementary to high school and found that the average correlation was

Familiarity is one factor that influences the reliability of peer assessment (Falchikov & Goldfinch, 2000); conducting peer assessment of unfamiliar tasks can reduce its validity. Because Korean students are unfamiliar with descriptive questions, city and provincial education offices prepared a plan to extend descriptive evaluation from elementary to high school, and this plan had been implemented in 40% of Korean schools by 2014. However, despite the government’s efforts, this percentage has remained unchanged nearly a decade later. A major reason descriptive assessments have not become widespread is the difficulty of grading them (Kim et al., 2019). Although valid peer assessment could reduce this grading burden, most students and teachers believe that peer assessment is unreliable (Kaufman & Schunn, 2011). Thus, peer assessment activities in Korea remain limited, and descriptive peer assessment is conducted infrequently (Park & Park, 2018). Therefore, the validity of the evaluation must be examined when peer assessment is conducted on unfamiliar descriptive questions.

Cultural Dependence of Peer Assessment Effect

Studies have investigated the impact of peer assessment on various non-cognitive skills. For instance, Sanchez et al. (2017) noted that peer assessment improved students’ metacognition, motivated their learning, and fostered social competencies (e.g., communication, teamwork, and the ability to compromise). Peer assessment has also been shown to promote reflective thinking (Tricio et al., 2016) and build confidence (Kovac & Sirkovic, 2012). However, Li et al. (2020) reported contradictory results regarding the effects of peer evaluation.

As the impact of peer assessment varies with culture and context (Li et al., 2020), its effects on learning must be examined in settings such as Korea, where competition among students is intense. It is therefore necessary to analyze findings from peer assessment research conducted under similar classroom conditions and in the same subject.

Song (2006) conducted peer assessment using descriptive evaluation questions with first-year high school students studying biological sciences. The teacher briefly explained the solutions and scoring procedures; the students then exchanged answers with three peers, scored them, and added their scores to those of the preceding evaluators. Observing that the students’ interaction was not smooth, the researcher adjusted the procedure so that each small group discussed one item at a time to assign scores and note feedback. However, the small group activities did not improve significantly. The study concluded that giving students opportunities to explain the content systematically, or to correct misconceptions to some extent through interaction with competent peers, would be effective.

Baek and Ryu (2014) conducted a descriptive peer assessment study with heterogeneous groups of second-year high school students. For 10 weeks, the students performed peer assessment for 20 minutes every day, engaged in group discussions, established standards, and finally revised and submitted their answers according to those standards. This design is similar to that of Song (2006), and both studies examined changes in students’ attitudes toward, interest in, and perceptions of science. Baek and Ryu (2014) found that low-performing students trusted peer evaluation and perceived it positively, whereas high-performing students perceived it negatively because they did not trust their peers.

Peer Evaluation Encouraging Reflection

Peer Evaluation Encouraging Reflection (PEER) is a system that optimizes the peer assessment of descriptive questions. It helps students deepen their understanding by prompting reflection while they evaluate others’ responses: scoring multiple peer responses to questions they have already answered gives students additional opportunities to refine their understanding of the concepts. The results of peer assessment are then organized individually and returned to each student, providing another opportunity for reflection. Thus, students have two opportunities for reflection in the peer assessment process.

PEER makes peer assessment easier for teachers, who are the primary users of the system. Student responses collected through various channels (e.g., Google Forms or a learning management system), together with each student’s email address, are saved in a Microsoft Excel file. The teacher uploads this file to PEER, specifies the number of responses each student should score, and the system creates an assessment sheet for each student. The peer responses assigned to each evaluator are selected randomly and never include the evaluator’s own response. The evaluation sheet specifies the scoring method, and the scoring guidance is based on a table created by the teacher. Scorecards can also be designed in various ways depending on the characteristics of the items and the teacher’s intentions.
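The assignment step described above can be sketched as follows. This is a minimal illustration, not PEER’s actual implementation; the function name `assign_peers` and the rotation scheme are assumptions. Shuffling the roster and giving each student the next k students in the shuffled order guarantees that no one scores their own response and, as an added balancing choice not stated in the source, that every response is scored exactly k times.

```python
import random
from collections import Counter


def assign_peers(student_ids, k, seed=None):
    """Hypothetical sketch: give each student k distinct peers' responses to score.

    Uses a shuffled rotation so that nobody scores their own response and each
    response is scored exactly k times. PEER's real algorithm may differ.
    """
    n = len(student_ids)
    if len(set(student_ids)) != n:
        raise ValueError("student IDs must be unique")
    if not 0 < k < n:
        raise ValueError("k must be at least 1 and smaller than the number of students")
    order = list(student_ids)
    random.Random(seed).shuffle(order)  # random order of the roster
    # The reviewer at position i scores the students at positions i+1, ..., i+k (wrapping around)
    return {order[i]: [order[(i + j) % n] for j in range(1, k + 1)] for i in range(n)}


if __name__ == "__main__":
    sheets = assign_peers([f"S{i:03d}" for i in range(1, 11)], k=5, seed=42)
    times_reviewed = Counter(author for peers in sheets.values() for author in peers)
    print(sheets["S001"])  # the five peers whose responses S001 will score
    print(set(times_reviewed.values()))  # {5}: every response is scored k = 5 times
```

A fully independent random draw per student would also satisfy “randomly selected,” but it can leave some responses with many ratings and others with few; the rotation keeps the number of ratings per response equal.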

Because this study conducted descriptive peer assessment, an overall scoring method was used to score each response. Students were asked to assign scores according to the grading standards provided by the teacher. In the PEER system, a score field is displayed for each response, with space below it for qualitative feedback. In other words, the evaluator assigns a score based on the scoring criteria and offers feedback following the teacher’s guidance. In this study, the feedback prompt was “Explain what is lacking in the response.” The teacher can set this guidance freely; for example, students can be instructed to note the positive and creative points in a response. The teacher can also instruct students to score quantitatively without giving such feedback.

If the teacher decides that each student should examine five responses, students must analyze five responses per question. The appropriate number may depend on factors such as learners’ cognitive level, response length, and the number of questions. However, when fewer evaluations are made, chance may affect the results. In this study, seventh-grade middle school students evaluated responses to a questionnaire comprising eight questions. Each student evaluated the responses of five peers, that is, 40 responses in total. As the scoring standard differed for each question, it was important for students to understand the scoring for all eight questions. Similarly, 10th-grade high school students evaluated 60 responses (five peers’ responses to a questionnaire comprising 12 questions).

Research Methodology

Participants

First-year middle school and high school students participated in the peer assessment using the PEER system. The participating middle school is a coeducational school with over 1,000 students, located in a small city near Seoul. Its academic level was judged to be upper-intermediate among Korean middle schools, and both parental interest in education and household economic status were upper-intermediate. The high school is a large coeducational school in a provincial city with more than 700 students. Its academic level was classified as high, and both parental interest in education and household economic status were upper-intermediate.

Both schools held their end-of-semester examinations a few days before the start of the summer break on July 20, 2022. Although the middle school exercise aimed to review what students had learned over the previous months, students were not very enthusiastic because the summer break was approaching. The participation rate was high because the activity took place in class under the teacher’s supervision; however, as the activity was not graded, students were under no pressure to achieve a good score. The high school informed its students that participation would be reflected in their September grades. Many students engaged in the activity because it was conducted in class under the teacher’s supervision and was graded. In particular, the high school students responded more accurately because of the teacher’s supervision.

A total of 198 middle school students took the pre-test, and 145 took the post-test. Of these, 136 students completed the entire process (pre-test, peer assessment, and post-test): 75 (55.1%) were male and 61 (44.9%) were female. Among the high school students, 202 took the pre-test and 171 took the post-test, but only 113 completed the entire process: 56 male students, 56 female students, and one student whose gender was not disclosed.

Peer Assessment Process

In this study, peer assessment comprised four phases: pre-test, peer assessment, feedback, and post-test (Figure 1). Both the middle and high school students received a pre-test form consisting of descriptive questions. The pre-test was administered online through Google Forms on computers assigned to each student by the school; following the teacher’s guidance, students accessed the form and completed the pre-test. Next, the students’ peer assessment dataset and evaluation sheets were created in PEER and sent to each student through a unique link, after which the students began the peer assessment process. In this second phase, each student conducted peer assessment using their own evaluation sheet. Individual reports were then generated from the outcomes of the peer evaluation; each report contained the average score the student’s responses received, along with the peers’ comments, and was printed and given to the student. The post-test used to measure achievement consisted of the same questions as those used in the peer evaluation.

Figure 1. Process of peer assessment.
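The report-generation step can be sketched as below. This sketch assumes that each peer rating is stored as a `(reviewer, author, question, score, comment)` record; this record layout and the name `build_reports` are hypothetical, not PEER’s documented format. Reviewer IDs are read but deliberately left out of the reports, which keeps the feedback anonymous.

```python
from collections import defaultdict
from statistics import mean


def build_reports(ratings):
    """Hypothetical sketch: aggregate peer ratings into one report per student.

    ratings: iterable of (reviewer, author, question, score, comment) tuples.
    Returns {author: {question: {"mean_score": number, "comments": [str, ...]}}}.
    """
    scores = defaultdict(lambda: defaultdict(list))
    comments = defaultdict(lambda: defaultdict(list))
    for _reviewer, author, question, score, comment in ratings:
        scores[author][question].append(score)
        if comment.strip():  # skip empty feedback boxes
            comments[author][question].append(comment.strip())
    # Reviewer identities are not copied into the report, so feedback stays anonymous
    return {
        author: {
            q: {"mean_score": mean(s), "comments": list(comments[author][q])}
            for q, s in by_question.items()
        }
        for author, by_question in scores.items()
    }
```

For example, two ratings of student S2’s answer to Q1 with scores 2 and 4 produce a report entry for S2 and Q1 with a mean score of 3 and only the non-empty comment.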

Because students participate more actively in peer assessment when anonymity is guaranteed (Nicol, 2011), the peer assessment process in this study was anonymous. The post-test was administered 1 week after the peer assessment to measure learning outcomes accurately. According to Jeffery et al. (2016), peer assessment among undergraduate students requires at least three peer reviewers to be effective. In this study, five peer assessments were conducted per student to support formative learning through the assessment and feedback process. After the pre-test, students answered six questions rated on a five-point Likert scale and one open-ended question about their perceptions of peer evaluation.

Data Analysis

To address the first research question, the correlation between students’ peer assessment scores and the scores assigned by the teacher was analyzed using Pearson’s correlation coefficient. The mean of the peer scores given to each response served as the representative peer score. The resulting correlation between peer assessment scores and teacher scores was compared with the correlations reported in previous related studies.
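The agreement analysis can be sketched as follows: average the peer ratings each response received, then compute Pearson’s r against the teacher’s (or expert’s) score for the same responses. The dictionary layout and the function names are illustrative assumptions. `statistics.correlation` (Python 3.10+) would give the same r; the formula is written out here for transparency.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def peer_teacher_agreement(peer_ratings, teacher_scores):
    """Hypothetical helper.

    peer_ratings: {response_id: [peer score, ...]}
    teacher_scores: {response_id: teacher score}
    The mean peer rating serves as the representative peer score of each response.
    """
    ids = sorted(teacher_scores)
    peer_means = [mean(peer_ratings[i]) for i in ids]
    teacher = [teacher_scores[i] for i in ids]
    return pearson_r(peer_means, teacher)
```

Averaging before correlating matches the description above; correlating every individual rating with the teacher score instead would typically give a lower r, because single ratings are noisier.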

Because the items used different scoring scales, a composite score suitable for statistical analysis was generated using the partial credit Rasch model. In this process, item fit was checked with mean-square (MNSQ) statistics for the eight middle school items and the 12 high school items. For the middle school items, the Infit MNSQ ranged from 0.805 to 1.388 and the Outfit MNSQ from 0.692 to 2.043, and Cronbach’s α, a measure of internal consistency reliability, was .827. For the 12 high school items, the Infit MNSQ ranged from 0.792 to 1.242 and the Outfit MNSQ from 0.665 to 1.337, and Cronbach’s α was .763. Overall, the students’ responses to the evaluation tasks were appropriate.
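The partial credit Rasch model requires dedicated estimation software and is not reproduced here, but the internal-consistency figure reported above, Cronbach’s α, follows a short formula: α = k/(k − 1) × (1 − Σ item variances / variance of total scores). A minimal sketch, assuming a complete student-by-item score matrix with no missing responses:

```python
from statistics import variance


def cronbach_alpha(scores):
    """Cronbach's alpha for a complete score matrix.

    scores: list of rows, one per student, each row holding that student's item scores.
    """
    k = len(scores[0])
    if k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need at least two items and equal-length rows")
    items = list(zip(*scores))  # transpose: one tuple of scores per item
    item_var_sum = sum(variance(item) for item in items)
    total_var = variance([sum(row) for row in scores])  # variance of each student's total
    return k / (k - 1) * (1 - item_var_sum / total_var)
```

Sample variances are used for both the items and the total; any consistent choice gives the same α because only their ratio enters the formula.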

To address the second research question, several analyses were conducted. First, a repeated-measures analysis of variance (ANOVA) was conducted to determine whether peer assessment activities improved academic achievement. This analysis also examined whether the effect differed by gender and grade level. If the effectiveness of peer assessment did not vary across genders and grade levels, this would suggest that the peer assessment process is unbiased.
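With only two measurement occasions, a repeated-measures ANOVA with one between-subjects factor (such as gender) can be computed from each student’s gain score d = post − pre. The time × group interaction is a one-way ANOVA on d, and the time main effect tests whether the average gain differs from zero. Both use error degrees of freedom N − g (for example, 136 − 2 = 134 for the middle school sample with gender as the factor). The sketch below uses unweighted group means for the time effect, which corresponds to the Type III (unweighted-means) test when group sizes differ. It is an illustration under these assumptions; p-values would still require an F distribution (e.g., from SciPy).

```python
from statistics import mean


def mixed_anova_two_timepoints(pre, post, groups):
    """Hypothetical sketch: 2 (time) x g (between-subjects group) repeated-measures ANOVA.

    With two time points, both within-subject tests reduce to tests on the
    gain score d = post - pre. Returns {effect: (F, df1, df2)}.
    """
    gains_by_group = {}
    for a, b, g in zip(pre, post, groups):
        gains_by_group.setdefault(g, []).append(b - a)
    n_total = sum(len(d) for d in gains_by_group.values())
    k = len(gains_by_group)
    df_error = n_total - k
    if k < 2 or df_error < 1:
        raise ValueError("need at least two groups and more students than groups")
    group_means = {g: mean(d) for g, d in gains_by_group.items()}
    # Error term: pooled within-group variance of the gains
    ss_within = sum((x - group_means[g]) ** 2 for g, d in gains_by_group.items() for x in d)
    mse = ss_within / df_error
    # Time main effect: is the unweighted mean of the group mean gains zero?
    contrast = mean(list(group_means.values()))
    var_contrast = mse * sum(1 / len(d) for d in gains_by_group.values()) / k ** 2
    f_time = contrast ** 2 / var_contrast
    # Time x group interaction: one-way ANOVA on the gains
    grand = mean([x for d in gains_by_group.values() for x in d])
    ss_between = sum(len(d) * (group_means[g] - grand) ** 2 for g, d in gains_by_group.items())
    f_interaction = (ss_between / (k - 1)) / mse
    return {"time": (f_time, 1, df_error), "time_x_group": (f_interaction, k - 1, df_error)}
```

Working with gain scores keeps the computation transparent: a large time F with a small interaction F is the pattern that would indicate peer assessment helped both groups to a similar degree.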

A paired-samples t-test was also conducted on each question to examine the mean difference between pre-test scores and scores after peer evaluation. This analysis aimed to identify which items showed the largest differences.
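The per-question comparison can be sketched as a paired-samples t statistic plus a standardized effect size. The source does not state which effect-size formula was used; this sketch assumes d_z, the mean gain divided by the standard deviation of the gains (so t = d_z·√n). Other conventions, such as dividing by the pooled pre/post standard deviation, give different values. The helper names are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def paired_effect(pre, post):
    """Return (t, df, d_z) for paired pre/post scores of the same students."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need paired scores for at least two students")
    gains = [b - a for a, b in zip(pre, post)]
    d_z = mean(gains) / stdev(gains)  # standardized mean gain (assumed effect-size definition)
    t = d_z * sqrt(len(gains))  # paired t statistic, equal to mean / (sd / sqrt(n))
    return t, len(gains) - 1, d_z


def largest_gain(items):
    """items: {question: (pre_scores, post_scores)} -> question with the largest d_z."""
    return max(items, key=lambda q: paired_effect(*items[q])[2])
```

The p-value for t with n − 1 degrees of freedom would come from a t distribution, for example via SciPy’s `ttest_rel`, which computes the same statistic.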

Next, the responses were analyzed to examine whether the feedback students received through peer assessment positively influenced their own responses. First, words that a student used after the peer assessment but had not written before were identified. We then checked whether these words appeared in the peer feedback the student had received. Words meeting both conditions were presumed to have been incorporated into the response through the feedback process, even though the student had not initially considered them.

An expert with a teaching certificate graded all student responses according to the scoring criteria for each question. First, the correlation between student peer assessment scores and the expert’s grades was computed. This correlation analysis aimed not to establish the accuracy of the peer assessment scores per se, but to assess how faithfully the students performed the evaluation according to the criteria. Furthermore, pre- and post-test scores were compared to verify whether the students had gained knowledge through the peer assessment activities. The responses to each question in the pre- and post-tests were compared using a paired-samples t-test.

A keyword analysis was performed to examine in more detail whether peer assessment feedback contributed to learning. It counted how often important keywords related to the correct answer were absent from a student’s pre-test, appeared in the feedback provided by a peer, and then appeared in the student’s post-test. When a keyword followed this pattern, the feedback from the peer assessment was assumed to have likely caused its appearance.
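The keyword criterion can be expressed as a simple membership test: a keyword counts as feedback-mediated for a student if it is absent from the pre-test answer, present in at least one feedback comment the student received, and present in the post-test answer. This sketch assumes plain case-insensitive substring matching and computes the share over all students; the study’s exact matching rules are not described, and real Korean responses would likely need morphological analysis or synonym lists. The function names and dictionary keys are hypothetical.

```python
def mediated_keywords(pre, feedback, post, keywords):
    """Keywords absent from the pre-test, present in received feedback, and present in the post-test."""
    pre_l, post_l = pre.lower(), post.lower()
    fb_l = [f.lower() for f in feedback]
    return [
        kw for kw in keywords
        if kw.lower() not in pre_l
        and any(kw.lower() in f for f in fb_l)
        and kw.lower() in post_l
    ]


def mediated_share(students, keyword):
    """Percentage of students for whom `keyword` was feedback-mediated.

    students: list of dicts with hypothetical keys "pre", "feedback" (list of str), "post".
    """
    hits = sum(
        1 for s in students
        if mediated_keywords(s["pre"], s["feedback"], s["post"], [keyword])
    )
    return 100 * hits / len(students)
```

Substring matching is deliberately loose: in Korean, particles attach directly to nouns, so a keyword followed by a particle still matches, but unrelated words containing the keyword would also match and should be checked by hand.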

To understand students’ perceptions of peer assessment, the post-test included six multiple-choice questions and one open-ended question about how students felt about the peer assessment activities. These questions were developed by two science education experts. The multiple-choice questions addressed topics such as perceptions of the online format, whether peer assessment helped students learn concepts, the rubrics, and feedback. Students’ perceptions of peer assessment were determined from their responses on a 5-point Likert scale, and the discussion focused on the ratio of the two responses,
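The text does not specify which two responses were combined. A common choice, assumed here, is the top-two-box share: the percentage of students choosing 4 (agree) or 5 (strongly agree) on the 5-point scale. Averaging that share across items gives a per-school summary like the averages reported in the results. The function names are hypothetical.

```python
from statistics import mean


def top_two_box(responses, scale_max=5):
    """Percentage of valid responses in the two highest Likert categories."""
    valid = [r for r in responses if 1 <= r <= scale_max]  # out-of-range codes treated as missing
    if not valid:
        raise ValueError("no valid responses")
    positive = sum(1 for r in valid if r >= scale_max - 1)
    return 100 * positive / len(valid)


def average_positive(items, scale_max=5):
    """items: {question: [responses]} -> mean top-two-box percentage across questions."""
    return mean([top_two_box(r, scale_max) for r in items.values()])
```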

Results

Accuracy and Validity of Peer Evaluation

Under the PEER system, students assign quantitative scores to their peers’ responses and provide feedback identifying the shortcomings of each response. Because students may differ in how faithfully they performed the peer evaluation, two analyses were conducted to confirm the accuracy and sincerity of their peer assessment activities. First, the average of the students’ scores was correlated with the expert’s score. For the middle school students, the correlation between peer assessment and expert grading was statistically significant at the .001 level for all eight questions, and the

A similar level of correlation was observed among the high school students. The correlations for all 12 questions were significant at the .001 level, and the

Second, the average number of characters in the comments students wrote on their peers’ responses was computed, counting spaces and special characters. For the middle school students, the average comment length across the eight questions was 17.1 characters (21.2, 21.4, 17.2, 21.5, 13.5, 9.6, 14.1, and 18.3 characters per question). The high school students’ feedback averaged 16.4 characters, with per-question lengths of 19.7, 26.6, 19.5, 24.0, 10.6, 13.0, 10.8, 12.6, 14.7, 11.7, 15.1, and 18.9 characters. Because each student evaluated five peers, they wrote five such comments per question.
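Because comment length was counted in characters including spaces and special characters, Python’s `len()` is a natural fit: it counts Unicode code points, so each precomposed Hangul syllable, space, and punctuation mark counts as one character. Input typed as decomposed jamo would be counted differently, which is an assumption worth checking on real data. The sketch also confirms that the overall averages above are the means of the per-question values; `mean_comment_length` is a hypothetical helper name.

```python
from statistics import mean


def mean_comment_length(comments):
    """Average comment length in characters, spaces and punctuation included."""
    return mean([len(c) for c in comments])


# Per-question averages reported above
middle_school = [21.2, 21.4, 17.2, 21.5, 13.5, 9.6, 14.1, 18.3]
high_school = [19.7, 26.6, 19.5, 24.0, 10.6, 13.0, 10.8, 12.6, 14.7, 11.7, 15.1, 18.9]

if __name__ == "__main__":
    print(round(mean(middle_school), 1))  # 17.1
    print(round(mean(high_school), 1))  # 16.4
```

Averaging the per-question means equals averaging all comments only when every question received the same number of comments, which holds here because each student wrote five comments per question.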

Changes in the Scientific Concept Score After Peer Assessment Activities

This study investigated whether peer assessment activities and feedback affected student learning. Both the pre- and post-tests were carefully graded by professionals. To generate a total score, Rasch analysis was performed, and the Rasch person measure was used as the composite score. To test for a gender effect, a repeated-measures ANOVA was performed with gender as a between-subjects variable (Figure 2). The middle school students showed a significant pre-test–post-test effect (F[1, 134] = 250.66,

Figure 2. Changes in Pre- and Post-Test Scores of Students by Gender and School Level

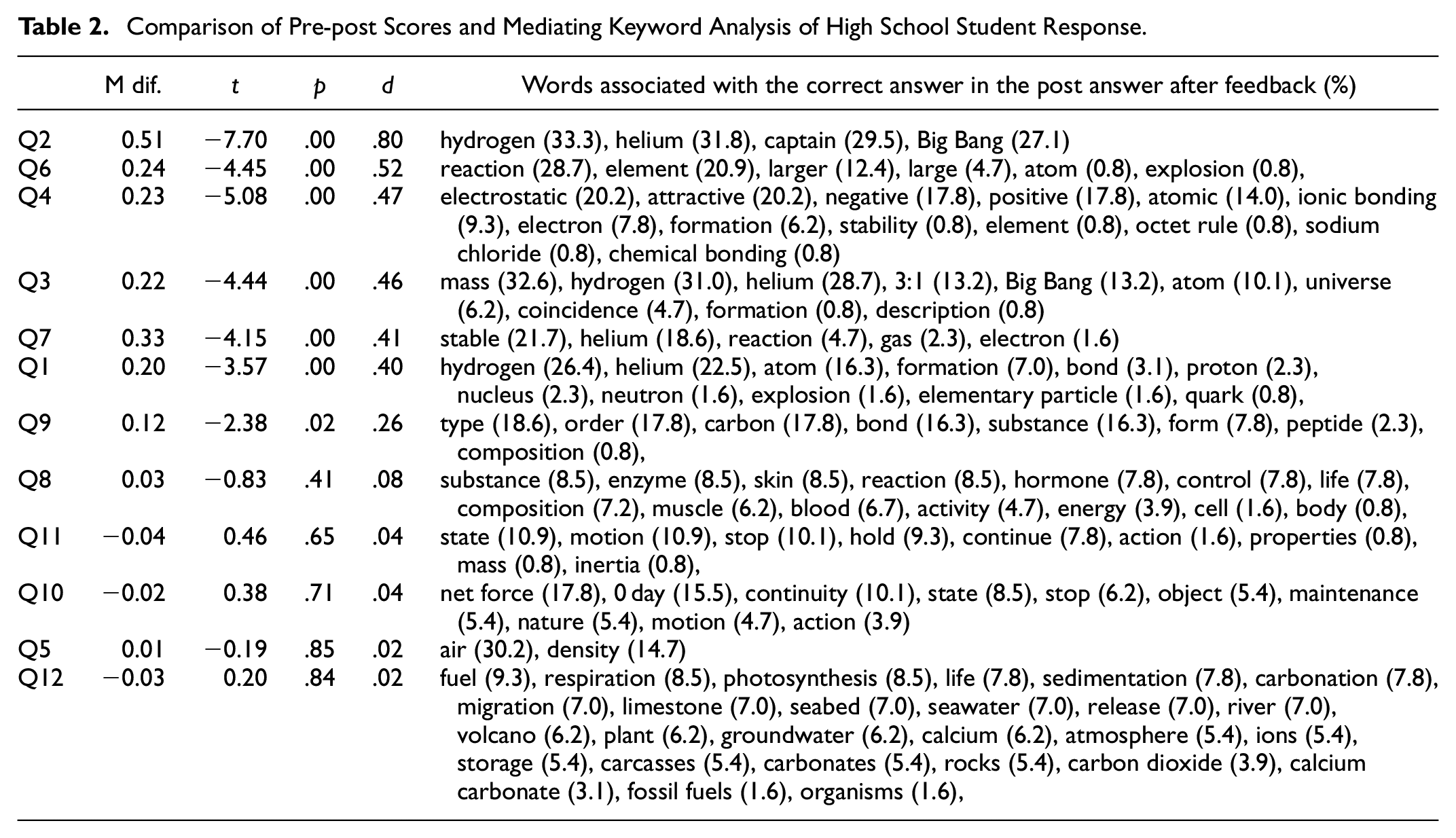

Table 1 compares the pre- and post-test results for each question. The results of two analyses are presented. The result of the paired sample

Table 1. Comparison of Pre-Post Scores and Mediating Keyword Analysis of Middle School Students’ Responses.

For the middle school students, peer assessment produced the greatest learning benefit in Question 1, with an effect size of 1.97. Four questions had effect sizes of 1 or more, and six had effect sizes of 0.8 or more. For comparison, Hattie (2008) identified 0.40 as the average effect size of educational interventions. Many keywords from the grading criteria were absent from the pre-test but appeared in the post-test after the feedback. For example, 70% of students did not use the word “pressure” in their pre-test responses but did so in the post-test (see the numbers in parentheses in Table 1). This suggests that peer feedback correctly identified weaknesses in students’ pre-test responses, allowing the students to address them in the post-test.

For the high school students, peer assessment produced the largest learning effect in Question 2, with a large effect size of 0.80. Effect sizes were generally lower for the high school students than for the middle school students: six items, half of the total, had effect sizes of 0.4 or higher. Here too, many keywords from the grading criteria were absent from the pre-test but appeared in the post-test after the feedback. In Q2, approximately 33% of the students did not initially use “hydrogen” but included it in the post-test after it appeared in the feedback they received (see the numbers in parentheses in Table 2).

Table 2. Comparison of Pre-Post Scores and Mediating Keyword Analysis of High School Students’ Responses.

Students’ Thoughts on Peer Assessment Activities

The middle and high school students’ responses show that they were generally positive about the cognitive effects of peer assessment (Table 3). Reviewing their peers’ responses helped students at both levels determine which concepts were important. Furthermore, they learned how to write descriptive feedback by examining the evaluation criteria. Middle school students responded more favorably (73.2% positive on average) than high school students (64.9%). Students rated the educational benefit of reading their peers’ answers higher than that of understanding the evaluation criteria.

Table 3. Analysis of Students’ Perceptions of Peer Assessment (Likert-Scale Questions).

Both cognitive and non-cognitive benefits emerged from students’ answers to the open-ended question about their feelings regarding peer evaluation (Table 4). Middle and high school students considered detecting their own weaknesses to be the most significant cognitive advantage of peer evaluation. Learning how to write descriptive answers was also useful; however, middle school students, who had less skill in writing descriptive answers, experienced this effect more than high school students did.

It seemed that the children surprisingly lacked basic skills. Additionally, while I was evaluating the responses of my friends, I found the part that I had mistaken and misunderstood; so, I wrote the correct answer this time. I think it was a good experience in many ways because my writing skills improved while providing feedback. (A middle school student’s comment)

Regarding the non-cognitive effects of peer evaluation, both middle and high school students recognized the complexities and usefulness of the descriptive evaluation system and agreed that descriptive online peer assessment enhances learning motivation. Many students at both levels also reported developing social competencies. According to the students, peer evaluation allowed them to recognize the differences between themselves and their peers. Consequently, they came to appreciate and care for their peers, understand the concept of community, and value collaboration. In particular, middle school students’ perceptions of their peers improved as peer assessment helped them understand their “colleagues” and realize that these peers were not very different from themselves.

It was good to see the answers of my friends who thought differently and those who thought like me. It was good to broaden my perspective on understanding the problem. (A high school student’s comment)

High school students reported various non-cognitive effects more often than middle school students did. In general, students also underwent several internal changes, including greater meta-awareness.

Students’ Perceptions of Peer Assessment.

*The numbers indicate the percentage of students whose opinions corresponded to each primary code.

Discussion

Is Online Descriptive Peer Assessment Valid for Middle and High School Students?

This study shows that online descriptive peer reviews have high validity for middle and high school students, with a correlation of

In terms of methodology, previous studies have found that computer-based peer evaluations correlate with teacher ratings either much more weakly than paper-based peer evaluations do (Li et al., 2016) or not at all (Double et al., 2020). By contrast, this study found that computer-based peer assessment scores were highly correlated with teacher grades. Online peer review has been found to be similar to offline peer review (van Popta et al., 2017), and recent meta-analytic evidence indicates that although online technology does not significantly affect self-assessment, it has a positive effect on peer assessment, which requires interaction with several people (Chen, 2016; Li et al., 2020).

There are two possible explanations for the high correlation between online descriptive peer assessment scores and teacher grades. First, grading was not difficult for the students because the rubric was clear and detailed. In general, rubrics in peer assessment take different forms, making their effectiveness difficult to predict and leading some researchers to conclude that they do not have a significant impact (Double et al., 2020). However, the results of this study support existing research suggesting that accurate and detailed rubrics improve the validity of peer assessment (Panadero et al., 2013; Panadero & Jonsson, 2013). Detailed rubrics can therefore help stabilize the quality of peer assessment, which otherwise varies with student ability (Alqassab et al., 2018; Wanner & Palmer, 2018).

Second, the validity of peer assessment varies with the type of question and the characteristics of the subject. In this study, peer assessment used descriptive questions about scientific knowledge, which require students to apply memorized knowledge to write an answer rather than to write down their thoughts freely. This is consistent with Sadler and Good (2006), who used 60% descriptive and 40% short-answer questions in a middle school science class and found a correlation coefficient of .91. The high correlation coefficient in this study can likewise be attributed to the nature of a formative assessment of scientific concepts in a learning context.
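The validity argument in this section rests on how closely peer-assigned scores track teacher-assigned scores. As a minimal sketch, assuming each response's peer score is the simple mean of the five peer ratings it received (an assumption; the aggregation rule is not stated here), the agreement check could be computed as a Pearson correlation. The function names `aggregate_peer_scores` and `pearson_r` and all scores below are hypothetical illustrations, not the study's procedure or data.

```python
from math import sqrt
from statistics import mean


def aggregate_peer_scores(peer_ratings: list[list[float]]) -> list[float]:
    """Collapse each response's peer ratings into one score.

    Hypothetical aggregation rule: the simple mean over all raters.
    """
    return [mean(ratings) for ratings in peer_ratings]


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson correlation between two equal-length lists of scores."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least 2 items")
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("a score list has zero variance; r is undefined")
    return cov / (sx * sy)


if __name__ == "__main__":
    # Toy illustration only: five hypothetical peer ratings per response
    # on a hypothetical 0-4 rubric, plus one teacher score per response.
    peer = [[4, 4, 3, 4, 4], [2, 1, 2, 2, 1], [3, 3, 3, 2, 3], [0, 1, 0, 0, 1]]
    teacher = [4, 2, 3, 0]
    r = pearson_r(aggregate_peer_scores(peer), teacher)
    print(f"peer-teacher r = {r:.2f}")
```

Averaging several raters before correlating is one common way to dampen individual rater noise; a study could instead correlate individual ratings or use an intraclass correlation, so this sketch shows only one possible design.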

Is Online Descriptive Peer Assessment for Middle and High School Students Effective for Learning Achievement?

This study shows that online descriptive peer assessment is an effective teaching and learning method for understanding scientific concepts. The partial credit Rasch analysis showed a significant pre-post improvement in scores, and students’ use of scientific terminology improved significantly. After completing the online descriptive peer assessment, the students reread the questions, identified gaps in their knowledge through the feedback, and composed appropriate responses by reusing the scientific phrases they had used when assessing their peers. This study also shows that online peer assessment positively affects learning outcomes for both middle and high school students, contradicting previous research suggesting that high school students do not benefit from peer review (Double et al., 2020; Sanchez et al., 2017).
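For readers unfamiliar with the partial credit Rasch model mentioned above, the following minimal Python sketch shows how Masters' partial credit model assigns probabilities to the score categories of a polytomously scored item, given a person ability θ (`theta`) and step difficulties δ. It illustrates only the model's category probabilities; it is not the estimation procedure or software used in this study, and the step difficulties and ability values are hypothetical.

```python
from math import exp


def pcm_category_probs(theta: float, step_difficulties: list[float]) -> list[float]:
    """Category probabilities under Masters' partial credit model.

    For an item scored 0..m with step difficulties d_1..d_m, the probability
    of score x is proportional to exp(sum_{k=1..x} (theta - d_k)); the empty
    sum for x = 0 is defined as 0, so its numerator is exp(0) = 1.
    """
    numerators = [1.0]  # score 0
    cumulative = 0.0
    for d in step_difficulties:
        cumulative += theta - d  # running sum of (theta - d_k)
        numerators.append(exp(cumulative))
    total = sum(numerators)
    return [n / total for n in numerators]


def expected_score(theta: float, step_difficulties: list[float]) -> float:
    """Model-implied expected item score at ability theta."""
    probs = pcm_category_probs(theta, step_difficulties)
    return sum(x * p for x, p in enumerate(probs))


if __name__ == "__main__":
    # Hypothetical 0-3 item with three step difficulties (in logits).
    steps = [-1.0, 0.0, 1.5]
    for theta in (-1.0, 0.0, 1.0):  # hypothetical lower vs higher ability
        probs = [round(p, 3) for p in pcm_category_probs(theta, steps)]
        print(theta, probs, round(expected_score(theta, steps), 2))
```

Under this model, a higher ability estimate shifts probability toward higher score categories, which is one way such an analysis can express pre-post change on a common logit scale; estimating θ and δ from real response data requires dedicated Rasch software and is outside the scope of this sketch.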

The first reason for the significant increase in academic achievement among the middle and high school students in this study is that they received multiple pieces of high-quality feedback. The positive effects of peer assessment on academic achievement have been documented in several studies (Boud & Molloy, 2013; Patchan et al., 2016; Topping, 2003, 2017). The feedback was of high quality because the assessment centered on learning scientific concepts, and the detailed rubrics provided by the teacher allowed students to give their peers generally accurate feedback. Previous research has shown that in science and technology courses, whose rubrics are clearer and more quantitative, peer review leads to greater gains in academic achievement than in humanities courses (Li et al., 2020). The effectiveness of receiving five pieces of feedback corroborates research suggesting that students benefit from multiple sources of feedback because these expose them to different perspectives and improve their learning (Cho & Schunn, 2007; Li et al., 2020). In our survey of students’ perceptions, both middle and high school students mentioned that peer review helped them understand concepts better.

The second reason is that peer assessment is a learning activity that demands responsibility. During the descriptive peer assessment in this study, students felt responsible for understanding the model answers and grading standards, and for correctly interpreting and grading peers’ answers that did not match the grading standards; to do so, they read the textbook carefully and repeatedly. Through this process, they engaged actively with the learning content, which enhanced the learning effect.

The third reason is that, because the peer assessment questions were descriptive, writing a large amount of feedback was itself helpful. Both middle and high school students, and middle school students in particular, found writing descriptive answers helpful. Moreover, their post-test scores improved significantly not only on conceptual explanations but also on more difficult descriptive questions, such as those involving causal relationships. In science, writing a systematic answer requires accurate use of scientific terms and a clear understanding of causal relationships, so younger students find descriptive questions more difficult. Online descriptive peer assessment, however, gives students ample practice in writing descriptive answers by having them compose feedback and grade their peers’ descriptive answers.

What Are the Students’ Perceptions of Peer Review in a Korean Classroom Culture?

The most notable effects of peer assessment were improved motivation for learning, a better understanding of peers, and a stronger sense of community. When students examined their peers’ well-written answers, they reported feeling extrinsically motivated to earn better grades in upcoming evaluations. A high percentage of students also reported feeling a sense of community as they came to understand the differences between their own answers and their peers’. Students who lacked confidence in their learning were comforted and motivated when they discovered other students in similar situations, while high-achieving students realized that many of their peers struggled to understand the concepts and wrote their feedback with sincerity. This process fostered a more mature sense of community. However, some students expressed dissatisfaction with peers who gave disrespectful feedback or whose grading did not match the rubric. Students should therefore receive sufficient training in writing feedback before peer evaluation is conducted.

Compared with high school students, middle school students showed a markedly more positive perception of their peers, rather than of learning strategies or academic thinking. Students participated in the evaluation with a sense of responsibility and wrote their feedback sincerely, and this sincere feedback helped them develop a positive perception of their peers. Peer assessment may therefore be promoted as a way to enhance peer relations, a finding that supports previous studies showing that peer assessment helps cultivate non-cognitive skills such as cooperation, consideration, and communication (Li et al., 2021; Topping, 2017). In contrast, high school students experienced personal growth through peer evaluation, including improvements in academic thinking, learning strategies, and social skills.

Specifically, more high school students than middle school students reported improved metacognition. Previous studies have shown that college students’ metacognition improved through peer assessment (Kim & Ryu, 2013) and through descriptive critique tasks and evaluations (Aleven & Koedinger, 2002; Fiorella & Mayer, 2016). This study similarly suggests that both descriptive evaluation and peer assessment help improve metacognition. Future research could build on this finding to determine how much combining descriptive evaluation with peer assessment improves metacognition among middle and high school students.

Suggestion

This study examined middle and high school students’ involvement in descriptive peer assessment to determine whether it improves academic achievement. Future research should investigate whether peer assessment also benefits the academic performance of students in disadvantaged regions, who may have less interest in academics. Additional research is also warranted to explore whether students maintain a positive perception of peer assessment in schools serving affluent families, where a highly competitive culture prevails. Furthermore, this study conducted five feedback sessions, which proved challenging for younger middle school students because they had difficulty providing diligent and accurate feedback. It is therefore important to determine the minimum number of feedback sessions needed to improve academic achievement and to develop a system using artificial intelligence evaluation tools that can help students revise their feedback. Such work would support a more effective system for using peer assessment.

Limitations

This study has several limitations. First, not all students experienced the non-cognitive effects described in this study. These effects were identified by analyzing students’ questionnaire responses, which reflected diverse individual experiences, and no control group or quantitative instruments were used. Nonetheless, by examining students’ experiences, this study identified various ways in which students can benefit from peer assessment.

Second, it is difficult to generalize this study’s findings to students from other backgrounds because the participants were drawn from specific areas. The effectiveness of peer assessment may vary with students’ backgrounds; for example, it may differ for students from small towns or from different cultural backgrounds.

Third, because students repeatedly gave feedback on the same questions and then answered those same questions on the post-test, the observed learning gains might be regarded as a natural result of repeated exposure rather than of peer assessment itself. Nevertheless, this study provides further evidence regarding the learning effectiveness of online descriptive peer evaluation.

Supplemental Material

Supplemental material, sj-docx-1-sgo-10.1177_21582440241256538 for Effects of Descriptive Peer Assessment on the Learning of Middle and High School Students in South Korea by Mihyun Son and Minsu Ha in SAGE Open

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Ministry of Education of the Republic of Korea and the National Research Foundation of Korea (NRF-2021S1A5A2A03061991).

Data Availability Statement

Data sharing is not applicable to this article, as no datasets were generated or analyzed during the current study.

References

[High school students’ perceptions on descriptive assessment activity experiences by teacher or by peer].

[The current state and prospects of peer assessment].
