Abstract

The global sparse representation method based on compressed sensing fails to capture the local texture and detail structure of an image. To address this, a local dictionary learning method in the wavelet domain is proposed. Classification of the wavelet high-frequency subband sub-blocks and local dictionary learning are implemented using the FCM clustering method and the K-L method, respectively. At the reconstruction end, a compressed sampling matching pursuit algorithm based on iterative update of sparsity is proposed. This adaptive iteration can effectively reconstruct the original image when the sparsity is unknown. Simulation experiments show that, compared with existing reconstruction methods, this approach offers simple computation, strong adaptive sparse representation of the original image, and better reconstructed image quality.

Introduction

Big data image processing techniques are gaining widespread attention. However, the transmission and storage of massive data impose a heavy burden on channels and memory, and the demands on computational efficiency are becoming increasingly stringent. The traditional data acquisition method based on the Nyquist sampling theorem requires the sampling frequency to be at least twice the highest frequency of the signal, which wastes bandwidth. The compressed sensing (CS) technique (Candes & Tao, 2006; Donoho, 2006) proposed by Candes, Tao, Donoho, and others overcomes this limitation by combining the sampling and compression of the signal, thus improving frequency utilization. CS theory states that when the signal to be processed is compressible or can be sparsely represented in a certain transform domain, the signal can be observed through an observation matrix and the original signal can be reconstructed with high probability by solving an optimization problem. As a result, CS is now widely used in medical imaging, remote sensing, and infrared imaging (Huang et al., 2023).
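For orientation, the measurement and reconstruction model described above can be written in conventional CS notation. The symbols here are assumptions chosen for illustration and are not taken from the original equations: $x \in \mathbb{R}^{N}$ is the signal, $\Psi$ the sparsifying basis or dictionary, $s$ the sparse coefficient vector, $\Phi \in \mathbb{R}^{M \times N}$ the observation matrix with $M < N$, and $y$ the observation vector.

$$
x = \Psi s, \qquad y = \Phi x = \Phi \Psi s, \qquad \hat{s} = \arg\min_{s} \|s\|_{0} \quad \text{subject to} \quad y = \Phi \Psi s .
$$

The reconstructed signal is then $\hat{x} = \Psi \hat{s}$. Because the $\ell_0$ problem is combinatorial, it is in practice approximated by greedy pursuit or convex relaxation.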

CS theory has two important components: the reconstruction algorithm and the sparse representation of the signal. Sparse representation is widely used in signal processing and has shown good results. Kan et al. (2019) designed a dictionary based on the Gabor function model with small and constant parameter intervals, and used a sparse Bayesian learning algorithm for sparse decomposition of the image to achieve image denoising. However, the high dimensionality of images with rich details makes the corresponding Gabor dictionary too large, which increases the computational cost. Yu et al. (2020) achieved good results by using the Orthogonal Matching Pursuit (OMP) algorithm to sparsely decompose and denoise images based on the Gabor dictionary. However, there is a mismatch between an analytical dictionary based on a functional model and the actual image, so the sparse decomposition of the image cannot be guaranteed to be accurate.

Another method for designing dictionaries is through training. S. Chen et al. (2021) applied the K-SVD algorithm (Aharon et al., 2006), which trains a dictionary that effectively reflects the structural features of a class of signals. Guo (2018) used K-SVD to train dictionaries that reduce the error between the dictionary atoms and the signals, thereby improving the sparsifying performance of the dictionary. Wang (2019) used a K-SVD-trained dictionary and the OMP algorithm to perform sparse decomposition of the image and effectively remove noise. However, because of the high dimensionality of high-frequency images and limited computational resources, K-SVD is unable to train dictionaries that match the signal dimensions.

In terms of signal reconstruction, the core problem is how to accurately reconstruct the high-dimensional original signal from its low-dimensional observations. Research on reconstruction algorithms is mainly based on greedy algorithms; common examples include orthogonal matching pursuit (OMP) (Jun & Shi-Chang, 2020), regularized orthogonal matching pursuit (ROMP) (Zhao et al., 2021), and compressed sampling matching pursuit (CoSaMP) (Song & Wang, 2020). Among them, OMP performs comparatively poorly. In recent years, another active topic in sparse representation research (Jun & Shi-Chang, 2020; Zhao et al., 2021) is the sparse decomposition of signals in redundant dictionaries (Song & Wang, 2020). This is a new theory of signal representation: the basis functions are replaced by an overcomplete library of redundant functions, called a redundant dictionary, and the elements of the dictionary are called atoms. The dictionary should be chosen to match the structure of the signal being approximated as closely as possible, and its composition is not restricted. Finding the atoms in the redundant dictionary whose linear combination best represents the signal is called sparse approximation or highly nonlinear approximation of the signal. This method can adaptively select a suitable dictionary for sparse representation according to the structure of the image itself, but the computational complexity of the dictionary training process is too high.
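The greedy sparse approximation described above can be illustrated with a minimal OMP sketch. This is an illustrative implementation under stated assumptions, not the code used in the paper: the function name `omp`, the argument names, and the small Hadamard dictionary are all hypothetical, and the sparsity `k` is assumed to be known in advance.

```python
import numpy as np

def omp(A, y, k):
    """Orthogonal matching pursuit: find a k-sparse x with y ~= A @ x.

    A is the (m x n) dictionary or sensing matrix, y the observation
    vector, and k >= 1 the assumed sparsity (hypothetical interface).
    """
    n = A.shape[1]
    y = np.asarray(y, dtype=float)
    residual = y.copy()
    support = []
    coeffs = np.zeros(0)
    for _ in range(k):
        # Select the atom most correlated with the current residual.
        correlations = np.abs(A.T @ residual)
        if support:
            correlations[support] = 0.0  # never reselect an atom
        support.append(int(np.argmax(correlations)))
        # Least-squares fit on the selected atoms, then update the residual.
        coeffs, *_ = np.linalg.lstsq(A[:, support], y, rcond=None)
        residual = y - A[:, support] @ coeffs
    x = np.zeros(n)
    x[support] = coeffs
    return x

# Tiny deterministic example with a 4 x 4 orthonormal (Hadamard) dictionary.
H = np.array([[1, 1, 1, 1],
              [1, -1, 1, -1],
              [1, 1, -1, -1],
              [1, -1, -1, 1]]) / 2.0
x_true = np.array([0.0, 3.0, 0.0, -2.0])
y = H @ x_true
x_hat = omp(H, y, k=2)
```

With an orthonormal dictionary the recovery is exact after `k` iterations; with a redundant dictionary, recovery depends on how correlated the atoms are.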

To address the shortcomings of the above algorithms, we propose a compressed-sensing reconstruction algorithm based on adaptive sparse representation and iterative updating of sparsity. First, a local adaptive sparse representation is constructed in the wavelet domain to achieve a sparser representation of the signal. Then, because the sparsity is unknown at reconstruction, a sparsity iterative update compressed sampling matching pursuit algorithm (SIU-CoSaMP) is built on the traditional CoSaMP algorithm to reconstruct the image. The proposed method was compared with traditional DWT, SWT, and NSCT sparse denoising methods. The results showed that, compared with sparse decomposition of high-frequency images on a global dictionary, using a locally trained dictionary for sparse decomposition reduces computational complexity while maintaining denoising performance.

The rest of this article is organized as follows:

- Section 2 proposes a wavelet domain local dictionary learning model.

- Section 3 provides the process of iteratively updating the CS image reconstruction algorithm based on sparsity.

- Section 4 provides examples of experimental testing and analysis of results.

- The conclusion is briefly drawn in Section 5.

The Wavelet Domain Local Dictionary Learning Algorithm

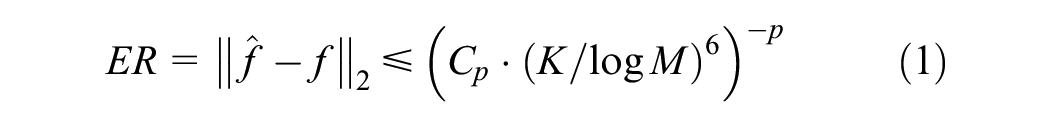

When studying the sparse representation of signals, the sparse representation capability of the transform base

where

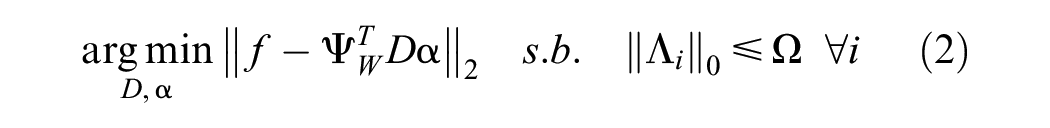

The discrete wavelet transform (DWT) (Edwards, 1991) is the most widely used orthogonal transform, with a small proportion of larger coefficients concentrated at low frequencies, which contain most of the energy of the image, and a sparse distribution of large coefficients in the high-frequency subbands, which are also an important component of accurate image reconstruction. Such an uneven distribution of subband coefficients leads to a very large sparsity threshold of the target vector. To address the shortcomings of the traditional single algorithm, the multiscale properties of wavelets are combined with the flexibility of a locally sparse dictionary. In the wavelet domain, a local learning dictionary is obtained by training a dictionary of high-frequency coefficients. The training dictionary can be viewed as solving the following equations:

where D characterizes the training dictionary,

where

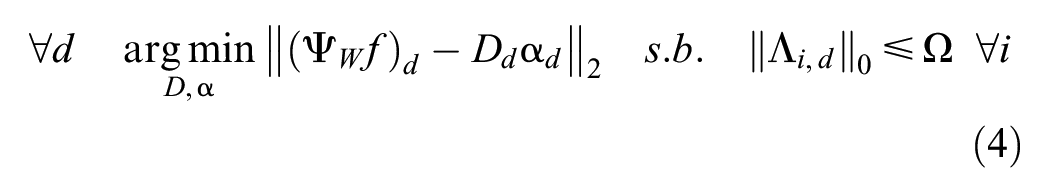

The formula shows that after wavelet decomposition in each of its high frequency direction subband training dictionary, let the subband dictionary is

Let

where

Since the dictionary training process is for wavelet high-frequency direction subband coefficients, the block effect is not obvious, and in solving for

where

The purpose of the CS is to use the observations obtained from the observation matrix to be able to recover the original signal

From the above equation, it can be seen that the training of sub-dictionaries

The wavelet high-frequency sub-bands mainly reflect the detailed information of image edges and textures, which exist in the energy concentration region of the sub-bands, and therefore can be described by the energy of the Brushlet sub-bands. The Brushlet transform (Meyer & Coifman, 1997) has a multi-layer structure similar to the wavelet packet, and can be optimally decomposed in the Fourier domain. The image after Brushlet decomposition contains

After Brushlet decomposition, the coefficients are in complex form, so the real and imaginary parts of the coefficients can be used to calculate the energy characteristics and phase characteristics, which can be used as the characteristics of the sub-block. Let

The information about the phase can be obtained from the phase angle distribution. The phase angle is obtained by solving the inverse tangent of the ratio of the imaginary and real parts, denoted by

Writing the above equation in vector form, that is,

The sub-block

Assuming that all subblocks are decomposed into

where

The above equation can be completed using the K-SVD algorithm (Aharon et al., 2006) to solve the overcomplete dictionary

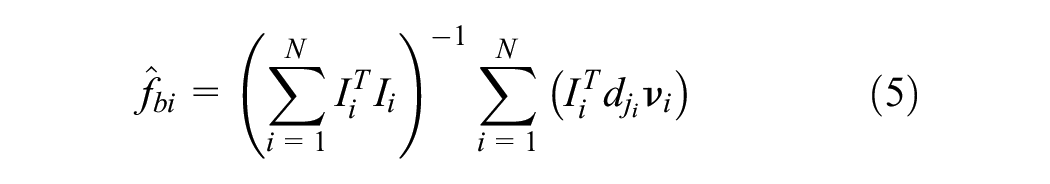

Let

Finally, the dictionary

Figure 1. The basic flow diagram of the sparse representation based on the wavelet multiscale local dictionary.
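The energy and phase features of complex sub-block coefficients described in this section can be sketched as follows. The exact energy formula is not recoverable from the text, so the sum of squared real and imaginary parts used here is an assumption, as are the function name `block_features` and the sample values. `arctan2` replaces a plain inverse tangent of the imaginary-to-real ratio so that a zero real part does not cause a division by zero.

```python
import numpy as np

def block_features(coeffs):
    """Energy and phase-angle features of a block of complex coefficients.

    Assumption: energy is the sum of squared real and imaginary parts.
    """
    coeffs = np.asarray(coeffs, dtype=complex)
    energy = float(np.sum(coeffs.real ** 2 + coeffs.imag ** 2))
    # Phase angle from the imaginary and real parts (quadrant-aware).
    phase = np.arctan2(coeffs.imag, coeffs.real)
    return energy, phase

# Hypothetical coefficients for one sub-block.
sample = [1 + 1j, 0 + 2j]
energy, phase = block_features(sample)
```

A feature vector of this kind, one per sub-block, is what a clustering method such as FCM would take as input.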

The Sparsity-Based Iterative Update CS Image Reconstruction Algorithm

The core of CS theory is sparse signal reconstruction. The CoSaMP algorithm offers high reconstruction accuracy and low computational complexity, and has been widely used. The OMP algorithm (S. Chen et al., 2021) is an iterative greedy algorithm: in each iteration, it selects the column of the matrix whose inner product with the current residual has the largest absolute value, and thereby gradually approximates the original signal. Both OMP and CoSaMP usually require the sparsity to be known a priori. In this paper, we use a local dictionary training method in the wavelet domain, which is an adaptive sparse representation, so it is difficult to obtain the sparsity of each image block accurately. To solve this problem, we improve the compressed sampling matching pursuit algorithm and propose a sparsity iterative update compressed sampling matching pursuit algorithm (SIU-CoSaMP), which estimates the sparsity during reconstruction, provided that the RIP condition required by CS is satisfied. The basic principle is as follows. The initial value of the sparsity is obtained by a sparsity estimation method, and the inner products are then computed. The 2K atoms with the largest absolute inner products are selected and merged with the current support set. Following the backtracking idea, atoms in the candidate set that do not satisfy the conditions are deleted, and the K best-matching atoms are retained to form a new support set. The sparsity estimate is updated during the iterations.
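The principle above can be sketched as a CoSaMP-style loop with a growing sparsity estimate. This is not the authors' SIU-CoSaMP. The identify, merge, least-squares, and prune steps follow the description in the text, but the sparsity update rule (increase K by `step` whenever the residual norm fails to shrink by the factor `shrink`) is an assumption made for illustration, as are the function name, all parameter names, and the initial estimate `k0`.

```python
import numpy as np

def cosamp_adaptive_sketch(A, y, k0=1, step=1, tol=1e-8, shrink=0.999,
                           max_iter=100):
    """CoSaMP-style recovery with an iteratively updated sparsity k.

    Assumed update rule: if an iteration does not reduce the residual
    norm below shrink * (previous norm), k is increased by `step`.
    """
    m, n = A.shape
    y = np.asarray(y, dtype=float)
    k = k0
    x = np.zeros(n)
    residual = y.copy()
    for _ in range(max_iter):
        old_norm = np.linalg.norm(residual)
        if old_norm <= tol:
            break
        # Identify the 2k atoms with the largest absolute inner products.
        proxy = np.abs(A.T @ residual)
        candidates = np.argsort(proxy)[::-1][:min(2 * k, n)]
        # Merge them with the current support set.
        merged = np.union1d(candidates, np.flatnonzero(x))
        # Least-squares estimate on the merged support.
        coeffs, *_ = np.linalg.lstsq(A[:, merged], y, rcond=None)
        # Backtracking: keep only the k largest coefficients.
        keep = np.argsort(np.abs(coeffs))[::-1][:k]
        x_new = np.zeros(n)
        x_new[merged[keep]] = coeffs[keep]
        new_residual = y - A @ x_new
        if np.linalg.norm(new_residual) < shrink * old_norm:
            x, residual = x_new, new_residual   # progress: accept
        else:
            k = min(k + step, m)                # stagnation: enlarge k
    return x, k

# Deterministic example: 4 x 4 orthonormal matrix, true sparsity 2.
H = np.array([[1, 1, 1, 1],
              [1, -1, 1, -1],
              [1, 1, -1, -1],
              [1, -1, -1, 1]]) / 2.0
x_true = np.array([0.0, 3.0, 0.0, -2.0])
y = H @ x_true
x_hat, k_hat = cosamp_adaptive_sketch(H, y, k0=1)
```

Starting from `k0=1`, the loop first recovers the dominant atom, detects that the residual has stopped shrinking, raises the estimate to 2, and then recovers both atoms.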

In this paper, the specific method to achieve the initial estimation of sparsity

The initial inputs are: the observation matrix

Step 1: Initialization: Iterative residuals

Step 2:

Step 3:

Step 4: Assume

Step 5:

Step 6: Update the atomic support collection

where

Step 7: According to Equation 11 least squares estimate

Step 8: According to the backtracking idea, the index value corresponding to the largest absolute value of the first

Step 9: Calculate the residual redundancy and compare it with the redundancy of the previous iteration, if

Step 10: If the reconfiguration signal satisfies

The observation matrix

Experimental Results and Analysis

Evaluation Metrics

Two evaluation metrics, peak signal-to-noise ratio (PSNR) (Fan et al., 2021) and reconstruction error probability (Rep) (Yang et al., 2021), are chosen to measure the performance of the proposed algorithm. The metrics are defined as follows:

The PSNR value reflects the closeness of the recovered image

Rep is the relative error; the smaller the value, the better the recovered image.
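As a rough illustration of the two metrics, the sketch below computes PSNR in its standard form for 8-bit images and a relative error. The exact Rep formula is not recoverable from the text, so the ratio of Frobenius norms used here is an assumption. The function names and sample arrays are hypothetical.

```python
import numpy as np

def psnr(original, recovered, peak=255.0):
    """Peak signal-to-noise ratio in dB (peak=255 assumes 8-bit images)."""
    original = np.asarray(original, dtype=float)
    recovered = np.asarray(recovered, dtype=float)
    mse = np.mean((original - recovered) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(peak ** 2 / mse))

def relative_error(original, recovered):
    """Assumed form of Rep: ||x - x_hat||_F / ||x||_F."""
    original = np.asarray(original, dtype=float)
    recovered = np.asarray(recovered, dtype=float)
    return float(np.linalg.norm(original - recovered)
                 / np.linalg.norm(original))

# Hypothetical 2 x 2 "images" differing by one gray level in two pixels.
img = np.array([[100, 100], [100, 100]])
rec = np.array([[101, 99], [100, 100]])
psnr_db = psnr(img, rec)
rep = relative_error(img, rec)
```

Higher PSNR and lower relative error both indicate a reconstruction closer to the original.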

Analysis of Simulation Results

Sparsity Estimation Experiments

The experiments were run in Matlab 2020a on a computer with a 3.10 GHz CPU and 16 GB of memory. Figure 2 shows the estimated test results obtained from the iterative update of the sparsity,

Figure 2. Sparsity iterative update estimates.

As can be seen from the curves in the figure, when the estimated value of K is 49 after

Image Reconstruction Experiments

To test the effectiveness of the proposed wavelet-domain local dictionary learning method in compressed sensing (CS), we compared it with conventional CS methods, which use a global sparse representation. For the conventional methods, we separately selected the discrete wavelet transform (DWT) (Ouyang et al., 2019), the stationary wavelet transform (SWT) (Liu et al., 2020), and the non-subsampled contourlet transform (NSCT) (Dai & Xu, 2021) as sparse representation methods. We chose the Barbara, Bike, Hat, and Lena images of all dimensions as the test images.

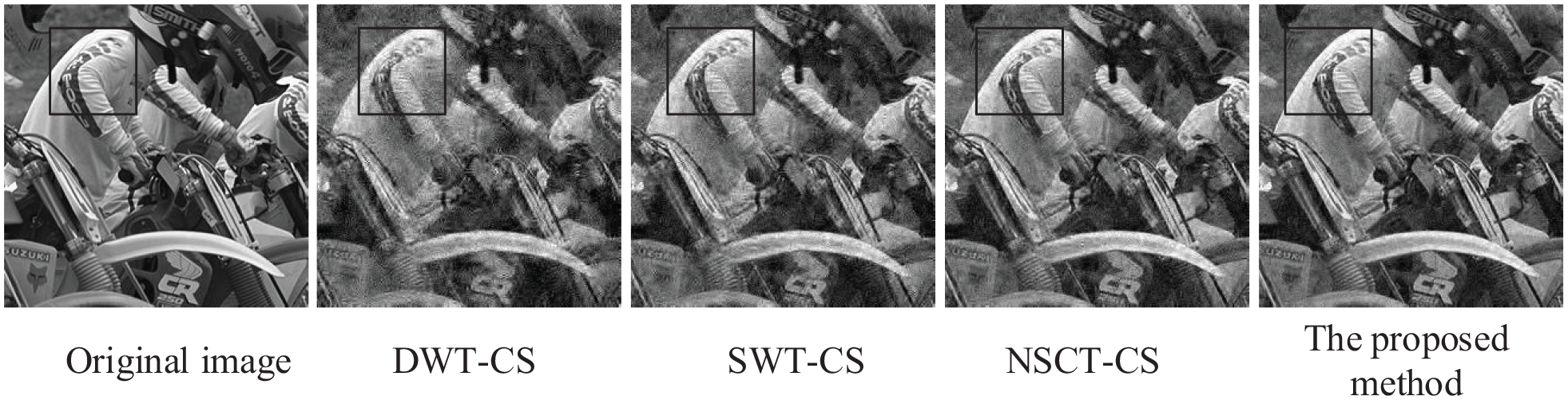

Since this experiment tests the effectiveness of the sparse representation method, the conventional CS methods use the same reconstruction method as ours, namely SIU-CoSaMP. The reconstructed images are assessed visually (Figures 3 and 4) and objectively, using the PSNR and Rep values reported in Table 1.

Figure 3. Comparison of the reconstructed image quality of traditional CS methods (DWT-CS, SWT-CS, NSCT-CS) and the proposed algorithm for the “Lena” image.

Figure 4. Comparison of the reconstructed image quality of traditional CS methods (DWT-CS, SWT-CS, NSCT-CS) and the proposed algorithm for the “Bike” image.

Table 1. Comparison of PSNR Values (in dB) and Rep Values of the Four Reconstruction Methods Under CS Theory.

Figures 3 and 4 show a comparison between the traditional CS and the algorithm for reconstructed image when

According to the objective evaluation indexes in Table 1, the images reconstructed by the proposed algorithm have a PSNR approximately 4 dB higher and a Rep approximately 0.0033 lower, on average, than those of the traditional CS methods. This indicates that the objective quality of the images reconstructed by the proposed algorithm is better than that of the traditional CS algorithms. The algorithm is particularly effective in accurately recovering richly detailed images, such as the Barbara image.

As seen in Table 1, the proposed method achieves the best performance among the four reconstruction methods compared. Specifically, it achieves the highest PSNR values and the lowest Rep values across all images tested. For the image “Barbara,” the proposed method has a PSNR of 34.52 dB, which is the highest among all methods. Similarly, for the images “Bike,” “Hat,” and “Lena,” the proposed method achieves PSNR values of 33.94 dB, 33.91 dB, and 33.45 dB, respectively, all of which are the highest. Additionally, the proposed method consistently shows the lowest Rep values, indicating the smallest reconstruction error. This demonstrates the superior performance of the proposed method in terms of both image quality and reconstruction accuracy.

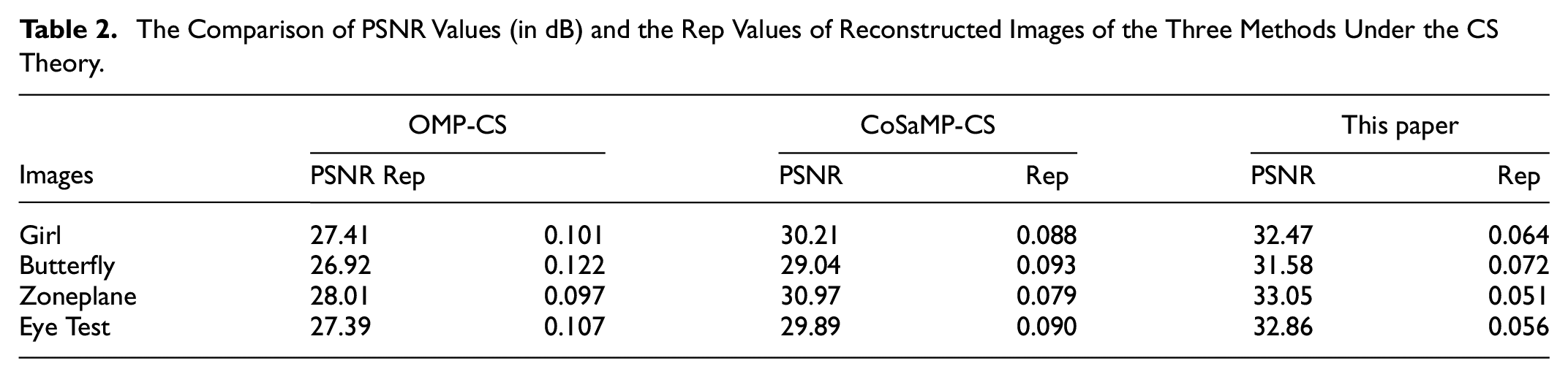

To verify the effectiveness of the sparsity iterative update algorithm used in the reconstruction stage, the Girl, Butterfly, Zoneplane, and Eye Test images (256 × 256) are selected as test images. The proposed algorithm is compared with CS reconstruction algorithms based on OMP (Ouyang et al., 2019) and CoSaMP (Liu et al., 2020). Since this experiment tests the sparsity iterative update algorithm, all other components are kept consistent with the method in this paper, and all methods adopt the wavelet domain local dictionary. The visual effects of the reconstructed images (Figures 5 and 6) and the objective evaluation indexes, PSNR and Rep (Table 2), are used to analyze the advantages and disadvantages of the proposed algorithm.

Figure 5. Reconstruction of the “Girl” image by three methods under CS theory: (a) original image, (b) OMP reconstructed image, (c) CoSaMP reconstructed image, and (d) image reconstructed by the proposed method.

Figure 6. Reconstruction of the “Butterfly” image by three methods under CS theory: (a) original image, (b) OMP reconstructed image, (c) CoSaMP reconstructed image, and (d) image reconstructed by the proposed method.

Table 2. Comparison of PSNR Values (in dB) and Rep Values of the Images Reconstructed by the Three Methods Under CS Theory.

As seen in Figures 5 and 6, the traditional CS reconstruction algorithms based on OMP and CoSaMP cannot accurately predict the sparsity of the image, so errors arise during the iterations: the contours and edges of the reconstructed image show “granular” noise, and the detail recovery is unsatisfactory. The algorithm in this paper achieves adaptive estimation of sparsity by continually updating the sparsity during the iterations, so that the reconstructed image is close to the original and information such as edges and textures is well preserved.

As shown in Table 2, the average PSNR of the reconstructed images of this algorithm is 5.05 dB higher and the average Rep is 0.0535 lower than the corresponding metrics of the OMP-CS method, and the average PSNR is 2.46 dB higher and the average Rep 0.0343 lower than the corresponding metrics of the CoSaMP-CS method, indicating that the objective evaluation index of the reconstructed images of this algorithm is satisfactory.

Conclusion

This paper presents a sparse representation method in the context of Compressed Sensing theory. By combining the multiscale characteristics of wavelets and the flexibility of local sparse dictionaries, we use FCM clustering and K-L methods to classify wavelet high-frequency subband blocks and enable local dictionary learning. We construct a local dictionary learning method in the wavelet domain, which achieves more sparse representation by adapting to the image’s characteristics.

To address the uncertainty of sparsity (Alahari et al., 2022), we propose the SIU-CoSaMP algorithm, an improvement of the traditional CoSaMP algorithm. This algorithm obtains more accurate sparsity through iterative updates while ensuring complete signal reconstruction, thus improving the quality of the reconstructed image.

Subjective and objective evaluation indexes show that the proposed algorithm outperforms existing ones in Compressed Sensing theory. As the algorithm does not require any prior knowledge of sparsity and is practical, it can be applied in complex scene imaging fields (Ke et al., 2022) such as remote sensing imaging, medical imaging, and super-resolution reconstruction in the future.

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Data Availability Statement

Data sharing not applicable to this article as no datasets were generated or analyzed during the current study.