Abstract

The purpose of this article is to describe and analyze the psychometric properties of the Learning Orientation Questionnaire (LOQ), which have not previously been published. Psychometric validation involves accumulating appropriate empirical evidence to confirm that a scale measures the intended construct and to justify the intended uses of its scores. The LOQ is based on intentional learning theory, a comprehensive, holistic theory of learning. Drawing on the expertise of the LOQ’s developer, an educational researcher, and a psychometrician, this article presents evidence of the LOQ’s reliability and validity according to published best practices for scale development and validation. The LOQ is a reliable and valid instrument for determining where learners fall along the learning orientation continuum. Education researchers can use this information to help learners move upward on the continuum, improving their inclination to learn, higher-order thinking, and lifelong learning.

Keywords

Advances in neuroscience have revealed the extraordinary complexity and fundamental effects of emotions on learning (Amran et al., 2019; LeBlanc et al., 2015; Tyng et al., 2017). For example, learners who believe intelligence can be acquired are more effective learners (Mangels et al., 2006), and fostering intrinsic and extrinsic motivation cultivates learning (Halamish et al., 2019). Many contemporary learning theories and models that rest primarily on traditional cognitive aspects are missing vital information, such as the dominant interaction of emotions, intentions, and social activities in learning. Too often, traditional models focus on cognitive learning factors and subordinate other, more influential factors. As a result, primarily cognitive learning models may overlook fundamental individual learning needs and foster uniform solutions that are not easily adapted to individual circumstances. As far back as the 1970s and 1980s, scholars suggested that learning theories are incomplete or unrealistic if they do not take a comprehensive view that integrates cognitive, conative (intent or effort to do something), and affective aspects (Snow & Farr, 1987; Weiner, 1972). Healthcare professional education often lacks this comprehensive view of learners, a deficiency that may limit strategies to promote learning in practical settings.

Healthcare professional education has long neglected how emotions affect cognitive processes, including memory, attention, and decision-making (LeBlanc et al., 2015), which has been referred to as the “hidden curriculum” in healthcare learning (O’Callaghan, 2013). This neglect is especially concerning because healthcare professional education and practice are permeated with emotional experiences, both positive and negative, that likely influence learning (LeBlanc et al., 2015; McConnell et al., 2016; McConnell & Eva, 2012), transfer of knowledge in clinical situations (McConnell & Eva, 2012), higher cognitive function (Tyng et al., 2017), and even empathy (Piumatti et al., 2019). As educators prepare the next generation of healthcare professionals, who must learn complex knowledge and skills and apply them in novel situations, it is imperative to analyze the role emotions play in learning (LeBlanc et al., 2015; McConnell et al., 2016; McConnell & Eva, 2012; O’Callaghan, 2013). Doing so will require educators to adopt a learning theory that entails a holistic view of learners.

Despite the use of many holistic learning theories across multiple disciplines, only a very small set of constructs and assessments, with scant reliability and validity evidence, has been generated. Well-researched and validated tools are needed to explore learning and to properly measure learner outcomes based on a holistic learning theory. Therefore, this article presents one such broad, holistic learning theory, Intentional Learning Theory, along with its associated learning orientation construct and model. The article then describes an instrument to measure intentional learning and presents research demonstrating the instrument’s psychometric properties, as well as validity evidence supporting its use.

Background

In their seminal article about intentional learning, Bereiter and Scardamalia (1989) use the term intentional learning to refer to using strategic thinking “processes that have learning as a goal rather than an incidental outcome” (p. 363). They describe successful intentional learning as the expenditure of effort in pursuit of personal cognitive goals, over and above the requirements of tasks, when the tasks could be accomplished by far less expenditure of effort. They suggest intentional learning results from persistent constructive problem solving toward innovation and goal attainment (Bereiter & Scardamalia, 1989).

The American Accounting Association describes a main tenet of intentional learning as learning how to learn, which is “a process of acquiring, understanding, and using a variety of strategies to improve one’s ability to attain and apply knowledge, a process which results from, leads to, and enhances a questioning spirit and a lifelong desire to learn” (Francis et al., 1995, p. 6). The intentional learner, then, is an active and engaged learner rather than a passive one, with a self-directed purpose: they approach learning with intention, choosing to learn as well as how and what to learn. Intentional learning involves five attributes of learning: questioning, organizing, connecting, reflecting, and adapting (Francis et al., 1995).

Intentional Learning Theory

Intentional Learning Theory (ILT) was developed in the 1990s, evolving from research on metacognition and cognitive monitoring (Flavell, 1979) and on computer-supported intentional learning environments (Scardamalia et al., 1989). This work is further supported by Chee (2014), who stated that intentional learning corresponds well with digital game-based learning. Flavell (1979) described metacognitive knowledge and experiences, as well as goals and actions, as higher-order cognitive processes. Bereiter and Scardamalia (1989) declared that learning requires an understanding of intentions, that is, the meaning of the learner’s behavior and the learner’s understanding of what they are doing. ILT encompasses both lines of work while extending them to higher-order psychological processes, integrating conative, affective, cognitive, and social aspects into a holistic theory of learning, as advocated by Snow and Farr (1987).

A fundamental premise of ILT is that conative and affective aspects of learning are considered first and foremost, with metacognitive and cognitive aspects considered secondary and situational. Neuroscience research during the development of this theory supported this premise, explaining how emotions influence, guide, and may override our thinking and cognitive processes (Goleman, 1995; LeDoux, 1996). Current research also supports this premise, demonstrating strong predictive links between self-efficacy, emotions, metacognition, and academic performance (Hayat et al., 2020).

Martinez (1999b) developed the ILT and its learning orientation construct and model. Based on these theoretical foundations, the Learning Orientation Questionnaire (LOQ) was developed to measure the construct of intentional learning so that researchers could investigate the predominant influences of learning orientation on successful learning and performance. Martinez and Bunderson (1998) introduced ILT and its conceptual construct to foster successful learning. ILT considers the conative, affective, cognitive, and social factors that account for individual learning differences, as well as how conative and affective factors influence learning success (Martinez, 1999a, 1999b). From its inception, ILT has been a holistic and adaptive approach that learners can use to develop their learning skills and achieve personal goals. A key aspect of this theory is motivation toward achievement, wherein learners credit their success to variables in their control, such as choice of task based on difficulty and effort, leading to higher levels of self-efficacy (Martinez & Bunderson, 1998). Thus, ILT is not solely concerned with improving learning of particular content but rather with improving learning ability through the acquisition of effective learning skills, such as setting goals, solving problems, evaluating progress, reflecting, and prioritizing (Martinez, 2023a). These learning skills are relevant and applicable to professional settings, where successful intentional learners become lifelong learners who engage in “…critical thinking, decision making, and innovation processes” (Graham, 2020, para. 8). ILT is theoretically represented in the Learning Orientation Construct, which identifies different learning orientations based on dominant underlying factors that significantly affect learning and serve as key learning-difference variables.

Learning Orientation Construct

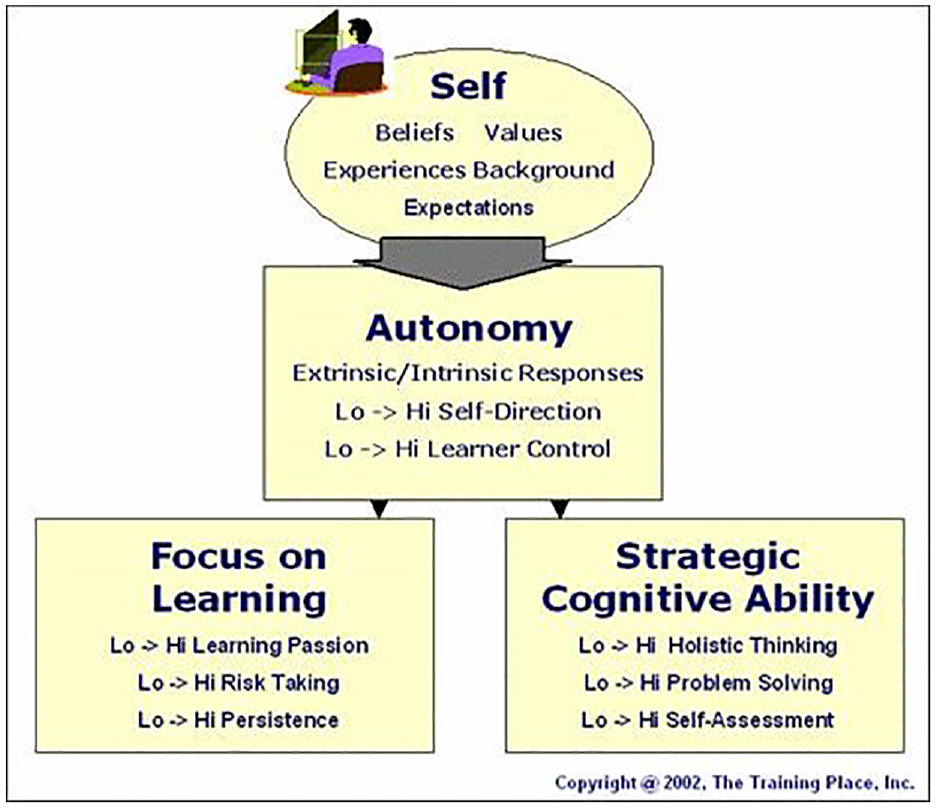

The Learning Orientation Construct (LOC) identifies an individual’s learning orientation from three factors: (1) the individual’s self-motivation (influenced by feelings and attitude), (2) commitment to learning and strategic effort, and (3) learning independence or autonomy. These three factors, or learning attributes, are vital to successful intentional learning (Martinez, 2020). Together, these interrelated attributes constitute the individual’s learning orientation, which can be used to personalize learning environments and strategies to improve learning and performance proficiency (Martinez, 2023a). The LOC categorizes the individual’s disposition to learning, or how the individual will “…take control, expend strategic effort, manage resources, and take risks to learn” (Martinez, 2005, p. 7). The LOC provides a means to assess learning ability and readiness to learn and serves as an underlying foundation for successful intentional learning (see Figure 1).

Figure 1. Conceptual model for successful intentional learning.

This viewpoint is more robust than primarily cognitive theories (e.g., learning styles and strategies) because it highlights how learners’ emotions and intentions influence cognitive and social processes and considers how that influence is developed, guided, and managed. It views the learner as a whole person, from biological, psychological, and emotional perspectives (Martinez, 2020). Learning orientations represent how individuals (grouped by differing beliefs, emotions, intentions, and abilities) plan and set goals, commit and expend effort, and then experience learning to attain goals (Martinez, 2023a).

The LOC consists of four learning orientation profiles, transforming, performing, conforming, and resistant learners, which are assigned from overall LOQ scores (Martinez, 2005, 2023b; Mollman & Bondmass, 2020). Transforming learners exhibit greater effort, control, and emotions about learning and prefer flexible, challenging learning environments. Performing learners are self-directed and achieve necessary goals in semi-structured environments; however, they do not extend effort beyond what is necessary to achieve the goal. Conforming learners, by contrast, need a structured environment with safe learning goals to feel comfortable; they exhibit low levels of control and effort and are extrinsically motivated to learn. If learners experience a negative learning environment, they may exhibit resistance to learning. Resistant learners lack confidence and efficacy in their learning ability (Martinez, 2005, 2023b; Mollman & Bondmass, 2020). These profiles describe a learner’s proclivity to learn or perform and distinguish common learner-difference attributes. Learning orientations are an effective way to differentiate learners according to the higher-order psychological factors that powerfully affect learning and performance and that shape how learners develop, manage, and sometimes override their cognitive learning preferences, strategies, and skills. These learner-difference profiles differentiate learners, guide the analysis and design of instruction and environments, and help tailor solutions that improve learning ability and the learning experience (Martinez, 1999, 2023b).

Learning Orientation Questionnaire

The Learning Orientation Questionnaire (LOQ) is a multidimensional measure of learning orientation designed to have broad applicability across different subject matter, curricula, learning goals, skills, roles, and instructional situations. The LOQ is founded on the ILT, the three-factor LOC described previously, and learning orientation research. Refined through a series of analyses and revisions, the LOQ isolates and measures the three complex factors of the LOC that influence successful learning: (1) Conative/Affective Learning Focus, (2) Committed Strategic Planning and Learning Effort, and (3) Learning Independence/Autonomy, which are the three subscales of the instrument.

In its current form, the LOQ is a 25-item self-report questionnaire that takes approximately 15 minutes to complete and has been tested with over 15,000 learners at many secondary schools, universities, and corporations (Martinez, 2005). The items are 25 statements reflecting different aspects of learning orientation. Respondents indicate their level of endorsement (agreement) with each statement on a 7-point Likert-type rating scale, ranging from Not at All True of Me (point value of 1) to Very True of Me (point value of 7). After taking the LOQ, learners receive four calculated scores: one for each of the three factors or subscales (the average rating on the items belonging to that subscale) and an overall learning orientation score (the average rating across all 25 items). All four scores range from 1 to 7. Each subscale score indicates the learner’s standing on the respective factor. The overall score identifies the learner’s learning orientation, provides a learner profile, and offers explanations of individual differences in intentional learning; because each learning orientation profile corresponds to a specific range of overall scores, it classifies learners as transforming, performing, conforming, or resistant. Learning orientations are generalizable to most learning situations and are not specific to any domain or environment; in other words, the items are “agnostic” to specific topics, instructors, or courses. The resulting scores describe a general disposition to learn and indicate individuals’ general inclination toward learning.
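As a minimal sketch of the scoring arithmetic just described, the Python below reverse-scores negatively worded items on the 1-to-7 scale and averages item ratings into subscale scores and an overall score. The item-to-subscale mapping and the set of reversed items in the example are hypothetical placeholders, not the published LOQ scoring key, and the profile cut-off ranges are omitted because they are not reported here.

```python
from statistics import mean

SCALE_MIN, SCALE_MAX = 1, 7  # 7-point Likert-type rating scale


def reverse_score(rating: int) -> int:
    """Reverse-score a negatively worded item: 1 <-> 7, 2 <-> 6, 3 <-> 5, 4 stays 4."""
    return SCALE_MIN + SCALE_MAX - rating


def score_loq(responses: dict[str, int],
              subscales: dict[str, list[str]],
              reversed_items: set[str]) -> dict[str, float]:
    """Return the mean rating for each subscale plus an 'overall' mean across all items.

    responses:      item id -> raw rating (1..7)
    subscales:      subscale name -> item ids (HYPOTHETICAL mapping, not the LOQ key)
    reversed_items: ids of negatively worded items to reverse-score
    """
    for item, rating in responses.items():
        if not SCALE_MIN <= rating <= SCALE_MAX:
            raise ValueError(f"{item}: rating {rating} outside {SCALE_MIN}-{SCALE_MAX}")
    scored = {item: reverse_score(r) if item in reversed_items else r
              for item, r in responses.items()}
    scores = {name: mean(scored[i] for i in items) for name, items in subscales.items()}
    scores["overall"] = mean(scored.values())
    return scores


# Illustrative four-item example; treating LOQ7 and LOQ25 as reversed is an assumption
scores = score_loq(
    {"LOQ2": 6, "LOQ6": 7, "LOQ7": 2, "LOQ25": 3},
    {"subscale_A": ["LOQ2", "LOQ6"], "subscale_B": ["LOQ7", "LOQ25"]},
    {"LOQ7", "LOQ25"},
)
print(scores)  # subscale_A 6.5, subscale_B 5.5, overall 6
```

Averaging rather than summing keeps every score on the same 1-to-7 metric as the rating scale, regardless of how many items a subscale contains.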

A fundamental premise of the ILT is that a learner’s position on the learning orientation continuum, from transforming to resistant, is situational rather than fixed, a premise supported by a recent study of nursing students (Mollman & Bondmass, 2020). A learner may move downward or upward along the continuum depending on the learning situation, influenced by negative or positive responses, conditions, resources, results, expectations, and experiences. Moving upward into higher learning orientations requires stronger intentions, feelings, and beliefs about learning as well as greater effort and learning autonomy. Therefore, in addition to their scores, learners receive strategies for successful learning and areas for improvement on each factor/subscale, based on their learning orientation profile, along with general learning resources.

In summary, ILT accounts for the psychological and cognitive factors that influence how learners approach, enjoy, experience, and achieve learning. ILT is a broad, holistic learning theory that reflects the complex, variable, and diverse approaches to learning that affect learners’ success. The LOQ aligns with the ILT and the LOC as a practical measure of an individual’s inclination to learn. The learning orientations, as calculated by the LOQ, are distinct enough to differentiate between learners’ characteristics and profiles, thus offering customizable, individualized solutions to support successful learning. A valid and reliable scale to assess learning orientations has the potential to support learners and advance educational research; however, the results of psychometric analyses of the LOQ are dispersed across several unpublished sources and have not been published in a peer-reviewed journal. Therefore, this article compiles and evaluates the evidence generated by psychometric analysis of the LOQ, according to the rubrics and best practices for scale development and validation delineated by Boateng et al. (2018).

Methods

The primary developer of the ILT and LOQ, an educational researcher, and a psychometrician collaborated to accumulate and assess available evidence generated by past development, administration, and analysis of the LOQ. These sources include early published works (Martinez, 1999a, 1999b; Martinez & Bunderson, 1998), an internal report (Martinez & Bunderson, 1999), and the publicly available LOQ Manual (Martinez, 2005).

The framework of Boateng et al. (2018) delineates best practices for scale development and validation; we used it to assess the available evidence for the LOQ. Readers are referred to the 2018 article for details of the model; a brief summary of the validation process is provided here. Where appropriate, we specify the approach or analytic methodology used to substantiate each step.

The Boateng validation framework involves nine steps spread over three phases. Phase One is item development, comprising two steps: (1) identification of a domain and item generation and (2) assessment of whether the items measure the intended domain (content validity). Identification of a domain (Step 1) presupposes that a scale is a manifestation of a particular domain of interest or subject of inquiry. Step 1 is itself an involved process that develops a conceptual definition of the domain, construct, or phenomenon under examination, describes its characteristics, and delineates its boundaries. Domains are often identified within an established framework or theoretical model; in that case, the domain definition is best rooted in a thorough literature review and linked to the related field of study. Once the domain is delineated, items intended to assess the construct of interest are generated. Items may be written to elicit a variety of responses; however, selected-response formats (e.g., dichotomously scored multiple-choice items or polytomous Likert-type rating scales) are most common because they are easy to score and summarize statistically. Items generated in Step 1 are drafted and then reviewed in Step 2 (content validation) by qualified subject matter experts (SMEs) in the domain of interest. The SMEs qualitatively review the items for both (a) their relevance to and representativeness across the domain of interest and (b) the appropriate matching of the items to the anticipated level of the target population on the domain of interest.

Phase Two of the Boateng framework consists of the next four steps: (3) pre-testing questions to ensure the questions and responses are meaningful, (4) ensuring adequate data from the right people through survey administration and sample size, (5) item reduction, and (6) factor extraction to discover the number of latent constructs that fit the data. Step 3, pre-testing (also called pilot-testing, or “piloting”) questions, serves two purposes. The first is to assess how well the items on the instrument represent the construct being assessed. The second is to evaluate the degree to which responses to the items contribute to coherent measurement of the construct. Pre-testing is typically conducted on a small sample of the target population, generating initial data with which researchers assess the psychometric properties of the instrument and its alignment with theorized aspects of the domain of interest. Step 4 addresses the approach to data collection, considering both adequate sample size and representation of the target population. Without the former, stable statistics cannot be estimated; without the latter, inferences cannot validly be generalized to the population. Factors influencing adequate sample size include (a) the anticipated degree of variance, in particular, that of the population on the construct, (b) the number of survey items, and (c) the number of points on the rating scale. Different sample sizes may also be indicated depending on the phase of development (beta-testing, pre-testing questions, item reduction, factor extraction, etc.).

Step 5 (item reduction) is a process of scale refinement informed by statistical analysis of how items function in the field, using data from operational administration. In this step, objective statistical criteria are specified and then applied to item performance. Items not meeting the criteria are removed from the instrument or flagged for revision. The goal of item reduction is parsimonious measurement, ensuring that only well-functioning items, which are internally consistent, aligned to the domain, and contributing to the overall measurement, are retained on the instrument. Classical test theory (CTT) and item response theory (IRT) are the prevailing analytical frameworks for Step 5. The level of analysis in Step 5 can be the item or the response category (for instance, to investigate the functioning of rating scales). In the results that follow, researchers used the IRT framework to analyze LOQ items. Specifically, the one-parameter IRT model, or Rasch model (Wright & Stone, 1979), was fitted to the ordinal LOQ data.
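The source states only that a one-parameter (Rasch) model was fitted to the ordinal LOQ ratings. For polytomous rating items, one common Rasch formulation is Andrich’s rating scale model; assuming that form, the sketch below computes the probability of each rating category from a person measure θ, an item measure δ, and thresholds τ shared across items, all in logits. The threshold values are invented for illustration and are not LOQ estimates. The example also shows why a higher item measure corresponds to lower endorsement.

```python
import math


def rsm_category_probs(theta: float, delta: float, taus: list[float]) -> list[float]:
    """Andrich rating scale model category probabilities.

    P(X = k) is proportional to exp(sum_{j=1..k} (theta - delta - tau_j)),
    with the empty sum (k = 0) equal to 0. With m thresholds there are m + 1
    categories, indexed 0..m (ratings 1..7 map to k = 0..6).
    """
    logits = [0.0]
    for tau in taus:
        logits.append(logits[-1] + (theta - delta - tau))
    top = max(logits)  # subtract the maximum before exponentiating, for stability
    weights = [math.exp(v - top) for v in logits]
    total = sum(weights)
    return [w / total for w in weights]


def expected_rating(theta: float, delta: float, taus: list[float], lowest: int = 1) -> float:
    """Model-expected rating on the original scale (lowest category labelled `lowest`)."""
    probs = rsm_category_probs(theta, delta, taus)
    return sum((lowest + k) * p for k, p in enumerate(probs))


# Hypothetical, symmetric thresholds for a 7-category scale (illustration only)
TAUS = [-3.0, -2.0, -1.0, 1.0, 2.0, 3.0]
print(round(expected_rating(theta=0.0, delta=0.0, taus=TAUS), 3))  # 4.0: person at item location
print(expected_rating(0.0, 1.0, TAUS) < expected_rating(0.0, 0.0, TAUS))  # True: harder item
```

Because only θ − δ enters the model, raising an item’s measure by one logit has the same effect as lowering the person’s measure by one logit, which is what lets items and persons share a single logit scale.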

The final step of Phase Two, Step 6, is the extraction of factors via factor analysis. The purposes of factor analysis are (a) to understand the latent (unobserved) dimensions present in a set of items and (b) to quantify the internal consistency among the items (i.e., inter-item correlations). This is done by extracting latent factors that represent the shared variance in responses across multiple items and then evaluating these extracted factors as tributary aspects (i.e., subdomains) of the construct under study. The results of factor analysis are interpreted by balancing (a) the number of factors retained as meaningfully relevant to the domain against (b) the proportion of variance explained. Typically, each additional retained factor explains a diminishing share of the variance. Factor analysis also relates to Step 5 as a method of item reduction: items loading beneath a certain threshold on retained factors may be removed from the instrument as insufficiently relevant to the domain. Approaches to factor analysis include exploratory factor analysis (EFA) and principal components analysis (PCA), among others. Step 6 is substantiated in the next section with results from a PCA of the LOQ items, administered to a large international sample of learners (n = 6,178), using Promax rotation with Kaiser normalization.

Phase Three of Boateng’s scale development model comprises the final three steps: evaluating (7) dimensionality, (8) reliability, and (9) validity. Tests of dimensionality (Step 7) are intended to determine whether a factor structure or model extracted from analysis of previous data (for instance, an EFA from Step 6) persists, either in the same sample at a later point in time or in a different sample of subjects. These tests can be conducted with several methods, including confirmatory factor analysis (CFA), model fit indices (e.g., the chi-square statistic), tests of measurement invariance, and examination of correlations among subdomain scores. Neither CFA results nor holistic fit measures were found in the available LOQ documentation. However, one benefit of Rasch model analysis is that it provides estimates of model fit, based on a chi-squared statistic, for individual items. Thus, evidence for Step 7 is provided by evaluating item fit indices generated by Rasch analysis. The mean-square fit statistic is also called the relative chi-squared or the normed chi-squared. Mean-square infit and mean-square outfit are convenient measures of fit discrepancy based on summaries of squared residuals (Wright & Stone, 1979). These statistics have an expectation of 1.0 and range from 0 to infinity. Mean-squares greater than 1.0 indicate misfit to the Rasch model (i.e., the data are less predictable than the model expects); mean-squares less than 1.0 indicate overfit to the Rasch model, that is, the data are more predictable than the model expects. Evidence for dimensionality is also provided by inter-domain score correlations.

Reliability (Step 8) pertains to the precision of scores or, conversely, the absence of random measurement error in the scores. Reliability is also conceptualized as the extent to which scores are reproducible over repeated administrations. Reliability is quantified in many ways, most frequently by internal consistency indices (e.g., Cronbach’s alpha) or indices based on repeated measures (e.g., test-retest reliability). Both types of indices are provided to satisfy Step 8 in the Results.

Validity (Step 9) is the degree to which the scale actually measures what it purports to measure. Test validity is also the extent to which inferences, conclusions, and decisions based on test scores are appropriate and meaningful. The validity of an instrument can be examined in numerous ways; one qualitative component of validity is content validity, described in Step 2. Step 9 of the Boateng process, however, addresses quantitative indicators of validity, namely, criterion and construct validity. These aspects of validity address how well scores from the instrument correspond to scores from instruments intended to measure similar or related constructs. These quantitative measures typically take the form of correlations with a hypothesized direction (positive or negative) and magnitude (weak, moderate, or strong). Criterion validity examines the relationship between scores on a given scale and performance on another related measure (typically referred to as the criterion). Criterion measures may be obtained at or near the same time as the focal measure (concurrent validity) or at a future time (predictive validity). Step 9 is demonstrated in the Results section, focusing on criterion validity. Pearson product-moment correlations were computed in one study (N = 1,277) between the LOQ overall score and related measures: holistic thinking and problem-solving (both self-reported on a 7-point scale), avid book reading, and self-improvement, all hypothesized to correlate significantly and positively with the LOQ.
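To make the Step 7 and Step 8 statistics concrete, the sketch below computes, for one item, the outfit mean-square (the unweighted mean of squared standardized residuals) and the infit mean-square (squared residuals summed and divided by the summed model variances), plus Cronbach’s alpha from a set of item columns. It assumes the model-expected ratings and their variances have already been obtained from a fitted Rasch model; all numbers in the example are made up, not LOQ data.

```python
from statistics import variance


def mean_square_fit(observed, expected, model_var):
    """Infit and outfit mean-squares for one item across persons.

    observed:  observed ratings x_n
    expected:  model-expected ratings E_n (from a fitted Rasch model)
    model_var: model variances W_n of each rating
    Both statistics have an expectation of 1.0 when the data fit the model.
    """
    if not len(observed) == len(expected) == len(model_var):
        raise ValueError("inputs must have equal length")
    sq_resid = [(x - e) ** 2 for x, e in zip(observed, expected)]
    outfit = sum(r / w for r, w in zip(sq_resid, model_var)) / len(sq_resid)
    infit = sum(sq_resid) / sum(model_var)  # information-weighted
    return infit, outfit


def cronbach_alpha(items):
    """Cronbach's alpha; `items` is a list of item columns, each listing every person's score."""
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    totals = [sum(person) for person in zip(*items)]  # each person's total score
    item_var_sum = sum(variance(col) for col in items)
    return k / (k - 1) * (1 - item_var_sum / variance(totals))


# Residuals of exactly one model standard deviation give mean-squares of 1.0
print(mean_square_fit([3, 1], [2, 2], [1.0, 1.0]))  # (1.0, 1.0)
# Two perfectly consistent items give alpha = 1 (up to floating-point rounding)
print(round(cronbach_alpha([[1, 2, 3, 4], [1, 2, 3, 4]]), 6))  # 1.0
```

Outfit weights every response equally, so it is sensitive to a few surprising responses from persons far from the item’s location; infit down-weights those responses and is more sensitive to misfit near the item’s location.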

Finally, validation is a continuous, nonlinear process designed to accumulate evidence that supports the intended uses of the scale. Understanding best practices for scale development is key to proper measurement of latent constructs in health, social, and behavioral science (Boateng et al., 2018). The evaluation of the LOQ’s psychometric evidence followed these prescribed best practices for scale development and validation.

Results

In this section, the documentation of the scale development procedures used to validate the LOQ will be comprehensively consolidated and summarized, according to the steps outlined by Boateng et al. (2018). While scale development is not a linear process, the documented scale development procedures and psychometric evidence will be presented in order of the three phases and nine steps to allow for illustration of how the LOQ was developed and validated, demonstrating the best practices for developing and validating scales.

Phase One: Item Development, Steps 1 to 2

To begin developing the theory, the researchers who later developed the ILT and LOQ reviewed educational research in the psychological and developmental literature, which highlighted the absence of a broad, holistic learning theory and of a measure of such a theory (Martinez, 1999b). The literature review also identified the primary intentional learning domain, with a construct featuring four subdomains. The literature review presented in the Background section of this paper summarizes the literature that informed the definition of this domain of interest.

Using the LOC as a foundation, two item writers initially assisted in creating a 75-item pool, including at least 12 items for each of four theoretical subordinate aspects: (1) control, (2) beliefs, (3) planning, strategy, and performance efforts, and (4) intentions. A panel of five SME reviewers assisted in reviewing the items and constructing the initial test, revising and eliminating items as appropriate. For inclusion on the questionnaire, items had to meet the following criteria: (1) the content of the item was consistent with the intentional LOC, (2) the item served as a measure of intentional learning performance rather than an outcome of intentional learning performance, and (3) the item was judged a good indicator of intentional learning performance (Martinez & Bunderson, 1999). Thirty-two items were retained after this review process and formed the version of the LOQ that proceeded to field testing. The items comprised 32 statements describing various learning attitudes or dispositions within the intentional learning construct. For each statement, the student indicated their level of agreement on a seven-point Likert-type scale from Not at All True of Me (1) to Very True of Me (7) (Martinez & Bunderson, 1998). Approximately one-third of the items were negatively worded (e.g., LOQ7, “I avoid learning situations if I can.”), requiring reverse scoring (the lowest rating category receives the highest score) to align with the LOC (Martinez & Bunderson, 1998). The initial test design hypothesized four factors, reflecting the conative, affective, cognitive, and social aspects of learning, which related to the four dimensions previously mentioned: (1) control, (2) beliefs, (3) planning, strategy, and performance efforts, and (4) intentions.

Phase Two: Scale Development, Steps 3 to 6

Steps 3 (pre-testing questions) and 4 (survey administration) are addressed here in tandem, as an initial sample must be identified for field testing. Pre-testing began with the first version of the LOQ (then known as the Intentional Learning Orientation Questionnaire), which was initially tested with over 125 participants.

After field testing on this initial sample, the instrument was revised several times on the basis of data from its administration to successively larger samples. For example, Version II of the LOQ was tested with a “larger and more diverse sample” after the items were refined based on the analysis of the first version (Martinez & Bunderson, 1999, p. 27). After further refinement, LOQ Version III was administered to an even larger and more diverse sample (n = 539), providing further evidence of validity and reliability. The compiled evidence documents a PCA with Promax rotation performed on this sample. The results demonstrated a multidimensional construct with three factors: conative and affective aspects, committed learning efforts, and learning autonomy (Martinez & Bunderson, 1998). Information from this administration also led to a slight revision of the seven-point Likert-type scale, changing the response categories to range from Uncharacteristic of Me (1) to Very Characteristic of Me (7). These changes were applied to the 25 items retained on Version III of the LOQ.

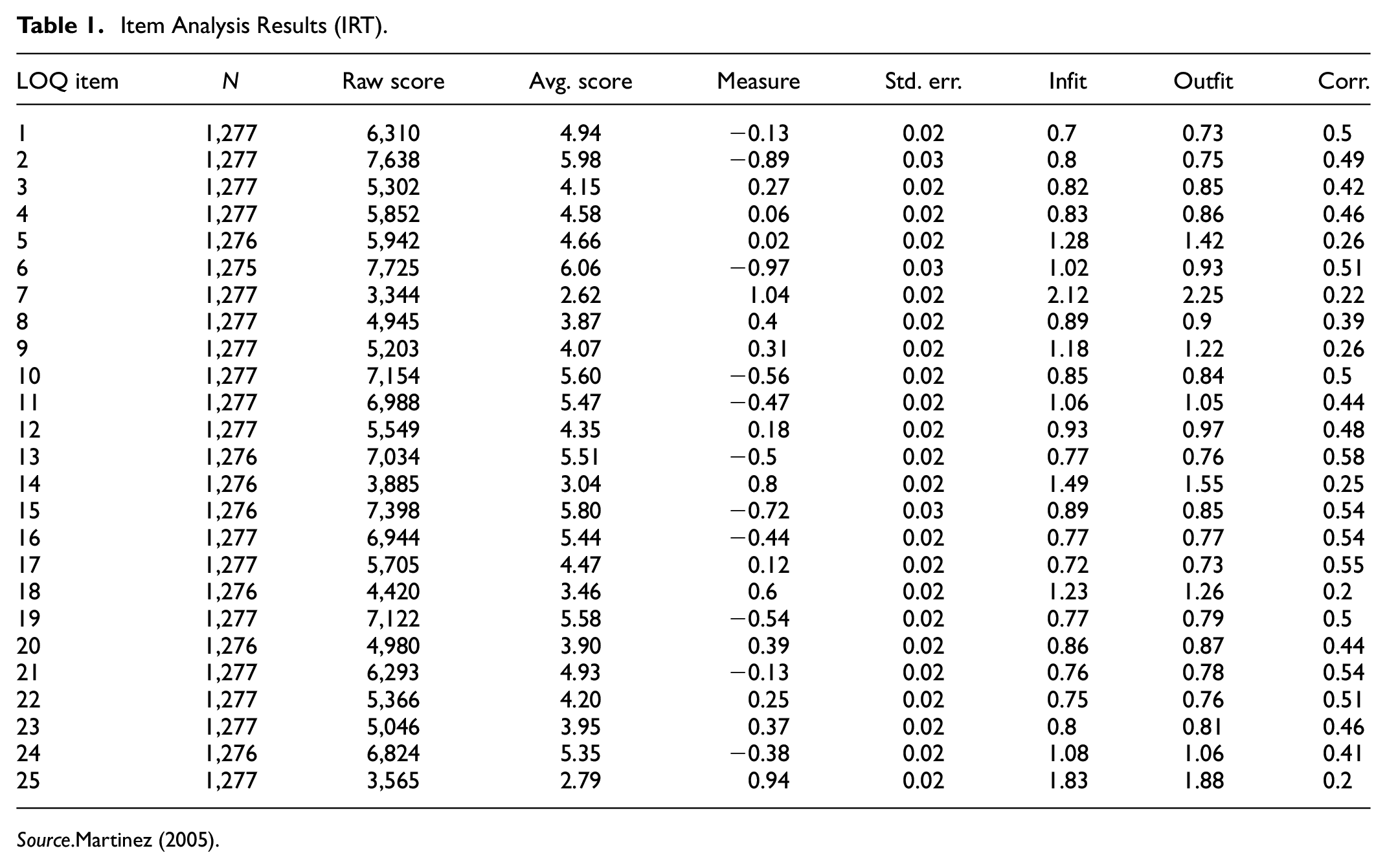

Step 5 of the Boateng validation process, item reduction analysis, uses statistical item analysis to further refine the instrument: to identify any items that do not contribute to the overall measurement (i.e., are not internally consistent) and to exclude them from the instrument or recommend them for revision. The Rasch model was used to analyze the responses of 1,277 students to the 25 LOQ items (Martinez, 2005). Table 1 contains the results of this item analysis, including item measures, discrimination, and other model-related statistics. The Rasch model reports item measures on a logit (log-odds) scale, with the mean item measure set at 0. Item measures reflect the inverse of the degree to which students endorsed an item, that is, gave it high ratings: higher measures indicate “difficult” items with low endorsability, while lower measures indicate “easy” items with high endorsability. The items covered a range of difficulty across the construct of interest, with the “easiest” (most endorsable) items being LOQ6 (“I enjoy using learning as a vital resource in accomplishing my professional or personal goals.”) and LOQ2 (“I seek new learning opportunities because I enjoy learning.”), and the most difficult items being LOQ7 (“I avoid learning situations if I can”) and LOQ25 (“When I learn about new topics, it is not an enjoyable or comfortable process”). Table 1 also reports discrimination indices (point-measure correlations), indicating for each LOQ item the correlation between the item response and the student’s total score on the questionnaire. All item-total correlations are positive, indicating that each item is appropriately aligned with the overall construct. Overall, the items displayed desirable measurement properties.
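As described above, the discrimination index is the correlation between responses to an item and learners’ total scores. A minimal sketch follows, assuming a persons-by-items matrix of already reverse-scored ratings. The optional corrected variant, which drops the item from the total before correlating, is a common refinement that the source does not mention, and Rasch software may instead correlate item responses with person measures rather than raw totals. The ratings are made up.

```python
from math import sqrt


def pearson(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / sqrt(sxx * syy)


def item_total_correlations(ratings, corrected=False):
    """Correlate each item column with the total score.

    ratings: list of persons, each a list of item scores (reverse-scoring already applied).
    corrected=True removes the item itself from the total before correlating.
    """
    totals = [sum(person) for person in ratings]
    results = []
    for j in range(len(ratings[0])):
        item = [person[j] for person in ratings]
        total = [t - i for t, i in zip(totals, item)] if corrected else totals
        results.append(pearson(item, total))
    return results


# Made-up ratings for four learners on three items
demo = [[6, 7, 5], [4, 5, 4], [2, 3, 3], [5, 6, 6]]
print([round(r, 2) for r in item_total_correlations(demo)])
print([round(r, 2) for r in item_total_correlations(demo, corrected=True)])
```

A negative value in either list would flag an item that runs against the rest of the scale, often a negatively worded item that was not reverse-scored.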

Table 1. Item Analysis Results (IRT).

Source. Martinez (2005).

Step 6 of the Boateng validation model, Extraction of Factors, relies primarily on evidence from factor analysis. A principal components analysis of all 25 items was conducted on a large international sample of learners (n = 6,178), using Promax rotation with Kaiser normalization. The analysis aimed to determine how many factors (construct scales) account for the variance in the data (i.e., the number of factors to extract) and how strongly the individual items load on, or correlate with, the extracted factors (Martinez, 2005). Factors were retained on the basis of three criteria: an eigenvalue greater than 1 (the Kaiser criterion), visual inspection of the scree plot (Figure 2), and the proportion of variance accounted for by each factor. The analysis revealed three factors that together accounted for 49% of the total sample variance. The first factor was characterized as Affective/Conative Aspects, the second as Learning Autonomy, and the third as Committed Strategic Planning and Learning Effort. Table 2 contains the loadings of all 25 items on the three retained factors. Fourteen items loaded highest on the first factor, six on the second, and four on the third (Martinez, 2005).
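A minimal NumPy sketch can illustrate the Kaiser criterion and the proportion-of-variance figure. It runs on simulated data with a known three-factor structure, not on the LOQ sample. It covers only the eigenvalue step; the scree inspection and the Promax rotation used by Martinez (2005) are not reproduced. The function name `kaiser_retention` is hypothetical.

```python
import numpy as np

def kaiser_retention(data):
    """Eigenvalue summary of the item correlation matrix for a PCA.

    data: respondents x items array of item scores.
    Returns eigenvalues (descending), the number of components retained by
    the Kaiser criterion (eigenvalue > 1), and the cumulative proportion of variance.
    """
    corr = np.corrcoef(data, rowvar=False)        # items x items correlation matrix
    eigvals = np.linalg.eigvalsh(corr)[::-1]      # symmetric matrix: real eigenvalues, sorted descending
    n_kaiser = int(np.sum(eigvals > 1.0))
    cum_var = np.cumsum(eigvals) / eigvals.sum()  # trace of a correlation matrix = number of items
    return eigvals, n_kaiser, cum_var

# Simulated data (not LOQ data): 3 latent traits, 4 items each, plus noise.
rng = np.random.default_rng(0)
latent = rng.normal(size=(2000, 3))
loadings = np.kron(np.eye(3), np.ones((1, 4)))    # 3 x 12 block structure
items = latent @ loadings + rng.normal(size=(2000, 12))

eigvals, n_kaiser, cum_var = kaiser_retention(items)
print(n_kaiser, round(float(cum_var[n_kaiser - 1]), 2))
```

In this simulated design, each block of four items correlates at about 0.5. That gives three eigenvalues near 2.5 and nine near 0.5, so three components pass the Kaiser criterion and explain roughly 62% of the variance.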

Figure 2. Scree plot from principal components analysis.

Table 2. Factor Loadings.

Source. Martinez (2005).

Scale independence was examined using a component correlation matrix. The low off-diagonal elements in Table 3 further support the conclusion of scale independence. These results provide evidence of construct validity, as each scale represents a distinct aspect of the theoretical construct of intentional learning (Martinez, 2005).
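The "low off-diagonal elements" check can be expressed as finding the largest absolute correlation between different components. The sketch below uses a hypothetical matrix with illustrative values, not the values in Table 3. The helper name `max_offdiag_abs` is likewise hypothetical.

```python
import numpy as np

def max_offdiag_abs(corr):
    """Largest absolute off-diagonal entry of a square correlation matrix."""
    corr = np.asarray(corr, dtype=float)
    mask = ~np.eye(corr.shape[0], dtype=bool)  # True everywhere except the diagonal
    return float(np.abs(corr[mask]).max())

# Hypothetical 3 x 3 component correlation matrix (illustrative values, not Table 3).
example = np.array([
    [1.00, 0.25, 0.10],
    [0.25, 1.00, -0.15],
    [0.10, -0.15, 1.00],
])
print(max_offdiag_abs(example))  # 0.25
```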

Table 3. Component Correlation Matrix.

Source. Martinez (2005). N = 6,178.

Note. Extraction Method: Principal Component Analysis. Rotation Method: Promax with Kaiser Normalization.

Phase Three: Scale Evaluation, Steps 7 to 9

Step 7 of the framework, Tests of Dimensionality, draws on the Infit and Outfit indices reported in Table 1 for all 25 LOQ items, based on the responses of 1,277 students (Martinez, 2005). The criterion for acceptance varies across researchers, ranging from less than 2 (Ullman, 2001) to less than 5 (Schumacker & Lomax, 2004). Wright and Linacre (1994) discuss acceptable values for fit indices and cite 0.6 to 1.4 as “reasonable” for items involving rating scales. Wright and Linacre (1994) also note that, in simulation studies, parameter-level mean-square fit values between 0.5 and 1.5 are “productive for measurement,” values between 1.5 and 2.0 are “unproductive for construction of measurement, but not degrading,” and values greater than 2.0 are “distort[ing] or degrad[ing] the measurement system.” By this latter guidance, only two items, LOQ7 and LOQ25, fall outside the productive range, and only one of them, LOQ7, actively distorts the measurement. Thus, only LOQ7 showed variation beyond what is tolerable for coherent measurement.
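The two Rasch fit statistics and the Wright and Linacre (1994) bands quoted above can be sketched as follows. The sketch assumes that each person's model-expected rating and model variance for an item have already been estimated from a fitted Rasch model, which is not shown. The function names are hypothetical.

```python
import numpy as np

def item_fit(observed, expected, variance):
    """Rasch infit and outfit mean-squares for one item.

    observed, expected, variance: per-person observed rating, model-expected
    rating, and model variance of the rating for this item (assumed inputs).
    """
    x, e, w = (np.asarray(a, dtype=float) for a in (observed, expected, variance))
    sq_resid = (x - e) ** 2
    outfit = float(np.mean(sq_resid / w))    # unweighted mean of squared standardized residuals
    infit = float(sq_resid.sum() / w.sum())  # information-weighted mean-square
    return infit, outfit

def wright_linacre_band(mnsq):
    """Classify a mean-square value using the bands quoted from Wright and Linacre (1994)."""
    if mnsq > 2.0:
        return "distorting or degrading"
    if mnsq > 1.5:
        return "unproductive, but not degrading"
    if mnsq >= 0.5:
        return "productive"
    return "below the productive range"

# Toy example: residuals exactly as large as the model expects give mean-squares of 1.0.
print(item_fit([1, 0, 1], [0.5, 0.5, 0.5], [0.25, 0.25, 0.25]))
```

Outfit weights every response equally, so it is sensitive to a few unexpected responses from persons far from the item's location. Infit down-weights those responses, which is why the two indices are usually reported together, as in Table 1.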

Further evidence of dimensionality comes from the bivariate correlations of the LOQ subscales with each other and with the overall score. Table 4 contains these correlations for two separate large-sample studies (Martinez, 2005). The correlations between total and section scores are all positive, moderate to strong, and statistically significant at the α = .01 level. They lend additional evidence for a single, unitary construct and for the appropriateness of combining section and item scores additively into a total LOQ score.

Table 4. Bivariate Correlations Between Total and Section Scores of the LOQ.

Source. Martinez (2005).

The LOQ developers considered how an overall learning orientation score could be calculated and interpreted to determine the learner’s learning orientation profile. Evidence supporting the use of the overall score (computed as a raw sum of item scores) included the high, positive part-whole correlations, of similar magnitude, between the three subscale scores and the total score shown in Table 4 (Martinez, 2005). The developers also judged the instrument to have satisfactory utility, as it was economical to administer, score, and analyze for large samples (Martinez & Bunderson, 1999).
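The raw-sum scoring and part-whole correlations described above can be sketched briefly. The item-to-subscale mapping is hypothetical, and the demonstration data are simulated 1 to 7 ratings, not LOQ responses. The function name is also hypothetical.

```python
import numpy as np

def part_whole_correlations(item_scores, subscales):
    """Correlate each subscale score with the raw-sum total score.

    item_scores: respondents x items array.
    subscales: dict mapping a subscale name to the column indices of its items.
    """
    scores = np.asarray(item_scores, dtype=float)
    total = scores.sum(axis=1)                 # overall score = raw sum of item scores
    result = {}
    for name, cols in subscales.items():
        section = scores[:, cols].sum(axis=1)  # section score = raw sum of its items
        result[name] = float(np.corrcoef(section, total)[0, 1])
    return result

rng = np.random.default_rng(1)
demo = rng.integers(1, 8, size=(200, 6))             # simulated 1-7 ratings, not LOQ data
mapping = {"A": [0, 1, 2], "B": [3, 4], "C": [5]}    # hypothetical item-to-subscale mapping
print(part_whole_correlations(demo, mapping))
```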

Step 8 of the validity framework involves tests of reliability, that is, the consistency of the instrument’s measurements. Boateng et al. (2018) conceptualize reliability as both (a) internal consistency, as measured by Cronbach’s alpha, and (b) the consistency of repeated measurements, assessed with test-retest reliability (i.e., how consistent learner scores are across time). A reliability of 0.90 is the minimum recommended threshold for high-stakes measurement, but most educational and social science researchers rarely attain it. A reliability of 0.70 has often been accepted as satisfactory for most scales. Table 5 contains evidence for both forms of reliability from several separate administrations of the LOQ (Martinez, 2005).
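Cronbach's alpha compares the sum of the item variances with the variance of the total score: α = k/(k − 1) · (1 − Σσ²ᵢ / σ²ₜ) for k items. The sketch below applies that standard formula using sample variances. The function name and the toy data are illustrative only.

```python
import numpy as np

def cronbach_alpha(item_scores):
    """Cronbach's alpha for a respondents x items array of scores."""
    scores = np.asarray(item_scores, dtype=float)
    k = scores.shape[1]                          # number of items
    item_vars = scores.var(axis=0, ddof=1)       # sample variance of each item
    total_var = scores.sum(axis=1).var(ddof=1)   # sample variance of the total score
    return float(k / (k - 1) * (1.0 - item_vars.sum() / total_var))

# Toy data: 3 respondents, 2 items (illustrative only).
alpha = cronbach_alpha([[1, 1], [2, 3], [3, 2]])
print(round(alpha, 3), alpha >= 0.70, alpha >= 0.90)
```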

Table 5. Reliability.

Source. Martinez (2005).

As shown in Table 5, the internal consistency reliability of the LOQ total score has consistently exceeded 0.8 (Martinez, 2005). The test-retest samples are smaller because of the logistical challenges of obtaining repeated measures from the same students over time. Nevertheless, the test-retest reliability indices also exceeded 0.8. They were computed as Pearson correlations between students’ LOQ scores from two independent administrations separated by several weeks.

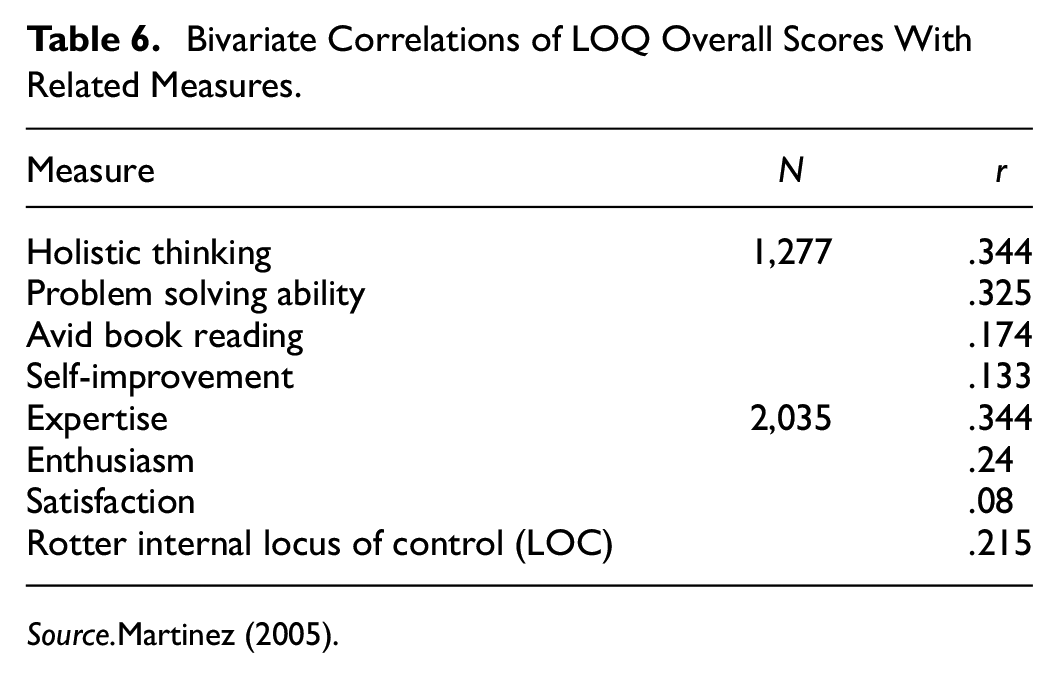

Statistical tests of validity constitute Step 9 of the Boateng validity framework. In one study (N = 1,277), bivariate (Pearson product-moment) correlations were computed between the overall LOQ score and related study variables. These variables were holistic thinking and problem-solving (both self-reported on a 7-point scale), avid book reading, and self-improvement, all hypothesized to be significantly and positively associated with LOQ scores. Table 6 contains these correlations. All showed statistically significant (p < .01), positive relationships with intentional learning (Martinez, 2005).
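Whether a correlation is significant at p < .01 depends on both r and the sample size. The sketch below uses the Fisher z transformation, a standard large-sample approximation. It is not necessarily the exact test used by Martinez (2005), which is more likely the t test on n − 2 degrees of freedom. The function name is hypothetical.

```python
import math

def correlation_p_value(r, n):
    """Approximate two-sided p-value for H0: rho = 0, via the Fisher z transformation."""
    z = math.atanh(r) * math.sqrt(n - 3)    # Fisher z scaled by its standard error
    return math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))

# With N = 1,277, as in the study described above:
for r in (0.05, 0.10, 0.30):
    print(r, correlation_p_value(r, 1277) < 0.01)
```

At this sample size, correlations of about 0.07 or larger reach p < .01. The magnitude of each coefficient in Table 6 therefore carries information beyond its significance.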

Table 6. Bivariate Correlations of LOQ Overall Scores With Related Measures.

Source. Martinez (2005).

In another study (N = 2,035) of corporate workers with differing levels of computer, job, and business expertise, the LOQ was tested for correlation with expertise, enthusiasm, satisfaction, and Rotter’s (1966) Internal Locus of Control on Learning. Again, all of these were hypothesized to be positively associated with the LOQ. Table 6 also contains these correlations, which were all moderate, positive, and significant (p < .01) (Martinez, 2005).

Discussion

The purpose of this article was to present a psychometric analysis of the extensive testing of the LOQ. Various sources of validity evidence were gathered and organized within the established validity model of Boateng et al. (2018). The LOQ was found to satisfy best practices in scale development and validation by exhibiting a high degree of validity for the measurement of its intended construct, Intentional Learning. The instrument is firmly grounded in the theory of intentional learning and was composed and reviewed by qualified subject matter experts (SMEs). Under Phase Two, the LOQ was pilot tested with various groups. The resulting data were subjected to statistical data reduction (principal components analysis) and to item quality studies evaluating endorseability and discrimination. Finally, Phase Three yielded evidence that the LOQ measures a single, unitary construct, with a high degree of reliability (on two indices) and positive, significant correlations with similar and related constructs.

Limitations

The primary limitation of the current study is its necessary reliance on secondary evidence (Martinez, 1999a, 1999b, 2005; Martinez & Bunderson, 1998, 1999) and the lack of access to the original data sets needed to replicate the published analyses and results. The authors of the present work relied upon and distilled publicly available sources containing the relevant evidence. At times, the desired detail, especially for the early stages of the tool’s development (Steps 1–4), was difficult, if not impossible, to ascertain. In contrast, the quantitative evidence for the later stages (Steps 5–9) was robust. The authors therefore had to judge what to extract and include in this article, balancing evidential strength with verbal economy. As a result, the validity argument grows stronger as one progresses through the nine steps.

Future Directions of the LOQ

Just as the development of the LOQ is an ongoing process, so is the collection of validation evidence. Among other reasons, this is because the LOQ constructs are theoretical abstractions embedded in frameworks that continue to be shaped by developments in the neurosciences. Nonetheless, the findings presented here substantiate the use of the LOQ. As a valid measure, the LOQ allows education researchers to determine where learners fall along the learning orientation continuum (transforming, performing, conforming, or resistant learners). Based on learners’ orientations, strategies can be developed to support them and improve their inclination to learn. One example is customizing the learning environment to the learner’s learning orientation, which can have a significant, positive influence on learner satisfaction and efficacy (Martinez, 1999a, 1999b). Future research should examine the impact of these changes on learner performance. Additionally, research should continue to test the LOQ’s psychometric properties. It should also examine how intentional learning affects health professionals’ learning in practical settings filled with emotional experiences. According to Mollman and Candela (2018), the outcomes of intentional learning are higher-order thinking and life-long learning. These are vital skills for health professionals, who must apply their knowledge to distinct patient situations.

Well-developed and validated instruments are essential to understanding and accurately measuring constructs in health, social, and behavioral science (Boateng et al., 2018), but scale development is a complicated, multifaceted, and nonlinear process. Validity is not a binary state (valid/invalid) but a matter of degree, spread across many different facets. The best measurement scales accumulate a body of evidence, comprising both qualitative and quantitative elements, that supports and substantiates the computation and use of scores. A validity argument is essentially a value proposition for the instrument as a faithful “mirror” of the target construct. It establishes whether the scale is a trustworthy indicator of a real, quantifiable attribute in the world.

Given the widespread use of psychological measures (scales, inventories, surveys, questionnaires, examinations, etc.), the scant evidence put forward publicly to justify the use of the resulting scores is discouraging. This is especially so given the consequential impact these scores can have, in some cases, on learners’ futures. One contributing factor to this dearth of validity evidence may be that the work can be daunting, time- and resource-intensive, and spread across multiple avenues; hence, many investigators do not know where to begin. “Resource constraints, including time, money, and participant attention and patience are very real, and must be acknowledged as limits to rigorous scale development” (Boateng et al., 2018, p. 15).

The Boateng validity framework, thankfully, provides a helpful roadmap for scale developers. It outlines the phases and steps of scale development and introduces the conceptual and methodological underpinnings of each step. This allows researchers to carry out the steps they pursue in a principled and defensible manner. The authors hope that this work will serve as a useful model for researchers and developers of similar instruments and will further promote the publication of validity evidence for those tools.

Of course, any instrument will have gaps in its validity evidence, because not all steps will always be followed or rigorously documented. Boateng et al. (2018) themselves are agnostic as to which steps are most important, acknowledging that “the necessity of the nine steps…will vary from study to study. While studies focusing on developing scales de novo may use all nine steps, others (e.g., those that set out to validate existing scales), may end up using only the last four steps… difficult decisions about which steps to approach less rigorously can only be made by each scale developer, based on the purpose of the research, the proposed end-users of the scale, and resources available” (p. 15).

Conclusions

The ILT provides a broad, holistic view of learners, acknowledging the impact of emotions and achievement motivation, which cognitive theories often lack. This comprehensive view incorporates cognitive, conative, affective, and social aspects of learning. It therefore suits the education of health professionals, whose learning and practice occur in complex and often novel situations filled with emotional experiences, both positive and negative. The ILT has a well-defined construct and conceptual model, as well as a psychometrically valid instrument to measure that construct, a combination often lacking in the literature. This article demonstrates that the LOQ accurately and consistently measures learning orientation as proposed in the ILT, which supports its use in research in health professional education.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Data Availability Statement

Data sharing not applicable to this article as no datasets were generated or analyzed during the current study.