Abstract

There are concerns that soft skills assessment has been conceptualized within the Western context and may not reflect the indigenous African worldview. Without relevant soft skills assessment contextualized in the African cultural cosmology, there is a limitation in assessing African conceptions of abilities. The purpose of this study was to identify relevant soft skills for secondary/high school students and develop a scale relevant for assessing soft skills in Botswana. An exploratory sequential mixed methods design was used to explore the perceptions of 23 education stakeholders on relevant soft skills for secondary students through in-depth interviews. The qualitative findings were used to develop a 63-item Soft Skills Assessment Scale which was administered to a sample of 306 senior secondary school students selected from three educational regions in Botswana. Exploratory factor analysis was conducted to assess the latent factor structure of the scale. Through principal component analysis, four factors were extracted with underlying 38 items. However, a confirmatory factor analysis confirmed a four-factor model (Perseverance, Civic virtue, Teamwork, and Communication) based on a final 14-item scale with Cronbach’s alphas above .60 and Cronbach’s alpha of .82 for the entire scale. Convergent and discriminant validities of the scale were within an acceptable range. The key contribution of this study was the development of a psychometrically valid and reliable Soft Skills Assessment Scale (SSAS) in the context of Botswana.

Plain Language Summary

There are concerns that soft skills assessment has been conceptualized within the Western context and may not reflect the indigenous African worldview. To address this gap, we set out to develop a Soft Skills Assessment Scale (SSAS) for senior secondary school students in Botswana. We used a mixed-methods approach: we collected qualitative data through interviews and reviewed the literature to develop the SSAS, and then conducted a survey to determine the degree to which the SSAS measures what it claims to measure (i.e., soft skills). The findings showed that the SSAS is a reliable and valid tool for use in the secondary school setting in Botswana.

Introduction

Educators in the 21st century have grown increasingly aware of the importance of soft skills crucial for effective academic, social, and moral functioning. Sadly, however, traditional instruction and assessment do not always provide appropriate tools for developing and measuring these skills (Assan & Nalutaaya, 2018; Free, 2017; Khaerudin et al., 2020). One of the concerns is that soft skill assessment has been conceptualized within the western context and may not reflect the indigenous African worldview. The lack of locally validated soft skill scales may contribute to poor development and detection of other cognitive abilities. There is a need for localized soft skill assessment scales since “people of different cultures differ not only in ability and process that the researcher test for, but also in those abilities the researcher test with” (Grigorenko et al., 2001, p. 368). Dependence on international assessment scales or scales developed in the Minority World has raised concern about how intelligence and other abilities are assessed within African context (Oppong, 2017, 2023b; Oppong et al., 2022, 2023; Oppong & Strader, 2022; Scheidecker et al., 2022). According to Oppong (2020), the Western model of cognitive abilities is valuable but limited in its capacity to account for the various conceptualizations of valued abilities in different human societies.

Aworanti (2012) highlighted that, in today’s knowledge, skills, and innovation (KSI) economy, hard skills account for only 15% of a person’s productivity and soft skills for 85%. When students graduate from school and attend interviews, they are expected to be on time, be professional, communicate and express themselves comprehensively, think critically, and be quick to respond to questions. All these imply a good level of soft skills, which, regrettably, are not assessed in schools. Student transcripts reflect grades on hard skills but nothing on soft skills. “If soft skills have been identified as veritable abilities needed for increasing productivity, then a deliberate effort is required to include its assessment in the public examining” (Obinna et al., 2014, p. 73). Assessment of soft skills provides information and awareness to students about the knowledge, skills, and other attributes they possess after completing coursework and academic programs.

Soft skills represent a knowledge gap and reflect a demand-and-supply crisis: there is a mismatch between the skills employers expect graduates to possess and the educational outcomes, including the types of skills with which they graduate (Mahasneh, 2016; World Economic Forum, 2020). Employers are generally not satisfied with the quality of fresh graduates who have achieved academic mastery but possess unsatisfactory levels of soft skills proficiency; hence the prevalent youth unemployment (Balcar et al., 2018; Mitana et al., 2018; World Economic Forum, 2020). For instance, reports from Zambia, Rwanda, and Kenya indicate that secondary education does not adequately prepare students with the soft skills required for a globalized economy (Busaka et al., 2022; Rosekrans & Hwang, 2021).

International and national donors have begun to fund interventions that provide soft skills and workforce training in Africa (Rosekrans & Hwang, 2021; Schultz, 2022). These programs are put in place to increase youth’s soft employability skills. However, these programs need to be designed and implemented in alignment with an evidence-based approach within the local context. In Africa, implementers, policy makers, and other stakeholders may sometimes lack access to contextually relevant evidence from Africa, or African evidence is invisible to them due to the prevailing citation and publication biases (Draper et al., 2022; Gøtzsche, 2022; I. Samuel, 2023; Scheidecker et al., 2023). Employers have complained about poor quality graduates who have been taught, learned, and been tested on knowledge that is irrelevant to their setting and circumstances (Rosekrans & Hwang, 2021; Schultz, 2022). Inadequate skills offered by the education system are a serious barrier that precipitates unemployment in Africa (Assan & Nalutaaya, 2018; Rosekrans & Hwang, 2021; Schultz, 2022). Relatedly, instruments used to measure soft skills are known to originate from developed Western countries and to have limited validity. Muchera and Finch (2010) posited that some scales’ lack of construct equivalence is possibly due to the differing nature of beliefs, norms, and values across human societies.

To a larger extent, Africa has failed and is still failing to produce knowledge, concepts, models, and contextually relevant theories to solve African problems (Oppong, 2013, 2023a, 2023b). A similar construct to Western intelligence is wisdom (Oppong, 2020). It is the ability to think critically and examine thoughts and actions underlying human life to solve problems effectively (Gyekye, 2003; J. Samuel, 2008). According to Oppong (2020, p. 8), “Wisdom is the ability to combine both cognitive competencies and socio-emotional competencies to solve any problem successfully.” The African culture has rich heritage, skills, and metaphors which should be employed successfully in today’s education (Oppong & Strader, 2022). Unfortunately, Africa has yielded and continues to yield to Western epistemic colonization to the extent that Western intelligence models dominate intellectual and practical concerns about cognitive abilities in Africa. However, Western conceptions of intelligence have been found to be limited in their capacity to account for the varied representation of intelligence worldwide (Oppong, 2020). Today’s youth face lots of problems and indulge in drugs, prostitution, alcohol, and many others for temporary emotional satisfaction or as an escape from difficult circumstances in their lives (Opondo et al., 2021). Therefore, today’s education should equip students with skills to make sound judgments, solve problems, and apply wisdom. Thus, there is a need for educators to utilize Africa-situated knowledge to assess students on relevant constructs (Laher et al., 2022; Oppong, 2013, 2017, 2020; Oppong et al., 2022, 2023).

In Botswana, some private organizations and early childhood centers use scales assessing behavior, personality, and attitudes (Laher et al., 2022). However, primary and secondary education in Botswana ought to align with the needs of local industries and global labor demands by introducing assessment of soft skills in schools in pursuit of a dynamic and robust economy through quality education (Nenty & Phuti, 2014; Obinna et al., 2014). In this regard, there is a need for further research on soft skills to uncover the gaps in the assessment of soft skills in Botswana, hence this study.

Educators in Botswana understand the value of soft skills but do not have appropriate formal assessment scales that measure secondary school students’ soft skills competence (Nenty & Phuti, 2014; Obinna et al., 2014). The content of the senior secondary school curriculum in Botswana is assessed mainly on hard skills using achievement tests (Laher et al., 2022). It neglects other forms of abilities such as soft skills, which are crucial for 21st-century students. Soft skills are fundamental to lifelong learning; however, not enough is done to assess the affective domain (Durowoju & Onuka, 2014; Khaerudin et al., 2020). It is unbalanced, in our opinion, to build the cognitive aspect of a graduate and consider them educated without assessing their other abilities (Durowoju & Onuka, 2014; Hadiyanto et al., 2017; Khaerudin et al., 2020). Educational assessment on only the cognitive domain is less progressive as education ought to aim at producing well-rounded graduates. The failure of Botswana secondary schools to ensure that the assessment of soft skills is part of the educational assessment constitutes a challenge for national human resource development (Aworanti, 2012; Durowoju & Onuka, 2014). The issue of unemployment among graduates can be linked to the notion that graduates did not acquire soft skills during their studies (Asuru & Ogidi, 2013; Balcar et al., 2018; Clark, 2009; Succi & Wieandt, 2019; World Economic Forum, 2020). A high unemployment rate among the youth leads to less desirable economic outcomes for Botswana (The Voice admin, 2014).

Botswana secondary education needs to mainstream the assessment of soft skills in schools in pursuit of a dynamic and robust economy through quality education (Nenty & Phuti, 2014). The affective domain assessment and psychometric theory were applied to understand how soft skills are best assessed.

Affective Domain Assessment

The affective domain includes the emotions, attitudes, and behavior of an individual. This domain encompasses soft skills. Each soft skill has traits. These traits have action words, and it is based on such action words that items can be developed to measure the related soft skills (Nenty & Phuti, 2014; Obinna et al., 2014). The items can then be developed into a Likert scale or rating scale (Cahoy & Schroeder, 2012). Affective measurement is done by asking individuals to rate the extent to which they display behaviors associated with each affective sub-domain or the extent to which each behavioral item describes them well.

Psychometric Theory

The scale development process follows six steps: (1) defining the domain of constructs, (2) generating an item pool, (3) initial item purification, (4) developing and pre-testing the survey, (5) administering the final survey to a wider sample, and (6) cross-validating the final scale (DeVillis, 2017). To test the psychometric properties of the scale, the statistical tests used include Exploratory Factor Analysis (EFA), a variable reduction technique that identifies the number of latent constructs, and Confirmatory Factor Analysis (CFA), which confirms the number of factors and the loadings of observed variables. Psychometric theory also advocates establishing the reliability (Cronbach’s alpha coefficient and Construct Reliability) and validity (convergent and discriminant validity) of the scale.

Purpose of the Study

The purpose of this study was to identify relevant soft skill competencies for senior secondary school students and create a reliable and valid survey instrument for assessing these skills.

Ethical Considerations

The ethics approval for this study was obtained from an Institutional Review Board at a public university in Botswana with reference number UBR/RES/IRB/1732. Permission to collect data from education stakeholders was obtained from the Ministry of Education and Skills Development (MOESD). In schools, permission to collect data was obtained from the school heads. Individual consent was obtained from each participant.

Study 1

Study Setting, Participants, and Sampling

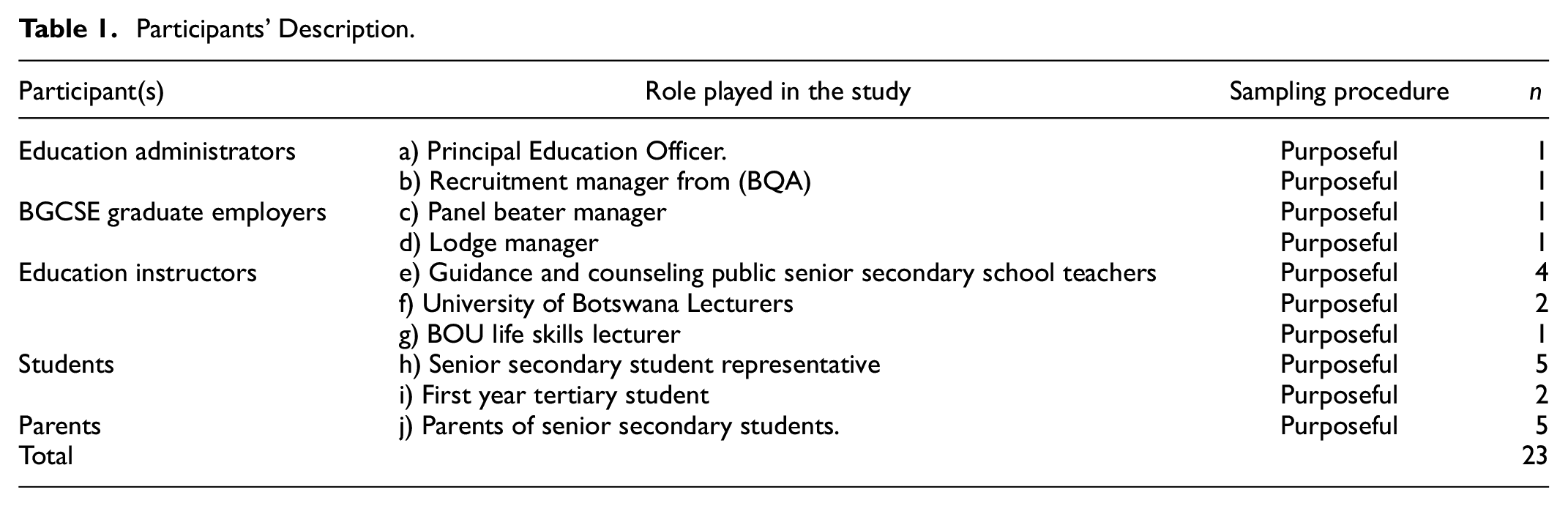

The study was conducted in settings such as government organizations, private business entities, public senior secondary schools, and institutions of higher learning in Gaborone, Botswana. Gaborone is the capital city of Botswana, where most organizations have their headquarters and where research participants reflect the ethnic, socioeconomic, and geographical distribution of the country. A sample of 23 participants was purposefully recruited from among education stakeholders such as education administrators, employers of senior secondary school graduates, senior secondary school teachers, university lecturers, senior secondary students, first-year university students, and parents of completing students at senior secondary schools (See Table 1).

Participants’ Description.

Instrument

Together with the literature review, a semi-structured interview guide was developed to facilitate in-depth interviews with education stakeholders to collect their perceptions on soft skills relevant for senior secondary school students in Botswana. The interview guide included such questions as (1) Propose soft skills you think are relevant for Form Five students [(a) Why do you think the stated skills are relevant?; (b) How would you define the stated soft skills?] and (2) Describe the character of a student with the stated relevant soft skills.

Procedure

Following consent from participants, interviews were conducted in areas convenient to participants such as offices and classrooms. The interactive interviews lasted 45 min to 1 hr. All key informants were asked the same questions. Probes were used to refocus participants’ responses where possible. All interviews were audio-taped, and notes were also taken. The credibility of participants’ responses was established through member checking: participants’ responses were sent to them by email, hand delivery, or telephone to confirm that what had been captured was an accurate reflection of what they had said. Pseudonyms were used for anonymity and confidentiality.

Data Analysis

The study adopted the model of thematic analysis by Braun and Clarke (2006). Initial transcripts were color-coded into meaningful chunks of text according to the soft skill construct they addressed, and these chunks were identified as categories by the first author (FP). The categories were later compared, grouped, interpreted, and analyzed to create themes by the whole research team (SKK, NT, and SO). A thematic map was then generated. The codes and themes allowed the researchers to present the qualitative findings in the form of themes and direct quotes (excerpts).

Results

The core soft skill themes that emerged from the qualitative phase included confidence, communication, self-management, time management, teamwork, leadership, good mannerism, initiative, emotions, and creativity (See Table 2). The qualitative findings were used to generate a questionnaire of 63 items which was administered to 306 senior secondary students. Of the 63 items, 39 items were derived from qualitative findings and 24 items were adopted from the review of related literature.

Soft Skills Identified in the Qualitative Study.

Discussion for Study 1

Previous studies have documented similar findings reflecting communication, teamwork, time management, self-management, emotions, and creativity as important soft skills (Andrews & Higson, 2008; Bora, 2015; Lippman et al., 2015; Obilor, 2019; Pritchard, 2013; Wats & Wats, 2009). For instance, these findings echo those of Hanover Research (2014) which also identified the 4Cs soft skills of communication, collaboration, creativity, and critical thinking as recommended for students of age 5 to 18 years in K-12 Education in the USA. Findings of the qualitative phase resonate with the World Economic Forum (2020), sustainable development goals and Botswana national principles. However, other countries and organizations have recommended different soft skills such as problem solving, decision-making, and critical thinking, which are important for the 21st century (Cukier et al., 2015; World Economic Forum, 2020).

Study 2

Participants and Sampling

Participants for quantitative study comprised 306 senior secondary school students. A multi-stage sampling method was used to select participants from three (3) educational regions in Botswana. First, simple random sampling was used to select 30% of educational regions (North-East, Central and South-East) out of the 10 educational regions. Secondly, a simple random sample of 20% schools was selected from each region. Finally, simple random sampling was used to select a total of eight (8) Form Five classes from the selected senior secondary schools. Data was collected from a total of 306 senior secondary school students consisting of 166 females and 140 males.

Procedure

Appointments were made for data collection through the school head. The data were collected in the school hall where all volunteering students were assembled. Those who consented to participate were given the questionnaire to complete. The completed questionnaires were collected and immediately checked for any omissions.

Instrument

A Soft Skill Assessment Scale (SSAS) was developed to assess senior secondary school students’ level of soft skills. The scale had 63 items and two sections. Of the 63 items, 39 items were derived from qualitative findings and 24 items were adopted from the review of related literature. Items were measured on a 5-point Likert scale ranging from 1 (Poor) to 5 (Excellent). Both self-rating and peer-rating datasets were collected; the self-rating data were used for factor analysis.

Data Analysis

Data were subjected to descriptive analysis to examine item performance. Data were analyzed using SPSS version 23. An alpha level of .05 was used for statistical tests. The data from the questionnaire was split into two. The first half of the dataset was analyzed using EFA and the second dataset was analyzed using CFA. Item analysis was conducted to evaluate item performance prior to conducting the EFA.
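The split of the dataset into an EFA half and a CFA half can be illustrated with a short sketch. The text does not state how the split was made, so the random assignment, the seed, the function name `split_half`, and the respondent IDs below are all assumptions for illustration only.

```python
import random


def split_half(respondent_ids, seed=0):
    """Randomly split respondent IDs into two halves (assumed procedure)."""
    ids = list(respondent_ids)
    rng = random.Random(seed)  # fixed seed only so the split is reproducible
    rng.shuffle(ids)
    mid = len(ids) // 2
    return ids[:mid], ids[mid:]


# 306 respondents, as reported for Study 2; the IDs themselves are hypothetical.
efa_half, cfa_half = split_half(range(1, 307))
print(len(efa_half), len(cfa_half))  # 153 153
```

Keeping the two halves disjoint means the structure explored in the EFA is tested in the CFA on respondents who did not contribute to the exploratory solution.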

Exploratory Factor Analysis

Data factorability was tested using the Kaiser-Meyer-Olkin (KMO) measure of sampling adequacy, which yielded an index of .70, exceeding the recommended limit of .50 (Kaiser, 1974). Bartlett’s test of sphericity was significant (χ² = 4958.56, df = 1953, p < .001), implying that the intercorrelation matrix contained adequate common variance. The correlation matrix, anti-image correlation matrix, and measures of sampling adequacy were analyzed to ensure that the application of factor analysis to the data set was appropriate. A total of 13 items showed consistently low correlations and were excluded from further analysis. Only 50 items were used for exploratory factor analysis.

Factors were extracted with Principal Component Analysis (PCA), and the 50 items converged into 14 factors with eigenvalues > 1 that explained 67.64% of the total variance. Parallel analysis, rather than eigenvalues and scree plots alone, was used to determine the number of factors to retain. Using Varimax orthogonal rotation, the first four factors were retained for the 50 items of the SSAS. Nine items loaded below the threshold of .30 and were discarded. The results revealed a four-factor solution with 38 items (see Table 3), which explained 44.00% of the total variance. Considering the pattern of item loadings, the factors were named botho, self-management, communication, and teamwork. All factors had Cronbach’s alpha above .70 (see Table 3).

Item Pattern Coefficients for SSAS Scale.

Confirmatory Factor Analysis

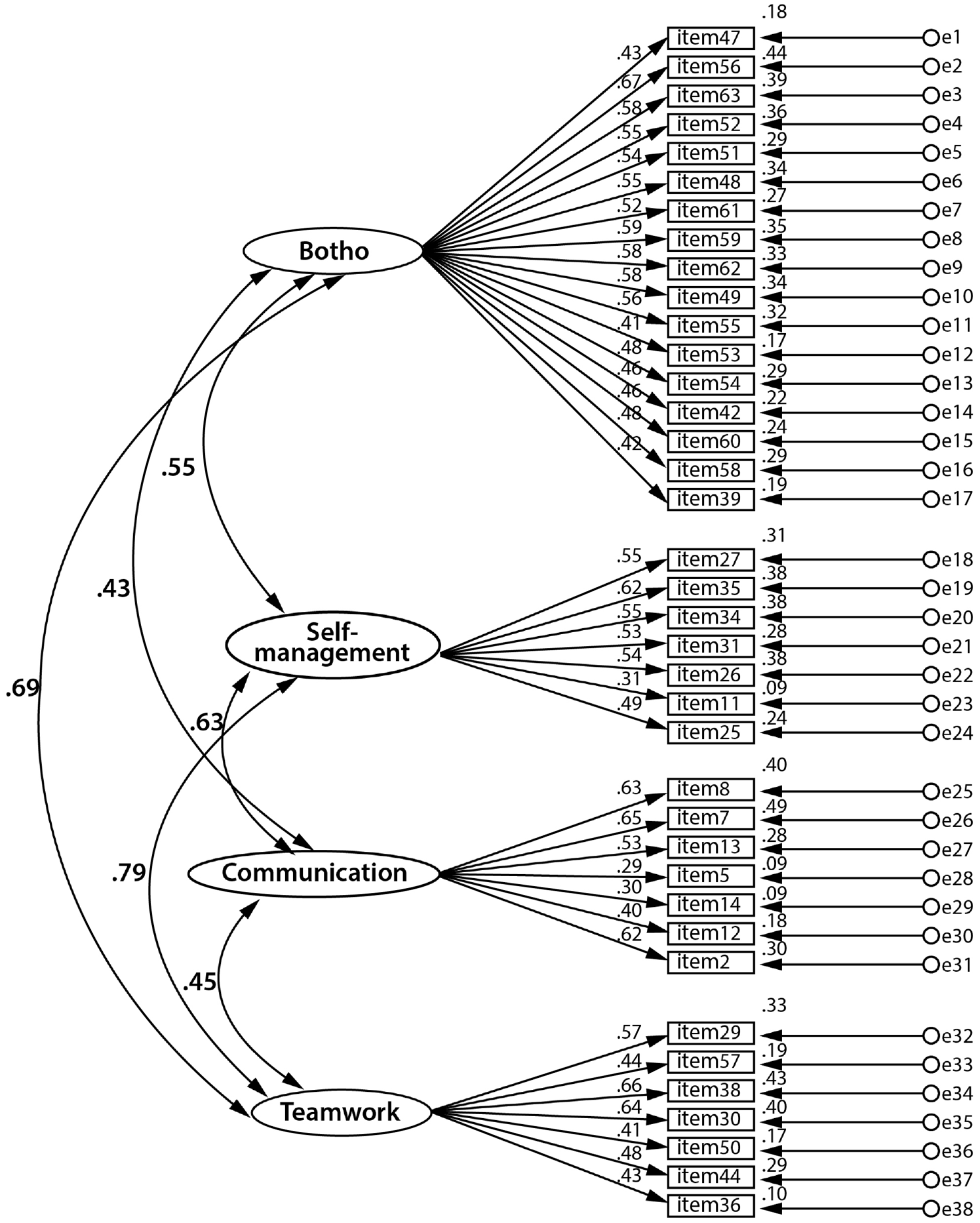

A measurement model of 38 items was analyzed using AMOS software in SPSS version 23 with the Maximum Likelihood (ML) estimation method. A sample of 153 senior secondary students was used, and this sample size was considered appropriate. The goodness of fit of the four-factor CFA model 1 of the SSAS was explored using three types of model fit indices (absolute fit indices, incremental fit indices, and parsimony fit indices). Model 1 is displayed in Figure 1.

Four factor CFA Model One (1).

Results indicate that the fit index values did not meet the acceptable levels when compared with the benchmark values. Therefore, the four-factor CFA model was not adequate and had poor overall fit with the data. There was a need to re-specify the measurement model.

Model Re-specification

Items 36, 50, 57, 12, 14, 5, 11, 39, 53, and 47 had squared multiple correlation values below .20 and were thus candidates for deletion (Awang, 2015; Hooper et al., 2008). A total of 16 items (5, 47, 14, 53, 54, 12, 42, 57, 60, 50, 58, 44, 39, 36, 11, 25) had standardized regression weights below .50 and were deleted. A standardized regression weight cut-off point of .60 was then used to delete a further eight items (26, 27, 3, 48, 52, 51, 49, and 61). At the eighth iteration, item 61 was removed, which improved the model to a good and acceptable fit on all indices (See Table 4).

Iterative Removal of Items and Its Impact on the Overall Model Fit.

The CFA measurement model 2 had a total of four factors and 14 items that demonstrated a noticeable improvement in the fit over the initial CFA measurement model one of four factors and 38 items (See Figure 2).

Re-specified CFA Model Two (2).

Convergent Validity

Convergent validity was assessed through standardized item loadings, Average Variance Extracted (AVE), and Construct Reliability (CR), as suggested by Hair et al. (2009). The standardized item loadings indicate good convergent validity since items loaded above .50 (Hair et al., 2006). We used .60 as the standard for construct reliability (Awang, 2015). The factors Botho (.62), Self-management (.76), Communication (.81), and Teamwork (.75) met this threshold, supporting convergent validity. Convergent validity can also be established when the CR value of a factor exceeds its AVE (Abdulka et al., 2017; Hair et al., 2010). On this criterion, the CR for each factor exceeded the AVE; hence, convergent validity was confirmed.
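As an illustration of how AVE and CR are commonly derived from standardized loadings, the following is a minimal sketch using the usual formulas (AVE as the mean squared loading; CR as the squared sum of loadings divided by that quantity plus the summed error variances). The loadings are hypothetical and are not the study’s values; the function names are ours.

```python
def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings of one factor."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def construct_reliability(loadings):
    """CR: (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    total = sum(loadings) ** 2
    error = sum(1 - l ** 2 for l in loadings)  # error variance of each item
    return total / (total + error)


# Hypothetical standardized loadings for a three-item factor (illustrative only).
example_loadings = [0.70, 0.75, 0.80]
ave = average_variance_extracted(example_loadings)
cr = construct_reliability(example_loadings)

# The two criteria described above: CR of at least .60, and CR greater than AVE.
print(cr >= 0.60, cr > ave)
```

For these made-up loadings both checks pass; with real data the same two comparisons would be made factor by factor.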

Discriminant Validity

An Average Variance Extracted (AVE) greater than the squared correlation between factors indicates good discriminant validity (Fornell & Larcker, 1981). Results indicate that the AVE for each factor exceeded the squared correlation for all paired factors. The factors were therefore sufficiently distinct from one another (See Table 5).

Evidence of Discriminant Validity.
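The Fornell and Larcker comparison can be sketched as a simple pairwise check: for every pair of factors, the squared correlation should fall below the AVE of both factors. The factor labels, AVEs, and correlations below are hypothetical placeholders, not the values in Table 5.

```python
from itertools import combinations


def fornell_larcker_violations(ave, correlations):
    """Return factor pairs whose squared correlation is not below both AVEs."""
    violations = []
    for a, b in combinations(sorted(ave), 2):
        # Correlations may be keyed in either order.
        r = correlations.get((a, b), correlations.get((b, a)))
        if r ** 2 >= min(ave[a], ave[b]):
            violations.append((a, b))
    return violations


# Hypothetical AVEs and inter-factor correlations (illustrative only).
factor_ave = {"F1": 0.52, "F2": 0.55, "F3": 0.60}
factor_corr = {("F1", "F2"): 0.45, ("F1", "F3"): 0.38, ("F2", "F3"): 0.50}
print(fornell_larcker_violations(factor_ave, factor_corr))  # []
```

An empty list corresponds to the situation reported above, where every AVE exceeds the squared correlation of each factor pair.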

Nomological Validity

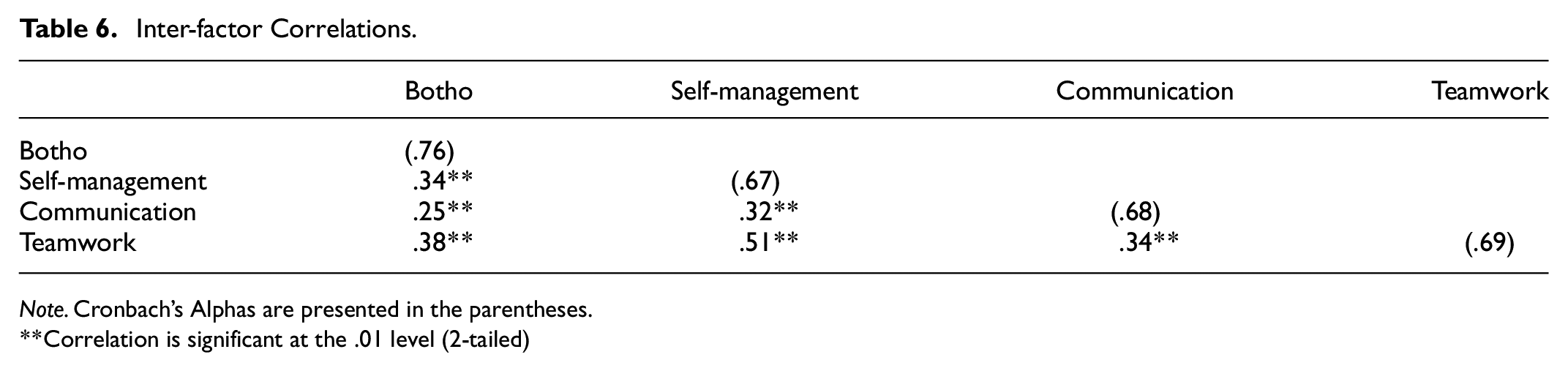

The inter-correlations between factors were examined. The correlations indicated that the factors related positively and were all statistically significant. The results support the prediction that the factors are positively related to one another and thus support the nomological validity of the model (see Table 6).

Inter-factor Correlations.

Note. Cronbach’s Alphas are presented in the parentheses.

Correlation is significant at the .01 level (2-tailed)

Internal Consistency Reliability

The internal consistency reliability of the SSAS factors was analyzed using Cronbach’s alpha. All factors had moderate Cronbach’s alphas above .60 (Hair et al., 2018). For no factor did the deletion of an item lead to an increase in the Cronbach’s alpha coefficient; hence no item was deleted.
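For readers unfamiliar with the computation, Cronbach’s alpha and the “alpha if item deleted” check can be sketched as follows. This uses the standard formula based on item variances and the variance of total scores; the ratings are invented for illustration and the function names are ours.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """item_scores: one list per item, each holding every respondent's rating."""
    k = len(item_scores)
    item_var_sum = sum(variance(item) for item in item_scores)
    totals = [sum(resp) for resp in zip(*item_scores)]  # total score per respondent
    return (k / (k - 1)) * (1 - item_var_sum / variance(totals))


def alpha_if_item_deleted(item_scores):
    """Alpha recomputed with each item left out in turn."""
    return [
        cronbach_alpha(item_scores[:i] + item_scores[i + 1:])
        for i in range(len(item_scores))
    ]


# Hypothetical 1-5 ratings: three items, five respondents (illustrative only).
scores = [
    [4, 3, 5, 2, 4],
    [5, 3, 4, 2, 4],
    [4, 2, 5, 3, 3],
]
alpha = cronbach_alpha(scores)
deleted = alpha_if_item_deleted(scores)
print(round(alpha, 2), [round(a, 2) for a in deleted])
```

An item would be a candidate for removal only if its entry in the second list were higher than the overall alpha, which is the check described in the paragraph above.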

Final Soft Skill Assessment Scale

The final Soft Skill Assessment Scale (SSAS) consists of four (4) subscales and a total of 14 items (See Table 7). Each subscale has at least three items (AdyHameme, 2017). The SSAS uses a five-point Likert scale. The SSAS was confirmed to have construct, discriminant, convergent, and nomological validity and was also reliable.

Final Soft Skill Assessment Scale (SSAS).

Group Differences on the Final Scale

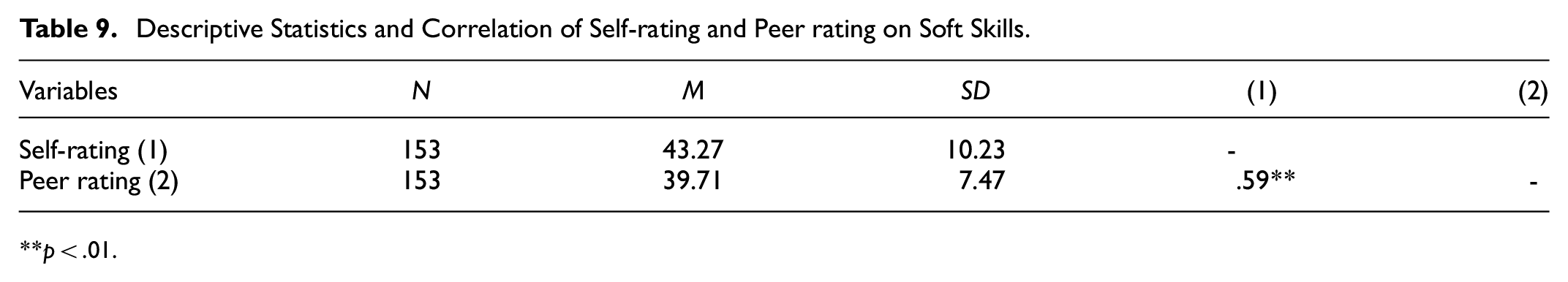

A paired-samples t-test was computed to assess the difference between students’ self-ratings and peer ratings on the final scale. Results suggest a statistically significant difference between students’ self-ratings (M = 43.27, SD = 10.23) and peer ratings (M = 39.71, SD = 7.47), t(152) = 5.26, p < .001 (see Table 8). Students rated themselves higher than their peers rated them on soft skills.

Descriptive Statistics and Pair t-test of Soft Skill Rating.

A Pearson product-moment correlation coefficient was computed to assess the relationship between self-ratings and peer ratings. The results showed a moderate, positive, and significant relationship between students’ self-ratings and peer ratings, r = .59, n = 153, p < .001, indicating that as students’ self-ratings go up, peer ratings also go up (see Table 9).

Descriptive Statistics and Correlation of Self-rating and Peer rating on Soft Skills.

p < .01.
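As a consistency check, the paired-samples t statistic can be approximately recovered from the summary statistics reported above, using the standard identity for the standard deviation of paired differences. This is a sketch under the assumption that the reported means, standard deviations, and correlation all refer to the same 153 students; the inputs are the rounded values from the text, so small rounding differences are expected.

```python
from math import sqrt


def paired_t_from_summary(mean1, sd1, mean2, sd2, r, n):
    """Paired-samples t statistic and df recovered from summary statistics."""
    # SD of the paired differences: sqrt(s1^2 + s2^2 - 2 * r * s1 * s2)
    sd_diff = sqrt(sd1 ** 2 + sd2 ** 2 - 2 * r * sd1 * sd2)
    standard_error = sd_diff / sqrt(n)
    return (mean1 - mean2) / standard_error, n - 1


# Self-rating vs. peer rating, using the rounded values reported in the text.
t_stat, df = paired_t_from_summary(43.27, 10.23, 39.71, 7.47, r=0.59, n=153)
print(round(t_stat, 2), df)
```

With these rounded inputs the statistic comes out close to the reported t(152) = 5.26, which suggests the reported means, standard deviations, and correlation are mutually consistent.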

An independent-samples t-test was computed to assess the mean difference in soft skills ratings by gender. Results showed no statistically significant difference in soft skills rating scores between males (M = 43.79, SD = 10.76) and females (M = 42.75, SD = 9.73), t(151) = 0.63, p = .53. Results imply that the SSAS does not favor either males or females in soft skills ability.

One-way analysis of variance (ANOVA) results showed that students’ self-ratings on soft skills differed significantly across educational regions, F(2, 150) = 17.32, p < .001. Pairwise comparisons of the means using Tukey HSD post-hoc tests indicate that the South-East region (M = 36.15, SD = 8.45) was significantly lower in students’ soft skill ratings than the North-East (M = 46.85, SD = 9.60) and Central (M = 44.53, SD = 9.54) regions. Results imply that soft skills ability varies across regions (see Table 10).

One-way ANOVA, the Difference in School Region on Soft Skill Performance.

Note.**p<.01.

Discussion for Study 2

The four factors of the SSAS resonate with the skills needed by 2025 as posited by the World Economic Forum (2020). Furthermore, the identified soft skills in this study are in accordance with the basic soft skills recommended across cultures (World Health Organization [WHO], 1997, 2012). In the ensuing paragraphs, the sub-scales of the SSAS are discussed.

Perseverance

The sub-scale of perseverance has five items that measure the ability to overcome challenges in order to achieve. Findings of the study reveal that perseverance is about the ability to keep a positive mood, work under pressure, be willing, and strategize to achieve. Unlike the Grit scale (Duckworth et al., 2007), which was designed as a predictor of perseverance, the items of the perseverance subscale depict the outcome traits of perseverance; hence the difference between the scales. Students must have the desire to learn, the ability to strategize, and the ability to keep a positive mood to compete in the world. Perseverance allows one to link their thoughts and actions. Students need to have the desire to take charge of what they want to accomplish (Goleman, 2000).

Civic-Virtue

The SSAS has items that measure civic virtue. The findings of this study include items that measure the ability to engage with others, show interest in them, and share ideas. This factor is linked to one of Botswana’s national principles, Botho. Botho is a community-building ethic that urges an individual to define their identity by caring for, welcoming, affirming, and respecting others. The findings from the developed soft skill assessment scale suggest that the psychometric features of the scale are feasible as a research instrument to measure civic virtue.

Communication

Communication involves the ability to receive information and respond through actions, oral or written communication (Cukier et al., 2015; Schulz, 2008). In the SSAS, communication has three items that focus on the ability to listen and on oral and written communication. The items on the communication subscale represent a category or level of the affective domain. For example, the item “ability to listen” is categorized under the receiving level in Krathwohl et al.’s (1964) affective domain. The receiving level deals with willingness to receive or willingness to hear and tolerance of new ideas (Allen & Friedman, 2010; Mon et al., 2013). Students need to communicate clearly and intellectually, with good emotions, to create a healthy environment for effective processes.

Teamwork

The teamwork subscale of the SSAS has only three items that assess team coordination and team cooperation. The teamwork subscale demonstrated good psychometric properties. In the 21st century, students need teamwork skills to have the ability to function well within a group. After all, humans are social beings and need to interact at school, work, and in the family (Cukier et al., 2015; Tarricone & Luca, 2002). Teamwork is a soft skill that links to other soft skills such as communication (the ability to influence others’ ideas and share ideas), confidence (being fearless in expressing one’s thoughts), and civic virtue (respecting others’ ideas and being accommodative of different characters) (Holmes, 2014; Obilor, 2019). The connectivity with other soft skills is a possible explanation for the correlation among factors of this study’s CFA model.

General Discussion

The SSAS is intended for use by senior secondary students. The 14 items of the SSAS use a five-point Likert scale labeled as follows: Poor = 1, Fair = 2, Good = 3, Very Good = 4, and Excellent = 5. The use of the SSAS allows for self-rating. For example, if a student rates themselves Very Good on all 14 items, the total is 14 items × 4 (Very Good) = 56, a score of 56 out of 70. This translates to a percentage score of 80. Such a percentage score could then be assigned a Grade A as done in cognitive assessment (Botswana Examination Council, 2005). A certificate indicating the attainment of these skills should then be given to students (Obinna et al., 2014; Wats & Wats, 2009). Findings of the study indicate that the SSAS can be used to assess students through both peer and self-rating.
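The scoring arithmetic described above can be written out as a small sketch. The function name `ssas_percentage` and the input validation are our own illustrative choices; mapping a percentage to a letter grade is left out because the grade boundaries are not given in the text.

```python
RATING_LABELS = {1: "Poor", 2: "Fair", 3: "Good", 4: "Very Good", 5: "Excellent"}
N_ITEMS = 14  # number of items in the final SSAS


def ssas_percentage(ratings):
    """Return (total score, percentage score) for one completed SSAS form."""
    if len(ratings) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} ratings, got {len(ratings)}")
    if any(r not in RATING_LABELS for r in ratings):
        raise ValueError("each rating must be an integer from 1 to 5")
    total = sum(ratings)
    maximum = N_ITEMS * max(RATING_LABELS)  # 14 items x 5 = 70
    return total, 100 * total / maximum


# A student who rates themselves "Very Good" (4) on every item.
total, percentage = ssas_percentage([4] * N_ITEMS)
print(total, percentage)  # 56 80.0
```

The same function applies unchanged to a peer-rating form, since both use the same 14 items and the same five-point labels.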

Limitations

First, data for this study was collected from three (3) out of 10 education regions. Though we used multistage sampling in the Northern and Southern part of Botswana and sampled an acceptable sample size, there is a need for further research seeking to have wider research coverage nationwide. Second, this study developed a scale suitable for senior secondary school students only. Future research seeking to deploy a similar approach might consider the inclusion of students from junior secondary and elementary school.

This study provided limited sources of validity evidence. The current study focused mainly on sources of validity evidence based on internal structure by using factor analysis to determine the dimensional structure of the scale’s scores and determine the reliability of scores. This study calculated the convergent validity (Construct Reliability for each factor exceeded the AVE, which confirmed convergent validity) and discriminant validity (the AVE for each variable exceeded the squared correlation for all paired factors) but did not test for criterion-related relationships; thus, evidence based on relationships to other variables could not be provided. Similarly, the source of validity evidence based on consequences is not outlined in the current study. This study did not include a broad sub-group of participants (academic streaming, schools, age, class groups) to check for theory-consistent group differences, nor did we provide validity evidence based on the response process. Also, a focus group was not carried out to ascertain the relevance of items and the perspectives of participants in a scale evaluation.

Even though a formal process of producing evidence of content validity was not followed per se, we still offered some evidence in terms of domain definition, domain representation, domain relevance, and appropriateness of test construction procedures (Sireci & Faulkner-Bond, 2014). For instance, we defined the domain through the qualitative study, using local understandings of soft skills to identify the dimensions relevant to Botswana. What we could not do was use experts to confirm or rate the scope of the domains for domain definition, relevance, and representation. Sireci and Faulkner-Bond (2014) have recommended the use of quality assurance mechanisms such as item analysis to provide evidence of the appropriateness of test construction procedures. In this study, we conducted item analysis to determine item performance prior to conducting EFA. Besides, it has been suggested that multiple sources of validity evidence are preferable to a single source and that no one source is more important than the others (Downing, 2003). After all, construct validity is the most frequently used source of evidence with measures of typical behavior such as soft skills. There are currently no "gold standards" (standard measures of soft skills in the Botswana context, as this is the first attempt) to allow for the use of criterion validity, while content validity provides only preliminary evidence of validity (Frost et al., 2007). Thus, the reliance on construct validity evidence is in order. All these concerns should be addressed systematically in future studies that use this scale. Therefore, the evidence provided in this study should be considered only exploratory.

Furthermore, the final SSAS is limited to the assessment of only four soft skills. One could argue that the scale has too few constructs and is missing important ones such as problem-solving, leadership, and other soft skills recommended by education stakeholders. However, the constructs retained in the scale emerged organically from both the qualitative and quantitative data. Problem-solving and leadership have been identified as basic soft skills relevant across cultures (Agarwal & Ahuja, 2014; Cukier et al., 2015; Holmes, 2014; WHO, 2012; Zhong et al., 2010). Despite the data-driven nature of this scale development, we still suggest that future research investigate the degree to which the assessment of problem-solving and leadership skills fits the context of Botswana, in order to expand the scale for future use.

Implications

From a policy perspective, the study recommends aligning soft skills with both curriculum and assessment. National bodies such as the Botswana Curriculum Development and Evaluation department and the Human Resource Development Council should work toward implementing a soft skills curriculum. This alignment is a key aspect of addressing the skills mismatch in the labor market. It is recommended that the Botswana General Certificate of Secondary Education (BGCSE) examination should not only serve the purpose of assessing cognitive ability but should also support the development of a holistic being who is also assessed on affective ability.

This study also recommends considering the institution of a soft skills certification program. Just as cognitive skills are assessed and students are awarded certificates, soft skills should also be assessed, and certificates should be awarded to senior secondary students to promote the practical application of soft skills among students and teachers (Aworanti, 2012).

Conclusion

The development of the Soft Skills Assessment Scale is a contribution to knowledge on the assessment of intelligences in Africa as perceived by Botswana stakeholders. We assert that the lack of a relevant assessment scale has resulted in half-baked graduates who lack skills relevant for success in Africa. As such, the time has come for a localized soft skills assessment scale, a measure that would empower human development relevant to Botswana. This study addresses the growing need for researchers to engage local stakeholders in generating an assessment scale that is informed by the country's needs, culture, and ecological context, as a strategy for addressing the limited availability of assessment scales that are relevant to African society. Assessment of soft skills offers information to students about the knowledge, skills, and other attributes they can expect to possess after successfully completing coursework and academic programs.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Data Availability Statement

Data will be available upon request.