Abstract

In today’s world, Global Competence is a fundamental disposition for teachers, who must be able to teach effectively in classrooms with students from diverse backgrounds and manage multiple learning contexts. For these reasons, initial teacher education programs need educational activities aimed at developing and assessing preservice teachers’ Global Competence. This study aimed to design and create a set of rubrics that can be used either by teacher educators or by preservice teachers themselves, in the latter case as a self-assessment instrument. The research design was based on a modified Delphi method composed of five rounds to collect both qualitative and quantitative data. A panel of 31 experts was involved in an iterative process until consensus among the experts was reached. The rubrics can be employed in several contexts and situations, such as before and after an international experience, or during or after a simulation or workshop based on intercultural and real-world situations.

Introduction

Teachers are increasingly asked to work in classrooms with students from diverse backgrounds “in terms of nationality, ethnicity, communication style, and cultural context, as well as ability, socio-economic circumstance, and educational background” (Sanger, 2020, p. 32). Consequently, they have to manage complex learning environments to ensure high levels of inclusion for all students. The European Commission (2020), presenting the aims of the European Education Area to be achieved by 2025, underlined that “the need for more flexible and inclusive learning paths has increased as the student population is becoming more diverse and the learning needs more dynamic” (p. 15).

Global Competence is a key notion for preparing teachers to meet the demanding challenge of creating inclusive, multifaceted, and versatile learning environments (Byker & Putman, 2019; Tichnor-Wagner et al., 2016; van Werven et al., 2021). It is therefore important to set up educational activities during initial teacher education programs aimed at fostering and monitoring preservice teachers’ professional growth with regard to Global Competence. This study aimed to design and develop a structured yet adaptable and flexible instrument to assess the development of Global Competence within teacher education programs and to help prospective teachers understand their own level of Global Competence. Teacher educators can begin to monitor such growth during initial teacher training programs, to ensure that future teachers will be able to tackle a range of educational situations and contexts and to work effectively with diverse learners, that is, students with diverse backgrounds and different types of diversity, as stated by Beutel (2018), Niemi and Hahl (2019), Anderson (2019), and Krebs (2020).

Theoretical Framework

Global Competence for Teacher Education Programs

The OECD defines global competence as

“the capacity to examine local, global and intercultural issues, to understand and appreciate the perspectives and world views of others, to engage in open, appropriate and effective interactions with people from different cultures, and to act for collective well-being and sustainable development” (2018, p. 7).

This definition identifies four main, interconnected target dimensions: knowledge, understanding, engagement, and action. Being globally competent involves the capacity (a) to build knowledge of local and global contexts and issues, (b) to develop an understanding of various global perspectives, (c) to engage with diverse cultures, and (d) to take individual action that demonstrates an awareness of our global interconnectedness (OECD, 2018).

These aspects include knowledge, skills, attitudes, and values, and their development has been advocated in today’s world (Kahn & Agnew, 2017; Schleicher, 2018) “in an effort to prepare students with the global mindset and cultural competencies necessary for effectiveness as professionals and citizens in an increasingly globally interdependent world” (Smith-Isabell & Rubaii, 2020, p. 3).

Interconnectedness, the state of feeling and being connected with one another, is related to the concept of global education (Buchanan & Varadharajan, 2018; Ferguson Patrick et al., 2014; O’Connor & Zeichner, 2011), which aims to promote global understanding. Future generations will face sustainability challenges that require consideration of “social, cultural, political, economic, and environmental dimensions” (Kopish, 2016, p. 76) from both local and global perspectives. For these reasons, educators should arrange activities for young people focused on developing global competencies that include “skills and dispositions that facilitate local/global inquiry and cooperation, promote critical reflection, and inspire action for social transformation” (Kopish, 2016, p. 76).

To support the development of global education, it is necessary to educate globally competent teachers (Darji & Lang-Wojtasik, 2014) who are able to teach global competence (Majewska, 2022; Rensink, 2020). Kirby and Crawford (2012) showed how “policymakers are incorporating global competencies in professional teacher education standards” (Kopish et al., 2019, p. 4). In addition to emphasizing global competencies in professional standards, teacher education programming and curricula should include several types of teaching experience (international, cross-cultural, and any other opportunity to engage with diverse contexts and content) in order to promote “learning about local/global issues from multiple perspectives and foster greater awareness of personal and social responsibility, and the impact of one’s choices on others” (Kopish et al., 2019, p. 6).

Since global competence is a multidimensional concept, Parmigiani et al. (2022) investigated “what aspects of global competence should be integrated into initial teacher education programs” (p. 3) and how to incorporate the notion of global competence into formal educational paths for prospective teachers. In particular, Parmigiani et al. (2022) identified two main issues: organizational and educational. The organizational issues indicated that global competence should be integrated explicitly within different areas of a teacher education program, with specific teacher educators appointed to develop those features. The educational issues revealed the diverse aspects of global competence: citizenship education (González-Valencia et al., 2022; Mulder, 2021); intercultural citizenship (Wagner & Byram, 2017); cultural and intercultural relationships (Owusu-Agyeman, 2022); communication and cooperation (Awada & Gutiérrez-Colón, 2019); acceptance of diversity (Rapanta & Trovão, 2021); self-reflection and understanding (Murray-García et al., 2005); sustainability and well-being (Sorkos & Hajisoteriou, 2021).

Globally Competent Teaching

O’Connor and Zeichner (2011) described globally competent teaching as a notion that is at once broad and specific. It is broad because it transcends subjects, the age of pupils/students, and school levels. But globally competent teaching is also specific “in that it should be relevant to local socio-political contexts and students’ cultural identities” (Kerkhoff & Cloud, 2020, p. 3).

Globally competent teachers are able to combine local needs with challenges coming from different parts of the world. They may live and work in small school communities, yet they facilitate the “development of young people to become informed, engaged, and globally competent citizens” (Kopish, 2016, p. 76). From this perspective, it is necessary to support the creation of a culture that allows future teachers to have educational experiences aimed at “expanding their horizons, changing their perspectives, and cultivating a positive disposition toward the world” (Zhao, 2010, p. 428).

In this sense, global teachers are at once global educators and global learners (Byker & Xu, 2019; Carano, 2013; Little et al., 2019), which is why the development of their attitudes toward global learning needs to be supported during teacher education programs (Bamber et al., 2013).

Globally competent teaching is based on two main aspects: diversity and inclusion. Regarding diversity, Krebs declared that

“global, international, and intercultural triad refers to a full range of experiences and learning resulting from intentional pedagogies to prepare students for diversities in their immediate communities and professional environments, the international or global reach of their professions, and their responsibilities as citizens in the world” (Krebs, 2020, p. 37).

In this way, teachers can effectively face diverse learning environments (Beutel, 2018) and several kinds of diversity, “such as gender, socio-economic backgrounds and worldviews” (Niemi & Hahl, 2019, p. 320), as also supported by Gu et al. (2014) and Poulter et al. (2016).

Concerning inclusion, global competence can operationalize the idea of inclusion through various policies, activities, educational strategies, tools, and technologies (Anderson, 2019). To make these actions effective, it is important to emphasize the notion of inclusive excellence. This concept goes beyond inclusion because it does not focus only on access. Inclusive excellence underscores the significance of “creating high-quality, challenging learning environments for all and recognizes diversity of institutional members as a strong contributor to the educational experience” (Islam & Stamp, 2020, p. 70).

Finally, Killick (2020) stated that globally competent teaching is based on the notions of global learning, global citizenship, intercultural competencies, and global perspectives, and that it should be embedded in the learning experiences of preservice teachers throughout their teacher education program.

Assessing Global Competence: Tools and Rubrics for Teacher Education Programs

The scientific literature offers several tools for measuring the development of Global Competence at both school and higher education levels. This section presents the existing tools most closely related to teacher education programs.

The Globally Competent Learning Continuum (GCLC; Carter, 2020; Tichnor-Wagner et al., 2019) is divided into three areas (Teacher Dispositions, Teacher Knowledge, Teacher Skills) and is designed to prepare educators to teach in a global society. The GCLC is a self-reflection tool based on a 5-point Likert scale (Nascent, Beginning, Progressing, Proficient, Advanced), with a detailed description for each level.

van Werven et al. (2021) developed a rubric for global teaching competencies in primary education consisting of three areas (Foundational competencies, Facilitation competencies, Curriculum design competencies).

Liu, Yin et al. (2020) constructed a questionnaire based on a 5-point Likert scale and structured in three areas, “knowledge and understanding, skills, attitudes and values” (p. 4). This study focuses on measuring Global Competence in graduate students.

The OECD (2019, 2020) instrument is part of the Program for International Student Assessment (PISA) for 15-year-old students. It is divided into two parts: a cognitive assessment and a background questionnaire. The cognitive assessment examines students’ critical skills on global issues through real-world examples, whilst the questionnaire addresses awareness of global and cultural events, social and cognitive skills, and behaviors. The OECD instrument also includes some elements focused on teachers and their ability to embed aspects related to Global Competence in their lessons. Indirectly, therefore, the OECD instrument indicates some aspects considered important for the development of the teaching profession.

The instrument developed by Asia Society (2017) has a broader spectrum, measuring Global Competence for pupils aged 4 to 18 as well as for postsecondary students. It is structured into four areas (Investigate the World, Recognize Perspectives, Communicate Ideas, Take Action), using different criteria depending on age.

The Global Teaching Model (GTM) was developed by Kerkhoff (2017) and Kerkhoff and Cloud (2020). This model comprises four factors: situated, integrated, critical, and transactional. The first two factors indicate how global teaching should be linked to existing educational contexts and instructional practices. The latter two factors “explain how teaching about the world can be approached from a critical frame and commitment to equity” (Kerkhoff & Cloud, 2020, p. 3).

Research Design

Aim and Research Question

This study investigated how to assess the growth of the aspects related to Global Competence within teacher education programs. On this basis, the overall aim of this study was to create a set of rubrics to be used by academics, international coordinators, or teacher educators to assess preservice teachers’ development of Global Competence. Consequently, the research question can be expressed as follows: How can a set of rubrics focused on preservice teachers’ development of Global Competence be validated through a modified Delphi method?

Rubrics are complex instruments composed of several parts, including, for example, areas, dimensions, indicators/criteria, levels, and captions. To create such a rubric for Global Competence, it was necessary to design a robust and consistent research procedure that could ensure strong validity of the final outcome. The Delphi technique is widely used in research, and its validity for developing research instruments, such as questionnaires and rubrics, has already been described by Landeta (2006), Manizade and Mason (2011), Ballesteros and Mata (2009), Yeh and Cheng (2015), Peeraer and Van Petegem (2015), Mengual-Andrés et al. (2016), and Lecours (2020). For this reason, we involved a large number of experts in a modified Delphi method, as explained in the following section.

Research Procedure

The Modified Delphi Method

The research design was based on a modified Delphi method (Avella, 2016; McPherson et al., 2018; Revez et al., 2020; Stewart et al., 2017). The traditional or conventional Delphi method was created

“at the end of the 40s, when researchers at the RAND Corporation (Santa Monica, California) started to investigate the scientific use of expert opinion. The Delphi method was conceived as a group technique whose aim was to obtain the most reliable consensus of opinion of a group of experts by means of a series of intensive questionnaires with controlled opinion feedback” (Landeta, 2006, p. 468).

This method allows one or more panels of experts to reach consensus on themes, topics, or issues. It involves multiple revisions of an instrument, administered through successive surveys to experts in the field, until consensus is reached. Delphi methodology offers experts multiple opportunities to give and receive feedback, so that they can revise and refine their ideas and judgments “based upon their reaction to the collective views of the group” (Manizade & Mason, 2011, p. 191). In the conventional Delphi method, the expert panel begins by discussing the initial options and alternatives, starting from the researchers’ question(s). In the modified Delphi method, the researchers select the initial alternatives and provide them to the panel for discussion (Avella, 2016).
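The iterate-until-consensus logic that conventional and modified Delphi designs share can be sketched as a loop: the panel rates the current items, items that reach consensus are kept, and the rest are revised and returned to the panel. The Python sketch below is a hypothetical illustration, not the procedure used in this study: `run_delphi`, its hook functions, the toy consensus rule, and the simulated panel are all assumptions introduced for illustration.

```python
from typing import Callable, Dict, List

Ratings = Dict[str, List[int]]  # item code -> one rating per expert


def run_delphi(
    items: Dict[str, str],
    collect_ratings: Callable[[Dict[str, str]], Ratings],
    revise: Callable[[str, str], str],
    consensus: Callable[[List[int]], bool],
    max_rounds: int = 5,
) -> Dict[str, str]:
    """Repeat rating/revision rounds until every item reaches consensus.

    All three callables are hypothetical hooks: collect_ratings stands in for
    administering a questionnaire, revise for the researchers' rewording of an
    item, and consensus for whatever agreement rule a study adopts.
    """
    current = dict(items)
    for _ in range(max_rounds):
        ratings = collect_ratings(current)  # experts rate the current wording
        pending = [code for code, r in ratings.items() if not consensus(r)]
        if not pending:
            return current  # every item reached consensus
        for code in pending:
            current[code] = revise(code, current[code])  # revised items go back to the panel
    raise RuntimeError(f"no consensus after {max_rounds} rounds")


# Toy usage with a simulated three-expert panel (entirely hypothetical).
def simulated_panel(items: Dict[str, str]) -> Ratings:
    return {code: [4, 4, 4] if text.endswith("(revised)") else [4, 2, 2]
            for code, text in items.items()}


def share_relevant_above_cutoff(ratings: List[int]) -> bool:
    return sum(r >= 3 for r in ratings) / len(ratings) > 0.78


final = run_delphi({"A1a": "draft text"}, simulated_panel,
                   lambda code, text: text + " (revised)", share_relevant_above_cutoff)
print(final)  # {'A1a': 'draft text (revised)'}
```

In a modified Delphi design, the starting `items` dictionary would hold the researchers' initial alternatives; in a conventional design, the panel would generate them first.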

Both conventional and modified Delphi methods are usually arranged as a series of iterative questionnaires or interviews that continue until consensus among the experts is reached (Baines & Regan de Bere, 2018; de Meyrick, 2003). Our research drew on a five-phase methodology:

preliminary round: the researchers, in a pilot study, highlighted the main aspects of Global Competence related to teacher education programs (Parmigiani et al., 2022) and prepared the first draft rubric;

first round (qualitative): the researchers conducted a semi-structured interview with each expert individually, allowing them to discuss the rubrics in depth and point out strengths and weaknesses; following the suggestions made by Jonsson and Svingby (2007), the experts were asked to comment on the relevance and clarity of: the selected areas of global competence; the dimensions and indicators/criteria specified for each area; and the captions related to the levels of performance. After analyzing the interviews, the researchers modified the first draft rubric and created the second draft rubric;

second round (quantitative): the experts were independently asked to fill in an online questionnaire assessing the relevance and clarity of all dimensions and indicators/criteria. After the quantitative analysis, the researchers identified the dimensions and indicators/criteria that needed to be confirmed, eliminated, or revised (the revision to be completed during the third round);

third round (qualitative): this round was focused on the improvement of the indicators/criteria with acceptable but not high consensus; in this case, the experts had to fill in a qualitative online questionnaire aimed at revising and refining the above-mentioned items. After the qualitative analysis of the questionnaire, the researchers created the third draft rubric, modifying the indicators/criteria;

final round: the experts made a final assessment of the third draft rubric through a qualitative questionnaire containing a text box in which they could comment on the latest changes.

The Experts’ Panel

As Stewart et al. (2017) point out, the recruitment of panel members is fundamental to the success of the Delphi method. To select the experts, the researchers established three requirements: experts had to (a) have publications on international/intercultural educational issues; (b) work within teacher education programs; and (c) have experience in international/intercultural programs for preservice or in-service teachers. The researchers identified and contacted 124 experts from all continents who met these requirements, and 31 of them agreed to take part in the panel. In particular, 10 experts were from the European area (France, Germany, Israel, Italy, the Netherlands, Norway, Portugal, Slovakia, Sweden, Switzerland), 12 experts were from North America (Canada and USA), and 8 experts were from the Pacific area (Australia and New Zealand). Unfortunately, all the experts contacted from Africa, Asia, and South America declined our invitation to take part in the panel. According to the Lawshe table (Lawshe, 1975), the total number of experts should be between 5 and 40.

The Instruments

First round

The first round was based on a semi-structured interview. We chose this instrument because interviews offer several advantages, listed by Harris and Brown (2010) and Ary et al. (2019): they are flexible, questions can be repeated, and interviewers can ask for supplementary information. Interviewers can also observe participants, and the interactive situation can facilitate deep reflection, because interviews can trigger strong affective responses.

The interview was composed of five main questions:

we identified four areas: exploring, understanding, engagement and taking action; are they suitable or not? Would you change them?

we identified a certain number of dimensions for each area; are they suitable or not? Would you change them?

we specified the criteria for each dimension; are they suitable or not? Would you change them?

we decided to set up 4 levels; do you think it is the right number or would you like to modify them?

we wrote overall captions related to each level; do you think it is a good idea or is it better to specify all descriptors for each criterion?

A final open-ended question (free comments) allowed participants to raise any elements that had not emerged previously. “It was important that the respondents had the possibility to freely express their feelings and ideas” (Parmigiani et al., 2022, p. 4) about the set of rubrics. Each expert was interviewed by a randomly assigned member of the research team, which was composed of eight researchers. “The interviews were conducted in English in order to simplify the data analysis” (Parmigiani et al., 2022, p. 5). The interviews lasted approximately 30 minutes and were recorded. They were then transcribed by another member of the research team. All transcriptions were merged into one file in order to preserve the experts’ anonymity. The data were analyzed with NVivo 12. We carried out a coding analysis based on the three-step technique presented by Corbin and Strauss (2015) and described by Vollstedt and Rezat (2019).

Second round

The second round was based on a quantitative questionnaire measuring inter-rater reliability regarding the relevance and linguistic clarity of all dimensions and indicators/criteria included in the second draft rubric. We calculated the following coefficients:

the item-level content validity index (I-CVI); a 4-point scale was used to avoid a neutral point, with the following ratings: 1 = not relevant/clear, 2 = somewhat relevant/clear, 3 = quite relevant/clear, 4 = highly relevant/clear; the cut-off for an excellent level is >.78 for expert panels of at least nine experts (Lynn, 1986; Davis, 1992; Polit et al., 2007; Larsson et al., 2015);

the content validity index for the overall scale (S-CVI) in both its versions, S-CVI/UA (Universal Agreement) and S-CVI/Ave (Average); in this case, the cut-offs for an excellent level are .80 or higher and .90 or higher, respectively (Polit & Beck, 2006; Shi et al., 2012);

a modified kappa; to interpret it, we adopted the criteria proposed by Cicchetti and Sparrow (1981): fair (.40–.59), good (.60–.74), excellent (.75–1.00);

the coefficients Gwet’s AC2 and Fleiss’ Kappa; we considered the following critical values: poor (0–.20), fair (.20–.40), moderate (.40–.60), good (.60–.80), very good (.80 or higher) (Gwet, 2014; Popplewell et al., 2019).
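The item- and scale-level indices and the modified kappa listed above follow simple formulas (Polit et al., 2007): the I-CVI is the proportion of experts rating an item 3 or 4; the S-CVI/UA is the proportion of items that every expert rated 3 or 4; the S-CVI/Ave is the mean I-CVI; and the modified kappa adjusts the I-CVI for chance agreement, using a chance probability of C(N, A) · 0.5^N for A agreeing experts out of N. The Python sketch below computes them for one set of ratings (relevance or clarity). The rating matrix is invented for illustration and is not data from this study; Gwet's AC2 and Fleiss' kappa are not covered.

```python
from math import comb
from typing import List

RELEVANT = {3, 4}  # on the 4-point scale, ratings of 3 or 4 count as agreement


def i_cvi(item: List[int]) -> float:
    """Item-level CVI: proportion of experts rating the item 3 or 4."""
    return sum(r in RELEVANT for r in item) / len(item)


def modified_kappa(item: List[int]) -> float:
    """Modified kappa (Polit et al., 2007): I-CVI adjusted for chance agreement."""
    n = len(item)
    a = sum(r in RELEVANT for r in item)
    p_chance = comb(n, a) * 0.5 ** n  # probability of exactly a agreements by chance
    return (i_cvi(item) - p_chance) / (1 - p_chance)


def s_cvi_ua(matrix: List[List[int]]) -> float:
    """Scale-level CVI, universal agreement: share of items every expert rated 3 or 4."""
    return sum(i_cvi(item) == 1.0 for item in matrix) / len(matrix)


def s_cvi_ave(matrix: List[List[int]]) -> float:
    """Scale-level CVI, average: mean of the item-level CVIs."""
    return sum(i_cvi(item) for item in matrix) / len(matrix)


# Invented ratings: one row per item, one column per expert (nine experts).
ratings = [
    [4, 4, 3, 4, 4, 3, 4, 4, 4],  # all nine experts rate 3 or 4
    [4, 2, 3, 4, 1, 3, 4, 4, 4],  # seven of nine experts rate 3 or 4
]
for item in ratings:
    print(round(i_cvi(item), 2), round(modified_kappa(item), 2))
print(round(s_cvi_ua(ratings), 2), round(s_cvi_ave(ratings), 2))
# 1.0 1.0
# 0.78 0.76
# 0.5 0.89
```

In this toy example, the second item's I-CVI of 7/9 does not exceed the >.78 cut-off. The chance correction lowers it only slightly here, and its effect shrinks further as the panel grows, because the chance probability falls rapidly with N.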

Third round

The third-round instrument was a qualitative questionnaire presenting the indicators/criteria to be revised. The experts could either confirm the original version of each item or suggest a new version. Finally, a text box allowed them to express any final comments.

Final round

The final-round instrument consisted of a form for suggesting any final comments about the changes made during the third round.

Limitations of This Study

This study has some limitations. First, the panel was composed only of experts from Western countries. Although the recruited experts are highly qualified, future studies should include experts from other areas of the world. The second limitation is related to the modified Delphi method, in which, as indicated before, the researchers establish the initial alternatives. Future studies could use the conventional Delphi procedure, in which the experts decide the initial alternatives from the beginning. The last limitation concerns the possibility of combining the Delphi method with other methods, such as focus groups. To maintain anonymity, the Delphi method does not include direct interaction among the experts, whereas focus groups allow generative dialog among members, providing additional information to support the creation of the instrument (Dimitrakopoulou, 2021; Mukherjee et al., 2018; Ogbeifun et al., 2016; Yıldırım & Büyüköztürk, 2018).

Data Analysis and Findings

Preliminary Round

The preliminary round was aimed at creating a first draft of the rubric to assess the preservice teachers’ development of Global Competence. The first draft rubric was based on the outcomes of the studies conducted by Parmigiani et al. (2022).

The structure of the first draft rubric is provided in the Supplemental Materials (Table S1). It was composed of four main areas and each area included a certain number of dimensions. Then, each dimension was specified into one or more indicators/criteria and, finally, each indicator/criterion could be assessed through four levels which were described by the captions indicated at the bottom of the rubrics. This first draft of the rubric was submitted to the expert panel and modified in the first round through the qualitative analysis of the expert interviews.

First Round

This stage lasted 3 months: the interviews were conducted in the first month, and the qualitative data were analyzed during the following 2 months. The analysis of the expert interviews highlighted three main aspects: (1) areas, (2) dimensions and indicators/criteria, and (3) levels and captions. For each aspect, the analysis identified three main categories: (a) level of agreement, (b) critical points, and (c) suggestions.
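The first-round findings report two counts per coded category: the number of experts who raised a point and the number of references, that is, the coded passages in which it was raised (one expert can contribute several references to the same category). A minimal Python sketch of this tally follows, assuming the coded segments were exported from the qualitative analysis software as (expert, code) pairs; the export format, expert IDs, and code labels are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def summarize_codes(segments: Iterable[Tuple[str, str]]) -> Dict[str, Tuple[int, int]]:
    """Map each code to (number of distinct experts, number of references)."""
    experts = defaultdict(set)     # code -> set of expert IDs who mentioned it
    references = defaultdict(int)  # code -> total number of coded passages
    for expert_id, code in segments:
        experts[code].add(expert_id)
        references[code] += 1
    return {code: (len(experts[code]), references[code]) for code in references}


# Hypothetical coded passages: (expert ID, "aspect/category" code).
segments = [
    ("E01", "areas/agreement"),
    ("E01", "areas/agreement"),  # same expert, second reference
    ("E02", "areas/agreement"),
    ("E03", "areas/critical points"),
]
print(summarize_codes(segments))
# {'areas/agreement': (2, 3), 'areas/critical points': (1, 1)}
```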

Areas

The analysis highlighted an overall positive view of the areas. Twenty-five experts (34 references) stated that the areas were suitable, not only because they were complete with respect to the assessment of Global Competence, but also in terms of their logical connections and sequence: “I think they’re suitable. I like the fact that you move through to taking action. I think that they fit logically together.”

Regarding the critical points, seven experts (13 references) stated that the title of the second area, “Understanding,” was unclear, since the process of understanding is itself unclear: “Understanding is often difficult to measure. I think it’s fine as an overarching area but I have got some thoughts about what it needs to look like deep down at the objective level.”

The most critical point was the overlap between area C, “Engagement,” and area D, “Taking Action.” Ten experts (19 references) asserted that these two areas were very similar, with no evident differences: “The areas C and D seemed to me to be quite alike in lots of ways there are pieces of each of them that are not repeated but it’s just the distinctions are not super clear to me.”

Most of the experts’ suggestions related to the language and terminology used in the rubrics. They suggested renaming “Exploring” as “Examining,” recommended deleting the terms in brackets next to the names of the areas to avoid any misunderstanding, and proposed using only verbs as area names. We therefore made the following changes to the areas:

we modified the title of Area B: “Engaging” instead of “Understanding”;

due to the overlap between areas C and D, we merged them into a new area named “Acting”;

we deleted the terms in brackets included in the names of the areas.

Dimensions and indicators/criteria

Thirteen experts (20 references) declared that the dimensions and the indicators/criteria were “clear, concisely labeled” and “well described.” Concerning the number of dimensions, the experts stated that “they’re enough to get at the characteristics that you’re looking for in each of the areas but not too many.” In terms of the number of indicators/criteria, 5 experts (7 references) argued that it would be better to “define the criteria and have fewer in each dimension” and to merge overlapping criteria (9 experts, 15 references), in order to “keep the questionnaire short and simple.” On the other hand, 12 experts (31 references) suggested adding some important dimensions/criteria that seemed to be missing. Eight experts (20 references) expressed doubts about the linguistic clarity of some dimensions, and 7 experts (10 references) emphasized a problem related to the subjectivity of some criteria; as one expert said: “How can you know that one person will understand the same as the next person filling in this form?”

The experts underlined some specific aspects of each area. Regarding area A, 19 experts (22 references) claimed that the dimensions and criteria were “very important and well chosen.” As for area B, 14 experts (17 references) expressed agreement, stating that it “captures the type of knowledge that teachers need,” “it was really clear” and “it is well structured.” However, experts raised critical points about dimensions B2 (8 experts, 11 references) and B6 (7 experts, 9 references), which they considered too broad and difficult to assess. Regarding area C, 19 experts (20 references) expressed agreement, although 4 experts (5 references) raised doubts about the use of the word “deeper” in criterion C1a. Dimension C2 was considered too broad and difficult to assess, and dimension C3 was unclear: “It’s referring to being able to teach or practice in several different languages, or if it means to teach or practice to students with different language backgrounds.” Finally, regarding area D, 19 experts (24 references) agreed that the dimensions and criteria were suitable and clear; the main critical points concerned linguistic issues (5 experts, 6 references).

We made several changes to the dimensions and criteria. The second draft rubric can be found in the Supplemental Materials (Table S2).

Levels and captions

Concerning the levels, 21 experts (26 references) agreed with keeping four levels: “I like the four levels and I like the terms to avoid the middle one,” also to avoid the risk, as one expert put it, that people “tend to put in center.” Nonetheless, six experts raised two critical points: the number of levels could be reduced to make the tool easier to use, and the labels “experienced” and “advanced” should be changed because they were quite similar and, in some cases, overlapped: “What I have difficulty to understand is a difference between experienced and advanced for me this is quite difficult.” As for suggestions, 3 experts pointed to the opportunity of including a level that would allow respondents either to express a neutral position or not to answer some items: “If you wanted to include a midline, which I think is more common in research literature then I would have a middle criterion that would say ‘no change’ or something along those lines.”

Regarding the descriptors, 19 experts (23 references) agreed with the idea of having overall descriptors instead of specific ones for each criterion: “I think the overall descriptors is better cause if you wish to write individual descriptors for each one you would basically just stick these words into that sentence.” As a critical point, the analysis showed that the level captions were not sufficiently clear and exhaustive and could be misinterpreted, so they needed to be explained better.

Furthermore, the analysis highlighted some additional suggestions and critical points about the design of the tool. One expert suggested adding a qualitative box, that is, a space for additional comments where further information useful for the Global Competence assessment could be recorded: “I would consider creating an additional qualitative response section after each of the sections I could see some utility and having an opportunity for qualitative elaboration so that folks who were completing this rubric could illuminate the responses in each of these areas and potentially provide important contextual data not captured by four levels.”

The changes made to the levels and captions are as follows:

we added a box called “not applicable” to indicate that the preservice teacher is not involved in that criterion;

we modified the names of the levels: emerging, developing, achieving, extending;

we added a box to write qualitative comments on the preservice teacher’s development of Global Competence.

Second Round

This stage lasted 2 months: around 1 month to administer the questionnaire and 1 month to analyze the quantitative data. The data collected during the second round are shown in Table 1. In addition to the coefficients, the table reports the number of experts who disagreed on either the relevance or the clarity of each item. All items were rated as relevant, so we retained the structure of the second draft rubric. However, the items marked with an asterisk needed to be worded more clearly. In particular, items C4c and C6b fell below the critical value for both the I-CVI and the modified kappa. Moreover, although their I-CVI coefficients reached the critical value, we also revised items A3b, C1a, C4d, and C5a, because 5 experts pointed out a lack of accuracy, which resulted in a low S-CVI/Ave and/or Fleiss’ kappa. All these items were revised linguistically during the third round.
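The coefficients named above can be computed in several ways, and this section does not restate the formulas. The sketch below shows one common formulation, assuming (as an assumption, not the authors' analysis script) that each expert rates an item on a 1 to 4 scale, that a rating of 3 or 4 counts as agreement, and that the modified kappa adjusts the I-CVI for the binomial probability of chance agreement. The function names are hypothetical, and the study's critical values are not encoded here.

```python
from math import comb


def i_cvi(ratings: list[int]) -> float:
    """Item-level CVI: proportion of experts rating the item 3 or 4 (assumed 1-4 scale)."""
    agreeing = sum(1 for r in ratings if r >= 3)
    return agreeing / len(ratings)


def modified_kappa(ratings: list[int]) -> float:
    """I-CVI adjusted for the binomial probability of chance agreement."""
    n = len(ratings)
    a = sum(1 for r in ratings if r >= 3)
    p_chance = comb(n, a) * 0.5 ** n
    return (i_cvi(ratings) - p_chance) / (1 - p_chance)


def s_cvi_ave(items: list[list[int]]) -> float:
    """Scale-level CVI, averaging method: mean of the item-level CVIs."""
    return sum(i_cvi(r) for r in items) / len(items)


def fleiss_kappa(items: list[list[int]], categories=(1, 2, 3, 4)) -> float:
    """Fleiss' kappa; assumes every item is rated by the same number (>= 2) of experts."""
    n_items = len(items)
    n_raters = len(items[0])
    totals = {c: 0 for c in categories}
    per_item_agreement = []
    for ratings in items:
        counts = [ratings.count(c) for c in categories]
        for c, k in zip(categories, counts):
            totals[c] += k
        # Proportion of agreeing rater pairs on this item
        per_item_agreement.append(
            (sum(k * k for k in counts) - n_raters) / (n_raters * (n_raters - 1))
        )
    p_observed = sum(per_item_agreement) / n_items
    # Expected agreement from the overall share of ratings in each category
    p_expected = sum((totals[c] / (n_items * n_raters)) ** 2 for c in categories)
    return (p_observed - p_expected) / (1 - p_expected)
```

Because S-CVI/Ave is an average of item-level values, a few items on which several experts express doubts can lower the scale-level figure even when each of those items still clears the I-CVI threshold, which is one way to read the situation described for items A3b, C1a, C4d, and C5a.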

Table 1. Content Validity Analysis: Second Round.

Third and Final Round

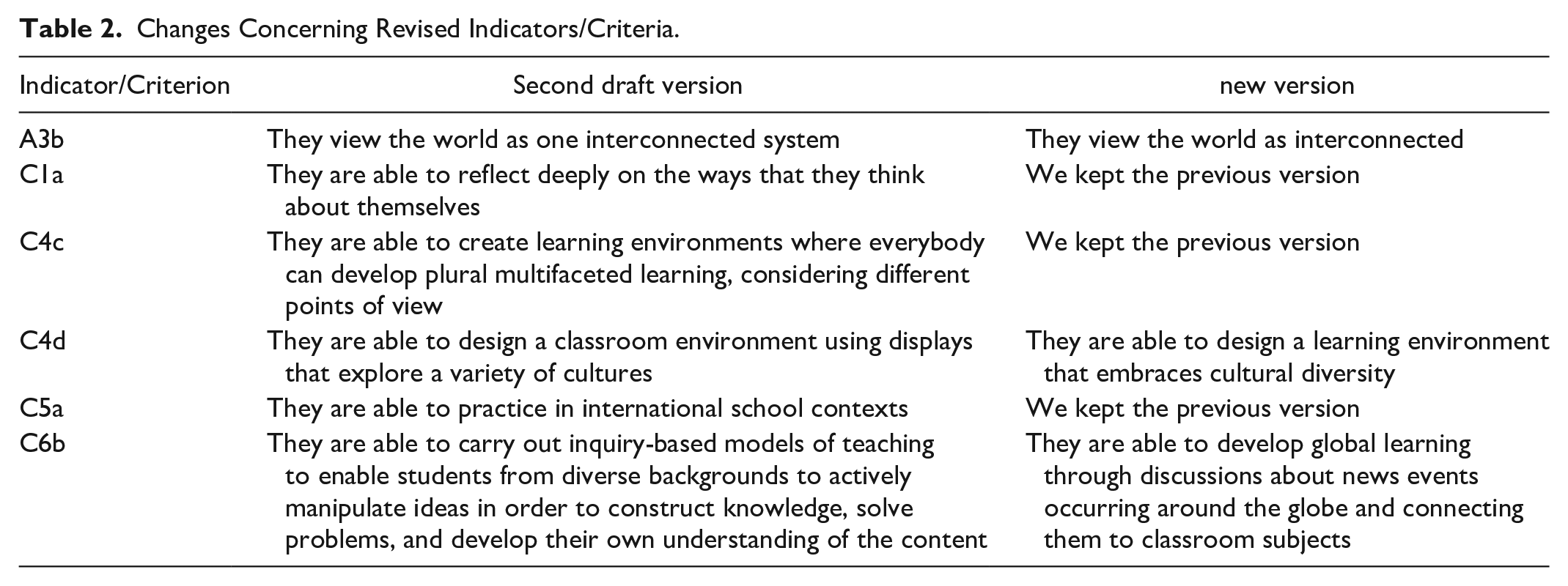

The experts confirmed the indicators/criteria C1a, C4c, and C5a. Additionally, they suggested new versions of the indicators/criteria A3b, C4d, and C6b. Table 2 shows the versions included in the second draft rubric alongside the new versions.

Table 2. Changes Concerning Revised Indicators/Criteria.

The experts also suggested some changes to the captions. Table 3 shows the versions of the captions included in the second draft rubric alongside the new versions. This stage lasted 1 month.

Table 3. Changes Concerning the Captions.

After the third round, the researchers wrote the third draft rubric. The final round, which lasted 2 weeks, produced no requests for changes or modifications. Consequently, the third draft reached consensus and can be considered the final version of the rubric. This version is provided in the Supplemental Materials (Table S3).

Discussion

The first remark concerns the method used to conduct this study. The modified Delphi method proved to be an effective strategy for a complex task such as creating a set of rubrics to assess preservice teachers’ development of Global Competence. In particular, to carry out a modified Delphi method effectively, we recommend paying attention to three main aspects. First, it is necessary to create a clear document or statement during the preliminary round, indicating the potential initial alternatives. In this way, the experts can focus on the problem and point out either the limitations or the potential of the introductory document. Second, qualitative and quantitative rounds should alternate, since qualitative and quantitative data each have their own advantages and disadvantages. Finally, a successful Delphi method should be planned with individual interviews only, to preserve the experts’ anonymity and allow them to express their ideas freely (Fletcher & Marchildon, 2014).

Regarding this specific study, focused on the creation of a set of rubrics, it is important to underline that a rubric is not simply a questionnaire, because it is composed of several parts: the areas, which focus on the main sectors of the competence; the dimensions, which represent the core aspects of the competence; the indicators/criteria, which specify the dimensions to make them measurable; the levels, which indicate the degree of proficiency; and the captions, which denote and describe the levels. Together, these elements allow a rubric to represent the development of a competence.
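The hierarchy described above (areas, dimensions, indicators/criteria, shared levels) can be sketched as a small data model. This is a hypothetical illustration, not the authors' tool: the class names, the reading of codes such as "A3b" as area A, dimension 3, criterion b, and the choice of one free-text comment per area are assumptions; the level names, the “not applicable” option, and the qualitative comment box come from the changes made after the first round.

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import Enum


class Level(Enum):
    """Four proficiency levels plus the 'not applicable' option."""
    NOT_APPLICABLE = 0
    EMERGING = 1
    DEVELOPING = 2
    ACHIEVING = 3
    EXTENDING = 4


@dataclass
class Indicator:
    code: str  # hypothetical coding: "A3b" = area A, dimension 3, criterion b
    text: str  # criterion wording (placeholder in this sketch)


@dataclass
class Dimension:
    number: int
    indicators: list[Indicator]


@dataclass
class Area:
    letter: str  # e.g. "A"
    name: str    # e.g. "Exploring"
    dimensions: list[Dimension]

    def codes(self) -> list[str]:
        """All indicator codes belonging to this area."""
        return [i.code for d in self.dimensions for i in d.indicators]


@dataclass
class Assessment:
    """One completed rubric: a level per indicator plus a free-text comment per area."""
    ratings: dict[str, Level] = field(default_factory=dict)
    comments: dict[str, str] = field(default_factory=dict)

    def rate(self, code: str, level: Level) -> None:
        self.ratings[code] = level

    def level_profile(self, area: Area) -> Counter:
        """Count how often each level was chosen in an area, ignoring 'not applicable'."""
        return Counter(
            self.ratings[c]
            for c in area.codes()
            if c in self.ratings and self.ratings[c] is not Level.NOT_APPLICABLE
        )
```

A single `Level` enum is shared by every indicator, mirroring the experts' preference for overall descriptors rather than criterion-specific ones, and excluding “not applicable” answers keeps criteria that do not concern a given preservice teacher out of the level counts.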

In addition, a competence is always complex to observe and assess because it is a dynamic notion (Coulet, 2011; Oiry, 2005; Piccardo & North, 2019; Zollo & Winter, 2002) and can be represented as a combination of resources (Coulet, 2016). Global Competence is even more complex because it is multifaceted, composite, multi-layered, and multidimensional, and it can be seen from various perspectives. The Delphi method allowed us to define progressive rounds and reach a high level of consensus among the experts.

The evolution of the draft rubrics throughout the rounds shows that preservice teachers should first be able to explore global realities, demonstrating a willingness to experience diverse contexts and a sense of responsibility for ethical and global challenges. After this general step, valid for all higher education students, preservice teachers should engage with their own school environments, seeking the inclusion and integration of all students in their classroom and exploring “resources from varied perspectives and opportunities to stay informed on local and global issues” (Tichnor-Wagner et al., 2019, p. 59). The third step is specifically educational and linked to didactic matters: through professional interactions, preservice teachers should be able to manage complex learning environments in which they implement teaching strategies that embrace cultural diversity and include students from diverse backgrounds.

Compared with existing tools, the Globally Competent Teaching Continuum (GCTC) (Tichnor-Wagner et al., 2019) is composed of three sections similar to those of our rubrics: Teacher Dispositions, Teacher Knowledge, and Teacher Skills. The last area of our rubrics (Acting) is more specific to preservice teachers, indicating how to manage classrooms and which strategies are best suited to developing Global Competence within the classroom. In addition, we developed more explicit and detailed indicators/criteria.

There are also similarities with the tool developed by van Werven et al. (2021), which emphasizes curriculum design competencies, especially for in-service primary teachers. Our rubrics focus more on preservice teachers’ needs, so they are more suitable for teacher education programs. The instrument developed by Liu, Yin et al. (2020) addresses all graduate students, so it contains sections appropriate for all higher education students, but it is not specific to graduate students who are growing professionally as teachers, in particular in enhancing teaching and assessment strategies aimed at creating Global Competence experiences in the classroom.

The OECD instrument (2019, 2020) is mainly focused on high school students, but it also includes some sections on teachers’ competencies and their capacity to integrate aspects of Global Competence into everyday lessons. These competencies (e.g., Teaching in a multicultural or multilingual setting; Communicating with people from different cultures or countries; Teaching about equity and diversity) are mainly related to areas A (Exploring) and B (Engaging) of our rubrics. The instrument developed by Asia Society (2017) is the most similar to ours in its postsecondary section. However, in that instrument “take action” means taking action in society, whereas in our rubrics “acting” means performing educational actions in schools and classrooms.

All these tools identify diverse aspects of Global Competence, with overlaps and intersections among them. In the first steps of all the instruments, Global Competence centers on feeling a deep global responsibility. The second and third steps of our rubrics are increasingly dedicated to engagement in seeking the inclusion and integration of all students in the classroom, and to actions aimed at designing and carrying out inclusive learning environments for pupils with diverse backgrounds.

As mentioned before, globally competent teaching is based on two main aspects: diversity and inclusion (Anderson, 2019; Krebs, 2020). These rubrics can support preservice teachers in developing the professional skills needed to work effectively with diverse learners and several types of diversity, as stated by Beutel (2018) and Niemi and Hahl (2019). Ultimately, these rubrics can support preservice teachers’ progressive self-assessment as they develop the inclusive excellence described by Islam and Stamp (2020).

Conclusions

As already stated, Global Competence is a complex notion, so our aim was to develop a tool that could both include the various aspects of the relationship between Global Competence and the teaching profession and be used easily within teacher education programs. This rubric can be used by either teacher educators or preservice teachers, in the latter case as a self-assessment instrument. The rubric can be employed in several contexts and situations, such as: as a pre- and post-test before and after an international placement/internship; during an academic course focused on intercultural/international issues; during or after simulations based on real-life/real-world situations; during or after workshops focused on intercultural/international issues.

There are two main reasons to use this rubric. On the one hand, teacher educators can observe preservice teachers while they are acting in the classroom during teaching practice or during a workshop at university. On the other hand, preservice teachers can reflect on their own activities using the rubric as a reflective self-assessment instrument.

Ultimately, this rubric can support the development of globally competent future teachers who will be able to design a variety of learning environments suited to nurturing globally competent pupils and citizens.

Supplemental Material

sj-docx-1-sgo-10.1177_21582440221128794 – Supplemental material for Assessing Global Competence Within Teacher Education Programs. How to Design and Create a Set of Rubrics With a Modified Delphi Method

Supplemental material, sj-docx-1-sgo-10.1177_21582440221128794 for Assessing Global Competence Within Teacher Education Programs. How to Design and Create a Set of Rubrics With a Modified Delphi Method by Davide Parmigiani, Sarah-Louise Jones, Chiara Silvaggio, Elisabetta Nicchia, Asia Ambrosini, Myrna Pario, Andrea Pedevilla and Ilaria Sardi in SAGE Open

sj-docx-2-sgo-10.1177_21582440221128794 – Supplemental material for Assessing Global Competence Within Teacher Education Programs. How to Design and Create a Set of Rubrics With a Modified Delphi Method

sj-docx-3-sgo-10.1177_21582440221128794 – Supplemental material for Assessing Global Competence Within Teacher Education Programs. How to Design and Create a Set of Rubrics With a Modified Delphi Method

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by the EU Erasmus+ KA2 project “Global Competence in Teacher Education” European Commission 2019-1-UK01-KA203-061503.

Supplemental material

Supplemental material for this article is available online.

References
