Abstract

The purpose of this study was to examine the influence of program admission requirements, university-district partnerships, and course content integration on the internship within principal preparation programs. University principal preparation programs have been a focus of attention, particularly since 2005, when Arthur Levine published his report, Educating School Leaders. Research has identified the field-based internship as a valuable part of principal preparation programs. We developed the Principal Preparation Program Policy Survey (4Ps) to collect data from program chairs at public universities across the nation, and we employed structural equation modeling to answer the research questions. The findings revealed that partnership policies have significant relationships with principal internship policies and with admission requirements: universities that develop strong district partnership policies are more likely to have strong internship and admission policies for their preparation programs. Similarly, admission policies have a significant relationship with program course-content integration; universities with strong admission policies are more likely to have strong program course-content integration policies. Because this study provides empirical evidence that university-district partnership policies and admission policies have a significant relationship with internship policies, university principal preparation faculty should review their university-district partnership and admission policies and practices in relation to their internship policies and practices. The results imply that the stronger the university-district partnership and admission policies are, the stronger the internship will be.

Keywords

Introduction

Policies related to principal preparation and subsequent certification vary from one state to another, in response to the need to ensure educational equity given the resources available in each state. This response has emphasized the development of high standards of education and of assessment systems. Mendels (2016) synthesized three main policy areas that require emphasis in university principal preparation programs: (a) the quality of the content of the principal preparation programs, (b) the partnership with districts, and (c) internship experiences. Few researchers have conducted studies analyzing principal preparation policies in those three areas. There have been commentaries (Davis & Darling-Hammond, 2012; Orr, 2011); however, there is a paucity of studies focused on principal preparation policies (e.g., Anderson & Reynolds, 2015a,b). Herein, we share first the commentaries, next the results of those studies, and then other significant studies directly linked to principal preparation. Given the lack of policy studies directly linked to principal preparation, we proposed to study these policies empirically with a correlational study, using structural equation modeling, in which we analyzed four observed variables (demographics, admissions policy components, university-district partnership policy components, and course content integration with the internship policy components) and the latent variable of principal preparation program internship policies.

Literature Review

The internship is a main requirement and a major component of principal preparation programs (Campbell & Parker, 2016; Davis & Darling-Hammond, 2012; Figueiredo-Brown et al., 2015). Smylie and Murphy (2018) emphasized that a robust internship integrates theoretical learning with leadership experiences because clinical internships are valuable instruments through which interns synthesize theoretical knowledge and put it into action. Pannell et al. (2016) addressed interns' need to test their competence in putting theory into practice; in this way, the internship helps validate the theoretical knowledge and the courses taught in principal preparation programs. Milstein et al. (1991) emphasized that these needs include several domains of professional knowledge: (a) understanding the community setting; (b) tacit and administrative knowledge; (c) organizational culture, such as organizational beliefs, vision, and mission; (d) research knowledge (e.g., data-based decision making); (e) personal knowledge (e.g., abilities and skills); and (f) theoretical knowledge, critical knowledge, political knowledge, and craft knowledge. Milstein et al. further linked these domains of knowledge with three skills: technical skills, human skills, and conceptual skills.

Robust internship programs are required to provide prospective principals with knowledge and skills. Most preparation programs require an internship as an essential requirement for program completion (Holloman & Novey, 2018). Interns need to obtain formal approval from the school in which the internship takes place and from the site supervisor, who follows up on, mentors, and evaluates the intern’s progress. The internship should link the theoretical knowledge gained during the coursework to practical experience (Amin et al., 2020; Sanchez et al., 2019).

As such, site supervision and/or mentoring becomes a valuable part of the internship, through which the intern works directly with a school supervisor or mentor who guides her or him in practicing leadership roles. Mentoring provides opportunities for real-world experience because mentors share their experiences with the mentees, build rigorous relationships, and evaluate the internship experience of the principal candidates (Guerra et al., 2017). Davis et al. (2005) argued that mentorship loses its rigor if the intern does not genuinely practice leadership roles. Williamson and Hudson (2001) also identified challenges that interns encounter when they are not assigned relevant leadership roles or when the site supervisor does not give them opportunities to make decisions without the risk of severe judgment.

Direct Studies Related to Principal Preparation Program Policy

Principal preparation programs are the programs that provide principal candidates with the knowledge and skills they need to qualify to lead their campuses (Grissom et al., 2019). The policies to develop formal principal preparation programs were passed at the beginning of the 20th century to provide principal candidates with technical and efficient skills (Pannell et al., 2016). The role of the principal has changed over time to meet changes in accountability policies and systems; thus, principal preparation programs have changed accordingly (Pannell et al., 2016). Currently, most universities offer traditional and/or non-traditional programs that lead to licensure in public school administration (Pannell et al., 2016; Styron & LeMire, 2009). Anderson et al. (2022) reported that master’s programs are the common path to the principalship. Principal preparation programs are delivered in face-to-face, online, and hybrid formats, but the tendency toward online delivery is increasing (Richardson et al., 2020). The need for online preparation programs increased in the COVID era (Perrone et al., 2020). Based on their analyses of principal preparation programs, researchers have suggested three major constructs that influence internship policies: (a) university-district partnership policies (Hale & Moorman, 2003; Orphanos & Orr, 2014; Orr, 2011; Orr & Orphanos, 2011); (b) program course integration policies (Hale & Moorman, 2003; Orr, 2011); and (c) admission policies (Orr & Orphanos, 2011).

University-District Partnership

The university-district partnership can take the form of hosting meaningful internship experiences in the district to support principal preparation programs (Campanotta et al., 2018; Gray, 2018; Orr & Orphanos, 2011; Sutcher et al., 2017). Concurrent with the expansion of principal preparation programs, the internship has become a major requirement of these programs. Klostermann et al. (2015) affirmed that a collaborative partnership with the district is significantly beneficial to the internship. Previous research has identified common components among principal preparation programs: (a) developing purpose and vision in collaboration with students, school district personnel, and prospective school leaders (Campanotta et al., 2018; Gray, 2018; Guerra et al., 2017; Williamson & Hudson, 2001); (b) incorporating the program knowledge and skills to meet school leadership requirements (Gray, 2018; Murphy, 1991); (c) enhancing teaching and student achievement and making an overall change at the school (Campanotta et al., 2018; Figueiredo-Brown et al., 2015); and (d) expanding the inclusion of clinical activities such as a clinically rich internship (Orr, 2011).

Admission Policies of Principal Preparation Programs

Admission requirements are the first, and a controversial, component of principal preparation programs because they affect the preparation program as a whole. The admission requirements include a high grade point average (GPA), a Graduate Record Examination (GRE) score (if applicable), face-to-face interviews, recommendation letters, a personal statement, leadership experience, teaching experience, a strong commitment to social justice, and additional certification if applicable (Barakat et al., 2019; Campanotta et al., 2018; Pannell et al., 2016; Wang et al., 2018). Kozlov (2018) argued that admission to principal preparation programs does not follow a one-size-fits-all approach but varies widely. Davis and Darling-Hammond (2012) emphasized that these requirements are significant features of exemplary programs. However, such high-standard admission requirements would reduce the number of candidates (Guerra et al., 2017; Klostermann et al., 2015). Klostermann and her colleagues found that face-to-face interviews, an extensive portfolio, and recommendation letters would be intimidating to applicants from minority groups. Thus, Klostermann et al. emphasized that admission requirements would affect the racial and gender diversity of the internship because they lead the program to be highly selective. However, some researchers have argued that admission policies do not significantly help identify high-potential applicants (Davis et al., 2005; Hale & Moorman, 2003; Hess & Kelly, 2007). Quin et al. (2015) indicated that there is an indirect relationship between program admission and internship policies; however, several principal preparation programs failed to provide rigorous internships and to recruit qualified principal candidates. They also found that the university-district partnership mediates this relationship and plays a role in identifying high-potential candidates, in addition to hosting meaningful internships.

The Internship Policies

The internship is one of the most important and integral components of a principal preparation program. It is defined as the component through which students bridge theoretical knowledge with practical field experience (Cunningham, 2007; Deschaine & Jankens, 2017; Dodson, 2015; Figueiredo-Brown et al., 2015; Hackmann et al., 1999; Nicks et al., 2018). The internship has been described as the “professional seal of approval before a new principal is certified” (Bottoms et al., 2007; Dodson, 2015, p. 10). Cross-disciplinary research has suggested that the internship builds the ability of new leaders by allowing them to put leadership theories into action (Browne-Ferrigno, 2003, 2004; Butler, 2008; Cunningham, 2007; Cunningham & Sherman, 2008; Figueiredo-Brown et al., 2015). Cunningham and Sherman (2008) defined a robust internship as a field experience through which the intern comes to possess the professional requirements and skills of a principal. They summarized these requirements as follows: (a) authentic opportunities; (b) development of skills that match diverse situations; (c) coverage of specific state and national standards; (d) linkage of theory with practice; (e) feasibility, sustainability, and accessibility of resources; and (f) building of competencies and confidence in managing administrative roles.

An internship should be robust, rich, and clinical. Internships vary from one program to another, but they typically span an academic year of part-time service. The required duration ranges from 100 to 800 working hours (Kappler-Hewitt et al., 2020). Interns are required to gain fieldwork experience at schools in order to apply the coursework they completed. Milstein et al. (1991) gave the example of the University of Washington, where the internship requires a student to intern for 720 hours (at least 16 hours per week) in a school other than her or his own. The length of the internship is important because it determines the extent to which interns experience various cultural and administrative situations, build relationships, and are rigorously involved in school leadership practices (Figueiredo-Brown et al., 2015). Gaining leadership experience is one of the major components of the internship. Figueiredo-Brown et al. (2015) studied reflections of interns who completed a principal preparation program at East Carolina University (ECU) and found that most of the reflections supported the notion that interns learn better when they have exposure to diverse settings. Thus, Figueiredo-Brown et al. emphasized the importance of providing the principal candidates (the interns) with culturally responsive mentors and university supervisors.

Earlier research results have been promising in terms of identifying the purpose of the fieldwork experience (e.g., Campbell & Parker, 2016; Cunningham, 2007; Darling-Hammond et al., 2010; Figueiredo-Brown et al., 2015). These results emphasized that the purpose of fieldwork experience is to provide interns with developmental opportunities and authentic applications of the leadership knowledge they acquire during their time in principal preparation programs (Davis & Darling-Hammond, 2012). Every preparation program requires a certain number of field hours. This requirement varies from one program to another, ranging from 100 to 400 hours or more (Anderson & Reynolds, 2015b; Campbell & Parker, 2016; Hackmann et al., 1999); it is most often a yearlong internship or residency (Campanotta et al., 2018; Klostermann et al., 2015; Sanchez et al., 2019). For example, the principal preparation program at Colorado State University requires 300 hours of field work, while New Mexico State University requires 120 hours (Dodson, 2015; New Mexico State University, 2015; Texas A&M University [TAMU], 2021). To make the internship experience more rigorous, most universities require the school to which the intern is assigned to be different from the school in which the intern serves as a teacher. To complete principal certification, an internship course (at least 3 credit hours) is required. This course should follow specific state standards. Many programs, such as programs at New Mexico State University (College of Education at Tennessee State University, 2022), Texas A&M University (TAMU School of Education, 2022), and Tennessee State University (UNM College of Education, 2022), require this course to be taken within the last 12 credit hours of the program. In addition, students are required to have a mentor from the internship school who supports the learning experience (Darling-Hammond et al., 2010; Figueiredo-Brown et al., 2015) and a university supervisor who follows up on the implementation of the internship program (Cunningham, 2007).

Coursework Integration

In exemplary principal preparation programs, it is beneficial to have a strong link between the coursework and the internship practice. The coursework of the principal program must be aligned with the national and state leadership standards (Campanotta et al., 2018; Davis & Darling-Hammond, 2012; Klostermann et al., 2015) and integrated with the internship practices (Jones & Ringler, 2017; Sanchez et al., 2019; Sullivan & Pena, 2019). In their qualitative study, Davis and Darling-Hammond (2012) examined preparation programs on five university campuses. They contended that a curriculum-based internship was the common significant feature among the five programs. Lynch (2012) demonstrated that the knowledge and skills received in the coursework are key to forming the leadership skills of principal candidates; thus, Lynch confirmed the importance of including special education knowledge in the coursework in order to strengthen future leaders’ positive attitudes toward students with disabilities and/or students of underrepresented groups. The coursework of the internship was mainly online. Most university-based preparation programs require a three credit-hour internship course. For example, the College of Education and Human Development at Texas A&M University (TAMU) offers a principal certification that includes a three credit-hour internship course, which should be completed within the last 12 hours of the certification program. This internship course is online, and students are expected to log in on a regular basis. In addition, interns should create an online weekly journal in which they log their learning experiences (TAMU, 2021).

Summary of State Policies With Regard to Principal Preparation Internship

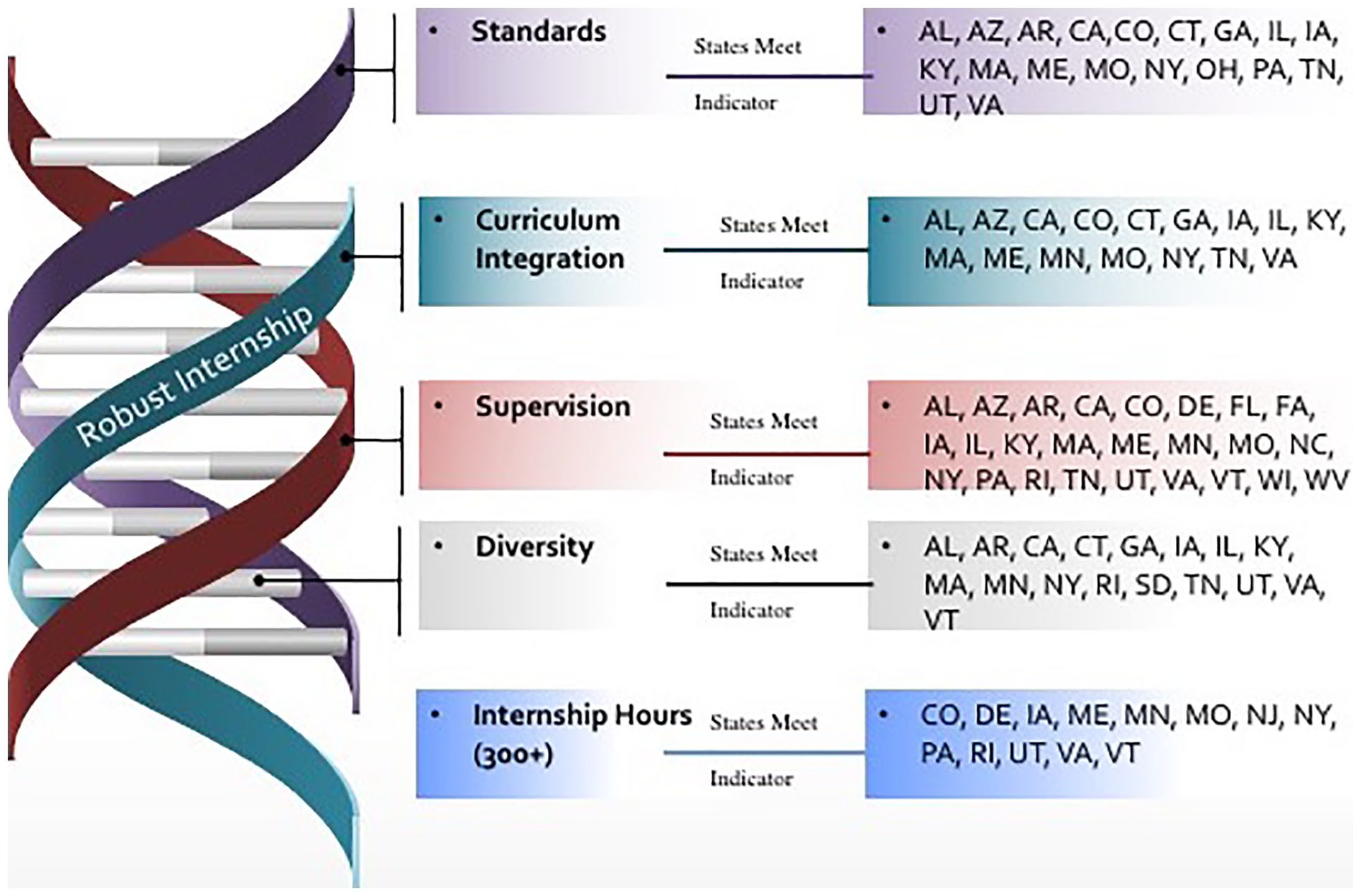

At the state level, policies to ensure robust internship opportunities exist. Based on previous research, the University Council for Educational Administration (UCEA) reported seven main criteria of a robust internship: (a) deliberate structure (addressed in the principal preparation policies of 21 states, such as Alabama, Arizona, California, Colorado, Connecticut, Georgia, Illinois, Iowa, Kentucky, Maine, Massachusetts, Minnesota, Missouri, New York, Ohio, Pennsylvania, Tennessee, Texas, Utah, and Virginia); (b) field work that is linked to the theoretical curriculum (addressed in policies of 16 states, e.g., Alabama, New York, and Tennessee); (c) engagement in core leadership roles (addressed in policies of 18 states, e.g., Alabama, Florida, Georgia, and Tennessee); (e) supervision by an expert (addressed in policies of 25 states, e.g., Arizona, Iowa, Montana, and New York); (f) exposure to diversity (addressed in policies of 18 states, e.g., Alabama, Illinois, South Dakota, and Tennessee); and (g) more than 300 hours of field experience (addressed in policies of 14 states, e.g., Colorado, Delaware, New Jersey, and Utah). Only the policies of Iowa, Massachusetts, Minnesota, and Virginia met the six criteria, whereas five of the six criteria were addressed in the policies of Alabama, California, Georgia, Illinois, Kentucky, Maine, Missouri, New York, Tennessee, and Utah. As shown in Figure 1, the state policies addressing the criteria of robust internship requirements vary.

Figure 1. States with internship policies based on the UCEA report.

Method

Research Design

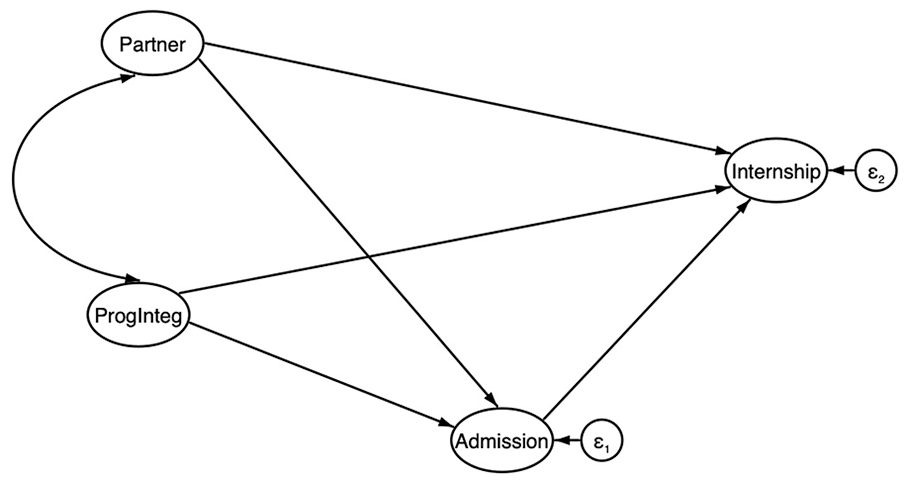

This study used a correlational research design with structural equation modeling (SEM) to determine specific relationships among observed and latent variables: (a) university-district partnership policies; (b) admission policies; and (c) course integration policies. The three concepts capture various characteristics and relate to the quality of the internship. The proposed model, as shown in Figure 2, indicates direct paths from university-district partnership, program course integration, and admission to internship. The indirect path from university-district partnership to internship through admission represents a mediation.

Figure 2. The hypothesized model of policy relationships with robust internship indicators.
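The paths named in the research design can be written out as a pair of structural equations. The following is only a sketch: the symbols ($P$ for university-district partnership, $A$ for admission, $C$ for course content integration, $I$ for internship, and the coefficients $\beta$, $\gamma$, and disturbances $\zeta$) are notation assumed here for illustration, not the authors' own, and the sketch covers only the direct and mediated paths described in the text.

```latex
\begin{align}
  A &= \gamma_{AP}\, P + \zeta_A \\
  I &= \beta_{IP}\, P + \beta_{IA}\, A + \beta_{IC}\, C + \zeta_I \\
  \text{indirect effect of } P \text{ on } I \text{ through } A &= \gamma_{AP}\, \beta_{IA} \\
  \text{total effect of } P \text{ on } I &= \beta_{IP} + \gamma_{AP}\, \beta_{IA}
\end{align}
```

Under this notation, admission mediates the partnership-internship relationship when the product $\gamma_{AP}\,\beta_{IA}$ is nonzero.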

Purpose of the Study

Studying principal preparation program policies is critical: first, because there is a paucity of such studies, and second, because preparing individuals to lead schools is as important as preparing teachers for the classroom (references). Importantly, internship policies are among these program policies. The internship is the major component in principal preparation programs (Davis & Darling-Hammond, 2012; Orr, 2011), and the quality of the internship is measured by the structure of the internship (Dodson, 2015; Hackmann et al., 2002), the integration with the course work (Orr, 2011), mentoring and supervision (Davis & Darling-Hammond, 2012; Hackmann et al., 2002), exposure to diverse populations, and the number of working hours (Anderson & Reynolds, 2015a; Orr, 2011), all of which are policy-related issues within a program. Therefore, the purpose of this study was to examine the relationship of the policies inclusive of program admissions, university-district partnerships, and course content integration to internship policies within principal preparation programs. A sub-purpose was to determine how university location, type of delivery of instruction, and type of cohort affect the relationship of the policies analyzed.

Research Questions

This study addressed the following two research questions.

What is the relationship between policies inclusive of program admissions, university-district partnerships, and course content integration and internship policies within principal preparation programs?

By adding the university location, the type of instructional delivery, and the type of cohort as controlling variables, what is the effect on the relationship between the policies of program admissions, university-district partnerships, and course content integration and internship policies?

Data Source and Participants

The Carnegie Classification of Institutions of Higher Education (2018) was employed to determine the sample population. The population includes 4,665 universities across the United States. Guion (2008) asserted that sample selection must be based on criteria that are relevant to the purpose of the target measurement. Therefore, two main criteria were applied: (a) only public universities of the states; and (b) public universities in which 1,000+ master’s students were enrolled, as noted by the Carnegie classification of 2015. Thus, a total of 518 universities were included in the sample. To achieve sufficient accuracy in SEM, a large sample size is required (Kline, 2011; Nevitt & Hancock, 2004).

Instrumentation and Variables

The Principal Preparation Program Policy (4Ps) survey was developed to collect the data required for this study. The 4Ps was developed based on the literature, the UCEA principal preparation rubric (Anderson & Reynolds, 2015a), and the Taskforce on Evaluating Leadership Preparation Programs (UCEA/LTEL-SIG) survey (Orr, 2006). The researchers identified five constructs in the UCEA rubric: (a) admission; (b) standards; (c) internship; (d) partnership with the district; and (e) program oversight (Anderson & Reynolds, 2015a). The UCEA/LTEL-SIG survey included items addressing program graduates' perceptions of the quality of the program. In the 4Ps, we adopted items from the UCEA/LTEL-SIG survey to address the university characteristics. The 4Ps included 84 items: (a) one item for signing the consent form; (b) 14 items for university characteristics; (c) 12 items for the admission construct; (d) 30 items for the internship construct; (e) seven items for the university-district construct; (f) 16 items for the program course-content integration construct; and (g) four items for feedback.

Data were collected using the 4Ps, the instrument developed for this study. Questions about internship requirements, description, and policies were included. The survey was built on the basis of the UCEA rubric (Anderson & Reynolds, 2015a), the UCEA INSPIRE survey, and the UCEA/LTEL-SIG Survey of Leadership Preparation and Practice. The survey questions measured four constructs, based on the literature, related to the internship: (a) demographic information; (b) admission; (c) program course integration; and (d) partnership with the school district. The survey was tested through two pilot tests and face validation with two experts in educational administration (the reliability is explained in detail in the results).

Data Collection

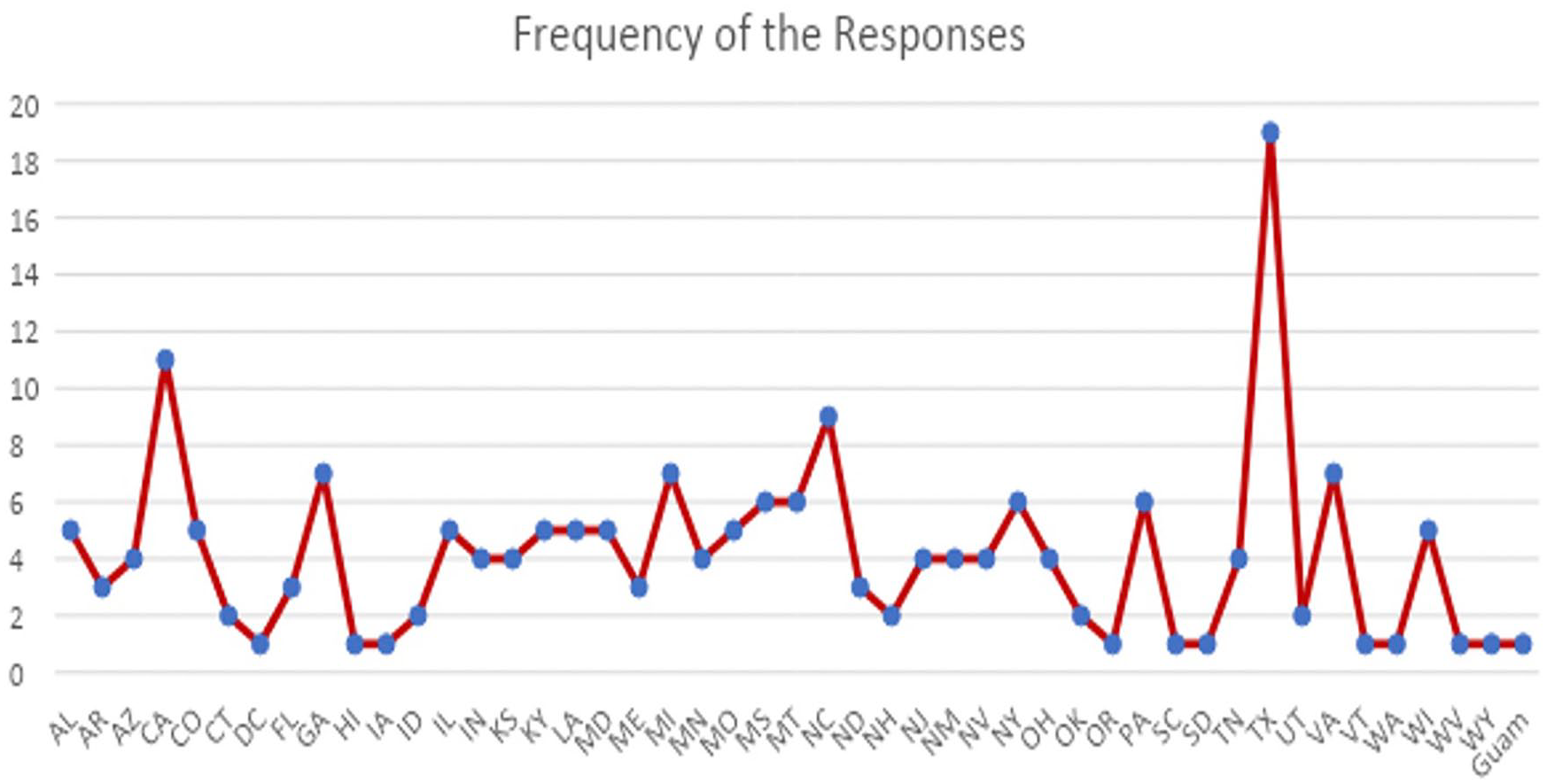

The survey was distributed online, using Qualtrics, and sent to the chairs of the principal preparation programs at 518 large public universities between October 3, 2017 and November 5, 2017. A total of 193 responses were collected (a 37.26% response rate). The responses represented all states, as shown in Figure 3, and came from universities in urban, suburban, and rural locations.

Figure 3. Response frequencies per state.

Data Analysis

Structural equation modeling (SEM), using Mplus 8, was employed to examine the hypothesized model within a simple path model that assessed how internship policies relate to the implemented internship programs. The weighted least squares mean and variance adjusted (WLSMV) estimator in Mplus was utilized because it does not assume normally distributed variables and because it works well with small sample sizes (DiStefano & Morgan, 2014). Hancock (2003) emphasized that SEM is commonly employed in social science because it can model relationships between unobservable (latent) constructs and observable ones (indicators).

Cronbach’s alpha was calculated to measure the internal consistency of the survey. Because the survey was developed for this study, exploratory factor analysis (EFA) was conducted to uncover the underlying structural relationships between the measured variables (Fabrigar et al., 1999; Norris & Lecavalier, 2010). Further, confirmatory factor analysis (CFA) was conducted to test the hypothesized relationships between the observed and the latent variables (Kline, 2015). The two-index presentation strategy was employed to evaluate the model fit (Hu & Bentler, 1999). More specifically, Hu and Bentler (1999) characterized a well-fitting model by a comparative fit index (CFI) and a Tucker-Lewis index (TLI) each close to .95, and a root mean square error of approximation (RMSEA) close to .06. Because the final version of the survey was dichotomous, the weighted root mean square residual (WRMR), CFI, TLI, and RMSEA were computed. Mplus 8 was used to examine the model fit. Because this study was exploratory, alpha was set at .10 for testing the significance of the path estimates.
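The decision rules in this paragraph can be sketched as a small helper. This is an illustration only, not the authors' procedure: the function names are hypothetical, the index values passed in are made up, and the "close to" language of Hu and Bentler (1999) is simplified here to hard thresholds with an optional tolerance.

```python
# Cutoffs as stated in the text (Hu & Bentler, 1999): CFI and TLI close to .95,
# RMSEA close to .06. Treating them as hard thresholds is a simplifying assumption.
CUTOFFS = {"cfi_min": 0.95, "tli_min": 0.95, "rmsea_max": 0.06}


def check_fit(cfi, tli, rmsea, tol=0.0):
    """Return a per-index pass/fail dict; `tol` loosens each cutoff by that amount."""
    return {
        "cfi": cfi >= CUTOFFS["cfi_min"] - tol,
        "tli": tli >= CUTOFFS["tli_min"] - tol,
        "rmsea": rmsea <= CUTOFFS["rmsea_max"] + tol,
    }


def is_significant(p_value, alpha=0.10):
    """Path significance at the exploratory alpha of .10 used in the study."""
    return p_value < alpha


if __name__ == "__main__":
    # Hypothetical values, not results from the study.
    print(check_fit(cfi=0.96, tli=0.95, rmsea=0.05))
    print(is_significant(0.08))
```

A path with p = .08 would count as significant under the study's alpha of .10 but not under the conventional .05, which is worth keeping in mind when reading the path estimates.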

Instrumentation

The reliability of the newly developed instrument was assessed in two stages. In stage 1, we assessed the reliability of the pilot survey responses using Cronbach's alpha. In stage 2, we revised the survey based on the results of the pilot survey and assessed face validity by having two experts review the revised version of the survey.

Stage 1: Reliability of the piloted survey

The pilot survey was distributed to the program chairs of 100 medium-sized universities, and 52 responses were received. A medium-sized university was defined as one that has between 5,000 and 15,000 students (College Data, 2020). This survey included 62 questions under the same five constructs. A Cronbach reliability test was utilized to evaluate the appropriateness of the measurement model used for the final SEM (Doloi et al., 2011; Jin et al., 2007). Cronbach's alpha ranges from 0 to 1 (Gliem & Gliem, 2003). The Cronbach's alpha for this survey was .601, which is lower than the cut-off of .7 proposed by Santos (1999). Thus, this version required further revision.
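For readers unfamiliar with the statistic, Cronbach's alpha for k items is k/(k - 1) multiplied by one minus the ratio of the summed item variances to the variance of the total score. The sketch below computes it from a respondents-by-items matrix using only the standard library; the function names and the toy data are hypothetical, and the study's own computation may have differed in detail.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(rows[0])
    if k < 2 or len(rows) < 2:
        raise ValueError("need at least two items and two respondents")
    # Sample variance of each item across respondents.
    item_vars = [variance([row[i] for row in rows]) for i in range(k)]
    # Sample variance of each respondent's total score.
    total_var = variance([sum(row) for row in rows])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def meets_cutoff(alpha, cutoff=0.7):
    """Compare alpha with the .7 cut-off cited in the text (Santos, 1999)."""
    return alpha >= cutoff


if __name__ == "__main__":
    # Toy binary responses (yes = 1, no = 0); not data from the study.
    toy = [[1, 1], [1, 0], [0, 0]]
    print(round(cronbach_alpha(toy), 3))
    print(meets_cutoff(0.601))  # the pilot value reported above falls short of .7
```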

Stage 2: Face and content validity

Stage 2 in the 4Ps survey validation was the content validity assessment. In this stage, we assessed the adequacy and relevance of the instrument constructs (Masuwai & Saad, 2017). Two experts in educational administration reviewed each of the 62 survey questions. The expert reviews assessed the extent to which the items in the scale fit their dimensions (Lou et al., 2008). The response scale was also changed to binary (yes = 1, no = 0). Some of the existing questions were modified, and more questions were added. For example, we found it important to include more specific questions to identify whether the university has a policy of assigning a faculty field supervisor to the intern (see Table 1 for the list of the questions). The final instrument (4Ps) comprised 85 questions under the five constructs mentioned above. After excluding the demographic and open-ended questions, the Cronbach's alpha for the final version was .81, which indicates high reliability (Cronbach, 1951). The survey was designed for a larger project. For the purpose of this study, we included 21 questions related to the indicators of principal preparation program policies.

Table 1. The Latent Variables of the Constructs.

Measures of the study

Internship was measured as the dependent variable, and the three independent constructs were university-district partnership, program course integration, and admission. The four variables were measured using a binary scale: yes = 1 and no = 0. As shown in Table 1, the endogenous variables of the internship construct include seven items (see Q15 through Q21 in Table 1). There are three exogenous variables. University-district partnership is the first exogenous variable and includes four endogenous variables (see Q11 through Q14 in Table 1). Program course integration is the second exogenous variable and includes five endogenous variables (see Q1 through Q5 in Table 1). Admission is the third exogenous variable and includes five endogenous variables (see Q6 through Q10 in Table 1).
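The item-to-construct assignment described above can be laid out as a simple mapping. The sketch below is illustrative only: the dictionary keys and the `construct_scores` helper are hypothetical names, and summing binary items is a simplification for display purposes; the study itself modeled the constructs as latent variables rather than sum scores.

```python
# Item ranges follow the text: Q1-Q5 course integration, Q6-Q10 admission,
# Q11-Q14 university-district partnership, Q15-Q21 internship.
CONSTRUCT_ITEMS = {
    "course_integration": [f"Q{i}" for i in range(1, 6)],
    "admission": [f"Q{i}" for i in range(6, 11)],
    "partnership": [f"Q{i}" for i in range(11, 15)],
    "internship": [f"Q{i}" for i in range(15, 22)],
}


def construct_scores(response):
    """Count the 'yes' (1) answers per construct for one respondent.

    `response` maps item labels such as "Q1" to 0 or 1.
    """
    return {
        construct: sum(response[item] for item in items)
        for construct, items in CONSTRUCT_ITEMS.items()
    }


if __name__ == "__main__":
    total_items = sum(len(items) for items in CONSTRUCT_ITEMS.values())
    print(total_items)  # the 21 questions used in this study
    all_yes = {f"Q{i}": 1 for i in range(1, 22)}
    print(construct_scores(all_yes))
```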

Factor Analysis Analyzing the Constructs of the Instrument

Exploratory and confirmatory factor analyses were conducted to determine the number of constructs of the instrument. The exploratory factor analysis is presented first, followed by the confirmatory factor analysis.

Exploratory Factor Analysis Using 4-Factor Models

Factor analysis was utilized to determine the underlying constructs for measures on the 21 questions of the 4Ps survey. The survey was on a dichotomous scale representing categorical data (the data include yes or no responses). Mplus 8 provided functions that dealt better with the categorical data (Muthén & Muthén, 2015; Schmitt, 2011). Four models were produced to examine the underlying constructs. The 1-factor model did not have a good fit because the chi-square (

The Fit Indices of EFA Four Models.

Prior to examining the fit of the hypothesized model, Cronbach’s alpha reliability coefficients were calculated for the four constructs. The coefficients for (1) admission, (2) internship, (3) university-district partnership, and (4) course-content integration were .81, .84, .83, and .74, respectively; all four exceed .7 (Cronbach, 1951).

Confirmatory Factor Analysis (CFA)

The next step was to examine the discriminant and the convergent validity. Confirmatory factor analysis was used to examine the theoretical relationships between the observed variables (the question items of the survey) and the unobserved (latent) variables (Jackson et al., 2009; Schreiber et al., 2006). The goodness-of-fit indices indicated that the model provides an adequate representation of the data. The fit indices for the four-factor model are as follows:

Confirmatory Factor Analysis.

Results

Descriptive Results

Descriptive frequencies of the demographic data were calculated to provide an overview of the examined programs. Figure 5 shows the frequency distribution of responses by location, indicating the number of participating institutions and the type of institution (urban, suburban, and rural).

The Distribution of the Location Frequency of the Responses.

Most of the programs were accredited by more than one accreditation agency. The majority were accredited by (a) CAEP-ELCC; (b) a national accrediting agency such as SACSCOC; and (c) a state accrediting agency. Table 3 shows the frequency of the accreditation agencies that accredited the institutions’ programs. In addition, participants reported other accrediting agencies with which their programs were affiliated, such as the California Commission on Teacher Credentialing (CCTC), ENCATE, NCATE, CPED, THECB, and WASC.

The Accrediting Agencies that Accredited the Programs Participating in This Study.

Results of SEM Models

The results are reported by model. SEM Model 1 addressed the first research question: What are the relationships between internship policies and the policies for program admissions, university-district partnerships, and course content integration within principal preparation programs? In SEM Model 2, we explored how these relationships changed after adding control variables such as the university location, the type of instructional delivery, and the type of cohort.

SEM Model 1: Internship Policy Relationships With Significant Paths

Based on Model 1, university-district partnership does not have a significant relationship with the program course integration policies. After removing this relationship, Model 2 (as shown in Figure 6) was developed to show the relationships among the four constructs without the partnership-course integration relationship. Model 1 shows that the model has an acceptable fit (

SEM Model.

Variables (e.g., the University Location, and the Learning Approach, and the Type of Program) on Internship Policies

To examine whether the relationships among the four constructs would change when control variables were added, we included the location of the university (rural, suburban, and urban), the type of cohort (mixed cohort), and the type of course platform (online). The traditional cohort, non-cohort, face-to-face, and hybrid variables were excluded because of their low factor loadings. The new model shows an acceptable fit because the comparative fit index (CFI) and the Tucker–Lewis index (TLI) are .931 and .923, respectively, both larger than .9. The chi-square value is 757.468 with a significant p-value of .001; a significant chi-square is common because the test is sensitive to sample size, so it does not by itself contradict the acceptable fit indicated by the CFI and TLI.
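To make the two incremental fit indices concrete, the following is a minimal sketch of how the CFI and TLI are conventionally derived from the chi-square and degrees of freedom of the fitted model and of the baseline (independence) model. The text reports only the model chi-square (757.468) and the resulting indices, not the degrees of freedom or the baseline chi-square, so every input value below is hypothetical and chosen only for illustration; the function names are likewise assumptions, and estimator-specific adjustments that SEM software may apply with categorical data are not reproduced.

```python
def cfi(chi2_model, df_model, chi2_base, df_base):
    """Comparative fit index from model and baseline chi-square statistics."""
    d_model = max(chi2_model - df_model, 0.0)  # model noncentrality estimate
    d_base = max(chi2_base - df_base, 0.0)     # baseline noncentrality estimate
    denominator = max(d_model, d_base)
    return 1.0 if denominator == 0 else 1.0 - d_model / denominator


def tli(chi2_model, df_model, chi2_base, df_base):
    """Tucker-Lewis index from model and baseline chi-square statistics."""
    base_ratio = chi2_base / df_base
    model_ratio = chi2_model / df_model
    return (base_ratio - model_ratio) / (base_ratio - 1.0)


# Hypothetical inputs (NOT the study's values): a fitted model and its baseline.
example = dict(chi2_model=250.0, df_model=200, chi2_base=1210.0, df_base=210)

cfi_value = cfi(**example)
tli_value = tli(**example)
print(f"CFI = {cfi_value:.3f}, TLI = {tli_value:.3f}")
print("acceptable fit" if min(cfi_value, tli_value) > 0.9 else "poor fit")
```

Both indices compare the fitted model with a baseline in which the items are uncorrelated, which is why they can indicate acceptable fit (values above .9) even when the chi-square test itself is significant.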

As shown in Figure 7, the new model shows a significant relationship between university-district partnership and admission (p = .001), which means that universities that develop good university-district partnership policies are more likely to have good admission policies. In addition, the university-district partnership has a significant relationship with program course integration (p = .02), which means that universities that develop good partnership policies are more likely to have good course integration policies. Similarly, there is a significant relationship between admission and program course integration (p = .001), which indicates that universities that develop good admission policies are more likely to have good policies related to program course integration. The model also shows a significant relationship between program course integration policies and internship policies (p = .001), which means that universities that develop good program course integration policies are more likely to have good internship policies. Internship and university-district partnership policies have a significant relationship (p = .001). Admission, mixed cohort, and the rural location of the university have negative and significant relationships with internship policies (p = .004, p = .07, and p < .001, respectively), which means that universities that are in rural locations and/or that develop good admission policies are less likely to have good internship policies. An online platform has a significant relationship with internship (p-value .78), which means that universities that promote online education are more likely to have good internship policies. Only the suburban location does not have a significant relationship with internship (p = .34).

SEM Model with Controlling Variables.

Comparing the two models, model 2 has the smaller Chi-square (

Fit Indices for the Basic Model 1 and Model 2.

Discussion and Conclusion

The purpose of this study was to identify the relationships between internship policies and the policies of principal preparation program admission, university-district partnerships, and course content integration. The directors of principal preparation programs and/or the program chairs were surveyed to examine these relationships based on their experiences. The responses provided an overall map of the relationships involving internship policies. The internship policies are associated with the university-district partnership, program course integration, admission, location, the type of program, and the type of instructional platform.

In addition to addressing the relationships between internship policies and the policies of admission, partnership, and program course-content integration, we considered the effects of the university location, instructional type, and cohort type. Structural equation models were used to answer the two research questions. In question one, we examined the relationships between internship and university-district partnership, course-content integration, and admission. We found a significant relationship between each of the three constructs and the internship. In question two, we quantified the relationships between internship and the university location, the instructional type, and the cohort type. We found that the online instructional type and the mixed cohort type have significant relationships with the internship.

The Effects of University-District Partnership on Internship

The results on the relationship between the internship and the partnership with the district are consistent with previous research (Davis & Darling-Hammond, 2012; Davis et al., 2005; Hale & Moorman, 2003; Williamson & Hudson, 2001). The university-district partnership helps achieve the vision in the standards. The internship was perceived as the main pillar of the preparation program; therefore, there is a need to develop good internship policies. Universities realized that the internship could not be accomplished without effective collaboration with school districts. The analyses of the collected data show a significant relationship between the university-district partnership policies and the internship policies. This significant relationship emphasizes the importance of establishing collaborative communication and cooperation between the university and the district, through which the internship would be enriched.

The university-district partnership is an important component of preparation program improvement because it directly and indirectly affects the quality of the internship. Consistent with the previous literature, the results revealed that program decision makers emphasize collaboration with school districts through which districts commit to providing internships of high quality (Murphy, 2006). These results are consistent with previous findings on the district’s role in improving the quality of preparation programs (Davis et al., 2005; Hess & Kelly, 2007; Orr et al., 2011). The high frequency of responses on required internship hours suggests that the more internship hours a program requires, the better the internship experience is likely to be when these hours are associated with on-site mentoring, faculty supervision, and engagement in leadership practices. On the one hand, district collaboration and commitment to the preparation program build trust between the university and the district, on the basis of which the district (a) would be involved in nominating program candidates and (b) would hire the graduates of the preparation program upon their graduation. On the other hand, the university seeks district support to align the preparation program with the district’s needs.

The Effects of Admission on Internship

Admission requirements are the first step of enrollment in the preparation program. Applicants need to meet at least the minimum requirements, which include (a) a GPA above 3.0 in the bachelor’s degree; (b) a GRE score (if applicable); (c) the student’s resume; (d) a personal interview; (e) an essay sample; and (f) leadership experience. Admission was a controversial component because researchers argued that preparation program admission requirements do not reflect the program features (Levine, 2005; Orphanos & Orr, 2014; Orr & Orphanos, 2011). Therefore, the exemplary preparation programs do not show distinct relationships between admission and the internship. Consistent with the previous literature, this study revealed a negative relationship between the two, which means that universities do not align their admission policies with the internship requirements or with the program as a whole.

The awareness of the importance of school leadership to students’ achievement led policymakers to share their concerns about the variation in the quality of principal preparation programs (Grissom et al., 2019). Since the passing of NCLB in 2001, principal preparation policies have added more responsibilities for school principals to help enhance students’ achievement (Grissom et al., 2021; Louis, 2015). Therefore, there was an increased interest in principal preparation to achieve this goal. Because the internship is a key component of the preparation program, there is a need to strengthen this component. In order to enrich the internship, policymakers of the preparation programs need to integrate internship policies with the admission policies. For example, the admission policies could include a requirement that the candidate develop a plan for the internship. The admission committee would be responsible for evaluating this plan to ensure that the candidate understands school leadership needs. Quin et al. (2015) put great emphasis on connecting admission with leadership practices; our finding is consistent with theirs, since we found that the relationship between admission and internship policies is significant.

The Effects of the Course-Content Integration on the Internship

There is a significant relationship between the internship and the course-content integration. This finding is consistent with previous studies because it reflects the importance of linking the field experience with the coursework. Davis and Darling-Hammond (2012) emphasized that exemplary preparation programs should include active instructional strategies tied to the internship in order to link theory to practice. Course integration is beneficial when the internship activities are embedded in the course structure. In other words, embedding a set number of hours of internship activities in each course provides an effective connection between the courses and the internship experience.

The Effects of the University Location on the Internship

Results indicate that the location of the university has a negative effect on the internship only when the university is in a rural or suburban location. For instance, internships in rural districts may lack resources and funding; thus, internship activities are not offered on all rural campuses. The results suggest that universities serving urban settings have more opportunity to develop rigorous internship programs because their interns have a better opportunity to be exposed to diverse settings and to serve students with various ethnic and racial backgrounds. This finding indicates that universities in rural locations could enrich the internship by designing activities that suit rural districts.

Conclusion and Implications for Research and for Practice

Because this study focused on the relationships between internship, admission, university-district partnership, and program course integration policies, several implications for research and practice can be drawn for the field of educational leadership. First, because this study provides empirical evidence that the university-district partnership has a significant relationship with the internship, it is important to review exemplary university-district partnerships and to hold regular meetings with district administrators to discuss their needs and their recommendations for improving the preparation programs.

Additionally, there is a need to hold semi-annual meetings with district superintendents as well as school principals to review the current policies and to evaluate their effectiveness. A joint university-district assessment of the program is also required in order to help enhance the program. The internship is a major component of the program; therefore, a dynamic partnership with the district is required to make the internship valuable.

Second, because internship policies were found to have a negative relationship with admission policies, programs with rich internship policies are more likely to lack strong admission policies. This finding leads us to conclude that there is a need to develop admission policies that depend not only on the GPA, GRE scores, and the personal statement but also on items that provide evidence of the candidate’s suitability for the program, such as well-structured interviews that assess the candidate’s understanding of school leadership, social justice, and culturally responsive leadership. These admission items will help determine the potential leadership qualities of the students who intern at schools at some point in the preparation program. The policymakers of leadership preparation programs need to include more requirements that affirm the potential leadership skills that an applicant demonstrates or acquires, or more admission requirements that assess the applicant’s needs and expectations of the program. The admission interview should include questions about the relationship between the applicant’s previous experience and expectations of the program. The interview also needs to include questions about applicants’ expectations of the internship and their perceptions of mentoring, diversity, and collaboration with school administrators.

Third, because there was a significant correlation between the program course integration and the admission policies, it might be important for the admission faculty to collaborate with the team of the faculty members who develop the syllabi for the program. Those two teams need to create one vision with which the applicant can connect the admission requirements with their needs and expectations of the program.

Fourth, because the location of the university was found to have a negative effect on the internship only when the university is in a rural or suburban location, it is important for those universities to reconsider their internship policies. The faculty of leadership preparation programs at universities in rural and suburban locations need to create active and strong communication channels with the school districts where the internships will take place. These communications should help create better internship plans as well as better partnerships with the district.

Finally, we found that online courses are important for the internship because students can access the program content and communicate better with their faculty members. It is therefore important for the policymakers of educational leadership programs to increase the availability and accessibility of online courses, particularly after the COVID-19 pandemic. COVID-19 added another significant need to expand online platforms through which all students can complete their assignments, communicate with peers and instructors, and complete the internship requirements with alternative assignments in case schools close. Therefore, we recommend that faculty consider increasing the online presence of principal preparation programs.