Abstract

Despite the consensus in the medical discipline that certain treatments lack scientific evidence and are worthless if not potentially dangerous, the promotion and selling of fake cures advertised as safe and effective has long plagued health care systems, preying on vulnerable patients and their loved ones. The web and social media are now playing a fundamental role in the propagation of non-science-based treatments and fraudulent medical claims, and in the rise of false health and lifestyle experts. This study combines criminological and computer science expertise to explore and critically analyze the Twitter presence of providers of non-science-based anticancer treatments and their active supporters in the English-speaking online community to investigate their structural relationships and to analyze the characteristics of the most popular actors. The features of the social network observed indicate that there is not a stable community of promoters and supporters of non-science-based medical treatments in the Twittersphere, suggesting the lack of a defined subculture and the presence of transient collectives rather than identifiable groups. Nonetheless, it is possible to observe dynamic conversational networks clustering around popular actors, tweets, and themes, prompting avenues for further research.

Introduction

The commercialization and popularization of cyberspace has led to a revolution in health care, allowing patients, among other things, to connect among themselves and with medical practitioners. The establishment of self-help communities was initially welcomed (Ferguson, 1997) and can still have great potential to provide important information and emotional support, as well as to give patients a sense of empowerment (Chung, 2014; Fullwood et al., 2019; Zhu et al., 2020). However, cyberspace has also been used extensively as a vector to disseminate medical misinformation and even fraudulent medical claims.

The promotion and selling of fake cures and well-being practices advertised as safe and effective is not new, but it has long plagued health care systems (Cattaneo & Corbellini, 2014; Gazzola, 2019; Herbert, 1986; Lerner, 1984; Offit, 2013). Because of the opportunities offered by cyberspace in creating like-minded virtual communities and in giving a platform to false experts, however, this type of medical misinformation seems to have boomed in recent years (Lavorgna & Di Ronco, 2017, 2019; Broniatowski et al., 2018; Lavorgna & Sugiura, 2019; Horsburgh & Barron, 2019; Klawitter & Hargittai, 2018; Rojek, 2017), exposing the general public to (at times fatal) hazards. In several countries, a number of court trials and journalistic reports have provided evidence of the social harm of these types of malpractices, which negatively impact not only the health of people, but also the confidence in the professional scientific and medical norms (D’Amato, 2019; Editorial, 2018; Grimes, 2019; Hernandez & McMillan, 2019; Ohlheiser, 2019). It is estimated that patients who solely choose alternative therapies have a twofold risk of mortality compared with those who have standard therapy (Johnson et al., 2018); those with early-stage cancers such as those of the breast and bowel face a four- to sixfold increase in mortality (American Society of Clinical Oncology [ASCO], 2018).

The topic of misleading medical information and, specifically, of the harms related to non-science-based health practices has been addressed by criminologists only in recent years through some pilot studies (see, for instance, Horsburgh & Barron, 2019; Lavorgna & Di Ronco, 2017, 2019; Lavorgna & Sugiura, 2019; Markovska et al., 2019; Massa, 2019), having recognized that the social sciences and criminology in particular should be very concerned with the investigation of practices that can cause serious harms. These investigations can be traced back to the critical strand in criminology advocating for the discipline to engage with a broader notion of social harm (Hillyard & Tombs, 2004; Paoli & Greenfield, 2013; Tombs, 2016), which also (but not only) encompasses criminal harm.

The study of non-science-based health practices, however, is also a multi- and cross-disciplinary endeavor; indeed, a synergetic approach between criminology and other disciplines seems promising to unpack the complex social dynamics characterizing this research area (Lavorgna & Di Ronco, 2019), with web/data science and communication studies playing an increasingly prominent role in shedding light on behavioral and communicative patterns in cyberspace (Del Vicario et al., 2016; Zhu et al., 2020). This article aims to further this path of inquiry by combining criminological and computer science expertise to investigate the existence of structural relationships and to analyze the characteristics of popular actors active in promoting harmful alternative health practices via the online microblogging and social media networking service Twitter, with a specific focus on anticancer treatments in the English-speaking online community.

Background

As mentioned in the Introduction, the propagation of misleading or even fraudulent information on health practices that are not based on scientific evidence, which are often harmful yet tend to be widely accepted by many, has boomed in recent years with the commercialization and popularization of cyberspace. At the same time, this topic has also started to receive increasing academic attention, including in the social sciences and criminology (Lavorgna & Di Ronco, 2017, 2019; Lavorgna & Sugiura, 2019; Horsburgh & Barron, 2019; Markovska et al., 2019; Massa, 2019; Rojek, 2017). Debunkers and journalistic inquiries had long dwelled on this issue (consider, among some recent examples, D’Amato, 2010, 2019; Di Grazia, 2017; Konnikova, 2016; Ohlheiser, 2019; Sense About Science, 2013), while academic attention was initially rare and mostly limited to the medical sciences (Angell & Kassirer, 1998; Barrier & Yarett, 2012; Bashford, 1911; Deng & Cassileth, 2013; Ernst, 2002; Greenberg, 1975; Herbert, 1986; Lerner, 1984), even if with some notable exceptions (Blume, 2006; Coulter & Willis, 2004; Pedersen, 2013).

A minority of social sciences and criminology studies have focused, among other things, on “old media” and how they convey information on alternative medicine (Capurro et al., 2018; Coulter & Willis, 2004; Lavorgna & Di Ronco, 2019; Lavorgna & Sugiura, 2019), on the institutional context that allows certain ineffective pseudoscientific practices to thrive (Markovska et al., 2019), on the role of the political debate in generating antiscientific positions on health care issues (Massa, 2019), and on a typology of harmful health practitioners offering non-science-based treatments (Lavorgna & Di Ronco, 2019). Most social sciences–led investigations on non-science-based harmful health practices (including in public health literature), however, have rather dealt with their “online” features. In this context, it is possible to identify three main research strands that are particularly relevant to provide some background to the study here presented.

First, most attention has been given to vaccine hesitancy and the role of cyberspace in propagating anti-vaxxers’ ideas (Kata, 2010, 2012; Sak et al., 2015; Smith & Graham, 2017). Often by relying on digital sociology approaches, researchers have analyzed the characteristics of online conversations and messages (including on Twitter). They have identified key themes (ranging from freedom of choice to Big Pharma conspiracies) and emphasized some elements of strength in anti-vaxxers’ communication: for instance, in how they use personal stories and an emotionally laden language to describe the alleged negative effects of vaccines (Davies et al., 2002; Love et al., 2013), in their use of language (for instance, often they do not explicitly oppose vaccines but rather claim to be “pro-safe vaccines” or “pro-choice”; Kata, 2012), or in the use of dubious evidence such as privately published materials to support questionable claims (Davies et al., 2002; Di Ronco & Allen-Robertson, 2019; Kata, 2010, 2012; Smith & Graham, 2017). Researchers have also underlined the polarized nature of this type of online debate (Ahn & Park, 2015; Di Ronco & Allen-Robertson, 2019; Gunnarsson Lorentzen, 2014).

Second, researchers have investigated the role and behaviors of online false health and well-being “experts” (Konnikova, 2016; Lavorgna & Sugiura, 2019; Rojek, 2017; Rousseau, 2015). Individuals without recognized qualifications who peddle dubious remedies find in cyberspace a platform for global, large-scale, interactive, and commercial communication, where they can construct a highly curated and cleansed version of themselves; in this way, they take a role that was once reserved for highly trained specialists (Khamis et al., 2016; Lavorgna & Sugiura, 2019; Rojek, 2017). As explained in Horsburgh and Barron (2019), a key issue is that evidence-based information is often complex and difficult to understand by the public, leaving room to many different voices to communicate erroneous, biased, or fraudulent information to consumers—leading to psychological, physical, and economic harms. Being bombarded by misleading information, the public can also experience information overload, which may result in people making poor health-related decisions (Ramondt & Ramírez, 2019).

Third, there has been some exploratory research looking specifically at online communities promoting or otherwise supporting dangerous fraudulent health information, including non-science-based anticancer treatments (Lavorgna & Di Ronco, 2017). In this case, however, the researchers have qualitatively analyzed only a limited sample of threads on a very popular Italian online forum, shedding light on the support received by three convicted fraudulent medical “experts” by their believers, who formed sect-like chat groups contributing to the continuation of these harmful criminal practices. Interestingly, these “believers” did not feel themselves victimized; if they felt victimized, it was by the medical establishment and “Big Pharma.” Nevertheless, they are victims of dangerous frauds, even if at the same time they are actively contributing to the scam by promoting dangerous treatments and spreading misinformation to others.

Overall, all three of these main research strands have shown how non-science-based health approaches are at the heart of very polarized debates. For many “believers” and “skeptics,” being curious about alternative medical practices can signal a proximity to a certain lifestyle; members of both these groups are generally active in cyberspace and especially via social media in defending their arguments or opinion, and often also in mocking or disparaging their counterparts (Lavorgna & Di Ronco, 2017; D’Amato, 2019; Di Ronco & Allen-Robertson, 2019; Petts & Niemeyer, 2004; Schmidt et al., 2018). While previous studies have looked at both groups and at their interactions on social media (e.g., Di Ronco & Allen-Robertson, 2019), in this study, we decided to focus only on “believers.” More specifically, furthering the third research strand as described above, we are focusing on providers of non-science-based anticancer treatments and their most active supporters (on Twitter), whom we expect to often play the ambivalent role of being victims while having a moral responsibility as they facilitate the dissemination and adoption of dangerous practices (Lavorgna & Di Ronco, 2017; Pedersen, 2013).

We are focusing on the specific case of anticancer treatments, which are a particularly heinous and traditionally “successful” form of fraudulent remedies. As reported by Lerner already in the 1980s, a lack of confidence in the public institutions, a public panic toward cancer or “the big C,” and a general feeling of frustration with the official medicine for not finding a “cure” for cancer created a fertile soil for fraudsters, who often refer to surgery, radiation, and chemotherapy as mutilation, burning, and poisoning (Lerner, 1984: 816). Unfortunately, despite the pervasiveness of different types of cancer, cancer is extremely complex and, in some cases, still poorly understood; treatments that are accepted by the scientific community often come with major side effects—a fertile soil for fraudulent and unscrupulous providers, who prey on those more vulnerable and their hopes of having access to a panacea (Grimes, 2019).

Health care frauds are criminalized in many countries, even if in most cases there is no specific legislation to protect cancer patients and their loved ones from “quackeries.” An exception is the United Kingdom, where the Cancer Act 1939, among other things, prohibits certain advertisements relating to cancer. More specifically, it prohibits the publication, except under specified conditions, of advertisements that “offer to treat any person for cancer, or to prescribe any remedy therefor, or to give any advice in connection with the treatment thereof” (Section 4(1)). The Act, however, has been sparsely used (approximately 20 cases in the last three decades), with only a few of the cases tried under the Act resulting in convictions and fines. However, many other providers have had to remove or modify advertising after being contacted by Trading Standards under the Cancer Act. If not under the Cancer Act (which also covers websites and social media accessible within the United Kingdom if they are aimed at the general public), many of the tweets analyzed are likely to be not only potentially harmful but also potentially illegal under consumer protection laws and other comparable regulations in other jurisdictions.

Apart from criminalizing and/or regulating those making dangerous cancer claims, in recent years, there has been increasing pressure to make tech companies and service providers (more) accountable for what passes through them; after all, it is because of them that fringe and conspiracy theories, including misleading, fraudulent, and potentially dangerous cancer “treatments,” often reach the masses. The plumbing metaphor used by Ohlheiser (2019) (“companies [such as Google and Facebook] have been more focused on building out the plumbing than keeping the pipes clean of misinformation”) is quite effective to stress the responsibility and accountability of intermediaries in having a more active role in regulating online content with ad hoc policies on speech (Leiter, 2010; Pavan, 2017), an issue which remains controversial because it impacts the boundaries of freedom of expression and of speech. For the time being, as misleading or false health information is removed in an inconsistent way (if at all, as it is generally not recognized as harmful content by tech companies’ moderators), the problem persists, and bogus medical advice proliferates online. Tech companies are experimenting with strategies to limit health misinformation, first and foremost around vaccine hesitancy. Facebook, for instance, is considering alerting users who are invited to join groups known to have spread debunked false information (Ohlheiser, 2019), and it has reportedly changed its News Feed algorithms to reduce promotion of posts promising miracle cures (Hernandez & McMillan, 2019); YouTube is reportedly working with medical doctors to identify and remove content promoting unproven dangerous medical claims (Hernandez & McMillan, 2019). These attempts to better police harmful health-related information online are welcome, but a quick keyword search on the most popular social media platforms shows clearly that there is still a long way to go.

The Twitter platform was chosen as the object of this study because it had not yet been studied with regard to its role in the propagation of dangerous non-science-based health information such as fake anticancer treatments, even if other studies (such as those on the anti-vaxx community) have suggested that online communities active on Twitter have an important role in the proliferation of medical misinformation.

Method

This study, funded by the Web Science Institute (WSI) of the University of Southampton (which promotes collaboration between disciplines to focus the analytical power of researchers to understand and explain the Web), has been carried out by a criminologist and a computer scientist. The approach chosen is interdisciplinary and combines a computational approach and traditional content analysis to “zoom in” and analyze more in-depth a subset of data of particular relevance.

Data Collection

Tweets were collected over a 5-month period of observation (January 2019–May 2019). The Web Data Research Assistant, a Chrome browser plugin (Lavorgna & Di Ronco, 2019), was used to retrieve data from the Twitter web app. We retrieved data (i.e., tweets and the accounts that participated with at least one tweet during the period investigated) in chunks, using a combination of 20 sets of two keywords (e.g., “tumor + holistic”; “tumor + natural”; “cancer + natural”) in English each time. This search produced a total of 15,813 tweets from 11,285 Twitter users. Duplicate tweets were eliminated. Being conscious that automatic keyword searches can produce many false positives which could negatively impact our analysis, we decided to increase data quality by adding a manual element to the data gathering. This approach is in line with recent attempts to improve the capability of social scientists to gather empirical evidence at scale from online communities for sociotechnical analysis without losing data accuracy (see Halford et al., 2018; Halford & Savage, 2017; Middleton et al., 2020), and promotes a constant interaction between the computer scientist managing the data collection and the criminologist providing subject matter expertise.

After the initial data set was created, the criminologist provided incremental feedback to the computer scientist suggesting words and/or users to be used for filtering as manifestly irrelevant for the scope of this work (e.g., “cancer” can refer to horoscope-related jargon, or combination of keywords such as “cancer + alternative” can lead to tweets posted by reputable publishing houses promoting new science-based academic articles on cancer treatments). In total, 275 words and 58 users were blacklisted, reducing our final data set for the social media analysis to 7,676 relevant tweets from 5,615 users.
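The filtering step described above can be sketched roughly as follows. This is only an illustration and not the tool used in the study: the tweet fields (`id`, `user`, `text`) and the example blacklist entries are hypothetical, and matching on whole lowercase words is an assumption about how a word blacklist might be applied.

```python
import re

def filter_tweets(tweets, blocked_words, blocked_users):
    """Drop duplicate tweets, tweets by blocked users, and tweets containing a blocked word.

    `tweets` is a list of dicts with hypothetical keys "id", "user", and "text".
    """
    blocked_words = {w.lower() for w in blocked_words}
    blocked_users = {u.lower() for u in blocked_users}
    seen_ids = set()
    kept = []
    for tweet in tweets:
        if tweet["id"] in seen_ids:
            continue  # duplicate tweet
        seen_ids.add(tweet["id"])
        if tweet["user"].lower() in blocked_users:
            continue  # author flagged as manifestly irrelevant
        words = set(re.findall(r"[a-z']+", tweet["text"].lower()))
        if words & blocked_words:
            continue  # text contains a flagged word
        kept.append(tweet)
    return kept

# Hypothetical sample: one duplicate, one horoscope tweet, one blocked publisher.
sample = [
    {"id": 1, "user": "alice", "text": "Natural cure for tumor, try it"},
    {"id": 1, "user": "alice", "text": "Natural cure for tumor, try it"},
    {"id": 2, "user": "astro_daily", "text": "Cancer horoscope: a natural week ahead"},
    {"id": 3, "user": "BigPublisher", "text": "New article on cancer alternative therapies"},
]
relevant = filter_tweets(sample, blocked_words={"horoscope"}, blocked_users={"bigpublisher"})
print([t["id"] for t in relevant])
```

In an iterative workflow like the one described, the two blacklists would grow with each round of feedback and the filter would simply be rerun on the initial data set.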

Network Data Analysis

One part of the analysis was dedicated to investigating the relationships among the promoters of alternative anticancer treatments—whether they grouped together (how interlinked was the network), how they grouped together (how did those links structure the network), and why they grouped together (what factors encouraged them to group). To do this, we focused on the existing dynamics in the dialogues among the 5,615 “relevant users” who created the set of 7,676 relevant tweets. We identified the following:

The conversational network created by the relevant users either mentioning each other in their tweets or replying to each other’s tweets (N = 320);

A number of engaged conversational subnetworks that are significant well-connected components within the whole conversational network (N = 236);

The friendship network of Twitter follower relationships between the members of the engaged conversational subnetworks.
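As a rough illustration of the first two items above, a conversational network can be derived from mention and reply-to relationships and then split into connected components. The sketch below uses only the Python standard library; the tweet fields (`user`, `mentions`, `reply_to`) are hypothetical, and treating the links as undirected is an assumption made for simplicity rather than a description of the study's procedure.

```python
from collections import defaultdict

def build_conversation_graph(tweets, relevant_users):
    """Link two relevant users whenever one mentions or replies to the other."""
    graph = defaultdict(set)
    for tweet in tweets:
        author = tweet["user"]
        targets = set(tweet.get("mentions", []))
        if tweet.get("reply_to"):
            targets.add(tweet["reply_to"])
        for target in targets:
            # Keep only links between distinct users inside the relevant set.
            if target != author and target in relevant_users:
                graph[author].add(target)
                graph[target].add(author)
    return graph

def connected_components(graph):
    """Return the connected components of the graph, largest first."""
    seen = set()
    components = []
    for start in list(graph):
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node in seen:
                continue
            seen.add(node)
            component.add(node)
            stack.extend(graph.get(node, set()) - seen)
        components.append(component)
    return sorted(components, key=len, reverse=True)
```

Users who never mention or reply to another relevant user do not appear in the graph at all, which corresponds to the isolated authors left outside the conversational network.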

A second stage of the analysis aimed to analyze the characteristics of actors active in promoting harmful alternative health practices. This stage allowed us to “zoom in” toward tweets and authors of particular relevance and involved a qualitative content analysis of (a) the tweets that attracted the most “attention” and (b) the authors with the most “network capital.”

The “attention” of a single tweet was determined by the number of retweets, likes, and responses it received. Generally speaking (though not always), a like or a retweet indicates that the content is considered important/endorsed by the user; liking or retweeting is not just about the diffusion of information, but it is also used to express agreement, form or maintain a social relationship, and share public sentiment. Mentioning other accounts indicates discourse between users (Ahn & Park, 2015; Di Ronco & Allen-Robertson, 2019). The average of the sum of the replies, likes, and retweets in our set of 7,676 tweets was 20 (ranging from 56,700 to 0); only 317 popular tweets were above average, and they were considered for the qualitative analysis.

Each Twitter user’s “network capital” was determined by the number of followers that their account has attracted, and hence the number of Twitter users that would be expected to see (and potentially interact with) their tweets. In our set of 5,615 users, the average number of followers was 234,212 (ranging from 3,984,677 to 0); only 85 authors were above this average, and their tweets were also considered for the qualitative analysis.
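Both selections described above follow the same pattern: compute a score per item, take the mean, and keep the items above it. A minimal sketch, assuming hypothetical field names (`replies`, `likes`, `retweets`, `followers`) and using the arithmetic mean as the threshold:

```python
from statistics import mean

def attention(tweet):
    """Sum of replies, likes, and retweets received by a tweet."""
    return tweet["replies"] + tweet["likes"] + tweet["retweets"]

def network_capital(user):
    """Number of followers of an account."""
    return user["followers"]

def above_average(items, score):
    """Keep the items whose score is strictly above the mean score."""
    scores = [score(item) for item in items]
    threshold = mean(scores)
    return [item for item, s in zip(items, scores) if s > threshold]

# Hypothetical toy data, not taken from the study.
tweets = [
    {"id": 1, "replies": 0, "likes": 0, "retweets": 0},
    {"id": 2, "replies": 0, "likes": 1, "retweets": 0},
    {"id": 3, "replies": 9, "likes": 40, "retweets": 10},
]
popular = above_average(tweets, attention)
print([t["id"] for t in popular])
```

Because both distributions are heavily skewed by a few very large values, a mean-based threshold selects only a small fraction of the items, which is consistent with the small subsets reported.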

The relatively limited number of above-average tweets and authors identified is not surprising and in line with previous research which stressed how communication on Twitter is largely dependent on relatively small numbers of users critically positioned in the structure of the network (Garcia et al., 2017; Jürgens et al., 2011). The two subsets of data (for a total of 394 tweets, as eight tweets were common to both subsets) were qualitatively analyzed with the support of relevant software (NVivo 12). Data were organized through the following main codes: author (with the subsections: relevant author; to be dismissed), type of treatment, motivation, and attitude.

Ethical Considerations

In online research, ethical standards are still under refinement and may still vary among disciplines and jurisdictions. Overall, there is still insufficient consistency or agreement on current practice (Zimmer & Kinder-Kurlanda, 2017). Unfortunately, some research frameworks and ethical standards traditionally used by researchers and ethical boards (e.g., around the concepts of informed consent, expectations of privacy and anonymity) are inadequate to respond to the new challenges and possibilities of online research, especially in sensitive areas; there is a need to find new balances between mitigating risks to both researchers and research subjects and allowing the exploration of uncharted research territories (Markham & Buchanan, 2012; Middleton et al., 2020). As suggested by the state-of-the-art literature on online research ethics, to carry out our study, we took all the necessary precautions to ensure that our research was “ethically informed” (Zimmer & Kinder-Kurlanda, 2017). In particular, we collected data by using a software tool compatible with Twitter policies, and we collected information that had already been posted on the Twitter platform (hence, the participants had already accepted Twitter’s Terms and Conditions). Given the nature of the information posted and the platform used, we could assume that the participants expected the virtual space used to be public. Seeking informed consent from Twitter users would have been virtually impossible and seen as intrusive, but as we only passively observed participants, this omission is in line with current research standards (British Sociological Association [BSA], 2017; Social Data Lab, 2019). For concerns related to Twitter users’ anonymity, we did not use personal identifiers and do not quote the content of the tweets directly (in line with Di Ronco & Allen-Robertson, 2019; Williams & Burnap, 2017; Williams et al., 2017). The retrieved data are not going to be published, but they have been stored securely in line with the University Research Data Management Policy. We submitted our data study plans to our university Research Ethics Committee for approval (approved: ERGO/FPSE/49000).

Results and Discussion

Social Media Analysis

As is indicated by the ratio of relevant tweets (7,676) to relevant users (5,615), the overall network is sparse with the vast majority of users (5,181) responsible for only a single tweet. In addition, most tweets (4,680) are isolated, connecting to no other users via mention or reply-to relationships. These isolated users feature as the outer rings in Figure 1, with the more connected authors as the inner structure which has been highlighted in Figure 2. In both figures, the size of the individual author nodes is related to the number of Twitter followers of that account.

Figure 1. Entire network of relevant tweets, as produced by the Gephi ForceAtlas2 layout algorithm.

Figure 2. Highly linked conversational network components isolated by the Gephi Modularity algorithm.

The structure of the network was identified (and colored) by the Louvain modularity algorithm, which determines subparts of a network that have more linking internally than to the rest of the network. The modularity statistic for the entire network is 0.96, given a possible range of [−0.5, 1], indicating that it is not a homogeneously interconnected whole, but is characterized by internally connected clusters (seen in Figures 1 and 2).
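To make the modularity statistic more concrete, the sketch below computes the standard modularity score of a given partition of an undirected, unweighted graph, Q = Σ_c [L_c/m − (d_c/2m)²], where m is the number of edges, L_c the number of edges inside community c, and d_c the total degree of its nodes. It only scores a partition that is already known; it is not the Louvain search itself and not Gephi's implementation, and the toy graph is hypothetical.

```python
from collections import defaultdict

def modularity(edges, community_of):
    """Modularity of a partition of an undirected, unweighted graph.

    `edges` is a list of (u, v) pairs; `community_of` maps each node to a label.
    """
    m = len(edges)
    if m == 0:
        raise ValueError("modularity is undefined for a graph without edges")
    internal = defaultdict(int)  # edges with both endpoints in the community
    degree = defaultdict(int)    # summed degree of the community's nodes
    for u, v in edges:
        cu, cv = community_of[u], community_of[v]
        degree[cu] += 1
        degree[cv] += 1
        if cu == cv:
            internal[cu] += 1
    return sum(internal[c] / m - (degree[c] / (2 * m)) ** 2 for c in degree)

# Toy graph: two disconnected triangles, each treated as its own community.
edges = [("a", "b"), ("b", "c"), ("a", "c"), ("x", "y"), ("y", "z"), ("x", "z")]
split = {"a": 0, "b": 0, "c": 0, "x": 1, "y": 1, "z": 1}
merged = {node: 0 for node in split}
print(modularity(edges, split), modularity(edges, merged))
```

Placing every node in a single community yields a score of zero, whereas a partition that matches well-separated clusters scores higher; values close to 1, as reported for this network, arise when there are many small clusters with almost no links between them.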

The resulting “doughnut-shaped” conversational network features clusters around significant actors such as a user presenting himself as a doctor and promoting alternative treatments; a radio program dedicated to wellness and well-being; publishers of natural health and wellness books and Twitter pages dedicated to books and book promotion; and health news and pharmaceutical companies.

The activity of the smaller conversational network was observed over time as a day-by-day animation, allowing us to observe the network’s activity at any given point in the period January 2019–May 2019. From this dynamic visualization, it was evident that only very small and disconnected sets of even the most interconnected parts of the conversational network were active at any time.

Overall, the features of the social network observed suggest that, contrary to previous research describing supporters of non-science-based medical treatments online (including in the Twittersphere, in the case of anti-vaxxers) as part of a community or a movement, most users tend to cluster in transient and loosely formed collectives around a common interest or activity (Forsyth, 2019); they resemble a crowd of strangers that springs up because something briefly catches their attention (e.g., a book is published and advertised, or a popular promoter posts some interesting information) more than a defined group displaying specific subcultural characteristics.

Friendship Networks

As well as the “in the moment” engaged conversational network that emerged from the tweets, we identified the more permanent “friendship” relationships between the actors in that network. Data about each actor’s friends were retrieved using TWARC (a Python package for archiving Twitter data), and the network of friendships between these actors was again plotted using Gephi. Looking at friendship networks allowed us to consider the network of attention: the choices of each actor about whose media to consume or which other actors to align to, and an indication of how actively they pursue their Twitter life. Most of these Twitter accounts followed hundreds or thousands of other accounts in general (i.e., not constrained to the accounts in this study), while a minority followed only tens or fewer and a handful followed hundreds of thousands of other accounts.

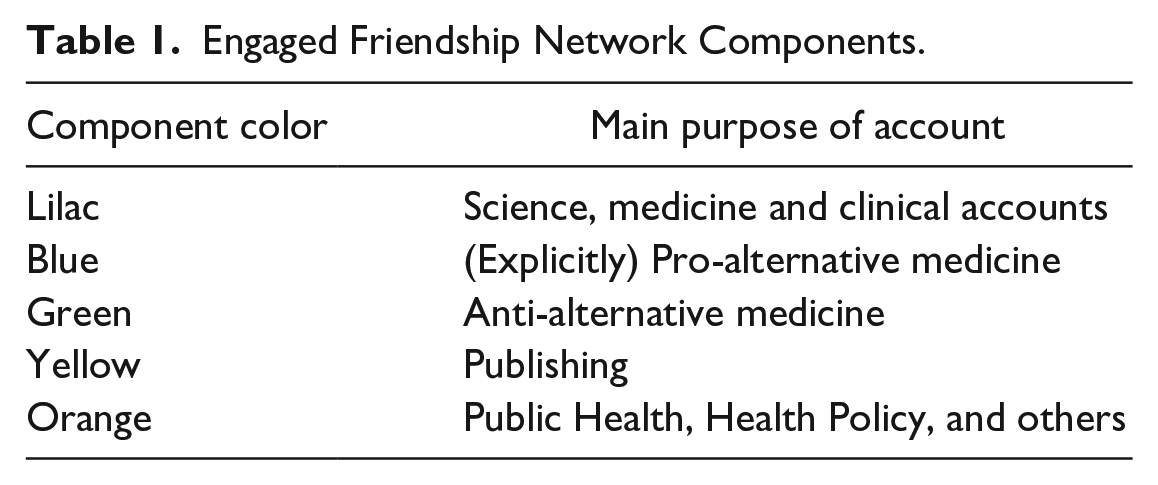

The components of this network were also calculated using Gephi’s Modularity function, to determine those parts of the network that had significant internal relationships. Examination of the account profiles of the members of the components demonstrates the principle of homophily that causes the actors to closely align themselves (by choosing whom to follow) with other actors that share their purpose (see Table 1). This results in a more densely structured relationship than shown in the conversational networks.

Table 1. Engaged Friendship Network Components.

As well as those relationships shown in Figure 1, each actor follows a potentially very large number of other accounts. These “other accounts” were examined to see which were most commonly followed by (a) the network as a whole (see Table 2) and (b) the pro-alternative medicine accounts in particular (see Table 3). In both cases, the most commonly followed accounts had huge network capital, with many tens or hundreds of thousands of followers. A sharp distinction emerges between the network as a whole (which mainly follows official science and medical sources) and the pro-alternative medicine accounts (which mainly follow conservative political or celebrity sources).
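The counting behind this comparison can be illustrated with a short sketch. It assumes a hypothetical mapping `friends_of` from each member account to the accounts it follows, counts how many members follow each account outside the group, and keeps those followed by more than a given share of the members; the account names and the exact threshold rule are illustrative assumptions.

```python
from collections import Counter

def commonly_followed(friends_of, members, min_share):
    """Accounts outside `members` followed by more than `min_share` of the members.

    Returns (account, follower_count) pairs in descending order of frequency.
    """
    members = set(members)
    counts = Counter()
    for member in members:
        for account in set(friends_of.get(member, ())):
            if account not in members:
                counts[account] += 1
    threshold = min_share * len(members)
    selected = [(account, n) for account, n in counts.items() if n > threshold]
    # Sort by descending frequency, then by name so that ties are stable.
    return sorted(selected, key=lambda pair: (-pair[1], pair[0]))

# Hypothetical toy data.
friends_of = {
    "a": ["x", "y", "b"],
    "b": ["x", "a"],
    "c": ["x", "y"],
    "d": ["z"],
}
print(commonly_followed(friends_of, ["a", "b", "c", "d"], min_share=0.5))
```

Running the same function once on the whole network and once on a single component allows the two lists of commonly followed accounts to be compared directly.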

Table 2. Other Accounts Most Commonly Followed (by 50 or More Accounts in the Whole Network, i.e., >20%).

Note. Descending Order of Frequency.

Table 3. Other Accounts Most Commonly Followed by Pro-Alternative Clique (12+ Members of the Clique, i.e., >50%).

Note. Descending Order of Frequency.

This result suggests that, even if a well-defined online community cannot be identified (as shown above), there are interesting sociopolitical and cultural elements shared by the providers of non-science-based anticancer treatments and their supporters on Twitter. While further research investigating the values and motivations of these users is needed to validate this finding, a better understanding of their system of beliefs could certainly help to develop and implement better tailored awareness-raising strategies, both online and offline (as convergence in political ideologies, values, and systems of belief suggests that medical misinformation is also likely to be prevalent among people aligned to those ideas in the physical world, regardless of their online engagement).

Content Analysis

Author

Approximately one third of the tweets considered for the qualitative analysis had to be dismissed as they were not posted by providers of non-science-based anticancer treatments or by their supporters. Rather, more than half of the dismissed tweets had been posted by doctors or debunkers when trying to convince supporters of “quack” treatments of their dangerousness, or when attacking or mocking providers. The remaining dismissed tweets, while relevant to the topic of non-science-based anticancer treatments, were mostly posted by a variety of actors (research centers, medical schools, reputable academic publishing houses, media outlets) that were discussing the topic not to support it in any way, but to inform other users and to share research results. Only a very limited number of tweets (10, which corresponds to less than 3% of the set considered) were irrelevant to the topic (e.g., tweets about the horoscope, politics, or veteran support). This suggests that Twitter users active in sharing cancer-related research-based information might form relatively more active and more stable networks on Twitter, and hence their presence is higher among the “more popular” tweets or among the authors with more network capital; providers of non-science-based anticancer treatments and those sympathizing with them tend, on the contrary, to be very dispersed in the Twittersphere (in line with the results of the social media analysis).

It would be beyond the scope of this study to focus in detail on debunkers. However, as they made up a relatively large part of the tweets gathered, it is worthwhile to spend a few words on them. In line with what was shown in the engaged conversational network (see Figure 3, specifically the part in green), doctors or debunkers intervening in the attempt to counter medical misinformation end up engaging online mostly with people sharing their views and purpose, and interactions with the Twitter users at the core of our study are overall limited. When engagement takes place, debunkers tend to be dismissive, oppositional, and at times offensive (e.g., supporters are depicted as “morons”). Research on science communication, however, suggests that this attitude can be very problematic, as it can lead to a dangerous alienation of potentially vulnerable members of society (Middleton et al., 2020), and potentially give further traction to pseudoscience: simply providing people with scientific facts (without empathic communication) is not effective in countering or reducing science denial (Duchsherer et al., 2020; Landrum & Olshansky, 2019; Preece, 1999), and entering an endless duel with antiscientific or conspiratorial thinking can unintentionally stimulate it (Hristov, 2019).

Friendships between the engaged conversational network.

If we focus on the tweets relevant to the scope of this study, their authors tend to be very heterogeneous: providers are present on Twitter, but only in very limited cases (12 “popular” tweets) did they post presenting themselves as individual users (e.g., integrative medicine practitioners, advocates for traditional natural remedies, healers); in a higher number of cases (30 “popular” tweets and 18 tweets selected for the network capital of their author) the post came from providers presenting themselves as business companies, alternative/integrative medicine clinics, or foundations/associations for integrative cancer care. The lion’s share of Twitter presence is held by (more or less) conscious supporters of non-science-based anticancer treatments, that is, those that through their posts defend, validate, disseminate, or otherwise popularize these treatments and/or their providers. Supporters are mostly individuals (defining themselves as, e.g., social media enthusiasts, authors and speakers, world travelers) with no specific health expertise, even if a handful of them were presenting themselves as, for example, health tweeters, health activists, and the like, and were tweeting from accounts displaying a “dr.” alongside their name, or words such as “science” as part of their username, presumably to give credibility to their claims. Cyberspace and its convergent technologies, in combination with a neoliberal environment somehow incentivizing exaggeration and over-glamorization in self-promotion, have indeed given individuals without recognized qualifications a platform for potentially large-scale communication, where they can construct a cleansed version of themselves as experts or quasi-experts (Khamis et al., 2016; Rojek, 2017).

What is particularly concerning, however, is the presence of tweets that indirectly validate and disseminate questionable treatments coming from accounts belonging to traditional media outlets (articles from local/national newspapers with offline distribution, 26 tweets). This is in line with the findings of recent analyses focusing on the media representation of harmful alternative medicine (Lavorgna & Di Ronco, 2019; Lavorgna & Sugiura, 2019), which emphasized how (at least in Italy and in the United Kingdom) media understanding and framing of alternative medicine-adjacent health scams is potentially confounding for readers, and urged better dissemination of scientific research via mainstream media to prevent vulnerable victims from falling prey to potentially dangerous practices. In addition, a total of 14 further tweets could be attributed to blogs and magazines published online and dedicated to generalist topics such as wellness and beauty, which is again in line with recent research findings underlining the role of social media platforms such as Twitter in promoting potentially dangerous lifestyle trends by allowing, for example, nutrition misinformation to spread (Horsburgh & Barron, 2019).

Also of relevance were tweets linked to Twitter accounts dedicated to book promotion and symposia, used to advertise (apparently self-published) books on questionable health practices and upcoming alternative care events. These forms of publicity have already been observed in this context (Di Ronco & Allen-Robertson, 2019; Lavorgna & Di Ronco, 2019), and have the double aim of promoting a specific book/practice and of building trust among potential “customers,” as publications and conference attendance can give a patina of scientificity in the eye of the layperson, who is generally not in a position to easily assess, for instance, the difference between a reputable academic publication and a publication in predatory or substandard journals.

Type of treatment

Non-science-based anticancer treatments come in many forms. This variety is reflected in the tweets analyzed. Among the tweets explicitly endorsing or otherwise disseminating non-science-based treatments, a large majority (168) simply mentioned “alternative treatments” in general; often they then referred to a hyperlink, a book, a movie, or other source for further information outside of the Twitter platform. Mainstream forms of complementary and alternative medicine, such as acupuncture (one tweet) or chiropractic (one tweet), were rarely mentioned. Several tweets generally referred to herbal or otherwise “natural” remedies (10 tweets), specific types of common foods or spices (11 tweets), and yoga (three tweets). Laetrile, an infamous substance advertised for decades as a cancer treatment that has no effect other than potentially poisoning the patient with cyanide (Lerner, 1984), was also referred to in three tweets. Spiritual forms of healing (promoting a healing school, the “decree healing” services offered by self-proclaimed prophets, or “spiritual” providers promising to stop all cancer and pain) were also referred to (eight tweets). Specific named forms of non-science-based treatments (a form of quantum healing, a tribal rite, the therapies offered by two well-known American providers, and those offered via an Indian app advertised in English) were proactively suggested by supporters. Last but not least, it is interesting to note the presence of medical cannabis advocates (eight tweets), promoting the use of cannabis not only in treating sickness caused by chemotherapy or improving a person’s appetite (there is scientific research indicating that cannabinoids can help with symptoms such as nausea and pain; see Macmillan Cancer Support, 2019), but also describing cannabis as a “magical” plant that “cures” cancer.

Motivation and attitude

The active presence of providers on Twitter seems to be driven by diverse motivations, ranging from their search for profit (e.g., when they sell products or training courses) to their desire to be credited (i.e., to receive validation and legitimation for the identity they have built, for example, when they define themselves as “scientists” or “clinicians,” or promote their book and scientific conference-style events; see above). It is also likely that some providers do genuinely believe in what they advertise, being motivated by strong inner beliefs and opinions, and specifically by conspiratorial beliefs (e.g., they do “unbiased” research and are not at Big Pharma’s service) or by an antagonistic attitude against science-based medicine (which is considered, e.g., “arrogant” or not trustworthy). This variety of (apparent) providers’ motivations is in line with recent research investigating the motivations behind providers’ actions and their modus operandi, and offering a multidimensional criminological typology of providers of non-science-based practices (Lavorgna & Di Ronco, 2019).

Supporters are also driven by diverse motivations: in line with research carried out on a popular Italian online forum, or, to be more precise, on its threads specifically dedicated to some potentially dangerous non-science-based health practices (Lavorgna & Di Ronco, 2017), supporters range from being active in providing information (e.g., advising another user to try a certain alternative treatment) to using the online platform to ask for advice (e.g., requesting information on providers of alternative treatments active in a certain area). In the Twittersphere, however, a few other interesting motivations could be identified, in primis the willingness to let other people know about a cancer patient success story that they attribute to some alternative treatment (also in a veterinary context, e.g., two users mentioned integrative treatments received by their dogs). In all these cases, a common element can be identified in the fact that all supporters, in one way or another, seem to embrace a certain “alternative health lifestyle”: they search for a more “natural” way (perceived as safer or less painful) to cure themselves or their loved ones. Interestingly but not surprisingly, if we look at the word frequency feature in NVivo, the word “natural” is the single most repeated word (67 times) in the subsets analyzed, after more obvious words dictated by the topic of investigation such as “cancer,” “alternative,” or “curing.”

Requests for monetary contributions are also present: we identified 11 tweets linked to crowdfunding initiatives trying to raise money to help a patient access a specific type of non-science-based cancer treatment, usually abroad (plus one additional tweet where an alternative medicine provider was raising money for her new clinic). Non-science-based treatments, indeed, can be quite expensive and, as lamented by a couple of users, they are not accessible through national health care, nor covered by insurance. Cancer fundraising has been soaring in recent years (Newmann, 2018), and health risks associated with this trend (as it can promote useless or potentially dangerous treatments) have already been discussed by recent scholarship (Newmann, 2018; Snyder & Caulfield, 2019).

In some tweets, however, another important type of monetary motivation emerges, that of affordability: two users posted that they or a friend of theirs were following an “alternative” treatment as they could not afford science-based cancer drug medications (from the context, it could be inferred that the authors were from the United States, and that one of the patients had health insurance). Additional research would be needed to explore in more detail what could be a further worrisome outcome of the lack of proper universal health care. Lack of trust in the medical context and, more broadly, lack of trust in contemporary society have often been identified as key factors in explaining why people fall for medical misinformation (Baron & Berinsky, 2019; Devaney & Holm, 2018). In particular, the breakdown of communication between some medical professionals and their patients, and between some research communities and the general population, has been recognized as a widespread problem that requires a structural and sustained institutional response (Garland & Travis, 2020). However, the existence of financial considerations at the core of (bad) health choices suggests that better communication is not enough, and that solutions directly addressing financial constraints are needed.

Before concluding, it is interesting to note that a conspiracy-led narrative (conspiratorial thinking is a well-known mechanism of science denial; see Prot & Anderson, 2019) was also relatively common among supporters in the subset analyzed: an attitude of distrust toward the established health care system was present, in more or less explicit ways, in several tweets, but in 10 of them there were explicit attacks against the pharmaceutical system, or suggestions of the existence of clandestine governmental plans to oppose alternative medicine (e.g., it was said that a cure for cancer already exists but it is kept secret to allow elites to make money, or that holistic doctors get killed to prevent them from promoting their cures).

Conclusion

Nowadays, important criminal and deviant acts increasingly occur in hybrid spaces (Brown, 2006) where neither cyberspace nor physical space is predominant. The propagation of potentially dangerous non-science-based information, such as fake anticancer treatments, offers a pertinent example of this phenomenon, with discourses that can have a direct effect on the physical health of individuals and their loved ones spreading online. In this context, online data, and especially online social media data, can offer a precious yet accessible treasure trove of information for criminology researchers too, creating new opportunities for understanding crime and deviance in and beyond cyberspace.

Twitter data, like many comparable data from online social media, can be seen as qualitative data but on a quantitative scale; novel methodological approaches can be used alongside the traditional tools of social science researchers to make better, more comprehensive sense of these data. The interdisciplinary approach adopted in this study proved useful to ethically study online networks and their discourses, allowing the researchers to investigate providers of non-science-based anticancer treatments and their supporters on Twitter with both sufficient breadth and depth. As such, we hope that our study, by offering a cross-disciplinary way to integrate intellectual and methodological insights from different disciplines, can contribute methodologically to enhancing our understanding of complex contemporary societal phenomena that occur at least partially online.

Social media analytics have allowed us to explore the existence of structural relationships among actors involved in relevant discourses in the Twittersphere, and have shown the lack of a proper community of interest, and rather the existence of transient collectives clustering around specific and popular discussions, themes, or actors in the social network. This result suggests the need to be careful in depicting people connected in an online social network as belonging to the same community or even subculture: when we look at conversational networks over time rather than at a specific point in time, we may notice (as we did in our study) the lack of sufficient stability in the network to allow the construction of lasting relationships or a strong identification with a certain network. However, looking outside the network analyzed, it was possible to acknowledge the presence of a recognizable shared system of values, political beliefs, and possibly also lifestyle and cultural preferences, suggesting an important avenue of further research. The approach proposed in this study could be useful to study other social networks or online “communities” grouping deviant or even criminal behaviors (ranging from the anti-vaxxers “movement” to right-wing extremists), as it would allow us to better comprehend the cohesiveness of said networks, to identify their “core” (if there is one), and to better determine the stability of their fringes.

Of course, our study focused only on anticancer treatments, which traditionally have been (and still are) a particularly fertile ground for harmful non-science-based practices because of the public panic toward cancer and the lack of a general, simple, or painless cure (Gorski, 2019; Lerner, 1984). Previous analyses on supporters’ roles and attitudes on other diseases (for instance, those which might benefit from stem-cell therapy; Lavorgna & Di Ronco, 2017) suggest that certain online dynamics (such as motivations and general attitudes) are likely to be similar. Nonetheless, because of the unique nature of fear and fatalism related to cancer, especially in some demographics (Almeida et al., 2019; Curran et al., 2020), and the gatekeepers and influencers’ role in the Twitter cancer community (Wang & Wei, 2020), we would expect to see a more defined group (rather than transient crowds of strangers) pivoting around, for instance, a rarer disease. Further research, therefore, is needed before our findings can be safely generalized.

From the content analysis of the identified subsets, in line with previous research on vaccine hesitancy (Amin et al., 2017), it appears that messages appealing to core individual values (or lifestyles, in our case) are particularly successful. These findings have potential for application to health communication, as they suggest the importance of developing interventions that work with individual values and identities, and of including themes such as (lack of) “natural,” “affordability,” and “trust” in discussions on potentially harmful non-science-based treatments to reach those that might be more vulnerable to their appeal. Focusing only on their potential harm, and insisting on the fact that they are not “science-based,” might not be very effective (if not counterproductive).

There have been attempts to intervene in online communications to limit the spread of fake medical news and bogus cures, while trying to respond to the growing demand for online health information (see, for instance, Macmillan Cancer Support, 2017). However, using the web to promote relevant information and debunk false medical claims while trying to break into polarized or isolated echo chambers can be a daunting task (D’Amato, 2019). In this scenario, a better understanding of the unique characteristics of specific online social networks and of the features and motivations of their most successful actors can play a fundamental role in better focusing and tailoring awareness-raising efforts, for instance, by intervening on those actors or in communications around those topics that have more appeal for users who may be intrigued by harmful practices but have not yet developed a strong positive opinion of them.

Footnotes

Acknowledgements

We would like to thank Dr. Nada Albunni for her contribution in the data collection.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study has been supported by the Web Science Institute Stimulus Funding, University of Southampton.

Compliance With Ethical Standards

REC approved (ERGO/FPSE/49000).