Abstract

We sought to evaluate the context of potential implementation of an automated quality measurement system for inpatients with heart failure in the U.S. Department of Veterans Affairs (VA). The research methodology was guided by the Promoting Action on Research Implementation in Health Services (PARIHS) framework and the sociotechnical model of health information technology. Data sources comprised semi-structured interviews (n = 15), archival review of internal VA documents, and literature review. The interviewees consisted of four VA key informants and 11 subject matter experts (SMEs). Interviewees were VA quality management (QM) staff, clinicians, data analysts, and quality measurement experts, among others. Our interviews identified themes, which confirmed that the automated system is aligned with current internal organizational features, hardware and software infrastructure, and workflow and communication needs. We also identified facilitators and barriers to adoption of the automated system. The themes found will be used to inform future implementation of the system.

Heart failure (HF) is associated with a high rate of morbidity and mortality in the U.S. Department of Veterans Affairs (VA) and the United States (Benjamin et al., 2018, 2019; Yoon et al., 2016). More Medicare dollars are spent for the treatment of HF than for any other chronic condition (Kilgore et al., 2017; Mozaffarian et al., 2016), with total direct and indirect costs estimated to increase from US$31 billion in 2012 to US$70 billion in 2030 (Heidenreich et al., 2013; Inamdar & Inamdar, 2016; Nuys et al., 2018). About 6.2 million American adults 20 years of age or older have HF (Benjamin et al., 2019), a number that is estimated to increase to 8.5 million by 2030 (Chrysant & Chrysant, 2019). Due to the increasing number of HF patients, there is an urgent need to improve the quality of care for HF patients in the VA and the United States (Segal et al., 2019).

We designed a new tool to automate hospital congestive heart failure (CHF) quality measurement, using natural language processing (NLP) (Meystre et al., 2016). The VA CHF quality measure, known as Congestive Heart Failure Inpatient Measure 19 (CHI19), describes how often correct medical therapy, in the form of angiotensin-converting enzyme inhibitor (ACEI) or angiotensin-receptor blocker (ARB), is provided to patients with left ventricular ejection fraction (LVEF) of less than 40% (Garvin et al., 2018). Our tool was designed to measure and track the quality of health care services provided (Kern et al., 2013). We named the automated system the Congestive Heart Failure Information Extraction Framework (CHIEF; Garvin et al., 2018; Meystre et al., 2016), the development of which is described elsewhere (Gobbel et al., 2014). NLP applications are used to extract diagnosis and other findings from clinical text documents with reasonable accuracy (Khalifa & Meystre, 2015). In our case, we used NLP to reduce the need for human review. The NLP pipeline that we designed extracts data for CHF quality measurement, including assessment of left ventricular systolic function, LVEF mentions and values, ACEI or ARB therapy, if applicable, and contraindications exempting guideline-concordant treatment (Garvin et al., 2018).
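To make the idea of extracting LVEF mentions and values from free text concrete, the following is a minimal illustrative sketch using a regular expression. It is not the CHIEF pipeline, which is described in the cited publications; the pattern, the function name `extract_lvef`, and the example sentences are all hypothetical and would miss many real-world phrasings.

```python
import re

# Hypothetical pattern (not taken from CHIEF): an LVEF/EF mention, a short
# non-numeric gap, then a single percentage or a percentage range.
LVEF_PATTERN = re.compile(
    r"\b(?:LVEF|EF|ejection fraction)\b"        # the mention itself
    r"[^\d%]{0,30}?"                            # short gap, e.g. " is estimated at "
    r"(\d{1,2})(?:\s*(?:-|to)\s*(\d{1,2}))?"    # one value, or a low-high range
    r"\s*%",
    re.IGNORECASE,
)


def extract_lvef(note: str) -> list[tuple[int, int]]:
    """Return a (low, high) percentage pair for each LVEF mention in a note.

    A single value such as "35%" is returned as (35, 35).
    """
    results = []
    for match in LVEF_PATTERN.finditer(note):
        low = int(match.group(1))
        high = int(match.group(2)) if match.group(2) else low
        results.append((low, high))
    return results
```

For instance, under these assumptions a sentence such as "Ejection fraction is estimated at 30-35%." would yield the pair (30, 35), while a note with no LVEF mention yields an empty list. A production system would also need to handle negation, qualitative descriptions of systolic function, and document structure, which a single pattern cannot capture.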

Prior research on informatics generally and VA specifically shows that health information technology (HIT) implementation has the potential to facilitate evidence-based care and increase quality, reduce costs, and increase patient and clinician satisfaction (Goldstein, 2008; Stabile & Cooper, 2012), but it is often accompanied by numerous implementation barriers. Common barriers to HIT include lack of bidirectional communication between clinical staff and implementers, underestimation of complexity, inadequate testing, and resistance to change, among other factors (Tomines et al., 2013). To address potential barriers to the adoption of an automated quality measurement system, it is beneficial to engage stakeholders early in the development process (Goodman & Thompson, 2017). To guide the formative evaluation process and potential future implementation of the CHIEF automated system, we conducted semi-structured interviews (n = 15) for stakeholder feedback on implementation. We combined the Promoting Action on Research Implementation in Health Services (PARIHS) framework (Goldstein, 2008; Kitson et al., 2008; Seers et al., 2018) with the sociotechnical model of HIT (STMHIT; Sittig & Singh, 2010, 2015) as our unified overarching implementation framework. We posed the research questions listed under Semi-Structured Interview Guide in the Method section.

Finally, we conducted an archival review of VA internal documents and a review of VA-specific scientific literature to augment our findings based on the research questions and the themes identified during thematic analysis. The findings from the stakeholder engagement facilitated development of themes that were then used to address gaps in system design and implementation for optimal uptake and adoption of the CHIEF automated system.

Method

Theoretical Model

We used the PARIHS framework, which postulates that evidence, context, and facilitation are related to successful implementation of evidence-based practices (Kitson et al., 2008; Seers et al., 2018). With regard to our work, CHF quality measures are formulated from evidence-based clinical practice guidelines developed by the American College of Cardiology (ACC) and the American Heart Association (AHA) based on Level 1A clinical evidence from HF guidelines (Yancy et al., 2017). For example, Level 1A clinical evidence recommends the prescription of an ACEI or ARB therapy for CHF patients with LVEF of <40% and symptoms of HF unless a specific contraindication exists.
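The guideline rule above can be sketched as a simple classification over already-extracted data elements. This is an illustrative sketch only, not the official CHI19 specification: the `Discharge` record, the field names, the outcome labels, and in particular the choice to exclude contraindicated patients from the denominator are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional

LVEF_THRESHOLD = 40.0  # percent; the measure applies to LVEF below this value


@dataclass
class Discharge:
    """Hypothetical record of data elements for one inpatient stay."""
    lvef_percent: Optional[float]   # None when no LVEF value was documented
    on_acei_or_arb: bool            # ACEI or ARB therapy documented
    has_contraindication: bool      # documented reason for no ACEI/ARB


def classify(d: Discharge) -> str:
    """Assign one stay to an outcome category for the measure."""
    if d.lvef_percent is None or d.lvef_percent >= LVEF_THRESHOLD:
        return "not_eligible"
    if d.on_acei_or_arb:
        return "met"
    if d.has_contraindication:
        return "excluded"           # assumption: removed from the denominator
    return "not_met"


def concordance_rate(discharges: list[Discharge]) -> Optional[float]:
    """Share of eligible, non-excluded stays that received ACEI/ARB therapy."""
    outcomes = [classify(d) for d in discharges]
    met = outcomes.count("met")
    denominator = met + outcomes.count("not_met")
    return met / denominator if denominator else None
```

With one stay in each of the four categories, the rate under these assumptions is 0.5, because only the "met" and "not_met" stays enter the denominator.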

We use an automated HIT system because previous studies have demonstrated that HIT can facilitate, as described in the PARIHS framework (Kitson et al., 2008; Seers et al., 2018), implementation of evidence-based practice (Goldstein, 2008). To strengthen our understanding of context, we also examined dimensions of the STMHIT (Sittig & Singh, 2010, 2015) to assess the context in which the implementation of the automated system will potentially occur. The STMHIT model contains eight dimensions: (a) hardware and software, (b) workflow and communication, (c) internal organizational factors, (d) clinical content, (e) human–computer interface, (f) people, (g) external rules, and (h) measuring and monitoring. In this article, we present the results of the first four dimensions of the STMHIT: (a) hardware and software, (b) workflow and communication, (c) internal organizational factors, and (d) clinical content. These dimensions will help us address important areas relevant to the context for improving adoption and uptake of an automated quality measurement system.

Semi-Structured Interview Guide

We developed semi-structured interview guides with relevant stakeholder engagement questions for all interviews, focusing subject matter expert (SME) questions on the interviewee’s specific area of expertise. The interview guide contained demographic questions, including the number of years of work experience in VA and quality management (QM), position title, and job description. Stakeholder engagement questions pertained to the sociotechnical model dimensions of hardware and software, clinical content, workflow and communication, and internal organizational features for collecting information. The stakeholder engagement questions also sought feedback on identifying and overcoming potential system barriers.

These were the research questions we wanted to answer via semi-structured interviews:

What is the context of implementation at the national, Veterans Integrated Service Network (VISN), and local levels in terms of (a) hardware and software, (b) workflow and communication, (c) internal organizational features, and (d) clinical content?

What facilitation is needed to overcome barriers identified at the national, VISN, and local levels?

How can we use the output of the system not only for quality measurement but also for clinical decision support (CDS)?

What are the clinical extensions of the tool?

Inclusion Criteria

We identified key informants (Schiller et al., 2013) to initiate our snowball sampling (Heckathorn, 2011; Sedgwick, 2013) process whereby during each interview, we asked each participant whether they could recommend another acquaintance or colleague for an interview. We then interviewed SMEs who were recommended to us. Our goal was to interview stakeholders from various backgrounds, such as VA QM staff, VA quality improvement (QI) staff, clinicians, data analysts, and quality measurement experts, among others. We have described the details of the stakeholder characteristics in Table 1 under Results.

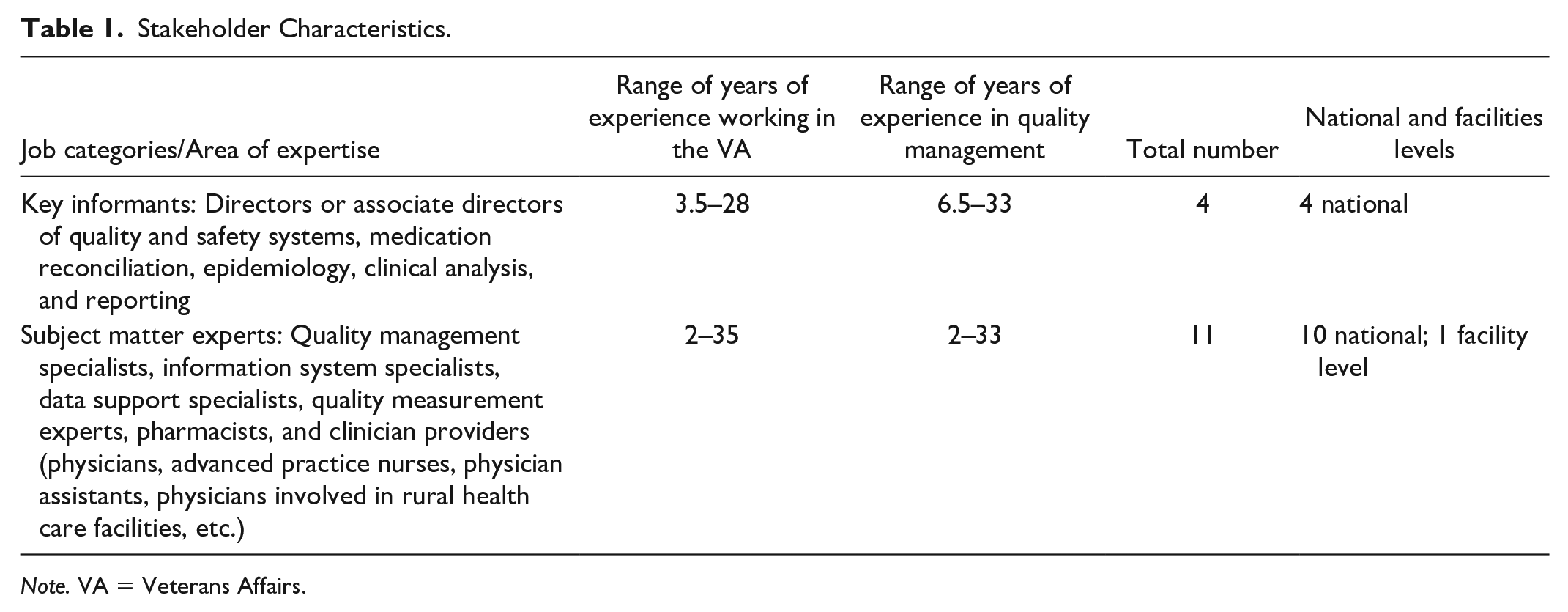

Stakeholder Characteristics.

Note. VA = Veterans Affairs.

Stakeholder Engagement Interview Process

We engaged stakeholders individually in person or in small groups over the phone with virtual meeting software (LiveMeeting and Lync). We conducted open-ended, semi-structured interviews, which typically lasted 45 min (range, 30–60 min). Two independent note-takers listened on the call and populated the interview guide independently. The two transcripts were combined into a single summary through a consensus process. The final single summarized interview was then sent to each interviewee for review and editing (i.e., member checking). We used this set of validated summaries to identify themes pertaining to our research questions. Through our stakeholder engagement, we identified and addressed critical implementation gaps in the effective use and availability of electronic data to support health care. We obtained key clinical data to support the adoption and uptake of our system and thus increase the efficiency of performance measurement and clinical quality in the area of HF. Next, we conducted an archival review of VA internal documents and a review of VA-specific scientific literature to augment our findings based on the research questions and themes identified during thematic analysis.

Thematic Analysis

To increase the adoption and uptake of our tool, we engaged key stakeholders, utilizing a semi-structured interviewing process with applied thematic analysis (Ando et al., 2014; Braun & Clarke, 2006, 2016) while developing and evaluating our tool. An “applied” thematic analysis focuses on answering research questions (Braun & Clarke, 2006; Guest et al., 2012). With the collective set of validated summaries, we documented the current workflow related to the HF performance measure and identified tools and processes within the workflow of obtaining data for electronic quality measures. Iterative rounds of review and summarization were based on the dimensions of the sociotechnical model (Sittig & Singh, 2010, 2015) and the PARIHS framework (Kitson et al., 2008; Seers et al., 2018).

Archival Review

After completing the thematic analysis of the stakeholder interviews, we conducted a review of the VA intranet (VA internal documents) and VA-specific peer-reviewed literature (between 2009 and 2015) to augment our findings. We called this process archival review. We used search key words such as quality measurement in VA, quality measurement in VA for HF, and quality measurement in VA for CDS system. For example, a search of VA intranet was helpful in identifying details regarding the general process for manual data collection for quality measures by the External Peer Review Process (EPRP) as described in Figure 3 (below).

We have described our process of summarizing the findings about context, including augmenting the findings from interview data via an archival review, in Figure 1.

Process to gather information about context.

Overall Analysis

We compiled the demographic information to summarize the number of years of VA and QM work experience, position titles, and job descriptions of the key informants and SMEs as described in Table 1. To present the results of stakeholder engagement analysis in a clear, concise manner, we identified key informants and SMEs by job category only. Supporting information for each of the themes we identified is summarized below, supplemented by data flow diagrams and figures informed by the review of scientific literature and VA intranet searches and information from stakeholder interviews (examples of which are also provided below).

Summarization of Data

After gathering the data as described above, we combined the results of the initial literature review, thematic analysis of stakeholder interview data, support snippets, and archival review to provide synthesized findings related to each research question and sociotechnical dimension.

We have described the entire process to gather information about context in Figure 1.

Results

Stakeholders Who Participated in the Study

To inform the design of our automated system and to facilitate adoption, we interviewed both key informants and SMEs. We conducted 15 stakeholder interviews with four key informants and 11 SMEs as described in Table 1. The key informants were VA quality measurement experts with national leadership and technical roles and VA-wide knowledge about inpatient CHF quality measurement within the VA. We then interviewed 11 clinical VA SMEs responsible for receiving and interpreting quality monitoring data, including pharmacists, physicians, advanced practice nurses, physician assistants, and physicians in rural health care facilities.

In addition, we included VA cardiologists and CHF quality experts with extensive experience making decisions regarding quality measures to be used and the presentation of quality assessment results. The CHF quality measure domain SMEs were selected based on their likelihood of being frontline users of the automated system being developed and were VA QM staff, information systems specialists, data support analysts, and quality measurement experts.

Summarization of Results for Each Research Question

We organized the resulting interview data into themes as reflected in Table 2.

Overview of themes.

Note. VA = Veterans Affairs; HIT = health information technology; CHIEF = Congestive Heart Failure Information Extraction Framework.

In the following sections, we provide the results of the initial literature review, thematic analysis of stakeholder interview data, support snippets, and archival review of the sociotechnical dimensions of hardware and software, workflow and communication, internal organizational features, clinical content, facilitating factors, CDS, and clinical extensions.

Theme 1: Computing Infrastructure (Hardware/Software)

Summary Statement: VA has an overall hardware infrastructure and architecture that makes implementation of the automated system possible.

We summarized the description of an informatics-rich environment at the VA that is part of the computing infrastructure environment (Theme 1) in Figure 2. Figure 2 shows the VA electronic health record (EHR) (VistA) as a repository of data that is used by multiple VA applications and tools.

Description of an informatics-rich environment at the VA.

Evidence From the Initial Literature Review

We found evidence in the literature that VA has hardware infrastructure and architecture to support a variety of informatics tools (Fihn et al., 2014), for example, My HealtheVET, the Blue Button, the Primary Care Almanac, Templates, Interactive Kiosks, computerized patient record system (CPRS), and VistA (Cohen et al., 2013; Coughlin et al., 2017; Haun, Chavez, et al., 2017; Hogan et al., 2014; Hysong et al., 2019; Rajeevan et al., 2017; Savoy et al., 2017).

Thematic Analysis of Stakeholder Interview Data

Key informants indicated that VA has a robust and reliable, but evolving, hardware and software infrastructure and architecture that makes the implementation of an automated system possible. Stakeholders also identified hardware functionality such as servers within the VA Informatics and Computing Infrastructure (VINCI), as well as software components such as general database management and analytic software, along with multiple types of reporting and display software, and VA applications, for example, My HealtheVET, the Blue Button, the Primary Care Almanac, Templates, Interactive Kiosks, and CPRS. Additional software applications include the core information technology system (VistA), which is built on a MUMPS platform, the data warehouse that can be queried with Structured Query Language (SQL), and NLP tools for extracting information from free text in VA clinical notes. Several interviewees noted that VistA supports key aspects of caring for patients with HF and provided snippets to support this theme.

Supporting Snippets

Data can be pulled into VISTA to see every step in medication reconciliation and to know which facility where the patient has been seen . . . . . . has a menu with a list of tools that may be useful to clinicians including the primary care almanac. Templates are generally used as task managers rather than as communication tools.

Archival Review

This theme was also substantiated by our archival analysis. VA intranet searches revealed information about the various components of the VA hardware and software infrastructure. For example, the My HealtheVet intranet search page contains information about My HealtheVet, registration and login, and Blue Button DownLoad. The intranet searches also located user manuals for understanding how to use the software tools.

Theme 2: Workflow and Communication

Summary Statement: VA is using evidence-based HIT to improve clinical and operational workflow and communication.

Evidence From the Initial Literature Review

We found evidence that VA continuously works to enhance the workflow and communication between nurses, patients, and other health care professionals by incorporating newer clinical communication tools, software technology, and more reliable sources of data (Bradley et al., 2016; Coles et al., 2019; D’Avolio et al., 2010; Haun, Hathaway, et al., 2017; Rehwald et al., 2015; Shiu & Mysak, 2017; Weston & Roberts, 2013).

Thematic Analysis of Stakeholder Interview Data

We found supporting evidence in the stakeholder interviews stating that VA is making efforts to promote automated data extraction for performance measurement to enhance workflow and communication between patients and health care professionals by using evidence-based HIT. Stakeholders identified that VA currently has a manual abstraction process for the abstraction of performance measure data that are contracted through the EPRP, but it is making efforts to promote automated data extraction for performance measurement by reporting abstracted data to the Corporate Data Warehouse (CDW), by comparing and refreshing outpatient medications in VistA, and by using central repository data at the national level.

Supporting Snippets

Snapshots provided by performance measurement provide information on the current status of clinical decisions and can be useful in providing timely, actionable, at the point of care decisions. A proactive approach for clinicians to invite their patients for consultation can help in quality improvement efforts if any information has initially been missed by the physician. This can be pre-determined during the process of performance measurement if the quality measurement has not been met.

Archival Review

We also found substantiation of this theme in our archival analysis. VA intranet searches revealed information about the general process of manual data collection for quality measures by the EPRP (Hysong et al., 2012), which is summarized in Figure 3. A significant portion of the clinical content for implementing our automated system is available in electronic format.

The general process of manual data collection for quality measures by the EPRP.

Theme 3: Internal Organizational Features

Summary Statement: VA has a culture of continuous QI (Baughman et al., 2018; Young et al., 1997), which is enhanced by its internal organizational factors that make implementation of the automated system possible.

Evidence From the Initial Literature Review

The literature search revealed that VA has an overall culture of continuous QI (Young et al., 1997) that is enhanced by internal organizational factors. Within the VA, there is a culture of QI, evidence-based care, quality measurement, measurement and accountability processes, and quality control reporting as part of the internal organizational factors (Atkins et al., 2017; Brandenburg et al., 2015; Byrd et al., 2013; Danz et al., 2013; Fortney et al., 2012; Fox et al., 2016; Glance et al., 2011; Weston & Roberts, 2013).

Thematic Analysis of Stakeholder Interview Data

In general, we found that VA has a culture of continuous QI, which is enhanced by its internal organizational factors. We identified several themes associated with internal organizational features, including efforts made by VA to achieve Meaningful Use Certification by 2015, use of HIT as a facilitator for QI, and routine use of quality control reporting as a feedback loop. In addition, interviewees identified that VA is routinely using quality control reporting as a feedback loop by identifying and implementing interventions to improve HF care throughout the VA system; improving the quality, safety, and value of clinical data in warehouse dashboards; and comparing the results of performance measurement at local, VISN, and national levels.

Supporting Snippets

Current level of concordance with performance measures can be described through the efforts of sound providers that read evidence and participate in research and delivering evidence-based care. There is an overall culture of quality improvement in the VA but there is more that can be done in this area. The supervisors and leaders understanding of processes of quality improvement could improve understanding in the front-line level. It is also known as clinical reporting at the VA. A futuristic approach to performance measurement, if the EPRP review would not exist in the future, would be the use of algorithms and research at the VA to support the automation of performance measures.

Archival Review

We also found substantiation of this theme in our archival analysis. VA intranet searches revealed information about the spreadsheets for performance measurement results for each VISN.

Theme 4: Clinical Content

Summary Statement: VA has the availability of appropriate clinical content in the form of structured, unstructured, and semi-structured data, in VA electronic medical databases, that can be extracted through automated systems.

Evidence From the Initial Literature Review

We found evidence that VA’s NLP capabilities are enabling the transformation of semi-structured data into structured data for use in data warehouses to support the automation of inpatient performance measures (Garvin et al., 2012, 2018; Gobbel et al., 2014; Hwang et al., 2014; Kim et al., 2017; Meystre et al., 2016).

Thematic Analysis of Stakeholder Interview Data

In general, we found that VA has the availability of appropriate clinical content in the form of structured, unstructured, and semi-structured data, located in multiple electronic medical databases that can be extracted by informatics and text processing techniques for automated performance measurement. Structured clinical data within VA’s EHR are aggregated within specialized databases. Data are extracted from clinical and administrative systems. Standard clinical terminologies are used in many of the databases. Information extraction (IE) and NLP techniques extract unstructured data from clinical notes; NLP techniques transform semi-structured data into structured data which are stored in data warehouses. Key informants indicated that VA is making efforts to capture the clinical content that is related to the identification of clinical circumstances, for example, high-risk patients; patient-related information, for example, information about patient medication reconciliation; and data availability, for example, the use of diagnostic and discharge notes for clinical review, recording of health factor data and vital signs for clinical data analysis, and the analysis of clinician-reported metrics to determine whether criteria are achieved.

Supporting Snippets

Performance measurement is elevated in the VA. We realize that health care can be measured with metrics, and we have the evidence to support it. We are working with the VISN dashboard to incorporate CDS into the dashboard used by the PACT team. We intend to do a total of five clinical domains, one of which is HF. We look at outpatient data, administrative records, and utilization data for sampling. For the purpose of inpatients we use diagnostic, discharge and other codes.

Archival Review

We also found substantiation of this theme in our archival analysis. VA intranet searches revealed information about the Veterans Health Administration (VHA) Joint Commission National Hospital Inpatient Quality Measures Heart Failure Instrument, the Specifications Manual for National Hospital Inpatient Quality Measures, and the list of data elements and their corresponding EPRP abstraction algorithms for quality measures.

Theme 5: Facilitation Needed to Overcome System Barriers Identified at the National, VISN, and Local Levels

Summary Statement: VA is using HIT as a facilitator to overcome barriers to the automation of performance measures.

Evidence From the Initial Literature Review

The literature search revealed that VA has an informatics-rich environment and is increasing the adoption of informatics tools in research, which supports the implementation and uptake of the automated system (Chapman et al., 2011; Garvin et al., 2018; Kim et al., 2017; Meystre et al., 2016). Within the VA, there are barriers associated with the electronic capture of structured data for CHF patients. We are trying to address the gaps associated with the automated data collection for HF and provide mechanisms to promote national efforts to develop EHRs that facilitate quality care (Garvin et al., 2012, 2018).

Thematic Analysis of Stakeholder Interview Data

Stakeholders described several aspects of data and their use related to quality measurement, from which we developed themes that we considered to be facilitating factors. They identified major potential benefits of the automated NLP process as well as alignment of the technology with organizational goals. In addition, interviewees mentioned the need for standardization and consistent availability of structured data, engaging informed clinical staff for QI to potentially facilitate HIT implementation in a timely manner, drill-down capabilities of HIT tools at the provider level and the individual level, development of structured data systems for the extraction of data elements from the EHR, and doing a gap analysis to compare manual abstraction versus electronic extraction through the automated system.
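One way to picture the gap analysis that interviewees suggested is a patient-level comparison of automated results against manual abstraction. The sketch below is illustrative only and does not reproduce any analysis performed in this study; the function name, the dictionary layout keyed by a hypothetical patient identifier, and the choice of manual abstraction as the reference standard are assumptions.

```python
def compare_to_manual(
    manual: dict[str, bool], automated: dict[str, bool]
) -> dict[str, float]:
    """Compare measure-met flags from automated extraction with manual abstraction.

    Manual abstraction is treated as the reference standard (an assumption).
    Only patients present in both sources are compared.
    """
    shared = sorted(manual.keys() & automated.keys())
    if not shared:
        raise ValueError("no patients in common between the two sources")

    tp = sum(1 for p in shared if manual[p] and automated[p])
    fn = sum(1 for p in shared if manual[p] and not automated[p])
    fp = sum(1 for p in shared if not manual[p] and automated[p])
    tn = sum(1 for p in shared if not manual[p] and not automated[p])

    return {
        "n": float(len(shared)),
        "agreement": (tp + tn) / len(shared),
        # nan marks a ratio that is undefined for the given data
        "sensitivity": tp / (tp + fn) if (tp + fn) else float("nan"),
        "ppv": tp / (tp + fp) if (tp + fp) else float("nan"),
    }
```

Disagreements surfaced this way (the false negatives and false positives) are the cases a review team would inspect to learn where structured data are missing or where documentation practices differ between facilities.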

Supporting Snippets

One of the measurements is access for veterans and a metric is timeliness of service. How the timeliness is measured is not clear. We don’t have a huge problem with ACE inhibitor prescription but we have problems managing CHF patients in general. My opinion is that the electronic extraction of data will pull the review of required data away from the facility. Anything you can do to pull review of data into central office would help . . . If the form is not completed, the measure is failed . . . But there is no such form for CHF.

Archival Review

We did not find substantiation of this theme in our archival analysis. VA intranet searches revealed no results about the facilitating factors.

Theme 6: Use of the Output of the System Not Only for Quality Measurement But Also for Clinical Decision Support

Summary Statement: VA emphasizes the use of CDS to improve the quality of care through timely information and advisories.

Evidence From the Initial Literature Review

The literature search revealed that a number of integrated health care delivery systems are using advanced information systems and integrated decision support to carry out quality assurance activities, but none are as large as the VHA. In addition, HIT can serve as an intervention component to address a performance gap. Interventional use of HIT might require access to patient medical record data, organizational data, and CDS tools (Gifford, 2016; Rajeevan et al., 2017).

Thematic Analysis of Stakeholder Interview Data

We found evidence from the stakeholder interviews and a search supporting the theme that VA is moving from a retrospective quality measurement to the use of CDS. CDS systems could potentially improve staff workflows to support efficient, safe, and cost-effective patient care at the VA. Use of CDS could also facilitate Meaningful Use Certification, further electronic quality measurement, and assist in real-time rather than retrospective performance measurement.

Supporting Snippets

I want to move away from retrospective measurement evolving to clinical decision support. It matters how it is communicated to facilities. The framing needs to be at the point of care rather than retrospective. . . . working with the VISN dashboard to incorporate CDS into the dashboard used by the PACT team. We intend to do a total of 5 clinical domains, one of which is HF. For inpatient providers, before the patient is discharged, consider using certain meds. It’s not possible to look at future med records at this point in time. If you are talking about CDS after a hospital stay, there are different notes you would look at.

Archival Review

We did not find substantiation of this theme in our archival analysis. VA intranet searches revealed no results about CDS.

Theme 7: Clinical Extensions of CHIEF

Summary Statement: VA encourages the development of clinical tools and extensions to support QI.

Evidence From the Initial Literature Review

The literature search revealed that VA encourages development of clinical tools and extensions to support QI (Byrne et al., 2010; Garvin et al., 2018; Kim et al., 2017; Meystre et al., 2016; Weston & Roberts, 2013).

Thematic Analysis of Stakeholder Interview Data

CHIEF can improve the efficiency, timeliness, and utility of quality measurement and provide data for clinical applications. In addition, CHIEF is aligned with VA’s Blueprint for Excellence because it has the potential to improve performance, advance innovation, and increase operational effectiveness and accountability. CHIEF has the capability to provide essential data for informatics tools such as dashboards, which can be potentially used during patient care (Garvin et al., 2018).

Supporting Snippets

A user could quickly roll down to patients that don’t meet the measure. . . . we really need to collect data indicative that the provider reviewed or was aware of the EF during the visit. I reviewed the EF, which was 15%. The system could notify them automatically of the EF and register that it was reviewed by the provider. . . . you could monitor telehealth notes and create an algorithm to alert a clinician of patients with urgent hospitalization or needs. Or if a patient has living will, what was discussed or documented. The work could be generalizable when applied to different clinical questions.

Archival Review

We did not find substantiation of this theme in our archival analysis. VA intranet searches did not reveal results about the clinical extensions.

Discussion

The stakeholders provided valuable information for the implementation of CHIEF. The themes are summarized as follows. (a) Hardware and software: VA has a robust and reliable, but evolving, hardware and software infrastructure and architecture that makes the implementation of an automated system possible. (b) Workflow and communication: VA is making efforts to promote automated data extraction for performance measurement to enhance workflow and communication between patients and health care professionals by using evidence-based HIT. (c) Internal organizational features: VA routinely uses quality control reporting as a feedback loop by identifying and implementing interventions to improve HF care throughout the VA system; improving the quality, safety, and value of clinical data in warehouse dashboards; and comparing the results of performance measurement at local, VISN, and national levels. (d) Clinical content: VA has appropriate clinical content available in the form of structured, unstructured, and semi-structured data, located in multiple electronic medical databases, that can be extracted by informatics and text processing techniques for automated performance measurement. (e) Facilitating factors: Interviewees mentioned the need for standardization. The facilitating factors consist of availability of structured data, engagement of informed clinical staff for QI to potentially facilitate HIT implementation in a timely manner, drill-down capabilities of HIT tools at the provider level and the individual level, development of structured data systems for the extraction of data elements from the EHR, and a gap analysis to compare manual abstraction with electronic extraction through the automated system. (f) Use of CDS: VA is moving from retrospective quality measurement to the use of CDS. CDS systems could potentially improve staff workflows to support efficient, safe, and cost-effective patient care at the VA. Use of CDS could also facilitate Meaningful Use Certification, further electronic quality measurement, and assist in real-time rather than retrospective performance measurement. (g) Clinical uses of CHIEF: CHIEF can improve the efficiency, timeliness, and utility of quality measurement and provide data for clinical applications. In addition, CHIEF is aligned with VA’s Blueprint for Excellence because it has the potential to improve performance, advance innovation, and increase operational effectiveness and accountability. CHIEF has the capability to provide essential data for informatics tools such as dashboards, which can potentially be used during patient care.
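
The gap analysis named among the facilitating factors, comparing manual abstraction with electronic extraction, can be illustrated with a minimal sketch. It is not part of CHIEF or of any VA tool. It assumes that each method yields a per-patient yes/no result for the quality measure, and the names `GapSummary` and `gap_analysis` are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class GapSummary:
    """Counts of agreement and disagreement between the two methods (hypothetical structure)."""
    both_met: int = 0        # measure met according to both methods
    manual_only: int = 0     # met per manual abstraction only
    automated_only: int = 0  # met per automated extraction only
    neither_met: int = 0     # not met according to either method

    @property
    def total(self) -> int:
        return self.both_met + self.manual_only + self.automated_only + self.neither_met

    @property
    def percent_agreement(self) -> Optional[float]:
        # Share of patients on whom the two methods agree; None if nothing was compared.
        if self.total == 0:
            return None
        return (self.both_met + self.neither_met) / self.total


def gap_analysis(manual: Dict[str, bool], automated: Dict[str, bool]) -> GapSummary:
    """Compare per-patient measure status for patients present in both sources."""
    summary = GapSummary()
    for patient_id in manual.keys() & automated.keys():
        m, a = manual[patient_id], automated[patient_id]
        if m and a:
            summary.both_met += 1
        elif m:
            summary.manual_only += 1
        elif a:
            summary.automated_only += 1
        else:
            summary.neither_met += 1
    return summary


if __name__ == "__main__":
    # Illustrative values only; these are not study data.
    manual = {"p1": True, "p2": True, "p3": False}
    automated = {"p1": True, "p2": False, "p3": False}
    print(gap_analysis(manual, automated))
```

Patients found in only one source are left out of the comparison here. A fuller analysis would report them separately, because missing records are themselves a gap.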

Prior research illustrates the value of stakeholder engagement to inform the design and implementation of an automated system for CHF inpatients (Garvin et al., 2018). Through our stakeholder engagement, we identified and addressed critical implementation gaps in the effective use and availability of electronic data to support health care. We identified facilitators to increase efficiency of performance measurement and clinical QI in the area of HF by obtaining key clinical data to support implementation of our system. The engagement process also identified barriers associated with the automation of quality measures, such as the measurement of timeliness, unavailability of a structured form for CHF data, the lack of available structured data (e.g., EF less than 40%), and the inability to classify EF into meaningful information for quality measurement. The detailed results about the effectiveness of CHIEF have been described elsewhere (Garvin et al., 2018). Our innovative approach to stakeholder engagement based on implementation science and the STMHIT was effective in planning for the implementation of our automated HIT system in VA.
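
The barrier concerning structured EF data can be made concrete with a small sketch of how a CHI19-style rate might be computed once an LVEF value, ACEI or ARB status, and contraindication status are available for each patient. The sketch is illustrative and is not the VA specification of the measure. The record layout and function names are hypothetical, and counting a documented contraindication as meeting the measure is an assumption made only for this example.

```python
from dataclasses import dataclass
from typing import Iterable, Optional

LVEF_THRESHOLD = 40.0  # percent; the measure targets patients with LVEF below 40%


@dataclass
class PatientRecord:
    """Hypothetical per-patient summary of extracted data elements."""
    patient_id: str
    lvef_percent: Optional[float]  # None when no usable EF value could be extracted
    on_acei_or_arb: bool
    has_contraindication: bool


def classify(record: PatientRecord) -> str:
    """Assign one of four statuses: unclassifiable, not_eligible, met, not_met."""
    if record.lvef_percent is None:
        # Without a numeric EF the patient cannot be placed in or out of the measure.
        return "unclassifiable"
    if record.lvef_percent >= LVEF_THRESHOLD:
        return "not_eligible"
    # Assumption for illustration: a documented contraindication counts as meeting the measure.
    if record.on_acei_or_arb or record.has_contraindication:
        return "met"
    return "not_met"


def measure_rate(records: Iterable[PatientRecord]) -> Optional[float]:
    """Proportion of eligible patients who meet the measure; None if no one is eligible."""
    statuses = [classify(r) for r in records]
    met = statuses.count("met")
    eligible = met + statuses.count("not_met")
    return met / eligible if eligible else None
```

A patient whose EF is documented only in free text and cannot be resolved to a value falls into the `unclassifiable` group and drops out of the rate, which is one way the lack of structured EF data limits automated measurement.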

Conclusion

We designed a new tool, named CHIEF, to automate CHF quality measurement using NLP (Garvin et al., 2018; Meystre et al., 2016). We conducted stakeholder interviews to characterize the context for implementation of an automated process to measure and report quality metrics for CHF patients. Our work demonstrated that the stakeholder engagement process facilitated development of themes that were then used to address gaps in system design and implementation for optimal uptake and adoption of the CHIEF system. Our work suggests that themes identified during the stakeholder engagement process can be used to plan the implementation of a similar system.

Footnotes

Acknowledgements

The views expressed in this article are those of the authors and do not necessarily reflect the position or policy of the Department of Veterans Affairs, the United States Government, or the academic affiliate organizations.

Ethical Statement

This work was approved by the University of Utah IRB.

Authors' Note

Stephane Meystre and Youngjun Kim are now affiliated with Medical University of South Carolina, Charleston, SC, USA.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Department of Veterans Affairs, Veterans Health Administration, Office of Research and Development, IDEAS 2.0 Center, Health Services Research and Development project #IBE 09-069, and HIR 08-374 (Consortium for Healthcare Informatics Research).