Abstract

Evaluating knowledge and learning in psychotherapy is a growing field of research. Studies that develop and evaluate valid tests are lacking, however. Here, in the context of internet-based cognitive behavioral therapy (ICBT) for adolescents, a new test was developed using subject matter experts, consensus among researchers, self-reports by youths, and a literature review. An exploratory factor analysis was performed on 93 adolescents between 15 and 19 years old, resulting in a three-factor solution with 20 items, accounting for 41% of the total variance. The factors were

Keywords

Introduction

Evaluating Knowledge in General Health Care

The role of knowledge and learning in health care interventions is a growing field of research. Researchers in the areas of psychoeducation and patient education stress the importance of assessing knowledge when evaluating interventions in relation to mental illness (Fox, 2009; Lukens & McFarlane, 2004; Wei et al., 2013). Overall, these areas aim to teach individuals about their conditions and their treatments to improve their capacity to recognize, prevent, and manage their mental illness. They are often based on the notion that psychoeducation and explicit knowledge can enhance processes such as cognitive mastery and experiences of empowerment, and thereby help patients engage in healthier behaviors in the presence of their problems (Brewin, 1996; Ryhänen et al., 2010; Sajatovic et al., 2007). For instance, the importance of knowledge is particularly evident in research on mental health literacy. This is a research area which aims to teach the general public about risks, symptoms, and treatments, to affect other desirable outcomes such as less stigmatized attitudes and increased help-seeking behavior (Coles et al., 2016). Various measures of knowledge have been used, and studies have been published on how to quantify knowledge acquisition in robust ways, to allow more valid conclusions about interventions’ effects on knowledge gain (Wei et al., 2013).

Evaluating Knowledge in Cognitive Behavioral Therapy

An important area of research when evaluating knowledge and learning in psychotherapy is to assess what clients actually learn and remember during and after their treatment. The literature on evaluation of knowledge acquisition and learning in psychotherapy is, however, surprisingly scarce. One relevant form of psychotherapy with regard to knowledge and learning is cognitive behavioral therapy (CBT; Andersson, 2016; Harvey et al., 2014). The overall goal of CBT is to teach patients how to recognize unwanted symptoms and to let these symptoms serve as cues for the use of more adaptive management strategies (Albano & Kendall, 2002; Arch & Craske, 2008; Brewin, 1996). CBT emphasizes educational components and most treatment manuals involve thorough rationales and instructions to help clients learn new behavioral and cognitive strategies. One major purpose of CBT is to involve clients in more adaptive learning experiences, targeting both implicit knowledge gained through exercises such as behavioral activation and exposure, and explicit knowledge gained through interventions such as psychoeducation, self-monitoring strategies, or identifying patients’ maladaptive cognitive assumptions and challenging them through logic or alternative, more adaptive assumptions (Brewin, 1996). Thus, there is a clinical value in assessing the role of knowledge and learning in CBT.

Researchers have begun to assess and report data on patient learning, memory, and knowledge acquisition. For instance, by integrating learning theories and findings from cognitive science and educational science, Harvey et al. (2014) highlighted the potential importance of remembering treatment content to enhance treatment outcome in CBT. Poor memory of treatment information has been associated with poor treatment adherence and outcome (Gumport et al., 2015; Harvey et al., 2016). These researchers have mainly measured memory and learning through free-recall tasks, open-ended questions, and vignettes. For instance, in a free-recall task, patients were asked to write down recalled information from their treatment during a 10-min period (Dong et al., 2017; Harvey et al., 2016; Lee & Harvey, 2015). Each response was compared with a predetermined list of important treatment points in cognitive therapy (CT), and answers were scored by independent coders. Results to date have revealed mixed effects and the studies have often been underpowered, but overall, remembering treatment content seems to be of relevance for treatment outcome in terms of depressive symptoms and individual functioning. Moreover, these researchers assessed learning during treatment by asking clients via telephone about their thoughts concerning the last therapy session and whether they had lately applied any skills learned in therapy (Gumport et al., 2015, 2018). Learning was also defined and measured as clients’ capability to generalize treatment content, by presenting participants with two fictive vignettes and asking them what they would think (cognitive generalization) and how they would respond in a similar situation (behavioral generalization). Data were then coded by independent raters in terms of the number of accurately recalled therapy points. Taken together, the studies show mixed findings, but there are some promising findings showing that learning treatment content is related to depression levels after treatment. Gumport et al. (2018) highlighted that their instrument was not psychometrically robust and called for further research to establish its validity and reliability.

Evaluating Knowledge in Internet-Based CBT

One suitable research field for evaluating knowledge acquisition and learning during therapy is when CBT is provided over the internet (ICBT [internet-based cognitive behavioral therapy]; Harvey et al., 2014), as ICBT is mainly based on educational texts containing rationales, psychoeducation, and explicit instructions for learning new skills (Andersson, 2016). Thus, the contents and principles of CBT are the same but in ICBT they are administered in a more structured and mainly text-based format. ICBT makes it possible for clients to receive the same information and instructions on a weekly basis which enables control over the type and amount of information provided.

To our knowledge, three published controlled treatment studies have evaluated knowledge gain in ICBT. Inspired by earlier research on knowledge acquisition in bibliotherapy (Friedberg et al., 1998; Scogin et al., 1998), two studies have evaluated knowledge gain in ICBT for adults (Andersson et al., 2012; Strandskov et al., 2017) and one has evaluated knowledge gain in adolescents with depression (Berg et al., 2019). In these studies, knowledge was measured via multiple-choice tests containing items covering the specific diagnosis/problem and CBT for the condition. Generally, the use of multiple-choice tests has several advantages. It is important to develop and use objective scales as a complement to open-ended questions. Open-ended questions tend to induce bias (Keulers et al., 2007) and recall tasks tend to result in less information recalled compared with recognition and cued-recall tasks (Gumport et al., 2018). Andersson et al. (2012) constructed a test of 11 multiple-choice items related to social anxiety and its treatment, whereas Strandskov et al. (2017) designed a multiple-choice test of 16 items to measure knowledge about symptoms and CBT treatment in eating disorders. Berg et al. (2019) developed a test of 17 items measuring knowledge about depression and comorbid anxiety and how to treat them with CBT.

Furthermore, these studies incorporated certainty ratings in the tests, asking the clients to rate level of certainty for each response on a 3- or 4-point Likert-type scale (i.e.,

In conclusion, we believe this topic warrants further research. Overall, as in research in general health care, studies lack thorough descriptions of their test development procedures and psychometrically robust measures. For instance, Andersson et al. (2012) reported an internal consistency of Cronbach’s α = .56, Strandskov et al. (2017) α = .62, and Berg et al. (2019) α = .64, all being less than satisfactory. No further analyses on psychometrics were described in these studies. Thus, there is a need to develop tests with higher reliability and construct validity to improve how explicit knowledge acquisition and learning in CBT and ICBT are captured and conceptualized. It is also important to validate subscales and make distinctions between different knowledge constructs (O’Connor et al., 2014).

Creating a Test of Knowledge Acquisition in ICBT

A valid test needs a valid theory. Overall, a clear definition of learning and knowledge in CBT is an important issue in all of the above-mentioned studies. As mentioned, knowledge of the central components in CBT could be regarded as a continuum, ranging from implicit skills (procedural knowledge) to explicit facts, concepts, and principles (declarative knowledge; Brewin, 1996). On the one hand, CBT focuses on behavior in critical situations, learning new ways to respond to external and internal stimuli, such as staying in a crucial situation in the presence of intrusive thoughts or fear (Arch & Craske, 2008). Terides et al. (2016) aimed to quantitatively capture procedural knowledge in CBT and constructed a test evaluating practical skills usage, called the Frequency of Actions and Thoughts Scale (FATS). It contained 12 items covering four factors labeled cognitive restructuring, rewarding behaviors, social interactions, and activity scheduling. Preliminary evidence supports its validity and indicates that higher self-rated skill usage is associated with greater symptom reduction of anxiety and depression.

On the other hand, CBT is also about learning explicit concepts and principles, such as understanding the rationale for exposure or how to manage negative thoughts through cognitive restructuring. Harvey et al. (2014) used the term “treatment points” to capture these concepts and principles. More specifically, a treatment point was defined as an insight, skill, or strategy from therapy deemed important for patients to remember or implement in their everyday lives. In CBT, there is a handful of treatment points believed to be helpful and meaningful for clients to understand explicitly (to cope with life and to apply them in new situations later on). These explicit CBT concepts and principles could be well suited for evaluation when delivered through an internet format (ICBT), as researchers can control which information the participants have received through the texts in the treatment program. Knowledge evaluation could easily be based on the content of the modules and their intended learning outcomes. Little is known about what clients actually remember from the content in the modules and to what degree they can explicitly retrieve CBT principles learned through the internet. One important issue, however, concerns the type and depth of knowledge measured in earlier ICBT trials (Andersson et al., 2012; Berg et al., 2019; Strandskov et al., 2017). In contrast to more general knowledge about symptoms and treatment, as measured in studies of patient education and mental health literacy, tests in ICBT could preferably measure application or generalization of knowledge from the treatment modules, focusing on strategies and skills that aim to aid clients in their everyday life. For instance, it can be feasible to assess clients’ ability to apply principles or skills learned during treatment in hypothetical scenarios (Dong et al., 2017).

Finally, evaluating knowledge and learning could be of extra relevance for certain target groups. Youths with depression and anxiety symptoms are such a group, given the high relapse rate of depression and its comorbidity with anxiety (Costello et al., 2003; Ebert et al., 2015; Thapar et al., 2012), and the potential protective effect knowledge could have on these conditions. For instance, in a study on adults, Kronmüller et al. (2007) found that knowledge about treatment could predict level of depressive symptoms 2 years later. Research in mental health literacy highlights the importance of early knowledge intervention, commonly focusing on the adolescent population (Burns & Rapee, 2006; Coles et al., 2016). If clients learn and gain knowledge early on, risk of relapse might decrease.

On the basis of these previous findings and to address some of the shortcomings in the evaluation procedures of knowledge acquisition and learning, a new instrument for assessing what adolescents learn during therapy was developed, focusing on explicit knowledge of general and applied CBT principles. We will present the test construction procedure that was based on both theoretical considerations and empirical findings. Hopefully, the procedure presented here can contribute to the field by illustrating one way to evaluate knowledge gain in ICBT.

Materials and Methods

Item Design

A primary goal when developing an instrument or a test is to create a valid measure in which the items reflect the underlying latent construct(s). Thus, the theoretical concepts used in a knowledge test should preferably be conceptualized before testing it empirically (Clark & Watson, 1995). If an instrument lacks a conceptualized theory of what it is supposed to target, the instrument might also lack construct validity. Here, the conceptualization of the construct(s) was briefly formulated as “explicit knowledge about general and applied core CBT-principles” in the context of adolescent anxiety and depression.

Furthermore, a valid theory does not only articulate what a construct is, but also what it is not. Therefore, when creating the initial item pool, an overinclusive number of items were generated. An overinclusive initial item pool enables psychometric analyses to detect and separate weak or unrelated items from items more strongly related to the underlying construct(s) (Clark & Watson, 1995).

Items were generated based on three main sources. First, items were based on the treatment content and findings from previous studies on youths, to see what is addressed in the modules and thus should preferably be part of a knowledge test (Silfvernagel et al., 2015, 2017; Topooco et al., 2018). Second, items were created based on a thorough literature review covering research about knowledge acquisition, memory, and learning in general health care, CBT, and ICBT. Third, items were generated by consulting three clinical psychologists with approximately 10 years of experience in treating youths with anxiety and depression. The experts stated and summarized the core CBT principles for these conditions.

Furthermore, the phrasing of the items was carefully attended to. Some items were phrased as general questions but most of them as mini-vignettes. The aim was to tap application and generalization of CBT principles, rather than only assessing recognition and recall of basic facts. Preferably, a knowledge test in ICBT should tap a form of explicit knowledge closer to the concept of procedural knowledge, as in explicit application of CBT principles to relevant everyday life situations. The items were constructed in a multiple-choice format (three response options), as it is expected to give more reliable and stable results (Clark & Watson, 1995; Haladyna, 1994). Initially, 46 items were generated, targeting theoretical facts, concepts, and treatment points about exposure, behavioral activation, management of intrusive negative thoughts, and applied behavior analysis.

Next, to evaluate whether the items were appropriate and readable for the target population, the instrument was initially tested on nine adolescents aged about 16 years. Eight of them were girls and one was a boy. They were recruited via teachers from three different classes at two different high schools. The pilot test was conducted using cognitive interviews, a method where the individuals are asked to read and reflect on items out loud (Krosnick, 1999). By observing and listening to the respondents’ reactions during the interview, the observer can revise or remove ambiguous or irrelevant items and hence increase the construct validity of the instrument. Several revisions and clarifications had to be made. The items were rephrased to be more concrete and straightforward, and adjusted to a more adequate level of difficulty. A total of 13 items were removed, with 33 remaining. The revised version went through a final review with the clinical experts, assuring that the revised items were in line with CBT theory and that the test covered relevant treatment points.

In addition, certainty ratings were added in the questionnaire, that is, respondents were asked about how certain they felt with each response (“Guessing,” “Pretty certain,” or “Totally certain”). As mentioned, incorporating certainty is one way to evaluate whether respondents actually have gained the correct knowledge rather than guessing the right answer. Furthermore, it is important given the potential “common sense” aspect of CBT and the clinical relevance certainty could have on clients’ motivation to actually apply what they learn.
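As an illustration only, the following minimal sketch shows one way responses could be cross-tabulated by correctness and certainty level, so that correct guesses can be told apart from correct and certain answers. The function name `cross_tabulate`, the data layout, and the example answers are assumptions made for this sketch; the study does not describe a specific scoring procedure.

```python
from collections import Counter

# The three certainty labels used in the questionnaire.
CERTAINTY_LEVELS = ("Guessing", "Pretty certain", "Totally certain")


def cross_tabulate(responses):
    """Count how often each (is_correct, certainty) combination occurs.

    responses: iterable of (is_correct: bool, certainty: str) pairs.
    This layout is hypothetical and only serves the illustration.
    """
    counts = Counter()
    for is_correct, certainty in responses:
        if certainty not in CERTAINTY_LEVELS:
            raise ValueError(f"unknown certainty label: {certainty!r}")
        counts[(is_correct, certainty)] += 1
    return counts


# Hypothetical answers from one respondent (not data from the study).
example = [
    (True, "Totally certain"),
    (True, "Guessing"),
    (False, "Pretty certain"),
    (True, "Totally certain"),
]
table = cross_tabulate(example)
```

In this hypothetical case, three answers are correct, but only two of them are both correct and given with full certainty.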

Participants

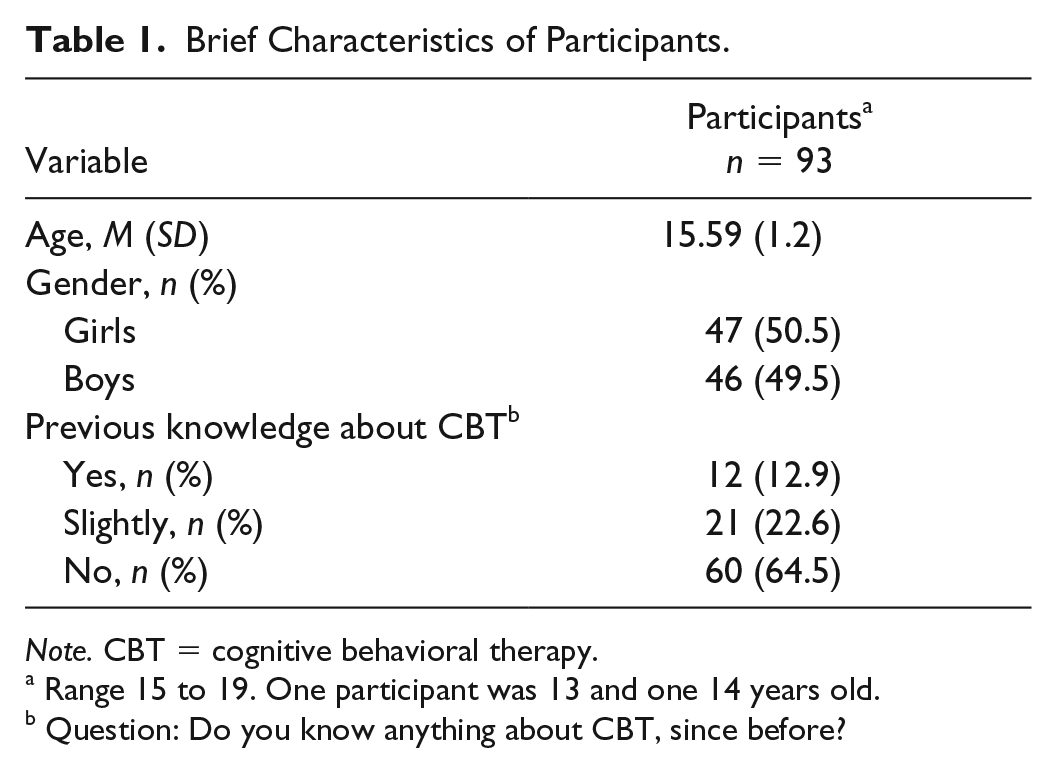

A total of 93 participants were included in the study. They were mainly recruited from three high schools in Sweden, where teachers asked their students to fill out the test during a class. See Table 1 for a brief description of their demographics.

Table 1. Brief Characteristics of Participants.

Range 15 to 19. One participant was 13 and one 14 years old.

Question: Do you know anything about CBT, since before?

Data Collection

The instrument was distributed via Limesurvey (https://www.limesurvey.org), an online interface for administering surveys. Respondents were mainly students from two high schools in Sweden who were between 15 and 19 years old. A total of 192 individuals initiated the test and a total of 93 individuals submitted their answers. Among the 93 individuals who submitted their answers, a total of 86 individuals answered all of the questions while seven of the respondents left some of the questions unanswered. No differences between those who completed all the questions and those who did not, in terms of age, gender, or previous knowledge about CBT were found (

Statistical Analyses

All statistical analyses were performed in IBM SPSS Statistics, Version 24. To assess internal consistency, we used Cronbach’s alpha.
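For readers unfamiliar with the coefficient, Cronbach’s alpha for k items is k / (k − 1) multiplied by one minus the ratio of the summed item variances to the variance of the total score. The sketch below is a minimal illustration using only the Python standard library, not the SPSS procedure used in the study; the function name `cronbach_alpha` and the 0/1 example scores are assumptions.

```python
from statistics import variance


def cronbach_alpha(scores):
    """Cronbach's alpha for a list of respondents' item scores.

    scores: list of rows, one row per respondent, each row holding
    that respondent's score on every item (for example 1 = correct,
    0 = incorrect). Sample variances (n - 1 denominator) are used.
    """
    k = len(scores[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    item_columns = list(zip(*scores))
    item_variance_sum = sum(variance(column) for column in item_columns)
    total_variance = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Hypothetical scores for four respondents on two items.
example_scores = [[1, 0], [1, 1], [0, 0], [1, 1]]
alpha = cronbach_alpha(example_scores)
```

For these made-up scores the item variances sum to 7/12 and the total-score variance is 11/12, so alpha equals 2 × (1 − 7/11) = 8/11, or about .73.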

An exploratory factor analysis (EFA) was performed. EFA is a suitable method when the purpose is to investigate theoretically interesting latent constructs and establish the psychometric properties of a new instrument (Fabrigar et al., 1999). EFA enables the detection of other possible latent dimensions in the material and can reveal how each item contributes to these potential underlying factors, beyond internal consistency. Using EFA, all individual inter-item correlations can be examined. Preferably, all items should correlate moderately with each other in the range of .30 to .90 (Clark & Watson, 1995). Furthermore, if items are clustered together in meaningful underlying factors, each item should correlate with the underlying factor somewhere in the range of .30 to .50 (Fabrigar et al., 1999; DeVellis, 2016). EFA accounts for measurement error and requires data on an interval or quasi-interval level, as assumed for the data collected here.

First, it is important to determine whether data are suitable for performing an EFA, that is, if there is a reasonable level of inter-item correlations to further evaluate possible subfactors. The diagnostic analyses included the Kaiser–Meyer–Olkin (KMO) measure of sampling adequacy, which assesses the potential of finding reliable and distinct constructs. A KMO of 0.60 is generally considered the cutoff value for continuing with an EFA. Furthermore, Bartlett’s test of sphericity was implemented to assess whether correlations are significantly different from zero. Finally, the determinant analysis examined whether the inter-item correlations were at a suitable level.
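The three diagnostics can be sketched from the item correlation matrix as follows. This is a minimal NumPy illustration using the standard textbook formulas, not the SPSS output used in the study; the function names, the dictionary keys, and the simulated data are assumptions, and the p value for Bartlett’s test is deliberately left out because it would require a chi-square distribution function.

```python
import numpy as np


def bartlett_df(n_items):
    """Degrees of freedom for Bartlett's test of sphericity: p(p - 1) / 2."""
    return n_items * (n_items - 1) // 2


def efa_diagnostics(data):
    """Determinant, KMO, and Bartlett chi-square for an (n, p) data array."""
    data = np.asarray(data, dtype=float)
    n, p = data.shape
    corr = np.corrcoef(data, rowvar=False)

    # Determinant of the correlation matrix.
    det = float(np.linalg.det(corr))

    # Bartlett's test statistic: -(n - 1 - (2p + 5) / 6) * ln|R|.
    chi2 = float(-(n - 1 - (2 * p + 5) / 6) * np.log(det))

    # KMO compares squared correlations with squared partial correlations,
    # where the partial correlations come from the inverse of R.
    inverse = np.linalg.inv(corr)
    scale = np.sqrt(np.diag(inverse))
    partial = -inverse / np.outer(scale, scale)
    off_diagonal = ~np.eye(p, dtype=bool)
    r2 = np.sum(corr[off_diagonal] ** 2)
    q2 = np.sum(partial[off_diagonal] ** 2)
    kmo = float(r2 / (r2 + q2))

    return {
        "determinant": det,
        "kmo": kmo,
        "bartlett_chi2": chi2,
        "bartlett_df": bartlett_df(p),
    }


# Simulated data (not the study data): six items driven by one latent factor.
rng = np.random.default_rng(0)
latent = rng.standard_normal((200, 1))
simulated = 0.7 * latent + 0.7 * rng.standard_normal((200, 6))
result = efa_diagnostics(simulated)
```

As a check on the degrees-of-freedom formula, a 33-item instrument gives 33 × 32 / 2 = 528.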

Next, using principal axis factoring, the number of potential factors in the material was estimated. Principal axis factoring is an EFA method that aims to determine the number of factors by creating an extracted factor solution that maximizes the amount of explained variance (De Winter & Dodou, 2012). Moreover, an orthogonal rotation was implemented (varimax), to clarify the suggested factor solution. The purpose was to find the factor solution that was the easiest one to interpret (simple solution).
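To illustrate what an orthogonal varimax rotation does, the sketch below applies a commonly used SVD-based varimax iteration to a small, made-up loading matrix. It is an illustration under stated assumptions: the function name `varimax`, its parameters, and the example loadings are hypothetical, and the sketch omits the Kaiser normalization that statistical packages often apply, so it should not be read as a reproduction of the SPSS routine.

```python
import numpy as np


def varimax(loadings, max_iter=100, tol=1e-8):
    """Rotate a (p, k) loading matrix with plain varimax (no normalization).

    Returns the rotated loadings and the orthogonal rotation matrix.
    """
    loadings = np.asarray(loadings, dtype=float)
    p, k = loadings.shape
    rotation = np.eye(k)
    criterion = 0.0
    for _ in range(max_iter):
        rotated = loadings @ rotation
        column_ss = np.sum(rotated ** 2, axis=0)
        target = loadings.T @ (rotated ** 3 - rotated @ np.diag(column_ss) / p)
        u, s, vt = np.linalg.svd(target)
        rotation = u @ vt  # product of orthogonal matrices stays orthogonal
        new_criterion = float(np.sum(s))
        if criterion != 0.0 and new_criterion / criterion < 1 + tol:
            break
        criterion = new_criterion
    return loadings @ rotation, rotation


# Hypothetical simple-structure loadings, deliberately mixed by 30 degrees.
simple = np.array([[0.8, 0.0], [0.7, 0.0], [0.6, 0.0],
                   [0.0, 0.8], [0.0, 0.7], [0.0, 0.6]])
angle = np.deg2rad(30)
mix = np.array([[np.cos(angle), -np.sin(angle)],
                [np.sin(angle), np.cos(angle)]])
unrotated = simple @ mix
rotated, rotation = varimax(unrotated)
```

Because the rotation is orthogonal, each item’s communality (the sum of its squared loadings) is unchanged; only the distribution of loadings across factors changes, which is what makes the solution easier to interpret.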

To explore the validity of the extracted factor solution, eigenvalues were used, establishing factors that are greater than 1, according to the Kaiser criterion. To graphically inspect the number of factors before the last major drop in eigenvalues, the scree test was also used. As a final step in validating the extracted factor solution, a parallel analysis was performed, using the syntax by O’Connor (2000), comparing the retained factor solution with randomly generated data sets.
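The logic of comparing observed eigenvalues with the Kaiser criterion and with randomly generated data can be sketched as follows. This is a minimal illustration of Horn-style parallel analysis on the eigenvalues of the full correlation matrix; it is not the O’Connor (2000) syntax, and the function name, the number of simulations, the 95th percentile threshold, and the simulated data are all assumptions made for the example.

```python
import numpy as np


def parallel_analysis(data, n_sims=200, percentile=95, seed=0):
    """Compare observed eigenvalues with those from random normal data.

    Returns the observed eigenvalues, the random-data thresholds, the
    number of factors retained by parallel analysis, and the number
    retained by the Kaiser criterion (eigenvalue > 1).
    """
    data = np.asarray(data, dtype=float)
    n, p = data.shape
    observed = np.sort(np.linalg.eigvalsh(np.corrcoef(data, rowvar=False)))[::-1]

    rng = np.random.default_rng(seed)
    random_eigs = np.empty((n_sims, p))
    for i in range(n_sims):
        noise = rng.standard_normal((n, p))
        eigs = np.linalg.eigvalsh(np.corrcoef(noise, rowvar=False))
        random_eigs[i] = np.sort(eigs)[::-1]
    thresholds = np.percentile(random_eigs, percentile, axis=0)

    # Retain leading factors until an observed eigenvalue falls below
    # the corresponding random-data threshold.
    n_retained = 0
    for obs, thr in zip(observed, thresholds):
        if obs <= thr:
            break
        n_retained += 1

    n_kaiser = int(np.sum(observed > 1.0))
    return observed, thresholds, n_retained, n_kaiser


# Simulated data (not the study data): eight items, two latent factors.
rng = np.random.default_rng(1)
factors = rng.standard_normal((300, 2))
pattern = np.zeros((2, 8))
pattern[0, :4] = 0.8
pattern[1, 4:] = 0.8
simulated = factors @ pattern + 0.6 * rng.standard_normal((300, 8))
observed, thresholds, n_retained, n_kaiser = parallel_analysis(simulated)
```

In this simulated case both criteria point to two factors. With real data, the Kaiser criterion tends to suggest more factors than parallel analysis does, which is why the two are compared.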

Results

General Results

The reliability analysis revealed a strong internal consistency of α = .85 for the 33 initial items and an excellent internal consistency for the certainty ratings, α = .92.

The determinant analysis indicated a low level of inter-item correlations, suggesting risk for multicollinearity and thus implying that it would be inappropriate to perform an EFA. However, the diagnostic assessments also revealed a KMO of 0.77, that is, above the diagnostic threshold, and Bartlett’s test of sphericity was significant, χ2(528) = 1,278.68,

The rotated EFA indicated six factors with an eigenvalue above 1, explaining 45% of the variance. However, the scree plot suggested a three-factor solution. In addition, the parallel analysis also supported a three-factor solution (see Figure 1).

Figure 1. Parallel analysis of the factor solution.

Thus, a three-factor solution was evaluated further by running the EFA again. The three-factor solution explained 32% of the total variance. Items not loading above .4 on any of the three factors were removed. Thus, 13 items were removed. Finally, the EFA was performed on the 20 remaining items. This three-factor solution explained 41% of the total variance. A full overview of the specific items, the three-factor solution, and the correlations between each item and their respective factor can be found in Table 2. A review of the variance explained by the three-factor solution can be found in Table 3. The final knowledge test can be found in Supplemental Appendix A. The inter-correlations between the three factors were as follows: Factors 1 and 2:

Table 2. Final Three-Factor Solution.

Table 3. Total Variance Explained With the Three-Factor Solution.
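The item-retention rule described above (removing items that do not load above .4 on any of the three factors) can be sketched as a simple filter. The loadings below are invented for illustration and are not taken from Table 2; the function name `retain_items` and the use of absolute loadings are assumptions.

```python
def retain_items(loadings, threshold=0.40):
    """Keep items whose largest absolute loading exceeds the threshold.

    loadings: dict mapping an item label to its loadings on each factor.
    Returns a dict mapping each retained item to the (1-based) number of
    the factor on which it loads most strongly.
    """
    retained = {}
    for item, values in loadings.items():
        strongest = max(range(len(values)), key=lambda j: abs(values[j]))
        if abs(values[strongest]) > threshold:
            retained[item] = strongest + 1
    return retained


# Hypothetical rotated loadings for four items on three factors.
example_loadings = {
    "item_a": [0.62, 0.10, 0.05],
    "item_b": [0.21, 0.33, 0.18],  # no loading above .4, so it is dropped
    "item_c": [0.08, 0.55, 0.12],
    "item_d": [0.15, 0.02, 0.47],
}
kept = retain_items(example_loadings)
```

Applied to the study’s item pool, this kind of rule took the instrument from 33 to 20 items (33 − 13 = 20).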

Final Factor Solution

The results of the factor solution revealed one factor labeled “Act in aversive states.” It contained 12 items relating to the core CBT principle of approaching and exposing yourself to situations and negative internal experiences, rather than avoiding them. This sometimes includes the ability to tolerate fear in the present moment, for example, “Marcus is just about to attend an important class, but becomes nervous, gets palpitations and chills. According to CBT, what could he try?” (Item 7), or engage in behavioral activation in the presence of depressive thoughts, for example, “Peter is tired even though he sleeps a lot. He does not have the energy to do things he used to. What would be most helpful for him to try, according to CBT?” (Item 9). The goal is to replace strategies of short-term aversion reduction with strategies more in line with valued long-term consequences.

The second factor was labeled “Using positive reinforcement,” with five items reflecting the CBT principle of using, focusing on, and planning for positive reinforcement, such as engaging in behaviors in line with positive goals, for example, “Lisa is afraid to use the subway to school. According to CBT, what would make her feel better?” (Item 19) or scheduling rewards contingent upon valued behavior, for example, “Oscar wants to be more helpful at home but has trouble getting started. How can he help himself, according to CBT?” (Item 20).

The third factor was labeled “Shifting attention,” containing three items reflecting CBT strategies for shifting your focus and attention in the presence of unhelpful cognitions or reactions, for example, “Sofia is at a party and feels socially excluded. She is thinking that nobody likes her and that her friends find her boring. What is most helpful for her to do, according to CBT?” (Item 14).

The internal consistency for the final factor solution was α = .84. Factor 1 had an α of .82, Factor 2 had an α of .73, and Factor 3 had an α of .58. See Table 4 for the means, standard deviations, and internal consistencies among the factors.

Table 4. Means, Standard Deviations, and Internal Consistency of the Three Factors.

Discussion

General Discussion

The aim of this study was to develop and evaluate a new knowledge test assessing explicit knowledge about general and applied CBT principles in the context of adolescents suffering from anxiety and depression. Our goal was to address some of the issues raised in relation to previous research on knowledge gain and learning in general health care, CBT, and ICBT, in particular by exemplifying one way to construct a knowledge test for adolescents. Items were mainly created using a literature review, subject matter experts, and content from earlier studies on ICBT for adolescents with anxiety and depression.

Via an exploration of theoretically relevant constructs using EFA, a three-factor solution with 20 items was found with a high internal consistency for the whole instrument. The first factor was defined as “Act in aversive states.” This factor seems to capture the central CBT principle of approaching aversive situations, that is, how to enhance exposure to internal negative responses and reactions and reduce tendencies of avoiding them (Abramowitz, 2013; Arch & Craske, 2008). The factor reflects the core CBT approach of acting in the presence of negative and aversive thoughts, feelings, and sensations, in line with adaptive long-term consequences. The ability to accept and challenge negative emotional states is associated with reduced long-term distress, whereas experiential avoidance strategies tend to be associated with more severe symptoms of anxiety (Abramowitz, 2013). Some of these items are in line with the learning-based exposure paradigms of CBT, where a major goal is to teach clients that they can tolerate fear and aversive states (Arch & Craske, 2008). This is a shift from teaching clients to stay in a situation until fear has declined to instead helping them stay in the situation in the presence of aversive states, while getting in contact with more neutral or positive consequences of the situation. Factor 2, “Using positive reinforcement,” seems to capture the CBT principle of using positive reinforcers contingent upon valued action (Albano & Kendall, 2002; Brewin, 1996). This can be illustrated by using rewards or focusing on the positive aspects of a previously avoided behavior. Increasing contact with positive reinforcement is a generic CBT principle, but it is more explicitly and evidently used when treating depressive states (Dimidjian et al., 2011). Factor 3, “Shifting attention,” contained three items and reflected the central role of managing mental processes of negative beliefs, impulses, and attentional biases in CBT for anxiety-related problems (Arch & Craske, 2008; Waters & Craske, 2016). This factor seems to capture strategies of shifting your attention, for example, strategies in line with the CBT notion that thoughts are hypotheses rather than objective facts and do not need to be acted upon. Attention shifting seems to be connected to the ability to inhibit and interrupt pre-potent impulses and to engage in more adaptive behaviors (Bögels et al., 2008; Donald et al., 2014).

To our knowledge, this is the first study in which a knowledge test to be used in ICBT was developed using EFA, showing three distinct factors in the context of adolescent depression and anxiety. The procedure resulted in a knowledge test with higher reliability and validity than the previous tests used in ICBT (e.g., Andersson et al., 2012; Berg et al., 2019; Strandskov et al., 2017). This is in line with the need to develop more robust measures and validated subscales when assessing knowledge in mental health interventions (O’Connor et al., 2014; Wei et al., 2013). The third factor, however, had poorer internal consistency, which could be due to the small number of items or could reflect a lack of reliability for the subconstruct. Importantly, the factor solution explained 41% of the total variance, and it is possible that other interesting dimensions in the knowledge of CBT are missing. Also, overall, the scale’s convergent and discriminant validity needs to be evaluated further, for instance, by correlating the scale against other scales of knowledge, symptoms, or behavioral outcomes in clinical samples.

One part of the instrument warranting further investigation is the role of certainty ratings. As argued above, it is of importance to separate knowing from guessing or believing (O’Connor et al., 2014; Tiedens & Linton, 2001), and thus adjusting for the fact that clients can be certain but incorrect or correct but unsure about their answer. Especially, there is a commonsense aspect in CBT where the right alternative could be more or less obvious (as commented by the subject matter experts and the participants in the cognitive interview). Incorporating ratings of certainty is one way to measure this aspect, but there might also be other more useful ways to tap and control for accuracy in contrast to certainty. Furthermore, the clinical importance of certainty warrants future research. Does certainty increase clients’ motivation in trying out strategies and is higher certainty a desirable outcome in psychotherapy?

This, finally, relates to the clinical use of the instrument, which needs further evaluation. Studies measuring knowledge acquisition through multiple-choice tests have found unclear or no associations with treatment outcome (Andersson et al., 2012; Berg et al., 2019; Scogin et al., 1998; Strandskov et al., 2017). In comparison with free-recall tasks, multiple-choice tests rather measure recognition or cued recall (Gumport et al., 2018). Even if clients can recognize the correct answers about CBT strategies, it does not necessarily imply that they also recall and apply them in real-life situations. This kind of acquired knowledge might be better captured through open-ended questions assessing free recall in clients’ own words (Gumport et al., 2018).

Another important question for future research regarding the clinical use of the instrument is whether knowledge about these core aspects acquired during therapy can serve a protective function in the long run for youths with anxiety and depression. In addition, if measured and evaluated in a valid and reliable way, knowledge and learning could be studied as a potential mediator of treatment outcome. This is in line with recent trends in clinical research attempting to understand the active components in successful treatments, rather than only evaluating their general effects (Kazdin, 2007).

Limitations

This study has several important limitations. For instance, as revealed by the diagnostic tests performed prior to the EFA, the determinant indicated that the overall inter-item correlations were too low. Thus, there is a risk of multicollinearity. Furthermore, to perform an EFA, 100 participants are recommended (Fabrigar & Wegner, 2012). In our study, 93 individuals submitted the test, and 86 completed all of the items. A larger sample size is preferred and the results should thus be interpreted with caution. Together, these two issues limit the possibility of drawing stable conclusions from the EFA. Future studies should test the scale in larger samples. Furthermore, there is a need to replicate the factor solution in the context of adolescent depression and anxiety and also for other target groups. We do not know whether the results obtained here can be generalized to other populations. The present test was developed in a school setting and not in a clinical group, and the next step is to evaluate the test in a clinical setting.

Importantly, EFA is partly a subjective method, as the interpretation of results rests on subjective decisions. Using the Kaiser criterion or the scree test can lead to over- or under-factoring, or to missing factors that are theoretically relevant. Theoretically, a test covering general and applied CBT principles captures more than three underlying dimensions, as there are several important theoretical underpinnings, psychoeducative models, and managing strategies in CBT for anxiety and depression (sleeping strategies, breathing and relaxation techniques, etc.). It is important, however, to highlight that this knowledge test aimed to target core, transdiagnostic aspects of CBT strategies in the context of adolescent anxiety and depression, rather than being a generic test applicable in diverse populations. It is also reasonable that the content of knowledge tests varies in relevance, depending on where and for whom an ICBT treatment is provided. When evaluating CBT for other problems, such as schizophrenia, other types of items and knowledge content would be of relevance. Indeed, it is difficult to develop a test that covers all content deemed necessary. Hopefully, this test construction procedure can exemplify one way to capture knowledge gain about anxiety and depression among youths receiving CBT in an internet-based format.
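To make the Kaiser criterion concrete, the following minimal sketch counts the factors whose eigenvalues of the item correlation matrix exceed 1. It is an illustration only and not the analysis procedure used in this study: the four-item correlation matrix `example_r` is hypothetical, and the helper names `jacobi_eigenvalues` and `kaiser_retained` are assumptions introduced here. Eigenvalues are computed with a plain Jacobi rotation scheme so that the example needs no external libraries; in practice, statistical software would normally be used.

```python
import math


def jacobi_eigenvalues(matrix, tol=1e-12, max_sweeps=100):
    """Eigenvalues of a symmetric matrix via cyclic Jacobi rotations."""
    a = [row[:] for row in matrix]  # work on a copy
    n = len(a)
    for _ in range(max_sweeps):
        # Stop once the squared off-diagonal mass is negligible.
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if abs(a[p][q]) < 1e-15:
                    continue
                # Rotation angle (|theta| <= pi/4) that zeroes a[p][q].
                diff = a[q][q] - a[p][p]
                if diff == 0:
                    theta = math.pi / 4
                else:
                    theta = 0.5 * math.atan(2 * a[p][q] / diff)
                c, s = math.cos(theta), math.sin(theta)
                # Rotate columns p and q, then rows p and q.
                for k in range(n):
                    akp, akq = a[k][p], a[k][q]
                    a[k][p] = c * akp - s * akq
                    a[k][q] = s * akp + c * akq
                for k in range(n):
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k] = c * apk - s * aqk
                    a[q][k] = s * apk + c * aqk
    return sorted((a[i][i] for i in range(n)), reverse=True)


def kaiser_retained(eigenvalues):
    """Number of factors retained under the Kaiser criterion (eigenvalue > 1)."""
    return sum(1 for value in eigenvalues if value > 1.0)


# Hypothetical correlation matrix: items 1-2 and items 3-4 form two clusters.
example_r = [
    [1.0, 0.6, 0.0, 0.0],
    [0.6, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.5],
    [0.0, 0.0, 0.5, 1.0],
]

eigenvalues = jacobi_eigenvalues(example_r)
print([round(value, 3) for value in eigenvalues])  # [1.6, 1.5, 0.5, 0.4]
print(kaiser_retained(eigenvalues))  # 2
```

In this constructed case the criterion recovers the two clusters. With real data, eigenvalues close to 1 make the cut-off sensitive to sampling error, which is one reason the number of retained factors involves judgment.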

Another important issue when measuring knowledge and learning about CBT principles, as highlighted by the subject matter experts during the test construction procedure, is how to frame and measure flexibility in the use and application of explicit CBT strategies when constructing a multiple-choice test. The items in the knowledge test might capture the core point about tolerating and challenging one’s fears and internal negative reactions, rather than avoiding them by thinking positively or using safety behaviors. However, various avoidance strategies can also be helpful for clients in their everyday life. For instance, research has shown the potential functionality of safety behaviors in certain contexts (Rachman et al., 2008). Thus, one challenge in evaluating knowledge about CBT strategies is how to evaluate a pragmatic application of the strategies in question. Possessing declarative knowledge does not necessarily equal using it in real life, and the clinical relevance of the construct measured here needs to be examined further.

Despite these limitations, this study makes several important contributions. Hopefully, by describing one way to perform a test construction procedure, it can help the field keep developing psychometrically robust tests that enable more useful conclusions regarding what clients know, learn, and remember from ICBT.

Supplemental Material

Supplemental material, Appendix_A._Knowledge_test for Knowledge About Treatment, Anxiety, and Depression in Association With Internet-Based Cognitive Behavioral Therapy for Adolescents: Development and Initial Evaluation of a New Test by Matilda Berg, Gerhard Andersson and Alexander Rozental in SAGE Open

Footnotes

Acknowledgements

The authors of the current study would like to acknowledge the clinicians Maria Zetterqvist, Hana Jamali, and Helena Klemetz for their valuable contributions as subject matter experts during the test construction procedure. George Vlaescu is thanked for his excellent technical support. Furthermore, all the teachers and adolescents who participated are thanked for responding and providing feedback on the test.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by a grant from the Swedish Foundation for Humanities and Social Sciences, Grant P16-0883:1 (from the Swedish Central Bank). The funding source was not involved in the execution or content of the study.
