Abstract

The measure created by H. Li offers a useful tool for investigating justice perceptions among team members, or peer justice climate; however, more research is required to confirm its structure in light of competing models. This study provides evidence for the three-dimensional structure of peer justice climate within a multiethnic context and explores its relation to the outcome variable of team performance. Participants were 304 undergraduate students from universities in the multiethnic Republic of Trinidad and Tobago. Competing structures of peer justice climate were compared using exploratory and confirmatory factor analyses. Results indicated that peer justice climate is best conceptualized as having three dimensions (distributive, procedural, interactional) with an overarching justice factor connecting them. Suggestions are made for improving the measure. Procedural peer justice climate was found to have a significant positive relation with team performance.

Introduction

Organizations have evolved in many ways to meet the demands of a world that has been changed by globalization and technology. One relatively recent and highly popular development among organizations of all sizes is that of employee work groups or teams and their means of tapping into the talents of various persons for the fulfillment of a shared objective. Within the realm of organizational research, organizational justice describes the behaviors and attitudes persons develop in response to how fairly they perceive their workplace (Cropanzano & Ambrose, 2015; Cropanzano et al., 2001; H. Li, 2008; Moorman, 1991). Similarly, peer justice is a version of organizational justice based upon the feelings of fairness that colleagues within a work group have due to their treatment of each other. Empirical studies have found that peer justice can have far reaching effects on how individuals feel and how they interact with each other (Cropanzano et al., 2007). Despite this potential for explaining team behavior, peer justice climate has few examples of research upon which to base its utility (e.g., Cropanzano et al., 2007, 2011; H. Li, 2008; A. Li et al., 2013).

Currently, peer justice perceptions are measured using a questionnaire developed by Hongcai Li (H. Li, 2008; A. Li et al., 2007; Molina et al., 2015, 2016; Rupp et al., 2017). Li’s questionnaire comprises items taken from three separate sources, which were then adapted to measure the three subdimensions of peer justice (distributive, procedural, and interactional justice) when teammates are considered as the source of these perceptions. The peer justice climate measure in question has great potential for applicability in future organizational research as there is an increasing trend toward the use of work teams (Moreland et al., 1994).

The following is a brief introduction to organizational justice and the factors that affect it. Next, focus is given to a more recent expansion of justice theory: peer justice climate, the degree of fairness with which team members treat each other. After introducing the construct, the article outlines how the peer justice climate measure was constructed, with the objective of verifying its structure. This study also aims to provide evidence of the validity and internal consistency of the measure of peer justice climate in a multiethnic environment. Thus far, there have been few studies within the realm of peer justice. This gap underscores the need for repeated use of the construct’s only measure to create a variety of findings and expose the underpinnings of intergroup justice relations.

Organizational Justice

Organizational justice describes the behaviors and attitudes persons develop in response to how fairly they perceive their workplace (Cropanzano et al., 2001; H. Li, 2008; Moorman, 1991). Organizational justice perceptions have commonly been considered as having three dimensions: distributive, procedural, and interactional justice (Kovačević, Zunić, & Mihailović, 2013). Alternatively, there has been strong support for a four-dimensional model which replaces the interactional justice dimension with the two dimensions: interpersonal and informational justice (Colquitt, Conlon, Wesson, Porter, & Ng, 2001). The popularity of both the three-factor and four-factor models inspired a suggestion by Lind and Tyler (1988) to apply both models to research questions in an attempt to advance the field of organizational justice in general.

At the level of the individual, organizational justice perceptions can be affected by a range of variables. In summary, these variables can be placed into three major categories: (a) the outcomes one receives from the organization; (b) organizational practices such as procedures and quality of interactions; and (c) the characteristics of the perceiver. The effect each variable has on justice perceptions can vary and may also depend on or be interconnected with other variables; a common occurrence among psychological constructs. Some of the more prominent variables that fall into the category of outcomes one receives from the organization are salary, organizational support, outcome feasibility, and outcome satisfaction. The category of organizational practices can include variables such as voice, communication with employees, and leader–member exchange. Examples of characteristics of the perceiver include age, gender, cultural background, education type and level, organizational tenure (time spent within the organization), performance, level of personal compliance with decisions, as well as personality characteristics such as negative affectivity and self-esteem (Cohen-Charash & Spector, 2001; Colquitt et al., 2001).

The effects or outcomes of justice in organizations were found to be far-reaching, affecting the way persons feel and how they interact with each other (Lind & Tyler, 1988). Greenberg (1987, 1990) put it simply: if people believed that they were being treated fairly, they were more likely to have positive attitudes about their work and their work outcomes and were more satisfied with the decisions resulting from group procedures. Outcomes associated with organizational justice (procedural, distributive, or interactional) include work performance, organizational citizenship behavior (OCB), counterproductive work behavior, job satisfaction (various types), organizational commitment, employee turnover intentions, and trust (in management and in the organization). Work performance is strongly related to procedural justice, but hardly to distributive and interactional justice (Konovsky & Cropanzano, 1991). Procedural, distributive, and interactional justice types are all related to OCBs (Colquitt et al., 2001). Counterproductive work behaviors are also related to both procedural and distributive justice types. Findings also show that there exist similar relations among all justice types and job satisfaction (including supervisor satisfaction, union satisfaction, and intrinsic satisfaction). Only pay satisfaction is different, as it is more highly correlated with distributive justice than the other types of satisfaction, an effect that is predicted by social exchange theory (Colquitt et al., 2001). Organizational commitment is related to all three types of organizational justice. In more specific terms, affective commitment (emotional attachment to the organization) is related to all three types of justice, while continuance commitment (attachment to the organization that is based on an inability to quit rather than on positive endorsement of the organization) has been found to be negatively related to procedural and interactional justice (Tepper, 2000).
Employee turnover intentions are related to organizational justice in various ways (Daly & Geyer, 1994; Konovsky & Cropanzano, 1991). Specifically, procedural and distributive justice types both have been found to equally predict turnover intentions while interactional justice least predicted intentions (Colquitt et al., 2001). Procedural, distributive, and interactional justice types were all found to predict trust in organization/management. Furthermore, leader–member exchange quality is also related to all three justice types though it is most highly related to interactional justice, as this form of justice deals primarily with the relationship between persons in the organization (Colquitt et al., 2001; Folger & Konovsky, 1989; Konovsky & Cropanzano, 1991; Konovsky & Pugh, 1994).

Peer Justice Climate

Employee work teams have developed over the last 40 years into being one of the preferred methods of structuring work within most organizations (Stevens & Campion, 1994). In keeping with this widespread use of work groups, peer justice climate research emerged from organizational justice research to explore the subtleties and nuances that come out of how fairly persons perceive members within their team. In 2002, De Cremer found that respectful treatment by one’s teammates was related to individuals’ perceptions of inclusiveness and contribution toward the team. There was thus a move by researchers to go beyond the established sources of justice perceptions to consider the likelihood of team members or coworkers as being a source. Cropanzano et al. (2011) have described peer justice as the perception of fairness held by individuals—who work together within the same unit without formal authority over each other.

The dimensions of peer justice climate are defined in a similar way to those of organizational justice perceptions. Distributive peer justice climate perceptions are based on the rewards that team members receive relative to their contributions. Rewards must be seen as appropriate considering the effort each person has invested in achieving the team’s goals. Procedural peer justice climate perceptions are based on the fairness of decisions made by team members while also considering the consistency of these fair decisions. Team members must be allowed to voice their dissent and manage the decision-making process in a consistent and accurate fashion in keeping with the rules prescribed by Leventhal (1976). Interpersonal peer justice climate perceptions are based on the manner in which team members treat each other. Team members are expected to treat each other in a respectful manner and refrain from inappropriate interactions (Bies & Moag, 1986).

The dimensionality of peer justice was questioned in much the same way as that of organizational justice. In their theoretical model, Cropanzano et al. (2007) argued that peer justice, similar to its individual-level counterpart (organizational justice), should include three parallel dimensions: distributive, procedural, and interactional. Various researchers, however, have proposed differing models claiming otherwise. For example, Ambrose and Schminke (2007) proposed that all peer justice perceptions would fall within the realm of interpersonal justice as these perceptions are linked to the interpersonal treatment and communication between persons. They stated, “allocation decisions and the procedures used to make those decisions are not the role of coworkers” (p. 404). Otherwise put, peer justice could only be a subset of the interactional justice dimension of organizational justice. Further research comparing the outcomes of peer justice climate with those of organizational justice rejected the explanation of Ambrose and Schminke (2007) as inadequate, as peer justice climate and its dimensions produced team-level effects comparable to the individual-level effects produced by organizational justice (A. Li & Cropanzano, 2009). This type of result would not be possible if peer justice existed only as a subset of one of the dimensions of organizational justice.

Although research on peer justice climate has not been as extensive as that on organizational justice, there is evidence that the two constructs are likely to interact with other variables in similar ways. Thus far, empirical studies have linked peer justice climate to various outcome variables. A. Li and Cropanzano (2008) proposed that peer justice climate boosts the quality of interaction among team members. This enhanced quality goes on to engender favorable attitudes and OCBs. Results demonstrated that peer justice climate was related to teamwork quality, satisfaction with teammates, and unit-level citizenship behavior. Teamwork quality was also found to mediate the relationships between both distributive and procedural peer justice climate and satisfaction and OCB. In addition to these effects, Cropanzano et al. (2011) noted that interpersonal and procedural peer justice climate each explained variance in team processes beyond the effects found at the individual level.

According to the multifoci justice literature, there are various sources within the social environment capable of acting fairly or unfairly (A. Li et al., 2013). The level of fairness exhibited by these sources can vary such that an individual may be treated fairly by one source and unfairly by another. For example, one’s workplace may promote fair treatment with clearly outlined rules; however, the person administering these rules may do so in a biased and inconsistent manner. In such a situation, distributive organizational justice perceptions can be high (due to the clear rules), while procedural and interactional organizational justice perceptions may be understandably low (due to the procedures and persons). Individuals are likely to develop different justice perceptions for each of these sources and are capable of distinguishing these perceptions (Liao & Rupp, 2005).

Research to support investigating within unit relations came from De Cremer (2002), who found that respectful treatment by one’s teammates was related to individuals’ perceptions of inclusiveness and contribution toward the team. Also, Simon and Sturmer (2003) found that when group members treated each other with respect, a collective identification with the group developed, which motivated well-treated team members to make greater considerations for the team. Similarly, Lavelle and colleagues (2007) demonstrated through research that work group commitment mediated the relationship between the group’s procedural fairness perceptions and the citizenship behaviors expressed toward each other. These various sources of evidence called attention to the need for considering team members as a potentially important and influential source of justice perceptions.

H. Li (2008) therefore relies on the supposition that individuals can determine that justice perceptions are originating from their peers, differentiating these perceptions from traditional organizational justice perceptions. The author uses his understanding of justice as a guide to finding measures that are able to capture perceptions related to either team-level distribution, team-level procedure, or team-level interaction, and modified them to serve the required purpose of measuring peer justice climate perceptions.

Measure of Peer Justice Climate

As teamwork has become more of a norm within organizational contexts, the development of peer justice climate research is likely, and perhaps even essential (Rupp et al., 2017). At this time, only one published measure for peer justice climate was discovered within the organizational justice literature (see the appendix). The measure, constructed by H. Li (2008), primarily uses items from other measures that were deemed adequate, with some modifications, for capturing the desired construct based on its theoretical origins. It is important to point out here that Li’s approach must also be considered in the context of the multifocal justice literature on an individual’s ability to distinguish between sources of justice perceptions.

According to the version of the measure used in H. Li’s (2008) thesis, the items for the peer justice climate measure were carefully constructed by considering the theoretical basis for each dimension of peer justice climate. The rationale used for the measure was as follows: organizational distributive justice perceptions were based on a tendency of individuals to allocate resources based on rules of equity, equality, need, entitlement, or justified self-interest (Deutsch, 1975; Leventhal, 1976). Thus, to detect peer justice climate perceptions, Li adapted a measure of how team members contribute equitably to their team’s effort (George, 1992). Next, organizational procedural justice perceptions were based, in theory, on how persons assess the processes they are subjected to using general rules of consistency (e.g., the process is applied consistently across persons and time), bias suppression (e.g., decision makers are neutral), accuracy of information (e.g., procedures are not based on inaccurate information), correctability (e.g., appeal procedures exist for correcting bad outcomes), representation (e.g., all subgroups in the population affected by the decision are heard from), and ethicality (e.g., the process upholds personal standards of ethics and morality) (Leventhal, 1980; Leventhal et al., 1980). By revisiting these criteria, Li developed five items to evaluate the peer justice climate procedures used in teams. Third, Bies and Moag (1986) introduced organizational interpersonal justice as the human side of procedural justice, which considered the quality of treatment persons received while implementing procedures that, in turn, determined how outcomes were distributed. Li therefore based his items for measuring interpersonal peer justice climate perception on a four-item scale developed by Donovan et al. (1998) for determining the likelihood of teammates treating each other with respect and helping each other perform tasks.

As the measure for each dimension of the peer justice climate has been newly conceptualized, a practical follow-up would be to test its robustness. After the measure was formally introduced by H. Li (2008), few studies have been carried out to support its reliability and validity (e.g., Cropanzano et al., 2011; A. Li & Cropanzano, 2008, 2009; A. Li et al., 2013; Molina et al., 2015, 2016). Also, though there may be much evidence to support the structure of organizational justice perceptions in different cultures (Brockner et al., 2001; Shao et al., 2013; Shapiro & Brett, 2005), caution must be exercised before accepting that such differences exist in the same way for peer justice climate perceptions. One key reason for exploring the applicability of the measure to a diverse sample is to offer a contrasting example to the population with which the measure was initially designed. Existing studies have been taken from what can be described as Western, Educated, Industrialized, Rich, and Democratic (WEIRD) societies (Henrich et al., 2010). The use of WEIRD samples carries implications for construct validity of the peer justice climate measure, as such samples are arguably more of an exceptional example of human behavioral patterns than a norm. Second, on a theoretical level, organizational justice perceptions originate from factors within an organization whereas peer justice climate perceptions are based on the way one is treated by others. As there is this significant difference between the origins of these two constructs, peer justice climate may exhibit potential differences from organizational justice.

Application of Measure to a Diverse Culture

The setting for the study is in Trinidad and Tobago, a twin-island developing state in the Caribbean with a multiethnic population of approximately 1.3 million persons. The culture on these islands is primarily a mixture of African and East Indian, with a heavy influence from British, French, and Spanish cultures due to its colonial history. Furthermore, the country explicitly recognizes and celebrates the multiethnic nature and heritage of its population (Brown & Conrad, 2007). The location of this study may be considered as unique in its nature, due to its size as a small society, and its diversity due to its multicultural character which presents an interesting representation of various cultures. A sample from Trinidad and Tobago would therefore provide an excellent opportunity to demonstrate if peer justice climate perceptions maintain their dimensionality, internal consistency, and relation to performance. Rupp et al. (2017), in their review of organizational justice literature, emphasized that “little is known about how employees are affected by the challenge of working with culturally diverse others” (p. 943).

Peer justice climate perceptions are subjective in nature. Subjective perceptions present a challenge to construct validity among persons (Cronbach & Meehl, 1955). As persons differ, their interpretation of scale items is likely to differ. This leaves the question of whether or not the measure of peer justice climate is a reflection of the same construct from person to person. In a multicultural context, as that of Trinidad and Tobago, persons of different ethnicities may be likely to interpret the peer justice climate measure differently. Any variety of interpretations may be caused by difference in cultural backgrounds which may assign distinct meanings or interpretations to similar stimuli.

Hypotheses

Peer justice climate research, as a relatively new offshoot of organizational justice research, requires support to determine its potential for explaining team dynamics. Support for its structure is possible through the use and improvement of its measure. The measure provided by H. Li (2008) is likely to produce data that reflects a three-dimensional structure as opposed to a one-dimensional structure.

In addition to the premise that peer justice climate will be made up of three dimensions, there has also been evidence to support that these three dimensions form part of an overall peer justice climate factor (H. Li, 2008; A. Li et al., 2013).

Empirical studies have found that peer justice climate can have far-reaching effects on how individuals feel and how they interact with each other (Cropanzano et al., 2007). However, no direct comparisons have been made with team performance. The relationship between organizational justice perceptions and performance suggests that there may be a similar relationship between peer justice climate perceptions and team performance (Konovsky & Cropanzano, 1991). It is expected that individuals with more positive peer justice climate perceptions will have better team performance.

Method

Participants

Researchers A. Li et al. (2013) suggested that student work teams are analogous to real-world teams with practical consequences for their members. As such, data were collected from 304 undergraduate students (N = 304) of the three largest universities in the country. Students were allowed to participate only if they had already undergone a group project for a shared grade while studying at their respective universities. Participation was voluntary and participants were recruited via convenience sampling during the same week around the middle of the semester. Participant ages across all university samples ranged from 17 to 30 years old (M = 21.16, SD = 2.39). Fifty-four percent of the students were female. The total population of Trinidad and Tobago is estimated at 40% Black, 40% East Indian, 18% mixed, with the remaining 2% accounting for White, Chinese, and other races (Trinidad and Tobago Population Census, 2000). Similarly, the racial distribution of the sample was 34% Africans, 33% East Indians, and 31% Mixed race. Of the remaining 2%, 1% identified their race as “Other,” while the remaining 1% remained unidentified.

Measures

The Measure of Peer Justice Climate (α = .77) was used for collecting data on participants’ peer justice climate perceptions (Li et al., 2008). This measure was compiled using measures developed separately for procedural, distributive, and interactional justice. The items that measure distributive peer justice climate (α = .33) are based on a scale developed by George (1992) for determining the extent to which team members contribute equitably to the efforts of the team (example item: “The grade that my teammates have received for the projects is appropriate considering the quality of the work they have completed”). The items that measure procedural peer justice climate (α = .58) are similar to those written by Colquitt (2001) and evaluate the procedures used within the team (example item: “The way my teammates make decisions is applied consistently”). These items were based on criteria initially proposed by Leventhal (1976). The items measuring interactional peer justice climate (α = .76) were developed by Donovan et al. (1998) and assess the extent to which teammates treat each other with respect and help each other perform tasks (example item: “My teammates treat each other with respect”). The measure used a 5-point Likert-type scale (1 = strongly disagree; 5 = strongly agree) for all items.

Performance data were collected from students’ self-reports of their grades from a team project on which they worked. Team projects were collaborative research assignments that required students to present their findings together in a written paper for a shared grade. The main requirement for participation was that the grade reported should be one that was assigned to the team and not individually. The grade was reported as a value out of 100 to ensure a consistent value across varying types of scoring.

Procedure

Student participants were screened and invited by trained research assistants to participate in the study confidentially. Each participant was individually informed and consent was received after which the interested participants were ushered to a quiet public space near to the data collection site at each university to complete a questionnaire. Upon completion, each student was thanked for their willingness to participate and allowed to leave contact details if they wished to receive a follow-up email with results after the study was completed.

Data Analysis

Exploratory factor analysis (EFA) and confirmatory factor analysis (CFA) were both used to test the dimensionality of the peer justice climate questionnaire (PJQ). For the extraction method, organizational justice factors have traditionally been analyzed using either maximum likelihood estimation (MLE) or the more robust unweighted least squares (ULS) estimator, which has been the preferred method for data derived from Likert-type scales (e.g., Castaño & García-Izquierdo, 2018; Lloret-Segura et al., 2014). Results from both methods are reported for comparison. Model fit of CFAs was evaluated by the following indices: chi-square test (χ2), comparative fit index (CFI), normed fit index (NFI), and the root mean square error of approximation (RMSEA). Pearson’s r correlational analyses were used to examine the relationship between peer justice climate and performance. Scale reliability was verified using Cronbach’s alpha. The Statistical Package for the Social Sciences (SPSS) version 25 was used for running EFA, correlational, and reliability analyses, while the software package MPlus was used to run all CFAs.

Results

The complete sample contained 304 undergraduate students (N = 304) from the three universities. Average scores for peer justice climate for the entire group are listed in Table 1, alongside the sample’s asymmetry and kurtosis coefficients, the correlations between the various subscales of the peer justice climate measure, and their corresponding alpha values. The means of item responses of the PJQ ranged from 2.17 to 3.74, with standard deviations for individual items ranging from 0.89 to 1.19. As the data were collected from three different universities, average student scores on each dimension were compared across universities using a one-way analysis of variance (ANOVA). These results showed no significant difference in scores by university of origin; thus, all subsequent analyses were carried out using the entire sample.

Table 1. Correlation Between Subscales of Peer Justice Climate.

Correlation is significant at the .01 level (two-tailed).

The factor analysis with the ULS method used an oblique rotation, as variables are linked theoretically and were expected to be connected by a single, second-order justice factor. Three factors were produced (eigenvalue of Factor 1 = 4.43; Factor 2 = 2.01; Factor 3 = 1.34; variance explained by Factor 1 = 31.66%; Factor 2 = 14.34%; Factor 3 = 9.56%), which accounted for 55.57% of the variance. Bartlett’s test of sphericity was significant, χ2(91) = 1,358.29, p < .001, an indication that the data were suitable for the factor analytic model. The Kaiser–Meyer–Olkin (KMO) measure of sampling adequacy was a satisfactory 0.82; however, item communalities were quite low and ranged between 0.19 and 0.60. Furthermore, factor loadings were irregular, with various items loading onto incorrect factors when considering the proposed theoretical model (H. Li, 2008). The MLE method showed similar results with a significant result on Bartlett’s test of sphericity, χ2(91) = 1,358.29, p < .001, and a KMO of 0.82. The factor loadings of the MLE solution proved less adequate for distinguishing the underlying factors, as various items either loaded on more than one factor or did not load on any factor at all. Factor loadings for both the ULS method and the MLE can be found in Table 2. For the sake of comparison, an EFA using the ULS method of extraction and promax rotation was done, forcing the extraction of one factor. The one-factor solution produced a poor fit (eigenvalue of Factor 1 = 4.43) and explained only 31.7% of variance.
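The reported percentages and degrees of freedom can be cross-checked with simple arithmetic. The sketch below assumes that the eigenvalues are those of the item correlation matrix (so each factor's share of variance is its eigenvalue divided by the number of items) and that the full measure has 14 items and the simplified measure 9 items; the item counts are not stated directly in the text and are inferred here from the degrees of freedom of Bartlett's test, p(p − 1)/2. The function names are illustrative only.

```python
def variance_explained_pct(eigenvalue: float, n_items: int) -> float:
    """Percentage of total variance attributed to one factor.

    Assumes standardized items (correlation matrix), so total variance
    equals the number of items.
    """
    return 100.0 * eigenvalue / n_items


def bartlett_df(n_items: int) -> int:
    """Degrees of freedom of Bartlett's test of sphericity: p(p - 1) / 2."""
    return n_items * (n_items - 1) // 2


# Assumption: item counts inferred from the reported df of 91 and 36.
N_ITEMS_FULL = 14
N_ITEMS_SIMPLIFIED = 9

# Eigenvalues reported for the three-factor ULS solution (full measure).
full_eigenvalues = [4.43, 2.01, 1.34]
full_pct = [variance_explained_pct(e, N_ITEMS_FULL) for e in full_eigenvalues]

print("df (full measure):", bartlett_df(N_ITEMS_FULL))
print("df (simplified measure):", bartlett_df(N_ITEMS_SIMPLIFIED))
print("variance explained per factor (%):", [round(p, 2) for p in full_pct])
print("cumulative variance explained (%):", round(sum(full_pct), 2))
```

Because the eigenvalues are reported to two decimals, the recomputed percentages can differ from the published ones in the second decimal place; agreement to within about 0.1 percentage points is what this check is meant to show.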

Table 2. Exploratory Factor Analysis Loadings for the Methods of ULS and MLE for Complete PJC Measure (N = 298).

Note. Loadings lower than 0.400 were omitted. Loadings in a different factor from expected are italicized. ULS = unweighted least squares; MLE = maximum likelihood; PJC = peer justice climate.

Simplification of Measure

On the basis of the indicators set out above, it was considered necessary to simplify the peer justice climate measure developed by H. Li (2008). Items were removed from the measure in an attempt to produce a factor structure that would explain more variance (e.g., Molina et al., 2015). To address the cross-loading of items, the decision was taken to eliminate five items (Items DJ1, DJ2, PJ2, PJ3, and IJ1). Items DJ1 and DJ2 were the first two items of the subscale for distributive peer justice climate; Items PJ2 and PJ3 came from the subscale for procedural peer justice climate; and Item IJ1 was the first item on the subscale for interactional peer justice climate. Item DJ1 was eliminated for the negative factor loading it produced compared with the other positively loaded items on the same factor, while Items DJ2, PJ3, and IJ1 were eliminated due to their consistent loadings onto the wrong factor regardless of the method used, without having a comparable loading on the expected factor, as was the case with Item IJ4 (see Table 2). Item PJ2, the second item of the procedural peer justice climate scale, was retained in the first instance, but was eventually also eliminated as it consistently had a low factor loading.

An EFA using the ULS method of extraction was done for the simplified measure and produced three factors which explained a total of 67.26% of variance, roughly 11 percentage points more than the previous version of the measure (eigenvalue of Factor 1 = 3.21; Factor 2 = 1.77; Factor 3 = 1.08; variance explained by Factor 1 = 35.65%; Factor 2 = 19.64%; Factor 3 = 11.97%). The KMO was 0.75 and Bartlett’s test was significant, χ2(36) = 837.91, p < .001, indicating that conditions were adequate for the EFA. For the sake of comparison, an analysis using the MLE was done; however, results were identical and are therefore not reported. The measure with the omitted items produced better factor loadings, which were in keeping with expectations. Distributive peer justice climate items loaded onto the first factor, interactional peer justice climate items onto the second, and procedural peer justice climate items onto the third, with only one instance of cross-loading (IJ4), which also happened to be the lowest factor loading of all. Factor loadings of the simplified peer justice climate measure using both the ULS and MLE are reproduced in Table 3.

Exploratory Factor Analysis Loadings for the Methods of ULS and MLE for Simplified PJC Measure (N = 300).

Note. Loadings lower than 0.400 were omitted. Loadings in a different factor from expected are italicized. ULS = unweighted least squares; MLE = maximum likelihood; PJC = peer justice climate.

Cronbach’s alpha for the complete peer justice climate measure was acceptable at .76; however, this value increased to .82 when Item DJ1, related to distributive peer justice climate, was removed from the measure (Item DJ1: Some of my teammates received a better grade for the course/coursework than they would have deserved). Cronbach’s alpha for the simplified version of the measure was .77, a marginal increase over the original value, and consistency within each of its subscales was greater.
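To illustrate how removing a single item can raise Cronbach’s alpha, the sketch below computes alpha and "alpha if item deleted" with the standard formula, alpha = k/(k − 1) × (1 − Σ item variances / variance of total scores). The response data are hypothetical and invented purely for illustration; they are not the study data. The fourth item is constructed to run against the other three, loosely mimicking an item that behaves like DJ1.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(rows[0])                      # number of items
    items = list(zip(*rows))              # transpose to one tuple per item
    item_variance_sum = sum(variance(item) for item in items)
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


def alpha_if_item_deleted(rows, index):
    """Alpha recomputed after dropping the item at position `index`."""
    reduced = [[score for j, score in enumerate(row) if j != index]
               for row in rows]
    return cronbach_alpha(reduced)


# Hypothetical responses (5 respondents x 4 items); item 4 runs opposite
# to items 1-3.
responses = [
    [4, 5, 4, 2],
    [2, 2, 3, 4],
    [5, 4, 5, 1],
    [3, 3, 3, 3],
    [1, 2, 1, 5],
]

print(f"alpha, all items:      {cronbach_alpha(responses):.2f}")
print(f"alpha, item 4 removed: {alpha_if_item_deleted(responses, 3):.2f}")
```

With these invented data, alpha rises sharply once the inconsistent item is dropped, which is the same pattern, in exaggerated form, as the change from .76 to .82 described above.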

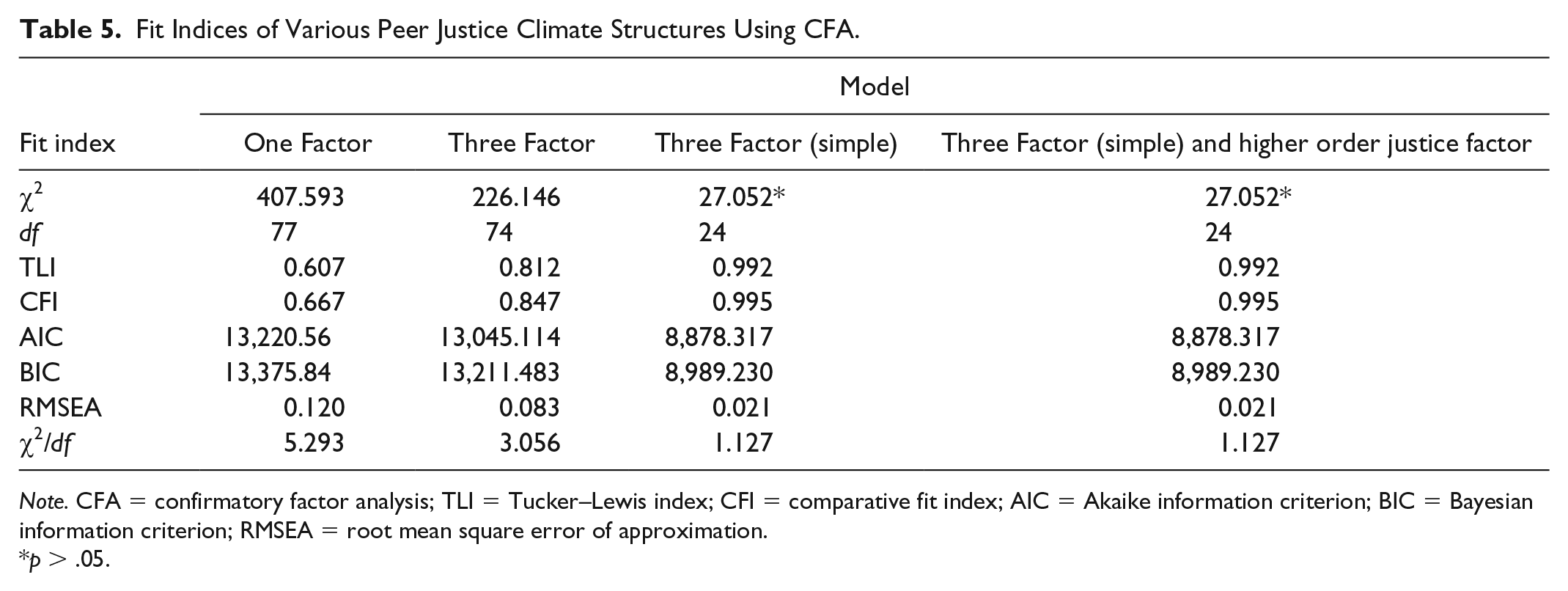

In preparation for the CFA, the Tucker–Lewis index (TLI), comparative fit index (CFI), Bentler–Bonett normed fit index (NFI), RMSEA, and the χ2/degree of freedom ratio were calculated as the basis for determining which model had the best fit (Browne & Cudeck, 1993; Hoyle, 1995; Hu & Bentler, 1999). The model fit for the one-dimensional model, the three-dimensional model, and the three-dimensional model using the simplified measure are compared in Table 4.

Fit Indices of Various Peer Justice Climate Structures Using the MLE Method in the EFA.

Note. (a) CFI and TLI values of 0.90 to 0.95 indicate acceptable fit and values above 0.95 indicate good fit; (b) RMSEA values of 0.05 or lower indicate a well-fitting model, values of 0.05 to 0.08 indicate a moderate fit, and values of 0.10 or greater indicate a poor fit; (c) χ2/degree of freedom ratio values between 1 and 3 indicate a great fit, with values below 5 being acceptable (Carmines & McIver, 1981; Jöreskog, 1970). MLE = maximum likelihood; EFA = exploratory factor analysis; NFI = normed fit index; CFI = comparative fit index; TLI = Tucker–Lewis index; RMSEA = root mean square error of approximation.

CFAs

The structure of the peer justice climate measure was examined using CFA. The simplified three-dimensional model for peer justice climate, which separated peer justice climate perceptions into distributive, procedural, and interactional justice dimensions (χ2 = 27.05, df = 24, p > .05; χ2/df = 1.127; CFI = 0.995; TLI = 0.992; RMSEA = 0.021), was superior to the one-dimensional structure that combined all factors into one (χ2 = 407.59, df = 77, p < .001; χ2/df = 5.29; CFI = 0.667; TLI = 0.607; RMSEA = 0.120), and was also superior to the three-dimensional structure of the original model containing all items (χ2 = 226.146, df = 74, p < .001; χ2/df = 3.056; CFI = 0.847; TLI = 0.812; RMSEA = 0.083). To replicate the structure proposed by H. Li (2008), in which the three peer justice climate dimensions are part of a single “higher order justice factor,” a CFA that included a second-order structure was run. The results were the same as for the three-dimensional model (χ2 = 27.05, df = 24, p > .05; χ2/df = 1.127; CFI = 0.995; TLI = 0.992; RMSEA = 0.021), showing no improvement of fit. Table 5 shows the full details for all the fit indices of the models tested. Results for the modified questionnaire show good support for H1 and H2, whereas the original questionnaire did not provide evidence strong enough to clearly support the second hypothesis.
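The χ2/df ratios and RMSEA values reported above can be reproduced from the χ2 statistics and degrees of freedom. The sketch below is illustrative only. It assumes N = 300 (the sample size given in the caption of the EFA loadings table) and uses one common RMSEA convention, sqrt(max(χ2 − df, 0) / (df × (N − 1))); some software divides by N instead, and the software used in the study is not stated. The dictionary labels are shorthand introduced here.

```python
from math import sqrt

N = 300  # assumption: sample size taken from the EFA table caption


def chi2_df_ratio(chi2, df):
    """Relative chi-square: the chi-square statistic divided by its df."""
    return chi2 / df


def rmsea(chi2, df, n):
    """Root mean square error of approximation (N - 1 convention)."""
    return sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


# (chi-square, df) pairs as reported in the text.
models = {
    "simplified three-dimensional": (27.05, 24),
    "one-dimensional": (407.59, 77),
    "original three-dimensional": (226.146, 74),
}

for label, (chi2, df) in models.items():
    print(f"{label}: chi2/df = {chi2_df_ratio(chi2, df):.3f}, "
          f"RMSEA = {rmsea(chi2, df, N):.3f}")
```

Under these assumptions the computed values match the reported ones to three decimals, which suggests the reported indices are internally consistent with a sample of about 300.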

Fit Indices of Various Peer Justice Climate Structures Using CFA.

Note. CFA = confirmatory factor analysis; TLI = Tucker–Lewis index; CFI = comparative fit index; AIC = Akaike information criterion; BIC = Bayesian information criterion; RMSEA = root mean square error of approximation.

p > .05.

The simplified peer justice climate structure was then used to calculate participant scores for each of the peer justice climate subscales (distributive, procedural, interactional) as well as an overall score on peer justice climate. These new values were preferred for use in the correlational analyses for testing the remaining hypotheses. The updated correlations between the subscales of the peer justice climate measure are shown in Table 6.

Correlation Between Subscales of Simplified Peer Justice Climate Measure (PJC).

Note. PJC = peer justice climate.

Correlation is significant at the .01 level (two-tailed).

There was a positive relationship between peer justice climate and student team performance, r(288) = .17, p < .01. As peer justice climate perceptions increased, so did the team’s performance scores; however, only a minuscule amount of the variance in performance (r2 ≈ .03, or about 3%) could be explained by peer justice climate, or vice versa. The relationship between peer justice climate perceptions was then examined controlling for the effects of team performance scores and the correlation decreased, r(281) = .21, p < .01.
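The size of the reported correlation can be put in context by converting it to shared variance (r squared) and to a t statistic with the reported degrees of freedom, using t = r × sqrt(df / (1 − r²)). The sketch below is a worked check rather than a reanalysis; it uses only the reported r and df, and the function names are illustrative.

```python
from math import sqrt


def shared_variance(r):
    """Proportion of variance two variables share: r squared."""
    return r ** 2


def t_from_r(r, df):
    """t statistic for testing a Pearson correlation against zero."""
    return r * sqrt(df / (1 - r ** 2))


r, df = 0.17, 288  # values reported for overall peer justice climate

print(f"shared variance: {100 * shared_variance(r):.1f}%")
print(f"t({df}) = {t_from_r(r, df):.2f}")
```

The resulting t value is a little under 3, which exceeds the two-tailed critical value at the .01 level for this many degrees of freedom (roughly 2.59), so the reported significance is consistent with a correlation that nonetheless accounts for only about 3% of the variance.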

Each dimension of peer justice climate was also correlated with the outcome variable performance. Distributive peer justice climate showed no relation to performance. Procedural peer justice climate showed a significant relation with performance, r(287) = .24, p < .01. Interactional peer justice climate showed no relation to performance.

Discussion

H1 proposed a three-dimensional structure of peer justice climate perception as opposed to a one-dimensional structure. This was tested within a multiethnic context both to replicate earlier findings and to shed some light on how robust the measure proves to be when used with a multiethnic sample. Findings showed a three-dimensional structure of peer justice climate (distributive, procedural, and interactional) to be a better fit to the data than a one-dimensional structure (A. Li et al., 2013). These findings support the hypothesis and are also in line with the limited recent research on peer justice climate (Molina et al., 2015, 2016). H2 proposed that the three dimensions of peer justice climate are indicators of an overall peer justice climate factor. The results of the CFA support this model; however, the three-dimensional model that includes an overarching justice factor does not seem to fit the data any better than the more parsimonious three-dimensional model without an overarching justice factor. Note that the hypotheses were supported when the simplified version of the measure, which included nine questions (three for each dimension), was used. The original measure produced poorer results with the multiethnic sample.

With respect to the original measure, the data showed that although three distinct dimensions were produced, participant responses caused some questions to load onto dimensions not consistent with what they were expected to measure. This error in loading should not occur given the claims of Cohen-Charash and Spector (2001), who propose that participants should be able to distinguish between the various dimensions of peer justice climate despite their strong association. Thus, although the data gained using the original measure fit the three-dimensional model, the fit of the model was improved when the measure was simplified by eliminating a few questions. The changes made to the original measure also had a few other effects. There was an increase in the alpha level of the subscale for distributive peer justice climate, while the other subscales maintained more or less stable alpha levels. The alpha level of the entire scale also increased from .76 to .77, indicating a marginal improvement in internal consistency. Furthermore, the simplified scale reduced the strength of the correlations of the three subscales with each other (Table 6). This allows for a clearer distinction between scales and, with further research, may allow for better distinctions of the relation that each peer justice climate dimension has with antecedent or outcome variables.

The need to eliminate several of the questions to allow for a more internally consistent model suggests that if the questions of the original measure are to be retained, they may need to be clarified for use in new contexts such as within multiethnic societies. This may have been the case, for example, with the first question of the subscale for distributive peer justice climate (Question DJ1), which seriously affected the internal consistency of the subscale, as well as the ability of the entire measure to fit the model. Although the results obtained from this question may be considered as a potential “bad reading” of this question, similar results were also found by Molina et al. (2015). One must, therefore, be open to consider the possibility that the diversity of the groups used for this study may have had an influence on participant responses.

H3 sought to investigate the relations between peer justice climate and team performance. The null hypothesis was rejected in this case, as there was a significant relation. When the dimensions of peer justice climate were examined individually, only procedural peer justice climate produced a significant positive relation with team performance. These results are consistent with the literature on the proposed effects of peer justice climate. The findings of Konovsky and Cropanzano (1991) also demonstrated that work performance related significantly to procedural justice, but not to distributive and interactional justice. These results are reasonable considering that peer distributive justice climate perceptions should reflect perceptions linked to how well rewards (grades) were being distributed among teammates. In the case of group work, the grades received were likely to be perceived as disconnected from the actions of colleagues in the same group. Procedural peer justice climate perceptions, however, were more likely to be linked to the actions of colleagues, as they were based on the fairness and consistency of decisions made by team members as well as the ability of team members to voice their dissent and manage the decision-making process (Leventhal, 1976). Thus, a team member could have more easily linked the team’s internal procedures to the team’s output, which led to the grade received (performance).

Limitations

The relation between overall peer justice climate perception and team performance was lower than expected. This may have been due to the varying methods of grading used across the institutions, courses and projects within the sample collected. Furthermore, there was no certainty in determining what each reported final grade included or what part of the grade could be ascribed to individual efforts. More controlled parameters for collecting performance data could yield a stronger correlation between variables. The research study would have been better served by using multi-item measures of variables. Finally, there is, at the time of this publication, no other published measure of peer justice climate perceptions that could be used to compare construct validity.

Conclusion

In sum, the measure originally proposed by H. Li (2008), and used in various studies (e.g., Cropanzano et al., 2011; A. Li et al., 2013; Molina et al., 2015, 2016), can be used for the study of peer justice climate perceptions within a multiethnic context, although it may be necessary to eliminate some of the items initially proposed or modify the measure in some other way. The modified version of the measure used in this study identifies peer justice climate as having a three-dimensional structure.

When performance is considered, the measure is most useful in determining how persons feel about the fairness of the group’s operations (procedural peer justice climate) as the group produced its outcome. The measure is unable to make meaningful links about the fairness of how rewards are distributed for performance (distributive peer justice climate) unless there is sufficient information on the performance of others as well as some mechanism that links the distribution of rewards to the teammates involved. As for the link between performance and the respectful interactions between team members (interactional peer justice climate), again, the measure cannot provide much feedback unless a more obvious link can be made as to how one variable affects the other. Finally, as results were derived from student teams as opposed to work teams, some caution must be exercised in extending these findings to work teams and the work environment.

Appendix

Measure of Peer Justice Climate from A. Li et al. (2007):

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.