Abstract

The Vietnam Era Health Retrospective Observational Study (VE-HEROeS) is a nationwide study designed to compare the health of U.S. Vietnam era veterans to that of age- and sex-matched U.S. residents. Two self-administered mail questionnaires, one for veterans and the other for the U.S. nonmilitary population, were developed using already validated and newly developed items. A pretest was conducted to evaluate item recall and comprehension, new-item response validity, and the overall survey experience (usability of survey materials including the screener questionnaire for nonveterans). Subject recruitment was completed using convenience sampling and a $50 incentive. Cognitive interviewing and usability interviewing, two qualitative research methods, were implemented. Interviews were conducted in two stages (Stage 1, cognitive interviewing, n = 12; Stage 2, usability testing, n = 8) by three experienced methodologists. Concurrent probing techniques, unscripted probes, and retrospective probing were used to elicit responses from 14 veterans and six nonveterans (mostly male, White, and aged 65-70 years). Information about the overall survey process was also obtained through observation during usability testing. Results indicate that qualitative research is an important part of developing questionnaires for older veterans because of issues involving comprehension, interpretability, and recall.

The Vietnam Era Health Retrospective Observational Study (VE-HEROeS) is a large nationwide study designed to assess and compare the current health and well-being of U.S. Vietnam era veterans with the health of age- and sex-matched U.S. residents who never served in the military (Vietnam era veterans comprise all individuals who served in Vietnam, Cambodia, or Laos, or other areas of the world during the Vietnam War). The study employed two self-administered mail questionnaires. One was sent to a stratified random sample of veterans who were selected from a sampling frame of all known individuals who served in the U.S. military from 1961 to 1975 and the other to U.S. residents selected from an enumeration of a sample of households with U.S. mailing addresses.

The use of qualitative methods as a primary step in questionnaire development is important (Dillman, Smyth, & Christian, 2014; Levine, Fowler, & Brown, 2005) because it improves the quality of the survey data collected. Although large surveys of Vietnam era veterans have been conducted in the past, there has been limited published research that discusses any qualitative testing of the survey questionnaires used. This is the case for major studies conducted by the U.S. Centers for Disease Control and Prevention (CDC) between 1984 and 1988 that examined morbidity and mortality in Army Vietnam War veterans (CDC, 1987; CDC Vietnam Experience Study, 1988a, 1988b, 1988c; The Selected Cancers Cooperative Study Group, 1990); other major Vietnam War veteran studies such as the National Survey of the Vietnam Generation, one of three components of the National Vietnam Veterans Readjustment Study (Kulka et al., 1988; Kulka et al., 1990); the 2012-2013 National Vietnam Veterans Longitudinal Study (Schlenger et al., 2015); and the 2013-2015 HealthViEWS focused on women Vietnam War veterans (Magruder et al., 2015). Cognitive interviewing was conducted and described in a published report for the U.S. Department of Veterans Affairs’ (VA) 2010 National Survey of Veterans to identify problems with questionnaires prior to final fielding of the survey (VA, 2010). Much of the qualitative testing of large-scale survey questionnaires has been conducted on the U.S. general population (National Center for Health Statistics, CDC, 2017, 2018; U.S. Census Bureau, 2014).

Veterans, particularly those veterans who lived through the “Vietnam experience” (Boyle, Decouflé, & O’Brien, 1989), may differ from nonveterans in their approach to surveys. This is due to many factors: their stigmatization derived from involvement in a very unpopular war, the lack of community support after returning from the war theater (Boscarino et al., 2018) and poor reintegration into civilian life, and their various deployment-related exposures that have created prolonged effects on their physical and mental health status (Boyle et al., 1989; Kulka et al., 1988; Magruder et al., 2015; National Academies of Sciences, Engineering, and Medicine, 2016; Pease, Billera, & Gerard, 2016; Schlenger et al., 2015; Spiro, Settersten, & Aldwin, 2016). Thus, qualitative methods are useful in assessing veterans’ perceptions and interpretation of questionnaire items, and their receptivity to surveys prior to main questionnaire administration. Changes to questionnaires made after qualitative testing can improve question wording, recall, and overall response quality and quantity.

Two types of qualitative testing that are frequently employed by social science researchers to reduce response error are cognitive interviewing and usability interviewing (Dillman et al., 2014). Cognitive interviewing achieves this by identifying problems during a four-stage response process outlined in a model proposed by Tourangeau (1984): (a) comprehension (understanding the questions and instructions), (b) retrieval of information from memory (remembering the details to answer the question), (c) judgment (developing an answer based on retrieval), and (d) response selection (choosing an answer that corresponds with the previous three steps) (Groves et al., 2009; Tourangeau, Rips, & Rasinski, 2000). It can also include an assessment of the subject’s understanding and interpretation of all questionnaire items and the difficulty of the questionnaire.

The goal of cognitive interviewing is to make the questionnaire as easy to comprehend as possible, to make it understood in the same way by all respondents, and to make each response reflect the intent of the question reliably across subjects (Dillman et al., 2014; Fowler, 2014; Groves et al., 2009). In contrast, usability interviewing aims to minimize the effort a respondent needs to complete the questionnaire correctly, which includes understanding instructions, navigating skip patterns, and reading the text easily. Usability interviews are also an opportunity to evaluate the length of time it takes the respondent to complete the questionnaire (Fowler, 2014). Overall, the testing process improves the validity of responses (Willis, 2005) by identifying sources of response error related to question complexity, question patterns, and the formatting and appearance of questionnaires.

The objective of this article is to share our experiences working with veterans and their civilian counterparts as part of the development of VE-HEROeS, the first comprehensive and comparative survey of Vietnam era veterans’ health in nearly 30 years. Specifically, we describe the qualitative methods used to improve the VE-HEROeS questionnaires and report our findings.

Method

Qualitative testing for VE-HEROeS occurred in spring 2016. Both the qualitative testing and survey administration protocols were approved by the VA Central Institutional Review Board (IRB). Because the qualitative testing and all remaining phases of the VE-HEROeS study were administered by Westat, a private contractor based in Rockville, MD, approval to conduct the qualitative research was also obtained from the Westat IRB.

VE-HEROeS Survey Questionnaires

The predominant mode of data collection in VE-HEROeS was a mailed, self-administered questionnaire of 91 items (veteran version) or 78 items (nonveteran version, for the nonmilitary U.S. population). A screener questionnaire was also administered initially to the nonveteran sample to identify potentially eligible participants. In developing the VE-HEROeS questionnaires, some topics of interest had not been explored before by the VA or in other research studies and required new question development, while the majority of survey questions had already been validated or were previously used. Because questionnaires often expand beyond their original intent during development, a study team member also reviewed the final draft of the questionnaire to ensure that each question related to one or more of the specific aims of VE-HEROeS. Questionnaires were also reviewed by a research study steering committee that was composed primarily of Vietnam theater veterans (those who served in Vietnam, Cambodia, or Laos), clinicians who treat Vietnam War veterans, survey research experts, and representatives of veterans service organizations (VSOs). These members provided further input into the usefulness and wording of items and the pretest design (e.g., they suggested increasing the pretest sample from 12 to 20), as well as input on other issues throughout the study’s course. Qualitative methods were then implemented to determine whether the new questionnaire items were comprehensible and usable. No quantitative analysis of responses was conducted beyond the reporting of basic frequencies of the characteristics of the sample.

In addition, because the veteran population and the nonveteran control group consisted of individuals whose average age is 70 years, we wanted to pay special attention to the ability of respondents to recall information and to assess the usability of the questionnaire—that is, making sure that the font was easily read and instructions easily followed.

The validated questions that were used assessed overall physical and mental health as well as selected aspects of psychological health; they included the eight-item Short Form Health Survey (SF-8) (Ware, Kosinski, Dewey, & Gandek, 2001), Brief Trauma Questionnaire (Schnurr, Vielhauer, Weathers, & Findler, 1999), Kessler-6 (Kessler et al., 2002), Primary Care Posttraumatic Stress Disorder (PTSD) Screen for Diagnostic and Statistical Manual of Mental Disorders (5th ed.; DSM-5; American Psychiatric Association, 2013) (PC-PTSD-5) (Prins et al., 2016), Functional Assessment of Cancer Therapy–Cognitive Function (FACT-COG) (Wagner, Sweet, Butt, Lai, & Cella, 2009), and the Alcohol Use Disorders Identification Test-Consumption (AUDIT-C) (Bush et al., 1998).

Although there were separate versions of the questionnaire for veterans and nonveterans, the questions themselves were identical where possible. The main differences between the two questionnaires were that the veteran questionnaire included military service questions that the nonveteran questionnaire did not, and some questions queried experiences and attribution of health conditions relating back to the Vietnam War on the veteran questionnaire while the nonveteran questionnaire asked for health concerns related to one’s past and current occupations.

Qualitative Testing

Recruitment of qualitative testing participants

Subjects for the qualitative testing of the questionnaire were recruited using convenience sampling. This sampling approach is frequently used in qualitative research where samples of 12 to 16 are typically utilized (Dillman et al., 2014; Luborsky & Rubinstein, 1995). Although the use of small samples in qualitative research makes bias highly probable (Marshall, 1996), the sampling process applied for this pretest was purposive. The samples of veterans who participated were heterogeneous and representative with regard to basic characteristics of the population under study such as military service, sex, age, and race/ethnicity. Similarly, we attempted to achieve the same range of characteristics when collecting the nonveteran sample. The collection of a large amount of verbal, open-ended responses limited the numbers that could be feasibly used for evaluation.

We oversampled veterans because it is our contention that veterans, especially those veterans who lived through the “Vietnam experience” (Boyle et al., 1989), may differ from nonveterans in their approach to surveys due mainly to service-related factors (Boyle et al., 1989; Kulka et al., 1988; Magruder et al., 2015; National Academies of Sciences, Engineering, and Medicine, 2016; Pease et al., 2016; Schlenger et al., 2015). Veterans of various conflicts, especially those of the Vietnam War, have mental health issues related to their service (Jordan et al., 1991; Kulka et al., 1988; Magruder et al., 2015; Marmar et al., 2015; Schlenger et al., 2015). Although past surveys of these veterans have reported good overall survey response rates (CDC, 1988; Schlenger et al., 2015), the sensitivity of questionnaire items about military service and the willingness to engage in research were still in question in this population. Moreover, our research focus was on veterans, and it was therefore vitally important to obtain sufficient numbers to allow for justifiable changes to the survey questionnaire. Recruitment of veterans could also be affected by health status, which has frequently been shown to be much poorer in veterans than in nonveterans (Agha, Lofgren, VanRuiswyk, & Layde, 2000; Kulka et al., 1988; O’Toole et al., 1996).

Recruitment strategies differed for veterans and nonveterans. Subjects were recruited using various venues including the community local to Westat in Rockville, MD. For both groups, the study contractor advertised the study on their intranet and posted flyers in their office buildings, inviting individuals other than Westat employees to take part. For veterans, the research team also worked with VSOs to elicit study participation through the assistance of the VE-HEROeS steering committee members—this added to the legitimacy of the research that in turn helped with recruitment. All recruitment materials gave a brief overview of the objective of the study and other pertinent information such as the length of the interview and the incentive amount. A $50 incentive was provided to individuals who participated in qualitative testing.

Interested individuals were invited to contact the VE-HEROeS Study Assistance Center where they were asked eligibility questions before being scheduled for an interview; the Center was a service planned to offer all study participants help with any questions or concerns they might have about the study. The eligibility questions were scripted and included demographic questions such as age, sex, and military service. Interviews were conducted in two stages or rounds—the first round comprised the cognitive interviewing while the second comprised usability interviewing. The goal for number of participants across the two types of qualitative testing was 20: nine veterans and three nonveterans for cognitive interviewing, and five veterans and three nonveterans for usability testing. Because most of the questions are the same in the veteran and nonveteran questionnaire versions, and the focus of the study overall is about veterans, most of the qualitative testing participants were veterans.

Cognitive interviewing

Cognitive interviews were conducted with individual participants in May 2016. Three experienced survey methodologists were trained for this study prior to conducting the interviews. The training session reviewed the objectives of the cognitive interview, reviewed the protocol and procedures, and addressed the methodologists’ questions. The cognitive interviewing protocol included a review of the entire questionnaire with the participant plus several scripted probes that the survey methodologist used to ask participants how they understood the questions and how they arrived at their responses. One of three note-takers was also present at each interview to relieve the methodologist of note-taking.

The administration of the cognitive interview proceeded with introductions and a brief background and overview of what the participant would be asked to do. All participants signed the informed consent and Health Insurance Portability and Accountability Act (HIPAA) authorization form after a review by the survey methodologist. Participants were provided hard copies of these materials for their records.

Each interview was scheduled to be 75 minutes long. Participants were asked to think out loud as they completed the questionnaire, an approach derived from a reporting methodology developed by Ericsson and Simon (1980), and to answer the questions verbally as well as on the paper questionnaire. The survey methodologist used a concurrent probing technique (Willis, Royston, & Bercini, 1991). After the participant answered key questions in the survey, the interviewer stopped the participant and asked scripted probes about how the participant came up with an answer or what the question meant. The survey methodologist also asked unscripted probes when appropriate, based on observations during the interview. Observations that prompted use of unscripted probes included questions from the participant on how to proceed, hesitation in providing a response, adjusting body position, and changing responses. For example, if the survey methodologist noticed a participant rereading a question several times, the methodologist asked the participant to talk out loud about what prompted the rereading.

Usability interviewing

After the cognitive interviews were completed, the study team reviewed findings and agreed on questionnaire revisions. The questionnaires were then updated to reflect the findings; updated versions were used for usability interviews with different participants than those who completed the cognitive interviews. Usability interviews took place in June 2016 and used the new versions of the questionnaires.

As was done with the cognitive interviews, the usability interviews were conducted by the same three experienced survey methodologists. These interviews were also 75 minutes in length and participants completed informed consent forms and HIPAA authorization forms as in the cognitive interviews.

The protocol for usability interviews differed from that used for the cognitive interviews. Usability interviews were generally based on observation and understanding of the participants’ entire survey experience. All materials that a selected study respondent would receive for the main survey were tested. A screening questionnaire (“screener”) was used to identify potential participants within a sampled household for the main survey’s U.S. resident respondents, so this additional component was tested only on nonveterans. During the usability interview the materials were provided in sequential order to elicit information first on the screener and accompanying letter for the U.S. resident group, and then on the invitation letter, the information sheet, and finally the questionnaire for all veteran and U.S. resident participants. Participants were given a hypothetical scenario of receiving the mailing at home and were asked to react to the materials as naturally as possible. Before starting on the questionnaire, the participants were asked follow-up questions about what they noticed or did not notice on the materials, and whether they thought they would have participated had they received those materials in the mail.

Once the usability-testing participants began the questionnaire, they went through it without interruption by the survey methodologist. The survey methodologist was present to answer any questions asked by participants, but there was no concurrent probing as there was during the cognitive interviews. When the participant finished the questionnaire, the survey methodologist reviewed answers with the participant, particularly in places where confusion or other problems were observed. The survey methodologist also used retrospective probing on the questions that were changed after the cognitive interviews to see if the changes performed as intended.

Results

Cognitive Interviewing

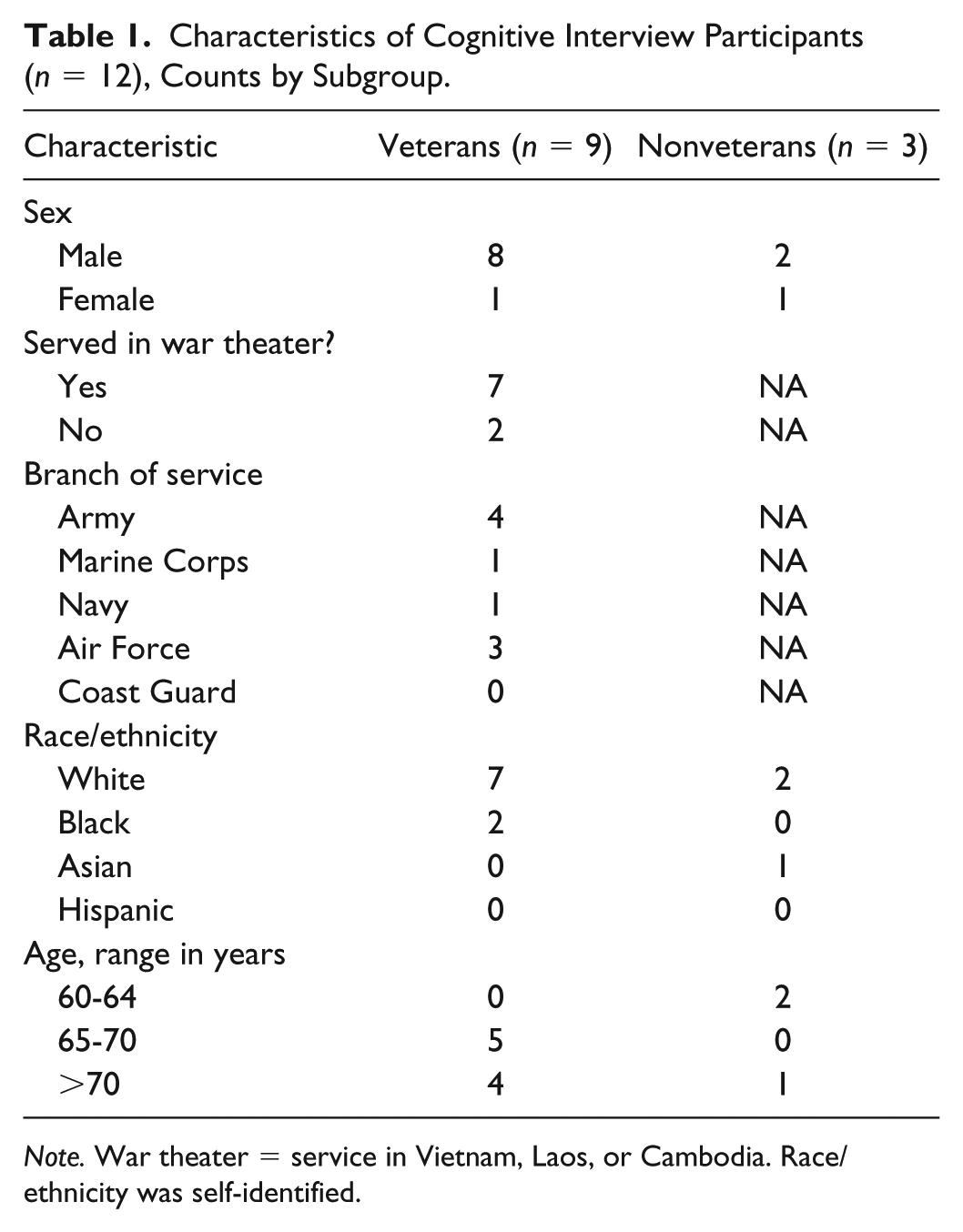

Table 1 shows the characteristics of the 12 cognitive interview participants (nine veterans, three nonveterans) who completed interviews. Race/ethnicity was self-identified. Most participants in each group were male and White, although two veterans were Black and one nonveteran was Asian. Most nonveterans were 60 to 64 years of age or older while veterans were 65 years of age or older. Of veterans, seven served in the Vietnam War theater and most served in the Army (n = 4) and Air Force (n = 3).

Table 1. Characteristics of Cognitive Interview Participants (n = 12), Counts by Subgroup.

Note. War theater = service in Vietnam, Laos, or Cambodia. Race/ethnicity was self-identified.

Example 1 (original version): Have you ever been heavily exposed to any of the following, either because of your military service, an occupation, or at home?

Example 1 (revised version): Have you ever been heavily exposed to any of the following, either because of your military service, an occupation, or at home? By heavily, we mean long-term, daily, or extreme exposure.

The major results from the cognitive interviews can be summarized based on Tourangeau’s model of a four-step response process (comprehension, information retrieval, judgment, and response selection) (Tourangeau, 1984; Tourangeau et al., 2000).

Comprehension

Issues regarding comprehension had to do with participants failing to read instructions, missing important key words, and needing definitions for unfamiliar or vague terms. To address this, we added definitions and emphasized key words by underlining them.

Participants had two different issues with the question as shown in Example 1—some missed the word “heavily” entirely and answered the question based on any amount of exposure and some participants had difficulty answering the question because they were not sure what was meant by “heavily.” We added a definition for “heavily” so that participants would know how to interpret the word and recognize its importance.

Information retrieval and judgment

Issues regarding retrieval of information and judgment had to do with difficulty recalling dates during the Vietnam War. We expected this because we were asking for recall from more than 40 years ago. Because we wanted to collect as much information as possible, we added statements letting respondents know that an estimate was acceptable (see Example 2).

Example 2 (original version):

Only include tours of duty that were on the ground, in the air, or on the inland waters of Vietnam, Cambodia, or Laos.

□ I did not serve on the ground, in the air, or on the inland waters of Vietnam, Cambodia, or Laos. → Go to question 11

a. Dates of your From: |_|_|/|1|9|_|_| To: |_|_|/|1|9|_|_|

b. Dates of your From: |_|_|/|1|9|_|_| To: |_|_|/|1|9|_|_|

Example 2 (revised version):

Only include tours of duty that were on the ground, in the air, or on the inland waters of Vietnam, Cambodia, or Laos.

□ I did not serve on the ground, in the air, or on the inland waters of Vietnam, Cambodia, or Laos. → Go to question 11

a. Dates of your (Your best estimate is fine) From: |_|_|/|1|9|_|_| To: |_|_|/|1|9|_|_|

b. Dates of your (Your best estimate is fine) From: |_|_|/|1|9|_|_| To: |_|_|/|1|9|_|_|

Response selection

Issues regarding response selection mostly had to do with select all that apply type questions missing options that covered potential responses, such as none of the above or does not apply option, or participants wanting a don’t know option for certain questions where they could not retrieve a “yes” or “no” answer. In addition, participants expressed confusion with certain response items that were not commonly used in surveys.

To make such questions clearer, in Example 3 we added an option to the first question for participants to indicate they did not have any of these injuries, and that option was accompanied with a skip instruction so that participants knew not to answer the second question.

Example 3 (original version):

□ Fragment or shrapnel

□ Bullet

□ Vehicular (any type of vehicle, including airplane)

□ Fall

□ Blast (Improvised Explosive Device, RPG, Land mine, Grenade, etc.)

□ Other specify: _______________________________

□ Being dazed, confused or “seeing stars”

□ Not remembering the injury

□ Losing consciousness (knocked out) for less than a minute

□ Losing consciousness for 1-20 minutes

□ Losing consciousness for longer than 20 minutes

□ Having symptoms of concussion (headache, nausea, fatigue, difficulty concentrating) afterward

□ Penetrating (open) head wound

□ None of the above

Example 3 (revised version):

□ I did not have any injuries during the Vietnam War era →

□ Fragment or shrapnel

□ Bullet

□ Vehicular (any type of vehicle, including airplane)

□ Fall

□ Blast (Improvised Explosive Device, RPG, Land mine, Grenade, etc.)

□ Other specify: _______________________________

□ Being dazed, confused or “seeing stars”

□ Not remembering the injury

□ Losing consciousness (knocked out) for less than a minute

□ Losing consciousness for 1-20 minutes

□ Losing consciousness for longer than 20 minutes

□ Having symptoms of concussion (headache, nausea, fatigue, difficulty concentrating) afterward

□ Penetrating (open) head wound

□ None of the above

Five questions were ultimately dropped from the questionnaire altogether because of reactions received during testing. Three questions asked about the health of great-grandchildren; however, none of the 12 cognitive interview participants had great-grandchildren. Because these questions took up an entire page of the questionnaire, and it was not feasible to estimate the number of main survey respondents who would have great-grandchildren, we removed them but retained questions about children and grandchildren.

The questionnaire also originally asked about testing and vaccinations for hepatitis B, as well as testing and treatment for hepatitis C. We asked about both viral infections because we were aware that hepatitis B prevalence, treatment, and vaccination were not well studied in veterans while hepatitis C is prevalent among Vietnam era veterans. In addition, testing and treatment for hepatitis C have been a major focus of prevention and treatment messaging and programs in the VA health care system and Vietnam veterans’ organizations (Belperio et al., 2018; VA, 2018; Vietnam Veterans of America, 2016; Yee et al., 2012) for more than 15 years. However, when three qualitative testing participants were not entirely sure of the difference and confused the two viral infections, we attributed the confusion to a lack of familiarity with hepatitis B and decided to eliminate the hepatitis B questions.

Usability Testing

Table 2 shows the characteristics of the eight usability interview participants (five veterans, three nonveterans) who completed interviews. The participants were mainly drawn from the list of potential participants who were not selected for cognitive testing. Therefore, participants in the usability-testing phase had not seen the questionnaire before, which enabled us to test revised questions. Most participants in each group were male and White, although one veteran was Black and one nonveteran was Asian (race/ethnicity was self-identified). Most were 65 years or older but one nonveteran was 59 years old. Of the veterans, three served in the Vietnam War theater and most served in the Army (n = 3).

Table 2. Characteristics of Usability Interview Participants (n = 8), Counts by Subgroup.

Note. War theater = service in Vietnam, Laos, or Cambodia. Race/ethnicity was self-identified.

Usability-testing participants seemed overwhelmed by the study mailing packet that was to be sent to the sample of nonveterans and veterans. They expressed most concern over the information sheet that served in lieu of a written informed consent, the content of which was determined by the IRB. Although we did not find ways to shorten or simplify the information sheet, the questions raised by usability-testing participants helped the study team institute measures to address them. For example, we expected additional communications from respondents and set up the Study Assistance Center, which was made available for questions by phone during business hours, in addition to placing phone numbers for the IRB and the principal investigator in the invitation packet. Moreover, we developed a “frequently asked questions” section that was based on the responses received during the usability interviewing and was ultimately included in the survey packet mailer.

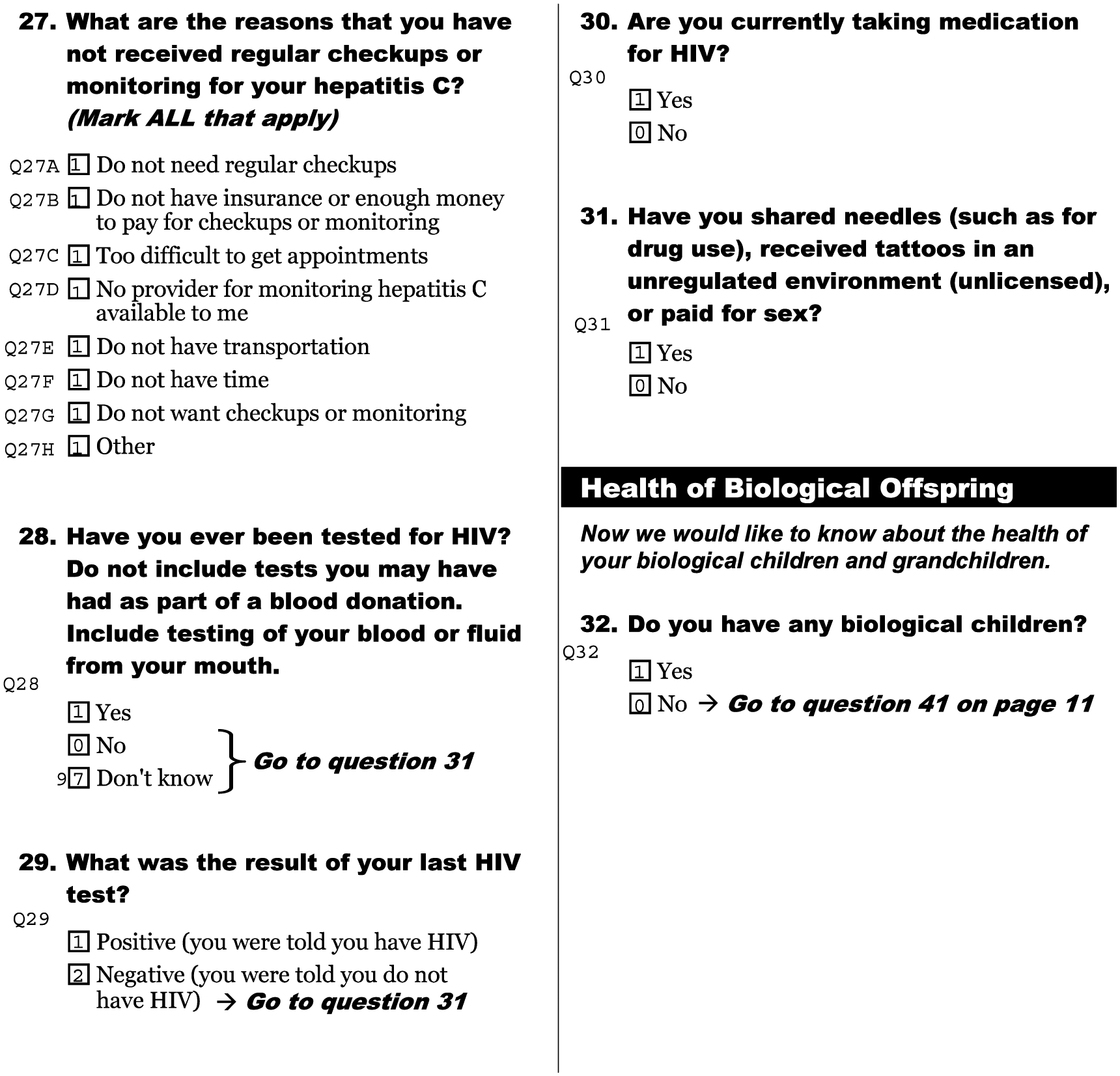

There were two major issues regarding usability in the questionnaires. One was that participants frequently missed skip pattern instructions and only stopped answering questions when they got to a question that did not seem applicable to them. Also, if the question that the participant was supposed to skip to was on a different page, participants who did see the instruction expressed confusion over where to go. Therefore, we enlarged the skip instructions and added page numbers for questions that skipped to questions on other pages. This is shown in the changes made in the skip instruction that we added based on the cognitive interview findings (see Example 3).

In Example 3 (i.e., injuries sustained during the Vietnam War era), participants who had no injuries had a much easier time answering the question with the addition of the first response option, but we still needed to adjust the presentation of the skip instructions to make them easier to follow. The final version (Figure 1) shows how we added the page number and emphasized the skip instruction to make it easier for respondents.

Figure 1. Question 29 on injuries, version used in usability interviews and final version.

The other major issue was questions that were presented in the format of large grids of Yes/No questions. Many participants responded “Yes” appropriately but left the rest of the questions blank instead of answering “No.” This was problematic because researchers would have had to develop business rules or omit questions because of these partially missing responses, as we could not know whether the participant meant “No” or purposely skipped the response for other reasons. In response to this issue, we added an instruction to these types of questions that read, “Please mark Yes or No for each item.”
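The ambiguity described above can be illustrated with a small sketch. The Python below is illustrative only and is not the VE-HEROeS data processing: the response coding ("Y", "N", or None for a blank), the item names, and the function name `classify_grid` are all assumptions made for the example. It flags a grid as partially missing instead of recoding blanks to "No," which is one conservative way to handle such responses.

```python
from typing import Dict, Optional


def classify_grid(responses: Dict[str, Optional[str]]) -> str:
    """Classify one respondent's answers to a Yes/No grid.

    `responses` maps a hypothetical item name to "Y", "N", or None (left blank).
    """
    answered = [value for value in responses.values() if value is not None]
    if not answered:
        return "all_missing"
    if len(answered) == len(responses):
        return "complete"
    # Some items are blank: an intended "No" cannot be told apart from a
    # skipped item, so the grid is flagged rather than recoded to "N".
    return "partial_missing"


# Hypothetical respondent who marked one "Yes" and left the other rows blank.
example = {"item_a": "Y", "item_b": None, "item_c": None}
print(classify_grid(example))
```

Under these assumptions, a respondent who marks only the "Yes" rows is counted as partially missing, which is the pattern the added instruction ("Please mark Yes or No for each item") was intended to reduce.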

The changes we made to the questions based on the cognitive interviews were tested in the usability interviews to see if the edits mitigated the issues. For the most part, these edits did work. The change we made based on cognitive interviewing in Example 1 on exposures (those experienced while serving in the military, in an occupation outside of their military service, or at home) helped with the understanding of the meaning of “heavily,” but some participants still missed that key word. Therefore, we underlined the word “heavily” in the question and added it to each of the column headers.

For the issue presented in Example 2 (i.e., dates of tours of duty during the War), some participants expressed appreciation that they could provide an estimate.


Discussion

We conducted qualitative testing of the questionnaires used in VE-HEROeS. By conducting cognitive and usability interviewing based on established testing methodologies (Dillman et al., 2014; Fowler, 2014; Groves et al., 2009; Levine et al., 2005; Tourangeau, 1984; Tourangeau et al., 2000; Willis, 2005; Willis et al., 1991), we modified question wording to avoid misinterpretations and modified questionnaire design to reduce response error in this large national survey of veterans. Overall, these results helped us develop a questionnaire that could be successfully used to collect information from veterans aged 60 years or older, with improved item comprehensibility, respondent recall of service-related events, item flow, and overall ease of the survey response process. Several key aspects of this approach have assisted us in this survey, will probably assist us in future survey activities, and may help others when conducting research of this kind (see “Lessons Learned,” Figure 2).

Figure 2. “Lessons Learned.”

During questionnaire development, VE-HEROeS enlisted Vietnam era veterans from its steering committee and the veteran community to advise the study team on questionnaire content and question and response option wording, which resulted in modifications that certainly appeared to improve the questionnaire. We conducted two stages or rounds of qualitative testing—the first that examined cognitive aspects of the questionnaire and the second, which was based on round 1 modifications to the questionnaires, targeted overall survey usability. This test-edit-retest process was found to be very helpful in questionnaire development because it ensured that revisions made in round 1 performed as expected in subsequent testing. Moreover, the responses to the survey and associated materials caused us to realize the importance of the inclusion of a “frequently asked questions” section that we ultimately made part of the initial survey mailer packet. We also found that participants appreciate definitions and instructions, particularly when it is done early in the survey process. Moreover, response errors such as omitted data from Yes/No grids and errors in skip patterns were likely reduced because of improved comprehension and presentation of information on the questionnaire form.

Although we did not find any items to be particularly sensitive for veterans, we did identify problems with our pretest sample’s interpretation of response options on a standardized measure that is commonly used today in health research, the SF-8 Health Survey (Ware et al., 2001). Testing established, validated sets of questions like the SF-8 was important because responses in the target population may differ from those of the population on which the scales were developed and validated. The problematic SF-8 response option set was associated with this item:

During the past 4 weeks, how much energy did you have?

□ Very much

□ Quite a lot

□ Some

□ A little

□ None

Three participants (n = 3, 25%; not reported in tables) had difficulty distinguishing between “very much” and “quite a lot” in this question. Because this item is part of the SF-8 scale that was used to compute the scale’s Physical and Mental Component Scores, we did not change its response options, but the finding made us aware of how these types of options should be used in scale development for future surveys. Similarly, four participants (n = 4, 33%; not reported in tables) either did not see a difference between the FACT-COG options “quite a bit” and “very much” or thought the options were out of order. This highlights the importance of pretesting all survey questions, even those that were previously validated or standardized using a sample from a population that may have different characteristics than the target population. Such pretesting is even more relevant for questions that may be perceived as sensitive.

Some may question the use of convenience sampling and consider it a limitation of the research. However, convenience sampling is a frequently used method for obtaining a sample in qualitative research. Cognitive research is typically not conducted using standard probability sampling guidelines, and thus conclusions from any qualitative study may suffer from issues with bias and generalizability (Marshall, 1996). Nevertheless, our sampling process was still purposive. The samples of veterans and nonveterans were heterogeneous and representative with regard to basic characteristics of their respective populations. Sample heterogeneity may have been partly due to our enlisting aid from the veteran community through the veterans and VSO representatives on the VE-HEROeS steering committee, as well as the release of numerous social media briefs that informed lay individuals about the study and its value before and during the onset of the study. All qualitative testing was completed in 2 months, which indicated to us the willingness of veterans and U.S. residents to participate. The monetary incentive may have contributed to the quick recruitment and scheduling, but we did not test this.

There are limitations to this qualitative study. The testing of the instruments may have minimized response error; however, cognitive- and usability-testing situations do not always reflect real life. Participants in these types of interviews may be especially interested in research and more careful with their answers than actual survey respondents as a whole, particularly with an in-home self-administered questionnaire where there is no researcher observing the process. Accordingly, we suggest that researchers observe how individuals respond, without supervision, to the questionnaire materials in their order of administration; this enables the research team to gain a better understanding of respondents’ natural reactions to the survey process. In addition, without geographic diversity of participants, we were not able to observe whether there may have been regional differences in the cognition and usability of the VE-HEROeS survey questionnaires. The changes made to the questionnaires were generally based on just a few responses, which brings into question the reliability of the item modifications; yet testing of this type typically occurs with sample sizes of approximately 12 to 20 (Dillman et al., 2014; Luborsky & Rubinstein, 1995).

We were limited by time and budget during the conduct of the two types of qualitative testing, and while we tested the changes resulting from cognitive testing, there is no clear way to know whether the changes resulting from usability testing were successful. Accordingly, we were unable to detect problems that might have been resolved with an additional round of testing. For example, we discovered one usability problem only after the survey had been administered. One question on the survey asked whether the participant had any biological children, and all participants in the usability testing answered it. However, when we reviewed the item response in the actual survey data, 10% of veteran respondents had omitted it, compared with only 3% of U.S. residents. We examined a sample of returned questionnaire forms and found that the question was probably not noticed by veteran respondents because of its placement in the form. Because veterans received more questions than nonveterans, the same question could appear in different locations in the two questionnaires. The question came after a section break in both questionnaires. However, in the nonveteran version, it was the last question on the page (Figure 3, Question 32), with white space under the question; in the veteran version, the question (Figure 4, Question 43) was followed immediately by a large table, making it more difficult to see. Thus, a review of item omission rates after survey administration provided information on problems with questionnaire formatting that might have been identified and resolved with additional qualitative testing. As this example illustrates, we suggest that researchers implement an item-nonresponse analysis, specifically for questions that participants skipped over during qualitative testing, keeping in mind that qualitative testing participants may be research-motivated and more likely to be careful in completing a questionnaire than the rest of the population of interest.
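As a minimal sketch of such an item-nonresponse review, the Python below computes an item's omission rate in each group and compares the two rates with a pooled two-proportion z-test. The function names and the choice of test are illustrative assumptions, not the analysis used in VE-HEROeS, and the counts are hypothetical, chosen only to mirror the 10% and 3% omission rates described above.

```python
from math import erfc, sqrt


def omission_rate(responses):
    """Share of respondents who left the item blank (recorded as None)."""
    return sum(r is None for r in responses) / len(responses)


def two_proportion_z(omitted_a, n_a, omitted_b, n_b):
    """Pooled two-proportion z statistic and two-sided p-value."""
    p_a, p_b = omitted_a / n_a, omitted_b / n_b
    pooled = (omitted_a + omitted_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in omission; the test is undefined")
    z = (p_a - p_b) / se
    # Two-sided tail area of the standard normal distribution.
    p_value = erfc(abs(z) / sqrt(2))
    return z, p_value


# Hypothetical item responses (NOT study data): None marks an omitted item.
veteran = [None] * 100 + ["yes"] * 900    # 10% omitted
resident = [None] * 30 + ["yes"] * 970    # 3% omitted

vet_rate = omission_rate(veteran)
res_rate = omission_rate(resident)
z, p = two_proportion_z(
    sum(r is None for r in veteran), len(veteran),
    sum(r is None for r in resident), len(resident),
)

print(f"veteran omission rate:  {vet_rate:.1%}")
print(f"resident omission rate: {res_rate:.1%}")
print(f"z = {z:.2f}, two-sided p = {p:.2g}")
```

In practice, a screen of this kind would be run over every item, and items with unusually high or uneven omission rates would then be checked against their placement on the printed form.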

Figure 3. Nonveteran version, “health of biological offspring” question.

Figure 4. Veteran version, “health of biological offspring” question.

In conclusion, we believe that qualitative testing has a place in studies of military veterans alongside the pilot testing of the entire survey process. An improved survey testing design might include quantitative measures of response error before and after survey modification. Qualitative testing of survey measures should be strongly encouraged and reported on, and it should be an integral component of veteran-centric survey research.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by funding from the Epidemiology Program, Post Deployment Health Services, U.S. Department of Veterans Affairs.