Abstract

Large-scale assessments are generally designed for summative purposes to compare achievement among participating countries. However, these nondiagnostic assessments have also been adapted in the context of cognitive diagnostic assessment for diagnostic purposes. Given the large investments in these assessments, it would be cost-effective to draw finer-grained inferences about attribute mastery. Nonetheless, the correctness of attribute specifications in the Q-matrix has not been verified, even though the Q-matrix was designed by domain experts. Furthermore, the underlying process of the TIMSS (Trends in International Mathematics and Science Study) assessment is unknown because it was not developed for diagnostic purposes. Thus, this study suggests first validating attribute specifications in the Q-matrix and thereafter defining specific reduced or saturated models for each item. Both analyses were validated across 20 randomly selected countries using TIMSS 2011 data. Results show that attribute specifications can differ from expert opinions and that the underlying model can vary from item to item.

Introduction

The cognitive diagnosis model (CDM), a recently popular psychometric model, aims mainly to investigate a specific, finer-grained set of multiple skills within a domain of interest, in contrast to classical test theory (CTT) and item response theory (IRT). These predefined skills are used to classify examinees based on whether they have mastered each skill or not. This is a critical point where CDMs differ from the other two commonly used unidimensional test theories. CTT and IRT usually locate and assess examinees on an ability continuum by a single overall test score. Instead of reporting a single overall score, CDMs provide pedagogical information by which students’ strengths and weaknesses regarding the acquisition of specific skills in a domain can be identified. Therefore, CDMs serve important purposes, such as offering a more precise tool to diagnose academic needs and creating a different perspective for designing a better learning environment.

To date, several models and methodological developments have been introduced in the context of cognitively diagnostic assessments (CDAs). In terms of model developments, two types of CDMs have been proposed, classified as reduced and general models. Specifically, the deterministic inputs, noisy “

Generally, international large-scale exams (e.g., TIMSS; Trends in International Mathematics and Science Study) have been analyzed with IRT models, which provide a single total score for each examinee. With recent advancements in CDAs, however, there has been a trend toward providing more elaborate results on testing practices. A number of CDMs have been developed to obtain more detailed test results (Rupp, Templin, & Henson, 2010). The shift from single-score reporting practices to CDM approaches has also been applied to TIMSS data in several studies (Birenbaum, Tatsuoka, & Yamada, 2004; Choi, Lee, & Park, 2015; Dogan & Tatsuoka, 2008; Im & Park, 2010; Lee et al., 2013; Lee et al., 2011; Liu, Huggins-Manley, & Bulut, 2018; Sen & Arıcan, 2015; Toker & Green, 2012). The diagnostic models applied have varied across these studies. These applications of CDMs to TIMSS data can be considered examples of retrofitting CDMs to large-scale assessments, which have generally been developed and analyzed with IRT or CTT. For example, the rule space method has been used in several studies, including Im and Park (2010), Toker and Green (2012), Dogan and Tatsuoka (2008), and Birenbaum et al. (2004). Another example is that the TIMSS data have been analyzed using one of the commonly used reduced models, the DINA model, as highlighted by Lee et al. (2011), Lee et al. (2013), Choi et al. (2015), and Sen and Arıcan (2015). While carrying out these types of analyses, CDMs typically assume that the test was developed based on specific attributes and a Q-matrix (Tatsuoka, 1983), which relates test items to particular attributes. For instance, Lee et al. (2011) focused on the DINA model with two purposes in view: to identify item characteristics and to investigate the mastery of attributes. The study by Lee et al. (2011) had two main limitations: it relied solely on domain experts for attribute specifications without validating their correctness, and it assumed the DINA model to be the correct underlying model without evaluating model-data fit.

Moreover, the underlying process of the TIMSS assessment for each item is unknown because it was not developed for diagnostic purposes. Nonetheless, nondiagnostic assessments can still be adapted for diagnostic purposes (Chen & de la Torre, 2014). Because such large-scale assessments are not designed to yield diagnostic information, given the intensive effort that would require, retrofitting multidimensional CDMs to these assessments offers a way to obtain the benefits of CDMs (Liu et al., 2018). Given this opportunity, large-scale assessments (e.g., TIMSS and PISA) have been adapted in the context of CDA. Considering the large investments in these assessments, it would be cost-effective to draw finer-grained inferences about what attributes students have or have not mastered (Chen & de la Torre, 2014). Thus, there is a need to emphasize the importance of conducting CDA analyses using such large-scale data sets.

In particular, Chen et al. (2013) proposed a systematic procedure to adapt large-scale assessments in the context of CDM using the following steps: constructing the initial and final attributes and Q-matrix; evaluating reduced CDMs; and cross-validating the selected models. Chen et al. (2013) demonstrated the procedure using 26 released items in the reading domain of the Program for International Student Assessment (PISA), administered in 2000; initial attributes were defined by domain experts, followed by statistical analyses based on absolute and relative fit indices. After those initial attributes and Q-matrix specifications were redefined, the selected Q-matrix was evaluated across reduced CDMs. Finally, the results were investigated using data from different countries. However, in the study by Chen et al. (2013), attribute specifications were not validated using statistical procedures. Therefore, validating the correctness of attribute specifications in the Q-matrix and then defining a specific reduced or general model for each item, if possible, should be one of the earliest steps taken. Otherwise, attribute misspecifications in the Q-matrix and model-data misfit can lead to examinees being classified into inaccurate latent classes.

Purpose of the Study

Using the eighth-grade mathematics section of the TIMSS 2011 assessment (Mullis, Martin, Foy, & Arora, 2012), this study has three purposes. The first purpose is to validate attribute specifications in the Q-matrix under the G-DINA model, so that no reduced model needs to be assumed. Rather than constructing a new Q-matrix for the test, the Q-matrix was adapted from Şen and Arıcan (2015). The validation of attribute specifications was implemented using the G-DINA model discrimination index (GDI; de la Torre & Chiu, 2016). After the correctness of attribute specifications is verified, the second purpose is to define the most appropriate model. This step is important because the fit of the model to the data should be evaluated (Chen et al., 2013). The Wald test was used to investigate the item-level fit of a saturated CDM relative to the fit of three reduced models (DINA, DINO, and

Background

Q-Matrix

Regardless of assuming a reduced or general model, the Q-matrix is a crucial component of CDMs, in that each item is associated with the required attributes to be mastered by examinees for correctly answering the item. Let

The process of constructing the Q-matrix typically involves experts’ judgments, which can be considered subjective in nature. A misspecified Q-matrix can cause serious validity problems as a result of inaccurate parameter estimation and attribute classification. For this reason, several studies have proposed procedures for Q-matrix validation (Chiu, 2013; DeCarlo, 2011; de la Torre, 2008; de la Torre & Chiu, 2016; Liu et al., 2012; Terzi & de la Torre, 2018).

Saturated and Reduced CDMs

The primary purpose of CDMs is to classify examinees into latent classes based on which among

Nonetheless, each of these three reduced models has its own limitations. The G-DINA model is a generalization of the DINA model that partitions examinees into

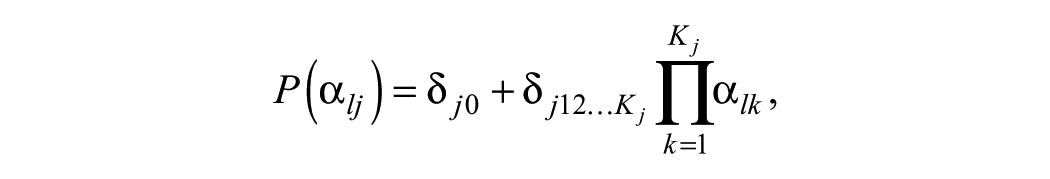

where

As earlier mentioned, if all the parameters in Equation 1 are set to zero except for

where

These models have been compared and contrasted in a number of studies for various purposes (Chen & de la Torre, 2014; de la Torre & Lee, 2013; Liu et al., 2018; Ma, Iaconangelo, & de la Torre, 2016; Sorrel et al., 2017). Such studies demonstrated the importance of implementing model-data fit analyses. Focusing on model-data fit at the item level is crucial because, according to current empirical applications, using a single model for all the test items does not reflect reality (Sorrel et al., 2017). Thus, the present study aims to carry out model-data fit analyses after verifying the correctness of attribute specifications in the Q-matrix.
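To make the contrast between two of the reduced models concrete, the following minimal Python sketch computes the success probability of a single item under the DINA and DINO models. It assumes the standard guessing/slip parameterization of these models; the function names, the example q-vector, and the parameter values are hypothetical and are not taken from the TIMSS analyses.

```python
from itertools import product

def dina_prob(alpha, q, guess, slip):
    """P(correct) under DINA: every required attribute must be mastered."""
    eta = all(a == 1 for a, r in zip(alpha, q) if r == 1)
    return 1.0 - slip if eta else guess

def dino_prob(alpha, q, guess, slip):
    """P(correct) under DINO: mastering any one required attribute suffices."""
    omega = any(a == 1 for a, r in zip(alpha, q) if r == 1)
    return 1.0 - slip if omega else guess

# Hypothetical item requiring two attributes, with illustrative parameters.
q = (1, 1)
for alpha in product((0, 1), repeat=len(q)):
    print(alpha, dina_prob(alpha, q, 0.2, 0.1), dino_prob(alpha, q, 0.2, 0.1))
```

For an examinee who has mastered only one of the two required attributes, the two models disagree: DINA assigns the guessing probability, whereas DINO assigns one minus the slip probability. This is why the choice of model at the item level can change the classification of examinees.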

Given the purpose of this study, the next section of this article first presents information about the data source and statistical procedures implemented. Second, results regarding the Q-matrix validation and model-data fit evaluation are provided in the following section, followed by summary and discussions.

Method

Data Source

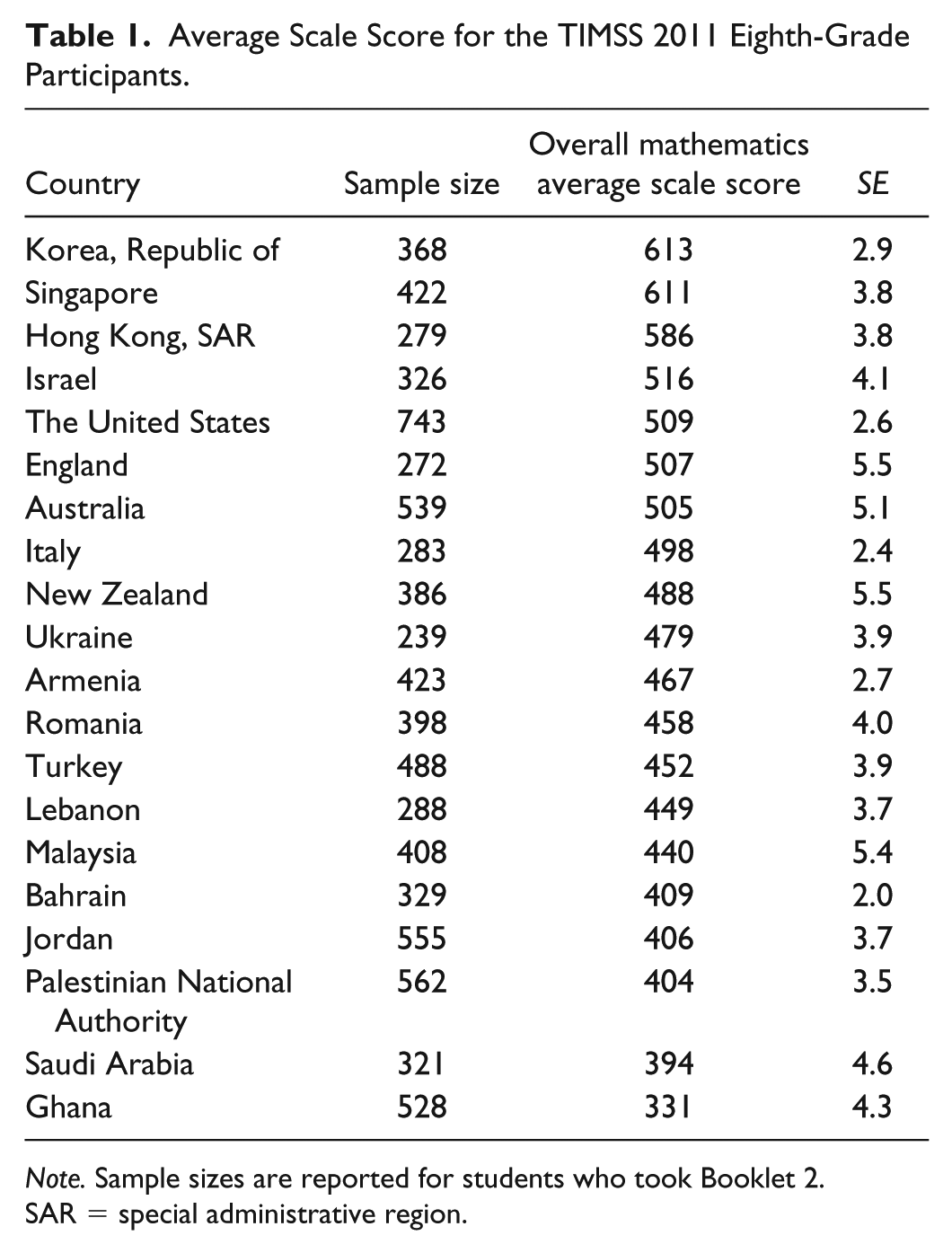

TIMSS 2011 eighth-grade mathematics responses from the students of 20 countries (e.g., Australia, Bahrain, Italy, Korea, Malaysia, Romania, Turkey, and the United States) were randomly selected for this study (Table 1). The administration of Booklet 2 to students from these countries was selected for CDM analyses in this study. Booklet 2 was composed of 32 items, including 15 multiple choice and 17 constructed response items. The sample sizes ranged from 272 (England) to 743 (the United States) students who took Booklet 2.

Table 1. Average Scale Score for the TIMSS 2011 Eighth-Grade Participants.

Note. SAR = special administrative region.

In addition to test items, CDM analyses require a Q-matrix that shows the relationships between items and the attributes required to answer the items correctly. The attributes of the Q-matrix in this study were adapted from the Common Core State Standards for Mathematics (Common Core State Standards Initiative, 2010). Table 2 presents the attribute descriptions for each content domain reported by TIMSS researchers (Mullis et al., 2012); subattributes were determined by four experts in mathematics education (Şen & Arıcan, 2015). Note that because Items M052503A and M052503B were the same in the original 32-item list, one of them (Item M052503A) was dropped from the Q-matrix (Sen & Arıcan, 2015). Thus, a total of 31 items were used in this study.

Table 2. Attributes Adopted From the Common Core State Standards Initiative (2010).

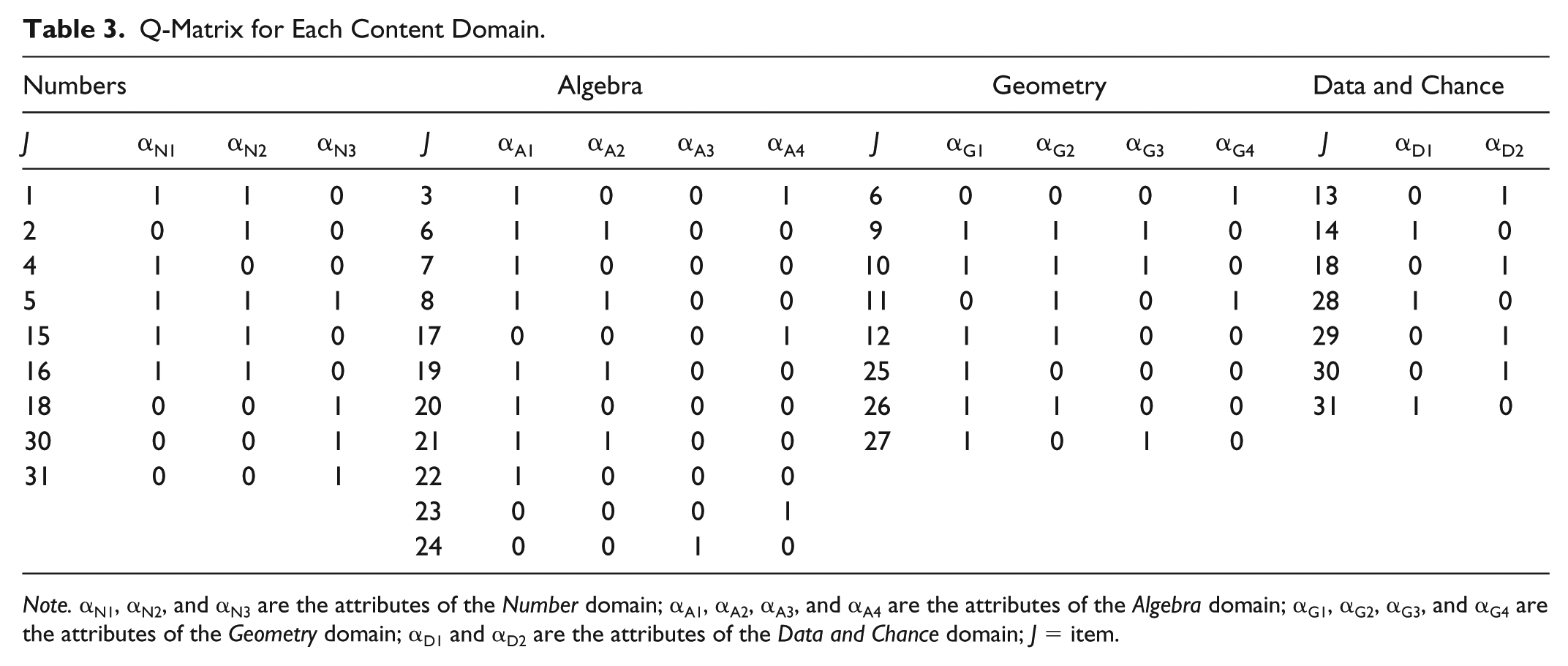

Similar to Hou’s (2013) study, because the sample sizes of the randomly selected countries were limited for G-DINA model estimation, Q-matrix validation and model-data fit evaluation were carried out separately at the attribute level and at the content domain level. At the attribute level, the Q-matrix for each content domain displayed in Table 3 was used. That is, for the items in each content domain, an attribute was specified if it is needed to answer the item correctly in the corresponding content domain. At the content domain level shown in Table 4, each domain was specified as an attribute if that domain is required to answer the item correctly. That is, the four content domains were adopted as the attributes without disaggregating them into the 13 finer-grained attributes. The analyses were implemented in the Ox language (Doornik, 2009).
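The relationship between an attribute-level Q-matrix and a content-domain-level Q-matrix can be illustrated with a short Python sketch. It assumes, purely for illustration, that a domain is marked as required whenever at least one of its finer-grained attributes is required; the study's actual domain-level Q-matrix may have been specified differently. The domain labels, attribute mapping, and toy Q-matrix below are hypothetical.

```python
def aggregate_q_matrix(fine_q, attribute_domain, domains):
    """Collapse an items-by-attributes Q-matrix into items-by-domains.

    Assumption: a domain is required (1) if any of its attributes is required.
    """
    rows = []
    for q_row in fine_q:
        required = {attribute_domain[k] for k, r in enumerate(q_row) if r == 1}
        rows.append([1 if d in required else 0 for d in domains])
    return rows

# Hypothetical example: three attributes nested in two domains.
domains = ["D1", "D2"]
attribute_domain = ["D1", "D1", "D2"]  # domain of each attribute column
fine_q = [
    [1, 0, 0],  # item 1 requires one D1 attribute
    [0, 1, 1],  # item 2 requires attributes from both domains
    [0, 0, 1],  # item 3 requires the D2 attribute
]
domain_q = aggregate_q_matrix(fine_q, attribute_domain, domains)
print(domain_q)
```

Working at the domain level reduces the number of latent classes from 2 to the power of the number of fine-grained attributes to 2 to the power of the number of domains, which is the practical motivation when sample sizes are limited.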

Table 3. Q-Matrix for Each Content Domain.

Table 4. Aggregated Q-Matrix of the Content Domains.

Statistical Procedures

Q-Matrix validation

This study applied the GDI (de la Torre & Chiu, 2016), denoted by

Given an attribute distribution, the

where

Model fit evaluation

The Wald test was first introduced by de la Torre (2011) to examine whether the G-DINA model can be replaced by one of the reduced models. It was further applied by de la Torre and Lee (2013) to investigate the most appropriate CDM at the item level, and the same approach was applied in this study to the TIMSS 2011 large-scale data set. As stated earlier, each reduced CDM can be obtained from the saturated model using different restriction matrices based on the model specifications. Note that only items requiring multiple attributes were analyzed because there is no need to distinguish between the reduced and saturated CDMs for one-attribute items (de la Torre & Lee, 2013).

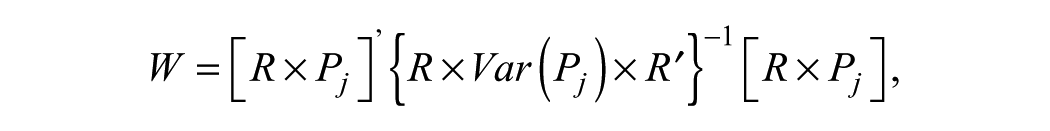

Given

for the DINA model, the DINO model, and the

where

Results

The first study carried out the Q-matrix validation under the G-DINA model. After validating the current attribute specifications given in the Q-matrix, the second study evaluated model-data fit at the item level. Results are reported separately for each content domain and for the aggregated content domains. The main contribution of this study is to propose a two-step procedure, Q-matrix validation followed by model-data fit evaluation, for each content domain and for the aggregated content domains. The reason for following this sequence is to base the model-data fit evaluation on statistically validated attribute specifications. In this way, the unintended consequences of any misspecified attribute can be eliminated from the model-data fit evaluation. Moreover, results for both purposes were validated across the 20 countries.

Q-Matrix Validation

The validation of attribute specifications is displayed in Tables 5 and 6 for the attribute level and the content domain level, respectively. These results were obtained using the GDI based on the G-DINA model. To combine the validation results across the 20 randomly selected countries, each attribute was specified as 1 if the attribute specification was suggested by more than 50% of the countries on average; otherwise, it was specified as 0.
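The cross-country decision rule described above can be sketched in a few lines of Python. This is a minimal illustration under the assumption that each country's validation yields one suggested q-vector per item; the function names and the example data are hypothetical, and the per-country GDI computation itself is not reproduced here.

```python
def majority_q_entry(suggestions):
    """Return 1 if more than 50% of countries suggested the attribute, else 0."""
    return 1 if sum(suggestions) / len(suggestions) > 0.5 else 0

def majority_q_vector(country_q_vectors):
    """Combine per-country suggested q-vectors for one item, attribute by attribute."""
    n_attributes = len(country_q_vectors[0])
    return [
        majority_q_entry([qv[k] for qv in country_q_vectors])
        for k in range(n_attributes)
    ]

# Hypothetical suggestions for one item from four countries (two attributes).
country_q_vectors = [[1, 0], [1, 1], [1, 0], [1, 1]]
suggested = majority_q_vector(country_q_vectors)
print(suggested)
```

Note that under a strict "more than 50%" rule, an attribute suggested by exactly half of the countries, as the second attribute is here, is specified as 0.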

Table 5. Suggested Q-Matrix for Each Content Domain.

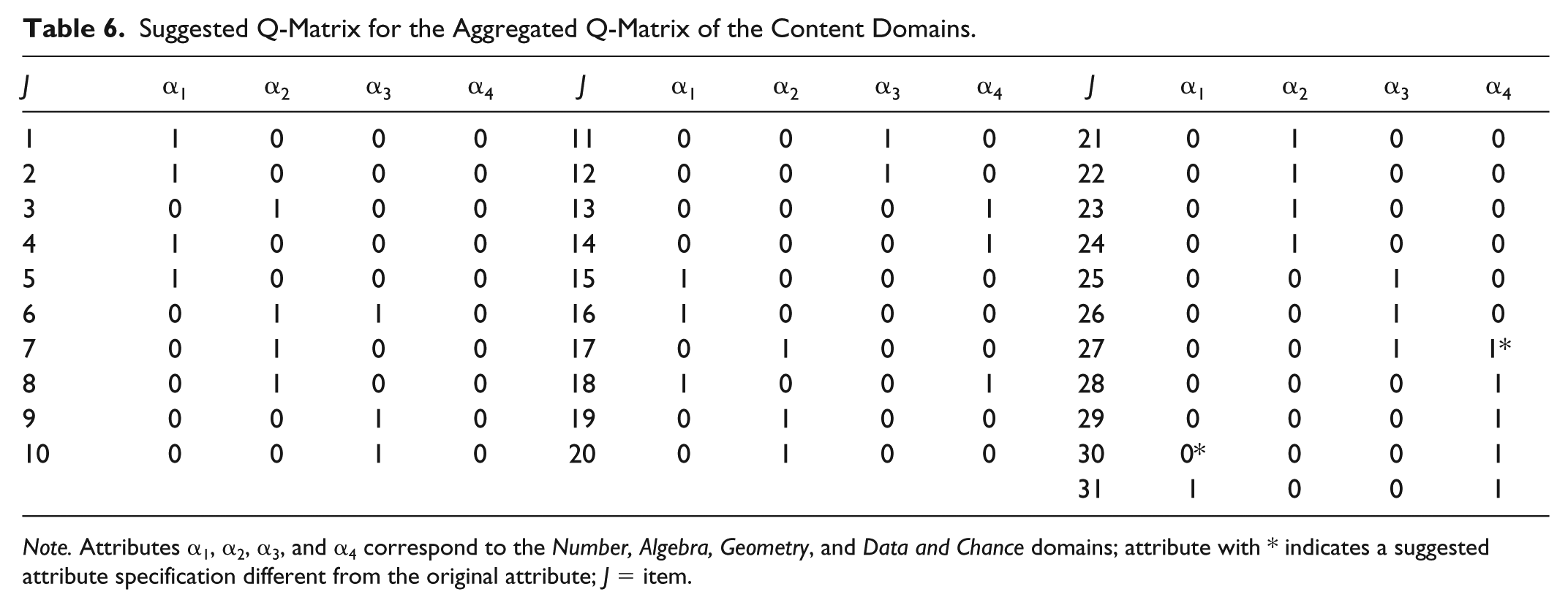

Table 6. Suggested Q-Matrix for the Aggregated Q-Matrix of the Content Domains.

Given separate results for each content domain, all attribute specifications were deemed correct. There was only one exception where one attribute specification (αA2) for Item 19 in

For the content domains, as the attributes were investigated for the Q-matrix validation as shown in Table 6, two attribute specifications were changed. In Item 27 (i.e., which of the options show the result of a half-turn clockwise around point 0?), α4 was changed to 1, meaning that examinees also have to master the

Model Fit Evaluation

The Wald test,

Given the suggested attribute specifications, the next focus was on the model-data fit evaluation. The Wald test was applied at the item level, where a reduced model is considered to fit the data if the null hypothesis is retained. Tables 7 and 8 show the model-data fit evaluation for each content domain and for the aggregated content domains, respectively. If the average proportion of countries in which a reduced model was retained exceeded 50% across the 20 countries, that reduced model was selected; otherwise, the G-DINA model was selected. As noted earlier, only items requiring multiple attributes were analyzed in the model-data fit evaluation.
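The selection rule for one item can be sketched as follows. The sketch assumes that a Wald test p-value is available for each reduced model in each country and that the null hypothesis is retained when the p-value exceeds a significance level of .05; the significance level, the function name, and the p-values are assumptions made for illustration only.

```python
def select_models(p_values, alpha=0.05, threshold=0.5):
    """Pick the model(s) for one item from per-country Wald test p-values.

    p_values maps a reduced model name to a list of p-values, one per country.
    A reduced model is kept if it is retained (p > alpha) in more than
    `threshold` of the countries; if none qualifies, G-DINA is selected.
    """
    selected = []
    for model, ps in p_values.items():
        proportion_retained = sum(p > alpha for p in ps) / len(ps)
        if proportion_retained > threshold:
            selected.append(model)
    return selected or ["G-DINA"]

# Hypothetical p-values for one item across four countries.
item_p_values = {
    "DINA": [0.30, 0.01, 0.20, 0.40],  # retained in 3 of 4 countries
    "DINO": [0.01, 0.02, 0.30, 0.03],  # retained in 1 of 4 countries
}
print(select_models(item_p_values))
```

The function returns a list because more than one reduced model can pass the threshold for the same item; how such ties are resolved is a separate decision.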

Table 7. Suggested Models for Each Content Domain.

Table 8. Suggested Models for the Aggregated Content Domains.

For the content domain of

For the content domain of

When the content domain of

For the content domains, as the attributes were investigated for the model-data fit evaluation as shown in Table 8, the

Summary and Discussion

One aspect of validity evidence is that an assessment tool should be useful (Kane, 2013). However, the utility of some applications, such as model-data fit evaluation or retrofitting, can be improved only under the assumption that reliable and accurate results are obtained (Liu et al., 2018). Even though model-data fit evaluation and retrofitting are not the ultimate solution, CDMs can still be applied to large-scale assessments in conjunction with an appropriate Q-matrix to obtain diagnostic information (Chen & de la Torre, 2014). Indeed, it is worthwhile to use these assessments to draw finer-grained inferences about the mastery and nonmastery of specific attributes at the country level because of the large investments involved in developing the assessments (Chen & de la Torre, 2014). Moreover, attribute specifications in the Q-matrix constructed by domain experts are usually considered subjective in nature, which can lead to misclassification of examinees as a result of inaccurate parameter estimates (de la Torre & Chiu, 2016). Thus, employing such large-scale assessments requires additional precaution.

This study used the eighth-grade mathematics section of the TIMSS 2011 assessment to analyze a nondiagnostic assessment for diagnostic purposes. Because a large number of attributes could cause problems for G-DINA model parameter estimation (Hou, 2013), two separate analyses were carried out, at the attribute level and at the content domain level. First, attribute specifications in the Q-matrix were validated under the G-DINA model using the GDI, so that no reduced model had to be assumed. After the correctness of attribute specifications was verified for both cases, model-data fit was evaluated. The Wald test was used to evaluate the fit of a saturated CDM in contrast to the three reduced models (DINA, DINO, and

The findings of this study suggest that each item should be analyzed separately for Q-matrix validation and model-data fit evaluation, with results validated across countries. Instead of assuming that the attribute specifications in the Q-matrix are correct and that a single model holds for all the test items, researchers should start the analyses from the position that the correctness of attribute specifications must be verified and that the underlying latent process is unknown. After the Q-matrix validation is carried out, fitting models should be identified for each item. Otherwise, as the results show, assuming a single model for all items without validating the attribute specifications in the Q-matrix can cause serious validity problems as a result of inaccurate parameter estimates and attribute classifications. In general, according to the results, some attribute specifications were changed, and different reduced and general models were selected for different items. Because of the interactions among attributes captured by the G-DINA model for specific items, caution should be taken when interpreting those items. Finally, for items for which multiple fitting models were suggested, it would be safer to follow the interpretation of the more general model, the G-DINA model.

This study has some limitations. First, the TIMSS 2011 mathematics questions were not designed for diagnostic purposes. However, because of the large investments in such a large data set, this article was intended to obtain diagnostic information from this nondiagnostic assessment. Moreover, because the sample sizes, test lengths, and number of attributes were fixed for the TIMSS 2011 data, we were unable to investigate the results under various conditions, in particular for the G-DINA model parameter estimates. Therefore, inferences should be made carefully when retrofitting CDMs to responses from large-scale assessments.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.