Abstract

Numerous overlapping and inconsistent views of academicians and practitioners on the construct of employee engagement have led to the development of various measurement instruments that differ in the variables they measure. This article attempts to develop an assessment instrument and to assess the content validity of the proposed variables/dimensions. The construct was generated through the grounded theory method by conducting structured interviews with human resource heads of 15 best firms. Content validity was assessed by six domain experts using the content validity index, Kappa statistic, and content validity ratio (Lawshe test). Three dimensions (alignment, affective, and action-oriented) with 10 items each were identified. The item content validity index (I-CVI) ranged from 0.66 to 1, and the scale content validity index (S-CVI/Ave) ranged from 0.848 to 0.932. The instrument was found to have high content validity. It bridges the research gap of incongruity between academia and industry. The next step of research will involve testing this instrument for its psychometric properties and its comprehensiveness for respondents.

Keywords

Introduction

Human resource (HR) is the most valuable asset for an organization, but until a decade ago not many companies focused on creating a competitive advantage through their employees. Globalization and disruptive technology have led to a dynamic business environment that changes constantly, making decision-making a difficult task. Surviving cut-throat competition requires companies to be innovative, and HR has a significant role to play as it is responsible for the effective utilization of all the other resources possessed by a firm. Therefore, managing people and engaging talent become critical for an organization (Cartwright & Holmes, 2006). However, surveys around the world show engagement among employees to be alarmingly low (Blessing White, 2011; Chartered Institute of Personnel and Development [CIPD], 2015; Gallup, 2016). Gallup’s (2017) survey revealed only 15% of employees to be fully engaged worldwide. The need of the hour is to implement strategies and programs that increase the engagement level of employees and help employers capitalize on the benefits of having an engaged workforce. Implementing the right kind of engagement programs requires, first of all, accurately measuring the engagement level of employees. Over the past two decades, numerous overlapping and inconsistent definitions of the construct of employee engagement have been given by both academicians and practitioners (Church, 2011; Dalal, Brummel, Wee, & Thomas, 2008; Saks, 2006, 2008; Shuck & Wollard, 2010; Wefald & Downey, 2009). As a result, measurement tools focusing on different aspects of employee engagement have been developed and used (Blessing White, 2011; Harter, Schmidt, & Hayes, 2002; James, Mckechnie, & Swanberg, 2011; May, Gilson, & Harter, 2004; Pati & Kumar, 2011; Saks, 2006; Schaufeli, Salanova, Gonzalez-Roma, & Bakker, 2002; Shuck, Adelson, & Reio, 2016; Soane et al., 2012; Rich, Lepine, & Crawford, 2010).
Using a valid assessment instrument of employee engagement is a matter of great concern, because organizational interventions based on its results will be a leap of faith if overlapping and ambiguous constructs are measured in the name of employee engagement (Macey & Schneider, 2008). It is imperative to use measurement instruments with scales linked to the agreed-upon construct of employee engagement (Albrecht, 2010), so that the right strategies for engaging the workforce are designed and implemented and a sustainable competitive advantage is attained through an engaged workforce.

Researchers and HRD practitioners measure constructs, such as employee engagement, that are formulated at a high level of abstraction. Therefore, the assessment instruments used to measure such constructs must be validated. Validity ensures that the assessment instrument measures what it intends to measure and that it reflects the theoretical concept rather than some other phenomenon (Carmines & Zeller, 1979). Validity lies in the purpose for which an instrument is being used and is commonly assessed in three forms: content, construct, and criterion validity. Because content validity is a precondition for the other forms of validity, it must be checked first when developing an assessment instrument. The purpose of content validation is to minimize the potential error associated with operationalizing the instrument in the initial stages and to increase the probability of obtaining supportive construct validity in the later stages. Content validity shows the extent to which an empirical measurement reflects a specific domain of content (Carmines & Zeller, 1979). It reflects the degree to which the sample items taken together constitute an adequate operational definition of the construct (Polit & Beck, 2006). It also measures the degree to which elements of the measurement instrument are relevant to, representative of, and comprehensive of the construct for a particular assessment purpose (Haynes, Richard, & Kubany, 1995). It helps the researcher gain invaluable feedback from a panel of experts and develop and assess the dimensions and subdimensions of the construct intended to be measured.

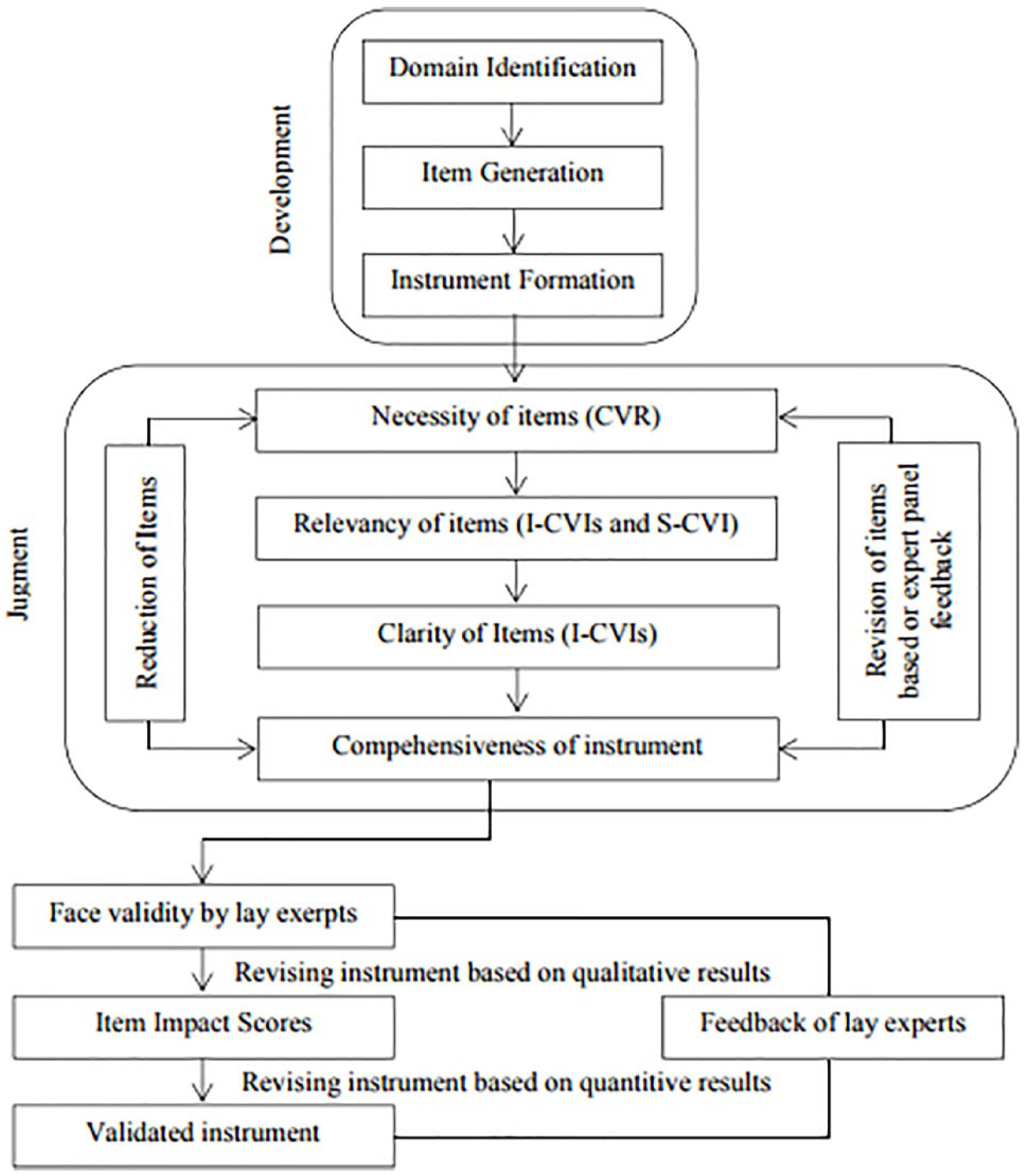

Addressing content validity begins with the development of the instrument itself. It involves a two-stage process of instrument development and judgment, which ensures that content validity is determined and quantified throughout the process of instrumentation. This article attempts to develop an assessment instrument of employee engagement and to assess the content validity of the proposed dimensions. The construct of employee engagement is generated through grounded theory methodology by conducting structured interviews with the HR heads of 15 best firms to work for in India. The content validity of the generated items is then assessed with the cooperation of six domain experts. Content validity is quantified using the content validity index (CVI), Kappa statistic, and content validity ratio (CVR; Lawshe test).

Stage 1: Instrument Development

The first stage of instrument development is performed in three steps: identifying the content domain, generating the sample items, and constructing the instrument (Zamanzadeh et al., 2014). The content domain of the construct is identified by literature review, content analysis, and/or by conducting interviews with the respondents or focus groups. These methods are used to identify an agreed-upon definition of the construct and to generate items in the preliminary stage (Figure 1).

Figure 1. Steps involved in content validity.

To determine the content domain for employee engagement, an extensive review of literature was conducted. The literature review helped the researchers identify various research gaps in the foundation of the construct. The presence of numerous, often inconsistent, definitions of employee engagement indicated a lack of conceptual clarity. A clear divergence was seen between the academic and industrial views on employee engagement (Saks, 2006). To bridge this research gap, it was considered important to develop the construct using inputs from both academicians and practitioners. For this purpose, the researchers selected 30 best companies to work for in India based on their rankings among five popular surveys (Great places to work survey, Most admired companies in India, Most respected companies in India, Best employers in India by

The researchers used the grounded theory methodology by Charmaz (2006) to generate items for the construct of employee engagement. The transcribed data were coded to capture and condense them into various dimensions of the construct. Initial, focused, and theoretical coding helped the researchers identify three dimensions for the construct of employee engagement, namely, alignment, affective, and action-oriented (Figure 2). The three stages of coding reduced 81 initial codes to 38 focused codes and finally to 30 theoretical codes (10 items for each dimension) (Table 1). A detailed explanation of the use of the grounded theory method and the generation of these items is beyond the scope of this article.

Figure 2. Dimensions of employee engagement identified through grounded theory method.

Table 1. Items for Each Dimension Identified Through Grounded Theory Method.

Stage 2: Judgment

The second stage, judgment, involves having a specific number of experts confirm the items to ensure content validity of the assessment instrument. Domain experts must be selected on the basis of criteria such as expert knowledge, specific training, or professional experience in the subject matter. It is recommended to involve a minimum of three experts in determining content validity. The maximum number of experts has not been determined; however, more than 10 experts are rarely involved, as an increase in the number of experts decreases the chances of agreement (Polit & Beck, 2006).

To assess the content validity of the items generated, the researchers selected six domain experts, three academicians and three practitioners, based on their expertise and experience in the area of employee engagement (Table 2).

Table 2. Details of the Subject Matter Experts (SMEs) Selected for Judging Content Validity.

The expert panel was then asked to give their professional subjective judgment on the items of each dimension of the construct. Both qualitative and quantitative viewpoints on the relevance, necessity, representativeness, and comprehensiveness were collected to ensure content validity of the items generated. The viewpoints of the domain experts were quantified by computing CVI, Kappa statistic, and CVR.

Quantification of Content Validity

CVI

The researchers asked the panel of experts to give their viewpoints on the items generated for the construct of employee engagement. The CVI was calculated for all individual items (I-CVI) and the overall scale (S-CVI). For CVI, the panel of experts was asked to rate each scale item in terms of its relevance to the underlying construct. A 4-point scale was used to avoid a neutral point. The four points used along the item rating continuum were 1 =

For each item, I-CVI was computed as the number of experts giving a rating of 3 or 4, divided by the total number of experts. For example, an item rated 3 or 4 by four out of five experts has an I-CVI of 0.80. It is advised that I-CVI should be 1.00 with five or fewer judges and should not be less than 0.78 with six or more judges. The S-CVI was computed to ensure content validity of the overall scale. It can be conceptualized in two ways: S-CVI (Universal agreement) and S-CVI (Average). S-CVI (Universal agreement) reflects the proportion of items on an instrument that achieved a rating of 3 or 4 from all the experts in the panel. S-CVI (Average) is the more liberal interpretation of the scale validity index and is computed as the average I-CVI. S-CVI (Average) emphasizes average item quality rather than the average performance of the experts. It is recommended that S-CVI be at least 0.8 to reflect content validity (Lynn, 1986; Polit & Beck, 2006; Rubio, Berg Weger, Tebb, Lee, & Rauch, 2003).
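The computations described above can be illustrated with a minimal Python sketch. The function names and the example ratings are hypothetical and are not the study's data; the sketch only assumes that each item is represented by a list of the experts' ratings on the 4-point relevance scale.

```python
from typing import List


def item_cvi(ratings: List[int]) -> float:
    """I-CVI: number of experts rating the item 3 or 4, divided by all experts."""
    relevant = sum(1 for r in ratings if r >= 3)
    return relevant / len(ratings)


def scale_cvi_average(all_ratings: List[List[int]]) -> float:
    """S-CVI (Average): mean of the I-CVI values across all items."""
    i_cvis = [item_cvi(r) for r in all_ratings]
    return sum(i_cvis) / len(i_cvis)


def scale_cvi_universal(all_ratings: List[List[int]]) -> float:
    """S-CVI (Universal agreement): share of items rated 3 or 4 by every expert."""
    unanimous = sum(1 for r in all_ratings if all(x >= 3 for x in r))
    return unanimous / len(all_ratings)


# Hypothetical ratings from six experts for three items (illustration only).
example_ratings = [
    [4, 4, 3, 4, 3, 4],  # all six experts rate 3 or 4
    [4, 3, 4, 2, 4, 3],  # five of six experts rate 3 or 4
    [3, 2, 4, 1, 4, 3],  # four of six experts rate 3 or 4
]

for number, ratings in enumerate(example_ratings, start=1):
    print(f"Item {number}: I-CVI = {item_cvi(ratings):.2f}")
print(f"S-CVI (Average) = {scale_cvi_average(example_ratings):.2f}")
print(f"S-CVI (Universal agreement) = {scale_cvi_universal(example_ratings):.2f}")
```

With six experts, only the first two hypothetical items would meet the 0.78 threshold for I-CVI; the third (four of six experts) would be flagged for revision.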

Kappa Statistic

CVI is used extensively by researchers for determining content validity. However, it does not account for values inflated by the possibility of chance agreement. Computing the Kappa coefficient therefore gives a better understanding of content validity, as it removes random chance agreement. The Kappa statistic is a consensus index of interrater agreement that supplements CVI to ensure that the agreement among experts is beyond chance. Computing the Kappa statistic requires the probability of chance agreement, that is, $P_c = \left[\frac{N!}{A!\,(N - A)!}\right] \times 0.5^{N}$, where N = number of experts in the panel and A = number of experts in the panel who agree that the item is relevant. The Kappa statistic is then calculated as $K = (\text{I-CVI} - P_c) / (1 - P_c)$. Values above 0.74, between 0.60 and 0.74, and between 0.40 and 0.59 are considered excellent, good, and fair, respectively (Polit & Beck, 2006; Zamanzadeh et al., 2014).
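As an illustration of the two formulas above, the following Python sketch computes the probability of chance agreement and the Kappa statistic from the number of experts (N) and the number who agree that the item is relevant (A). The function names are hypothetical, and the label returned for values below 0.40 is an assumption, since the evaluation criteria cited above do not name that range.

```python
from math import comb


def chance_agreement(n_experts: int, n_agree: int) -> float:
    """Pc = [N! / (A! (N - A)!)] * 0.5^N."""
    return comb(n_experts, n_agree) * 0.5 ** n_experts


def kappa_statistic(n_experts: int, n_agree: int) -> float:
    """K = (I-CVI - Pc) / (1 - Pc), with I-CVI = A / N."""
    i_cvi = n_agree / n_experts
    pc = chance_agreement(n_experts, n_agree)
    return (i_cvi - pc) / (1 - pc)


def interpret_kappa(kappa: float) -> str:
    """Apply the evaluation criteria cited in the text."""
    if kappa > 0.74:
        return "excellent"
    if kappa >= 0.60:
        return "good"
    if kappa >= 0.40:
        return "fair"
    # Assumption: the text gives no label for values below 0.40.
    return "below fair"


# A panel of six experts, with 6, 5, or 4 of them rating the item as relevant.
for agree in (6, 5, 4):
    k = kappa_statistic(6, agree)
    print(f"A = {agree}: Pc = {chance_agreement(6, agree):.4f}, "
          f"K = {k:.2f} ({interpret_kappa(k)})")
```

For a panel of six, agreement by five experts gives an I-CVI of 0.83 and a Kappa of approximately 0.82 after the chance-agreement correction.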

CVR

CVR according to the Lawshe test is computed to specify whether an item is necessary for operating a construct in a set of items or not. For this, the expert panel was asked to give a score of 1 to 3 to each item ranging from

Results

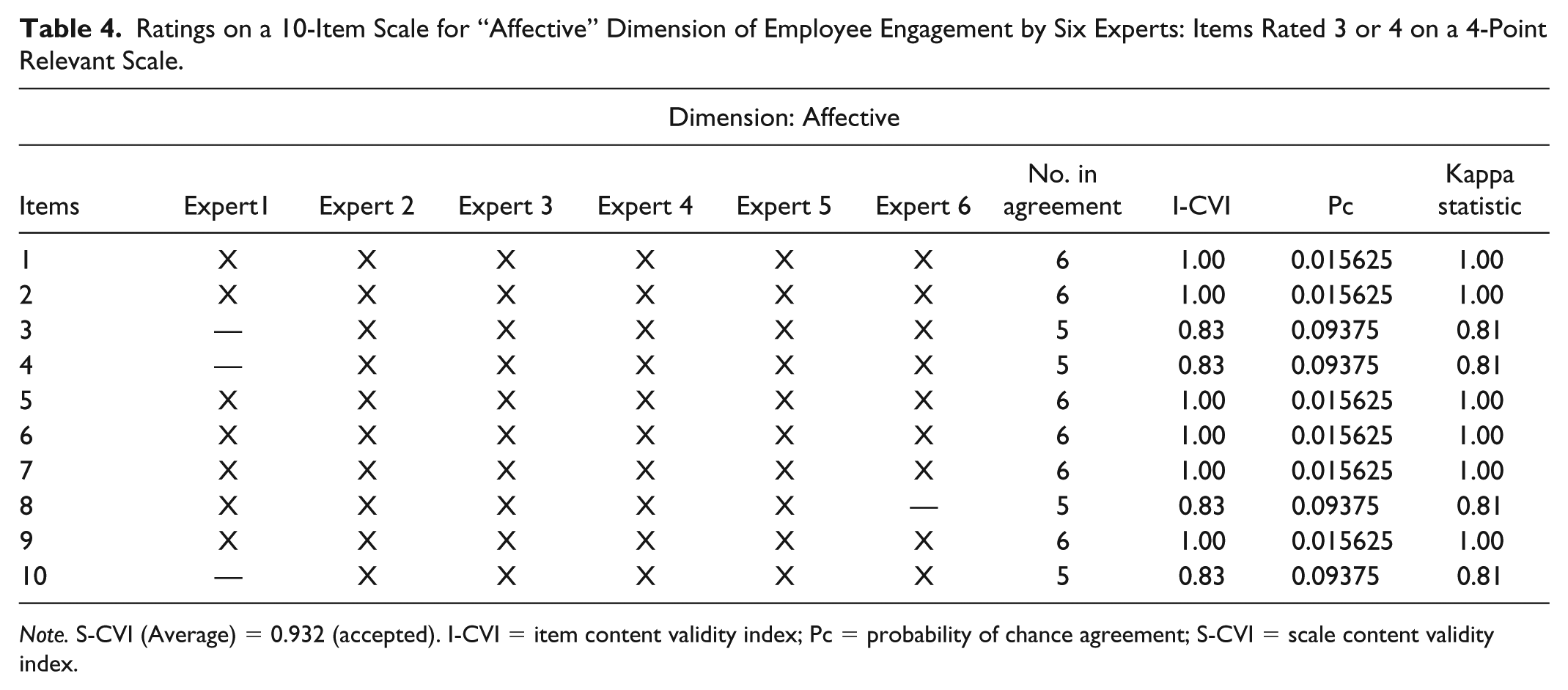

The I-CVI for all the items of the three dimensions ranged from 0.66 to 1. The S-CVI (Average) for the alignment, affective, and action-oriented dimensions of employee engagement was 0.898 (Table 3), 0.932 (Table 4), and 0.864 (Table 5), respectively. The overall S-CVI for the 30-item scale was 0.89, which indicated high content validity of the items for the construct of employee engagement. Items with an I-CVI of 0.66 indicated a need for revision. The Kappa statistic ranged from 0.81 to 1 for most of the items. Three items with a negative Kappa coefficient reflected disagreement among raters regarding their inclusion in the construct of employee engagement. CVR for the items reflected the proportion of panelists rating an item as “essential.” Two items of the 30-item scale were rated as “not necessary” by more than half of the domain experts, as reflected in their negative CVR (Table 6). CVR ranged from 0 to 1 for the other items on the scale, indicating that half or more of the panelists rated these items as essential for the construct of employee engagement.

Table 3. Ratings on a 10-Item Scale for “Alignment” Dimension of Employee Engagement by Six Experts: Items Rated 3 or 4 on a 4-Point Relevant Scale.

Table 4. Ratings on a 10-Item Scale for “Affective” Dimension of Employee Engagement by Six Experts: Items Rated 3 or 4 on a 4-Point Relevant Scale.

Table 5. Ratings on a 10-Item Scale for “Action-Oriented” Dimension of Employee Engagement by Six Experts: Items Rated 3 or 4 on a 4-Point Relevant Scale.

Table 6. CVR for Items of Each Dimension Where Ne Represents the Number of Experts Who Rate an Item as “Essential.”
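The relationship between Ne and the sign of CVR reported above can be illustrated with a short Python sketch. The CVR formula is not reproduced in this section, so the expression used here, CVR = (Ne − N/2) / (N/2), is an assumption taken from the Lawshe test as it is commonly stated; the function name and the example counts are hypothetical and are not the study's data.

```python
def content_validity_ratio(n_essential: int, n_experts: int) -> float:
    """Lawshe's CVR as commonly stated (assumed here): (Ne - N/2) / (N/2)."""
    if not 0 <= n_essential <= n_experts:
        raise ValueError("n_essential must lie between 0 and n_experts")
    half = n_experts / 2
    return (n_essential - half) / half


# Hypothetical counts of "essential" ratings from a panel of six experts.
for n_essential in (6, 5, 3, 2):
    cvr = content_validity_ratio(n_essential, 6)
    print(f"Ne = {n_essential}: CVR = {cvr:.2f}")
```

Under this formula, CVR is 1 when all experts rate an item as essential, 0 when exactly half do, and negative when fewer than half do, which is consistent with the interpretation of the CVR values given in the results.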

Conclusion

The process of measurement involves linking abstract concepts to empirical indicants. Social science research involves formulating concepts at a high level of abstraction, which makes them difficult to measure. Content validity ensures that the operationalization of the construct is based on items taken from the specific domain of content relevant to the particular measurement situation. The content validity of the assessment instrument for employee engagement was assessed through a systematic two-stage process. In the first stage, instrument development, 30 items were identified using the grounded theory method by conducting structured interviews with 15 HR heads of best companies to work for in India. In the second stage, judgment, six domain experts were asked to rate the items on the basis of their relevance and necessity. The quantification of content validity on the basis of CVI (I-CVI and S-CVI), Kappa coefficient, and CVR indicated high content validity for the items. Computing content validity for the construct helped reduce the incongruity between the academic and industrial views on employee engagement, because the identification of the content domain was well grounded and the development of the instrument was based on an agreed-upon definition. However, measurement instruments must also be subjected to rigorous testing of their psychometric properties. Future research should therefore check the instrument for reliability and for other forms of validity, such as face, construct, and criterion validity, to improve the applicability of the assessment instrument.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.