Abstract

This article explores the degree of consensus on the validity status of Mintzberg’s configuration theory following a test in which the theory was refuted. The 218 articles that have cited the award-winning article by Doty, Glick, and Huber, and 89 articles and 12 books published by Mintzberg after the test, are reviewed. None of the reviewed articles contained any discussion about the implications for Mintzberg’s theory. It is then discussed whether the test was exhaustive and whether the lack of debate should be interpreted as tacit agreement with Doty et al. Normative aspects of a silent research community are also discussed. It is argued that it has not been proved beyond reasonable doubt that the test is exhaustive and that there are sociological explanations for the lack of debate other than “silence implies agreement.” Finally, it is argued that it would have been fruitful if the test had stirred debate.

Keywords

Ours is not a field that systematically builds and acknowledges foundational contributions. Instead, usually we move on or simply forget and later reinvent.

This article explores the degree of consensus on the validity status of Mintzberg’s (1979) configuration theory following a test in which the theory was refuted (Doty, Glick, & Huber, 1993). The motivation for this was a practical question: How to organize a battleship? Following the statement “nothing is as practical as a good theory” (Lewin, 1945, p. 129), the search for a good theory about designing an organization began. Of the countless theories to choose from (Cummings & Worley, 2009), Mintzberg’s theory on the structuring of organizations is one of the most acclaimed (e.g., Fiss, 2011; Groth, 2012; Pollitt, 2005). It is described by one author as a “monument” in organizational design research (Nesheim, 2010, p. 66). However, several organizational researchers have referred to the somewhat paradoxical situation that, although Mintzberg’s theory enjoys widespread popularity, there are surprisingly few studies in which the theory has been tested as a representation of organizational reality (Donaldson, 1996; Doty et al., 1993; Meyer, Tsui, & Hinings, 1993; Miller, 1996; Strand, 2007). Given that testing is an important part of the theory development process (McKinley, 2010), there should be great interest in testing this popular theory. In 1993, Doty et al. (1993) won the Academy of Management Journal’s article of the year award for the article “Fit, Equifinality, and Organizational Effectiveness: A Test of Two Configurational Theories.” The article was recently described as one of the “most influential and path-breaking studies” (Fiss, 2011, p. 402). The configurational theories tested in Doty et al. were Mintzberg’s theory and Miles and Snow’s theory (1978). While Miles and Snow received support in the test, Mintzberg did not. Doty et al. rather unexpectedly concluded that “until other researchers can provide empirical support for Mintzberg’s work, we are unable to conclude that either the typology or the theory is valid” (p. 1243).
According to several authors, positivist-inspired research is (still) the governing norm in the social sciences and organization theory (e.g., Alexander, 1982; Bailey, 1992; Bort & Kieser, 2011; Flyvbjerg, 2006). Thus, one would expect that a negative test of Mintzberg’s theory would stir debate in the research community and, consequently, lead to a consensus on a corrected version or a replacement theory. As Mintzberg (1989) himself puts it, anomalies should be cherished. Strong voices have argued, however, that the research community may not be as organized as the image of researchers as a community of puzzle solvers might indicate. According to some authors, the community is fragmented (e.g., Scott, 2003), fashion is an important norm (Bort & Kieser, 2011), and the development of new theory has become an end in itself (McKinley, 2010). Schwarz, Clegg, Cummings, Donaldson, and Miner (2007) polemically ask whether researchers “see ‘dead’ themes and paradigms without realizing that they are moribund?” A review of the 218 articles that, according to Thomson Reuters Web of Knowledge (2011), cite Doty et al. (1993) and of the works published by Mintzberg after the test should provide interesting material for exploring the degree of consensus on the validity status of Mintzberg’s theory. Is Mintzberg’s theory still considered to be “a good theory?” How has the test been received by the research community during the almost 20 years since its publication? Has the anomaly been cherished?

The Basic Argument in Mintzberg’s Organizational Configuration Theory

According to Doty et al. (1993), Mintzberg’s configuration theory consists of two parts. First, it provides a rich typology for describing organizational and contingency factors. The typology consists of five configurations, labeled the Simple, Machine, Professional, Diversified, and Innovative configurations; Mintzberg underscores that these are pure or ideal types. This limited number of configurations is supposed to be sufficient to classify any organization. These configurations or ideal types form the boundaries of a pentagon within which real structures can be found (Mintzberg, 1979). Second, it is a theory that proposes that organizational effectiveness can be predicted by the degree of similarity between a real organization and one or more of the configurations identified in the typology. In a given context, one of the organizational configurations is predicted to be more effective than the others. For instance, if an organization is to operate effectively in a machine context, it should be designed as a machine structure.

Testing Mintzberg’s Configuration Theory—The Doty et al. Study

Doty et al.’s (1993) motivation for testing Mintzberg’s configuration theory came from what they saw as a somewhat paradoxical situation: “Few theories have received so much attention in management textbooks and organizational science journals with such meager empirical support” (p. 1197). To test the theory, Doty et al. proposed three logical steps for developing testable quantitative models.

First, the organizational configurations identified in the theory must be conceptualized and modeled as ideal types. Because they are ideal types, some deviation between a given organization and the configurations is to be expected. It is therefore necessary to construct a method to measure this deviation. Given the complexity of the configurations, Doty et al. (1993) argue that measuring deviation will require a multivariate profile analysis. They use 15 variables to create a profile of organizational design configurations: vertical decentralization, selective decentralization, direct supervision, standardization of work, standardization of skills, standardization of outputs, mutual adjustment, formalization, hierarchy of authority, specialization, and the relative percentage of personnel in each of the five organizational parts (operating core, middle line, technostructure, support staff, and strategic apex). Six variables were used to create a profile of the organizational context: environmental complexity, environmental turbulence, analyzability, number of expectations, age, and number of employees. A panel of experts on Mintzberg’s theory was asked to score each ideal type configuration on the identified variables. The interrater reliabilities for the variables range from .6 to 1.0 (intraclass correlation [ICC]; Doty et al., 1993). The variables used to measure organizational effectiveness were efficiency, human relations, quality, and costs (Doty et al., 1993).

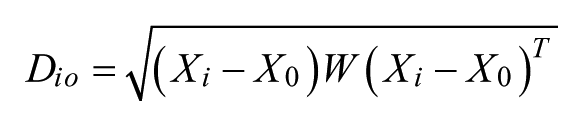

The average result from the experts’ ratings was used as a template for calculating the deviation between a real organization and the different ideal types based on the following formula (Doty et al., 1993):

$$D_{io} = \sqrt{(X_i - X_o)\, W \,(X_i - X_o)^{\top}}$$

where

$D_{io}$ = the distance between ideal type i and organization o,

$X_i$ = a 1 × j vector that represents the value of ideal type i on attribute j,

$X_o$ = a 1 × j vector that represents the value of organization o on attribute j,

and

$W$ = a j × j diagonal matrix that represents the theoretical importance of attribute j to ideal type i.
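As a rough illustration of the deviation score defined by the symbols above, the following sketch assumes a weighted Euclidean form: because W is diagonal, the product of the difference vector, W, and the transposed difference vector reduces to a weighted sum of squared differences. The function name and the three-attribute profiles are hypothetical and are not taken from Doty et al. (1993).

```python
import math


def weighted_distance(ideal, org, weights):
    """Weighted Euclidean distance between an ideal-type profile and an organization.

    `weights` holds the diagonal of W, i.e. the importance of each attribute.
    """
    if not (len(ideal) == len(org) == len(weights)):
        raise ValueError("profiles and weights must have the same length")
    squared = sum(w * (xi - xo) ** 2 for xi, xo, w in zip(ideal, org, weights))
    return math.sqrt(squared)


# Hypothetical profiles on three attributes (illustrative values only).
ideal_machine = [5.0, 1.0, 4.0]
organization = [2.0, 1.0, 0.0]
equal_weights = [1.0, 1.0, 1.0]

distance = weighted_distance(ideal_machine, organization, equal_weights)
print(distance)  # 5.0
```

A smaller value indicates that the organization lies closer to the ideal type; setting a weight to zero removes that attribute from the comparison.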

Second, a model of fit must be developed that is consistent with the fit assertions in the theory. The purpose of measuring fit is to be able to test the assumption in configuration theory that, the better the fit among the contextual and structural factors, the more effective the organization is predicted to be. Doty et al. (1993) measure fit as the deviation between the ideal type profile of the different configurations stated in the theory and a given organization. Hence, the smaller the deviation calculated using the above formula between an organization and an ideal type, the better the fit.

The third and final step consists of modeling equifinality. Equifinality means that an organization can reach the same level of effectiveness from different initial conditions and by a variety of paths (Doty et al., 1993). As most configurational theories identify multiple effective ideal types of organizations, the assumption of equifinality must be interpreted and integrated with the model of fit (Doty et al., 1993). In Mintzberg’s theory, all of the configurations are said to be maximally effective. The degree of effectiveness is dependent on context, however. To Doty et al. (1993), the question is how to identify constraints on the ideal types that an organization can adopt to be effective. They presented four kinds of restrictions or models of fit and equifinality:

Ideal types fit, implying that “an organization will be effective if it closely resembles any one of the ideal types in the theory.”

Contingent ideal type fit, implying that “an organization must mimic the one ideal type that is most congruent with the contingencies facing it” to be effective.

Contingent hybrid types fit, implying that there are an infinite number of hybrid contexts paired with an infinite number of hybrid designs, but the one hybrid type that an organization must mimic to remain effective is determined by the unique contingencies that the organization faces.

Hybrid types fit is the least restrictive model and implies that “an organization is free to mimic any hybrid of the initial ideal types and remain effective.”

The models as simultaneous processes means that the four abovementioned models are used in combination.

Based on these models, seven hypotheses were derived to test Mintzberg’s typology and theory on data collected from top executives in a sample of 128 organizations from various U.S. industries. To control for the possibility that, at any given time, an organization’s design will be related to its context at some earlier time, data were collected twice from the same organizations at a 1-year interval; 85 organizations responded the second time (Doty et al., 1993).

Hypotheses and Results in Doty et al.

Testing Mintzberg’s Typology

To test Mintzberg’s typology, the organizations in the sample were first classified into the categories identified in the typology. Each organization was assigned to the design configuration and the contextual configuration with the smallest measured deviation score. Thus, an organization may, for example, be classified as a Machine design configuration operating in an Adhocracy context. The first hypothesis derived to test Mintzberg’s typology proposed that “Each of Mintzberg’s five ideal-type configurations will be associated with a unique contextual configuration” (Doty et al., 1993, p. 1207). The hypothesis suggests a relationship between the classifications of the organizations’ designs and contexts. A “maximum likelihood ‘log linear’ analysis” on the resulting contingency table (Contextual configuration × Design configuration) failed to reject the null hypothesis of independence between the organizational designs and contexts (Doty et al., 1993, p. 1218). A second hypothesis was tested to control for the possibility that there may be a time lag between changes in an organization’s context and organizational design: “Each of the five design configurations measured at time 2 will be associated with a unique contextual configuration measured at time 1” (Doty et al., 1993, p. 1208). The null hypothesis of independence was not rejected this time either (Doty et al., 1993).
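The classification and cross-tabulation steps described above can be sketched as follows. This is a minimal illustration, not Doty et al.’s procedure: the deviation scores are invented, only two of the five configurations are used, and the likelihood-ratio statistic G is shown as one common statistic for testing independence in a two-way table, on the assumption that it is comparable to the log-linear analysis reported in the study.

```python
import math
from collections import Counter


def classify(deviations):
    """Return the ideal type with the smallest deviation score."""
    return min(deviations, key=deviations.get)


def g_statistic(table):
    """Likelihood-ratio statistic G = 2 * sum(O * ln(O / E)) for a two-way table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    g = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            if observed > 0:  # empty cells contribute nothing to G
                expected = row_totals[i] * col_totals[j] / n
                g += 2.0 * observed * math.log(observed / expected)
    return g


# Hypothetical deviation scores for three organizations (not data from the study).
organizations = [
    {"design": {"Simple": 0.9, "Machine": 0.4}, "context": {"Simple": 0.3, "Machine": 0.8}},
    {"design": {"Simple": 0.2, "Machine": 0.7}, "context": {"Simple": 0.1, "Machine": 0.6}},
    {"design": {"Simple": 0.8, "Machine": 0.3}, "context": {"Simple": 0.9, "Machine": 0.2}},
]

labels = ["Simple", "Machine"]
pairs = Counter((classify(o["context"]), classify(o["design"])) for o in organizations)
# Rows are contextual configurations, columns are design configurations.
table = [[pairs[(c, d)] for d in labels] for c in labels]
print(table)
print(g_statistic(table))
```

Under independence, G is compared against a chi-square distribution with (rows − 1) × (columns − 1) degrees of freedom; a small G, as in the study, means that the null hypothesis of independence cannot be rejected.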

Testing Mintzberg’s Theory

Mintzberg’s work is not only an attempt to classify organizations. As pointed out by Doty et al. (1993), Mintzberg is concerned with effective organizational forms. Thus, Doty et al. proposed that the reason the relationships expected in the previous hypothesis were not found might be due to ineffectiveness. Doty et al. therefore derived hypotheses to test aspects of Mintzberg’s propositions about the relations between organization, context, and effectiveness, that is, Mintzberg’s theory.

First, Doty et al. (1993) hypothesize that “An organization with the correct pairing between its design and contextual configuration will be more effective than an organization with an incorrect design-context pairing” (p. 1207). An example of correct pairing would be that an organization classified as operating in a Machine configuration context had adopted a Machine design configuration. In addition, t tests were used to explore whether there was a significant difference in effectiveness between the group of organizations that had adopted designs that, according to Doty et al.’s interpretation of Mintzberg’s theory, should be appropriate to the context and the group of organizations that had not adopted the appropriate design. Ten t tests, a t test for each of the five effectiveness variables at Times 1 and 2, revealed no significant differences between the two groups. Thus, the hypothesis was not supported (Doty et al., 1993). To test the longitudinal version of the hypothesis, Doty et al. conducted five more t tests to see if there were any differences in the effectiveness variables taken at Time 2 between the two groups of organizations classified at Time 1. No significant results were found.
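The group comparison described above can be sketched with a two-sample t statistic. The source does not state which variant of the t test was used, so the equal-variance (pooled) form below is an assumption, and the effectiveness scores are invented for illustration.

```python
import math
import statistics


def pooled_t_statistic(group_a, group_b):
    """Student's t statistic for two independent groups, assuming equal variances."""
    n_a, n_b = len(group_a), len(group_b)
    mean_diff = statistics.mean(group_a) - statistics.mean(group_b)
    pooled_var = (
        (n_a - 1) * statistics.variance(group_a)
        + (n_b - 1) * statistics.variance(group_b)
    ) / (n_a + n_b - 2)
    standard_error = math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return mean_diff / standard_error


# Hypothetical effectiveness scores (not data from the study).
matched = [3.0, 4.0, 5.0]      # design fits the context
mismatched = [2.0, 3.0, 4.0]   # design does not fit the context

t_value = pooled_t_statistic(matched, mismatched)
degrees_of_freedom = len(matched) + len(mismatched) - 2
print(t_value, degrees_of_freedom)
```

The statistic is then compared with the critical value of the t distribution for the given degrees of freedom. With one test per effectiveness variable and measurement time, the number of comparisons grows quickly, which is worth keeping in mind when reading isolated significant results.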

The next step in Doty et al.’s (1993) analysis was to test the assumption in Mintzberg’s theory that “effective structuring requires an internal consistency among the design factors” (p. 1208). The hypothesis was formulated as, “The greater an organization’s ideal types fit, the greater the organization’s effectiveness” (Doty et al., 1993, p. 1208). To test this hypothesis, the correlations between measured deviation from the ideal type and the effectiveness variables were analyzed. None of the 10 correlations between the measures of ideal type fit and effectiveness was significant. This hypothesis was thereby not supported. However, as with the test of Mintzberg’s typology, there may be a time lag between adopted organizational design and effectiveness. Hence, the measures of ideal type fit at Time 1 were correlated with measures of effectiveness at Time 2. This time, one of five correlations, the efficiency measure of effectiveness, was significant but small (r = .22, p < .05). Doty et al. (1993) concluded that the longitudinal version of the hypothesis was not supported either.
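The cross-sectional and lagged correlations described above can be sketched as follows. The organization identifiers, the scores, and the helper names are hypothetical; the only point taken from the text is that the lagged version pairs a Time 1 fit measure with a Time 2 effectiveness measure, which requires restricting the analysis to organizations observed at both times.

```python
import math


def pearson_r(x, y):
    """Pearson product-moment correlation between two equally long sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def lagged_correlation(fit_t1, effectiveness_t2):
    """Correlate Time 1 fit with Time 2 effectiveness for organizations present in both waves."""
    common = sorted(set(fit_t1) & set(effectiveness_t2))
    return pearson_r([fit_t1[k] for k in common], [effectiveness_t2[k] for k in common])


# Hypothetical scores keyed by organization id (not data from the study).
fit_time1 = {"a": 1.0, "b": 2.0, "c": 3.0, "d": 4.0}
effectiveness_time2 = {"a": 2.0, "b": 4.0, "c": 6.0}  # "d" did not respond at Time 2

r_lagged = lagged_correlation(fit_time1, effectiveness_time2)
print(r_lagged)
```

Whether a positive or a negative coefficient counts as support depends on how the fit measure is scored, so the sign convention has to be fixed before the results are interpreted.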

As pointed out, however, Mintzberg supposes a relation between context, organization, and effectiveness. Doty et al. (1993) therefore hypothesized that “The greater an organization’s contingent ideal types fit, the greater the organization’s effectiveness” (p. 1209). The same procedure as the previous test was used to test this hypothesis. Doty et al. found one significant relationship between degree of fit and the efficiency variable at Time 1 (r = −.21, p < .05), and the same relationship was found at Time 2 (r = −.21, p < .05). The direction of both these correlations was the opposite of the predicted direction, however. The longitudinal version of the test revealed no significant correlations. Thus, this hypothesis was not supported (Doty et al., 1993).

Mintzberg theorizes that organizations often function in a world of conflicting contingencies and that, as a result, hybrid types can be expected to be effective. Doty et al. (1993) formulated the following hypothesis to test that assumption: “The greater an organization’s contingent hybrid types fit, the greater the organization’s effectiveness” (p. 1209). The same procedure as the two previous tests was used to test this hypothesis. This test returned two significant correlations at Time 1: the relationship between degree of fit and cost (r = −.18, p < .05) and the relationship between degree of fit and efficiency (r = −.21, p < .05), but, again, in the opposite direction to that predicted. Neither of the correlations at Time 2 or in the longitudinal version was significant. Thus, this hypothesis was not supported (Doty et al., 1993).

Finally, Doty et al. (1993) tested the possibility that the different models of fit represent simultaneous processes rather than mutually exclusive ones, and hypothesized that “The greater an organization’s ideal types, contingent ideal types and contingent hybrid types fit, the greater the organization’s effectiveness” (p. 1209). To test this hypothesis, the canonical correlation between the three measures of fit and the five measures of effectiveness was calculated. None of the cross-sectional or longitudinal correlations was significant. Thus, this hypothesis was not supported (Doty et al., 1993).

Given the popularity and intuitive appeal of Mintzberg’s configuration theory, Doty et al. decided to test an alternative configurational theory. The results of the second test provided support for Miles and Snow’s (1978) theory. Doty et al. (1993) argue that the support found for the alternative theory using the same methodology, and with the same respondents in the same organizations, is an indication of the validity of their test methodology.

Doty et al. (1993) concluded that “until other researchers can provide empirical support for Mintzberg’s work, we are unable to conclude that either the typology or the theory is valid” (p. 1243). At the very least, they hoped that the results they presented would stimulate some revisions of Mintzberg’s theory (Doty et al., 1993). That expectation will be explored in the next section.

Exploring the Degree of Consensus on the Validity Status of Mintzberg’s Configuration Theory

To explore the degree of consensus on the validity status of Mintzberg’s configuration theory, Thomson Reuters Web of Knowledge was used to find research referring to Doty et al.’s test. The search turned up 218 references. The 218 articles were then searched for references to Mintzberg’s work on configuration theory. That search returned 41 articles that will be studied in this section to see whether and how the result was discussed.

The Research Community; Observing the Negative Test of Mintzberg

The negative result with regard to Mintzberg’s configuration theory was mentioned in five articles (Gresov & Drazin, 1997; Miller, 1996; Pagell & Krause, 2002; Peng, Tan, & Tong, 2004; Sinha & Van de Ven, 2005). However, none of the articles included a discussion of the implications for Mintzberg’s theory. The first of these articles, chronologically, was written by one of Mintzberg’s former colleagues at McGill University, Danny Miller (1996). After having described Mintzberg’s typology as an exemplary one, in contrast to many others that “appear thin and arbitrary,” Miller comments that it is “unfortunate that many typologies are never tested empirically, and those that are fail usually to be borne out (Doty et al., 1993)” (p. 506). In a footnote to the quoted statement, Miller explains that this is partly due to great variance in approaches to testing the typologies. Studies use different variables, operationalizations, and samples. Moreover, conflicting results are seldom resolved due to the lack of cumulative work. In the next paragraph in the article, Miller continues to use Mintzberg as an example of “good typologies” without further discussion of Doty et al.

Gresov and Drazin (1997) write that

The authors [Doty et al.] tested four different fit models, of which two were contingent and two equifinal, using first the typology elaborated by Mintzberg (1979) . . . none of the fit models was supported for the Mintzberg model. (p. 417)

After that, no more space is devoted to the Mintzberg theory. But Gresov and Drazin do give attention to the results of the Miles and Snow test. Doty et al. argue that finding support for Miles and Snow’s theory with the same method that produced the negative test of Mintzberg’s theory indicates the validity of their method. In light of this argument, it is relevant to mention that, according to Gresov and Drazin, “Researchers can now use this methodology, and creative variations of it, to test fit-performance predictions for theoretically derived theories” (p. 423). There are no explicit references to Mintzberg’s theory in this last quote, but, given the general formulation of the statement, it seems reasonable to interpret it as providing support for the methodology Doty et al. used to test Mintzberg’s theory as well. Consequently, it can be interpreted as support for Doty et al.’s results (i.e., it is Mintzberg’s theory that is invalid, not the methodology used to test the theory). However, the test also depends on valid operationalization of Mintzberg’s theory, which is not discussed.

Pagell and Krause (2002) mention the result in a review of the literature examining external fit and performance. They observe that Doty et al. found that organizations that correspond to one of Miles and Snow’s ideal types did have enhanced performance, while Mintzberg’s typology did not distinguish between high and low performing organizations. Pagell and Krause do not elaborate on this issue, however. Peng et al. (2004) comment that, as the Mintzberg typology does not hold up as well as the Miles and Snow typology in the test by Doty et al., they decided to build their analysis on Miles and Snow’s typology. Finally, Sinha and Van de Ven (2005) also comment that Doty et al. in a “pioneering study . . . found no evidence for Mintzberg model” (p. 397). And, like Gresov and Drazin (1997), Sinha and Van de Ven claim that Doty et al.’s method for modeling ideal types is “useful and appropriate for testing theoretical configurations” (p. 397). Sinha and Van de Ven do raise a few questions concerning the methodology (e.g., how new configurations can be discovered). These are general questions, however, and not directed explicitly at the test of Mintzberg.

The Research Community; Using Mintzberg’s Theory Analytically and Referring to Doty et al. in the Same Article Without Commenting on the Test

In 4 of the 41 articles, the authors have chosen to use Mintzberg’s theory as an important analytical tool in their analysis without commenting on the results from Doty et al. even though they use Doty et al. as a reference in the article. The first of these, chronologically, is Johnston and Yetton (1996), who identified three fit typologies that could be utilized in their analysis: Burns and Stalker (1961), Miles and Snow (1978), and Mintzberg (1979). They selected Mintzberg “because it provides a more richly detailed and sophisticated treatment of ideal type organizational forms” (Johnston & Yetton, 1996, p. 195). As Johnston and Yetton use Doty et al. to document the claim that organizations resembling an ideal type configuration are hypothesized to be more effective, it seems reasonable to expect a comment on Doty et al.’s test of Miles and Snow, and Mintzberg.

However, Doty et al. neither actually test nor discuss the validity of Mintzberg’s classification of organizational forms. What they tested and found unsupported was a hypothesis suggesting that each of the ideal typical organizational forms will be associated with a unique contextual configuration (Doty et al., 1993), and the classification of both organizational forms and contexts was based on a template derived by Doty et al., not by Mintzberg. Johnston and Yetton (1996) found that each of the two IT organizations they analyzed turned out to be close to one of the ideal types. One organization resembled the machine and the other the divisionalized configuration. They concluded that their study demonstrated the importance of configurational analysis (Johnston & Yetton, 1996), an analysis that was based on Mintzberg’s typology. However, they do not discuss how their result might supplement or compete with Doty et al.’s result.

In an article on using configurations as a theoretical approach to studying health service organizations, Reeves, Duncan, and Ginter (2003) use a reference to Doty et al. in the introduction to their article to support a claim that, as organizations and environments increase in complexity, it becomes more difficult for the organizations to decide how to achieve fit with the environment. Reeves et al. (2003) draw upon Mintzberg’s configurations in support of their own taxonomy. In the discussion section, they claim that Mintzberg’s “theoretical work is supported, but as would be expected, not duplicated in this study of health care organizations” (Reeves et al., 2003, p. 40). Why the authors do not use this opportunity to discuss Doty et al.’s unsupportive results remains unanswered.

To develop a theoretical model for studying management accounting systems (MAS), Gerdin (2005) leans quite extensively on Mintzberg’s work, for example, to develop an organizational structure variable he calls “The Simple Unit.” Mintzberg was also used in support when Gerdin looked for possible explanations for results that did not conform to prior research on MAS: “Contrary to expectations, Rudimentary MASs were somewhat overrepresented. However, the concept of equifinality may help to explain this finding . . . Mintzberg (1983, p. 7) argued that ‘direct supervision effected through the superstructure and standardization of work processes emerge as key mechanisms to coordinate the work in functional structures.’” (p. 118). And in the conclusion, Doty et al. are used as an example of researchers who have “explicitly taken up the concept of equifinality in their empirical work” (Gerdin, 2005, p. 120). Gerdin does not comment on Doty et al.’s empirical work on Mintzberg, however.

In a discussion on the implementation of information systems in hospitals, Lapointe and Rivard (2007) present an analytical model covering the individual, group, and organizational levels. Lapointe and Rivard state that predictions based on the template they use at the organization level are not fully developed, but they write, “It is strongly grounded in theory because it is derived from Mintzberg’s model of configurations (OC; 1979, 1980), which has been extensively used since its publication (Doty et al., 1993)” (p. 91). Doty et al. (1993) do write that Mintzberg’s theory has been extensively used, but they also emphasize that few theories have received so much attention with such meager empirical support and that Mintzberg’s theory is invalid.

That said, extensive use might perhaps be as good an indication of the usefulness of a theory in the social sciences as a quantitative test. In addition, Doty et al. tested the ability of Mintzberg’s theory to classify and predict organizational effectiveness, while what Lapointe and Rivard discuss is implementation. At the end of their discussion, Lapointe and Rivard (2007) state that they are confident enough in the soundness of their theoretical foundation and the richness of their findings “to offer practical design” (p. 105). Mintzberg’s theory thus seems to have passed Lapointe and Rivard’s test of usability. They do not give any advice, however, on how to interpret that result compared with Doty et al.’s result.

The Research Community; Citing Mintzberg and Doty et al. Within the Same Set of Brackets Without Commenting on the Test

Both Mintzberg and Doty et al. were cited in the same reference in five articles (i.e., within the same pair of brackets), but without any advisory comments on how to interpret the results in Doty et al. (Nickerson & Zenger, 1999, 2002; Palthe & Kossek, 2003; Rivkin & Siggelkow, 2003; Zott & Amit, 2008). A quote from the first of these articles, chronologically, illustrates this: “Attempts to structure organizational forms that deviate from these commonly found clusters are lower performing (Doty et al., 1993; Mintzberg, 1979)” (Nickerson & Zenger, 1999, p. 48). As Doty et al. claim that Mintzberg’s typology cannot be used to separate low performing from high performing organizations, it is rather difficult to interpret what the intention behind this reference actually is.

However, an example from these articles illustrates that, even though it can seem a bit odd to use Doty et al. and Mintzberg in the same reference, it could be defensible. In this article, the authors use Mintzberg and Doty et al. as support when describing a concern among contingency theorists: “A prominent concern among contingency theorists has been to explore variables related to the strategy and structure of firms (e.g., Doty et al., 1993; Galbraith, 1977; Miles & Snow, 1978; Mintzberg, 1979)” (Zott & Amit, 2008, p. 2). Regardless, knowing that Doty et al. claim that Mintzberg’s theorizing is invalid, a comment from the authors on Doty et al.’s results would have been enlightening.

The Research Community; Citing Mintzberg and Doty et al. in Different Places in the Text Without Commenting on the Test

The last category of articles found in this review consists of 27 articles (Bezencon & Blili, 2009; Brown & Iverson, 2004; El Sawy, Malhotra, Park, & Pavlou, 2010; Ferratt, Agarwal, Brown, & Moore, 2005; Fiss, 2007, 2011; Gomez-Gras & Verdu-Jover, 2005; Gosain, Lee, & Kim, 2005; Greckhamer, Misangyi, Elms, & Lacey, 2008; Hill & Birkinshaw, 2008; Hult, Ketchen, Cavusgil, & Calantone, 2006; Jaspers & van den Ende, 2006; Lee, Miranda, & Kim, 2004; Lejeune & Yakova, 2005; Love, Priem, & Lumpkin, 2002; Mathiassen & Sorensen, 2008; Meijaard, Brand, & Mosselman, 2005; Naude, Henneberg, & Jiang, 2010; Nissen & Burton, 2011; O’Reilly & Finnegan, 2010; Payne, 2006; Raisch & Birkinshaw, 2008; Sandelin, 2008; Siggelkow & Rivkin, 2009; Smirnov, Dankiv, & Dankiv, 2009; Sun, Hsu, & Hwang, 2009; Visscher & Visscher-Voerman, 2010).

In these articles, the authors use Mintzberg in one or more parts of the text in support of their argumentation and, in other parts, use Doty et al. to support their argumentation, without commenting on the negative test. To illustrate this category, the first, last, and median articles, chronologically, will be used. In the first of these articles, Mintzberg’s configurations are drawn upon in the discussion part, in which Love et al. (2002) discuss the relevance of generalizing their results to other kinds of organizations. Doty et al. are used in a discussion of methodological considerations when using a “key respondent survey approach” (Love et al., 2002). Although Mintzberg and Doty et al. are used in different places, it would perhaps have been fruitful to comment on Doty et al.’s results.

In the median article, Raisch and Birkinshaw (2008) first use Mintzberg together with Miles and Snow (1978) and Meyer et al. (1993) to document that there is a long tradition of defining effective configurations and trying to distinguish them from less effective ones. In this context, it is worth noting that Meyer et al., the introductory article to the special issue on configuration theory in which Doty et al. was published, presents the results from Doty et al. Raisch and Birkinshaw use Doty et al. to document that different authors have argued that mixed strategies and structures lead to lower performance. Thus, it could be reasoned that Raisch and Birkinshaw use these references independently. However, Mintzberg and Doty et al. are referred to in the same section of the article, and they should thus probably be read as part of a related, consistent argument.

Finally, the last article, chronologically, in this review is by Nissen and Burton (2011), who, in their introduction, use Mintzberg as one of 13 different references to support the argument that “a myriad of empirical articles . . . have confirmed and reconfirmed that poor organizational fit degrades performance and many diverse organizational structures have been theorized to enhance fit” (p. 418). Interestingly, in a footnote to the quoted statement, the authors note that they recognize differences in meaning between organizational structure, form, configuration, and so forth, referring among others to Doty et al. However, it is not, at least not explicitly, acknowledged that Doty et al. do not reconfirm the argument about fit and performance in Mintzberg’s theory.

The Research Community; Snowballing a Phrase Found in Miller (1996)

The first article that was discussed in this review contains a reference to an article with the interesting title “Against Configuration: Miller, Mintzberg and McGillomania” (Miller, 1996). Following this path finally led to Donaldson’s (2001) book The Contingency Theory of Organizations, in which he claims that Doty et al.’s “findings challenge the whole typology of Mintzberg (1979) and those typologies derived from this typology” (p. 147). While Donaldson does not discuss the test of Mintzberg’s theory either, it is relevant to note that, according to Donaldson, there are problems with Doty et al.’s analysis of the Miles and Snow data, which lead Doty et al. to erroneously conclude that there is support for Miles and Snow’s theory. This is relevant because an important argument Doty et al. use to justify the validity of their method is that using the same method on the same sample resulted in support for one theory and not the other.

Somewhat surprisingly, Donaldson does not give any reason why he does not discuss Doty et al.’s analysis of their Mintzberg data. One explanation can be found in the tendency many of us have to seek information that conforms to what we expect to see (March & Heath, 1994). Donaldson (1996, 2001) is generally critical of the configurational approach—Donaldson was the source of the term McGillomania. Consequently, a negative test that conforms to his a priori assumption does not need any further scrutiny. Donaldson does not refer to any other empirical tests of Mintzberg’s theory.

Two rather surprising results stand out. First, none of the articles has suggested any revisions of Mintzberg’s configuration theory or discussed the methodological approach used by Doty et al. to test Mintzberg’s typology and theory. One article did contain an intriguing reference to Donaldson’s discussion of Doty et al., but Donaldson did not discuss the test of Mintzberg’s theory either. Second, Mintzberg’s name did not turn up on the list generated from Thomson Reuters Web of Knowledge. Thus, in the next section, research published by Mintzberg after 1993 will be searched for references to Doty et al.

Across the Table—Mintzberg’s Response to the “Null Result”

To cross-check whether Mintzberg has responded to the “null result,” 74 articles and 12 books listed on Mintzberg’s own Internet page were searched for references to Doty et al. (Mintzberg, 2011). No references to Doty et al. were found. As Mintzberg is a very productive researcher, it might be that he had forgotten to put some of his articles on the list. Thus, Thomson Reuters Web of Knowledge was searched for articles written by Mintzberg from 1993 onwards. This search returned an additional 15 articles, but no references to Doty et al. were found this time either. Finally, an email was sent to Mintzberg asking whether he had made any comments on Doty et al. Mintzberg confirmed that he had not, explaining that “They published in 1993 and all my writing on the subject happened before that” (personal communication, H. Mintzberg, February 9, 2012).

Even though Mintzberg has not responded to Doty et al.’s article, he has commented on anomalies and suggested implications for his theory. Due to the self-reflective nature of this comment, it is presented at some length. Mintzberg (1989) starts with a reference to Darwin’s distinction between two types of researchers: lumpers and splitters. Lumpers are those who categorize; splitters are those who claim the opposite: that nothing can be categorized and that styles vary indefinitely. Based on that distinction, Mintzberg presents a very interesting self-reflection:

For several years I worked as a lumper, seeking to identify types of organizations . . . I developed various “configurations” of organizations. My premise was that an effective organization “got it all together” . . . All of the anomalies I had encountered—all those nasty, well functioning organizations that refused to fit into one or another of my neat categories—suddenly became opportunities to think beyond configurations. I could become a splitter too. (pp. 254-255)

Mintzberg elaborates that many organizations seem to fit more or less naturally into one of his categories, but some do not. To meet the critique from splitters, Mintzberg (1989) has suggested that the configurations can also be thought of as forces pulling the organization in different directions, that is, the simple configuration represents a force for direction, the machine configuration a force for efficiency, the professional configuration a force for proficiency, the divisionalized configuration a force for concentration, and the adhocracy configuration a force for learning.

That said, it should be added that the perspective of configurations as forces seems to be quite clear in the original presentation in The Structuring of Organizations, in which Mintzberg states

the configurations represent a set of five forces that pull organizations in five different structural directions . . . Almost every organization experiences these five pulls; what structure it designs depends in large part on how strong each one is. (Mintzberg, 1979, pp. 469-472)

Doty et al. make reference to the publication in which Mintzberg presents this explicit reorientation. The perspective of configurations as forces is not integrated in their test, however. Thus, Doty et al.’s claim of invalidity only appears to be relevant to a lumped version of Mintzberg’s theory.

Discussion

One promising result was that, even though Mintzberg has not responded to Doty et al., the review of Mintzberg’s work showed that he has acknowledged anomalies and proposed an additional set of auxiliary hypotheses (i.e., perceiving the configurations as forces). Mintzberg’s own revision remains untested, however.

From the perspective of scientific development as an explicit cumulative and dialectic process, the result of this review was rather surprising. The 322 items of research that were reviewed in this study revealed no indications of attempts to correct Mintzberg’s theory based on the anomalies suggested by Doty et al. Neither did this review reveal any discussions of the methodology on which Doty et al. based their test of Mintzberg. Considered one by one, the authors of the articles discussed above may have had good reasons for not discussing the implications of the negative test of Mintzberg in more depth. Those reasons were not presented, however.

Consequently, unless the test was exhaustive and the lack of discussion can be interpreted as agreement with Doty et al.’s claim of invalidity, it will be difficult to draw a definite conclusion about the degree of consensus on the validity of Mintzberg’s configuration theory. However, the review identified research in which it was concluded that using Mintzberg’s configurational theory had proved fruitful. In addition, in a recent article, Mintzberg’s theory has been presented as a solid framework for analyzing organizations today (Groth, 2012), and it is still in use by Mintzberg (e.g., 2009) himself.

So, what now? Are Doty et al.’s findings just another example of research providing what Bailey (1992) labeled “so what results” (i.e., research that has produced reliable but insignificant results)? The question will be addressed from three perspectives. First, it will be discussed whether there are methodological reasons for challenging the exhaustiveness of the test. Then, from a sociological perspective, it will be discussed how the lack of debate should be understood. Should it be interpreted as tacit agreement with the claim of invalidity, or are there other reasonable explanations for the silence? Finally, it is one thing to describe where one is heading and try to explain why, but, from a value rational perspective, the most important question is perhaps to ask whether that development is desirable (Flyvbjerg, 2006). Therefore, from a normative perspective, it will be discussed whether the test should have stirred debate.

The Exhaustiveness of the Test

The most pressing question, at least from an applied perspective, is whether the test was exhaustive, that is, was this the crucial test that falsified Mintzberg’s theory once and for all? However, due to the subjective, intuitive space between axioms and an empirical test, it has been questioned whether a social theory should be rejected on the basis of an ultimate, crucial experiment (Alexander, 1982; Flyvbjerg, 2006), a challenge Miller (1996) has pointed to as an explicit difficulty when testing configurational theories in general, where the variables, operationalizations, and samples used have varied greatly.

That there might be room for interpretation is illustrated by Doty et al.’s (1993) discussion of the limitations of their own study: using perceptual measures from a single informant—the top manager of the organizations in the sample—to describe the structure of an organization, not measuring all components in Mintzberg’s theory, and using only two experts to make quantified profiles of the ideal typical configurations. The top manager’s perception might not be accurate (Mezias & Starbuck, 2003; Mintzberg, 1979), and including other variables could of course produce other results, and, as explicitly reflected upon by Doty et al., “Another group of organizational researchers might interpret Mintzberg’s typology differently and find different results from the current data” (p. 1224).

Another important aspect that Doty et al. do not discuss as a possible limitation is the dependent variable in the test, organizational effectiveness. As noted by Mintzberg (1989), organizational effectiveness is a complex variable to measure, and different stakeholders are likely to evaluate organizational performance in terms that benefit themselves (Scott, 2003). Having relied on the top managers’ perceptions, the question of how other stakeholders might have evaluated organizational performance remains open.

Doty et al. (1993) argue that finding support for another configuration theory (i.e., Miles and Snow), using the same methodology and the same informants in the same organizations, enhances the validity of their conclusion. It is difficult to see, however, how making a valid interpretation of one theory (i.e., Miles and Snow) is an argument for having made a valid interpretation of another theory (i.e., Mintzberg). In addition, Donaldson (2001) has pointed to problems in Doty et al.’s analysis of the Miles and Snow data.

There is also the question of whether measuring ideal types is consistent with the ideal typical approach (J. O. Jacobsen, 1992). Mintzberg (1979) explicitly writes that “Each configuration is a pure type (what Weber called an ‘ideal’ type)” (p. 304). Weber (1922/1971) was quite explicit that ideal types are not hypotheses; they are not portraits of reality. They should be used to develop unambiguous ways of expression, as a tool for developing hypotheses about reality. Consequently, it does not make sense to test whether an ideal type is true or false in the empirical world. It is more a case of an ideal type being more or less useful (J. O. Jacobsen, 1992).

In this context, it is useful to mention Donaldson’s (2001) claim that there seems to be some confusion in configuration theory. According to Donaldson, there is a distinction between treating organizational types as “cognitive configurations,” serving as mental models that can be used as a basis for evaluating organizations, guiding people’s orientation, and so forth, and the perspective Donaldson calls “existential configurations,” where it is implied that reality is actually composed of a limited number of types.

From a cognitive perspective, configurations will remain abstract; they will never be found as empirical objects. Such use of configurations is valid, Donaldson claims, and it does also seem to be consistent with Weber’s description of ideal typical use of concepts and Mintzberg’s description of how to use his configurations. However, Donaldson writes that, “As we have seen, existential configurations fail to explain the organizational world” (Donaldson, 2001, p. 152).

As Donaldson does not have any references to what or who “we have seen,” it is not immediately apparent whether he is referring to Mintzberg’s (and Miles and Snow’s) theory or to Doty et al.’s test of the theory, or both. However, based on Weber’s definition of ideal types, and Mintzberg’s emphasis that the configurations are ideal types—which Doty et al. do acknowledge—it seems that Doty et al. can be criticized for conflation when they claim that Mintzberg’s typology and theory are invalid (i.e., treating Mintzberg’s configurations as existential configurations when, according to Mintzberg, they are pure types, or, in Donaldson’s terminology, cognitive configurations). An example of this possible conflation is where Doty et al. (1993) write “the degree of deviation between each real organization and the ideal types is measured” (p. 1200). It would probably be more correct to claim that it is Doty et al.’s model that is invalidated. However, this review identified researchers who found Doty et al.’s method for testing theoretical ideal types appropriate (Gresov & Drazin, 1997; Sinha & Van de Ven, 2005).

In sum, there seems to be enough room for interpretation in relation to these few aspects to question the exhaustiveness of the test. Given the complexity of Mintzberg’s configuration theory, the subjective room for interpretation is possibly too large for one critical test to falsify the theory. Then there is the question of whether it is possible to test ideal types. In addition, Doty et al. have not tested Mintzberg’s split version of his theory in which the configurations are presented as forces pulling on the organization. That is not the same as arguing that the test should be ignored, however.

Rather, as Mintzberg (1989) has claimed, cherishing anomalies is the most important prescription for effective theory development: “Breakthroughs . . . come from anomalies that have been identified and held onto” (p. 253). Even though Mintzberg has commented on anomalies in relation to perceiving the configurations as exhaustive categories, after this review, it still seems relevant to ask how long the anomalies proposed by Doty et al. will be held on to.

Does Silence Imply Agreement?

How then, should the lack of debate be interpreted? Does the silence imply agreement or is it more probably an indication of ignorance? In a review of a book Mintzberg coauthored, Strategy Safari (Mintzberg, Ahlstrand, & Lampel, 1998), the reviewer claimed that a fault in the book was that configurations may have no practical use, adding, however, “In some businesses having a useless idea may be fatal, but in the management theory market it hardly matters” (Carney, 1998). From this rather uncomforting and defensive perspective, whether or not a test of Mintzberg’s theory turned out to be supportive of the theory is actually irrelevant. Thus, to gain a better understanding of why the test did not provoke debate, it seems fruitful to examine whether explanations might not be found in the sociology of the research community.

First, Doty et al.’s study is well within the positivist-inspired tradition of organizational research (i.e., collecting quantitative data to test hypotheses about a given theory’s predictive potential in relation to an objective reality). Given that emulating positivistic natural science is still the governing norm in organization science (Bort & Kieser, 2011), methodological unorthodoxy does not present itself as a very likely explanation for the lack of interest in discussing the negative result. The positivistic norm may not be the governing one, however.

In a recent study, Bort and Kieser (2011) challenged the view of researchers as being mainly concerned with solving problems identified by their peers to contribute to scientific progress. Perhaps not very surprisingly, Bort and Kieser claim that scientists are just like everyone else: Their attention is influenced by what is fashionable. One example they give of a subfield of organizational theory that is just not popular anymore is contingency theory, under which the configuration theory is categorized (D. I. Jacobsen & Thorsvik, 2002). Organizational design is another subfield of organization theory that embraces Mintzberg’s theory and that is said to be unfashionable (Miller, Greenwood, & Prakash, 2009). Thus, the lack of interest might be explained by the mere fact that it is unfashionable. Consequently, silence in this context means ignorance, not agreement.

One of the hypotheses that was supported in Bort and Kieser’s study was that concepts from authors with a high reputation will be referred to more often than concepts from authors with a lesser reputation. Mintzberg’s The Structuring of Organizations has been described by one author as a monument of classic research on organizational design (Nesheim, 2010). Tom Peters suggests that Mintzberg is “perhaps the world’s premier management thinker” (Mintzberg, 2009), and, according to Pollitt (2005), Mintzberg has been a particularly influential voice over the past 30 years. Thus, scientists could be expected to be quite eager to start working on an anomaly found in a premier thinker’s theory.

A classic explanation of why that expectation was not met and why silence may be an indication of something other than agreement can be found in Kuhn’s (1964) theory of paradigms, in which Kuhn describes how researchers working within an established research program are not willing to accept information that is not consistent with a prevailing theory. The term McGillomaniacs or McGillomania is probably meant to draw attention to this tendency among Mintzberg and his colleagues, who work at or have worked at McGill University. It hints at their unanimous promotion of the idea that a limited number of configurations will provide a valid representation of organizations (Miller, 1996; Mintzberg, Ahlstrand, & Lampel, 1998/2009). However, with his version of configurations as forces, Mintzberg has displayed a willingness to accept and implement anomalies. It is the larger community that does not seem to be interested. Still, it remains a nagging question whether Mintzberg would have something to lose if he were to cherish the anomaly presented by Doty et al.

A related explanation might be found in a Foucauldian-inspired power analysis in which attention is turned to knowledge that is subjugated (Bacchi, 2009), that is, Mintzberg’s popularity and influence might be one reason why the need for revision suggested by Doty et al. has not stirred debate in the larger community. Even though Doty et al. were awarded a prize for the article, it is still Mintzberg who is the influential one. Bort and Kieser (2011) further speculate that, due to the pressure to mass produce to successfully pursue an academic career, researchers might be more inclined to follow theoretical concepts rather than contribute to developing new ones (i.e., it is more effective to exploit than to explore). Hence, to an aspiring scientist, it might appear to be “smarter” to conform and produce work that supports, rather than challenges, an influential author’s theory. In this context, the task of developing suggestions for revising Mintzberg’s typology and theory could be associated with too much uncertainty. As Miller (1996) writes, there are no cookbooks for generating good typologies. Thus, a reasonable explanation for the silence might again be ignorance.

In sharp contrast to the description of the research community as being most interested in exploiting established theories stands McKinley’s (2010) observation that the current overriding goal in organization studies is the development of new theories at the expense of trying to establish a consensus on the validity of an existing theory by testing and replication before a new theory is proposed. If McKinley’s observation is correct, this could be one more argument for interpreting the lack of debate as a sign of ignorance rather than agreement.

Even though Bort and Kieser (2011) and McKinley (2010) seem to be in total disagreement as to whether organizational researchers primarily produce new theories or replicate old ones, they seem to agree on the tendency toward self-containedness, that is, what other researchers find or discuss is not automatically interesting. What seems to unite the positions of Bort and Kieser and McKinley is the concern raised by several authors about the lack of interest in engaging in cumulative research (e.g., Groth, 2012; Ketchen et al., 1997; McKinley, 2010; Miller, 1996; Miller et al., 2009; Nesheim, 2010; Short, Payne, & Ketchen, 2008). The lack indicated in this review is not a lack of an empirical test of (Mintzberg’s) theory, which seems to be an aspect of what Bort and Kieser (2011) call exploiting. What is lacking is the next step in the process of evaluating the validity status of the theory, reachieving a sense of intersubjective consensus after an identified anomaly, which seems to be an aspect of what Bort and Kieser call exploring.

An alternative to the positivistic norm of accumulation is the view that knowledge about organizations does not accumulate (Czarniawska, 2003) and that validity is achieved at the expense of the reliability on which the ambition of a stable, testable theoretical framework rests (Bailey, 1992). Perhaps the collective phenomenon labeled organization is simply too varied and complex to be measured (Weick, 1979), and the process of getting an organization into a reliable measurable form involves stripping it of what made it worth counting in the first place, that is, validity is sacrificed.

Clegg is an interesting example of a researcher who is skeptical about trying to establish a unified understanding of organizations by accumulating quantitative, questionnaire-based data. In a debate article, Clegg suggests that theories generated

in terms of a dream of completion, of unitarian understanding [of organizations] produce the nightmares of dead data crowding the page—frozen moments captured in average responses to survey items calibrated as scales, correlated with other dead traces of life lived elsewhere. (Schwarz et al., 2007, p. 304)

From such a position, even if it is probably somewhat overstated, it seems perfectly explainable that Doty et al.’s study did not stir much debate. Who would want to spend time on “dead data”?

However, Clegg does claim that there are no privileged grounds on which a scientist can falsify or confirm certain representations (Schwarz et al., 2007). Consequently, no theory, method, or test is in principle exhaustive and, to Clegg, it is important to continuously reflect on (i.e., reexamine) methodological and theoretical assumptions.

In sum, there are quite a few arguments indicating that there may be other reasonable explanations for the lack of debate than interpreting the silence as consensus among the research community on the invalidity of Mintzberg’s theory as a result of the negative test. In the next section, we will turn to the normative question of whether the publication of a negative test of Mintzberg’s theory should have stirred debate.

Should the Claim of Invalidity Have Stirred Debate?

As information is said to be found in differences, the point of departure for this section will be the epistemological position represented by Clegg, who in principle appears to be skeptical of the methodological approach used by Doty et al. In cooperation with Hardy, Clegg suggests that it is from active theoretical debate that we learn and not “through the recitation of a presumed uniformity, consensus, and unity” (Clegg & Hardy, 2006, p. 438).

To categorize Doty et al.’s study as research that recites a presumed uniformity and has thereby produced dead data would appear to be an injustice. Rather, it seems fair to claim that they approached the organizational phenomenon with an explicit a priori theoretical expectation, namely, to see whether the symbolic system synthesized by Mintzberg enabled them to map back to the empirical world. That attempt at mapping back identified possible tensions in Mintzberg’s theory. Thus, being explicit about theories, methods, and prior assumptions should provide a fruitful point of departure for discussion. To ignore the test seems to be a violation of the norm of reflection, regardless of the method used (Clegg & Hardy, 2006). However, what Doty et al. do not discuss is whether they managed to connect the experiences of those in the actual organizations with the concepts they operationalized; did they have a realistic content? From a reflective position, that question deserves debate, not ignorance (Schwarz et al., 2007).

Clegg and Hardy (2006) regard pluralism as an important aspect of theoretical development, but, as noted by McKinley, theoretical pluralism as a goal could have drawbacks. In an interesting discussion of pluralism and scientific progress, Knudsen (2003) warns against what he calls the fragmentation trap. A troubling thing about this trap is that it is a self-reinforcing situation in which new theories are proposed at a pace that puts severe limitations on the time the larger theoretical community needs to give each contribution the attention it deserves and to contribute thorough reflection (Knudsen, 2003). Thus, too much diversity seems to undermine one of Clegg and Hardy’s criteria for judging theoretical positions: thorough reflection and active debate. So, in arguing against unification, Clegg and Hardy seem to be very close to falling into the fragmentation trap. Knudsen further questions the argument that, the more pluralistic a theoretical community is, the more competition and the better possibilities for a scientific “breakthrough,” claiming that the validity of the argument has never been established.

However, there is a ditch to fall into on the other side of the road as well: what Knudsen (2003) calls the specialization trap. This trap is also presented as a self-reinforcing process, where the focus is turned inward to exploit and fine-tune existing research programs. And as exploitation is more efficient (i.e., yields faster and safer returns on known problems), it is less likely that scientists will take the chance of experimenting with new approaches. In Clegg and Hardy’s (2006) terminology, this would mean that researchers who have fallen into the specialization trap will be less interested in reflecting on the foundations of their research, which is consistent with Bort and Kieser’s (2011) observation about the tendency among researchers to exploit rather than explore.

The overarching dilemma when it comes to studying organizations is nicely put by Clegg:

Although we have no problem with recognizing the objectivity of organizations as a phenomenon that exists independently of those more or less theorized representations that we have of them, simultaneously, we know of them only through such representation. (Schwarz et al., 2007, p. 304)

To Clegg, this dilemma implies that knowledge must be applied in a practical and context-dependent way, premised on the Aristotelian virtue of phronesis (Schwarz et al., 2007). The scientist cannot rely on predescribed, generalized theories and methods, but must rely on his wisdom or practical rationality in an “inherently unlawlike way” when engaging with the organization in question. From this perspective, the real test of a theory should be in the situated, practical encounter with the individuals working in the organization or organizations in question. However, as discussed above, if “unlawlike” is interpreted as laissez-faire research, it could potentially end in the fragmentation trap.

Knudsen (2003) also seems to have found an Aristotelian-inspired solution to the challenge of theorizing about organizations. Knudsen has emphasized that the art of representing the representation of organizations lies in striking a balance between too much fragmentation and too much specialization. In contrast to Clegg, however, Knudsen explicitly claims that scientific progress is made when a new theory relieves the tension by clarifying the domain of application and proposing a new explanation or explanations. So, when Doty et al. present anomalies, this should have stirred debate to clarify the alleged tensions. However, it could be that it is those who produce research inspired by Mintzberg’s configurations—like Doty et al.—who are operating on a self-contained theoretical island.

In sum, even though they claim to adhere to different positions in the philosophy of science, Clegg and Hardy (2006; anti-Popperian) and Knudsen (2003; pro-Popperian) seem to agree that theoretical debates are a necessary ingredient in arriving at a valid representation of the organization phenomenon. One of the fundamental insights in organization theory is that people have bounded rationality (Simon, 1997). One possible approach to remedying this “boundedness” is to try to stand on each other’s shoulders by trying to find possible relationships between new and old theories. Thus, based on this triangulation, it seems reasonable to claim that the study presented by Doty et al. should have stirred debate.

Conclusion

Based on this discussion, it seems reasonable to doubt the exhaustiveness of Doty et al.’s claim that Mintzberg’s typology and theory are invalid. It also appears reasonable to doubt that the lack of debate should be interpreted as general agreement with Doty et al.’s claim. It is difficult, however, to make claims about the consensus on the validity of Mintzberg’s theory as no debate has been found that could form the basis for reaching a kind of intersubjective agreement.

Given the vast number of available theories and perspectives that can be used to analyze how organizations function (Cummings & Worley, 2009), it does not initially seem to be critical if one test of one of these theories questions the validity of that theory. To practicing managers, it could be as important to follow the norms of their institutional environment as to try to find theories that are valid according to organizational theorists. However, it is hard to escape the feeling that it would have been comforting to know that the theory you choose to use when entering the practical field had been scrutinized by other researchers, and that you were actually standing on the shoulders of giants. As Stablein (2006) remarks, the “we” is important in organizational research; social science is a social practice. If the ambition is to be an applied science, the tendency toward laissez-faire, or, to put it in more positive terms, to “let a thousand flowers bloom,” does not seem very productive if you prefer to use a “good theory,” that is, a theory where there is a consensus on its validity status (McKinley, 2010).

Thus, seen from an applied perspective today, it would have been fruitful if the study by Doty et al. published almost 20 years ago had inspired a cumulative engagement in arriving at a consensus on the validity of Mintzberg’s typology and theory. However, an important disclaimer is that Doty et al. may have stimulated research that was not discovered in the 322 pieces of research explored in this article. Donaldson (2001) is an example that could indicate that Doty et al. may have found resonance in research that was not found on Thomson Reuters Web of Knowledge. However, and following the Popperian logic of falsification (Gilje & Grimen, 1993), the articles found using Thomson Reuters Web of Knowledge have been checked. A possible next step in a cumulative process of establishing the degree of consensus on the validity status of Mintzberg’s configuration theory would be to extend the search.

Acknowledgements

The author wants to thank Steinar Askvik, Jan O. Jacobsen, Henry Mintzberg, and Paul G. Roness for helpful comments.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research and/or authorship of this article.