Abstract

Recent calls to improve the quality of education in schools have drawn attention to the importance of teachers’ preparation for work in classroom settings. Although the practicum has long been the traditional means for pre-service teachers to learn and practice classroom teaching, it does not always offer student teachers the time, safe practice experiences, repetition, or extensive feedback needed for them to gain adequate knowledge, skills, and confidence. Well-designed simulations can augment the practicum and address these gaps. This study evaluated the design of simSchool (v.1), an online simulation for pre-service teachers, using student teachers’ ratings of selected factors, including realism, appropriateness of content and curriculum, appropriateness for target users, and user interaction. Based on these ratings, the study identified strengths and weaknesses, and suggested improvements for the software. Participant ratings varied considerably but indicated that certain aspects of the simulation, such as its educational value, classroom challenges, and simulated student characteristics, were moderately well received. However, user interface navigation and the range and realism of simulated teacher–student interactions should be improved.

Teacher education programs must now train teachers to work in environments that will demand increasingly complex skills and knowledge, along with greater accountability and demonstrated teaching effectiveness. Practice teaching is a long-respected component of this training, allowing student teachers to build their classroom knowledge, skills, and confidence before taking full responsibility for classroom teaching (Arnett & Freeburg, 2008). However, providing an effective practicum experience is often an administrative and logistical challenge, demanding strong university–school partnerships, the integration of theory and practice, and, ideally, a broad range of teaching situations in the face of limited time and resources (Allen & Wright, 2013; Bradley & Kendall, 2014).

Simulation techniques have been used as training and feedback tools for many years in occupations such as medicine, aviation, military training, and large-scale investment where real-world practice is dangerous, costly, or difficult to organize (for example, see Drews & Backdash, 2013). In pre-service teacher education, classroom simulations can help pre-service teachers to translate their theoretical knowledge into action through repeated trials without harming vulnerable students, and they can provide more practice time and diversity than limited live practicum sessions (Carrington, Kervin, & Ferry, 2011; Hixon & So, 2009).

One such simulation is simSchool (www.simschool.org), designed to provide teaching skills practice in a simulated classroom with a variety of students, each with an individual personality and learning needs. simSchool has been shown in several studies to have potential as a practice and learning tool for pre-service teachers (Badiee & Kaufman, 2014; Christensen, Knezek, Tyler-Wood, & Gibson, 2011; Gibson, 2007). Although simSchool has been under development for more than 10 years (Gibson & Halverson, 2004), very little published research has addressed its design as an instructional tool. To address this gap, the current study evaluated the design of simSchool (v.1) from the perspective of its target users, pre-service teachers, providing both quantitative and qualitative evidence of its strengths, weaknesses, and areas for improvement.

Background

Research on student learning maintains that teachers are the most important school-related factor influencing student achievement (Edutopia, 2008). Teacher education programs train our teachers, providing initial and ongoing support, resources, and hands-on experience, to prepare them for their teaching careers.

These programs face at least two important challenges that call for a more sophisticated education process. On one hand, teachers need an ever-growing set of knowledge, skills, and attitudes to meet their responsibilities; on the other hand, faced with decreased funding, increased regulation, and growing competition for available teaching jobs, they must clearly demonstrate their competencies in enhancing students’ learning (Girod & Girod, 2008).

Teaching Practice and the Practicum

Classroom teaching practice provides most student teachers with their first experience in applying the knowledge and exercising the skills that they study. The practicum is intended to give pre-service teachers the opportunity to develop practical skills and knowledge, receive feedback from experts and professionals, and gain experience with students and the school environment that can directly help them to prepare for classroom teaching. Also, practicum experiences allow teacher candidates to learn and grow in protected settings (Girod & Girod, 2008). Therefore, field experiences are often identified as the most important aspect of teacher education programs (Arnett & Freeburg, 2008; Phillion, Miller, & Lehman, 2005).

However, the practicum is fraught with difficulties, including a lack of appropriate field placements, particularly for rural, special-needs, and rarely found conditions; shortages of host teachers willing to provide their time and expertise; host teachers’ poor teaching practices, particularly with special-needs students; limited opportunity for repeated practice; and poor integration with the university curriculum (Billingsley & Scheuermann, 2014; Howey, 1996; McPherson, Tyler-Wood, Mcenturff, & Peak, 2011; Wilson, Floden, & Ferrini-Mundy, 2001; Young, 1998). It is, therefore, important to consider ways to augment the traditional practicum to enhance both the quantity and quality of students’ pre-service teaching experience.

Simulation-Based Practice

Classroom simulations are starting to offer the possibility of enhancing the practicum by providing new opportunities for pre-service teachers to practice their skills. A simulation is a simplified but accurate, valid, and dynamic model of reality implemented as a system (Sauvé, Renaud, Kaufman, & Marquis, 2007). R. D. Duke (1980), the founder of simulation and gaming as a scientific discipline, noted that the meaning of “to simulate” stems from the Latin simulare, “to imitate,” and defined it as “a conscious endeavor to reproduce the central characteristics of a system in order to understand, experiment with, and/or predict the behavior of that system” (cited in Duke & Geurts, 2004, Section 1.5.2). Simulation involves play, exploration, and discovery, all elements of learning (Huizinga, 1938/1955). It has a long history in adult education, initially in the form of abstract representations using physical components such as paper and pencil or playing boards and, more recently, in many types of computer-based virtual environments (Ramsey, 2000).

Simulations are distinguished from games in that they do not involve explicit competition; instead of trying to “win,” simulation participants take on roles, try out actions, see the results, and try new actions without causing real-life harm. Simulations, when paired with reflection, offer the possibility of experiential learning (Dewey, 1938; Kolb, 1984; Lyons, 2012; Ulrich, 1997). Dieker, Rodriguez, Lignugaris/Kraft, Hynes, and Hughes (2014) pointed out that an effective simulation produces a sense of realism that leads the user to regard the simulated world as real in some sense:

These environments must provide a personalized experience that each teacher believes is real (i.e., the teacher “suspends his/her disbelief”). At the same time, the teacher must feel a sense of personal responsibility for improving his or her practice grounded in a process of critical self-reflection. (p. 22)

Suspension of disbelief and this sense of personal responsibility work together to engage the learner in the simulation process so that it becomes a “live” experience; feedback and reflection complete a cycle so that the learner can conceptualize and ultimately apply the new learning (Kolb, 1984).

Simulations have many advantages for teacher education, particularly now that new technologies support more realistic modeling of classrooms and students. McKeachie (1994) maintained that the main advantage of an effective educational simulation is that students are active participants rather than passive observers, and such a shift in roles motivates students. Simulations can provide a venue for practicing and refining the transfer to the classroom of newly learned theory and skills, based on experimentation, feedback from simulated students, reflection/debriefing, and repetition (Carrington et al., 2011; Crookall, 2010; Parente, 1995). In this way, failure becomes part of an ongoing learning process rather than a block to achievement (Carstens & Beck, 2005). The role-play aspect of classroom simulations supports students in taking on and practicing unfamiliar teaching roles, developing new self-efficacy and professional identity over time (Carrington et al., 2011; Gibson, Christensen, Tyler-Wood, & Knezek, 2011). In simulations, scenarios can be encountered that are ethically or logistically difficult to create in the real world, such as high-stress urban environments or mixed groups of special-needs students; pre-service teachers can begin to prepare for these before they experience them in real life (Dieker, Hynes, Stapleton, & Hughes, 2007; Dieker et al., 2014). With simulations, learners can make mistakes without harming actual students—an advantage that is particularly pronounced when training for work with difficult or special-needs learners (Dieker et al., 2014; Ferry et al., 2004). Finally, simulations using commonly available technologies offer a low-cost alternative to extending teaching practice time in the field.

The Importance of Simulation Design

Realizing a simulation’s potential in any learning situation depends on a range of factors, including its fidelity, usability, relationship to learning goals, and learning processes. As simulations have developed in sophistication and have become accepted teaching tools in business, medicine, and other disciplines, researchers and practitioners have come to agree on a broad set of design and implementation principles for effective learning support.

Drawing on experience in disciplines other than teaching, Dieker et al. (2014) identified three critical components in teaching simulations that affect learning of new behaviors. One is “a sense of real presence” so that the users in some sense “suspend disbelief,” engage with the simulated environment as real, and feel personal responsibility to improve their practice (Dede, 2009). Related but distinct is the concept of fidelity, the validity of the simulation model (the degree to which the simulation represents reality); fidelity ensures that learning from the simulation is valid and transfers to practice in real life (Alessi & Trollip, 2001).

Dieker et al. (2014) also highlighted the importance for simulation-based learning of a cyclical process of action, feedback and debriefing, and modified action. This is known in the military as ARC, or the Action Review Cycle (Holman, Devene, & Cady, 2007). A meta-analysis by Gegenfurtner, Quesada-Pallarès, and Knogler (2014) confirmed that feedback after simulation activity led to greater self-efficacy and skills transfer.

The third component, only partly realized in today’s simulations, is personalized learning through flexible environments that focus on assessing and teaching the specific new skills needed by the learner (Dieker et al., 2014). One aspect of personalized learning that is incorporated into some simulations is user control over levels of difficulty, which increases self-efficacy beliefs and skills transfer (Gegenfurtner et al., 2014). Gibbons, Fairweather, Anderson, and Merrill (1997) maintained that effective simulations should allow learners to change simulation parameters, repeat the experiment, and directly observe the consequences.

Perhaps the most comprehensive set of recommended simulation design features comes from the systematic review by Issenberg, McGaghie, Petrusa, Gordon, and Scalese (2005), which covered 34 years and 109 studies of high-fidelity medical simulations. This review identified 10 simulation features that facilitate effective learning (Table 1); the features are applicable outside the medical domain and have been recommended by many simulation experts and education researchers, including Aldrich (2004, 2005), Alessi and Trollip (2001), Duffy and Cunningham (2001), Ferry et al. (2005), and others.

Table 1. Simulation Conditions for Effective Learning.

Source. Summarized from Issenberg, McGaghie, Petrusa, Gordon, and Scalese (2005, p. 10).

Percentage of 109 reviewed articles reporting evidence of effectiveness.

The above design criteria, focusing on learning processes, are chiefly concerned with whether or not the simulation is effective in terms of producing defined learning outcomes for users. Usability, or the ease with which users are able to carry out tasks using the software, is not directly addressed but is a fundamental determinant of the user’s experience with the software. Usability is distinct from utility, or whether the software is capable of carrying out its intended tasks (Microsoft, 2000). Clearly, both are important, if implied, design criteria for an effective simulation.

The simSchool Classroom Simulation

simSchool (www.simschool.org) is a web-based classroom simulation designed to provide pre-service teachers with the opportunity to practice different classroom teaching skills. The player in simSchool chooses a grade between 7 and 12 and takes the role of a teacher responsible for teaching and managing a classroom of students. simSchool lets student teachers practice classroom teaching skills by analyzing student differences, adapting instruction to learners’ needs and characteristics, and getting feedback from the simulation on the results of their teaching actions and choices (simSchool, 2011). Each simStudent (simulated student) has a profile that includes information about personality, academics, and teacher’s reflections; these profiles are modeled on real student profiles in actual teachers’ records. The profiles include statements about the simStudent’s behavior and learning preferences. Each simStudent has an individual personality with settings on six dimensions: expected academic performance, openness to learning, conscientiousness toward tasks, extroversion or introversion, agreeableness, and emotional stability; settings range from very negative to very positive on each dimension, with about 20 different possible points on each of the six dimensions (Badiee & Kaufman, 2014; Christensen et al., 2011; Deale & Pastore, 2014; Gibson, 2007; Hettler, Gibson, Christensen, & Zibit, 2008).
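
The profile structure described above can be pictured with a minimal sketch. This is not simSchool's actual data model: the class name `SimStudentProfile`, the helper `snap`, and the numeric range (-1.0 to 1.0 in steps of 0.1, which gives 21 points and so roughly matches "about 20") are all assumptions made for illustration.

```python
from dataclasses import dataclass, fields

# Assumed scale: the article says only that each dimension runs from very
# negative to very positive with about 20 possible points. The range and
# step below are hypothetical.
SCALE_MIN, SCALE_MAX, SCALE_STEP = -1.0, 1.0, 0.1


def snap(value: float) -> float:
    """Clamp a setting to the scale and round it to the nearest step."""
    clamped = max(SCALE_MIN, min(SCALE_MAX, value))
    return round(round(clamped / SCALE_STEP) * SCALE_STEP, 1)


@dataclass
class SimStudentProfile:
    """Illustrative stand-in for a simStudent personality profile."""
    name: str
    expected_academic_performance: float = 0.0
    openness_to_learning: float = 0.0
    conscientiousness: float = 0.0
    extroversion: float = 0.0
    agreeableness: float = 0.0
    emotional_stability: float = 0.0

    def __post_init__(self) -> None:
        # Snap every numeric dimension onto the assumed scale.
        for f in fields(self):
            if f.name != "name":
                setattr(self, f.name, snap(getattr(self, f.name)))

    def dimensions(self) -> dict:
        """Return the six personality settings keyed by dimension name."""
        return {f.name: getattr(self, f.name)
                for f in fields(self) if f.name != "name"}


student = SimStudentProfile("Example student",
                            openness_to_learning=0.73,
                            agreeableness=-1.4)
print(student.dimensions())
```

In this sketch an out-of-range setting such as -1.4 is clamped to the lowest point on the scale, and intermediate values are rounded to the nearest step, so every profile stays on the same discrete grid.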

In the simSchool classroom, the player must select tasks and conversational exchanges that best fit the students’ needs, and simStudents respond with changes in their expressions and responses. The teacher’s choices in interaction with simStudents affect their academic outcomes and behaviors, and the player should make appropriate decisions to help students on their given learning tasks (Zibit & Gibson, 2005).

As the simulation runs, the player is required to make many choices about organizing the lesson, managing the classroom, and interacting with individual students. These issues have been identified as significant areas that underlie the quality of instruction for teachers (Nelson, 2002). Based on their simulation experience, student teachers can practice decision making and refine their classroom teaching strategies (Zibit & Gibson, 2005). simSchool is designed to support the user in developing expertise and thinking like a teacher. Success in the simulation comes through helping simStudents improve, both in their academic performance and their behavior. simSchool is intended to be used on an ongoing basis as part of the pre-service curriculum, with an instructor’s guidance (Deale & Pastore, 2014).

Recent studies have evaluated simSchool’s effectiveness for general teaching practice (Badiee & Kaufman, 2014; Deale & Pastore, 2014), for the development of student teachers’ self-efficacy (Christensen et al., 2011; Gibson et al., 2011), and for learning to work with diverse and special-needs student populations (McPherson et al., 2011; Rayner & Fluck, 2014). These have indicated a range of positive learning outcomes for pre-service teachers after simSchool use. The Rayner and Fluck study also captured, in qualitative comments, users’ general opinions about the simulation’s realism and ease of use. These questioned the simulation’s realism (particularly simulated student responses) and identified difficulties with the user interface (particularly the mechanics of task and response selection). The article also suggested that simSchool’s realism could be improved by extending its virtual classroom to include inter-student interactions and by improving the classroom’s visual realism.

Unlike the above studies, the research reported here considers the initial user experience with simSchool based on a series of brief introductory sessions. Rather than introducing the simulation as part of a formal teaching preparation program, this experiment studies initial user perceptions of and experiences with the software, identifying the key factors that support or inhibit its user acceptance, usability, and utility for augmenting the practicum experience.

Method

This research was done as part of a pilot study of the overall effectiveness of simSchool in a pre-service teacher education program in a mid-sized Western Canadian university. Results related to simSchool’s effectiveness for teacher preparation are presented in Badiee and Kaufman (2014) and are not addressed in this article.

Research Questions

The design evaluation, intended as a preliminary user-oriented assessment of simSchool’s usability and relevance, was guided by two broad questions:

The questions were addressed through a combination of quantitative and qualitative methods, as described below.

Participants

Twenty-two student teacher volunteers from a teacher education program at a Western Canadian university took part in the study. Their program is made up of a combination of professional coursework and practicum experience. Because they came from several class cohorts in the program, some participants had participated in a practicum, whereas others had not. For the purposes of this evaluation, the study did not distinguish among cohorts.

Experiment and Instrument

Because the study relied on busy volunteer participants, the experiment was conducted in a single session with breaks between brief experimental tasks. The experiment was conducted outside the education curriculum; in contrast, Rayner and Fluck’s (2014) study embedded the simulation sessions in the formal curriculum, was conducted over a longer period, and included formal training for the simulation facilitator. In this study, the facilitator relied on the simSchool manual and self-study to learn the software prior to the experiment.

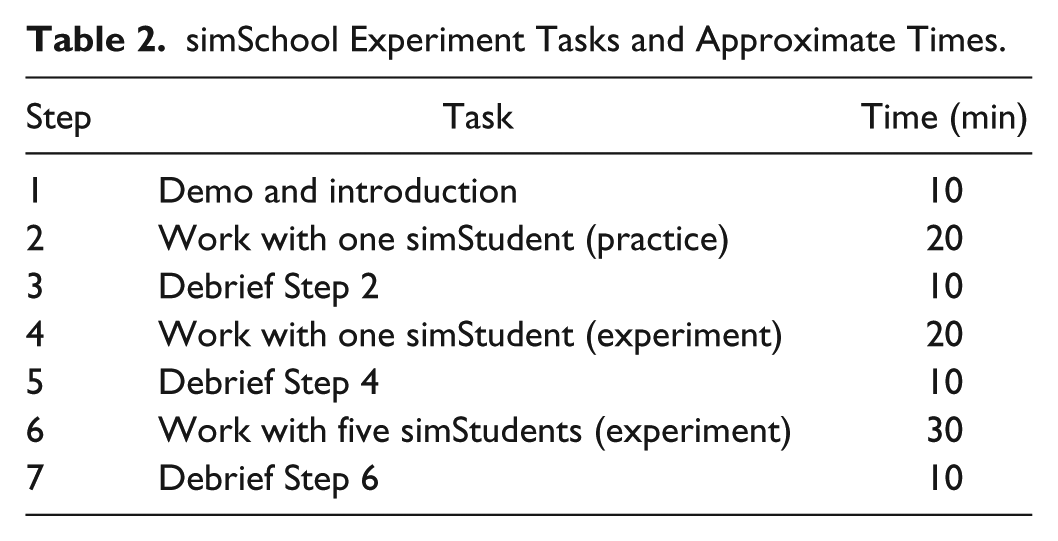

The experiment consisted of three sessions with simSchool Version 1. The first session was used simply for practice with one simStudent, and the research assistant circulated and assisted any student teachers who were unclear about what to do. Then, student teachers worked through the simulation “for real” with one simStudent and then with five simStudents. There was a debriefing step after each session. During the debriefings, participants received and were able to discuss their simSchool-generated performance results. The experimental tasks and assigned times are shown in Table 2.

Table 2. simSchool Experiment Tasks and Approximate Times.

Data related to simSchool’s design were collected from a post-experiment questionnaire based on standards of effective simulation design. The questionnaire used 5-point scales to rate the simulation’s realism and other features and included three open-ended questions about the simulation’s design. SPSS Version 21 was used to produce descriptive statistics (frequencies and percentages). Because of the small number of responses, thematic analysis for the open-ended questions was done manually using Microsoft Word.
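
The descriptive statistics named above (frequencies, percentages, and item means on a 5-point scale) are straightforward to reproduce outside SPSS. The sketch below is illustrative only: the function name `summarize` is hypothetical, and the ratings are made up for the example and are not the study's data.

```python
from collections import Counter
from statistics import mean

# Labels for the 5-point realism scale (1 = very unrealistic, 5 = very realistic).
LABELS = {1: "very unrealistic", 2: "unrealistic", 3: "unsure",
          4: "realistic", 5: "very realistic"}


def summarize(ratings):
    """Return n, the mean, per-category percentages, and the share rated 4 or 5."""
    n = len(ratings)
    counts = Counter(ratings)
    percentages = {LABELS[k]: round(100 * counts.get(k, 0) / n, 1) for k in LABELS}
    top_two = round(100 * (counts[4] + counts[5]) / n, 1)
    return {"n": n, "mean": round(mean(ratings), 2),
            "percentages": percentages, "top_two": top_two}


# Made-up ratings from 22 hypothetical respondents; NOT the study's data.
example = [5] * 3 + [4] * 12 + [3] * 4 + [2] * 2 + [1] * 1
summary = summarize(example)
print(summary)
```

For these made-up ratings, 15 of the 22 respondents fall in the top two categories, which is the kind of combined "realistic or very realistic" share reported in the Results.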

Results

Participant Backgrounds

Table 3 shows selected participant background characteristics. The great majority of participants (86.4%) were female. Two thirds rated their computer skills as intermediate. Less than a third (31.8%) had used computer-based simulations for education, but the majority (84.2%) had used computer-based simulations in another context. Following their simSchool use, less than one fifth (18.2%) of student teacher participants indicated that they planned to use what they had learned in the simulation in actual classrooms; however, almost three quarters (72.7%) responded that they were not sure whether they wanted to do so in the future.

Table 3. Selected Participant Background Characteristics.

Quantitative Ratings

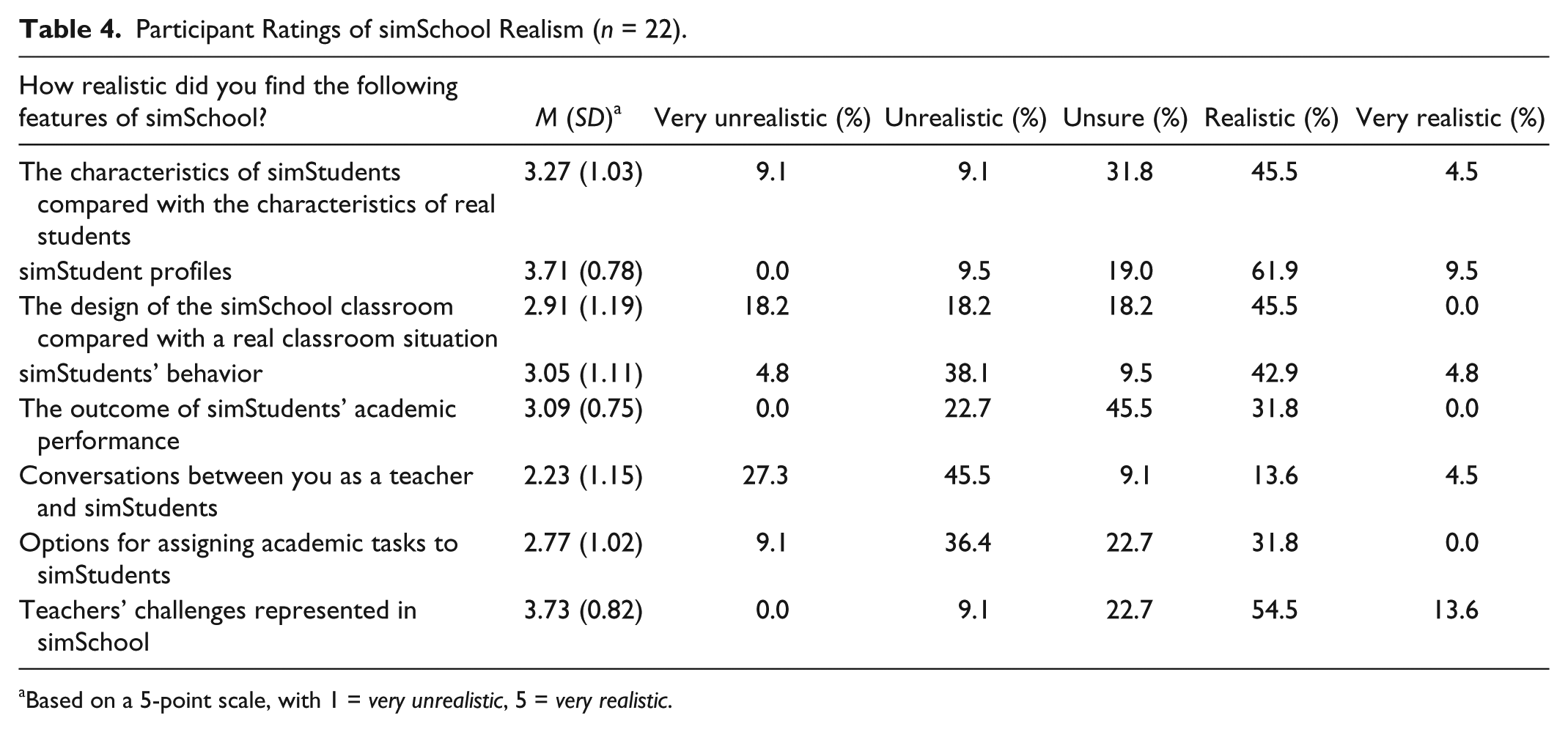

Participant ratings of simSchool’s realism (fidelity) are summarized in Table 4. Overall, ratings were moderate; the highest rated characteristic, “the challenges of a typical teacher in the classroom,” had a mean rating of 3.73 out of 5. Of the participants, 68.1% rated these challenges as “realistic” or “very realistic.” The realism of simStudent profiles was rated at a similar level, with a mean of 3.71; 71.4% rated the profiles as “realistic” or “very realistic.” “Characteristics of simSchool students compared to those of real students” received a mean rating of 3.27, with 50.0% of the participants rating these as “realistic” or “very realistic.” The lowest rated characteristic was “conversations between you as a teacher and simStudents,” with a mean of 2.23; 72.8% of the participants rated these simulated conversations as “unrealistic” or “very unrealistic.” The remaining three items were rated toward the midpoint of the rating range, with ratings spread across “unrealistic,” “unsure,” and “realistic.” “Options for assigning classroom tasks to simStudents” was rated 2.77 out of 5, with ratings somewhat evenly spread over the middle three rating categories. The remaining three characteristics (classroom design, simStudents’ behavior, and academic performance outcomes) were rated close to the scale midpoint of 3, indicating that participants were divided about these features and on average were unsure about their realism.

Table 4. Participant Ratings of simSchool Realism (n = 22).

Based on a 5-point scale, with 1 = very unrealistic, 5 = very realistic.

Tables 5 through 7 show participant ratings for other aspects of the simulation. Four items (clarity of purpose, educational value, concept coverage, and generalizability) were rated with respect to content and curriculum appropriateness (Table 5); the highest mean rating was for the simulation’s educational value (3.14 out of 5, with 3 = “good”). It is worth noting that 86.3% of the respondents rated this characteristic “good” or higher. The lowest rating (2.77) was for its generalizability. All four items were rated “good” (the midpoint of the scale) by the largest percentage of participants.

Table 5. Ratings of simSchool Content and Curriculum (n = 22).

Based on a 5-point scale, with 1 = very poor, 5 = excellent.

Table 6. Ratings of simSchool Appropriateness for Target Users (n = 22).

Based on a 5-point scale, with 1 = very poor, 5 = excellent.

Table 7. Ratings of simSchool User Interaction (n = 22).

Based on a 5-point scale, with 1 = very poor, 5 = excellent.

Table 6 shows participant ratings for the appropriateness of simSchool for its target users. All mean ratings in this group were below the scale midpoint of 3, between “poor” and “good,” reflecting users’ somewhat negative opinions. The items “It is motivational to use” and “I find it fun” received the highest mean ratings (2.77 and 2.73, respectively). Lowest rated were “It effectively stimulates my creativity” (M = 2.41) and “It matches with my previous experience” (M = 2.50). The item “It is flexible for different users,” which refers to an important criterion for effective simulations, received a “poor” or “very poor” rating by 50.0% of the participants.

When asked about simSchool’s user interaction (Table 7), participants gave a rating over 3 to only one item—the appropriate use of graphics, color, and sound (3.23 out of 5). Other items were rated less than 3.0 (“good”). Participants gave low to moderate ratings (Ms between 2 and 3, or “poor” and “good”) to other items about ease of use and about effective use of feedback and user control.

Finally, one additional item asking about the simulation’s freedom from racial, ethnic, and gender stereotypes received a relatively high mean rating of 3.55 out of 5.

Open-Ended Questions

Three open-ended questions gathered qualitative data from the 22 participants about their opinions and perceptions of simSchool. The following themes in their comments were relevant to the simulation design:

The variety of options for interaction and having conversation with simStudents (n = 5)

The variety in responses and attitudes of simStudents and the change and development of their academic performance (n = 5)

Simulation feedback in the form of interim and final results, spreadsheets and graphs, and/or student performance (n = 4)

Some participants appreciated the richness of certain aspects of the simulation, including the interactions with simStudents, the simStudents’ different attitudes, and the responsiveness of their academic achievement to the teacher’s decisions in the simulation. These are all key design features that contribute to simSchool’s effectiveness for pre-service teacher classroom practice. However, these features were only identified by small numbers of participants (four or five for each theme).

Inappropriate, limited, or unrealistic options for conversation and interaction with simStudents (n = 12)

Difficulty with the interface in navigating through the options for interaction and conversation with simStudents (n = 9)

simStudents’ responses to the chosen tasks/conversation options did not always seem to suit or make sense (n = 5)

A larger number of participants noted difficulties with the realism of the simulation’s conversation and interaction options (n = 12); some also criticized the plausibility of student responses (n = 5). Nine participants noted difficulties with the user interface.

Have a clearer, more user friendly, and ordered categorization of comments in the interface for navigation and interaction with simStudents (n = 9)

Allow more realistic options for a variety of interactions, conversations, and teaching styles (n = 6)

Allow users to create their original comments for interaction with simStudents (n = 5)

These suggestions are consistent with the weaknesses identified in response to Question 2 above.

Discussion

In general, participant ratings of simSchool varied widely and were moderate rather than highly positive. Regarding the simulation’s fidelity, respondents regarded as most realistic the classroom challenges experienced by the user as a simulated teacher, the simStudent profiles and learning characteristics, their simulated classroom behaviors, and their academic performance outcomes (although the last two only received ratings close to “good”). The realism of simulated conversations between simStudents and the teacher received a low rating, as did correspondence with users’ previous experience. These ratings were consistent with participants’ written comments, which identified “most liked” features as conversation and interaction options, variety in simStudent responses and attitudes, and changes in their academic performance in response to teacher actions.

Overall, ease of use and stimulation of user creativity received low ratings, and participants’ comments identified the “least liked” features as the user interface for conversation and interaction with simStudents, general navigation in the user interface, the realism of simStudents’ responses, and general ease of use.

These results suggest that users were not quite able to suspend their disbelief and enter fully into their roles as teachers, and that they were not able, given their short exposure to the simulation, to easily choose and carry out required tasks. Results of other studies (e.g., Christensen et al., 2011) suggest that using and believing the simulation might become easier given time and support for new users to become more familiar with the software and with how to respond to its underlying student models. Also, simSchool’s flexibility for different users, an important simulation design criterion, was rated “poor” or “very poor” by 50% of users, indicating that they were not aware of the software’s capabilities for defining multiple student learning needs and for changing the class size and learning requirements; this was probably also due to the short experimental time.

Ratings were above 3 (“good”) for the simulation’s clarity of purpose; the educational value of simSchool content; the appropriate use of graphics, color, and sound; and simSchool’s freedom from racial, ethnic, and gender stereotypes. These, together with the high proportion (86.3%) of participants rating “educational value” as “good” or higher, and positive comments on simSchool’s feedback, suggest that new users in the study recognized the simulation’s potential as a learning tool despite their initial difficulties.

These findings are valuable because they come from the reflection and feedback of student teachers—the main target users of simSchool. However, given the short times available for participants to practice and work with the simulation, they reflect first impressions about the simulation as well as frustrations that might have been mitigated with longer practice time and simulation sessions. For example, the effectiveness of feedback through student responses received a low rating, although the student teachers appreciated receiving feedback and being able to see and compare their performance results. It is worth noting here that in longer experiments, instructors worked with users to help them fully understand and learn from the system’s feedback, suggesting that limited debriefing time might have negatively affected user opinions about feedback.

Through low ratings of some aspects of the program and in written comments, participants argued for improvements in the conversation and interaction options between simStudents and teachers, as well as improvements in the user interface. These comments suggest that improving these aspects of the simulation could lessen initial user frustration and improve its overall effectiveness.

Conclusion

This study looked at users’ initial responses to simSchool based on limited training and brief simulation sessions. Although these initial perceptions and opinions might well change with increased exposure and instructor support, they indicate issues that need to be addressed to use the simulation effectively for pre-service teaching practice. These results are consistent with Rayner and Fluck’s (2014) observations in that both reflect the effects of participants’ limited time working with the simulation. Taken together, these two studies confirm that for effective training, the version of simSchool evaluated in this study requires longer periods of use and stronger instructor support than were provided in either experiment. The results reported in this article do suggest that addressing usability and fidelity issues could reduce these time and resource requirements, encouraging its wider use. Despite the moderate to low ratings, the student teacher participants in this study found overall that simSchool is an instructional program of educational value.

Study Limitations

This design evaluation was conducted within a short time frame. Due to participants’ extremely full schedules, they were only available for one simulation period, which limited the time available for them to practice with the simulation. This did not allow time for them to become comfortable with the user interface, to acclimate to the simulated teacher’s role and required behaviors, or to practice with a more realistic 18-student classroom. Finally, the sample of students involved in the study might be considered biased, because it involved willing volunteer student teachers (primarily female) rather than a randomly selected sample. Therefore, the generalizability of the results is limited.

Further Research

The results of this study suggest several areas for further design evaluation work, beginning with addressing the limitations identified above by providing a longer time frame and a larger participant sample to test whether the negative ratings in this study would lessen with more learning time. Evaluation of specific design criteria could provide more targeted feedback for simSchool developers. Some of these issues have been addressed in Version 2 of the software, so future studies will be conducted with this version.

Using a sample of participants at different stages in their teacher education program could help to evaluate how participants’ prior knowledge and experience affect simSchool’s perceived design strengths and weaknesses and whether practice with the simulation might affect whether or not student teachers plan to use simulations such as simSchool in the future. It would also be useful to evaluate, with teacher educators, the simulation’s content and curriculum appropriateness.

Footnotes

Authors’ Note

Farnaz Badiee is now at the Center for Teaching, Learning, and Technology, University of British Columbia.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research and/or authorship of this article.