Abstract

School curriculum change processes have traditionally been managed internally. However, in Queensland, Australia, as a response to the current high-stakes accountability regime, more and more principals are outsourcing this work to external change agents (ECAs). In 2009, one of the authors (a university lecturer and ECA) developed a curriculum change model (the Controlled Rapid Approach to Curriculum Change [CRACC]), specifically outlining the involvement of an ECA in the initiation phase of a school’s curriculum change process. The purpose of this article is to extend the CRACC model by unpacking the implementation phase, drawing on data from a pilot study of a single school. Interview responses revealed that during the implementation phase, teachers wanted to be kept informed of the wider educational context, use data to constantly track students, relate pedagogical practices to testing practices, share information between departments and professional levels, and own whole school performance. It is suggested that the findings would be transferable to other school settings and internal leadership of curriculum change. The article also strikes a chord of concern: Do the responses suggest that teachers operating under the current accountability regime live their professional lives within this corporate and globalized ideology whether they want to or not?

Introduction

Over the last decade, many nations have tied their educational systems to funding to establish a degree of accountability for invested public money (Lingard & McGregor, 2013). Education in Australia has been subjected to this escalating agenda of accountability measures by stakeholders, most notably governments. According to Lingard and McGregor (2013), these measures have led to a realigning of the curriculum to focus on literacy and numeracy and other market-oriented reforms. In Australia, nation-wide high-stakes testing is a relatively recent phenomenon compared with other Western and newly industrializing nations. Introduced in 2008, literacy and numeracy tests (commonly referred to as the National Assessment Program – Literacy and Numeracy [NAPLAN]) are mandatory in all Australian states and territories, where Years 3, 5, 7, and 9 are tested annually. In addition to justifying government funding allocations, national high-stakes test data are used to compare schools’ and states’ educational performance. In this marketplace mentality, governments increasingly expect positive returns for their funding outlay in terms of what students know and can demonstrate as a result of their learning (Corson, 2002). Inevitably, this has put pressure on schools to produce publicly acceptable test data.

As well as national testing in the primary and lower secondary schools, Australian states conduct their own Year 12 exit testing programs. In Queensland, this examination is known as the Queensland Core Skills (QCS) Test and is undertaken by final year students from both independent and state schools. The QCS is based on the Common Curriculum Elements (CCEs) underpinning the senior school syllabuses. Results from this test are combined with school-based assessment data to give students their exit results. The mean of the combined students’ exit results (known as the school result) is released to the public through the major statewide media outlet. This practice carries high-stakes consequences for schools as even a minimal risk of atypically poor annual academic results could have negative effects on the most stable institutions (Kasperson et al., 1988).

One risk management strategy or market-oriented reform is mirroring part of the school’s curriculum to the requirements of the high-stakes test. By using such a strategy, some principals are demanding immediate and focused changes to their schools’ curricula (Smeed, 2010).

According to some writers such as Fullan (2005), Fullan and Hargreaves (1991), and Gross, Giacquinta, and Bernstein (1971), attempts to change school curricula have historically met with limited success. Wetton (2010) specifically notes that one of the reasons that past reforms failed is because of the actual change process. Prior to heightened accountability regimes, curriculum change processes in schools tended to be slow, collaborative, cumbersome, activities-centered, and managed in-house (Brady & Kennedy, 2003). In contrast to this, the Controlled Rapid Approach to Curriculum Change (CRACC) developed by one of the authors of this article (Smeed, 2008) promoted change that was externally facilitated, tightly focused, results-driven (Schaffer & Thomson, 1992), and timely in implementation. It outlined specific practices that needed to be considered for controlled rapid change in the initiation phase (Fullan, 2007) of a curriculum change process. The streamlined model (Smeed, 2008) allowed the completion of the initiation phase in 10% of the allocated project time, leaving 90% for the implementation phase. However, it is often at this transition point from initiation to implementation that change processes flounder (Fullan, 2007). To counteract this, the authors thought it timely to revisit and reexamine the model giving consideration to the essential practices of the implementation phase of a change process, specifically one in response to high-stakes testing accountability and led by an external change agent (ECA). The authors acknowledge that such a change process responds to a neoliberal agenda and may leave some readers feeling uneasy as a “teach to the test” approach springs to mind. However, this is neither the practice nor the intention. Rather, what is being posited is using the neoliberal paradigm, in particular, data from high-stakes testing, in a focused, results-driven change process. 
Alternative change processes that are more activities-based (see Pertuzé, Calder, Greitzer, & Lucas, 2010; Schaffer & Thomson, 1992) may achieve success, but this is not the context here, and the writers make no apology for the stance taken. With these caveats in mind, this article outlines the required practices for the implementation phase as spoken by the participants, by addressing single case study research findings from a school that undertook a CRACC in recent years. In developing the extended model, the authors critically reflect on whether teachers embrace curriculum change or have become reform weary (Mansell, 2012), feeling forced to accommodate the accountability agenda whether they want to or not.

The opening gambit of this article provides a discussion on high-stakes testing and how this accountability regime can drive curriculum change. This is followed by a detailed outline of the CRACC model originally developed by Smeed (2008). Current literature relevant to the implementation phase of curriculum change is then considered. The research methodology is explicated, including limitations, before findings from semistructured interviews are critically examined to extend the CRACC model, detailing the implementation phase.

High-Stakes Testing and Curriculum Change

Even though high-stakes tests in schools tend to be standardized, they do differ greatly depending on their focus. For example, in Queensland, some high-stakes tests are skills-based, whereas others test knowledge. An important point about high-stakes tests is not so much what is tested but that the results are used to make significant educational decisions such as funding allocations, student movement through year levels, teacher competency, student enrollment, enrollment screening, rewarding and sanctioning of institutions, and narrowing and targeting of specific aspects of the curriculum (Amrein & Berliner, 2002; Berliner, 2009; Greene, Winters, & Forster, 2003). O’Neill (2013) labels these practices as “second order ways of using [assessment] evidence” (p. 4). In contrast, first-order ways relate to how teachers use assessment data to make judgments about what students do or do not know. She argues that using assessment data for second-order reasons not directly related to learning is questionable. Regardless of this, governments worldwide are increasingly monitoring accountability (Flores, 2005; Fullan, 2001; Kostogriz, 2012) as it relates to the curriculum through student and school performances in standardized tests, which are often high stakes (Amrein & Berliner, 2002).

The literature on the use of high-stakes testing to drive curriculum change is divided, albeit in a one-sided debate. Critics of high-stakes testing highlight the following themes about their use: a narrowing of the curriculum, performance- rather than learning-oriented schools, increased drop-out rates, increased teaching to the test, lowering of teacher morale and defection from the profession, promoting cultural biases, increased teacher and student stress, increased pressure to cheat, negative and discriminating effects on life chances particularly for minorities, and the marginalization of subjects that are not explicitly tested, such as the humanities and physical education (Amrein & Berliner, 2002; Dweck, 1999; Ingersoll, 2003; Lingard, 2010; Mathers & King, 2001; Parkay, 2006). Parkay (2006), commenting on schools in the United States, takes this even further, suggesting that standards are in fact lowered as districts downgrade benchmarks to attract more funding.

However, proponents of high-stakes standardized testing purport that such tests reduce educational inequality, increase objectivity in assessment, increase accountability, allow funding to be directed where needed, and ensure consistent comparison between international education systems (Dreher, 2012). An example where the use of high-stakes test data is seen as helpful is Greene et al.’s (2003) work on the State of Florida’s testing program. Greene et al. compare results from high- and low-stakes (school-based) testing and find that improved performance in high-stakes testing does translate into improved performance in low-stakes testing. However, many, such as Amrein and Berliner (2002), argue that there are no clearly identified links between these tests and increased student learning performance.

Regardless of what side of the debate you straddle, it is clear that high-stakes testing is a global phenomenon placing ever increasing pressure on schools (Fullan, 2001) demanding considerable time and energy in schools’ curriculum agendas (Pinar, 2004). How these pressures are responded to at an individual and school level varies according to the personnel and the context. One such strategy has been mirroring the curriculum to the demands of the test with the change brought about by an ECA. The CRACC model underpinned by Schaffer and Thomson’s (1998) results-driven improvement program relies on an incremental approach to change building on what works and discarding what does not. Targets are set through the various stages, and measurable goals are achieved. This model is similar to the Program Logic Model (PLM; Cooksy, Gill, & Kelly, 2001; Dwyer & Makin, 1997) used in Victoria, Australia, to expand teachers’ pedagogical knowledge. The PLM provided a framework to measure outputs against predetermined goals where ECAs worked with students and teachers. The CRACC model comes from a similar mind-set, the framework outlining time, stages, and involvement in curriculum change, and is now outlined in detail.

The CRACC Model

Traditionally, curriculum change models in education have been activities-centered (Smeed, 2008). Such models concentrate on activity, rather than results or impact. Teachers are continually bombarded with change programs for improvements in areas such as literacy and numeracy; however, according to Pertuzé et al. (2010), change processes often focus on the program and not on the results. Writers such as Fullan (2005) and Gross et al. (1971) suggest that utilizing such an approach leads to inevitable failure, and the school naively moves on to a new activities-based model.

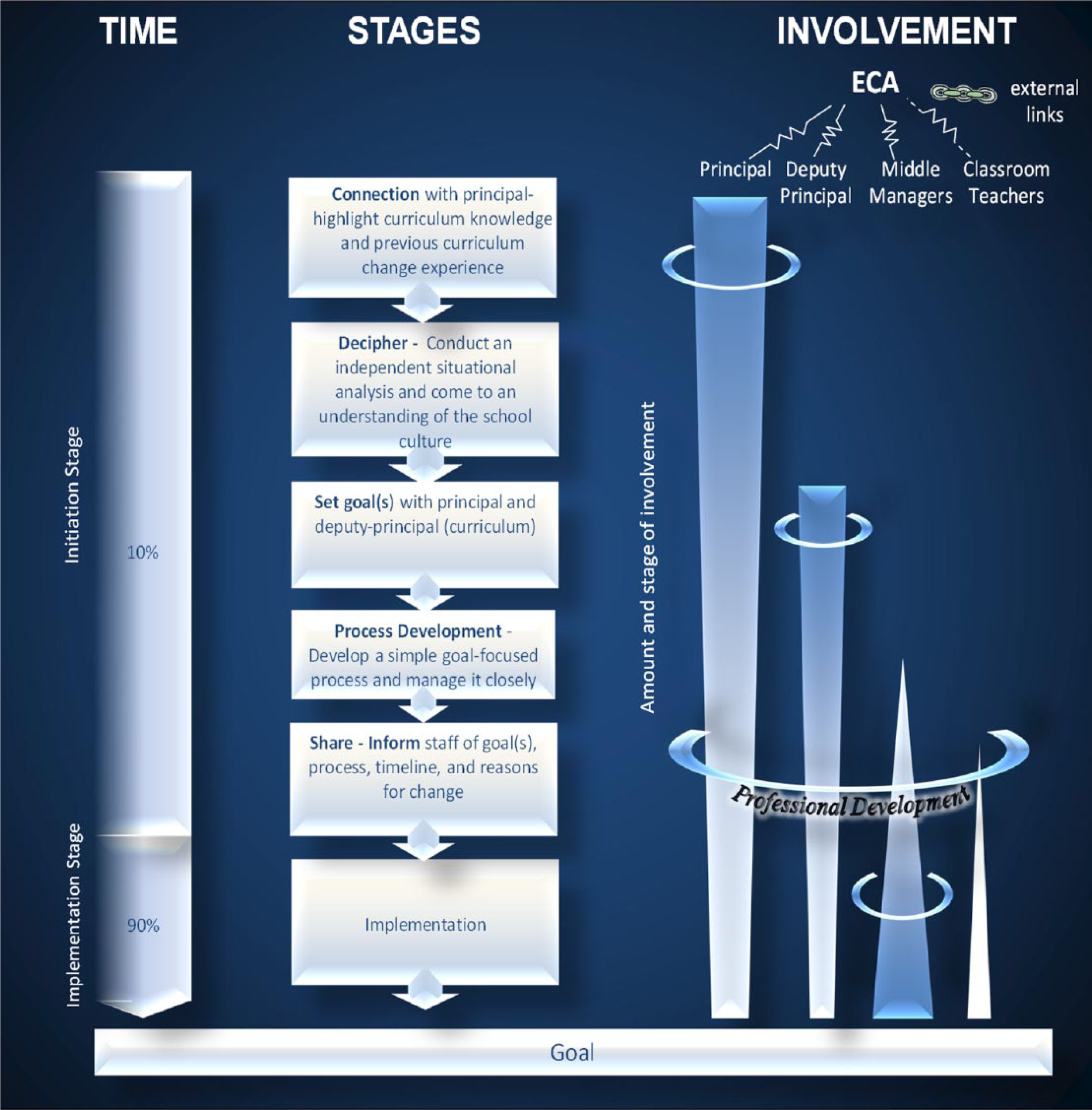

Alternatively, the CRACC model (Smeed, 2008) was designed to resemble change models more closely aligned with the corporate world (Beer & Nohria, 2000; Bennis, Benne, & Chin, 1961; Schaffer & Thomson, 1992), that is, results-driven. Schaffer and Thomson (1992) conducted extensive research in the corporate world and claimed that managers often falsely assume that results will materialize if activities-centered programs are initiated. Their research illustrated that change that is not results-driven rarely leads to improvements. With these thoughts in mind, the CRACC model (Smeed, 2008) for curriculum change in education was developed and is shown in Figure 1.

Figure 1. CRACC model.

The model is divided into three facets, namely, “time,” “stages,” and “involvement.” As the name suggests, the “time” facet details the amount of time allocated to initiation and implementation during the curriculum change. The “stages” facet details sequential practices or activities, and “involvement” indicates which members of the teaching team are involved and their relative degree of involvement. These three facets are given further consideration below.

Time

In the current climate of accountability, school principals cannot afford to let a change process meander aimlessly. This pressure on school leaders was given serious attention in the development of the original CRACC model (Smeed, 2008) and is discussed in further detail in Smeed’s later work (Smeed, 2010). In reality, ECA-facilitated change processes are restricted by time as well as budget limitations (Fullan, 2007), so one of the key principles underpinning the original model was the development of an appropriate timeline. The model’s timeline was divided into two phases—initiation and implementation—terminology borrowed from Fullan (2007). These phases were represented sequentially following suggestions by Rogers (1983) where the initiation phase was considered to be a process through which an individual, or another decision-making unit or organization (such as a school), must initially pass. As the transition between initiation and implementation is where curriculum change processes can often falter (Fullan, 2007), the CRACC model recommended quick movement through initiation with contact mainly limited to the principal and deputy principal. This enabled the energies of middle-management and classroom teachers to be preserved for implementation.

Stages

The CRACC model outlined sequentially what the ECA had to achieve within the initiation phase. The ECA was required to (a) connect with the principal (connection), (b) conduct an independent situational analysis (decipher), (c) set goal(s), (d) outline the development of the process, and (e) share information with staff (inform). These five stages are further elaborated.

Stage 1: Connection

This stage highlighted the importance of the initial connection with the principal. In essence, an externally led curriculum change process does not occur without the principal’s support of the ECA and the process itself. For principals, the ECA’s knowledge and track record in leading curriculum change were crucial. Once the principal was comfortable with the external person, the ECA then moved to deciphering the situation or the situational analysis.

Stage 2: Decipher

The deciphering stage allowed the ECA to make independent judgments about the school’s current context. Prior to the construction of the CRACC model, the ECA had always relied on the perceptions of the principal to gain an understanding of the schools’ needs. In conducting the relevant research for the CRACC model’s development, it became clear that the ECA should seek information from wider sources. In response to this, the deciphering stage of initiation in the CRACC model involved conducting an independent situational analysis that incorporated the voices of more than just the school leader.

Stage 3: Goal setting

In line with the thoughts of Hall and Hord (1987), any long-term change success needs support from the leader. Therefore, the goal of the change process had to be one that the principal was committed to. In addition, the “goal setting” stage of the CRACC model also assisted in driving the process. The ECA had to bring about the desired changes quickly, so it was important that she or he knew exactly what she or he had to achieve. This goal setting stage involved the ECA in consultation with the principal, or principal and deputy principal only.

Stage 4: Process development

In the original research undertaken to develop the CRACC model, the participants strongly articulated the desire for a simple process. By this, they meant that it was easy to follow and that the requirements were specific. Therefore, the ECA developed a process that was easy for participants to follow and could be overseen closely by the external person. Once this process had the principal’s support, it was then shared with staff.

Stage 5: Share

The CRACC model recommended that staff should be informed about the reasons for change, as well as the goal(s), the process, and the timeline, but not necessarily until the final stage of initiation. This is contrary to advice from various writers and academics (Brady & Kennedy, 2003; Luke, 2007) who suggest that decisions should be made in collaboration with staff. However, findings from the development of the original model refuted this claim. Some teachers went as far as to say that they just wanted to be told what to do. This could be seen as managerial professionalism (Sachs, 2001), but as the findings here reveal, the term compliance professionalism (Bourke & Smeed, 2010) is more fitting. The compliant professional works with the objectives of external accountability regimes, but does so in a way that provides space or “wriggle room” (Hoyle & Wallace, 2009) for their own professional judgment and practice. The teachers in the original study did not mind who set the goal, but did want to know what the goal was and, more importantly, how it would be accomplished. Therefore, the CRACC model responded to this request by limiting the involvement of middle managers and classroom teachers until the sharing stage of the process. Their only involvement prior to this was voicing their opinions to assist the ECA in deciphering the situation (Stage 2).

Involvement

During the change process, the ECA worked with different professional levels of the school hierarchy, moving from one level to the next. By “stepping” the involvement of the ECA, the process could be closely managed and controlled. As the ECA moved from one professional level to the next, her time with the previous level decreased. In Figure 1, the CRACC model depicts the amount of time spent with each professional level by a widening or narrowing of the arrows and heavier shading where the main involvement occurred. Figure 1 also shows that the first contact was with the principal. After the initial meeting, the deputy principal was invited into the process. The middle managers were then introduced just before the implementation phase. Finally, the classroom teachers joined their leaders and managers.

This time spent working with the ECA was considered professional development. Desimone (2009) points out that the key characteristics of effective reform-oriented professional development include a focus on content, active learning, coherence, duration, and collective participation. This implementation project concurs with Desimone’s incorporation of such key characteristics. Furthermore, it concurs with Hattie’s (2009) findings that teachers are the most valuable resource in a school. Therefore, their professional development as part of the process was crucial. However, not all change processes are straightforward; there is always a degree of resistance. This is illustrated by the jagged lines as shown in Figure 1.

The details of the initiation phase of the original CRACC model have been outlined. Some may see this as a top-down approach to change. However, the authors argue that rather than top-down, the focus is results-driven (Schaffer & Thomson, 1992) and based on the use of evidence or data gathered from the ECA working collaboratively with various levels across the school at specific times (see “Involvement” section). Over 20 years ago and with little contradictory evidence in the academic literature since, commentators such as Basom and Crandall (1991) and Print (1993) identified top-down approaches as barriers to change because they forced people to comply and sanctioned those who did not. Print maintains that under such conditions, participants had little ownership of, or motivation to ensure, the success of the change. The CRACC model counteracts these criticisms by identifying the needs based on data and working collaboratively with all stakeholders during the situational analysis.

As already mentioned, curriculum change processes often fail between initiation and implementation, so this article’s purpose is to elaborate and expand the CRACC model to incorporate details for the implementation phase of a curriculum change process led by an ECA in response to high-stakes testing accountability. The article also critically analyzes whether Basom and Crandall’s (1991) and Print’s (1993) criticisms apply to change in this marketplace mentality. Figure 2 provides a graphic explanation of the purpose of this research.

Figure 2. Research position.

Implementation

The implementation phase follows on sequentially and immediately from initiation. Fullan (2007) states that this phase “consists of the process of putting into practice an idea, program, or set of activities and structures new to the people attempting or expected to change” (p. 84).

During this phase, participants are asked to think and act differently. Lewin (1951) refers to this as “cognitive restructuring.” It is at the point of implementation that resources and knowledge are reorganized to give new meaning to what is happening on the ground.

Harris (2001) claims that the implementation phase should start after a decision to undertake change is made. However, the CRACC model argues that the decision to undertake change is made by the principal at the beginning of the initiation phase, and therefore, the implementation stage follows sequentially without the need for further decision making at this point in time. In addition, some authors (Cothran, 2001; Visser, 2004) posit that for implementation to begin, those in the organization need to develop the understanding gained in the initiation phase to the point where they feel comfortable to work actively toward the change. However, once again, the CRACC model challenges this notion in that the teachers are informed of the change process at the end of the initiation stage. The research for the development of the CRACC model strongly indicated that apart from having their say in the deciphering phase, teachers did not want to be involved with the initial development. They just wanted explicit direction and strong leadership.

According to Fullan and Stiegelbauer (1991), once the implementation phase is underway, the success or failure of the project relies upon the type and amount of quality assistance that is provided for the participants. Various writers suggest that this assistance should be in the areas of skill development, resources, motivation (Darkenwald & Merriam, 1982), encouragement and problem solving, conflict resolution, and day-to-day support (Booz Allen Hamilton, 2004; Fullan & Stiegelbauer, 1991; Huberman & Miles, 1984; Visser, 2004). In addition, other authors postulate that during the implementation phase, continued education and training are also essential (Fullan, 1992; Louis & Miles, 1990).

Fullan (2007) stresses the importance of clarity during and throughout the period of implementation, stating that a lack of such can “represent a major problem” (p. 89). He maintains that quite often teachers and others find that the proposed changes are “not very clear . . . in practice” (Fullan, 2007, p. 89). He also warns about “false clarity” (Fullan, 2007) which he maintains can occur if the people involved perceive and interpret the change process in an oversimplified manner. Therefore, care must be taken so that the change process provides teachers with clear, specific details about what is required, and that changes are not implemented on a superficial basis (Fullan, 2007). With clear expectations, teachers are more likely to own the process (Smith & Lovat, 2003).

The length of any implementation period varies depending on the project and the setting. According to Harris (2001), maintaining momentum has been identified as an inherent problem in this phase. Fullan and Stiegelbauer (1991) concur, stating that momentum is sometimes lost as the initial excitement wears off and the hard work and reality of making the change begins.

This research draws on the findings of a single case in a recent curriculum change process to outline essential practices within the implementation phase similar to what had already been developed for the initiation phase of the CRACC model. Hence, the extension and elaboration of the implementation phase of the CRACC model are needed to further chances of curriculum change success.

Method

A qualitative case study approach was chosen for this study to answer the following question: What essential practices are needed for the implementation phase of a curriculum change process in times of high-stakes accountability? According to Freebody (2003), “case studies focus on one particular instance of educational experience and attempt to gain theoretical and professional insights from a full documentation of that instance” (p. 81). He says, in its most general form, the goal of a case study is “an inquiry in which both researchers and educators can reflect upon particular instances of educational practice” (Freebody, 2003, p. 81); here, the practices useful in a curriculum change process in response to high-stakes testing. The boundaries are set by the school, thus meeting Merriam’s (1998) requirement that unless the intended phenomenon is bounded, it is not a case study. As well as having a strong sense of place, this study also has a strong temporal dimension. Yin (1994) maintains that a case study is an empirical inquiry that investigates a contemporary phenomenon within its real-life context. Changing curriculum in response to high-stakes testing is currently a global phenomenon, and as Hamel, Dufour, and Fortin (1993) point out, these local cases can be a reflection of a much larger global educational force. The study gives voice to the participants (Hatch, 2002), is based on a contemporary phenomenon (Yin, 1994), and focuses on a particular educational instance (Freebody, 2003), thus justifying the case study approach.

Two of the authors were employed as ECAs by the case study school in Queensland, Australia, with the aim of changing the curriculum in the school to meet the demands of high-stakes testing. The school was a large state-owned, preschool to Year 12 college on the urban fringe of Brisbane, the capital city of Queensland. When the research was conducted, the school had only been open for around 10 years with an enrollment of approximately 3,000. In Australia, all schools are scaled according to socioeducational advantage, which is based on socioeconomic data, parent qualification data, percentage of indigenous and percentage of language background other than English students, and, finally, whether the school is metropolitan, rural, or remote. This particular school services a predominantly White, middle-class clientele and could be classified as slightly socioeducationally advantaged. The college is owned by the state government, but the day-to-day management is the responsibility of the executive principal (EP).

It should be noted that this school was not one of the schools involved in the development of the original CRACC model in 2008. The EP, like many other school leaders, had the unenviable task of improving student and school performances within a relatively short time frame, so there was a degree of urgency for curriculum change to accommodate the performance agenda. This is a common reality for principals who lead schools within systems that prioritize improvement in performance, and the state school system in Australia has been overtly focusing on an improvement agenda since 2007 (Lingard & McGregor, 2013). He addressed this challenge by inviting an external team to facilitate a curriculum change process using the CRACC model. Prior to this, attempts to change the school’s curriculum had been facilitated “in house” and led by a member of the school’s leadership team.

The majority of schools in Queensland follow the syllabuses of the Queensland Curriculum and Assessment Authority (QCAA), formerly known as the Queensland Studies Authority (QSA), and the college in this study is no exception. The QCAA is a statutory body of the Queensland Government, responsible for the provision of a range of curriculum services and materials relating to syllabuses, testing, assessment, moderation, certification, accreditation, vocational education, tertiary entrance, and research.

Six months into the 12-month project, the EP was promoted to a new position. The new appointee quickly became engaged with the project and conducted interviews with staff involved, and it is these data that this article draws on.

Interviews were conducted with nine staff members who will be identified as A, B, C, and so on. In Queensland, the people responsible for managing school curriculum are heads of departments (HODs) or middle managers. Five of the nine people interviewed in this research came from this level. They worked extensively with the ECAs, evaluating data and implementing programs based on these data. Of the other four people interviewed, three were deputy principals and one was the principal of one of the school’s three subschools.

Interviews took place at the college over a 4-week period. This qualitative method featuring semistructured interviews was undertaken by the new EP. As the interviews took place within the first 2 weeks of her taking up the position, she had no ownership of the project and was therefore attempting to come to an understanding of the program without biases. Interviews were 60 min long and conducted on a one-on-one basis. They were recorded and then transcribed verbatim. Transcripts were read by three different researchers who worked independently to identify recurrent themes revealing the practices and activities that the teachers found useful in the curriculum change process. The findings were then used to detail the implementation phase of an externally facilitated curriculum change process.

As previously stated, interviews were conducted by the new EP who is one of the authors of this article. This may be perceived by some as the first limitation of the research. However, in line with participative research as outlined by Denzin and Lincoln (2005), genuine collaboration was valued. It was also in this principal’s interests to make sure the process that someone else had initiated was going to work with her vision for the school. In addition, three of the authors have had experience on school leadership teams and used a technique called “dissociation” in their everyday dealings with staff, students, and teachers. Dissociation occurs when a person emotionally distances herself or himself from incidents while conducting an investigation or an analysis.

A second limitation could be the choice of a single case study, so that the findings may not be transferable to other school settings. As already mentioned, this was a pilot project to extend a curriculum change model. The findings continue to be tried and tested in other school settings. Their successes or failures are not commented on here, as this is not the focus of this article.

Finally, to further limit bias, interview data were analyzed, and recurrent themes established by using category construction (Merriam, 1998). The researchers undertook this analysis in isolation, as it was necessary to establish a strong degree of confirmability. While it is unrealistic to presume an absence of all bias, as the discussion shows, many strategies were put into place to limit its occurrence.

Findings and Discussion

Five themes revealing the practices of the implementation phase emerged from the analyzed data. These were as follows:

Keep teachers informed of the wider educational context through professional development activities,

Use data to constantly track students to achieve better outcomes,

Ensure that changes improve classroom pedagogical practices,

Share information between departments and professional levels, and

Encourage ownership of full school performance.

These five themes are given further consideration below.

Keep Teachers Informed of the Wider Educational Context

Participants’ responses revealed the importance of being kept informed of the wider educational context. By this, the participants were referring not only to overarching federal and state educational policies and their associated agendas but also to what other schools in their area and beyond were incorporating into their practices to improve performance. Again, the authors acknowledge that this could be buying into the neoliberal agenda of increasing competitiveness, but on the flip side, it could also be looked upon as a thirst for knowledge of the local educational landscape. Teacher A comments, “We wanted to know what those other high-performing schools were doing because we wanted to get there.”

In line with Teacher A, Teachers D and C also benefited from this type of information, stating, “I found comparisons to other schools really helpful. It helped me understand where we were going” (Teacher D) and “By finding out what was happening in other schools, we could see the whole picture, therefore we had a much better understanding” (Teacher C).

In addition, the participants showed an appreciation of the ECA’s knowledge of this type of information. Teacher B comments, “[The ECA] presents in a good way—including giving us examples what other schools are doing both in Australia and beyond.”

This theme reveals an element of competition between teachers in different schools within districts—“we wanted to get there.” In line with Schaffer and Thomson’s (1992) results-driven model, the “there” to which Teacher A refers is the set of explicit targets established as part of the change process. Teachers could see examples of how other national and international high-performing schools transferred policy into practice with successful results so that their understanding of what they were doing was enhanced—“it helped me understand,” “we had a much better understanding.” Teachers could easily utilize these examples to translate and own the process (Block, 2004; Smith & Lovat, 2003) in their immediate school context. As well as the element of competition (perhaps keeping the change process thriving and ongoing), according to participants, resources, motivation (Darkenwald & Merriam, 1982), and continual education and training (Fullan, 1992; Louis & Miles, 1990) provided by the ECAs were also integral to driving the process. In addition, Burridge and Carpenter (2013) recognize that programs collaboratively implemented by schools with external providers can expand teachers’ teaching practice. In this program, the ECAs worked directly with both teachers and students to achieve an outcome.

Various authors including Chin and Benne (1969, 1976), Fullan (2007), Harris (2001), Iles and Sutherland (2001), and Razik and Swanson (1995) recognize the importance of the knowledge of the ECA when leading a curriculum change process. The original CRACC model highlighted the ECA’s knowledge and prior experience as essential for the initiation phase, and as the findings here reveal, this link to the wider educational context, whether through theories and practices from overseas or through knowledge of local area performance data, is equally important for any chance of success through implementation. Admittedly, this competitive spirit could simply reveal concerns over the publication of data and where the school’s performance sits in relation to surrounding schools. However, as an example of O’Neill’s (2013) primary use of assessment data, the focus was on improving learning rather than on second-order ways of using assessment data.

Use Data to Constantly Track Student Performance

The second theme that emerged from the analyzed data was the practice of using data to track student performance. Participants articulated the need for ongoing engagement with achievement data for the benefit of their students. This notion is revealed by the following comment by Teacher I: “I loved the fact that we have kids as our focus . . . We never lost sight of that. We were working for the kids—even when . . . talking pathways it was always about the individual student.”

By constantly engaging with data, the participants were able to identify not only students who were performing well but also those who might be having problems. Teacher B comments, “To have all our focus on a kid at risk was incredible . . . Our kids have been advantaged by the process.”

This reveals how teachers deemed the process worthwhile, as they could see how it benefited their students. Fullan (2000) argues that “continual assessment of student progress” (p. 16) or “monitoring of student progress and performance” (p. 17) is a central component of the implementation phase. In this study, the teaching group met every week and discussed and reported on every student within the cohort. In addition, the information collated from tracking was used by teachers as an effective teaching device to encourage further motivation and increased achievement. Once again, this is in line with O’Neill’s (2013) primary use of assessment data. Teacher C reveals that she could “give better advice to kids—more accurate than previously.” The data provided achievement knowledge and enabled more informed feedback to be given, as Teacher A suggests: “The most significant learning has been for me as HOD. It has given me a process for how to have discussions with kids who aren’t succeeding.”

Using educational data to track student performance was new to the participants. First, they had to come to an understanding of the data and, second, make decisions about the student which were supported by the data. This required those involved to act and think differently or restructure cognitively (Lewin, 1951). Not only were the participants putting into place new ideas, programs, or activities that Fullan (2007) maintains are essential processes for curriculum change, they were engaged in a learning process themselves, which Teacher E describes as a “massive learning curve.” Furthermore, the use of words such as “loved,” “focus,” and “incredible” reveals that the teachers involved embraced the change process.

Ensure Changes Improve Pedagogical Practices

The third theme was the improvement of pedagogical practices in the classroom and how this could lead to improved assessment outcomes. It was quite obvious that, prior to this research, many of the participants felt negatively about high-stakes testing regimes (Mansell, 2012), and indeed, these sentiments are echoed by many academics as outlined earlier. However, as can be seen from the comments below, teachers were encouraged to think about these testing regimes in a different light after instruction and demonstration from one of the ECAs: “[The ECA] opened my eyes on how to teach kids to do multiple choice . . . Made me think about how to incorporate the practices she was doing into our school program” (Teacher A).

Furthermore, Teacher A added, “We need to use [the practices of the ECA] in the everyday learning in our classrooms, including doing the explanation of ‘why.’”

Teacher B said, “Our teaching will be better because of this,” and Teacher D commented on how “the process made me think more.”

These comments illustrate how, by watching the ECAs in action, the participants considered their own practice as reflective practitioners (Shulman, 1987) and could easily see how they could do things differently. Not only did teachers receive professional development, but the explicit modeling of high expectations through direct observation and the deconstruction of tasks to encourage higher order thinking was also an integral part of the change process. Lawless and Pellegrino (2007) argue the most important impact a professional development activity has on a teacher is that of pedagogical practice change. In other words, teachers change their classroom practices as a result of the professional development. In addition to self-reflection, the sharing of practice between departments and professional levels was also encouraged, which is revealed in the next theme that emerged from the interview responses.

Share Information Between Departments and Professional Levels

Many of the participants commented on the level of sharing that was engendered as a result of the change process, suggesting that collaboration is key for change. Each week, the group met for 1 hr and focused on student and school performance. The vast majority of participants commented positively on this. They particularly liked learning about what was happening in other departments and other areas of the school.

We can learn really exciting things from the other areas [other departments in the school]—invaluable . . . Collective listening and seeing other HODs’ skills . . . I have loved it. (Teacher I)

They felt a strong sense of everyone working together for a common purpose, as clearly articulated by Teachers G and H: “The collegiality from everyone meeting together is powerful” (Teacher G) and “Everyone was on task—same boat” (Teacher H).

The collegiality was not limited to the participants in the Wednesday group meeting, but became part of rich professional dialogue among other members of the teaching staff. Teachers B and D commented, “I have been able to share my learnings with my staffroom” (Teacher B) and “I talk to my faculty about what we do—or at the lunch table” (Teacher D).

As the comments above demonstrate, there was a sense of worth and enjoyment in working together as a team. However, as Teachers B and D further comment, collaboration was also an important method for gaining more information about individual students. They say, “It is essential to continue Wednesday practices as it is the only time we look beyond seeing our kids in just our faculty . . . we can see the student as a whole” (Teacher B) and “I’ve realized that while a student can have very studious habits in one subject, they may not be the same in another subject” (Teacher D).

Therefore, the sharing and collaboration worked on two levels, promoting stronger working relationships as well as providing more knowledge about individual students. Fullan (2007) maintains that these notions are critical for successful change: “Change involves learning to do something new, and interaction is the primary basis for social learning. New meanings, new behaviours, new skills, and new beliefs depend significantly on whether teachers are working as isolated individuals or are exchanging ideas, support, and positive feelings about their work. The quality of working relationships among teachers is strongly related to implementation” (p. 97).

Furthermore, the comment, “it is essential to continue Wednesday practices,” reveals a desire for sustainability of the process.

In earlier writings, Hargreaves (1994) refers to the balkanization of staff, particularly in secondary schools. He suggests that balkanization “restricted the opportunities for teachers to learn from each other—particularly across subject boundaries” (Hargreaves, 1994, p. 222). The participants’ comments make it obvious that balkanization once existed within this school, but the change process had served as a tool to “debalkanize.” The debalkanization not only enabled teachers to interact outside their own areas of expertise but also promoted ownership of whole school performance, which is the fifth and last theme that emerged from the interview responses.

Encourage Ownership of Whole School Performance

Several participants commented on the importance of all teachers owning the school performance agenda, referring to “a culture of collective ownership” (Teacher F) or “a whole of college approach” (Teacher I). They felt that all members of the community benefited from such an approach, with Teacher H saying, “We pulled together, the process gave structure and empowered the Administration and even empowered the students which is very important.”

This comment is in line with Block’s (2004) assertion that, if change is to be successful, people need to feel powerful rather than powerless. He develops this notion further by suggesting that ownership is the cornerstone of accountability and that, without it, people will not want to take responsibility for any change or change process. Teacher I comments, “Discussing the information and data has given everyone ownership to do something about it.”

In this case study, according to participants, all stakeholders (the administration team, the classroom teachers, and the students) became involved in the implementation phase of the change process and felt a sense of ownership of the college’s performance. Many authors (Barth, 1990; Block, 2004; Booz Allen Hamilton, 2004; Cothran, 2001; Fullan, 1993; Fullan & Stiegelbauer, 1991; Glickman, 1993; Kemmis & McTaggart, 1988; Rogers, 1995; Sarason, 1990; Smith & Lovat, 1995; Strebel, 1996; Visser, 2004) have suggested that ownership is essential for successful change.

However, although not articulated in this study, some comments from the original study strike a chord of concern—“just tell us what to do and we will do it” and “we just want to be told, explicit direction and strong leadership.” These statements could be indicative of a loss of teacher autonomy/agency, reform weariness, or a general acceptance of the current regime where their teacher professional identities are shaped by the performance agenda. They either have no choice, do not see a choice, or do not articulate a choice. They comply. The focus in this change process was improvement through learning, but there are airs of competition and of students as data products, all of which accommodate the present accountability regime. Perhaps there is a degree of docility and, rather than challenging, teachers have just found ways of coping with the demands.

The five themes that emerged from the analyzed data are now used to reconfigure the CRACC model, revealing the useful practices and processes for the implementation phase of curriculum change, specifically change led by an ECA in response to high-stakes testing. The reconfigured model is shown in Figure 3.

Figure 3. CRACC-I.

Conclusion

The aim of this study was to inform the practices of schools and ECAs by extending the CRACC model through more explicitly mapping the implementation phase of a curriculum change process. This pilot case study illustrates how a single school using the CRACC model navigated this reality and, with the help of two ECAs, implemented change with both the interests of the teachers and the students in mind. The five practices—keep teachers informed of the wider educational context through professional development activities, use data to constantly track students to achieve better outcomes, ensure changes improve pedagogical practices, share information between departments and professional levels, and encourage ownership of whole school performance—have been added to the CRACC model and used to underpin the creation of the CRACC–Implementation (CRACC-I) model. This model is different from previous curriculum change theories in that it is results-driven, was implemented within a shorter time frame, and did not wait for all staff to come on board. In pursuit of fulfilling a neoliberal agenda to achieve better student outcomes, teachers created a positive learning environment where self-reflection, compliance professionalism, in situ professional learning, sharing practice, enhanced pedagogy, and collaboration were engendered. Moving this from a pilot to an extended study will yield further findings and elaborate our understanding of curriculum change to take into account high-stakes testing accountability.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research and/or authorship of this article.