Abstract

One of the challenges for instructors in online education is to create learning opportunities through online text-based discourse. The goal of this study was to examine the use of content analysis to better understand graduate students’ learning in online discussions. The discussion transcripts of a hybrid graduate course were analyzed to determine levels and frequencies of learning or cognitive presence. The analysis of the online discussions was guided by social constructivist rationale, and includes descriptive statistics and inter-rater reliability measurements. Findings show that cognitive presence levels were concentrated in categories marked for exploration and integration. Non-cognitive messages resulted in the highest frequencies of discussion messages, demonstrating indications of social presence and teaching presence. Training was found to be a key factor in resolving coding inconsistencies to improve the reliability of the content analysis. The processes of content analysis applied in this study to evaluate learning in online discussions provided useful information for the development and study of online discussions for learning.

Online education continues to grow. Over the past decade, increasing numbers of students in the United States have enrolled in online college courses. In 2011, approximately 6,700,000, or 32%, of all higher education students in the United States had enrolled in one or more online courses (Allen & Seaman, 2013). The literature suggests that online education will continue to expand throughout the upcoming decade, although not without concerns. Even though higher education administrators and faculty seemingly have become more accepting of online courses as an extension to the physical campus (Bowen, 2013), chief academic officers of universities perceive that the majority of university faculty members remain skeptical of teaching online (Allen & Seaman, 2013). Many faculty have concerns about the integrity and quality of online courses (Jaggars & Bailey, 2010), although the literature suggests that learning outcomes in hybrid courses are the same as, and in some respects superior to, learning outcomes in face-to-face courses (Means, Toyama, Murphy, Bakia, & Jones, 2010). More research on online discussions across the various disciplines would be helpful to those interested in applying asynchronous discussions in their courses. In this research, an instructor of a hybrid course applied an empirical method to assess evidence of learning in the course’s online discussions. Faculty who want to substantiate the learning in their courses’ asynchronous discussions may want to consider applying methods similar to those described in this study and publishing the results.

Research on the use of asynchronous discussions used in courses for learning is relatively new, considering that the Internet has been available in schools for only a few decades. In 2003, approximately 93% of the public school classrooms in the United States had Internet access, which is in stark contrast with less than 5% of classrooms having Internet access in 1993 (Parsad, Jones, & Greene, 2005). Over the past decade, there has been an increase in the use of asynchronous discussions as an instructional strategy in face-to-face courses as well as in hybrid and online courses. More recently, strategies such as “flipping a classroom” have increasingly involved the use of online discussions for learning in face-to-face courses to extend instruction outside the physical classroom in preparation for learning inside the classroom (Bergmann & Sams, 2012).

In this study, content analysis was applied to examine the discussion transcripts for learning with respect to a conceptual framework developed from a 4-year study of computer conferencing (Garrison, Anderson, & Archer, 2001). The goal was to gain a broader, deeper understanding of the levels of learning in the discussions. An assumption of this study is that online discussions are essential elements of online learning. Throughout this article, the terms online discussion and asynchronous discussion are used interchangeably and refer to time-delayed discussions, not synchronous real-time discussions.

Literature Review

Learning theories provide a basis for understanding how learning occurs in online discussions. Principles of learning theories are used to explain or support teaching in meeting the learning needs of students (Borich & Tombari, 1997; Dillenbourg, 1999; Hofer, 2001). Particularly relevant to online learning are the principles of constructivist learning theories, which include collaboration, interaction, and the use of language (Bruner, 1986; John-Steiner & Mahn, 1996; Vygotsky, 1980). In turn, these constructivist principles fundamentally characterize how learning occurs in asynchronous discussions (Lebow, 1993; McLoughlin & Oliver, 1998; Swan, 2005; Yuan & Kim, 2014) and serve as the basis underlying the approach to this study in examining the discussion transcripts of graduate students for evidence of learning.

Choosing discussion strategies is an important step in the planning of online learning. A review of the literature identifies strategies and practical factors that instructors can consider when planning online discussions.

Online Discussion Strategies

Asynchronous discussions are typically an important part of learning in online or hybrid courses. Discussions offer a way for students to learn and articulate their understanding of learning through interactions with each other and the instructor (Parker & Hess, 2001). Darabi, Liang, Suryavanshi, and Yurekli (2013), in their meta-analysis of 80 studies of online discussions, found that “learners responded better to strategic and productive discussion than when they were asked to elaborate on a topic” (p. 239). Although the purpose of this study does not involve an in-depth analysis of the various online discussion strategies, it does give an overview of strategies and factors that are relevant in planning. Further studies that describe the processes of choosing and applying text-based discussion strategies would be helpful.

The Socratic method of discussion has traditionally been recognized as an effective way to help students learn beyond memorizing and regurgitating facts (Hansen, 1988). Socratic discussion methods employ thought-provoking questions which are evident in online discussion strategies such as in online debates and case studies (Yang, Newby, & Bill, 2005). Literature circles are another Socratic method of discussion that has been used effectively to support learning in both online and face-to-face discussions. Literature circles begin with a discussion of a text, and are guided by open-ended questions that are designed to promote deeper thinking and discussion of the text. Having literature circles online provides for added reflection opportunities for students in reading the transcripts of the discussions, which supports broader and deeper perspectives and understanding of the discussion topic (Larson, 2009). The think-pair-share discussion method has been structured effectively for use in online discussions (Johnson & Aragon, 2003). In this strategy, students first think independently about a problem or a question, are then paired or grouped to discuss their thoughts, ending with the whole class sharing and discussing the topic. The think-pair-share discussion strategy builds a strong foundation for communicating ideas with a range of fellow students and the instructor (King, 1993).

R. S. Anderson, Goode, Mitchell, and Thompson (2013) examined a group of doctoral students’ perceptions of four different online constructivist discussion strategies: problem-based learning (PBL), discussion web strategy, 3-2-1 strategy, and case study. Each of the strategies was said to reflect social constructivist learning because of the interaction involved in the discussions and the development of personal meanings associated with the discussions. In the PBL discussion strategy, the students discussed self-directed problem-solving objectives. In the discussion web strategy, the students identified and described the pros and cons of a particular topic, justifying their position. In the 3-2-1 online discussion strategy, the students read a text, selected three key points from the text, provided two supportive explanations, and then posed one question related to the reading. In the case study strategy, the students analyzed real-life situations, read case information, made reflective connections, stated opinions, and asked and responded to questions. Students’ perceptions of these discussion strategies were collected through surveys and interviews, and were analyzed, coded, and categorized by four coders based on a process of inductive reasoning. The results showed that the students believed all of the discussion strategies to be effective for particular types of discussions.

Factors in Planning Online Discussions

Consideration of the practical aspects or factors of online discussions can be helpful in planning for the use of them in online learning. Among the factors to consider are time, student attitudes, and technology.

Asynchronous discussions require reading, writing, and comprehension tasks that arise from students needing to develop, post, and read text-based communication. This can result in more time required of the students to complete online discussions compared with the amount of time typically spent in face-to-face class discussions (Meyer, 2003). Instructors would be wise to consider the coordination needs in scheduling the time for students to read and post to the discussions. Reading, writing, and the increased amount of time that students spend in text-based discussions can provide for more focused and deeper learning through the increased opportunities to read, reflect, and respond (Benbunan-Fich & Hiltz, 1999; Bliuc, Ellis, Goodyear, & Piggott, 2011; Maor, 2003).

Asynchronous text-based discussions may have a positive impact on student attitudes toward discussions by lessening any difficulties arising from students who are reluctant to participate, feel confronted in the discussions, or are not given enough time to voice their opinions. The atmosphere in asynchronous discussions is seen by some students to be easier to handle, less stressful, equitable, and less likely to be dominated by a few individuals (Wang & Woo, 2007). However, there are disadvantages of text-based discussions that students may perceive negatively. Text by itself does not communicate the nuances of tone of voice nor does it replace visual cues of face-to-face discussions. The use of emoticons in online discussions can suggest tone of voice that may clarify the meaning behind the text (Tiene, 2000). More studies are needed to understand the effect and practice of using emoticons in online academic discussions.

Students need to possess functional technical skills to participate in asynchronous discussions. The necessary level of technical difficulty is not extremely high for most students, but a lack of familiarity with the online discussion features may cause frustration among some students. Frustration with technical difficulties may affect the quality of participation in the discussions (Davidson-Shivers, Muilenburg, & Tanner, 2001). The knowledge and skills necessary for students to participate in asynchronous discussions can be effectively addressed in instruction.

Reviewing various discussion strategies and considering factors that can affect asynchronous discussions will aid in planning. Analyzing the discussions is an additional task that can provide information for reflecting and revising the overall discussion strategy in efforts to understand and improve the online discussion’s effectiveness for learning.

Methods to Analyze Online Discussions

Many analysis methods reported in the literature have been used to assess learning in online discussions, but few have been applied extensively. Two methods that appeared to have been applied in several other studies were considered for this study: the Interaction Analysis Model (Lucas, Gunawardena, & Moreira, 2014) and the Community of Inquiry (COI) framework (Garrison & Arbaugh, 2007).

A study that used the Interaction Analysis Model from Gunawardena, Lowe, and Anderson (1997) described an analysis of an online debate. Gunawardena et al. characterized online debate as a “ . . . co-creation of knowledge and negotiation of meaning” (p. 406). The Interaction Analysis Model consisted of five categories or phases, including (a) sharing or comparing information, (b) discovery and exploration, (c) negotiating of meaning or co-construction of knowledge, (d) testing and modification or synthesis, and (e) agreeing or application. From the coding and analysis, based on these five categories, much of the participants’ discussion was determined to be characteristic of two categories, exploration or discovery, and negotiation of meaning. The categories of the Interaction Analysis Model share some similarities with those of the practical inquiry model in Garrison’s COI framework. However, the COI framework, which was chosen for this study, appeared more broad-based, including categories of teaching presence, social presence, and cognitive presence.

Theoretical Rationale

The theoretical framework of this study is based on the COI framework (Garrison et al., 2001), which is grounded in constructivist learning theory that considers collaboration, reflection, and critical analysis as essential to learning. The COI framework is comprised of three core elements: social presence, teaching presence, and cognitive presence. Social presence, teaching presence, and cognitive presence are all intertwined. “Cognitive presence is defined as the extent to which learners are able to construct and confirm meaning through sustained reflection and discourse” (p. 11), and can be used as a “ . . . means to assess the nature and quality of critical, reflective discourse that occurs within the text based educational environment” (p. 7).

The element of cognitive presence was selected as a primary focus for this study based on reviews of research studies that employed content analysis to assess online discussions. Many of the content analysis studies that were reviewed were identified in articles from De Wever, Schellens, Valcke, and Van Keer (2006) and Rourke, Anderson, Garrison, and Archer (2001). Content analysis is a qualitative research method that has been used fairly extensively, and its selection followed a “directed approach,” where the analysis method is based on methods identified in the literature (Hsieh & Shannon, 2005). In research pertaining to the content analysis of asynchronous discussions, research literature highlights the importance of reporting the basis of its theoretical foundation including information regarding the unit of analysis and the reliability of the study (De Wever et al., 2006). The theoretical rationale, unit of analysis, and reliability of analysis are all reported in this study.

In developing the COI framework, a practical inquiry model was developed. The practical inquiry model was designed to be applied to analyze transcripts of online discussions for cognitive presence. The practical inquiry model consists of four phases grounded in perception, deliberation, conception, and action. Each phase reflects a process leading to problem resolution beginning with identifying or understanding the problem, termed the triggering event. The second phase involves exploration of the topic. In the third phase, integration, possible resolutions or solutions or conclusions are identified, and the fourth phase, resolution, is where the solutions or conclusions are selected and applied. These four phases or categories (triggering event, exploration, integration, and resolution) represent a process of evolving learning in asynchronous discussions that can be supported and analyzed. The process to identify evidence of each of the four phases is an interpretive process. To guide the process of identifying cognitive presence in online discussions, categories of descriptors, indicators, sociocognitive processes, and examples act as guidelines to facilitate content analysis coding of the discussion transcripts (Garrison et al., 2001). According to Garrison et al. (2001), it is important to note that the practical inquiry model indicators should “not be seen as immutable” (p. 9), meaning that other studies using the practical inquiry model may find a need to refine or revise the criteria to meet specific analysis needs, as was the case for this study.

Method

In this study, an empirical method, content analysis, was chosen to assess cognitive presence of three asynchronous discussions of graduate students in one of their courses. “Content analysis is a research technique for making replicable and valid inferences from texts (or other meaningful matter) to the contexts of their use” (Krippendorff, 2012, p. 24). Content analysis enables a process to systematically examine the quality of learning in online discussions (Gunawardena et al., 1997). Although the use of content analysis dates back to the 1940s and 1950s, it was not until the 1980s and 1990s that it began to be more frequently applied to study learning in asynchronous discussions. Henri (1992) described computer conferencing as a “gold mine of information” (p. 118) that would provide researchers a rich resource to analyze and advance online learning. Use of content analysis to assess online discussions has increased over the past 20 years, just as Henri had predicted, but concerns about lack of uniformity and disclosure of the analysis methods have arisen (De Wever et al., 2006; Rourke et al., 2001).

Issues in comparing content analysis studies of online discussions have arisen due to a lack of consistency in the different analysis instruments used (Rourke & Anderson, 2004). “This lack of replication (i.e., of successful applications of other researchers’ coding schemes) should be regarded as a serious problem” (Rourke et al., 2001, p. 6). Consequently, research literature has stressed the need for more studies to employ similar instruments (T. Anderson, 2005; De Wever et al., 2006), which in turn should increase the reliability and validity of these types of studies (Stacey & Gerbic, 2003). The importance of building on previous research influenced choosing the method and the coding instrument of this study. Repeating research designs helps establish the reliability of the results, which can be obtained from repeated use of the same instrument. Further information regarding this practical inquiry model, which has been applied in other content analysis studies of online discussions, can be found in studies from Akyol and Garrison (2011), de Leng, Dolmans, Jöbsis, Muijtjens, and van der Vleuten (2009), De Wever et al. (2006), Fahy (2005), and others.

Analysis of the results of this study aims to (a) aid the researcher in the planning of future asynchronous discussions in the graduate program, (b) provide information for instructors who are interested in studying the learning effectiveness of their asynchronous discussions, and (c) apply lessons learned from this study to further studies involving the use of content analysis as a method to evaluate online discussions. One goal is to continue using content analysis to better understand the use of this method to assess online learning strategies. Analysis of other discussions from the same graduate cohort of this study is planned.

Research Questions

In the “Discussion” section of this study, the researcher reflects on the use of content analysis and the practical inquiry model as a means to assess the learning effectiveness of online discussions.

Participants and Context

In this study, three online discussions of a hybrid graduate course, the “Fundamentals of Online Pedagogy,” were examined for cognitive presence. The researcher of this study was the instructor of the course, which was primarily online, but included three face-to-face class sessions at the beginning, the middle, and the end of the semester. The course is part of a master’s program designed to be completed entirely through a hybrid delivery system over four consecutive semesters. All of the courses in the students’ graduate program typically met face-to-face only 2 to 3 times each semester. The course examined for this study was situated in the first semester of the students’ graduate program. There were a total of 19 students (10 females, 9 males) participating in the course, although only 15 students finished the course. A random sample of students (N = 15) was chosen for the analysis. Three separate discussions were analyzed out of eight total online discussions in the course. Each of these discussions included three discussion groups, except the first discussion, which had five groups, and each of the discussions occurred over a 1-week period. The first three online discussions of the course were selected for analysis to allow observations of the students’ learning progression from the beginning of the semester. Studies of the other discussions involving the same course and the same students will build on the results of this study.

Online Discussion Strategy

The online discussion strategy used in each of the three discussions of the study was identical, consisting of a format similar to the 3-2-1 discussion strategy, which included reading a text, finding key points in the text, providing supportive explanations, and posing questions. The instructions for the discussion included several items:

Students were divided into groups for the online discussions.

Each discussion required reading of an initial journal article.

Groups were to identify and discuss three main points from the article with a goal of discussing and identifying related topics to broaden and deepen students’ understanding of the topic.

Students were then to search for another article based on the three main points that had been identified and discussed; read the new article; and then describe, discuss, and synthesize the concepts from the two articles.

To conclude the discussions, summaries were posted synthesizing each group’s discussions.

Roles were assigned to each member of the discussion group, which included monitor, encourager, facilitator, quality assurance checker, and summarizer.

Data Collection

The analysis of the online discussions was unobtrusive, occurring after the students had already completed the course and been given their final grades. Technology was used to collect, process, and organize the data for analysis. Blackboard, the University’s learning management system, was used to archive the discussion transcripts. Learning management systems, such as Blackboard, support the use of content analysis to evaluate online discussions with their capacities to store online discussion transcripts that are easily accessible for later analysis. The discussion transcripts for this study were exported from Blackboard, and then imported into HyperRESEARCH, a qualitative software program that was used to organize and code the discussion transcripts.

A secondary data source included student survey data which were collected at the end of the semester. The survey asked the students about their perceptions of the use of roles in online asynchronous discussions. The use of roles was also the subject of Discussion 1, which was based on the reading of an article on the use of roles in online discussions from De Wever, Van Keer, Schellens, and Valcke (2010).

Coding

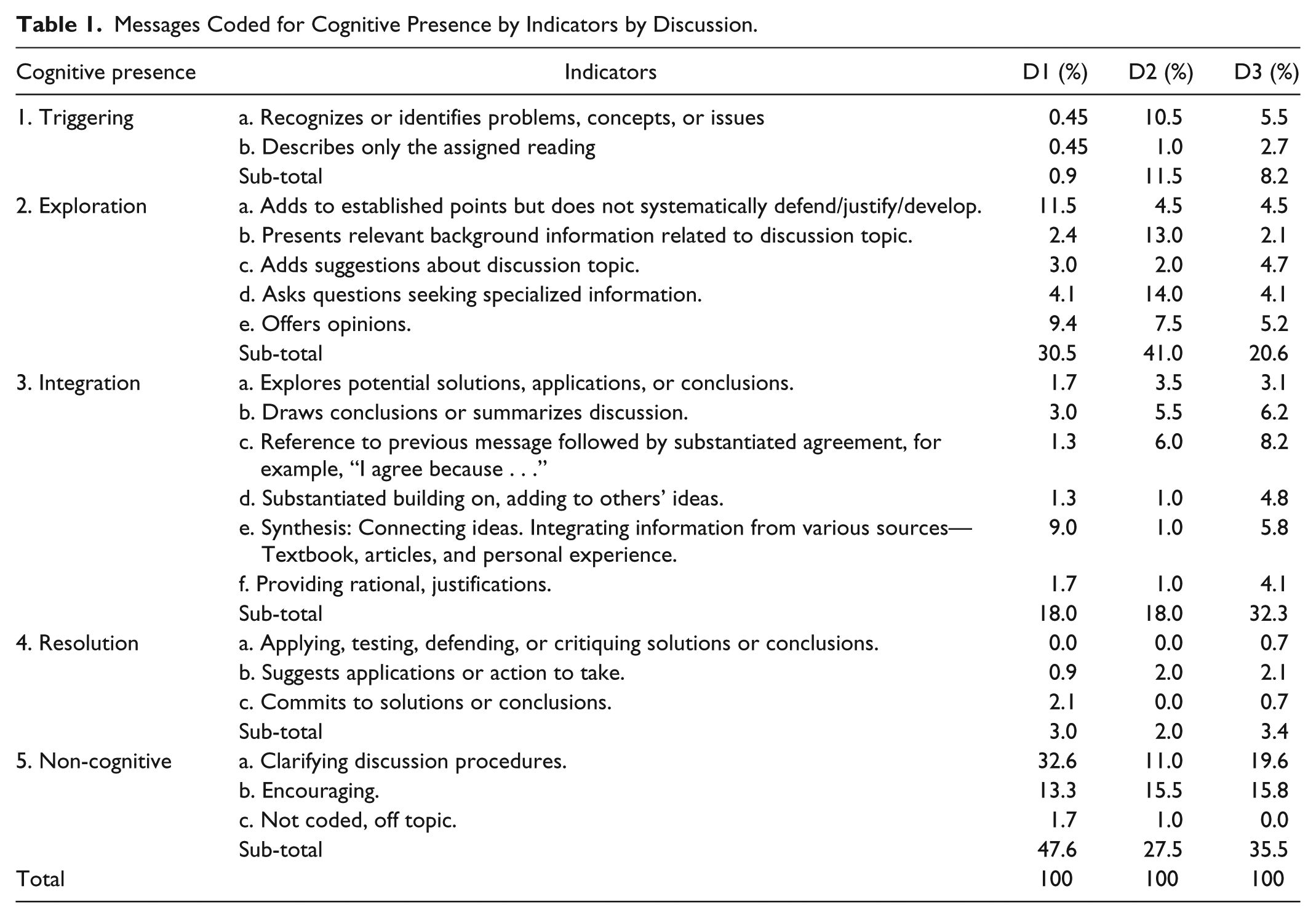

Two coders, the researcher (who was the instructor of the course) and another university instructor, coded the three sets of discussion transcripts. Training was provided to the coders to aid them in coding the discussion transcripts. The training involved the researcher/instructor of the course describing and demonstrating to the second coder how to apply the coding instrument (Table 1) to the discussion transcripts. Included in the training were discussions between the two coders regarding the instructions for Discussion 1 that had been given to the students who participated in the discussion. The training occurred during 1 week, totaling approximately 3 to 4 hr. Discussion transcripts from Discussion 1 were used in the training to demonstrate the coding. Following the training, the coders worked independently in coding the units of analysis.

Table 1. Messages Coded for Cognitive Presence by Indicators by Discussion.

The units of analysis consisted of each message thread from each of the discussion participants in the three discussions. Clear demarcation at the beginning of each message identified for the coders where to begin and end each coding effort. Each of the messages was analyzed by the two coders and classified according to the indicator representing its level of cognitive presence (see Table 1), which was based on a modified version of the practical inquiry model (Garrison et al., 2001). During the coding, if the coders determined that more than one indicator of cognitive presence was evident in a message thread, the indicator selected was to be based on a preponderance of evidence in the message. Training for the coders was considered a critically important factor that could affect the reliability of the content analysis applied in this study.

Variables

The variables related to cognitive presence were defined based on four categories of Garrison’s practical inquiry model (Garrison et al., 2001), including triggering events, exploration, integration, and resolution. Each broad category contained a sub-level of indicators, which were used as the basis to code each discussion message. Although the sub-level indicators were drawn from Garrison’s practical inquiry model, they were modified to facilitate the coders in identifying evidence in the online discussion transcripts of this study. Categorization of the sub-level indicators provided deeper insight into the cognitive and non-cognitive presence displayed by the students in the discussions. Table 1 shows the sub-level indicators for the broad categories of cognitive and non-cognitive presence, including the percentages of occurrence for the three discussions. The percentages of messages identified for each indicator reflected in Table 1 represent an agreed-on coding of each message based on discussion and reconciliation of messages between the two coders after all of the discussion messages had been coded.
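The reconciliation step described above begins with knowing which messages the two coders classified differently. The following is a minimal sketch of that lookup, not the procedure used in the study: the message IDs, the dictionary layout, and the function name `find_disagreements` are all hypothetical, and the sample codes are invented for illustration.

```python
def find_disagreements(codes_a, codes_b):
    """Return the IDs of messages that the two coders categorized differently.

    codes_a and codes_b map a message ID to the category each coder assigned.
    Only messages coded by both coders are compared.
    """
    shared_ids = codes_a.keys() & codes_b.keys()
    return sorted(m for m in shared_ids if codes_a[m] != codes_b[m])


# Hypothetical codes for three messages (not data from the study).
codes_a = {"m1": "exploration", "m2": "integration", "m3": "non-cognitive"}
codes_b = {"m1": "exploration", "m2": "exploration", "m3": "non-cognitive"}

# Messages listed here would be discussed by the coders to reach an agreed-on code.
to_reconcile = find_disagreements(codes_a, codes_b)
```

A list like `to_reconcile` only flags where discussion is needed; deciding the agreed-on code remains an interpretive judgment made by the coders.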

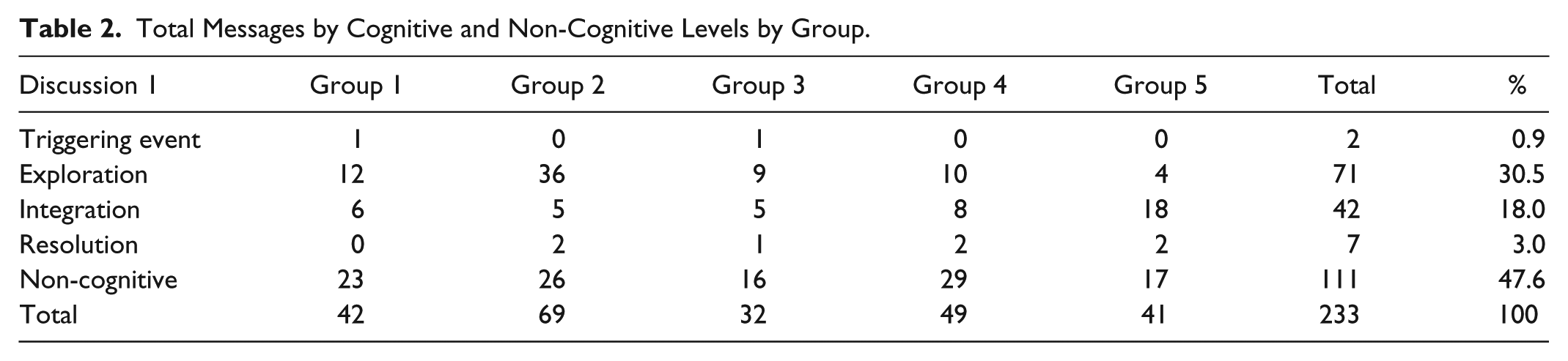

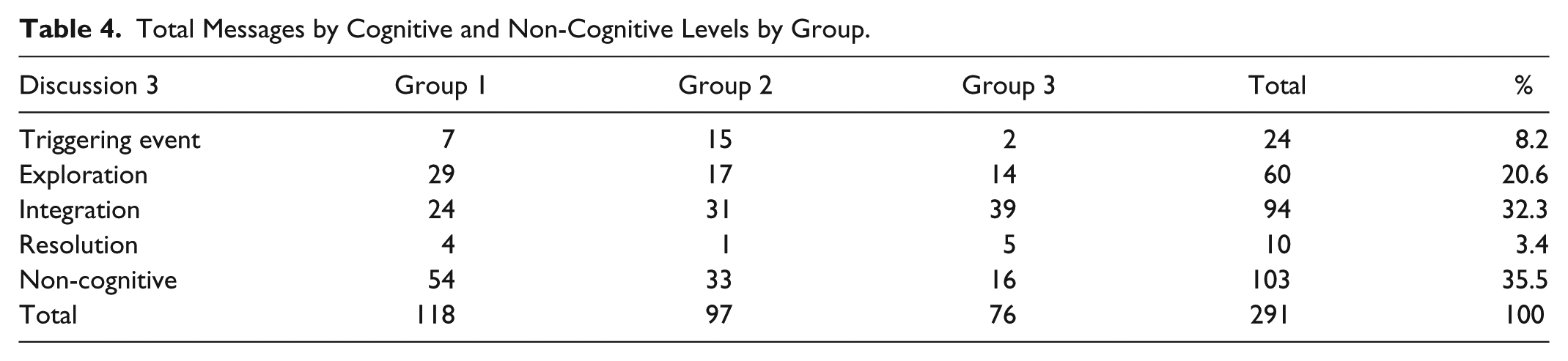

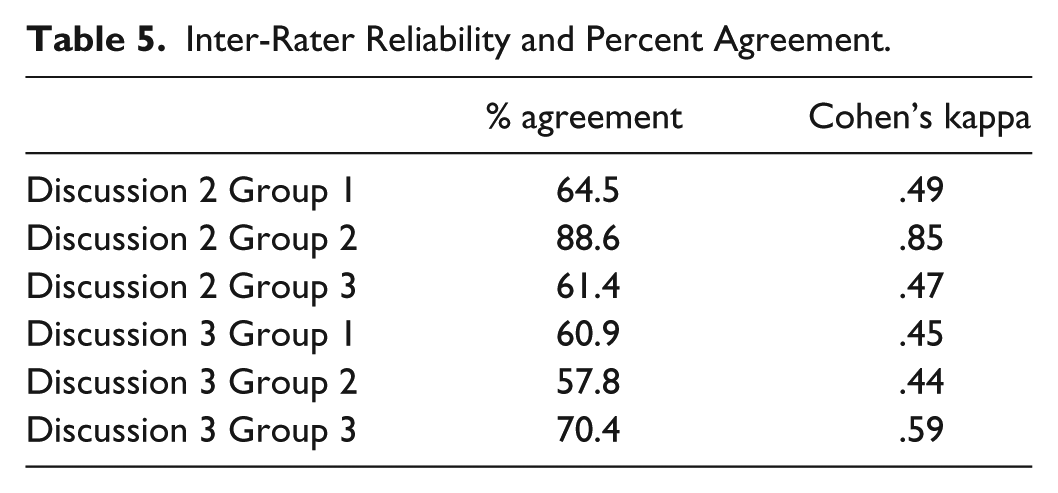

The discussion messages were separated for each cognitive and non-cognitive broad category by group for each of the three discussions (see Tables 2, 3, and 4). These three tables show the number of messages coded for each broad category, including the triggering event, exploration, integration, resolution, and non-cognitive. Inter-rater reliability of the coding was calculated for Discussions 2 and 3 with the use of Cohen’s kappa statistical measurements, including percentages of agreement between coders (see Table 5).
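For readers unfamiliar with the two reliability measures named above, the sketch below computes percent agreement and Cohen’s kappa for two coders who each assign one category per message. It uses the standard formula, kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e is the agreement expected by chance from each coder’s category frequencies. The function names and the ten sample codes are assumptions made for illustration; they are not the study’s data or software.

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Proportion of messages to which both coders assigned the same category."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must code the same non-empty set of messages.")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa: agreement between two coders corrected for chance."""
    n = len(coder_a)
    p_o = percent_agreement(coder_a, coder_b)
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    # Chance agreement: product of the two coders' marginal proportions, summed
    # over every category either coder used.
    p_e = sum(counts_a[c] * counts_b[c] for c in set(counts_a) | set(counts_b)) / (n * n)
    if p_e == 1.0:
        # Kappa is undefined when both coders used one identical category
        # throughout; returning 1.0 is a convention chosen for this sketch.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Hypothetical codes for ten messages (not data from the study).
coder_a = ["exploration", "exploration", "integration", "non-cognitive", "non-cognitive",
           "triggering event", "integration", "exploration", "non-cognitive", "resolution"]
coder_b = ["exploration", "integration", "integration", "non-cognitive", "non-cognitive",
           "triggering event", "integration", "exploration", "exploration", "resolution"]

agreement = percent_agreement(coder_a, coder_b)
kappa = cohens_kappa(coder_a, coder_b)
```

In this invented example the coders agree on 8 of 10 messages, yet kappa is lower than the raw agreement because some of that agreement would be expected by chance alone.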

Table 2. Total Messages by Cognitive and Non-Cognitive Levels by Group (Discussion 1).

Table 3. Total Messages by Cognitive and Non-Cognitive Levels by Group (Discussion 2).

Table 4. Total Messages by Cognitive and Non-Cognitive Levels by Group (Discussion 3).

Table 5. Inter-Rater Reliability and Percent Agreement.

Potential for Coding Error and Bias

The researcher of this study was the instructor of the course with 20 years of experience in the field of educational technology. Steps were taken to reduce any ambiguity and bias in the coding process: (a) Student names were removed from the discussion transcripts in preparation for the coding. This eliminated coder bias toward any student names. (b) Training and practice coding of Discussion 1 was provided to emphasize procedures for the coding based strictly on the criteria established for each indicator (see Table 1). Training was considered essential to reduce ambiguity in the judgment process for coding each message. (c) Demarcation of the unit of analysis of the coding was discussed between the coders to eliminate any errors from confusion in the unit of data to code. The unit of analysis for coding consisted of the message as opposed to sentences or paragraphs. However, there was the possibility of messages containing evidence of more than one indicator, which was addressed in this study by coding the messages based on the preponderance of evidence in the message.

Results

A total of 724 messages were posted in the three discussions. Discussion 1 included a total of 233 messages, Discussion 2 contained 200 messages, and Discussion 3 had 291 messages. For all of the three discussions, the average percentage of messages coded for each cognitive presence category resulted in 6.8% triggering events, 29.4% exploration, 23.8% integration, and 2.9% resolution. In addition, messages coded as non-cognitive accounted for 37.1% of the total messages.

Three categories, non-cognitive, exploration, and integration, accounted for roughly 90% of the total messages in the three discussions. The lowest percentage of responses was in the categories of resolution (2.9%) and the triggering event category (6.8%), which were both considerably lower than any of the other three categories. The messages categorized as triggering events primarily consisted of descriptions of the articles assigned by the instructor for the discussions. The discussion messages categorized as resolution primarily consisted of student summaries of the discussions with little back-and-forth discussion among the students about the summaries. In online discussions, students appear to need more direct guidance to increase discussion that would be characteristic of resolution.
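The totals and shares reported above can be checked with simple arithmetic. The sketch below uses only the figures stated in this section; the variable names are illustrative assumptions.

```python
# Message totals per discussion, as reported in this section.
messages_per_discussion = {"Discussion 1": 233, "Discussion 2": 200, "Discussion 3": 291}

# Overall share of messages per category, in percent, as reported in this section.
overall_share = {
    "triggering event": 6.8,
    "exploration": 29.4,
    "integration": 23.8,
    "resolution": 2.9,
    "non-cognitive": 37.1,
}

# Total number of messages across the three discussions.
total_messages = sum(messages_per_discussion.values())

# The five category shares should account for all messages.
share_sum = round(sum(overall_share.values()), 1)

# Combined share of the three most frequent categories.
top_three_share = round(
    overall_share["non-cognitive"] + overall_share["exploration"] + overall_share["integration"], 1
)
```

The combined share of the three largest categories works out to 90.3%, which is the basis for the "roughly 90%" statement.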

Messages coded as exploration (29.4%) and integration (23.8%) accounted for the highest frequencies of cognitive presence in this study. High frequencies of exploration and integration messages have been similarly reported in the findings of other studies that also relied on the practical inquiry model and content analysis to evaluate learning in the discussions (Garrison et al., 2001; Liu & Yang, 2012). The non-cognitive category accounted for the overall highest frequency (37.1%) of messages, which was 7.7 percentage points higher than the next highest category of exploration (29.4%). Students who did not follow the instructions in Discussion 1 appeared to directly influence the higher frequency of messages coded as non-cognitive (47.6%), which was substantially higher than non-cognitive messages in Discussions 2 (27.5%) and 3 (35.5%). Discussions 2 and 3 did not have as many messages asking questions about the instructions and procedures of the online discussion. A solution to reduce potential non-cognitive messages is to teach the students about the procedures and processes prior to the first discussion. In a fully online course, this could be accomplished through a separate discussion thread that focused exclusively on protocols of the discussions. In a hybrid course, this could be addressed in the first face-to-face class.

Displayed in Tables 2, 3, and 4 are the total number of messages for each of the three discussions which are listed by group showing the total percentages of cognitive presence (triggering event, exploration, integration, and resolution) and non-cognitive presence. There were five groups participating in Discussion 1, three groups in Discussion 2, and three groups in Discussion 3. A pattern appeared to be emerging from the overall frequency of messages coded for the different categories of cognitive and non-cognitive presence. Messages coded as non-cognitive or exploration were usually the most frequent type of messages identified in each of the three discussions. Messages coded as resolution or as triggering events were identified as the least frequent messages in all three discussions. Messages coded as integration were usually identified as the third most frequently occurring messages in the three discussions, except in Discussion 3 where they were second highest, only 3.2% lower than total non-cognitive messages.

Discussion 1 Cognitive and Non-Cognitive Presence

Discussion 1 had the highest percentage of messages coded as non-cognitive, 47.6% (see Table 2) of the messages, compared with an average of 37.1% of non-cognitive messages for all three discussions. Table 1 shows that most of the messages classified as non-cognitive were coded for clarifying discussion procedures. Messages coded as exploration were slightly higher at 30.5%, when compared with an average of 29.4% for all three discussions. Integration messages in Discussion 1 were lower at 18%, similar to Discussion 2’s, but considerably lower than Discussion 3’s percentage of messages categorized for integration at 32.3%. Few messages (0.9%) were coded as triggering events. However, some of the messages that were coded as exploration and integration contained evidence characteristic of triggering event criteria, but were coded as exploration or integration due to the preponderance of evidence in the message. Another factor contributing to the low frequency of triggering events in Discussion 1 was that the students were introduced to the topic of Discussion 1 during the first face-to-face class meeting. Students having the opportunity to talk to each other about Discussion 1’s reading assignment in a face-to-face environment eliminated some of the online discussion that likely would have occurred.

Discussion 2 Cognitive and Non-Cognitive Presence

In Discussion 2 (see Table 3), the data show an increase of 10.6% in messages coded as triggering events, up to 11.5% compared with Discussion 1 at 0.9%, while the average for triggering events across all three discussions was 6.8%. The content of the triggering event messages in Discussion 2 clearly showed that the students were able to adequately identify and describe the topic of the discussion, which was based on an article focused on social presence in asynchronous discussions.

Also in Discussion 2, students were much more active exploring and discussing additional perspectives related to the topic of the assigned reading. The increased discussion activity resulted in the highest percentage of messages coded for exploration with 41%, compared with the 29.4% exploration average of all three discussions. Furthermore, data for Discussion 2, as seen in Table 1, show that 14% of the students’ messages focused on asking questions seeking specialized information. Discussion 2 also showed a 20.1% decrease of messages coded as non-cognitive compared with Discussion 1, due to fewer messages coded for clarifying discussion procedures. It appeared that the students had become more comfortable with the procedures for the online discussion. Also, in Discussion 2, messages identified as resolution remained low at 2% of the total messages, which consisted of students reporting a summary that synthesized the overall discussion, although there continued to be limited discussion among the students regarding the summary.

Discussion 3 Cognitive and Non-Cognitive Presence

Discussion 3 had the highest frequency of messages coded for integration at 32.3%, compared with an average of 23.8% for all three discussions. Integration reflects a higher level of thinking skills, indicating students’ ability to synthesize the discussion concepts and form summary conclusions. In Discussion 3, there was a higher percentage of non-cognitive messages at 35.5%, compared with 27.5% in Discussion 2. The increase of non-cognitive messages in Discussion 3 from Discussion 2 was due to a moderate increase in messages that were seeking clarification (see Table 1). Discussion 3 was focused on “concept mapping,” which was for some students a difficult concept to read about and discuss and then apply. In the course, there was an assignment that required the creation of concept maps. Some confusion arose among the students between the assignment requiring the actual building of a concept map and the online discussion about concept mapping. Conversely, the increase in integration messages appeared to be a result of more discussion requiring the forming of solutions of how to develop concept maps that would be applied.

Reliability

Inter-rater reliability identifies the extent of agreement among the coders and takes into account any agreement occurring by chance. Information about inter-rater reliability provides an indication of the reproducibility and stability of a study, and is considered an essential element of content analysis (De Wever et al., 2006; Lombard, Snyder-Duch, & Bracken, 2002).
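
To illustrate how agreement can be corrected for chance, the following is a minimal sketch of Cohen's kappa for two coders. It assumes that each coder assigned exactly one category to every message and that the kappa in question is Cohen's two-rater statistic; the function name and the sample codes are hypothetical and are not taken from this study's data.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who each assigned one code per message."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must code the same non-empty set of messages")
    n = len(coder_a)
    # Observed agreement: share of messages given the same code by both coders.
    p_observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of the coders' marginal proportions per category.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_chance = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_chance == 1.0:
        # Both coders used one identical category throughout.
        return 1.0
    return (p_observed - p_chance) / (1 - p_chance)


# Hypothetical codes for four messages (illustration only).
coder_1 = ["exploration", "exploration", "integration", "integration"]
coder_2 = ["exploration", "integration", "integration", "integration"]

print(cohens_kappa(coder_1, coder_2))  # 0.5
```

In this example the coders agree on three of four messages (75%), but half of that agreement would be expected by chance given how often each coder used each category, so kappa is 0.5 rather than 0.75.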

There are differences in interpretations regarding acceptable levels of inter-rater reliability coefficients. According to Landis and Koch (1977), kappa coefficients of “0-.20 are slight, .21-.40 fair, .41-.60 moderate, .61-.80 substantial, and .81-1.00 almost perfect” (p. 165). However, the literature also shows differing interpretations of coefficient levels, including the view that coefficients below .8 give rise to concern (Lombard et al., 2002). In a similar study, Garrison et al. (2001) assessed cognitive presence in two online discussions and calculated kappa coefficients at levels of .35, .49, and .74, and .45, .65, and .84. The increasingly higher coefficients, from .35 to .74, and from .45 to .84, were thought to be a result of increased training for the coders. Training appeared to be an influencing factor.
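
The Landis and Koch (1977) bands can be expressed as a simple lookup, sketched below. The function name is hypothetical; the top band is labeled "almost perfect" and negative values "poor," following Landis and Koch's wording. Treating each upper bound as inclusive is an assumption of this sketch.

```python
def landis_koch_label(kappa):
    """Map a kappa coefficient to the Landis and Koch (1977) strength label."""
    if kappa > 1.0:
        raise ValueError("kappa cannot exceed 1.0")
    if kappa < 0:
        return "poor"
    # Upper bound of each band, treated as inclusive.
    bands = [
        (0.20, "slight"),
        (0.40, "fair"),
        (0.60, "moderate"),
        (0.80, "substantial"),
        (1.00, "almost perfect"),
    ]
    for upper, label in bands:
        if kappa <= upper:
            return label


# Coefficients reported by Garrison et al. (2001), as cited above.
for k in (0.35, 0.49, 0.74):
    print(k, landis_koch_label(k))
```

Under the stricter reading noted by Lombard et al. (2002), a coefficient would additionally be compared against a .8 threshold rather than placed in a band.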

In content analysis, latent content is often considered a challenging area of concern. The latent nature of discussions involves potential reliability issues related to the coders’ “ . . . interpretations of the meaning of the content” (Potter & Levine-Donnerstein, 1999, p. 259). To alleviate any issues of reliability associated with latent content, training proves to be a key factor. Higher coefficients are thought to be more achievable as a result of coders having more training with the processes related to coding (Riffe, Lacy, & Fico, 1998).

In Table 5, the kappa coefficients and percentages are displayed for Discussions 2 and 3. Discussion 1 is not displayed as it was used in the training process for the two coders. There were three separate group discussions in Discussion 2 and three group discussions for Discussion 3. Inter-rater reliability coefficients were calculated for each group discussion. Most of the coefficients were in the moderate range from .41 to .60. However, for Discussion 2, Group 2, an inter-rater reliability of .85 was calculated, which is a substantially higher kappa coefficient than all of the other discussions. The coders periodically compared and discussed their coding of Discussion 1. For Discussions 2 and 3, the coders compared and discussed their coding only after the entire coding was completed, and did not periodically compare coding results during the coding of the discussion transcripts.

The training that was provided to the coders of this study included (a) discussions of the coding instrument and the indicators, (b) discussions on how to apply and record the coding, (c) a review of the instructions and procedures for the discussions, and (d) practice coding of Discussion 1, including discussing the differences in the coding results and arriving at an agreement to resolve differences.

The low reliability coefficients were a result of differing interpretations between the two coders regarding the content of the reading assignments. These differences were examined, discussed, and resolved after the coding of Discussions 2 and 3. The sub-level indicators appeared to be a source of the problem in applying and coding the discussion messages. In discussing the differences of the coding, each message was considered in relation to the sub-level indicator and in relation to the broader category (triggering event, exploration, integration, and resolution).

Descriptions of the type of content in a message would meet specific categorization criteria based on the sub-level indicators of each broad category. Messages coded as triggering events were, in essence, to describe the initial article. Differing scores arose when a coder could not distinguish new information drawn from a new article from information derived from the assigned article of the discussion. A thorough review of the assigned article was needed.

Sub-level indicators for integration and exploration were the areas where many other scores differed. Examination of the differences in integration and exploration made apparent a need to further refine the sub-level indicators, along with an examination of the prompts or instructions for the discussion. Each of the messages coded differently in these two areas was discussed in relation to the articles associated with the discussions until an agreement was reached.

As a result, a specific type of training was also identified, one that would need to focus on the subject matter of the topics of each online discussion. Overall, the process of evaluating the coding provided insight into refinements or changes to the discussion strategy that may have a positive impact on the use of online discussions for learning.

Survey Results

Among the learning goals for the students in the course involved in this study was to develop knowledge of online discussion strategies. A survey was given to the students at the end of the semester to examine their perceptions of a discussion strategy that was the main topic of Discussion 1, which was focused on the use of roles in online discussion.

The survey results collected at the end of the semester showed that all of the students, except one, believed that the use of roles in online discussions would result in generating greater participation from all the members of the discussions, which is a contributing factor for quality and productivity in online discussions. The student who did not wholeheartedly support the use of roles as essential to online discussions believed that roles required too much structure in an online discussion, which could inhibit free-flowing discourse. Second, the role of summarizer was identified by a majority of students as the most important and difficult role in an online discussion. Students also indicated in the survey that they had difficulty with summarizing skills, although they did believe that summarizing discussions would help foster deeper understanding of the topics being discussed. In addition, students were evenly divided in their beliefs about rotating roles in discussions. Half of the students believed that maintaining the same role was more beneficial to the discussions than changing roles from discussion to discussion. Maintaining the same role was believed to help the students master the role, which would lead to higher quality discussions. The other half believed that changing roles from discussion to discussion would help learning new skills from roles that may not have been chosen. Most students believed that their choice of roles would be influenced by their comfort level, although the students also indicated that there was value in being forced out of their comfort zone. Overall, there was a strong indication that the students favored the use of roles as an important online discussion strategy for learning.

Discussion

Examining the three discussions through the research framework of this study provided deeper insights into the impact of online discussion strategies on learning. Reviewing the literature for online discussion strategies revealed a need for more research-based reviews of online discussion strategies. For example, Darabi et al. (2013), in their meta-analysis of online discussion strategies, could only locate eight articles that met their rigorous research criteria. Determining a theoretical rationale to frame this study of online discussions also revealed a dearth of frameworks that had been applied extensively in research. However, the practical inquiry model of Garrison et al. (2001) that was applied in this study appeared to be flexible and adaptable enough to serve as a basis to analyze many types of online discussions. Applying the practical inquiry framework to analyze the three online discussions of this study made evident the need for careful instructional planning of the online discussion prompts with consideration of triggering events, exploration, integration, and resolution.

Overall, Tables 2, 3, and 4 show strong evidence of cognitive presence in the three online discussions. The distribution of discussion frequencies was acceptable, with a substantial volume of the discussion posts showing evidence of exploration and integration. The small percentage of triggering event messages was acceptable with 8% to 10% of the messages adequately covering the necessary information essential to the discussions. Students appeared to progress fairly well through the discussions, first identifying the topic of discussion (triggering event), expanding with ideas for further discussion (exploration), and then discussing and integrating new ideas. Strengthening the discussion strategy could be accomplished with more direction in applying solutions or conclusions to real or virtual situations. In the original instructions for the online discussions given to the students, there were not any directions instructing the students to apply their conclusions to real-life or virtual settings, although a few students who were K-12 teachers did discuss applications of the conclusions to their real-life classroom situations.

Non-Cognitive Presence

The percentages of non-cognitive messages appeared rather high in the first discussion, went down in the second discussion, and then increased slightly in the third discussion. Online discussions are sometimes filled with non-cognitive off-task messages, which was not a problem in this study. Non-cognitive messages that seek clarifications of the discussion instructions can help facilitate the discussion, and can be supported in various ways depending on whether the course is a hybrid or fully online course. Clarification of instructions can be taught in face-to-face class sessions, or separate threads dedicated to clarifying instructions for the discussion would help. “Teaching presence,” which is a major concept of the COI (Garrison et al., 2001), can be elevated in online discussions through separate discussion threads led by the instructor of the course. Students appear to expect the instructor to have strong teaching presence in online discussions.

The messages coded as encouraging are characteristic of social presence, also a major concept of the COI model. Messages coded as encouraging were moderately evident throughout the three discussions. Encouraging messages can increase social presence in online discussions, which promotes positive outcomes. Students in this study were influenced by the use of the encourager role, which was used in all three discussions. The use of roles as a discussion strategy has been shown to have a positive impact on student interaction in asynchronous discussions (De Wever et al., 2010; Yeh, 2010), which was confirmed by this study.

Reflections on Content Analysis and the Practical Inquiry Model

T. Anderson (2005) suggested that there must be an easier way to support and confirm acceptance of online learning other than through content analysis, and that few of the content analysis methods for online discussions have been used repeatedly enough, creating a problem comparing studies. Although difficulties do arise in applying content analysis to study online discussions, for those desiring to improve their online discussions, the benefits of applying content analysis outweigh the difficulties in its use.

Major difficulties in using content analysis include (a) modifying the indicators’ criteria, (b) developing training for the coders, (c) training coders, and (d) the time it takes to complete these tasks. Content analysis is a tedious process to apply in the analysis of online discussion transcripts. Thorough training is required for the coders to establish acceptable reliability measurements. There has been some difficulty in training others to discern the differences between phases of the practical inquiry model. The integration “ . . . phase is the most difficult to detect from a teaching or research perspective” (Garrison et al., 2001, p. 10). The inter-rater reliability of this study was affected by issues that typically affect content analysis studies, relating to the coders being knowledgeable of the subject matter in the study, and crafting measurability instruments that address this issue (Potter & Levine-Donnerstein, 1999).

Limitations

Limitations of this study include a small sample size involving 15 graduate students from an educational technology master’s program in their first semester of the program. The results may be generalizable to analogous populations using comparable online discussion strategies. However, the design of coder training and the development of criteria for coding indicators may affect the results. The practical inquiry model, which includes the categories of triggering events, exploration, integration, and resolution, appears to provide a strong framework to serve as a base for the development of the coding criteria. Other factors that may affect generalizability include variables related to student backgrounds (e.g., student context factors including academic, language, social, and socioeconomic factors). These factors should be considered in developing and examining online learning (Swan, 2001).

Other limitations include the following: The study only examined one element, cognitive presence, from the COI model (Garrison et al., 2001), excluding elements of teaching presence and social presence. Although there were no plans at the onset of the study to examine teaching and social presence, there is some indication of their presence in the non-cognitive messages. Social presence appears to be evident in the messages containing “encouraging” comments, and teaching presence is potentially present in the messages containing comments related to instructional procedures. It is worthwhile to examine these two areas more closely in future studies.

Conclusion

The research literature on content analysis of online discussions calls repeatedly in its conclusions for the need of studies that contain all of the following: (a) a systematic coherence between theory and analysis categories, (b) clear choice of the unit of analysis, and (c) information about the inter-rater reliability procedures (De Wever et al., 2006). These are the essential requirements needed for content analysis studies that provide the means for professional comparisons that may contribute to improved conditions in online teaching and learning. In addition to meeting the above three requirements necessary to compare studies applying content analysis to online discussions, there are other concerns to address in the process of applying content analysis to study online discussions.

There are several important steps in applying methods of content analysis to analyze learning in online discussions. These steps are not meant to be comprehensive but are lessons learned from this study:

Use a software program such as HyperResearch or Atlas.ti, which is very helpful in sorting, analyzing, and reporting data.

Use an existing theoretical rationale or research design such as the COI (Garrison et al., 2001). Although there is pressure to develop an individualized instrument, researchers and practitioners can benefit from repetitive studies that thoroughly vet research designs.

Examine and understand the online discussion strategies in meeting learning objectives associated with the online discussions.

Modify the criteria (indicators) of the coding instrument to align with the identification of the content of the online discussion messages in relation to the learning objectives.

Select coders who are knowledgeable with the subject matter of the online discussions.

Plan thorough training for the coders.

Assess training of coders for inter-rater reliability measurements that are at least .8.

In analyzing the online discussions, analyze for cognitive presence, teaching presence, and social presence.

This study used an existing model for content analysis to analyze online discussions of a course previously taught. The purpose was to improve the use of online discussions to support learning in hybrid courses in education. The study confirms the value of applying the practical inquiry model framework (Garrison et al., 2001) and further identifies important steps or lessons learned in applying content analysis to assess learning in online discussions. Finally, it was the experience of the researcher of this study that the process of applying content analysis to examine a course’s online discussions will provide additional perspectives on the development and use of online discussions for learning.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research and/or authorship of this article.