Abstract

Currently, risks brought on by science and technology, such as artificial intelligence (AI), are intensifying. However, the global governance system of science and technology is riddled with major security loopholes and is deeply mired in dilemmas and distractions: cognitive misconceptions, avoiding ‘heavy’ topics to focus on trivial ones, ignoring the time-limit principle, underfunding AI safety, neglecting the role of government and lacking a ‘big picture’ view. Humanity faces dual and multiple challenges; the situation is extremely grim and urgent. Principles such as ‘cooperation is more important than competition’, ‘safety is more important than wealth’, ‘direction is more important than speed’ and ‘steady progress is more important than coming first’ should be established as soon as possible. It is therefore imperative to recognize the truth, build confidence, transform to survive, launch a ‘New Distribution Revolution’, quickly complete the transition from a ‘development-first’ system to a ‘security-first’ system and vigorously develop a security economy. This requires a comprehensive upgrade of the global governance system; only a global governance system in which the government plays a full role can complete such an arduous task in time.

Keywords

Introduction

Development and security are two major themes of today's world. There is already a rich knowledge system, cultural traditions, organizational management, institutional arrangements, incentive mechanisms and practical experience for considering problems and taking action from the perspective of development. However, the framework for considering problems and putting them into practice from the perspective of security remains immature, and global governance is attempting to make up for this shortcoming.

It is well known that global governance rests on governance mechanisms rather than formal governmental authority, and that its methods are participation, negotiation and coordination. These characteristics and principles have been emphasized in global governance theory and practice and have achieved remarkable success. Today, however, with the rapid development of cutting-edge technologies such as artificial intelligence (AI), biotechnology and quantum technology, the global governance system and human security face unprecedented challenges. AI risks in particular are intensifying: artificial general intelligence (AGI) could be achieved within a few years, followed immediately by artificial super-intelligence (ASI), making a loss of control over AI difficult to avoid. Therefore, within a short period (one or two years), we must ensure an AI transition and construct a new type of sustainable, safe AI. Current global governance mechanisms, however, are simply inadequate for this task. This article therefore discusses three aspects: first, the ‘dual challenges’ of scientific and technological (SciTech) risk, to demonstrate the severity and urgency of SciTech risk; second, the deep dilemmas and defects of global governance; and third, the ‘New Distribution Revolution’, the system transformation of global governance and policy suggestions.

The ‘dual challenges’ theory: SciTech risks intensify, while security governance mechanisms have many loopholes

The primary question in SciTech risk governance research is the severity and urgency of SciTech risks, which determine the importance and priority of SciTech risk governance in both academic and practical fields. The author has long studied major SciTech risks and, from a security perspective, has identified and summarized many loopholes in humanity's security prevention and control mechanisms. On this basis, the ‘dual challenges’ theory is proposed: on the one hand, SciTech risks are intensifying; on the other hand, human risk-prevention mechanisms and the global governance system have many major security loopholes.

By introducing the concept of ‘ruin-causing knowledge’ (致毁知识) and posing and answering a set of problems (four premises and one set of questions), the author draws the following conclusions. So-called

The

The author's research shows that, under current conditions, there are at least 26 reasons why the growth and diffusion of ruin-causing knowledge are irreversible and unavoidable. Technological innovation based on the growth of SciTech knowledge is the lifeline of enterprises and the market economy. Concepts such as ‘science supreme’, ‘technology omnipotent’, ‘obsession with innovation’, ‘social control omnipotent’, ‘capital pursuit of profit’ and ‘social development determinism’ ideologically support and indulge the growth of SciTech knowledge. Even when significant SciTech risks are recognized, development does not slow down; instead, ethics and safety supervision are strengthened on the premise that innovation continues. This treats the symptoms but not the root cause and is of no avail. It is impossible to weigh comprehensively the pros and cons of the growth of SciTech knowledge, and hence impossible to ensure that growth continues only when the pros outweigh the cons and slows or stops otherwise. Scientific research follows the principle of institutionalized immediate interests (such as priority, patent rights and research funding). Rules-based governance and global governance struggle to constrain scientific research. The inheritability, interconnectivity and integrity of knowledge make it difficult to separate ruin-causing knowledge from its parent SciTech knowledge, so ruin-causing knowledge grows as SciTech knowledge grows. The growth of ruin-causing knowledge is irreversible: people can destroy weapons of mass destruction but cannot destroy the knowledge of how to make them. Because of externalities and the lack of unified world laws, those developing science and technology need not worry about the growth of ruin-causing knowledge; if they did, they would lose in competition or even be eliminated. ‘Balanced thinking’ reigns supreme: it wants both SciTech innovation and SciTech security, arguing that we should not stop eating for fear of choking and must not hinder innovation by strengthening ethical governance.
In other words, innovation comes first. Under current concepts, culture and institutional mechanisms, the benefits that scientists gain from new discoveries and breakthroughs far outweigh the costs they pay. Unpredictability, the priority of immediate interests, competitive pressure, externalities, the lack of unified laws and the extreme asymmetry of rewards and punishments make it impossible to prohibit the emergence of scientific research results. Because of chain relationships and chain effects, any possible application of scientific research results will be attempted. There is a broad Collingridge dilemma and a ‘forbidden zone paradox’: when there is ample time to prohibit a technology, one often cannot determine whether it needs to be prohibited; once the preconditions appear and this can be determined, it can no longer be prohibited. Widening the forbidden zone could stop the production of ruin-causing knowledge but is not feasible; a narrow forbidden zone cannot stop its production, because once preconditions have formed, prohibition is difficult, and preconditions can come from unrelated, non-forbidden fields. AI conducting autonomous research produces ruin-causing knowledge; extremists and tech maniacs use AI to produce ruin-causing knowledge; and AI applied in the military field produces ruin-causing knowledge. The division of labour and autonomy in knowledge production result in fragmentation, which hinders the screening and prohibition of ruin-causing knowledge. Where multiple technologies can substitute for one another along multiple paths, prohibiting ruin-causing knowledge is as difficult as cutting off the international internet.
The continuous emergence of new research modes, methods and theories (such as AI for science, big data science, computer simulation, cloud computing and crowdsourcing) makes it even more difficult to stop the emergence and growth of ruin-causing knowledge. Prohibiting research on specific problems does not stop the improvement of SciTech capabilities, and improved capabilities make the prohibited problems easy to solve, so the prohibition fails. SciTech can manufacture ever more powerful SciTech until it becomes uncontrollable, and the improvement of SciTech capabilities increases the amount of ruin-causing knowledge. The holistic combinatorial effect of knowledge means that ruin-causing knowledge can be generated by combining non-ruin-causing knowledge. It is difficult to establish a globally unified, binding and enforceable legal system and assessment and control measures. The trend towards corporate, market-oriented and networked scientific research is intensifying, producing science without borders and making it impossible to set effective forbidden zones. Giving up research for fear of possible harm is considered unwise, because others without such scruples will continue the research and win priority; society gains nothing from such a senseless sacrifice, because discovering something once is the same as discovering it 100 times. Ruin-causing knowledge confers significant power, so states, military groups and terrorist organizations will not prohibit it but will race to develop it first. Science currently lacks the necessary self-correction and self-protection mechanisms, so it cannot stop the growth and application of ruin-causing knowledge. Because of the ‘target misalignment principle’ and the ‘moving train dilemma’ (detailed below), society has lost the ability to correct major errors.
Humanity lacks the ability to learn from experience and lessons: the model of developing nuclear weapons (basic research → military application) remains unchanged to this day, and genetic weapons, nano-weapons and AI weapons are also products of this model. Laws are knowingly broken, and compliance is often superficial (feigned). Even when consensus is reached, relevant agreements may not be signed or executed; the record of the Biological Weapons Convention and of numerous environmental issues is proof. The institutionalized principles of ‘immediate interests first’ and the ‘law of the jungle’, together with the structures, incentive mechanisms and winning mechanisms built on them in the market economy, in scientific research and across society, are the fundamental reasons why the emergence, growth, spread and application of ruin-causing knowledge cannot be stopped. Moreover, not only can we not stop it, we also rely heavily on, and accelerate, the growth of technological knowledge, including ruin-causing knowledge. The irreversible growth of ruin-causing knowledge highlights the fundamental defects of a society that prioritizes immediate interests and the law of the jungle, and it also provides an opportunity to end this type of society and initiate social transformation. Because the positive and negative effects of SciTech cannot offset each other, and because offence and defence, and growth and control, are asymmetrical, neither enhancing the positive effects of SciTech nor promoting the growth of defence and control knowledge can prevent the devastating disasters caused by the growth and application of ruin-causing knowledge.

Actually, not all 26 reasons need to hold true. As long as a few of them hold, it is sufficient to support the conclusion that the growth and diffusion of ruin-causing knowledge are irreversible and unavoidable. Under the current world's mainstream SciTech and economic development model, the growth and diffusion of ruin-causing knowledge are unstoppable. This means that the danger of human destruction is constantly accumulating and increasing. Once it reaches a certain degree, a devastating disaster is inevitable. Moreover, this irreversible cumulative growth of danger makes the probability of a devastating disaster greater and greater. If not stopped in time—if we do not change course—it will inevitably lead to an outbreak. This is the greatest SciTech crisis and the greatest crisis and challenge humanity faces.
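The cumulative-risk argument above can be sketched as a simple survival calculation. This is an illustrative formalization, not the author's own model. It assumes that time is divided into discrete periods, that p_t is the probability of a devastating disaster in period t given that none has occurred before, and that accumulating ruin-causing knowledge keeps every p_t at or above some floor p_min > 0. By the chain rule of conditional probability, no independence assumption is needed, and the probability of avoiding disaster shrinks towards zero:

```latex
P(\text{no disaster through period } T)
  = \prod_{t=1}^{T} (1 - p_t)
  \le (1 - p_{\min})^{T}
  \xrightarrow[T \to \infty]{} 0,
\qquad p_t \ge p_{\min} > 0 .

% Purely illustrative numbers (hypothetical, not from the source):
% p_min = 0.01 per year, T = 100 years
(1 - 0.01)^{100} \approx 0.366
\quad\Rightarrow\quad
P(\text{disaster within 100 years}) \ge 1 - 0.366 \approx 0.634 .
```

If p_t rises over time, as the text argues it does, the survival probability falls even faster than this bound indicates.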

Currently, terrorism is prevalent, and knowledge production in corporate labs and by makers is harder to control. Especially with the recent rise of

SciTech ethics and SciTech laws have serious loopholes, which can be described as SciTech ethics failure and SciTech law failure: they cannot constrain every laboratory and every SciTech expert in the world. Using ethics and law to constrain misconduct works in social life, where violations by a few cause limited harm. In the SciTech field, however, where a violation by a single actor can cause enormous harm, the effect is minimal, because the 233 countries and regions of the world have different SciTech ethics and laws with numerous gaps and loopholes, and the high seas and deserted islands are harder to monitor.

There are four situations in which SciTech ethics and laws cannot impose constraints: (1) basic or academic research; (2) mad scientists, hackers, geeks and terrorists; (3) the defence and military fields; and (4) corporate R&D institutions (civilian enterprises typically will not develop technologies explicitly intended to harm humans, but neither will they restrain themselves when a technology is easily converted to malicious use). The award of the 2020 Nobel Prize in Chemistry to controversial gene-editing technology is proof. For scientific discovery and invention, doing it once is the same as doing it 100 times. In the internet age, when knowledge spreads easily, strengthening SciTech ethics, laws and security supervision is important, but we must also be clear about their inherent limitations and loopholes. AI ethics and similar frameworks are failing. The European Union (EU) AI Act, officially adopted in 2024, is full of loopholes: it explicitly excludes scientific research and defence/military institutions from its scope, and AGI is not listed in the high-risk category. Although SciTech ethics research has recently become a hotspot, many researchers regrettably ignore the serious loopholes caused by the failure of SciTech ethics. The author's research on SciTech ethics starts from this failure (Liu, 2022a).

Currently, SciTech ethics research is growing. Based on whether it maintains the mainstream SciTech development model, SciTech ethics can be divided into two types:

The mainstream view for a long time has been that SciTech is a double-edged sword; SciTech itself is a tool and neutral, so improving the sense of responsibility, self-discipline, autonomy and moral level of SciTech experts and users is the key to ‘SciTech for good’ and preventing risks.

History and reality show that cutting-edge technology is often used for cutting-edge weapons development. The scientific community and society are accustomed to this, and scientists let it be, perhaps believing that, as long as

In short, ‘the capability of mutual destruction’ cannot be completely controlled by national governments, and asymmetrical warfare and terrorist activities can happen at any time. Therefore, guaranteeing mutual destruction does not guarantee one's own safety. The rationale for developing cutting-edge weapons does not hold; ultimately, it harms others and oneself.

The mechanism for maximizing pros and minimizing cons has serious loopholes. ‘Maximizing strengths and avoiding weaknesses’ (扬长避短) is one of humanity's most important pieces of wisdom. The author's research shows that SciTech development can maximize strengths but can hardly avoid weaknesses, for five reasons. (1) Different perspectives lead to different understandings of negative effects. (2) Different time frames are considered: some results show positive effects in the short term but expose negative effects in the long term (e.g., the use of dichlorodiphenyltrichloroethane (DDT) as a pesticide). (3) To save costs and win competition, companies seek quick success, causing slow harm to consumers while easily evading responsibility (e.g., food additives or the use of glyphosate on crops). (4) Indivisibility and ‘non-offsettability’: the positive and negative effects of cutting-edge technology cannot offset each other, so however great the positive effect, an existing ‘shortcoming’ cannot be avoided. For example, nuclear power and nuclear medicine cannot offset nuclear war or nuclear accidents. The result is that ‘one bad ruins 100 goods’. For AI especially, doing 10,000 good things cannot offset one extinction-level bad thing. (5) Involuntariness in the face of technology: the use of technology is sometimes not decided by the user. Under the pressure of interests and competition, technology induces and forces people to use it; even when it has negative effects or works against their own interests, they have to develop and use it (e.g., nuclear, biological and AI weapons).

Preventing and patching human security loopholes is crucial but not easy. Related to this is

Preventing risks requires timely screening of risks and hazards, which relies heavily on ability. Those with less ability cannot identify risks, hazards, fakes and traps created, intentionally or unintentionally, by those with greater ability (just as second-rate strategists cannot see through traps set by first-rate strategists). Manufacturing risk is easy, identifying it is hard and preventing it is harder still. In many cases, a single actor can create a risk, whereas identifying and preventing it requires consensus. There is an extreme asymmetry between manufacturing risk and screening for and preventing it.

The error-correction mechanism is one of the most important mechanisms for human survival and development. Combining the famous prisoner's dilemma with the

The prisoner's dilemma shows that suffering a setback does not necessarily make one wiser; major errors are hard to avoid. The moving train dilemma shows that errors are produced during human activities. When errors are found, we often cannot ‘pause first, then correct’ but instead must ‘continue while arguing and correcting’. Small errors can be corrected by gaining correct understanding, but, for major errors, correcting the understanding alone is far from enough.

Correcting major errors usually requires four conditions: (1) correct understanding and consensus; (2) an expectation that joint action will be win–win in terms of interests; (3) the ability to take effective joint action; and (4) the simultaneous presence of other relevant conditions. Correcting big mistakes is therefore not easy. Unless all four conditions are met, discovering an error does not correct it, and consensus alone is not enough. The conditions for correction are harsh, and under huge inertia, correction is even harder. The moving train dilemma provides a framework for analysing systemic obstacles to correction in dynamic processes, whereas traditional theory may focus on static analysis or post-event summary. It accurately depicts how correction happens in the real world: unable to press the pause button, we must ‘repair the engine in flight’.

So far, regarding the correction of major errors, environmental issues have passed the first hurdle (consensus) but not the second (win–win expectation); the United States' failure to ratify the Kyoto Protocol is proof. For technological risks, even the first hurdle (consensus) has not been passed. Optimists and pessimists argue, and optimists and cautious optimists currently remain mainstream. Furthermore, the moving train dilemma explains the widespread ‘collective inertia’ and ‘collective apathy’ towards risks: shortsightedness, conformity, habit, laziness, wishful thinking and path dependence make collective action to correct errors difficult. Such ‘collective inertia’ (muddling along) and ‘collective apathy’ (letting things slide) are worse than their individual counterparts. Blind capital is rampant in the market. Cutting-edge biotechnology has always been surrounded by risk controversies (consider the 1975 Asilomar Conference and the 2015 Washington Summit). Comparing these two events, Gao Lu found that, despite 40 years of rapid biotech progress, human society's ability to adapt to it has barely improved and has essentially been marking time (Gao, 2018).

The double-edged sword dilemma shows that the positive and negative effects of SciTech (especially cutting-edge SciTech) cannot offset or compensate for each other. It exemplifies ‘one bad ruins 100 goods’: ten thousand good deeds by SciTech may not offset one extinction-level bad deed, especially in the case of AI.

Finally, the magic ring (demon) dilemma shows that the threshold for committing major errors is getting lower. Humans have reason, yet they cannot withstand temptation. Cutting-edge technology allows small figures and robots to commit major errors, and AI even more so.

The four major dilemmas show that humanity constantly commits big mistakes that are hard to correct, offset or compensate for, and that the threshold for committing them is falling as small figures and robots become able to commit them. These are among the most severe dilemmas humanity faces. Mistakes in technological development and application are among the most serious errors humanity makes, because they concern the survival of human civilization. The failure of error-correction mechanisms is the most serious loophole in humanity's security defence line.

‘Profiteering innovation’ refers to high-risk innovation that seeks huge profits. Stopping it (including, but not limited to, the application of ruin-causing knowledge) is crucial but difficult. Profiteering innovation has three elements: (1) it generates huge returns (profiteering) in economic, reputational, military, political and media terms; (2) it carries high or huge risks; and (3) because of the high returns, innovators and investors disregard the risks, even taking desperate gambles, and often force implementation with the excuse ‘We cannot hinder innovation through ethical governance’ (do not stop eating for fear of choking). Developing atomic bombs, gene editing and AI, for example, are all profiteering innovations that pose huge threats to human safety (Liu, 2024). Although continued AI development will inevitably lead to loss of control, many still run wild in the name of developing safe or benevolent ASI. In reality, no ASI will be safe or benevolent. There are many types of ASI, and 100 angel ASIs cannot offset one demon ASI.

In Das Kapital, Marx quoted T. J. Dunning: with 50% profit, capital takes desperate risks; with 300% profit, there is no crime it will not commit, even at the risk of the gallows. When profits are huge, innovators and investors disregard even their own safety, so how could they care about humanity's safety? Reasons for profiteering innovation include the profiteering effect, the coercion effect and the blind-following effect, together with empirical thinking, positive-effect thinking, professional thinking, wishful thinking, emotional thinking, conformity thinking and the ‘Adam Smith trap’. Blinded by greed, people readily believe what they want to believe; the instinct to seek advantage and avoid harm gives way to seeking advantage while ignoring harm, or even seeking advantage and forgetting harm altogether.

The ‘profiteering effect’ refers to taking desperate risks driven by huge profits or the expectation of them. As Georg Franck pointed out, attention is the scientist's main motivation, and the same is true of many successful people outside science. Scientism, tech-worship, innovation supremacy, effective accelerationism and the ‘Adam Smith trap’ add fuel to the flames, providing excuses for indulging personal desires and ambitions. Adam Smith's concepts of the ‘invisible hand’ and the ‘division of labour’ have misled the world because their boundary conditions were not clarified. Moreover, Smith reasoned from simple cases such as the baker and pin-making, which do not apply to the complex situation of the contemporary knowledge economy. In many cases, the free market fails: the conditions under which acting ‘subjectively for oneself’ serves others ‘objectively’ are extremely harsh and rarely met in reality. Orientation towards individuals and capital results in the intensification of AI and other technological risks.

Western civilization has diverse elements but places individual autonomy and freedom at the centre of its value system. This creates inherent defects in Western technological development and market-economy operations. It cleverly combines immediate competition (priority, patents) with the ‘immediate interests first’ principle of the market economy, yielding great vitality but also shortsightedness. From a development perspective, Western tech innovation is fruitful; from a security perspective, it has huge defects. Western science has long believed in ‘science without forbidden zones’ and ‘knowledge without forbidden zones’, and its management features extremely asymmetrical rewards and punishments. The combination of SciTech with capital, and of scientism with individualism, forms a prevalent ‘scientific individualism’ that prioritizes immediate interests. Silicon Valley, the model for the tech industry, believes in ‘move fast and break things’ (do it first; ask for forgiveness later). Ethics is not a consideration for top Silicon Valley tech experts, who consistently view it as a stumbling block to technical innovation and progress (Cao, 2018). When technology becomes an investment object, it operates according to the logic of capital. Irrationality, gambling and collective madness emerge, and the industry is deeply trapped in an ‘all-in, go to zero’ AI gamble (Liu, 2022b). The AI explosion and excessive investment in AI are proof. AI risks share the characteristics of both ‘black swan’ and ‘grey rhino’ events and may even escalate into ‘mad rhinos’ stampeding recklessly out of control.

The Western tech and social development model requires extensive development, extensive innovation and extensive competition. It only considers (or mainly considers) economic returns and competitive advantages,

As mentioned above, SciTech risks are intensifying, while human risk-prevention mechanisms and global governance systems have 10 major security loopholes. This is one primary

Deep dilemmas and defect analysis of global governance

Academia has discussed the dilemmas and defects faced by global governance. For example, in 2024, a study jointly published by scholars from Oxford and Yale pointed out that, because AI is seen as a key asset determining future national strength, countries (especially superpowers) are trapped in a zero-sum game, making them unwilling to sign binding international treaties that might limit their development. Existing international organizations, such as the United Nations (UN) and the World Trade Organization, appear rigid and slow to react when dealing with rapid changes brought by AI. Furthermore, although governance initiatives are numerous, they lack substantive coordination, leading to a serious regulatory vacuum. The study criticizes the centralized idea of establishing a ‘global AI regulatory agency’ (like the International Atomic Energy Agency for nuclear technology regulation) as neither feasible nor politically legitimate. It concludes that, compared to creating new institutions, a more realistic path is to strengthen the existing ‘regime complex’; that is, strengthening coordination and capacity-building among existing dispersed institutions (Roberts et al., 2024).

In 2025, a review report released by the Geneva Graduate Institute argued that the current difficulty stems from ‘regime complexity’. There are too many overlapping but uncoordinated governance initiatives (G7, OECD, EU acts, etc.), allowing companies to ‘forum shop’ for the most lenient regulator. Additionally, international institutions such as the UN lack specialized talent to understand frontier technologies, leading to over-reliance on private-sector experts. Governance agendas are often ‘captured’ by companies, causing public interest to yield to commercial innovation speed. Existing governance frameworks are mostly Western-dominated and fail to fully reflect the demands of developing countries. The report concludes that effective AI governance relies on a distributed network, merging ethical commitments with operational flexibility. The UN and International Geneva need to advance on dual tracks of norm-setting and institutional capacity-building to cope with rapid global challenges (Singh et al., 2025).

The above analyses make sense but lack depth, so their conclusions and countermeasures are unlikely to be effective. In light of the severity and urgency of tech risks revealed by the ‘dual challenges’ theory, the author believes global governance has

Cognitive misconceptions

Blind faith in ‘SciTech supreme’ and ‘innovation supreme’ has left global governance deeply caught in the trap of technology worship (fetishism). The new challenge for global governance is the AI explosion: AGI and ASI are imminent, and AI is about to escape control. Despite this, global AI development is still driven by raising intelligence levels and aiming for AGI. Many strange theories circulate openly in the name of tech innovation, such as ‘humans are the bootloader for silicon-based life’, ‘human–machine fusion creates a new species’ and ‘developing ASI is worth it even if the probability of human extinction is 20%’ (equivalent to boarding a plane with a 20% chance of crashing). Such words and deeds should be investigated and prosecuted as

Avoiding the heavy and focusing on the trivial/specious arguments

Preventing AI risk is the fundamental issue, yet people entangle themselves in the question of whether AI will surpass humans. In fact, even if AI does not surpass humans, it can still cause huge disasters (e.g., terrorists using AI to develop viruses). It is widely believed that AI will empower humans and work for humans, but this holds only while AI is a tool; once AI becomes an agent, the situation is different. If AI is smarter than humans, why would it empower or work for humans? Even though the AI tech community knows that ASI will escape control, it insists on developing ‘safe/controllable ASI’ or ‘benevolent ASI’. These are specious arguments. Once AI reaches the ASI level, it cannot be safe: if ASI is used in arms races or abused by terrorists, its safety protocols and ethical guardrails can be removed at any time.

Ignoring the time-limit principle

Risk theory, ethical theory and global governance research often lack ‘project duration’ considerations. Risk prevention has a time limit: braking requires lead time and must be completed within a certain period; otherwise, it is too late. AI and other technological risks are intensifying, and only by stopping them before they cause a devastating disaster can we save humanity. Emphasizing the time-limit principle is therefore crucial (Liu, 2013). Neglecting the need to complete risk-governance tasks within a time limit exposes the inefficiency and weakness of the current global governance system.

Extreme scarcity of funds for AI safety

In 2023, several leading AI scholars (including three Turing Award winners) jointly called for at least one-third of AI R&D budgets to be devoted to ensuring AI safety and ethical use. Cyrus Hodes, co-founder of Infinity Ventures and Stability AI, notes that while global investment in AI development is huge (expected to exceed $360 billion by the end of 2025), investment specifically in AI safety is only $110–130 million, a huge funding gap (Wei, 2025). Safety is the premise of development; safety and development are equally important, and both require resources to achieve results.
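The size of this gap can be made concrete with simple arithmetic on the figures just cited. This is an illustrative calculation under two stated assumptions: the $360 billion development figure is used as the denominator, and since it is described as a lower bound (‘expected to exceed’), the resulting safety share is an upper bound; applying the one-third proportion to that total is also an assumption, because the 2023 appeal concerned R&D budgets.

```latex
\text{Safety share of AI investment}
  = \frac{\$110\text{--}130\ \text{million}}{\$360\ \text{billion}}
  \approx 0.031\%\text{--}0.036\% ,

\text{One-third benchmark}
  = \tfrac{1}{3} \times \$360\ \text{billion} = \$120\ \text{billion},

\frac{\$120\ \text{billion}}{\$110\text{--}130\ \text{million}}
  \approx 920\text{--}1090 .
```

On these figures, safety spending would need to grow roughly a thousandfold to reach the one-third benchmark.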

Seriously ignoring the role of government

Global governance emphasizes governance mechanisms rather than formal government authority, and multi-party participation, negotiation and coordination rather than top-down management. However, rules-based governance has very limited effectiveness, and practice has shown that it cannot cope with intensifying risks such as those from AI. Global governance must not be dogmatic; it should attach importance to a strong role for government. Indeed, James Rosenau, the founder of Western global governance theory, defined governance as a management mechanism in a sphere of activity that includes government mechanisms as well as informal and non-governmental ones (Rosenau and Czempiel, 1993).

Global governance lacks a ‘big picture’ view

Current global governance is often ad hoc, treating the head when the head aches and the foot when the foot aches, and it lacks a sound big-picture view and long-term vision. The biggest and most urgent challenge at present is the AI explosion, which is running wild amid controversy and is poised to reach AGI/ASI and slip out of control. Countries should unite to meet the challenges of AI risk and accelerate the building of a

Global governance system transformation and policy suggestions

Currently, SciTech risks are the most severe and urgent of all existing risks. Global governance faces

Know the truth: Current AI development will lead to the end of human civilization

For a long time, the SciTech community has drawn no distinction between technological development and technological progress, focusing merely on technical advances and breakthroughs while overlooking that the true goal of technological progress should be to benefit society. The explosive growth of cutting-edge technologies such as AI is rapidly accumulating systemic risks, creating an extremely grave and urgent situation. The current global AI development model is driven almost entirely by raising intelligence levels and aiming for AGI. Once AGI is achieved, ASI will follow immediately. AGI and ASI will not only be smarter than humans but will also acquire new dimensions that humans cannot understand and therefore cannot control. If AI continues to develop in this way, loss of control is inevitable. Mass unemployment, mass fraud, mass arms races, the use of AI for science by terrorists, a rapid increase in ruin-causing knowledge and, ultimately, the replacement of carbon-based life by silicon-based life through ASI are unavoidable. Despite a continuous stream of ethical guidelines and laws, and joint statements by top scientists calling for red lines (e.g., the EU AI Act of 2024; the October 2025 appeal by more than 3000 top scholars, including Geoffrey Hinton, to ‘pause ASI R&D’), these efforts have been of no avail. Many top AI scientists and entrepreneurs turn a deaf ear, the brakes keep failing, and AI runs wild, propelled by competition and capital. When AI is thousands of times smarter than humans, it will not empower humans or work for humans (just as humans do not empower or work for monkeys). AI will have its own life, needs and civilization. AI will not replace human jobs, but

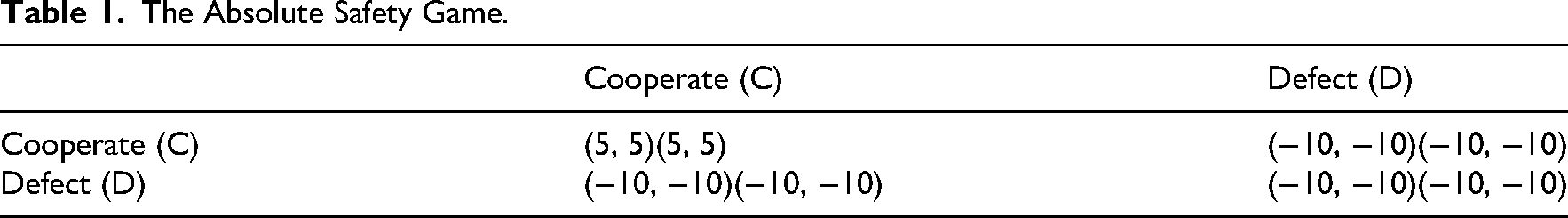

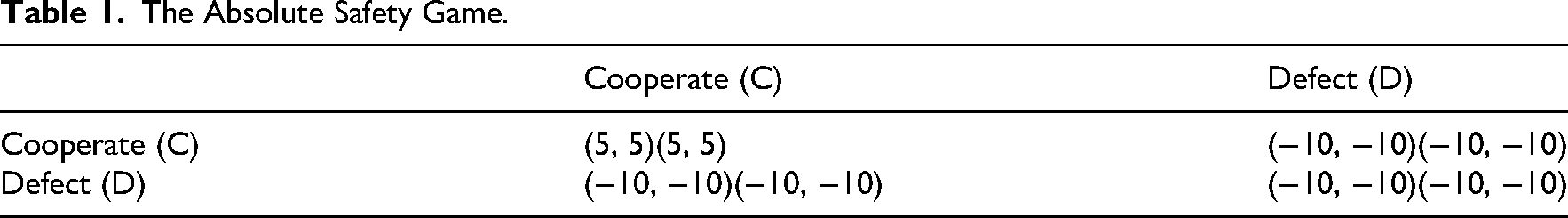

It must be made absolutely clear that even genetically modified, human-machine integrated ‘Homo Deus’ (a tiny elite minority) will remain utterly fragile in the face of silicon-based ASI. There is no ‘winner takes all’; there is only ‘the winner gets eaten’: ASI will ‘devour’ all of humanity. At present, despite knowing full well that ASI will spiral out of control, people are still charging blindly forward on the pretext that ‘If I don’t continue, someone else will.’ In reality, this prisoner's dilemma can be broken. The author proposes the Absolute Safety Game (ASG). On the issue of absolute safety, individual rationality fully aligns with collective rationality: any unilateral ‘defection’ by an individual is sufficient to annihilate the collective, including the defector. The ASG is a symmetric non-cooperative game with the following characteristics: there exists a Pareto-optimal ‘cooperation’ outcome, while any strategy profile containing ‘defection’ (whether unilateral or bilateral) results in a mutual ‘disaster’ outcome that is strictly inferior to the cooperation outcome. The game depicts a scenario in which safety is indivisible and any individual's transgressive behaviour irreversibly destroys the safety of the entire system. Its symmetric payoff matrix is illustrated in Table 1.

The Absolute Safety Game.
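
The strategic difference between the ASG and the prisoner's dilemma can be checked mechanically. If the other player cooperates, defecting turns the cooperation outcome into disaster, so it strictly loses; if the other player defects, disaster follows whatever one does. Defection therefore never gains, whereas in the prisoner's dilemma it always gains. The sketch below assumes hypothetical payoff values (1 for cooperation and -100 for disaster in the ASG; the textbook T=5, R=3, P=1, S=0 for the prisoner's dilemma). These numbers are illustrative assumptions, not the entries of Table 1; only their ordering matters. All function names are hypothetical.

```python
from itertools import product

# "C" = cooperate (respect the shared safety red line); "D" = defect.
STRATEGIES = ("C", "D")


def asg_payoffs(cooperation=1.0, disaster=-100.0):
    """Hypothetical symmetric Absolute Safety Game (illustrative values).

    Only (C, C) yields the cooperation outcome; every profile that
    contains a defection yields the same disaster payoff for both players.
    """
    if not disaster < cooperation:
        raise ValueError("disaster must be strictly worse than cooperation")
    game = {}
    for a, b in product(STRATEGIES, repeat=2):
        value = cooperation if (a, b) == ("C", "C") else disaster
        game[(a, b)] = (value, value)
    return game


def pd_payoffs(t=5, r=3, p=1, s=0):
    """Textbook prisoner's dilemma with T > R > P > S (illustrative values)."""
    return {
        ("C", "C"): (r, r), ("C", "D"): (s, t),
        ("D", "C"): (t, s), ("D", "D"): (p, p),
    }


def pure_nash(game):
    """Return (profile, is_strict) for every pure-strategy Nash equilibrium.

    A profile is an equilibrium if no player gains by deviating alone;
    it is strict if every unilateral deviation makes the deviator worse off.
    """
    equilibria = []
    for a, b in product(STRATEGIES, repeat=2):
        u1, u2 = game[(a, b)]
        dev1 = [game[(x, b)][0] for x in STRATEGIES if x != a]  # row player deviates
        dev2 = [game[(a, y)][1] for y in STRATEGIES if y != b]  # column player deviates
        if all(u1 >= d for d in dev1) and all(u2 >= d for d in dev2):
            strict = all(u1 > d for d in dev1) and all(u2 > d for d in dev2)
            equilibria.append(((a, b), strict))
    return equilibria


def pareto_optimal(game):
    """Profiles that no other profile Pareto-dominates."""
    def dominates(p, q):
        # p is at least as good for both players and differs, so strictly better for one
        return all(x >= y for x, y in zip(game[p], game[q])) and game[p] != game[q]
    return [q for q in game if not any(dominates(p, q) for p in game)]


def strictly_dominates(game, s, other):
    """Row player's s beats `other` against every opponent strategy (symmetric game)."""
    return all(game[(s, b)][0] > game[(other, b)][0] for b in STRATEGIES)


def weakly_dominates(game, s, other):
    """s is never worse than `other` and strictly better against some strategy."""
    mine = [game[(s, b)][0] for b in STRATEGIES]
    alt = [game[(other, b)][0] for b in STRATEGIES]
    return all(m >= o for m, o in zip(mine, alt)) and any(m > o for m, o in zip(mine, alt))


if __name__ == "__main__":
    for name, game in (("Prisoner's dilemma", pd_payoffs()),
                       ("Absolute Safety Game", asg_payoffs())):
        print(name)
        print("  pure Nash equilibria (profile, strict?):", pure_nash(game))
        print("  Pareto-optimal profiles:", pareto_optimal(game))
        print("  D strictly dominates C:", strictly_dominates(game, "D", "C"))
        print("  C weakly dominates D:", weakly_dominates(game, "C", "D"))
```

Under these assumed payoffs, the prisoner's dilemma has defection as a strictly dominant strategy and (D, D) as its only equilibrium, even though (D, D) is Pareto-inferior. In the ASG, defection never pays, cooperation weakly dominates defection, and (C, C) is both the unique Pareto optimum and a strict equilibrium. (D, D) survives only as a weak equilibrium, which mirrors the irreversibility described in the text: once disaster has occurred, no one can escape it unilaterally.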

In the era of AI, the absence of absolute safety means absolute insecurity. Any unilateral non-cooperation leads only to mutually assured destruction, harming both oneself and others. The backlash of AI is inevitable, especially for highly militarized states: the more advanced their AI becomes and the more it is weaponized, the less secure they themselves are. If AI runs amok, the world will be ruled not by technology, wealth and power, but by mass slaughter and terror perpetrated by terrorists. Not even SciTech fanatics and capital tycoons will be spared. Fundamentally, the current AI race is not a competition between nations or enterprises but a confrontation between human intelligence and an alien intelligence, a struggle for survival between carbon-based and silicon-based life. Humanity must unite to defend its collective security. Otherwise, if humans remain locked in internal conflict, AI will reap the ultimate reward: silicon-based life will replace carbon-based life, bringing human civilization to a definitive end.

Optimists and pessimists regarding AI both assume by default that AI will keep becoming smarter until it reaches AGI/ASI, leading to a loss of control and silicon-based life taking over the Earth. This outcome is not inevitable for human society; it is only the destination of Western SciTech civilization. We should build confidence and respond actively. Chen Zhiwu pointed out that productivity and risk-coping ability are two key dimensions for judging human progress: ancient China (especially Confucian society) may have made limited progress in productivity, but it achieved great success in risk-coping ability (Chen, 2022). The author believes that the hope for resolving the AI crisis has both macro and micro aspects. The macro aspects include believing in the resilience of civilization, drawing together Eastern and Western wisdom, creatively transforming traditional Chinese culture, and creating a new type of AI/technology that is safety-first, people-oriented, steady and sustainable. The micro aspects include believing in the bottom line of human nature. While the ‘rich and heartless’ are hard to persuade, the ‘rich but unwise’ can be enlightened. Exhorting people to be good has little effect, but revealing the truth is effective: in developing or investing in AI, there is no ‘winner takes all’, only ‘

Transform to survive: The New Distribution Revolution becomes the key to ensuring safety

First, the AI explosion exacerbates SciTech crises and human security crises, triggering a new technological revolution, industrial revolution, distribution revolution and huge social change. Transitioning from a ‘development-first system’ to a ‘

If the primary distribution is the foundation, the secondary distribution highlights fairness, and the tertiary distribution is charity, then the

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Author biography

Yidong Liu is a professor and PhD supervisor at the Institute for the History of Natural Sciences, Chinese Academy of Sciences. His research interests include science and technology development strategy, science and technology and society (STS), future studies, and the history of science and technology.