Abstract

Although the measurement of debate quality is not a new endeavour, this paper raises two research questions on which we still have limited knowledge: What are important and reliable indicators of debate quality on social media? How does debate quality relate to individual factors on social media? First, we empirically analysed how two well-established discourse quality indices (the DQI and CC index) correlate with each other using a random sample of 1000 tweets selected from the full history of tweets written by Swiss elected politicians between 2011 and 2021. While the sample was automatically coded for CC using LIWC, we manually annotated the tweets according to an adapted version of the DQI for social media texts. Second, we conducted a correspondence analysis to investigate the relations between these dimensions, additional debate quality features, as well as individual political factors. Results show a good correlation between both indices (r up to 0.46), while also highlighting their respective weaknesses. Furthermore, the results highlight the necessity to include alternative dimensions of debate quality (such as emotion and inclusive or exclusive views) to enhance future measurements of debate quality in the realm of social media.

Introduction

This study investigates the quality of political debate on social media and offers a comparative perspective on existing methodologies to capture the deliberative quality of debate. Although developing measures of debate quality is not a new endeavour, more descriptive knowledge is necessary for understanding the congruence (or complementarity) of these different measures and for improving the generalisation of these measures outside the framework of official parliamentary debate. More specifically, this study addresses two research questions. First, it aims to assess what are the important and reliable dimensions of debate quality on social media. Second, it investigates how individual factors relate to indicators of debate quality in political social media messages.

Consensus-building in decision-making is essential, and it can only be accomplished by gaining support for policy proposals that are made known through the expression of various perspectives and opinions. In this regard, the goals of discourse (or conversation), dialogue, debate and deliberation vary. For instance, discourse suggests that there is at least an exchange of information, which does not necessarily aim to reach a solution or consensus. In contrast, the focus of a dialogue lies in reaching a mutual understanding of a given issue. Debate suggests that different ideas are expressed with the aim of convincing another person, thus making potential disagreements salient. Deliberation represents the process in which arguments are exchanged with the aim of reaching an agreement about what procedures or policies will best foster the public good (Manosevitch and Walker, 2009). In this paper, the focus is on the quality of the political debate conducted by elected politicians on Twitter, and the debate is considered in the context of the concept of deliberative democracy and the theory of communicative action (Habermas, 1996).

Accounting for the quality of political debate is important as democracies are highly dependent on the exchange of arguments. From the perspective of elected politicians, this is considered a necessary condition for the introduction of legitimate policies (Chambers, 2003). It is assumed that a higher discourse quality will produce better informed decisions (Fishkin, 1995) and more legitimate decisions (Cohen, 1989). As such, debate quality is considered an essential component of deliberative democracy (Tschentscher et al., 2010). The Internet in general, and social media in particular, complement traditional forms of political participation (Coleman and Shane, 2012), notably by providing important spaces for public deliberation (Esau et al., 2017). For instance, research indicates that people’s access to social media can have a positive effect on political efficacy and participation in politics (Gil de Zúñiga et al., 2010). An increase in the scholarly interest in the conception and theorisation of the public sphere has coincided with the usage of the Internet in general, and of social media in particular, for political discussion (Bruns and Highfield, 2015). However, there are a number of factors that have been brought up as potential threats to online discourse, including ideological division, hate speech and misinformation (Tucker et al., 2018).

Unlike in research on parliamentary debate, it remains unclear which dimensions are important when accounting for the quality of online debate. However, in today’s communication environment, both parliamentary and online debate are indispensable channels of communication for spreading political messages. Against this background, it is important to gain knowledge about which measures of quality in political debate on social media are reliable (Dobbrick et al., 2022). To date, changes in debate quality have essentially been investigated within offline political communication arenas, although there are a few exceptions (e.g. Brundidge et al., 2014; Suiter et al., 2022). As journalists and news media are increasingly reporting on politicians’ social media messages (McGregor, 2019), the quality of the political content disseminated on social media has serious implications for democratic discourse and citizens’ opinion formation.

The purpose of this article is to provide detailed illustrations of the validity of two measures of debate quality (the DQI and the CC index) on a sample of political social media messages. We seek to establish which dimensions of debate quality are important and reliable for online political discourse by examining the internal consistency of the different indices capturing debate quality, as well as by comparing these indices to investigate their congruence (or complementarity). We further investigate which individual factors related to dimensions of debate quality prevail in politicians’ social media discussions. Our study thus complements existing research about how to automatise the detection of important dimensions of debate quality (e.g. Dobbrick et al., 2022; Fournier-Tombs and MacKenzie, 2021).

Study background

Effective deliberation, democracy and communication arenas

In the last 30 years, deliberative theories and the analysis of deliberative quality in political communication have become popular within political theory. Habermas’ (1996) vision of the ‘deliberative public sphere’ laid down, prior to the development of the Internet, the framework for considerations about how the online sphere can promote democracy. Deliberative communication in particular, and communicative involvement in general, are needed to strengthen democracy, going beyond economic (or liberal) and voting-centric conceptions of democracy (Chambers, 2003, 2009). Less clear, however, are the sufficient conditions for defining deliberation (Beauchamp, 2019). There is, indeed, little consensus about which core aspects of deliberation should be empirically measured (for instance, see the dozens of criteria summarised by Friess and Eilders, 2015).

From the perspective of its process (which must be differentiated from its conditions and outcomes), deliberation is often defined as a rational, interactive and respectful form of communication (Bächtiger and Pedrini, 2010). Efficient deliberation suggests that discussions providing reasoned arguments, and reflection upon them, can have beneficial effects on citizenship (Dryzek, 2000) and can enhance interest in political discussions (Kim et al., 1999). Therefore, accounting for the level of argumentation is important to judge the quality of political discussions, as it prepares citizens to get involved in deliberation (Dutwin, 2003). Against the background of a public perception of a declining legitimacy of political debate (Thomassen et al., 2017), the quality of political debate has implications both for the electoral competition to mobilise voters and for the information available to citizens to forge their opinion.

Research on the quality of deliberation has heavily focused on deliberation in ‘mini publics’ (e.g. Fishkin, 1995) and in political institutions (e.g. Spörndli, 2003; Steiner et al., 2004), although online deliberation has also been receiving more attention (Esau et al., 2021). According to a summary of the extensive research on deliberation (Friess and Eilders, 2015), most empirical studies on political deliberation (offline and/or online) focus on the perspective of the communication process by defining it based on several theoretical dimensions, primarily those inspired by Habermas’ (1996) and Barber’s (1984) views on democracy. Important dimensions of deliberation are rationality (the critical exchange and challenging of rational arguments), interactivity (including both listening and responding to arguments), equality (implying the same opportunity to articulate arguments and to reply to others’ claims), civility (including a balanced exchange of arguments and respectful listening) and constructiveness (implying a constructive atmosphere in which consensus is the final goal). The reference to a common good (e.g. Manin, 1987) is also considered as a specific component of justification (e.g. Bächtiger and Wyss, 2013).

The deployment of the Internet and of social media platforms was associated with a hope for a more open exchange of information and, thereby, for a revival of the public sphere (Bruns and Highfield, 2015; Dahlberg, 2001). However, discussions on social media have often been found to be unconstructive (e.g. outrage and polarisation), especially in the framework of political debates (Beauchamp, 2019). Politicians around the world are increasingly active on social media platforms to address broader public concerns and to communicate with journalists (Barberá and Zeitzoff, 2017; Keller, 2020; Spierings et al., 2019). These platforms enable politicians to bypass traditional gatekeepers, such as parties and media, and to communicate with the public directly (Jungherr, 2014a; Jungherr et al., 2020). Meanwhile, politicians are held accountable given the fact that their opinions and actions on social media are intensively scrutinised by the public and the media (McGregor, 2019).

The more direct (and personalised) communication enabled by social media stands in stark contrast to the regulated debates in parliaments where time constraints and procedural rules limit politicians’ ability to express their opinions on specific policy issues. However, social media platforms also constrain the scope of the debate. For instance, even though Twitter is among the most widely used social networks for political debate (Jungherr, 2014b), doubts persist about the deliberation potential of Twitter and whether it can function as a public sphere (Bouvier and Rosenbaum, 2020). The brevity of tweets may indeed impede the open exchange of information that is needed in the public sphere. Although Twitter doubled its character limit in November 2017, research has shown that it provided limited opportunity for improving the quality of political discussions (Jaidka et al., 2019). Indeed, while doubling the allowed length of tweets led to more civil, more polite and more constructive discussions, there was also a decline in the empathy and respectfulness of tweets.

Parliamentary debate and social media constitute communication arenas offering politicians complementary communication opportunities (Popa et al., 2020) which are characterised by different communication logics. For instance, Castanho Silva and Proksch (2022) have shown that politicians who participate less in parliamentary debates tend to have bigger differences with their party communication on Twitter, with social media enabling politicians to adapt the way they express their opinions on policy matters. To date, we still have little knowledge about how political talk on social media follows the rules of deliberation. Indeed, the quality of political debate has, until recently, rarely been measured beyond the parliamentary arena of communication, such as parliamentary speeches and legislative acts. Recently, Esau et al. (2021) compared deliberative quality across different formal (government consultation platforms), semi-formal (mass media platforms) and informal (social media) arenas. They showed that the highest level of (aggregated) deliberative quality was displayed in highly formal arenas of deliberation, although this varied across dimensions of deliberative quality.

Existing measures of debate quality

Until recently, the measurement of debate quality was mainly conducted either through approaches relying on rollcall data (Bütikofer and Hug, 2010) or through the manual coding of textual documents (e.g. Suiter and Reidy, 2020). However, given the proliferation of digital content, such as social media content, computer-aided methods developed in corpus linguistics and psychology are an essential complement to the dominant approach, which consists of manually annotating political content (Bächtiger and Parkinson, 2019).

Traditionally, assessments of the deliberative quality of political discussions or debates have been conducted through measures of interaction processes (e.g. types of arguments, equality of participation and balance of viewpoints). The Discourse Quality Index (DQI) is an aggregate measure that was developed for measuring the quality of group processes in deliberative structures (Steenbergen et al., 2003). However, as noted by Jennstål (2019), the DQI is not well suited to account for changes in individuals’ reasoning as a result of deliberative engagement, which is an essential component of the theories on deliberative stance (Owen and Smith, 2015).

Measuring this cognitive process necessitates the use of a dependable method to assess individuals’ cognitive complexity. Here, the concept of integrative complexity taps into the measurement of deliberative quality by accounting for both the reasoning and listening norms of deliberation (Brundidge et al., 2014). Integrative complexity constitutes one aspect of the general concept of cognitive complexity (CC), which measures both the differentiation and the integration components of deliberation (Owens and Wedeking, 2011; Suedfeld et al., 1992). In general, the CC index has been implemented using either manual coding (see the standardised coding procedure by Baker-Brown et al., 1992) or automated scoring relying on pre-defined lists of words to identify key dimensions of the debate quality (e.g. Wyss et al., 2015). The CC index is increasingly relied on by researchers to assess debate quality from text. For instance, Wyss et al. (2015) draw from this concept to account for the evolution of debate quality in the Swiss parliamentary chambers surrounding immigration policy. Kesting et al. (2018) conducted a similar analysis on the German parliamentary debates about immigration policy. Most recently, Suiter et al. (2021) relied on the same concept to compare the debate quality in the plenary sessions of a Citizens’ Assembly and a parliamentary committee to assess the epistemic effects of public deliberation on abortion.

To date, the DQI and the CC are certainly the most relied-upon measures of debate quality (be it manually or (semi)automatically). Other indices and frameworks have been proposed, but they still rely on (a subset of) similar dimensions. For instance, Klinger and Russmann (2015) have investigated citizens’ participation in deliberation processes by proposing an index of the quality of understanding that includes dimensions about statements of reasons, proposals for solutions, respect, doubts and reciprocity. Other studies have developed a more comprehensive framework that accounts for the multifaceted concept of deliberation. For instance, Collins and Nerlich (2015) have relied on an automated corpus analysis, which is based on the statistical analysis of word frequencies to identify features of online discourse that can determine deliberation. Moreover, Gold et al. (2013) have combined linguistic and statistical cues that seek to measure the core aspects of deliberative discourse in the transcribed data of a public arbitration: equal participation, mutual respect, justification and persuasive effects. More recently, Del Valle et al. (2020) have proposed a framework for assessing the presence of rational-critical elements in political social media messages and networks. The authors were able to demonstrate the presence of deliberative elements, such as high levels of external justification, reciprocity, civility, cross-party interactions and equality, but also demonstrated low levels of internal justification, critical stances and reflexivity.

Aside from the measurement of deliberative quality at the verbal level, other studies have accounted for non-textual features by pointing to a deliberation potential, such as network features (Gonzalez-Bailon et al., 2010; Shin and Rask, 2021). Non-textual features are also important since uncivil messages and behaviours on social media are likely to be banned or self-censored, which makes them hard to collect a posteriori. Other research has paid attention to the logics of interaction or persuasion underpinning discussion groups. For instance, Beauchamp (2019) proposed a way to account for productive conversations online by emphasising the mutual consideration of conceptually interrelated ideas. The proposed methodology goes beyond the analysis of deliberative quality at the word or individual level and proposes computational models of argument quality and interdependence.

Existing research gaps

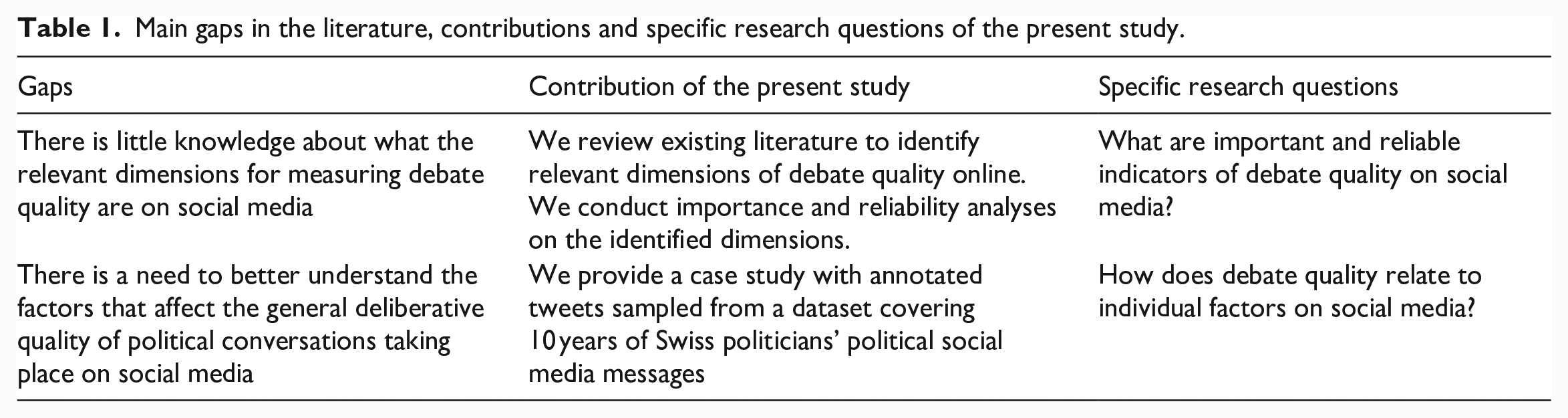

The present study contributes to address specific gaps in the existing literature about the detection of debate quality. Table 1 summarises these gaps and highlights the contributions of the present study, as well as its specific research questions.

Table 1. Main gaps in the literature, contributions and specific research questions of the present study.

A first research gap is that, despite the numerous approaches (e.g. manual, dictionary-based and machine learning approaches) and the proliferation of criteria to measure deliberation, we possess little knowledge about what the core dimensions for measuring debate quality on social media are. However, there seems to be a consensus that the DQI and CC index can be useful measures for assessing debate quality in both offline and online contexts. There is now a need to better understand the congruence (and divergence) between these different measures (e.g. what is the overlap? which measure is better and why?), while relying on the most recent versions of these measures. For instance, Esau et al. (2021) have adapted the DQI to be applicable in online contexts. They considered four theoretically recognised dimensions of deliberative quality – rationality, reciprocity, respect and constructiveness – while also including alternative forms of communication, such as storytelling and expressions of emotions. The CC has also been adapted according to the communication arena and the topic of discussion by Suiter et al. (2022). The authors conducted factor analysis to extract the relevant cognitive complexity clusters in their data instead of using the entire set of dimensions contained in the CC index. Moving beyond the DQI and the CC index, other dimensions of debate quality have been proposed. For instance, the study of Brundidge et al. (2014) on political blogs in the United States focused on the association between political ideology and linguistic indicators. In addition to integrative complexity, the authors rely on several measures of discourse quality, notably on psychological distance (which is the frequency of words greater than six letters, articles, prepositions and inverse scores for first-person singular, discrepancies and present-tense verbs), as well as on emotional language. Furthermore, Halpern and Gibbs (2013) analysed the potential of social media as a channel to foster democratic deliberation by annotating the content offered by the White House on Facebook and YouTube. They operationalised the deliberative potential of social media as a combination of several variables, including the type of argumentation, conversational coherence, equality of participation, degree of civility and politeness. Also focusing on YouTube, Edgerly et al. (2009) investigated the ability of the platform to contribute to an online public sphere through fostering quality political exchanges by focusing on argumentative content and on the level of incivility. Furthermore, Jakob (2022) investigated the extent to which the limited space for explicit reasoning on Twitter can be counterbalanced by sharing links (or URLs) and analysed the deliberative potential of those links. Results showed that links are used for substantiating user statements in the context of both information-sharing and argumentation (e.g. links can serve as empirical evidence for truth claims or fulfil an argumentative function to legitimise normative positions against social standards).

A second gap consists in the need to better understand the factors that affect the deliberative quality of political conversations in general and on social media in particular. For instance, Wyss et al. (2015) found a decrease over time in the cognitive complexity of parliamentary debates that was correlated with the rise of populism, which can point to a trend towards a lower level of accommodation and a higher simplicity of political talk. Accounting for the effect of populism on debate quality is especially important in the context of social media discussions. Indeed, studies have demonstrated the relevance of social media for fostering populist rhetoric and the diffusion of populist ideas to the broader public (Ernst et al., 2019; Krämer, 2017). Concerning the realm of social media, Brundidge et al. (2014) showed that conservative bloggers were less integrative than liberal bloggers, thus highlighting the risk of a potential exacerbation of divisions in cognitive-linguistic styles on polarised political blogs. On a contextual level, Jakob et al. (2022) showed that toxic outrage depends on the type of democratic system, and that it is mainly higher in majoritarian democracies than in consensus-oriented democracies and in arenas that afford plural and issue-driven, rather than like-minded and preference-driven, debates. From the broader perspective of democratic deliberation, there are additional factors worth considering that can impact on the quality of political debate. First, an election year can decisively impact the tonality of political communication. For instance, Osnabrügge et al. (2021) have shown that legislators are more likely to use emotive rhetoric in debates that have a large general audience. This is in line with Hager and Hilbig (2020) who have shown that sudden exposure to public opinion leads elites to align the tone (and the content) of their discourse to that of the public opinion. At the same time, Iandoli et al. (2021) showed that social media tend to favour elite polarisation, especially during electoral campaigns. Additionally, politicians’ involvement in interactive behaviour can also affect the level of the quality of discourse. Indeed, Rathje et al. (2021) demonstrated that out-group animosity drives engagement on social media. Finally, it is important to consider political variables that relate to the status of politicians, notably whether they stem from the lower or the upper house of Parliament, whether they are political incumbents and politicians’ level of involvement in parliamentary debates.

Advantages and disadvantages of manual and (semi)automatic methods of measuring debate quality

The dimensions constitutive of both the DQI and the CC index have been implemented manually and (semi)automatically. Both approaches display strengths and drawbacks in measuring deliberation.

The primary strength of manual coding is that (expert) coders can make more reliable judgements. In the specific context of deliberative quality, coders typically search for key words and make comprehensive assessments of the meaning of textual information and its broader communication context (Jennstål, 2019; Tetlock et al., 2014). In this view, human judgement is considered as the ‘gold standard’ for coding strategies. However, human judgement might also be affected by subjectivity and other biases that lead to substantial disagreement among annotators (Black et al., 2010). Furthermore, when compared to semi-automatic classification methods, such as dictionary methods and machine learning methods, manual coding is relatively expensive in terms of labour and time.

In general, (semi)automatic implementation methods of measuring deliberation have the advantage of reducing the burdens of manual coding, as well as the need for inter-coder reliability tests. (Semi)automatic methods also have the major advantage of allowing for the labelling of larger datasets than would be feasible with rule-based indicators requiring manual annotation. For instance, Jaidka et al. (2019) offer several language models that can automatically label texts according to their uncivil and deliberative qualities.

However, there are also concerns regarding (semi)automatic approaches. For instance, despite being appreciated for its simplicity and ease of implementation, which requires little human input, the fully automatic detection of dimensions via dictionaries can pose issues regarding the validity of scoring and calculating the presence of given dimensions, since the focus on single words (e.g. ‘yet’, ‘between’ and ‘however’) might lead to miscoding relevant sections of the data or to measuring concepts at a superficial level (Beauchamp, 2019). More specifically, off-the-shelf dictionaries typically suffer from major challenges arising from bag-of-words, domain transferability and additivity assumptions (Chan et al., 2021). These difficulties also apply to semi-automatic classification methods – primarily machine learning models – which are trained on a specific sample of annotated data and typically do not apply as well to other (albeit related) contexts (Jaidka, 2022). Furthermore, these models require extensive verification against human coding to ensure that they capture core concepts.

More generally, the (semi)automatic ways to detect debate quality might be less suited to short social media messages than to parliamentary speeches, for instance, by not being able to account for all dimensions (see discussions about this matter in the study of Suiter et al. (2022) using the CC index). A possible solution to this issue is to combine both fully and semi-automatic methods of classification, thereby combining theoretical knowledge and data-driven computational power. For instance, Jaidka (2022) proposed to build and validate data-driven lexica to account for the deliberative quality of social media texts. Different supervised machine learning classifiers trained on different sets of (open or closed vocabulary) features were evaluated for out-of-sample label prediction and generalisability to new contexts. Dobbrick et al. (2022) further proposed to combine off-the-shelf dictionaries with supervised machine learning to locate the major source of weakness in the dictionary approach and, thereby, to improve the detection of integrative complexity. Furthermore, Fournier-Tombs and MacKenzie (2021) have proposed to rely on machine learning to study discourse quality covering dimensions of an adapted version of the DQI. Their method demonstrated the ability to select which features are most relevant to measure deliberation quality.

Proposed case study

We address two main research questions (see Table 1): (i) we aim to assess what the important and reliable indicators of debate quality on social media are and (ii) we investigate what factors impact the level of debate quality on social media. We conducted a case study to detect the quality of user-generated political discussions on social media from a random sample of tweets from a corpus covering 10 years of elected politicians’ tweets in Switzerland.

This study focuses on the quality of political debate on Twitter and involves a sample of tweets from elected Swiss politicians. Previous research has shown that there are important variations in the active use of social media, including Twitter, by politicians. For instance, politicians marginalised by the media and politicians from opposing parties might benefit more from social media than politicians from the ruling majority or politicians whose views are in line with the major news media (Hong et al., 2019). Furthermore, it is worth differentiating platforms when analysing politicians’ social media reliance. For instance, Quinlan et al. (2018) argue that the larger share of politicians using Facebook in comparison to Twitter can be explained by its larger audience, thereby making Facebook more attractive for campaigning purposes. Moreover, the phases of the political agenda (namely, election campaign periods and non-election periods) can impact user types among politicians in terms of their activity rate and the amount of media attention they receive, although there is a persistent distinction between politicians remaining passive and those being active in either phase (Rauchfleisch and Metag, 2020). To date, databases have enabled researchers to better understand variations across political systems and party families by conducting comparative and transnational studies (see one such database for Twitter by van Vliet et al., 2020).

To address the identified research gaps, this article proposes to assess the congruence between two of the most relied-upon measures of deliberation: a dictionary-based annotation of the CC and a manual coding of the DQI. On the one hand, we rely on the psychological concept of CC as a proxy to assess debate quality. Reliability tests analysing whether deliberation actually correlates with higher degrees of cognitive complexity (Beste and Wyss, 2014) have led to the operationalisation of the CC index with semi-automated means of inquiry, especially using a dictionary-based approach (Kesting et al., 2018; Wyss et al., 2015). We follow this path of inquiry and rely on the implementation based on the LIWC dictionary (Tausczik and Pennebaker, 2010). On the other hand, we rely on the DQI from Steenbergen et al. (2003), which was traditionally developed on hand-coded parliamentary speeches and is the most widely used measure of debate quality. This index has been subsequently modified to improve its validity and transferability to other communication arenas (Esau et al., 2021), but also to big datasets (Fournier-Tombs and MacKenzie, 2021) where quantification is necessary to allow for computational analysis. As the DQI has been perceived as not being entirely suitable beyond the context of parliamentary debates, we follow Esau et al.’s (2021) suggestion of adopting an integrative analytical framework analysing not only rational reason giving, but also alternative forms of communication such as emotional communication and storytelling.

Data and method

Tweets from elected politicians over the last decade

To measure changes in debate quality associated with elected politicians’ online communication over the last decade, we retrieved all tweets posted by Swiss politicians covering the period from 2011 to 2020. Before 2011, Swiss parliamentarians showed little activity on Twitter, which is why we decided to conduct our analyses from this year on. We obtained the records of tweets posted by elected politicians through the Twitter API for Academic Research, which enabled us to access historical data. The corpus of tweets consisted of 302,025 tweets published by 262 elected politicians owning a Twitter account. We only included politicians’ tweets while they were active in Parliament. To permit this, we built a record of politicians’ mandates over time, stating when each politician was active in the lower or upper chamber of Parliament, not re-elected or reintegrating into Parliament after a break. We retained only tweets that were not retweets and that contained at least five words. The analyses were conducted using version 4.0.4 of the R programming language.
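The selection rules described above (no retweets, at least five words, posted during an active mandate) can be illustrated with a minimal sketch. It is written in Python for illustration only and is not the authors' R pipeline. The `Tweet` record, the `mandates` mapping and the whitespace-based word count are all assumptions introduced here.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Tweet:
    # Hypothetical record layout; the real corpus fields are not given in the text.
    author: str
    posted: date
    text: str
    is_retweet: bool


def in_mandate(tweet, mandates):
    """True if the tweet was posted while its author sat in Parliament.

    `mandates` is an assumed mapping: author -> list of (start, end) dates.
    """
    periods = mandates.get(tweet.author, [])
    return any(start <= tweet.posted <= end for start, end in periods)


def keep_tweet(tweet, mandates, min_words=5):
    """Apply the three selection rules described in the text."""
    if tweet.is_retweet:
        return False
    # Assumption: words are counted by splitting on whitespace.
    if len(tweet.text.split()) < min_words:
        return False
    return in_mandate(tweet, mandates)


def filter_corpus(tweets, mandates):
    return [t for t in tweets if keep_tweet(t, mandates)]
```

How words were actually counted (for example, whether URLs, hashtags or mentions count as words) is not stated in the text, so the threshold logic here should be read as one plausible interpretation.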

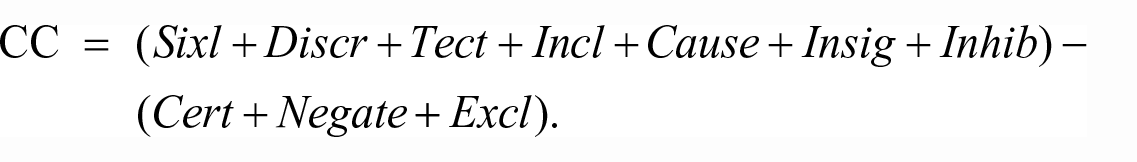

Measure of cognitive complexity

The CC score was constructed for each politician’s tweet. To do so, we relied on the LIWC dictionary (Pennebaker et al., 2015) using categories that reflected cognitive processes. LIWC calculates the percentages of words in a document (here: tweet) that match up with categories. For the cognitive categories, mean values indicate the mean percentages of all the words that politicians used that fall into a particular category. We relied on the implementation proposed by Wyss et al. (2015) which is expressed in the following formula:

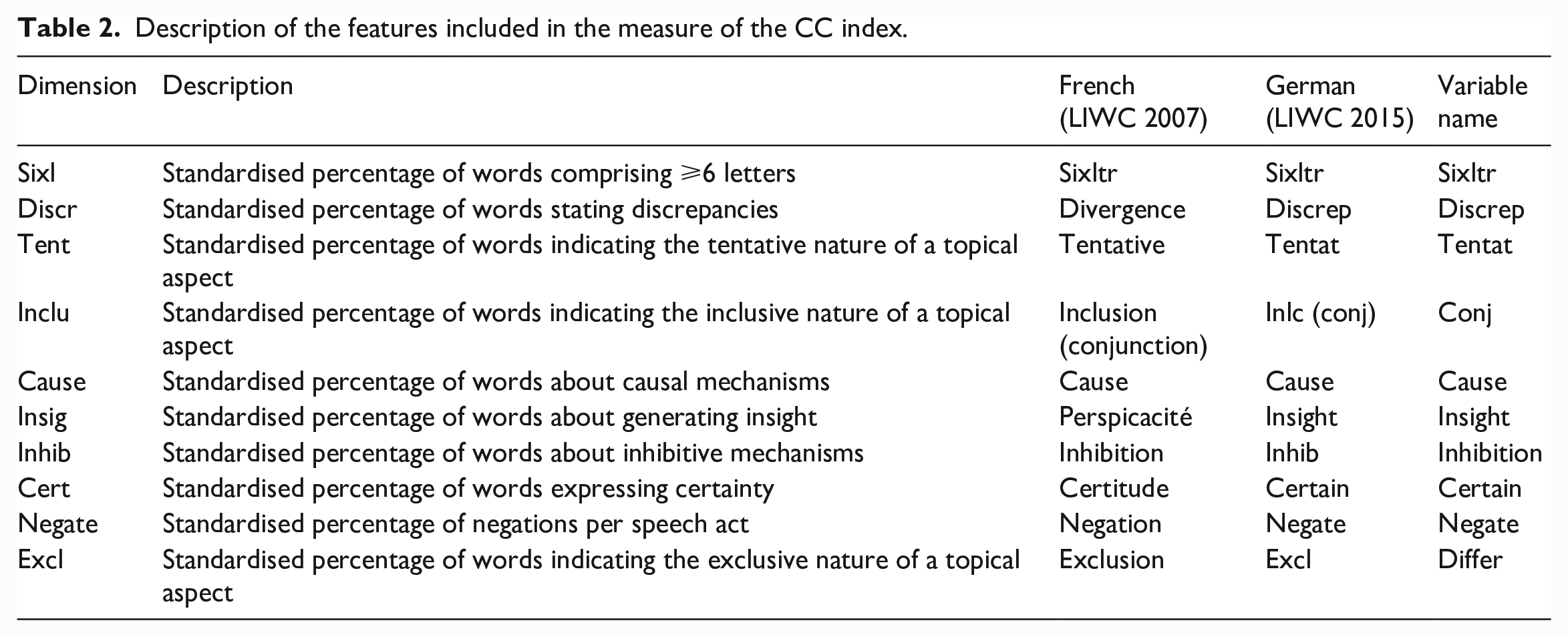
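To make the word-percentage logic concrete, the following Python sketch computes category percentages for one document and combines them into a signed score. It is only an illustration under stated assumptions: the mini-dictionary is invented (the LIWC word lists are licensed), and the unit weights are placeholders rather than the coefficients of the formula above. The negative sign for negation follows the expected direction reported in the results, whereas the positive sign for causation is an assumption.

```python
# Hypothetical mini-dictionary (the real LIWC word lists are licensed).
# A trailing "*" marks a prefix entry, as in LIWC-style dictionaries.
TOY_DICT = {
    "cause": ["because", "hence", "effect*"],
    "negate": ["not", "never", "no"],
}

# Assumed signs with unit weights, for illustration only.
SIGNS = {"cause": +1.0, "negate": -1.0}


def matches(token, entry):
    """Exact match, or prefix match when the entry ends with '*'."""
    if entry.endswith("*"):
        return token.startswith(entry[:-1])
    return token == entry


def category_percentages(tokens, dictionary=TOY_DICT):
    """Percentage of a document's tokens that fall into each category."""
    if not tokens:
        return {cat: 0.0 for cat in dictionary}
    return {
        cat: 100.0
        * sum(any(matches(t, e) for e in entries) for t in tokens)
        / len(tokens)
        for cat, entries in dictionary.items()
    }


def signed_score(percentages, signs=SIGNS):
    """Signed sum of category percentages (placeholder weights)."""
    return sum(signs[cat] * pct for cat, pct in percentages.items())


tokens = "taxes will not fall because the effects are small".split()
pct = category_percentages(tokens)   # 2 of 9 tokens are causal, 1 of 9 is a negation
score = signed_score(pct)
```

Because the percentages are computed per tweet, a single matching word can move a category by ten points or more in a short message, which is one reason why short texts yield noisy scores.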

We conducted a pre-processing step before applying the off-the-shelf LIWC dictionary, including part-of-speech tagging using version 2.4 of the package udpipe (Wijffels et al., 2019) for the R programming language. This enabled us to keep only words with specific grammatical functions (Jacobi et al., 2016), namely common nouns, proper nouns, verbs, adverbs and adjectives. We also lemmatised the text using udpipe; lemmatisation reduces a word to its base (dictionary) form. We then applied the LIWC dictionary. We removed neither stop words nor negations. However, we followed Haselmayer and Jenny (2017) in excluding all words located after these negation signifiers (French: non, peu, pas and sans; German: kein*, nicht, niemals, kaum and wenig*). Instead of translating politicians’ parliamentary utterances and tweets, we decided to preserve their original languages. We used the translated versions of the LIWC dictionary in German (version 2015, from Wolf et al., 2008) and in French (version 2007, from Piolat et al., 2011). The description of each component of the CC index is given in Table 2, with the last column providing the variable name as included in the subsequent analyses.
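The pre-processing steps can be sketched as follows in Python. The input is assumed to be already tagged and lemmatised (a list of lemma and Universal POS tag pairs, standing in for the udpipe output). Two further points are our reading of the description rather than documented behaviour: the negation cut is applied before the part-of-speech filter so that the negation itself is retained, and every token after the first negation signifier in the tweet is dropped.

```python
# Universal POS tags for the word classes that are kept.
KEEP_UPOS = {"NOUN", "PROPN", "VERB", "ADV", "ADJ"}

# Negation signifiers listed in the text; "*" marks a prefix entry.
NEGATIONS = {
    "fr": ["non", "peu", "pas", "sans"],
    "de": ["kein*", "nicht", "niemals", "kaum", "wenig*"],
}


def is_negation(lemma, lang):
    """True if the lemma matches one of the negation signifiers."""
    lemma = lemma.lower()
    return any(
        lemma.startswith(p[:-1]) if p.endswith("*") else lemma == p
        for p in NEGATIONS[lang]
    )


def preprocess(tagged, lang):
    """Filter one tweet given as (lemma, upos) pairs in reading order."""
    kept = []
    for lemma, upos in tagged:
        if is_negation(lemma, lang):
            kept.append(lemma.lower())  # the negation itself is retained
            break                       # all following words are excluded
        if upos in KEEP_UPOS:
            kept.append(lemma.lower())
    return kept


# Hypothetical tagged tweet: "Wir unterstützen die Vorlage nicht mehr"
tagged_de = [
    ("wir", "PRON"), ("unterstützen", "VERB"), ("der", "DET"),
    ("Vorlage", "NOUN"), ("nicht", "PART"), ("mehr", "ADV"),
]
cleaned_de = preprocess(tagged_de, "de")
```

Lower-casing the lemmas is a convenience for dictionary matching in this sketch; whether the original pipeline normalised case is not stated.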

Description of the features included in the measure of the CC index.

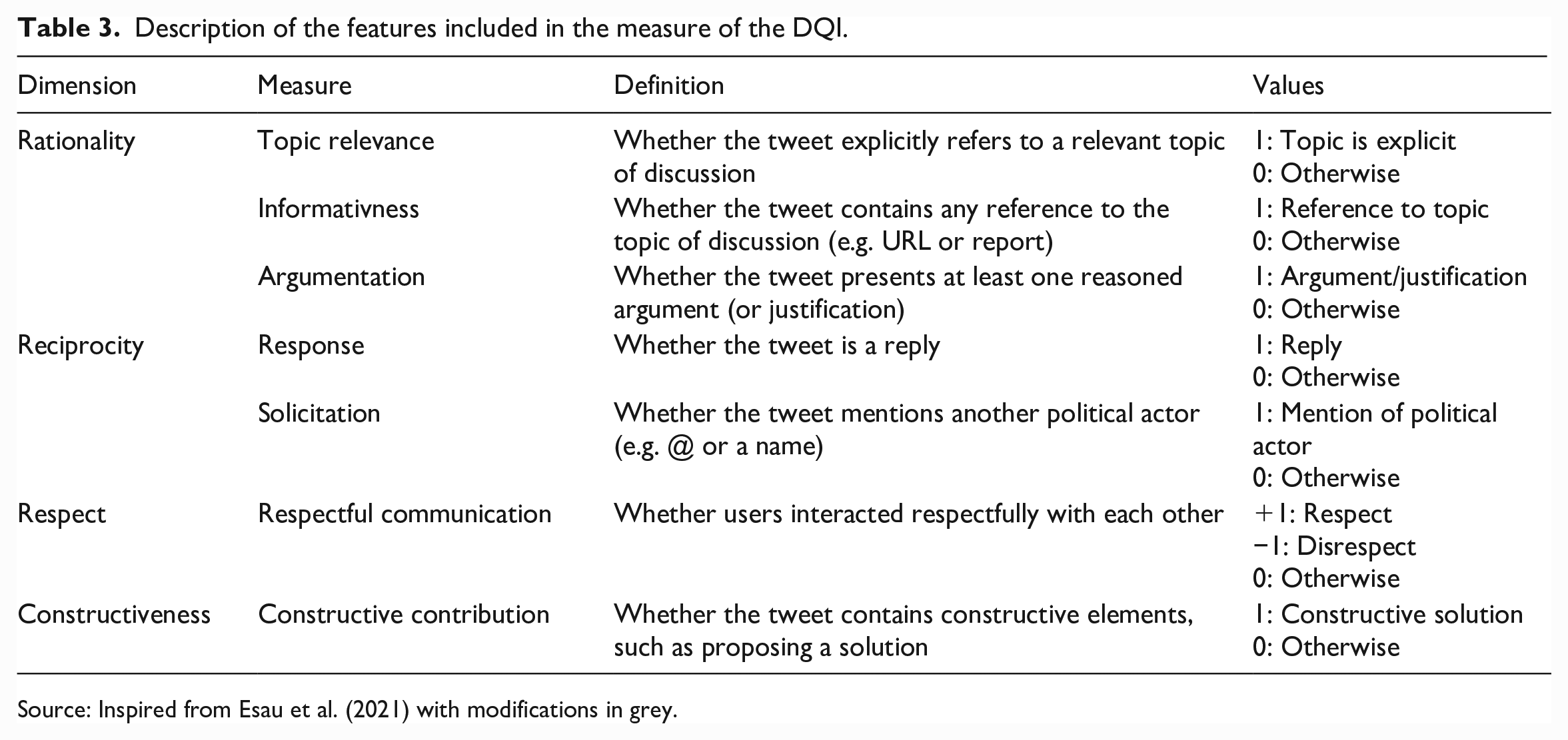

Measure of debate quality based on DQI and other indicators

To manually account for the debate quality, we relied on an extended version of the DQI including the coding framework proposed by Esau et al. (2021) to which we added some refinements to adapt the coding scheme for Twitter (see Table 3). Esau et al. (2021) consider four dimensions of deliberative quality, namely rationality, reciprocity, respect and constructiveness. In the original coding scheme, all variables were coded dichotomously. However, we introduced a change for measuring respect by allowing a negative value (non-respect) to be coded. In line with Jakob (2022), we further introduced two more specifications. First, we added a measure of informativeness to account for whether a tweet contains any reference to the topic of discussion (e.g. URL or report). As politicians drift quickly from one topic to another in reaction to events on social media, it is important to differentiate tweets that engage with a topic of discussion from the tweets that provide a reference to further information. Second, we introduced a measure of solicitation to account for whether the tweet mentions another political actor (e.g. @ or a name). This is a different measure from tweets being labelled as replies. Table 3 summarises each of the manually coded dimensions.

Description of the features included in the measure of the DQI.

Source: Inspired from Esau et al. (2021) with modifications in grey.

We included three additional dimensions of debate quality not contained in the DQI or CC scores:

First, we relied on elements from Esau et al. (2021) who considered alternative forms of communication, namely the expression of emotion and storytelling. To account for the presence of storytelling in politicians’ tweets, we reported whether a tweet contains a personal experience (or an experience of known others) expressed in a narrative form. To account for emotionality, we captured whether a tweet contains positively (coded as +1) or negatively (coded as −1) loaded terms.

Second, we also distinguished between tweets containing personal (e.g. using the pronoun ‘I’ or the possessive ‘my’), inclusive (e.g. ‘we’, ‘us’ and ‘our’) and exclusive (e.g. ‘they’, ‘them’, ‘their’, ‘he/she’, ‘him/her’ and ‘his/her’) views. Indeed, pronouns have been shown to be markers of social categories which occur in contexts that reflect self- and group-serving biases (Menegatti and Rubini, 2013; Sendén et al., 2014).

The final score of the extended version of the DQI corresponds to the sum of the values for the different dimensions as noted in Table 3.
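As a minimal illustration of this summation, the Python sketch below validates one manually coded tweet and adds up its dimension values. The dimension names are taken from the labels used in the text and are assumptions about the exact content of Table 3; only respect is allowed to take the value −1, as described above.

```python
# Assumed set of coded dimensions and their admissible values.
ALLOWED = {
    "argumentation": {0, 1},
    "topic_relevance": {0, 1},
    "responsiveness": {0, 1},
    "respect": {-1, 0, 1},       # -1 codes explicit disrespect
    "constructiveness": {0, 1},
    "informativeness": {0, 1},
    "solicitation": {0, 1},
}


def extended_dqi(coding, allowed=ALLOWED):
    """Sum the coded values of one tweet after checking their validity."""
    for dim, values in allowed.items():
        if dim not in coding:
            raise ValueError(f"missing dimension: {dim}")
        if coding[dim] not in values:
            raise ValueError(f"invalid value for {dim}: {coding[dim]}")
    return sum(coding[dim] for dim in allowed)


# Hypothetical coding of a single tweet.
example = {
    "argumentation": 1, "topic_relevance": 1, "responsiveness": 0,
    "respect": -1, "constructiveness": 0, "informativeness": 1,
    "solicitation": 1,
}
dqi_score = extended_dqi(example)
```

An unweighted sum treats all dimensions as equally important, so a disrespectful but well-argued tweet and a respectful but unargued one can receive the same score.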

Measure of individual factors affecting debate quality

We included several individual-level factors to investigate how they related to dimensions of debate quality:

We specified the political affiliation of each politician. The party affiliations are as follows: GPS for the Green Party, SP for the Social Democratic Party, GLP for the Green Liberals, CVP for the Christian Democratic People’s Party, EVP for the Evangelical People’s Party, BDP for the Conservative Democratic Party, FDP for the Liberals and SVP for the Swiss People’s Party.

We indicated whether a politician has extreme positions compared to his/her party positioning on similar policy issues. To do so, we calculated an attitudinal measure of progressivism-conservatism for each politician. This measure was derived from the politicians’ self-completion of the Smartvote survey. Each politician received a score from 0 to 100 on several dimensions and we constructed an index for progressivism (averaging the following dimensions: open foreign policy, liberal society, expanded welfare state and extended environmental protection) and for conservatism (averaging the following dimensions: restrictive immigration policy, law and order, restrictive finance policy, liberal economy). Extremeness is a dichotomous variable. It takes the value of 1 if the politician’s progressive or conservative score deviates by more than two standard deviations from the overall score of politicians from the same party. Otherwise, it takes the value of 0.

We also accounted for the parliamentary chambers by distinguishing between tweets emitted when politicians sat in the lower house (coded as ‘National Council’) and upper house (coded as ‘Council of States’) of the parliament.

We also indicated gender by coding whether the politician is male or female.
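Among these factors, the extremeness indicator involves a small computation, which the following Python sketch illustrates. It assumes that the "overall score" of a party is its mean index value and that the sample standard deviation is used; both are our reading of the description. The party label, identifiers and index values are invented.

```python
from collections import defaultdict
from statistics import mean, stdev


def extremeness(politicians, threshold=2.0):
    """Flag politicians deviating by more than `threshold` party SDs on either index."""
    by_party = defaultdict(list)
    for p in politicians:
        by_party[p["party"]].append(p)

    flags = {}
    for members in by_party.values():
        stats = {}
        for key in ("progressive", "conservative"):
            values = [m[key] for m in members]
            sd = stdev(values) if len(values) > 1 else 0.0
            stats[key] = (mean(values), sd)
        for m in members:
            flags[m["id"]] = int(any(
                sd > 0 and abs(m[key] - mu) > threshold * sd
                for key, (mu, sd) in stats.items()
            ))
    return flags


# Hypothetical index values (0-100) for ten members of one party "X".
progressive = [48, 52, 50, 49, 51, 50, 47, 53, 50, 95]
conservative = [30, 32, 28, 31, 29, 30, 33, 27, 30, 30]
politicians = [
    {"id": f"p{i}", "party": "X", "progressive": pr, "conservative": co}
    for i, (pr, co) in enumerate(zip(progressive, conservative))
]
flags = extremeness(politicians)  # only the last member is flagged
```

With the sample standard deviation, a deviation above two standard deviations is only attainable in parties with at least six members, so very small parties can never contain an "extreme" politician under this rule.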

Methods of analysis

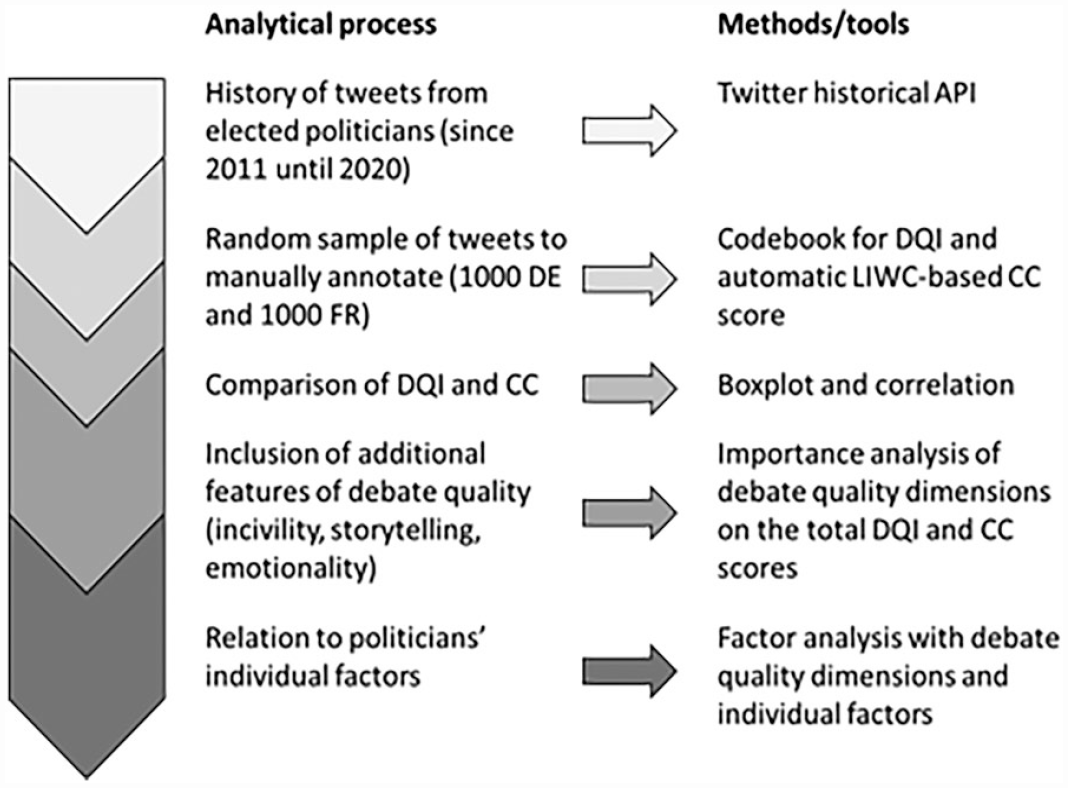

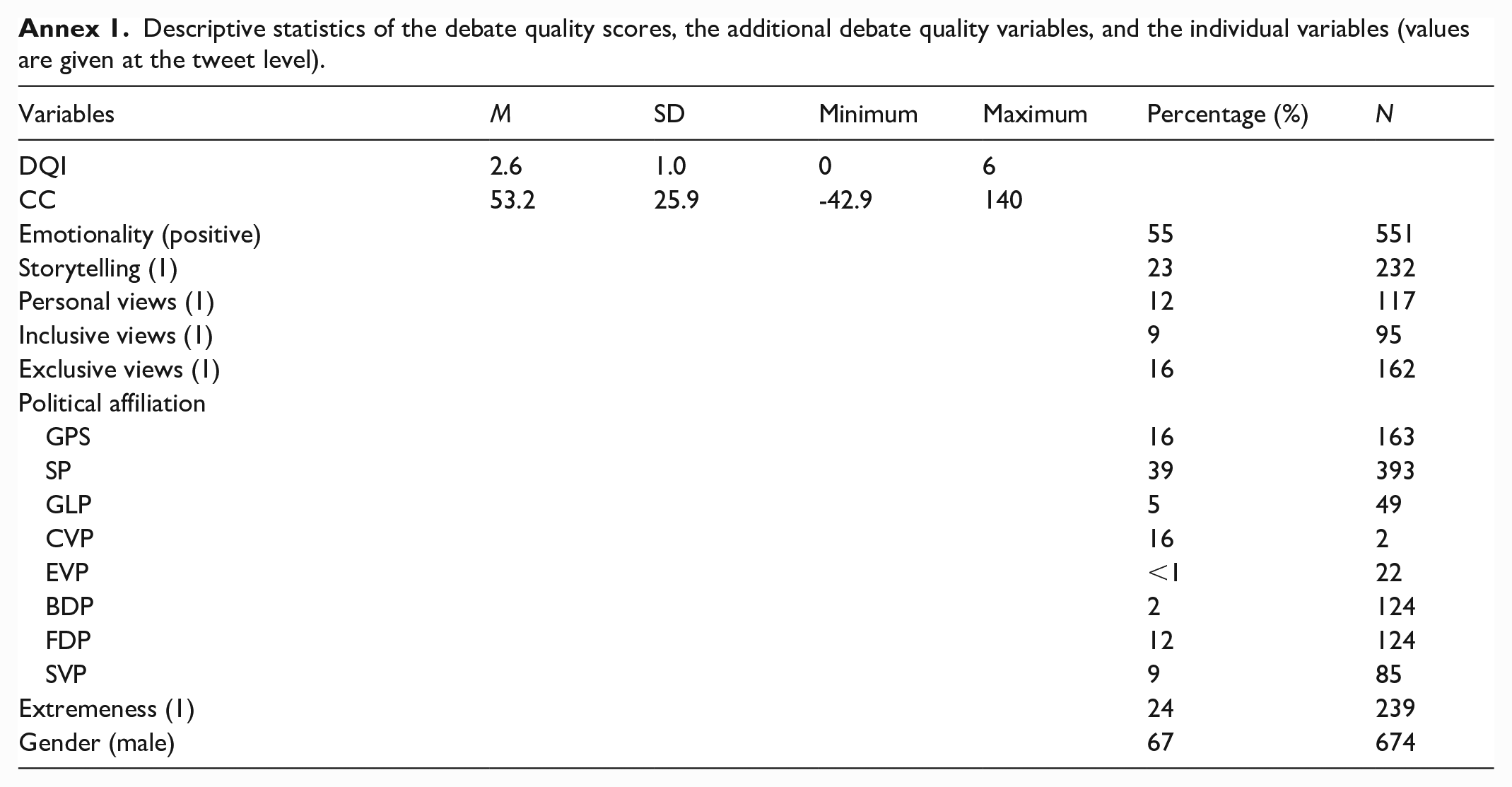

Figure 1 displays the methodological framework of the study from the data collection to the data analyses, which were conducted in two main steps. To answer the first research question, we assessed the correlation between the extended DQI and the CC index in our sample of annotated tweets. We also investigated the internal consistency of both indices by calculating the Pearson correlation of the individual dimensions with the final DQI and CC index. To answer our second research question, we conducted a factor analysis including the dimensions of the DQI and CC index, as well as the individual factors and the other indicators of debate quality. We relied on version 0.8.5 of the package FactoMineR (Lê et al., 2008) for the R programming language. We also scaled the numeric dimensions of the CC between 0 and 1. Annex 1 gives the descriptive statistics for the variables included in our analysis.

Methodological framework of the study.
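Two of the computations mentioned above, the rescaling of numeric dimensions to the range 0 to 1 and the Pearson correlation of each dimension with the summed index, can be sketched in a few lines of Python. The coded rows are invented and only three hypothetical dimensions are shown.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / sqrt(sxx * syy)


def min_max(values):
    """Rescale values linearly so that the minimum is 0 and the maximum is 1."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


# Hypothetical manual codes for four tweets.
rows = [
    {"respect": 1, "constructiveness": 0, "solicitation": 1},
    {"respect": 0, "constructiveness": 0, "solicitation": 1},
    {"respect": 1, "constructiveness": 1, "solicitation": 0},
    {"respect": -1, "constructiveness": 0, "solicitation": 0},
]
totals = [sum(r.values()) for r in rows]

# Correlation of each dimension with the summed index.
item_total = {
    dim: pearson([r[dim] for r in rows], totals) for dim in rows[0]
}
scaled = min_max([2.0, 4.0, 6.0])
```

Note that each dimension is itself part of the total it is correlated with, which inflates these coefficients somewhat; correlating a dimension with the sum of the remaining dimensions is a common alternative.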

Results

Convergence between scores

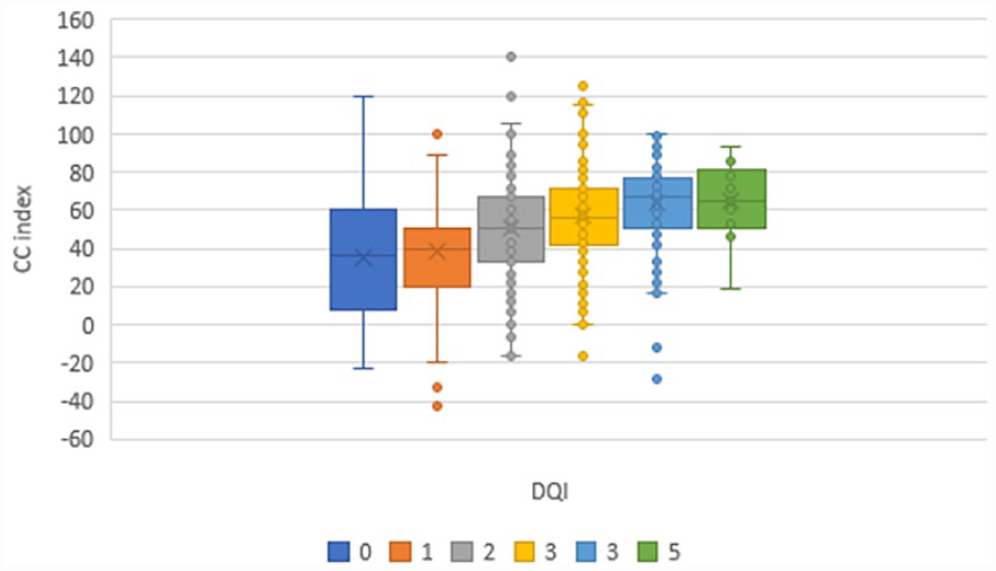

Figure 2 demonstrates the convergence between the automatically coded CC index and the manually annotated DQI resulting from our randomly sampled corpus of tweets (both German and French tweets are included in Figure 2). It shows that both measures of debate quality are positively correlated (Pearson correlation of 0.29 for the overall corpus, 0.32 for French and 0.24 for German). We noted that this positive relationship is less clear for extreme values of the DQI. Indeed, the Pearson correlation between the DQI and the CC rises to 0.46 when the 0 and 5 DQI coding categories are not considered.

Boxplots of CC index according to the manual DQI.
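The comparison between the full sample and the sample without the extreme DQI categories can be reproduced in outline as follows. The Python sketch uses invented (DQI, CC, language) triples, so the resulting coefficients bear no relation to the values reported above; it only shows the subsetting logic.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def correlate(records):
    """Correlation between DQI and CC over a list of (dqi, cc, lang) records."""
    return pearson([r[0] for r in records], [r[1] for r in records])


# Hypothetical annotated tweets: (manual DQI, automatic CC, language).
records = [
    (0, 45.0, "de"), (1, 20.0, "de"), (2, 35.0, "de"), (3, 50.0, "de"),
    (2, 30.0, "fr"), (3, 55.0, "fr"), (4, 70.0, "fr"), (5, 50.0, "fr"),
]

r_all = correlate(records)
r_by_lang = {
    lang: correlate([r for r in records if r[2] == lang])
    for lang in ("de", "fr")
}
# Drop the extreme DQI categories (0 and 5) before correlating.
trimmed = [r for r in records if r[0] not in (0, 5)]
r_trimmed = correlate(trimmed)
```

Removing categories after inspecting the data is an exploratory step, so the trimmed coefficient is best read as a description of the mid-range of the DQI rather than as a corrected estimate.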

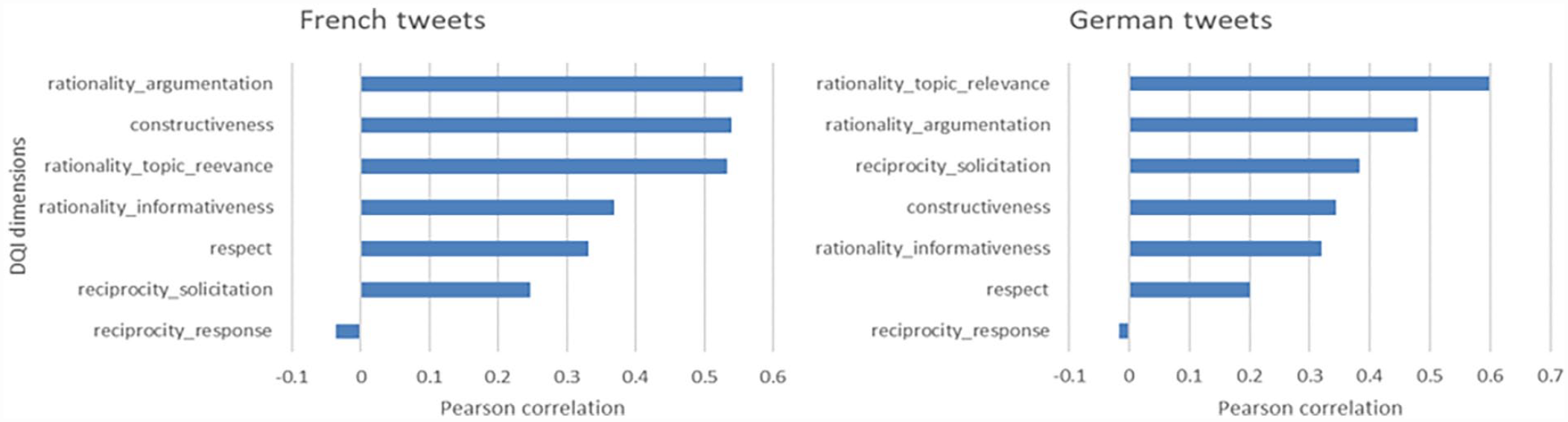

We also observed that the importance of the dimensions of each debate quality score varied across language (see Figure 3). For instance, the constructiveness dimension was more important for predicting the DQI in French tweets compared to German tweets. Furthermore, the solicitation dimension was more important in German than in French tweets.

Correlation of each dimension constitutive of the DQI by language.

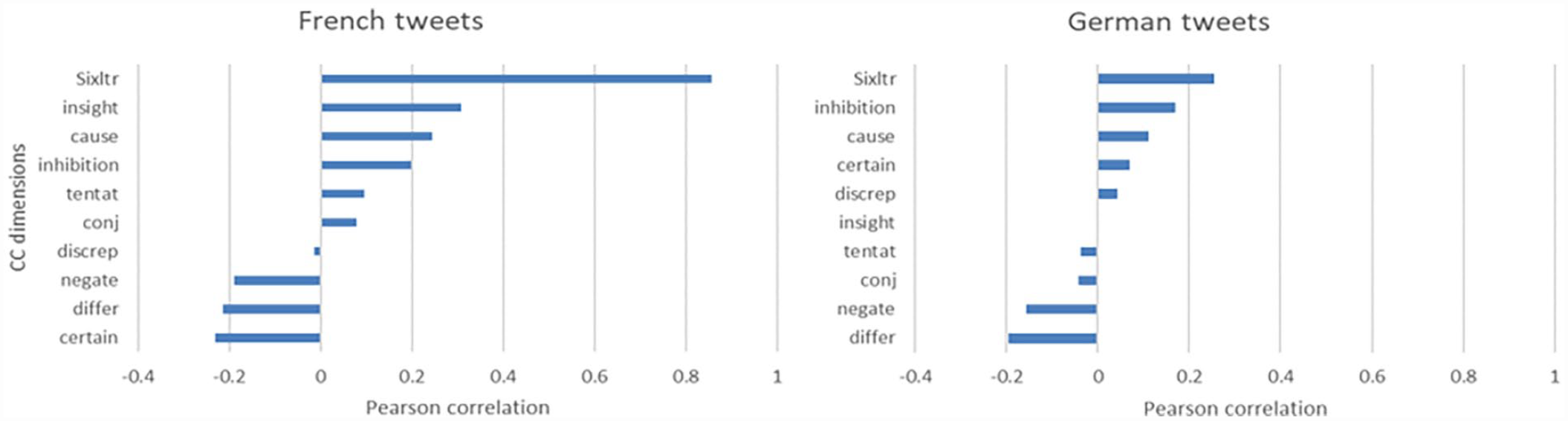

Figure 4 provides a similar analysis for the CC index and demonstrates differences across languages. For instance, the Sixltr dimension was far more important for predicting the CC index in French tweets compared to German tweets. Furthermore, the dimensions of insight, cause and inhibition were the most important in French, whereas the dimensions of inhibition, cause and certainty were most important in German. We also noted that the dimensions of the CC index did not all follow the direction of the formula. For instance, while differentiation (‘differ’) and negation (‘negate’) were negatively associated with CC, as expected, certainty (‘certain’) was negatively associated with CC for French, as expected, but positively associated with CC in German, contrary to the formula. Furthermore, divergence (‘discrep’) was negatively associated with CC in French, and tentativeness (‘tent’) and inclusion (‘conj’) were negatively associated with CC in German, in both cases contrary to the formula.

Correlation of each dimension constitutive of the CC index by language.

Relationship of the debate quality with individual factors

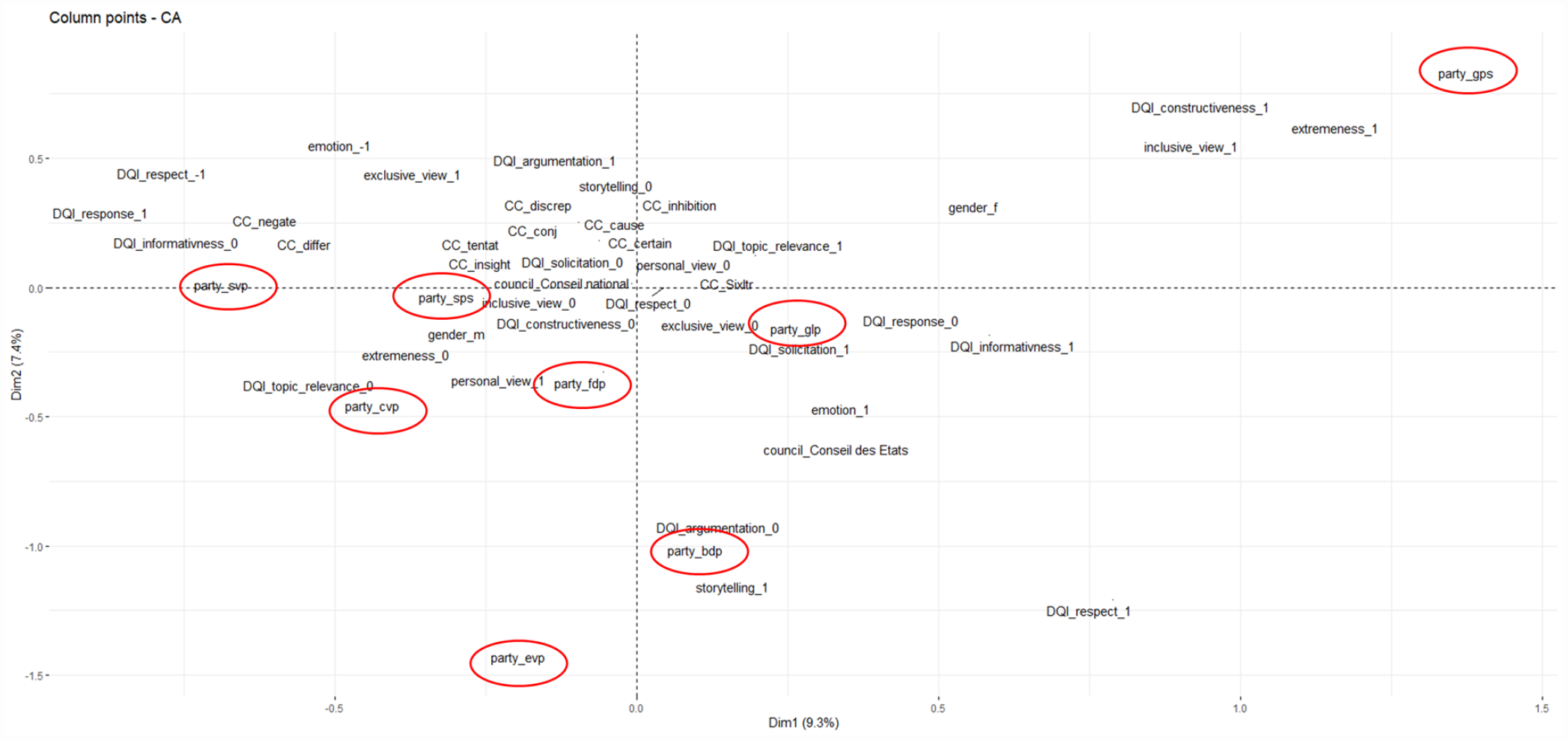

To provide further insights about the generalisability of the DQI and CC dimensions, Figure 5 displays the results from the correspondence analysis including every dimension composing the DQI and the CC index, as well as the additional debate quality variables and politicians’ individual factors. Figure 5 enables us to assess the relationship between these variables, with variables located far from the centre contributing most to the resulting clustering map.

Result of the correspondence analysis (DQI: indicates variables composing the DQI; CC: indicates variables composing the CC index; the ending _1, _0 or _−1 correspond to the coded values).

We can see a first cluster grouping the following dimensions: constructiveness from the DQI, and inclusiveness from the additional debate quality dimensions (see upper right quadrant). This first cluster is related to progressive political orientations (namely, GPS), to political extremeness and to gender (namely, female). The upper left quadrant defines a second cluster including the following dimensions: responsiveness, disrespect and lack of informativeness for the DQI. It also groups several dimensions of the CC index, including differentiation (‘differ’) and negation (‘negate’). This cluster is associated with additional dimensions of debate quality, such as negative emotionality and the prevalence of exclusive views. It is closely linked to a right-wing political orientation (namely, SVP). The lower left quadrant highlights a third cluster including responsiveness and the absence of topic relevance from the DQI, as well as the prevalence of personal views as an additional debate quality dimension. It tends to be associated with a more centre-right political orientation (namely, CVP, EVP and FDP) and with an absence of political extremeness. The lower right quadrant displays a fourth cluster grouping the presence of respect and the absence of argumentation from the DQI. It is also linked to storytelling and the presence of positive emotions as additional dimensions of debate quality. This cluster is associated with centrist political orientations (namely, BDP and, to a lesser extent, GLP). It is also associated with the upper chamber of parliament (namely, ‘Council of States’).

Based on the results of the different clusters, we can argue that the first axis of the clustering map (explaining 9.3% of the variance) is organised along a ‘constructive-unconstructive’ definition of debate quality, with more positive components of democratic debate on the right (such as constructiveness, inclusive views, informativeness and respect) and more negative deliberative components on the left (such as disrespect, exclusive views, lack of informativeness and lack of topic relevance). However, this more negative side is also associated with responsiveness, which is an important feature of political deliberation. Conversely, the more positive side is associated with a lack of argumentation. The second axis (explaining 7.4% of the variance) displays an ‘emotional’ definition of debate quality, differentiating between more positive and storytelling communication (at the bottom: respect, positive emotion and storytelling) and more negative and perhaps conflictive communication (at the top: disrespect, argumentation and exclusive views).
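To clarify what enters such an analysis, the Python sketch below builds the indicator (one-hot) matrix of a few categorical variables and computes its total inertia. It assumes a multiple correspondence analysis on the indicator matrix; the text does not state which FactoMineR function was used. The rows are invented, and the column labels follow the `_1`, `_0`, `_-1` naming convention of the map. For an indicator matrix with Q variables and J non-empty categories, the total inertia equals J/Q − 1, so it grows with the number of categories. This is one reason why the first axes of such analyses often account for modest shares of the variance.

```python
def indicator_matrix(rows, variables):
    """One-hot encode categorical rows; returns (matrix, column labels)."""
    labels = sorted({f"{v}_{r[v]}" for r in rows for v in variables})
    index = {label: j for j, label in enumerate(labels)}
    matrix = []
    for r in rows:
        line = [0] * len(labels)
        for v in variables:
            line[index[f"{v}_{r[v]}"]] = 1
        matrix.append(line)
    return matrix, labels


def total_inertia(matrix):
    """Chi-square distance of the matrix from independence, divided by its total."""
    grand = sum(sum(row) for row in matrix)
    n_cols = len(matrix[0])
    row_mass = [sum(row) / grand for row in matrix]
    col_mass = [sum(row[j] for row in matrix) / grand for j in range(n_cols)]
    return sum(
        (matrix[i][j] / grand - row_mass[i] * col_mass[j]) ** 2
        / (row_mass[i] * col_mass[j])
        for i in range(len(matrix))
        for j in range(n_cols)
    )


# Hypothetical coded tweets with three categorical variables.
variables = ["respect", "emotion", "party"]
rows = [
    {"respect": 1, "emotion": 1, "party": "BDP"},
    {"respect": -1, "emotion": -1, "party": "SVP"},
    {"respect": 0, "emotion": 1, "party": "GPS"},
    {"respect": 1, "emotion": 0, "party": "GPS"},
]
Z, labels = indicator_matrix(rows, variables)
inertia = total_inertia(Z)
expected = len(labels) / len(variables) - 1  # J / Q - 1
```

The axes themselves would be obtained from a singular value decomposition of the standardised residuals of this matrix, which is omitted here for brevity.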

Discussion of the main findings

Important dimensions of debate quality

In a first analytical step, we aimed to assess the congruence between two well-established indices of debate quality, the DQI and CC index. To do so, we annotated a random sample of tweets from elected politicians after adapting the coding of the DQI to the social media context following the framework of Esau et al. (2021). We also automatically coded the tweets according to the formula proposed by Wyss et al. (2015). We found that both indices tend to measure a similar concept of debate quality since we observed a positive Pearson correlation. However, we noted that the obtained correlation was lower in the context of social media than the one found in studies about parliamentary debate (for instance, Wyss et al. (2015) found a positive correlation of 0.56 between the CC index and the DQI). This could be explained by the fact that both indices are not fully suitable for analysing social media texts and that their behaviour may also vary across languages. Therefore, we also assessed their internal consistency. We found that the dimensions most related to the DQI were different for French and German, thus pointing to different deliberative cultures. Furthermore, the dimensions of the CC index did not all behave in the direction expected by the formula, and the dimension measuring the presence of words longer than six letters (‘Sixltr’) had a disproportionate influence for French. Inhibition and causality were found to be the most important dimensions for predicting the CC index in both languages. In a nutshell, it seems that both indices suffer from different biases. Indeed, whereas the DQI suffers from a partisan bias in the communication styles, the CC is more dependent on the morphological characteristics of specific languages, as well as on the specificities of communication channels.

Other important factors impacting debate quality

In a second analytical step, we assessed the relationship between the dimensions of the debate quality indices, the additional debate quality variables and politicians’ individual variables. Using correspondence analysis, we identified four main clusters grouping dimensions of debate quality in association with specific political orientations. Overall, the debate quality space tends to be organised along a ‘constructive-unconstructive’ definition of debate quality, opposing positive components of democratic debate (such as constructiveness, informativeness and respect from the DQI) and negative deliberative components (such as disrespect, lack of informativeness and lack of topic relevance from the DQI, as well as divergence, differentiation and negation from the CC). We also noted that the emotional component of political debate, as well as the presence of inclusive and exclusive views, had a strong impact on the formation of the debate quality space. Among the individual factors, the political orientation was clearly indicative of different deliberative cultures across the parties. Furthermore, tweets from politicians who diverged from the party line (‘extremeness’) tended to relate to more constructive and inclusive views. In line with previous studies of debate quality (e.g. Wyss et al., 2015), we also noted that the parliamentary chamber played a role, since politicians from the upper chamber (Council of States in Switzerland) tended to have a more consensual style of political communication (e.g. absence of argumentation, respect, informativeness and solicitation).

Concluding remarks

Study limitations

We would like to point out the potential limitations of our study:

First, the sample of elected politicians active on Twitter may possess different characteristics from politicians who do not possess an account. Even within the group of politicians with Twitter accounts, there were significant variations in the adoption and activity rates (as well as in terms of popularity measures, such as the size of the follower network).

Second, Twitter is a specific social media platform, with particular conventions and audiences. These factors may not be reflected in debate quality patterns on other social media platforms such as Facebook. This could raise concerns about the generalisability and external validity of the research findings. Most notably, tweets are very short compared to other social media messages and this can impact the measure of debate quality (Jaidka et al., 2019). Furthermore, platform-specific regulations can also impact the quality of political debate. For instance, Edgerly et al. (2009) showed that YouTube videos that were uncivil or humorous, or of a filmed-live event, tended to decrease the quality of comments that are generated online. Furthermore, Moore et al. (2021) investigated the effect of anonymity, pseudonyms and real-name requirements on the quality of debate in online news comments. Their results point to the value of pseudonymity in maintaining deliberative quality.

Third, Switzerland has a specific political deliberation culture traditionally based on political consensus and consociationalism (Hänggli and Häusermann, 2015). Therefore, more cross-country comparisons are needed to propose a widely applicable way to measure debate quality online (for instance, future studies could draw on a similar cross-country comparison study as in Urman (2020) about political polarisation on Twitter). Indeed, our study points to the necessity to adapt the CC index to different languages and cultural contexts. Furthermore, the reliance on social media, especially Twitter, for political debate in Switzerland is still limited compared to other (non-)European countries (Kovic et al., 2017). However, the larger trends in terms of user selectivity, especially the elitist bias of the Twitter network compared to Facebook, also apply to Switzerland (Rauchfleisch and Metag, 2016), thus building confidence in the generalisability of our findings to other contexts.

Fourth, in line with Esau et al. (2021), we suggest that there is a need to adopt a multidimensional measure of debate quality, thus also including dimensions going beyond rationality, such as emotionality, storytelling and inclusive or exclusive appeals. For instance, emotional debating has been associated with detrimental outcomes, such as affective polarisation (Iyengar et al., 2019). On social media platforms, emotions have a decisive role in political communication (Settle, 2018), as social media constitute a tool that politicians can use strategically to appeal to voters. However, further dimensions could be included in the definition of debate quality. For instance, Jakob et al. (2022) assessed how the political system of a country and the type of discussion arena (namely, blog posts, Facebook posts and tweets) condition toxic outrage online as a violation of civility norms. Debate quality could also be supplemented by the analysis of argument quality (e.g. Wachsmuth et al., 2017), as well as by measures of incivility.

Main study contributions

Despite these limitations, our article contributes to the study of political communication by expanding previous measurements of debate quality to another communication channel, Twitter. Social media and parliamentary debates provide politicians with different discursive opportunities characterised by a mix of public and personalised communication, as well as by political expertise and entertainment, all of which affect the status of a hegemonic data source in comparative research on political communication. We add to the existing literature by investigating the importance of recognised dimensions to measure debate quality, but also by investigating which individual aspects affect the level of online debate quality, thus forging a link between automated measurements and theory-driven constructs (e.g. Baden et al., 2020; Grimmer et al., 2021). The results from this explorative study can be used by other scholars who are interested in conducting textual analyses using large datasets, for instance by incorporating machine learning techniques, particularly because the results call on researchers to be mindful of potential linguistic differences. They can also be useful for practitioners and political actors as a guideline for the promotion of high-quality deliberation.

Footnotes

Annex

Descriptive statistics of the debate quality scores, the additional debate quality variables, and the individual variables (values are given at the tweet level).

| Variables | M | SD | Minimum | Maximum | Percentage (%) | N |
|---|---|---|---|---|---|---|
| DQI | 2.6 | 1.0 | 0 | 6 | | |
| CC | 53.2 | 25.9 | -42.9 | 140 | | |
| Emotionality (positive) | | | | | 55 | 551 |
| Storytelling (1) | | | | | 23 | 232 |
| Personal views (1) | | | | | 12 | 117 |
| Inclusive views (1) | | | | | 9 | 95 |
| Exclusive views (1) | | | | | 16 | 162 |
| Political affiliation | | | | | | |
| GPS | | | | | 16 | 163 |
| SP | | | | | 39 | 393 |
| GLP | | | | | 5 | 49 |
| CVP | | | | | 16 | 2 |
| EVP | | | | | <1 | 22 |
| BDP | | | | | 2 | 124 |
| FDP | | | | | 12 | 124 |
| SVP | | | | | 9 | 85 |
| Extremeness (1) | | | | | 24 | 239 |
| Gender (male) | | | | | 67 | 674 |

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Availability of data and material

Available upon manuscript acceptance.