Abstract

Qualitative Comparative Analysis (QCA) is a relatively young method of causal inference that continues to diffuse across the social sciences. However, recent methodological research has found the conservative (QCA-CS) and the intermediate solution type (QCA-IS) of QCA to fail fundamental tests of correctness. Even under conditions otherwise ideal for causal discovery, both solution types frequently committed causal fallacies by presenting inferences that were in direct disagreement with the underlying data-generating structure to be discovered by QCA. None of these problems affected the parsimonious solution type (QCA-PS). These findings conflict with conventional wisdom in the QCA literature, which has it that QCA-CS uses empirical information only and that QCA-IS is preferable to both QCA-CS and QCA-PS. The present article resolves these contradictions. It shows that QCA-CS and QCA-IS systematically supplement empirical data with matching artificial data. These artificial data, however, regularly induce causal fallacies of severe magnitude. Researchers who employ QCA-CS or QCA-IS in empirical analyses thus always risk moving further away from the truth rather than closer to it.

Qualitative Comparative Analysis (QCA) is a relatively young method of causal inference that continues to diffuse across management, political science, sociology, and neighboring areas of social research (Baumgartner and Thiem 2017a:955; Rihoux et al. 2013; Thiem 2017, 2018). At the same time, this diffusion process has been accompanied by a number of methodological debates about QCA. For example, a recent symposium in

Against this backdrop, Baumgartner and Thiem (2017b) have recently added an important front of inquiry by providing the first comprehensive evaluation of QCA’s three solution types: complex/conservative (QCA-CS), intermediate (QCA-IS), and parsimonious (QCA-PS). Across all of their sets of inverse-search trials, QCA-CS and QCA-IS proved unsound. Both of these solution types frequently committed causal fallacies of varying magnitude by presenting inferences that violated the very causal structure QCA was supposed to recover from a set of data, even under conditions otherwise ideal. For QCA-CS in particular, fallacies were severe: “between diversity values of 81.25 percent and 12.5 percent—the span covering the majority of configurational research designs—correctness does not even exceed 10 percent” (Baumgartner and Thiem 2017b:19-20).

Baumgartner and Thiem’s (2017b) results are all the more relevant because they conflict fundamentally with current convictions in a considerable part of the QCA literature, which have it that QCA-CS relies on empirical information only (Ragin 1987:110; Schneider and Wagemann 2012:162; Vis 2012:174; Wagemann and Schneider 2015:40) and that QCA-IS is preferable to QCA-PS and QCA-CS (De Meur, Rihoux, and Yamasaki 2009:155; Ragin 2008:171, 173; Ragin 2009:111; Ragin and Sonnett 2005:196; Schneider and Wagemann 2012:175; Schneider and Wagemann 2013:211).

To date, a methodological explanation for Baumgartner and Thiem’s (2017b) results has not been put forward. The present article fills this gap and resolves the seeming contradiction between Baumgartner and Thiem’s (2017b) findings and conventional views. More precisely, it demonstrates that, and how, QCA-CS and QCA-IS supplement empirical data with artificial data. However, these artificial data are often incompatible with the very causal structure QCA seeks to uncover from empirical facts, which induces causal fallacies of varying magnitude.

Current Views on QCA-CS and QCA-IS

In his pioneering book on the comparative method, Ragin (1987) introduced QCA-CS some 30 years ago. Ever since, it has been argued that this solution type is the most conservative one because it draws only on empirical data. Ragin (2008:173) writes that “[t]he complex solution […] does not permit any counterfactual cases and thus no simplifying assumptions regarding combinations of conditions that do not exist in the data.” In support, Schneider and Wagemann (2012:162) maintain that QCA-CS is “[c]onservative because […] the researcher […] is exclusively guided by the empirical information at hand.”

Despite the apparent advantage of relying on empirical information only, many methodologists soon began to criticize QCA-CS for the high complexity of the solutions it often produced, which supposedly detracted from the interpretability of its findings. In reaction to this perceived shortcoming of QCA-CS and the simultaneously growing skepticism toward QCA-PS, Ragin and Sonnett (2005) devised QCA-IS, which is now widely recommended to applied researchers as the most attractive of QCA’s three solution types (cf. Baumgartner 2015:840). As Ragin (2008:171) argues, “the intermediate solution […] is optimal because it incorporates only easy counterfactuals, eschewing the difficult ones that have been incorporated into the most parsimonious solution. The intermediate solution thus strikes a balance between complexity and parsimony […].” In consequence, solutions produced by QCA-IS “are superior to both the ‘complex’ and ‘parsimonious’ solutions and should be a routine part of any application of any version of QCA” (Ragin 2009:111). Similarly, Schneider and Wagemann (2012:175) hold that “[t]he rationale for creating intermediate solution terms is that, on the one hand, the conservative solution often tends to be too complex to be interpreted in a theoretically meaningful or plausible manner and that, on the other hand, the most parsimonious solution term risks resting on assumptions about logical remainders that contradict theoretical expectations, common sense, or both” (see also Schneider and Wagemann 2013:211).

Explaining the Incorrectness of QCA

Given the aforecited claims in conventional QCA literature that QCA-CS avoids counterfactuals and that QCA-IS provides some sort of golden mean between QCA-CS and QCA-PS, many applied QCA studies published over the last decade in management, political science, and sociology have presented solutions based on QCA-CS or QCA-IS to their readers. Against this background, Baumgartner and Thiem (2017b) have demonstrated in the most elaborate method evaluation of QCA to date that both QCA-CS and QCA-IS, in fact, fail to meet fundamental criteria for empirical research methods of causal inference. Irrespective of the form and complexity of the causal structure they presupposed, and irrespective of the amount of data fed into QCA, QCA-CS and QCA-IS often presented inferences that were in direct disagreement with the structure that should have been uncovered. As it was outside the scope of their article, Baumgartner and Thiem (2017b) did not present a methodological explanation for this finding. The remainder of the present article fills this gap.
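The logic of such an evaluation can be illustrated in miniature. The following Python sketch is not taken from Baumgartner and Thiem (2017b) or from any QCA software. It assumes a hypothetical data-generating structure in which the factor A alone is sufficient and necessary for the outcome, three hypothetical factors A, B, and C, and a hypothetical choice of three unobserved configurations. It then derives a conservative solution (logical remainders barred from minimization, which is equivalent to treating them as if they showed the absence of the outcome) and a parsimonious solution (logical remainders usable as simplifying assumptions) by brute-force Boolean minimization, and lists the factors each solution mentions that make no difference under the assumed structure.

```python
from itertools import combinations, product

FACTORS = ("A", "B", "C")  # hypothetical exogenous factors

def structure(a, b, c):
    """Hypothetical data-generating structure (an assumption): Y <-> A."""
    return a

def covers(term, row):
    """A term (1 = present, 0 = absent, None = not mentioned) covers a row."""
    return all(t is None or t == r for t, r in zip(term, row))

def prime_implicants(positives, forbidden):
    """Brute force: terms covering >= 1 positive row and no forbidden row,
    keeping only those that have no more general implicant."""
    implicants = [
        term for term in product((0, 1, None), repeat=len(FACTORS))
        if any(covers(term, p) for p in positives)
        and not any(covers(term, f) for f in forbidden)
    ]
    def more_general(g, t):
        return g != t and all(gv is None or gv == tv for gv, tv in zip(g, t))
    return [t for t in implicants
            if not any(more_general(g, t) for g in implicants)]

def models(positives, forbidden):
    """All smallest sets of prime implicants that cover every positive row."""
    pis = prime_implicants(positives, forbidden)
    for size in range(1, len(pis) + 1):
        found = [combo for combo in combinations(pis, size)
                 if all(any(covers(t, p) for t in combo) for p in positives)]
        if found:
            return found
    return []

def show(term):
    """Upper case = presence, lower case = absence, '*' = conjunction."""
    return "*".join(n if v else n.lower()
                    for n, v in zip(FACTORS, term) if v is not None)

def relevant_factors():
    """Factors whose flip changes the outcome in at least one configuration."""
    found = set()
    for row in product((0, 1), repeat=len(FACTORS)):
        for i, name in enumerate(FACTORS):
            flipped = row[:i] + (1 - row[i],) + row[i + 1:]
            if structure(*row) != structure(*flipped):
                found.add(name)
    return found

def mentioned(solution):
    """Set of factor names appearing anywhere in a solution."""
    return {ch.upper() for model in solution for term in model
            for ch in term if ch.isalpha()}

# Generate ideal data, then remove three configurations (limited diversity).
all_rows = list(product((0, 1), repeat=len(FACTORS)))
remainders = [(1, 0, 0), (1, 0, 1), (0, 1, 1)]  # hypothetical unobserved rows
observed = {r: structure(*r) for r in all_rows if r not in remainders}
positives = [r for r, y in observed.items() if y == 1]
negatives = [r for r, y in observed.items() if y == 0]

# Conservative: remainders are barred from minimization (treated like negatives).
cs = [[show(t) for t in m] for m in models(positives, negatives + remainders)]
# Parsimonious: remainders may be used freely as simplifying assumptions.
ps = [[show(t) for t in m] for m in models(positives, negatives)]

print("conservative:", cs, "spurious:", mentioned(cs) - relevant_factors())
print("parsimonious:", ps, "spurious:", mentioned(ps) - relevant_factors())
```

Under these assumptions, the conservative solution is A*B, which attributes causal relevance to B although B makes no difference to the outcome in the assumed structure, whereas the parsimonious solution is A. The check used here (every factor mentioned must be a difference-maker in the structure) is only a necessary condition for correctness and is deliberately simpler than a full evaluation design.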

To understand why QCA-CS and QCA-IS regularly fail in their inferences, the relevant components of the theory of causation QCA posits must first be recalled. In QCA, causes of an effect, in relation to some specified background against which causation is to be inferred, are functionally understood to be

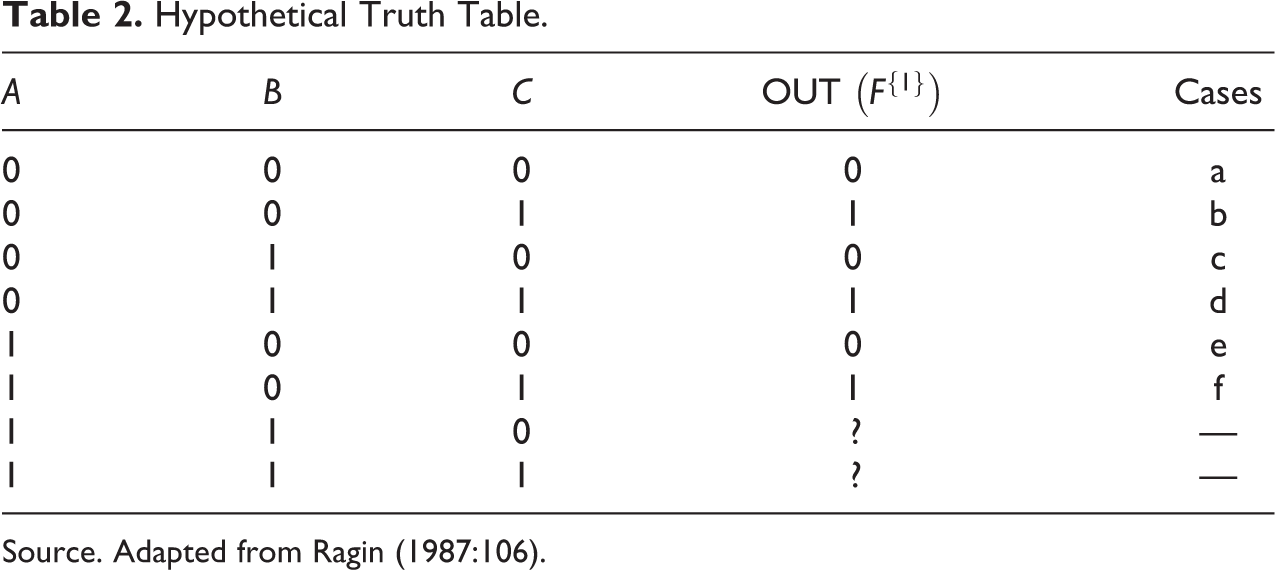

Truth Table for Establishing INUS (Insufficient but Nonredundant part of an Unnecessary but Sufficient condition) Conditionality.

Pairs of Boolean-algebraic conjunctions

For instance, if

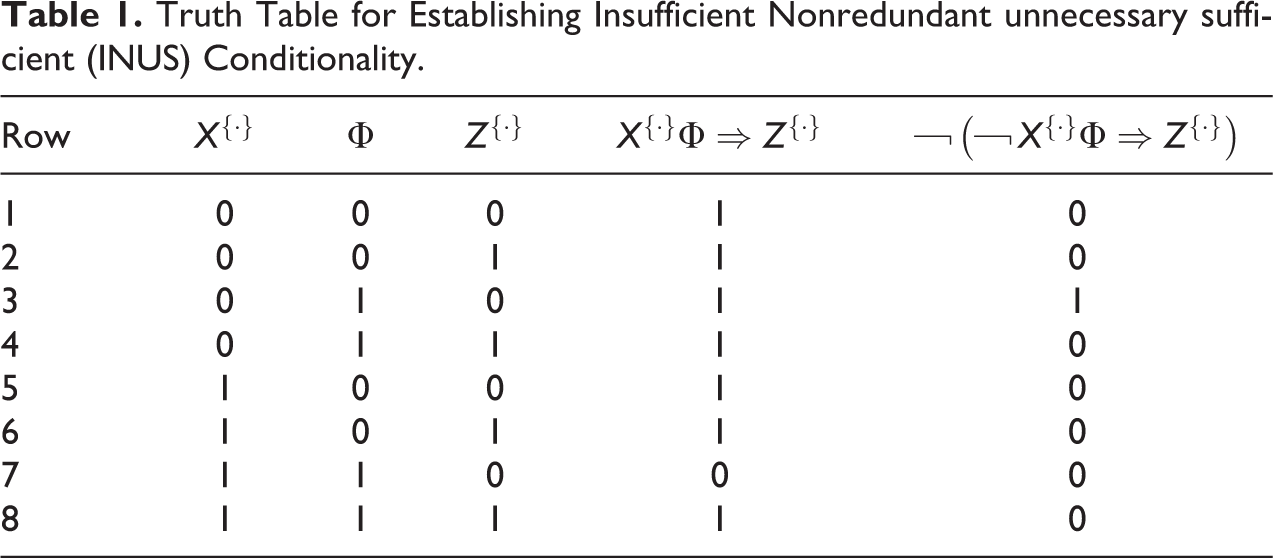

Now consider the truth table of three exogenous factors

According to Ragin (1987:105), “[a]

Conjunctively,

Conjunctively,

Clearly, these five claims are not all independent of each other: Claim (4) necessitates the truth of claims (1) and (2), claim (5) the truth of claims (1) and (3). Yet, how “conservative,” that is, how faithful to the empirical data, are these claims in actuality? Let us begin with claim (1) about

each of which satisfies the requirements for attributing causal relevance to

What about

How can QCA-CS attribute causal relevance to

However, the mere augmentation of the empirical data with matching artificial data does not, perforce, render QCA-CS incorrect. After all, QCA-CS may have outstanding predictive properties insofar as it lies about the current existence of a case, but not necessarily about the truth of the corresponding proposition in a future instantiation of that case. So as to understand what exactly causes QCA-CS to ultimately fail, all possibilities for a causal structure

If

QCA-CS goes beyond the facts, but it would not commit a causal fallacy if either

Although QCA-IS has also been shown to be incorrect by Baumgartner and Thiem (2017b), its performance has always been at least as good as that of QCA-CS, and usually better, but it has never been better than that of QCA-PS, and usually worse. This result can be explained by the distinction QCA-IS makes between so-called

As intermediate solutions are derived from pairings of models from a conservative solution and a parsimonious solution of an analysis that uses the same set of empirical data, QCA-PS must first be applied to Table 2. The parsimonious solution for the truth table in Table 2 consists of one model, namely

Whether QCA-IS will commit a causal fallacy on

Conclusions

QCA is a relatively young method of causal inference that continues to diffuse across the social sciences. However, recent research has demonstrated that the complex/conservative solution type and the intermediate solution type of QCA fail fundamental tests of methodological correctness for configurational comparative methods. A methodological explanation for this finding has not been put forward so far.

The present article has filled this gap. It has illustrated that, and how, conservative and intermediate solutions supplement the empirical data with matching artificial data. Yet, these artificial data often lead to inferences that violate the very causal structure that generated the empirical data in the first place and that QCA is meant to uncover. Researchers who employ QCA-CS or QCA-IS in empirical analyses thus always risk moving (much) further away from the truth rather than closer to it. For producing evidence-based findings not affected by such risks, QCA-PS provides the appropriate method.

Acknowledgement

Previous versions of this article were presented at the Annual Conference of the Methods of Political Science-Section of the German Political Science Association, University of Mainz, Germany, 12-13 May 2017; and the 7th General Conference of the European Political Science Association, Milan, Italy, 22-24 June 2017. I thank all participants at these conferences whose feedback and comments helped to improve this article. Last, but not least, I also wish to thank the four anonymous reviewers at Sociological Methods & Research and Lusine Mkrtchyan for their time and enormously helpful feedback.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Swiss National Science Foundation (PP00P1_170442).