Abstract

Polyrhythms are central to many musical practices. However, the extent to which people can simultaneously track multiple non-metrically related beat patterns remains unclear. Here we studied people's ability to simultaneously track multiple periodic streams containing beat patterns that cannot be perceived according to a single metric framework. Participants listened to one or two beat patterns simultaneously in three conditions: single stream, selectively attending to one of the streams, and simultaneously attending to both streams. Using a probe-tone paradigm, we assessed whether they were tracking the pattern's periodicity, while recording their brain activity using EEG. The EEG showed limited effects of attention. However, our behavioral results show that, while performance during dual attending is overall worse than selective attending and single stream listening, people are able to perform the dual attending task above chance levels, indicating that they can at least extract and evaluate temporal information from both periodic streams simultaneously. We also found evidence of one group being better at dual attending than another. Our findings suggest that tracking of polymetric streams is possible, and that this ability may depend on individual differences.

Introduction

Rhythms are a ubiquitous feature of human experience: our hearts beat, our lungs breathe, and our movement can spontaneously synchronize with others. Musical rhythms are widely enjoyed and danced to across cultures (Mehr et al., 2019; Singer et al., 2023). Beyond their role in music and dance, periodic rhythms are also essential in speech and general motor control (e.g., Birkett & Talcott, 2012; Harding et al., 2019; Thaut et al., 2015). Therefore, beat perception, that is, extracting temporal regularities from a perceived underlying pulse that arises in response to (musical) rhythm (Honing, 2013; Large & Palmer, 2002; Merchant et al., 2015; Møller et al., 2021; Nozaradan et al., 2011; Rathcke et al., 2024), is an essential skill for many of our daily activities.

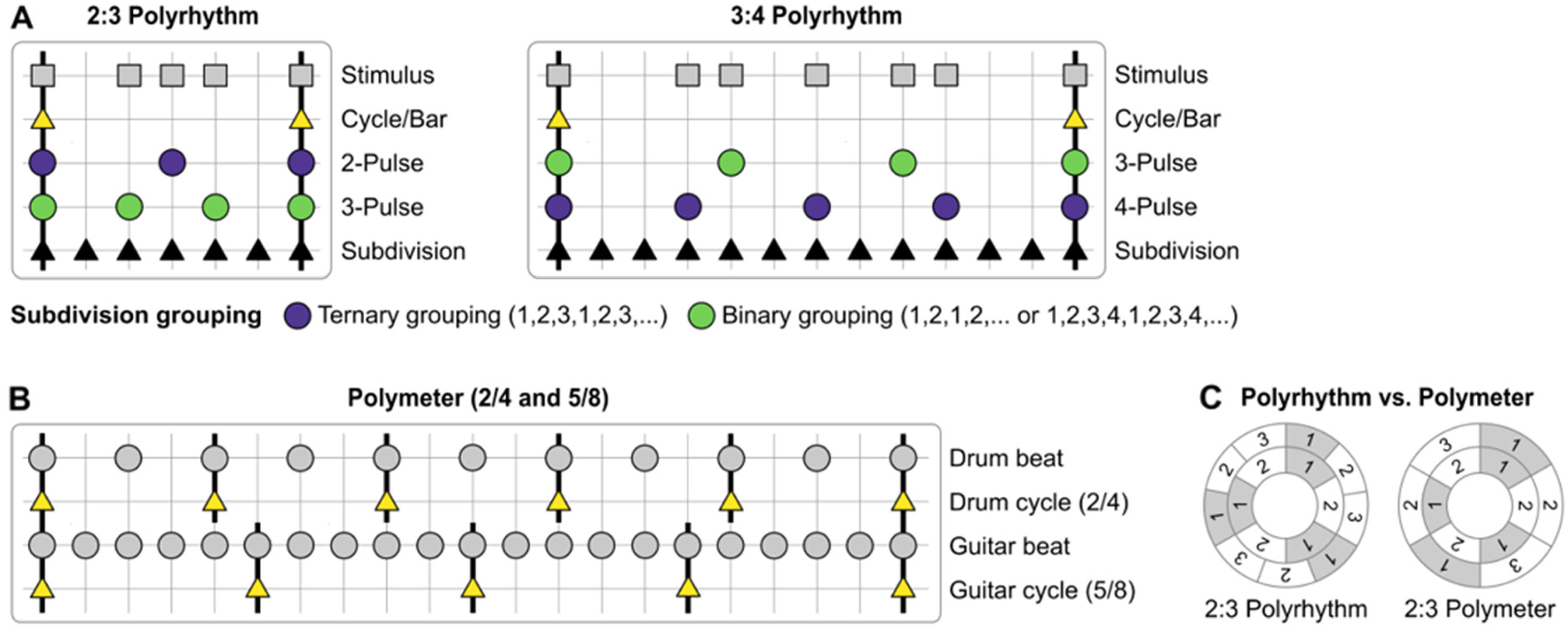

In many styles of music, such as much of European classical music and contemporary global pop music, beats are usually perceived within groupings of regularly alternating weak and strong beats. This grouping is known as the meter, which provides the timing framework from which a given rhythmic pattern is understood (e.g., a waltz is a three-beat meter of strong-weak-weak alternation; Levitin et al., 2018; London, 2012). The metric framework relies on multiple levels of subdivisions whose periods are integer multiples of each other, such as half-time or double-time. Rhythms of different meters can be combined if the meters share a common metrical level. For example, when a three-beat meter rhythm is combined with a four-beat meter rhythm, the metrical level of the bar can be shared, creating a 3:4 polyrhythm. The beats of each level are of different lengths, so they equally subdivide the bar by 3 and 4, respectively (see Figure 1). In such instances, listeners perceive the combined rhythms according to one of the meters, either three- or four-beat (Møller et al., 2021; Stupacher et al., 2017). But when two rhythms cannot be perceived as sharing a common metrical level, it is unclear whether the beats of those separate rhythms can be perceived simultaneously.

Adapted from Nijhuis et al., (2026). (A) Schematic representation of 2:3 and 3:4 polyrhythms (Stimulus) and their key metrical levels ranging from

Polyrhythms are therefore largely taught and perceived as integrated rhythms of repeating sequences that fit within the perceived meter (Jagacinski et al., 1988). When people are asked to tap the beat, they are strongly biased towards a binary meter (divisible by two), although it is also possible to prime towards a ternary meter (divisible by three) (Møller et al., 2021). Many studies report the perception of a composite rhythm (i.e., the combination of all the event onsets in the two (or more) sequences placed on a single timeline), suggesting integrated rather than segregated perception of the two separate streams within the rhythm (e.g., Jagacinski et al., 1988; Keller & Burnham, 2005). To perceive two separate streams of unrelated meters simultaneously would represent true polymetric perception, and is largely thought to be impossible (Jagacinski et al., 1988; Keller & Burnham, 2005; London, 2012).

Yet polymetric structures are not altogether uncommon in music. Polyrhythm and polymeter are important compositional techniques in various musical practices around the world, from West-African drumming to jazz and death metal (see Poudrier & Repp, 2013 for a summary). In particular, drummers, conductors, and DJs might benefit from polymetric perception, when tracking multiple streams in complex musical pieces.

For true polymetric perception to occur, metrically competing rhythmic structures must be tracked individually but simultaneously. While each rhythm can have its own metrical structure of alternating patterns of strong and weak beats, the tempi of the beats are not related in a 1:N manner (Poudrier & Repp, 2013). This would require divided (or segregated) attention, rather than integrative attention (Loui & Guetta, 2019).

The ability to segregate multiple rhythmic auditory streams, a process that is part of Auditory Scene Analysis (ASA), partially depends on acoustic features. Key factors that aid segregation of streams are pitch and tempo (Bregman & Campbell, 1971; Deike et al., 2012; van Noorden, 1975). The larger the pitch difference and the faster the presentation of the two streams, the easier it is to segregate the streams without interference (Bregman & Campbell, 1971; Miller & Heise, 1950; van Noorden, 1975). In contrast, when two rhythmic auditory streams are similar in pitch and slower, they can interfere with one another and will ultimately be integrated into a single composite stream, making it difficult to track them individually (Snyder & Large, 2005).

Poudrier and Repp (2013) suggest that humans seem to rely on such a composite rhythm to track two separate rhythmic streams. Using a task that required detection of timing deviations, they first tested the perception of 2:3 polyrhythms with and without phase shifts (of an eighth note), which can relatively easily be perceived as one composite rhythmic pattern. These rhythms were made up of two streams that represented two non-isochronous rhythms of different meters (2/4 and 6/8). The authors first tested participants’ performance when selectively attending to one stream. Then, when told to attend to both streams simultaneously, participants outperformed the level predicted by a random selective attending strategy (randomly selecting one stream to attend to), which was calculated from their selective attending performance. When the authors tested polyrhythms of higher complexity (where the phase relationship was shifted by half of an eighth note), with ambiguous metrical frameworks which could not easily be perceived as a single integrated composite rhythm, participants seemed unable to simultaneously keep track of the two rhythms in the separate streams. Hence, Poudrier and Repp (2013) could not find statistical support for true polymetric perception requiring divided attention to simultaneously monitor two rhythmic auditory streams, and suggested that polymetric perception is likely largely supported by perception of the composite rhythm. However, some individual participants' behavioral patterns did indicate better polymetric perception than expected.

However, Demany et al. (2015) suggest that humans

Stream segregation has previously indeed been found to be influenced by individual capacities such as musical training (Benocci & Calcus, 2024), attention (Dalton et al., 2009), and working memory (Conway et al., 2001; Lotfi et al., 2016). Training such individual capacities has been shown to improve stream segregation, for example in children with Auditory Processing Disorder (Moossavi et al., 2015). Highly trained musicians have also been shown to have higher working memory capacities (Talamini et al., 2016) and better selective attending (Loui & Wessel, 2007) and beat perception abilities (Nguyen et al., 2022; for a review see Repp & Su, 2013). This highlights the potential for further investigation into the interindividual differences of segregating and integrating streams in complex polyrhythm perception.

Neural tracking of rhythm can be assessed using frequency tagging of the beat (Nozaradan et al., 2011; 2014). The frequency spectrum of EEG activity while listening to a rhythm shows clear peaks at beat-related frequencies, known as the Steady State Evoked Potential (SSEP). The magnitude of the beat-related SSEPs is functionally relevant. For example, the SSEP is larger when the beat is played by low-frequency notes than high-frequency notes, which explains the special role bass sounds play in conveying the beat of music (Lenc et al., 2018). Furthermore, the strength of neural beat tracking has been correlated with the ability to synchronously tap to the beat (Nozaradan et al., 2016).

Using frequency tagging, Stupacher et al. (2017) demonstrated that the brain tracks the beat of both rhythms in complex auditory streams like polyrhythms. SSEPs reflected the rhythmic structure of both rhythms, with beat-related SSEPs even present during silent periods after listening. The study also underlined the functional relevance of neural tracking for beat perception, as these neural responses correlated positively with the behavioral accuracy of temporal judgments. Frequency tagging has also recently been used to study the perceptual integration of multiple rhythms in the visual domain, during interpersonal coordination (Alp et al., 2017; Varlet et al., 2020). Not only can the tagged frequency of each person's movement during an interaction be observed in the cortical signal, but intermodulation frequencies (frequency A + B) were also identified. The intermodulation frequency was shown to be functionally relevant for the integration of a person's own and the other's movement, with higher amplitude of the integration frequency correlating with higher movement synchrony. Alp et al. (2017) also noted higher-order perceptual integration in addition to low-level sensory integration. These studies highlight the utility of analyzing neural dynamics to understand the perception and processing of complex stimuli like auditory polyrhythms.

In this study, we used a behavioral beat perception task (judging a probe tone) and EEG frequency tagging to investigate whether people can track two simultaneous periodicities that do not share a metrical framework. We presented listeners with either one or two auditory periodicities at two different non-metrically related tempi. When presented with two periodicities, listeners were asked to either attend to one (selective attending) or both (dual attending). Participants judged a probe tone at the end of the periodicities as in or out of time with either of the patterns, indicating whether the periodicities were perceptually tracked. In addition to the temporal judgment trials, participants tapped along in separate trials to assess movement tracking of the two beats. We recorded auditory SSEPs using EEG and tested whether the amplitudes of neural frequencies of the two periodicities reflected simultaneous tracking. To assess effects of individual differences, we tested each participant's beat perception abilities.

We hypothesized that people

Methods

Participants

Forty-eight participants volunteered to take part (26 females and 22 males, aged from 18 to 47; M = 24.8, SD = 7.15), receiving £20. All participants had normal hearing. The median years of musical experience (YoME) of participants was 4 ± 11.75.

Participants who did not perform above chance level (50%) in the single track listening condition were excluded (n = 6), since this suggests they were not able to perform any of the tasks. This left 42 participants for analysis (21 male, 21 female, MedianYoME = 3 ± 9.5).

There were six additional participants with corrupt files, faulty triggers, or missing data from the EEG recordings, that prevented reading in and processing their EEG data (see detailed list in supplementary materials). Hence, 36 participants were analyzed for the EEG results (MedianYoME = 3.5 ± 10.0). Five participants had to be excluded from the analysis of tapping data altogether, because their taps did not register consistently, or they did not perform the tapping at all (e.g., > 5 s of no tapping, see supplementary materials for details). Therefore, 43 participants were included in tapping-only analyses and 32 participants remained for correlation analyses between tapping and EEG measures (i.e., one participant had been excluded for both tapping and EEG).

Stimuli

Participants were presented with two isochronous periodicities of a woodblock with different tempi, which, when presented simultaneously, did not combine into a composite rhythm within the same metric framework. Both periodicities had loudness accentuations every other beat, expressed via the increase of sound level (by 8 dB). This implied a binary metrical structure. Loudness was chosen as the metric cue (Grahn & Rowe, 2009) over other cues such as pitch and melody, to avoid adding additional complexity to the already complex patterns.

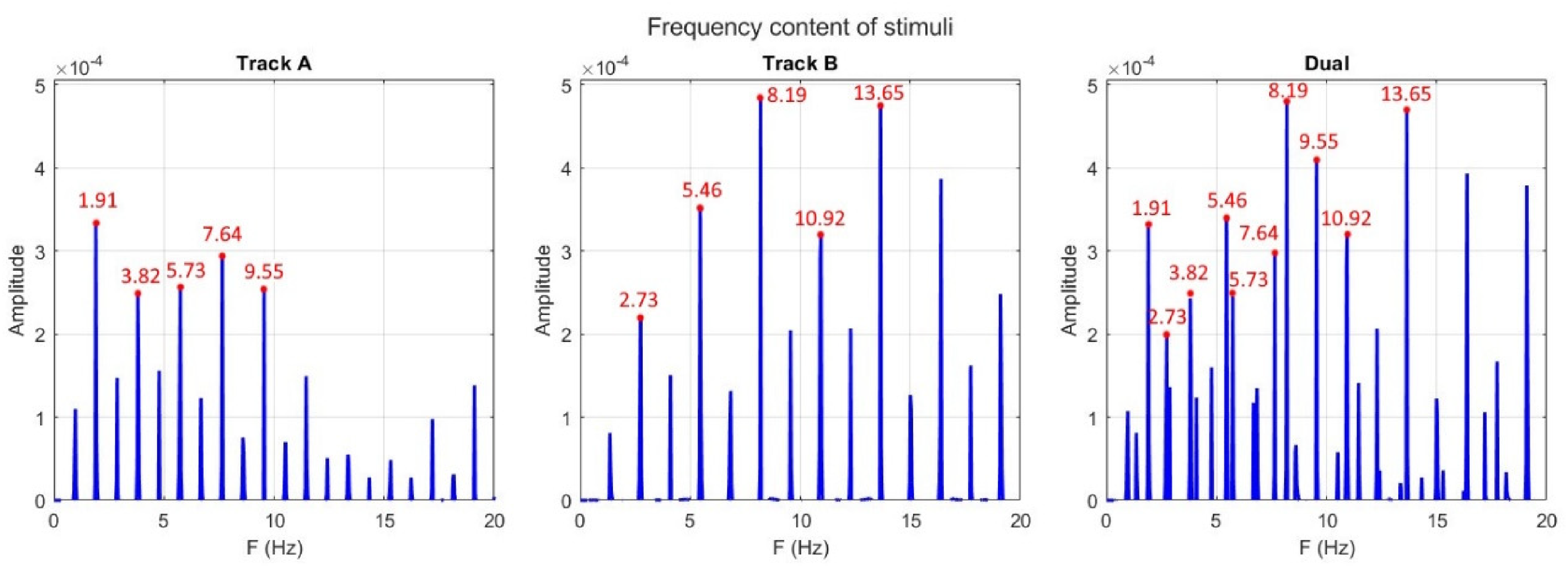

Each pattern was presented at a unique tempo: pattern A had a tempo of 114.6 BPM and pattern B a tempo of 163.8 BPM. Patterns with these two tempi are difficult to perceptually combine into a single metric framework, as their phases do not align until after eight onsets of the A pattern and 11 onsets of the B pattern. Furthermore, at the point of alignment, there is still a small phase difference between the two (B is 0.002 s ahead of A), which accumulates over the course of the whole pattern (0.015 s at the final alignment), but this difference is not perceptually detectable (London, 2012). Pattern A was five semitones higher in pitch than pattern B. As such, the two patterns fall within the ambiguous zone of streaming and integration (Bregman & Campbell, 1971; Miller & Heise, 1950; van Noorden, 1975). Furthermore, the combination of higher pitch with slower tempo for pattern A versus lower pitch with faster tempo for pattern B balanced out the attention given to each beat pattern based on pitch and speed (Møller et al., 2021). The patterns were continuously presented for 30 s, followed by a probe sine tone. This probe could either coincide with the next imagined note of pattern A or B, or arrive late by 30% of the period of either pattern. Stimuli (Figure 2) were presented via in-ear headphones (Etymotic Research Studio, ER2SE).
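The near-alignment of the two patterns follows directly from the stated tempi. The sketch below is only an illustrative check, not the authors' stimulus code: it converts the tempi to beat periods and searches for the first pair of onsets that nearly coincide. The function names and the 5 ms tolerance are assumptions introduced here.

```python
# Beat periods from the tempi stated in the text (BPM -> seconds per beat).
TEMPO_A_BPM = 114.6
TEMPO_B_BPM = 163.8
period_a = 60.0 / TEMPO_A_BPM
period_b = 60.0 / TEMPO_B_BPM


def onset_times(period, duration):
    """Onset times (s) of an isochronous pattern that starts at t = 0."""
    n_onsets = int(duration / period) + 1
    return [k * period for k in range(n_onsets)]


def first_near_alignment(period_a, period_b, tolerance=0.005, duration=30.0):
    """Find the first onsets after t = 0 that nearly coincide.

    Returns (onset count in A, onset count in B, lag in s), where the counts
    are 1-based and a positive lag means the B onset precedes the A onset.
    The 5 ms tolerance is an illustrative assumption, not a study parameter.
    """
    onsets_a = onset_times(period_a, duration)
    onsets_b = onset_times(period_b, duration)
    for i in range(1, len(onsets_a)):
        j = round(onsets_a[i] / period_b)  # index of the closest B onset
        if j < len(onsets_b) and abs(onsets_a[i] - onsets_b[j]) <= tolerance:
            return i + 1, j + 1, onsets_a[i] - onsets_b[j]
    return None


a_onset, b_onset, lag = first_near_alignment(period_a, period_b)
print(a_onset, b_onset, f"{lag * 1000:.2f} ms")
```

Under these assumptions the first near-coincidence falls on the eighth onset of A and the eleventh onset of B, with B leading by roughly two milliseconds.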

Amplitude spectra (noise corrected) for track A, B, and their combined track. Audio files and code for the calculation of this FFT can be found on the OSF repository (https://osf.io/zrwmy/files).

Procedure

On arrival, participants were provided with an information sheet that described the perceptual task and the EEG equipment used. All participants gave informed consent. Participants were then fitted with the EEG cap and electrodes. Participants first completed the computerized adaptive beat alignment test (ca-BAT, v0.11.0; Harrison & Müllensiefen, 2018), run via R (version 4.2.2), to test their general beat perception skills.

Participants then received further instructions about the task and performed a training block of 12 trials to learn to discriminate between pattern A and B. To pass the training, participants had to recognize both patterns correctly and answer the question ‘which pattern is the slower, higher pitched pattern, A or B?’ correctly. If they answered incorrectly, the training block was set to restart; however, all participants passed the training in one attempt.

The experiment was run in PsychoPy (version 2022.1.4) on a Dell Latitude (Windows version) with a screen refresh rate of 60 Hz. The trials were self-paced: participants pressed the space bar to continue to the next trial. Participants were encouraged to take breaks between the blocks of trials. Before starting the dual attending block, participants were once again asked to confirm that they were able to recognize which beat pattern was which.

Task

Participants’ main task was to listen and attend to beat patterns and judge whether a probe tone that appeared after the pattern would have coincided with the next beat in the attended pattern, had it continued (i.e., ‘did the probe tone coincide with the beat you were attending to?’).

They judged the probe tone in three conditions: single pattern listening, selective attending, and dual attending. During single pattern listening participants heard a single beat pattern at a time (either A or B) and judged whether the probe tone was in time with the pattern (y/n). For selective attending, participants heard both beat patterns but were instructed to attend to either pattern A or B. They then judged whether the probe tone was in time with the attended pattern or not (y/n). During the dual attending condition, participants had to actively attend to both patterns simultaneously and judge whether the probe coincided with pattern A, B, or neither (a/b/n). We wanted the probe task to reflect the cognitive process and task load when asking to track both patterns simultaneously. If they are tracking both sequences, they should be able to evaluate the interval for both tracks.

A total of 62 trials were grouped into blocks according to conditions. Block 1 contained 20 single pattern listening trials, including 10 repetitions of each pattern, with five ‘on the beat’ (ON) probe trials and five ‘late’ probe trials (OFF). Block 2 contained 20 trials for the selective attending condition, with half of the trials instructing to attend to A and the other half to attend to B. Again, each pattern had five ON and five OFF probe trials. Block 3 contained only 12 dual attending trials, as the stimuli and task are the same for all trials, consisting of pattern A and B playing simultaneously. Half the trials contained probes for pattern A, and the other half for pattern B. Each group of six contained three trials that were ON and three trials that were OFF. This meant that half of the trials contained ON probes where participants were meant to answer A or B and the other half contained OFF probes where the correct answer was ‘neither’ (n). The final block (4) contained 10 tapping trials, 2 for each condition (single tapping to A, single tapping to B, selectively tapping to A, selectively tapping to B, free tapping with dual presentation), to assess motor entrainment. Trials were randomized within blocks.

Behavioral Analysis

The behavioral performance was quantified as the ratio of correct responses within each block. In the single listening block, for example, this would be the number of correct responses divided by 20 trials.

Predicted Behavioral Performance

To investigate whether participants actually attended to and could track both beat patterns simultaneously during the dual attending condition, we tested whether they outperformed not only random chance (33%) but also a selective attending strategy. This strategy assumes that, without instruction to attend to a particular pattern, participants will randomly choose either pattern A or B to attend to, which would be the correct pattern in 50% of trials. This strategy is similar to the selective attending trials, where participants attend to only one pattern. Therefore, based on each participant's performance in the selective attending block, we can estimate how many of the trials they would answer correctly if taking a selective attending strategy during the dual attending trials. The remaining trials would then be performed at chance level.

More specifically, within the 12 dual attending trials, six trials have the probe tones ON the beat, of which three are on A and three on B. During ON trials, if the participant is only paying attention to one pattern (for example all A), they are correct 50% of the time, and the selective attention performance applies to the three trials where the correct pattern is attended:

The remaining six trials are all OFF beat trials, where the probe tone coincides with neither (n) pattern A or B. Therefore, regardless of which pattern is being attended (A or B), participants would correctly identify them as OFF at the rate of their selective attending performance. Participants would correctly identify that the probes are OFF in

This same calculation applies to the three ON beat trials where the probe tone falls in line with the other (not attended) pattern. In (Rselective * 3) trials participants should correctly identify the probe as OFF beat, therefore leaving a 50% chance of correct response (the other pattern or neither):

Adding up the six OFF and three unattended ON responses results in

The predicted performance that participants would need to outperform to establish a dual attending strategy was thus calculated with the following formula:

Predicted performance ratio (using a selective attending strategy) =

We refer to this as their predicted score from hereon.

EEG Recordings and Analysis

EEG signals were recorded at a sampling rate of 2048 Hz using an ANT Neuro (Enschede, Netherlands) mobile EEG system with 64 channels, placed over the scalp according to the international 10/10 system. All electrodes were referenced to the CPz electrode and their impedance was kept below 50 kΩ.

Upon the start of the trial and stimulus presentation, a trigger was sent via serial port command to ensure synchronized EEG recording with the audio presentation.

The EEG data were processed using MATLAB 2022a (The MathWorks, Inc., Natick, MA). Data were first segmented into 30 s trials, then high-pass filtered with a cut-off frequency of .2 Hz to remove slow drifts in the recorded signals and notch filtered to remove 50 Hz (and corresponding harmonics) electrical power contamination with a bandwidth of 1 Hz using a fourth-order Butterworth filter. Based on visual inspection, channels containing excessive artifacts or noise were then interpolated with the neighboring channels (i.e., an average of 2 [SD = .7] interpolated electrodes per participant, and never more than 3 electrodes). After filtering, the EEG signals were decomposed using an independent component analysis (FastICA), as implemented in Fieldtrip (Oostenveld et al., 2011) to remove eye movement artifacts. Based on visual inspection of the topography and time-course of independent components, components reflecting eye-blinks and lateralized eye movements were removed from the data (i.e., an average of .51 components [SD = .74] per participant). EEG data were then re-referenced to the average of all scalp electrodes.

At the next stage of data processing, we used a fast Fourier transform (FFT) with zero-padding to compute the amplitude spectra with a frequency resolution of 0.01 Hz, so that frequency bins aligned with the frequencies of interest (i.e., 1.91 and 2.73 Hz). To examine the occurrence of significant EEG responses at the beat-related frequencies, we pooled the spectra of all EEG channels together and computed Z-scores at each frequency bin as the difference in amplitude between that frequency bin and the mean of 20 neighboring frequency bins (excluding the four immediately adjacent frequency bins, two on each side), divided by the standard deviation of those 20 neighboring bins. Z-scores were computed at the group level (amplitude spectra averaged across conditions and participants) and at the individual level (amplitude spectra averaged across conditions). EEG responses at specific frequency bins were considered significant when the Z-score was greater than 1.645 (p < .05, one-tailed), indicating a signal amplitude significantly larger than the background noise, in line with previous studies that used frequency-tagging techniques (Jacques et al., 2016; Quek et al., 2018). Background noise and muscular artifacts affect amplitude spectra over a large range of frequency bins around those of interest (Varlet et al., 2020). To minimize the effect of such irregularities on participants’ EEG responses, baseline subtraction was applied. At each frequency bin of the amplitude spectra we subtracted the average amplitude of the 20 neighboring frequency bins excluding the two immediately adjacent frequency bins (Jacques et al., 2016; Lenc et al., 2018; Nozaradan et al., 2011; Varlet et al., 2020). Finally, noise-subtracted amplitude spectra averaged across all EEG channels were computed for each participant and condition and then log-transformed to satisfy normality assumptions.
The log-transformed SSEP amplitudes were then used for further statistical analyses to compare the amplitude of participants’ beat-related frequency responses (SSEPs) across the different conditions and to investigate correlations with the behavioral performance on the probe-tone task.
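The neighbor-bin Z-score and baseline subtraction described above can be sketched in a few lines. This is a minimal illustration on a synthetic spectrum, not the authors' MATLAB pipeline. The helper names, the synthetic data, the use of the sample standard deviation, and the choice to skip two bins on each side in both steps are assumptions.

```python
from statistics import mean, stdev


def neighbor_bins(spectrum, idx, n_neighbors=20, n_skip=2):
    """Return amplitudes of `n_neighbors` bins around `idx` (half per side),
    skipping `n_skip` immediately adjacent bins on each side."""
    half = n_neighbors // 2
    start = idx - n_skip - half
    stop = idx + n_skip + half
    if start < 0 or stop >= len(spectrum):
        raise ValueError("bin too close to the edge of the spectrum")
    below = spectrum[start:idx - n_skip]
    above = spectrum[idx + n_skip + 1:stop + 1]
    return list(below) + list(above)


def z_score(spectrum, idx, **kwargs):
    """Amplitude at `idx` relative to the mean and SD of its neighbors."""
    neighbors = neighbor_bins(spectrum, idx, **kwargs)
    return (spectrum[idx] - mean(neighbors)) / stdev(neighbors)


def baseline_subtract(spectrum, idx, **kwargs):
    """Amplitude at `idx` minus the mean amplitude of its neighbors."""
    return spectrum[idx] - mean(neighbor_bins(spectrum, idx, **kwargs))


# Synthetic amplitude spectrum at 0.01 Hz resolution (made-up values):
# a small deterministic "noise" floor plus one peak at 1.91 Hz.
RESOLUTION_HZ = 0.01
spectrum = [1.0 + 0.1 * ((i * 7) % 5 - 2) / 2 for i in range(400)]
beat_bin = round(1.91 / RESOLUTION_HZ)
spectrum[beat_bin] += 2.0

z = z_score(spectrum, beat_bin)
corrected = baseline_subtract(spectrum, beat_bin)
print(z > 1.645, round(corrected, 3))
```

Keeping the neighbor selection in one helper makes it easy to vary the number of skipped bins, which the text specifies differently for the two steps.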

In addition to the fundamental frequency and harmonics of the beat frequencies in track A and B, we also explored intermodulation frequencies representing non-linear integration between stimuli (i.e., A + B = 4.64 Hz, and 2*A + B = 6.55 Hz) (Alp et al., 2017; Norcia et al., 2015; Varlet et al., 2020).
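The frequencies of interest follow arithmetically from the stimulus tempi and recording parameters reported above. The sketch below reproduces that arithmetic; the variable and function names are illustrative assumptions, and the FFT length is simply the number of samples implied by a 0.01 Hz resolution at 2048 Hz.

```python
SAMPLING_RATE_HZ = 2048      # EEG sampling rate stated in the text
RESOLUTION_HZ = 0.01         # target frequency resolution
TRIAL_DURATION_S = 30        # length of each analyzed trial

# Beat frequencies from the tempi (BPM / 60), rounded to the bin resolution.
f_a = round(114.6 / 60, 2)
f_b = round(163.8 / 60, 2)


def intermodulation(f1, f2, n, m):
    """Intermodulation term n*f1 + m*f2 (n, m are integer weights)."""
    return n * f1 + m * f2


first_order = round(intermodulation(f_a, f_b, 1, 1), 2)    # A + B
second_order = round(intermodulation(f_a, f_b, 2, 1), 2)   # 2*A + B

# Samples needed for the target resolution versus samples in one trial;
# the difference would be filled by zero-padding.
n_fft = round(SAMPLING_RATE_HZ / RESOLUTION_HZ)
n_trial = SAMPLING_RATE_HZ * TRIAL_DURATION_S
n_padding = n_fft - n_trial

print(f_a, f_b, first_order, second_order, n_fft, n_padding)
```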

Statistical Analyses

Statistical analyses were conducted using JASP (version 0.16.4.0). Mixed ANOVAs were performed with Greenhouse–Geisser correction applied when the assumption of sphericity was violated. Pairwise contrasts were used to further examine the significant main effects using Bonferroni corrections for multiple comparisons.

To compare the behavioral performance across the different conditions, a mixed ANOVA with the between-factor Group (musician, non-musician), and the within-factors Pattern (A: 1.91 Hz; B: 2.73 Hz), and condition (Single pattern listening, Selective attending, and Dual attending) was performed on the ratio of correct responses.

The behavioral performance during dual attending was compared to the chance level (33.33%) with a one-sample t-test, and to participants’ predicted performance under a selective attending strategy with a two-tailed paired samples t-test.

Neural tracking strength, operationalized as the beat-related EEG amplitude peaks (SSEPs), was compared across the different conditions using a one-way repeated measures ANCOVA with the factors Group (musician, non-musician), Track (A: 1.91 Hz; B: 2.73 Hz), and condition (Single pattern listening A, Single pattern listening B, Selective attending A, Selective attending B, and Dual attending).

To investigate the relation between the neural responses and the behavioral performance on the probe task, linear correlations (multiple regressions) were calculated between the behavioral performance (ratio of correct responses) and the amplitude of the beat-related frequency peaks. Finally, we explored correlations between participants’ beat perception ability as measured by their ca-BAT performance and their neural and behavioral responses.

To evaluate tapping performance, we computed the discrete relative phase between the onsets of the sounds in the beat patterns and the tap times of participants, expressed between 0 and 360 degrees. These asynchronies were calculated by finding the closest beat to each tap, to avoid problems with missed taps. We then used circular statistics to compute the average relative phase (mean vector angle) and standard deviation of the relative phase (resultant vector length) for each participant (Batschelet, 1981). A smaller relative phase (mean vector angle) indicates more accurate tapping, as the temporal gap between the tap and the beat is smaller. A longer resultant vector, in contrast, indicates more precise tapping, as it results from a less variable relative phase. These measures were then compared across conditions using repeated measures ANOVAs and paired t-tests.
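The relative-phase measures above can be illustrated with a short sketch. It assumes an isochronous pattern with a known first onset and uses made-up tap times; the function names are hypothetical and this is not the authors' analysis code.

```python
import math


def relative_phases(tap_times, period, first_onset=0.0):
    """Relative phase (degrees, 0-360) of each tap to its closest beat."""
    phases = []
    for tap in tap_times:
        k = round((tap - first_onset) / period)       # closest beat index
        asynchrony = tap - (first_onset + k * period)
        phases.append((360.0 * asynchrony / period) % 360.0)
    return phases


def circular_summary(phases_deg):
    """Mean vector angle (degrees, 0-360) and resultant vector length (0-1)."""
    n = len(phases_deg)
    c = sum(math.cos(math.radians(p)) for p in phases_deg) / n
    s = sum(math.sin(math.radians(p)) for p in phases_deg) / n
    angle = math.degrees(math.atan2(s, c)) % 360.0
    length = math.hypot(c, s)
    return angle, length


# Made-up example: ten taps that each land 25 ms after a 0.5 s beat.
taps = [k * 0.5 + 0.025 for k in range(10)]
angle, length = circular_summary(relative_phases(taps, period=0.5))
print(round(angle, 2), round(length, 3))
```

Because every tap in this toy example has the same asynchrony, the resultant vector length is at its maximum; more variable tapping would shorten it.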

Whenever assumptions of normality were violated, non-parametric tests were performed.

Transparency and Openness

Data were collected in 2022. This study's design was not pre-registered. Data, stimuli, and statistical analysis files are posted on OSF: https://osf.io/zrwmy/overview (Nijhuis & Witek, 2025).

Results

Behavioral Results

Dual Attending Performance

In the dual attending condition, participants outperformed chance levels (33%) in a one-sample t-test (

Participants outperforming (a) chance level and (b) expected success rate during dual attending on the behavioral probe-tone task. Error bars represent 95% CI.

A mixed repeated measures ANOVA showed a significant effect of attending condition on the ability to correctly judge the probe tone (

Performance on the probe-tone task (ratio correct responses) across attending conditions. Error bars represent 95% CI.

Tapping

Accuracy

A significant interaction between track and attending condition was found (

Tapping performance represented on unit circles. In the left column are the tapping to A conditions (top: single tapping, bottom: selective tapping), in the middle column are the tapping to B conditions (top: single tapping, bottom: selective tapping), and in the right column are the free tapping conditions (top: referenced to pattern A, bottom: referenced to pattern B).

The significant main effect of the attentional condition on tapping accuracy is driven by the pattern found for tapping to track A, as described in the interaction (

Across conditions, accuracy of tapping was lower for track B, as indicated by the increase in the circular statistic angle of the mean resultant length (track B

The single tapping condition was significantly more accurate in track A (

Precision

There was a significant main effect of condition (

Free Tapping to Dual Beat Patterns

When investigating the free tapping during dual attending, we allocated the trials depending on whether their inter-tap intervals (ITIs) were on average within 10% above or below the inter-onset interval (IOI) of A or B. We found a roughly equal split between participants choosing to tap along to pattern A (10) or B (8), changing between trials (12), or tapping a different tempo altogether (7) (Figure 6).
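The trial allocation rule above can be sketched as follows. This is an illustrative reading of the ±10% criterion using the stated tempi; the function name, the labels, and the example tap times are assumptions.

```python
IOI_A = 60.0 / 114.6   # inter-onset interval of pattern A (s)
IOI_B = 60.0 / 163.8   # inter-onset interval of pattern B (s)


def classify_trial(tap_times, tolerance=0.10):
    """Label a free-tapping trial as 'A', 'B', or 'other' depending on
    whether its mean inter-tap interval lies within +/- `tolerance`
    (as a proportion) of the IOI of pattern A or B."""
    if len(tap_times) < 2:
        raise ValueError("need at least two taps to compute an ITI")
    itis = [later - earlier
            for earlier, later in zip(tap_times, tap_times[1:])]
    mean_iti = sum(itis) / len(itis)
    for label, ioi in (("A", IOI_A), ("B", IOI_B)):
        if abs(mean_iti - ioi) <= tolerance * ioi:
            return label
    return "other"


# Made-up tap trains with constant spacing (s).
print(classify_trial([k * 0.52 for k in range(20)]))
print(classify_trial([k * 0.37 for k in range(20)]))
print(classify_trial([k * 0.45 for k in range(20)]))
```

With these tempi the two ±10% windows do not overlap, so a trial can match at most one pattern.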

Mean ITI per participant. Legend: orange = track A, cyan = track B, purple = different beat.

EEG Frequency Responses

After initial exploration, only the fundamental frequencies of the beat patterns’ SSEPs were found to be above significance levels (z-score >1.645) in all conditions, but not their harmonics. We therefore report only results for the fundamental frequencies (see supplementary materials for details and comparison).

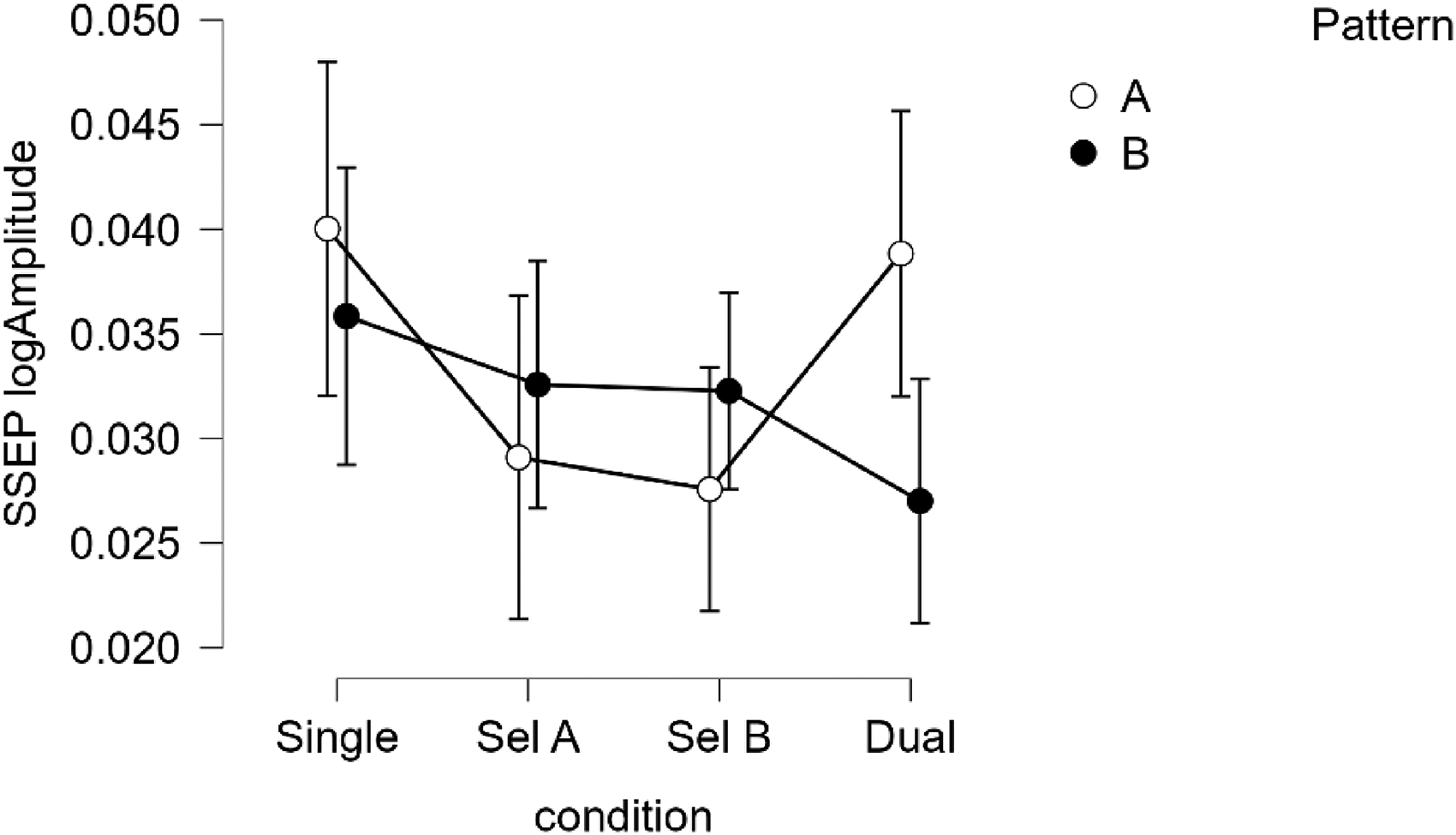

There was a significant interaction between condition and pattern (

EEG amplitude (log10-transformed) of beat-related frequencies across conditions, split by track. Error bars represent 95% CI.

To investigate the interaction of track and condition, we ran two separate ANOVAs, one for each track. Both ANOVAs included musical experience (years) as a covariate. The effect of condition was significant for track A (

Correlations

ca-BAT Score Correlations

Participants’ beat perception abilities, as measured with the ca-BAT, did not correlate with their performance in the single listening (Spearman's

Neural Tracking Correlations

No significant correlations were found between the neural tracking of A (SSEP 1.91 Hz) and the behavioral task performance (all

Performance on the dual attending task significantly correlated with the second-order integration frequency (SSEP at 6.55 Hz) (Spearman's

Tapping Correlations

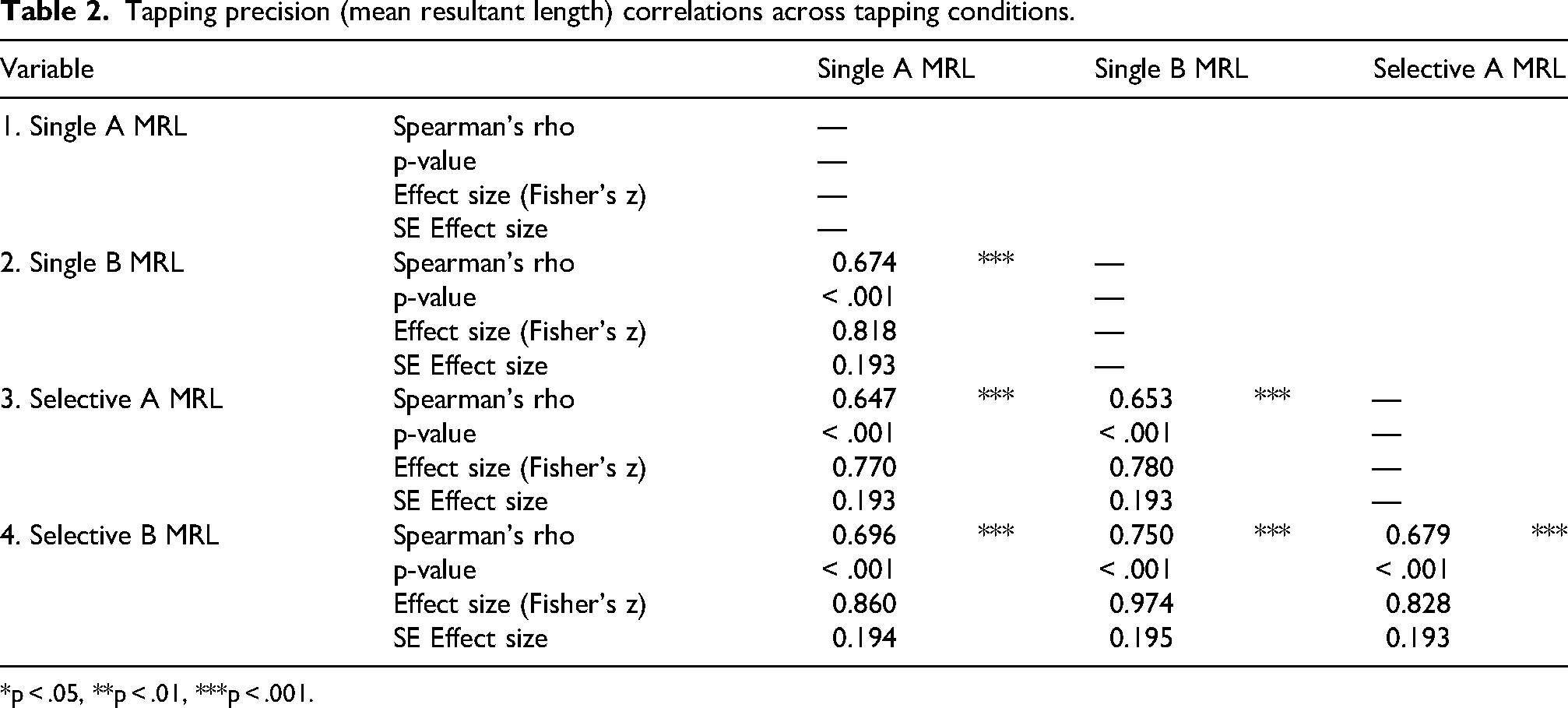

Tapping performance was strongly correlated across conditions (Tables 1 & 2).

Tapping accuracy (absolute angle) correlations across tapping conditions.

*p < .05, **p < .01, ***p < .001.

Tapping precision (mean resultant length) correlations across tapping conditions.

*p < .05, **p < .01, ***p < .001.

For tapping accuracy (absolute angle, Table 1), moderate to strong correlations between track A and B were found during single tapping (Spearman's

For tapping precision (mean resultant length, Table 2), moderate to strong correlations between track A and B were found during single tapping (Spearman's

Tapping precision for single tapping to A (

We found a spurious correlation between tapping accuracy when tapping to B (single tapping) and probe task performance during the selective attending condition (

No significant correlations (after corrections) were found between tapping performance and the neural tracking of the beat frequencies.
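For readers less familiar with the two tapping measures, the sketch below shows how accuracy (absolute angle) and precision (mean resultant length) are conventionally derived with circular statistics. It is an illustrative reconstruction under the assumption that each tap is compared with its nearest beat onset; the function name and this matching rule are hypothetical and may differ from the analysis actually used.

```python
import cmath
import math

def tapping_circular_stats(tap_times, beat_times, ioi):
    """Return (absolute mean angle in radians, mean resultant length)."""
    vectors = []
    for tap in tap_times:
        # Assumed matching rule: compare each tap with the nearest beat onset.
        nearest = min(beat_times, key=lambda beat: abs(beat - tap))
        # Express the asynchrony as a phase angle on the unit circle.
        phase = 2 * math.pi * (tap - nearest) / ioi
        vectors.append(cmath.exp(1j * phase))
    resultant = sum(vectors) / len(vectors)
    # Accuracy: how far the mean tap falls from the beat (0 = on the beat).
    # Precision: length of the mean vector (1 = perfectly consistent).
    return abs(cmath.phase(resultant)), abs(resultant)
```

A participant who taps consistently early by the same amount would therefore show high precision (length near 1) but a non-zero absolute angle, which is why the two measures are reported separately.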

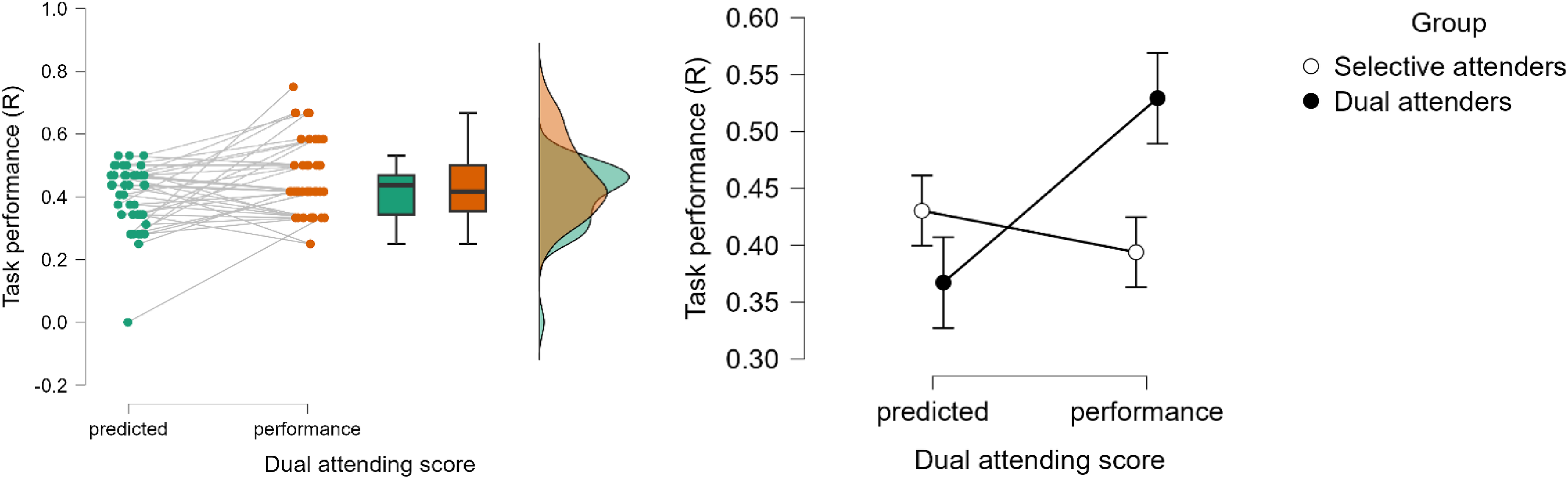

Post-hoc Group Split Analysis: Selective and Dual Attenders

Upon closer inspection, we identified two distinct groups: one that outperformed their predicted score in the dual attending condition (dual attenders) and one that did not (selective attenders) (see Figures 8 and 9). This split results in roughly equal group sizes (20 and 22, respectively). The group split was not confounded by years of musical experience or age. The selective attenders had a median age of 21.0 ± 5.5 years old and had a median of 5 ± 10 years of musical experience. Dual attenders had the same median age (21.0 ± 8.3 years old) and had a median of 2.5 ± 12 years of musical experience. A non-parametric Mann–Whitney test showed that these differences were not significant between the selective and dual attenders, U = 265.000,

(a) Predicted and performed dual attending scores for all participants. (b) Predicted and performed dual attending scores grouped by attending type, based on their ability (or inability) to outperform the predicted score.

Distribution of difference scores (Performance – predicted score) in the dual attending condition, suggesting a bimodal distribution at just below 0 (unable to outperform predicted score) and just above 0.1 (able to outperform predicted score). Positive scores are indicative of outperforming a selective attending strategy.

The groups also did not significantly differ on their ca-BAT scores (MSelective = .530 ± .822, MDual = .753 ± .958,

When comparing the effect of attending condition (

The interaction (Figure 10) shows that the group that did not outperform their own predicted score (i.e., selective attenders) was very accurate in the selective attending condition to the point that their performance was similar (i.e., not significantly different) to their performance during single track listening (

Interaction between group and the attending conditions for performance on the probe-tone task, split by group (black = dual attenders, white = selective attenders).

The group that outperformed their predicted score also clearly outperformed selective attenders on the dual attending task (

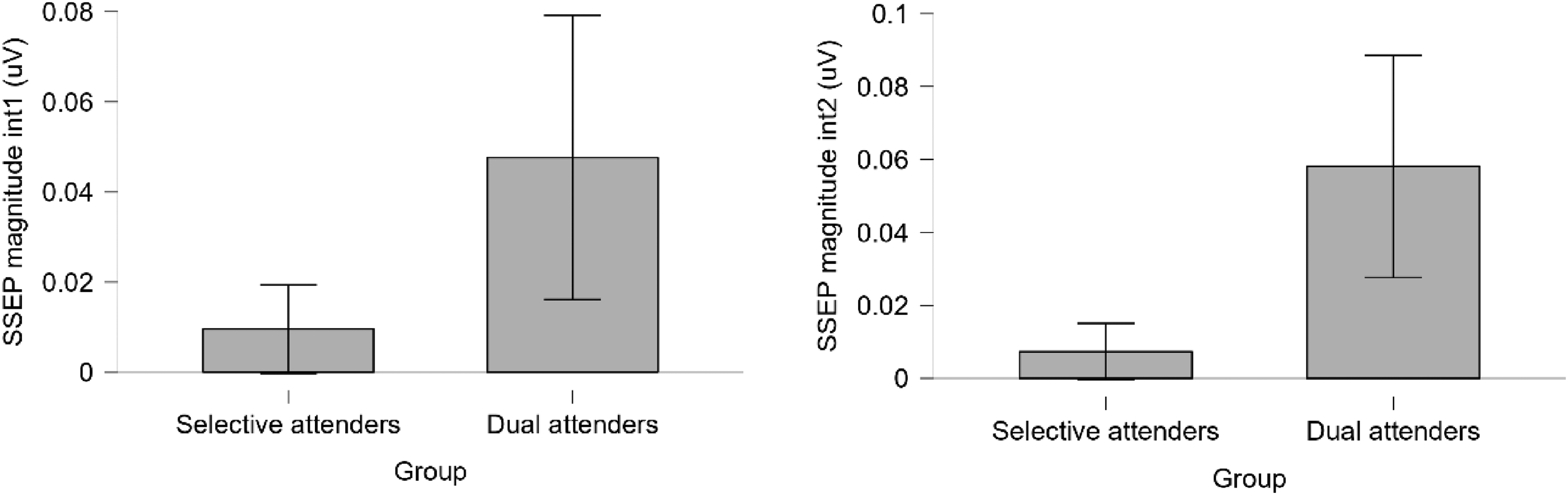

SSEP Intermodulation

While an ANOVA on the two attending type groups and the two orders of intermodulation frequencies did not show any effect of the order (first- vs second-order,

(a) The magnitude of the first intermodulation frequency split by group. (b) The magnitude of the second intermodulation frequency split by group.

The dual attenders demonstrated significantly higher amplitudes of the SSEP at intermodulation frequencies of the tracks (4.64 Hz = A + B and 6.55 Hz = 2A + B) than the selective attenders (

No significant differences between the dual attenders and selective attenders were observed for the tapping tasks.

Discussion

In this study, we assessed people's ability to simultaneously track multiple periodic streams containing beat patterns that cannot be perceived according to a single metric framework. Participants listened to one or two isochronous beat patterns simultaneously, in which every other tone was louder, in three conditions: single stream, selectively attending to one of the streams, and simultaneously attending (dual attending) to both streams. After 30 s they judged whether a probe tone at the end would have coincided with the beat of one of the beat patterns (A or B), or neither, indicating whether they were tracking the pattern's periodicity. Using this paradigm, our behavioral results show that, while performance on dual attending is overall worse than selective attending and single stream listening, people are able to perform the dual attending task above chance levels, indicating that they can at least extract and evaluate temporal information from both periodic streams during the 30 s of the trial. Our neurophysiological data, i.e., SSEPs at the beat patterns’ periodicities in the EEG, show that both patterns are at least passively tracked. However, no effects of (selective) attention were observed. We found no effect of musical training or beat perception abilities, but participants showed some individual difference in their ability to selectively or dually attend to the two tracks.

Behavioral Tracking

In addition to performing above chance level, participants also outperformed a selective attending strategy in the dual attending condition. Thus, our participants demonstrate the ability to track period information from two independent, metrically unrelated patterns when simultaneously presented. This implies that, in certain circumstances, people might employ multiple uncoupled time-keepers to keep track of different parts of the auditory scene, such as a musical piece. This might benefit certain types of musicians whose practice involves tracking multiple rhythms, such as drummers, conductors, or DJs.

Poudrier and Repp (2013) have previously suggested that attending to two metrically unrelated auditory streams in parallel, by equally splitting attention across the streams, is likely impossible. They did note that the rhythmic complexity of their non-metrically related auditory streams might have confounded their results. Hence, we used simple isochronous sequences in the streams with fixed frequency registers, which Demany et al. (2015) suggested may aid in the streams’ parallel tracking. Contrary to Poudrier and Repp (2013) and in line with Demany et al. (2015), our results show that temporal information can be processed from simultaneously occurring non-metrically related periodicities. This suggests that parallel attention might be occurring, or that it may not be required for keeping track of the overall periodicities. Mechanisms other than parallel attention might be at play, such as rapidly switching attention between the two streams, or holding temporal intervals in short-term memory.

An alternative explanation for why we find performance above chance level and above the ‘predicted’ performance from a selective attending strategy, where Poudrier and Repp (2013) did not, may be our use of a third-choice option. In forced choice tasks like ours and that of Poudrier and Repp (2013), the chance level is determined by the number of choices. In our task, when attending to both streams, participants did not simply choose between A or B, or between ‘on’ and ‘off’ beat as in Poudrier and Repp's study, but also had the third option of ‘neither’. By increasing the number of choices, we reduced the random chance level. In a protocol of only 12 trials, this allowed our participants to outperform random chance and the ‘predicted performance’ under a random selective attending strategy. Hence, our design with a lower chance level might be more suitable for finding task performance above chance, allowing fewer of the correct answers to be attributed to luck.
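The effect of the number of response options on what pure guessing can achieve in 12 trials can be illustrated with a binomial tail probability. This is a simplified sketch that treats every trial as an independent guess among equally likely options, which may not match the actual trial structure; the function name `prob_at_least` is hypothetical.

```python
from math import comb

def prob_at_least(k, n, p):
    """Probability of at least k correct out of n independent guesses,
    each correct with probability p (upper binomial tail)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

# Probability of reaching 8 or more correct out of 12 by guessing alone:
two_choice = prob_at_least(8, 12, 1 / 2)    # two response options
three_choice = prob_at_least(8, 12, 1 / 3)  # three response options
```

Under these assumptions, the same score of 8 out of 12 is roughly ten times less likely to arise from guessing when three options are available than when there are two (about .019 versus .194).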

Adding a third-choice option for the dual attending condition can also be considered a limitation, as the task is now not identical in all attending conditions. A follow-up study could address this by using a 50/50 guess between ‘on’ and ‘off’ beat. Another important difference between Poudrier and Repp (2013) and the current experiment is in the specific stimuli. Poudrier and Repp's stimuli were made up of non-isochronous rhythmic patterns, each of which was supported by a different metric framework. The current stimuli, as described, are made up of two isochronous sequences at different rates, in which loudness is used to create a duple pattern. Both sequences thus support a duple meter, albeit at different rates; unlike Poudrier and Repp's stimuli, they do not support categorically different metrical frameworks. Finally, our differing results on behavioral tracking could be due to the very small sample sizes in Poudrier and Repp (2013) (n = 10), which included (some of) the authors of the paper. Besides the fact that findings from small samples might prove difficult to replicate, our finding of different ability groups among our participants adds a further concern about relying on small samples: such samples are more likely to contain, by chance, a low proportion of dual attenders.

Neural Tracking

The neural tracking of the simultaneous beat patterns in the periodic streams was not attenuated by attention. Single stream listening resulted in greater amplitudes at the beat frequency than any simultaneous stream presentation (selective and dual attending). A reduction of amplitude is expected when attention has to be directed towards or divided between multiple sources. However, this effect was found only for track B (163 bpm) and not for track A (114 bpm). Furthermore, within each selective attending condition, there were no significant differences between the SSEP amplitudes of A and B, regardless of which one was attended to. Moreover, SSEP amplitudes for track A did not significantly differ between the selective attending condition in which A was attended and the one in which it was unattended. The same was true for the SSEPs to track B. Therefore, interpretation beyond low-level hearing cannot be substantiated (Nave et al., 2022).

Furthermore, no significant differences were found between selective and dual attending SSEP amplitudes. Together, the EEG results indicate similar neural responses to the beat in the attended and unattended streams during selective attending, as well as simultaneously attended streams. The steady-state response to more than one periodic stream was thus not enhanced by selectively attending to one, compared to equally attending to both. This could imply that unattended auditory streams are still monitored (e.g., Aydelott et al., 2015; Hutmacher & Kuhbandner, 2020) for temporal information in a similar way to attended streams. The only evidence we found in support of attentional effects was a negative correlation between tapping precision and the amplitude of the first-order SSEP integration frequency during selective tapping conditions. This suggests that neural integration of the two tracks might hinder the tracking of the beat when selective attending is required. However, since the correlation was only found when attending to track B, and not A, and there were no further correlations supporting this interpretation, we cannot conclude any clear attentional effects on SSEPs in our study.

The lack of attentional effects on SSEPs may not seem in line with traditional frequency tagging approaches in the visual domain that show attentional enhancement and suppression (e.g., Toffanin et al., 2009; Gulbinaite et al., 2017; Quek et al., 2018). However, in the auditory domain, the effect of attentional enhancement and suppression is less established (e.g., Bharadwaj et al., 2014). While some studies have found some modulation of the brain's response by auditory attention, they are not simple changes in magnitude of the SSEP (Linden et al., 1987). The findings are complex and mixed (Tiitinen et al., 1993), confounded by interhemispheric differences (Bidet-Caulet et al., 2007; Müller et al., 2009; Saupe et al., 2009). A recent study by Lenc et al. (2020) also showed no difference between two auditory attention tasks: attending to tempo versus pitch. In line with our findings, they report that the auditory SSEP was only attenuated when attention was directed at a different modality. Even when the visual modality was attended, the relative strength of beat-related frequencies was the same as in the auditory attention conditions, only the overall gain in the auditory response was reduced. We might thus suggest that the auditory SSEP of beat-related frequencies is not sensitive to additional selective attentional enhancement, as beat-related frequencies have been shown to be selectively enhanced, regardless of attentional tasks (Lenc et al., 2020; Nozaradan et al., 2012). Perhaps timing plays such a crucial role in our environment that temporal information is always processed to a high degree, even without overt attention.

However, it is also important to consider that the task might not have required as much continuous attention as intended. Given that the stimulus is 30 s long, the phase aligns (perceptually) between the two tracks a total of 11 times during a trial. If this alignment could be heard and quickly encoded by participants, they might not need to pay attention until the final couple of alignments. This could explain why we did not observe big attention differences. This potential fluctuation in attention should be controlled for in future studies, for example by inserting probe tones at randomly allocated points in the stimuli, rather than always at the end.

Attention to Tracks A and B

Importantly, we also found no difference in neural tracking strength between the two periodic streams. We found no evidence that either of the beat patterns in the streams evokes a significantly stronger neural response. While track A (114 bpm) is closer to what is traditionally considered humans' preferred movement frequency (2 Hz, e.g., Fraisse, 1974), it did not result in stronger neural tracking. Our tapping results also did not show a preference for either beat pattern during the free tapping, when both beat patterns were presented and participants were free to tap along in whichever way they felt was most suitable. Tapping performance also did not show a clear advantage for one of the beat patterns. Participants tapped closer to the beat for pattern A, but were equally consistent for both patterns, showing a similar lack of clear preference in the movement responses as in the neural responses. This suggests neither beat pattern attracted more attention simply because of the stimulus design.

Nonetheless, the more accurate tapping to pattern A indicates a potential (perceptual) difference between the patterns that might be addressed in future studies by counterbalancing the tempo (i.e., each pattern being played twice, at 114 and 163 bpm). This would require further training of the participants and might prove to be practically challenging. Another potential limitation to the stimuli is that the loudness accentuation was not validated for its capacity to induce meter in the listeners. Since we did not ask the participants about the perceived meter, we cannot be entirely sure that the duple meter was successfully induced. While loudness accentuation has been shown to work before (e.g., Grahn & Rowe, 2009), it has not been used in a polyrhythmic context.

Dual Attenders vs Selective Attenders

We identified two distinct groups of participants based on their performance on the dual attending task: ‘Selective attenders’, who are better at extracting period information when selectively attending to a single beat pattern compared to dual attending, and ‘dual attenders’, who are better at dual attending than the selective attenders.

The groups were identified and separated based on whether their task performance in the dual attending condition outperformed their predicted score based on a selective attending strategy. It is important to note that a high selective attending score would make it more difficult for a participant to outperform their predicted score in the dual attending condition. For example, 100% accuracy (R = 1) during selective attending would result in a prediction of ((3 (trials)*1) + (1 * 9(trials) *.5(chance))) /12 (trials) = 7.5/12 correct responses predicted. Whereas 80% accuracy (R = .8) would result in a prediction of ((3 (trials)*.8) + (.8 * 9(trials) *.5(chance))) /12 (trials) = 6/12 correct responses predicted. Therefore, doing well in the selective attending condition increases the predicted score for the dual attending condition, and thus makes it harder to outperform compared to someone who performed poorly in the selective attending condition.
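The two worked examples above follow the same arithmetic, which can be written as a small function. This is only a sketch of the formula as stated in this paragraph; the function and parameter names are hypothetical, and the split into 3 and 9 trials and the chance level of .5 are taken directly from the expressions above.

```python
def predicted_dual_score(r, n_trials=12, n_first=3, n_second=9, chance=0.5):
    """Predicted proportion correct in the dual attending condition under a
    selective attending strategy, given selective attending accuracy `r`.

    Mirrors the expression in the text:
    ((3 * r) + (r * 9 * .5)) / 12
    """
    return (n_first * r + r * n_second * chance) / n_trials

def outperforms_prediction(dual_score, r):
    """True if the observed dual attending score (as a proportion)
    exceeds the score predicted from selective attending accuracy `r`."""
    return dual_score > predicted_dual_score(r)
```

For instance, `predicted_dual_score(1.0)` gives 7.5/12 and `predicted_dual_score(0.8)` gives 6/12, matching the two cases in the text. The same observed dual attending score can therefore exceed the prediction for one participant and fall short of it for another.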

However, if selective attenders were just ‘better’ at the task in general than the dual attenders, making it harder to outperform their predicted scores, then the interaction we found between the group and task performance (shown in Figure 10) would not appear. The interaction shows that selective attenders were worse than dual attenders at the dual attending task. If the selective attenders were simply ‘better’ at the task overall, they would have performed well during selective attending and might not have outperformed their own predicted scores, but would still have outperformed the dual attenders in general, and thus on the dual attending task as well. The interaction shows, however, that the selective attenders perform significantly worse than the dual attenders during dual attending. This suggests that selectively attending and dual attending may be two separate skills. These separate skills may also explain the somewhat mixed findings regarding the ability to track multiple non-metrically related beats in the previous literature.

The finding of these two groups existing also supports the fact that dual attending is an incredibly challenging task, to the point that half of the participants cannot attend to more than one stream simultaneously when explicitly instructed to do so. However, we should not ignore that despite the task being challenging, some people seem to be able to dually attend to the two patterns. Poudrier and Repp (2013) also noted that some individual participants performed above chance level in their task. It would be particularly interesting for future research to study what it is about these participants or their listening strategies that makes them able to do this, especially because our participants’ SSEPs of the individual streams did not differ between the groups.

While the selective and dual attenders did not differ in their neural tracking of the fundamental beat frequencies in the periodic streams, the SSEP magnitude of the intermodulation frequencies was larger for the dual attenders group. This suggests that the non-linear integration of the two periodicities that is happening at the cortical level, even if there is no perceptible stable composite rhythm, is stronger for the dual attenders than the selective attenders. The fact that both the first- and second-order integration frequencies were stronger in dual attenders suggests that they integrated the auditory streams more effectively at both early and intermediate stages of processing (Alp et al., 2017; Varlet et al., 2020). The correlation between the amplitude of the second-order integration frequency and dual attending performance was also found when both groups were combined, but the group split analysis suggests this was driven by the dual attenders.
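The intermodulation frequencies discussed here are integer combinations of the two beat frequencies. The sketch below reproduces the reported values under one loudly stated assumption: the frequency of track A (1.91 Hz) is taken from the Results, whereas the frequency of track B is not stated in this section and is inferred here as 4.64 Hz minus 1.91 Hz.

```python
F_A = 1.91        # Hz, SSEP frequency of track A as reported in the Results
F_B = 4.64 - F_A  # Hz, ASSUMED: inferred from the reported sum A + B = 4.64 Hz

def intermodulation(n, m, f_a=F_A, f_b=F_B):
    """Frequency of the intermodulation term n*A + m*B, in Hz."""
    return n * f_a + m * f_b

first_order = intermodulation(1, 1)   # A + B
second_order = intermodulation(2, 1)  # 2A + B
```

Because neither term is a harmonic of A or B alone, energy at these frequencies is usually read as a signature of non-linear interaction between the responses to the two streams rather than of either stream on its own.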

The split between selective and dual attenders was not confounded by musicianship. There were roughly equal numbers of musicians in each group. There were also no effects of musical expertise in our sample. Musicians performed similarly to non-musicians on the task, showed similar neural tracking (SSEP amplitudes), and movement responses. This might be due to the relatively simple patterns used in the task—more complex polyrhythms might have been better able to highlight the effect of musicianship. The lack of difference might also be due to the heterogeneity of the musician group, i.e., many different kinds of musicians were grouped together. This is also evidenced by the lack of difference in ca-BAT scores between musicians and non-musicians. Previous studies suggest that certain musician groups have better perceptual and motor timing abilities, such as percussionists (Repp & Su, 2013). We were unable to systematically test the effect of type of musician on our effects in this study. Nonetheless, it seems relevant to musical skills that segregating and integrating multiple periodic streams appear to be two independent skills, especially for those who might use simultaneous beat tracking in their musical practice (conductors, DJs, drummers). They might develop a particular expertise for dual attending (or switching) that allows them to keep track of multiple periodicities, potentially at the expense of selectively attending and blocking out other parts of the sound.

While broad musicianship may not explain the difference between selective attenders and dual attenders, studies suggest that auditory stream segregation is constrained by individual capacities, such as working memory limits (Lotfi et al., 2016) and attentional resource allocation (Heinrich et al., 2008). Lower working memory capacity correlates with reduced stream segregation (Lotfi et al., 2016). Working memory is also implicated in anticipating auditory events in a series (Colley et al., 2018) and producing rhythmic intervals from memory (Grahn & Schuit, 2012). Working memory thus plays a role in perceiving and producing rhythms, and likely affects the ability to perceive multiple rhythms, or hold multiple time intervals in memory at once. In future studies, individuals’ performance on (auditory) working memory tasks (such as auditory digit span tasks (Hilbert et al., 2015) or rhythm span tasks (Schaal et al., 2014)), should be controlled for, or correlated with their ability to selectively attend a single beat pattern embedded in simultaneous auditory streams.

Here, we show that people can track two simultaneous isochronous patterns that cannot easily map onto a single metric framework (approximates 8:11). To further understand whether people can become

Conclusion

We found behavioral evidence that people can extract period information from two non-metrically related isochronous beat patterns that are playing simultaneously, and that there is individual difference in the ability to simultaneously attend to the two beat patterns. Neural correlates suggest that the ability for simultaneous attention could be linked to higher-order integration of period information within the beat patterns.

Supplemental Material

Supplemental material, sj-docx-1-mns-10.1177_20592043261435532 for Simultaneous Beat Tracking of Two Auditory Rhythmic Periodicities by Patti Nijhuis and Maria A. G. Witek in Music & Science

Footnotes

Acknowledgments

The authors want to thank Rhys Yewbrey for his work on the tapping analysis. This research was supported by UK Research and Innovation (AH/W000954/1) and the Research Council of Finland (346210).

Author Contribution Statement

P. Nijhuis contributed to concept, design, data acquisition, analysis, interpretation, drafted the first manuscript, gave final approval, and agrees to be accountable for all aspects of the work ensuring integrity and accuracy.

M. Witek contributed to concept, design, data acquisition, interpretation, critically revised the first manuscript, gave final approval, and agrees to be accountable for all aspects of the work ensuring integrity and accuracy.

Ethical Approval Statement

All participants provided written informed consent prior to the experiment, which was approved by the University of Birmingham Humanities and Social Sciences (HASS) ethics committee under number ERN_21-1258.

Ethical approval: ERN_21-1258 approved by the University of Birmingham's College of Arts and Law review. All participants provided written informed consent prior to participation.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by UK Research and Innovation (Arts and Humanities Research Council) (grant number UKRI AH/W000954/1) and the Research Council of Finland (grant number 346210).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability

Data and statistical analyses are available on OSF: https://osf.io/zrwmy/files/osfstorage (Nijhuis & Witek, 2025).

Action Editor

Jessica Grahn, Western University, Brain and Mind Institute and Department of Psychology.

Peer Review

Karli Nave, University of Michigan, Kresge Hearing Research Institute, Department of Otolaryngology – Head and Neck Surgery. Eve Poudrier, University of British Columbia, School of Music.
