Abstract

Digital tools, referring to a wide range of hardware and software, are increasingly integrated into music education due to advancements in technology and growing accessibility. This trend is evident in an increasing number of teachers utilizing digital tools in their classrooms and a growing body of studies examining how these tools can support children's learning. In elementary school-related studies, the primary objective of using digital tools is to increase student engagement, which in turn can support the development of musical skills and learning motivation. This systematic review evaluates the current state and effectiveness of interventions in this field. A total of 2,382 records were identified, with 15 studies meeting the inclusion criteria (e.g., studies from peer-reviewed journals, including at least one digital tool, and focusing on elementary students). These studies examined outcomes such as musical performance, music theory skills, creativity, and proficiency in digital tool use. Most studies reported positive effects of using digital tools on musical skills. In several studies, the interventions not only improved measured musical skills but also had significant impacts on motivation and engagement. To assess the trustworthiness of these interventions, the review conducted a comprehensive critical appraisal of their methodological designs. The findings revealed that all studies exhibited certain limitations in their study designs, which undermined the strength of the available evidence. This review highlights the variety of interventions, measurement approaches, and outcomes in this field, acknowledging the challenges of this diversity in drawing overall conclusions. It highlights the necessity of rigorously designed interventions in future studies and provides valuable insights into the effective and broader application of digital tools in elementary music education.

Keywords

Introduction

Digital tools, encompassing a wide range of hardware and software such as mobile devices, digital instruments, and applications (Rice, 2003), play a crucial role in enhancing the teaching and learning experience in elementary music classrooms. In music education specifically, digital tools refer to technological resources and software designed to facilitate various aspects of teaching and learning (Sánchez-Jara et al., 2023; Sularso et al., 2023). These tools, collectively referred to as Information and Communication Technology (ICT), include (but are not limited to) web-based platforms; software applications for mobile and computer devices; digital games; video resources; assistive technologies; immersive technologies such as virtual reality (VR) and augmented reality (AR) systems; and tangible hardware such as projectors, tablets, computers, and robotic devices. These tools integrate a variety of media forms (e.g., text, graphics, animation, and audio/video) that enrich and diversify teaching and learning content and methods and enhance their effectiveness (Ruan, 2009). These media forms are claimed to provide students with multiple modes of engagement, such as visual, auditory, and kinesthetic experiences, enhancing overall comprehension and retention (Revano & Juanatas, 2024). Given this variety, it is necessary to map the current landscape of the tools being used and the evidence for their effectiveness in order to inform future interventions and educational practice.

Students using digital tools in elementary music classrooms often demonstrate a better understanding of musical concepts, increased interest in learning, and enhanced creative skills, particularly in composition and improvisation (Gagica Rexhepi et al., 2024). These digital tools can support personalized learning by allowing students to progress at their own pace, engage with interactive platforms, and access extensive digital resources, catering to creativity and collaborative learning. Digital tools can improve engagement, support diverse learning needs, and foster independent learning and creativity (Roman et al., 2024). Some tools, such as GarageBand, sequencer software (Lam, 2024), and MIDI controllers (Kladder, 2020), enable hands-on composition and facilitate real-time musical exploration, while interactive tutorials provide guided instruction. In addition, adaptive music technologies, such as haptic metronomes and visual notation software, assist students with hearing impairments by providing alternative ways to experience rhythm and pitch, ensuring they can actively participate in instrument playing and ensemble activities (Lafuente & Jurado, 2018).

Furthermore, digital tools help students with self-regulation (e.g., managing their practice schedules, setting personal learning goals, and adjusting their performance based on feedback; Saarikallio, 2016) and provide immediate feedback, which is crucial for self-assessment and improvement (Moldovan & Nedelcut, 2022). Digital music recordings function as instructional models that guide students in skill acquisition and serve as self-corrective tools, fostering enhanced auditory discernment and creative development (Chao-Fernández et al., 2020). Moreover, digital tools support music-making activities that can foster students’ social skills, such as negotiation (e.g., deciding on song arrangements in collaborative software), empathy (e.g., responding to peers’ musical ideas in a shared digital workspace), and verbal communication (e.g., discussing creative choices while using online music platforms) (Hallam & Himonides, 2022). Additionally, tools such as practice logs, note feedback tools, and music software can help track students’ progress and provide real-time feedback, offering a sense of achievement and encouraging consistent practice. These tools can promote engagement by making practice more interactive and personalized, helping students stay focused and invested in their musical development (Wan & Gregory, 2018).

Moreover, digital tools can enhance students’ motivation in learning music. In the context of elementary music education, motivation plays a crucial role in student engagement and learning outcomes. For example, the Makey application has been shown to significantly increase student motivation, particularly by nurturing their emotional engagement (Serra-Marín & Berbel-Gómez, 2021). Studies assessing motivational aspects (e.g., Danso et al., 2021; Song et al., 2024; Szabó et al., 2019) have used various frameworks, measuring components such as autonomy, delight, stress, interest, emotional expressions, task-related behaviors, and robot-related behaviors. However, the operational definitions of these constructs vary across studies, with some research not explicitly specifying subscales or indicators. This systematic review aims to synthesize how these motivational aspects are assessed and their relevance to digital tools in music education.

However, challenges remain regarding the use of digital tools in elementary music education. In certain regions, such as rural areas in China, limited resources and insufficient infrastructure hinder their integration (F. L. Wang et al., 2009). A similar situation exists in Germany, where digital tools are rarely used in music education (Förster & Lepa, 2023). One reason may be that teachers often lack sufficient knowledge and confidence to use these tools effectively (García et al., 2021). Thus, there is a need for professional development to help educators integrate these tools effectively. Additionally, equitable access to technology remains a significant challenge, as schools with greater financial resources are more likely to afford digital tools and infrastructure, while underfunded schools, particularly in rural areas, face barriers such as limited devices, poor internet connectivity, and inadequate technical support (Sularso et al., 2023).

The lack of domain-specific knowledge among music teachers (Ruiz et al., 2023) and the rare use of digital tools in music classrooms highlight the need to develop accessible digital tools designed for classroom music education and to identify music teachers’ positive attitudes toward the use of these digital tools as part of their teaching practices in music classrooms (Förster & Lepa, 2023). Teachers are not the only ones facing challenges: students also need equitable access to technology. Not all students have the same level of access to digital tools, which can create disparities in learning opportunities (Sularso et al., 2023). Moreover, while digital tools offer significant benefits in music education, their effectiveness depends on factors such as usability, accessibility, and ease of integration into classroom settings (Stefan et al., 2020). Some tools are difficult to use, require extensive teacher training, or present technical challenges that hinder their potential benefits (Peretti et al., 2025). Therefore, a critical evaluation of digital tools is necessary to ensure that they truly enhance learning rather than introduce new obstacles.

Despite the importance of digital tools for students’ musical skills development, enhancing their engagement, creativity, and learning outcomes, specific musical instruments (e.g., recorders, xylophones, ukuleles) and digital tools in elementary music classrooms (e.g., GarageBand, SmartMusic, or interactive whiteboards) remain underexplored and inconsistently implemented. Moreover, although there are several digital assessment tools, few studies have systematically evaluated specific musical skills of students through the self-developed digital tools (e.g., developing video games, Music Island app for active participation), especially in the context of young learners’ cognitive and developmental needs. For example, Weatherly et al. (2024) wrote a systematic literature review about digital games for music education but did not target any age range and did not conduct a critical appraisal to evaluate the strengths of the evidence. Existing studies often focus on general benefits without systematically assessing specific musical skills, engagement levels, or long-term learning outcomes (Förster & Lepa, 2023). Additionally, while digital assessment tools are available, few studies critically examine their reliability and validity in measuring students’ musical development. A systematic review synthesizing findings on the benefits of digital tools, challenges, and assessment effectiveness is essential to address these gaps. By systematically analyzing existing literature, this study will provide a comprehensive overview of how digital tools impact elementary music education, what specific skills they enhance, and how they are assessed.

Research Aim and Research Questions

This study aims to review and synthesize existing research on the use and effectiveness of digital tools in elementary music education. Based on this aim, the following research questions (RQ) are addressed.

Methods

Search Strategy

This study conducted a systematic review adhering to the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines (Page et al., 2021). To ensure comprehensive and reliable results, a search strategy was designed to identify studies on using digital tools in elementary music education. Four databases—Web of Science, Scopus, ProQuest, and PubMed—were selected for their extensive indexing of peer-reviewed education, musicology, and digital technology journals. The search focused on English-language articles and conference proceedings published between 2014 and 2024 to capture recent advancements in applying digital tools for music education. Conference proceedings were also included in this systematic review due to the limited availability of peer-reviewed studies on this topic.

The search terms were systematically developed based on the research questions and organized around three core dimensions: “music,” “education,” and “digital technology.” Boolean operators “AND” and “OR” were used to refine the search logic, and tailored keyword combinations were applied to each database to ensure thorough coverage (Supplementary File S1). Searches were restricted to article titles to enhance specificity and reduce irrelevant results, with iterative refinement of keywords to maximize accuracy. An initial search yielded 2,382 articles. To maintain the rigor of the selection process, two researchers independently conducted the searches, and any disagreements were resolved through consultation with a third researcher, ensuring consistency and objectivity in the screening process.

Selection Criteria

Table 1 presents the inclusion criteria and exclusion criteria of the studies included in this review.

Selection criteria.

Study Selection

The PRISMA flow diagram (Figure 1) illustrates the data selection and screening process. After the retrieved search results were imported into Rayyan and duplicates removed, the article screening process commenced, identifying 1,898 relevant studies. In the first stage, two authors independently screened the titles, excluding those unrelated to the three screening dimensions. In the second stage, abstracts were independently reviewed, and when disagreements arose between the two authors, a third author was consulted to discuss and resolve all conflicts. Subsequently, 49 records were subjected to full-text screening based on the eligibility criteria outlined in Table 1. To ensure a thorough and unbiased evaluation, the researchers independently assessed these records. Inter-rater reliability, measured using Cohen's kappa (κ = 0.89), indicated strong agreement between the raters. Ultimately, 34 articles were excluded for not meeting the inclusion criteria, resulting in 15 studies being included in the review.

PRISMA 2020 flow diagram of the study selection process.

Critical Appraisal of Studies

A critical appraisal was performed to assess the strength of the evidence from the included studies. Given that our review encompassed studies with diverse methodologies, we employed different critical appraisal tools as appropriate. Specifically, the Mixed Methods Appraisal Tool (MMAT) was used for mixed methods studies (Hong et al., 2018). The remaining quantitative studies were assessed using the JBI quasi-experimental checklist (Barker et al., 2024). Prior to employing the MMAT and the JBI checklist, the research team gathered to discuss and finalize the specific indicators of each criterion. This practice aligns with the guidance provided in the MMAT and JBI frameworks, recognizing that each research field may emphasize different indicators for quality assessment. The complete and finalized set of indicators and appraisal results is available in the Supplementary File S2. After agreeing on a set of indicators and criteria, the first and second authors independently appraised the quality of the included studies following certain rules below:

When a criterion fulfills at least half of the indicators but not all, the criterion is marked as a minor concern (colored yellow). If the criterion fulfills all the indicators, the criterion is marked as no concern (colored green). Otherwise, the criterion is marked as a major concern (colored pink).

The study has overall strong evidence (colored green) if there is no concern among the criteria or if there is only one minor concern. If there are two concerns (either major or minor), the study has moderate evidence (colored yellow). Otherwise, the study has weak evidence (colored pink).
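The two-level rating rule described above can be sketched in code. This is a minimal illustration, not the review team's actual procedure: the function names and string labels are hypothetical, and the sketch takes the rules literally. Under that literal reading, a study with exactly one major concern (and no others) falls through to "weak"; the text does not state this case explicitly, so treat it as an assumption.

```python
from typing import List


def rate_criterion(indicators_met: int, indicators_total: int) -> str:
    """Classify one appraisal criterion from the number of fulfilled indicators."""
    if indicators_total <= 0 or not 0 <= indicators_met <= indicators_total:
        raise ValueError("indicator counts are out of range")
    if indicators_met == indicators_total:
        return "no concern"      # colored green
    if 2 * indicators_met >= indicators_total:
        return "minor concern"   # colored yellow: at least half, but not all
    return "major concern"       # colored pink


def rate_study(criteria: List[str]) -> str:
    """Derive the overall evidence rating from per-criterion ratings."""
    minor = criteria.count("minor concern")
    major = criteria.count("major concern")
    concerns = minor + major
    if concerns == 0 or (concerns == 1 and minor == 1):
        return "strong"          # colored green
    if concerns == 2:
        return "moderate"        # colored yellow
    # Assumption: every remaining case, including a single major concern,
    # is rated weak, following a literal reading of the rules.
    return "weak"                # colored pink


# Hypothetical study with five criteria, two of which raise concerns.
example = ["no concern", "minor concern", "no concern", "major concern", "no concern"]
print(rate_study(example))
```

Keeping the criterion-level and study-level rules in separate functions mirrors the two steps in the text and makes each threshold easy to inspect.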

While aligning with the MMAT (Hong et al., 2018) and JBI guidelines (Barker et al., 2024), our appraisal process was adapted and structured with reference to Hamilton et al.'s (2024) methodology to ensure a thorough evaluation of study quality. After the independent appraisal, interrater reliability was computed in SPSS using Cohen's κ (κ = .77), indicating good agreement between the two raters. The final trustworthiness ratings were generated after the two raters discussed and resolved their conflicts.
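For readers unfamiliar with the statistic, Cohen's κ compares the observed agreement between two raters, p_o, with the agreement expected by chance, p_e, as κ = (p_o − p_e) / (1 − p_e). The sketch below computes it in plain Python. The review itself used SPSS; the ratings shown here are invented for illustration and are not the review's data.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(rater_a: Sequence[str], rater_b: Sequence[str]) -> float:
    """Cohen's kappa for two raters assigning one nominal label per item."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("both raters must rate the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items on which both raters agree.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions per label.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    labels = set(freq_a) | set(freq_b)
    p_e = sum(freq_a[label] * freq_b[label] for label in labels) / (n * n)
    if p_e == 1.0:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical color-coded ratings from two appraisers (illustrative only).
first_rater = ["green", "green", "yellow", "pink", "pink", "yellow"]
second_rater = ["green", "yellow", "yellow", "pink", "pink", "yellow"]
print(round(cohens_kappa(first_rater, second_rater), 2))  # 0.75
```

In this invented example the raters agree on five of six items (p_o ≈ 0.83) with chance agreement p_e ≈ 0.33, which gives κ = 0.75.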

Narrative Synthesis Approach

It is important to note that while the aforementioned tools (MMAT and JBI) assess study quality and bias risk, they do not directly measure efficacy. Consequently, this systematic review employed a narrative synthesis approach to examine the effectiveness of digital tools in music education, following the Synthesis Without Meta-Analysis (SWiM) guidelines (Campbell et al., 2020). Given the methodological heterogeneity of the included studies, such as diverse research designs, intervention types, and outcome measures, a meta-analysis was deemed inappropriate in this systematic review. Instead, findings were synthesized thematically to identify common patterns and assess efficacy across different dimensions of music learning.

To synthesize findings, studies were categorized into four thematic areas: (1) performance skills (e.g., instrumental, vocal, rhythmic skills), (2) theoretical knowledge (e.g., music literacy, sight-reading, tonal recognition), (3) creative skills (e.g., improvisation, composition), and (4) motivational aspects (e.g., engagement, attitude toward digital tools). Within each category, the findings were analyzed to identify trends, differences, and methodological limitations. Studies demonstrating similar outcomes were compared for consistency, while those reporting conflicting results were examined for potential moderating factors, such as sample characteristics, intervention duration, and assessment tools.

By integrating both critical appraisal tools and this structured narrative synthesis, this study ensures a methodologically transparent assessment of digital tool effectiveness in music education.

Results

Coding Process

Table 2 presents the 15 studies’ key characteristics based on the research questions and inclusion criteria. Detailed data and responses to all research questions across the different analytical sections are provided in the subsequent sections.

Study characteristics.

Note. PT = publication type; CP = conference proceeding; JA = journal article; RCT = randomized controlled trial; N/A = information is not available in the publication.

Overview of Included Studies

A total of 15 studies were included in this review, comprising 87% (n = 13) peer-reviewed journal articles and 13% (n = 2) conference proceedings (Table 2). The publications span a decade, from 2014 to 2024, and primarily focus on educational technology, music education, and multidisciplinary technology applications. Geographically, the journals and conferences represent a range of countries. Regarding the study implementation locations, 20% were conducted in Asia (studies 1, 2, 13), 13.3% in the United States (studies 8, 10), and approximately 46.7% in Europe (studies 3, 4, 6, 7, 9, 11, 12), while 20% did not specify the country of implementation (studies 5, 14, 15). All 15 studies employed quantitative analysis methods; among them, three studies utilized a mixed-methods approach (studies 8, 11, and 12).
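The regional shares reported above follow directly from the study counts. As a quick consistency check, the sketch below recomputes them from the study numbers listed in the text; the dictionary keys and variable names are illustrative only.

```python
# Study numbers per implementation region, as listed in the text.
regions = {
    "Asia": [1, 2, 13],
    "United States": [8, 10],
    "Europe": [3, 4, 6, 7, 9, 11, 12],
    "Not specified": [5, 14, 15],
}

total = sum(len(ids) for ids in regions.values())

# Every included study should appear in exactly one region.
all_ids = sorted(i for ids in regions.values() for i in ids)

# Share of the included studies per region, in percent (one decimal place).
shares = {name: round(100 * len(ids) / total, 1) for name, ids in regions.items()}

for name, share in shares.items():
    print(f"{name}: n = {len(regions[name])}, {share}%")
```

The four groups cover all 15 studies without overlap, and the computed shares (20.0%, 13.3%, 46.7%, and 20.0%) match the reported figures.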

Overall, approximately 73% (n = 11) of the studies incorporated a control group design, comparing one or more experimental groups using digital tools with a control group using a traditional method without digital tools. Approximately 46.7% (n = 7) of the studies used multi-condition designs to compare different versions of a digital tool. Sample sizes ranged from n = 31 to n = 246, with a median of 47, indicating that most studies in the review used relatively small samples. The duration of the interventions varied considerably, ranging from single sessions lasting 50–60 min to extended periods spanning an entire academic year. Notably, 60% of the studies had an intervention period of less than six months, reflecting a tendency towards shorter-term implementations in the reviewed literature.

Digital Tools

Among all the articles, four articles (studies 12 and 15, studies 13 and 14) report on the same two experiments, utilizing the same digital tool in each pair, while the remaining studies each employed unique digital tools. An analysis of the 15 studies reveals three main types of digital tools used in music classroom intervention:

Software: The most common category, including applications, learning platforms, and sharing platforms, with various functional subcategories (studies 1, 2, 5, 6, 7, 8, 9, 10, and 14).

Hardware: Primarily standalone devices, such as interactive or feedback robots, used exclusively in studies 12 and 15. These two studies are part of the same longitudinal project and utilized identical hardware tools.

Hybrid Tools: Combining software with supplementary hardware devices, these tools represent a multi-tool intervention approach (studies 3, 4, 11, and 13).

We applied a flexible categorization rather than rigidly sorting studies into exclusive groups. For example, although study 8 employed an iPad alongside video games, its main focus was on digital game-based learning software to teach musical concepts to fifth- and sixth-grade students. Accordingly, it was categorized in the software group of digital tools.

Functions of Digital Tools

The functions of the digital tools used in these studies vary significantly, with some tools encompassing multiple functionalities. To systematically categorize their primary functionalities, we examined how each tool was used in the study, the learning objectives it targeted, and the pedagogical approach applied. Based on their primary purposes, these tools can be categorized into four main groups:

Practice-Oriented Tools: These tools are designed to enhance instrumental or vocal performance through structured exercises, skill-building tasks, or guided practice sessions. They typically include rhythm exercises, pitch training, or playing simulation (studies 4, 6, 7, 8, 9, 11, 13, and 14).

Music Theory and Literacy Tools: These tools focus on developing theoretical knowledge and musical literacy skills, such as note recognition, sight-singing, and ear training. This category includes tools designed explicitly for theory instruction or integrated with performance training (studies 1, 3, 4, 7, 8, 9, 11, 13, and 14).

Creativity-Focused Tools: This category includes tools aimed at supporting musical composition, improvisation, and creative exploration. These tools encourage students to experiment with sounds, structures, and musical ideas, often through digital composition software or improvisation-based applications (studies 2, 5, 7, 9, and 10).

Feedback Tools: These tools provide real-time or post-performance analysis to guide students’ learning. They may evaluate pitch accuracy, rhythm, dynamics, or other performance aspects, offering immediate feedback to enhance skill development (studies 12 and 15).

This classification system was developed by analyzing how each study described the primary function of the digital tool used, rather than solely relying on the tool's features. Many tools serve multiple purposes, but they were categorized based on their primary educational function as reported in the studies.

Musical Skills and Motivational Aspects

Musical skills represent a broad and multifaceted concept in music education. Although challenging to define comprehensively, music learning is best understood as an integrated process that relies on audition (the internal ability to hear and comprehend music) while incorporating performance and creative practices to deepen and expand musical understanding (Gordon, 2012). This study categorizes musical skills into three primary dimensions (understanding, performance, and creativity), drawing on Bloom's Taxonomy (Anderson & Krathwohl, 2001) to better conceptualize and analyze musical skills. Each dimension represents a distinct yet interconnected aspect of music learning, providing a comprehensive framework for examining the development of musical abilities.

The understanding dimension focuses on students’ comprehension of pitch and rhythm (Gordon, 2012) and the integration of visual and auditory methods to learn music notation, writing, and symbols (Tóth-Bakos, 2016). These foundational knowledge areas, rooted in perception and cognition, are defined in this study as musical literacy, aligning with theoretical skills.

The performance dimension builds on musical literacy, emphasizing the application and expression of this foundational knowledge. It encompasses the development of instrumental, singing, rhythm, and listening skills through auditory input, imitation, and practice (Gordon, 2012). In this study, these abilities are collectively referred to as performance skills.

The creativity dimension represents the advancement from foundational musical skills to improvisation and composition. It involves the natural development of creative abilities, such as composing and improvising, following the mastery of basic auditory patterns (Gordon, 2012). Here, creative skills denote the fostering of students’ innovative capacities in music education, primarily through composition and improvisation.

The purpose of this categorization is to systematically identify the types of skills measured across the 15 selected studies. This framework facilitates the observation of research focuses and the identification of prevalent trends while also serving as a basis for the classification analysis in the Effectiveness Analysis section (3.6). Table 3 presents the categorized dimensions and their key descriptors.

Categories of music skills among studies.

Similarly, to facilitate the observation and analysis of the effectiveness of digital tools, this section reports on studies related to motivational aspects. The report is based on what was measured in the 15 included studies and does not discuss the underlying theoretical concepts.

Skills Assessed in the Studies

Studies 5, 6, 10, and 12 focused on a single music skill. Studies 1, 2, 3, 8, 11, 14, and 15 addressed two types of music skills. Studies 4, 7, 9, and 13 examined three or more types of musical skills.

Performance Skills were the most frequently measured category: instrumental, rhythmic, vocal, and listening skills were addressed in multiple studies (Studies 1, 3, 4, 6, 7, 8, 9, 11, 13, 14, and 15). These skills are often central to music education due to their observable and measurable nature.

Theoretical Skills were also a common focus in studies, such as music literacy (Studies 1, 2, 3, 4, 6, 7, 8, 9, 11, 13, and 14), which reflects the importance of theoretical understanding in music education. These skills highlight students’ cognitive abilities and comprehension of musical knowledge.

Creative Skills received a moderate level of attention: Composition and improvisation (Studies 2, 5, and 10) were discussed in three studies, indicating a growing interest in fostering creative abilities in music education.

Motivational Aspects Assessed in the Studies

Of the 15 included studies, five measured the motivational aspects of students in classrooms. One study measured motivation without specifying further details of the subscale and content (Study 7). Also, another study observed the behaviors of students, including motivation, without specifying details of aspects, indicators, or themes (Study 11). Measured aspects in this study included autonomy, delight, stress, interest, emotional expressions, task-related behaviors, and robot-related behaviors. One study measured concentration-related behavior through observation, including off-task behaviors and on-task behaviors (Study 12). One study assessed learning attitudes with cognitive components, affective components, and behavioral tendency components (Study 2).

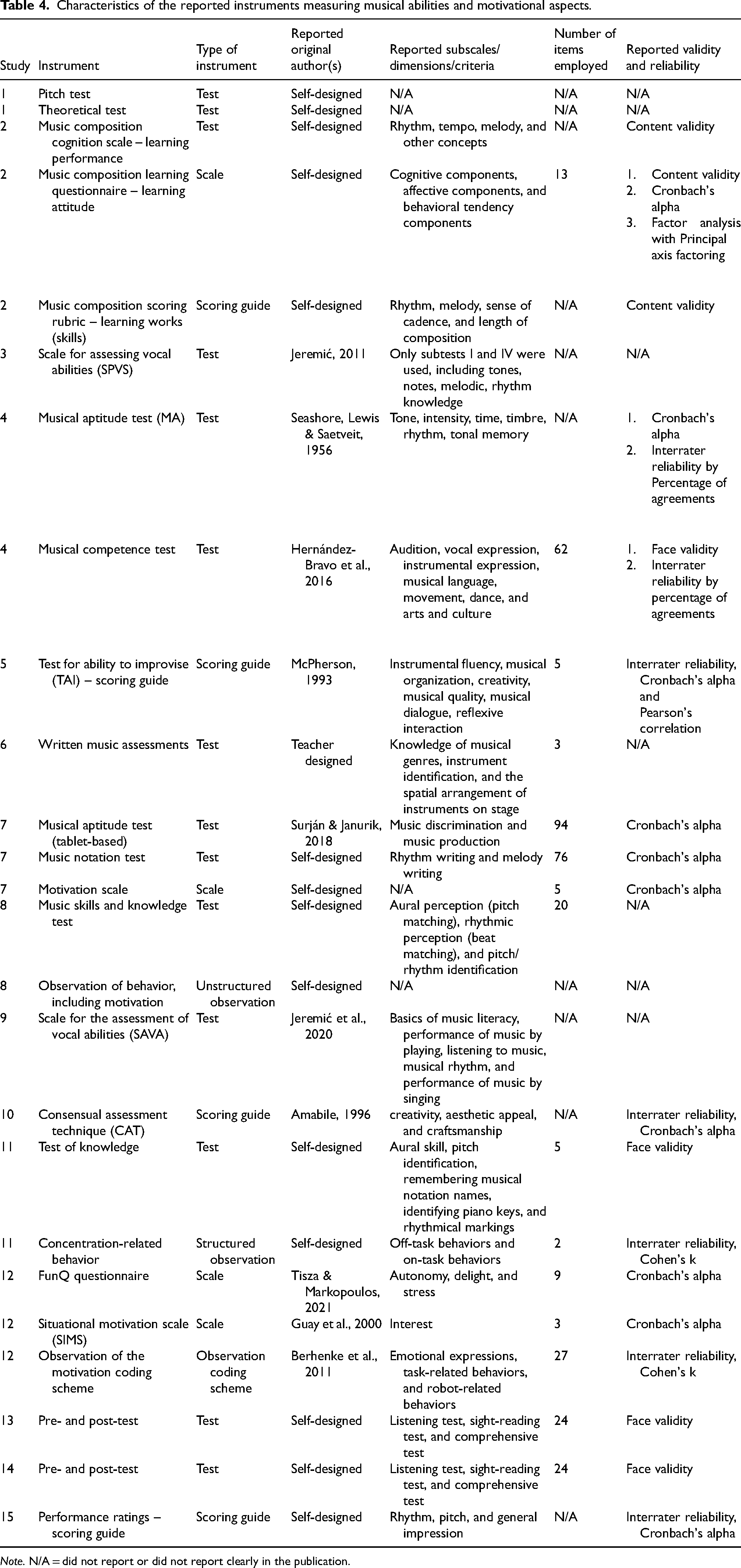

Instruments for Assessing the Impact of Digital Tools on Musical Skills and Motivational Aspects

The overall characteristics of the instruments used in the included studies are provided in Table 4. Almost all instruments in the 15 articles were uniquely developed or tailored to the specific context and research questions. Among them, 24 unique instruments were identified, and only one instrument was repeatedly employed in Studies 13 and 14. Thirteen unique tests were reported, and it was the most common type of instrument. There were four scales to measure learning motivation, four scoring guides, two structured observations using coding schemes, and one unstructured observation; no interviews were used to assess musical abilities or musical learning motivation.

Characteristics of the reported instruments measuring musical abilities and motivational aspects.

Note. N/A = did not report or did not report clearly in the publication.

Of the 24 uniquely identified instruments, 9 lacked specifications of the number of items employed. For the other instruments, the total number of items ranged from 3 to 94. The total number of tested items per study ranged from 3 to 175; the upper end of this range is a very large number of test items for primary students.

Of the 15 included studies, 9 used at least one self-designed measurement tool (Studies 1, 2, 6, 7, 8, 11, 13, 14, and 15). Among them, one test was designed by the music teacher who also taught participants in the experiment (Study 6). Seven studies employed at least one adapted measurement tool (Studies 3, 4, 5, 7, 9, 10, and 12). Four studies employed at least one measurement tool that had been previously developed by one of the authors (Studies 3, 4, 7, and 9). Three studies conducted observations to study students’ behaviors related to motivation in learning (Studies 8 and 12) or concentration-related behaviors (Study 11). Among them, two studies provided coding schemes for observing students’ behaviors (Studies 11 and 12).

Regarding examiners of the tests, three studies utilized at least one measurement based on teacher judgments: marks in music (Study 4), consensual assessment technique (Study 10), and performance ratings (Study 15). One study (Study 5) assessed students’ ability based on judgments of university professors using a pre-defined scoring guide (based on the Test for Ability to Improvise). In the remaining studies, the tests were either marked by researchers or groups of researchers; however, it was not specified whether the researchers were blinded to the treatment condition or not.

Regarding the reliability and validity of the instruments, six studies did not clearly report any form of validity or reliability evidence, which can include pilot testing of the instruments, face or content validity, Cronbach's alpha for internal consistency or interrater reliability, and factor analysis. Overall, two studies assessed face validity (Studies 4 and 11), while content validity was reported in three studies (Studies 2, 13, and 14). Cronbach's alpha was the most commonly reported statistic, appearing in seven studies (Studies 2, 4, 5, 7, 10, 12, and 15). Where a group of experts marked the tests, studies reported interrater reliability by estimating the percentage of agreement (Study 4), Cronbach's alpha (Studies 10 and 15), or a combination of the Pearson correlation coefficient and Cronbach's alpha (Study 5). Two studies conducting observations based on a coding scheme reported interrater reliability between coders by estimating Cohen's kappa coefficient (Studies 11 and 12). Finally, one study performed factor analysis, but the authors did not report the results (Study 2).

The Effectiveness of Digital Tools Used in Music Education

Table 5 provides an overview of the tools used and reported outcomes of each included study, along with critical appraisal commentary and a trustworthiness indicator. Table 6 shows the specific risk of bias for each included study, using three colors to represent the trustworthiness of evidence and indicating the confidence rating for each study with color coding. The criteria for proportion standards are detailed in Section 2.4, Critical Appraisal.

Table 5. Measured outcomes and critical appraisal of included studies.

Table 6. Risk of bias assessment for included studies.

Note. The table summarizes the critical appraisal results using the MMAT checklist. The JBI checklist yielded similar overall ratings. Due to space constraints, full bias assessment results for both checklists are provided in Supplementary File S2.

Impact on Performance Skills

In the context of performance skills, Studies 4, 9, and 15 addressed instrumental performance skills and reported positive outcomes. However, Study 15 lacked methodological rigor; for example, although confounding variables were considered during the pre-test phase, there was inconsistency between the study design description and the result assessment methods.

Study 9 offered a more comprehensive focus on performance skills, including vocal performance, rhythm skills, and auditory abilities. All sub-skills demonstrated notable improvements in the experimental group. However, the study contained numerous ambiguities, such as unclear hypotheses and the absence of reliability testing, which limited the trustworthiness of both the study design and results.

Study 4 also measured singing and listening skills, both of which yielded higher outcomes. Nevertheless, the study failed to report the random allocation process for participants, and evaluator blinding was not ensured throughout the experiment.

Rhythm skills were also measured in Studies 7, 8, 13, and 15. Among these, Studies 8 and 15 demonstrated improvements in post-tests. However, Study 8 revealed design limitations, including an unclear rationale for the mixed-methods approach, a lack of explicit qualitative data analysis, and insufficient integration of qualitative and quantitative findings, which diminished the overall impact of the results.

Among the other studies involving listening skills, Study 1 showed improvement in relative pitch in the post-test results. However, this study lacked detailed intervention descriptions and was the only one that used a single-group design. Study 7 did not show significant differences in auditory discrimination skills during post-tests, but significant progress was observed in repetition tasks. Study 13 reported significant improvement only in performance on the simplified version of the digital game. Despite this limitation, the study demonstrated high design reliability, with the primary constraint being a non-representative sample. A similar pattern was observed in Study 14, where differences in outcomes across groups highlighted the varying impacts of the same digital tool. The findings were heavily dependent on group-level comparisons, underscoring the importance of contextual factors in interpreting results.

Impact on Theoretical Skills

Among studies assessing multiple musical skills, Study 6 focused specifically on improving music literacy, targeting specific knowledge points. While it compared baseline results and showed significant post-test improvement, it lacked control of confounding variables, had an unclear research question, and used unvalidated tests.

Studies 7 and 13 both measured reading skills (sight-singing) and reported significant improvements. However, Study 13, despite utilizing MIDI keyboards and other instruments, focused primarily on fundamental knowledge derived from instrumental practice. Positive effects were observed only in the simplified version of the digital application.

Study 8 assessed rhythm and pitch matching but appeared to include composition tasks as well, with research questions lacking clarity. Post-test results showed significant improvements in all groups using digital tools, but qualitative analysis lacked integration with quantitative findings. Study 14 focused on the recognition of notes and melodies, with students’ performance improvements depending on the design implementation and instructional approach with the digital tool.

Studies 2, 3, 4, and 11 assessed multiple dimensions, including tone, melody, and rhythm. Studies 3 and 4 employed multiple digital tools and considered individual student differences but failed to control for confounding variables. Despite this limitation, both studies reported positive outcomes. Study 2 used composition-focused software and compared baseline performance but did not further control for confounding variables. The results revealed significantly better performance in the experimental group. Study 11 also employed a combination of tools but uniquely compared their effectiveness. Post-test results indicated significant knowledge gains in both experimental groups using digital tools, particularly the tablet-based group. However, inconsistencies were noted between qualitative data analysis and interpretation.

Studies 1 and 9 assessed basic theoretical skills without clearly defining sub-skills. Although both studies showed substantial post-test improvements, their intervention descriptions lacked detail, and reliability testing was absent.

Impact on Creative Skills

Studies 5 and 10 focused on creative skills. Study 5 demonstrated the positive impact of a reflexive interaction system on improvisation but highlighted the lack of consistent statistical significance between groups. This may be due to the lack of a pretest to control baseline differences. Study 10 showed significant improvements in creativity scores, but no significant differences were observed in craftsmanship and aesthetic appeal compared with the traditional handwritten method. Study 2 assessed students’ compositional outputs and reported positive results. However, the greater composition length during teaching may reflect the researcher's dual role as both instructor and assessor during the intervention.

Impact on Motivational Aspects

Studies 2, 7, 8, 11, and 12 examined motivations. Studies 2 and 7 reported significant improvements in students’ attitudes toward using tools for music learning. Studies 11 and 12 analyzed engagement through mixed methods, noting positive behavioral responses, but inconsistencies between data, findings, and interpretation undermined the trustworthiness of their conclusions. Study 8 observed indicators of intrinsic motivation among the iPad groups but failed to specify whether these indicators reflected learning motivation or merely the motivation to play. Additionally, this study failed to compare the motivational aspects across the three groups. Furthermore, Study 8 unintentionally introduced bias, potentially lowering the control group's performance and motivation when students demonstrated frustration and complained about not receiving the treatment.

Synthesis of Digital Tools’ Effectiveness in Primary Music Education

By synthesizing the empirical evidence from the 15 studies, this review found that the integration of digital tools in primary music education was generally associated with improvements in both students’ musical skills and their motivation to engage in learning.

Digital tools have a demonstrable impact on the acquisition of theoretical, practical, and creative music skills in primary students. Multiple studies (e.g., Studies 2, 3, 4, 6, 7, 9, 11, 13) showed significant gains in areas such as music literacy (note writing, rhythm, melody understanding), pitch and rhythm recognition, instrumental and vocal performance, sight-reading skills, and composition creativity and craftsmanship. While improvements in creativity were noted (especially in Study 10), the aesthetic appeal to experts was not significant, suggesting that digital composition may require additional complementary instruction or may be more effective for certain creative domains. Moreover, even with methodological limitations (non-representative samples, unclear hypotheses, lack of validated tools), the trend consistently favored digital interventions over traditional methods.

Digital tools were also linked to increased motivation, autonomy, and behavioral engagement in music learning (e.g., Studies 2, 7, 8, 11, 12). Specific findings included positive student attitudes toward music lessons, increased persistence in practice, and higher engagement, especially with interactive or gamified tools (e.g., Music Island, Music Fun Games, SocibotMini). However, mixed-method studies often lacked coherence between qualitative and quantitative data, limiting deeper insights into the motivational mechanisms.

Critically, the effectiveness of digital tools varied depending on the instructional approach (simple vs. full versions in Study 13), tool usability and accessibility, teacher involvement, and potential bias (e.g., when researchers acted as instructors). Some studies (e.g., Study 14) highlighted that the design of the digital tool directly influenced which music skills were developed, underscoring the importance of aligning tool design with educational goals.

Finally, despite generally positive findings, many studies shared common limitations, including non-representative samples, inadequate control for confounding variables, lack of validated measurement tools, and poor description of interventions. These methodological weaknesses reduce the generalizability and reliability of results, although they do not negate the observed trends.

Benefits and Challenges

The use of digital tools in music education offers numerous benefits beyond the development of musical skills. Most included studies indicated that digital tools enhance students’ motivation (Studies 3, 5, 7, 8, 11, 12, 13, and 14) and make lessons more enjoyable (Studies 2, 7, 8, and 10). However, this motivation does not always stem from the educational content itself; as shown in Lesser's (Study 8) work, students are primarily motivated by the interactive experience. Studies also show that ICT promotes immediate feedback (Studies 2, 4, and 8), visualization (Study 2), and ease of use (Studies 10, 11, and 14). Huang et al. (Study 2) highlighted that music composition with digital tools was more accessible for students than anticipated, as they could instantly listen to their creations, boosting their learning satisfaction. The playback function was also favored in another case (Study 10). According to Lesser (Study 8), digital tools enable students to work independently and progress at their own pace. Beyond supporting independent learning, these tools foster collaboration among students. Danso et al. (Study 11) and Lesser (Study 8) observed that students readily helped each other when facing difficulties, and as a result, fewer behavioral problems were reported (Study 11). Study 6, though not directly measuring motivation, found that mobile virtual reality provided a positive user experience, potentially enhancing engagement. Study 5 explored human–computer interaction, noting that MIROR-Impro could enhance intrinsic motivation, particularly attention span, a key aspect of user experience.

Despite these advantages, several studies identified challenges related to user experience, which is the efficiency and effectiveness of a product that affects the emotions of users (Lewis & Sauro, 2021). Study 2 emphasized the importance of usability testing before software implementation but omitted details on measurement or improvement strategies. Study 11 showed that the new learning device impeded learning because of its low ease of use, which negatively affected learning performance. Similarly, Study 10 highlighted that one of the disadvantages of notation-based software was technical difficulty. Also, Study 8 showed that while iPads were perceived as a familiar tool to students, they still faced some issues that needed assistance from teachers or other students. Similarly, Study 6 required two technicians to help with wearing virtual reality devices and applications. Another challenge that arose from Study 12 is the limitation in the function of the system that can cause distrust and low adaptability following student development. Lastly, while Study 8 observed that iPad apps increased the intrinsic motivation of students, it remained unclear whether this was directed toward learning or simply the enjoyment of interaction.

Discussion

The current systematic review examined the use and effectiveness of digital tools in elementary music classrooms. Drawing on empirical evidence from 15 studies from 2014 to 2024, along with critical appraisal tools and a narrative synthesis, our analysis offers insights into the use and impact of digital tools in music education. The research addresses three key questions: (1) classification of digital tools implemented, (2) evaluation methodologies and research designs employed, and (3) assessment of tool effectiveness in developing musical competencies and enhancing student motivation.

The first question is related to the types of digital tools that are commonly used in music class experiments. Our synthesis identified three primary categories: software-based, hardware-based, and hybrid tools. Analysis revealed software tools to be the predominant choice, likely due to their accessibility, cost-effectiveness, and scalability across devices. Additionally, software tools often feature flexible functionalities, allowing them to be adapted to diverse educational objectives. In contrast, hardware tools, though less frequently employed, offer unique advantages in specific instructional contexts. For example, feedback robots excel at capturing and analyzing students’ musical performances in real time, providing tailored feedback for improvement (Song et al., 2024). Meanwhile, hybrid tools represent an integrated intervention strategy, combining the flexibility of software with the interactive capabilities of hardware. This multi-dimensional approach proves highly effective in addressing complex instructional needs, particularly by fostering collaborative learning and accommodating students with diverse skill levels (F. L. Wang et al., 2009). From the perspective of functionality, all studies measuring performance and theoretical skills utilized software tools, likely due to the diversity of skills they target. Software tools are designed with rich content that enables the integration of multiple skill objectives, utilizing visual and interactive exercises to enhance mastery (Dore et al., 2018; Kirkorian & Choi, 2017; Szabó et al., 2019). Studies focusing on creative skills exclusively employed software tools, indicating their advantage in facilitating content analysis and recording. Hardware tools, by contrast, emphasize user experience and student acceptance. For instance, Study 11 reported higher acceptance of software over hardware due to reduced technological anxiety, which promotes engagement (Gonzalez, 2013). 
Furthermore, multimodal combinations better support cognitive processing and improve learning efficiency (Moreno & Mayer, 1999). Interestingly, unassessed learning processes were more favored (Study 12). Without the pressure of assessment, students tended to focus on the intrinsic enjoyment and meaning of tasks rather than external rewards or grades.

The second research question addresses assessment methods and intervention designs. Analysis of the 15 studies identified 24 distinct assessment instruments, with most being self-developed or adapted. Tests emerged as the most common instrument type, followed by motivation scales, scoring guides, and structured observations. Concerning the reliability and validity of the instruments, six studies did not clearly report any form of validity or reliability. Face or content validity was assessed in a few studies (Studies 2, 4, 11, 13, and 14), while Cronbach's alpha was the most frequently used reliability measure in several studies (Studies 2, 4, 5, 7, 10, 12, and 15). Some studies reported interrater reliability through percentage agreements, Cohen's kappa, or correlation coefficients, but only one (Study 2) attempted factor analysis—without disclosing results. The lack of factor analysis and standardized validation methods in the reviewed studies limits comparability and confidence in findings (Schmitt et al., 2018). Future research should focus on developing and validating standardized instruments to ensure more reliable assessments of digital tools in music education.

From the perspective of research design and its association with digital tool use, single-group designs and post-test-only approaches were predominantly employed to evaluate isolated tool effects or short-term changes. In contrast, non-equivalent pretest-posttest designs and randomized controlled trials (RCTs) were more commonly used in studies comparing digital tools with traditional methods. This indicates a potential trend: Rigorous research designs are typically linked to comparative studies, ensuring greater control over confounding variables and stronger causal inferences (Talavera et al., 2023). Simpler designs, such as single-group or post-test-only approaches, tend to focus on isolated evaluations of specific tools, offering insights into their standalone efficacy.

We observed that an advantage of one mode of composition was also a disadvantage of the other, as notation-based software facilitated creativity, whereas handwritten notation supported craftsmanship (Kang & Yoo, 2021). This reflects a broader reality in educational research—no single technology or method consistently excels across all contexts (Agres et al., 2021). Instead, their advantages and disadvantages are often relative and context dependent. This insight serves as a reminder that intervention designs must consider specific objectives, task demands, and resource availability. Similarly, under the scrutiny of MMAT and JBI standards, while all 15 studies exhibited methodological imperfections to varying degrees, their strengths became apparent when juxtaposed with the weaknesses of others. Studies with more robust designs should carry greater weight in shaping the collective understanding of digital interventions (Louis et al., 2002). Nevertheless, the positive findings from these studies, though often localized in scope, offer valuable preliminary evidence for the promising potential of digital tools in educational contexts.

The last research question investigates the efficacy of digital music technology in enhancing children's skills and motivational aspects. Among all the included studies, musical literacy emerged as the most frequently measured skill, followed by performance skills, with some studies even assessing multiple sub-skills. This underscores the central role of musical literacy in music education, prioritizing the cultivation of auditory abilities and cultural awareness in early education (Buzás, 2019; Pitts, 2017). However, this does not imply that less-measured skills, such as creativity, are undervalued. In the process of learning musical skills, each sub-skill is interconnected. As reflected in the Bloom's Taxonomy framework, the progression from absorbing knowledge to creating is a robust cycle, where higher-level learning outcomes depend on a solid foundational accumulation (Anderson & Krathwohl, 2001). It also aligns with Carl Orff's pedagogical philosophy, which emphasizes an experiential approach to music learning. By integrating singing, play-based activities, and improvisation, students are encouraged to explore melodies and rhythms in an engaging environment, embodying the principle of “learning through play” (Shiyao & Noordin, 2024). Therefore, while the development of creative skills in elementary music education should not be overlooked, the foundational role of musical knowledge remains critical for students’ overall musical growth.

In synthesizing effectiveness across performance skills, theoretical skills, creative skills, and motivation, the most consistent impact was observed on motivation and engagement in music education. Most studies affirmed the motivational benefits of digital tools, which extend beyond gamification to include reflective and interactive learning experiences. This highlights the effectiveness of learning within technology-driven and entertainment-oriented digital environments, as noted by Affah (2019). However, there are concerns about whether video games actually enhance motivation to learn or only the motivation to play (Lesser, 2020). Across the 15 studies analyzed, shorter interventions were generally associated with higher levels of student motivation. However, longer interventions, such as Study 7, also demonstrated significant increases in motivation, suggesting that duration alone is not the sole determinant of motivational outcomes. As direct causal relationships between intervention duration and motivation remain challenging to establish, these findings underscore the importance of rigorous research designs. For example, Studies 13 and 14 implemented multi-condition-controlled trials with robust methodological frameworks, enabling more reliable causal inferences.

Although all studies reported either significant or modest positive impacts on measured musical skills, they generally lacked detailed descriptions of sample representativeness. This limitation was particularly evident in most studies, with Studies 4 and 5 showing moderate constraints due to sampling issues. When a digital tool encompasses a wide range of musical skills, it inevitably involves complex programming and high-level design requirements. This, in turn, demands rigorous experimental objectives and robust research designs (Renney & Gaster, 2024). Studies 7, 13, and 14 exemplify such intricate and challenging research designs, highlighting the need for methodological precision in evaluating advanced tools. Additionally, as Ivascu emphasized, tracking and evaluating application effectiveness is crucial for improving the quality and relevance of these digital tools (Ivascu et al., 2024). Supporting this notion, Studies 12 and 15, as well as 13 and 14, assessed the same digital tool across different times and experimental setups, indirectly validating its efficacy and consistency in various contexts.

It is challenging to discuss the significance of a single tool, as nearly every study incorporated multiple tools in its experiments. However, to maintain internal validity, it is crucial to explicitly detail which tools are used during interventions, how they are used, and how they interact with each other to influence the outcomes. Furthermore, such complex designs introduce challenges, including limited external validity due to short intervention durations and small sample sizes (Kostis & Dobrzynski, 2020). For example, while Studies 12, 13, 14, and 15 reported statistically significant results, they were potentially constrained by reduced statistical power and limited intervention effects. In contrast, Studies 3, 7, and 9, despite some confounding factors, demonstrated improved external validity through their longer intervention periods and larger sample sizes. Among the studies analyzed, those incorporating pre- and post-tests with control groups (e.g., Studies 2, 3, 4, 6, 7, and 9) provided more compelling evidence of significant effects. Furthermore, studies that established validity evidence, such as Studies 2, 4, 11, 13, and 14, showed greater methodological rigor and reliability, underscoring their contributions to the field.
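The link between sample size and statistical power mentioned above can be illustrated with a rough calculation. The sketch below uses a normal approximation to the power of a two-sided, two-group comparison of means; the function name, the effect size of 0.5, and the group sizes are assumptions chosen for illustration and are not values taken from any included study.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(effect_size, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-group comparison of means.

    Uses the normal approximation with equal group sizes; effect_size is a
    standardized mean difference (Cohen's d). An exact t-test calculation
    gives slightly lower values for small groups.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    # Expected value of the test statistic under the assumed effect.
    shift = effect_size * sqrt(n_per_group / 2)
    # Probability of landing in either rejection region.
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


# Hypothetical group sizes for an assumed medium effect (d = 0.5).
for n in (10, 20, 64, 100):
    print(n, round(approx_power(0.5, n), 2))
```

Under these assumptions, a classroom-sized comparison with about 20 students per group would detect a medium effect in roughly one of three attempts, whereas about 64 students per group would be needed to reach the conventional 80% level. This is why significant results from small samples warrant cautious interpretation.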

Overall, the literature highlighted that using digital tools in music education enhances students’ motivation and enjoyment of lessons. Immediate feedback, ease of use, and support for both independent learning and collaboration are among the key benefits of ICT in this field. However, several challenges related to user experience may hinder students’ learning motivation and performance, such as low ease of use, technical difficulties, low adaptability, limited functionality, and required technical assistance. Therefore, the successful improvement of musical skills relies heavily on the integration of students’ learning environments. It is crucial for students to possess the capacity to adapt to digital technologies and for educators to have strong musical expertise and ICT competencies (Peretti et al., 2025). This combination ensures that ICT-based learning is both meaningful and effective.

Implications, Suggestions for Future Research, and Limitations

This study has several implications for music education in primary classrooms. First, primary students and teachers can leverage diverse digital tools, including software, hardware, or a combination of both, to enhance musical skills and motivation. However, teachers should be mindful that while gamified tools may increase engagement in play, they do not necessarily cultivate long-term motivation for learning. When selecting tools, priority should be given to usability, students’ familiarity, and readiness for effective implementation. Tools with poor usability, such as those with steep learning curves, cluttered interfaces, or frequent technical failures, can impede student progress rather than support it. Additionally, teachers need to possess not only foundational music knowledge and skills but also adequate digital literacy. Experienced teachers are often better equipped to maximize the effectiveness of digital teaching methods. Furthermore, while combining multiple tools may seem advantageous, several studies indicate that a single, well-chosen tool can significantly enhance both performance and motivation. Thus, teachers are encouraged to begin with accessible, user-friendly tools. Through experimentation, they can determine optimal implementations.

For future research, a robust research design is foundational. Researchers must clearly define their objectives, employ rigorous methods, and ensure intervention measure reliability. While integrating multiple technologies reflects real-world classroom practices, it hinders isolating specific tools’ impacts, making it challenging for teachers to evaluate their effectiveness. Researchers should adopt carefully designed studies that address these complexities, enabling clearer insights into the effectiveness of individual tools.

This study has some limitations. First, we identified many irrelevant studies during the screening process that deviated from the search logic, suggesting inaccuracies in the search strategy or systemic database issues. We suggest that future reviews should be mindful of these concerns and develop more effective methods to improve the results obtained from search databases. Second, the assessment methods used in the included studies were limited. Most studies relied on pre- and post-test designs, which, while useful for measuring learning outcomes, do not capture real-time changes in student engagement or learning processes. This limits understanding of how digital tools influence students during actual use. Although some studies used structured observations, only a few employed coding schemes to systematically analyze behavior. Future research should use real-time data (e.g., log data, eye-tracking, continuous behavioral observations) to provide deeper insights into the effects of digital tools on learning performance and motivation. Finally, appraisal tools (MMAT and JBI checklists) revealed limited credibility in included studies due to small sample sizes, limited control groups, or unclear reporting of assessment reliability and validity. Methodological variability precluded definitive conclusions. Therefore, future reviews may benefit from focusing on studies with stronger experimental designs and validated assessment tools to improve the reliability of findings. Despite these limitations, this review offers valuable insights into the role of digital tools in music education and highlights key areas for further research and methodological improvement.

Conclusion

This study conducted a systematic review and analysis of digital tools’ usage and effectiveness in elementary music education, synthesizing evidence from 15 included studies. Several key findings emerged. First, digital tools are diverse in nature, and the integration of innovative tools with creative research designs highlights the vast potential of digital methods in education. Among these, software applications stand out due to their flexibility and multifunctionality, dominating many music intervention experiments, albeit with reliance on supporting hardware infrastructure.

Within these applications, core musical literacy and performance skills in elementary music education are prominently addressed. In terms of effectiveness, all studies reported positive impacts on students’ musical skill development, regardless of the scale or duration of the experiments. While limitations in representativeness and reliability were observed in some studies, their overall value remains undeniable. The significance of such studies lies in clarifying their specific objectives and contexts and in maximizing the effectiveness of their interventions.

Thus, this review not only provides an overview of the current application of digital tools in music education but also underscores the importance of rigorous research design. Furthermore, it offers direction for future studies to contribute effectively to the broader application and deeper exploration of digital tools in music education.

Supplemental Material

Supplemental material, sj-docx-1-mns-10.1177_20592043251363338 for The Use and Effectiveness of Digital Tools in Elementary Music Education: A Systematic Review by Liu Yihan, Tran Van Cuong, Bernadett Kiss, Tun Zaw Oo, Norbert Szabó and Krisztián Józsa in Music & Science

Footnotes

Action Editor

Jessica Pitt, Royal College of Music.

Peer Review

Two anonymous reviewers.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval

We did not collect or analyze data from students, parents, or teachers directly, but instead conducted a systematic review of published literature. Therefore, no ethical concerns arise.

Funding

University of Szeged Open Access Fund. Grant ID: 7956. This study was funded by the Scientific Foundations of Education Research Program of the Hungarian Academy of Sciences and by the Digital Society Competence Centre of the Humanities and Social Sciences Cluster of the Centre of Excellence for Interdisciplinary Research, Development and Innovation of the University of Szeged. The authors are members of the New Tools and Techniques for Assessing Students Research Group.

Institutional Review Board Statement

The study was conducted according to the guidelines of the Declaration of Helsinki and approved by the Institutional Review Board of the University of Szeged Doctoral School of Education (Reference number: 5/2025, and approval date: 3 February 2025).

Informed Consent Statement

This study does not require an informed consent statement.

Data Availability Statement

This study does not include data from human participants.

References
