Abstract

Over recent years, many researchers have developed and tested interventions to help music students practice and prepare for performances effectively. While these interventions have led to positive outcomes, their scalability is currently limited. To address this challenge, we developed PractiseWell, an online intervention to equip tertiary piano students with skills and strategies for effective practice. We used a theory- and evidence-based approach to develop the content. In designing the intervention (i.e., how the content is delivered), we drew on the person-based approach and the literature on design features from the field of healthcare. This article reports the development of PractiseWell in three parts. Part I reports a systematic review that was conducted to inform the content of the intervention. Part II reports the development of PractiseWell using the Guidance for the Reporting of Intervention Development (GUIDED) checklist. Part III describes the intervention using the Template for Intervention Description and Replication (TIDieR). We discuss implications and future directions for intervention research in the context of performance psychology for musicians.

Music students invest thousands of hours in individual practice, to hone their skills (Macnamara & Maitra, 2019). Tertiary music students (i.e., students in higher education) typically spend 20–30 hr per week on private practice (Jørgensen, 2004; Macnamara & Maitra, 2019) but many of these students do not know how to use this time effectively (McPherson et al., 2019; Miksza et al., 2018; Mornell et al., 2020). This is a concern, since quality of practice is paramount to performance quality and achievement (Duke et al., 2009; Suzuki & Mitchell, 2022; Williamon & Valentine, 2000). Furthermore, the development of effective practice methods forms an important component of holistic care for musicians’ physical and psychological health (Bird, 2013; Kegelaers & Oudejans, 2020; Matei et al., 2018; Perkins et al., 2017; Yang et al., 2021). To practice effectively, students must learn to regulate their own practice, using skills such as goal setting, time management, and self-evaluation (McPherson, 2022), yet these self-regulatory skills are rarely taught in instrumental lessons or at conservatoires (Concina, 2019; Gaunt, 2010; Koopman et al., 2007).

In response, researchers have tested interventions aimed at promoting effective practice methods and have reported positive outcomes, such as improved practice quality and increased confidence (e.g., Clark & Williamon, 2011; Hatfield, 2016). Now that the findings of studies have demonstrated the feasibility of teaching these skills, the next challenge for researchers is to make such interventions more scalable. These interventions are currently not widely available, owing to such issues as financial cost, time constraints, and the unavailability of experts to deliver them. One way to increase the availability of such interventions would be to develop and implement an online intervention. Its practical advantages would be that no expert would need to be present and the students would have flexibility in terms of time and location. Online interventions also provide a higher degree of anonymity, which may be appealing to musicians who associate receiving performance psychology support with stigma (Pecen et al., 2016; Suzuki & Pitts, 2023). From the point of view of researchers, online interventions allow a wider range of participants to be recruited and their progress, engagement, and adherence to be tracked (Miller et al., 2021).

Nevertheless, online interventions present a range of unique challenges. The main challenge pertains to engagement: in healthcare, online interventions typically have low usage and high dropout rates, and these can also lead to low effect sizes in trial studies (Eysenbach, 2005; Kohl et al., 2013; Murray et al., 2009). In the context of performance psychology interventions for musicians, recruiting and retaining sufficient participants can be a challenge, even for in-person interventions (Clark & Williamon, 2011; Suzuki & Pitts, 2023). Moreover, music students are often reluctant to invest time in activities other than physical practice as they feel that they are a waste of time, even when they understand the potential benefits (Kruse-Weber & Sari, 2019). Taken together, this suggests that recruiting and engaging music students for an online intervention could be difficult. It is therefore critical that online interventions for musicians are designed carefully to maximize user engagement and that measures are taken to minimize potential issues.

Intervention Development

Developing an intervention is a complex process involving various components, such as planning, reviewing the available evidence, and involving stakeholders (O’Cathain et al., 2019a). This process is a critical stage in an intervention study because it directly influences the potential efficacy of the intervention and could lead to a waste of resources if not conducted carefully and rigorously. However, researchers rarely report this process in publications, typically reporting only the results of pilot and trial studies. In recent years, intervention development studies describing “the rationale, decision-making processes, methods and findings which occur between the idea or inception of an intervention until it is ready for formal feasibility, pilot or efficacy testing prior to a full trial or evaluation” (Hoddinott, 2015, p. 1) have gained traction in healthcare research. Researchers have investigated ways in which the acceptability and engagement of interventions can be optimized, and various guidelines for and approaches to intervention development have been published (for a review, see O’Cathain et al., 2019b). In terms of reporting the development process, Duncan et al. (2020) conducted a consensus study with researchers and stakeholders, and developed the Guidance for the Reporting of Intervention Development (GUIDED), in the form of a checklist of 14 items that should be reported in publishing intervention development studies.

While this literature on intervention development comes from the field of healthcare, we believe that this body of knowledge is highly applicable to music performance science. By utilizing guidelines and theories for intervention development published in healthcare research, we can maximize the rigor, transparency, and efficacy of interventions developed and implemented for musicians.

Aims and Overview

The aim of this study was to develop PractiseWell, which is an online intervention designed to equip tertiary piano students with skills and strategies for effective practice and performance preparation. A secondary aim of this article is to demonstrate the value and utility of applying theories and guidelines from intervention design in healthcare to music performance science.

To develop PractiseWell, we gathered information over two phases. In the first phase, we aimed to gather information regarding the content of the intervention. To do this, we first established the theoretical and empirical basis for the content of the intervention through a review of the relevant literature and a systematic review of existing interventions. The systematic review is reported in this article. We also gathered information through interviews with conservatoire piano teachers about effective practice. This is reported elsewhere (Suzuki et al., 2024). In the second phase, we aimed to gather information on how the intervention should be designed and delivered to maximize user engagement. To do this, we consulted the literature on intervention design in healthcare and conducted an ad hoc survey with tertiary music students.

This article is organized in three parts. Part I presents the systematic review that was conducted in the first phase of the study. Part II describes the development of PractiseWell using GUIDED. Part III describes the final intervention using the Template for Intervention Description and Replication (TIDieR; Hoffmann et al., 2014), which is the method for reporting intervention content recommended by GUIDED. TIDieR is a 12-item checklist, developed by Hoffmann et al. (2014), to facilitate the reporting of intervention content and therefore improve replicability.

Part I: Systematic Review of Existing Interventions

To inform the development of PractiseWell, we conducted a systematic review of interventions for improving tertiary music students’ practice. While PractiseWell was designed for piano students, we looked at interventions for improving practice on other instruments too, since many of the skills required for effective practice should be applicable to all instruments. In this review, we investigated the following research questions:

RQ1. What types of intervention have been conducted to help tertiary music students practice effectively?

RQ2. How effective were the interventions in terms of the outcomes measured?

RQ3. What are the strengths and limitations of these interventions?

This review was carried out following guidelines set out in the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) statement (Moher et al., 2009). A protocol was created a priori, following the PRISMA protocol guidelines (Moher et al., 2015), and registered on the Open Science Framework Registries (https://doi.org/10.17605/OSF.IO/GYX53).

Methods

Inclusion Criteria

The review concerned interventions that addressed music practice, which we defined as “individually oriented self-study directed, no matter how strictly, toward attaining musical proficiency on an instrument or the voice,” after Miksza (2011, p. 52). We were interested only in individual practice, so we excluded studies of ensemble rehearsals. Since advanced musicians—such as conservatoire students—generally practice to prepare for a performance, we considered memorization and performance preparation part of music practice. We therefore included interventions that addressed these aspects in the review. However, we excluded interventions that were concerned only with performance anxiety or health. Furthermore, the intervention had to address skills for practice directly; we excluded interventions that targeted a skill or a behavior that might help practice as a secondary effect (e.g., mindfulness for effective practice; yoga for better performance). When an intervention consisted of a mixture of practice-relevant and practice-irrelevant components (e.g., health and wellbeing), we only included the study in the review if more than half of the intervention content was relevant.

Regarding the population, we were primarily interested in students studying music at a tertiary (i.e., higher education) institution. However, we also included graduate, semi-professional, and professional musicians, as we assumed that these groups had similar skills and used similar strategies for effective practice. We also restricted participants to classical musicians, as goals and strategies for practice and performance might differ across genres. We only included studies with participants at different levels (e.g., some tertiary and some pre-tertiary students) or in different genres (e.g., some classical and some jazz musicians) if at least half of the participants met these inclusion criteria.

The remaining inclusion criteria were the availability of the full text and English as the language of publication. Eligible publication types were empirical studies reported in peer-reviewed articles, doctoral theses, book chapters, and conference proceedings. There was no restriction on sample size or study strategy.
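The inclusion criteria described above amount to a screening rule that can be written down explicitly. The following is a minimal sketch of that rule, assuming each candidate study has already been coded by hand; the `Candidate` class, its field names, and the publication-type labels are hypothetical and are not taken from the review protocol.

```python
from dataclasses import dataclass

# Publication types named in the inclusion criteria (labels are illustrative)
ELIGIBLE_TYPES = {
    "peer-reviewed article",
    "doctoral thesis",
    "book chapter",
    "conference proceedings",
}


@dataclass
class Candidate:
    """Hand-coded screening record for one study (hypothetical structure)."""
    individual_practice: bool          # False for studies of ensemble rehearsals
    targets_practice_directly: bool    # False for e.g. mindfulness or yoga as an indirect route
    relevant_content_share: float      # proportion of intervention content relevant to practice
    eligible_participant_share: float  # proportion who are tertiary-or-above classical musicians
    full_text_available: bool
    language: str
    publication_type: str


def is_eligible(c: Candidate) -> bool:
    """Apply the stated criteria; the thresholds follow the wording in the text."""
    return (
        c.individual_practice
        and c.targets_practice_directly
        and c.relevant_content_share > 0.5        # "more than half" of the content
        and c.eligible_participant_share >= 0.5   # "at least half" of the participants
        and c.full_text_available
        and c.language == "English"
        and c.publication_type in ELIGIBLE_TYPES
    )


# Example: a mixed programme whose content is exactly half practice-related is excluded
mixed = Candidate(True, True, 0.5, 1.0, True, "English", "peer-reviewed article")
print(is_eligible(mixed))
```

Note the asymmetry between the two thresholds: content must be more than half relevant, whereas participants need only be at least half eligible.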

Search Procedure

Studies were identified from three sources: (a) a search of selected online databases (see Appendix A in the Supplementary Material for details), (b) a manual search of selected peer-reviewed journals, and (c) the reference list of a systematic review of studies related to music practice (How et al., 2022). We also conducted backward- and forward-cite searches by looking at the lists of references of identified studies and the cited-by list on Google Scholar, respectively.

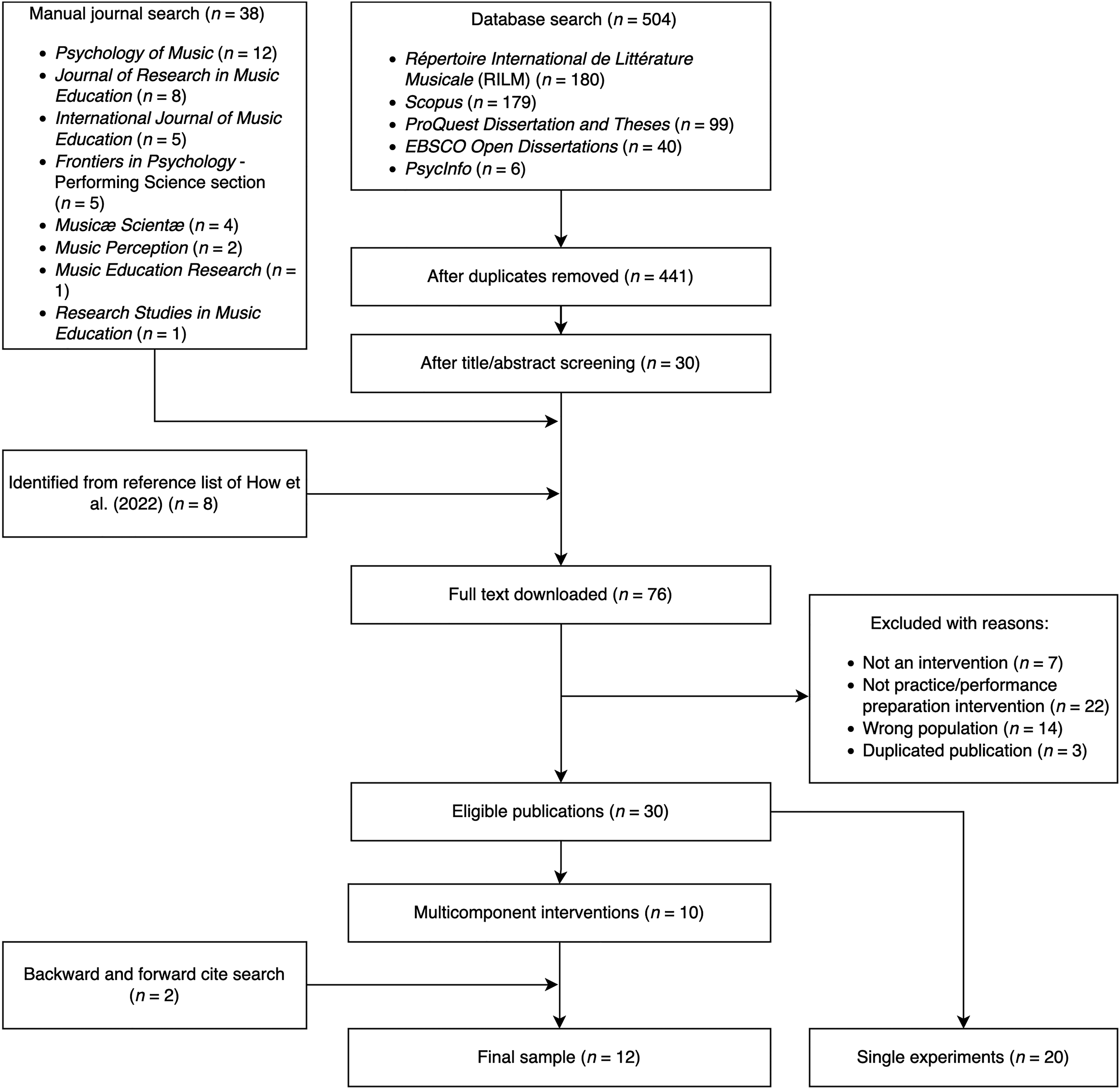

Figure 1 is a flowchart of the search and selection process. We conducted the searches in October and November 2021. The initial database search yielded 504 results. After removing duplicates and screening titles and abstracts, 30 publications remained. Another 38 publications were identified through the manual search, while eight more were identified through the reference list of the systematic review (How et al., 2022). This resulted in 76 full texts that were assessed for eligibility, of which 30 publications were deemed eligible for inclusion. The search and selection process was carried out primarily by the first author, while borderline cases were discussed and resolved in conjunction with the other authors.

Figure 1. PRISMA flow chart.

To gain an overview of the identified studies, we initially extracted the following information: main topic or strategy under investigation, study design and strategy, duration and frequency of intervention, and main outcomes measured (see Appendix B in the Supplementary Material). It appeared from this preliminary coding that the identified studies could be grouped into two broad categories: single experiments that tested the effect of a specific strategy (e.g., mental practice) and multicomponent interventions that delivered a program designed to teach a range of skills and strategies. Single experiments were often conducted in controlled settings over relatively short periods of time, ranging from one session to several days. In contrast, multicomponent interventions tended to involve a larger number of sessions held over several weeks. Of the 30 studies identified, 20 were categorized as single experiments and 10 as multicomponent interventions. In this review, we focused only on the multicomponent interventions, as they were more pertinent to the development of PractiseWell. Two additional articles were identified through forward and backward searches, yielding a total of 12 publications for inclusion. Each publication reported exactly one study.
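The counts reported for the search and selection process can be checked with simple arithmetic. The sketch below only restates figures given in the text; the variable names are illustrative.

```python
# Records reaching full-text assessment, by source
database_after_screening = 30   # left from 504 database results after de-duplication and screening
manual_search = 38
review_reference_list = 8
full_texts_assessed = database_after_screening + manual_search + review_reference_list

# Outcome of the eligibility assessment and preliminary coding
eligible = 30
single_experiments = 20
multicomponent = 10
assert single_experiments + multicomponent == eligible

# Multicomponent interventions plus the two found through citation searches
citation_search_additions = 2
included = multicomponent + citation_search_additions

print(full_texts_assessed, included)  # prints "76 12"
```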

Data Collection

The following information was extracted from these 12 studies: study strategy and design; participant characteristics; summary of intervention content; rationale for content, including theoretical basis; outcomes measured and measurement methods utilized; follow-up measures, if any; delivery mode; main findings; and participant feedback on the course. Furthermore, each study was rated according to the level of detail (low, medium, or high) provided by the authors regarding the intervention content (Table 1 gives a description of each category). The first author rated all the studies, after which 50% of studies were chosen randomly and rated by the second and third authors (25% each). Any disagreement between the two sets of ratings was resolved through discussion between the three authors.

Table 1. Criteria for determining level of detail of intervention content provided.
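The double-rating procedure described above (a random 50% of studies, split equally between the second and third authors) can be sketched as follows. This is an illustration only: the study identifiers, the rater labels, and the fixed seed are assumptions, and the text does not state how the random selection was actually performed.

```python
import random


def assign_second_ratings(study_ids, raters, fraction=0.5, seed=0):
    """Randomly pick a fraction of studies and split them evenly between raters."""
    rng = random.Random(seed)  # fixed seed so the allocation can be reproduced
    n_selected = round(len(study_ids) * fraction)
    selected = rng.sample(study_ids, n_selected)
    # Deal the selected studies out in turn so each rater receives an equal share
    return {rater: selected[i::len(raters)] for i, rater in enumerate(raters)}


studies = [f"study_{i:02d}" for i in range(1, 13)]  # placeholder IDs for the 12 studies
allocation = assign_second_ratings(studies, ["second_author", "third_author"])
for rater, assigned in allocation.items():
    print(rater, assigned)
```

With 12 studies, each second rater receives three (25% of the total), matching the proportions reported in the text.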

Results

Table 2 gives an overview of the 12 intervention studies. Participants in all but one study were tertiary students; the exception was the study of Kegelaers and Oudejans (2020), in which participants were professional orchestral players or fellows of an orchestral academy. Ten studies involved interventions designed for musicians, regardless of the instrument they played; one was for pianists only (Osborne et al., 2021); and one was for woodwind or brass players only (Miksza, 2015). The sample size ranged from one to 31 participants. In terms of study strategy, five studies were qualitative, four were multistrategy, and three were quantitative.

Table 2. Overview of studies included in systematic review.

Note. D = doctoral student; grp = group sessions; ind = individual sessions; PG = postgraduate students; prof. = professional musicians; PST = psychological skills training; SRL = self-regulated learning intervention; UG = undergraduate students.

RQ1. What Types of Intervention Have Been Conducted to Help Tertiary Music Students Practice Effectively?

Of the 12 studies identified, psychological skills training (PST; Weinberg & Gould, 2015) was implemented in six, while interventions based on self-regulated learning (SRL; Zimmerman, 2000) were tested in the other six. Psychological skills were implemented in the PST interventions to enhance both practice and performance preparation. All these interventions included mental practice or imagery and concentration. Other skills commonly addressed included goal setting (n = 5), arousal regulation (n = 5), and self-talk (n = 4). Time management was addressed in three studies, while resilience and cognitive restructuring or positive thinking were addressed in two studies.

The SRL interventions were all based, albeit to varying degrees, on the three-phase cyclical model of SRL (Zimmerman, 2000), which conceptualizes learning as a process that occurs over three phases (forethought, performance, reflection) with each phase involving distinct cognitive, metacognitive, emotional, and motivational subprocesses. Five of the six SRL interventions incorporated individual coaching, where the researcher acted as a coach and helped the students reflect on their practice and find ways to regulate it better. In these coaching sessions, students were often encouraged to take an active role in the process rather than being passive receivers of information. In some interventions, the coaching sessions took place as the researcher and the student watched a video recording of the student’s practice together (Burwell & Shipton, 2013; Pike, 2016, 2017). Another common element of these interventions was the use of practice journals (Burwell & Shipton, 2013; Miksza et al., 2018; Osborne et al., 2021; Pike, 2017) for data collection, participant reflection, or both. Practice journals were generally used to supplement the intervention or data, except in one study (Osborne et al., 2021), in which they were a core element.

The SRL intervention by Miksza (2015) did not involve individual coaching sessions but instead utilized an instructional video. The video contained information and demonstrations of various specific practice strategies (both groups), and SRL strategies (experimental group only). Demonstrations were performed by postgraduate saxophone students for woodwind players and by postgraduate trombone students for brass players.

Of the six SRL interventions, two studies (Miksza, 2015; Miksza et al., 2018) incorporated the teaching of specific practice strategies identified in the literature, such as chaining, whole-part-whole, slow practice, and repetition with variation (e.g., varied rhythm, varied articulation, buzzing, whistling). In addition, Burwell and Shipton (2013) used 15-min block schedules, in which students chose a specific task to work on for 15 min.

Authors of studies involving PST interventions rarely provided precise accounts of the specific strategies or methods used for implementing particular skills. A notable exception was Osborne et al. (2014), who referred to a centering technique, used for arousal regulation and attention focusing, and described it in step-by-step detail. This technique was also reported in two other studies (Cohen & Bodner, 2019; Hatfield, 2016). Furthermore, Kegelaers and Oudejans (2020) implemented a strategy of dividing practice sessions into shorter manageable blocks, like that described by Burwell and Shipton (2013).

Delivery Mode

In five studies, the intervention was delivered in a combination of group and individual sessions. Generally, group sessions involved the provision of information, activities designed for students to practice new skills, and group discussions, while individual sessions provided participants with an opportunity to share personal experiences and have the program tailored to their needs. In two studies, only group sessions were implemented. Osborne et al. (2014) conducted group sessions with all participants, as well as sessions for groups of players of the same instrument family (e.g., brass). Four studies used individual sessions only and these all involved individual coaching for practice.

All interventions were delivered by the researchers who authored the articles, other than the intervention in the form of a video described by Miksza (2015). In several instances, the researcher who delivered the intervention was a musician with experience in a relevant field, such as clinical or sport psychology (Clark & Williamon, 2011; Cohen & Bodner, 2019; Hatfield, 2016). In contrast, Kegelaers and Oudejans (2020) indicated that they had a background in sport and performance psychology, but not music.

RQ2. How Effective Were the Interventions in Terms of the Outcomes Measured?

Outcomes reported for more than one study were participants’ experiences (n = 8), practice quality (n = 7), performance quality (n = 4), mental skills (n = 3), self-efficacy (n = 2), and music performance anxiety (MPA; n = 2). We looked at the two outcomes that were reported for at least half the studies (i.e., participants’ experiences and practice quality).

Participants’ Experiences

All studies using participants’ experiences as an outcome obtained this information using qualitative data collection methods, such as interviews, focus groups, and open-ended written responses. Participants generally reported that interventions were useful, leading to such outcomes as increased self-awareness of practice and performance preparation (Clark & Williamon, 2011; Kegelaers & Oudejans, 2020; Miksza et al., 2018); improved practice efficiency (Clark & Williamon, 2011; Kegelaers & Oudejans, 2020; Pike, 2017); and increased self-efficacy or confidence (Clark & Williamon, 2011; Hatfield, 2016). Specific aspects that participants found helpful included the setting of specific goals (Hatfield, 2016; Miksza et al., 2018); watching their own practice videos with a researcher (Burwell & Shipton, 2013; Pike, 2016); and the use of short blocks of practice (Burwell & Shipton, 2013; Kegelaers & Oudejans, 2020). They also appreciated group settings because these facilitated peer support or provided a low-stress performance context in which they could try out newly learned strategies (Burwell & Shipton, 2013; Clark & Williamon, 2011; Kegelaers & Oudejans, 2020).

In several studies, participants provided feedback to researchers about how the intervention could be improved. Participants in the study of Clark and Williamon (2011) would have liked the sessions to have involved less discussion of research findings and more practical applications of skills, including the integration of performance and audition settings, and examples from musicians. The transitioning-elite musicians (fellows of an orchestral academy) who took part in the study of Kegelaers and Oudejans (2020) reported that the session provided limited novelty, in that they were not provided with information that was new to them. These transitioning-elite musicians also reported that they would have preferred the sessions to be delivered by someone with a musical background.

Practice Quality

Practice quality was measured in seven studies through self-report measures (n = 5) or observational methods (n = 3). Self-report measures included questionnaires, practice diaries, and microanalysis. In three studies, questionnaires (Clark & Williamon, 2011; Hatfield, 2016; Osborne et al., 2014) were employed and statistically significant improvements were found using relevant scales or subscales after the intervention. Practice diaries were used in two small-scale studies (Miksza et al., 2018; Osborne et al., 2021). Although improvements were found in some aspects of self-regulated practice, this finding was not derived from the results of statistical tests. Miksza et al. (2018) conducted microanalysis by having a researcher observe participants’ practice and ask “questions that were strategically timed to elicit information about the forethought, performance, and self-reflection phases of self-regulated learning” (p. 301). Differences in pre- and post-intervention microanalyses were reported to be “subtle” (p. 311), but participants set more detailed goals and reported a more varied repertoire of strategies. The participant who started with the lowest score for self-regulation benefited the most from the intervention.

Observational methods of measuring practice quality involved the analysis of video recordings of participants’ practice. Miksza (2015) divided practice sessions into segments and documented the types of practice strategy employed and the main objective for each segment. He found that the experimental group had focused more than the control group on musical objectives after the intervention. Pike (2016) analyzed practice videos but did not report in detail how they were analyzed or how participants’ practice changed over time. Miksza et al. (2018) analyzed video recordings in the same way as Miksza (2015), but provided little detail of the analysis other than to report that findings were consistent with the microanalysis.

RQ3. What Are the Strengths and Limitations of These Interventions?

The strengths and limitations of the reviewed studies are discussed in relation to three issues: study design, outcome measures, and intervention content.

Study Design

Of the 12 studies, only four employed a control group. Of these, only Miksza (2015) and Pike (2016) randomized participants into experimental and alternative-treatment control groups. In the study of Miksza (2015), both groups were instructed on specific practice strategies but the experimental group were also instructed on SRL strategies. The performance quality of both groups was found to have improved after the intervention, but this improvement was significantly greater for the experimental group. The control group in the study of Pike (2016) differed from the experimental group in that they did not receive coaching, but they still video-recorded their practice sessions and verbalized their thoughts out loud, which one participant reported was helpful. The control groups in both studies therefore benefited to some extent from the control condition, highlighting the importance of including control groups.

Outcome Measures

As seen in the results for RQ2, participants’ experiences in the form of qualitative data were the most frequently reported outcome among the reviewed studies. While this type of data is typically rich, capturing individual experiences in the real world, it is severely limited because it is highly vulnerable to bias. For example, if music students participated in an intervention expecting to benefit from it and the researcher who conducted the intervention asked what they had thought about it, the students would be unlikely to report that they had not benefited from it. Many studies employed one-to-one interviews or focus groups that were presumably conducted by the researcher who had designed or administered the intervention, introducing additional biases. Furthermore, expecting an intervention to be beneficial increases the likelihood of benefiting from it (Linde et al., 2007); all the more so if the participant chose freely to take part in it (Geers & Rose, 2011). Consequently, the use of qualitative methods in studies in which participants were volunteers could have inflated their reported opinions of the interventions.

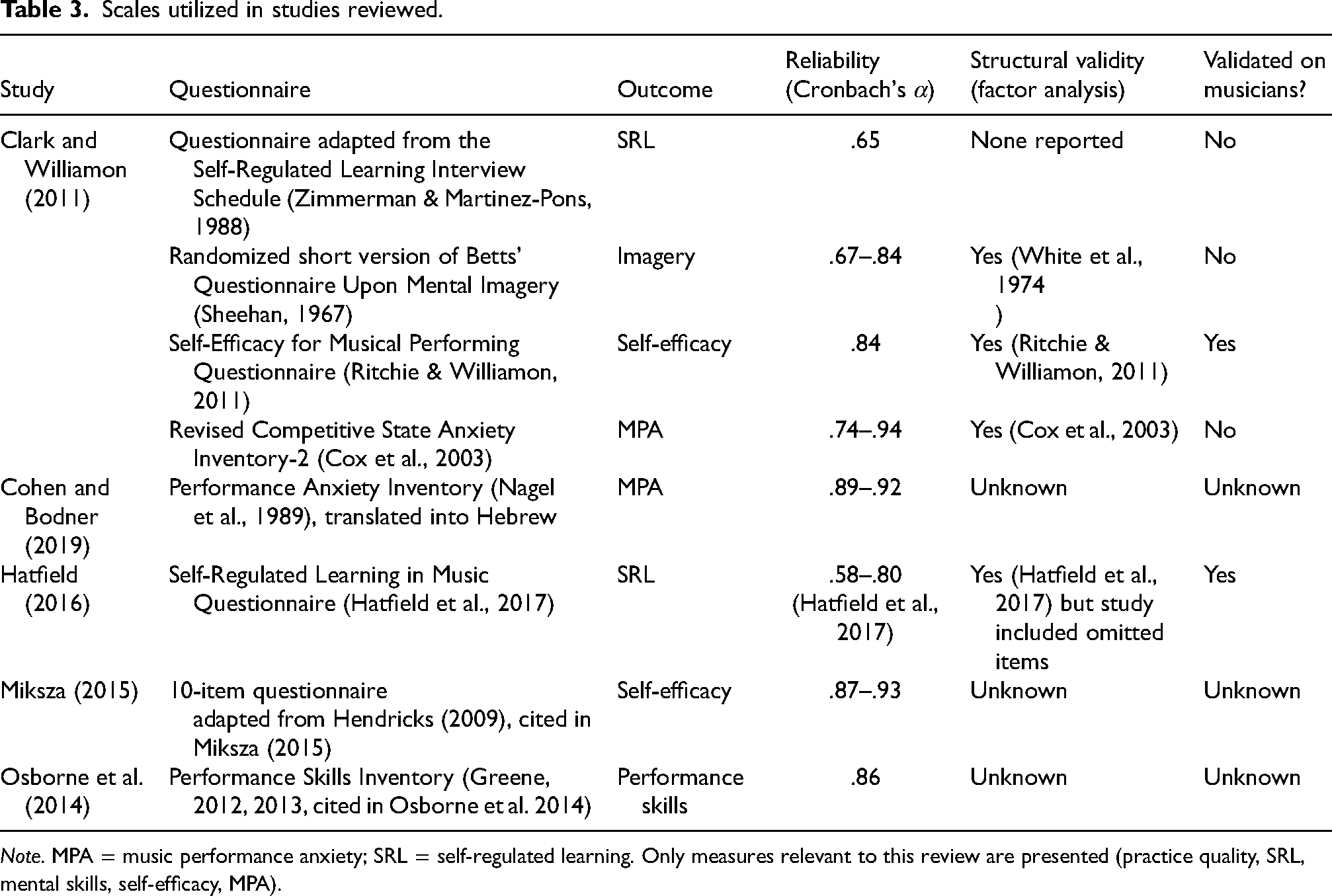

The use of scales as outcome measures was also problematic (Table 3). While most scales had acceptable internal consistency (α > .70; Boateng et al., 2018), only half of the scales had known factor structures, and only two of these had been validated for musicians. However, Hatfield (2016) utilized subscales that were shown to cross-load; these were therefore omitted in later work (Hatfield et al., 2017). Thus, only one of the eight scales (Self-Efficacy for Musical Performing; Ritchie & Williamon, 2011) was known to be a reliable and valid scale for use with musicians. This is an important issue, because if the validity of the measurement tools is called into question, the validity of the entire study is jeopardized.

Table 3. Scales utilized in studies reviewed.

Note. MPA = music performance anxiety; SRL = self-regulated learning. Only measures relevant to this review are presented (practice quality, SRL, mental skills, self-efficacy, MPA).
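The internal-consistency criterion referred to above (α > .70) is based on Cronbach's alpha, which compares the sum of the item variances with the variance of respondents' total scores. The following minimal sketch computes it with the standard formula; the function name and the response data are invented for illustration and do not come from any of the reviewed studies.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a scale.

    item_scores: one list per item, each holding one score per respondent.
    """
    k = len(item_scores)
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    sum_item_variances = sum(variance(item) for item in item_scores)
    # Total score per respondent, summed across items
    totals = [sum(scores) for scores in zip(*item_scores)]
    return (k / (k - 1)) * (1 - sum_item_variances / variance(totals))


# Made-up responses: three items answered by five respondents
responses = [
    [2, 3, 4, 5, 4],
    [3, 3, 5, 5, 4],
    [2, 4, 4, 5, 5],
]
alpha = cronbach_alpha(responses)
print(round(alpha, 3), alpha > 0.70)
```

A high alpha indicates only that the items covary; it says nothing about factor structure or validity for a particular population, which is the distinction drawn in the paragraph above.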

Intervention Content

Studies were scored as reporting high, medium, or low levels of detail regarding the intervention content (Table 2). Five studies were categorized as providing low levels of detail and five as providing medium levels of detail. In only two studies was the intervention content reported in sufficient detail for the study to be replicated (Miksza, 2015; Miksza et al., 2018). In these studies, the full script of the intervention content was included as supplementary material. Additionally, Osborne et al. (2021) provided a detailed description of the practice diary utilized, but not of the one-to-one session. For the other studies, too little information was provided for the interventions to be replicated. Furthermore, none of the studies provided details about how the interventions were developed or the rationale for choices regarding intervention content or delivery method.

Summary

In this systematic review, we aimed to gain an overview of existing intervention studies designed to equip tertiary music students with skills for effective practice, to inform the development of PractiseWell. Twelve studies were identified and reviewed, comprising six studies that implemented PST and six studies that implemented an SRL intervention.

From the results of this review, we identified the following strategies to include in PractiseWell:

setting specific goals,

watching video recordings of own practice,

time blocking.

We also identified the following as important features to include in the interventions:

examples and demonstrations,

practical activities.

However, the lack of detailed information about the intervention content in most studies presented a challenge for our project, as it was unclear exactly how skills were taught or implemented. While the reviewed studies generally reported positive outcomes, we found that the evidence for the efficacy of the interventions was weak, owing to such issues as the lack of randomized control groups, reliance on participants' experiences as an outcome measure, and the use of unvalidated scales.

Nevertheless, a promising characteristic of some of the interventions was their strong grounding in theory, namely the theory of SRL (Hatfield, 2016; Hatfield & Lemyre, 2016; Miksza et al., 2018; Osborne et al., 2021; Pike, 2017). Interventions with theoretical bases have several advantages, such as allowing researchers to investigate why interventions are effective (or ineffective), explore mechanisms of change, and generate and test hypotheses (Michie & Prestwich, 2010). For example, in some studies it was found that the setting of specific goals led to a variety of positive outcomes, including improved concentration and self-efficacy, which led to stronger feelings of satisfaction and adaptive affective state (Hatfield, 2016; Miksza et al., 2018). Such findings are important for future interventions and can also contribute to the understanding of effective practice and expertise development, which can ultimately lead to invaluable knowledge for instrumental teachers that they can apply in the context of one-to-one tuition.

Part II: Development of PractiseWell

The development of PractiseWell is described next, following the GUIDED guidelines (Duncan et al., 2020). The GUIDED checklist can be found in Appendix C in the Supplementary Material.

Approach to Intervention Development

Two aspects of PractiseWell were developed: its content (the actual information and activities included in the intervention) and its design (how the content is presented). While these two aspects are described separately in the following section, it should be noted that they were developed in parallel and influenced each other constantly. The content of PractiseWell was developed using a theory- and evidence-based approach. To develop the design of the intervention, we started with the question, “How can we maximize user acceptability and engagement?” and turned to the healthcare field for potential answers, as there is a growing body of literature in this field on intervention development and design (e.g., O’Cathain et al., 2019b). Ultimately, the design of PractiseWell was driven by two concepts from healthcare research: design features (Morrison et al., 2012) and the person-based approach (Yardley et al., 2015a).

Design features are characteristics of an online intervention that facilitate the delivery of its content (Morrison et al., 2012). These features are unrelated to the actual content of the intervention; for example, online interventions for depression, obesity, and mathematical skills have different content but may all involve sending weekly email reminders to participants with motivational messages. Design features can influence users’ engagement with an intervention, which can in turn influence its effect (Perski et al., 2017).

The person-based approach prioritizes an in-depth understanding of the attitudes, beliefs, needs, and situations of the target population to maximize the acceptability and feasibility of an intervention (Yardley et al., 2015a, 2015b). One way in which this can be achieved is by developing guiding principles that aim to address potential challenges and barriers that the target population might face when completing the intervention.

Sources of Evidence and How They Informed the Development of PractiseWell

For the Content of PractiseWell

The socio-cognitive theory of SRL and its cyclical three-phase model (Zimmerman, 2000) was used as the theoretical basis for the content of PractiseWell. The theory of SRL has been used extensively to study and understand effective practice (McPherson, 2022; McPherson & Zimmerman, 2011; Varela et al., 2016) and was the theoretical basis of half the interventions identified in the systematic review.

In addition to the systematic review reported previously, the following sources of information were consulted to identify specific components to incorporate in PractiseWell: (a) a review of the literature on effective practice, (b) a review of the literature on SRL interventions for academic studies, and (c) semistructured interviews with 11 conservatoire piano teachers about their experiences of effective practice and teaching practice strategies to conservatoire students (reported in Suzuki et al., 2024). From these sources, we produced a list of the skills and strategies to be included in PractiseWell, which is presented in Table 4. Some of these are general self-regulatory skills applied to music practice (e.g., goal setting), while others are specific to music practice (e.g., performance preparation).

Table 4. Skills and strategies to be included in PractiseWell.

In addition, SRL served as one of the theoretical frameworks for maximizing user engagement. One of the reasons that online interventions are rarely used is that participants are unmonitored and self-directed, and therefore need to be sufficiently motivated and organized to complete the intervention (Broadbent et al., 2020). In other words, if participants are to benefit from an intervention, they already need to be able to regulate their own learning. We call this meta self-regulation (i.e., the ability to use self-regulation skills to take part in an intervention designed to teach or enhance them).

The review of literature reporting SRL interventions for academic studies was used to generate the following principles for promoting meta self-regulation in PractiseWell:

Provide guidance for managing learning on the course (e.g., encourage participants to set aside a regular day and time to work through the intervention each week).
Include activities to facilitate meta self-regulation (e.g., ask participants to set goals for the course) (Bellhäuser et al., 2000).
Incorporate metacognitive reflection: explain how and why the strategies presented can be useful (e.g., explain how specific goals can lead to more effective practice) (Dignath et al., 2008; Dignath & Büttner, 2008).

For the Design of PractiseWell

Design Features

We reviewed the literature on the design features of online interventions in healthcare for the purpose of identifying key design features to incorporate in PractiseWell. Our review was guided by the framework proposed by Morrison et al. (2012), which identified 11 types of design feature. We supplemented the findings of this review with evidence from the music performance psychology literature and an ad hoc survey in which 25 tertiary music students reported their preferences for an online course on effective practice (see Appendix D in the Supplementary Material for the characteristics of this sample). The final list of design features is presented in Table 5, along with a summary of the findings and an explanation of how each feature was implemented.

Table 5. Design features to be incorporated in PractiseWell.

The Person-Based Approach: Guiding Principles

To develop guiding principles for PractiseWell, we reviewed the literature on performance psychology interventions for musicians to identify the needs of tertiary piano students and the potential challenges they face. We then formulated (a) design objectives to address the needs and challenges that were identified in this review and (b) intervention features aiming to meet these objectives (Table 6). Some of these intervention features were directly drawn from the findings of the systematic review and optimal design features.

Table 6. Guiding principles for PractiseWell.

Part III: Description of PractiseWell

The resulting intervention is described next, following the TIDieR checklist. All materials for the intervention can be found at https://osf.io/yz58j/?view_only=3ebee3555dc24e5aad73a0964803391d.

Overview

PractiseWell is a self-paced, online intervention that aims to equip tertiary piano students with skills and strategies for effective practice and performance preparation. It is based on the socio-cognitive theory of SRL (Zimmerman, 2000) and addresses self-regulatory skills to help music students plan, monitor, and reflect on their practice, so that they can be empowered to become autonomous practicers. It is presented to participants as a “course” rather than an “intervention”.

Content

Table 7 provides an overview of the content and activities that form each module. The content was loosely structured according to the three phases of SRL, which were labeled “before practice”, “during practice”, and “after practice” (Bellhäuser et al., 2000). After an introductory Week 1 module, Week 2 and 3 modules address the “before practice” phase by discussing goal setting and time management. Week 4 and 5 modules map onto the “during practice” phase by looking at specific practice strategies, concentration, and self-monitoring. The Week 6 module addresses self-evaluation and therefore corresponds to the “after practice” phase. The Week 7 module addresses skills for performance preparation, while in Week 8 participants can choose a module on skills for either (a) memorization or (b) further performance preparation. The final Week 9 module aims to address broader ideas, such as motivation and artistic identity.

Table 7. Summary of PractiseWell content and activities.

Materials and Delivery

PractiseWell is fully self-paced, meaning that participants work through the content independently. It is designed to be completed without any guidance or additional support, although there is scope for the intervention to be supplemented by individual or group sessions led by an expert. Each of the nine modules is designed to be completed in a week. The modules are drip-fed to participants, one per week. The entire intervention lasts 9 weeks.
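The drip-feed schedule described above can be sketched in a few lines of Python. This is an illustrative sketch only, not the LearnPress configuration used for PractiseWell: the function name `modules_available` and the rule that Module 1 unlocks on the enrolment date, with one further module unlocking every 7 days, are assumptions made for the example.

```python
from datetime import date

TOTAL_MODULES = 9      # nine weekly modules, as described in the text
DAYS_PER_MODULE = 7    # assumption: one module unlocks every 7 days


def modules_available(enrolled_on: date, today: date) -> int:
    """Return how many modules a participant can access on `today`.

    Assumes (hypothetically) that Module 1 unlocks on the enrolment date
    and that each later module unlocks 7 days after the previous one.
    """
    days_elapsed = (today - enrolled_on).days
    if days_elapsed < 0:
        return 0  # the participant has not enrolled yet
    unlocked = days_elapsed // DAYS_PER_MODULE + 1
    # Never report more modules than the course contains
    return min(unlocked, TOTAL_MODULES)
```

Under these assumptions, a participant who enrolled eight days ago would see the first two modules, and all nine modules would be open from the start of the ninth week onwards.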

The intervention is available through a website where users can create an account to log in (Figure 2). The website was created in WordPress, using the LearnPress plugin and Eduma theme. Plugins are available on WordPress to track participants’ usage of the intervention (e.g., time spent on the website, number of modules completed), which could be used as a measure of adherence in future trial studies.

Figure 2. Screenshot of PractiseWell website.
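As a hedged illustration of how the usage data mentioned above might be turned into an adherence measure, the sketch below summarizes one participant's usage as the proportion of modules completed and the total time spent on the website. The record format, the field names, and the definition of adherence as modules completed divided by nine are assumptions made for the example. They do not correspond to the output of any specific WordPress plugin or to a measure defined in this article.

```python
from dataclasses import dataclass

TOTAL_MODULES = 9  # nine weekly modules, as described in the text


@dataclass
class UsageRecord:
    """Hypothetical usage log for one participant."""
    participant_id: str
    completed_modules: set[int]      # module numbers marked as complete
    minutes_per_visit: list[float]   # time on the website for each visit


def adherence_summary(record: UsageRecord) -> dict[str, float]:
    """Summarize usage as proportion of modules completed and total minutes."""
    # Ignore module numbers outside 1..9 (e.g., logging errors)
    valid = {m for m in record.completed_modules if 1 <= m <= TOTAL_MODULES}
    return {
        "proportion_completed": len(valid) / TOTAL_MODULES,
        "total_minutes": sum(record.minutes_per_visit),
    }
```

A future trial could choose a different operationalization (e.g., a minimum viewing time per module); the point of the sketch is only that both indicators named in the text can be derived from simple per-participant logs.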

Each module is delivered via three to five short videos ranging in duration from 1 to 8 min. The videos are presentation-style (i.e., animated slides with a voice-over; Figure 3(a)). For musical demonstrations, the score is shown while the audio recording is played (Figure 3(b)). The course comes with a supplementary PDF workbook (Figure 3(c)), which contains instructions for the activities, as well as space to write down responses where appropriate. The workbook is available as an electronic version that can be filled in and as a print-ready version. Each module also comes with a separate downloadable PDF summary sheet (Figure 3(d)).

Figure 3. Examples: (a) videos; (b) musical demonstration in videos; (c) workbook; (d) weekly summary sheets.

All of the materials, including the voice recordings and musical demonstrations, were created by the first author, who is also a classically trained pianist. The presentation slides were created using Microsoft PowerPoint, the workbook and worksheets were prepared using Canva, and the audio and video files were edited using Audacity and iMovie, respectively.

It is planned that PractiseWell will be accompanied by a practice diary app, which is still under development. The app will allow users to track their routine practice sessions on their smartphones. It could also act as a data collection tool in future evaluation studies.

General Discussion

In this article, we describe the development of PractiseWell, an online intervention designed to teach tertiary piano students the skills and strategies needed for effective practice and performance preparation. The resulting intervention was based on the three-phase cyclical model of SRL (Zimmerman, 2000) and consisted of nine weekly modules, covering a wide range of skills and strategies. The content was delivered primarily through videos and featured demonstrations and practical activities. The self-paced, online nature of the intervention means that it is highly scalable, compared with traditional face-to-face sessions, but it also comes with the challenge of potentially low usage. We therefore designed PractiseWell to maximize user engagement. However, it will remain unclear how effective our measures for promoting user engagement are until they are tested with actual users. The next stage of the project is to pilot the intervention to assess its acceptability and revise it according to feedback from participants. This intervention could also be adapted in future for groups of musicians other than tertiary piano students (e.g., players of other instruments, in different age groups, and with different levels of expertise).

We have also demonstrated how guidelines from healthcare research can be applied to interventions in music performance science. We have described the process whereby we developed the intervention, using GUIDED, and the final content of the intervention, using TIDieR. We believe that these guidelines have allowed us to share our development process in a detailed and transparent manner. Our systematic review of the literature revealed that most intervention studies have not reported the development process or the content of the intervention in detail. Sharing the intervention content is critical as (a) it allows other researchers to replicate the intervention study; and (b) it allows for the implementation of the intervention outside research contexts (e.g., in conservatoires). Additionally, reporting the intervention development process can help to increase the transparency of the study and allow fellow researchers planning to develop other interventions to learn from the findings (Hoddinott, 2015).

The development of PractiseWell was informed by approaches to intervention development in healthcare research, namely the person-based approach and optimal design features for online interventions. These approaches allowed us to design PractiseWell in such a way as to maximize user engagement. Given that low participation and engagement rates can be an issue in intervention studies with music students (Clark & Williamon, 2011; Suzuki & Pitts, 2023), user engagement is a topic that urgently needs to be investigated further in music performance psychology. Even if researchers and institutions provide useful interventions and training programs for music students, these efforts are wasted if the students are not willing to use them. Therefore, it is vital that future research focuses on understanding what music students want and what would make them more likely to engage with such programs.

Conclusion

In this study, we developed an online intervention, PractiseWell, designed to equip tertiary piano students with skills and strategies for effective practice and performance preparation. To our knowledge, this is the first study to develop such an intervention. We have demonstrated the utility and importance of reporting the content and the development process of interventions in detail, and encourage other researchers to do so in future as we believe that this is a necessity if research in music education, psychology, and performance science is to create effective and positive changes for musicians.

Supplemental Material

Supplemental material (sj-pdf-1-mns-10.1177_20592043241262612, sj-pdf-2-mns-10.1177_20592043241262612, sj-pdf-3-mns-10.1177_20592043241262612, and sj-docx-4-mns-10.1177_20592043241262612) for Developing an Online Intervention to Equip Tertiary Piano Students With Skills and Strategies for Effective Practice by Akiho Suzuki, Jane Ginsborg, Michelle Phillips and Zoe Franklin in Music & Science

Footnotes

Action Editor

Eckart Altenmüller, Hochschule für Musik, Theater und Medien Hannover, Institut für Musikphysiologie und Musikermedizin.

Peer Review

Adina Mornell, University of Music and Performing Arts Munich.

Hannah Losch, Hochschule für Musik Theater und Medien Hannover.

Contributorship

AS conceived and carried out the project. JG, MP, and ZF supervised the project. AS wrote the first draft of the manuscript. All authors reviewed and edited the manuscript and approved the final version.

Data Availability

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval

Conservatoires UK Research Ethics Committee approved the questionnaire study (Reference number: CUK/SF/2021-22/16).

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Arts and Humanities Research Council, UK.

Note

1. By online interventions, we mean self-paced interventions, where participants complete the intervention independently. We are not considering interventions that are led by practitioners in real time but are delivered online.

Supplemental Material

Supplemental material for this article is available online.

References
