Abstract

Although dancing often takes place in social contexts such as a club or party, previous study of such music-induced movement has focused mainly on individuals. The current study explores music-induced movement in a naturalistic dyadic context, focusing on the influence of personality, using five-factor model (FFM) traits, and trait empathy on participants’ responses to their partners. Fifty-four participants were recorded using motion capture while dancing to music excerpts alone and in dyads with three different partners, using a round-robin approach. Analysis using the Social Relations Model (SRM) suggested that the unique combination of each pair caused more variation in participants’ amount of movement than did individual factors. Comparison with self-reported personality and empathy measures provided some preliminary insights into the role of individual differences in such interaction. Self-reported empathy was linked to greater differences in amount of movement in responses to different partners. When looking at males only, this effect persisted for the whole body, head, and hands. For females, there was a significant relationship between participants’ Agreeableness (an FFM trait) and their partners’ head movements, suggesting that head movement may function socially to indicate affiliation in a dance context. Although consisting of modest effect sizes resulting from multiple comparisons, these results align with current theory and suggest possible ways that social context may affect music-induced movement and provide some direction for future study of the topic.

Despite the ubiquity of posters, t-shirts, and internet memes urging us to “dance like no one is watching,” dance often takes place in social contexts such as clubs, concerts, or parties, where being seen by others is almost inevitable. Being seen may even be part of the point of dance; recent studies have suggested that synchronizing with others to music can promote social bonding (Quiroga Murcia, Kreutz, Clift, & Bongard, 2010; Rabinowitch et al., 2015; Vicary, Sperling, Von Zimmermann, Richardson, & Orgs, 2017) and even increase pain tolerance (Tarr, Launay, & Dunbar, 2016), supporting evolutionary theories that music and dance developed to support social cooperation necessary for human survival, in contexts such as group chorusing or sexual selection (Hodges, 2009; Huron, 2001; Phillips-Silver, Aktipis, & Bryant, 2010). Factors such as personality, felt and perceived emotion, music preference, and even sexual attractiveness have been related to qualities of free dance movements (Burger, 2013; Burger, Saarikallio, Luck, Thompson, & Toiviainen, 2013; Luck, Saarikallio, Burger, Thompson, & Toiviainen, 2010; Saarikallio, Luck, Burger, Thompson, & Toiviainen, 2013), all of which could possibly be decoded by observers, allowing dance to function as a kind of social signaling.

Previous studies of dance have tended to focus on individual participants, and have shown free dance movement to be reflective of individual qualities such as personality, or felt or perceived emotion (e.g., Burger, 2013; Carlson, Burger, London, Thompson, & Toiviainen, 2016; van Dyck, Maes, Hargreaves, Lesaffre, & Leman, 2013; Luck et al., 2010). A few recent studies suggest that social context plays an important role in such music-induced movement. Solberg and Jensenius (2017) used a naturalistic electronic dance music (EDM) setting to show that the presence of and increased movement with other dancers increased subjective enjoyment of the dance experience. De Bruyn, Leman, and Moelants (2008) found that, in nine-year-old children, movement intensity as measured by Wii-remotes was increased in a social compared with individual setting, while van Dyck, Moelants, et al. (2013) found evidence for group entrainment in that there were greater correlations between dancers’ tempos and activity within, rather than between, groups. Using choreographed movement exercises, von Zimmermann, Vicary, Sperling, Orgs, and Richardson (2018) found that movement similarity between dyads in a group predicted group affiliation better than synchronization of the full group. However, while these studies provide valuable information about interpersonal coordination and the behaviors of a group, they are unable to provide information about how an individual’s improvised, spontaneous dance movements, such as have been shown to reflect personality (Luck et al., 2010), might be influenced by the presence of another dancer in a naturalistic setting. Do we, in fact, dance differently when someone is watching, and, moreover, when we are watching someone else dance?

There is reason to believe that we do. Although there is a wealth of evidence that personality is generally stable across condition and time and indeed may be biologically based (Digman, 1990; Jang, Livesley, & Vernon, 1996; Letzring & Adamcik, 2015; Schaefer, Heinze, & Rotte, 2012; Soldz & Vaillant, 1999), there is similar evidence from social psychology research that social context is a major determinant of behavior (Holtgraves, 2011; Malloy, Barcelos, Arruda, DeRosa, & Fonseca, 2005; Webster & Ward, 2011). It is thus imperative to consider both an individual’s own tendencies and the influence of other individuals (and their own natural tendencies) when exploring social behavior (Griffin & Gonzalez, 2003; Wagerman & Funder, 2009). The widely used five-factor model (FFM) of personality provides a useful measure of behavioral tendency through the measurement of five bipolar traits: Openness to Experience, Conscientiousness, Extraversion, Agreeableness, and Neuroticism. Of these, Agreeableness and Extraversion are considered to be primarily interpersonal and therefore most relevant to social functioning, while Openness to Experience, Neuroticism and Conscientiousness are primarily intrapsychic (Ansell & Pincus, 2004). Agreeableness, which has also been labeled “likeability” and “friendliness,” is characterized by tact, kindness, warmth, conformity, and compliance (Graziano & Tobin, 2002) and has been linked to pro-social behavior (Graziano, Habashi, Sheese, & Tobin, 2007; Jensen-Campbell et al., 2002). Extraversion is characterized by positive affect, interest in social engagement and sensation-seeking (Ashton, Lee, & Paunonen, 2002; Digman, 1990; Gray, 1970) and has been related to peer acceptance, goal-oriented behavior, and modest advantages in decoding nonverbal behavior (Berry & Hansen, 2000; Jensen-Campbell et al., 2002; McCabe & Fleeson, 2012).
Across cultures, females report higher levels of both Extraversion and Agreeableness than males (Costa, Terracciano, & McCrae, 2001; Schmitt, Realo, Voracek, & Allik, 2008). There is evidence that high levels of both Extraversion and Agreeableness provide advantages in social interactions, although this can depend on the particular combination of personalities in a given dyad (Berry & Hansen, 2000; Cuperman & Ickes, 2009; Isbister & Nass, 2000).

Dyadic interactions may also be influenced by empathy. Empathy may be broadly defined as a complex psychological process, including both cognitive and affective components, which allows for the understanding of others’ emotions and perceptions (Decety & Jackson, 2004; Harari, Shamay-Tsoory, Ravid, & Levkovitz, 2010; Shamay-Tsoory, Tomer, Goldsher, Berger, & Aharon-Peretz, 2004; Zahavi, 2010). As with Agreeableness and Extraversion, males tend to report lower levels of trait empathy than females, while neuroimaging has shown differences between males and females in brain networks recruited for empathy (Schulte-Rüther, Markowitsch, Shah, Fink, & Piefke, 2008). Empathy is particularly important to study in the context of music and dance, as deficiencies and abnormalities in empathic function have been associated with various serious mental disorders, such as autism and schizophrenia, that are currently being treated in clinical-music and dance-therapeutic settings (Koch, Mehl, Sobanski, Sieber, & Fuchs, 2015; LaGasse, 2017; Lee, Jang, Lee, & Hwang, 2015). Promisingly, some studies have suggested a relationship between engaging in rhythmic entrainment, such as joint drumming activities or being swung in synchrony with a partner, and increased empathy or pro-social behavior (Kirschner & Tomasello, 2009; Rabinowitch et al., 2015; Rabinowitch, Cross, & Burnard, 2013; Rabinowitch & Meltzoff, 2017). Regarding free dance movement, Bamford and Davidson (2017) found that trait empathy was associated with better adjustment to abrupt tempo changes, while Carlson, Burger, London, Thompson, and Toiviainen (2016) found no relationship between empathy and adjustment to small tempo differences across stimuli. Both studies included individual dancing only; it may be that the effect of empathy on dance and other music-induced movement is clearer in an overtly social context.
In the area of music performance, Novembre, Ticini, Schütz-Bosbach, and Keller (2014) found that more empathic participants appeared to rely more on motor simulations when adjusting their piano playing to a partner, supporting the importance of a social context in studying empathy in a dyadic movement context. Taken together, the previous work discussed above suggests that examining how individuals respond to a social setting in the context of free dance, taking both individual and social factors into account, is likely to provide new insights into music-induced movement in general.

Using movement variables gathered simultaneously from two dyad members to investigate the influence of one dancer on the other (and vice versa) raises unique analytical complications that do not come up in individual dance research. Parametric statistical tests assume independence of observations (Field, 2009). Violations of this assumption can have serious consequences for tests of significance, with marked increases in the likelihood of both Type I and Type II errors (Field, 2009; Kenny & La Voie, 1984; Nimon, 2012; Wiedermann & von Eye, 2013). In dyadic data, non-independence may take the form of partner effects (the influence of one participant on another), mutual influence between partners, or common fate, where both partners are exposed to the same conditions, resulting in similar responses (Kenny, 1996). For example, Dancer B might wave her hands while dancing and thereby encourage Dancer A to dance more vigorously than he would otherwise; this would be a partner effect. Dancer A and Dancer B may try to outdo each other in who can jump up and down the most, so the more Dancer A jumps the more Dancer B jumps and vice versa; this is an example of mutual influence. Finally, Dancer A and Dancer B may both be pretending to be chickens because they are both listening to the “Chicken Dance”; this is common fate. Untangling these influences on behavior is one of the main tasks of dyadic data analysis.

One approach to this problem has historically been the use of confederates whose behavior is constrained (Griffin & Gonzalez, 2003). Although this simplifies matters statistically, multiple researchers have pointed out that to capture truly naturalistic behavior, both actors should be free to respond to the other as they wish (Cuperman & Ickes, 2009). Various statistical models have been developed to cope with, explore, and understand such non-independence, such as the Actor–Partner Interdependence Model (APIM) (Kenny et al., 2006), which considers the causal contribution of members of unique dyads while correcting for non-independence, or the latent dyadic model, which assesses shared variance between members (Griffin & Gonzalez, 2003). However, considering a person within the context of only one dyad, as opposed to multiple dyads, entails some notable limitations for generalization (Back & Kenny, 2010; Malloy et al., 2005). Imagine, for example, that the reason Dancer A dances more vigorously when Dancer B waves her hands is that Dancer A wants to please Dancer B because they are friends. We might erroneously conclude that hand-waving is related to vigorous dancing, whereas Dancer A may dance less vigorously if Dancer C waves her hands, because he does not particularly like Dancer C. These are examples of relationship effects, knowledge of which is crucial in understanding dyadic effects, but which, as can be seen in the example, can be mathematically determined only if each participant has more than one partner. The Social Relations Model (SRM) provides a structure for determining general knowledge about dyadic phenomena by comparing an individual’s behavior with multiple partners (Back & Kenny, 2010; Gill & Swartz, 2001; Kenny et al., 2006).
The aim of the SRM is to separate causality of a given behavior as it takes place in a dyad (vigorous dancing, for example) into actor effects (the degree to which an individual tends to dance very vigorously), partner effects (the degree to which an individual tends to cause their partners to dance vigorously) and relationship effects (the degree to which an individual and a given partner have unique effects on the vigor of each other’s dancing when compared with their behaviors with other partners). Across a sample, these are expressed in terms of variances. If we wished to investigate whether the presence of a partner influences hand-waving in dance and found a very large actor variance and very small partner and relationship variances, we might conclude that the amount of hand-waving in dance depends chiefly on the person doing the hand-waving, regardless of their partner. On the other hand, if we found a very large partner variance, we could conclude that the amount of hand-waving would vary depending on who a person’s partner is for a given interaction. Similarly, a large relationship variance would indicate that the amount of hand-waving is determined by unique characteristics of a given dyad, such as friendship, liking, attraction, personality, and so on (Back & Kenny, 2010; Kenny et al., 2006; Kenny, Mannetti, Pierro, Livi, & Kashy, 2002; Kenny & Cook, 1999).
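As a rough numerical illustration of this decomposition, the following Python sketch splits the scores of a single round-robin group into actor, partner, and relationship effects. It assumes the standard round-robin estimators described in the SRM literature (e.g., Kenny et al., 2006); the function name `srm_effects` and all example scores are hypothetical and are not taken from the study. Estimating the variances across groups is not shown.

```python
def srm_effects(scores):
    """Decompose one round-robin group into SRM effects.

    scores[i][j] is the score of actor i when paired with partner j.
    Diagonal entries (i == j) are ignored, since nobody is their own partner.
    """
    n = len(scores)
    if n < 4:
        raise ValueError("round-robin SRM estimates need at least four people")

    # Row mean: how person i behaved on average across their partners.
    row_means = [sum(scores[i][j] for j in range(n) if j != i) / (n - 1)
                 for i in range(n)]
    # Column mean: how partners behaved on average when paired with person i.
    col_means = [sum(scores[j][i] for j in range(n) if j != i) / (n - 1)
                 for i in range(n)]
    grand_mean = sum(row_means) / n

    # Weights correct for the missing diagonal: a raw row mean also carries
    # a trace of that person's own partner effect, and vice versa.
    w_own = (n - 1) ** 2 / (n * (n - 2))
    w_cross = (n - 1) / (n * (n - 2))
    w_grand = (n - 1) / (n - 2)

    actor = [w_own * row_means[i] + w_cross * col_means[i] - w_grand * grand_mean
             for i in range(n)]
    partner = [w_own * col_means[i] + w_cross * row_means[i] - w_grand * grand_mean
               for i in range(n)]
    # Relationship effect: what remains after removing mean, actor, and partner.
    relationship = [[None if i == j
                     else scores[i][j] - grand_mean - actor[i] - partner[j]
                     for j in range(n)] for i in range(n)]
    return grand_mean, actor, partner, relationship


# Hypothetical scores built as mean + actor tendency + partner tendency only.
true_actor = [1.0, -1.0, 0.5, -0.5]
true_partner = [0.5, 0.0, -0.5, 0.0]
scores = [[None if i == j else 5.0 + true_actor[i] + true_partner[j]
           for j in range(4)] for i in range(4)]
grand_mean, actor, partner, relationship = srm_effects(scores)
```

Because these example scores contain no dyad-specific component, the estimated relationship effects are numerically zero, and the actor and partner estimates recover the values used to build the matrix.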

The aim of the current study is to explore the relative influence of actor, partner, and relationship effects using full-body motion capture in a naturalistic, free dance movement context using the SRM, taking into account individual differences of personality and empathy. The study poses two research questions: Does the presence of a partner moving to the same music affect music-induced movements of the individual? Do characteristics of an individual, specifically Agreeableness, Extraversion, and trait empathy, relate to responsiveness to a partner in a dance setting?

While previous work has been limited in its ability to extract movement from more than one body part per dancer (e.g., De Bruyn, Leman, & Moelants, 2008; Solberg & Jensenius, 2017), the current study employs full-body motion capture, making it possible here to consider where specifically in the body social behavior might manifest in dance. While the whole body may be considered globally in dance (Carlson et al., 2016), in a social context we may also expect the hands to be important, as hand gestures are particularly associated with communication (Bernardis & Gentilucci, 2006; Goldin-Meadow, 2006; Krauss, Chen, & Chawla, 1996). Head movements have also been shown to be important in communication, particularly in non-verbally communicating rapport (Beck, Daughtridge, & Sloane, 2002; Helweg-Larsen, Cunningham, Carrico, & Pergram, 2004; Tickle-Degnen & Rosenthal, 1990). Both may be implicated in musical contexts as well (Davidson, 2001; Luck & Thompson, 2010; Thompson & Luck, 2012). An eye-tracking study found that observers focused relatively little on dancers’ feet and core body, focusing more on the head. Therefore, in addition to the body as a whole, head and hand movement are considered separately in the current study.

Kenny and Malloy (1988) reviewed SRM literature and found that across samples, against their expectations, partner effects (the degree to which an individual elicits consistent responses from all of their partners) were weak in affective and cognitive domains and virtually non-existent in behavioral domains, except for being slightly more apparent in nonverbal communication. It is therefore reasonable to assume there may be a similar pattern in the current context. Kenny and Malloy suggest that this may be due to individual differences as well as experimental context. Given these observations, as well as previous findings and theoretical considerations regarding personality and empathy, we make the following predictions:

Methods

Stimuli

Since music preference and genre have previously been related to qualities of music-induced movement (e.g., Burger, 2013), a stimulus set including multiple genres was considered desirable, both to allow for non-independence related to common fate, and to ensure that dyadic effects could not be attributed to the characteristics of a single genre. As genre in music is notably difficult to define (Pachet & Cazaly, 2000), a data-driven approach was devised to avoid researcher bias in stimulus selection, using the methods described by Carlson, Saari, Burger, and Toiviainen (2017). A total of 2,407 tracks were collected from online music service Last.fm from those tagged by users as “danceable,” “dancing,” “head banging,” or “headbanging,” and which had been tagged with only one genre label (e.g., “Country” or “Jazz”). Tracks were retained only if they had a non-zero danceability score according to Echo Nest (the.echonest.com, an online music and data intelligence service where music categorization is determined by computational analysis of a given track’s acoustic features, including beat strength, tempo, and loudness), and only if the track’s tempo fell between 118 and 132 beats per minute (BPM). Four randomly selected excerpts from each genre were checked for tempo and stylistic consistency by the researchers, leaving 48 stimuli from 12 genres: Blues, Country, Dance, Funk, Jazz, Metal, Oldies, Pop, Rap, Reggae, Rock, and Soul. For a complete description of this stimulus-selection methodology, see Carlson et al. (2017). Participants (n = 210) were recruited using university student and departmental email lists and social media to rate their preference for these 48 excerpts in an online listening experiment using Survey Gizmo (www.surveygizmo.eu). Participants were entered into a lottery to win one of ten movie ticket vouchers, and were given feedback about their music preferences and personality upon completing the survey.
Participants who completed the survey were also given the chance to sign up for the motion capture study.

For the motion capture study, the number of genres was reduced from 12 to eight, and the number of stimuli per genre from four to two, in order to keep the experiment sufficiently short and limit the effects of fatigue. From each genre, two stimuli with the highest variability in preference ratings were chosen. This resulted in a final set of 16 from the following eight genres: Blues, Country, Dance, Jazz, Metal, Pop, Rap, and Reggae. Funk, Oldies, Rock, and Soul had the least variability in preference ratings and were therefore eliminated. Stimuli were 35 seconds in duration, including a 2.5-second fade-in and 2.5-second fade-out, as well as a sinusoidal beep at the start of each excerpt to mark the beginning for later synchronization with the motion capture data.

Participants

A total of 73 participants (54 females) completed the motion capture experiment. However, due to several cancelations and “no-shows,” only 52 (38 female) completed the experiment in groups of four. Since the SRM requires a minimum of four participants (Kenny, Kashy, & Cook, 2006), only data from these groups were included in the current analysis. Thus, each group consisted of four participants, resulting in six dyads per group. Participants ranged in age from 19 to 40 years (M = 25.74, SD = 4.72). Thirty held bachelor’s degrees while 16 held master’s degrees. Thirty-three participants reported having received some formal musical training; seven reported 1–3 years, 10 reported 7–10 years, while 16 reported 10 or more years of training. Seventeen participants reported having received some formal dance training; 10 reported 1–3 years, five reported 4–6 years, while two reported 7–10 years. Participants were of 24 different nationalities, with Finland, the United States, and Vietnam being the most represented. Participants received two movie ticket vouchers each for attending the experiment. All participants spoke and received instructions in English.

Participant grouping

Previous work has shown small but fairly consistent relationships between personality and music preference (e.g., Greenberg et al., 2016; Rawlings & Ciancarelli, 1997; Rentfrow, Goldberg, & Levitin, 2011). While it is not known how music preference affects music-induced movement in a dyadic setting, it is known that people make social judgements based on the music preferences of others (Rentfrow & Gosling, 2006; Rentfrow & Gosling, 2007; Rentfrow, McDonald, & Oldmeadow, 2009; Schäfer et al., 2015). Therefore, groups with evenly varied musical preferences were sought, such that effects were not confounded by unusual similarity or unusual difference in preference between participants in a given group.

To achieve this, principal component analysis (PCA) was performed on the participants’ preference ratings of the 16 stimuli. The first component accounted for 22.6% of variance and included high negative loadings for both Metal genre excerpts and moderately high positive loadings for Reggae, Rap, and Pop excerpts, while loadings for other excerpts were small, suggesting a preference for upbeat, contemporary, danceable music and a dislike for Metal. The second component accounted for 22.1% of variance and included high positive loadings for both Jazz excerpts and moderately high positive loadings for Metal, suggesting a preference for one may relate to a dislike of the other. Scores for these first two components were subjected to a median-split, and participants were subsequently divided into four categories: high in both components, low in both components, or high in one and low in the other, respectively. Participants were grouped such that there was one member of each category in each group, limiting the possibility that movement effects could be attributed to unexpected convergence or lack of convergence in the dancers’ music preferences. This approach allowed for the use of multiple genres while still allowing for participants to have varied music preferences.
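The grouping step can be sketched as follows. This is a minimal Python illustration that assumes each participant already has scores on the first two components. The participant IDs, score values, helper names, and the rule that a score exactly at the median counts as "low" are all assumptions made for the example, not details reported for the study.

```python
from statistics import median

# The four preference categories produced by two median splits.
CATEGORIES = [("high", "high"), ("high", "low"), ("low", "high"), ("low", "low")]


def preference_category(pc1, pc2, med1, med2):
    """Label a participant as high/low on each component (ties count as low)."""
    return ("high" if pc1 > med1 else "low", "high" if pc2 > med2 else "low")


def form_groups(component_scores):
    """Build groups of four containing one member of each category.

    component_scores maps a participant ID to a (pc1, pc2) tuple.
    Participants left over once a category runs out are not grouped.
    """
    med1 = median(s[0] for s in component_scores.values())
    med2 = median(s[1] for s in component_scores.values())
    pools = {category: [] for category in CATEGORIES}
    for pid, (pc1, pc2) in sorted(component_scores.items()):
        pools[preference_category(pc1, pc2, med1, med2)].append(pid)
    n_groups = min(len(pools[category]) for category in CATEGORIES)
    return [[pools[category][g] for category in CATEGORIES]
            for g in range(n_groups)]


# Hypothetical component scores for eight participants.
example_scores = {
    "p1": (1.0, 1.0), "p2": (1.2, -1.0), "p3": (-1.0, 1.5), "p4": (-1.1, -0.8),
    "p5": (0.9, 0.7), "p6": (0.8, -0.5), "p7": (-0.7, 0.6), "p8": (-0.9, -1.2),
}
groups = form_groups(example_scores)
```

With these example values, each resulting group contains exactly one participant from each of the four categories.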

Although an effort was made to prevent participants who knew each other well from being in the same group (for example, not granting requests from participants to be grouped with friends), a minority of participants (n ≈ 12) were acquainted before the experiment.

Personality measures

FFM personality dimensions were measured using the Big Five Inventory (BFI), a 44-item self-report measure in which participants rate their agreement on a seven-point Likert scale with statements such as “I see myself as someone who is talkative” or “…tends to be lazy” (Pervin & John, 1999). Only the Agreeableness (A) and Extraversion (E) scales were used in analysis, as these are considered interpersonal traits, most relevant for social functioning (Ansell & Pincus, 2004). In addition to personality, trait empathy was also measured. The Empathy Quotient (EQ), developed by Baron-Cohen and Wheelwright (2004), measures trait empathy as a whole, including both cognitive and affective aspects. For the current study, trait empathizing was measured using the short-form (22-item) version of the EQ, developed and validated by Wakabayashi et al. (2006).

Apparatus

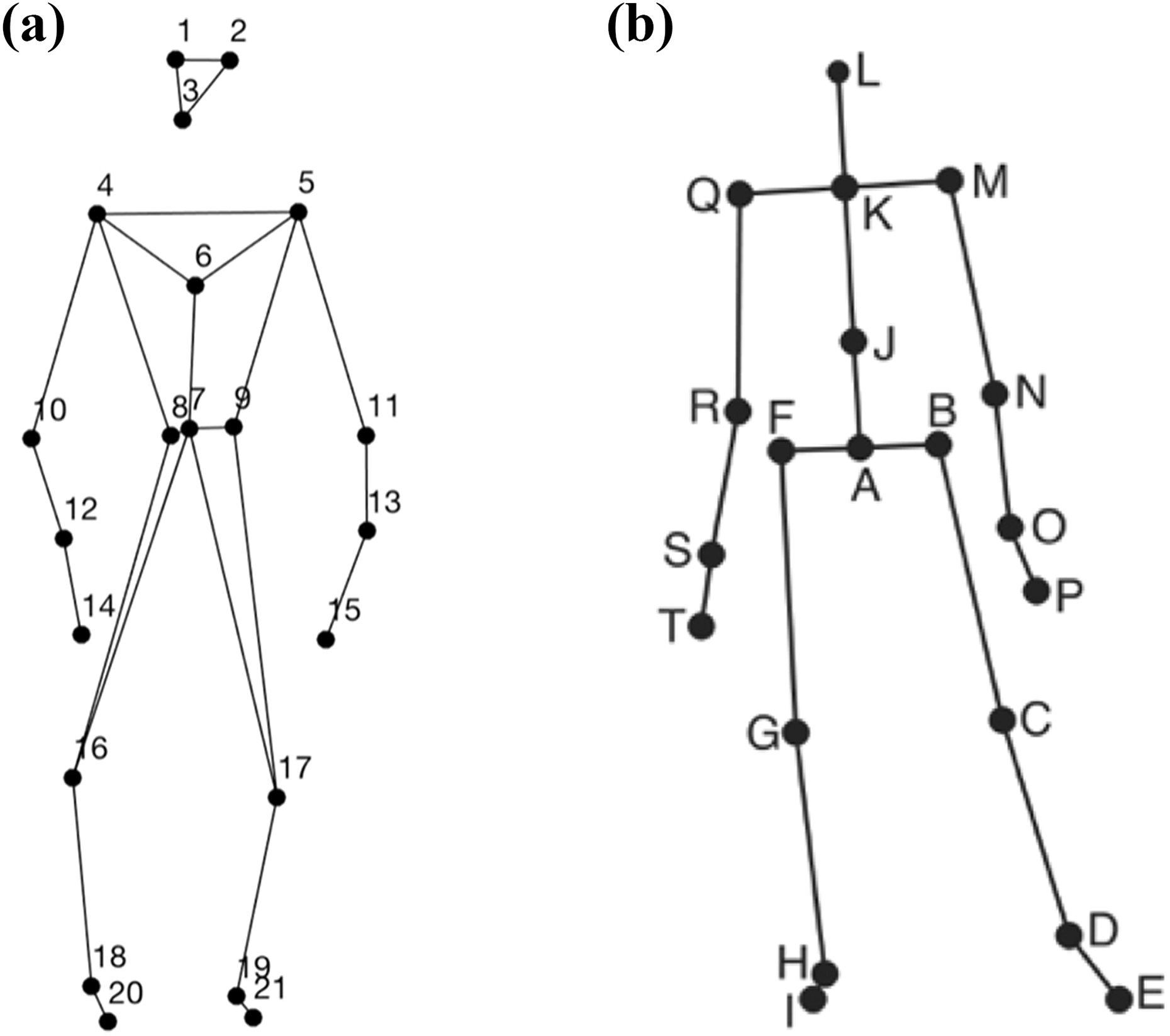

The SRM dictates that to calculate actor and partner effects each individual must act with a minimum of three different partners. It was therefore necessary for participants to attend the experiment in groups of four, allowing for the creation of six unique dyads, and to capture not only multiple dancers but multiple dyads at once. Participants’ movements were recorded using a 12-camera optical motion capture system (Qualisys Oqus 5+, Göteborg, Sweden) that tracked, at a frame rate of 120 Hz, the three-dimensional positions of 21 reflective markers attached to each participant. Eight cameras were mounted on the ceiling, and four were placed near the wall of the capture space (see Figure 1). The locations of the numbered markers were as follows (where L = left, R = right, F = front, and B = back): 1. LF head; 2. RF head; 3. B head; 4. L shoulder; 5. R shoulder; 6. sternum; 7. stomach; 8. LB hip; 9. RB hip; 10. L elbow; 11. R elbow; 12. L wrist; 13. R wrist; 14. L middle finger; 15. R middle finger; 16. L knee; 17. R knee; 18. L ankle; 19. R ankle; 20. L toe; 21. R toe. These can be seen in Figure 1(a). As multiple dancers in a motion capture space may also be difficult to differentiate once captured (Haugen & Nymoen, 2016), each participant was given either one, two, three or four extra markers attached to their leg. These markers were not used in data analysis. The musical stimuli were played in a random order in each condition via four Genelec 8030A loudspeakers and a sub-woofer using a Max patch (Cycling ‘74, San Francisco, CA) running on an Apple Mac computer. The direct (line-in) audio signal of the playback and the synchronization pulse transmitted by the Qualisys cameras when recording were recorded using ProTools software.

Marker and joint locations: (a) anterior view of the marker locations as a stick figure illustration; (b) anterior view of the locations of the secondary markers/joints used in the analysis.

To keep the experiment sufficiently short, it was necessary to capture multiple dancers and multiple dyads at once without their seeing one another. To facilitate this, a wall was installed that divided the visible capture space in half. An additional screen stood between the researchers and the capture space during motion capture to provide the participants with privacy from immediate observation so as to increase their comfort level. To minimize missing data, the capture space visible to the cameras was marked off on the floor using tape, and four of the cameras were set up on tripods on either side of the wall to mitigate marker occlusion (Haugen & Nymoen, 2016). Additionally, Arabic numerals 1 through 4 were marked on the floor to show participants where to stand during dyadic conditions. The capture space set-up can be seen in Figure 2.

Motion capture space and divider wall.

Procedure

For each group of four, participants were labeled A, B, C, or D, and wore a badge displaying their letter to enable easy identification amongst themselves and by the researchers. Participants were told to imagine that they were dancing in a social setting such as a club or party, and that they would hear a wide variety of music. They were asked to listen to the music and move as freely as they desired, but staying within the marked capture space. The aim of these instructions was to create a naturalistic paradigm, such that participants would feel free to behave as they might in a real-world situation. Stimuli were presented in a randomized order. In the first condition, participants moved alone in one half of the capture space. As only two participants could be motion captured at once in this way, this condition was repeated such that two participants completed their “individual” condition while the other two participants left the laboratory and completed personality questionnaires. In the remaining conditions, participants were organized into dyads on either side of the wall, such that all six possible combinations were recorded over three conditions: AB, AC, AD, BC, BD, and CD. This design is referred to as Round Robin in SRM research; see the section on statistical analysis for more detail (Back & Kenny, 2010). Participants were told that they could interact or not interact with their partner, as they felt comfortable, but were asked not to hold hands or switch places in the capture space, to avoid undue difficulty in labeling the data. To limit the effects of fatigue, participants were given 3- to 10-minute breaks between each condition and were offered water, juice, and biscuits as light refreshment. Participants were informed that they were free to ask for a break or to stop the experiment at any time.
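The dyad schedule described above follows from the constraint that each participant can be in only one dyad at a time, so every dyad has exactly one complementary dyad that can be recorded on the other side of the wall. The Python sketch below enumerates the six dyads and pairs them into three conditions. The function name is hypothetical, and the order in which conditions were actually run is not stated in the text, so the ordering here is only illustrative.

```python
from itertools import combinations


def round_robin_schedule(labels):
    """Return all dyads for a group of four and pair them into conditions.

    Each condition holds two dyads that share no participant, so both
    can be recorded simultaneously on opposite sides of the divider.
    """
    if len(labels) != 4:
        raise ValueError("this sketch only handles groups of exactly four")
    dyads = list(combinations(labels, 2))
    remaining = list(dyads)
    conditions = []
    while remaining:
        first = remaining.pop(0)
        # The complementary dyad is the one with no member in common.
        other = next(d for d in remaining if not set(d) & set(first))
        remaining.remove(other)
        conditions.append((first, other))
    return dyads, conditions


dyads, conditions = round_robin_schedule(["A", "B", "C", "D"])
for number, (left, right) in enumerate(conditions, start=1):
    print(f"Condition {number}: {''.join(left)} and {''.join(right)}")
```

For four participants this yields the six dyads AB, AC, AD, BC, BD, and CD, recorded in three conditions of two simultaneous dyads each.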

After all conditions were complete, participants filled out a form providing demographic information, were debriefed about the experiment, and given the opportunity to ask questions and share feedback. The experiment lasted approximately two hours.

Movement data processing

Using the Motion Capture (MoCap) Toolbox (Burger & Toiviainen, 2013) in MATLAB [Version R2016b], movement data of the 21 markers were first trimmed to match the exact duration of the musical excerpts. Gaps in the data were linearly filled. Following this, the data were transformed into a set of 20 secondary markers—subsequently referred to as joints. The locations of these 20 joints are depicted in Figure 1(b). The locations of joints B, C, D, E, F, G, H, I, M, N, O, P, Q, R, S, and T are, in each case, identical to the locations of one of the original markers, while the locations of the remaining joints were obtained by averaging the locations of two or more markers: Joint A is the midpoint of the two back hip markers, joint J is the midpoint of the shoulder and hip markers, joint K is the midpoint of shoulder markers, and joint L is the midpoint of the three head markers.
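The derived joints can be illustrated with a short Python sketch that averages marker positions within a single frame. This is not the MoCap Toolbox implementation. The marker coordinates are hypothetical, millimetre units are assumed, and treating joint J as the average of both shoulder and both hip markers is an assumption about how "the midpoint of the shoulder and hip markers" was computed.

```python
def centroid(points):
    """Average a list of (x, y, z) positions from one frame."""
    count = len(points)
    return tuple(sum(p[axis] for p in points) / count for axis in range(3))


# Hypothetical marker positions for one frame (assumed millimetres).
frame = {
    "LF head": (-80.0, 100.0, 1700.0),
    "RF head": (80.0, 100.0, 1700.0),
    "B head": (0.0, -100.0, 1720.0),
    "L shoulder": (-200.0, 0.0, 1450.0),
    "R shoulder": (200.0, 0.0, 1450.0),
    "LB hip": (-150.0, -50.0, 1000.0),
    "RB hip": (150.0, -50.0, 1000.0),
}

# Joint A: midpoint of the two back hip markers.
joint_a = centroid([frame["LB hip"], frame["RB hip"]])
# Joint K: midpoint of the shoulder markers.
joint_k = centroid([frame["L shoulder"], frame["R shoulder"]])
# Joint L: midpoint (centroid) of the three head markers.
joint_l = centroid([frame["LF head"], frame["RF head"], frame["B head"]])
# Joint J: assumed here to average all four shoulder and hip markers.
joint_j = centroid([frame["L shoulder"], frame["R shoulder"],
                    frame["LB hip"], frame["RB hip"]])
```

Applying the same averaging to every frame gives a trajectory for each derived joint.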

Acceleration data were chosen to assess participants’ overall amount of movement, and to reflect participants’ global complex movement in response to stimuli. Acceleration has been identified as a key movement feature in allowing musicians to synchronize to conductors’ gestures (Luck & Toiviainen, 2006), and has previously been used to give broad information about overall amount of movement within whole performances (Carlson et al., 2016). This approach allows for wide variety to exist between dancers’ free, improvised movements, as individuals may choose to embody the music in many different ways while still responding to and interacting with each other. Using overall acceleration also mitigates potential differences in dancers’ movements related to culture, while still providing broad information on essential aspects of their movements. Acceleration in three dimensions of the joints was calculated using numerical differentiation and a Butterworth smoothing filter (second order zero-phase digital filter). For each participant, the instantaneous magnitudes of acceleration were estimated for each joint and stimulus, and subsequently temporally averaged over each stimulus.
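The acceleration measure can be sketched in a few lines of Python. This simplified illustration estimates acceleration with a second-order central difference at the 120 Hz capture rate, takes the magnitude of the three-dimensional acceleration at each frame, and averages over time. The function name and example trajectory are hypothetical, the choice of central differences is an assumption about the differentiation scheme, and the Butterworth smoothing step described above is deliberately omitted, so values from real, noisy data would differ from those of the actual pipeline.

```python
import math


def mean_acceleration_magnitude(positions, fs=120.0):
    """Time-averaged magnitude of acceleration for one joint.

    positions is a list of (x, y, z) samples taken at fs frames per second.
    Acceleration is estimated with a second-order central difference;
    no smoothing filter is applied in this sketch.
    """
    if len(positions) < 3:
        raise ValueError("need at least three frames to estimate acceleration")
    dt = 1.0 / fs
    magnitudes = []
    for prev, cur, nxt in zip(positions, positions[1:], positions[2:]):
        acc = [(p - 2.0 * c + n) / dt ** 2 for p, c, n in zip(prev, cur, nxt)]
        magnitudes.append(math.sqrt(sum(a * a for a in acc)))
    return sum(magnitudes) / len(magnitudes)


# Hypothetical trajectory: constant acceleration of 2.0 units/s^2 along x.
trajectory = [(0.5 * 2.0 * (k / 120.0) ** 2, 0.0, 0.0) for k in range(50)]
value = mean_acceleration_magnitude(trajectory)
```

For a constant-acceleration trajectory such as this one, the central difference recovers the acceleration up to floating-point error, which provides a simple sanity check before applying the function to real data.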

Previous research has shown that rhythmic, timbral, and structural features, as well as perceived emotional content of music, can affect music-induced movement (Burger, Saarikallio, et al., 2013; Burger, Thompson, Luck, Saarikallio, & Toiviainen, 2013; Luck, Saarikallio, Burger, Thompson, & Toiviainen, 2014; Solberg & Jensenius, 2017). Since such features may vary significantly between genres (Lidy & Rauber, 2005; Pachet & Cazaly, 2000; Sordo, Celma, Blech, & Guaus, 2008), acceleration was averaged across genre rather than condition (condition in this case refers to all stimuli danced to with a given partner) to avoid the potential confound. Thus, for each participant, for each genre in each condition, mean acceleration across all 20 joints was obtained, as well as mean acceleration for the head and across the hands. These variables are hereafter referred to as Mean movement, Head movement, and Hand movement, respectively. These variables were analyzed using the SRM.

Statistical analysis

SRM analysis was implemented in MATLAB using a Round Robin design. An example of the Round Robin design can be seen in Table 1.

Example of round robin design.

In the hypothetical data presented in Table 1, participant A’s score when dancing with participant B is 4, while participant B’s score while dancing with participant A is 3. Participant A’s actor effect can be determined via scores in row A, as each of these represents participant A’s score in a given condition (i.e., with a given partner). Participant A’s partner effect can be determined via the scores in column A, as these represent the scores of each partner when they were acting with participant A. Were participant A to score a six regardless of who their partner was, this would indicate a strong actor effect. Similarly, were each of participant A’s partners to score a six when acting with participant A, this would indicate a strong partner effect; that is, participant A would have a similar effect on all of their partners (see Kenny et al., 2006, pp. 194–198 for a thorough discussion of the estimation of SRM effects). Using the SRM, the score of a given participant dancing with a given partner, for example A dancing with B, is modeled using the following equation:

$$X_{AB} = \mu + \alpha_A + \beta_B + \gamma_{AB} + \varepsilon$$

where $\mu$ is the mean of all scores, $\alpha_A$ is participant A’s actor effect (i.e., A’s level of consistency across interactions), $\beta_B$ is participant B’s partner effect (i.e., the consistency of responses of B’s partners to B), $\gamma_{AB}$ is the unique response of A to B after controlling for A’s actor effect and B’s partner effect, and $\varepsilon$ is random error. To take an example from the context of dyadic dancing, the amount that Dancer A waves his hands while dancing with Dancer B is estimated as the group mean amount of hand waving, plus Dancer A’s tendency to wave his hands, plus Dancer B’s tendency to elicit hand-waving from her dance partners, plus Dancer A’s unique response to Dancer B, plus random error. Participant A’s actor effect can be estimated as:

$$\hat{\alpha}_A = \frac{(n-1)^2}{n(n-2)} M_{aA} + \frac{n-1}{n(n-2)} M_{pA} - \frac{n-1}{n-2} M$$

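where $n$ is the group size, $M_{aA}$ is the mean of the participant’s actor scores (row A), $M_{pA}$ is the mean of their partners’ scores (column A), and $M$ is the mean of all observations. Similarly, participant A’s partner effect can be estimated as:

$$\hat{\beta}_A = \frac{(n-1)^2}{n(n-2)} M_{pA} + \frac{n-1}{n(n-2)} M_{aA} - \frac{n-1}{n-2} M$$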

The relationship effect of Dancer A with Dancer B, or the degree to which Dancer A’s response to Dancer B is unique given A’s actor effect and B’s partner effect, is estimated using the above terms as follows:

$$\hat{\gamma}_{AB} = X_{AB} - \hat{\alpha}_A - \hat{\beta}_B - M$$

Actor, partner, and relationship variances are used to indicate the degree to which effects vary across individuals. In our example, the actor variance would indicate the degree to which some participants tend to wave their hands a lot while dancing and others very little. Variances are calculated using the mean squares of scores within and between dyads. The mean of variances is taken across groups. Further details of the estimation of the SRM can be found in Kenny et al. (2006), as well as Appendix B of Kenny (1994).
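The effect estimates described in this section can be sketched for a single round-robin group as follows. This is an illustrative sketch only, not the MATLAB implementation used in the study: the function name and the score matrix are hypothetical (only A's score of 4 with B and B's score of 3 with A come from the text), and the weights follow the standard round-robin estimators discussed by Kenny et al. (2006). Variance estimation is not covered.

```python
def srm_effects(scores):
    """Actor, partner, and relationship effect estimates for one group.

    scores[i][j] is the score of actor i when paired with partner j.
    Diagonal entries are never read, so they may be None.
    """
    n = len(scores)
    if n < 3:
        raise ValueError("the estimators divide by n - 2, so n must exceed 2")
    # Row means (actor scores) and column means (partners' scores).
    row = [sum(scores[i][j] for j in range(n) if j != i) / (n - 1) for i in range(n)]
    col = [sum(scores[j][i] for j in range(n) if j != i) / (n - 1) for i in range(n)]
    grand = sum(row) / n  # mean of all observed scores
    w_own = (n - 1) ** 2 / (n * (n - 2))
    w_other = (n - 1) / (n * (n - 2))
    w_grand = (n - 1) / (n - 2)
    actor = [w_own * row[i] + w_other * col[i] - w_grand * grand for i in range(n)]
    partner = [w_own * col[i] + w_other * row[i] - w_grand * grand for i in range(n)]
    relationship = [
        [None if i == j else scores[i][j] - actor[i] - partner[j] - grand
         for j in range(n)]
        for i in range(n)
    ]
    return grand, actor, partner, relationship


# Hypothetical four-person group (rows are actors, columns are partners).
example = [
    [None, 4, 5, 6],  # A
    [3, None, 2, 4],  # B
    [5, 6, None, 5],  # C
    [2, 3, 3, None],  # D
]
grand, actor, partner, relationship = srm_effects(example)
```

When scores are generated purely from additive actor and partner effects that each sum to zero, these estimators recover the effects exactly and all relationship effects are zero, which is a convenient sanity check.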

Results

To assess overall differences between individual and dyadic conditions, and to check for significant differences between musical genres, a two-way repeated-measures ANOVA was run for each of the three movement features using condition (individual or the mean of dyadic conditions) and genre as within-subject factors. For genre, Mauchly’s Test of Sphericity indicated that the assumption of sphericity had been violated for Mean movement (χ²(27) = 85.97, p < .001), Head movement (χ²(27) = 208.30, p < .001), and Hand movement (χ²(27) = 148.23, p < .001). Mauchly’s Test of Sphericity was also significant for the interaction of genre and condition for Mean movement (χ²(27) = 64.76, p < .001), Head movement (χ²(27) = 210.06, p < .001), and Hand movement (χ²(27) = 190.55, p < .001). Therefore, a Greenhouse-Geisser correction was used in these cases.

Results showed that there was a significant effect of condition on Hand movement (F(1,51) = 182.46, p < .05), but not on Mean movement or Head movement. There was a significant effect of genre on Mean movement (F(7,243) = 22.91, p < .001), Head movement (F(7,137) = 18.83, p < .001), and Hand movement (F(7,176) = 14.67, p < .001). There was a significant genre × condition interaction for Hand movement only (F(2.8,144) = 3.97, p < .01). Bonferroni-corrected pairwise comparisons revealed a number of significant differences in movement features between genres. Results are summarized in Figure 3, which shows that movement patterns per genre are similar in individual and dyadic conditions, with dyadic conditions showing more movement. The figure indicates that Metal stimuli resulted in the most head movement, while Jazz stimuli resulted in the most movement overall. Results can be viewed in detail in Appendix A.

Figure 3. Estimated marginal means of Mean movement, Head movement, and Hand movement for individual (solid line) and the mean of dyadic (dotted line) conditions, in mm/s².

To learn whether these movement differences between genres affected SRM results, actor and partner variances were calculated for each genre separately. The mean of these was taken to obtain an overall measure of variances. Actor variances (AV) and relationship variances (RV) by genre can be viewed in Table 2. As all partner variances were very close to zero or slightly negative (a statistical anomaly that can occur in the SRM; see Kenny et al., 2006, for an explanation), these variances are considered to be zero and are not reported here.

Table 2. Actor and relationship variances for all genres across the 13 groups.

AV: actor variance; RV: relationship variance.

Actor variances ranged from .19 to .86, while relationship variances ranged from .42 to .89, suggesting that, across dyads, movement features were influenced to different degrees by characteristics of the individual and by characteristics of each given dyad, and that this balance differed somewhat by genre. Partner effects did not vary noticeably within any movement feature, suggesting that individual dancers did not tend to reliably elicit similar movement features from their different partners. These variances are broadly in line with results found by Kenny and Malloy (1988), suggesting that free dance may be similar to other socially interactive behaviors in terms of these relative contributions to variance.

To assess whether genre had a significant effect on SRM estimates, a two-way repeated-measures ANOVA was run for actor, partner, and relationship variances using movement feature and genre as within-subject factors; as variances are calculated across each group, the subject in this case is each group of four participants. Results showed no significant effect of genre or movement feature on variances (p ranged from .21 to .96), suggesting that, although genres elicited different movement features from individual dancers, genre did not significantly affect dancers’ responses to each other on the chosen movement features. Because of this, individual actor and partner effects were averaged across genre for comparison with self-report variables, to reduce the number of overall comparisons made.

To assess the possibility of individual differences affecting overall movement quality (Luck et al., 2010), the mean of movement variables was taken across conditions and correlated with individual personality scores. However, there were no significant relationships between these movement variables and Agreeableness (A), Extraversion (E), or Empathy Quotient (EQ) scores. Next, to assess the degree to which individual differences influenced participants’ responses to their partners, participants’ A, E, and EQ scores were correlated with their actor and partner effects for Mean movement, Head movement, and Hand movement, controlling for group. The results are summarized in Table 3.

Table 3. Correlation of actor and partner effects for all participants (n = 54, df = 51).

* p < .05. AE: actor effects, PE: partner effects. E: extraversion, A: agreeableness, EQ: empathy quotient, df: degrees of freedom.
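A correlation that controls for a single covariate can be sketched as a first-order partial correlation. This sketch rests on an assumption: that group was entered as one covariate, which is consistent with the reported degrees of freedom (df = n − 3, e.g., 54 − 3 = 51) but is not stated explicitly. The function names are illustrative and do not reflect the software actually used.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def partial_correlation(x, y, z):
    """Correlation of x and y controlling for z, with df = n - 3.

    x might be a trait score (e.g., EQ), y an SRM effect (e.g., the actor
    effect for Hand movement), and z the covariate (assumed: group).
    """
    r_xy, r_xz, r_yz = pearson(x, y), pearson(x, z), pearson(y, z)
    r = (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))
    return r, len(x) - 3
```

If the covariate is unrelated to both variables, the partial correlation reduces to the ordinary Pearson correlation; if the covariate fully accounts for their shared variance, it drops to zero.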

Effect sizes were small to moderate. There was a significant negative correlation between empathy and actor effect for Hand movement, suggesting that empathic participants changed their hand movement more across partners. Previous research has suggested differences between males and females in non-verbal behavior, personality, and empathy (Baron-Cohen, 2009; Berry & Hansen, 2000; Hall, 1978; Costa Jr., Terracciano, & McCrae, 2001; Koppensteiner & Grammer, 2011). Independent-samples t-tests were first carried out to assess differences between male and female actor and partner effects. As no significant differences were found in actor and partner effects overall, correlation analyses were also carried out for both sexes separately to assess differences in relationships between personality and SRM variables, controlling for group. Correlation results for males can be seen in Table 4.

Table 4. Correlation of actor and partner effects for males (n = 16, df = 13).

* p < .05. AE: actor effects, PE: partner effects. E: extraversion, A: agreeableness, EQ: empathy quotient, df: degrees of freedom.

Although effect sizes are moderate, they should be treated with caution due to the very small sample size and the multiple comparisons made. For males alone, there were significant negative correlations between empathy and actor effects for both Head movement and Hand movement. There was a significant positive correlation between Agreeableness and actor effect for Hand movement, and no significant relationships between Extraversion and actor or partner effects. The results for female participants can be seen in Table 5.

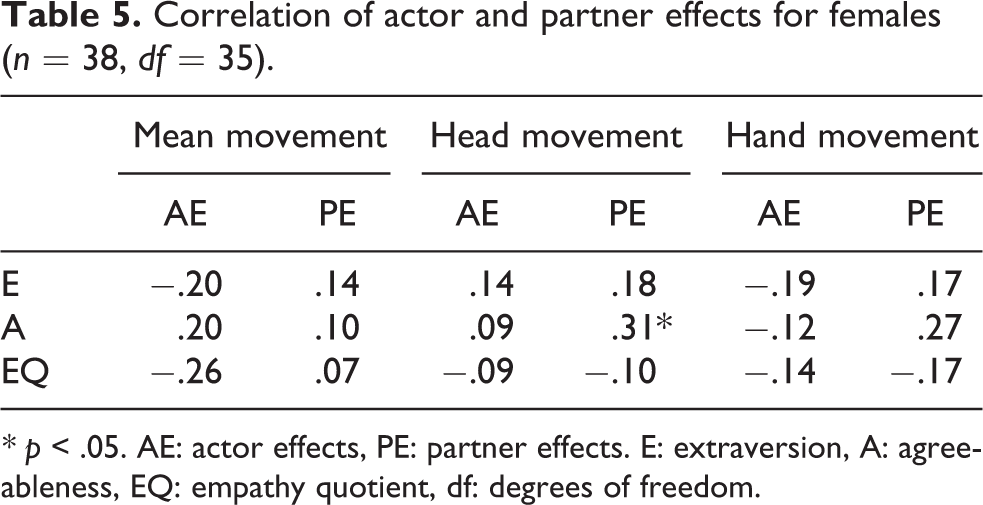

Table 5. Correlation of actor and partner effects for females (n = 38, df = 35).

* p < .05. AE: actor effects, PE: partner effects. E: extraversion, A: agreeableness, EQ: empathy quotient, df: degrees of freedom.

For females alone, there was a significant positive correlation between Agreeableness and partner effect for Head movement. There were no other significant correlations.

Discussion

The current study used the SRM to examine music-induced movement in a dyadic context, taking individual differences and sex into account. Previous research has largely employed individual contexts (e.g., Burger, 2013; Carlson et al., 2016; Luck et al., 2010), stylistically trained dyads (Haugen, 2014) or aggregated group data (Solberg & Jensenius, 2017), but to the authors’ knowledge, this is the first study of music-induced movement to examine dyads that has employed SRM analysis.

Our first hypothesis (H1), that participants would change their movements in response to the presence of a partner, was partly supported by ANOVA results comparing individual and dyadic movement. In general, participants tended to move more during dyadic conditions than individual conditions, although this difference was significant only for Hand movement. This may indicate that the presence of another dancer increased participants’ desire to move, and corroborates evidence that hand movement may be particularly communicative (Goldin-Meadow, 2006; Krauss et al., 1996). Some increased movement could be attributed to order effects, as participants became used to the laboratory setting and MoCap equipment, but it is also possible that increased hand movement in dyadic conditions indicates that the presence of a partner afforded more movement through interaction, or protected against the effects of fatigue and boredom, in line with previous results (De Bruyn et al., 2008).

Overall, results regarding actor, partner, and relationship variances support our second hypothesis (H2), that SRM results would show higher actor and relationship variances and lower partner variances. SRM analysis showed moderately large actor variances for movement variables, suggesting that characteristics and tendencies of the individual to act in a consistent manner, rather than characteristics of their partner or their dyadic relationship, accounted for a significant amount of variance of these features. That the ANOVA results showed significantly different movement profiles between genres, but not significantly different variances, suggests that common fate related to dyads’ exposure to the same stimuli did not overwhelm other aspects of non-independence, specifically actor and relationship effects. Mean actor variances were higher for Mean movement and Hand movement than for Head movement, suggesting that the latter variable was more influenced by the presence of a partner. Actor variance was lowest and relationship variance highest for Head movement, suggesting that head movements may be particularly indicative of how participants respond to and engage with a partner. Though differences between genres were not found to be significant, it can be noted that both Country and Jazz showed relatively low actor variance and relatively high relationship variance across movement features, suggesting that these genres might particularly afford interaction.

There were no variables for which there was a quantifiable amount of partner variance, suggesting that individuals generally did not consistently elicit the same types of responses from all their dance partners. This is in line with previous research into actor and partner variances, which suggests that, overall, individuals do not typically elicit consistent behavioral responses across partners (Kenny & Malloy, 1988; Malloy et al., 2005). In music and movement research, partner effects may be more evident in contexts other than free dance, such as overt synchronization tasks, leader–follower tasks, or trained dance styles, such as tango. Partner effects may also be visible in contexts such as music or dance therapy, wherein the therapist may have the overt intention to affect the movements of the client. The highest mean variances were relationship variances, suggesting that the individual relationship between partners strongly affected dancers’ movements. There are quite a few variables that could contribute to the uniqueness of each dyad, including similarity or differences of personality or empathic abilities, cultural similarities or differences, or sex makeup of the dyad; such variables have also been shown to affect the quality of non-dance social interactions (e.g., Berry & Hansen, 2000; Cuperman & Ickes, 2009; Webster & Ward, 2011). Additionally, individual differences exist in music preference (Rentfrow et al., 2011) and rhythmic and synchronization ability (Pecenka & Keller, 2011). Individuals may well differ in terms of higher-level forms of entrainment such as a tendency to imitate a partner’s specific dance moves.

Analysis found no significant relationships between personality dimensions Agreeableness and Extraversion and extracted movement features across conditions. Since previous studies have used extreme scorers to observe movement differences related to personality (e.g., Luck et al., 2010), it may be that personality differences were subtler in the current sample and therefore not captured by this analysis. However, since previous research used individual conditions only, it is possible that the presence of various partners influenced participants differently across conditions, obscuring personality effects in analysis of the individual. Agreeableness and Extraversion, however, also did not show any significant relationships with individuals’ actor and partner effects regarding movement features across the whole group, contrary to our third hypothesis (H3). Empathy was related negatively to actor effects for Hand movement, indicating that more empathic participants’ hand movement was determined less by themselves than by other actors. In the experimental context, the most salient changing factor would be their dance partner, so from this, we can tentatively conclude that empathic participants may have responded more to their partners than non-empathic participants, in line with some theoretical definitions of empathy and providing partial support for our third hypothesis (H3) that empathy would relate negatively to actor effects (Gerdes, 2011; Iacoboni, 2005; Tomei & Grivel, 2014). Further research could explore the relationship between this finding and previous work showing that higher empathy is related to increased automatic mimicry (Sonnby-Borgström, Jönsson, & Svensson, 2003; Sonnby-Borgström, 2002), and the close link between empathy and motoric representation of and responsiveness to a partner in music performance (Novembre, Ticini, Schütz-Bosbach, & Keller, 2012, 2014).
As the effect size of this finding is relatively small, these interpretations are tentative and further research should be conducted to corroborate this finding. Please see the supplementary material for this article for an example of a dancer with low actor effect (Video 1) and an example of a dancer with a high actor effect (Video 2).

While this relationship did not persist significantly when females were analyzed separately, it did persist for male participants. For males, there was additionally a negative relationship between empathy and actor effect for Head movement, suggesting that empathic males may have adjusted their amount of head movement to their partners more than less empathic males. This may relate to previous findings showing that males tend to have lower levels of empathy than females (Baron-Cohen, Richler, Bisarya, Gurunathan, & Wheelwright, 2003; Baron-Cohen & Wheelwright, 2004; Baron-Cohen, 2009), as behavioral adjustments may be more unusual in males and thus more easily detected by statistical analysis. However, as the current sample included only a small number of males, these interpretations are made cautiously. Further research involving larger samples is needed to corroborate and clarify these results.

A few significant relationships emerged between Agreeableness and actor and partner effects when the sample was divided by sex. For females, Agreeableness was associated positively with partner effect for Head movement, indicating that agreeable females elicited relatively consistent head-movement responses from their partners. This further supports the idea of head movement as a particularly important feature for dyadic movement. Nodding is a nonverbal behavior associated with agreement and affiliation (e.g., Beck et al., 2002; Helweg-Larsen et al., 2004), while head-bobbing to music has been associated with spontaneous music-induced movement and rhythmic perception. It may be that, in dyadic contexts, head movement is a natural way to express affiliation or to rhythmically entrain. For males, Agreeableness was positively related to actor effects for Hand movement. Agreeableness is associated with prosocial behavior and “the desire to contribute” (Graziano & Tobin, 2002, p. 584), so it may be that this correlation reflects a social motivation. Further study with a larger sample size and a gender-balanced design is needed to corroborate and clarify this finding.

The lack of significant findings related to Extraversion bears noting. Although one of the defining features of Extraversion is a drive towards social interaction, the current results do not indicate that extraverted participants adjusted their movements to their partners, nor did they elicit any type of consistent movement patterns from their partners. It is possible that extraverted participants might respond to music and to the presence of a partner with movement and even communicative intent, but this behavior is relatively consistent across dance partners. In other words, extraverts tend to respond in an extraverted way to everyone they meet.

Some limitations of the current study should be noted. Though the minimum requirement of four participants per group was met, Kenny et al. (2006) note that, in an SRM study, using smaller group sizes can limit statistical power. Thus, although this study has demonstrated the feasibility of applying the SRM to motion capture movement research, the results should be interpreted with caution. Replication of the current study could strengthen the results. Future research could also attempt a similar methodology using larger group sizes, although this would create practical difficulties that need to be addressed (capture space size, time required to complete the experiment, fatigue). The varied cultural background of the participants could also have contributed to the current results, although the authors believe that the use of a coarse-grained measure of movement (acceleration) may have mitigated some cultural differences in music-induced movement. That some (approximately twelve, although data were not formally collected about this) participants were acquainted with one another while others were strangers may be a limitation of the current research. Future work might address this weakness by specifically recruiting well-acquainted and non-acquainted dyads. Future work should also include the exploration of how various dyadic interactions during dance are perceived by others, as well as analysis of dyads’ synchronization to the music and to each other over time.

These limitations notwithstanding, the current study shows that the SRM can be meaningfully applied to motion capture data of free dance movement. The results of the current study suggest that the presence of another person can indeed affect our movement to music, and that the unique characteristics of a given dyad have the greatest influence over how our movements change, providing rationale for further study of free dance movement focused on dyads. Further research could focus, for example, on measures of synchronization and movement coupling between dancers or of leader-follower relationships, which might be compared with dyadic indexes representative of individual differences, such as measures of similarity between partners’ personality scores or music preferences (Cook & Kenny, 2005; Kenny et al., 2006; Levesque, Lafontaine, Caron, Lyn Flesch, & Bjornson, 2014). Further study on the relationship between empathy and dyadic aspects of free dance movement is also needed, as well as replication and expansion of the methodologies described here. This study represents a first step in the application of the SRM to dance data to quantify how social context affects free dance movement, and a further step in understanding how who we are is manifested in our embodied responses to music.

Footnotes

Contributorship

All authors collaborated in conceptualizing and planning the study. BB and EC preprocessed the data. EC carried out the analysis and wrote the first draft, with input and feedback from PT and BB. All authors reviewed and edited the final manuscript.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval

Although no ethical review was required for this study, data have been collected ethically with careful regard for participant privacy and anonymity.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Peer review

Jacques Launay, Brunel University London, Division of Psychology.

Matthew Woolhouse, McMaster University, School of the Arts.

One anonymous reviewer.

Supplementary material

Supplementary material for this article is available online.

Notes

Appendix A

References
