Abstract

During the Russia–Ukraine conflict, social media has become an outlet for public opinion; therefore, besides the hot war, information warfare is also taking place. It was discovered that a large number of new social bot accounts had emerged on Twitter since the outbreak of the conflict. In particular, the Twitter account @UAWeapons has grown its following by hundreds of thousands in fewer than 30 days and has established itself as an influential opinion leader. Through data mining and three different analysis methods, this study investigated how social bots grew to influence public opinion. Specifically, time-series analysis revealed an unusual pattern of “pulsing” changes in the number of tweets posted by @UAWeapons over time. The content analysis illustrated that the account posted biased tweets in favor of Ukraine under the guise of being a neutral messenger, which led to frequent retweets from social bots with similar opinions. Finally, the results of the social network analysis indicated that @UAWeapons’ rapid growth might be attributed to a strategy of network clustering implemented by a core group of social bot accounts.

Introduction

Since the outbreak of the Russia–Ukraine conflict, a large number of new social bot accounts (created between February and March 2022) have emerged on the Twitter platform (Oremus & Zakrzewski, 2022), but most of the accounts were not very influential due to limited followers (Shen et al., 2023). However, the bot account @UAWeapons had rapidly grown into a large account with hundreds of thousands of followers in less than a month since it entered the Twitter platform on 20 February 2022. It has become an important public opinion leader on issues related to the Russia–Ukraine conflict.

Social bots are often used for computational propaganda and political opinion warfare (Woolley & Howard, 2017). This new type of political propaganda tool manipulates public opinion by deliberately managing and distributing highly inflammatory and misleading information on social media platforms such as Facebook and Twitter (Woolley & Howard, 2016). Guilbeault (2016) argues that political actors are deploying social bots on social media, posing a substantial threat to internet security while also raising ethical and political concerns. In addition to playing an important role in political events such as the US election (Howard et al., 2018) and Brexit (Howard & Kollanyi, 2016), they are also widely used in international public opinion warfare in various emergencies such as COVID-19 (Shi et al., 2020).

According to previous studies, social bots negatively impact the court of public opinion, so the sudden appearance and growth of @UAWeapons in the Russia–Ukraine conflict should be noted. Previous research on social bots has mainly focused on their behavioral characteristics (Varol et al., 2017), communication effects (Varol & Uluturk, 2020), and how they form a social network under a specific topic to drive public opinion (Cheng et al., 2020), while few studies have examined the growth of social bot accounts themselves or how that growth is achieved internally through mutual boosting among social bots. Therefore, this study focuses on the Twitter account @UAWeapons as a case study and explores how social bots influence public opinion via time-series analysis, content analysis, and social network analysis.

Literature review

Social bot

With the development of information and communication technologies, online social networks have become an important virtual space for people to communicate with each other, where millions of active users participate and interact every day. Platforms such as Twitter and Facebook are not only social platforms for the general public but also a new stage or battlefield for politicians, celebrities, and revolutionaries (Boshmaf et al., 2011). To some degree, social media were considered effective in promoting democratic dialogue on social and political issues (Bessi & Ferrara, 2016). Nevertheless, certain challenges have been identified as well, such as the dissemination of misinformation, the manipulation of public opinion, and the implications on social equality, and scholars underscored the necessity of examining the role of “social bots” within these spheres (Howard & Kollanyi, 2016; Samanci & Thulin, 2022; Woolley & Howard, 2017).

Social bots can automatically generate online messages such as tweets and comments (Howard & Kollanyi, 2016). As a result, they can create fake news and trends to affect people’s judgments on the support rate of certain politicians or other controversial issues (Michael, 2017). According to Allem and Ferrara (2018), “social bots are automated accounts that use artificial intelligence technology to guide discussions and promote specific ideas or products on social media such as Twitter and Facebook” (p. 1). Depending on their purposes, social bots can be divided into two categories: benign and malicious. Benign social bots include chatbots that help improve public relations (Men et al., 2022), content-generating bots that automatically aggregate information to improve news production efficiency (Pozzana & Ferrara, 2020), and customer service bots that are widely used by companies and brands (Ferrara et al., 2016). Malicious social bots, of which political bots are one example, are designed to infiltrate public opinion (Fazil & Abulaish, 2017), spread disinformation (Woolley & Howard, 2017), manipulate the stock market (Ferrara et al., 2016), or harm or discredit individuals (Yan et al., 2020).

Political bot in computational propaganda

Abokhodair et al. (2015) described social bots as automatic social actors, while Ferrara et al. (2016) argued that political bots were more like puppets manipulated by entities such as political organizations for their own benefit. Political bots rely on algorithms and automated scripts, purposefully disseminating misleading information by imitating the behavior of real people to guide or participate in political events (Woolley & Howard, 2016). Political bots have played a strategic role in events such as COVID-19 (Robles et al., 2022), the Brexit referendum (Howard & Kollanyi, 2016), political elections (Bessi & Ferrara, 2016; Ferrara, 2017; Vogt, 2012), and climate change (Gallwitz & Kreil, 2022). More specifically, Woolley and Howard (2017) found that political bots would constantly integrate information and quickly produce content to influence votes or slander critics. Samanci and Thulin (2022) reported that political bots could increase the number of followers or tweets of a specific user and exaggerate the influence of celebrities or events. During the 2016 US presidential election, Howard and Kollanyi (2016) proposed that political bots played an increasingly important role in the globalized political system in the form of botnets, fake news, and algorithmic manipulation, a phenomenon also known as “computational propaganda,” which refers to the “assemblage of social media platforms, autonomous agents, and big data tasked with the manipulation of public opinion” (p. 4). In short, political bots, a subset of social bots, can be leveraged to influence public sentiment and intervene in the opinion climate, commonly known as “social media astroturf” (Ratkiewicz et al., 2011). Moreover, political bots would intensify the polarization of attitudes, amplify negative emotions, and subtly endanger democracy (Robles et al., 2022).

Social bot and social ecosystem

Many problems brought by social bots to the social ecosystem have been repeatedly mentioned in previous research. Scholars raised ethical concerns about the misuse of algorithms and bots, and called for supervision and intervention (Guilbeault, 2016; Maréchal, 2016). Cresci et al. (2015) pointed out that fake followers negatively impacted the ecosystem of social media platforms, as well as, in some cases, society as a whole. In the later stages, academia and industry jointly released a series of social bot detection tools based on research into the behavior characteristics of social bots and the cracking of algorithm technology. Studies on the mechanism of detection tools such as Debot (Chavoshi et al., 2016), SocialBotHunter (Dorri et al., 2018), and Botometer (Sayyadiharikandeh et al., 2020) have emerged. However, Gallwitz and Kreil (2022) pointed out that the current research about the prevalence or influence of social bots might be misleading, possibly caused by flawed bot detection methods. Therefore, relevant research needs to strictly examine the IDs of social bots to ensure authenticity.

To conclude, most relevant studies focus on how social bots influence social networks, especially how they shape public discourse in political communication. However, the growth of an account, particularly a social bot account, and the factors associated with that growth remain empirically understudied. Therefore, this study focuses on the Twitter bot account @UAWeapons as a case study and explores how social bots grow to influence public opinion, with respect to the characteristics of tweets and social bot engagement. As such, the research questions are formulated as follows:

Methods

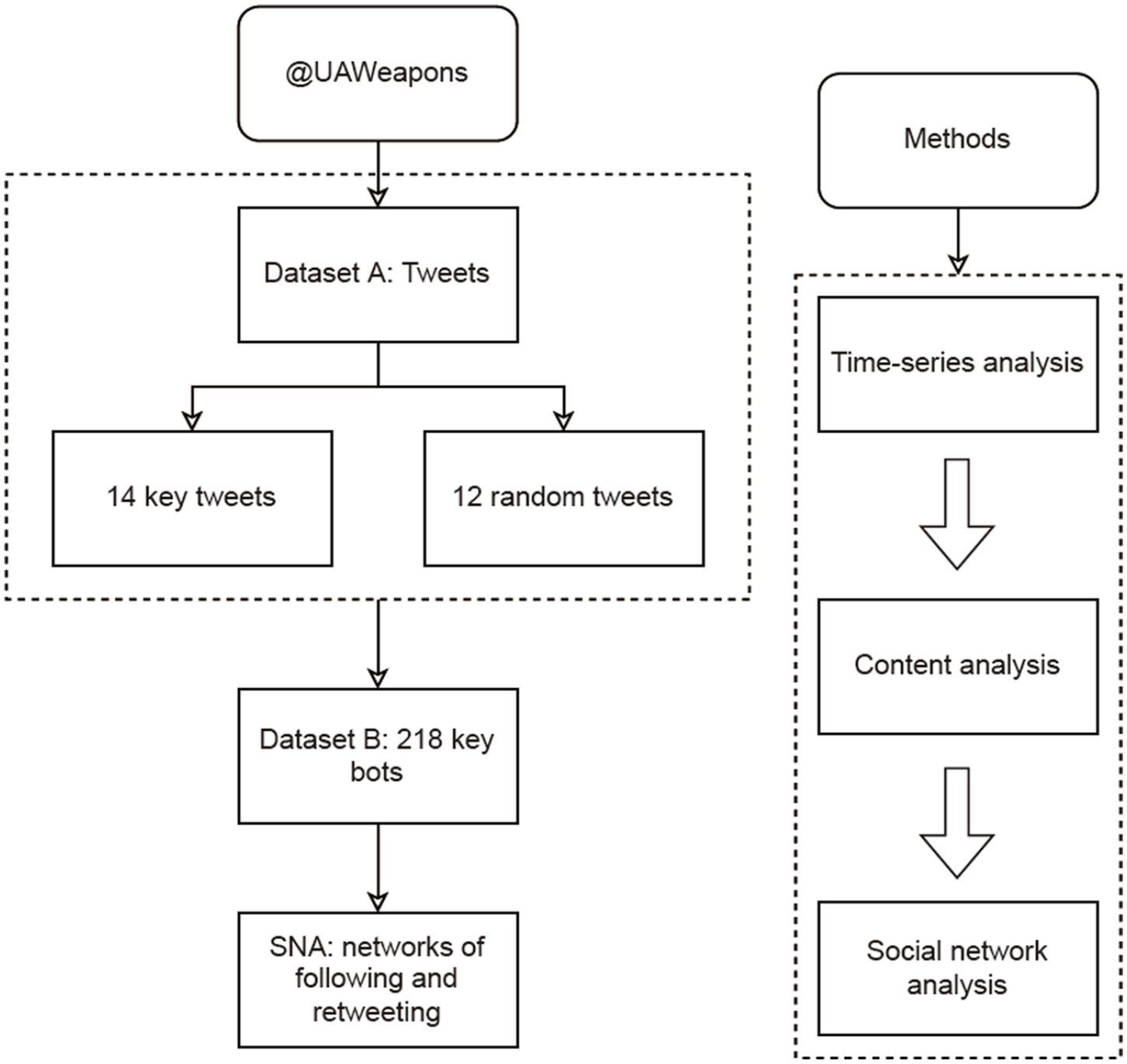

To answer the above-mentioned research questions, a Python program was used to collect data sets for tweets as well as accounts. Meanwhile, time-series, content, and social network analyses were conducted to investigate the reasons for the rapid growth. Figure 1 illustrates the research framework.

Figure 1. Research framework.

Data collection

Acquisition of the tweets (Data Set A)

The social bot @UAWeapons, screen name Ukraine Weapons Tracker, created in February 2022, had 410,000 followers within 1 month and over 480,000 by the end of April 2022. Shen et al. (2023) conducted a similar study by collecting 3,816 random bot accounts related to the Russian–Ukrainian conflict from 17 February to 17 March 2022 and concluded that the average number of bot account followers was 988, and the median number was 174. In another study, 96 bots were deployed on a social platform and gained 5,546 followers in total over the course of 42 days (Wang et al., 2020). As such, @UAWeapons stands out from similar accounts due to its rapid growth in followers within a relatively short period of time, and therefore, it was selected as the subject of this study.

Using its Twitter ID @UAWeapons as the keyword, we utilized the Twitter API to collect original tweets throughout a 44-day span from 21 February 2022 (the date of the first tweet) to 5 April 2022. The tweets were sorted and numbered according to posting time, with 0 being the oldest and 1,707 being the most recent, which resulted in a data set of 1,708 tweets posted by @UAWeapons (labeled Data Set A).

For Data Set A, 14 key tweets with explosive growth in tweet engagement metrics were chosen for analysis (see Table 6). The selection criteria comprised four aspects: (1) the first tweet; (2) the tweets posted during the first and last 5 days of the statistical period; (3) tweets whose retweets or likes were at least 10 times greater than those of their previous tweets; and (4) tweets whose retweets or likes had reached a significant number. For instance, tweet No. 76 was chosen because it received more than 1,000 likes for the first time, and tweet No. 282 was chosen because it received more than 2,000 retweets for the first time.
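
Criterion (3) can be illustrated with a minimal Python sketch. It assumes that "previous tweets" means the immediately preceding tweet in chronological order and that a zero baseline is treated as 1; the `Tweet` record, the `flag_surges` helper, and the engagement counts are hypothetical and are not taken from Data Set A.

```python
from dataclasses import dataclass


@dataclass
class Tweet:
    number: int    # chronological index, 0 = oldest
    retweets: int
    likes: int


def flag_surges(tweets, factor=10):
    """Return the numbers of tweets whose retweets or likes are at least
    `factor` times those of the immediately preceding tweet (assumption)."""
    flagged = []
    for prev, cur in zip(tweets, tweets[1:]):
        # Assumption: a previous count of 0 is treated as 1 to avoid a zero baseline.
        retweet_base = max(prev.retweets, 1)
        like_base = max(prev.likes, 1)
        if cur.retweets >= factor * retweet_base or cur.likes >= factor * like_base:
            flagged.append(cur.number)
    return flagged


# Invented engagement counts, for illustration only.
sample = [
    Tweet(0, 12, 40),
    Tweet(1, 15, 55),
    Tweet(2, 180, 900),
    Tweet(3, 20, 70),
    Tweet(4, 25, 90),
]
surges = flag_surges(sample)
print(surges)
```

In this toy series only the third tweet is flagged, after which engagement falls back to its earlier level.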

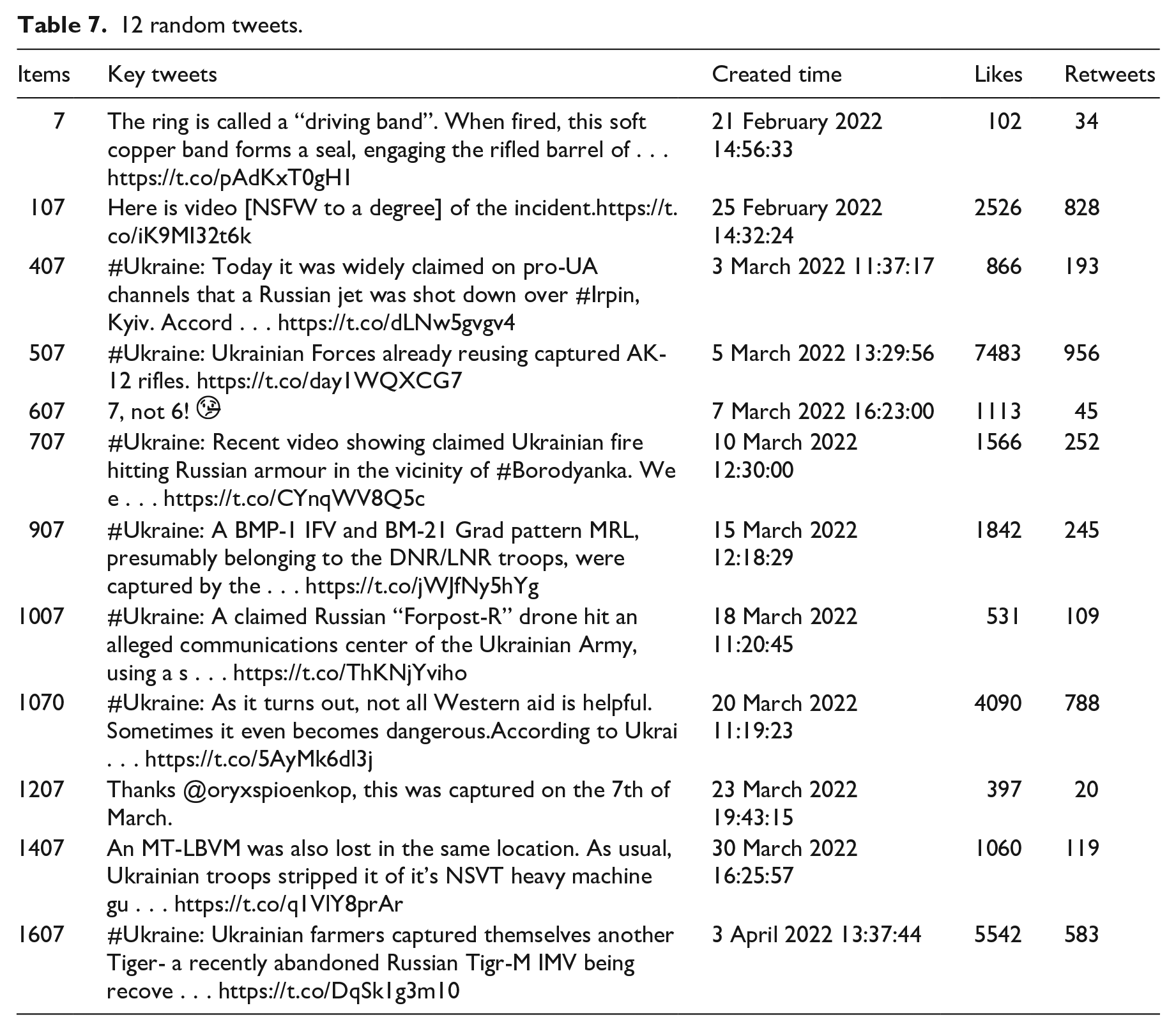

Next, 12 random tweets were selected via systematic sampling for comparative analysis: first, the number 7 was randomly selected from 0 to 9 by drawing lots; second, all tweets were sorted chronologically; third, 28 tweets whose numbers ended in 7 were selected at intervals of 100, such as 7, 107, and 207; finally, 12 random tweets were retained after excluding tweets that were comments and retweets (see Table 7).

Acquisition of the followers of key bots (Data Set B)

The accounts that retweeted these 26 tweets were collected. They comprised 32,145 accounts, of which 31,178 retweeted the 14 key tweets, resulting in 41,727 retweets; 2,614 retweeted the 12 random tweets, resulting in 3,250 retweets; and 1,647 accounts retweeted both key and random tweets. Among them, 1,045 accounts retweeted at least four key tweets, of which 482 accounts retweeted at least one random tweet. The bot scores of these 482 accounts were calculated using the Botometer API (for details, refer to the “Social bot detection” section).

The Botometer results indicated that 264 accounts were identified as humans, while 218 accounts were identified as bots. These 218 bots, regarded as key bots, accounted for only 0.7% of all retweeters of the 26 selected tweets, yet together with their followers they contributed 30.48% of the retweets. More than half (54%) of the followers that were influenced were also bot accounts. Accordingly, all followers of these 218 bot accounts were collected via the Twitter API, and their bot scores were calculated via the Botometer API. Consequently, Data Set B, containing the 218 key bots and their followers, was acquired.
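
The account totals reported in this subsection are mutually consistent, which can be checked with a few lines of arithmetic. The sketch below uses only figures stated above; the variable names are illustrative.

```python
# Retweeter counts reported for the 26 selected tweets.
key_retweeters = 31_178      # accounts that retweeted the 14 key tweets
random_retweeters = 2_614    # accounts that retweeted the 12 random tweets
both = 1_647                 # accounts that retweeted both kinds of tweets

# Inclusion-exclusion: accounts in both groups must be counted only once.
total_retweeters = key_retweeters + random_retweeters - both
print(total_retweeters)      # 32145

# Botometer split of the 482 scored accounts.
humans, bots = 264, 218
scored = humans + bots
print(scored)                # 482

# Share of key bots among all retweeters, in percent.
key_bot_share = bots / total_retweeters * 100
print(round(key_bot_share, 1))  # 0.7
```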

Social bot detection

In general, social bot detection methods can be classified into four categories: crowdsourcing, supervised learning, unsupervised learning, and generative adversarial networks. Specifically, crowdsourcing-based approaches refer to the recruitment of numerous trained individuals to classify social accounts according to predetermined rules (Wang et al., 2012); examples of social bot detection systems based on this approach include CopyCatch (Beutel et al., 2013) and SynchroTrap (Cao et al., 2014). Supervised learning-based methods extract features from social account profiles, social networks, content, and so on, using labeled data sets to determine the differences between bot accounts and real accounts (Davis et al., 2016). Unsupervised learning-based methods address clusters of accounts without labeled data sets (Cresci et al., 2017). Generative adversarial networks are novel machine learning techniques that improve bot recognition models by incorporating adversarial training of generators and discriminators (Cresci, 2020). Among them, supervised learning is the most popular and widely used method of social bot detection (Sayyadiharikandeh et al., 2020).

The Botometer, developed by the Observatory on Social Media (OSoMe) at Indiana University, is a social bot detection tool that is based on supervised learning (Sayyadiharikandeh et al., 2020). Botometer has been applied to a number of studies. Broniatowski et al. (2018), for instance, used Botometer and found that bots spread false information by masquerading as legitimate users and jeopardizing the public consensus regarding vaccinations. Duan et al. (2022) detected social bots on Twitter via Botometer and reported that social bots acted as algorithms capable of amplifying certain views and topics on social media and, as a result, shaping partisan political news coverage.

In this study, Twitter accounts were scored on a scale of 0 to 5 using the Botometer API, with higher scores indicating more bot-like behavior. Given the accuracy of the tool and the difficulty of distinguishing human accounts (Keller & Klinger, 2019), this study set a threshold of 3 (0.6 on a normalized 0–1 scale) for identifying bot accounts, which is more conservative than previous studies that set the threshold at 0.5 (Martini et al., 2021) and reduces the likelihood of false positives. Consequently, Twitter accounts with a bot score of 3 or higher are considered to be bot accounts, whereas Twitter accounts with a bot score of less than 3 are considered to be human accounts.
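
The decision rule can be written down as a short sketch. It covers only the thresholding step and makes no calls to the Botometer API; the `classify` helper, the account names, and the scores are hypothetical.

```python
BOT_THRESHOLD = 3.0  # on the 0-5 scale; equivalent to 3 / 5 = 0.6 on a 0-1 scale


def classify(score: float) -> str:
    """Label an account from its 0-5 bot score using the threshold of 3."""
    if not 0 <= score <= 5:
        raise ValueError("bot scores are expected on a 0-5 scale")
    return "bot" if score >= BOT_THRESHOLD else "human"


# Invented scores, for illustration only.
scores = {"account_a": 3.6, "account_b": 2.9, "account_c": 3.0}
labels = {name: classify(score) for name, score in scores.items()}
print(labels)
```

A score exactly equal to 3 falls on the bot side of the boundary, in line with "3 or higher".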

Data analysis

Three different analysis methods were employed to gain a comprehensive understanding of the bot account’s characteristics and growth. In particular, the time-series analysis provided insight into how the account’s tweets were disseminated; the content analysis further clarified the account’s content characteristics, stance tendencies, as well as behavioral performance; and the social network analysis facilitated an in-depth study of the network clustering factors influencing the growth of @UAWeapons.

Time-series analysis

Using time-series analysis, all tweets posted by @UAWeapons from 21 February 2022 to 5 April 2022 on Twitter were analyzed to learn how the account progressed, and especially, to identify the abnormal phenomenon during the dissemination cycle.

Content analysis

By manually coding 26 tweets and 218 key bots, this study examined the characteristics of tweets posted by the account @UAWeapons and its stance tendency. For Data Set A, three coders analyzed the tweets regarding tweet characteristics and political stances. A Fleiss’ Kappa of 0.86 indicated strong agreement among the three coders (Banerjee et al., 1999). Likewise, for Data Set B, the creation dates and registration countries or regions of the 218 social bot accounts were obtained, as well as their numbers of followers and followings; four coders then analyzed the political stance of these accounts, with a Fleiss’ Kappa of 0.74 indicating good consistency.
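
For readers unfamiliar with the statistic, the following is a minimal sketch of how Fleiss' Kappa is computed from a table of coder votes. The function name and the toy ratings are illustrative and are not the study's coding data.

```python
def fleiss_kappa(counts):
    """Fleiss' Kappa for counts[i][j] = number of coders who assigned
    item i to category j. Every item must have the same number of ratings.
    Undefined (division by zero) if all ratings fall into a single category."""
    n_items = len(counts)
    n_raters = sum(counts[0])
    if any(sum(row) != n_raters for row in counts):
        raise ValueError("every item needs the same number of ratings")

    # Observed agreement: share of agreeing coder pairs per item, averaged.
    per_item = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_observed = sum(per_item) / n_items

    # Chance agreement from the overall category proportions.
    n_categories = len(counts[0])
    proportions = [
        sum(row[j] for row in counts) / (n_items * n_raters)
        for j in range(n_categories)
    ]
    p_expected = sum(p * p for p in proportions)

    return (p_observed - p_expected) / (1 - p_expected)


# Toy example: 4 items, 3 coders, 2 categories (invented ratings).
toy = [[3, 0], [0, 3], [2, 1], [1, 2]]
kappa = fleiss_kappa(toy)
print(round(kappa, 3))  # 0.333
```

Values close to 1 indicate near-complete agreement, while values near 0 indicate agreement no better than chance.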

Social network analysis

Social network analysis (SNA) is an important method to identify social network influencers. Many previous studies on computational propaganda and social bots have used SNA methods. For example, Cheng et al. (2020) found that social bots in online social networks (OSN) could influence the formation of public opinions. Abokhodair et al. (2015) used SNA to analyze the characteristics of the Syrian social bot network and found significant differences between bots and ordinary users in the network’s proliferation trend and the content of tweets. Social scientists hold that individuals are embedded in networks of social relationships and interpersonal interactions (Borgatti et al., 2009). Social networks are usually represented by graphs, which are data structures that describe the properties of the network through nodes connected by edges. In a social network, nodes are individuals, while edges refer to relationships between nodes. The connection patterns between nodes are neither random nor purely regular (Thai & Pardalos, 2012). Graph theory (West, 2001) is the basic theory for studying this data structure and plays an important role in data processing and analysis. This theory can generalize and analyze the interactions between users at a certain stage and their behaviors on social platforms (Bello-Orgaz et al., 2017). The methods and techniques of graph theory have been able to solve problems related to large networks, such as online social networks and social media applications such as Facebook and Twitter (Carrington et al., 2005).

By weighting SNA measurements, the main influencers in the interactive network and their relationships with other accounts can be found (Pudjajana et al., 2018). In terms of metrics, some traditional SNA indicators such as degree centrality, betweenness centrality, closeness centrality, K-shell, and semi-local centrality are popular ways to measure key nodes (Zhao et al., 2017).

In this study, to determine whether there was a direct correlation between the growth of @UAWeapons and the key bots as well as their followers, SNA was conducted to examine the follow relationships and retweets among the key bots and their followers, with Gephi 0.9.5 as the tool for visual network analysis (VNA). The core nodes in the network were investigated using classical SNA metrics such as degree centrality, betweenness centrality, and closeness centrality (Tabassum et al., 2018). In addition, key network clusters were analyzed to identify the group dynamics factors that promote the spread of tweets (Johnson et al., 2020).

Results

To answer RQ1, time-series analysis showed the progress and abnormal characteristics during the dissemination cycle, and content analysis revealed the content characteristics and stance trends. To answer RQ2, a quantitative description of the social bots retweeting @UAWeapons tweets was provided regarding basic profiles, behavioral characteristics, and impacts; and social network analysis was conducted to identify the network clustering factors that influenced the account growth from a group dynamics perspective.

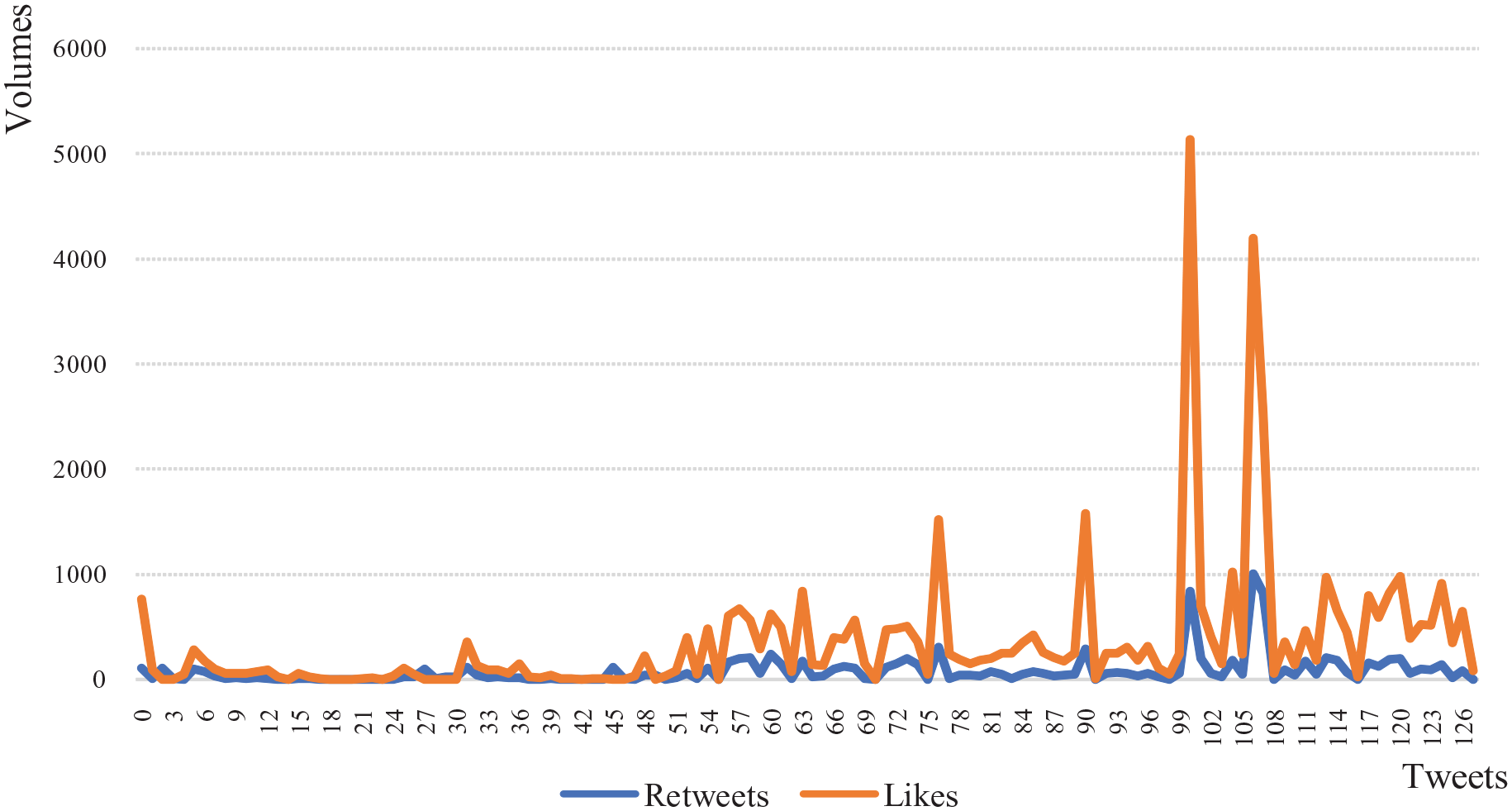

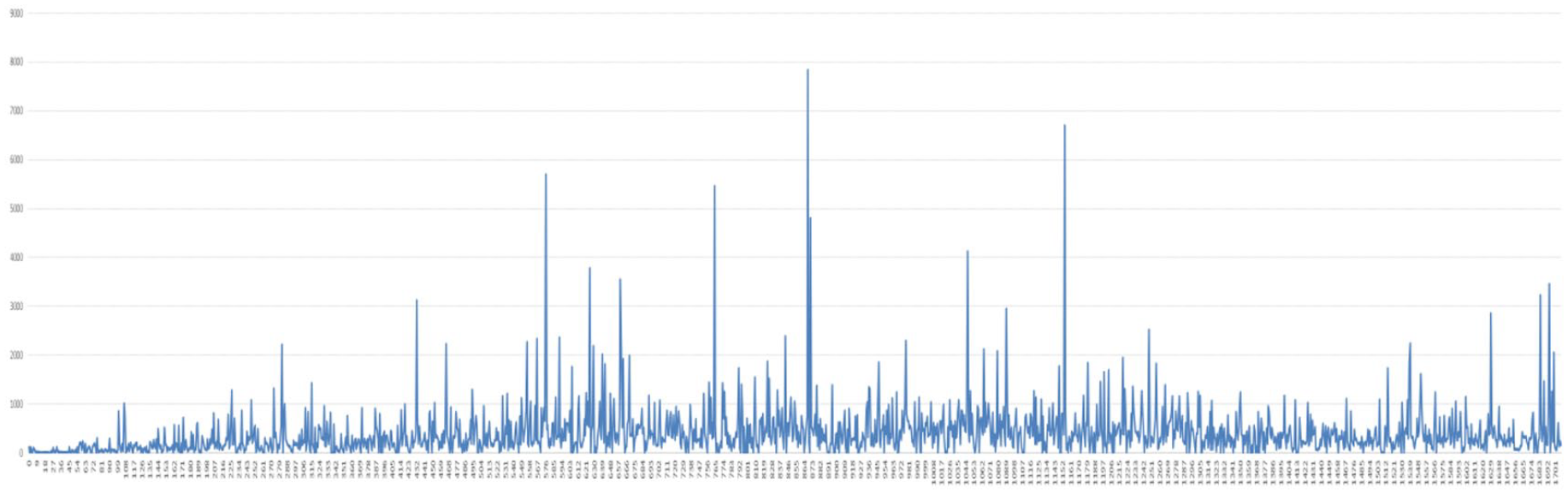

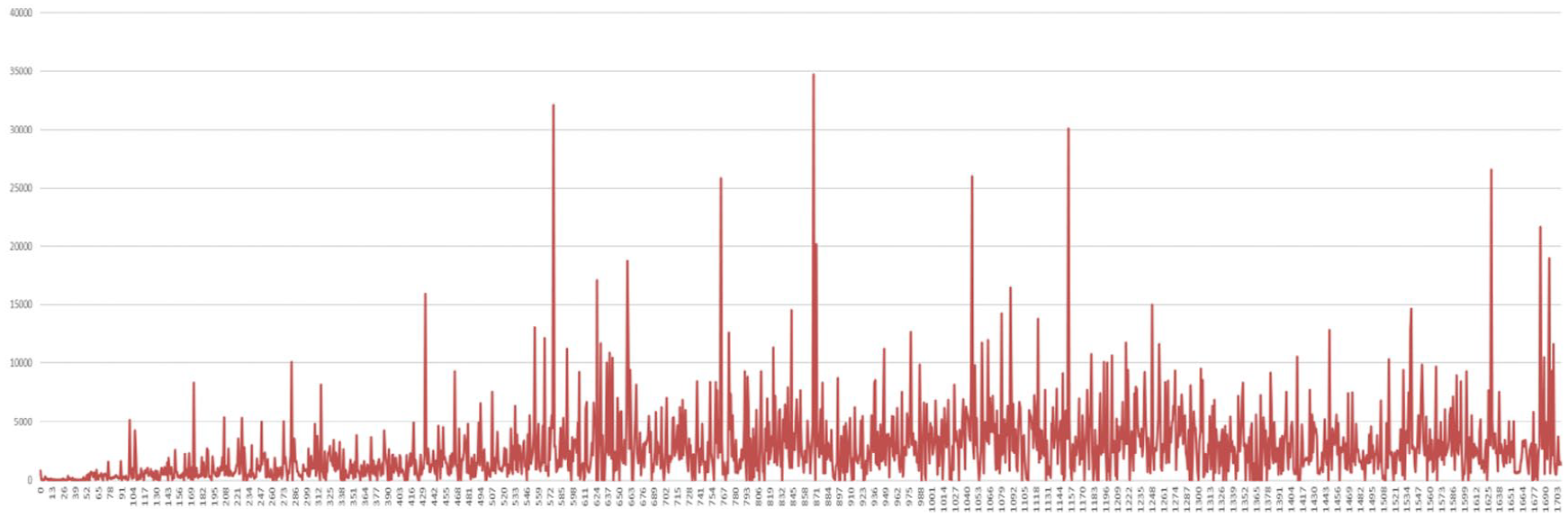

Time-series analysis: pulsed change in communication volume

During the period studied, some tweets from the account @UAWeapons appeared to have received an unusually high number of retweets and likes compared with neighboring tweets. In other words, a “pulsating” pattern was observed. For example, as indicated in Figure 2, among the 128 tweets posted from 21 February to 26 February, tweets No. 76, No. 90, No. 100, and No. 106 showed significant increases in retweets and likes, with the metrics returning to normal levels on subsequent tweets. A closer examination of the account’s tweets over longer periods of time revealed intermittent explosive growth in retweets and likes (see Figures 3 and 4).

Figure 2. Trends in the number of retweets and likes from 21 February to 26 February.

Figure 3. Trends in the number of retweets per tweet.

Figure 4. Trends in the number of likes per tweet.

Time-series analysis of Data Set A revealed dramatic and abnormal changes in tweet engagement metrics, including retweets, comments, and likes. To explain this anomaly, further investigation was conducted into the characteristics of the account and its tweets.

Content analysis: pro-Ukraine stance

Description of the account @UAWeapons

Ukraine Weapons Tracker, whose account ID is @UAWeapons, joined Twitter on 20 February 2022. The account (see Figure 5) grew to over 486,000 followers by 24 April 2022. The Botometer API identified it as a bot account with a bot score of 3.6.

Figure 5. Screenshot of the Twitter profile @UAWeapons.

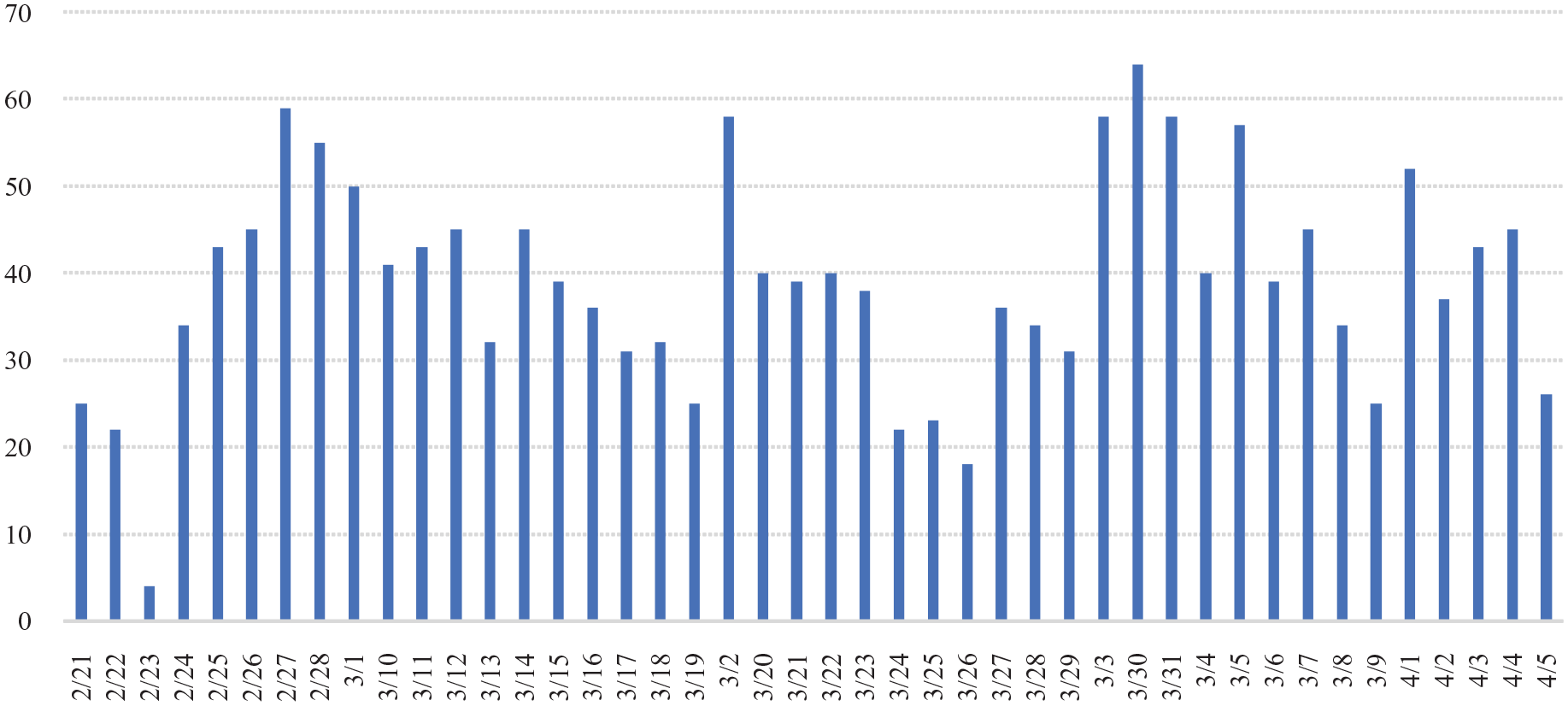

It was found that this account was extremely active and became increasingly popular. With regard to posting frequency (see Figure 6), the rate was lower prior to the outbreak of the Russia–Ukraine conflict (21–23 February), with only four tweets on 23 February. Since the outbreak of the conflict, the account has been posting tweets throughout the day, except for 8–9 hours at night. A total of 1,708 tweets were posted during the statistical period (21 February–5 April), an average of 38.8 tweets per day, with a daily maximum of 64 tweets on 30 March.

Figure 6. Variation in the number of tweets day by day.

In terms of dissemination, @UAWeapons posted its first tweet at 7 a.m. on 21 February (see Figure 7); as of 24 April, that tweet had received 127 retweets and 766 likes. The account posted 1,708 tweets from 21 February to 5 April, with an average of 2,651 likes per tweet. In addition, there were 684,138 retweets in total, an average of about 400 retweets per tweet.

Figure 7. The first tweet posted by @UAWeapons.

Tweet characteristics that contribute to the account’s growth

Two characteristics of these tweets helped the account grow to some extent, as noted below.

Abounding hashtags and multimedia tweets

Hashtags were widely used by this account, and tweets with hashtags had a significantly higher probability of being disseminated and interacted with, averaging 5,297 likes and 800 retweets per tweet. In particular, the hashtag #Ukraine was used in 1,318 tweets, accounting for 77% of all tweets. Specifically, it was used in 13 of the 14 key tweets, with the exception of the first tweet; in contrast, 9 of the 12 random tweets used the hashtag. Moreover, most of the tweets combined pictures or videos with text, and text-only tweets were few. Specifically, 7 out of 14 key tweets contained videos, while 2 out of 12 random tweets contained videos.

Pro-Ukraine stance

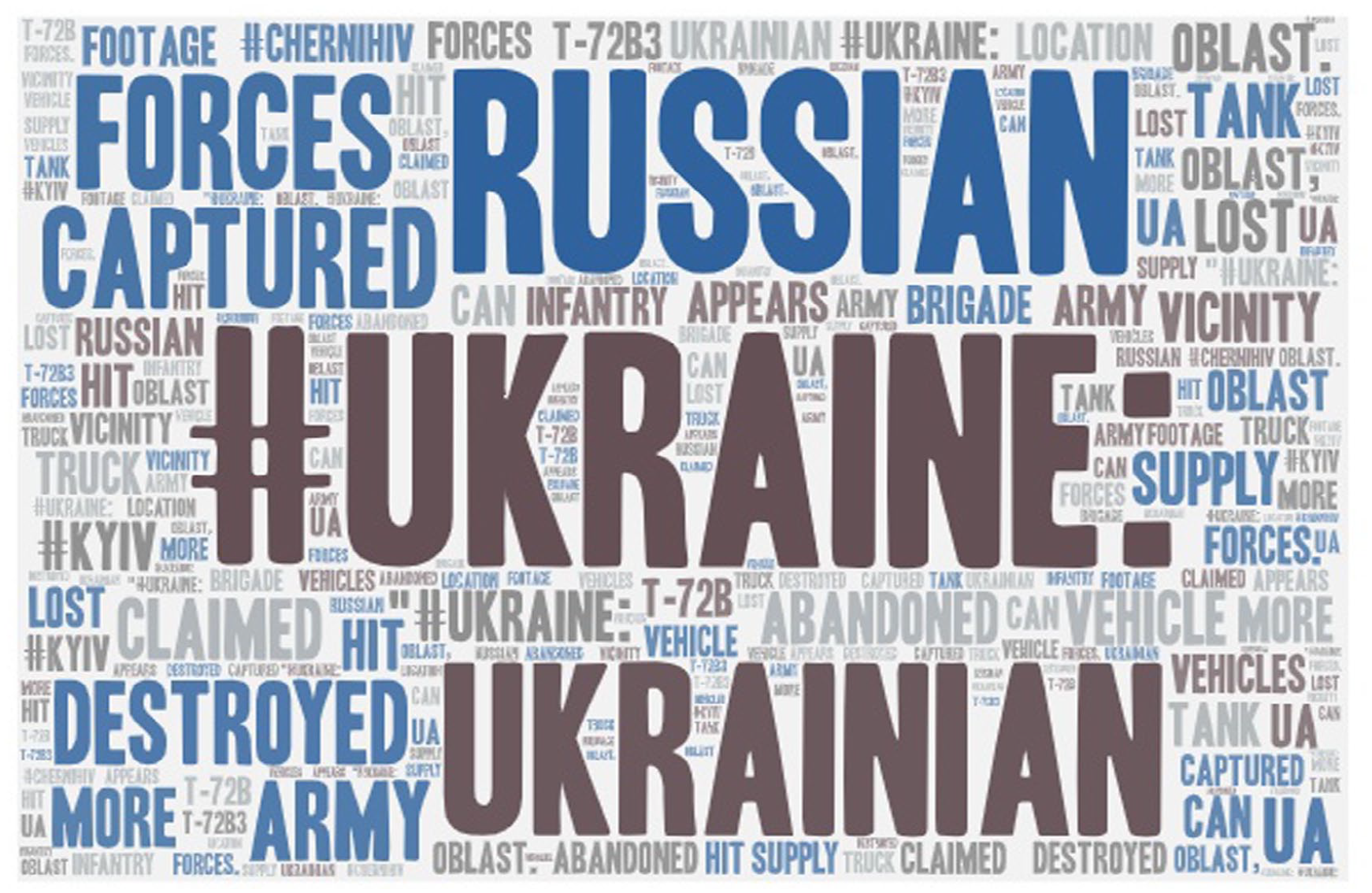

@UAWeapons posted tweets primarily about the Ukrainian–Russian conflict and the types of weapons used by both sides, as described in its profile: Debunking & Tracking Usage/Capture of Materiel in Ukraine. However, although the account proclaims to be neutral, it is politically inclined. Despite the absence of apparent attitudinal undertones, most of the descriptions involved Ukrainian military strikes against Russian military forces and the seizure of their military supplies, suggesting that Ukraine could fight back against Russian aggression. According to a word frequency analysis of the 1,708 tweets from 21 February to 5 April, the most frequently used words (see Figure 8) included “ukraine” (1,347 times), “russian” (937 times), “ukrainian” (674 times), “forces” (392 times), “captured” (309 times), “destroyed” (305 times), “armed” (275 times), “oblast” (228 times), “tank” (158 times), “claimed” (153 times), and “vehicle” (146 times).

Figure 8. Most frequent keywords in tweets.
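
A word frequency analysis of this kind can be sketched with the Python standard library. The tokenization rules (lowercasing, dropping URLs, keeping alphabetic tokens only), the stopword list, and the two sample sentences are assumptions made for illustration; they are neither the study's actual preprocessing nor real tweets.

```python
import re
from collections import Counter


def word_frequencies(texts, stopwords=frozenset()):
    """Count lowercase alphabetic tokens across texts, skipping URLs and stopwords."""
    counts = Counter()
    for text in texts:
        cleaned = re.sub(r"https?://\S+", " ", text.lower())  # drop links
        tokens = re.findall(r"[a-z]+", cleaned)               # "#Ukraine" -> "ukraine"
        counts.update(token for token in tokens if token not in stopwords)
    return counts


# Invented sentences, for illustration only.
sample_texts = [
    "#Ukraine: A Russian tank was captured by Ukrainian forces.",
    "#Ukraine: Another Russian vehicle was destroyed in Kharkiv Oblast.",
]
freq = word_frequencies(sample_texts, stopwords={"a", "was", "by", "in", "another"})
print(freq.most_common(2))
```

Because hashtags are reduced to their alphabetic part, "#Ukraine" and "Ukraine" are counted as the same keyword under these assumptions.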

For further analysis, some typical tweets were coded and analyzed. Specifically, 26 tweets were selected for validation, including 14 key tweets with the highest engagement metrics (retweets, likes, and comments) and 12 random tweets. Appendix 1 provides detailed information about the tweets’ content.

The comparison showed that pro-Ukrainian stances were more common in key tweets: 11 of the 14 key tweets described active attacks, such as the killing, capturing, and defeating of Russian troops by Ukrainian forces, whereas only 4 of the 12 random tweets were pro-Ukrainian.

Stances of the key bots retweeting the account

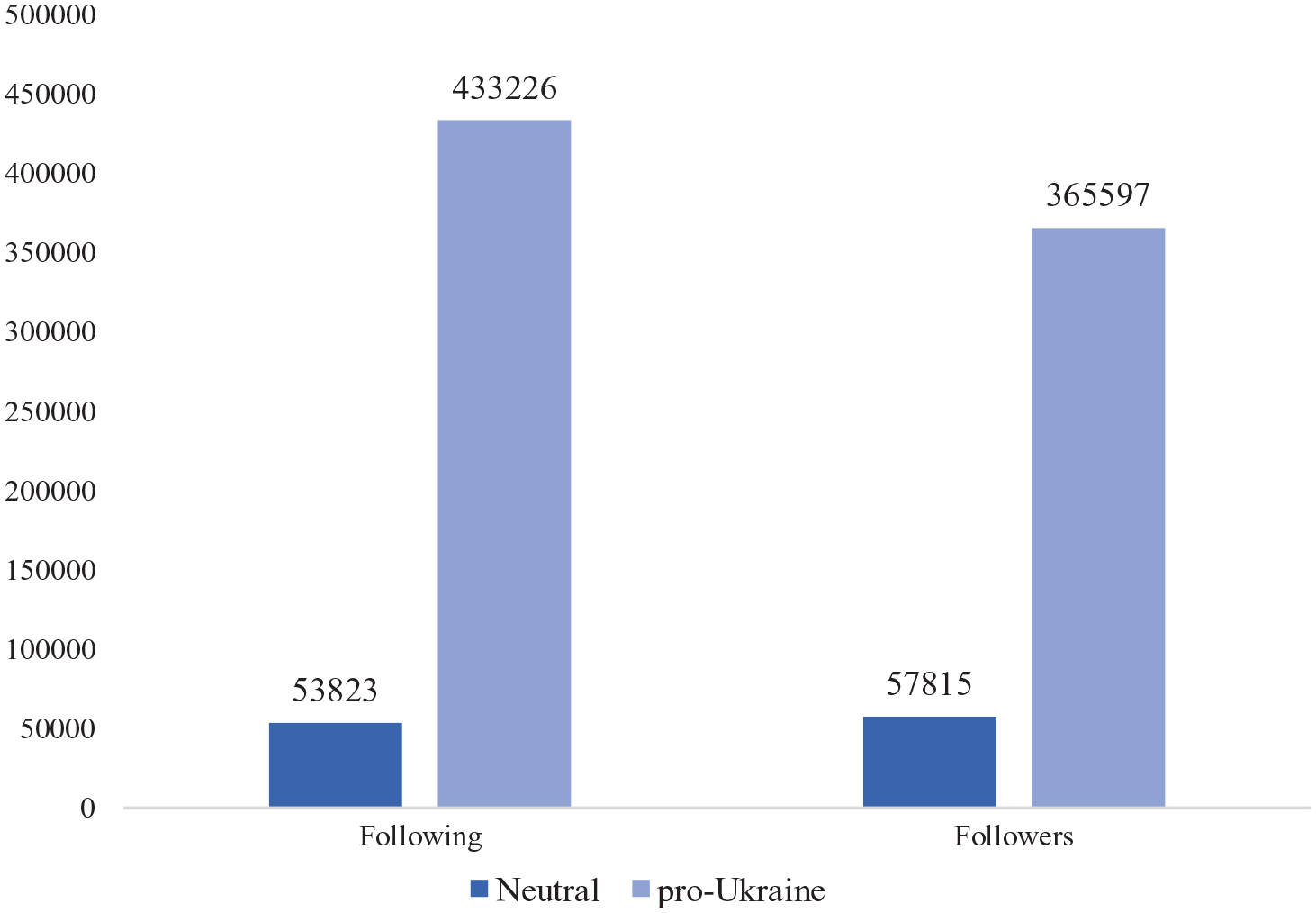

It was found that most of the bot accounts (86.11%) tended to support Ukraine and oppose Russia. This was primarily manifested in two aspects: first, Ukrainian flags appeared in the names, homepage backgrounds, and profiles of some accounts such as @mog7546, @VolunteerReport, and @Petteri96008017; second, the tweets demonstrated support for Ukraine and opposition to Russia on military and political levels. Meanwhile, the remaining 13.89% of accounts tended to be objective and neutral, mainly describing the situation on the battlefield, the military action on both sides, and views from around the world. Interestingly, no account appeared to be clearly pro-Russian. In general, bot accounts supporting Ukraine followed more users (433,200) and had more followers (365,600), accounting for 88.95% and 86.35% of the respective totals (see Figure 9).

Figure 9. Following and followers of different stances.

In summary, @UAWeapons posted tweets implying a pro-Ukrainian stance with hashtags and multimedia. The more of these characteristics a tweet exhibited, the more likely it was to be popular. Furthermore, the majority of the key bot accounts that retweeted its tweets appeared to support Ukraine as well.

Content analysis: social bot involvement

As noted above, some key bot accounts retweeted @UAWeapons frequently. These key bot accounts accounted for only 0.7% of all accounts that retweeted the selected 26 tweets, but contributed 30.48% of the retweets along with their followers; notably, more than half (54%) of the influenced followers were also bot accounts. This suggests that these 218 bot accounts contributed significantly to the growth of @UAWeapons, and they are therefore discussed below.

Basic facts about the key bots

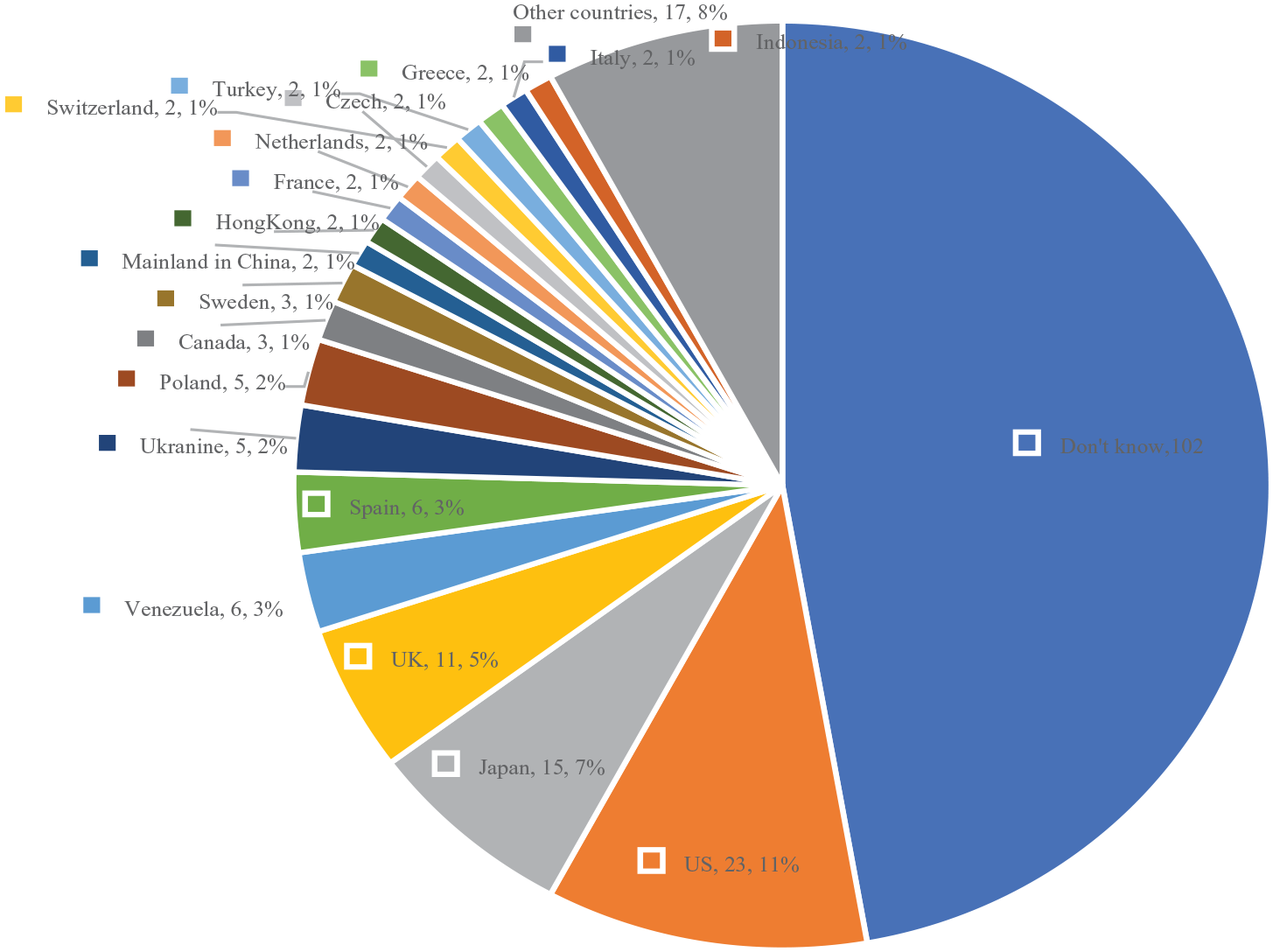

The API calling method provided by Botometer was used to detect social bots among the 32,145 retweeting accounts, and 218 key bots were identified. Except for two suspended bot accounts, the remaining 216 bot accounts were created between December 2007 and March 2022, with 17 accounts created in February 2022, the highest number for any single month. Notably, none of the 216 bot accounts were authenticated by the platform, meaning that they may not be authentic, notable, or active (Twitter, 2022). Figure 10 depicts the registration countries/regions of the 216 active bots. The registration location of 47% of the bot accounts (102) was unspecified. As for the rest of the bot accounts, the largest group was from the United States (23, 11%), followed by Japan (15, 7%) and the United Kingdom (11, 5%), while only 2% (5) came from Ukraine.

Figure 10. Registration countries/regions of the 216 active bots.

As of 22 May, the 216 active bot accounts followed 487,000 accounts and had 423,400 followers. Among them, @TrumpluvsObama followed the most accounts with 44,300 and had 44,300 followers, while @mog7546 had the most followers with 54,100 and followed 43,100 accounts. Both accounts had roughly the same number of followings and followers, which was consistent with the characteristics of bot accounts.

Retweeting frequently

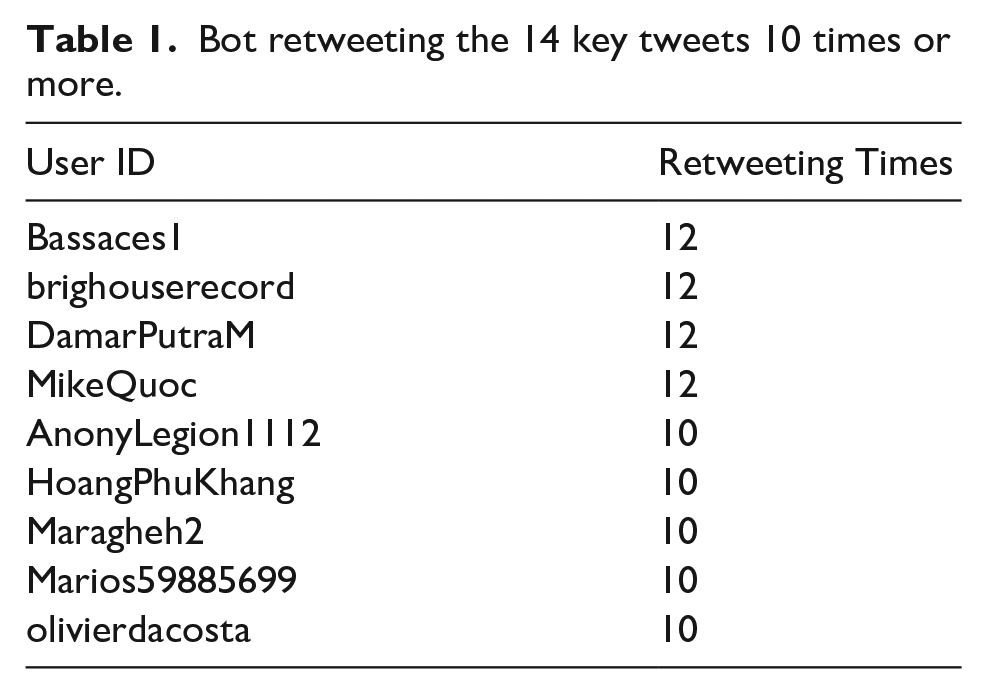

The 218 key bots, which frequently retweeted the 14 key and 12 random tweets posted by @UAWeapons, contributed 1,218 retweets in total. Among them, 9 bots retweeted the 14 key tweets 10 or more times (as shown in Table 1), and 44 bots retweeted them 7 or more times.

Table 1. Bots retweeting the 14 key tweets 10 times or more.

Engaging valid followers

It was found that the 218 key bots not only retweeted the tweets of the account @UAWeapons themselves, but also drove their followers to do the same. By 5 April, the 14 key tweets posted by the account @UAWeapons were retweeted a total of 40,680 times, with the 218 key bots and their followers contributing 12,398 times, accounting for 30.48%; and the 12 random tweets were retweeted a total of 3,250 times, with the 218 key bots and their followers contributing 663 times, accounting for 20.4%.

Upon filtering the followers of the 218 key bots, it was discovered that the bot accounts co-occurred with 2,610 followers in the @UAWeapons retweet list, indicating that both the bots and their followers retweeted the same tweets. These were considered “valid followers,” who were truly influenced by the key bots they followed.
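
The co-occurrence filter described above can be sketched with set operations. This assumes that a follower counts as valid when it retweeted at least one tweet that a key bot it follows also retweeted; the function name, the data layout, and the account names are hypothetical.

```python
def valid_followers(retweeters_by_tweet, followers_by_bot):
    """Return followers who retweeted a tweet that a key bot they follow also retweeted.

    retweeters_by_tweet: tweet number -> set of accounts that retweeted it
    followers_by_bot:    key bot      -> set of accounts following that bot
    """
    valid = set()
    for retweeters in retweeters_by_tweet.values():
        for bot, followers in followers_by_bot.items():
            if bot in retweeters:
                # Followers of this bot that appear in the same retweet list.
                valid |= followers & retweeters
    return valid


# Invented accounts and retweet lists, for illustration only.
retweeters_by_tweet = {
    576: {"bot_1", "user_a", "user_b"},
    868: {"bot_2", "user_c"},
}
followers_by_bot = {
    "bot_1": {"user_a", "user_c"},
    "bot_2": {"user_b"},
}
result = valid_followers(retweeters_by_tweet, followers_by_bot)
print(result)
```

In this toy data only `user_a` qualifies: `user_b` and `user_c` each retweeted something, but never the same tweet as a bot they follow.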

The bot scores of the “valid followers” were calculated via Botometer. A total of 1,202 accounts with scores of less than 3 were judged to be real accounts, while 1,408 accounts with scores of 3 and above were judged to be bot accounts, of which 441 accounts had scores of 3–4 and the remaining 967 accounts had scores of 4 or higher.

Social network analysis: core social bots within swarms

Three communities around some core social bots

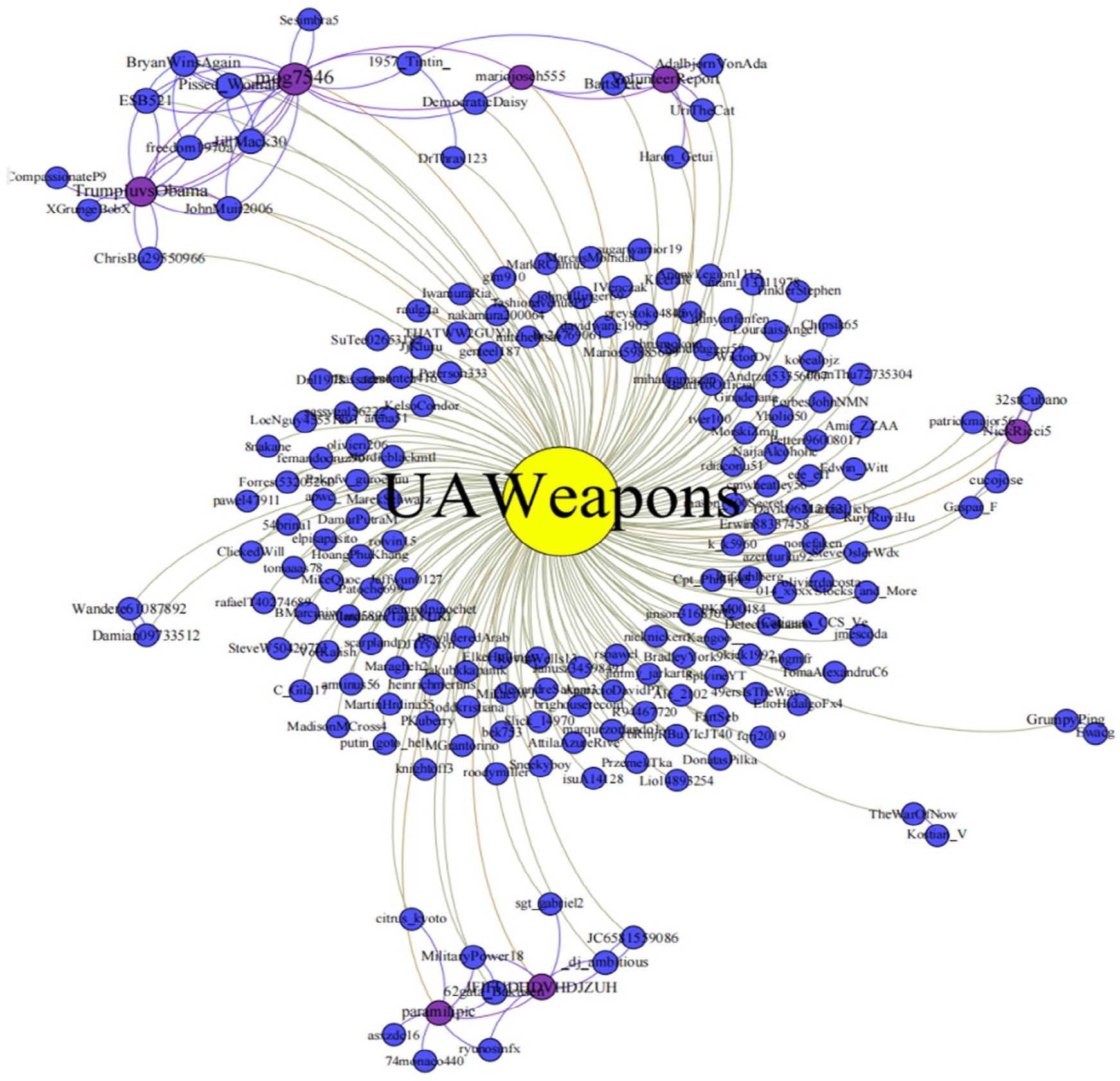

There are 218 key bots that follow the account @UAWeapons, and they frequently retweet the account. Figure 11 illustrates the relationship between the 218 key bots and @UAWeapons. A total of 182 accounts followed @UAWeapons, but @UAWeapons did not follow any of the 216 active accounts (two bots had been suspended). The relationships among the 216 key bots were explored via SNA using three metrics (degree centrality, betweenness centrality, and closeness centrality) to comprehensively examine the core nodes.

Figure 11. Followings between key bots and @UAWeapons.

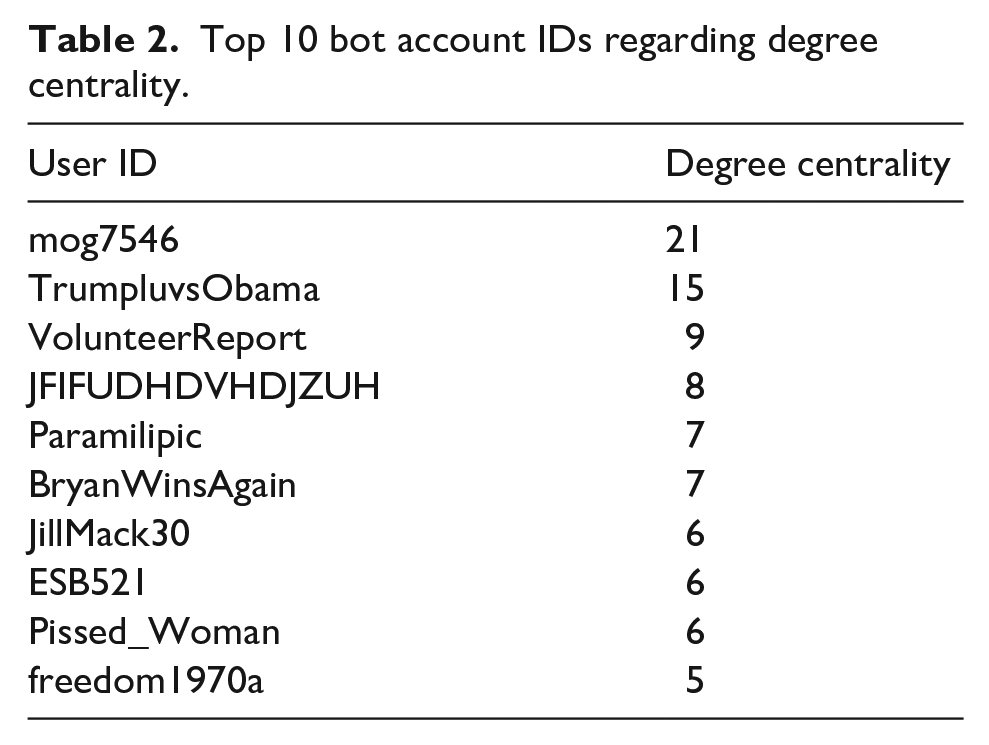

Degree centrality implies that the node with the greatest number of connections is the most central one (Pudjajana et al., 2018). Table 2 shows that several nodes in the network have many connections, of which the top five bot account IDs are @mog7546, @TrumpluvsObama, @VolunteerReport, @JFIFUDHDVHDJZUH, and @paramilipic. These five bot accounts have very strong connections with other accounts.

Table 2. Top 10 bot account IDs regarding degree centrality.
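
As a minimal illustration of the metric, the sketch below counts in-degree, out-degree, and total degree from a list of directed follow edges. The edge direction (follower to followed account), the function name, and the toy accounts are assumptions; the values in Table 2 were produced with Gephi, not with this code.

```python
from collections import Counter


def degree_table(edges):
    """Map each node to (in-degree, out-degree, total degree) for directed edges."""
    indegree, outdegree = Counter(), Counter()
    for source, target in edges:
        outdegree[source] += 1
        indegree[target] += 1
    nodes = set(indegree) | set(outdegree)
    return {n: (indegree[n], outdegree[n], indegree[n] + outdegree[n]) for n in nodes}


# Invented follow edges (follower, followed), for illustration only.
follow_edges = [("a", "hub"), ("b", "hub"), ("hub", "a"), ("c", "b")]
table = degree_table(follow_edges)
ranking = sorted(table, key=lambda node: table[node][2], reverse=True)
print(ranking[0], table[ranking[0]])
```

Sorting by total degree puts the most connected account first, which is the reading applied to the top five accounts above.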

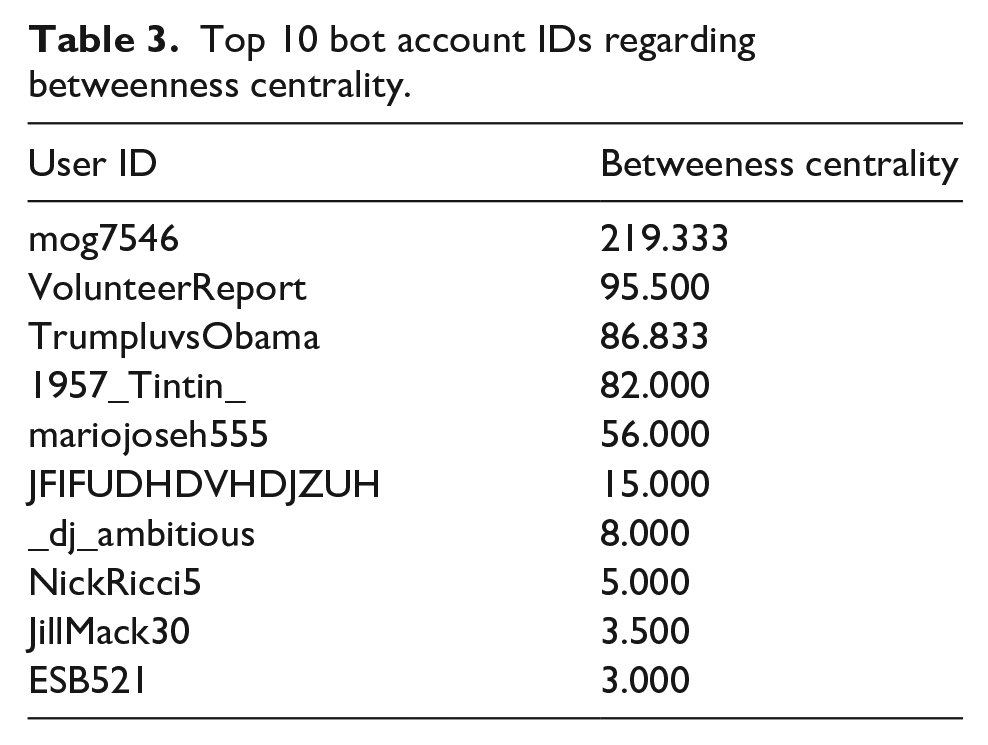

Betweenness centrality refers to how frequently a node appears on the many shortest paths in the network (Grandjean, 2015), indicating how much it contributes to crosstalk in the network. Table 3 reveals that the five bots with the highest betweenness centrality are @mog7546, @VolunteerReport, @TrumpluvsObama, @1957_Tintin_, and @mariojoseh555. A strong bridging role is played by these accounts in the network, with other accounts generating indirect connections by following and being followed by these core nodes.

Table 3. Top 10 bot account IDs regarding betweenness centrality.
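
Betweenness centrality can be computed with Brandes' algorithm. The following is a minimal, unnormalized sketch for a directed, unweighted graph; the adjacency-dictionary layout and the toy graphs are assumptions, and the values in Table 3 come from Gephi rather than from this code.

```python
from collections import deque


def betweenness(adj):
    """Unnormalized betweenness for a directed, unweighted graph.
    adj maps each node to an iterable of the nodes it links to."""
    nodes = set(adj) | {w for targets in adj.values() for w in targets}
    score = dict.fromkeys(nodes, 0.0)
    for s in nodes:
        # Breadth-first search from s, counting shortest paths (sigma)
        # and recording each node's predecessors on those paths.
        sigma = dict.fromkeys(nodes, 0)
        sigma[s] = 1
        dist = {s: 0}
        preds = {v: [] for v in nodes}
        order = []
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj.get(v, ()):
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate dependencies, starting from the farthest nodes.
        delta = dict.fromkeys(nodes, 0.0)
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                score[w] += delta[w]
    return score


# Toy graphs, for illustration only.
bridge = betweenness({"a": ["b"], "b": ["c", "d"]})
diamond = betweenness({"a": ["b", "c"], "b": ["d"], "c": ["d"]})
print(bridge["b"], diamond["b"], diamond["c"])
```

In the first toy graph, `b` lies on both shortest paths leaving `a`, so it acts as the bridge; in the second, `b` and `c` share that role equally.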

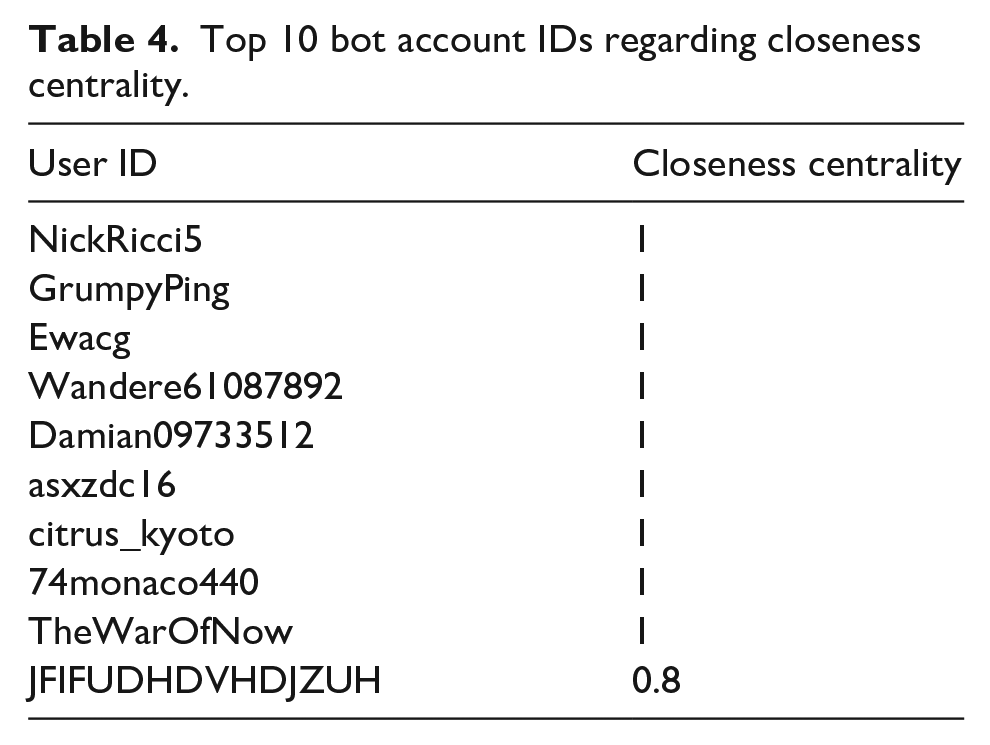

Closeness centrality measures the closeness of a node to others in the network and is obtained by calculating the reciprocal of the average length of the shortest paths between nodes (Oroh et al., 2021). According to Table 4, the bot account IDs with the highest closeness centrality are @NickRicci5, @GrumpyPing, @Ewacg, @Wandere61087892, and @Damian09733512. These accounts are, on average, the fewest steps away from the other accounts they can reach in the network.

Table 4. Top 10 bot account IDs regarding closeness centrality.

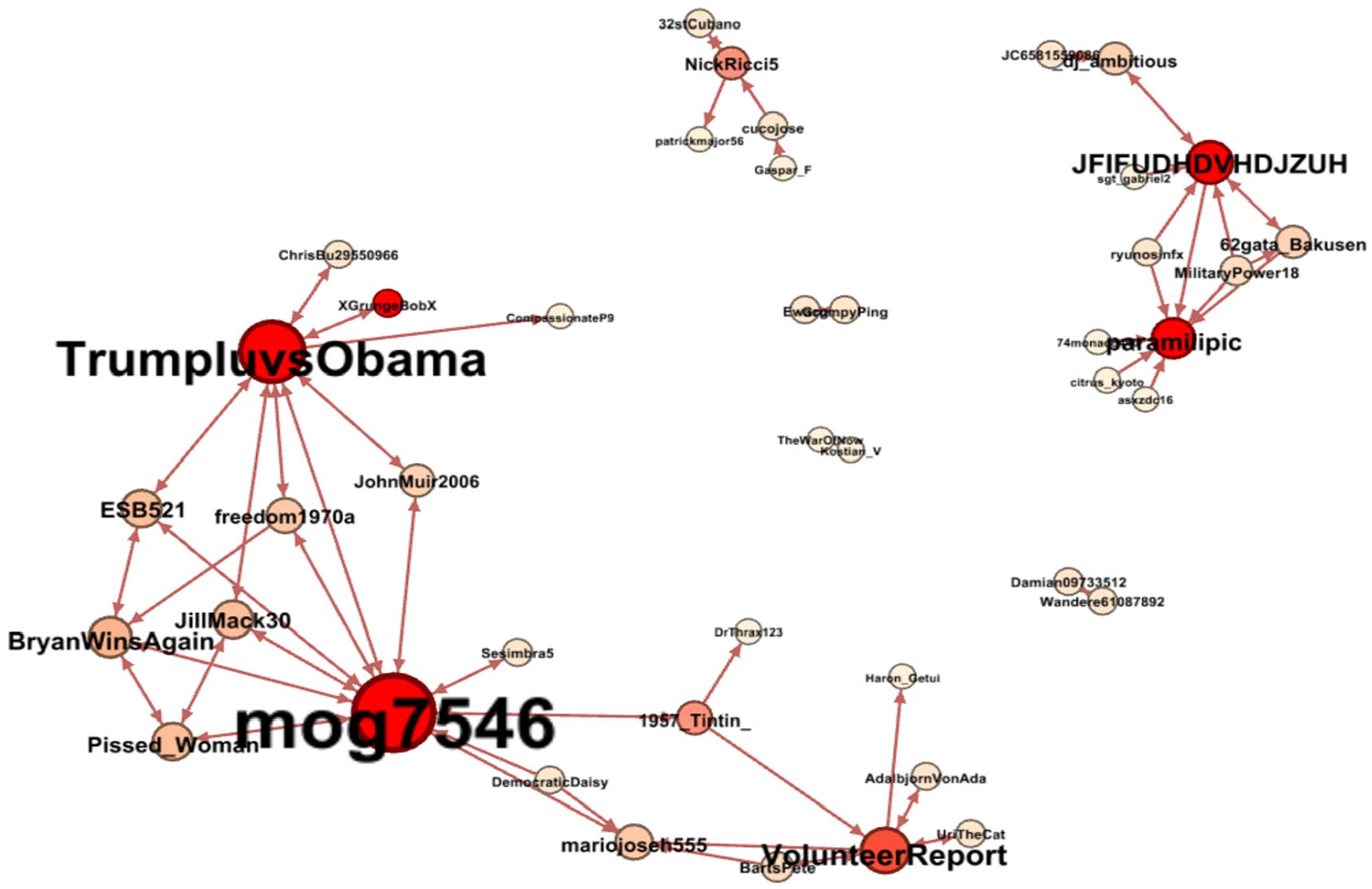

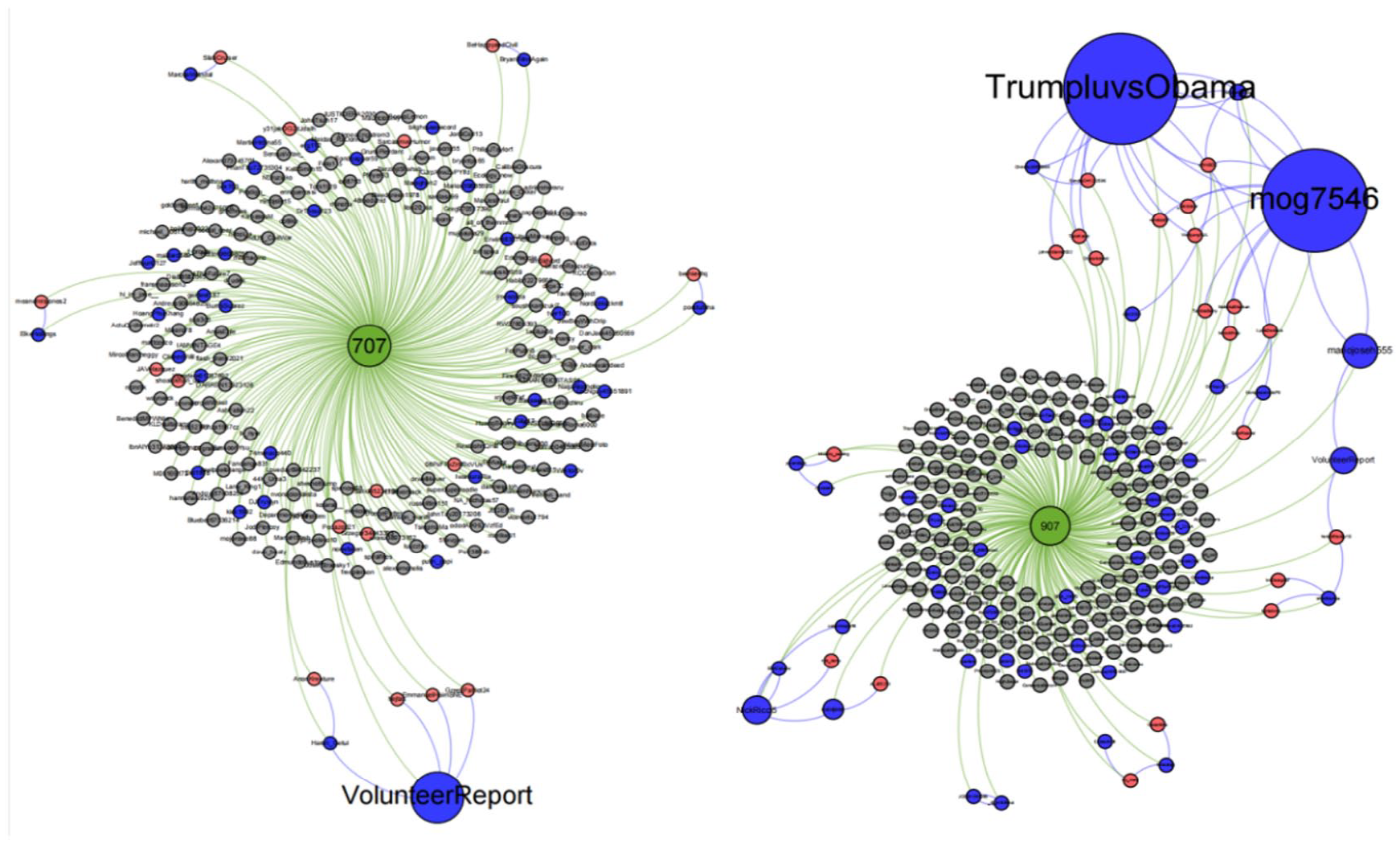
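
For the closeness centrality measure described above, a minimal sketch is given below. It takes the reciprocal of the mean shortest-path length from a node to the nodes it can reach, which is one possible convention for handling unreachable nodes and is assumed here rather than taken from the study. The four-account path network is hypothetical.

```python
from collections import deque

def closeness(adj, source):
    """Reciprocal of the mean shortest-path length from source to reachable nodes."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        v = queue.popleft()
        for w in adj[v]:
            if w not in dist:
                dist[w] = dist[v] + 1
                queue.append(w)
    reached = len(dist) - 1      # nodes other than the source itself
    total = sum(dist.values())   # summed shortest-path lengths
    return reached / total if total else 0.0

# Hypothetical undirected path a - b - c - d, stored as adjacency lists.
path = {
    "bot_a": ["bot_b"],
    "bot_b": ["bot_a", "bot_c"],
    "bot_c": ["bot_b", "bot_d"],
    "bot_d": ["bot_c"],
}
scores = {v: closeness(path, v) for v in path}
```

The two middle accounts score 0.75 and the two end accounts 0.5, reflecting that nodes in the middle of the path are fewer steps from everyone else.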

In summary, some of the 216 key bots were strongly interconnected and formed multiple communities throughout the activity. Specifically, 43 bots formed a following network with 80 edges. As shown in Figure 12, the core bots are organized into at least three communities: the first is centered on @mog7546, @VolunteerReport, and @TrumpluvsObama; the second is centered on @JFIFUDHDVHDJZUH and @paramilipic; and the third is centered on @NickRicci5.

Network of key bots.

Bot swarms promoting tweets

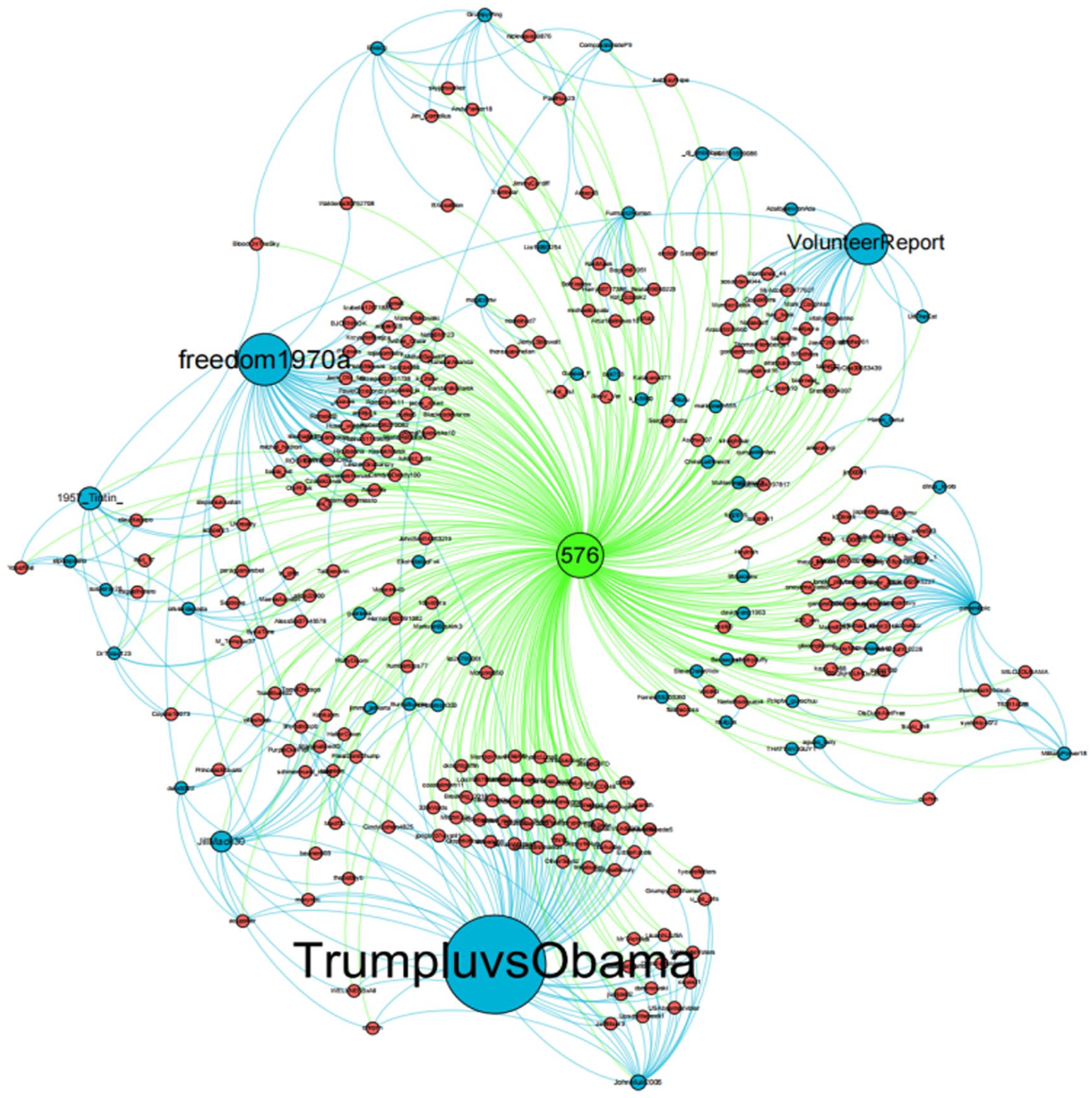

Gephi 0.9.5 was used to visualize the network of accounts and retweets. To this end, the data for key tweets No. 576, No. 868, and No. 1629, as well as random tweets No. 707 and No. 907, were transformed into CSV files and imported into Gephi. A Gephi network consists of nodes and edges. Nodes stand for the disseminators, that is, the Twitter accounts that post information: blue nodes represent bots, red nodes represent followers, and gray nodes represent other retweeting accounts. Edges indicate relationships between the disseminators, such as retweets (green edges) and follows (blue edges).
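
The exact layout of the CSV files is not reported here. As a hedged sketch, the conversion could look like the following, which writes an edge table with the `Source`, `Target`, and `Type` columns and a node table with the `Id` and `Label` columns that Gephi's spreadsheet importer recognizes. The `Relation` and `Role` attribute columns, the function names, and all account handles are hypothetical and chosen only for illustration.

```python
import csv
import io

# Hypothetical records: (actor, target, relation). Placeholder handles only.
relations = [
    ("bot_a", "source_account", "retweet"),
    ("follower_1", "bot_a", "follow"),
    ("follower_1", "source_account", "retweet"),
]
# Hypothetical node roles, mirroring the bot / follower / other distinction.
roles = {"bot_a": "bot", "follower_1": "follower", "source_account": "other"}

def edges_csv(rows):
    """Serialize directed edges as a Gephi-style edge table."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["Source", "Target", "Type", "Relation"])
    for src, dst, rel in rows:
        writer.writerow([src, dst, "Directed", rel])
    return buf.getvalue()

def nodes_csv(role_by_id):
    """Serialize nodes as a Gephi-style node table with a role attribute."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["Id", "Label", "Role"])
    for node_id, role in sorted(role_by_id.items()):
        writer.writerow([node_id, node_id, role])
    return buf.getvalue()

edge_text = edges_csv(relations)
node_text = nodes_csv(roles)
```

Once imported, attribute columns such as `Role` and `Relation` can be used in Gephi to color nodes and edges by category.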

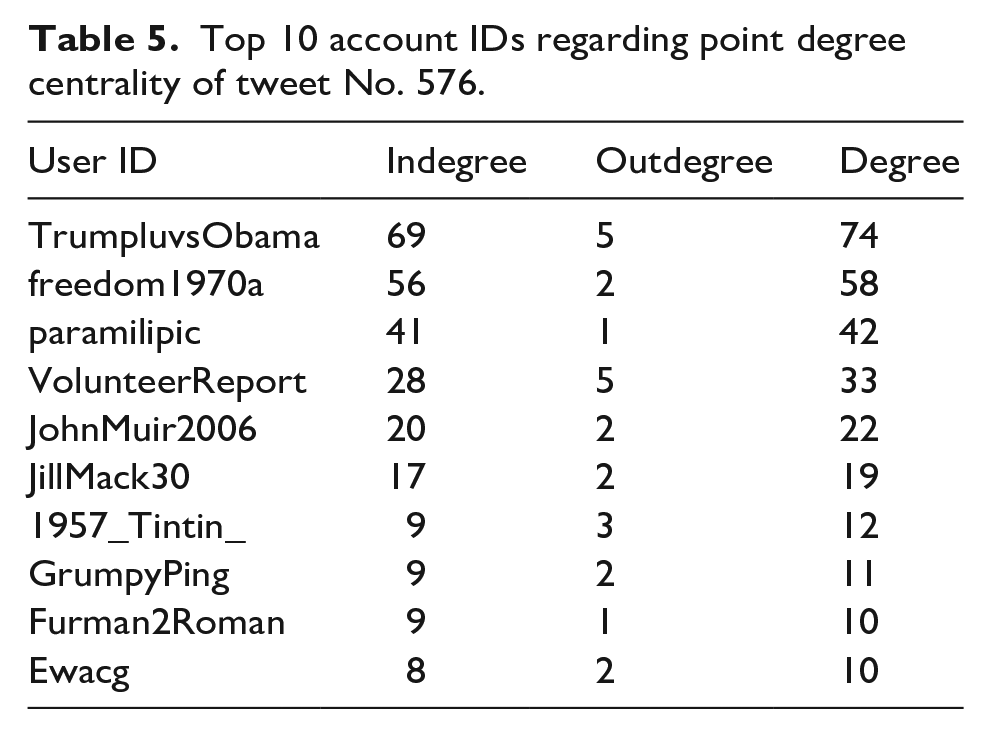

The network of tweet No. 576 contains 309 nodes and 647 directed edges, with an average degree (number of edges) of 1.066 and an average path length (a network’s average shortest distance between any two nodes) of 1.147. As shown in Table 5, the nodes @TrumpluvsObama, @freedom1970a, @paramilipic, @VolunteerReport, and @JohnMuir2006 have the highest point degree centrality and form the core of retweets in the spread of this tweet, which implies that they play an important disseminating role. Indegree and outdegree values indicate the interaction between two points. Accordingly, the nodes where these social bots are located are highly influential and interactive, suggesting that these nodes serve as “opinion leaders” in the dissemination of tweets.

Top 10 account IDs regarding point degree centrality of tweet No. 576.

Figure 13 depicts the topological structure of the retweets of key tweet No. 576. Modularity analysis of the network topology identified multiple communities, such as those around @freedom1970a and @paramilipic. The cluster around @TrumpluvsObama is the largest and has the densest network, which makes this account the most influential opinion leader retweeting the tweet.

Topological structure of retweeting key tweet No. 576.

In sum, several communities were evident in the 14 key tweets, with the accounts @VolunteerReport, @mog7546, @freedom1970a, @paramilipic, @JohnMuir2006, and @1957_Tintin_ appearing repeatedly, suggesting that substantial involvement by bot clusters was an influential factor in the popularity of these tweets. By contrast, in the topology of the 12 random tweets (as shown in Figure 14), the nodes in the retweeting network were not closely connected, some core bots were effective only to a limited extent, and the larger bot swarm strategy was not in evidence.

Topological structure of retweeting random tweets No. 707 and No. 907.

Discussion

In this study, we examined a Twitter account dedicated to disseminating weapon-related information about the Russia–Ukraine conflict to uncover how social bots could be used to boost the account’s popularity.

To answer RQ1, account and communication strategies were analyzed, and most findings are consistent with previous studies. For example, Twitter accounts have been shown to use hashtags, mentions, URLs, pictures, or videos in their tweets to attract other users (McDonnell & Wheeler, 2019); likewise, the account @UAWeapons utilized hashtags and videos extensively to increase its popularity. Furthermore, it has been argued that tweets with a clear stance that is congruent with the retweeter’s perspective are more likely to be disseminated (Wischnewski et al., 2022), which was also confirmed in this study. Despite posting clearly pro-Ukrainian and anti-Russian content, this account tried to portray itself as a neutral information provider via its profile and hashtags (e.g., the most commonly used hashtag, #Ukraine). Another study found that users with the same political stances tend to share common bot followers, and the automatic retweet behavior of these bots amplifies the effect of information posted by these users (Caldarelli et al., 2020). Given the specificity of the Russia–Ukraine conflict context, a neutral persona may be more likely to gain the trust of social media users and drive retweets and comments from real users. Our study further found that bot accounts amplified not only the political voice of real users but also the politically inclined information of @UAWeapons, itself a bot account. These key bot accounts followed each other and boosted one another. Among them, 86.11% also showed a certain political inclination: in the context of the Russia–Ukraine conflict, they all supported Ukraine and opposed Russia. Similar to previous studies, bot accounts were “hyper-social” when retweeting tweets with the same opinion tendency; that is, they frequently retweeted other users’ tweets (Yuan et al., 2019).
In this study, a total of 31,178 accounts retweeted the 14 key tweets a total of 40,680 times; the 218 key bot accounts and the 2,160 fan accounts they influenced accounted for 12,398 of these retweets. In other words, a very small proportion of accounts (the key bots, about 0.7%) drove their fans to contribute 30.48% of these retweets, and more than half (54%) of the fans that were driven were also bot accounts.
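
The two proportions above follow directly from the reported counts, as the short check below shows. The variable names are ours; the figures are those given in the text.

```python
# Counts as reported in the text.
retweeting_accounts = 31_178   # accounts that retweeted the 14 key tweets
total_retweets = 40_680        # retweets of the 14 key tweets
key_bots = 218                 # key bot accounts
cluster_retweets = 12_398      # retweets by key bots and the fans they influenced

# Key bots as a share of all retweeting accounts.
bot_share = key_bots / retweeting_accounts
# Retweets attributable to key bots and their fans as a share of all retweets.
retweet_share = cluster_retweets / total_retweets

print(f"Key bots among retweeting accounts: {bot_share:.2%}")
print(f"Share of retweets from key bots and their fans: {retweet_share:.2%}")
```

The first ratio rounds to about 0.7% and the second to 30.48%, matching the values stated above.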

SNA was conducted to answer RQ2. We found that some network clusters with key bot accounts at their core had been automatically, at scale, and continuously disseminating specific information and increasing the exposure of @UAWeapons. These core key bot accounts included @mog7546, @VolunteerReport, @TrumpluvsObama, @JFIFUDHDVHDJZUH, @paramilipic, and @mariojoseh555. These bot accounts and their followers formed multiple network clusters (group matrices). This batch of key bot accounts had intelligent features, and automated algorithms enabled them to track the behavior of the original account in real time. When @UAWeapons posted new tweets, the algorithm automatically triggered their retweet behavior. For every tweet posted by @UAWeapons, a key bot account would repost it promptly, which subsequently prompted its followers to do the same. Nearly 54% of these affected fans were also automated bot accounts. The key bot accounts and their followers thus formed an automated, clustered mode of communication. We also found that the retweets of @UAWeapons’ tweets by these key bots and their network clusters of followers were not accidental but continuous. For example, among the 26 tweets examined in this article, the key bot account @mog7546 retweeted @UAWeapons consistently from February 28 (the time of tweet No. 282) to April 5 (the time of tweet No. 1684) and showed no sign of stopping.

The rapid growth of the Twitter account in a short period of time was inseparable from the promotion provided by clusters, and social bots played a role in these network clusters. Acting as influencers, they increased the exposure of the original account through frequent retweets. Moreover, the participation of followers of the main bot accounts in the network cluster made some tweets more likely to become “popular.” During this process, real users may also unintentionally become “collaborators,” following and forwarding specific tweets. The strategy of social media “astroturfing” (Ratkiewicz et al., 2011) thus came into play through social networks. In this study, a large number of real human users in the retweet network of those “popular” tweets inadvertently became “collaborators” in promoting the proliferation of tweets, following key bots and their followers in retweeting @UAWeapons’ tweets. Therefore, the rapid growth of the Twitter account @UAWeapons benefited from the promotion of social bot network clusters, which further confirms previous research showing that social media “astroturfing” strategies help expand the influence of specific actors (Cresci et al., 2015). Regarding the behavioral representation of influence, Cha et al. (2010) argued that forwarding might be more useful than following in social network relationships because some users may follow other users only out of etiquette, and following does not mean that tweets of the followed account are seen or retweeted. Our results also confirmed that some followers of key social bots, although they did not follow the account @UAWeapons, might retweet related tweets under the influence of the accounts they followed. When these tweets were retweeted by a certain number of followers, their spread was more likely to increase sharply.

Conclusion

The internet has morphed social media into a battlefield of public opinion in international political games, and the hot war and the information war in the Russia–Ukraine conflict are raging simultaneously. Social bots are believed to have a negative influence on the online opinion field, as they provide a large amount of mixed information containing both truths and falsehoods. In this regard, the sudden appearance of the @UAWeapons account and the surge in attention it received are of interest to us.

Most of the existing studies have examined how online social networks were formed and spread under a specific issue, such as who dominated the issue of climate change (Vu et al., 2020) and how disinformation spread in the 2016 US presidential election (Grinberg et al., 2019). Unlike these studies, this one focuses more on how a single account in a vertical field is boosted by online social networks to grow rapidly.

There are three new findings in our study compared with previous studies. The first is the pulsed change in communication volume. Although existing empirical studies indicate that social bots often adopt certain strategies, such as hashtag pushing, to increase communication on an issue (Graham et al., 2021), our study indicates that such strategies may not be effective at all times. As determined by the time-series analysis, some bot accounts were always present in the retweets, which sometimes grew explosively and sometimes did not. The primary reason is that when retweets did not increase rapidly, there tended to be fewer bots and no clustering was generated. In turn, this resulted in a “pulsed” variation in the volume of tweets from the same account about the same topic over time. In our opinion, this is because bots are constantly adapting their models or algorithms to best mimic human behavior, including human work and rest patterns. If the platform detects overly frequent behavior, the bots will be banned, so this pattern is also a self-protection mechanism implemented by the manipulator. Note that this is only preliminary speculation that needs further investigation. If it is the case, and highly human-like bots engage in social platform discussions, platforms may gain little from detecting whether participants are bots and might do better to focus on the content of their posts.

Second, in the case of the information warfare between Russia and Ukraine, it seems doubtful that tweets that take a clear stance (Luceri et al., 2019) and are congruent with the retweeter’s perspective are more likely to be disseminated (Duan et al., 2022; Wischnewski et al., 2022). Politically inclined individuals may be skeptical of neutral news messages that disagree with their views (Hameleers, 2022). Nevertheless, the account @UAWeapons presented itself as an objective and neutral source of information through the way it packaged its basic information and hashtags. Moreover, a recent study indicates that the majority of tweets posted by social bots regarding the Russia–Ukraine conflict were neutral in sentiment (Shen et al., 2023). We speculate that, given the specificity of the Russia–Ukraine conflict context, posting information with a neutral persona as a “sharer” of information about weapons and the war front may lead to greater trust by social media users, resulting in more retweets and comments. At the same time, the content analysis showed that the account had a strong stance and supported Ukraine. This study therefore cannot determine whether the disguised neutral persona or the biased message is playing the decisive role. It is possible that false balance is not necessary for the successful amplification of an argument, and that content quality or backing strength moderates relationships with social bots and reception. To identify which variable has an influence, further research can be conducted with the two variables separated or combined.

Third, the rapid growth of @UAWeapons is largely attributed to three clusters of network accounts formed around the core bot accounts. It has been well established that bot network clusters are responsible for the proliferation of relevant issues, but the existing literature does not identify exactly which bot accounts are included in a particular bot network. As an example, Bastos and Mercea (2019) determined that the activity of bot networks on the topic of Brexit was greater than that of real active users, and that the retweet networks of Twitter bot networks displayed greater integration and modularity than those of real users. Our study goes one step further by identifying and pinpointing the specific accounts that form bot clusters.

Alongside these new findings, we also found some similarities with previous research. The social bot uses strategies similar to those documented in previous studies to extend its reach, including hashtags, mentions, pictures, and videos in tweets (McDonnell & Wheeler, 2019). Moreover, retweets are more likely than follows to extend reach in terms of communication outcomes, demonstrating the existence of repost storms (Samanci & Thulin, 2022). Finally, even though the focus of this study differs, one consistent conclusion is that online social networks are crucial in detecting communities and disseminating information (Camacho et al., 2020).

Limitations

As a case study, our research has an obvious limitation. We focused on a single Twitter account, and the research results may be influenced by other factors, such as Twitter’s black box algorithm, in this specific context of the Russia–Ukraine conflict. Follow-up research can consider adopting an experimental method, simulating a batch of bot accounts and putting them on real social media platforms to observe their interaction strategies and growth patterns, as a verification and supplement to this research.

To conclude, this study used time-series analysis, content analysis, and network analysis to investigate how the bot account @UAWeapons grew rapidly by specifically sharing information related to the Russia–Ukraine conflict. Although it is a case study, this research offers insight into the role social bots play in information warfare.

Appendix 1

12 random tweets.

| Items | Random tweets | Created time | Likes | Retweets |
|---|---|---|---|---|
| 7 | The ring is called a “driving band”. When fired, this soft copper band forms a seal, engaging the rifled barrel of . . . https://t.co/pAdKxT0gH1 | 21 February 2022 14:56:33 | 102 | 34 |
| 107 | Here is video [NSFW to a degree] of the incident.https://t.co/iK9MI32t6k | 25 February 2022 14:32:24 | 2526 | 828 |
| 407 | #Ukraine: Today it was widely claimed on pro-UA channels that a Russian jet was shot down over #Irpin, Kyiv. Accord . . . https://t.co/dLNw5gvgv4 | 3 March 2022 11:37:17 | 866 | 193 |
| 507 | #Ukraine: Ukrainian Forces already reusing captured AK-12 rifles. https://t.co/day1WQXCG7 | 5 March 2022 13:29:56 | 7483 | 956 |
| 607 | 7, not 6! | 7 March 2022 16:23:00 | 1113 | 45 |
| 707 | #Ukraine: Recent video showing claimed Ukrainian fire hitting Russian armour in the vicinity of #Borodyanka. We e . . . https://t.co/CYnqWV8Q5c | 10 March 2022 12:30:00 | 1566 | 252 |
| 907 | #Ukraine: A BMP-1 IFV and BM-21 Grad pattern MRL, presumably belonging to the DNR/LNR troops, were captured by the . . . https://t.co/jWJfNy5hYg | 15 March 2022 12:18:29 | 1842 | 245 |
| 1007 | #Ukraine: A claimed Russian “Forpost-R” drone hit an alleged communications center of the Ukrainian Army, using a s . . . https://t.co/ThKNjYviho | 18 March 2022 11:20:45 | 531 | 109 |
| 1070 | #Ukraine: As it turns out, not all Western aid is helpful. Sometimes it even becomes dangerous.According to Ukrai . . . https://t.co/5AyMk6dl3j | 20 March 2022 11:19:23 | 4090 | 788 |
| 1207 | Thanks @oryxspioenkop, this was captured on the 7th of March. | 23 March 2022 19:43:15 | 397 | 20 |
| 1407 | An MT-LBVM was also lost in the same location. As usual, Ukrainian troops stripped it of it’s NSVT heavy machine gu . . . https://t.co/q1VlY8prAr | 30 March 2022 16:25:57 | 1060 | 119 |
| 1607 | #Ukraine: Ukrainian farmers captured themselves another Tiger- a recently abandoned Russian Tigr-M IMV being recove . . . https://t.co/DqSk1g3m10 | 3 April 2022 13:37:44 | 5542 | 583 |

Author contributions

HZ was the principal researcher and supervised the research project. Q Li and Q Liu contributed to the study design and the draft composition. SL, XD, and SC were responsible for data collection and analysis, as well as drafting and revising the manuscript. All authors participated in writing the manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.