Abstract

Although online political incivility has increasingly become an object of scholarly inquiry, there is little agreement on the construct’s precise definition. The goal of this work was therefore to explore the relational dynamics among previously identified dimensions of online political incivility. The results of a regularized partial correlation network indicated that a communicator’s desire to exclude attitude-discrepant others from discussion played an especially influential role in the variable network. The data also suggested that certain facets of incivility tend to be deployed together, forming two identifiable groupings of incivility factors: (1) variables pertaining to the violation of speech-based norms and (2) variables pertaining to the violation of the inclusion-based norms that underlie democratic communication processes. These results are discussed in the context of political discussion and deliberation.

Recent public opinion polling suggests that Americans increasingly see political incivility as a major social issue (e.g. “Nearly 80 Percent of Americans Concerned Lack of Civility in Politics Will Lead to Violence, Poll Says,” 2018; Weber Shandwick, 2018). Reacting to this concern, scholars have increasingly sought to understand the antecedents and consequences of incivility, particularly as it relates to online political communication. However, despite the perception that online spaces are increasingly fraught with incivility, there is little agreement regarding what is and what is not uncivil communication. Herbst (2010), for example, asserted that incivility exists primarily in the “eyes of the beholder” (p. 3), while Muddiman (2017) observed that the incivility literature currently features “multiple and often contradictory conceptualizations across projects” (p. 3183). Similarly, Su et al. (2018) pointedly noted that “defining incivility is a complex problem, insofar as achieving consensus about where to draw the line between civil and uncivil discourse is almost impossible” (p. 3680).

That being said, prior research broadly agrees that political incivility, as a class of discursive behaviors, extends beyond spontaneous negative affect and is instead a potentially insidious social phenomenon that runs counter to the norms governing political interaction generally and political deliberation specifically. Papacharissi (2004), for instance, noted that incivility can be understood as “individual behaviors that threaten a collective founded on democratic norms and mandates” (p. 271). More recently, Muddiman (2017) conceptualized incivility perceptions (i.e. people’s evaluations of communication between others) in terms of private (i.e. the violation of norms governing interpersonal interactions) and public (i.e. the violation of norms governing democratic and deliberative communication processes) incivility. However, despite general agreement that incivility is both anti-normative and multi-dimensional, its operationalization varies widely across empirical studies. For instance, some scholars (e.g. Coe, Kenski, & Rains, 2014; Hopp, Vargo, Dixon, & Thain, 2018; Santana, 2014; Vargo & Hopp, 2017) have focused on the prevalence of factors such as swear words, slurs, and racist/xenophobic commentary. Others (e.g. Stryker, Conway, & Danielson, 2014) have highlighted communicator goals, specifically constructing incivility as a means of silencing or excluding one’s perceived ideological opponents. Taken together, these conflicting approaches raise serious questions about the fundamental nature of online political incivility and the typical ways in which it manifests. Further complicating matters, most prior studies have focused on either the frequency of uncivil language (e.g. Coe et al., 2014) or audience perceptions of uncivil language and behavior (e.g. Muddiman, 2017; Stryker, Conway, & Danielson, 2014). Missing from the literature is a study that focuses on communicators’ self-reported use of incivility. 
Such oversight should be corrected: if incivility is, indeed, a purposeful rejection of social and democratic norms (e.g. Muddiman, 2017; Mutz, 2015; Orwin, 1992; Papacharissi, 2004), it is essential, from the standpoint of theoretical development and refinement, to understand uncivil discursive practices from a communicator’s perspective.

In light of the varied and sometimes conflicting ways in which incivility has been handled in the literature, this study comprehensively explored patterns of association among previously theorized incivility dimensions. Specifically, self-report data were collected from 777 individuals who regularly talk about politics online. These data were analyzed using a regularized partial correlation network (Epskamp & Fried, 2018), an emergent analytic technique that, on an exploratory basis, allows researchers to highlight substantive relationships between variable pairs, to identify especially influential (i.e. central) variables, and to identify variable clusters known in network analysis as “communities.”

A theoretical rendering of online political incivility

Causes and effects of online political incivility

The precise causes of uncivil political discussion have been the subject of considerable debate. Those in the popular media have commonly tied perceived increases in incivility to the emergence of digital platforms, which frequently allow for anonymous participation that is decoupled from interpersonal ramifications (e.g. Konnikova, 2013). Scholars, for their part, have increasingly linked incivility to partisanship-based factors such as negative partisanship and partisan extremity. Negative partisanship refers to behaviors driven not by one’s affinity for one’s own political party, but instead by dislike of the opposing party and its members (Abramowitz & Webster, 2016). Partisan extremity refers to extreme adherence to party doctrine and, with it, a general unwillingness to entertain arguments and ideas emerging from outside of one’s political center. In both cases, there is cause to believe that Americans are becoming increasingly tribal in their political beliefs, resulting in a fractured, polarized citizenry. Scholars have linked polarization to democratically worrisome citizen behaviors in a variety of contexts, including voting behavior, policy support, and communication practices (e.g. Abramowitz & Webster, 2016; Jost, 2017; Muddiman & Stroud, 2017). As it pertains specifically to political discussion in digital spaces, communication scholars have gradually moved toward a perspective that views incivility as the product of complex interdependencies among partisanship strength, social factors, and features of the information environment within which discussion occurs (Gervais, 2016; Rains, Kenski, Coe, & Harwood, 2017; Vargo & Hopp, 2017).

Regarding the effects of discursive incivility, research has shown that uncivil political communication inhibits democratic performance, specifically as it pertains to deliberation. The management of disagreement is a crucial part of democracy (Barabas, 2004; McClurg, 2006; Mutz, 2006). When disagreement is confronted in civil discursive contexts, there is cause to believe that its negative consequences can be mitigated and, in some situations, that disagreement can actually facilitate democratically positive outcomes (Barabas, 2004; Mutz, 2002). However, when disagreement is accompanied by incivility, research has associated it with a host of negative outcomes, including trust degradation, the emergence of negative attitudes toward others, and the development of negative affect and polarization among discussants and observers (e.g. Anderson, Brossard, Scheufele, Xenos, & Ladwig, 2014; Borah, 2014; Brooks & Geer, 2007; Chen & Lu, 2017). As such, incivility can, in some cases, exert a damaging influence on democracy because it degrades discussion quality and hinders deliberation, thereby perpetuating the partisan fragmentation thought to contribute to its emergence.

Networked ingredients and characteristics of political incivility

Despite its potentially deleterious effects on democratic functioning, scholars have yet to settle upon a consistent definition of political incivility (Hopp et al., 2018; Stryker, Conway, & Danielson, 2014; Su et al., 2018). Building upon a synthesis of prior work, however, this study suggests that incivility attributes can be segmented into two categories, both of which involve purposeful normative violations. The first category conceptualizes incivility as a violation of speech-related norms. These violations are characterized by discursive behaviors that reject communication norms pertaining to considerate, courteous, and respectful discussion. The second category understands incivility as a violation of inclusion- and identity-related norms. These violations describe behaviors that seek to exclude attitude-divergent others from deliberative and political processes that necessarily require heterogeneity. Notably, these distinctions are drawn, in part, from Muddiman’s (2017) perception-based model of personal and public incivility. While Muddiman’s model focused primarily on interactions among political elites and included both communicative and non-communicative behaviors, its comprehensive handling of normative violations in political scenarios makes it useful in the current research context, which focuses on non-elite, Internet-based communication (i.e. online political communication by and among members of the public). By extending this framework to self-reported behaviors, this study attempts to work toward a generalized conceptual model of discussion-based online political incivility.

Incivility as a violation of speech-related norms

While some scholars such as Papacharissi (2004) and Rowe (2015) note that incivility is more than just rude, abrasive, and/or impolite talk, there is reason to believe that habitual violation of speech-related norms pertaining to acceptable public communication signals a fundamental disrespect for the collective norms that serve as the foundation of modern democracy. 1 Muddiman’s (2017) conceptualization of personal-level incivility, for instance, suggests that when people observe communicative exchanges involving name-calling, swearing, and other forms of discursive impoliteness, they are increasingly likely to perceive a discussion environment as uncivil in character. Likewise, Anderson et al. (2014) found that exposure to “nasty” language was linked to issue-based polarization. Given the obvious visibility of bad language online, and its presumed negative effects on citizen discussion and deliberation, conceptualizations of incivility on the basis of communicated content are fairly common in the literature. Santana (2014) posited that incivility is reflected in the existence of one or more of the following components: name-calling, threats, vulgarity, abusive/foul language, xenophobia, hateful language, racist or bigoted language, disparaging comments on the basis of ethnicity, and use of stereotypes. Sobieraj and Berry (2011) focused their construction of incivility on outrage speech, including factors such as name-calling, insulting language, purposeful misrepresentation, mockery, emotional language, and ideologically extreme language. Likewise, Coe et al. (2014) conceptualized incivility as existing in five distinct forms: name-calling, aspersion, lying, vulgarity, and pejorative speech. Synthesizing these approaches, Hopp and Vargo (2017) operationalized Twitter-based political incivility as language that was extreme, vulgar, abusive, or otherwise hurtful in nature. 
Gervais (2014, 2015a, 2015b) used the above instances of uncivil speech to create four broad categories of incivility relevant to online communication: invectives and ridicule, hyperbole and distortion, histrionics and obscenity, and the use of conspiracy. From a higher-order perspective, these instances of speech share the notion that uncivil discourse is inherently unnecessary. Coe et al. (2014), for instance, defined incivility as “features of discussion that convey an unnecessarily disrespectful tone toward the discussion forum, its participants, or its topics” (p. 660).

Synthesizing the foregoing, this work suggests that there broadly exist six general categories of textually apparent political incivility that pertain to the violation of speech-related norms: profane language, intentional disrespect, use of threat, persuasive deception, invocation of negative stereotypes, and gratuitousness. The profane language dimension of incivility refers to the untargeted use of vulgar, crude, or profane language and corresponds with the incivility dimensions identified by Coe et al. (2014), Santana (2014), Gervais (2015b), Su et al. (2018), and Hopp et al. (2018). The intentional disrespect dimension corresponds with the operationalization approaches used in Santana (2014; name-calling and abusive language), Coe et al. (2014; name-calling), Gervais (2015a, 2015b; invectives and ridicule), Ng and Detenber (2005; name-calling and personal attacks), and Brooks and Geer (2007; insulting language and name-calling). The use of threat dimension covers direct interpersonal threats made by a communicator, the encouragement of like-minded others to post threatening content, and discussion related to perceived social, economic, or way-of-life threats posed by a communicator’s opponents. In each case, threatening language is affectively negative in nature and employed for the purpose of designating the target as malignant and, thus, worthy of derision. This dimension addresses the interpersonal threat component included in Massaro and Stryker (2012), Santana (2014), and Hopp et al. (2018), and the threat to democracy dimension found in Rowe (2015). Consistent with prior renderings of incivility in Coe et al. (2014), Gervais (2014, 2015b), Sobieraj and Berry (2011), and Stryker, Conway, and Danielson (2014), the persuasive deception dimension refers specifically to purposeful lying or the weaponized use of ambiguity. As illustrated above, a number of prior conceptualizations of incivility (e.g. Coe et al., 2014; Papacharissi, 2004; Rowe, 2015; Santana, 2014) include factors addressing the use of negative/antagonistic stereotypes, disparagement on the basis of race and ethnicity, and racist/bigoted language. As such, the here-described dimension (labeled invocation of negative stereotypes) covers the use of racist, stereotypical, or other forms of language that negatively categorize others. Finally, the gratuitousness dimension directly addresses the idea that incivility is unnecessary (Brooks & Geer, 2007; Coe et al., 2014; Mutz & Reeves, 2005). Specifically, this dimension describes online political communication that either lacks the intent to persuade or occurs after any reasonable ability to persuade has passed.

Incivility as a violation of inclusion-related norms

In addition to normative violations of speech, prior models of incivility also suggest that incivility can be understood as the purposeful violation of norms pertaining to the inclusion of attitude-discrepant others (e.g. Papacharissi, 2004). These approaches are generally linked to deliberative models of democracy, which hold that democratic enactment is contingent upon inclusive, public discussion and a rational, reasoned approach to settling differences (e.g. Gastil, 2008). Muddiman’s (2017) conceptualization of incivility perceptions suggested that these violations could be understood as public-level normative transgressions against democratic and deliberative norms, specifically those pertaining to the inclusion of and good faith interaction with discrepant others. In this study, this approach is conceptualized in terms of a communicator’s desire to close down “spaces of reason” through the use of “speech that intentionally denies the right of political opponents to participate equally in applicable procedural processes or debates” (Massaro & Stryker, 2012, p. 409). A survey of extant research (e.g. Gervais, 2015a, 2015b; Massaro & Stryker, 2012; Papacharissi, 2004; Stryker, Conway, & Danielson, 2014) suggests that violations of inclusion-related norms can be operationalized along four general lines: the desire to exclude oppositional voices, to negatively frame oppositional actors’ motivations, to undermine faith in democratic systems and institutions, and to suppress all discussion on a given topic or issue. The following paragraphs examine each of these categories in greater depth.

Communicator motivations related to the exclusion of others represent a strategic desire to circumvent good faith democratic deliberation by excluding those who espouse attitude-discrepant perspectives (e.g. Massaro & Stryker, 2012; Stryker, Conway, & Danielson, 2014). By excising attitude-discrepant others from the conversation, political communicators are violating the norms of reciprocity, inclusion, and cooperation, effectively narrowing the public sphere. If, as Papacharissi (2004) asserts, civility is a “deference to the social and democratic identity of an individual” (p. 287), the desire for exclusion is inherently uncivil as it seeks to delegitimize discrepant others. Stryker and Danielson (2013) summarized this perspective by noting that “deliberative civility entails questioning and disputing, but in a way that respects and affirms all persons, even while critiquing their arguments” (p. 9; quotation from Stryker, Conway, & Danielson, 2014).

Like the exclusion measure, the measure describing communicator intentions to negatively frame the motivations of oppositional others again refers to a communicator’s purposeful attempt to narrow the conversational sphere. However, instead of silencing/excluding perceived opponents, this measure represents a strategic desire to negatively frame the motivations of oppositional actors as corrupted, hypocritical, and, ultimately, untrustworthy. In the absence of interpersonal trust, communicators may then be increasingly likely to rely on party-based heuristics and, as such, less likely to engage in good faith deliberation. This dimension (referred to hereafter as negative motives frame for brevity) addresses the conspiracy theory component of Gervais’ (2015a, 2015b) model of incivility and Mutz’s (2007) perspective that suggests uncivil communication patterns are frequently centered on implying that attitude-discrepant others have insidious or otherwise impure intentions.

Trust degradation can also exist on the institutional level. Democratic participation presumes that governmental and civic institutions are both fair and exist to serve the interests of the public. The strategic undermining of faith in these institutions has the potentially disastrous result of delegitimizing democracy in its entirety. If, for instance, a system is seen as fundamentally unfair, legitimate democratic victories can be dismissed as fraudulent in nature. This dimension addresses the conspiracy theory component of Gervais’ (2015a, 2015b) rendering of incivility and the aspect of Papacharissi’s (2004) incivility model that speaks to the verbalization of threats to democracy.

Finally, uncivil communicators may strategically attempt to suppress all discussion on a specific topic. If discussion indeed constitutes the soul of democracy, it stands to reason that discussion suppression is inherently uncivil. Several prior works on incivility (Massaro & Stryker, 2012; Papacharissi, 2004) have described a communicator’s goal to suppress, limit, or otherwise chill democratic deliberation. For instance, Massaro and Stryker (2012) argued that uncivil communication is “speech intentionally aimed at closing down spaces of reason and ceasing discourse, rather than maintaining speech zones for future consideration of issues and policies” (p. 409). Likewise, Coe et al. (2014) noted that incivility may effectively debase public discourse, “ultimately weakening the marketplace of ideas” (p. 665).

The current study

This study’s goal was to acquire a more robust understanding of incivility as a theoretical construct. By using the literature to develop a relatively comprehensive pool of uncivil communication dimensions and then assessing the interrelationships among these dimensions, this study aims to help future researchers understand whether certain features share especially strong connections with one another and whether specific incivility dimensions are especially integral to an understanding of the broader construct. These general research goals can be formally expressed as three research questions.

First, are there especially strong associations among the identified incivility dimensions (Research Question 1)? By identifying strong pairwise associations among incivility dimensions, it is possible to highlight variables that are especially likely to co-occur (Dalege, Borsboom, van Harreveld, & van der Maas, 2017).

Second, are there particularly influential dimensions (Research Question 2)? By assessing the “structural importance” (Dalege et al., 2017, p. 531) of the identified incivility dimensions, it is possible to determine the features that lie at the heart of the concept. This question is important given the lack of theoretical clarity pertaining to what incivility is and what it is not (Herbst, 2010; Massaro & Stryker, 2012; Muddiman, 2017; Su et al., 2018).

Third, do the incivility dimensions form identifiable clusters/communities (Research Question 3)? Prior research on people’s perceptions of incivility indicates that incivility may pertain both to the violation of speech-related norms and to the violation of norms pertaining to political and deliberative processes. However, research has not, to date, explored this question in terms of self-reported behaviors. If perceptions of others’ behavior align with perceptions of one’s own behavior, it becomes more plausible that the public/private distinction put forth by Muddiman (2017) is an appropriate theoretical handling of discursive incivility as a social phenomenon. Moreover, although this study offered a general set of expectations regarding variable membership in the proposed speech- and inclusion-based variable communities, inconsistency across studies and operational approaches has left it unclear how, exactly, incivility dimensions relate to one another on a multivariate basis. Through the assembly and use of a comprehensive pool of incivility dimensions, this study sought to understand the degree to which, and the precise ways in which, aspects of incivility may group together on a sub-conceptual basis.

Method

Participants were recruited using the TurkPrime service (Litman, Robinson, & Abberbock, 2017). TurkPrime draws from the Amazon Mechanical Turk (MTurk) population but offers researchers a number of additional controls that help ensure high-quality data. In this study, verification controls were used to ensure that participants were located in the United States, and duplicate IP addresses were blocked from participating in the survey more than once. To qualify for the study, participants were required to be at least 18 years old and to indicate that they discuss political and social issues online at least monthly. Several attention checks were incorporated into the survey to identify and remove careless respondents.

Sample

In all, 777 respondents provided complete responses. The sample was slightly more than half male (53.3%) and predominantly White (76.3%). The sample’s average age was 35.54 years (SD = 10.85). To assess political ideology, participants were asked what type of candidates they tend to support (1 = very liberal, 7 = very conservative). The sample mean for the ideology measure was 3.53 (SD = 1.85). Respondents frequently talked about politics online: on a 7-point discussion frequency measure (1 = never, 7 = frequently), the mean score was 5.00 (SD = 1.37).

Measures

Based upon the literature presented above, a total of 10 measures of online political incivility were generated a priori. All individual questionnaire items were placed on 7-point scales where 1 = rarely and 7 = often. Given this study’s focus on the degree to which a communicator habitually injects incivility into political discussion, all questions used unipolar frequency scales. The items used in this study were conceptually based upon prior research but, given the paucity of studies examining incivility using self-report data, were developed by the researcher specifically for this project. Moreover, in light of a lack of prior guidance regarding the construction of self-reported indicators of online incivility, each dimensional measure comprised a fairly large number of individual indicators (between 6 and 8 items); this allowed for better assessment of unidimensionality and ensured broad conceptual coverage. Each indicator was attached to the following stem: “When discussing political and social issues on the Internet, how often do you . . .” Within each measurement inventory, individual indicators varied in severity. 2

Profane language

Profane language was measured using seven items, examples of which include the following: “use language that is vulgar?” and “use language words that would be ‘off limits’ in polite discussion?”

Intentional disrespect

Intentional disrespect was measured using eight items. Sample items include the following: “make intentionally sharp remarks about opponents’ character, experience, or capabilities?” and “use words or terms to describe your opponents that you would not want to be used to describe you?”

Use of threat

Seven individual indicators comprised the measure describing use of threat. These items covered both the use of interpersonal verbal threat (e.g. “verbally threaten people you perceive to be your opponents?”) and the degree to which a communicator identified others as threats to democracy, society, and/or a way of life (e.g. “point out that your opponents’ beliefs present a grave threat to our society and way of life?”).

Persuasive deception

Eight items were used to measure the use of persuasive deception. Sample items include “make statements that are mostly but not totally true to persuade?” and “slightly exaggerate your claims to create the best possible argument?”

Invocation of negative stereotypes

This measure comprised six items. Sample items include “make negative generalizations on the basis of political affiliation, geographic location, race, or other group-based affiliation?” and “use slurs to describe your opponents?”

Gratuitousness

The gratuitousness measure comprised eight items, including the following: “make statements that, honestly speaking, probably don’t need to be made?” and “continue to argue with others even though you know it won’t change their mind/perspective?”

Exclusion of opponents

This measure comprised six items, including “try to show the world that your opponents have nothing legitimate to add to the conversation?” and “attempt to show other posters/readers that your opponents don’t have viable ideas and, as such, should just stop talking?”

Negative motives frame

This measure contained seven indicators, including “point out the hypocrisy of your opponents?” and “call attention to the disingenuous nature of your opponents?”

Undermine faith in democratic systems

This measure comprised eight items, including “point out that our democracy is a farce?” and “point out that normal people just can’t win in our democracy?”

Discussion suppression

This measure comprised seven items. Example items include “attempt to cut off discussion on a specific topic?” and “attempt to change the topic of discussion to what is really important?”

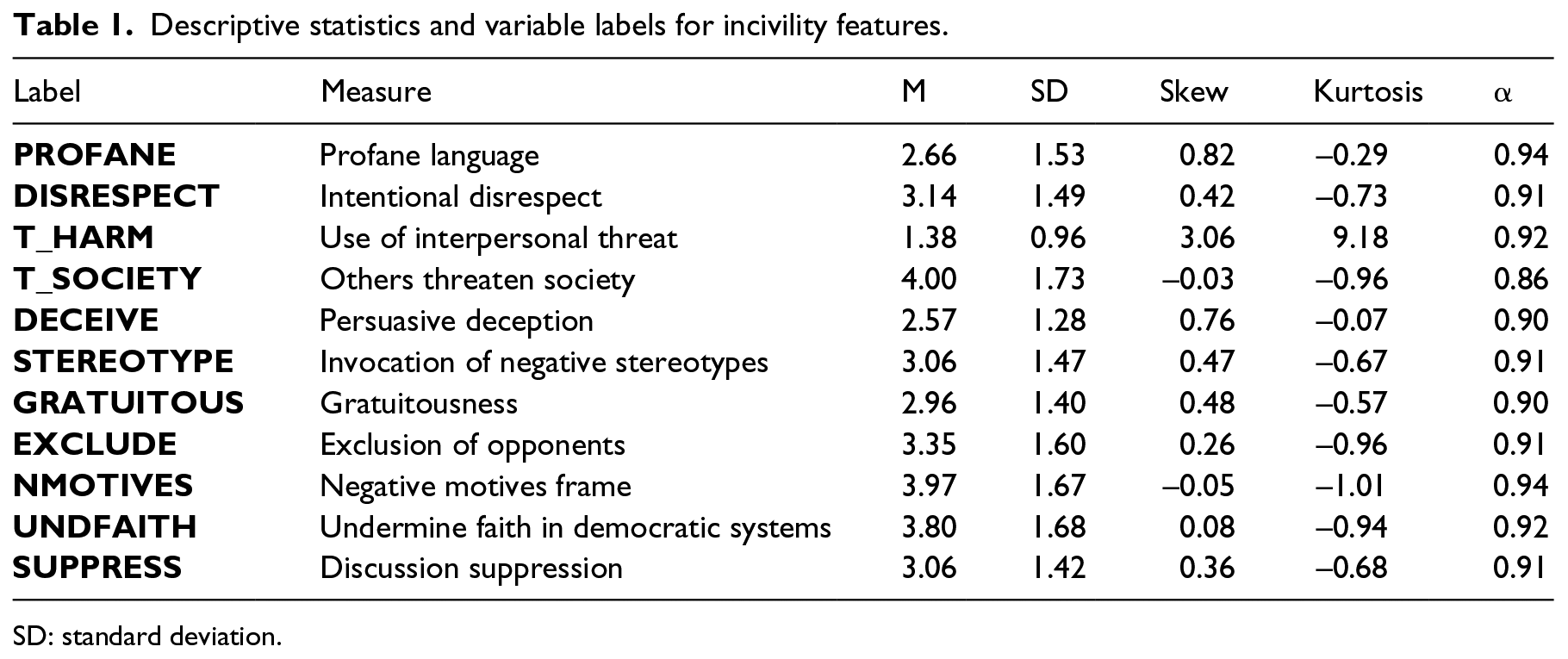

To ensure that the measures were unidimensional, a parallel analysis was conducted on each of the 10 proposed incivility measures. Parallel analysis involves generating a pool of random data sets derived from an original set of data. These data sets, each of which contains the same number of observations as the original data set, are factor analyzed, and the eigenvalues from each trial are recorded. The random-data eigenvalues are then compared to the eigenvalues derived from the original data, where “factors or components are retained as long as the ith eigenvalue from the actual data is greater than the ith eigenvalue from the random data” (O’Connor, 2018, p. 22). Following best practices identified in the literature (e.g. Crawford, Green, Levy, & Thompson, 2010; O’Connor, 2000), the comparison data sets in this study were constructed using 1000 random permutations of the original data, and the eigenvalues at the 95th percentile of the random data were used for comparison. Principal components extraction was used (see Crawford et al., 2010, and O’Connor, 2018, for rationale). For the threat inventory, the parallel analysis procedure indicated the presence of two distinct dimensions. A follow-up exploratory factor analysis (EFA), conducted using principal axis factoring with promax rotation, suggested that items pertaining to the use of interpersonal verbal threat loaded on one factor (four items total; factor loading range = 0.83–0.88), while items pertaining to the identification of others as a threat to democracy and society loaded on another (three items total; factor loading range = 0.70–0.92). The correlation between the two threat dimensions was weak and positive (r = 0.13). For all other measures, the parallel analyses suggested a unidimensional structure. 3 To create the final measure for each incivility dimension, individual item scores were averaged. 
Variable labels, descriptive statistics, and reliability coefficients for the above measures are provided in Table 1.
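The parallel analysis described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the procedure the study actually ran: it assumes a respondents-by-items score matrix, builds the comparison data by shuffling each item column independently, compares principal-component eigenvalues of the item correlation matrix against the 95th percentile of the permuted eigenvalues, and retains components sequentially. The function name `parallel_analysis` and the simulated seven-item inventory are hypothetical.

```python
import numpy as np


def parallel_analysis(X, n_perm=1000, percentile=95, seed=0):
    """Permutation-based parallel analysis on principal-component eigenvalues.

    X is an (n_respondents, n_items) array of item scores. Returns the number
    of components to retain plus the observed and comparison eigenvalues.
    """
    rng = np.random.default_rng(seed)
    n, k = X.shape
    # Eigenvalues of the observed item correlation matrix, largest first
    observed = np.linalg.eigvalsh(np.corrcoef(X, rowvar=False))[::-1]
    perm_eigs = np.empty((n_perm, k))
    for b in range(n_perm):
        # Shuffle each item independently: marginal distributions are kept,
        # inter-item correlations are destroyed
        Xp = np.column_stack([rng.permutation(X[:, j]) for j in range(k)])
        perm_eigs[b] = np.linalg.eigvalsh(np.corrcoef(Xp, rowvar=False))[::-1]
    # Comparison eigenvalues: the chosen percentile of the permuted eigenvalues
    comparison = np.percentile(perm_eigs, percentile, axis=0)
    # Retain components sequentially while the observed eigenvalue is larger
    n_retain = 0
    for obs, ref in zip(observed, comparison):
        if obs <= ref:
            break
        n_retain += 1
    return n_retain, observed, comparison


# Hypothetical simulated inventory (not the study's data): 7 items driven by
# two uncorrelated factors, 4 items on the first and 3 on the second
rng = np.random.default_rng(42)
factors = rng.standard_normal((777, 2))
signal = np.column_stack([factors[:, 0]] * 4 + [factors[:, 1]] * 3)
X = 0.8 * signal + 0.6 * rng.standard_normal((777, 7))
n_retain, observed, comparison = parallel_analysis(X, n_perm=200)
```

With two clearly separated item clusters, as in the threat inventory, the procedure should flag two components; a single-factor inventory should yield one.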

Descriptive statistics and variable labels for incivility features.

SD: standard deviation.
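Each dimension score was formed by averaging its items. A minimal sketch of that step is shown below, together with Cronbach’s alpha as one common reliability coefficient; the text does not state which coefficient Table 1 reports, so treating it as alpha is an assumption. The function name `composite_and_alpha` and the toy responses are hypothetical.

```python
import numpy as np


def composite_and_alpha(items):
    """Average item scores into a composite and compute Cronbach's alpha.

    items: (n_respondents, k_items) array of scores on the 1-7 frequency scale.
    """
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    composite = items.mean(axis=1)                 # dimension score = mean of items
    item_vars = items.var(axis=0, ddof=1)          # sample variance of each item
    total_var = items.sum(axis=1).var(ddof=1)      # sample variance of the summed scale
    alpha = k / (k - 1) * (1.0 - item_vars.sum() / total_var)
    return composite, alpha


# Hypothetical toy responses from three respondents to a two-item inventory
scores, alpha = composite_and_alpha([[1, 2], [2, 3], [3, 5]])
```

For these toy scores the item variances are 1 and 7/3 and the summed-scale variance is 19/3, so alpha works out to 18/19.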

Analytic approach

The analysis used the R package qgraph to estimate a regularized partial correlation network. Regularized partial correlation networks are a type of pairwise Markov random field analysis that allows researchers to generate network-like models describing variable interrelationships (Epskamp & Fried, 2018). The modeling approach used here accomplished regularization (i.e. the use of a penalty term to shrink the parameter estimates and, in so doing, set very weak associations to exactly zero) via the least absolute shrinkage and selection operator (LASSO; Tibshirani, 1996). Regularization is important in the current case because the large number of possible pairwise associations is likely to yield a number of false positives, which can complicate model interpretation and lead to erroneous and/or non-replicable conclusions (Armour et al., 2016). Regularization helps “obtain a network structure in which as few connections as possible are required to parsimoniously explain the covariance among variables in the data” (Epskamp, 2017, p. 13).
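For reference, the penalized problem behind this approach can be written in standard graphical lasso notation. The symbols are introduced here for illustration and are not the author’s: S is the sample correlation matrix, Θ the estimated precision matrix, and λ ≥ 0 the LASSO penalty; leaving the diagonal of Θ unpenalized, as in the sum below, is an assumption about the software defaults. Each edge in the network is the partial correlation ρ_ij implied by the estimated Θ.

```latex
\hat{\Theta}_{\lambda} = \operatorname*{arg\,max}_{\Theta \succ 0}
  \Big[ \log\det\Theta - \operatorname{tr}(S\Theta)
        - \lambda \sum_{i \neq j} \lvert \theta_{ij} \rvert \Big]

\rho_{ij} = -\frac{\hat{\theta}_{ij}}{\sqrt{\hat{\theta}_{ii}\,\hat{\theta}_{jj}}}
```

Larger values of λ drive more off-diagonal elements of Θ, and therefore more partial correlations, to exactly zero.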

Based upon recommendations found in the literature, the tuning hyperparameter (γ) of the extended Bayesian information criterion (EBIC), which is used to select the LASSO penalty, was set to 0.50. γ values are typically set between 0.00 and 0.50. According to Epskamp (2017), setting the γ value to 0 “errs on the side of discovery: more connections are estimated, including possible spurious ones,” while setting the γ value to 0.50 “errs on the side of caution or parsimony: fewer connections are obtained including hardly any spurious connections but also less true connections” (p. 15). Given the exploratory nature of this study, and thus the desire to generate a replicable network, a conservative γ value was selected. To further support network replicability, the generated network was thresholded. So-called thresholded networks remove elements of the precision matrix (i.e. the inverse of the covariance matrix) that are indicative of weak (and therefore potentially spurious) relationships between modeled variables. 4 Removal of these elements occurs both before and after regularization. The goal of this step is simply to remove weak associations from the model in order to obtain a highly specific model (i.e. a final model that features only the most robust between-variable associations). Practically speaking, the combined use of regularization and thresholding is a conservative means of estimating a sparse network, that is, a network that focuses only on the strongest and most important relationships. Because these processes together remove potentially spurious associations, the current approach better facilitates replicability of relationships across data sets and contexts. 
The estimated relationships between variables (nodes), referred to as edge weights, can be interpreted as partial correlation coefficients (ranging from −1 to +1). Each represents the association between two variables after controlling for all other variables in the model and after regularization. Like other network models, the present approach also yields centrality indices that can be used to identify particularly influential variables (Epskamp, Borsboom, & Fried, 2018).

The decision to use a regularized partial correlation network, rather than more commonly employed techniques such as exploratory and confirmatory factor analysis (EFA and CFA), was made for several reasons. First, factor analytic techniques presume that a set of indicators jointly reflects an unobserved social phenomenon. The primary goal of this study, however, was neither to test the degree to which the various incivility dimensions reflect a higher-order concept nor to reduce the overall number of measurement items (a common EFA application). Instead, this study sought to better understand the key associations among a pool of variables and, in turn, to identify especially influential dimensions. Moreover, as described in further depth in the “Results” section, the network-based approach allows researchers to apply so-called “community identification” techniques (e.g. Fried, 2016; Golino & Epskamp, 2017), which yield information analogous to that produced by factor analysis (Golino & Epskamp, 2017). In this sense, the current approach yields information similar to that of a factor analysis while also providing critical information about between-variable associations and variable centrality.

Results

Research Question 1

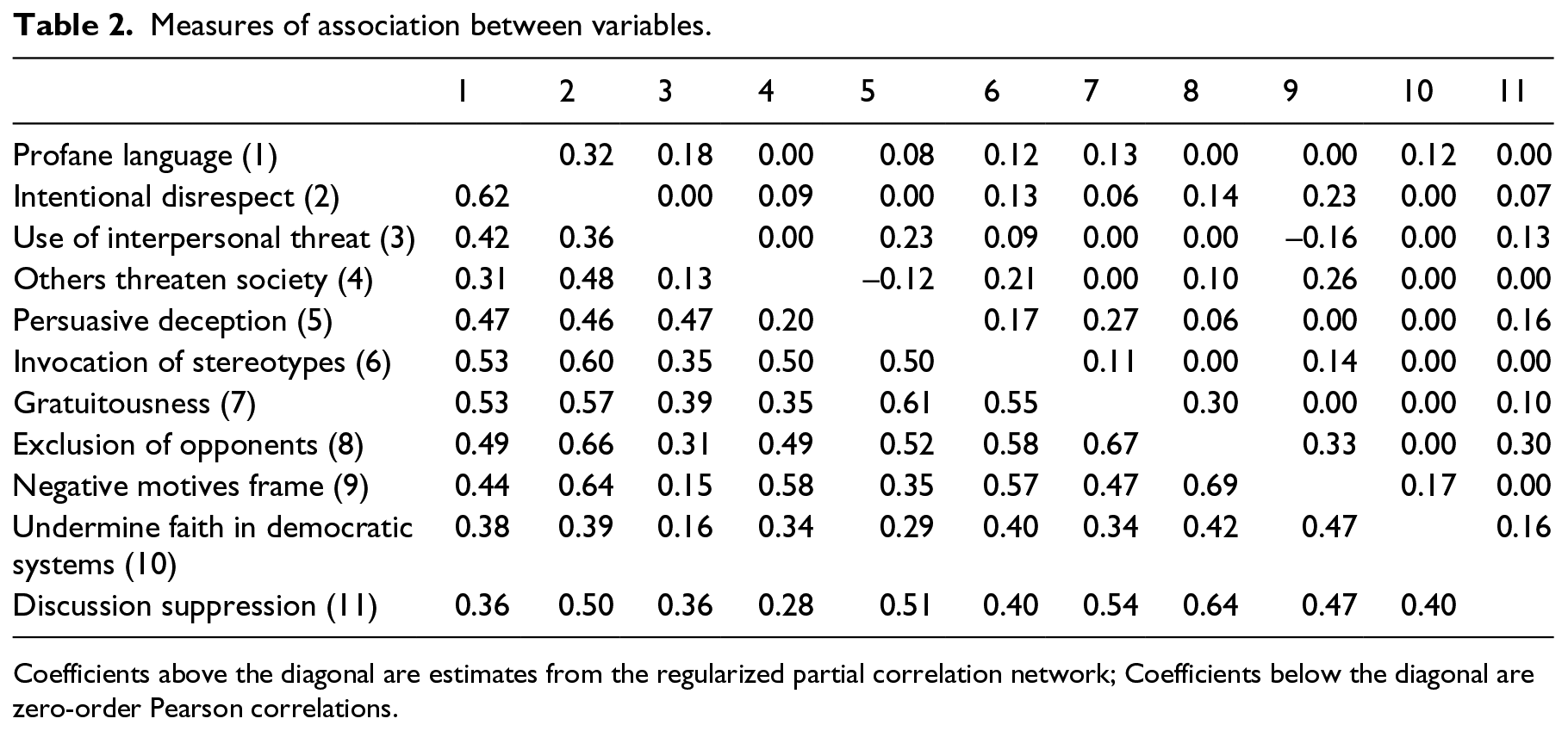

The first research question concerned the identification of especially strong relationships among the various online political incivility dimensions. Table 2 shows the regularized partial correlations for the network, alongside the bivariate Pearson correlations. The regularized partial correlation estimates are much smaller than the Pearson correlations, and a number of associations are fixed at zero, indicating that the corresponding bivariate associations are spurious (i.e. accounted for by other variables in the network). Moreover, after controlling for the influence of the other incivility dimensions, some between-dimension associations are negative. Figure 1, Plot A depicts the regularized partial correlation network. In this plot, thicker lines indicate stronger relationships; solid lines represent positive relationships, while dotted lines represent negative relationships. Variables positioned closer together are more strongly related. For clarity, line thickness has been scaled by fixing the maximum width to the absolute value of the strongest observed relationship (0.33). Figure 1, Plot B depicts the same network with all edge weights below the network’s median absolute value (0.14) removed. Figure 1, Plot C provides a still sparser view by retaining only those edge weights greater than the network’s 75th percentile absolute value (0.22).
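The pruning used for Plots B and C, and the scaling of line widths, can be sketched as follows. This is a hypothetical Python illustration with made-up edge weights, not the study's plotting code; it assumes the percentile is computed over the absolute values of the nonzero edges and that edges at or above the cutoff are retained.

```python
import numpy as np


def prune_edges(edges: dict, percentile: float) -> dict:
    """Keep edges whose |weight| is at or above the given percentile.

    edges maps (node_i, node_j) -> weight. The percentile is computed over
    the absolute values of the nonzero edges only (an assumption here).
    """
    nonzero = np.array([abs(w) for w in edges.values() if w != 0.0])
    cutoff = np.percentile(nonzero, percentile)
    return {e: w for e, w in edges.items() if w != 0.0 and abs(w) >= cutoff}


def line_widths(edges: dict) -> dict:
    """Scale widths so that the strongest |weight| is drawn at width 1.0."""
    max_abs = max(abs(w) for w in edges.values())
    return {e: abs(w) / max_abs for e, w in edges.items()}


# Hypothetical edge weights (not the study's estimates).
edges = {("A", "B"): 0.40, ("A", "C"): 0.30, ("B", "D"): 0.20,
         ("C", "D"): 0.10, ("A", "D"): -0.05, ("B", "C"): 0.0}

plot_b = prune_edges(edges, 50)  # analogous to Plot B (median cutoff)
plot_c = prune_edges(edges, 75)  # analogous to Plot C (75th percentile)
widths = line_widths(edges)
print(sorted(plot_b), sorted(plot_c))
```

Because the cutoffs are computed on absolute values, a strong negative edge would survive pruning just as a strong positive one would.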

Measures of association between variables.

Coefficients above the diagonal are estimates from the regularized partial correlation network; coefficients below the diagonal are zero-order Pearson correlations.

Network graphs.

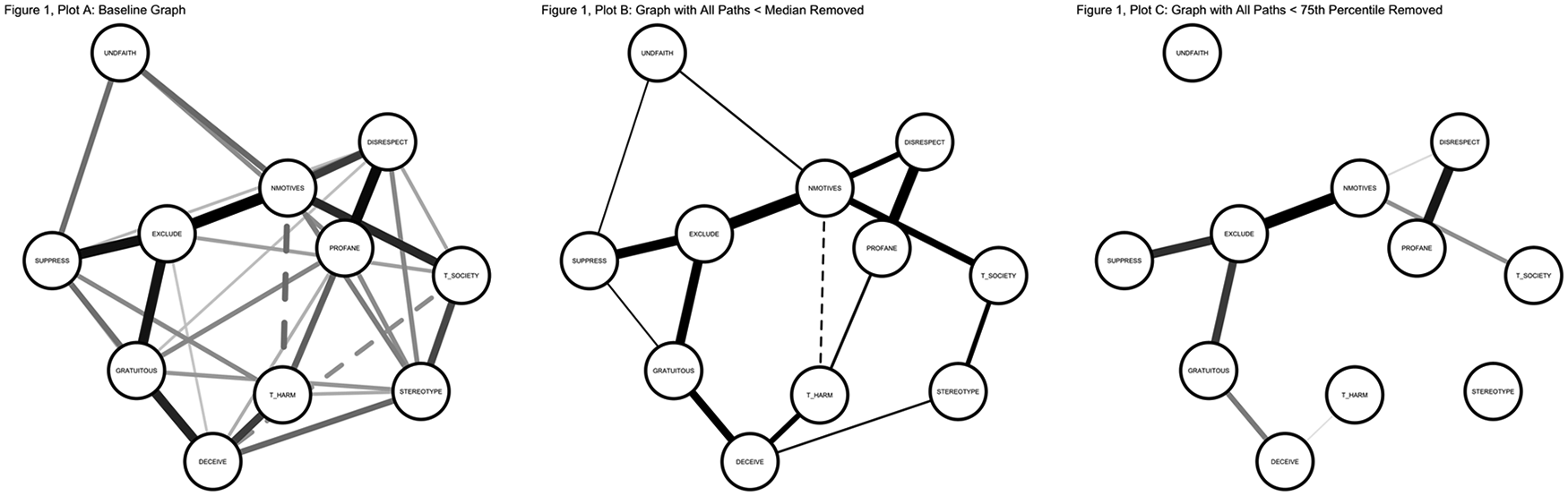

Next, Figure 2 depicts the bootstrapped 95% confidence intervals (CIs) for each edge weight in the model. This procedure involves repeatedly re-estimating the model shown in Table 2/Figure 1 on 1000 random resamples (drawn with replacement) of the data. The resulting distribution of estimates for each edge is used to construct confidence intervals at a given confidence level (here, 95%). Unlike bootstrapped intervals obtained in other contexts, intervals that include 0 are not taken to indicate a null association in regularized models, because the LASSO penalty itself pulls estimates toward zero (Armour et al., 2016). Thus, as it pertains to the current research goals, the rationale for obtaining the bootstrapped CIs is primarily to examine the degree to which individual point estimates differ from one another. If the lower bound of one estimate’s interval exceeds the upper bound of a second estimate’s interval (i.e. the intervals do not overlap), it can be concluded that the two associations are significantly different from one another (Epskamp et al., 2018). In the present context, this technique is useful because it allows for the identification of especially robust associations.
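A nonparametric bootstrap of edge weights, together with the non-overlap comparison described above, might look like the following Python sketch. To stay self-contained it re-estimates an *unregularized* partial correlation matrix on each resample (the study re-estimated the regularized model), and the data are simulated from a hypothetical three-variable chain.

```python
import numpy as np


def partial_corr(x: np.ndarray) -> np.ndarray:
    """Unregularized partial correlations from an (n x p) data matrix."""
    theta = np.linalg.inv(np.cov(x, rowvar=False))
    d = np.sqrt(np.diag(theta))
    pcor = -theta / np.outer(d, d)
    np.fill_diagonal(pcor, 0.0)
    return pcor


def bootstrap_edge_cis(x, n_boot=1000, level=0.95, seed=0):
    """Percentile bootstrap CIs for every edge weight.

    Rows are resampled with replacement and the network is re-estimated
    n_boot times; returns (lower, upper) matrices of interval bounds.
    """
    rng = np.random.default_rng(seed)
    n, p = x.shape
    draws = np.empty((n_boot, p, p))
    for b in range(n_boot):
        rows = rng.integers(0, n, size=n)
        draws[b] = partial_corr(x[rows])
    tail = (1.0 - level) / 2.0
    lower = np.quantile(draws, tail, axis=0)
    upper = np.quantile(draws, 1.0 - tail, axis=0)
    return lower, upper


def edges_differ(lower, upper, e1, e2) -> bool:
    """Non-overlap rule: True if one edge's CI lies entirely above the other's."""
    (i, j), (k, m) = e1, e2
    return bool(lower[i, j] > upper[k, m] or lower[k, m] > upper[i, j])


# Hypothetical data: chain A - B - C (true partial correlations 0.4, 0.4, 0).
theta_true = np.array([[1.0, -0.4, 0.0],
                       [-0.4, 1.0, -0.4],
                       [0.0, -0.4, 1.0]])
rng = np.random.default_rng(1)
x = rng.multivariate_normal(np.zeros(3), np.linalg.inv(theta_true), size=1000)

lower, upper = bootstrap_edge_cis(x, n_boot=1000)
print(edges_differ(lower, upper, (0, 1), (0, 2)))  # A-B vs. A-C
```

With data like these, the A–B interval (centred near 0.4) lies entirely above the A–C interval (centred near zero), so the comparison flags the two edges as different. Non-overlap of two intervals is a conservative criterion: intervals can overlap somewhat even when a direct test of the difference would be significant.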

Bootstrapped confidence intervals for parameter estimates.

Table 2 and Figures 1 and 2 together suggest that a handful of dimensions are especially strongly linked to one another. As Figure 1 shows, five positive relationships appeared especially strong: the relationship between the exclusion variable and the negative motives frame variable (point estimate = 0.33); the relationship between the profanity variable and the variable describing purposeful use of disrespect (point estimate = 0.32); the relationship between the exclusion variable and the variable describing discussion suppression (point estimate = 0.30); the relationship between the exclusion variable and the variable describing gratuitous language (point estimate = 0.30); and the relationship between the persuasive deception and gratuitous language variables (point estimate = 0.27). These relationships become increasingly visually apparent in the plots showing only edges with absolute values above the median (Plot B) and the 75th percentile (Plot C). Figure 2 further contextualizes these relationships by showing that the lower CI bounds for each of these five relationships exceed the upper bounds for a large majority of the associations in the network. Notably, the exclusion dimension was involved in three of the five strongest pairwise relationships, suggesting that a desire to exclude attitude-discrepant others may be substantively embedded within the conceptual marrow of online political incivility. These findings also illuminate some potentially important co-occurring behaviors. Specifically, the data suggest that profanity and disrespect are likely to occur in concert, that deception and gratuitousness may frequently be employed together, and that the desire to exclude others may manifest alongside behaviors seeking to negatively frame the motives of others, suppress all topical discussion, and/or employ gratuitous language or unnecessary discussion.

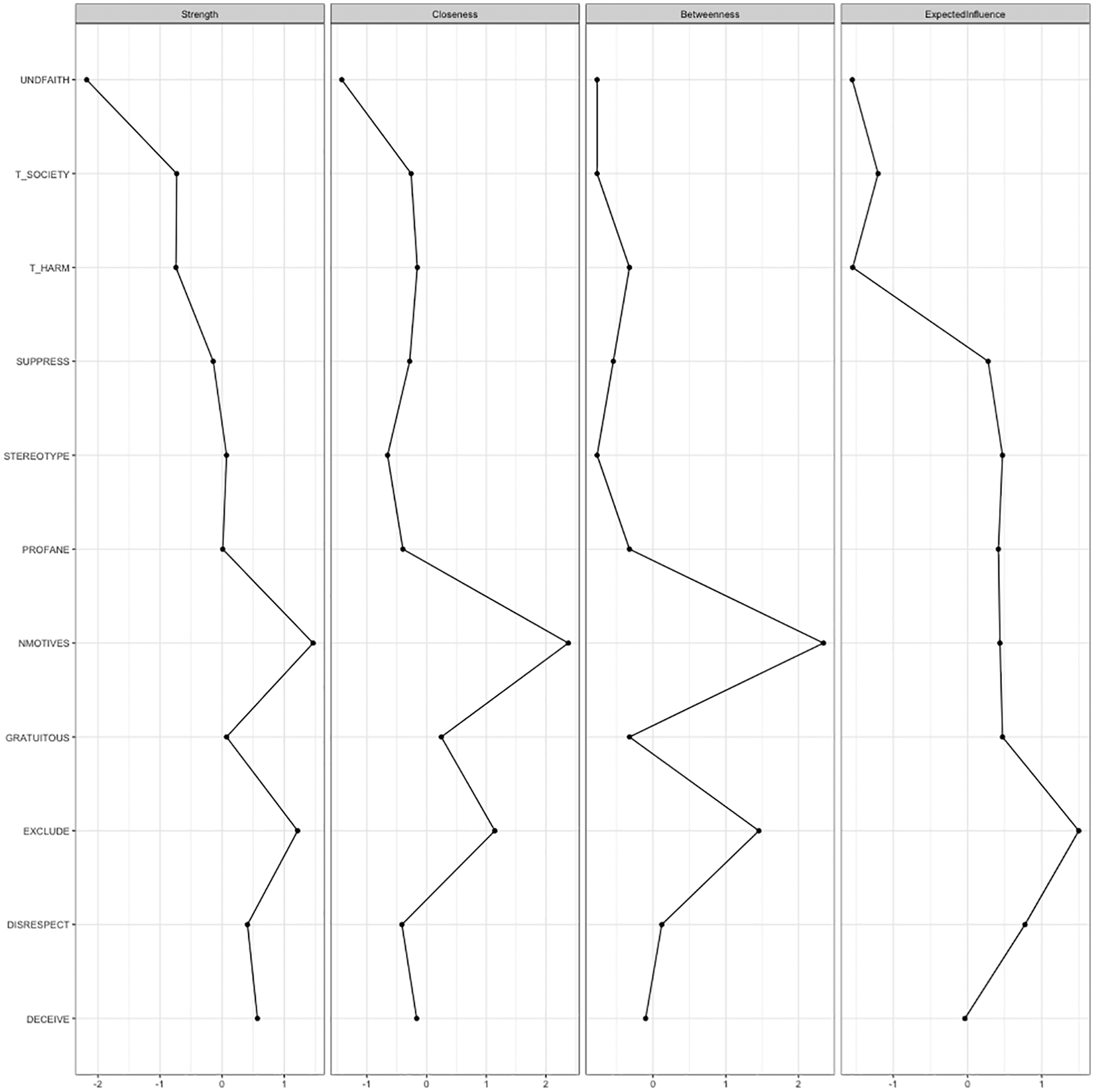

Research Question 2

The second research question concerned the identification of dimensions that play an especially central role in the variable network. Four centrality measures were considered (Epskamp et al., 2018; Robinaugh, Millner, & McNally, 2016): betweenness, or how often a variable lies on the shortest path between two other variables; closeness, or how near a variable is to all other variables in the network, computed as the inverse of its summed shortest-path distances; strength, or the sum of the absolute weights of all edges connected to a variable; and expected influence, or the signed sum of all edges extending from a node of interest. Together, these measures provide distinct ways of understanding and quantifying the centrality of a given variable within the overall network. Standardized estimates for each measure are presented in Figure 3. As shown, the exclusion (desire to exclude attitude-discrepant others) and negative motives frame dimensions occupy an especially central place in the network structure, suggesting that these variables are especially important in the theoretical conceptualization of incivility (Note 5). Notably, the indices disagree somewhat, because each accounts for influence in a different way. For instance, the expected influence index clearly indicates that the exclusion dimension occupies the most central place in the estimated network, while the betweenness, closeness, and strength indices slightly favor the negative motives frame. This is because expected influence accounts for the direction of each edge, whereas betweenness, closeness, and strength treat edge weights as absolute values (recall that, after controlling for the influence of all other dimensions in the model, some associations were negative; Robinaugh et al., 2016). Given that all measures were coded such that higher scores indicated more frequent incivility, and given this study’s interest in uncivil discursive outcomes, the expected influence measure is perhaps especially important in the current research context.
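The disagreement between strength and expected influence comes down to whether edge signs are kept. The Python sketch below computes both, plus closeness (inverse of the summed shortest-path distances, with distance taken as 1/|weight|, a common convention that is assumed here), and z-standardizes each index in the spirit of Figure 3. The four-node network is hypothetical, and betweenness is omitted for brevity.

```python
import numpy as np


def centrality(w: np.ndarray) -> dict:
    """Strength, expected influence, and closeness for a symmetric weight matrix."""
    absw = np.abs(w)
    strength = absw.sum(axis=1)          # sum of |edge weights|
    expected_influence = w.sum(axis=1)   # signed sum of edge weights

    # All-pairs shortest paths (Floyd-Warshall) with length = 1 / |weight|.
    p = w.shape[0]
    dist = np.full((p, p), np.inf)
    mask = absw > 0
    dist[mask] = 1.0 / absw[mask]
    np.fill_diagonal(dist, 0.0)
    for k in range(p):
        dist = np.minimum(dist, dist[:, [k]] + dist[[k], :])
    closeness = 1.0 / dist.sum(axis=1)

    return {"strength": strength,
            "expected_influence": expected_influence,
            "closeness": closeness}


def z_scores(values: np.ndarray) -> np.ndarray:
    """Standardize an index across nodes (mean 0, SD 1)."""
    return (values - values.mean()) / values.std(ddof=1)


# Hypothetical network: node 0 has the most connections, but one is negative.
w = np.array([[0.0, 0.3, 0.3, -0.3],
              [0.3, 0.0, 0.1, 0.0],
              [0.3, 0.1, 0.0, 0.0],
              [-0.3, 0.0, 0.0, 0.0]])
raw = centrality(w)
scores = {name: z_scores(v) for name, v in raw.items()}
print({name: np.round(v, 2) for name, v in scores.items()})
```

Here node 0 ranks first on strength and closeness but below nodes 1 and 2 on expected influence, because its negative edge to node 3 counts at full magnitude in strength but is subtracted in expected influence. This is the same mechanism that can make the two families of indices favor different dimensions.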

Standardized centrality indices.

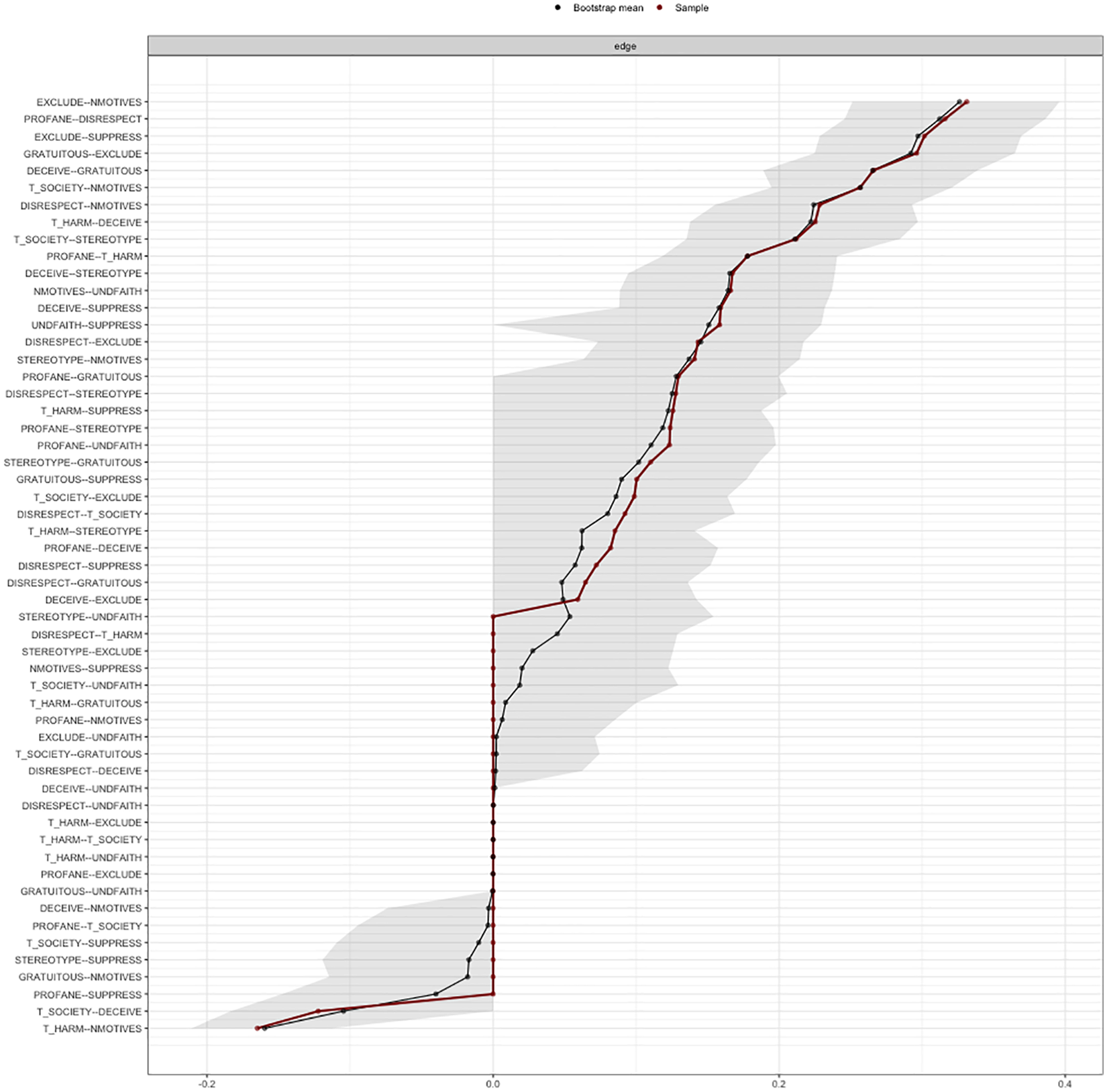

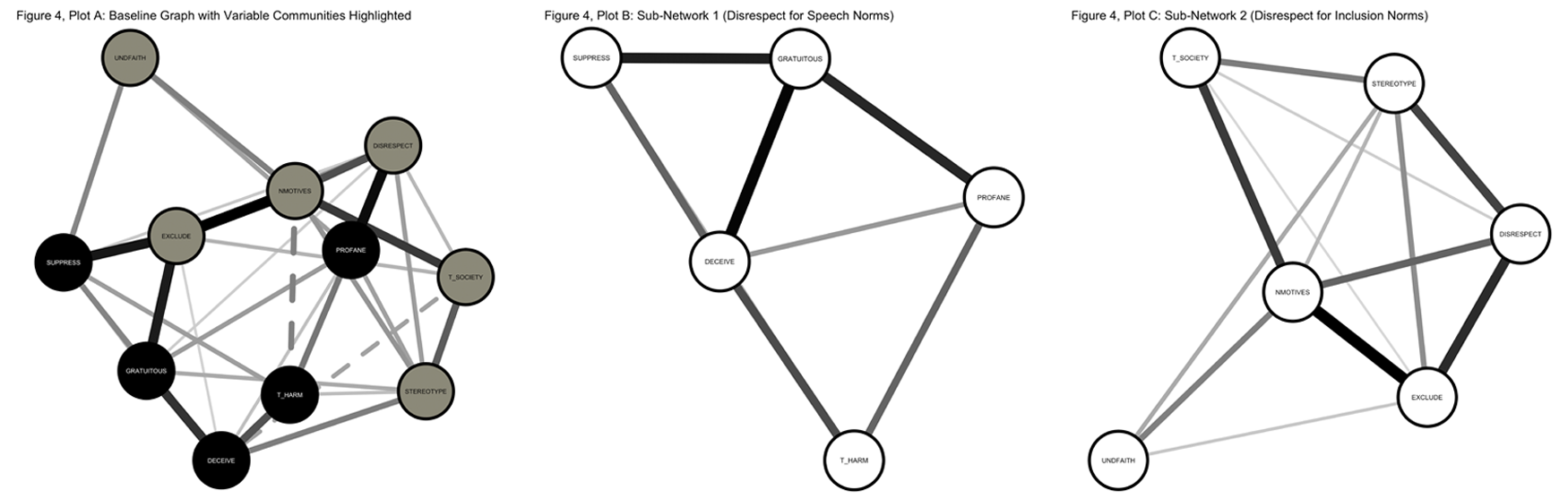

Research Question 3

Two approaches, both recommended by Fried (2016), were used to identify clusters of variables within the overall network. These so-called “communities” indicate which facets of the network are especially strongly associated with one another. The first method was, essentially, an EFA. Specifically, a parallel analysis (based on 1000 random permutations of the data and principal components extraction) was conducted to determine the number of variable clusters. Once the number of clusters was obtained, an EFA (principal axis factoring with promax rotation) was used to determine community membership for each variable. An exploratory graph analysis (EGA; Golino & Epskamp, 2017) was also conducted. EGA first involves the estimation of a correlation matrix. Using this matrix, “graphical LASSO estimation is used to obtain the sparse inverse covariance matrix, with the regularization parameter . . . Finally, the walktrap algorithm is used to find the number of dense subgraphs” (Golino & Epskamp, 2017, p. 6). The walktrap algorithm, a frequently employed means of identifying community structure in networks, identifies variable communities by taking random “walks” through the nodes of the estimated network (see Hanneman & Riddle, 2005 for a description) (Note 6).
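The parallel-analysis step (Horn's method with principal components) can be sketched in Python as below. Each permutation shuffles every column independently, which preserves each variable's distribution but destroys the correlations between variables; leading components are retained while their observed eigenvalue exceeds the 95th percentile of the permuted eigenvalues at the same position. The percentile criterion and the simulated two-factor data are assumptions of this sketch, not details reported in the study.

```python
import numpy as np


def parallel_analysis(x: np.ndarray, n_perm: int = 1000,
                      quantile: float = 0.95, seed: int = 0) -> int:
    """Number of principal components whose eigenvalues beat permuted data."""
    rng = np.random.default_rng(seed)
    n, p = x.shape
    # Eigenvalues of the observed correlation matrix, largest first.
    observed = np.linalg.eigvalsh(np.corrcoef(x, rowvar=False))[::-1]

    null = np.empty((n_perm, p))
    for b in range(n_perm):
        # Shuffle each column independently to break between-variable links.
        shuffled = np.column_stack([rng.permutation(x[:, j]) for j in range(p)])
        null[b] = np.linalg.eigvalsh(np.corrcoef(shuffled, rowvar=False))[::-1]
    threshold = np.quantile(null, quantile, axis=0)

    # Count leading components until the first one that fails the comparison.
    n_keep = 0
    for obs, thr in zip(observed, threshold):
        if obs <= thr:
            break
        n_keep += 1
    return n_keep


# Hypothetical data: two uncorrelated latent factors, three indicators each.
rng = np.random.default_rng(2)
factors = rng.normal(size=(500, 2))
loadings = np.kron(np.eye(2), np.full((1, 3), 0.7))  # shape (2, 6)
x = factors @ loadings + rng.normal(scale=0.7, size=(500, 6))
print(parallel_analysis(x, n_perm=200))
```

With two well-separated three-item blocks, the observed second eigenvalue (about 2) sits far above its permutation benchmark, so the function should report two components.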

Both approaches identified the same two communities of dimensions within the network. For the EFA-based approach, the parallel analysis indicated the presence of two distinct statistical communities. The follow-up EFA suggested that the first community comprised the persuasive deception (factor loading = 0.87), interpersonal threat (0.77), gratuitous language (0.66), profane language (0.52), and discussion suppression (0.50) dimensions, while the second community comprised the negative motives frame (0.97), social threat (0.78), exclusion (0.57), disrespect (0.54), negative stereotype (0.47), and system-based faith (0.46) dimensions. The EGA-based approach led to an essentially identical set of conclusions. Figure 4, Plot A shows the network depicted in Figure 1 with the variable communities highlighted. Figure 4, Plot B shows the sub-network comprising only the variables in the first community, and Plot C shows the sub-network comprising the variables in the second community.

Network graphs showing variable communities.

Interpretation of the statistical communities suggests that the first cluster (comprising the persuasive deception, interpersonal threat, gratuitous language, profane language, and discussion suppression dimensions) describes a violation of speech norms. In other words, these dimensions all relate, in various ways, to a disregard for the tenets of polite communication. By contrast, the second community (comprising the negative motives frame, social threat, exclusion, disrespect, negative stereotype, and system-based faith dimensions) reflects a communicator’s desire to designate, on the basis of political and social identity, who is and is not acceptable for inclusion in political communication. Understood in this way, this cluster describes a violation of the inclusion-based norms that underlie most models of democratic functioning. Notably, while community membership did not conform exactly to the study’s initial expectations, the results were generally consistent with the public and private forms of perceived incivility proposed by Muddiman (2017). Finally, the speech-based and inclusion-based sub-networks were strongly related to one another: the Pearson correlation between the two sets of variables was 0.70 (Note 7).
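The 0.70 figure relates the two communities as wholes. One plausible way to compute such a value, assumed here because the exact scoring procedure is not detailed in this section, is to average each respondent's scores within each community and correlate the two composites. A minimal Python sketch with hypothetical ratings:

```python
import numpy as np


def composite_correlation(x: np.ndarray, cols_a: list, cols_b: list) -> float:
    """Pearson r between per-respondent mean scores on two groups of columns."""
    score_a = x[:, cols_a].mean(axis=1)
    score_b = x[:, cols_b].mean(axis=1)
    return float(np.corrcoef(score_a, score_b)[0, 1])


# Hypothetical 1-5 frequency ratings: 4 respondents x 4 items, with
# items 0-1 assumed to form one community and items 2-3 the other.
x = np.array([[1, 2, 1, 1],
              [2, 3, 2, 3],
              [4, 4, 3, 4],
              [5, 4, 5, 5]], dtype=float)
speech_items, inclusion_items = [0, 1], [2, 3]
print(round(composite_correlation(x, speech_items, inclusion_items), 2))
```

Mean composites weight every item equally; factor-score composites would instead weight items by their loadings and could yield a somewhat different correlation.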

Discussion and conclusion

Although uncivil online political discourse has increasingly become an object of study, there exists very little agreement either on the construct’s precise conceptual definition or on the degree to which the various theorized features of uncivil online communication relate to one another. In light of these deficiencies, this study drew upon pre-existing conceptual and operational definitions of incivility to better understand how the construct’s theorized dimensions relate to one another in the specific context of online political discussion. The results of the current inquiry make three primary theoretical contributions. First, this study used the literature to develop a comparatively comprehensive corpus of incivility attributes. By assessing association and centrality within a broad constellation of previously identified incivility factors, this study helps address an identified need (e.g. Herbst, 2010; Hopp et al., 2018; Muddiman, 2017; Su et al., 2018) for a general theoretical model of online political incivility that illuminates the concept’s defining characteristics. This study’s focus on online communication between non-elites is especially important, given that earlier models (e.g. Muddiman, 2017) dealt primarily with the behavior of political elites. Second, in light of the various and divergent ways that incivility has been construed in the prior literature, this study conceptualized and explored online political incivility in terms of both speech-based and inclusion-based normative violations. Such inquiry is important because prior theorizing has positioned incivility either as the violation of communicative norms relating to politeness (e.g. Mutz, 2015) or as the violation of democratic norms pertaining to inclusion and reciprocity (e.g. Papacharissi, 2004). This work took both perspectives into account by building, in part, on Muddiman’s (2017) presentation of private and public forms of incivility. Third, the current focus on self-reported data was both unique and important. By showing general congruity between self-report and perception-based models of incivility, this study helps equip future researchers with a consistent theoretical means of addressing uncivil political communication practices on the Internet. These theoretical implications are expanded upon below.

As it pertains to the identification of especially important incivility dimensions, the data suggested that the desire to exclude one’s perceived opponents played an important role in the variable network. At the pairwise level, robust relationships were observed between the exclusion variable and the use of negative motives framing, the desire to suppress all discussion on a given political topic, the use of gratuitous language, and the use of disrespectful language. Further support for the influential nature of a desire to exclude others comes from the centrality indices, which indicated that the exclusion variable occupied a central place in the network. The overall centrality of the exclusion feature is consistent with prior research on cognitive dissonance. Since the early 1960s (e.g. Stempel, 1961), researchers have known that dissonance avoidance is a key driver of selective exposure to political information. By suggesting that communicators may use incivility as a means of defensive avoidance (Garrett, Carnahan, & Lynch, 2013), the present data indicate that the impulse to avoid attitudinally inconsistent political information may have implications that extend beyond media consumption. When communicators exclude ideologically heterogeneous others from discussion, they are, in effect, taking defensive measures to avoid exposure to dissonance-inducing information. By extension, excluding discrepant others may offer the illusion of deliberation and social consensus, which may in turn facilitate further attitudinal hardening and, potentially, increased polarization along political lines.

This study’s results further suggest that incivility features may fit into two broad categories: violations of “good speech” norms and violations of norms related to the inclusion of others. In several important ways, these variable communities map onto earlier models of perceived incivility (Muddiman, 2017; Stryker, Conway, & Danielson, 2014) and, in so doing, help address the tension between approaches that have conceptualized incivility as speech acts and those that have conceptualized it as democratically anti-normative behavior. Notably, the exact configuration of the variable communities was somewhat surprising. Looking specifically at the community describing inclusion norm violations, for instance, it becomes apparent that these variables, in differing ways, address political and social identity in ways that extend beyond this work’s a priori expectations. In retrospect, these findings make sense. While features like the invocation of negative stereotypes and the use of purposeful disrespect commonly violate speech norms, the inclusion-based norms they also violate may, in many cases, represent the more severe breach, which may explain why these features clustered with the inclusion community. Moreover, these findings conform to a growing body of recent work suggesting that identity plays an important role in political thought and behavior (e.g. Huddy, Mason, & Aarøe, 2015; Mason & Wronski, 2018).

One question that may be asked of this study’s results is whether violations of speech norms are truly uncivil. Some authors (e.g. Oz, Zheng, & Chen, 2018; Papacharissi, 2004) have drawn fairly strong distinctions between impoliteness and incivility. In this study, it was posited that the habitual and purposeful violation of speech-related norms is, indeed, an important form of incivility. If incivility pertains to the “fundamental tone and practice of democracy” (Herbst, 2010, p. 3), it stands to reason that the way political commentary is framed has substantial implications for the democratic potential of a given online discussion space. This presumption draws on prior research indicating that toxic language has an undesirable effect on political discussion quality (e.g. Mutz, 2015). However, given the present study’s broad conceptualization of incivility as anti-normative, this argument is conditioned on the idea that the degree to which impolite, rude, or toxic language is uncivil rests, in large part, upon the degree to which it is applied both purposefully and habitually. Spontaneous negative affect is a part of political conversation, especially among impassioned communicators. This contention broadly conforms to Papacharissi’s (2004) assertion that occasional impoliteness occurs “in the heat of the moment” and is “spontaneous, unintentional, and frequently regretted” while incivility is “expressed more firmly” and is not regretted by communicators (p. 277). It should also be noted that the variable communities describing speech- and inclusion-based norm violations were strongly related to one another (r = 0.70). This finding further calls into doubt the notion that impoliteness and incivility in political discussion are starkly different in character, at least when considered in terms of habitual modes of communication.

A final contribution of the current data relates to the use of self-report data. Prior studies have generally not sought to understand online incivility from the perspective of those creating it. The fact that the current results are broadly compatible with Muddiman’s (2017) earlier model of perceived incivility provides important theoretical evidence for conceptualizing incivility as a multi-faceted phenomenon that encompasses normative violations pertaining to both speech and inclusivity. That said, Muddiman’s (2017) model was not discussion-specific, as it contained a variety of communicative and non-communicative behaviors. Moreover, that model dealt primarily with behavior by political elites. As such, the conceptual rendering presented here may be especially useful in research projects concerned specifically with understanding online political discussion among non-elites.

Based upon the results of this study, it seems important that future studies of online political incivility understand it in terms of normative violations pertaining to both speech and inclusion. The relative importance of these two factors may differ across research contexts. One can, for example, imagine that inclusion norms limit the scope of participation on a given platform (e.g. Facebook), while speech norms pertain more strongly to the degree to which the discussion that does occur is democratically fruitful (i.e. discussion quality). However, at the current juncture, such conclusions are merely assumptions in need of empirical investigation. That said, considering incivility in terms of speech and inclusion norm violations will help address ongoing tensions regarding the construct’s definition. By addressing both facets of incivility, researchers can both move beyond definitional conflicts and gain a more comprehensive understanding of why online discussion frequently fails to live up to its democratic potential. Such knowledge, by extension, may support the design of targeted interventions that encourage higher-quality political communication online.

Several factors limit the current findings. First, although the model presented here could have causal implications (i.e. highlight potential causal relationships; Epskamp et al., 2018), the exploratory research posture, the cross-sectional nature of the data, and the analytic technique applied together provide an insufficient basis for causal claims. Second, these data were collected during the 2016 presidential election, a highly idiosyncratic contest that may have biased the observed relationships in unknown ways. Third, the sample employed in this study was convenience-based and was generally younger, more liberal, and more politically active than the US population as a whole. Moreover, the use of Amazon’s MTurk for sample construction has been criticized (e.g. Harms & DeSimone, 2015). Together, these factors limit the ability to draw population-wide inferences from the present data. Fourth, this study assesses a very specific type of incivility (i.e. online political incivility); the findings presented here are not necessarily transferable to relational or non-political discussion contexts. Finally, because prior work, with some limited exceptions (Hopp et al., 2018), has not explored incivility from a self-report perspective, the indicators employed here were developed specifically for this study and are in need of cross-validation.

In spite of its limitations, this study contributes to the existing body of theoretical knowledge on online political incivility and offers particular benefit to researchers studying online political communication between non-elites. Using a large corpus of incivility features, this study, on an exploratory basis, sought to understand patterns of interrelationship on both bivariate and multivariate bases. The results suggest that a desire to exclude attitude-discrepant others from political conversation may play a central (and perhaps defining) role in the theoretical conceptualization of uncivil online political communication. Moreover, the results, generated using self-report data, were mostly consistent with Muddiman’s (2017) private/public model of incivility perceptions, suggesting a correspondence between communicator intent and audience evaluations.

Building on the findings presented here, future research could be taken in a number of directions. First, in light of the limitations described above, future research should confirm these findings in a representative sample. In doing so, researchers could attempt both to replicate the network structure presented here and, as an additional step, to apply confirmatory techniques such as confirmatory factor analysis. Second, it stands to reason that the measures presented in this study could be improved through empirical refinement. Third, it may be important to situate the incivility components described here within a broader network that includes individual factors such as verbal aggression, emotionality, or narcissism. Fourth, future research should endeavor to situate the incivility dimensions discussed here within an explicitly causal framework. It stands to reason, for instance, that the violation of speech norms may arise as a function of the strategic desire to exclude attitude- and identity-discrepant others from political conversation. Such research could be conducted using experimental methods or lagged behavioral models. Fifth, the notion that the desire to exclude attitude-discrepant others from conversation occupies a central place in the theoretical rendering of incivility raises a potentially challenging problem: some discussion ought to be excluded from democratic communication (e.g. racism, homophobia, and so on). Future research should work to parse out the scenarios and conditions under which excluding attitude-discrepant others may actually be a civil act. Finally, the results presented here can be used by scholars and platform managers interested in designing moderation systems that facilitate higher-quality online political discussion. Notably, most current community management approaches and tools (see, for instance, Google’s Perspective API (www.perspectiveapi.com)) tend to focus specifically on the use of toxic or otherwise impolite communication. The results presented here suggest that it may be worthwhile to instead focus on the development of interventions that make discussion more inclusive and that seek to build trust among attitude-discrepant communicators.