Abstract

Deliberative democratic theory has proposed the use of mini-publics to discern a more reflective public opinion, which can then be conveyed to policymakers or back to the wider public. In 2009, the legislature in the State of Oregon (USA) created one such process in the Citizens’ Initiative Review to help the public make informed choices on statewide ballot measures. This study investigates how the public conceptualizes and assesses the Citizens’ Statements that Citizens’ Initiative Review panels place in the statewide Voters’ Pamphlet. We pose a series of research questions concerning how the public perceives the role of the Citizens’ Initiative Review in initiative elections. We investigate those questions with usability testing sessions held in the final weeks before the 2014 election. Forty interviews were conducted in Portland, Oregon, and 20 were held in Denver, Colorado, where a pilot version of the Citizens’ Initiative Review was held. Online survey data collected in Oregon and Colorado followed up on the themes that emerged from the usability tests to obtain more general findings about these electorates’ views of elections and the Citizens’ Initiative Review. Key results showed that voters found the Citizens’ Initiative Review Statements to be a useful alternative source of information, although they required more information about the Citizens’ Initiative Review to make robust trust judgments about the process. Voters were uncertain of the value of the vote tally provided by Citizens’ Initiative Review panelists, but reading the Citizens’ Initiative Review Statement inspired some to vote on ballot measures they might have skipped.

Democratic theorists have long celebrated the concept of convening “mini-publics,” which stand in as a microcosm for a larger public and exercise some of their deliberative responsibilities (Dahl, 1989; Fishkin, 1991; Fung, 2003; Gastil, 2000). These typically feature a body formed through random selection, sometimes called “sortition” (Elstub, 2010), which meets for a period of days to offer insight or judgment on a specific question (Gronlund, Bachtiger, & Setälä, 2014).

Mini-publics represent a novel application of deliberative democratic principles, but critics have questioned the wisdom and efficacy of such bodies (Lee, 2014). Evidence certainly suggests that the participants in such events have rewarding and empowering experiences (Knobloch & Gastil, 2015; Niemeyer, 2011), but the evidence of their relevance for larger publics and public policy is more mixed (Gronlund et al., 2014). The link between mini-publics and the larger public is important, because some democratic theorists believe the appropriate role for mini-publics is to enhance macro-level politics, rather than trying to supplant it (Lafont, 2015). Many deliberative mini-publics are designed to serve precisely this purpose (Fishkin, 2009; Warren & Gastil, 2015).

As scholars advocate applying mini-publics to an ever wider range of electoral and policymaking contexts (Fishkin, 2013; Gastil & Richards, 2013), it is imperative that research clarify the relationship between mini-publics and the larger publics they aim to serve.

One mini-public that warrants attention in this regard is the Citizens’ Initiative Review (CIR). In 2009, the legislature in the state of Oregon (USA) created the CIR to help its voters make informed choices on statewide ballot measures. Every even-numbered year, that state’s electorate must make decisions about proposed legislation, which has been put on the ballot either by virtue of a citizen petition or as a referendum from the state legislature. To assist voters, the CIR Commission convenes 20–24 citizens to deliberate on a single ballot measure. After 3–5 days of hearing from witnesses, meeting in small groups, and weighing rival claims about the proposed policy, the citizen panel writes a one-page Citizens’ Statement that then appears in the official Voters’ Pamphlet, which the Oregon Secretary of State distributes to every registered voter in the state.

To date, however, no study has looked directly at whether—and how—voters can make sense of this novel institution, which was born from the marriage of abstract political theory and a self-assured civic reform movement (Lee, 2014; Ryfe, 2007). Longstanding deliberative institutions, such as the jury system, have embedded themselves in lay conceptions of democracy over the course of centuries (Dwyer, 2002), but mini-publics such as the CIR have only weak historical moorings. From a distance, the CIR seems capable of functioning as a “trustee” institution where voters rely on the guidance of their fellow citizens who have become quasi-experts on the issue (Warren & Gastil, 2015), but this study investigates what features of the CIR voters identify as critical for earning—or losing—their trust in its Citizens’ Statements. Finally, we examine how a public renowned for avoiding deliberation (e.g. Hibbing & Theiss-Morse, 2002; Yan, Abril, Kyoung, & Jing, 2016) actually uses these Citizens’ Statements.

We begin by placing these research questions, and the CIR itself, in the broader context of deliberative democracy. We then describe our research method, which combines face-to-face usability testing and online voter surveys. In the results section, we juxtapose these qualitative and quantitative findings in relation to our research questions. Our concluding section highlights the most important theoretical and practical implications of our results.

Connecting mini-publics with larger publics

This study aims to advance a broader theoretical literature on deliberative democracy, which holds that a democratic society requires ongoing deliberation to ensure more well-reasoned public judgments and to sustain the legitimacy of its governing institutions (Chambers, 2003). This democratic theory emphasizes the quality of public participation and political talk, not just the volume of it (Dryzek, 2010; Gutmann & Thompson, 2009; Leighninger, 2006).

One important strain of this broad literature is the epistemic variant of deliberative theory (Estlund, 2008). This account stresses the importance of circulating accurate information to render improved judgments in collective decision making. The deliberative process itself can lead to more sensible judgments, even without improving a decision-making body’s information base, but higher quality information distributed throughout the system should yield judgments more in accord with the long-term interests of that body (Landemore, 2013).

Recent critiques, however, emphasize that in a pluralist society there is no independent ground on which to judge the objective quality of decisions rendered by deliberative bodies (Ingham, 2013). A stronger foundation for deliberative theory must stress the expressive and educational functions of deliberating. Thus, Richards and Gastil (2015) argue that deliberation sustains democratic legitimacy by making the public more knowledgeable and by sustaining the procedural integrity of public decision making.

That conception of deliberation may seem abstract, but it finds concrete expression in many public discussion programs, such as the National Issues Forums (Gastil & Dillard, 1999; Mathews, 1994; Melville, Willingham, & Dedrick, 2005). The purpose of such forums is so obviously educational that educators routinely include them in the curriculum to give students a sense of how to work with both values claims and factual information when reaching a common judgment on policy questions (Leppard, 1993; O’Connell & McKenzie, 1995).

Deliberative interventions have also aimed to serve a broader legitimizing function by drawing public deliberation and governance closer together. Before deliberative democracy established itself as a powerful alternative model of democracy (Held, 2006), political theorist Robert Dahl (1989) proposed the creation of a “minipopulus” of a thousand people, who would learn about a problem and provide a more enlightened judgment of what course of action a government should take (p. 340). The idea was offered as more than a thought experiment, but the proposal to conduct a large-scale Deliberative Poll followed closely on its heels (Fishkin, 1991). In fact, these proposals came well after the inception of experimentation with Citizens’ Juries, Planning Cells, and other smaller scale processes (Gastil & Levine, 2005).

In the present day, such deliberative designs fall under the term “mini-public,” which refers to representative samples of the public brought together to meet face to face or online to study a public problem and develop considered opinions, novel insights, recommendations, or even formal decisions (Fung, 2003; Gronlund et al., 2014). A subset of these link directly to the electoral process, as in the case of the British Columbia Citizens’ Assembly, which drafted an electoral reform that was then put on a province-wide ballot for ratification (Warren & Pearse, 2008). Such processes aim to use the mini-public as a trustee that the public can turn to when asked to play its role by voting on legislation or candidates (Warren & Gastil, 2015).

Our focal case, the Oregon CIR, belongs to this larger family of deliberative citizen panels that advise voters (Crosby, 2003; Gastil, 2000). It also resembles, in some respects, the Citizens’ Assembly (Warren & Pearse, 2008) and various processes developed by civic entrepreneurs in the United States, including Citizens’ Juries (Crosby & Nethercutt, 2005; Smith & Wales, 1999) and a wide variety of other processes (Gastil & Levine, 2005; Nabatchi, Gastil, Weiksner, & Leighninger, 2012).

The novelty of processes like these means that many empirical questions remain about whether (and how) voters understand, trust, and use them. For instance, the expressive or symbolic justifications of deliberation (Richards & Gastil, 2015) may not extend beyond those who participate directly in a mini-public. Shah (2016) and others have critiqued deliberative theory for privileging the knowledge-building function of deliberation while overlooking the value that comes from expressing opinions freely in naturally occurring conversation. Mini-publics may not function effectively if the wider public relates to them as a passive consumer rather than as an active participant.

Before introducing the three broad questions that guide our study, however, we must first clarify the CIR process itself. Some readers may already be familiar with the CIRs held in previous years (Knobloch, Gastil, Reedy, & Walsh, 2013; Knobloch, Gastil, Richards, & Feller, 2014), but there were many procedural modifications undertaken for the 2014 CIR panels that are pertinent to the issues we investigate in this study.

The CIR

The Oregon CIR convenes a small deliberative panel to provide direct guidance to the larger electorate, which must decide on legislation already placed on its statewide ballot. The crucial feature is that the CIR’s Citizens’ Statements appear in the official Voters’ Pamphlet, which the Secretary of State mails to every registered voter. Thus, the CIR can have a tremendous influence on the wider electorate, which it can induce to engage in a kind of “vicarious deliberation” by reading the one-page statement it produces (Gastil, Richards, & Knobloch, 2014). In doing so, the Review provides an alternative to the simple voting cues provided by campaigns for and against each ballot measure, and this can help voters make more informed decisions (Gastil, 2014).

Healthy Democracy is the principal non-governmental organization that convenes the CIR. After hosting the initial rounds of CIR panels in 2010 and 2012, Healthy Democracy received a matching grant from the Democracy Fund to expand the CIR’s reach. In addition to two statewide processes in Oregon, Healthy Democracy worked with local organizations to conduct test processes in Jackson County, OR; Colorado; and Phoenix, AZ. The 2014 cycle also brought significant structural changes to the CIR: the reduction of the process from 5 to 4 days, the downsizing of the panel from 24 to 20 participants, and the elimination of background witnesses. A key feature that did not change was the stratified random selection of the panelists themselves, who were paid the equivalent of the state’s median hourly wage.

All the 2014 CIRs followed the same basic process design. Each panel met for 4 consecutive days and heard from advocates in favor of and opposed to the measure. At the end of their deliberations, the panelists had created a list of findings relevant to the measure and then used these findings to craft their Citizens’ Statements. The basic agenda was as follows:

Day 1: Orientation to CIR and the ballot measure;

Day 2: Identification of questions for advocates and expert panel 1;

Day 3: Expert panels 2 and 3 and identification of additional findings;

Day 4: Key Findings prioritization and development of arguments in favor of and opposed to the measure.

Although each panel followed roughly the same agenda, modifications were made to the structure based on the success of previous agenda segments and the needs of the panel. For example, although the panelists heard primarily from advocates in favor of and opposed to the measure, a few of the reviews contained one panel with a neutral witness. In addition, the method for distributing the Citizens’ Statement varied by location. The statewide Oregon reviews were the only ones to have their statements appear in the Voters’ Pamphlet. The other reviews distributed their statements through websites, direct mail, and media coverage.

The process also saw significant structural modifications from previous years, particularly in the format for developing and voting on the sections of the Citizens’ Statements. Rather than independently developing findings and arguments for the statement, as had been done in years past, participants began deliberations with a set of claims developed by advocates and largely worked to prioritize and edit these claims for inclusion in the Citizens’ Statement. Moreover, in 2014 multiple reviews studied similar measures in different locations, and, for the first time, advocates appeared at multiple reviews. These modifications did not change the CIR process in any fundamental way, but Gastil et al. (2015) provide a detailed analysis of their impact.

The particular CIR sessions relevant to this study occurred in Oregon and Colorado. The first of the two Oregon panels met from 17 to 20 August and reviewed Measure 90, which would allow voters to select one candidate for office in an open primary, with the top two candidates advancing to the general election regardless of their party affiliation. The second panel met from 21 to 24 August and reviewed Measure 92, which would require food manufacturers and retailers to label packaged foods that contain genetically modified organisms (GMOs) in their ingredients. Both reviews were conducted in Salem, OR, the state’s capital. The CIR Commission oversaw the process, determining which measures to study. Veteran facilitators with experience from the 2010 and 2012 CIR processes moderated the panel discussions. The Citizens’ Statements were distributed via the state’s Voters’ Pamphlet and indicated the number of panelists who voted for and against the measure.

The other CIR that we discuss in this essay is the 2014 Colorado CIR, which was the first review held outside Oregon. To conduct this review, Healthy Democracy partnered with a local civic engagement organization, Engaged Public, as well as a local facilitation organization, Civic Canopy, and meeting space was provided by the University of Colorado–Denver. Twenty Colorado voters met from 7 to 10 September and reviewed Proposition 105, which would require raw or processed foods containing genetically modified organisms to carry the label “produced with genetic engineering.” The Citizens’ Statement was distributed via the websites of Healthy Democracy and Engaged Public, as well as through direct mail, and it saw relatively high levels of media coverage, including stories by local television stations, newspapers, and public radio.

Research questions

Past research has found that the CIR panels themselves practice high-quality deliberation (Knobloch et al., 2013; Knobloch et al., 2014) and produce high-quality Citizens’ Statements (Gastil, Knobloch, & Richards, 2015; Gastil et al., 2014). What remains to be seen, however, is how voters “make sense” of these Statements (Richards, 2016). How do voters understand these Statements in relation to conventional voting guides and campaign materials? What information about the CIR do they require to consider the Citizens’ Statements trustworthy? And how do they report using them as a voting aid?

Those broad questions were used to inform our study design, which began with one-on-one interviews with voters followed by online voter surveys. After reviewing the theoretical questions guiding our research, we describe this research method in more detail.

Making sense of a mini-public

Civic educational processes such as the National Issues Forums make good sense to participants, who voluntarily choose to step into a deliberative space to integrate background materials with the insights of their peers (Melville et al., 2005). The voting booth is a long walk from the classroom, however, and this raises the question of how the public actually construes a tool such as the CIR’s Citizens’ Statements. In the broadest sense, this is a question about how users of the CIR “make sense” of it as a communicative practice and information resource (Dervin, 1998).

We break down that larger question into two related parts concerning the status quo and the change that the CIR could represent. The first part concerns how the public understands initiative elections and the existing information sources available. Such elections ask the public to play the role of legislator, but they pose a communication problem. Even if the public values having the power of the initiative, it also has some concerns about the process (Bowler & Donovan, 1998; Broder, 2001; Gerber, 1999). Initiatives pose a challenge to voters because they rarely convey simple partisan voting cues, and they often involve complex and/or esoteric subject matter (Gerber, 1999; Gerber & Lupia, 1999). Although elites and special interest groups can serve to fill these knowledge gaps (Forehand, Gastil, & Smith, 2004; Lupia, 1994), voters may not trust these sources of information. For example, a study of insurance initiatives in California found that when voters knew the preferences of the insurance industry, they were more likely to vote in opposition to them (Lupia, 1994). Social media have not resolved this problem, as they principally serve to recirculate partisan information and reinforce public concerns about biased communication channels (Rojas, Barnidge, & Abril, 2016).

Attempting to address this problem, states such as Oregon provide members of the electorate with an official voter guide. Such guides contain plain-language summaries of the initiative, estimates of its fiscal impact, and endorsements by special interest groups. Previous work has found that up to 80% of Oregon voters utilize that state’s Voters’ Pamphlet when filling in their ballots (Gastil & Knobloch, 2011). Little is known, however, about how voters interact with such information, although one study of voter discussions found that voter guides were often cited as a source of information or expertise (Reedy & Gastil, 2015). Thus, our research begins with the question: How useful do voters find existing information sources in initiative elections?

The second—and more central—part of this question concerns mini-publics as a supplement to existing sources of initiative information. Previous scholarship has stressed the public’s appetite for more deliberative politics (Gutmann & Thompson, 2009; Leighninger, 2006; Mathews, 1994), and some experimental evidence has borne this out (Neblo, Esterling, Kennedy, Lazer, & Sokhey, 2010). Also, the public already has a clear appreciation of longstanding deliberative processes, such as the jury system (Dwyer, 2002; Ferguson, 2013; Gastil, Deess, Weiser, & Simmons, 2010). The CIR, however, has no precedent, and it is unclear how the public will understand its design and function. The public may think of the CIR as a means of deliberating on complex public issues (Leighninger, 2006), or citizens may see it as a novel kind of voting cue (Lupia, 1994).

Even prior theoretical work on citizen panels remains equivocal on which of these roles such a body might play (Gastil, 2000). Mini-publics may provide individuals with the information needed to engage in a second-order deliberative process (Gastil et al., 2014). In short, voters would utilize the information provided by mini-publics such as the CIR to engage in deliberative reflection by considering the insights put before them by a mini-public (Goodin, 2000; Niemeyer, 2011). Processes such as the CIR scrutinize the information offered by traditional advocates and provide the wider public with the resources to learn about the policy from a deliberative perspective (Niemeyer, 2014). Conversely, the wider public might simply use the outcomes of mini-publics as a heuristic, trusting the outcome of their deliberations because they trust the process and the citizens who took part in it (Gastil, 2000). Thus, our second question is: How do voters conceptualize the purpose of this novel process?

Placing trust in a trustee

The next issue we address concerns what features of a mini-public are key to earning the public’s trust in such processes. In this regard, the fact that the CIR statement comes from a body of peers is particularly important. This feature could enable voters to see the citizen panelists as disinterested “trustees,” who view their purpose as providing impartial and policy-relevant information (Warren & Gastil, 2015).

Some past research has found publics willing to place a measure of trust in mini-publics. For instance, a study of the British Columbia Citizens’ Assembly found that the process appealed to different voters for different reasons. Populists were more likely to support the proposed referendum if they considered assembly members ordinary citizens, whereas non-populists were more likely to trust the assembly’s recommendation if they considered assembly members to be experts on the issue (Cutler, Johnston, Carty, Blais, & Fournier, 2008).

One cannot assume, however, that the public inherently trusts a small-scale mini-public such as the CIR. Some critics question whether citizens are better served by large-sample events, such as Deliberative Polls (Fishkin, 1991, 2009), or by smaller bodies with more intensive deliberation, like the CIR and related processes (Crosby, 2003; Crosby & Nethercutt, 2005). Fishkin (2013) has argued that the CIR, in particular, has too small a sample to be a trustworthy source of information for voters, who may doubt its representativeness of the wider public. Other critics have cast doubt on what one learns from Deliberative Polls (Mitofsky, 1996). Broader criticisms raise questions about how the public might perceive any advisory deliberative mini-public (Collingwood & Reedy, 2012).

Asen’s (2016) assessment of American politics questions whether cultivating public trust, be it in conventional institutions or novel mini-publics, is even possible. He asks, “Is increasing economic inequality leading the United States to a point where people of different backgrounds and standing may no longer be able to engage in perspective-taking? Are life experiences becoming so unequal that people cannot imagine the experiences and appreciate the perspectives of others?” (p. 7)

Our study investigates this question, as it pertains to the CIR, in two parts. Does the public have qualms about the CIR process’s neutrality or trustworthiness? Relatedly, what aspects of the CIR are key to earning (or losing) public trust?

Using the CIR as a voting aid

Finally, the in-depth interview approach taken in this study had a section that left room for discovery of other public attitudes, concerns, or experiences related to using a mini-public such as the CIR as a decision aid when voting. Because of the novelty of the CIR, we left open the possibility of discovering particular aspects of the CIR that interested or worried voters, as well as any evidence of non-obvious impacts from using the CIR.

This final focus for the investigation is inspired partly by the success of previous research on deliberation that took a similar approach. One widely cited study of deliberative processes used an inductive method to identify discourse norms, such as the previously unrecognized “free flow” concept (Mansbridge, Hartz-Karp, Amengual, & Gastil, 2006). A similarly open-ended approach could identify original descriptions, benefits, or hazards of the CIR. Thus, our last and broadest research question asks: How do voters describe their use of the CIR as a voting aid?

Methods of investigation

Answers to these questions come in two parts in our study. For each question, we begin by reporting the results of usability testing, which consisted of one-on-one interviews with voters reading CIR statements at a testing facility. We then follow up the findings from these usability interviews with questions included in online survey panels.

Usability testing

The usability approach has not been used widely, if ever, in deliberation research, but it is appropriate for testing new communication technologies. Communication theorists can understand part of the scholarly enterprise as engineering or designing new communication modalities (Aakhus, 2007). Researchers in information science and other fields often investigate directly how users of such technologies “make sense” of them (Dervin, 1998).

We adopted this approach by enlisting the Bentley University Design and Usability Center. The Center conducted one-on-one interviews in 2014 with 40 voters in Oregon and 20 in Colorado, a state where the CIR process was pilot tested in 2014. This approach permitted direct observation of how voters understand the CIR process and the Statements it produces. An extended one-on-one interview format also left room for discovering new themes in the data beyond our research questions.

This method has the obvious limitation of having an interviewer in the room with a voter, which raises the risk of social desirability bias. Such bias can be mitigated through several interview techniques (Rosenzweig, 2015), which the Center employed. For example, interviewers ask indirect questions about sensitive topics and then balance them with additional questions that get at the same information in different ways. Using techniques such as these, the Bentley University group has successfully elicited both positive and critical appraisals of new technology from voters in previous research (Selker, Rosenzweig, & Pandolfo, 2006).

In Oregon, the usability study sample consisted of 20 voters who read the Citizens’ Statement on Measure 90 (open primaries) and an equal number who read the Statement for Measure 92 (GMO labeling). Half of the Oregon participants were chosen because they had used CIR statements in the past, with the other half being novices. To compare the Oregon experience with that of a state considering adopting the CIR, another sample of 20 voters was interviewed in Colorado as they read the pilot CIR’s statement on Proposition 105 (another GMO labeling measure).

Test sessions were one-on-one, video recorded, and lasted approximately 60 minutes each in research labs in Portland and Denver. Test facilitators followed a structured script that went through five steps: introduction to the interview process, background questions on the upcoming election (e.g. “Are you familiar with any of the statewide measures that will be on the ballot?”), previous experiences using the CIR (for Oregon voters only), a 10-minute silent period to read the CIR Statement, and questions about the CIR Statement (e.g. “What are your impressions of the CIR process and the one-page statement it produced on this issue?” and “Do you trust this review or not? Why or why not? What parts do you trust more/less?”). All these protocols were approved by the Pennsylvania State University Institutional Review Board (IRB).

Online surveys

One additional limitation of the usability interviews was their small sample size. To address this, we timed the interviews such that we could follow up on themes that emerged with online surveys that used larger voting populations. In this sense, the survey data are meant to validate inferences that emerged from the interviews, whenever it was possible to do so. The lone exception was that the online surveys had no items relating to voters’ perceptions of initiative elections and conventional voting materials; previous research had established the high statistical frequency of voters’ frustration with conventional politics and initiative campaigns (e.g. Broder, 2001; Gastil, 2000).

The online surveys were conducted using Internet survey panels provided by Qualtrics. The Oregon survey included 2077 respondents, and the Colorado survey had 1816 respondents. Descriptive statistics shown in this report were calculated using demographic weights to adjust frequency data such that the sample was representative of the population in terms of age, education, political party registration, and sex. For ease of presentation, the particular survey questions employed are introduced below, in the context of findings that emerged during the usability tests. Again, IRB approval was obtained for these surveys.

Findings

Making sense of the CIR

The first questions this study addresses ask whether voters perceive a deficiency in status quo information sources and, if so, whether the CIR makes sense as a solution. Many participants in the usability testing interviews complained about the conventional voting materials available to them when no Citizens’ Statement is provided.

In the case of Oregon, the CIR analyzes only two ballot measures during the statewide initiative elections, which are held in that state only during even-numbered years. Participants complained that the official Ballot Title and full text of a given measure were sometimes hard to understand. Even if a person knew what his or her position was, it was sometimes confusing whether a yes or no vote would support that position. Sometimes voting “yes” actually means saying “no” to a law, and vice versa. One interviewee said that “bigger typeface and less words …” are needed because the Voters’ Pamphlet is “too wordy … The level of accessibility is not there” (P33). 1 Another participant said that sometimes the Pamphlet text has “a lot of science words, like WHOOSH … over my head” (P11).

In Colorado, voters are largely informed by television advertising, which they recognize as biased. The ads can be overwhelming; as one voter pleaded, “Make it stop!” (P9). That state, however, has a more detailed voting guide than most states. 2 Many Colorado voters refer to it simply as “the Blue Book.” Most participants reported using this guide, but many also said that the language in it ends up confusing them. As one voter said, it just “Doesn’t make sense to me” (P16). “Sometimes,” one participant said, “there are words where I wish they had a glossary or index definition kind of thing” (P3). Another wondered “how much of it is a waste of paper” because “it’s pretty dense, repetitive, and the layout is not engaging” (P18). One concluded, “When you can’t even understand what it says, there must be so many mis-votes” (P9). In sum, both Oregon and Colorado voters find conventional information sources at best limited and at worst misleading.

Even if voters expressed frustration with conventional voting guides, it was possible that they would find the CIR was essentially no different. In Oregon, many of those interviewed had a clear conception of the Citizens’ Statement. They often noted that it served as a condensed version of the state voting guides. The CIR Statement provided simpler language and shorter length. One said, “[I] don’t feel as overwhelmed as [with] the booklet” (P40). Voters believed that the Statement “gave a lot more insights” (P6) because it “explains in layman’s terms” (P11) the ballot issues it addresses.

More generally, many Oregonians understood the purpose of the Review and what makes it work. The Review is “really trying hard to reach the general masses to keep them informed” (P33). A key to its effectiveness was that it was written by citizens, for citizens. As one interviewee said, the Statements are “written by people like me and not politicians” (P39). Moreover, “because the panelists are normal people and the statements are very direct,” the result is “keeping interest groups in check” (P6).

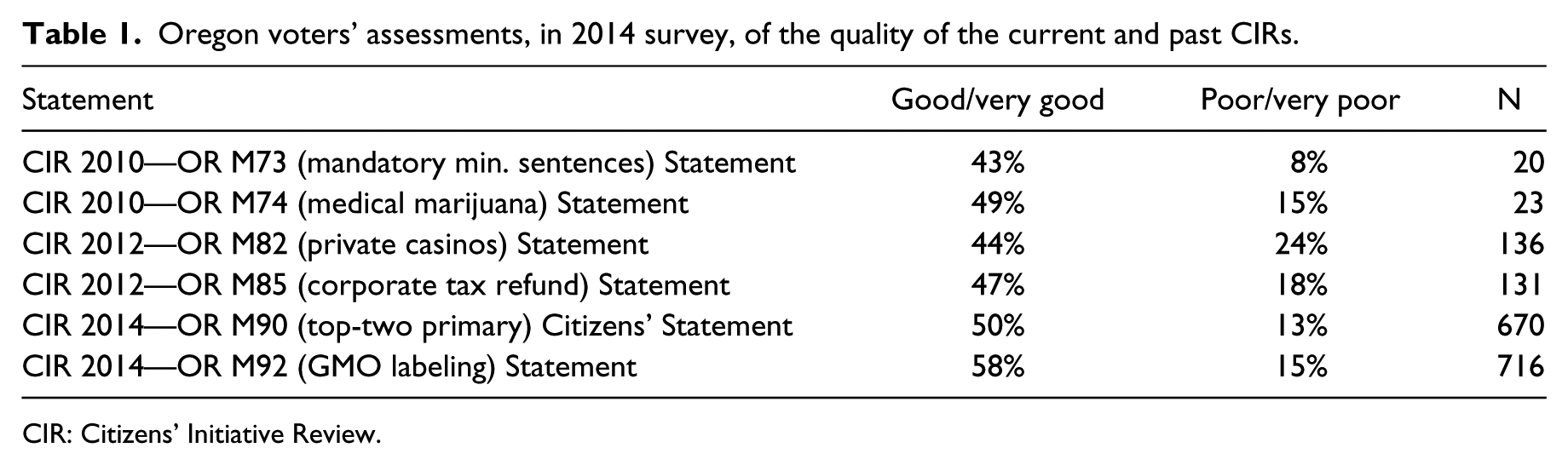

Those qualitative findings were then compared with assessments of past CIRs by those Statement readers who participated in our 2014 online survey in Oregon. This subset of survey respondents, who had at least some awareness of the CIR, was asked to rate the overall quality of all six of the CIRs held in Oregon since 2010, as shown in Table 1. Although many had to decline, owing to unfamiliarity with the measures, the results provide a useful point of comparison. The table below skips the mid-point rating and just contrasts the percentages who rated each as either good or poor in quality. Acknowledging the very low sample size for 2010, the table shows that almost half of respondents typically give one of the two highest ratings (“good” or “very good”) and about one-in-six usually offer a “poor” or “very poor” mark.

Table 1. Oregon voters’ assessments, in the 2014 survey, of the quality of the current and past CIRs.

CIR: Citizens’ Initiative Review.

The 20 Colorado participants were all new to the very idea of a CIR, but most immediately recognized its value. Some participants said that reading the CIR Statement did not cause a change in opinion, but it made them want to explore the measure further. As one said, “It raises more questions in my mind, which is a good thing” (P19). Other participants indicated that reading the CIR Statement changed their mind, or helped form an opinion on the proposition. One said simply, “I feel more enlightened” (P5) on the issue.

The most poignant moment in all the usability tests came from a session with a woman who had turned in her ballot before arriving at the testing session. The participant (P9) began the session by answering the interviewer’s questions, like all the others. When asked to read the CIR Statement, she did so willingly, even though she had already returned her ballot in the mail. She read carefully the CIR’s analysis of the GMO labeling measure, on which she had cast a “Yes” vote. She read quietly to herself, then told the interviewer that an insight in the CIR Statement surprised her:

It says two-thirds of the food and beverages we buy would be exempt. Meat and dairy products are exempt, even if they’re from animals raised on GMOs. Alcoholic beverages. So, why are they exempt?

[long pause, as she continues to read the next part of the CIR Statement]

I wish I would have read this before I voted. Wow!

Why?

Because I would have voted differently.

Okay.

[another silent pause]

Yeah. I would have voted differently.

Many of the Colorado participants came to the view that more experienced Oregonians had already reached. They found the CIR Statement useful as a short summary of the measure with key points and pros and cons—essentially, a boiled-down version of the existing Blue Book, but with less legal language. Like the Blue Book, which includes a pro and con section, the CIR “gives both side of the argument” (P14), but by comparison, the Statement “is very clear” and “much easier to read” (P20). Some participants said it made them think differently about the whole experience of voting: “It makes me realize that there are available things that are a lot less cumbersome than some of the things that I’ve relied on. I’d be interested in seeing more of the CIRs” (P8). Another participant seemed optimistic that the Reviews were already becoming a regular part of Colorado elections: “Now that I see the [CIR Statement], [I] skip [the Blue Book] and do [the CIR Statement] … We need to promote that this is available and we need to have this for all measures” (P9). In fact, the CIR was only a pilot project in Colorado, not a regularly available resource—at least not yet.

Placing trust in the CIR

The preceding results are suggestive of the trust that the public might place in a mini-public such as the CIR. But whereas Colorado participants in the usability testing expressed enthusiasm for the idea of a CIR, the more experienced Oregonian users of the Statements tempered their enthusiasm with practical suggestions for how their existing Reviews could be improved. Two primary reasons were given by those participants who were reluctant to place too much trust in the CIR Statements. Many participants expressed a desire for more information about the panelists, specifically, who they were and how they were recruited. Many other participants were skeptical about the objectivity of sources of information the panelists received. As one participant said, “I don’t know what they [the panelists] read—where did they get that?” (P4). More generally, participants wanted to know more about the CIR: “I like the idea, but it doesn’t seem transparent” (P4).

Some Oregon participants went further to say that they thought the Key Findings in the Statements could show bias. In the case of Measure 92 (GMO labeling), for example, one participant thought using the phrase “eel-like” organism had a sensationalist tone (P9). Some participants reported that duplicated content between Key Findings and either the pro or con arguments makes the Key Findings appear biased in the corresponding direction. Some thought the con arguments were not so clearly opposed to the measure, and one thought the Statement was “one-sided, against the issue” and included “lots of pessimistic statements” (P22). More generally, many participants indicated that they wanted more numbers and concrete data, which would make the information seem more objective.

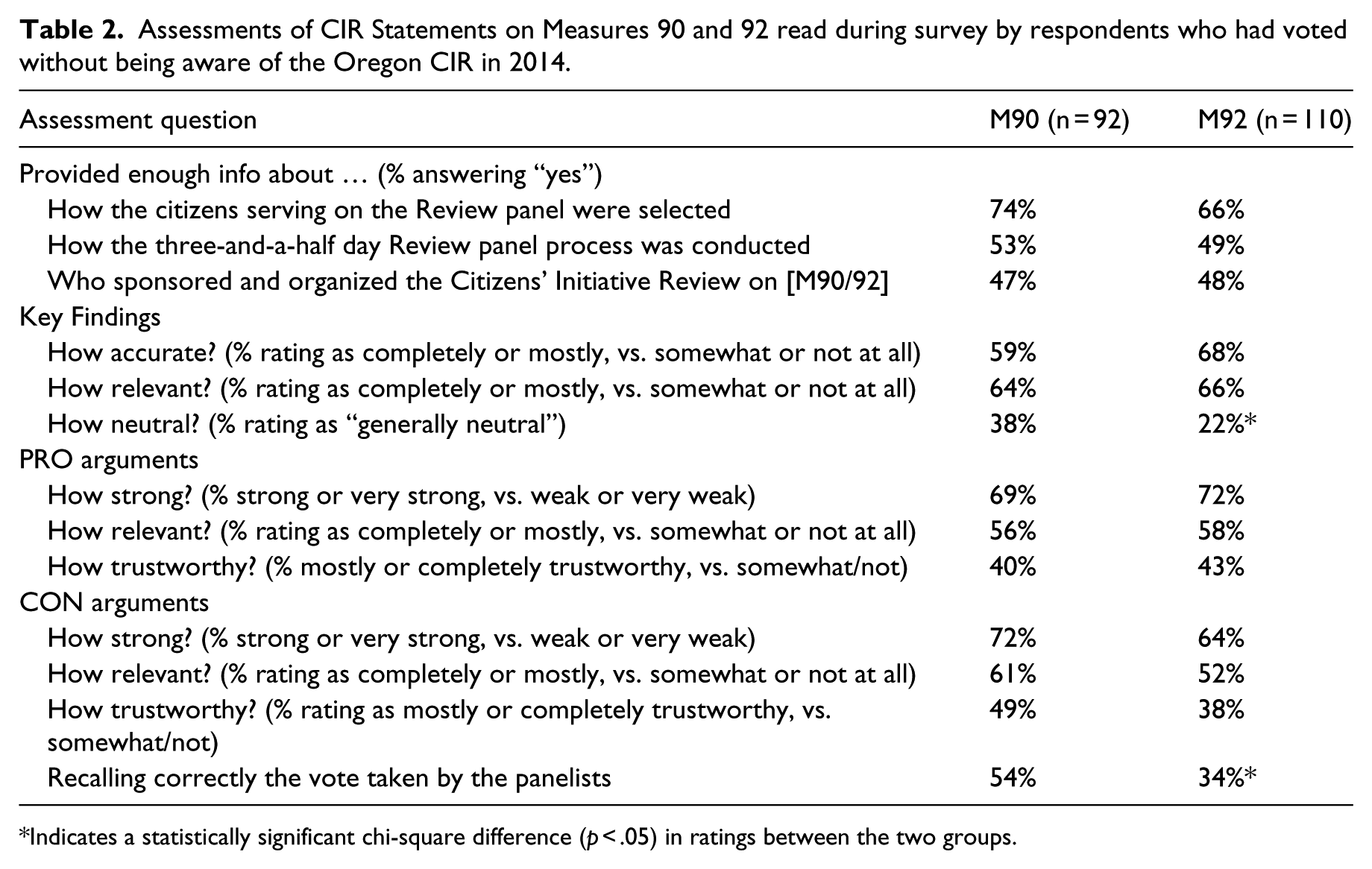

Those concerns prompted us to include in our online Oregon survey a series of questions about the CIR Statements. First, we conducted a special study on the subgroup of survey participants who had already voted but were not at all aware of the CIR process. We randomly assigned these respondents to read the Citizens’ Statement on either Measure 90 or Measure 92. Between 92 and 100 participants (depending on the issue) spent at least a minute reading the Statement assigned to them, and afterward, they answered a series of questions. The results are shown in Table 2.

Table 2. Assessments of CIR Statements on Measures 90 and 92 read during survey by respondents who had voted without being aware of the Oregon CIR in 2014.

Indicates a statistically significant chi-square difference (p < .05) in ratings between the two groups.

In both cases, participants said they knew enough about how the panelists were chosen, but a majority of respondents in both cases did not believe they had been provided enough information in the CIR Statement about who sponsored and organized the CIR. Respondents were also evenly split on whether they knew enough about how the panel was conducted.

As for assessing the Statements themselves, the results were similar across Measures 90 and 92, with only two differences in the ratings across the issues. First, readers found the Key Findings for Measure 90 to be more neutral, even though only 38% gave them a “generally neutral” rating. In the case of Measure 90, respondents were relatively evenly divided in sensing a bias for or against, but in the case of Measure 92, 49% thought the Key Findings were stacked against the measure compared to 30% seeing bias in the other direction.

Second, in both cases a plurality of those giving a guess recalled correctly how the panelists voted on their respective measure, but the proportion was much higher for Measure 90 (54%) than for Measure 92 (34%). When respondents who skipped the question are included, the figures are not as different (38% for Measure 90 vs. 28% for Measure 92), because fully 29% chose not to guess on Measure 90. That fact makes this difference harder to interpret, because it makes it unclear whether a strong CIR vote (Measure 90) is more memorable than a closely divided one.
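The link between the two sets of recall figures is simple arithmetic: the share of all respondents who recalled the vote correctly equals the share among those who guessed, multiplied by the share who guessed at all. The short Python sketch below works through this using only the percentages reported above. The function names are illustrative and not part of the study, and the non-guess rate it backs out for Measure 92 is an approximation derived from rounded percentages, not a reported figure.

```python
def share_of_all(correct_among_guessers: float, non_guess_rate: float) -> float:
    """Correct-recall share among ALL respondents, given the share among guessers."""
    return correct_among_guessers * (1.0 - non_guess_rate)


def implied_non_guess_rate(correct_among_guessers: float, correct_among_all: float) -> float:
    """Back out the share who declined to guess from the two reported percentages."""
    return 1.0 - correct_among_all / correct_among_guessers


# Measure 90: 54% of guessers were correct, and 29% declined to guess.
m90_all = share_of_all(0.54, 0.29)

# Measure 92: 34% of guessers and 28% of all respondents were correct.
# The result is an approximation, because both inputs are rounded.
m92_skip = implied_non_guess_rate(0.34, 0.28)

print(f"Measure 90, correct among all respondents: {m90_all:.0%}")  # 38%
print(f"Measure 92, implied non-guess rate: {m92_skip:.0%}")        # 18%
```

The first result reproduces the 38% reported for Measure 90. The second suggests that, under these assumptions, a smaller share of respondents declined to guess on Measure 92, which is why the gap narrows once non-guessers are included.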

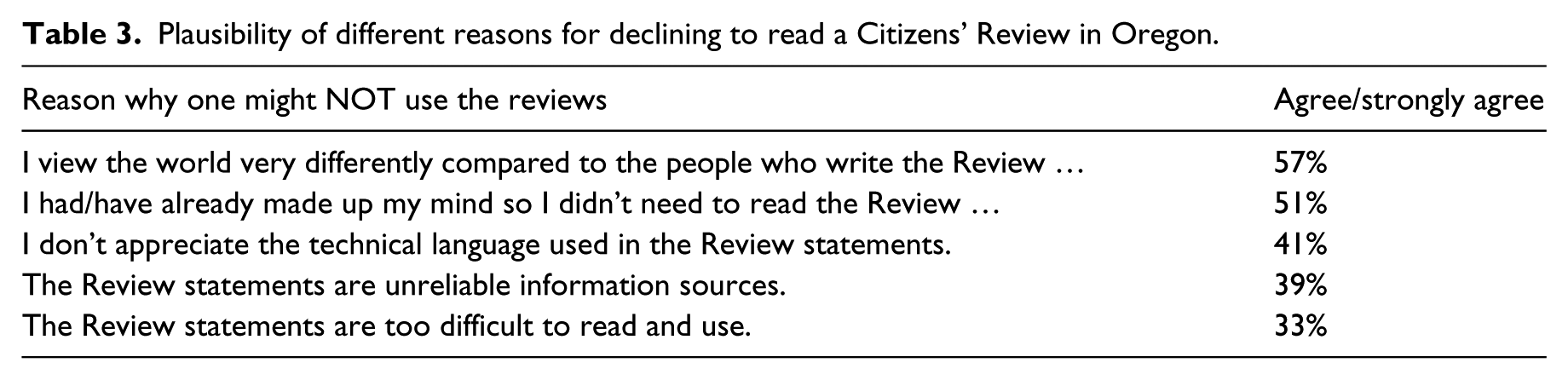

The online survey also afforded the opportunity to ask Oregonians who knew about the Citizens’ Statements what they thought about the CIR more generally, now that they had a few years of experience with it. Between a third and a majority of respondents agreed with each of five reasons one might not use the CIR Statements, although those agreement percentages were generally lower the more familiar one was with the CIR. The reasons shown in Table 3 were presented in random order but are sorted from most to least plausible explanation for not reading the Statements.

Table 3. Plausibility of different reasons for declining to read a Citizens’ Review in Oregon.

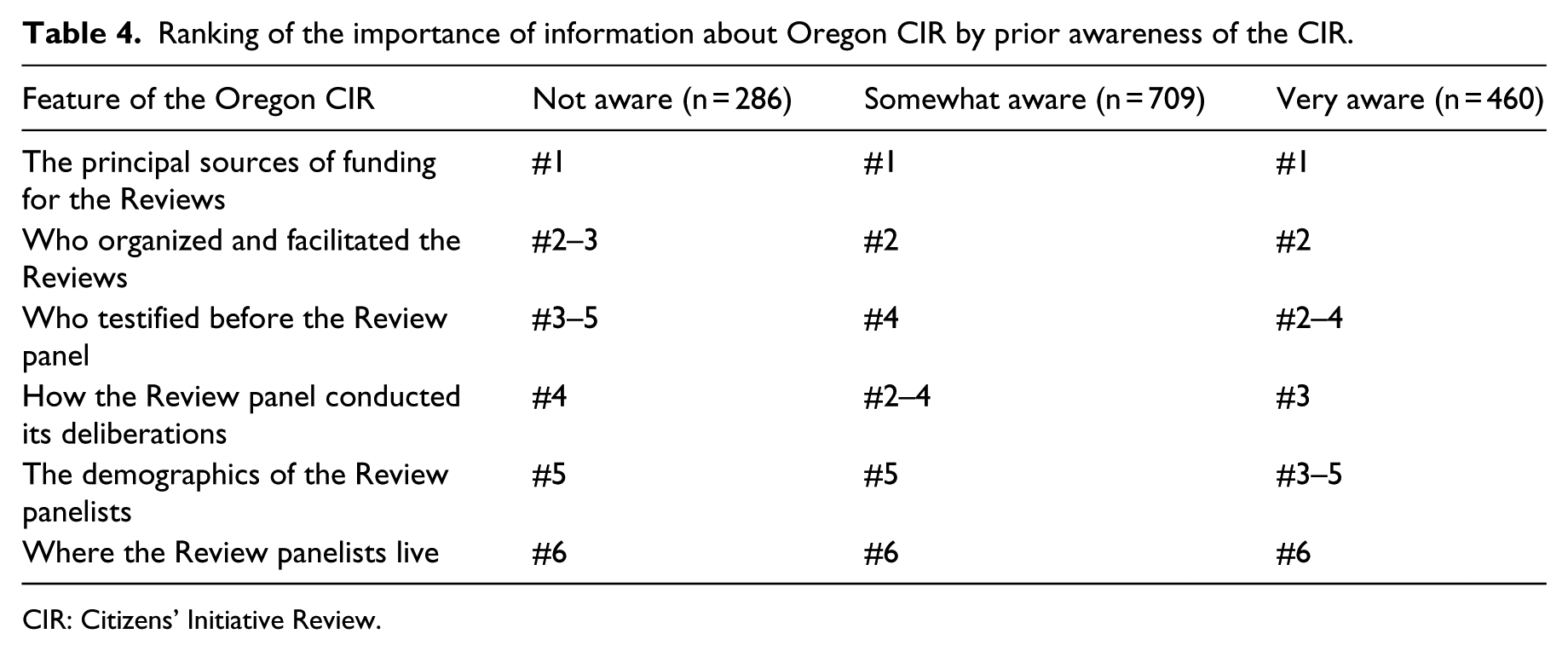

A final set of questions asked all online survey respondents (except those exposed to the CIR in the experiment) to rate the importance of knowing key details about each CIR. They ranked six items, shown in random order, and the results in Table 4 show how consistent those rankings were regardless of how aware one had been of the CIR prior to taking the survey. When asked to “rank the importance of each piece of information in judging the trustworthiness of the Citizens’ Initiative Review statements,” the highest priority was knowing who funded the CIR.

Table 4. Ranking of the importance of information about Oregon CIR by prior awareness of the CIR.

CIR: Citizens’ Initiative Review.

Using the CIR statement

The preceding results suggest that for many voters, the CIR makes sense as a modestly reliable source of information during initiative elections. Additional questions sought to learn more about how such voters actually used the CIR Statements, and from these data two themes emerged regarding how voters used the CIR’s “vote tally” and how it nudged reluctant voters to mark their ballot on CIR issues.

Discounting the CIR panel vote

One special detail in an Oregon Citizens’ Statement is the vote tally that shows how the panelists split on the measure at the end of their deliberations. Although there were variations across this feature in the 2014 CIR pilots, the basic idea is that voters might want to know how the panelists themselves intended to vote after spending days studying a ballot measure.

In Oregon, most participants understood that the Statements showed how many panelists voted for and against the measures and liked knowing that result. Some participants, however, were unclear whether or not there was any overlap in terms of the findings. As one said:

I assume they all had these statements to look at, to give a thumbs up thumbs down on. It seems too neat and clean to think that nine would choose these [bullet points] and eleven would choose [other bullets] without some sense of, in part, a middle that they all tended to agree on. Or, maybe there was a set of bullets that almost none of them [agreed on]? (P7)

Many participants indicated that the panelists’ votes did not affect their own positions. For instance, one participant said, “I really don’t care who is for it or against it. I just want the findings that they found” (P3). Another said, “It matters more what you vote than what other people vote” (P11). A third commented, “Just because a lot of people think something doesn’t mean it’s right” (P14).

One participant, however, worried how others might construe that vote: “I think the majority/minority things … are actually really screwed up. I know that a lot of people just look at those words and want to side with the majority … It’s the only way it’s weighted” (P19). Indeed, some participants whose views matched the minority of the panelists said that result made them feel less confident about their positions. “When I’m in [the] minority,” one said, “… am I missing the boat?” (P1).

Turning to the online survey data, Table 2 shows that many of those who read the CIR Statements did not recall the balance of panelist votes. Moreover, the direction of the panel vote is no guarantee of influence. In a 2010 phone survey, for instance, those reading the Citizens’ Statement on medical marijuana became more likely to oppose the measure, even though the panelists had split 13–11 in favor (Gastil & Knobloch, 2011). That particular Statement led with a Key Finding that worried about the enforceability of the measure, and substantive concerns such as those appeared more influential than the balance of panelist votes.

Our 2014 online Oregon survey provided a direct test of the importance of showing the panelist vote, and the results suggest it was not a critical piece of information. Those who had neither voted nor read the Voters’ Pamphlet at the time of the survey were shown a Citizens’ Statement on either Measure 90 or 92, and half within each group saw a Statement that had the panel vote removed. A fifth group served as a control group and saw no Statement before answering the questions that followed.
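The five-arm design described above (two measures, each with the panel vote shown or removed, plus a control) can be illustrated with a brief Python sketch. This is not the survey's actual assignment procedure, which is not documented here. The balanced round-robin allocation, the seed, the respondent count, and the names `CONDITIONS` and `assign_conditions` are all assumptions made for illustration.

```python
import random
from collections import Counter

# The five arms named in the text: 2 measures x (vote shown / vote removed), plus control.
CONDITIONS = [
    ("Measure 90", "panel vote shown"),
    ("Measure 90", "panel vote removed"),
    ("Measure 92", "panel vote shown"),
    ("Measure 92", "panel vote removed"),
    ("control", "no Statement"),
]


def assign_conditions(respondent_ids, seed=2014):
    """Shuffle respondents, then deal them round-robin into the five arms.

    Balanced allocation is an assumption; the study may have used simple
    (unbalanced) randomization instead.
    """
    rng = random.Random(seed)
    shuffled = list(respondent_ids)
    rng.shuffle(shuffled)
    return {rid: CONDITIONS[i % len(CONDITIONS)] for i, rid in enumerate(shuffled)}


# Hypothetical pool of 500 respondents (not the study's sample size).
assignment = assign_conditions(range(500))
arm_sizes = Counter(assignment.values())
for arm, size in arm_sizes.items():
    print(arm, size)
```

Comparing the two "shown" arms against the two "removed" arms isolates the effect of the vote tally, while comparing either against the control isolates the effect of reading a Statement at all.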

To see whether the difference in CIR Statements worked as designed, a quick check tested voter inferences about how the panelists voted on each measure, using only those respondents who spent at least 30 seconds on the screen that showed them the CIR Statement. Recall that for Measure 90, the CIR panel opposed the measure on a 14-5 vote. A majority (58%) of respondents not shown how the panel voted said they did not know the vote result; those who did venture a guess responded evenly across possible outcomes. In contrast, only 28% of those who read the Statement that included the panel vote were unsure, after the fact, how it had voted. The most common response (39%) was that “a large majority of citizen panelists opposed Measure 90,” with 7% recalling opposition by a smaller margin, 4% thinking the vote was even, and the remaining 21% having it backward.

The result for Measure 92 was similar, in that those not shown the vote were left to guess (with 49% admitting they did not know the vote result), whereas a modest plurality of those seeing the result recalled it correctly (26% remembering that “a small majority opposed Measure 92”). Given that the panelists opposed the measure 11-9, it is noteworthy that 15% of those shown the Statement with that tally recalled it as “a large majority” opposing the measure, and 27% recalled that the panel favored it.

Did seeing the panelist vote tally influence voters’ own decisions? There were no net voting effects for Measure 92 (GMO labeling), and the voting effect for Measure 90 was simply between the control group and the two groups that read the Statement, with or without panelist votes showing. The control group was more favorable toward Measure 90 (56% intending to vote “Yes”) than were those who were asked how they would vote after reading the CIR Statement (44%). Once again, the result was not significantly different for those who saw the CIR panel’s 14-5 split opposing Measure 90 than for those who saw the Statement without it.

Encouraging reluctant voters to mark their ballot

Whether or not voters gave credence to the CIR panel vote, per se, some Oregon participants in the usability tests reported that the CIR Statement reinforced their opinions and made them more confident in their vote. That translated for at least one participant, however, into encouragement to participate in the election itself: “It definitely makes me want to vote more because it helps you understand the issues” (P17).

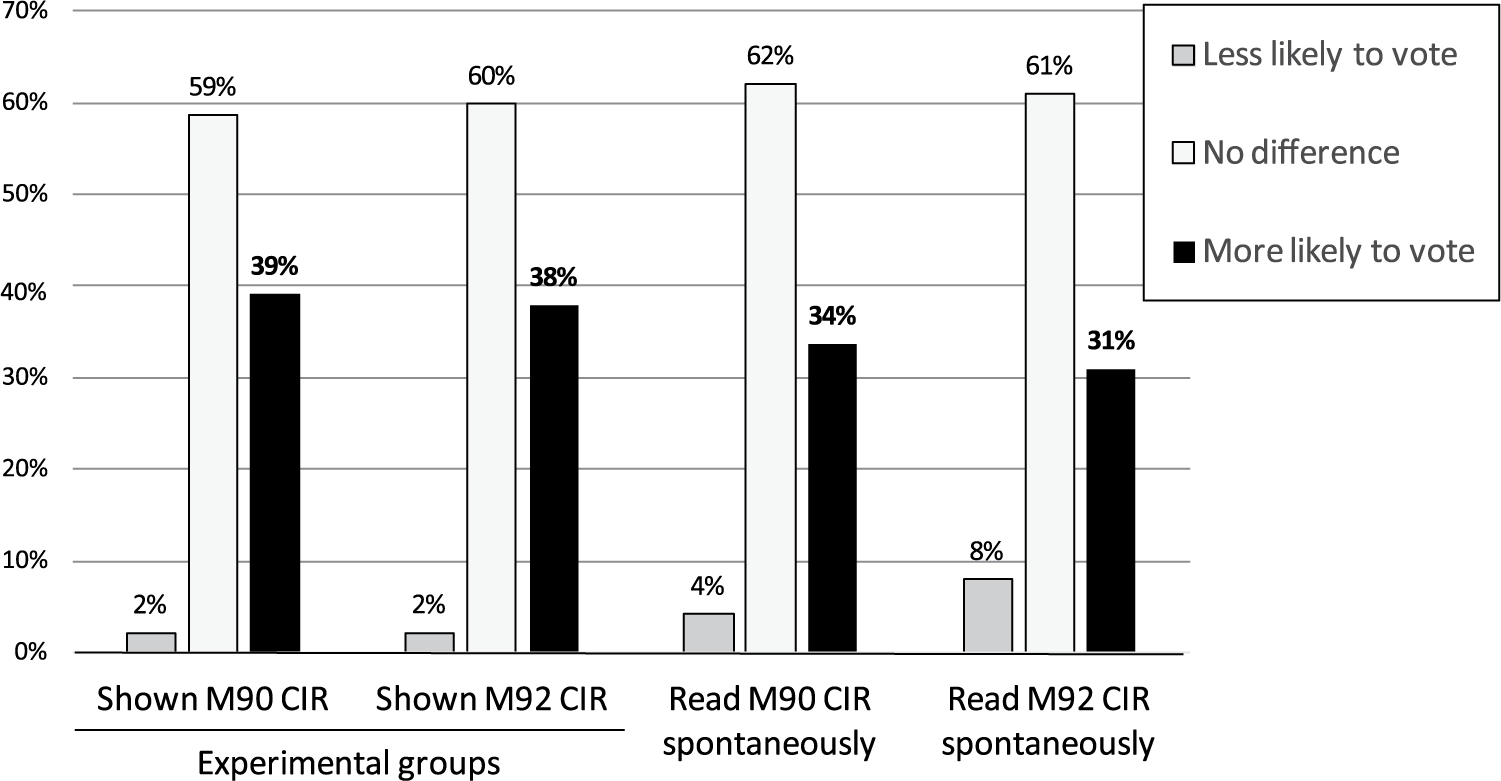

Although this theme did not arise often, it was intriguing enough to investigate in the online Oregon survey. How many voters might, as a result of reading the Citizens’ Statement, cast a vote on a measure that might have otherwise been left blank? We approached this subject with a question wording that acknowledged the fact that voters sometimes pass over issues on their ballot—a phenomenon known as ballot “drop off.” The question read:

Some people choose to skip over particular ballot measures while filling out their ballot. Did reading the Citizens Initiative Review statement … make you more likely to MARK YOUR BALLOT on this particular measure, less likely to do so, or did it make no difference?

Figure 1 shows the results from our online study for two different populations. The two sets of columns on the left of the figure are for those respondents who intended to vote but had not yet read the Voters’ Pamphlet; they were shown the CIR Statement during the experiment, and nearly 40% of them said reading it made them more likely to vote. The two sets of columns on the right are for voters who had already read the CIR Statement before the survey, and the result was similar, with a third being more likely to vote for having read the Statement. (Using a binomial nonparametric test, the difference between being more versus less likely to vote was statistically significant for all four groups, p < .01.)
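For readers unfamiliar with the binomial test mentioned above, the following Python sketch shows one common form of it: an exact two-sided test of whether "more likely" and "less likely" responses are equally probable among respondents who reported any change. Excluding "no difference" responses is our assumption about how such a test would be set up, and the counts are hypothetical placeholders, not the study's data.

```python
from math import comb


def binomial_two_sided_p(k: int, n: int, p: float = 0.5) -> float:
    """Exact two-sided binomial p-value.

    Sums the probabilities of every outcome that is no more likely than the
    observed count k under the null hypothesis (success probability p).
    """
    pmf = [comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(n + 1)]
    observed = pmf[k]
    # Small relative tolerance guards against floating-point ties.
    return min(1.0, sum(q for q in pmf if q <= observed * (1 + 1e-9)))


# Hypothetical counts for one group (NOT taken from the study):
more_likely, less_likely = 40, 8
p_value = binomial_two_sided_p(more_likely, more_likely + less_likely)
print(f"two-sided p = {p_value:.2g}")
print("significant at .01" if p_value < 0.01 else "not significant at .01")
```

With a split this lopsided, the null hypothesis of an even balance between "more likely" and "less likely" is rejected at the .01 level, which mirrors the form of the result reported for all four groups.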

Figure 1. Estimated likelihood that reading an Oregon Citizens’ Statement increases or decreases the likelihood that one will vote on the corresponding ballot measure, 2014.

Conclusion

The findings across these two studies have a range of implications both for deliberative theory generally and for the practice of the CIR, in particular. We begin with the broader significance, and then suggest practical steps to improve the CIR.

The CIR represents a deliberative body that could function as a “trustee” for the general public by providing it with neutral information that helps voters make decisions (Warren & Gastil, 2015). This is analogous to the “recommending force” that Fishkin (1991, p. 81) attributes to deliberative polls, although our research shows that the CIR has a ready audience prepared to read its findings. Moreover, voters clearly think of the CIR as a voting aid, not a guide, so it may be more appropriate to think of such bodies not as policy advisors but as providers of information that helps the public reach judgments.

The idea that a deliberative mini-public conducted at the micro-level could influence the macro-level public has great potential for designing deliberative systems, but the case of the CIR foregrounds the importance of ensuring public trust. Although most voters in our studies appreciated the role that the CIR plays in helping them make voting decisions, that trust may require more knowledge of how the CIR operates. An analogy to the jury is interesting here. The public’s faith in the jury system may reflect a greater familiarity with how juries operate, either from direct experience with that institution or from indirect exposure via popular media and social networks (Gastil et al., 2010). Given the small public samples participating in the CIR and processes like it, building public trust may prove more challenging owing to persistent unfamiliarity with the processes’ details.

The most heartening finding may be voters’ sense that reading a CIR Statement increases the likelihood that they participate in elections. It has become fashionable to contrast participatory and deliberative theories of democracy (e.g. Mutz, 2006), but this constitutes an example of a deliberative process increasing public willingness to participate in a conventional political act. The data in these studies do not show that access to the CIR makes non-voters into voters, but they do suggest that the Citizens’ Statements encourage some voters to complete sections of their ballots that they might otherwise have left blank.

Turning to the practical significance of these findings, the most pressing issue may be increasing the public’s familiarity with the CIR process. Such information can be provided in detail online, but most Statement readers will only learn what they read on the page presented in the Voters’ Pamphlet. The most economical way to reassure voters may be to provide a short link to the information online, as a kind of promissory note that voters who want to know more about the details can access readily. A full sentence atop the CIR statement regarding the conduct of the panel might also provide some reassurance regarding the rigorousness of the CIR.

More generally, the CIR needs a more robust public information campaign, both in Oregon and in any other state/municipality that adopts it. The CIR will have maximum impact if the Statement reaches a wider population. The fact that nearly half the Oregon electorate remains unaware of the CIR suggests it has a much larger potential audience. Either Oregon or one of the new CIR adopters should experiment with a more concerted public outreach effort to see how many voters can find their way to the CIR. Doing so is a logical next step after investing the effort into implementing the CIR.

Finally, the 20–24 CIR panelists represent only a small portion of those initially invited to participate. That larger public body could be invited to follow more closely the CIR deliberation and spread the word about the process. It is difficult to say how actively they might participate, but the Australian Citizens’ Parliament had modest success encouraging online engagement by those who were invited to apply but not selected for the face-to-face meetings it held in Canberra (Carson, Gastil, Hartz-Karp, & Lubensky, 2013).

Regardless of how the CIR changes in coming years, more work remains to be done to understand how this and other deliberative processes can effectively engage the public. The addition of five new CIR panels in 2014 more than doubled the total dataset available for investigating CIR deliberation and impact, and more will be learned in coming years that will refine it and processes that follow its general design. Only through such research can we continue to close the gap between the broad aspirations of deliberative theory and a strong empirical understanding of its real potential and its most persistent limitations.

Acknowledgements

For assistance with the design and execution of this study, we wish to thank Robert Richards and Brendan Lounsbury at Penn State, Lena Dmitrieva at the User Experience Center at Bentley University, Dustin Simmons and the staff at Qualtrics, and Tyrone Reitman and Lucy Greenfield at Healthy Democracy.

Funding

This ongoing research project has been supported by the National Science Foundation (Decision, Risk and Management Sciences Program, Award 1357276/1357444), the Kettering Foundation, The Democracy Fund, the University of Washington, Colorado State University, and the Pennsylvania State University. Any opinions, findings, conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of these foundations or universities.