Abstract

This study investigates COVID-19-related misinformation on Norwegian Twitter (X), using a mixed-methods approach to analyze a large corpus of 426,000 Norwegian-language tweets posted over the course of 3 years, focusing on the interplay between discursive strategies, ideological dynamics, and power relations. The quantitative analysis uses Structural Topic Modeling (STM) to identify and map the prevalence of key discourses. The STM revealed that COVID-19 misinformation on the platform was mainly concentrated around two discourses: politics and health. A qualitative critical discourse analysis was then used to explore how vaccine-related misinformation reinforced or challenged broader power dynamics and hegemonic ideologies around health, science, and freedom. Informed by the quantitative analysis, the discourse analysis focused on two prevalent misinformation topics, revealing how vaccine-critical discourses contest the authority of health institutions and the government by framing vaccines as dangerous, experimental, and illegal. These findings contribute to the broader understanding of how misinformation circulates and evolves in specific sociopolitical contexts. By analyzing the intersections of ideology, power, and discourse, the study highlights social media’s role in mediating public debates during health crises. The results emphasize that misinformation is not merely false or misleading information but a strategic challenge to hegemony, ideology, and power. Implications include the need for more nuanced approaches to combating misinformation that address its ideological and discursive appeal.

Introduction

On 12 March 2020, the then-prime minister of Norway, Erna Solberg, announced the strongest and most intrusive measures implemented in Norway during peacetime (Ministry of Health and Care Services, 2020). Schools and businesses closed, borders tightened, and daily life shifted dramatically. With this declaration, the COVID-19 pandemic was no longer a distant threat, but a present and pressing reality for Norwegians. Over the next few years, the virus would disrupt social life, reshape public discourse, and fuel a parallel crisis: an infodemic. As people sought information on how to protect themselves and deal with an uncertain and volatile reality, cascades of false and misleading information flooded the internet (Aïmeur et al., 2023).

Recent research on misinformation dissemination has explored its presence, reach, and implications, showing that misinformation spreads faster and is shared more often than true information on social media (Kornides et al., 2023; Pierri et al., 2023; Vosoughi et al., 2018). Belief in misinformation correlates negatively with trust in science (Agley & Xiao, 2021), and positively with rejecting expert information (Stecula & Pickup, 2021). Moreover, individuals are less likely to question information that aligns with their preconceptions (Lazer et al., 2018), raising concerns about how encountering, engaging with, and believing in misinformation might affect perceptions of reality. From a critical discourse analysis (CDA) perspective, misinformation reflects a struggle over hegemony, where competing ideologies seek to shape public understanding. In this context, misinformation functions as a tool of power, legitimizing certain worldviews while marginalizing others, and influencing societal norms, beliefs, and practices.

Norway offers an interesting perspective on misinformation discourses. The country is characterized by a high-trust media environment: 53% of the population trust most news, which ranks Norway eighth in a global context (Newman et al., 2023). The country is also highly digitalized, and the use of social media as a primary news source increased during the pandemic, from 17% in spring 2020 to 21% by October 2022 (Medietilsynet, 2023). This combination of high media trust and increased reliance on social media as an information source presents a unique context for exploring how misinformation circulates and gains traction within public discourse. Building on this context, this study investigates COVID-19 misinformation discourses among Norwegian Twitter (also known as X) users. Using a mixed-methods approach, the analysis combines topic modeling and CDA to explore how these discourses were used to reinforce or challenge hegemonic ideologies related to health, science, and freedom of choice.

Literature review

Defining misinformation

The term misinformation lacks a universally accepted definition and is often conceptually ambiguous in academic and media discourse. Some researchers use it interchangeably with disinformation and fake news (Kim et al., 2020; Micallef et al., 2020; Zeng & Chan, 2021), while others emphasize distinctions (Faisal & Mahendra, 2022; Kolluri et al., 2022). A common distinction is that misinformation refers to false and misleading information shared unintentionally, whereas disinformation refers to deliberate falsehoods. However, since intent is difficult to assess, this study adopts a broader definition of misinformation, encompassing any false, inaccurate, or misleading information, regardless of intent (Sanaullah et al., 2022). As such, the concept of misinformation discussed here may intersect with related phenomena such as conspiracy theories, rumors, propaganda, satire, and trolling.

Investigating misinformation

A key interest in CDA, which separates the tradition from other approaches to discourse analysis, is the central role of power and ideology, and how language is used to create, reinforce, or challenge power relations and ideologies. Given that misinformation often serves ideological functions, such as legitimizing or contesting authority (Bhatia & Arora, 2024; Larki & Manouchehri, 2022; Maci, 2019), CDA is believed to be particularly useful in the study of misinformation. Despite this, only a limited number of studies have used CDA to explore the propagation of COVID-19 misinformation on social media.

Existing qualitative CDA studies on COVID-19 misinformation reveal diverse ideological and geopolitical dynamics. Bhatia and Arora (2024) analyzed anti-Muslim disinformation on Twitter, revealing how discursive strategies were used to reinforce pro-Hindutva ideologies, particularly through the use of the us/them binary. In a similar vein, Furman et al. (2023) investigated Russian vaccine misinformation on Turkish Twitter, demonstrating how SputnikTR’s coverage of the COVID vaccines propagated pro-Russian and anti-Western narratives. Scardigno et al. (2023) examined fake and conspiratorial news that circulated on Italian social media during the pandemic, focusing on the rhetorical strategies used by the disseminators. Their findings highlight that, even though fake news and conspiracy news may share common roots, they engage in different rhetorical patterns, with fake news focusing more on plausibility and credibility to reveal pandemic-related effects, and conspiracy news relying more on empathy to emphasize hidden systemic flaws.

Given the vast scale of misinformation propagation on social media, qualitative studies alone may struggle to capture broader patterns. To address this challenge, researchers have increasingly combined qualitative CDA with topic modeling, a computational method that identifies thematic patterns within text corpora. By identifying patterns and structures in large datasets, topic modeling provides a valuable complement to qualitative approaches, enabling a comprehensive examination of how misinformation is propagated and understood in online environments.

Several studies have demonstrated the effectiveness of integrating topic modeling with qualitative analysis. In a recent paper, Hamdi (2024) applied a combination of topic modeling and Van Dijk’s framework of the ideological square to analyze Arabic-language misinformation on Twitter. The findings demonstrate how fake news was used to promote ideological struggle between social groups, and how the discourse structures surrounding misinformation involved ideological polarization, self-identification, and goal description. Haupt et al. (2023) combined topic modeling, sentiment analysis and qualitative content coding to examine COVID-19 and 5G conspiracy theories on Twitter, identifying key thematic clusters such as distrust in global elites and medical authorities, as well as anti-vax sentiments. Cárcamo-Ulloa et al. (2023) used topic modeling alongside multimodal discourse analysis to investigate fake news discussions across multiple social media platforms in Chile. Their findings revealed that the Chilean media discussed fake news more prominently on Twitter and Facebook than on Instagram, and tended to focus on replicating controversies involving fake news rather than trying to debunk or clarify them.

These studies illustrate the global diversity of misinformation and provide valuable methodological insights. In the study of misinformation, Norway presents a unique case, where trust in the news media, the government, and health authorities is generally high, but vaccine skepticism has nonetheless persisted in specific online communities (Delebekk, 2024; Skiphamn, 2022). The COVID-19 pandemic saw an increase in individuals challenging the legitimacy of Norwegian public health policies and criticizing the government’s handling of the crisis. In particular, a 2019 report from the Norwegian Directorate for Civil Protection (DSB, 2019), which identifies a pandemic as the most likely and most devastating crisis scenario to occur in the near future, was widely used to critique the authorities’ lack of preparation (Andreassen & Furuly, 2021). However, no previous study has systematically investigated how Norwegian social media users engaged with misinformation to challenge and contest hegemonic structures during the pandemic. By applying a mixed-methods approach that integrates topic modeling with CDA, this study aims to uncover the discursive strategies used in vaccine-related misinformation on Norwegian Twitter, contributing to a deeper understanding of how misinformation challenges or reinforces dominant perspectives within Norway’s sociopolitical framework.

Contribution and research questions

This study contributes to the literature on COVID-19 misinformation in three distinct ways. First, it examines the Norwegian context, offering a unique perspective from a country characterized by high trust in media and institutions (Newman et al., 2023; Organization for Economic Cooperation and Development (OECD), 2024), contrasting with the previous literature which typically focuses on general online discussions, or discussions within low-trust environments. Second, it addresses a significant gap in the comparative analysis of misinformation and non-misinformation discussions, providing insights into their similarities and differences. Third, the study incorporates a temporal dimension, investigating how misinformation-related discourses and their prevalence evolved over a time period of 3 years.

A particular focus is placed on vaccine-related misinformation due to its potential societal impact. Vaccine misinformation can directly influence public health behavior, with consequences for vaccine uptake, herd immunity, and trust in public health institutions. In the Norwegian context, vaccine programs have historically enjoyed broad public support (Norwegian Institute of Public Health [FHI], 2023), yet skepticism has persisted in certain cases, most notably following the Pandemrix-narcolepsy controversy in the aftermath of the 2009 H1N1 pandemic (FHI, 2017; Jansen, 2018). This event contributed to lingering concerns about vaccine safety and may have shaped the reception of COVID-19 vaccines among segments of the population. The study thus situates vaccine-related misinformation within a broader historical and sociopolitical landscape, recognizing its capacity to both challenge and reinforce dominant power structures.

To explore this, the study attempts to answer the following research questions:

RQ1: What discourses surrounding COVID-19-related misinformation were prominent on Norwegian Twitter during the pandemic?

RQ2: How did misinformation-related discourses compare to non-misinformation discourses in terms of prevalence?

RQ3: In what ways do vaccine-related misinformation discourses contribute to reinforcing or challenging broader power dynamics and hegemonic ideologies around health, science, and freedom?

By addressing these questions, this study aims not only to provide an in-depth analysis of COVID-19 misinformation on Norwegian Twitter, but also to contribute to the broader discussions on discourse, ideology, and power in the context of a global health crisis.

Theoretical framework: CDA

This study is informed by CDA, as related mainly to Norman Fairclough’s understanding of hegemony, ideology, and power (Fairclough, 2003, 2010). Fairclough’s (2003) approach to discourse analysis is based on the premise that language is an integral part of social life, “dialectically interconnected with other elements of social life” (p. 2); CDA is thus the analysis of these dialectical relationships. This interconnectedness means that discourse both shapes and is shaped by social structures, making language a key mechanism through which power is exercised, resisted, and negotiated. CDA, therefore, provides a systematic approach to analyzing how discourse contributes to the maintenance or contestation of social realities.

The misinformation discourse surrounding COVID-19 on Norwegian Twitter is deeply entwined with ongoing ideological struggles over societal issues, such as trust in science, government authority, and personal freedoms. In this context, CDA is particularly well-suited for investigating how misinformation discourses function as counter-hegemonic narratives that challenge dominant public health discourses. Fairclough’s concepts of hegemony and power are particularly relevant, as they allow us to examine how dominant narratives surrounding the pandemic are constructed and maintained through discourse, shaping public perception and legitimizing certain viewpoints while marginalizing others. Thus, CDA enables a nuanced examination of how power is enacted through language, uncovering the ideological underpinnings of misinformation discourses and their role in broader struggles over meaning and legitimacy. By applying CDA to the examination of misinformation discourses, we can uncover how these discourses not only reflect existing power relations but also contribute to their reproduction and transformation, influencing public opinion and behavior throughout the crisis.

Hegemony, ideology, and power

Fairclough (2003) relates his understanding of hegemony to Gramsci, viewing it as the process of universalizing particular meanings in the service of achieving and maintaining dominance. Drawing on Gramsci’s concept of hegemony, Fairclough thus emphasizes that power is not maintained simply through coercion, but also by securing the consent of marginalized groups through the normalization of certain discourses. However, hegemony is never completely stable; it is only ever partially and temporarily achieved, as an “unstable equilibrium” (Fairclough, 2010). Hegemony is thus not solely about dominance, but also about the process of negotiation in which a consensus regarding meaning is established. This perspective is particularly relevant when examining vaccine misinformation, as it provides a framework for understanding how misinformation discourses emerge as counter-discourses that contest hegemonic health narratives.

The process of establishing and maintaining hegemony is, in Fairclough’s (2003) view, ideological work. Ideologies are constructions of meaning that contribute to establishing, maintaining, and changing social relations of power, domination, and exploitation (Fairclough, 2003). Ideologies are embedded within discourse and play a central role in reinforcing social power by shaping how people perceive social relations, often in ways that uphold existing hierarchies. Consequently, ideology acts as a modality of power, influencing social practices and contributing to the “common sense” of a society. The power of misinformation discourses lies in their ability to construct alternative social realities that resonate with specific audiences, such as by framing dominant health discourses as oppressive or untrustworthy. As such, misinformation is not simply false information but part of an ongoing ideological struggle over meaning and authority.

Within CDA, language does not possess power in itself; rather, it derives power through the ways in which people use it (Baker et al., 2008). Power is exercised and negotiated through discourse, yet power in discourse is not fixed but contested. This specific focus on power in discourse distinguishes CDA from other discourse analysis methods, allowing for an investigation of how discursive structures reinforce or challenge dominant perspectives. As discursive practices contribute to the (re)construction of power relations, discourses can promote the interests of certain social groups, and sustain or challenge existing power structures (Fairclough, 2015). Following this, misinformation about COVID-19 represents both a challenge to hegemonic discourses and an ideological struggle over power. CDA provides a robust analytical framework for understanding how misinformation discourses function as ideological frames that seek to (re)shape public understandings of health and governance. Through CDA, it becomes possible to examine how misinformation gains traction by offering alternative social realities that appeal to specific audiences, thereby challenging existing hierarchies and influencing the broader discourse landscape.

Importantly, CDA emphasizes that discourses are dynamic, continuously evolving in response to shifting social conditions (Fairclough, 2003). The misinformation discourse on Norwegian Twitter likely transformed over the course of the pandemic. Analyzing these shifts provides insight into how misinformation discourses adapt to maintain relevance and influence, reflecting broader ideological and power struggles as the pandemic unfolded.

Methodology and data

Drawing on a mixed-methods framework, this study uses topic modeling to identify and map the discourses related to COVID-19 discussions on Norwegian Twitter during the pandemic. The results of this quantitative analysis are then used to inform the later qualitative discourse analysis. Studies implementing such a combination are typically referred to as corpus-assisted discourse studies (CADS). In the following sections, I first present the data used for this study, including how it was collected and preprocessed. Following this, the specific type of topic model used in this article (STM) is presented, before the combination of STM and CDA is discussed in relation to CADS.

Data and preprocessing

The data used in this study consist of approximately 426,000 COVID-19-related tweets in Norwegian, spanning from 1 January 2020 to 31 December 2022. This dataset has previously been classified to identify tweets containing misinformation, as detailed in Frisli (2025). Specifically, a small subsample of the dataset was first manually classified by three independent annotators, using a binary coding scheme (misinformation or not misinformation). This sample was then used in a semi-supervised self-training framework to train a classifier to identify misinformation in the rest of the data. Through 20 self-training iterations, this model was able to classify approximately half of the dataset with an F1-score of 0.98. A manual validation of the classified data resulted in a satisfactory kappa score of 0.791.
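
As an illustration of the agreement statistic reported above, the following sketch computes Cohen's kappa between classifier labels and manual validation labels. It is a minimal example under stated assumptions: the text does not say which kappa variant was used, so Cohen's kappa for two label sequences is assumed here, and the function name and toy labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two equally long label sequences."""
    n = len(labels_a)
    # Observed agreement: share of items given the same label by both sources.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected agreement if both sources labeled independently at their own base rates.
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    categories = set(labels_a) | set(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in categories) / (n * n)
    return (observed - expected) / (1 - expected)


# Toy data (hypothetical): 1 = misinformation, 0 = not misinformation.
model_labels = [1, 1, 1, 0, 0, 0, 1, 0, 1, 0]
manual_labels = [1, 1, 1, 0, 0, 0, 1, 0, 0, 1]
kappa = cohens_kappa(model_labels, manual_labels)
```

The statistic is undefined when expected agreement equals 1 (for example, when both sources assign a single label to every item), so a real validation would need to guard against that case.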

Every study that explores texts quantitatively must make decisions on how to preprocess the data, that is, how best to convert the texts into numbers. In this study, several steps were taken. Texts were tokenized into single words, with two exceptions: hyphenated words like “COVID-19” were kept intact, and common n-grams identified through manual exploration were treated as single tokens. This approach enhances model interpretability by preserving some important context, such as distinguishing between “news” and “fake news.” After tokenization, punctuation and symbols were removed from the texts, except for the symbol “#,” which was kept in the data due to its very specific use on Twitter. Furthermore, URLs were removed from the texts, and stopwords were removed as the last preprocessing step.
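
The preprocessing steps described above can be sketched in a few lines of Python. This is a minimal illustration rather than the pipeline used in the study: the n-gram and stopword lists are hypothetical placeholders, lowercasing is an added assumption, and URLs are stripped first here (rather than after tokenization) so that the regular expression does not split them into fragments.

```python
import re

# Hypothetical placeholders; the study's actual n-gram and stopword lists are not given.
NGRAMS = {("fake", "news"): "fake_news"}
STOPWORDS = {"og", "i", "er", "det", "om"}

URL_RE = re.compile(r"https?://\S+|www\.\S+")
# A token is an optional '#', then word characters, optionally joined by hyphens.
# Everything else (punctuation, other symbols) is dropped by not being matched.
TOKEN_RE = re.compile(r"#?\w+(?:-\w+)*")


def preprocess(text):
    """Turn one tweet into a list of tokens."""
    text = URL_RE.sub(" ", text.lower())
    tokens = TOKEN_RE.findall(text)
    merged = []
    i = 0
    while i < len(tokens):
        pair = tuple(tokens[i:i + 2])
        if pair in NGRAMS:
            # Treat a known two-word expression as a single token.
            merged.append(NGRAMS[pair])
            i += 2
        else:
            merged.append(tokens[i])
            i += 1
    # Stopword removal is the last step.
    return [t for t in merged if t not in STOPWORDS]


tokens = preprocess("Det er fake news om COVID-19! #korona https://t.co/abc")
```

In this toy example, the hyphenated term and the hashtag survive as single tokens, while the URL, the punctuation, and the stopwords are removed.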

STM

Topic modeling is an unsupervised machine learning approach used to uncover latent thematic structures in large text collections. A topic is defined as a distribution of words that frequently co-occur in similar contexts. The model assumes that each document (in this case, a tweet) consists of a mixture of topics, with certain topics being more or less dominant depending on the text. STM is a topic modeling algorithm specifically designed for social science research, where textual data typically needs to be analyzed in ways that account for external factors like time, authorship, or other covariates (Roberts et al., 2019). Unlike other popular algorithms, such as latent Dirichlet allocation (LDA), STM incorporates metadata, allowing for the analysis of how both the prevalence and content of topics are influenced by contextual variables. This feature makes STM particularly well-suited for this article, allowing for the investigation of how misinformation discourses evolve over time and differ from non-misinformation discourses.

In the present analysis, two types of covariates are specified. First, the interaction between misinformation label and month of posting is included as a prevalence covariate, enabling an investigation of how topic prevalence varies between misinformation and non-misinformation tweets over a 3-year period. Second, the misinformation label is specified as a content covariate, meaning that the vocabulary within topics can shift depending on whether the tweet is categorized as misinformation or not. This allows for a nuanced comparison of how discourses are structured within and outside of misinformation narratives. The ability of STM to model both prevalence and content variation provides a significant advantage over alternative topic modeling approaches, making it particularly useful for investigating discursive dynamics.

Although topic modeling is generally considered an unsupervised machine learning approach, one hyperparameter has to be specified prior to model fitting, namely the number of topics (K) to be identified. There exists no objectively “correct” value for K, as it depends on the structure of the data and the purpose of the analysis (Roberts et al., 2019). The choice of K influences topic interpretability, with higher values yielding more granular but narrower topics, while lower values produce broader but less distinct topics (Maier et al., 2018). Given this trade-off, the selection of K should be guided by the research questions. In this study, topics are understood as discursive elements (Jacobs & Tschötschel, 2019), meaning the model must capture both the diversity of discourses and their underlying structures without over-fragmenting the data. To determine an appropriate K, multiple models were tested, balancing semantic coherence (how well topics align with human interpretation) and exclusivity (the degree to which key terms in one topic are distinct from those in others). A K of 30 was selected, as it provided a good balance between interpretability and granularity, ensuring that topics remained meaningful while capturing important distinctions within the discourse.
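
To make the coherence criterion more concrete, the sketch below computes a co-occurrence-based semantic coherence score for one topic, a definition often used for this diagnostic: for each pair of top words, it takes the log of the number of documents containing both words (plus one) divided by the number of documents containing the higher-ranked word. Whether the study used exactly this definition is an assumption, and the function name and toy corpus are hypothetical.

```python
import math
from itertools import combinations


def semantic_coherence(top_words, documents):
    """Sum of log((D(w_i, w_j) + 1) / D(w_j)) over all pairs of top words.

    top_words: a topic's top words, ordered from most to least probable.
    documents: iterable of token lists. Every top word is assumed to occur
    in at least one document, otherwise D(w_j) would be zero.
    """
    doc_sets = [set(doc) for doc in documents]

    def doc_freq(*words):
        # Number of documents that contain all of the given words.
        return sum(all(w in d for w in words) for d in doc_sets)

    score = 0.0
    # combinations() preserves order, so w_j always ranks above w_i.
    for w_j, w_i in combinations(top_words, 2):
        score += math.log((doc_freq(w_i, w_j) + 1) / doc_freq(w_j))
    return score


# Toy corpus (hypothetical tokens).
docs = [
    ["vaksine", "bivirkning"],
    ["vaksine", "bivirkning"],
    ["vaksine", "strøm"],
    ["strøm", "pris"],
]
coherent = semantic_coherence(["vaksine", "bivirkning"], docs)
incoherent = semantic_coherence(["strøm", "bivirkning"], docs)
```

Scores closer to zero indicate that a topic's top words tend to appear in the same documents. Comparing such scores, alongside an exclusivity measure, across models fitted with different values of K is one way to operationalize the trade-off described above.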

Through the use of STM, the analysis benefits from a data-driven, systematic approach to identifying discourse patterns, while still maintaining sensitivity to contextual shifts and ideological struggles, as emphasized in the CDA framework. This methodological choice enhances the study’s ability to track how misinformation-related discourses evolve, interact with hegemonic ideas, and reproduce or challenge dominant ideologies.

CADS

CADS is a methodological approach that combines corpus linguistics and discourse analysis, making it particularly effective for identifying patterns, themes, and discursive strategies in large datasets. Traditionally, CDA begins with a macro-level examination of power and ideologies before analyzing their contextualization in specific texts. This approach faces two main critiques: the risk of arbitrary text selection and the reliance on small datasets that may miss systematic linguistic patterns. Combining CDA with more quantitative methods for text analysis addresses these limitations by providing an inductive, data-driven approach. It allows for the identification of recurring patterns without pre-selecting texts, improving representativeness by analyzing larger datasets (DiMaggio et al., 2013; Törnberg & Törnberg, 2016). This enhances the discovery of subtle, cumulative discursive patterns, offering a more comprehensive and systematic understanding of social practices and underlying ideologies.

In this article, CDA is combined with topic modeling, as this combination is found to be particularly well-suited for the investigation of power and ideology in discourse. In Fairclough’s dialectical-relational approach to discourse, words derive meaning from their context rather than possessing fixed definitions. This principle is also reflected in topic modeling, which assigns words to multiple topics depending on contextual usage (Jacobs & Tschötschel, 2019). For instance, the word “mask” may appear in topics related to public health, political resistance, or conspiracy theories, each with different connotations. By integrating CADS, this study ensures that the analysis of misinformation discourse remains sensitive to these contextual shifts, capturing the fluidity of meaning in ideological struggles.

Because CDA typically relies on small data samples, the study of hegemony in discourse is usually focused on moments where a hegemony breaks down or is established (Jacobs & Tschötschel, 2019). But within CDA, hegemony is also about naturalization: the unquestioned acceptance of an idea as commonsensical (Fairclough, 2010). Studying hegemony only through moments of rupture provides an indirect understanding of the phenomenon: it assumes that hegemony exists and then examines its establishment or breakdown (Jacobs & Tschötschel, 2019). If naturalized discourse is the most effective mechanism for sustaining and reproducing hegemony (Fairclough, 2010), then analyzing patterns and regularities in texts offers a way to study hegemony in its ongoing reproduction. Topic modeling addresses this by complementing the exceptional cases with numerous ordinary instances where hegemony is unquestioningly reproduced. By identifying recurring patterns and implicit assumptions in a large corpus, it enables the systematic study of hegemony’s reproduction, normalization, and gradual transformations over time.

Through this mixed-methods approach, CADS strengthens the paper’s ability to systematically analyze how misinformation discourses challenge or reinforce hegemonic narratives, ensuring that the qualitative findings are empirically grounded while maintaining the critical lens of CDA.

Results: STM

The first step of the analysis employs topic modeling to identify prominent discourses in COVID-19-related misinformation and compare their prevalence to that of non-misinformation. While this section does not offer a full discourse analysis in the CDA tradition, it serves a crucial role in uncovering systematic patterns that inform the later qualitative analyses. By mapping the thematic structure of the dataset, the topic model allows for a data-driven approach to identifying which discourses are more strongly associated with misinformation and how they evolved over the time period.

Table 1 summarizes the topics from the STM, ordered from highest to lowest average topic proportion. The topics were interpreted manually through a close reading of the top 20 FREX words for each topic, where FREX is the weighted harmonic mean of a word’s exclusivity and frequency within a topic (Roberts et al., 2019). Out of the 30 topics, 4 were not easily interpretable, largely consisting of function words. These four topics were excluded from further analysis. A fifth topic (Sports) was also excluded because it was deemed irrelevant to the analysis.
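
The FREX idea can be illustrated with a short sketch: each word's frequency and exclusivity within a topic are converted to empirical-CDF ranks, which are then combined through a weighted harmonic mean. This is a simplified illustration, not the implementation behind the reported results. The function names, the toy topic-word matrix, and the weight of 0.5 are assumptions.

```python
def ecdf_ranks(values):
    """Empirical CDF value of each entry: the share of entries that are <= it."""
    n = len(values)
    return [sum(other <= v for other in values) / n for v in values]


def frex_scores(beta, topic, weight=0.5):
    """FREX score for every word in one topic.

    beta: list of topics, each a list of word probabilities in the same vocabulary order.
    weight: weight placed on exclusivity (0.5 is an assumed value).
    """
    freq = beta[topic]
    # Exclusivity: a word's probability in this topic relative to its total across topics.
    totals = [sum(row[v] for row in beta) for v in range(len(freq))]
    excl = [f / t for f, t in zip(freq, totals)]
    f_rank = ecdf_ranks(freq)
    e_rank = ecdf_ranks(excl)
    # Weighted harmonic mean of the two ranks.
    return [1.0 / (weight / e + (1.0 - weight) / f) for e, f in zip(e_rank, f_rank)]


# Toy example (hypothetical): two topics over a four-word vocabulary.
vocab = ["vaksine", "strøm", "og", "er"]
beta = [
    [0.35, 0.15, 0.40, 0.10],  # topic 0
    [0.05, 0.50, 0.40, 0.05],  # topic 1
]
scores = frex_scores(beta, topic=0)
ranked = sorted(zip(vocab, scores), key=lambda pair: pair[1], reverse=True)
```

In this toy example, "og" is the most probable word in topic 0 but is equally probable in topic 1, so the more exclusive "vaksine" receives the highest FREX score. This is why FREX words tend to be more useful for labeling topics than raw word probabilities.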

Table 1. Summary of STM. The columns contain each topic’s interpretation and average proportion.

Importantly, by using the classification label as a covariate in the STM, it becomes possible to compare the prevalence of each topic between misinformation and non-misinformation tweets. To do this, a regression is estimated using the interaction between classification label and month of publishing for each tweet. The outcome is the expected topic proportion per group. Figure 1 shows the estimated proportions for each topic, with a 95% confidence interval. As illustrated by the figure, while some topics have nearly the same proportion in the two groups (e.g. 22 and 16), certain topics exhibit greater differences.
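
The kind of group comparison described above can be approximated with a much simpler calculation, sketched here as a stand-in rather than a reproduction of the estimated regression: the mean proportion of a single topic per classification label, with a normal-approximation 95% confidence interval. The sketch ignores the month interaction and the uncertainty in the topic proportions themselves. The function name and the toy values are hypothetical.

```python
import math
from collections import defaultdict
from statistics import mean, stdev


def group_proportions(theta, labels, z=1.96):
    """Mean topic proportion with a normal-approximation CI for each label.

    theta: per-tweet proportions for one topic.
    labels: classification label of each tweet (at least two tweets per label).
    Returns {label: (mean, lower, upper)}.
    """
    groups = defaultdict(list)
    for value, label in zip(theta, labels):
        groups[label].append(value)
    result = {}
    for label, values in groups.items():
        m = mean(values)
        # Standard error of the mean, scaled by z for the interval half-width.
        half_width = z * stdev(values) / math.sqrt(len(values))
        result[label] = (m, m - half_width, m + half_width)
    return result


# Toy data (hypothetical proportions for one topic).
theta = [0.10, 0.20, 0.30, 0.02, 0.04, 0.06]
labels = ["misinformation"] * 3 + ["non-misinformation"] * 3
estimates = group_proportions(theta, labels)
```

Non-overlapping intervals between the two labels would correspond to the topics that stand out as distinctly more prevalent in one group.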

Figure 1. Topic proportion by classification label with 95% CI.

One notable trend is the higher prevalence of misinformation within certain topics. As seen from Figure 1, topics 6 (Economy and Finance), 12 (Mortality Rates and Vaccination Efforts), 1 (Characteristics of the Virus), 13 (International News Discussions), 17 (Vaccine Discussions: Experimental and Illegal Vaccines), 18 (International Politics), 2 (Dangerous Vaccines and Side Effects), and 4 (American Politics) are distinctly more prevalent among the misinformation tweets than among the non-misinformation ones. Conversely, there are fewer topics with a distinctly higher topic proportion among the non-misinformation tweets, with the sole exception being topic 26 (Local News). Among the topics heavily associated with misinformation, two general clusters can be seen: topics related to politics and topics related to health. In contrast, non-misinformation tweets seem to exhibit a broader thematic scope, emphasizing local news and general political content.

These two clusters are understood as representing two broader discourses within the data, one pertaining to discussions about health and one to discussions about politics, both in the context of COVID-19. To further address the first and second research questions, the next section takes a closer look at these two discourses to see how their prevalence evolved throughout the pandemic in relation to misinformation and non-misinformation tweets.

Political discourses

Four topics, characterized by an overall heavy presence of misinformation, are understood as representing a political discourse within the Twitter discussions. Figure 2 shows how the prevalence of each of these four topics evolved over the course of the 3 years between January 2020 and December 2022, with respect to misinformation and non-misinformation tweets. For each topic, the dark line shows the pattern for the misinformation tweets, while the lighter line represents the non-misinformation tweets. To make the patterns across the different topics more comparable, the y-axis is kept constant.

Figure 2. The temporal trends for the political discourses.

In terms of prevalence, the different topics exhibit distinctly different trends. For topic 4 (American Politics), the misinformation prevalence shows a marked increase around mid-2020, peaking notably in late 2020, coinciding with the US presidential election period. After this, the prevalence of misinformation drops significantly and stays relatively stable at a lower level for the rest of the pandemic. The non-misinformation tweets follow a similar pattern, but with an overall lower prevalence. Within topic 6 (Economy and Finance), the misinformation tweets show some minor fluctuations over the period, while the non-misinformation tweets experience a sharp increase from mid-2022, likely corresponding to the ongoing economic downturn, with the cost of electricity being a widely discussed topic in the latter half of 2022.

In topic 13 (International News), the misinformation tweets peaked in mid-2020, followed by a notable drop before climbing again in early 2022. The non-misinformation tweets exhibit a generally low and stable prevalence, except at the very start and end of the time period shown, where the prevalence seems to be relatively high. Finally, topic 18 (International Politics) sees an overall steady decline in prevalence over the course of the pandemic; however, the misinformation tweets do experience a slight peak in mid-2021.
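The kind of temporal curve discussed above can be approximated with a simple monthly aggregation. The sketch below is a simplification for illustration only: it computes raw monthly means of one topic's proportion per group, whereas the plotted trends may come from a smoothed model-based estimate. The function name, the ISO date strings, the 0-based topic index, and the toy values are all assumptions.

```python
from collections import defaultdict


def monthly_prevalence(dates, thetas, is_misinfo, topic):
    """Mean proportion of one topic per (month, group).

    dates:      ISO date strings such as "2020-11-03"
    thetas:     per-tweet topic-proportion lists
    is_misinfo: booleans, True for a misinformation tweet
    topic:      0-based index of the topic to track
    Returns a dict keyed by ("YYYY-MM", is_misinfo flag).
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for date, theta, flag in zip(dates, thetas, is_misinfo):
        key = (date[:7], flag)  # the first seven characters are "YYYY-MM"
        totals[key] += theta[topic]
        counts[key] += 1
    return {key: totals[key] / counts[key] for key in totals}


# Toy data (hypothetical): three tweets, two topics.
toy_dates = ["2020-11-03", "2020-11-20", "2020-12-01"]
toy_thetas = [[0.5, 0.5], [0.3, 0.7], [0.1, 0.9]]
toy_flags = [True, True, False]
toy_series = monthly_prevalence(toy_dates, toy_thetas, toy_flags, topic=0)
```

Plotting the values for the `True` keys and the `False` keys as two lines over the sorted months gives a rough counterpart to the dark and light lines in the figure.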

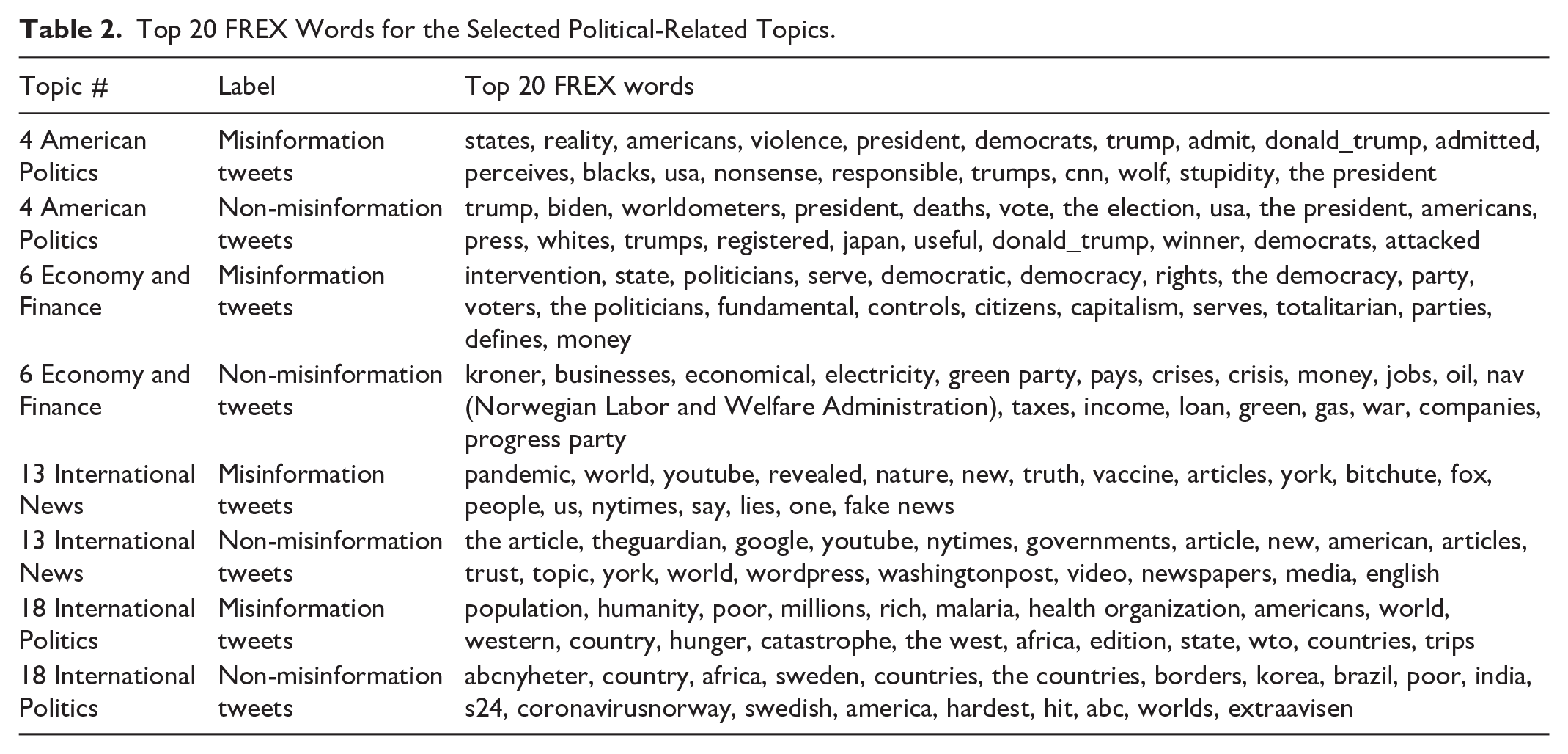

Table 2 lists the 20 most exclusive and frequent words (FREX) for the misinformation and non-misinformation tweets within each of the political topics. These words are analyzed in their original Norwegian form but translated to English by the author.

Top 20 FREX Words for the Selected Political-Related Topics.
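To make the FREX ranking concrete, the following sketch computes it from a topic-word probability matrix. It follows the commonly described definition of FREX as a weighted harmonic mean of a word's within-topic rank on exclusivity and on frequency. The weight of 0.5, the function names, and the toy matrix are assumptions for illustration, not the settings used in this study.

```python
def ecdf_ranks(values):
    """Empirical CDF value of each entry: rank / n, where rank 1 is the smallest."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    for position, index in enumerate(order, start=1):
        ranks[index] = position / len(values)
    return ranks


def frex_scores(beta, weight=0.5):
    """FREX score for every (topic, word) pair.

    beta:   list of topics, each a list of word probabilities
    weight: share given to exclusivity (assumed 0.5 here)
    """
    n_words = len(beta[0])
    # Total probability mass of each word across all topics.
    column_totals = [sum(row[v] for row in beta) for v in range(n_words)]
    scores = []
    for row in beta:
        exclusivity = [row[v] / column_totals[v] for v in range(n_words)]
        excl_cdf = ecdf_ranks(exclusivity)
        freq_cdf = ecdf_ranks(row)
        scores.append([
            1.0 / (weight / e + (1.0 - weight) / f)
            for e, f in zip(excl_cdf, freq_cdf)
        ])
    return scores


def top_frex_words(beta, vocab, n=20, weight=0.5):
    """Return the n highest-scoring FREX words for each topic."""
    result = []
    for row_scores in frex_scores(beta, weight):
        order = sorted(range(len(vocab)), key=lambda i: -row_scores[i])
        result.append([vocab[i] for i in order[:n]])
    return result


# Toy data (hypothetical): two topics over a four-word vocabulary.
toy_vocab = ["vaccine", "immune", "electricity", "kroner"]
toy_beta = [[0.5, 0.3, 0.1, 0.1],
            [0.1, 0.1, 0.3, 0.5]]
toy_top = top_frex_words(toy_beta, toy_vocab, n=2)
```

Because the score combines exclusivity with frequency, a word that is common in every topic ranks low even if it is frequent, which is why FREX lists tend to be more distinctive than plain highest-probability lists.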

The FREX words for topic 4 (American Politics) confirm that this topic is heavily influenced by the 2020 US election period, with several words directly referencing key political figures and events. However, notable differences emerge in sentiment and framing. Misinformation tweets feature words like “violence,” “nonsense,” “stupidity,” and “responsible,” suggesting a more confrontational and critical tone. In addition, terms such as “admit” and “perceives” may indicate a focus on claims of deception or hidden truths. In contrast, non-misinformation tweets include words such as “vote,” “worldometers,” and “winner,” possibly reflecting a more neutral or factual discussion about election processes and outcomes.

For topic 6 (Economy and Finance), the two categories exhibit a strikingly different focus. Misinformation tweets prominently feature words related to governance and political ideology, such as “state,” “democratic,” “rights,” “totalitarian,” and “controls,” reflecting a discourse that seemingly questions government intervention and economic policies. Terms like “capitalism,” “citizens,” and “serves” further suggest a critical perspective on political and economic structures. In contrast, non-misinformation tweets display a more pragmatic economic focus, with words like “kroner” (the Norwegian currency), “electricity,” “businesses,” “taxes,” “oil,” and “loan” indicating discussions about financial policies, economic crises, and energy costs. This divergence underscores a possible trend in how misinformation tweets tend to frame economic discussions within broader ideological narratives, whereas non-misinformation tweets are more oriented toward specific economic issues.

Topic 13 (International News) presents another clear distinction in framing. While both misinformation and non-misinformation tweets reference global and online media outlets such as The New York Times and YouTube, misinformation tweets introduce words like “truth,” “lies,” “fake news” and “revealed,” reflecting a distrust in media narratives. The presence of alternative media sources like Bitchute further highlights a reliance on non-traditional news platforms. Conversely, non-misinformation tweets more frequently reference mainstream media platforms, like The Guardian and The Washington Post, suggesting a more conventional engagement with international news.

In topic 18 (International Politics), misinformation tweets focus on broad, global themes, with words like “humanity,” “population,” “hunger,” and “catastrophe,” indicating a narrative that frames international politics in terms of existential crises and systemic failures. In contrast, non-misinformation tweets feature a more regionally specific focus, with references to Sweden, Brazil, and India, pointing toward discussions of national responses and international relations.

These differences in word distributions highlight how misinformation tweets within the political discourse seem to engage frequently in ideological contestation, skepticism toward mainstream narratives, and broad systemic critiques. Meanwhile, non-misinformation tweets seem more likely to engage with concrete events, policies, and institutional perspectives.

Health discourses

Within the health-related discourses, misinformation is especially prevalent among four topics as shown in Figure 3.

The temporal trends for the health discourses.

Topic 1 is interpreted as being related to discussions about the virus itself. While this topic is most prevalent among the misinformation tweets throughout the pandemic, the patterns for the two groups are mostly identical. These discussions were most widespread at the very beginning of the pandemic, before experiencing a sharp drop to a stable but relatively low prevalence. This likely corresponds to discussions on the virus’ characteristics (e.g. its origin, how it spreads, symptoms) being more prominent in the early stages of the pandemic when there was little information available.

Topics 2 (Dangerous Vaccines and Side Effects), 12 (Mortality Rates and Vaccination Efforts), and 17 (Vaccine Discussions) all show a comparable pattern in terms of prevalence, with the misinformation tweets being most prominent and the topics experiencing an overall steady increase across the 3 years. A slight peak around mid-2021 is found across all three topics, followed by a new increase in the second half of 2022.

Table 3 presents the top 20 FREX words for the selected health-related topics.

Top 20 FREX Words for the Selected Health-Related Topics.

In topic 1 (Characteristics of the Virus), both misinformation and non-misinformation discuss the virus’ characteristics, with shared terms such as “SARS,” “variant,” and “contagious.” However, misinformation tweets place a strong emphasis on the virus’ mutations and origin (Wuhan). The inclusion of “HIV” and “MERS” could indicate a narrative drawing parallels between COVID-19 and other viruses. In contrast, non-misinformation tweets include words such as “effective,” “potential,” “stop,” and “natural” which may indicate a discussion more centered on how to manage the virus and its spread.

For topic 2 (Dangerous Vaccines and Side Effects), misinformation tweets appear to highlight concerns about vaccine safety and side effects, with words like “side effects,” “spike,” “autoimmune,” and “the blood,” suggesting a focus on potential risks. The frequent mention of the “immune system” could indicate discussions on how vaccines interact with the body’s natural defenses. The inclusion of “cannabis” suggests references to alternative treatments. In contrast, non-misinformation tweets include words like “serious,” “mild,” “study,” and “protects,” which may indicate a more neutral or research-based discussion of vaccine effects. Terms such as “headache,” “common cold,” and “symptoms” further suggest that non-misinformation tweets discuss vaccine side effects in the context of typical, expected reactions rather than severe health risks.

Topic 12 (Mortality Rates and Vaccination Efforts) reveals some differences in how risk and mortality are framed. Both groups of tweets often reference deaths and vaccinated/unvaccinated. Among the non-misinformation tweets, these appear in combination with words like “proportion,” “mortality,” “hospitalization,” and “statistics,” reflecting a quantitative and comparative approach to mortality rates. In comparison, among the misinformation tweets we find words like “cancer” and “myocarditis,” in the presence of “NIH,” “NCBI,” and “NLM,” suggesting an emphasis on worst-case scenarios and attempts to invoke scientific legitimacy.

In topic 17 (General Vaccine Discussions), the misinformation tweets seem to focus on skepticism toward vaccines, with words like “ivermectin,” “experimental,” “pharmaceuticals,” and “patent,” suggesting concerns over vaccine safety, corporate interests, and alternative treatments. In addition, “mass vaccination” implies a framing of vaccine campaigns as coercive rather than protective. Non-misinformation tweets, on the other hand, emphasize mainstream vaccine discussions, with words like “Pfizer,” “Moderna,” “fair,” “distribution,” and “development” pointing to conversations about vaccine availability, efficacy, and public health policy. The presence of “safe,” “benefit,” and “dosage” further reflects a discourse focused on vaccine administration.

Across these four topics, the differences in FREX words highlight how misinformation tweets tend to emphasize fear, risk, and skepticism, often questioning scientific consensus or mainstream narratives. In contrast, non-misinformation tweets are more likely to engage with policy discussions and public health perspectives. In answer to research questions 1 and 2, this study has found that COVID-19-related misinformation on Norwegian Twitter was primarily concentrated in two areas: political and health-related discourses. Politically, misinformation was prevalent in discussions around American politics, international politics, economy and finance, and international news. Health-related misinformation was most prominent in topics related to characteristics of the virus, vaccine discussions, and mortality rates.

Vaccine-related misinformation discourses

The third research question asks how misinformation in vaccine-related discourses contributed to the reinforcement of, or challenge to, power dynamics and hegemonic ideologies around health, science and freedom. To answer this, a CDA is performed on topics 2 (Dangerous Vaccines and Side Effects) and 17 (General Vaccine Discussions). These are chosen based on the quantitative analysis, where they were found to reflect a vaccine-related subfield within a broader health discourse on Norwegian Twitter. Moreover, the chosen topics contain a heavy presence of misinformation, providing a unique point of view on the ideological battles between different health and science discourses in the context of the coronavirus vaccines.

A particular emphasis in the analysis is placed on the concept of assumptions, which Fairclough (2003) identifies as central to the ideological work of texts. Assumptions, that is, taken-for-granted meanings, are particularly powerful because they present certain perspectives as common sense, thus playing a part in naturalizing specific ways of understanding the world. In the context of vaccine-related misinformation, assumptions play a key role in shaping how authority, expertise, and individual autonomy are framed. By analyzing what is left unsaid, and what is presupposed rather than explicitly stated, this study examines how misinformation contributes to the contestation or reinforcement of hegemonic ideologies surrounding health, science, and freedom.

The analysis is informed by a close reading of the top 100 misinformation tweets associated with each of the two topics. The tweets are analyzed in their original Norwegian form, with selected examples translated into English by the author. Given the informal nature of language on social media, with slang, spelling mistakes and dialectal variations being commonplace, the English translations might read as more formal and standardized than the original tweets. However, care was taken to ensure that the original intent and tone are preserved. To preserve anonymity, usernames mentioned within the tweets are simply indicated by “

Dangerous vaccines and side effects

Topic 2 is interpreted as relating to discussions on the dangers of the vaccines and their possible side effects, reflecting a vaccine-critical health discourse. One key ideological struggle within this discourse centers on the definition of a “vaccine,” with tweets drawing on assumptions about what a vaccine is and should do. Importantly, this discourse does not support an all-in anti-vaccine attitude; users are not necessarily “anti-vaxx,” but instead they are specifically invested in contesting the hegemonic status of the COVID-19 vaccines. Throughout the topic, this contestation occurs through an ideological struggle over the scientific definition of vaccines.

[1] @USERNAME That can’t even be compared at all? A locked door doesn’t make a house immune to break-ins. Blood-thinning medication does not make some patients “immune” to heart problems. A vaccine, on the other hand, is supposed to make you immune, but when it doesn’t, is it still a vaccine?

[2] It’s nauseating how people can compare covid “vaccines” with, for example, the BCG vaccine. In the old days (two years ago), a vaccine was a medication that prevented transmission and made you immune to a disease. The covid “vaccines” are not vaccines, but experimental medicine.

[3] A vaccine is supposed to make you immune, from what I’ve learned. So if there exists a vaccine for a “super dangerous virus” like covid, and people who have taken the vaccine still end up infected? No thanks. I’ve done well since the beginning of the pandemic with no disease or vaccine.

These tweets rely on the assumption that vaccines, by definition, need to fully prevent transmission and provide lasting and complete immunity. By this logic, the COVID-19 vaccines fail to meet the definitional criterion. The tweets presuppose their definition as a given, inviting the reader to simply accept it as common sense. This assumed common-sense logic of vaccines providing immunity is highlighted in [2] by a comparison to the BCG vaccine, clearly positioning the COVID-19 vaccines as a deviation from a prior understanding of vaccines. Moreover, in [2] the phrase “the old days (two years ago)” juxtaposes the idea of a distant past with a recent timeframe, creating a subtle irony that further undermines the legitimacy of COVID-19 vaccines by implying an abrupt and unwarranted shift in definition.

Quotation marks function as a key linguistic strategy, signaling skepticism and delegitimization to further challenge the hegemonic status of COVID-19 vaccines. Notably, [2] contrasts COVID-19 vaccines with the BCG vaccine by placing “vaccine” in quotation marks only when referencing the COVID-19 vaccines, creating an ideological separation between “true vaccines” and “fake vaccines.” In [3], the quotation marks around “super dangerous virus” further suggest skepticism about the virus’ severity, framing it as an exaggerated threat and delegitimizing the need for vaccination at all.

Assumptions about the threat of harm from the vaccines are also highly prominent within this discourse:

[4] I defeated corona with vitamins, minerals and hydrogen peroxide, my weakened immune system did the rest, so no toxic vaccine that damages the immune system. “Vaccine” is an illusion, it’s impossible to create a vaccine for coronaviruses that mutates and are spread by vaccinated.

In [4] we again see the deliberate use of quotation marks around “vaccine.” Here, the assumption presented is that no legitimate vaccine for coronaviruses could ever exist due to their mutation rate; thus, COVID-19 vaccines are merely illusions. Instead, the user claims to have beaten the virus with “natural” remedies, like minerals, drawing on a binary opposition between “natural” and “artificial” treatments. By writing that their natural remedies leave no need for any “toxic vaccine that damages the immune system,” the user presupposes that the vaccines are toxic and do damage the immune system. These claims of the vaccines being not only toxic and dangerous, but also not real vaccines, raise an important question: What are they then? One answer to this was already seen in [2]: they are experimental medicine. This view is reframed by [5], who claims that the vaccines are “gene therapy”:

[5]

Here, by presupposing that the vaccines are in truth gene therapy, the user relies on the assumption that gene therapy is inherently dangerous. By further referencing the existence of a “mafia,” the user presupposes not only that a mafia actually exists, but also that this mafia makes money from people dying or becoming permanently ill, thus implying that the mafia in question is the pharmaceutical industry operating as a criminal enterprise. These assumptions and presuppositions serve to delegitimize the pharmaceutical industry and naturalize a conspiratorial view reminiscent of Big Pharma. [5] also asks the rhetorical question of what they would “get” from taking the vaccine, followed by another rhetorical question; “what if I get heart issues?,” seemingly answering their own question by implying that the vaccine causes heart issues. After this, they make a comment about being young and never sick, giving further support to the idea of the “vaccines” being dangerous, but also unnecessary for young and healthy individuals.

Through this vaccine-critical discourse, the threat of the pandemic lies not in the virus but in the contested vaccines. The tweets exemplify how misinformation challenges the hegemony of dominant health narratives. One key ideological move is the strategic mobilization of the “common sense” understanding of vaccines to delegitimize COVID-19 vaccines, undermining the hegemonic understanding of COVID-19 vaccination as a collective good. The idea that the COVID-19 vaccines are dangerous to individuals’ health resonates with a broader ideological understanding of personal freedom and bodily autonomy. By presenting the act of vaccination as something that will impair your health, the discourse aligns with a broader ideological resistance to state intervention in personal health choices.

Experimental and illegal vaccines

Topic 17 is similarly interpreted as representing a vaccine-critical discourse. While the idea that the vaccines are dangerous remains central, the primary argument found here is that the COVID-19 vaccines are experimental and illegal. These arguments challenge hegemonic ideologies underpinning public trust in regulatory processes, scientific authority, and the legitimacy of health institutions. One user evokes the notion of bodily autonomy to challenge this trust:

[6] @USERNAME Molbo logic! [laughing emoji] There are perfect medicines against covid that don’t cause side effects and are already on the market. But, we would rather force people to take an experimental mRNA injection that is potentially deadly . . . fascinating.

The word “molbo” is commonly used in Denmark and Norway when talking about people acting in stupid or uninformed ways. As such, “molbo logic” is a foolish type of logic, in this case the logic of taking “experimental” and “potentially deadly” injections when safe treatments are available. Importantly, the user claims that people are being forced to take the injection, suggesting an abuse of power and positioning the public as victims of state or corporate control. Again, the users consistently express that the COVID-19 vaccines are not proper vaccines, but rather dangerous genetic experiments. This idea of the genetic experiment is particularly present within the topic:

[7] @USERNAME Especially when it comes to the COVID vaccines, which are experimental gene therapy. Moderna stated in 2018 that they did not expect mRNA medicine to ever be approved, but here we are, millions of people willing to be guinea pigs until the trial period ends in 2023.

The metaphor of guinea pigs evokes imagery of human experimentation, constructing vaccine recipients as victims of an unethical medical trial. By portraying the public as “guinea pigs,” this discourse implies that ordinary citizens are exploited by powerful elites, a classic conspiracy narrative. The “guinea pig” metaphor reconstructs the power relationship between citizens and health authorities, casting the latter as untrustworthy actors and questioning the integrity of public health initiatives. The assumption is that if this technology was not approved in 2018, it should not have been approved now either.

Throughout the topic, there exists an intertextuality with users engaging in a legal discourse, visible through a central claim of the vaccines being illegal. This claim hinges on the assertion that emergency authorization was granted under false premises, namely, the non-existence of alternative treatments. Users argue that because effective alternatives like ivermectin and hydroxychloroquine (HCQ) are available, the legal basis for emergency use is invalid, rendering the vaccines unlawful:

[8] @USERNAME The experimental vaccines are distributed under an emergency authorization which assumes that there is NO EXISTING medicine for Covid-19. But it’s now documented that there is safe, cheap and effective medicine. Therefore, the vaccines are illegal.

[9] @Folkehelseinst [Norwegian Institute of Public Health] Science has long shown that both Ivermectin and HCQ are safe, cheap, and effective treatments. The consequence of this is that the emergency authorization for the experimental gene therapy is invalid, and the so-called “vaccines” are illegal.

Tweet [9] again challenges the understanding of COVID-19 vaccines with the phrase “the so-called ‘vaccines’”; however, this is now done to delegitimize the legal status of the vaccines. By drawing on a legal discourse and addressing the Norwegian Institute of Public Health directly and publicly, the user aims to undermine trust in the institution by implicitly claiming that it is knowingly operating outside of the law.

The reasons for pushing experimental and illegal vaccines are explained in two ways. The first, and most common, is financial incentives. The assumption is clear: alternative treatments are too affordable and accessible, making them bad business for the pharmaceutical industry (or “Big Pharma”).

[10] @USERNAME I’m doing very well, but it scares me that many hundreds of millions of people have been fooled by Big Pharma and all the powerful people they have bought and paid to trick people into taking a vaccine that is not approved.

[11] The COVID vaccine was only made so that the pharmaceutical industry could become even richer than they already are. We had and [still] have the medicine against COVID, but suddenly they couldn’t use it because it was cheap and effective with few side effects. It’s all about money.

This representation of pharmaceutical companies as profit-driven entities echoes Fairclough’s Gramscian understanding of hegemony, where corporate power seeks to naturalize profit-seeking behavior. However, in this discourse, corporate profit motives are not framed as normal but as illegitimate. The tweets construct a dichotomy between “cheap, effective, alternative treatments” and “expensive, patented vaccines,” implying that the latter are favored purely due to their profitability. The goal is to maximize profit, so affordable alternatives must be suppressed.

A second, more extreme, explanation suggests that the government is not merely indifferent to vaccine-related harm but actively complicit in harming the population, furthering the notion of a corrupt elite.

[12] @USERNAME That was soon. Was he murdered by the health authorities with illegal experimental gene therapy (what the health authorities mistakenly call the COVID-vaccine)?

[13] @jonasgahrstore [Norwegian Prime Minister] I assume you are aware of all the side effects of the COVID vaccine. When will you stop pushing this deadly vaccine on people? If you care about the best for the population, you will stop injecting this poison into people NOW!

The concept of agency is crucial here, with Big Pharma and the government portrayed as active agents complicit in corruption and murder. This framing reflects a clear ideological position: the government is an enemy of the people, while everyday citizens are passive victims of corporate greed. This framing reinforces an us-vs-them logic typical of populist discourse. [13] specifically calls out Prime Minister Jonas Gahr Støre. The user presents the deadly side effects of the vaccine as an existential presupposition; they are assumed to be an established fact, not something that requires evidence. The structure of the sentence “if you care [. . .], you will stop [. . .]” implicitly questions the prime minister’s moral character. The unspoken corollary is that if the vaccination efforts do not stop, that is proof of the Prime Minister not caring about the people.

Thus, this vaccine-critical discourse identifies COVID-19 vaccines as both experimental and illegal, arguing that the emergency use authorization was granted under false pretenses. The tweets emphasize the existence of alternative treatments, such as ivermectin and HCQ, which are portrayed as safer, more effective, and deliberately suppressed due to their affordability and lack of profitability. This suppression is presented as evidence of financial corruption, with pharmaceutical companies and government officials accused of colluding for financial gain. In addition, a more extreme narrative suggests that the government is either indifferent to vaccine-related deaths or actively seeking to harm the population, framing vaccines as tools of control or population reduction. These findings illustrate how misinformation leverages financial and conspiratorial arguments to undermine and challenge governmental and institutional authority.

Discussion and conclusion

The CDA has identified how vaccine-critical discourses on Norwegian Twitter construct the COVID-19 vaccines not as a public health intervention, but as a dangerous and experimental practice forced upon the population. This ideological struggle lies at the core of how misinformation functions within the analyzed discourses: by reframing vaccination as a threat rather than a safeguard, the discourses challenge the hegemonic understandings of scientific authority and public health policy. In this concluding section, I discuss how these vaccine-critical arguments serve to reinforce a broader distrust in governmental and institutional power within the Norwegian sociopolitical context.

The ideological struggle over vaccination

At the core of the vaccine-critical discourse analyzed here is the claim that COVID-19 vaccines are not proper vaccines but rather experimental gene therapy. This framing does ideological work by undermining the legitimacy of vaccination, positioning it as a risky genetic experiment rather than a tested medical intervention. By referring to vaccinated individuals as “guinea pigs,” these tweets evoke anxieties around medical ethics, aligning vaccination with unethical human experimentation rather than a public good. This rhetorical move is key in recasting the government and health authorities: rather than being a protective force, the government and health authorities are cast as actively complicit in harming their own citizens.

The idea that the COVID-19 vaccines are illegal builds on this distrust, arguing that emergency authorization was granted under false premises. This claim is rooted in the belief that effective, safe and cheap treatments were deliberately suppressed to justify vaccine distribution. This serves to delegitimize governmental health policies and implies corruption at the highest levels of power, suggesting a worldview reminiscent of Big Pharma conspiracy theories. While similar discourses have been observed globally (Erokhin et al., 2022), the specificity of these claims in the Norwegian context, for example, through targeting national institutions such as the Norwegian Institute of Public Health (FHI) and Prime Minister Jonas Gahr Støre, suggests an adaptation of global vaccine skepticism to a local political landscape.

Power and trust in Norway

Although governmental distrust is a common feature of vaccine skepticism, it is particularly significant in Norway, a country traditionally characterized by high levels of institutional trust. The Norwegian welfare state model emphasizes transparency and social responsibility, and historically, vaccine programs have enjoyed broad public support (FHI, 2023). The emergence of vaccine-critical discourses that construct the government as an active agent of harm challenges this tradition of trust in public health governance.

Moreover, the framing of COVID-19 vaccination as an act of state coercion, “forcing” people to take an experimental injection, is notable given Norway’s relatively moderate approach to pandemic restrictions. Unlike some European countries (Burki, 2022), Norway did not implement vaccine mandates, and the government framed vaccination as a personal but socially responsible choice (Skjesol & Tritter, 2022). However, the vaccine-critical discourse effectively disregards this distinction, portraying vaccination as a form of oppression regardless of actual policy.

In addition, the economic argument, that pharmaceutical companies suppressed alternative treatments to maximize profits, gains particular traction within Norway’s sociopolitical landscape. While Norway’s universal health care system is designed to limit private sector control over public health, these tweets construct a narrative in which “Big Pharma” holds unchecked power, manipulating both scientific research and governmental policy. This aligns with a broader anti-elite sentiment that, while not dominant in Norwegian political culture, has gained increased visibility in certain populist and conspiracy-oriented networks in recent years (Morelock & Narita, 2022; Zulianello & Guasti, 2023).

Conclusion

In this study, a mixed-methods approach to text analysis was implemented to study COVID-19 misinformation discourses on Norwegian Twitter. By investigating trends and patterns in language-use over the course of 3 years, the topic model highlighted how COVID-19 misinformation on the platform was mainly concentrated around two discourses: politics and health. A qualitative CDA illustrated how vaccine-critical misinformation is used to contest the power of health institutions and the government by portraying the COVID-19 vaccines as dangerous, experimental, and illegal. By drawing on existing skepticism toward corporate power, these discourses challenge the legitimacy of public health governance, by constructing government institutions as either corrupt, complicit, or actively harmful. As Fairclough emphasizes, discourses are sites of ideological struggle, where power is contested and reconstituted. Through the analysis, it is clear how misinformation operates not simply as false information but as a strategic challenge to hegemony, ideology, and power.

These findings contribute to our understanding of misinformation as an ideological tool, showing how vaccine-critical discourse not only spreads false claims but also actively seeks to reshape perceptions of authority, responsibility, and public health. As misinformation continues to evolve, future research should further explore how these narratives influence public attitudes and whether they contribute to lasting shifts in institutional trust. Understanding the ideological mechanisms of misinformation is crucial for developing effective countermeasures, particularly in societies where trust in public institutions has historically been strong but is now increasingly contested.

Footnotes

Ethical considerations

The Norwegian Agency for Shared Services in Education and Research approved the current study.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The Twitter data that supports the findings of this study is not publicly available due to restrictions set by The Norwegian Agency for Shared Services in Education and Research, to ensure the right of privacy to any individual whose data is collected.