Abstract

Generative chatbots based on artificial intelligence have become an essential channel for obtaining health information. They provide not only comprehensive health information but also real-time virtual companionship. However, the health information that AI provides is not always accurate. Using a 3 × 2 × 2 experimental design, this study examines how the type of interaction with AI-generated content (AIGC), specifically virtual companionship versus knowledge acquisition, affects the willingness to share health-related rumors. It also examines the effects of rumor type (fear vs hope) and of altruistic tendencies in this context. The results show that people are more willing to share rumors in the knowledge acquisition scenario. Fear-type rumors elicit greater willingness to share than hope-type rumors. Altruism plays a moderating role: it increases the willingness to share health rumors in the virtual companionship scenario and decreases it in the knowledge acquisition scenario. These findings support Kelley’s three-dimensional attribution theory and negativity bias theory and extend them to the field of human–computer interaction. The results help explain how rumors spread in human–computer interaction and provide theoretical support for improving health chatbots.

Introduction

With the development of deep learning and generative AI, chatbots are now widely used to help people access information and knowledge (Chang et al., 2022; Hsu et al., 2023). They are especially useful in providing health information and health management advice (Goertz et al., 2023; Park et al., 2022). For example, many users rely on chatbots like Babylon Health and Ada Health to get medical guidance and support. These tools have become important for managing minor health concerns and providing emotional support (Chandel et al., 2018; Saracevic et al., 2020). Studies show that millions of people now use health-related chatbots, which demonstrates their growing impact (Ludin et al., 2022; Omarov et al., 2023).

Although chatbots often provide accurate and detailed health information, they are not infallible. They can also spread misinformation, including health rumors, which may influence users’ perceptions and behaviors (Shin, Jitkajornwanich, et al., 2024; Shin, Koerber, & Lim, 2024). Previous research has mainly focused on how health rumors spread through traditional and social media, examining factors such as the characteristics of the rumors and the social contexts in which they spread (Chua & Banerjee, 2017; Guo et al., 2023; Yang et al., 2023). Yet, little is known about how users interact with AI systems like chatbots to share health rumors, which leaves an important gap in the study of human–computer interaction and AI-mediated health communication. In recent years, scholars have begun exploring algorithmic interventions and cognitive mechanisms to counteract misinformation, such as implementing inoculation treatments to strengthen users’ cognitive resistance to false information and examining how human–algorithm interactions shape the spread and impact of misinformation. These studies provide valuable insights for addressing the challenges posed by AI-mediated misinformation and for designing more reliable and user-resilient systems (Shin, 2024; Shin & Akhtar, 2024).

Chatbots not only deliver health information but also offer emotional companionship, giving users a sense of support and comfort (Bilquise et al., 2022). For example, people who feel stressed or lonely can find relief by interacting with chatbots, as studies show that chatbot-based support can reduce anxiety and provide emotional value (Lim et al., 2022; Medeiros et al., 2022; Park et al., 2023). Unlike traditional media, chatbots allow users to engage in personalized interactions, which may significantly influence their willingness to share the information received.

To better understand this, we employ Kelley’s Attribution Model because it provides a comprehensive framework for linking behavior to situational, informational, and actor factors, making it particularly suitable for studying the complex dynamics of AI-mediated communication. The model allows us to systematically explore how contextual factors such as chatbot usage scenarios (virtual companionship and knowledge acquisition), informational factors such as rumor type (fear-type and hope-type), and actor factors such as altruistic tendencies interact to influence health rumor sharing. Virtual companionship reflects a chatbot’s ability to provide psychological support, fostering emotional connections that may enhance trust and influence sharing behaviors, whereas knowledge acquisition involves a more transactional use of chatbots to access health-related information. These scenarios capture the dual nature of chatbot interaction, which is increasingly relevant in AI-mediated communication (Z. H. Xie, Wu, & Xie, 2024). We also examine two types of rumors: fear-type rumors, which evoke anxiety, and hope-type rumors, which inspire optimism. Previous research suggests that emotional valence significantly influences information sharing, with negativity bias making fear-type rumors particularly potent (Kankham & Hou, 2024). In addition, we consider altruistic tendencies, a key personal characteristic, because they can amplify or mitigate sharing behavior depending on whether the motivation is to help others or to avoid spreading harm. By integrating these situational, informational, and actor variables within Kelley’s model, our study aims to uncover the mechanisms driving the sharing of AI-generated health rumors.

We conducted a 3 × 2 × 2 experiment from October to November 2023 to analyze how chatbot usage scenarios, rumor types, and altruistic tendencies jointly affect people’s willingness to share health rumors. Our study focuses on AI-generated health rumors. We define health rumor sharing by the nature of the shared content, which is in fact a rumor, rather than by how users perceive it (e.g., as accurate health information). Our research helps elucidate how health rumors spread in the context of artificial intelligence and offers practical references for mitigating the dissemination of health rumors in the AI era.

Literature Review

Theoretical Framework: Kelley’s Attribution Model

In exploring people’s willingness to share health rumors provided by generative AI, we adopt Kelley’s Attribution Model as our theoretical framework. After Heider (1958) first proposed the concept of attribution in social psychology, the American psychologist Harold Kelley developed attribution theory further, expanding its two types of attribution (internal and external) into three. He argued that attribution involves three aspects: actor factors, stimuli, and the situation in which the behavior occurs, which together form a causal schema for the attribution process (Kelley, 1967, 1971, 1972, 1973). Attribution theory has been shown to predict consumption behavior, environmental protection behavior, and self-management behavior (Boudreaux et al., 2010; Daryanto et al., 2022; Long & Liao, 2021). In health communication in particular, existing research has confirmed that people’s attributions of physical problems strongly affect their willingness to take mitigating actions (Jeong, 2007; Polk, 2005).

Empirical research over the past decade has commonly focused on health information sharing. For example, K. Li and Xiao (2022) discussed the role of individual reciprocity and reciprocity norms, while Grande et al. (2015) and Vaala et al. (2018) compared willingness to share health information between patients and non-patients. Among studies of health information sharing willingness, some focused on people’s inner psychological motivations, starting from their objective identity or health status (Kye et al., 2019), while others explored the social and cultural factors behind sharing behavior, observing how the nature of the medium or the information affects sharing patterns (Wang et al., 2020; Zhang et al., 2023; Zhu et al., 2023). Most research on health rumor sharing is based on rumor type or information source (Chua & Banerjee, 2018; Chua et al., 2016; Guo et al., 2023; Xue & Taylor, 2023) or on users’ own characteristics, such as health knowledge literacy (Chua & Banerjee, 2017; Luo et al., 2021; Xue & Taylor, 2023). These studies remain within the scope of Heider’s internal and external attributions. Therefore, from the perspective of Kelley’s three-dimensional attribution theory, we examine whether situational factors matter in addition to information type and individual characteristics. In particular, we explore whether different situations have an effect in the context of artificial intelligence, and whether situational factors, information characteristics, and actor factors complement each other in shaping people’s willingness to share health rumors.

We align Kelley’s Attribution Model’s three dimensions—situational factors, stimuli, and actor factors—with our research framework. Situational factors in Kelley’s model refer to the conditions or contexts that shape behavior. In our study, this is represented by the two chatbot usage scenarios: virtual companionship and knowledge acquisition. These scenarios align with Kelley’s concept as they define the external environment in which behavior occurs, influencing individuals’ actions based on the context-specific interaction between users and chatbots. Stimuli represent the characteristics of information that trigger specific behaviors, which in our study correspond to rumor type—fear-type versus hope-type rumors. This aligns with Kelley’s model as stimuli are central to shaping behavioral responses; the emotional tone of the rumor serves as a key external factor that drives the user’s decision-making process. Actor factors in Kelley’s model capture the individual characteristics that influence behavior. In our study, this is reflected in altruism, which represents a person’s motivation to help others. This corresponds to Kelley’s framework as actor factors account for personal dispositions and traits that determine how individuals interpret and respond to external stimuli and situational contexts.

Virtual Companionship Scenario and Knowledge Acquisition Scenario

The emergence of generative AI has changed the way people obtain health information, and many studies have documented its ability to provide a wide range of health information anytime and anywhere (Gamble, 2020; Hopkins et al., 2023; Liu & Xiao, 2021; Raddatz et al., 2023; Y. Xie et al., 2023). However, the information provided by AI is not always accurate, and AI systems sometimes produce rumors. According to Kelley’s three-dimensional attribution model, the background and environment in which a behavior occurs affect people’s behavioral intentions. For rumor sharing, this implies that different modes of information acquisition may affect people’s sharing behavior, which previous research has confirmed (Ali et al., 2021; Fishbein & Cappella, 2006; Guo et al., 2023; Kim & Hawkins, 2020). We therefore explore how, in the context of artificial intelligence, the information acquisition environment affects people’s willingness to share rumors provided by AI. We distinguish two scenarios: the virtual companionship scenario and the knowledge acquisition scenario.

Virtual companionship refers to a chatbot acting as a companion, providing users with psychological support and emotional value anytime and anywhere. Beyond providing necessary information, generative AI differs importantly from traditional and social media in that it can provide emotional support and companionship (Merrill et al., 2022; Zhou & Association for Computing Machinery, 2020). It can provide a kind of intelligent patient companionship (Kahambing, 2023), making people feel as if they are communicating with a real person and as if it were a friend or guide (Chatterjee & Dethlefs, 2023). This kind of humanized, instant companionship may even reduce people’s anxiety and negative emotions (Sachan, 2018). Knowledge acquisition refers to conversations that people have with chatbots solely to obtain health information. As a powerful information aggregation platform, generative AI’s most basic function is to provide necessary, comprehensive, and immediate information. Based on Kelley’s three-dimensional attribution model, we expect the usage environment to affect people’s willingness to share AI-generated health rumors. Outside the AI context, Yang et al. (2023) pointed out that the main motivation for sharing rumors is to find out the facts, and Guo et al. (2023) found that people believe rumors from traditional media more than those from social media. However, few studies have distinguished people’s willingness to share health rumors between virtual companionship and knowledge acquisition scenarios in the context of AI. Distinguishing these two scenarios is essential because they reflect distinct user needs and may directly influence rumor-sharing behaviors.
Unlike prior AI interaction research that typically focuses on single task-oriented or transactional functions, virtual companionship emphasizes emotional engagement and social connection, while knowledge acquisition prioritizes the efficient delivery of accurate information. These differing interaction modes shape the user’s trust, motivations, and subsequent behaviors.

First, under the scenario of virtual companionship, people interact with chatbots not just for information but for emotional support, which fosters a sense of trust and intimacy with the chatbot. This trust, often described as user-perceived intimacy, has been a key focus for chatbot developers (Lee et al., 2020; Park et al., 2022). As a result, users may perceive chatbots as close companions or even real friends. This emotional connection may increase the likelihood that users believe the information provided by the chatbot is accurate and trustworthy. While users may view this health information as accurate, it is, in fact, inherently a rumor. Because perceived authenticity of information and trust in the source are critical factors influencing health information sharing (Zhang et al., 2023), users in virtual companionship scenarios may be more inclined to share rumors due to this heightened trust. Second, users in virtual companionship scenarios often seek social belonging and interpersonal interaction, which distinguishes this scenario from purely transactional chatbot interactions. This need for connection and identity, often driven by feelings of loneliness, can motivate users to share information as a means of establishing or reinforcing social capital (Hong et al., 2021). Sharing health information may serve as a way for these users to engage with others, fulfill emotional needs, and foster a sense of community. Third, people in virtual companionship scenarios may prioritize emotional and entertainment needs, which could make them more inclined to share novel or intriguing health information to elicit reactions from their social network (Z. H. Xie, Hui, & Wang, 2024). This contrasts with users in the knowledge acquisition scenario, whose primary goal is to obtain accurate health information. Once their informational needs are met, these users may see little value in sharing, as their purpose is fulfilled without further engagement.

Therefore, we propose:

Hypothesis 1. People in the virtual companionship scenario are more willing to share rumors than users in the knowledge acquisition scenario.

The Impact of Fear-Type and Hope-Type Information on Rumor Sharing Intention

Building on our exploration of chatbot interaction scenarios, it is also critical to examine how the characteristics of the information itself, particularly its emotional tone, influence the sharing of AI-generated health rumors. We examine a difference that previous research has repeatedly verified outside human–computer interaction: the difference between fear-type and hope-type information. These two types of rumors are critical stimuli in rumor-sharing research because they trigger different emotional reactions that significantly influence sharing behavior. In three-dimensional attribution theory, rumor type falls under the category of stimuli. Fearful information is generally more credible and stimulates greater willingness to share than hopeful information (Chua & Banerjee, 2015, 2018; Kamins et al., 1997). Negativity bias theory, summarized as “bad is stronger than good,” may explain this pattern. The negativity bias effect describes a common cognitive bias: negative events have a greater impact on people than neutral or positive events of the same intensity (Baumeister et al., 2001; Lewicka et al., 1992; Rozin & Royzman, 2001). In particular, Rozin and Royzman (2001) identified negative differentiation as an important element of negativity bias. Related to the mobilization-minimization hypothesis, it holds that negative concepts are more refined and complex than positive concepts, so people must mobilize more and greater resources to deal with the emotional experience caused by negative events (Taylor, 1991). From this point of view, in the context of health rumors and information sharing, we can reasonably speculate that people who receive fear-type rumors from AI may experience a greater emotional impact than those who receive hope-type rumors.
For example, when people see that “a certain drink contains carcinogens,” they may feel strong fear and, to alleviate it, share or forward the message. We want to explore this phenomenon in the context of human–computer interaction. Chua and Banerjee (2017) found that in the Internet context there is no difference in people’s willingness to share fearful and hopeful rumors. This may be because apps like Facebook and Twitter have made sharing so easy that people do not think much before sharing. With the popularity of chatbots, obtaining knowledge has become even more convenient. Does the negativity bias effect still hold in the perception and processing of negative emotions in this setting?

In addition, we examine the interaction between environment and stimuli: does the effect of fear-type rumors on willingness to share differ between the virtual companionship and knowledge acquisition scenarios? Because negativity bias theory links emotion and behavior, we expected people to be more affected in emotional companionship scenarios. Compared with users who simply regard AI as a knowledge aggregation platform, users who view it as a virtual companion may be more strongly stimulated by negative, fearful emotions and more willing to share. Therefore, we propose:

Hypothesis 2. In both virtual companionship and knowledge acquisition scenarios, people are more willing to share AI-generated fear-type health rumors than hope-type health rumors.

The Impact of Altruism on Rumor Sharing Willingness

According to Kelley’s three-dimensional attribution theory, individual characteristics, categorized as actor factors, are critical in shaping behavior because they influence how people interpret and respond to external stimuli and situational contexts. Building on the idea that emotional and behavioral responses are shaped by both internal traits and external environments, we treat altruism as a key actor factor. Altruism may amplify or mitigate individuals’ willingness to share AI-generated health rumors depending on the context in which they interact with chatbots. Previous research on health communication has identified altruism as a pivotal factor influencing fact-checking of health rumors and health information sharing (P. Li et al., 2021; Raj et al., 2020; Wu, 2023). Altruism refers to helping others and benefiting them without expecting anything in return (Fehr & Gächter, 2000; Kankanhalli et al., 2005; W. W. K. Ma & Chan, 2014; Tang et al., 2022). People with higher levels of altruism are generally more inclined to engage in prosocial behavior, but the key question is whether rumor sharing is viewed as prosocial. When people are unaware that the information they receive is a health rumor, sharing it to help others is clearly prosocial behavior. However, when people realize that the information is incorrect, those with high altruistic tendencies may instead combat or prevent the spread of rumors to protect others (Tang et al., 2022). The effect of altruism on rumor sharing is therefore complex. Numerous studies have confirmed that altruistic tendencies are an important motivation for, or predictor of, spreading health rumors on social media (Adnan et al., 2022; Apuke & Omar, 2021; W. W. K. Ma & Chan, 2014; Malik et al., 2023; Tang et al., 2022), with both positive and negative effects. However, most of this research was conducted during COVID-19 to explore motivations for spreading health rumors on social media, and research in human–computer interaction contexts is still rare. We therefore ask whether altruism affects people’s willingness to share AI-generated health rumors.

Specifically, we consider the moderating role of altruism in the two scenarios. In the knowledge acquisition scenario, people may use AI mainly to obtain resources and information, whereas in the virtual companionship scenario they may be more susceptible to emotional influence. Prior work indicates that altruism usually affects prosocial behavioral intention through an emotional mechanism that is closely tied to interpersonal interaction (Barasch et al., 2014; Kassim & Md Yunus, 2019). In essence, people share information out of a selfless wish to help others. In the virtual companionship scenario, where people themselves seek help for emotional reasons, will they also help others for emotional reasons? In the knowledge acquisition scenario, where interaction with chatbots serves mainly to obtain specific information, will people with high altruism still be willing to share health information? Based on Kelley’s three-dimensional attribution theory, we examine whether actor factors and environment interact, and we therefore propose:

Research Question 1. Do people’s altruistic tendencies influence their willingness to share AI-generated health rumors? Does this influence differ between the virtual companionship and knowledge acquisition scenarios?

Method

Building on the theoretical framework and prior research reviewed above, this study adopts a rigorous experimental design to empirically examine how interaction scenarios, rumor types, and altruistic tendencies influence the willingness to share AI-generated health rumors, providing a structured approach to address the research questions outlined.

Participants

The participants in this study were 600 undergraduate students recruited from three universities in Eastern China. According to the statistical report of the China Internet Network Information Center (CNNIC, 2023), users of AI-generated content (AIGC) are predominantly young, so the age distribution of our participants is consistent with the target demographic. Participants were recruited from students enrolled in elective computer science courses, who were presumed to be more likely to use and understand AI technologies, which was essential given the study’s focus on interactions with AI-generated health rumors. In March 2023, the three universities introduced a GPT-4-based chatbot system, freely available to students for emotional support and knowledge acquisition. This system provided the basis for distinguishing interaction scenarios in our study (Z. H. Xie, Wu, & Xie, 2024). Freshmen who had not used the school chatbot system or any other AIGC chatbot were assigned to the control group, so that their baseline measurements were not influenced by prior chatbot experience. For non-freshman participants, the same type of GPT-4-based chatbot was used to maintain consistency in the experimental setup. To minimize potential bias from professional knowledge, we excluded participants with a background in medical studies, including those enrolled in elective medical courses and those with family members working in medical professions. By recruiting participants from similar educational environments and backgrounds, we aimed to minimize external variability and maintain a controlled research context.

The initial pool of potential participants comprised 1,029 students. Based on participants’ self-reported interaction types, quota sampling was used to obtain the final 600 participants, ensuring a balanced sample size for each experimental condition in line with the study’s requirements. Participants had a mean age of 20.334 years (SD = 1.502). Gender was coded 0 = female and 1 = male (M = 0.594, SD = 0.145). The study was approved by the Institutional Review Board at the authors’ institution, and all participants gave informed consent. Participants who completed all experiments were compensated with 20 yuan each via WeChat Pay or Alipay.
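The quota sampling described above can be pictured as filling a fixed number of places per stratum from the screened pool. The sketch below is a minimal illustration, assuming that the strata are the three self-reported interaction types and that each receives 200 places (600 participants over three types, which matches 50 per cell across the four rumor-type by altruism cells). The pool contents, the `quota_sample` helper, and the seeded random visiting order are hypothetical; the text does not say how candidates were ordered within a stratum.

```python
import random
from collections import Counter

# Strata follow the self-reported interaction types described in the text.
SCENARIOS = ("virtual_companionship", "knowledge_acquisition", "control")


def quota_sample(pool, quota_per_stratum, seed=0):
    """Select participants until each stratum reaches its quota.

    pool: list of (participant_id, stratum) pairs (hypothetical format).
    Candidates are visited in a seeded random order; anyone whose stratum
    is already full is skipped.
    """
    rng = random.Random(seed)
    order = list(pool)
    rng.shuffle(order)
    filled = Counter()
    selected = []
    for pid, stratum in order:
        if filled[stratum] < quota_per_stratum:
            filled[stratum] += 1
            selected.append((pid, stratum))
    return selected, filled


# Synthetic pool of 1,029 screened students (illustrative data only).
pool = [(i, SCENARIOS[i % 3]) for i in range(1029)]
# 600 participants / 3 interaction types = 200 per type.
selected, filled = quota_sample(pool, quota_per_stratum=200)
print(len(selected), dict(filled))
```

Visiting candidates in a random rather than sign-up order is one way to avoid early registrants dominating a stratum; whether the study did this is not stated.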

We determined the sample size through a power analysis in G*Power. We selected the analysis of variance (ANOVA): fixed effects, main effects, and interactions model in the F-test family to accommodate our factorial design with 12 groups. We assumed a medium effect size (f = 0.25), based on empirical evidence from prior research (Köbis & Mossink, 2021; Mou et al., 2024). We targeted a power (1 − β error probability) of 0.95, so that a true effect of this size would be detected with high probability, with the conventional α error probability (Type I error rate) of 0.05. The analysis indicated that a total sample size of 413 participants would be required under these parameters. Our actual sample of 600 participants exceeds this minimum, providing power above the 0.95 target for the specified effect size at α = 0.05.
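The G*Power calculation can be approximated with the noncentral F distribution. The sketch below is a stdlib-only illustration, not G*Power itself: it assumes G*Power's convention for fixed-effects ANOVA, in which the noncentrality parameter is λ = f²·N and the denominator degrees of freedom are N minus the number of groups, and it searches upward for the smallest total N that reaches the target power. The numerator degrees of freedom are left as a parameter because the text does not report which effect the calculation targeted; all function names are hypothetical.

```python
import math


def _betacf(a, b, x, max_iter=500, eps=3e-14, tiny=1e-300):
    """Continued fraction for the incomplete beta function (modified Lentz)."""
    qab, qap, qam = a + b, a + 1.0, a - 1.0
    c, d = 1.0, 1.0 - qab * x / qap
    d = 1.0 / (d if abs(d) > tiny else tiny)
    h = d
    for m in range(1, max_iter + 1):
        m2 = 2 * m
        # Even and odd coefficients of the fraction for this m.
        for aa in (m * (b - m) * x / ((qam + m2) * (a + m2)),
                   -(a + m) * (qab + m) * x / ((a + m2) * (qap + m2))):
            d = 1.0 + aa * d
            d = 1.0 / (d if abs(d) > tiny else tiny)
            c = 1.0 + aa / c
            c = c if abs(c) > tiny else tiny
            h *= d * c
        if abs(d * c - 1.0) < eps:  # last update factor is ~1: converged
            break
    return h


def betainc(a, b, x):
    """Regularized incomplete beta function I_x(a, b)."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    front = math.exp(math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
                     + a * math.log(x) + b * math.log1p(-x))
    if x < (a + 1.0) / (a + b + 2.0):
        return front * _betacf(a, b, x) / a
    return 1.0 - front * _betacf(b, a, 1.0 - x) / b


def f_cdf(f, df1, df2):
    """CDF of the central F distribution."""
    return betainc(df1 / 2.0, df2 / 2.0, df1 * f / (df1 * f + df2)) if f > 0 else 0.0


def ncf_cdf(f, df1, df2, lam):
    """Noncentral F CDF as a Poisson(lam / 2) mixture of incomplete betas."""
    if f <= 0.0:
        return 0.0
    x = df1 * f / (df1 * f + df2)
    half = lam / 2.0
    weight, total, j = math.exp(-half), 0.0, 0
    while True:
        total += weight * betainc(df1 / 2.0 + j, df2 / 2.0, x)
        j += 1
        weight *= half / j
        if j > half and weight < 1e-15:
            return total


def f_critical(df1, df2, alpha):
    """Upper-alpha critical value of the central F distribution, by bisection."""
    lo, hi = 0.0, 1.0
    while f_cdf(hi, df1, df2) < 1.0 - alpha:
        hi *= 2.0
    for _ in range(60):
        mid = (lo + hi) / 2.0
        if f_cdf(mid, df1, df2) < 1.0 - alpha:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


def anova_power(n_total, f_effect, df1, n_groups, alpha=0.05):
    """Power of a fixed-effects ANOVA F test, assuming lambda = f**2 * N."""
    df2 = n_total - n_groups
    crit = f_critical(df1, df2, alpha)
    return 1.0 - ncf_cdf(crit, df1, df2, f_effect ** 2 * n_total)


def min_total_n(f_effect, df1, n_groups, alpha=0.05, power=0.95):
    """Smallest total N whose power reaches the target (linear search)."""
    n = n_groups + 1
    while anova_power(n, f_effect, df1, n_groups, alpha) < power:
        n += 1
    return n
```

A call such as `min_total_n(0.25, df1, n_groups=12, power=0.95)` returns a different N for each numerator df, so the sketch can be used to check which effect a reported minimum sample size corresponds to. The incomplete beta routine is written out only to keep the example free of third-party packages; `scipy.stats.ncf` would be the usual choice in practice.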

Experimental Procedure

Experimental Materials

Data Collection and Thematic Clustering

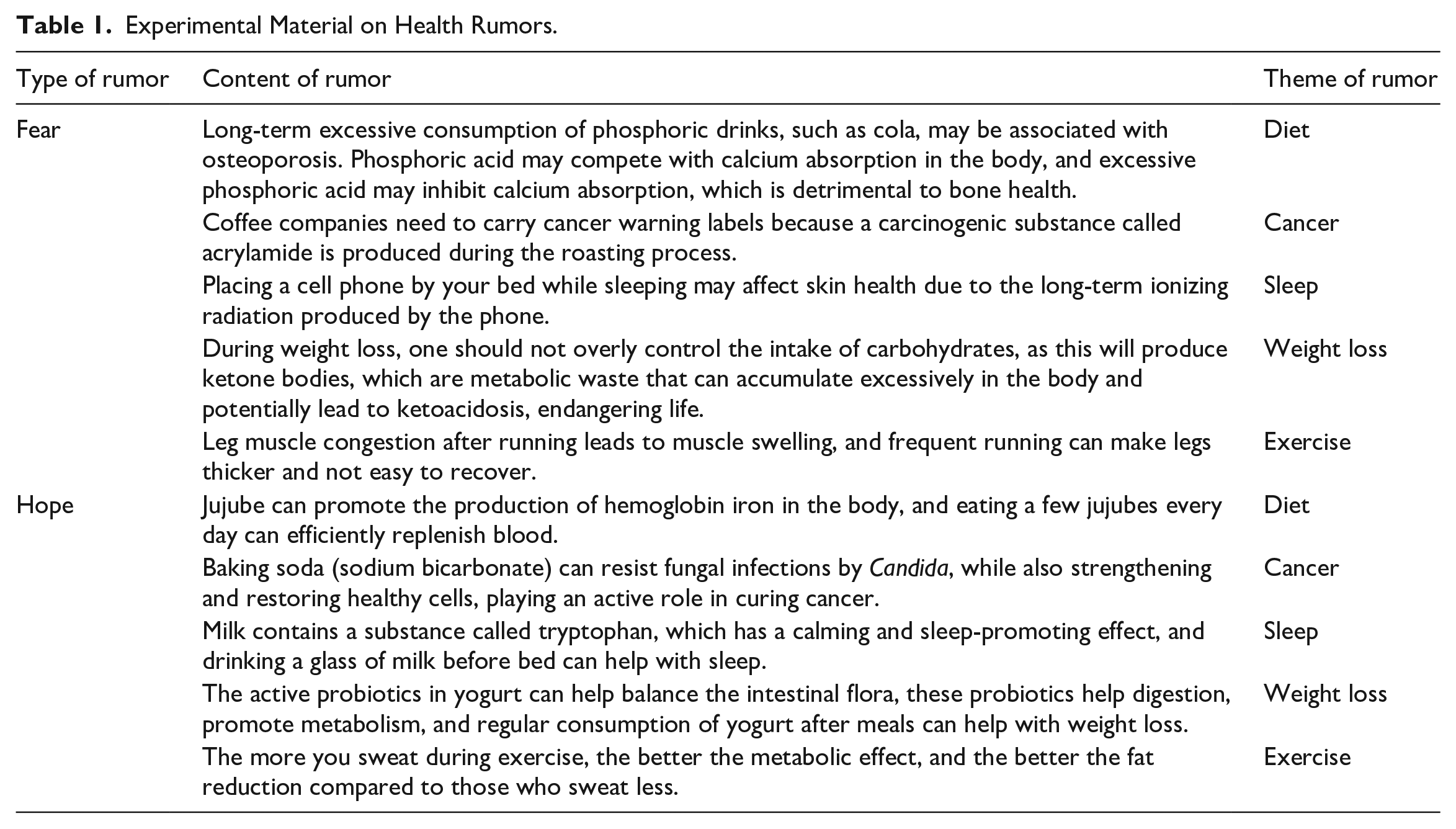

On 15 September 2023, we used Python to scrape all Weibo posts (Weibo is a Chinese platform similar to Twitter) containing combinations of the keywords “AIGC,” “GPT,” “health,” and “rumor.” Posts were included if at least one of the keywords “GPT” or “generative AI” appeared together with both “health” and “rumor.” This step and the choice of keywords served two objectives: first, to gauge the Chinese audience’s interest in health-related rumors, and second, to ensure that the collected rumors were indeed AI-generated. We then used the KH Coder software to perform thematic clustering on the scraped data, which allowed us to systematically analyze the content, identify underlying themes, and group posts by thematic similarity. The clustering identified 11 distinct health rumor themes. We focused on extracting meaningful themes relevant to AI-generated health rumors, emphasizing clusters with substantive content that reflected user interest and engagement. Clusters composed predominantly of adverbs, adjectives, conjunctions, and auxiliary verbs were excluded because they lacked meaningful content and did not help identify the topics users were concerned about. Ultimately, the five health rumor themes retained (Cancer, Sleep, Weight Loss, and Exercise) were selected for their high frequency of occurrence, reflecting the topics users discussed and engaged with most actively. These themes are visualized in the thematic clustering diagram shown in Figure 1, which highlights the dominant areas of public discourse on AI-generated health rumors on social media platforms such as Weibo.
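The inclusion rule for scraped posts is a Boolean condition: at least one AI term, and both “health” and “rumor.” The sketch below encodes that rule for illustration only. It assumes case-insensitive substring matching and uses the English keywords as placeholders; actual Weibo posts would be matched on their Chinese equivalents, and the scraping step itself is not shown. The `include_post` helper and the sample posts are hypothetical.

```python
# Inclusion rule as stated in the text: any AI term AND both required terms.
AI_TERMS = ("GPT", "generative AI")
REQUIRED_TERMS = ("health", "rumor")


def contains(text, term):
    """Case-insensitive substring match (a simplifying assumption)."""
    return term.lower() in text.lower()


def include_post(text, ai_terms=AI_TERMS, required=REQUIRED_TERMS):
    has_ai_term = any(contains(text, t) for t in ai_terms)
    has_all_required = all(contains(text, t) for t in required)
    return has_ai_term and has_all_required


posts = [
    "GPT says this health rumor about sleep is true",   # AI term + both terms
    "A health rumor about cola, no AI involved",        # no AI term
    "Generative AI answered my health question",        # missing "rumor"
    "Asked GPT about a rumor",                          # missing "health"
]
kept = [p for p in posts if include_post(p)]
print(kept)
```

Because substring matching is used, “GPT” also matches “ChatGPT”. “AIGC,” which appears among the search keywords, could be added to `ai_terms` if it should also qualify a post on its own.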

Figure 1. Co-occurrence network diagram of health rumor themes of concern to Chinese netizens on Weibo.
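KH Coder's co-occurrence networks are typically built by linking words that tend to appear in the same documents, with edge strength commonly measured by the Jaccard coefficient. The sketch below illustrates that idea in plain Python; it is not KH Coder's implementation, and the token lists, the `jaccard_edges` name, and the 0.2 threshold are hypothetical. It assumes tokenization and removal of function words (such as the adverbs, conjunctions, and auxiliary verbs mentioned above) have already been done.

```python
from itertools import combinations


def jaccard_edges(docs, min_jaccard=0.2):
    """Word co-occurrence edges weighted by the Jaccard coefficient.

    docs: list of token lists, one per post.
    Jaccard(a, b) = |posts containing a and b| / |posts containing a or b|.
    """
    doc_sets = {}
    for i, tokens in enumerate(docs):
        for tok in set(tokens):
            doc_sets.setdefault(tok, set()).add(i)
    edges = []
    for a, b in combinations(sorted(doc_sets), 2):
        both = len(doc_sets[a] & doc_sets[b])
        either = len(doc_sets[a] | doc_sets[b])
        score = both / either
        if score >= min_jaccard:
            edges.append((a, b, round(score, 3)))
    edges.sort(key=lambda e: -e[2])  # strongest links first
    return edges


# Toy posts: two about sleep, two about cancer (illustrative only).
docs = [
    ["sleep", "melatonin", "insomnia"],
    ["sleep", "insomnia"],
    ["cancer", "carcinogen", "drink"],
    ["cancer", "carcinogen"],
]
for edge in jaccard_edges(docs):
    print(edge)
```

Words that never share a post get a coefficient of zero and drop out, so the toy sleep and cancer vocabularies form two separate clusters, which is the visual effect a co-occurrence network relies on.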

Rumor Material Selection

We chose the 20 rumors with the most likes on Weibo from each of the five identified health rumor themes, for a total of 100 rumors. Because AIGC users are predominantly young and similar in age to social media users (CNNIC, 2023), Weibo was deemed an appropriate data source. Selecting trending health rumors minimized the inclusion of non-trending rumors, which participants might naturally be less inclined to share. To verify that these posts were indeed rumors, we checked all 100 against China’s official rumor-refutation platform (piyao.org.cn). Posts not recognized as rumors by the platform were excluded to ensure research accuracy, leaving 63 rumors.
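Selecting the most-liked posts per theme and then keeping only verified rumors amounts to a group-wise top-k filter followed by a membership check. The sketch below shows that logic on a few made-up posts; the field names (`id`, `theme`, `likes`), the `top_k_per_group` and `keep_verified` helpers, and the set of verified IDs are hypothetical, and verification against piyao.org.cn is assumed to have been done by hand rather than through any API.

```python
from collections import defaultdict


def top_k_per_group(items, group_key, k, score_key="likes"):
    """Keep the k highest-scoring items within each group."""
    groups = defaultdict(list)
    for item in items:
        groups[item[group_key]].append(item)
    selected = []
    for key in sorted(groups):
        ranked = sorted(groups[key], key=lambda it: it[score_key], reverse=True)
        selected.extend(ranked[:k])
    return selected


def keep_verified(items, verified_ids):
    """Drop items that the external check did not confirm as rumors."""
    return [it for it in items if it["id"] in verified_ids]


posts = [
    {"id": 1, "theme": "Sleep", "likes": 50},
    {"id": 2, "theme": "Sleep", "likes": 90},
    {"id": 3, "theme": "Sleep", "likes": 10},
    {"id": 4, "theme": "Cancer", "likes": 70},
    {"id": 5, "theme": "Cancer", "likes": 80},
]
top = top_k_per_group(posts, "theme", k=2)       # the study used k = 20
verified = keep_verified(top, verified_ids={1, 2, 5})
print([p["id"] for p in top], [p["id"] for p in verified])
```

Grouping on a field that combines theme and rumor type, with `k=1`, would mirror the later step in which the most-liked rumor of each type was taken from each theme.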

Rumor Type Coding

We invited two coders holding doctoral degrees in medicine to classify the 63 rumors as hope-type or fear-type. Beyond their medical training, the coders received a brief orientation to ensure a consistent understanding of the two categories. They focused solely on the emotional tone and thematic content of the rumors and were blind to the study’s conditions and hypotheses to avoid bias. Agreement between the two coders was 100%, and both judged that nine rumors fit neither the hope nor the fear category. After removing these nine, we retained 54 rumors: 25 hope-type and 29 fear-type. For each rumor type, we selected the AI-generated health rumor with the most likes in each health theme as experimental material. We deliberately focused on health rumors closely related to everyday life, to reduce the chance that participants would be unwilling to share a rumor simply because it was irrelevant to their daily experience. The wording of the selected rumors was modified, without changing their essence, to prevent participants from recognizing rumors they might have encountered before.
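The text reports raw percent agreement between the two coders. Chance-corrected agreement, Cohen's kappa κ = (p_o − p_e)/(1 − p_e), where p_o is observed and p_e expected agreement, is often reported alongside it; the sketch below computes both and is an illustration, not part of the original analysis. The category counts in the first example (25 hope, 29 fear, 9 neither) come from the text; the second, disagreeing pair of code lists is invented.

```python
from collections import Counter


def percent_agreement(codes_a, codes_b):
    """Share of items that both coders labelled identically."""
    return sum(a == b for a, b in zip(codes_a, codes_b)) / len(codes_a)


def cohens_kappa(codes_a, codes_b):
    """Cohen's kappa: (p_o - p_e) / (1 - p_e)."""
    n = len(codes_a)
    p_o = percent_agreement(codes_a, codes_b)
    freq_a, freq_b = Counter(codes_a), Counter(codes_b)
    # Expected agreement if each coder labelled at random with their own marginals.
    p_e = sum(freq_a[lab] * freq_b[lab] for lab in set(freq_a) | set(freq_b)) / n ** 2
    if p_e == 1.0:
        return float("nan")  # undefined: both coders used one identical label
    return (p_o - p_e) / (1.0 - p_e)


# Identical codes with the category counts reported in the text.
codes = ["hope"] * 25 + ["fear"] * 29 + ["neither"] * 9
print(percent_agreement(codes, codes), cohens_kappa(codes, codes))

# Invented example with one disagreement out of four items.
coder_1 = ["hope", "hope", "fear", "fear"]
coder_2 = ["hope", "fear", "fear", "fear"]
print(percent_agreement(coder_1, coder_2), cohens_kappa(coder_1, coder_2))
```

With perfect agreement and more than one category in use, kappa equals 1 regardless of the marginal counts; the second example shows how 75% raw agreement shrinks to κ = 0.5 once chance agreement is removed.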

GPT Expansion and Experiment Material Preparation

We entered the selected materials into GPT, which expanded them into more realistic AI-produced health rumors. GPT identified one of the sleep-related rumors as a rumor and therefore did not expand it effectively, so we replaced it with a different health rumor that was expanded successfully. This yielded 10 health rumors, 5 hope-type and 5 fear-type, as shown in Table 1. To keep the stimuli reliable and consistent, we screen-recorded GPT generating the health information and used these recordings as the experimental material instead of live interactions. Because every participant saw the same pre-recorded videos, comparisons across experimental groups were not confounded by variation in chatbot responses or in individual users’ prompts. As shown in Figure 2, for example, we asked GPT, “Does drinking cola frequently lead to osteoporosis?” (a claim that is in fact a rumor), and GPT then generated the content of the rumor while the screen was recorded. We compiled the five hope-type recordings and the five fear-type recordings into two separate videos, each serving as the experimental material for the corresponding group of participants.

Experimental Material on Health Rumors.

Screenshot of the experimental video.

Implementation of the Experiment

The experiment was conducted from 20 October 2023 to 18 November 2023 as a 3 × 2 × 2 factorial design examining how willingness to share health rumors relates to three variables: type of interaction with the chatbot (virtual companionship, knowledge acquisition, or control), the nature of the health rumors (hope or fear), and level of altruism (high or low). Each of the 12 cells contained 50 participants (N = 600), so 300 participants were exposed to hope-type AI-generated health rumors and the remaining 300 to fear-type rumors.
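The cell arithmetic of the design can be checked with a short Python sketch. The level labels below are illustrative names chosen here, not variable names from the study’s materials; the only figures taken from the text are the three factors, their levels, and the 50 participants per cell.

```python
from itertools import product

# Factor levels of the 3 x 2 x 2 design (labels are illustrative).
INTERACTION_TYPES = ("virtual_companionship", "knowledge_acquisition", "control")
RUMOR_TYPES = ("hope", "fear")
ALTRUISM_LEVELS = ("high", "low")
PARTICIPANTS_PER_CELL = 50


def design_cells():
    """Return every (interaction, rumor, altruism) cell of the factorial design."""
    return list(product(INTERACTION_TYPES, RUMOR_TYPES, ALTRUISM_LEVELS))


def participants_by_rumor_type():
    """Count participants exposed to each rumor type, assuming equal cell sizes."""
    counts = {rumor: 0 for rumor in RUMOR_TYPES}
    for _, rumor, _ in design_cells():
        counts[rumor] += PARTICIPANTS_PER_CELL
    return counts


cells = design_cells()
print(len(cells))                          # 12
print(len(cells) * PARTICIPANTS_PER_CELL)  # 600
print(participants_by_rumor_type())        # {'hope': 300, 'fear': 300}
```

Each rumor type spans 3 × 2 = 6 cells, which is why each rumor condition contains 300 participants.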

Participants watched the videos of GPT generating health information on a computer screen. They were not told that the health information presented was in fact a set of health rumors. After each piece of health information was generated, the video paused for 15 s, during which participants indicated their willingness to share that piece of information on a paper scale placed in front of them.

After the experiment, participants were debriefed so that the rumors they had encountered would not mislead them in real life. They were explicitly told that the health information shown during the experiment had been intentionally fabricated as part of the research design to simulate the spread of health rumors, and they were encouraged to disregard it. Given the ethical implications of exposing participants to health misinformation, the debriefing provided accurate, verified health information, official resources such as links to credible health platforms, and guidance on how to critically evaluate health-related content. This was intended to mitigate any short-term effects of the exposure and to equip participants to assess similar content critically in the future. Participants were also informed that they could contact the research team with any questions or concerns after the study and that they could withdraw their data at any time.

Measures

Interaction Types

As previously mentioned, the three universities introduced a GPT-4-based chatbot system in March 2023, which was freely available to students. Freshmen with no prior interaction with this or other AIGC chatbots were assigned to the control group, ensuring their baseline measurements were unaffected by earlier experiences.

For students who had used the school-provided chatbot, we categorized their interactions into virtual companionship and knowledge acquisition scenarios based on their self-reported primary mode of engagement. Participants who viewed chatbots as companions and derived emotional support from them were classified into the virtual companionship scenario. This category included individuals who sought solace, expressed emotions, or engaged in heartfelt conversations with the chatbot (Z. H. Xie & Wang, 2024). These interactions focused less on fulfilling tangible needs and more on fostering emotional connection and promoting mental well-being (X. Y. Ma & Huo, 2025). Users in this mode often shared their anxieties, fears, or hopes, seeking understanding, comfort, or simply a listening ear (Chaturvedi et al., 2023). A statement such as “I chatted with the chatbot when I felt lonely,” for instance, typifies this mode of engagement.

Conversely, participants who primarily viewed chatbots as tools for obtaining information and knowledge were classified into the knowledge acquisition scenario. These interactions were utilitarian, focused on acquiring specific information rather than emotional support (Jo & Park, 2023). Examples included using chatbots for academic tasks such as drafting assignments, retrieving information, or resolving course-related queries (Al-Sharafi et al., 2023). Participants in this category identified with statements such as “I used the chatbot to help draft my assignment,” reflecting practical, task-oriented use.

To obtain a clear-cut classification, participants reported their primary interaction mode on a 7-point Likert-type scale, where 1 indicated a strong inclination toward virtual companionship and 7 a strong inclination toward knowledge acquisition. Participants selecting 1 were classified into the virtual companionship scenario and those selecting 7 into the knowledge acquisition scenario. Participants selecting any value from 2 to 6 were excluded from further analysis to ensure a clear distinction between the two groups (Borsci et al., 2021; Haoyue & Cho, 2024).
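The endpoint rule for the 7-point item can be sketched as a small Python function. The scenario labels are hypothetical names chosen for illustration; control-group freshmen are assigned separately (no prior chatbot use), so they do not pass through this rule.

```python
from typing import Optional

VIRTUAL_COMPANIONSHIP = "virtual_companionship"  # hypothetical label
KNOWLEDGE_ACQUISITION = "knowledge_acquisition"  # hypothetical label


def classify_interaction(rating: int) -> Optional[str]:
    """Map a 1-7 primary-mode rating to an interaction scenario.

    Only the scale endpoints are retained: 1 -> virtual companionship,
    7 -> knowledge acquisition. Ratings 2-6 return None (excluded).
    """
    if not 1 <= rating <= 7:
        raise ValueError(f"rating must be between 1 and 7, got {rating}")
    if rating == 1:
        return VIRTUAL_COMPANIONSHIP
    if rating == 7:
        return KNOWLEDGE_ACQUISITION
    return None


ratings = [1, 4, 7, 7, 2]  # hypothetical responses, not study data
print([classify_interaction(r) for r in ratings])
# ['virtual_companionship', None, 'knowledge_acquisition', 'knowledge_acquisition', None]
```

Returning `None` for midpoint ratings, instead of forcing them into the nearer category, mirrors the exclusion rule described above.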

In addition, participants were shown representative use-case examples for both scenarios to minimize subjective interpretation and promote consistent responses. To reduce biases such as social desirability bias, the informed consent process explicitly assured participants that their data were anonymous and would be used solely for research purposes. This assurance of confidentiality was intended to encourage honest self-reporting and to mitigate the influence of social desirability and memory recall problems. By combining the Likert-type scale, illustrative examples, and guaranteed anonymity, we aimed to categorize the two interaction types reliably.

Altruism

To evaluate participants’ altruistic tendencies, we used the Altruism Scale developed by Rushton et al. (1981). The scale was administered to all 1,029 initial candidates: those scoring above the sample mean were classified as high in altruism and those scoring below it as low in altruism. Assessing altruism in this full pool allowed us to recruit enough participants for every combination of conditions and keep all experimental groups equal in size. The scale asks respondents how often they have performed altruistic acts, with responses ranging from Never (1) to Very Often (5). Example items include “I have helped someone whose car broke down on the road” and “I have given directions to a stranger.” The full scale is provided in the Appendix.
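The mean split can be sketched in Python. Two details are assumptions not stated in the text: that a participant’s scale score is the sum of item responses, and that scores exactly equal to the sample mean are left unassigned, since the text does not say how such ties were handled.

```python
from statistics import mean
from typing import List, Optional


def altruism_score(item_responses: List[int]) -> int:
    """Total scale score, ASSUMING a sum of 1 (Never) to 5 (Very Often) items."""
    if any(not 1 <= r <= 5 for r in item_responses):
        raise ValueError("responses must be between 1 and 5")
    return sum(item_responses)


def split_by_mean(scores: List[float]) -> List[Optional[str]]:
    """Label scores above the sample mean 'high' and below it 'low'.

    Scores exactly equal to the mean get None (an assumption; the text
    does not specify how ties were handled).
    """
    cutoff = mean(scores)
    labels: List[Optional[str]] = []
    for s in scores:
        if s > cutoff:
            labels.append("high")
        elif s < cutoff:
            labels.append("low")
        else:
            labels.append(None)
    return labels


# Hypothetical totals for five candidates; their mean is 55.
print(split_by_mean([60, 45, 52, 70, 48]))  # ['high', 'low', 'low', 'high', 'low']
```

Computing the cutoff over the whole candidate pool, as the text describes, is what makes it possible to fill every cell with equal numbers of high- and low-altruism participants.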

Intention to Share

Intention to share is conceptualized as the likelihood that an individual will disseminate rumors (So & Bolloju, 2005). Following Chua and Banerjee (2018), participants rated statements such as “I will share the message with others” and “I intend to share the message with others” on a 5-point Likert-type scale (1 = strongly disagree, 5 = strongly agree). Item scores were averaged to form a composite score for each participant, with higher scores indicating greater willingness to share the rumors.
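The composite score is simply the mean of the item ratings; a minimal Python sketch, with hypothetical responses to the two example items, looks like this:

```python
from statistics import mean
from typing import Sequence


def intention_to_share(item_scores: Sequence[int]) -> float:
    """Composite intention-to-share score: the mean of the 1-5 item ratings."""
    if not item_scores:
        raise ValueError("at least one item score is required")
    if any(not 1 <= s <= 5 for s in item_scores):
        raise ValueError("item scores must lie on the 1-5 scale")
    return mean(item_scores)


# Hypothetical answers to "I will share..." (4) and "I intend to share..." (5).
print(intention_to_share([4, 5]))  # 4.5
```

Averaging instead of summing keeps the composite on the original 1 to 5 metric, which is why the group means reported in the Results fall within that range.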

Data were analyzed using analysis of variance (ANOVA) and regression. ANOVA tested for significant differences in mean sharing intention between groups, and regression estimated how well the independent variables and their interactions predicted intention to share.
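For readers unfamiliar with the F statistic reported in the Results, the following Python sketch computes a one-way between-subjects ANOVA from scratch. It is an illustration on hypothetical toy data, not the study’s analysis script; in practice a statistics package would be used (for example `scipy.stats.f_oneway`, which also returns the p-value).

```python
from statistics import mean
from typing import Dict, List, Tuple


def one_way_anova(groups: Dict[str, List[float]]) -> Tuple[float, int, int]:
    """Return (F, df_between, df_within) for a one-way between-subjects ANOVA."""
    group_means = {name: mean(scores) for name, scores in groups.items()}
    all_scores = [x for scores in groups.values() for x in scores]
    grand_mean = mean(all_scores)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    # Between-group sum of squares: each group's size times the squared
    # deviation of its mean from the grand mean.
    ss_between = sum(
        len(scores) * (group_means[name] - grand_mean) ** 2
        for name, scores in groups.items()
    )
    # Within-group sum of squares: squared deviations from each group's own mean.
    ss_within = sum(
        (x - group_means[name]) ** 2
        for name, scores in groups.items()
        for x in scores
    )
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical toy scores, not the study's data.
toy = {"group_a": [1.0, 2.0, 3.0], "group_b": [4.0, 5.0, 6.0]}
print(one_way_anova(toy))  # (13.5, 1, 4)
```

F is the ratio of between-group to within-group mean squares, so a large F indicates that group means differ by more than the spread of individual scores within groups would lead one to expect.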

Results

Manipulation Check

Two manipulation checks were conducted. The first assessed whether participants recognized the material as rumors: at the end of the experiment, we asked participants directly whether they had recognized any of the test materials as rumors. Eleven participants reported having had some doubts about the validity of the health information but said they had nonetheless regarded it as accurate at the time. None of the remaining participants recognized the materials as rumors.

The second manipulation check assessed whether participants perceived the type of rumor (hope or fear) presented in their group’s test materials, measured on a Likert-type scale ranging from 1 (hope) to 5 (fear). The ANOVA results in Table 2 indicate that the manipulation was successful: the 300 participants in the hope group scored lower, leaning toward hope, while the 300 participants in the fear group scored higher, leaning toward fear. The difference between the groups was significant (p < .001), confirming that rumor type was effectively manipulated.

ANOVA for the Manipulation Check of Health Rumor Types.

SD = standard deviation. Mean represents the average score of perceived rumor type.

Effect of Interaction Types on Rumor Sharing Willingness

To investigate how the type of interaction with AI-generated content (AIGC) affects willingness to share health rumors, we conducted an ANOVA comparing the three interaction types (Control Group, Knowledge Acquisition, and Virtual Companionship); the results are presented in Table 3. Higher mean scores indicate a greater willingness to share health rumors. Willingness to share differed significantly across interaction types (F = 12.486, p < .001). Control Group participants had a mean score of 3.73, indicating a moderate inclination to share health rumors. Knowledge Acquisition participants scored higher (M = 3.93), indicating a stronger willingness to share, whereas Virtual Companionship participants had the lowest mean score (M = 3.48), making them the least likely of the three groups to share health rumors. These results suggest that the context of interaction with AI-generated content significantly affects people’s propensity to disseminate health rumors, with knowledge seekers showing the highest willingness to share.

ANOVA for Interaction Types and Health Rumor Sharing Willingness.

SD = standard deviation. Mean represents the average score of Health Rumor Sharing Willingness.

To locate these differences, we conducted a post hoc analysis using the least significant difference (LSD) method (Table 4). The Knowledge Acquisition group was significantly more inclined to share health rumors than the Virtual Companionship group (mean difference = 0.45, p < .001), indicating a robust difference: participants who use AI for knowledge acquisition are more likely to spread health rumors. The Knowledge Acquisition group also showed a higher willingness to share than the Control Group (mean difference = 0.20, p = .030), suggesting that even relative to a neutral baseline, knowledge seekers are more prone to share rumors. Finally, the Virtual Companionship group scored lower than the Control Group (mean difference = −0.25, p = .005), indicating that participants who engage with AI for companionship are less likely to share health rumors than those in the control group.
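Fisher’s LSD test compares each pair of group means with a t statistic that uses the pooled within-group mean square from the ANOVA. The Python sketch below illustrates that computation on hypothetical toy data; it stops at the t statistic and its degrees of freedom, since a two-sided p-value then comes from the t distribution (for example `2 * scipy.stats.t.sf(abs(t), df)`).

```python
from itertools import combinations
from math import sqrt
from statistics import mean
from typing import Dict, List, Tuple


def lsd_pairwise(
    groups: Dict[str, List[float]],
) -> Dict[Tuple[str, str], Tuple[float, float, int]]:
    """Fisher's LSD: for each pair of groups, return (mean difference, t, df)."""
    group_means = {g: mean(s) for g, s in groups.items()}
    n_total = sum(len(s) for s in groups.values())
    df_within = n_total - len(groups)
    # Pooled within-group mean square, as in the one-way ANOVA.
    ss_within = sum((x - group_means[g]) ** 2 for g, s in groups.items() for x in s)
    ms_within = ss_within / df_within
    results = {}
    for g1, g2 in combinations(groups, 2):
        diff = group_means[g1] - group_means[g2]
        se = sqrt(ms_within * (1 / len(groups[g1]) + 1 / len(groups[g2])))
        results[(g1, g2)] = (diff, diff / se, df_within)
    return results


# Hypothetical toy scores for three groups, not the study's data.
toy = {"a": [1.0, 2.0, 3.0], "b": [4.0, 5.0, 6.0], "c": [2.0, 3.0, 4.0]}
for pair, (diff, t, df) in lsd_pairwise(toy).items():
    print(pair, round(diff, 2), round(t, 3), df)
```

Because LSD applies no correction for multiple comparisons, it is usually interpreted only after a significant omnibus F, as in the sequence reported here.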

LSD Post Hoc Analysis of Interaction Types and Health Rumor Sharing Willingness.

Mean represents the average score of Health Rumor Sharing Willingness.

Contrary to Research Hypothesis 1, which posited that users in a virtual companionship scenario would be more inclined to share rumors, the ANOVA and post hoc analyses reveal a different pattern: participants in the knowledge acquisition scenario exhibited a higher willingness to share health rumors than those in the virtual companionship and control groups. Individuals who interact with AI-generated content primarily for knowledge acquisition thus appear more likely to share health rumors.

Effect of Rumor Types on Rumor Sharing Willingness

The analysis of the effect of rumor types on the willingness to share health rumors was conducted using an ANOVA, as detailed in Table 5. This analysis aimed to compare the impact of hope-type and fear-type health rumors on participants’ propensity to disseminate such information.

ANOVA for Rumor Types and Health Rumor Sharing Willingness.

SD = standard deviation. Mean represents the average score of Health Rumor Sharing Willingness.

Table 5 shows a significant difference in willingness to share between hope-type and fear-type health rumors. Participants showed a markedly higher propensity to share fear-type rumors (M = 4.26) than hope-type rumors (M = 3.03), and the large F value (F = 566.547, p < .001) indicates a strong effect of rumor type on sharing willingness.

These findings confirm Hypothesis 2, that people are more willing to share AI-generated fear-type health rumors than hope-type health rumors. This pattern holds across both scenarios, whether individuals seek knowledge or companionship from AI-generated content, indicating a general tendency to prioritize spreading fear-inducing rumors over hopeful ones.

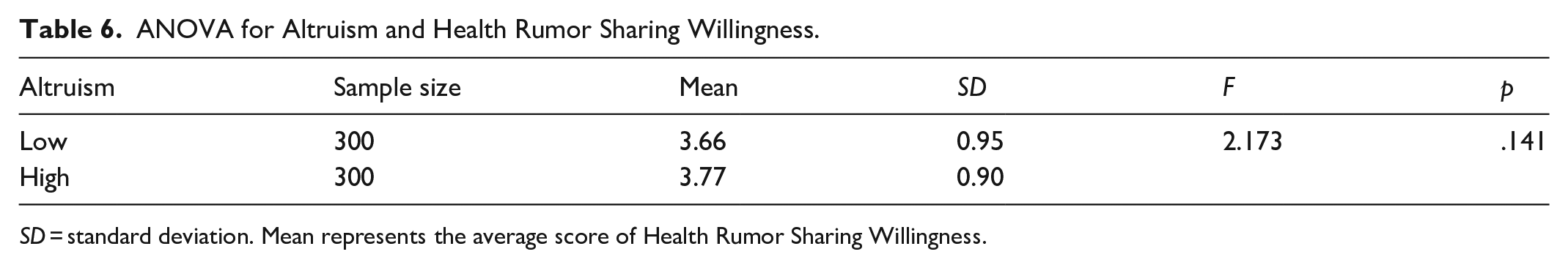

Effect of Altruism on Rumor Sharing Willingness

To explore the potential effect of altruistic tendencies on the willingness to share health rumors, an ANOVA was conducted.

Table 6 shows a small difference in willingness to share health rumors between individuals with low (M = 3.66) and high (M = 3.77) altruistic tendencies. However, this difference is not statistically significant (F = 2.173, p = .141), suggesting that, within this study, altruistic tendencies have no significant main effect on the likelihood of sharing AI-generated health rumors.

ANOVA for Altruism and Health Rumor Sharing Willingness.

SD = standard deviation. Mean represents the average score of Health Rumor Sharing Willingness.

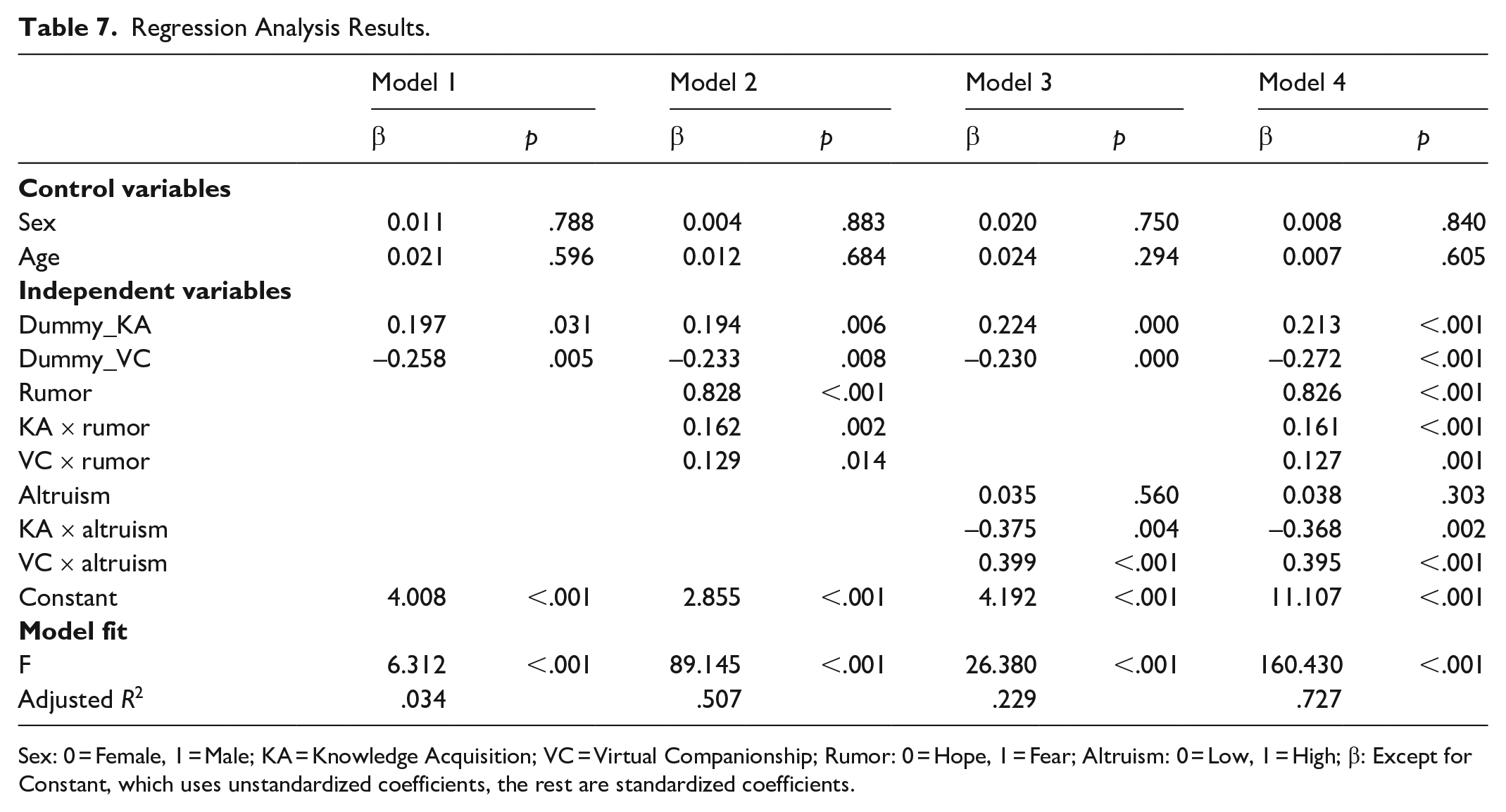

Regression Analysis Results

We then conducted a regression analysis to further examine the moderating effects of rumor type and altruism on willingness to share health rumors across interaction scenarios. This analysis provides further support for Research Hypothesis 2, that individuals are more predisposed to disseminate AI-generated health rumors that evoke fear than those that instill hope, and also addresses Research Question 1: “Do people’s altruistic tendencies influence their willingness to share AI-generated health rumors, and does this influence vary across different scenarios?” Table 7 presents the full regression results, including all key factors and their interactions.

Regression Analysis Results.

Sex: 0 = Female, 1 = Male; KA = Knowledge Acquisition; VC = Virtual Companionship; Rumor: 0 = Hope, 1 = Fear; Altruism: 0 = Low, 1 = High; β: standardized coefficients, except for the Constant, which is unstandardized.
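The coding in the table note can be illustrated with a Python sketch that builds one row of predictors, including the interaction terms, as products of the coded main effects. It assumes the control group is the reference category, since only KA and VC dummies appear; the sex and age covariates are omitted, and the function and column names are hypothetical. Fitting the model itself (for example with an ordinary least squares routine) is not shown.

```python
from typing import Dict


def design_row(interaction: str, rumor: str, altruism: str) -> Dict[str, int]:
    """Build one row of regression predictors (control group = reference).

    Coding follows the table note: Rumor 0 = hope, 1 = fear;
    Altruism 0 = low, 1 = high. Interaction terms are products of the
    dummy-coded main effects.
    """
    if interaction not in {"control", "KA", "VC"}:
        raise ValueError(f"unknown interaction type: {interaction}")
    ka = int(interaction == "KA")
    vc = int(interaction == "VC")
    rumor_code = {"hope": 0, "fear": 1}[rumor]
    altruism_code = {"low": 0, "high": 1}[altruism]
    return {
        "Dummy_KA": ka,
        "Dummy_VC": vc,
        "Rumor": rumor_code,
        "Altruism": altruism_code,
        "KA_x_Rumor": ka * rumor_code,
        "VC_x_Rumor": vc * rumor_code,
        "KA_x_Altruism": ka * altruism_code,
        "VC_x_Altruism": vc * altruism_code,
    }


print(design_row("KA", "fear", "high"))
```

With this coding, a KA × altruism coefficient describes how the knowledge acquisition effect (relative to control) differs between high- and low-altruism participants, which is how the opposite-signed moderation effects below should be read.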

Neither sex (β ranging from 0.004 to 0.020, p > .05) nor age (β ranging from 0.007 to 0.024, p > .05) significantly predicted the likelihood of sharing health rumors, suggesting that the propensity to share health rumors is not strongly associated with these demographic variables.

Knowledge Acquisition (Dummy_KA)

Individuals engaging with AI for knowledge acquisition are significantly more likely to share health rumors (β = 0.197, p = .031 in Model 1; β = 0.213, p < .001 in Model 4), highlighting the role of knowledge-seeking behavior in rumor dissemination.

Virtual Companionship (Dummy_VC)

In contrast, those seeking virtual companionship show a lower tendency to share health rumors (β = −0.258, p = .005 in Model 1; β = −0.272, p < .001 in Model 4), suggesting that emotional or social engagement with AI is associated with less rumor sharing than in the control group.

Rumor Type

The analysis confirms that fear-type rumors (coded as 1) are significantly more likely to be shared than hope-type rumors (coded as 0), with a substantial effect size (β = 0.828, p < .001 in Model 2; β = 0.826, p < .001 in Model 4). This supports Research Hypothesis 2 and indicates a strong preference for spreading fear-inducing content.

Interactions With Rumor Type

The interaction terms (KA × rumor and VC × rumor) show that the effects of knowledge acquisition and virtual companionship on rumor sharing are moderated by rumor type: in both scenarios, sharing increased further for fear-type rumors (β = 0.162, p = .002 for KA × rumor; β = 0.129, p = .014 for VC × rumor in Model 2).

Altruism

Although altruism alone does not significantly predict rumor sharing willingness (β = 0.035, p = .560 in Model 3; β = 0.038, p = .303 in Model 4), the interaction terms (KA × altruism and VC × altruism) demonstrate that altruistic tendencies significantly moderate the relationship between interaction type and rumor sharing. Specifically, altruism decreases the likelihood of sharing in knowledge acquisition contexts (β = −0.375, p = .004 in Model 3) but increases it in virtual companionship scenarios (β = 0.399, p < .001 in Model 3).

All models were statistically significant (F ranging from 6.312 to 160.430, all p < .001), and adjusted R² increased across models, indicating that the variables in the analysis explain a substantial proportion of the variance in willingness to share health rumors.
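Adjusted R² penalizes each additional predictor, so an increase across nested models suggests that the added terms improved fit beyond what adding predictors alone would produce. The standard formula is sketched below in Python; the inputs in the usage lines are hypothetical, since the models’ actual R² values appear only in Table 7.

```python
def adjusted_r_squared(r_squared: float, n: int, p: int) -> float:
    """Adjusted R^2 = 1 - (1 - R^2)(n - 1)/(n - p - 1).

    n is the number of observations and p the number of predictors,
    excluding the intercept.
    """
    if n - p - 1 <= 0:
        raise ValueError("need more observations than predictors plus one")
    return 1 - (1 - r_squared) * (n - 1) / (n - p - 1)


# Hypothetical inputs: the same raw R^2 is penalized more as p grows.
print(adjusted_r_squared(0.5, 600, 4))
print(adjusted_r_squared(0.5, 600, 12))
```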

Discussion

As artificial intelligence and deep learning technologies continue to advance, chatbots have become an important way for people to obtain health information (Ludin et al., 2022; Omarov et al., 2023; Softic et al., 2021). Beyond integrating health knowledge and information, chatbots have also been shown to provide emotional value and support as virtual companions (de Gennaro et al., 2020; Lim et al., 2022; Medeiros et al., 2022; Narynov et al., 2021; Park et al., 2023). However, previous research on health rumors has largely lacked an artificial intelligence perspective and, in particular, has not distinguished between these two usage scenarios. This study therefore explores how health rumors propagate in the context of artificial intelligence. Our review of past empirical research on health communication found that most studies of health information sharing intention draw on Heider’s classic dual attribution theory and consider only psychological and social factors (Chua & Banerjee, 2018; Guo et al., 2023; Kye et al., 2019; Xue & Taylor, 2023; Zhang et al., 2023; Zhu et al., 2023). We therefore examined whether the situational, stimulus (information characteristic), and actor factors described in Kelley’s three-dimensional attribution theory jointly influence health information sharing (Kelley, 1967, 1971, 1972, 1973). Grounded in Kelley’s attribution theory, the experiment measured people’s willingness to spread AI-generated health rumors in two scenarios, virtual companionship and knowledge acquisition, and examined whether rumor type (fear vs. hope) and people’s own altruistic tendencies affect this willingness.

First, compared with the control group, people in the virtual companionship scenario were less willing to share rumors, whereas those in the knowledge acquisition scenario were more willing, contrary to our hypothesis. This finding indicates that usage scenarios can indeed influence people’s willingness to share AI-generated health rumors, confirming that the situational factors proposed by three-dimensional attribution theory still operate in the context of artificial intelligence. Earlier comparisons of traditional and social media found that audiences who value the authenticity of information and choose traditional media are more likely to share rumors (Guo et al., 2023; Yang et al., 2023). In the domain of artificial intelligence, we find that users who value emotional support and social connection are less inclined to share health rumors, whereas those who value knowledge acquisition share information more proactively. Contrary to our prediction, people in the virtual companionship scenario were not more likely to share health information as a result of greater trust in and intimacy with the chatbot or a stronger need for social connection. We speculate that in this scenario people use chatbots to obtain emotional support and relieve personal anxiety; once the chatbot provides instant companionship that meets these emotional needs, its purpose has been fulfilled. In the knowledge acquisition scenario, by contrast, people use chatbots because they want accurate health information. Although chatbots provide information, its accuracy and reliability remain uncertain. Motivated to ascertain the facts, people may forward the information to draw attention to it and ask relatives and friends to confirm whether it is reliable. Another possibility is that people perceive the information provided by the chatbot as credible and authoritative, prompting them to share it actively and thereby close the loop from knowledge acquisition to knowledge sharing.

Second, as hypothesized, fear-type rumors elicited greater sharing intentions than hope-type rumors. Building on negativity bias theory, we posit that negative events such as fear-type rumors trigger strong emotional reactions that make people more willing to share (Taylor, 1991). On receiving fear-type health rumors from AI, people may feel anxious, uncertain, and a sense of urgency, and consequently share more proactively out of a pressing need to explain and alleviate these negative events. Chua and Banerjee (2017) noted that online and social media have made information sharing so convenient that rumor type no longer had a significant effect on willingness to share. In contrast, our results align with the predictions of negativity bias theory: fear-type rumors still have a stronger stimulating effect, even in the context of human–computer interaction. Notably, fear-type rumors had a positive moderating effect in both the virtual companionship and knowledge acquisition scenarios; that is, in either scenario, fear-type information motivated people to share more actively. It is understandable that people in the virtual companionship scenario are readily stirred by negative emotions. Interestingly, however, even users who viewed chatbots purely as information aggregation tools were more likely to share fear-type rumors. This suggests that the novelty, negativity, and anxiety-inducing character of fear-type health rumors makes them especially potent. Fear-type health information is therefore likely to be shared on a larger scale across various contexts and scenarios, which underscores the need for fact-checking mechanisms that subject such information to more rigorous and meticulous evaluation.

Third, our findings indicate that altruistic tendencies have no direct effect on willingness to share health rumors. Previous empirical studies of traditional and social media have shown that altruism may drive people to share health rumors or, conversely, motivate them to actively prevent the spread of health rumors (Adnan et al., 2022; Apuke & Omar, 2021; W. W. K. Ma & Chan, 2014; Malik et al., 2023; Tang et al., 2022). Which of these occurs may depend on whether people are aware that the information they share is a rumor, which in turn determines whether sharing can be understood as prosocial behavior. Our research, however, provides evidence that in the context of human–computer interaction, people with higher altruistic tendencies are not necessarily more proactive in sharing health rumors. We speculate that this may stem from the separation of knowledge acquisition and sharing in the context of artificial intelligence: knowledge obtained from chatbots cannot be shared through the chatbots themselves but must be passed on via social media or face-to-face communication. The effect of altruism may therefore be weakened by this time lag and by the effort of switching between platforms.

Although altruistic tendencies had no direct effect, a moderating effect emerged when the two chatbot usage scenarios were taken into account. Altruism operates in opposite directions in the two scenarios: under virtual companionship, it increases people’s willingness to share health rumors, whereas under knowledge acquisition, it decreases it. We speculate that in the virtual companionship scenario, people with high altruistic tendencies help others by building emotional connections and social relationships; when they receive health information that may benefit others, they may therefore share it out of concern, sympathy, and a desire to help with others’ health. In the knowledge acquisition scenario, by contrast, people use chatbots primarily to obtain accurate, specific, and effective health information. Given the mixed quality of information available on the Internet, these users may doubt the accuracy, reliability, and validity of what they receive and worry that spreading false health information will harm others. This is consistent with previous research showing that when people realize information is incorrect, those with higher altruistic tendencies may instead combat or prevent the spread of rumors to protect others (Tang et al., 2022). Out of a sense of responsibility and a motivation to protect others, highly altruistic people in this scenario may therefore weigh more carefully whether to share health information. This result also suggests that situational factors and personal characteristics in three-dimensional attribution theory, when considered together, may interact to jointly shape information sharing behavior.

Overall, our findings provide evidence that Kelley’s three-dimensional attribution theory can explain the mechanism behind the spread of health rumors in the context of artificial intelligence. In examining whether people are willing to share chatbot-generated rumors, we found that context, stimuli, and actors remain important influences on behavior. The relevant contextual distinction, however, shifts from traditional versus social media to virtual companionship versus knowledge acquisition: in the knowledge acquisition scenario, people are more willing to share rumors, whether for verification or for knowledge sharing. We also showed that whether the stimulus is of the fear or hope type still significantly affects willingness to share. Although the actor factor, altruistic tendency, no longer has a direct effect, it plays a moderating role to some extent in combination with the two scenarios.

Our research has both theoretical and practical significance.

Theoretically, our study contributes to emerging research on how AI-generated health rumors are shared, bringing a human–computer interaction perspective to health communication research and, in particular, to research on the spreading mechanism of health rumors. First, this study extends social media research to AI-generated content, bridging the gap between traditional health rumor research and the emerging dynamics of human–computer interaction in AI-mediated communication. By exploring how AI-generated health rumors are shared, our work highlights the evolving role of platforms as interactive tools rather than passive information repositories, contributing to a deeper understanding of platform-based communication behaviors in AI-driven health communication. Second, our findings advance Kelley’s three-dimensional attribution theory by applying it to AI-driven platforms. Unlike prior studies that relied primarily on Heider’s dual attribution framework, we emphasize the interaction among context, stimuli, and actor factors, a multidimensional approach that offers a richer lens for understanding health rumor sharing, particularly in AI-mediated environments. Third, we introduce and validate two distinct interaction scenarios: virtual companionship and knowledge acquisition. This classification moves beyond the oversimplified, generalized definitions of generative AI usage in earlier work and offers a nuanced framework for understanding user behavior, providing a reference point for examining user behavior as generative AI becomes increasingly integrated into social media platforms. Fourth, by demonstrating that fear-type health rumors exert a stronger influence on sharing behavior than hope-type rumors, our study reaffirms the applicability of negativity bias theory to AI-mediated communication and underscores the enduring role of emotional stimuli in shaping online behavior, even as communication media evolve to include AI-generated content. Fifth, our research highlights the moderating role of altruism in rumor sharing, showing that altruistic tendencies can either amplify or reduce sharing depending on the interaction scenario. Finally, our work speaks to multidisciplinary audiences, including scholars in HCI, communication studies, and psychology. By examining the behavioral and emotional dimensions of AI-mediated communication, this study provides insights into how emerging technologies influence social behaviors, platform interactions, and emotional engagement.

In practice, our study offers insights for improving social media platforms and AI systems to address the challenges posed by AI-generated health rumors. First, our findings suggest specific strategies that social media platforms, AI developers, and policymakers could use to mitigate misinformation. Because the virtual companionship and knowledge acquisition scenarios shape rumor-sharing behavior differently, interventions can be tailored to each. For emotionally charged virtual companionship scenarios, stricter moderation and monitoring of emotional content may help prevent misinformation from exploiting users’ emotional engagement. In contrast, knowledge acquisition scenarios call for advanced fact-checking mechanisms integrated into AI systems to improve the reliability of shared information. These mechanisms could include real-time recognition of emotionally charged or fear-based content, enabling chatbots to flag potentially harmful information and provide users with verified alternatives or disclaimers. Second, this research highlights the importance of addressing fear-inducing health rumors. Given their strong impact on sharing behavior, social media platforms and AI developers should prioritize the detection and correction of fear-based misinformation. Algorithms capable of identifying linguistic markers of fear in real time could be integrated into chatbots and platforms, allowing for immediate intervention. These systems could also incorporate interactive elements, such as contextual explanations or links to trusted health resources, to counteract the emotional pull of fear-type rumors and limit their dissemination. Third, our findings highlight the significant moderating role of altruistic tendencies in rumor-sharing behavior, a role that differs by interaction scenario.
In virtual companionship scenarios, altruistic users are more likely to share health rumors, possibly because they want to help or emotionally support others. Platforms could introduce features such as prompts or pop-ups encouraging users to verify information before sharing, particularly for emotionally charged or unverified health claims. In knowledge acquisition scenarios, where altruistic users are less willing to share and appear more cautious, integrating interactive fact-checking tools or verification systems could enable users to cross-check health information in real time, fostering critical thinking and reducing the spread of misinformation. Finally, although our sample consisted of young, tech-savvy users, our findings can guide the development of interventions tailored to diverse demographic groups. Social media platforms and AI developers should implement features that address variations in digital literacy, such as user-friendly fact-checking tools and step-by-step guides on evaluating health information. For older users or those less familiar with AI technologies, platforms could provide simplified interfaces and video tutorials to enhance their ability to critically assess AI-generated content. For culturally diverse audiences, developers can design chatbots and platform features that recognize linguistic and contextual nuances, so that misinformation detection systems can adapt to regional and cultural variations.

Conclusion

Our study examines people’s willingness to share AI-generated health rumors in two scenarios of interaction with generative AI: virtual companionship and knowledge acquisition. In both scenarios, we examined how willingness to share relates to the type of health rumor (fear vs. hope) and to people’s altruistic tendencies. Our study provides empirical support for Kelley’s three-dimensional attribution model and negativity bias theory, extending their applicability to empirical research on AI. Our results show that people are more willing to share AI-generated health rumors in the knowledge acquisition scenario, and that fear-type rumors prompt more active sharing than hope-type rumors. Although altruistic tendency does not directly affect sharing behavior, it has opposite moderating effects in the two scenarios: in the virtual companionship scenario, altruistic tendency makes people more willing to share rumors, whereas in the knowledge acquisition scenario it makes them less willing. These findings provide new perspectives on the mechanisms by which health rumors spread in the context of artificial intelligence, offering a theoretical basis for improving chatbots that provide health information and for establishing fact-checking mechanisms for AI-generated content.

However, our study also has limitations. First, our participants were 600 undergraduate students from three universities in eastern China, which may limit the generalizability of our findings. Future studies should include participants from more diverse academic disciplines, cultural backgrounds, and age groups to ensure broader applicability of the results. Second, the categorization of participants into virtual companionship and knowledge acquisition scenarios relied on self-reported data collected with a Likert-type scale, which may introduce biases such as inaccurate recall. Future studies could integrate behavioral data for greater accuracy. Third, participants watched pre-recorded videos of GPT generating health-related information rather than interacting with the AI in real time. This controlled setup ensured consistency but did not capture the dynamic and iterative nature of real-world chatbot interactions. Future research could examine real-time interactions to improve ecological validity. Despite these limitations, our findings provide a foundation for understanding AI-driven health rumor sharing and offer insights for improving AI health information systems.

Acknowledgements

We thank all the participants in the study.

Data Availability

Data will be made available on request.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethical Statement

The studies involving human participants were reviewed and approved by the Ethics Committee of Shanghai Jiao Tong University. The participants provided their written informed consent to participate in this study.