Abstract

The online harassment of female politicians who focus on climate change and environmental policy has become a major problem in Canada and other democratic nations. Despite growing awareness of the problem, there is little agreement among scholars on how to measure these nuanced forms of harassment. This study develops an original seven-point scale to measure the severity of harassment three Canadian female politicians receive when Tweeting about climate change and a six-point schema to categorize the types of accounts behind the replies. My results reveal that 86% of replies contained some form of harassment, most often name-calling or questioning the authority of the female politicians, and that these replies most often came from users with spam or anonymous accounts. Further results from my Bayesian hierarchical model suggest that despite differences in status and political affiliation across the three politicians, they are almost equally impacted by harassment when Tweeting about climate change. These findings contribute to understanding the intersection between climate change denialism and the gendered nature of online harassment. This article contains language and themes that some readers may find offensive.

Introduction

“F*ck off with your climate scam . . . nazi traitor b*tch,” reads a reply sent to a Tweet about climate change that originated on the account of Canada’s Deputy Prime Minister (DPM) and Minister of Finance, the Honorable Chrystia Freeland. For DPM Freeland and women across all political parties and levels of government in Canada and other democratic nations, coping with online and offline harassment is a reality of being an elected official (Southern & Harmer, 2021). Known as “gendertrolling,” this harassment is part of a global trend of violence against women (Mantilla, 2013; Wagner, 2020). Gender-based harassment on Twitter is well documented in academic literature, and inexplicably, pro-environmental behaviors are often viewed as “feminine” (Anshelm & Hultman, 2014; Citron, 2014; Vickery & Everbach, 2018). Female politicians who adopt strong policy stances are more likely to face online harassment because doing so is inconsistent with existing gender stereotypes and notions of power and poses a threat to male climate deniers (de Geus et al., 2021; Rheault et al., 2019).

To gain a deeper understanding of the intersection of climate change denialism and online gendered-harassment faced by Canadian female politicians, I collected and manually coded replies to Tweets from DPM and MP for University–Rosedale (Ontario), Hon. Chrystia Freeland (from the Liberal Party), MP for Saanich–Gulf Islands (British Columbia), Elizabeth May (Leader of the Green Party), and MP for Victoria (British Columbia), Laurel Collins (from the New Democratic Party [NDP]) that specifically discuss climate change and environmental policy. Replies were coded using a seven-point scale to measure the severity of harassment and a six-point schema to categorize the types of accounts behind the replies. I then built a Bayesian hierarchical model to explore the relationship between the type of account, whether a user was verified through Twitter’s subscription program, and which female politician the reply was directed toward, to see whether certain combinations of these variables are more likely to lead to a harassing reply.

My analysis reveals that 86% of all replies contain some form of harassment. Questioning authority followed by name-calling/gender insults were the most common forms identified, accounting for over 80% of all replies. Harassing replies were most likely to be sent by spammers or anonymous accounts. My Bayesian hierarchical model suggests that there are no significant quantitative differences between the severity of harassment received by the three politicians, although qualitative differences were detected during the coding process.

This study contributes to our knowledge of the online harassment faced by female politicians by introducing a seven-point scale to measure the severity of harassment and a six-point schema to categorize the types of accounts behind the replies. The third category of harassment, questioning authority, is a specific contribution, as it is a phenomenon recognized in the literature (Krook, 2020), but not included in coding schemes designed to measure harassment. Finally, this study advances our understanding of climate change denialism and online misogyny, revealing that the intersection of these two areas is toxic for female politicians and democratic discourse.

Literature Review

Twitter and the Digital Public Sphere

The notion of having a public sphere for political deliberation is as old as Athenian democracy itself (Papacharissi, 2002). The public sphere is a place where “. . . society engages in critical public debate” (Habermas, 1989, p. 52) and meaning is “. . . generated, circulated, contested, and reconstructed” (Fraser, 1995, p. 287). Through Habermas’ conceptualization, the public sphere tends to magnify the voices of the political elites (traditionally White men), while diminishing and even vilifying the voices of minorities, traits that have been transposed into the digital public sphere (Vickery & Everbach, 2018). The past few decades have brought the structural transformation and fragmentation of the public sphere, moving from a singular, “official” public sphere to a multitude of parallel smaller ones, called “subaltern counterpublics” and networked publics mediated by technological affordances (boyd, 2010; Bruns & Highfield, 2015; Fraser, 1991). Technology and social media reorganized the flow of information in society, theoretically breaking down barriers of who has access to the walled garden of democratic discourse (Trifiro et al., 2021; Vickery & Everbach, 2018). Despite the potential for the formation of new publics online, including issue-specific publics driven by shared policy interests such as climate change, the leading voices in defensive networked publics largely continue to be White, male, and Christian, with the free time (often dictated by patriarchal norms) to actively contribute to discussions (Bruns, 2023; de Geus et al., 2021; Jackson & Kreiss, 2023; Mantilla, 2013). Networked publics use trolling and harassment to undermine the authority of female politicians whose actions do not fit traditional gender roles and conceptions of power (Mantilla, 2015; Marwick, 2021; Megarry, 2014). Consequently, women have been and continue to be on the margins of the public sphere, both in physical spaces like the House of Commons and online. This is underscored by an Inter-Parliamentary Union (2022) global survey which found that 82% of female politicians reported being subjected to psychological violence (including harassment, threats, and sexist comments). In Canada, specific examples of ongoing marginalization can be seen through the lived experiences of women in politics, with only one female Prime Minister to date (the Rt. Hon. Kim Campbell) and only 30% of federal MPs being female (House of Commons, 2024).

The original mindset for the development of the internet in the second half of the twentieth century was one of utopianism and democratic renewal—a new space for civil conversations (Papacharissi, 2002, 2004). However, early 2000s political communication theorists often overlooked the aims and agendas of the people who engineered these technologies (Papacharissi, 2004). Megarry (2020) adds that the internet was seen as the “last frontier” for male domination, much like the classic image of the American Wild West. The behaviors and norms of the Wild West continue to play out through the actions of male tech CEOs, such as Elon Musk, and through the way platforms are governed. While often lacking in application, social media platforms including Twitter have tended to slowly adopt content-moderation and community safety policies (Dubois & Reepschlager, 2024). This is specifically visible with Musk firing Twitter’s Chief Content Moderator, the Trust and Safety Council, and other content-moderation staff, leading to limited content moderation and more harassing content circulating on the platform (Hickey et al., 2023).

Harassment of Female Politicians and Climate Leaders

Gender-based harassment on Twitter is well documented in the literature (Bjarnegård & Zetterberg, 2023; Mantilla, 2013; Vickery & Everbach, 2018). Previous research has started distinguishing traits of harassment based on a politician’s status and visibility in politics and across traditional and social media. Blatant harassment is most likely to impact politicians of higher status and visibility (Rheault et al., 2019), while harassment of backbench MPs is often through subtle microaggressions (Harmer & Southern, 2021). Both Collignon and Rüdig (2020) and Erikson et al. (2021) found that the intersection between a politician’s age, gender, and political affiliation largely determines the type and frequency of harassment they face, leading to younger, female politicians more frequently receiving harassing messages than older politicians. Female politicians of color often face more harassment than any other group (Sobieraj, 2020).

To further contextualize this harassment, previous research and the lived experiences of female politicians emphasize connections between climate change denialism and online misogyny (Anshelm & Hultman, 2014). A key example highlighting this intersection is the experience of former Canadian Minister of the Environment and Climate Change, Hon. Catherine McKenna, who was called “Climate Barbie” on Twitter by former Conservative MP Gerry Ritz in 2017, leading to relentless online and offline harassment (Raney & Gregory, 2019). While mitigating the impacts of climate change is a priority for two-thirds of Canadians and Prime Minister (PM) Trudeau’s government, it is increasingly becoming a divisive and polarizing issue, fueled by disinformation/misinformation (Coletto, 2021; de Nadal, 2024). Male climate deniers often have more leisure time than women, and they view making small behavioral modifications to help offset climate change as “feminine” (Anshelm & Hultman, 2014; Holmberg & Hellsten, 2015). Therefore, when female politicians tell the public to modify their behavior, male trolls feel doubly threatened, leading to severe and persistent forms of online harassment to discredit the female politicians and uphold the status quo (Mantilla, 2015). Consequently, it is hypothesized that the replies to Tweets that originated on the three female politicians’ accounts will contain more severe forms of harassment (

Types of Accounts and Harassment

Identifying connections between types of accounts and forms of online harassment has previous research precedents. Users who have accounts with identifiable information and those with anonymous accounts are equally likely to send uncivil Tweets (Theocharis et al., 2020; Trifiro et al., 2021; Ward & McLoughlin, 2020), while users with anonymous and pseudonymous accounts are more likely to spread disinformation (McKay & Tenove, 2021). Mantilla (2015) emphasizes that gendertrolls are more likely to use spammer or anonymous accounts to send harassing messages. Uddin et al. (2014) found that spammers are most likely to spread more uncivil and harmful content, while personal users could sometimes mimic the behavior of spammers. Moreover, professional users are most likely to be involved in civil discussions (Uddin et al., 2014). Research emphasizes that bots can share helpful information (Dubois & McKelvey, 2019); however, they often amplify Tweets containing defamatory and uncivil language (Trifiro et al., 2021). Considering the literature, I have hypothesized that all types of accounts are pre-disposed to send harassing replies, but personal, anonymous, and spammer accounts will send the most replies containing harassment (

Theoretical Framework

By employing the theory of deliberative democracy, this article analyzes replies to Tweets sent by female politicians which explicitly discuss climate and environmental policy to evaluate the extent to which they fulfill the characteristics of healthy democratic discourse. Scholars note that the multiple viewpoints and “bottom-up” activism which accompany climate change debate make it an important policy issue for testing the strengths and weaknesses of the theory in the contemporary networked political ecosystem (Katz, 2014), specifically focusing on how platform affordances impact the quality and quantity of discourse (Collins & Nerlich, 2015; Treen et al., 2022). Recently, scholars have re-conceptualized the formation of the public sphere, evaluating the role of platforms with promised democratizing qualities, disinformation/misinformation, and how these mediums impact the original notion of deliberative democracy (Bruns, 2023; Slavtcheva-Petkova, 2023).

At its core, deliberative democracy “is a normative theory of democratic legitimacy based on the idea that those affected by a collective decision have the right, opportunity, and capacity to participate in consequential deliberation about the content of decisions” (Ercan et al., 2019, p. 23). Democratic legitimacy is established by allowing the public to collectively participate in respectful and reasonable deliberation about consequential decisions, while recognizing that there are multiple viewpoints on every issue (McKay & Tenove, 2021; Pain & Masullo Chen, 2019).

Diverging scholarly viewpoints exist when operationalizing and applying the theory of deliberative democracy. Some scholars view the theory in rigid terms, evaluating all characteristics and scenarios, such as political parties, systems, and institutions on a “yes” or “no” basis (Sokolon, 2019). However, as Rossini (2022) suggests, the theory can be viewed on a scale, looking at the extent to which healthy democratic speech exists in specific contexts. In this article, I adopt a “to what extent” perspective, following Rossini (2022). This perspective aligns with the scale I developed to measure the severity of harassment on the basis that democratic discourse is nuanced, and that shades of civil and uncivil speech can simultaneously exist, but not to the extent of female politicians self-censoring out of fear for their physical and psychological safety (Sobieraj, 2020). Results from my data analysis and model will be evaluated to see the extent to which the discourse among the replies in my dataset can be considered healthy for Canadian democracy.

Data and Methods

Data Collection and Cleaning

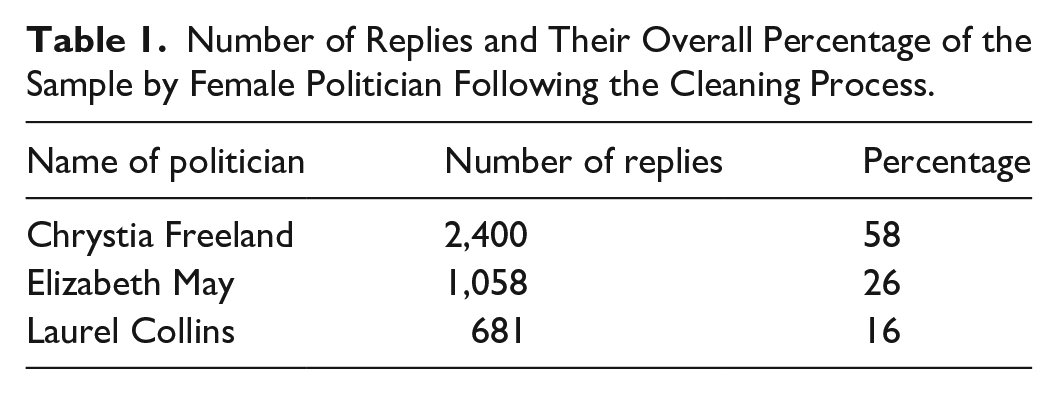

All data were collected via paid access to Twitter’s Basic v2 API endpoint using the statistical programming software R (R Core Team, 2023). Using the advanced search feature, I identified all original Tweets written in English, which contain the words “climate,” “environment,” and/or “environmental” that originated on the accounts of DPM Freeland (@cafreeland), MP May (@elizabethmay), and MP Collins (@laurel_bc) that were posted between September 1, 2022, and October 31, 2023. For threaded Tweets sent by the politicians, the first Tweet of the thread had to contain at least one of the key words, and the overall focus of the thread had to be on climate change and/or environmental policy. Key words were checked for applicability by reading through the entire Tweet and opening any links and media, given the use of words such as “climate” and “environment” to reference geopolitics and economic situations and not actual climate or environmental policy. After eliminating Tweets that did not match the criteria, I collected 11 original Tweets from DPM Freeland, 32 original Tweets from MP May, and 25 original Tweets from MP Collins. Between all the original Tweets and replies, there were 2,424 Tweets for DPM Freeland, 1,094 Tweets for MP May, and 711 Tweets for MP Collins for a total of 4,229. Replies sent in languages other than English (such as French, Ukrainian, and Arabic), replies sent by the politicians to their own threaded Tweets, and replies sent by MPs May and Collins to other users in response to their original Tweets were all removed. The replies sent by MPs May and Collins to other users were removed upon analysis because they re-iterated the same political messaging as the original Tweet, but any user replies to those replies remain in the dataset. Table 1 shows the final number of replies. This distribution of the number of replies by a politician aligns with the finding of Rheault et al. (2019) that status and visibility impact the quantity of replies received by Canadian politicians.
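The automatic part of the selection criteria above can be sketched as a simple filter. This is a minimal illustration in Python rather than the R workflow used in the study; the dictionary fields (`text`, `lang`, `is_reply`, `created`) and the two sample Tweets are hypothetical stand-ins, not the Twitter API's actual schema or real data. The manual relevance check described above would still have to follow, since a keyword match alone cannot tell climate policy from, say, the "economic climate."

```python
from datetime import date

# Keywords and collection window as stated in the text.
KEYWORDS = ("climate", "environment", "environmental")
START, END = date(2022, 9, 1), date(2023, 10, 31)


def is_candidate(tweet: dict) -> bool:
    """Return True if a Tweet passes the automatic selection criteria.

    `tweet` is a hypothetical record with keys: text, lang, is_reply, created.
    """
    text = tweet["text"].lower()
    return (
        tweet["lang"] == "en"                        # English only
        and not tweet["is_reply"]                    # original Tweets only
        and START <= tweet["created"] <= END         # inside the study window
        and any(word in text for word in KEYWORDS)   # at least one keyword
    )


# Hypothetical examples (not taken from the dataset).
sample = [
    {"text": "Our climate plan is working.", "lang": "en",
     "is_reply": False, "created": date(2023, 3, 14)},
    {"text": "Notre plan pour le climat.", "lang": "fr",
     "is_reply": False, "created": date(2023, 3, 14)},
]
candidates = [t for t in sample if is_candidate(t)]
```

Candidates returned by such a filter would then be read in full, with links and media opened, before being kept or discarded.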

Number of Replies and Their Overall Percentage of the Sample by Female Politician Following the Cleaning Process.

DPM Freeland was selected because her current senior ministerial portfolios put her in charge of key climate and environmental initiatives, a priority of PM Trudeau’s government (Prime Minister of Canada, 2021). MP May was selected because she is the Leader of the Green Party of Canada and had a long career prior to politics as an environmental lawyer (May, 2024). MP Collins was selected because she is the NDP’s Critic for Environment and Climate Change and ran an environmental justice organization before seeking elected office (Collins, 2024). Moreover, these three politicians were selected because of evidence of harassing replies, their diversity in regional representation, and their current positions within their caucuses, which afford them power in assisting with the development of policy positions and messaging on climate change. This is a limited privilege given the high level of centralization and message control by party leaders in Canadian politics (Marland, 2016). Only 6% of female MPs identify as a visible minority or Indigenous and do not frequently discuss climate change on Twitter, which is why my sample contains only White MPs (House of Commons, 2024).

Data Coding

My codebook (see Supplemental Material) and seven categories for severity of harassment (positive, neutral, questioning authority, name-calling/gender insults, vicious language, credible threats, and hate speech) were developed by consulting relevant literature and reviewing a sample of replies. Research published by Mantilla (2013, 2015), Wagner (2020), and Nadim and Fladmoe (2021) informed my thoughts surrounding how to measure harassment and many of the categories on the scale (name-calling/gender insults to hate speech), while work from Krook (2020) foremost motivated the questioning authority category. The placement of hate speech as the most severe category was informed by the Canadian criminal code (Tenove et al., 2018), while the categories that contain no harassment, positive and neutral, were included on the scale to account for the wide range of expressions in the replies (Tenove et al., 2023). Furthermore, I qualitatively reviewed a sample of 300 randomly selected replies sent to all three politicians, and following the approach of Tenove et al. (2023), I selected 100 replies from my dataset which contained keywords frequently associated with harassment in the literature (such as b!tch and Nazi). This seven-point scale, especially the questioning authority category, is helpful for bringing awareness to and categorizing nuanced forms of harassment. When employing machine learning models to code harassment, these more nuanced forms of harassment are sometimes misclassified as not containing harassment; however, they are forms of harassment that have a negative impact on women serving in elected office (Harmer & Southern, 2021). My seven-point scale also combines the nuance from previous qualitative projects that employ content analysis and interviews, while recognizing the benefits of more quantitative approaches. 
The six categories for type of account (personal, professional, bots, spammers, anonymous, and suspended/deleted) were developed by primarily reviewing research from Singh et al. (2018), Uddin et al. (2014), Dubois and McKelvey (2019), and Trifiro et al. (2021), and by manually analyzing account metadata from 400 randomly selected accounts in my dataset.

To determine the severity of harassment, each coder read through the text of the reply, looking for keywords, emojis, and media indicated in the codebook as being associated with each category of harassment. Coders then opened any URLs and embedded images, gifs, or videos as part of the analysis. All aspects of the Tweet, including text, emojis, hashtags, and embedded media were considered equally when determining the severity of harassment. For replies that contained multiple forms of harassment, coders were instructed to code the reply as the most severe category on the seven-point scale. To identify the type of account, coders then looked at the account metadata linked in the dataset and searched for the account on Twitter, checking how frequently a user posted and replied to Tweets. The codebook provides descriptions of characteristics that are frequently associated with each type of account, including features of users’ names, bios, and profile pictures. Both variables were coded mutually exclusively; therefore, only one of the seven categories of harassment was selected per reply, and only one of the six types of accounts was selected per account.
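The "most severe category wins" rule can be expressed compactly. The sketch below is in Python and is only an illustration of the decision rule, not the coders' actual procedure, which was manual; the function name `final_code` is hypothetical, and the ordering of the scale follows the order in which the seven categories are listed above, with hate speech as the most severe.

```python
# Seven-point scale, ordered from least to most severe as listed in the text.
SEVERITY_SCALE = [
    "positive",
    "neutral",
    "questioning authority",
    "name-calling/gender insults",
    "vicious language",
    "credible threats",
    "hate speech",
]
RANK = {name: position for position, name in enumerate(SEVERITY_SCALE)}


def final_code(observed: list[str]) -> str:
    """Return the single code for a reply: the most severe category observed."""
    if not observed:
        raise ValueError("a reply must match at least one category")
    return max(observed, key=RANK.__getitem__)


# A reply that both questions authority and uses vicious language
# is coded once, under the more severe of the two categories.
code = final_code(["questioning authority", "vicious language"])
```

Because each reply receives exactly one code, category counts sum to the number of replies and percentages can be read as shares of the sample.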

To ensure validity, an undergraduate research assistant coded a random selection of 10% (413) of the total number of Tweets in the sample. Intercoder reliability was measured between two coders using the R package irr (Gamer et al., 2019). Moderate levels of agreement were reached, according to Krippendorff (2019), with α ⩾ .684 for severity of harassment and α ⩾ .691 for type of account.
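For readers unfamiliar with the statistic, the following is a minimal sketch of Krippendorff's α for two coders with no missing codes. It is written in Python rather than with the irr package used in the study, and it assumes the nominal distance metric; whether the reported values were computed with a nominal or ordinal metric is not stated here, so the choice is an assumption. The example codes are hypothetical.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(coder_a: list, coder_b: list) -> float:
    """Krippendorff's alpha for two coders, nominal data, no missing values."""
    if len(coder_a) != len(coder_b):
        raise ValueError("both coders must code the same units")

    # Coincidence matrix: each unit contributes both ordered pairs of its codes.
    coincidences = Counter()
    for a, b in zip(coder_a, coder_b):
        coincidences[(a, b)] += 1
        coincidences[(b, a)] += 1

    # Marginal totals per category and the total number of pairable values.
    totals = Counter()
    for (category, _other), count in coincidences.items():
        totals[category] += count
    n = sum(totals.values())

    # Observed and expected disagreement (off-diagonal mass).
    observed = sum(cnt for (c, k), cnt in coincidences.items() if c != k)
    expected = sum(totals[c] * totals[k] for c, k in permutations(totals, 2))
    if expected == 0:
        raise ValueError("alpha is undefined when only one category is used")
    return 1 - (n - 1) * observed / expected


# Hypothetical codes for four replies (1 = harassment, 0 = none).
alpha = krippendorff_alpha_nominal([1, 1, 0, 0], [1, 0, 0, 0])
```

Values near 1 indicate near-perfect agreement, and values near 0 indicate agreement no better than chance.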

Data Limitations

A major limitation of using Twitter data is the inability to determine a representative sample of voting-age Canadians, limiting our ability to draw generalizable results about public opinion (Bermingham & Smeaton, 2011). Selection bias exists because Twitter tends to represent the views of people who are “. . . more partisan, polarized, and uncivil” and motivated to be active users (McGregor, 2020, p. 237). We are also limited by the information users choose to publicly display on their profiles, making it hard to confirm if an individual is Canadian or other identifying demographic information (Holmberg & Hellsten, 2015).

Moreover, Twitter data are collected as a snapshot in time, often reducing users to a single interaction on the platform, meaning we cannot see their full range of political expressions and frequency of certain behaviors, leading to potential measurement bias. Consequently, with context collapse that happens when an individual user’s Tweets are taken out of the context and imagined audience they were intended for, users’ true beliefs may be misrepresented (Marwick & boyd, 2011). Some users may also show up more than once if they are prolific Twitter users, leading to overrepresentation of their views and type of account. With these identified limitations in mind, results should be considered in the context of this study, and generalizations can only be reflective of the users in the dataset and not the wider Canadian public.

There are several restrictions placed on the storage and sharing of data collected via Twitter’s API, limiting the reproducibility of this research project (Alexander, 2023; Twitter Developer, 2023). Datasets, even if personally identifying information such as usernames and locations has been removed, cannot be uploaded to open source platforms like GitHub.

Results

Severity of Harassment

Among the 4,139 replies to the three politicians, 2,284 (55%) were identified as questioning authority, 1,060 (26%) as name calling/gender insults, and 542 (13%) as neutral (see Table 2). One hundred forty-four (3%) contained vicious language, while positive, credible threats, and hate speech all, respectively, accounted for 1% of the replies detected. For all politicians, the most frequently selected categories are questioning authority, followed by name-calling/gender insults, and neutral (see Figure 1).

Breakdown of Severity of Harassment on the Seven-Point Scale Detected in Replies to All Three Politicians.

Severity of harassment in the replies received, broken down by politician.

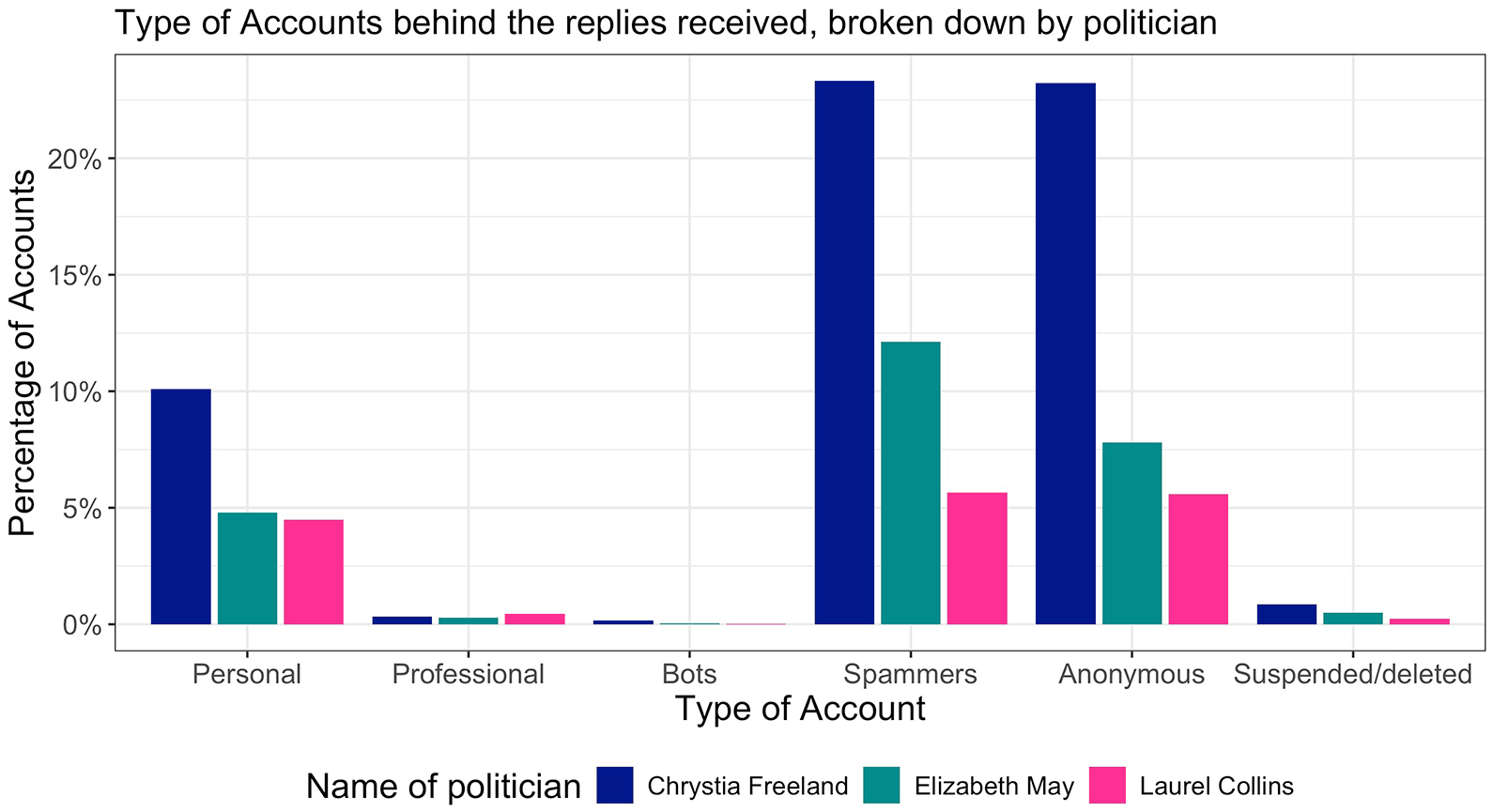

Type of Account

Table 3 summarizes the distribution of types of accounts identified. Spammers accounted for 1,701 (41%) of all accounts, followed by 1,515 (37%) anonymous accounts, and 802 (19%) personal accounts. Small numbers of suspended/deleted (2%), professional (1%), and bots (0%) were detected (see Figure 2). While some users sent multiple replies to one, two, or all the female politicians, over 75% of users only replied once, aligning with previous results from Theocharis et al. (2020) and Ward and McLoughlin (2020).

Breakdown of Type of Accounts Detected in Replies to All Three Female Politicians.

Type of accounts behind the replies received, broken down by politician.

Figure 3 highlights the relationship between severity of harassment and type of account for all politicians. For questioning authority and name-calling/gender insults, the replies to DPM Freeland were most likely to come from anonymous users, while replies to MPs May and Collins were most likely to come from spammers. For neutral, the replies to DPM Freeland were most likely to be sent by spammers, while personal accounts most frequently sent replies to MPs May and Collins.

Relationship between severity of harassment and type of account, broken down by politician.

Chrystia Freeland

For DPM Freeland, 1,189 (50%) of her replies were categorized as questioning authority, followed by name-calling/gender insults with 678 (28%), and 356 (15%) neutral replies (see Table 4). Ninety-nine (4%) of her replies contained vicious language, with the remaining categories of positive, credible threats, and hate speech each accounting for 1% of the total replies. The fewest replies in DPM Freeland’s sample were categorized as positive, with hate speech being more prominent, which illustrates the increasingly toxic nature of discourse directed at DPM Freeland.

Breakdown of the Severity of Harassment on the Seven-Point Scale Detected Among Replies to DPM Freeland.

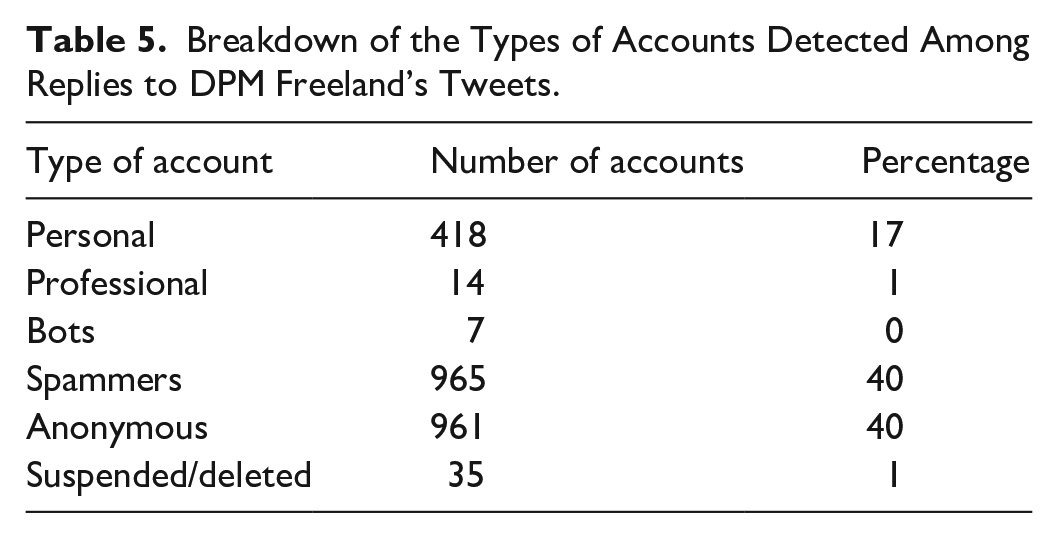

As illustrated by Table 5, spammers and anonymous accounts made up 80% of all identified accounts, followed by 418 (17%) personal accounts. Very small numbers of professional, bots, and suspended/deleted accounts were detected.

Breakdown of the Types of Accounts Detected Among Replies to DPM Freeland’s Tweets.

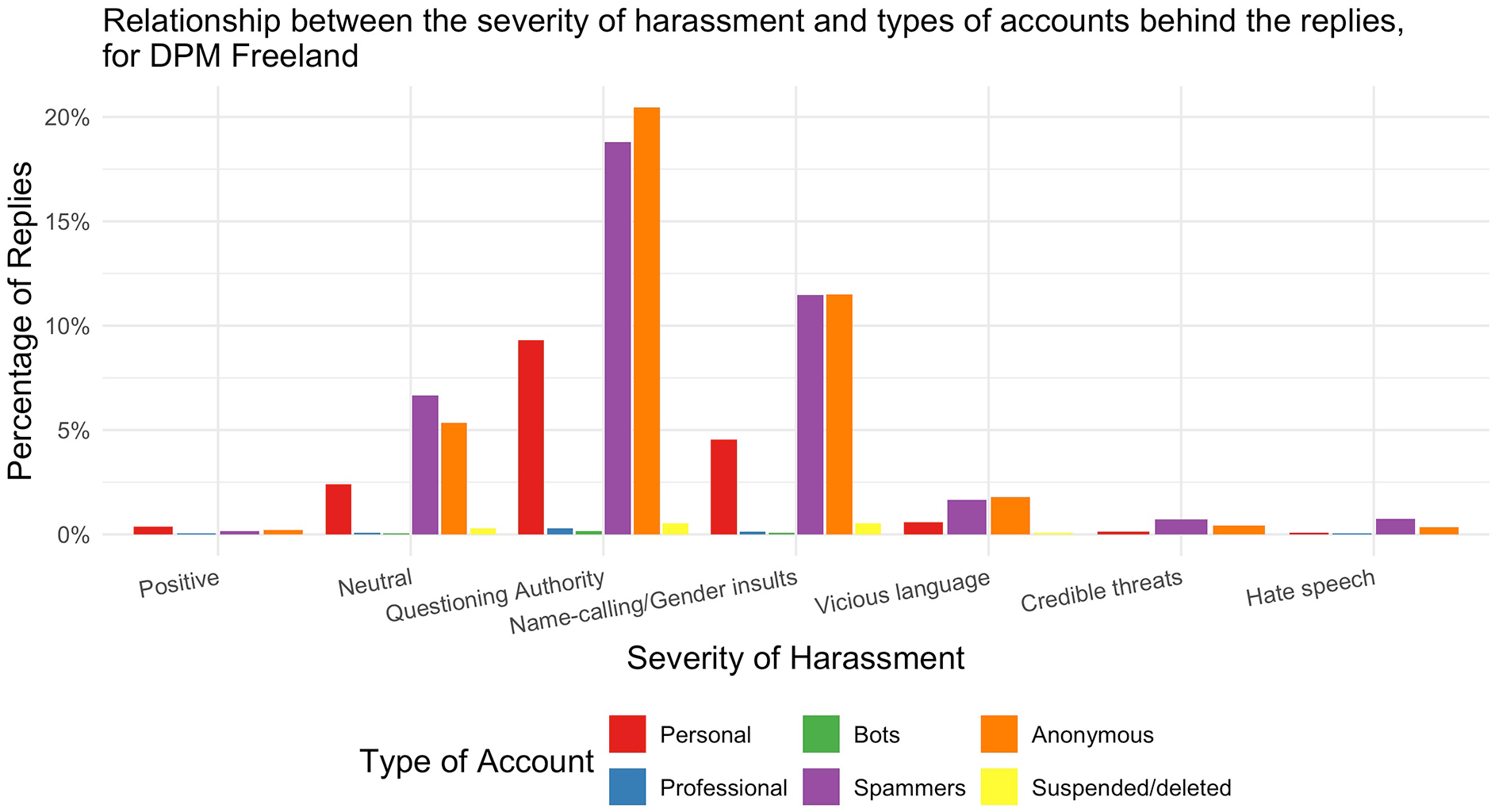

Relationship between severity of harassment and types of accounts detected among replies to DPM Freeland’s Tweets.

For DPM Freeland, the relationship between severity of harassment and type of account can be seen in Figure 4. Positive and neutral replies were most frequently sent by personal accounts, while replies questioning authority and name-calling/gender insults most frequently came from anonymous accounts, closely followed by spammers. Anonymous accounts, closely followed by spammers, were responsible for sending the most replies containing vicious language, while credible threats and hate speech were most often sent by spammers.

Elizabeth May

Among the replies to MP May’s Tweets, 583 (55%) were categorized as questioning authority, 295 (28%) as name-calling/gender insults, and 133 (13%) as neutral (see Table 6). Twelve (1%) of the replies in MP May’s sample were positive, and 1 (0%) reply fell into each of the credible threats and hate speech categories.

Breakdown of the Severity of Harassment on the Seven-Point Scale Detected Among Replies to MP May’s Tweets.

Table 7 emphasizes that 502 (47%) of the accounts in MP May’s sample belong to spammers, followed by 323 (31%) anonymous accounts, and 198 (19%) personal accounts. Moreover, 21 (2%) accounts in MP May’s sample are suspended/deleted, 12 (1%) were professional, and 2 (0%) bots.

Breakdown of the Types of Accounts Detected Among Replies to MP May’s Tweets.

The relationship between severity of harassment and type of account in replies sent to MP May can be seen in Figure 5. Positive and neutral replies were most frequently sent by personal accounts, while replies questioning authority and name-calling/gender insults most frequently came from spammers. Anonymous accounts were responsible for sending the most replies containing vicious language, while credible threats were only sent by anonymous accounts, and hate speech was only sent by spammers.

Relationship between severity of harassment and types of accounts detected among replies to MP May’s Tweets.

Laurel Collins

Of the replies in MP Collins’ sample, 512 (75%) were categorized as questioning authority, 87 (13%) as name-calling/gender insults, and 53 (8%) as neutral. Table 8 shows that the remaining replies were categorized as positive (13 or 2%), vicious language (12 or 2%), and credible threats and hate speech (2 replies per category or 0%).
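Because all seven counts are reported for MP Collins, the percentages can be checked directly. The short Python sketch below recomputes them from the counts given above; the variable names are illustrative, and the total of 681 is simply the sum of those seven counts.

```python
# Counts for MP Collins as reported in the text.
collins_counts = {
    "questioning authority": 512,
    "name-calling/gender insults": 87,
    "neutral": 53,
    "positive": 13,
    "vicious language": 12,
    "credible threats": 2,
    "hate speech": 2,
}

total = sum(collins_counts.values())  # 681 replies

# Share of each category, rounded to the nearest whole percent.
shares = {
    category: round(100 * count / total)
    for category, count in collins_counts.items()
}

for category, pct in shares.items():
    print(f"{category}: {collins_counts[category]} ({pct}%)")
```

The rounded shares reproduce the reported figures (75%, 13%, 8%, 2%, 2%, 0%, and 0%).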

Breakdown of the Severity of Harassment on the Seven-Point Scale Detected Among Replies to MP Collins’ Tweets.

For MP Collins, 68% of the accounts were categorized as either spammers or anonymous, followed by personal (186 or 27%). As shown in Table 9, the remaining accounts were categorized as professional (19 or 3%), suspended/deleted (10 or 1%), and bots (1 or 0%).

Breakdown of the Types of Accounts Detected Among Replies to MP Collins’ Tweets.

Figure 6 emphasizes the relationship between severity of harassment and type of account. Positive, neutral, and credible threat replies were most frequently sent by personal accounts, while replies questioning authority and name-calling/gender insults most frequently came from spammers, closely followed by anonymous accounts. In addition, anonymous accounts were responsible for sending the most replies containing vicious language, while hate speech was equally sent by spammers and anonymous accounts.

Relationship between severity of harassment and types of accounts detected among replies to MP Collins’ Tweets.

Model

This model investigates the combination of the type of account a user has, whether they are verified, and which politician they are replying to in order to predict if they are more likely to send a harassing reply. Historically, accounts with the blue checkmark/tick were regarded as credible individuals and/or organizations, sharing legitimate information (Haman & Školník, 2023). However, since Musk introduced changes to Twitter’s verification policy in April 2023, the Center for Countering Digital Hate (2023) found that 99% of online hate was spread by malicious users who paid to be verified, allowing for circumvention of content-moderation rules. Bayesian hierarchical modeling in R, using rstanarm (Goodrich et al., 2023) to fit the model together with marginaleffects (Arel-Bundock, 2023) and modelsummary (Arel-Bundock, 2022), can help predict and further investigate this relationship. For the priors, I followed the standard weakly informative prior distributions used in the rstanarm package: a normal distribution with mean 0 and standard deviation 2.5. The model is:

where

Table 10 presents the estimates generated by the model. For ease of analysis, Table 11 displays the estimates as predictions in a table, and Figure 7 shows the predictions graphed.
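As a reading aid, one specification consistent with the verbal description of the model can be written as a Bayesian logistic regression. This is a sketch under stated assumptions, not necessarily the exact model that was fit: the symbols $y_i$, $\pi_i$, and $\beta_0$ to $\beta_3$ are introduced here for illustration, the categorical predictors are assumed to enter as indicator variables with personal accounts and DPM Freeland as reference levels (as the comparisons reported from Table 11 suggest), and any group-level structure is left out because it is not described in the text.

```latex
\begin{aligned}
y_i \mid \pi_i &\sim \mathrm{Bernoulli}(\pi_i) \\
\operatorname{logit}(\pi_i) &= \beta_0
  + \beta_1 \,\mathrm{type\ of\ account}_i
  + \beta_2 \,\mathrm{politician}_i
  + \beta_3 \,\mathrm{verified}_i \\
\beta_0 &\sim \mathrm{Normal}(0,\ 2.5) \\
\beta_1,\ \beta_2,\ \beta_3 &\sim \mathrm{Normal}(0,\ 2.5)
\end{aligned}
```

Under this reading, $y_i$ equals 1 if reply $i$ contains harassment and 0 otherwise, $\pi_i$ is the probability that it does, and the $\mathrm{Normal}(0, 2.5)$ priors are the ones stated above.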

Predicting Whether a Reply Contains Harassment or Not, on the Basis of the Type of Account Behind the Tweet, Which Politician the Tweet Was in Response to, and Whether a User Is Verified.

Predicting Whether a Reply Contains Harassment or Not, on the Basis of the Type of Account Behind the Tweet, Which Politician the Tweet Was in Response to, and Whether a User Is Verified.

Predicting whether a reply contains harassment or not, on the basis of the type of account behind the Tweet, which politician the reply was in response to, and whether a user is verified.

Table 11 predicts whether a user is likely to send replies that contain harassment. In comparison to replies to DPM Freeland, the likelihood that a reply contains harassment decreases by 2% for MP May and by 6% for MP Collins. For type of account, relative to personal accounts, professional accounts are an estimated 13% less likely to send replies that do not contain harassment. Bots, when compared to personal accounts, are an estimated 8% more likely to send replies that do not contain harassment, while spammers are an estimated 11% and anonymous accounts an estimated 12% more likely to do so. Suspended/deleted accounts are an estimated 3% more likely to send replies that do not contain harassment in comparison to personal accounts. The model finds no measurable difference between verified and unverified users in the likelihood of sending harassing replies. The results from the model suggest that despite differences in status and political affiliation across the three politicians, they are almost equally impacted by harassment on Twitter. Moreover, all types of accounts are predisposed to sending harassing replies, especially professional accounts, a finding explored in the discussion.
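To make the interpretation of these estimates concrete, the following minimal Python sketch shows how a coefficient on the log-odds scale translates into a difference in predicted probability, which is one common way such percentage comparisons are produced. The coefficient values are hypothetical placeholders chosen for illustration, not the estimates in Table 10, and the sketch assumes a simple logistic form rather than the full fitted model.

```python
import math


def inv_logit(x: float) -> float:
    """Map a value on the log-odds scale to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


# Hypothetical log-odds values for illustration only (NOT the paper's estimates).
# The outcome here is "reply does not contain harassment".
intercept = -1.5            # reference case: personal account
coef_professional = -0.9    # shift in log-odds for a professional account

# Predicted probability of a non-harassing reply for each account type.
p_personal = inv_logit(intercept)
p_professional = inv_logit(intercept + coef_professional)

# The comparison reported in prose is the difference between the two predictions.
difference = p_professional - p_personal

print(f"personal:     {p_personal:.3f}")
print(f"professional: {p_professional:.3f}")
print(f"difference:   {difference:+.3f}")
```

A negative difference in this setup would be read as professional accounts being less likely than personal accounts to send a reply that does not contain harassment.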

Discussion

Severity of Harassment

It was anticipated that DPM Freeland, followed by MP May, and MP Collins would receive the most severe replies (

Fifty-five percent of replies fell under the questioning authority category, which was ultimately higher than expected (

Sample of a Reply Questioning Authority Sent to DPM Freeland.

Type of Account

Previous research and our understanding of the increasingly toxic nature of Twitter support the high number of spammers and anonymous accounts identified (Phillips, 2015; Theocharis et al., 2020; Wagner, 2020). The high percentage of anonymous accounts (37%) reinforces McKay and Tenove’s (2021) findings that anonymous (and often harassing) political speech is increasingly common in our contemporary era of deliberative democracy. Given recent changes to Twitter’s affordances, anonymous accounts likely continue to exist because some people are displeased with the new direction of the platform and do not wish to actively engage but still wish to have an account.

The high number of spammers (41%) is consistent with the “Wild West” attitude conceptualized by Megarry (2020). Under previous ownership, many of these accounts would likely have been suspended through enforcement of content-moderation rules. The low number of suspended/deleted accounts was anticipated, since Musk loosened content-moderation policies upon purchasing Twitter, leading to an increase in hateful content and malicious users on the platform (Hickey et al., 2023). The few deleted accounts likely belonged to people who intentionally left the platform. Among suspended accounts, I identified instances of temporary suspension (Twitter Developer, 2023) and of permanent suspension, which users circumvented by making a new account. Users who made new accounts often indicated this in their bio through phrases like “Back again, hunting libs” or “3rd time? @TXIndep1836 & @TXIndepndnt1836 SILENCED!”

For all three politicians, positive and neutral replies were most likely to be sent by personal users, while nearly all other categories of replies (questioning authority to hate speech) were most likely to be sent by anonymous and spammer accounts, confirming

Although only 1% of accounts were categorized as professional, who they belong to and the severity of the harassment they sent are notable. Of the replies sent by professional accounts, 64% contained some form of harassment, most often questioning authority or name-calling/gender insults. Several prominent Conservative MPs, including Leader Pierre Poilievre and MPs Stephanie Kusie and Larry Brock, responded to Tweets originating on DPM Freeland’s account. The model’s estimate that professional accounts are 13% less likely to send a Tweet that does not contain harassment is noteworthy because it illustrates how Conservative politicians pander to their base by engaging in aggressive, confrontational behavior in the House of Commons and then mimicking that behavior online by sending harassing replies (Delacourt, 2016). Replies sent by Conservative MPs were coded as either questioning authority or name-calling/gender insults, which suggests a level of disregard for Parliament as an institution, for the authority of female politicians who work on environmental policy, and for deliberative speech.

While coding types of accounts, I noticed that a number of accounts had at least one red apple emoji, and sometimes a green apple emoji, in their bio or display name. Although there is no academic literature explaining this trend, Conservative supporters likely added this emoji to their profile as a sign of support for Leader Pierre Poilievre, following his October 2023 viral video “chomping down” on an apple while scolding a journalist (Tunney, 2023). The addition of these emojis to user profiles highlights Conservative politicians’ ability to mobilize their supporters to mimic how they use their own professional accounts to send harassing Tweets. At the time of data collection, 90 unique users had added apple emojis to their display name and/or bio, but that number is likely higher now given the trend’s increasing popularity. Table 13 provides an example. This subtle show of support for the Conservative Party is augmented by direct support for Conservative and right-wing political movements in Canada and the United States through words and phrases in user bios such as “#TrudeauMustGo,” “#Pierre4PM,” “#Trump2024,” “MAGA,” “CPC,” “Conservative,” and “PPC.” Two hundred eighty-eight unique users in my dataset explicitly identified themselves as having right-wing and ideological views.

Example of How Twitter Users Chose to Show Their Support for the Conservative Party and Their Leader Using Apple Emoji in Their Display Name.
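The apple-emoji accounts described above were identified through manual coding. As a rough illustration of how a similar tally could be approximated programmatically, the Python sketch below counts unique users whose display name or bio contains a red or green apple emoji. The record layout, the function names, and the sample accounts are all hypothetical and are not drawn from the study’s dataset.

```python
from typing import Iterable, Tuple

# Unicode code points for the red apple and green apple emoji.
APPLE_EMOJI = ("\U0001F34E", "\U0001F34F")

# Hypothetical record layout: (user_id, display_name, bio).
Account = Tuple[str, str, str]


def has_apple(display_name: str, bio: str) -> bool:
    """Return True if either profile field contains an apple emoji."""
    text = f"{display_name} {bio}"
    return any(emoji in text for emoji in APPLE_EMOJI)


def count_unique_apple_users(accounts: Iterable[Account]) -> int:
    """Count distinct user IDs with an apple emoji in the name or bio."""
    return len({uid for uid, name, bio in accounts if has_apple(name, bio)})


# Invented sample rows; a user who replied twice appears twice.
sample = [
    ("u1", "Maple Fan \U0001F34E", "Hypothetical bio"),
    ("u2", "Plain Name", "Likes hockey"),
    ("u1", "Maple Fan \U0001F34E", "Hypothetical bio"),
    ("u3", "Another User", "\U0001F34F\U0001F34E #Pierre4PM"),
]

print(count_unique_apple_users(sample))
```

Collecting user IDs in a set keeps repeat repliers from being counted more than once, which matches the “unique users” framing in the text. A simple substring check like this cannot judge intent, so manual review would still be needed.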

Chrystia Freeland

DPM Freeland’s results for severity of harassment and type of account are largely consistent with previous research into the harassment faced by eminent female politicians (Rheault et al., 2019). The use of memes to undermine and harass DPM Freeland features prominently in her sample. These memes are often unflattering photographs of her that present anti-feminist narratives and are intended to undermine her based on her physical appearance (Ging & Siapera, 2019). No deepfakes or highly sophisticated manipulated audiovisual content were detected in DPM Freeland’s sample (Appel & Prietzel, 2022). Moreover, historical pictures from Nazi Germany in the 1930s/1940s were memed to include the faces of DPM Freeland and sometimes PM Trudeau and NDP Leader Jagmeet Singh, aligning with research by Boudana et al. (2017), which reveals that the revival of historic photographs in the form of memes bolsters public discussion and draws symbolic connections between the past and the present. The memes sent in response to Tweets by DPM Freeland are similar in sentiment to the anti-suffragette posters that circulated in the early twentieth century, which were intended to undermine women campaigning for the right to vote and discourage the voices of women in the public sphere (Ging & Siapera, 2019). Memes and photographs, including Figure 8, are subject to Twitter’s content-moderation rules, since hate speech can be expressed through symbols and images, yet they continue to circulate freely on the platform (Carlson, 2021; Twitter Developer, 2023).

Example of a reply to a Tweet sent by DPM Freeland, which revives historical photographs through memes and contains hate speech.

Although there were only 29 identified instances of hate speech in DPM Freeland’s sample (see Table 4), qualitative signs of far-right activity and hateful behavior appeared throughout her sample. Users belonging to fringe minorities will often assign ironic humor and hateful meanings to seemingly normal words and objects, such as the Pepe the Frog character, which originated on 4chan, allowing later denial of their political views if questioned (Sarah and Chaim Neuberger Holocaust Education Centre, 2022). A number of users made reference to Pepe in their username, bio, profile picture, or individual replies to Tweets that originated on DPM Freeland’s account. Furthermore, at least one user in DPM Freeland’s sample also stated their involvement in the far-right online men’s rights movement called “men going their own way” (MGTOW) (Marwick & Caplan, 2018). These qualitative observations of far-right behavior are concerning for the future of democracy and the active participation of women in politics.

Elizabeth May

The results for severity of harassment and type of accounts detected in MP May’s sample are consistent with expectations, although there were slightly fewer positive and neutral replies than anticipated. Fifty-five percent of replies in MP May’s sample were coded as questioning authority, while 28% fell under the name-calling/gender insults category (see Table 6). Table 7 shows that, combined, spammers and anonymous accounts make up nearly 80% of all accounts in MP May’s networked public, a substantial share compared to the 19% that are personal accounts. Given MP May’s decades of climate advocacy and personal popularity, a higher number of personal accounts was anticipated, but the number of spammers and anonymous accounts could be explained by the changing platform affordances.

Although memes are not as prominent in MP May’s sample of Tweets, user-generated science infographics and links to YouTube videos and websites containing disinformation/misinformation repeatedly appeared. Replies presenting disinformation/misinformation were often coded as questioning authority due to their tone and use of demeaning language (see Table 14). Rather than engaging in an entirely civil conversation, these users challenge MP May’s grasp of science and her qualifications.

Sample of a Reply Questioning Authority Sent to MP May.

Laurel Collins

Results for MP Collins’ sample, for both severity of harassment and type of account, were generally more severe than hypothesized. I expected to see an even split between replies coded as questioning authority and neutral, but ultimately 75% of replies were coded as questioning authority and 8% as neutral (see Table 8). Compared with DPM Freeland and MP May, a lower share of replies sent to MP Collins was categorized as name-calling/gender insults (13%), but more replies were coded as vicious language, credible threats, and hate speech than in MP May’s sample. For type of account, MP Collins had the highest share of personal accounts at 27%, making personal accounts the third most common category behind spammers and anonymous accounts (see Table 9).

Moreover, I identified qualitative differences in the word choice and tone used in replies to MP Collins. Table 15 illustrates the patriarchal and paternalistic language directed toward MP Collins, such as the phrase “my dear.” In addition to belittling the qualifications of MP Collins, this user also questions her understanding of climate science and forest fires. Replies like this one and others in MP Collins’ sample continue to emphasize the microaggressions faced by female politicians as they communicate about consequential policy issues (Harmer & Southern, 2021; Vickery & Everbach, 2018).

Sample of a Tweet Containing Name-Calling/Gender Insults Sent to MP Collins.

Deliberative Democracy

Of the three politicians, MP May’s Twitter account best fulfills the definition of deliberative democracy, hosting open conversations with multiple viewpoints and two-way flows of communication. A range of viewpoints, including that Canada is not going far enough on climate action and that it is going too far, features prominently throughout the replies. Among the users who share disinformation/misinformation, some claim climate change is simply changing weather, or that forest fires are caused by arson, or other reasons supported by user-generated websites. Although over 80% of the replies in her sample were coded as either questioning authority or name-calling/gender insults, people in MP May’s networked public more frequently discussed science and policy, albeit sometimes disinformation/misinformation. MP Collins’ networked public also possessed these characteristics, although she was less willing to respond. DPM Freeland received significant amounts of verbal backlash, and her staff intentionally did not respond to replies from the public, meaning her Twitter account is a space to broadcast political messages instead of engaging in discourse with the public (Marland, 2016). The term post-deliberation public sphere aptly explains how the three politicians engage with other users in consequential discussions on Twitter, as digital public spheres can never fully live up to Habermas’ ideals (Slavtcheva-Petkova, 2023). Considering that it is still important to have public discussions in some form in post-deliberation public spheres, Canadian politicians should facilitate offline townhall meetings to engage constituents in critical conversations about climate change.

Limitations

Twitter’s ever-changing affordances and priorities as a platform (such as promoting “free speech”) impact the severity of harassment and types of accounts deemed acceptable to keep on the platform, likely contributing to the lower agreement between coders for severity of harassment and type of account (Hickey et al., 2023). Future studies should further refine and validate the severity of harassment scale and type of account schema, including by using comment and account data from other social media platforms like YouTube and Instagram. Many of the third-party tools that previously assisted researchers in determining types of accounts, such as Botometer (Yang et al., 2022), have been rendered unusable by Twitter API changes, limiting my ability to determine which accounts in my study are bots. The number of accounts categorized as bots in my study is likely smaller than the actual number of bots. It is impossible to know on a granular level how every change to Twitter’s affordances impacts each harassing reply and account on the platform, but these changes can generally inform the hypotheses and the patterns identified when analyzing the data.

Conclusion

This study examined replies to Tweets explicitly discussing climate change and environmental policy sent to DPM Freeland, MP May, and MP Collins. My results from manual coding and from my model suggest that the three politicians are almost equally impacted by harassment, with 86% of replies containing some form of harassment. Questioning authority and name-calling/gender insults were the most frequently identified forms of harassment, while spammers and anonymous accounts were most frequently associated with sending harassing replies. This study contributes to our understanding of the online harassment of female politicians by developing a seven-point scale to measure more nuanced forms of harassment and a six-point schema to categorize the types of accounts behind the replies.

The replies analyzed for this study generally match the criteria of what constitutes healthy democratic discourse, with users holding multiple, diverse viewpoints weighing in on the issue of climate change. It is crucial that we continue studying and discussing the intersection of climate change denialism and misogyny because if we cannot mitigate the risks of gendertrolling at the highest echelons of power, then female politicians may self-censor, putting at risk everyone’s ability to engage in respectful discourse surrounding climate change (Vickery & Everbach, 2018).

Supplemental Material

Supplemental material, sj-docx-1-sms-10.1177_20563051241304493 for Torrential Twitter? Measuring the Severity of Harassment When Canadian Female Politicians Tweet About Climate Change by Inessa De Angelis in Social Media + Society

Footnotes

Acknowledgements

The author is grateful for the guidance and helpful suggestions from Rohan Alexander, Kelly Lyons, Semra Sevi, Elizabeth Dubois, and the two anonymous reviewers. The author also thanks Sarah Xie and Allyson Cui for their research assistance.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded in part by the Social Sciences and Humanities Research Council of Canada (grant ID: 766-2022-0719) and the Government of Ontario, Allan and Jean Howarth Ontario Graduate Scholarship.

Supplemental Material

Supplemental material for this article is available online.
