Abstract

Educational, political, or moral/religious content is increasingly present on TikTok, so contemporary social dynamics legitimize the process of digital mediation regarding these institutional values. Based on 286 open-ended survey answers and subsequent interviews with 45 Romanian TikTok users, this article applies social constructivism to explore the intersubjective side of algorithmic experiences. The significance of such a framework lies in its ability to elucidate the manner in which users actively construct their social environments, which may initially appear as isolated individual experiences but ultimately unveil shared algorithmic interpretations. Thus, the participants highlight three recurrent institutional themes in relation to TikTok’s algorithm: (1) algorithm as political profiling, (2) algorithm as moral plethora, and (3) algorithm as educational benchmark. Findings show that users’ stories related to algorithms are widely conceived within institutional frameworks. These narratives play a role in shaping what Berger and Luckmann call “intersubjective sedimentation” within the intricate interconnection between institutional and algorithmic realities. The ways in which TikTok users legitimize the presence of these institutional actors on their For You page should be seen as a form of agency negotiation between users and machines. The legitimating role of stories about algorithms also highlights the institutional necessity of intergenerational socialization, which is why the contents made by such institutional actors are more and more actively mediated through TikTok.

Introduction

This study highlights the institutional aspect of algorithmic sensemaking on TikTok, which refers to how users make sense of their interactions as part of a larger and objective social reality (Berger & Luckmann, 1967). It is observed that different institutional actors present on TikTok, such as priests, politicians, nutritionists, or teachers, contribute to creating an objectified world for TikTok users. By objectified versions of reality, I mainly refer to the constructivist orientation according to which the emergence of these institutional actors on TikTok contributes to discursive practices that confirm a digitally mediated socialization. Thus, TikTok actively contributes to this process, given the fact that users outline their multiple stories about algorithms (Schellewald, 2022) precisely through the emergence of institutional actors. Since several politicians, priests, doctors, or teachers—who are also influencers on TikTok—are frequently encountered and narrated by Romanian TikTok users, it becomes imperative to investigate how these contents are legitimized by these users.

TikTok’s algorithm has been the subject of several privacy controversies, given that TikTok discloses very few technical details about how its algorithm functions (Wall Street Journal, 2021; WIRED, 2021). As a result, the near absence of such technical information in the public sphere contributes to shaping the most diverse algorithmic stories, through which users legitimize their presence on TikTok in different ways. The application has been downloaded more than 3 billion times since its inception in 2016 and had over 1 billion monthly active users as of September 2021, indicating that a fifth of all internet users engage with TikTok (Woodward, 2023). It is no accident that algorithmic user experiences are frequently questioned and subject to ideological controversy (Moga, 2022).

Drawing on social constructivism and on 286 open-ended survey responses and interviews with Romanian TikTok users, this article traces how users discursively legitimize both the presence of these institutional actors on TikTok and the role that recommendation algorithms play in this process. The socially constructed aspect of such a digital presence is evident, given that users create their own meaningful stories about their TikTok-mediated interaction. Therefore, this article contends that the practical and discursive legitimation of institutional actors on TikTok underscores the constant process of agency negotiation between users and technology. Within this context, Romanian participants provide several justifications to support the inclusion of these institutional actors on the For You page. As a result, the presence of recurring algorithmic themes, such as “political profiling,” “moral plethora,” and “educational benchmark,” highlights how different contents on TikTok assume roles usually reserved for “traditional” social institutions. These institutions include political parties for political socialization, churches and religious communities for moral socialization, and schools for educational socialization. The prevalence of political, moral, or educational content on TikTok justifies this institutional understanding of the negotiation between user agency and machine agency. This perspective is grounded in the belief that TikTok can be seen as an effective social and technological environment for transmitting institutional values to new generations through legitimation (Berger & Luckmann, 1967).

Romania is among the countries with an intensive presence on TikTok, with nearly 6.5 million users aged 18 and above as of 2022. This figure accounts for approximately one-third of the country’s entire population. In addition, TikTok use is nearly gender-balanced, with 51% of users being women and 49% men (Neagu, 2022). This clear socio-demographic profile of TikTok users is particularly relevant for advertising agencies, which use TikTok to reach different target groups.

This analysis examines the constructivist perspective on intersubjective social realities and their implications for the interaction between users and algorithms. Specifically, it highlights the constant process of agency negotiation, through which the participants integrate their own stories in relation to the contents in which institutional actors appear. Therefore, it is imperative to explore this algorithmic existence as a form of institutional process through which Romanian TikTok users make sense of their digital activity. This investigation is situated within the framework of a social perspective on algorithms, which acknowledges the idea that “software conditions our very existence” (Kitchin & Dodge, 2011, p. IX). Accordingly, drawing from a data set consisting of 286 open-ended survey responses and subsequent in-depth interviews, this study follows the main justification strategies that Romanian TikTok users employ in realizing what Bhandari and Bimo (2022) call “the algorithmized self,” an ever-increasing social awareness toward the role of algorithms within users’ digital lives.

To understand the institutional role that emerges from the algorithmic presence on TikTok, the conceptualization of stories about algorithms was used as a starting point. Precisely, these stories were designated as emerging from the perspectives of everyday individuals, following a bottom-up approach that takes place through the values and meanings that these users experience in relation to themselves. Thus, the use of the conceptualization related to stories about algorithms extends beyond mere imaginative representations of algorithms, given that these imaginaries can now be identified and presented as a particular form of knowledge (Schellewald, 2022). Understanding the shared experiences of TikTok users in institutional terms has visible practical implications since the digital and dynamic character of contemporary social realities makes social institutions such as schools, political parties, and even churches—through their representatives: politicians, teachers, priests, and so on—more present on digital platforms such as TikTok. The fact that users constantly receive content from one or more institutional actors creates the premises for a process of legitimizing such content. This process involves users evaluating and either endorsing or questioning algorithmic recommendations, resulting in a constant process of user–machine agency negotiation. This is precisely the context in which “intersubjective sedimentation” (Berger & Luckmann, 1967, p. 85) takes shape within these stories about algorithms.

Imaginaries and Stories Related to TikTok’s Algorithm

TikTok’s algorithms use better metrics for measuring desirable content compared to Facebook’s and Instagram’s algorithms. For example, TikTok’s algorithm can bypass users’ social networks using more subtle indicators, such as the time dedicated to viewing a video, the repetition of the same videos, and the scrolling frequency (WIRED, 2020a, 2020b). As a result, users’ interpretations of the algorithm are seemingly endless when it comes to TikTok’s personalized recommendations.

The potential for exploring various sensemaking approaches related to TikTok is heightened due to the intentionally obscure nature of the application’s algorithmic practices (Kaye et al., 2022), making room for numerous speculations and social universes related to algorithms. The concept of “algorithm awareness” was introduced in this context, which refers to understanding the fact that “our daily digital life is full of algorithmically selected content” (Eslami et al., 2015, p. 153). Thus, beyond the operational functionality of algorithms, which pertains to their objective state, there is ongoing discourse surrounding how algorithms contribute to users’ affective or emotional dimension, specifically, how these algorithms are experienced or perceived during usual interactions. Therefore, the phenomenon of surprise in relation to algorithms has been a topic of discussion (Swart, 2021), with some scholars linking the activity of algorithms to a form of digital irritation (Ytre-Arne & Moe, 2021). For Siles et al. (2022), users’ awareness of TikTok algorithms can be conceptualized as a process in which recurring activities—such as sensing algorithmic personalization or oscillations in relation to recommendation algorithms—seem to follow rhythmic patterns of sensemaking.

Following Papacharissi’s (2014) discussion of affect, this study acknowledges that several stimuli may lead individuals to a particular feeling. Rader and Gray (2015) show that most Facebook users understood that the content displayed on their news feeds did not encompass the entirety of their friends’ posts. In relation to the TikTok algorithm, Siles et al. (2022) highlight that users are often aware of their exposure to content that is deemed highly viral. Espinoza-Rojas et al. (2023) also show how different digital platforms accessed by users shape several types of awareness. For example, an algorithm seen as more advanced can be perceived as more dangerous, as such opaque and complex systems might “violate” users’ expectations (Kizilcec, 2016). Several scholarly investigations have examined the harmonious character of the interaction between users and algorithms. This harmony is achieved through a complex entanglement of what Lupinacci (2022, p. 9) presents as “your individual preferences and past engagement.” According to Bucher (2020), algorithmic harmony is characterized by the presence of carefully structured temporal markers, as it delivers content recommendations at opportune moments. For Siles (2023, p. 35), TikTok users feel a kind of interpellation, defining it as “the work embedded in algorithms to convince [users] that [such platforms] are speaking directly to them.” Bucher (2019) also explored the concept of identity profiling as a key function of algorithms. Such profiling is reminiscent of systematic efforts to describe these algorithms as representing advanced forms of surveillance (De Vries, 2010; Fuchs et al., 2012).

In this context, it is crucial to perceive the institutional aspect of TikTok’s algorithms as the confluence of imaginaries and stories about such algorithms. While algorithmic imaginary refers to how users, including ordinary users, content creators, or public figures, perceive algorithms (Bucher, 2019), stories about algorithms represent a conceptualization rather specific to the activity of ordinary users, through which they share specific ideas or experiences among themselves (Schellewald, 2022). It is also important to mention the specific contributions of algorithmic folk theories, on the basis of which the main relevant theories are outlined within groups of users who interact with algorithms. Although such an approach is effective in outlining a systematic framework for understanding social realities, folk theories fall short in emphasizing “how theories and imaginaries of algorithms relate to specific sets of action strategies that shape modalities of power and resistance” (Siles et al., 2020, p. 13). Therefore, when content generators assume institutional values rendered through political, educational or moral topics, an adjacent conceptual framework is needed to describe the legitimation processes involving ordinary users.

When users’ shared experiences are involved, the role of institutions becomes evident, given the fact that what they consider “appropriate” is formed within common stocks of knowledge, through which they assimilate what is moral, correct, or desirable. Furthermore, the institutional valences associated with TikTok should be seen in line with the perspective of Siles et al. (2022), who refer to algorithms as assemblages through which users, algorithms, and applications work together in the concretization of these stocks of knowledge specific to TikTok.

When imaginaries related to algorithms are represented both on a personal level and on a social and collective level, it becomes evident that these imaginaries turn into stories about algorithms, given that they are based on lived experiences that users sometimes try to project on other users. These lived experiences become demoralizing in moments of dissonance because, as Ruckenstein and Granroth (2020) show, users’ algorithmic sensemaking becomes disturbing in moments when the recommendations produced by the algorithm become confusing. Thus, in the context of almost absent technical information regarding the TikTok algorithm, it is necessary to emphasize the need to study stories as a relevant form of knowledge (Schellewald, 2022) in the user–algorithm relationship.

Drawing on social constructivism, this study goes beyond the classic dichotomy between objectivity and subjectivity by involving the intersubjective dimension in understanding the perceptions associated with TikTok algorithms. Thus, by investigating the common experiences of Romanian TikTok users, this study examines the institutional character “behind” these forms of experience.

Institutional Legitimation as a Form of Agency Negotiation

Digital platforms based on algorithms create the premises of constant agency negotiation, and TikTok’s increasingly high-performing algorithm confirms precisely such a framework (Kang & Lou, 2022). The issue of legitimization is therefore a concrete one, considering that most users align themselves with one of two positions: either supporting the incorporation of AI agency in the process of guiding users’ experiences or advocating for the implementation of specific practices (both discursive and in terms of affordances) to exert control over algorithms. The continuous observation and response of the participants in this study to various institutional actors on TikTok serve to underscore the diverse modes of negotiation employed by users in their efforts to counteract or identify instances of “algorithmic sovereignty” (Reviglio & Agosti, 2020). This refers to the computational activities on social media platforms that prevent users from participating in the decision-making process. For example, certain users can legitimize the visualization of popular institutional actors, such as politicians, priests, doctors, or teachers, who have already gone viral on the TikTok platform. This validation is achieved by accentuating the heightened level of user agency that exists within this particular platform. However, the designers of such digital platforms recognize the significance of self-determination for users, given that users’ need for autonomy is a recurring psychological mechanism observed in relation to both mobile health apps (Bol et al., 2019) and mobile smoking-cessation apps (Rughiniş et al., 2015).

In this context, it is worth focusing on the role of stories about algorithms in the legitimization process by examining the ambivalent dynamics of institutional actors on TikTok in the synergy process between human and AI agencies (Kang & Lou, 2022). As Berger and Luckmann (1967, p. 79) put it concerning the aforementioned process, “the institutional world requires legitimation, that is, ways by which it can be ‘explained’ and justified” (own emphasis). Within the framework of this study, the participants describe the recurring presence of institutional actors on the TikTok platform, encompassing politicians, priests, doctors, and so on. It is noteworthy that the “traditional” institutional order is now digitally mediated. Therefore, this study focuses on the role of this legitimation in the digital environment, given that “the objectivations of the (now historic) institutional order are to be transmitted to a new generation” (Berger & Luckmann, 1967, p. 111) so that these discursive and practical legitimations are transposed into more sophisticated forms of stories about algorithms (Schellewald, 2022). However, it should also be mentioned that the sedimentation processes presented within the constructivist paradigm have undergone certain changes. This is due to the influence of the surrounding infrastructures associated with the affordances of social media, which shape the behaviors of individuals using these platforms (Couldry & Hepp, 2017). This does not inherently imply the abolition of user agency; rather, it entails understanding the technological context in which users perpetually engage in activity via digital platforms. Through their activity on platforms like TikTok, users evaluate the algorithm as an objective instance, given that it is “free from subjectivity, error, or attempted influence” (Gillespie, 2014, p. 179). As a result, stories about algorithms transition toward intersubjective realities, through which they gain legitimacy in accordance with a social, moral, or political order that governs these realities to some extent.

Focusing on the institutional legitimation related to TikTok’s algorithm at the confluence between “machine agency” and “user agency,” it is important to highlight that not all the interactions with institutional actors on TikTok legitimize algorithmic forms of knowledge; some of them seem rather to challenge it. For example, Simpson and Semaan’s (2021) study shows how TikTok creates a paradoxical social space. While the algorithm promotes inclusivity and diverse identities, it seems to violate individual users’ identities by highlighting content that encourages different forms of harassment, especially when it comes to sexual minorities—a discussion that strengthens the arguments regarding the institutional character of gender and sexuality (Martin, 2004) in this case. Schellewald (2022) conducted a study that provides an additional illustration of institutional legitimation concerning the TikTok algorithm. The research reveals that users have mixed reactions to educational video recommendations, as some perceive the recommendation algorithms, which are tailored to users’ personalities and lifestyles, as a form of digital surveillance. Therefore, it can be discerned that the various means by which algorithmic realities are legitimized stem from constant processes of agency negotiation between users and technology. See Table 1 below to examine how the algorithmic imaginaries versus stories dichotomy interacts with the legitimation of contents generated by institutional actors on TikTok.

Table 1. The Conceptual Roles of Legitimacy and Institutional Actors When Discussing the Algorithmic Imaginaries Versus Stories Dichotomy.

There are several functions of social institutions within the social constructivism paradigm. For example, they actively contribute to the knowledge construction process by providing certain frameworks and common symbols based on which individuals interpret reality. In addition, social institutions participate in the process of identity formation, given the fact that the manner in which individuals build coherent frameworks for sensemaking depends on the institutional activities ensured by schools (Due et al., 2003), gender (Martin, 2004), media (Silverblatt, 2004), political parties, and so on. Another function of social institutions in the social construction of reality is their role in reinforcing norms and values. This is achieved through their active participation in shaping individuals’ shared expectations regarding what is considered “appropriate” (Berger & Luckmann, 1967). Social institutions also highlight the dynamic side of our social worlds by showing us how individuals and social groups constantly refer to these institutional landmarks to explain their changing social realities.

Although not explicitly stated, numerous studies have examined the social realities of TikTok, highlighting the presence of institutional valence as much as possible. For example, in a study conducted by Siles et al. (2022), it was observed that participants attributed political valences to TikTok recommendations, specifically linking them to a presidential candidate from Costa Rica based on the interests of users “with the same profile” (p. 12). Another example is Kamran’s (2023) recent study on Pakistani TikTok users, where the author observes that the presence on TikTok is often associated with a “low-class femininity” (p. 1) in Pakistani society. Algorithms play a significant role in how users search for information and, simultaneously, in how knowledge systems are embodied through algorithms (Gillespie, 2014). Hence, when politicians, teachers, priests, or other representatives of “traditional” social institutions participate in the propagation of viral content on TikTok, multiple “formalized inscriptions” (van Dijck, 2013, p. 6) gain institutional legitimacy. This institutionalization process is facilitated by the utilization of social media platforms such as TikTok, where users encounter educational guidance, moral benchmarks, or political information that subsequently influences their actions and behaviors in their everyday routines, whether willingly or involuntarily. For example, Duggan et al. (2015) find that three-quarters of U.S. parents who use social media seek parenting guidance from other parents.

Methodology

This research is part of a broader study conducted over a period of approximately 15 months among TikTok users in Romania, with the aim of examining the primary social realities and stories related to algorithmic activities (RQ1). In addition, this examination traces, in parallel, the latent roles of social institutions in shaping these stories (RQ2). The design of this research comprises two stages. In the first stage, a questionnaire with mainly open-ended questions was created, targeting TikTok users who, at the time, had just started using the platform. This questionnaire was distributed through the author’s Facebook page and in the form of a QR code in various franchise cafes across Romania. The aim was to diversify the participant sample by including individuals from different socio-demographic backgrounds. Among the questions in the questionnaire, the participants were asked what they thought “the algorithm does” and whether they evaluated it as “useful,” “dangerous,” or “neutral.” In addition, participants were prompted to recount any notable occurrences during their brief engagement with TikTok in which they perceived the influence of the algorithm, if applicable. Such an initial approach might prove useful because, as per previous approaches (Moe & Ytre-Arne, 2021; Siles et al., 2022), recording first impressions regarding TikTok provides a coherent basis for understanding the processes related to algorithm awareness. From October to December 2022, 286 responses were obtained, and 45 participants agreed to participate in a subsequent in-depth interview regarding their activity on TikTok.

The interviews were conducted in Romanian via online platforms, such as Zoom or Google Meet, between February and May 2023. The interview participants exhibited diverse sociodemographic characteristics, as depicted in Table 2. The interviews lasted between 35 and 68 min, with an average duration of 55 min. The interviews followed a semi-structured format, primarily exploring the roles that TikTok users attribute to algorithms. They also examined the dynamism of values and the sensemaking processes associated with these algorithms. Examining this dynamism became feasible due to the evident rise in users’ familiarity with TikTok by the time of the interview. This increased familiarity facilitated the convergence of more refined social perceptions regarding “what algorithms really do.”

Table 2. Participants’ Socio-Demographics.

An abductive approach was used to analyze the data obtained from surveys and interviews. This choice was made based on the deliberate incorporation of a social constructivist framework within the analysis, which allowed for the potential emergence of unexpected evidence. The utilization of an abductive approach is deemed appropriate within this study’s framework, given that “In-depth knowledge of multiple theorizations is thus necessary both to find out what is missing or anomalous in an area of study and to stimulate insights about innovative or original theoretical contributions” (Timmermans & Tavory, 2012, p. 173).

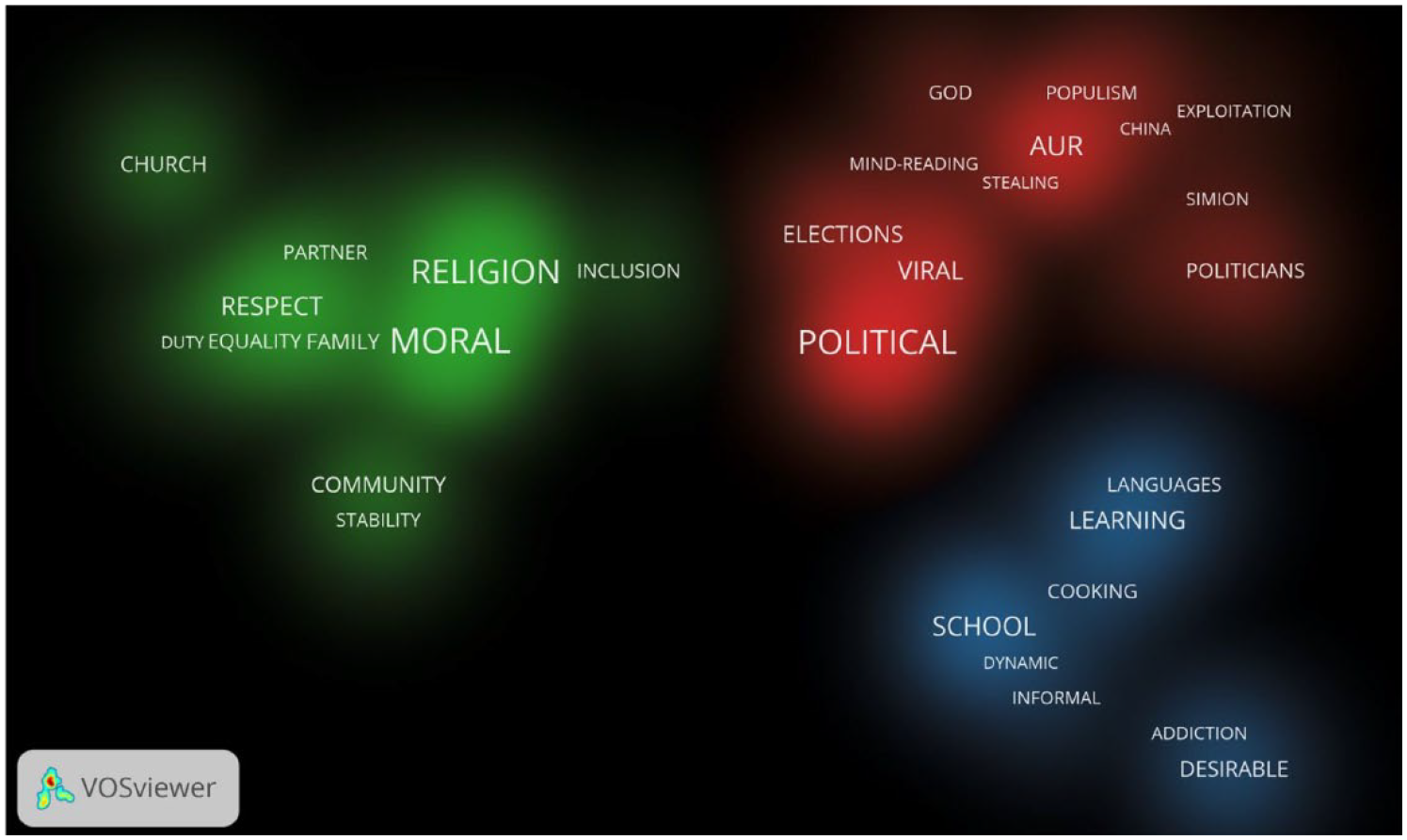

The codes generated from the analysis of the transcripts were initially grouped based on an open coding process, using Quirkos (Turner, 2016) as a tool to coherently organize these codes. Therefore, the use of the principles of thematic analysis (Braun & Clarke, 2006) in abductive research involves the organization of data following the relevant theoretical framework, thereby ensuring that the identified themes in this study take into account the specific institutional logic of “intersubjective sedimentation” (Berger & Luckmann, 1967, p. 85). After organizing the codes in Quirkos, they were grouped using VOSviewer (Van Eck & Waltman, 2013) to provide a coherent visual representation of the main words mentioned by the participants during the interviews, along with their grouping in relevant clusters, specific to the thematic analysis. Thus, the thematic analysis revealed the existence of three recurrent and interrelated themes: (1) algorithm as political profiling, (2) algorithm as moral plethora, and (3) algorithm as educational benchmark. See Figure 1 below for the distribution of recurring terms in each theme and the density of each term.

Figure 1. Density map of the recurrent words for each cluster.

Findings

Algorithm as Political Profiling

Participants within this theme highlight their experiences with TikTok algorithms in a way that foregrounds institutional understandings of politics in shaping stories about these algorithms. Most participants had the impression of greater agency concerning political content when they first joined TikTok; however, this impression changed significantly during the interviews. One such case is that of Terry, a 28-year-old employed in the field of IT. In the open-ended survey, Terry expressed his ability to avoid political content he dislikes; however, after gaining experience using the application, Terry states that

I keep getting videos that I never appreciated, as is the case with George Simion [a Romanian leader of the far-right party AUR]. I never like when I see Simion, I just watch in disgust all his populist statements and wonder how he is so successful.

Costi, a 21-year-old chemistry student, also confirms this diminution of agency in the political spectrum as his engagement with TikTok progressively increased. About the algorithm, Costi asserts that “it wants to somehow incite you to stay on TikTok as long as possible, but it does this by giving you the content you hate, not just the one you love.” Users such as Costi are subject to algorithms that comprehensively understand their preferences. However, these algorithms intentionally prioritize the presentation of politically charged content likely to elicit controversy to sustain user engagement. This perspective is shared by Lena, a 37-year-old freelancer who uses TikTok to promote her own political perspectives. According to Lena, the algorithm is a “perverted mind-reader” that “shows you what you already hate, to make you hate it even more.”

Users even discuss the influence of the Like button on subsequent recommendations, thereby expressing concern about the frequency with which they receive similar political content. Many interviewees within this political theme mention AUR, the Romanian far-right party popular on TikTok precisely because of George Simion, the party’s president. However, when the contents received on the For You page are of interest, the awareness of being profiled is partially shadowed by affective content, which is reinforced by the frequency of scrolling. Anne, a 23-year-old sociology student, confirms that she does not agree with Simion’s politics, “but he is right when he says that PSD [Social Democratic Party] and PNL [National Liberal Party] have been stealing from us for thirty years.” Through affect and populist rhetoric, representatives of institutions such as political parties act successfully in the context of datafication, taking advantage of TikTok’s specific technological affordances.

However, it is not just the politically unpopular recommendations that raise participants’ concerns about the opaque powers of TikTok’s algorithm. Senna, a 28-year-old dermatologist, worries that “every time I get videos of the party I usually vote for, even though I’ve never liked them on TikTok.” For participants like Senna, the TikTok algorithm operates like a black box when recommending relevant videos in the absence of concrete input, such as likes and comments, from users. While it is inherent for the algorithm to make faulty predictions (Bucher, 2019), it is important to acknowledge that the very precise political estimates of the algorithm are a major source of concern. This confirms previously obtained results (Ruckenstein & Granroth, 2020), whereby sensing a loss of agency in relation to algorithms creates confusing or disturbing states, as the extent of what the algorithm “knows” about users is uncertain.

Users in this thematic domain have diverse perspectives regarding their interpretation of political content that contradicts their own views. Simi, a 44-year-old cardiologist, stated in the preliminary online survey that “the algorithm will be as you want it to be.” However, during the interview, he changed his perspective, stating that the algorithm shows you the politicians you dislike because “it wants to convince you to change your political options, to think that they are also good politicians.” All these perspectives show how algorithmic awareness is a long process, and users’ expectations regarding the algorithm also change depending on their relationship with TikTok (Siles et al., 2022). Thus, even if users do not use TikTok for political purposes, it is observed that these political topics are increasingly present within users’ stories as they become familiar with TikTok. In addition, all these stories regarding the political activity of algorithms confirm their “identity profiling” function (Bucher, 2019), through which the applications work intensively to estimate user profiles with the aim of anticipating potential values and political options, along with their dynamism. Therefore, the persistence of the political factor in the participants’ discourse highlights the efforts to gain an institutional existence within the users’ algorithmic realities.

This theme examined politics as a social institution that helps clarify users’ algorithmic sensemaking. The next theme delves into how moral or ethical values are institutionalized within TikTok users’ discourses.

Algorithm as a Moral Plethora

Participants within this theme repeatedly discussed the moral or ethical implications of TikTok’s algorithms in terms of recommendations on the “For You” page. As in the previous theme, notable differences are observed between the users’ initial impressions, reproduced in the online survey, and the impressions revealed in the interviews. For example, Tina, a 53-year-old retiree, says that she initially expected TikTok to promote perfect bodies. However, she realized that this perception was not accurate, as she encounters a wide array of body types on a daily basis while using TikTok. Thus, Tina sees these diverse practices as “clear evidence of a CEO interested in morality, and who deserves all our respect.” She elaborates on this perspective, saying she is delighted that

TikTok shows me that not all people in the world have to be young, beautiful and flawless . . . it shows me that there are people like me, menopausal women trying to occupy their free time with all kinds of activities [laughs]

Tina is also impressed that she found a famous Romanian priest on TikTok who discusses the importance of fidelity and family in various videos while sanctioning unfaithful behavior. Such church representatives seem to operate as meaningful institutional actors, aiming to expand their popularity among digitally mediated communities.

Other users offer another moral assessment of the algorithms present on TikTok. According to Bobo, a 29-year-old who works as a game tester, the algorithm is rather dangerous in the process of identity formation because it “manages to exploit you by giving you everything you want to see.” Bobo has contemplated discontinuing his use of TikTok on multiple occasions. However, he has not reached a definitive decision because of the steady influx of videos that astonish him with their novelty and unfamiliarity. For Bobo and other interviewees, an algorithm like the one on TikTok has reached such a high-performance level precisely because of its lack of transparency, given that “we don’t have access to the high-performance ways through which the algorithms have come to know us so well, and my fear grows as I see more and more countries banning TikTok due to immoral practices.” Thus, controversies surrounding privacy and lack of transparency represent recurring problems for some TikTok users and are closely related to moral or ethical values (Magalhães, 2018; Milano et al., 2020).

Clara, a 23-year-old psychology student, also discusses the moral risks of an algorithm that acts like Pandora’s box. She says that enhanced diversity on TikTok “is usually seen as something good.” However, this diversity actually leads to “the decay of all morality” because it “completely neglects the idea of family and mutual respect.” For her, the activity of church representatives on TikTok “is not prominent enough,” given that “it is not taken seriously by too many young people.” Thus, what works for Tina as a “collective support environment” (Schellewald, 2022) is designed quite differently for other users.

A completely different perspective comes from Alin, an 18-year-old high school senior. Alin is confident about the diversity he has on TikTok, and this is because

applications like TikTok have a moral duty to show users that there are people who are different from them . . . not all of them are white, straight and rich, and the first step in becoming more empathetic is to understand that not everyone is like you. (Own emphasis)

Users such as Alin believe that algorithmic morality is best observed in TikTok’s promotion of diversity, while other users believe that this diversity is harmful because it undermines the institution of the family. Thus, the incongruity of identities on TikTok often leads users to evaluate these practices as dangerous, and those who consider themselves institutionally disadvantaged opt for different ways of rejecting the use of TikTok (Siles et al., 2022).

It is important to note that TikTok is a heterogeneous space, and algorithmic recommendations work at the confluence of inclusivity and identity (Simpson & Semaan, 2021). This outlines the premises of an obscure computational activity, so users often feel they do not know how it works. This is what a 21-year-old user who completed the online survey says about this algorithm: “When you think you understand it, you realize you don’t.”

Algorithm as Educational Benchmark

Participants in this theme see the TikTok algorithm as having a practical and coherent role in the acquisition of various educational aspects. As in the preceding sections, these algorithmic roles became increasingly apparent as users engaged with TikTok. Lia, a 54-year-old retiree, was pleasantly surprised by the app’s appeal, as it promptly recognized and catered to her preferences:

On For You I get things that I wouldn’t have received anywhere else. For example, I found some ladies who teach you how to make a very tasty pie . . . I realize that neither Facebook nor Instagram gives you such powerful recommendations as in the case of TikTok.

It is not just Lia who brings up other social networks. Cosmin, a 29-year-old high-school teacher, says Facebook and Instagram exist mainly “for entertainment,” while TikTok “can get very professional sometimes.” To give a concrete example, Cosmin says that he learned his first Russian phrases thanks to his TikTok account, so he now gets “at least twenty videos a day of people who speak Russian to teach others . . . I definitely didn’t expect this when I first joined TikTok” [laughs]. By examining such narratives, it becomes evident how users highlight the emergence of particular forms of knowledge, thereby delineating these forms through institutional practices that give TikTok the authority of traditional institutions such as schools. In Romania, nutritionists have become well-liked institutional figures on TikTok alongside politicians and priests. Many interviewees frequently follow these nutritionists to get dietary advice. For Lia, TikTok functions as a site of awareness, given that she became aware that she was consuming too much glucose. For Cosmin, the same nutritionist encouraged him to start going to the gym.

Given TikTok’s popularity among users, interviewees believe its educational role could be institutionalized even within formal education systems. For example, Alin states that many students would be more captivated by the content taught at school “if the teaching was done in a more interactive way, such as through TikTok.” According to Alin, this would be easily possible due to the diversity made available on this application, which makes “almost any video to have something educational in it.”

The participants in this study engage in a comprehensive discussion regarding TikTok’s educational role, encompassing both positive and negative aspects. Nok, a 20-year-old journalism student, says that such educational videos are “a double-edged sword,” given that

you don’t even realize how the algorithm makes you lose hours while teaching you geography, playing the piano or making a cream soup . . . I think each of us should ask what the purpose of such an algorithm is, since it only gives you things you want to see.

Thus, whether or not users appreciate the recommendations available on TikTok, it is observed that the assimilation of the “educational” function of the algorithm appears to be a process through which the interviewees developed their own routines in relation to TikTok (Siles, 2023; Siles et al., 2022), and these routines legitimize the institutionalization of different values and algorithmic practices.

Addressing the Intersubjective Side of Algorithmic Sensemaking

This article argued that the algorithmic stories of Romanian TikTok users frequently go beyond isolated perceptions, highlighting some institutional ways of sensemaking. The results demonstrate the efficacy of algorithmic awareness as a process (Siles et al., 2022). They also indicate a significant shift in users’ expectations as they engage with the TikTok platform. In constructivist language, users assign different forms of legitimation as they use TikTok, a transition observed in most interviews. To ensure this legitimation as a process, it is necessary for users to go through concrete stages of explaining and justifying (Berger & Luckmann, 1967, p. 111). As users interact more with algorithms, new algorithmic stories emerge, thereby contributing to the ongoing process of legitimization.

Algorithms also contribute to the efficient categorization of users, a capability that was challenging to achieve in the era prior to the advent of digital technology. This phenomenon is attributable not solely to digitization but also to the datafication processes that make social media platforms such as TikTok function as sites of categorization (Couldry & Hepp, 2017). Given the emergence of social media platforms as significant sources of knowledge, it is imperative to establish novel governing bodies to oversee these digitally mediated realities. According to MacKenzie and Wajcman (1999, p. 33), “technological innovations are similar to legislative acts or political foundings that establish a framework for public order.” Moreover, this current study highlights the active involvement of “traditional” social institutions in the processes of datafication and categorization, as evidenced by the emergence of institutional themes on TikTok. Consequently, users’ stories about algorithms transform into intersubjective realities that should be investigated in future research to gain a more comprehensive understanding.

How users legitimize the presence of institutional actors on the For You page highlights the constant process of agency negotiation between these users and algorithms. Thus, the extensive algorithmic recommendation process—rendered through procedures that respect a mathematical formalization (Matei & Preda, 2019) or a “grammar of action” (Kitchin & Dodge, 2011, p. 80)—is in permanent tension with how TikTok users make sense of such recommendations. Therefore, it is imperative to acknowledge the digital emergence of institutional categories such as doctors, politicians, and teachers, which justifies the need to understand how the new processes of institutionalization now work in an environment characterized by datafication and categorization (Couldry & Hepp, 2017).

TikTok takes a bottom-up approach, whereby content creators are able to broadcast their videos to a larger audience than on non-AI-based platforms. In other words, content generators are frequently watched even by users who do not follow them and who have discovered their content through recommendation algorithms, regardless of the number of likes and followers the creators have. Through increasingly viral content on educational, political, and moral/religious topics, these content generators, who are also institutional actors, benefit from TikTok’s enhanced agency, which has the ability to professionally curate content for each user.

Furthermore, these projections take the form of shared experiences that confirm the usefulness of the conceptual framework regarding stories about algorithms. The need to go beyond personal impressions regarding algorithms has been nuanced by scholars such as Schellewald (2022, p. 3), who insist on the need to investigate algorithmic realities “through stories that extend beyond the horizon of personal experience.” Thus, this study addressed the empirical necessity of broadening the conceptualization of algorithmic narratives, specifically focusing on the institutional response to this need.

Although Romanian TikTok users in this study are generally excited about the diversity offered by the algorithms, this diversity is challenged when it seems to threaten the institutional values that users adhere to. Therefore, users’ experiences with these algorithms are closely tied to users’ institutional ways of sensemaking. Precisely through this research, I set out to show how algorithms and institutionally guided stories are deeply interconnected, contributing to varied—and yet so understudied—relationships between users and applications like TikTok.

Limitations and Future Research Directions

This study has certain limitations. First, it explores a limited cultural space, which is notably familiar to the author. However, this restricted scope can be advantageous as it validates the institutional elements unique to Romania. Further studies could investigate the complex interplay between algorithmic stories and institutional values in other cultural contexts, given that research has already been carried out on TikTok activity in Costa Rica (Siles et al., 2022), Pakistan (Kamran, 2023), and elsewhere. Another limitation arises from the relatively short interval between the stages of this study. Therefore, future research would benefit from exploring longer longitudinal trajectories in relation to TikTok, with the aim of observing potential developments in users’ algorithmic sensemaking over an extended period of time.

Future research should examine whether TikTok’s algorithm is associated with other social institutions in different cultural contexts. Examples of such institutions may include religious organizations, interactive digital entertainment platforms, constructs related to gender and sexuality, familial structures, and various other entities.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.