Abstract

This article explores how the pandemic in Iran was discursively framed by automated accounts and human users. While there is a growing body of literature on bot activism, little is known about how bots and humans framed the pandemic in authoritarian regimes. Drawing on networked framing theory, we use both computational and qualitative methods to fill this gap. Our empirical analysis centers on a data set of 4,165,177 tweets collected between 27 January 2020 and 18 April 2020. We found that while anti-regime human users strongly criticized Iran’s regime, pro-regime bots countered with messages emphasizing the sacrifices of medical staff, the strength of Iran, and the failings of Western governments in managing the crisis. Our results suggest that Persian Twitter human users were largely against the regime, while the regime employed bots extensively to maintain balance. Human users used sarcasm, while pro-regime bots invoked religious and revolutionary sentiments metaphorically to defend the regime. By focusing on a relatively unexplored context, this article adds to the growing literature on bot activism.

Introduction

In July 2022, Elon Musk withdrew from a US$44 billion agreement with Twitter, alleging that the company had misrepresented the number of social bots, or automated accounts, present on its platform (Wagner & Frier, 2022). Although an agreement was eventually reached between Musk and Twitter on 27 October 2022, 1 this incident once again brought the issue of social bots to the forefront of public attention. The problem of automated accounts is not only crucial for business, but it also has significant implications for political communication. Social bots are accounts on social media that are either partially or fully automated and attempt to communicate and interact with human accounts (Howard et al., 2018). They have increasingly entered the political sphere in recent years, and while they can serve some useful functions, they have also been used maliciously to manipulate political events, such as elections.

Despite a growing body of literature on social bots on Twitter, several areas require further investigation. Existing research has focused largely on Western democracies and the negative impact of bots on authentic political communication on social media. While there is a growing body of research into bot activism in non-democratic societies, our understanding of those contexts, such as Iran, is limited. In addition, the outbreak of the coronavirus pandemic presents a unique situation that demands investigation. While research into bot activism during the pandemic is growing, the majority of studies have relied on computational methods to analyze the bots’ role in spreading misinformation and fake news. As a result, researchers have largely neglected to examine bot activism during this global health crisis from a discursive perspective, beyond their typical functions of amplifying negative messages and hate speech.

This study seeks to enhance our understanding of how bots’ framing and discursive practices differ from those of human users in an authoritarian regime during the Covid-19 crisis. We focus on the Iranian Twittersphere (Persian Twitter) during the first wave of the Covid-19 crisis to address these gaps. Our empirical analysis centers on a data set of 4,165,177 Persian tweets collected during the first months of the Covid-19 crisis, from 27 January 2020 to 18 April 2020. Using a discursive approach, we combine computational methods, such as bot detection and social network analysis (SNA) with textual interpretations, such as discourse analysis, to understand the extent to which bots’ framing practices resemble human discursive activism. This research contributes to the growing body of literature on social bots and Covid-19 studies, particularly in understudied contexts like Iran.

Bot Activism During the Pandemic

Social bots are computer programs designed to imitate human behaviors in social networks, potentially with the intention of manipulation (Ferrara et al., 2016). Unlike early bots that were simple and programmed for routine tasks, social bots are now capable of engaging with human users and mimicking complex human behavior, often attempting to conceal their true nature. These bots are prevalent across various social media platforms, particularly Twitter, where studies suggest that approximately 15% of accounts operate automatically or semi-automatically (Varol et al., 2017). The existence of social bots poses a serious threat to human interaction on Twitter, with some scholars arguing that as bots become increasingly sophisticated in simulating human behavior, the line between humans and these socio-technical entities becomes less distinct (Antenore et al., 2022). Furthermore, research has shown that Twitter bots are considered credible sources of information (Edwards et al., 2014) and can have up to 2.5 times more influence than humans (Rizoiu et al., 2018).

The use of bots, or malicious automation, in politics has garnered significant scholarly attention due to its negative effects. Howard and Kollanyi (2016) explain that bots can inundate online activists with a deluge of tweets and create the illusion of a supportive environment, known as an AstroTurf campaign. They define AstroTurf as the process of seeking electoral victory or legislative relief for grievances by helping political actors find and mobilize a sympathetic public, a process designed to create the image of public consensus where there is none. Several studies have emphasized that bots can effectively disseminate partisan content, misinformation, and false news (Shao et al., 2018). Moreover, bots can manipulate popularity cues and masquerade as members of marginalized groups (Howard et al., 2018). Keller and Klinger (2019) demonstrate that bots can initiate and fuel online phenomena, inciting outrage and artificial hype, while remaining unrecognizable as non-human agents to both people and trending algorithms.

Bot activism in politics, such as during the 2016 US presidential election (Bessi & Ferrara, 2016; Howard & Kollanyi, 2016) and the Brexit referendum (Bastos & Mercea, 2019), has been well documented. However, the Covid-19 crisis presents a significant case for investigating how social bots intervene in public discourse during a health crisis. This research is important because the malicious effects of bots could distort the flow of reliable information, increase the number of casualties, undermine the effectiveness of government policies, and weaken people’s understanding of the situation.

Researchers have devoted considerable attention to bots’ role in spreading misinformation and conspiracy theories during the pandemic. For example, Ferrara (2020) highlights the significant role played by bots in disseminating conspiracy theories early in the pandemic, particularly regarding the coronavirus originating from a Wuhan lab. In addition, Duan et al. (2022) found that automated accounts selectively promoted specific topics aligned with partisan narratives.

One area of research examines the impact of bots’ sentiments on human behavior. Studies have shown that bots can influence human sentiments by transmitting positive or negative tweets on social media (Zhang et al., 2022). These studies estimate that bots accounted for around 9% of the Twitter user population during the pandemic (Duan et al., 2022; Shi et al., 2020; Zhang et al., 2022), which gives a sense of the scale of their role in this context. However, there are still two gaps in this field that need to be addressed to further our understanding of bot activism during the pandemic.

First, most existing studies have been conducted at a macro level, using computational methods, such as sentiment analysis and topic modeling. As a result, there is a lack of systematic research at a micro level, focusing on the complex discursive relationships between bots and humans. Comparative studies that contrast bots and human behaviors during the crisis are particularly scarce. Chang and Ferrara (2022) provide a rare study discussing and contrasting bot and human engagement in Covid-19-related discussions. However, like many studies mentioned above, they rely mainly on automated text analysis to extract frames and analyze the framing practices of humans and bots. The reliability of the results obtained through automated techniques has been called into question, as these algorithms work primarily on the frequency and co-occurrence of words and often fail to uncover the deeper layers of meaning that are inherent in human language (De Grove et al., 2020). Thus, while Chang and Ferrara’s (2022) work provides an overview of bot activism during the pandemic, the validity of their findings remains uncertain. Furthermore, they fail to explore the frames discursively, to provide a close and nuanced analysis.

To gain a deeper understanding of the similarities and differences between bots and human users in framing the pandemic, we adopted a qualitative approach to analyze their discursive framing. Unlike previous studies that mainly focused on specific aspects of bots’ influence on Twitter discussions, such as the spread of misinformation, our approach provides a nuanced perspective on the differences and similarities between bots and humans in framing the pandemic.

An example of the second research gap in this field can also be found in Chang and Ferrara’s (2022) study, which solely focused on the sociopolitical context of the United States without contextualizing their findings or discussing their relevance to other contexts. This type of research assumes that all readers are familiar with the US political system and its scientific importance, which limits the generalizability of their results to other regions and contexts. To advance our understanding of how bots and humans frame the pandemic, it is crucial to conduct more research in diverse contexts. For instance, studying bot activism in non-democratic countries, such as Iran, can offer valuable insights into how bots function in societies that differ from Western societies.

The existing literature on bot activism in non-democratic societies is relatively similar to studies conducted in democratic countries. A line of research examines the statistics and quantitative behavior of bots in authoritarian regimes, including the type of bots, their characteristics, and their follower/following ratio (Vasilkova & Legostaeva, 2019). However, research into the frequency of bots on Twitter indicates that social bots are more prevalent in non-democratic societies. According to Stukal et al. (2017), the proportion of bots among actively Tweeting Russian accounts reached as high as 85% on occasion. Neyazi (2020) and Jones (2019) verified these results on Twitter in India and Qatar, respectively.

The majority of research focuses on the instrumental and strategic operations of bots in sociopolitical events, for instance, disinformation dissemination, falsifying popularity metrics, and populating hashtags and discussions. There is a growing line of inquiry showing the aforementioned results in Russia as a big player in this field (Stukal et al., 2017; Vasilkova & Legostaeva, 2019). Hegelich (2016) affirms these findings and goes further by demonstrating that Ukrainian bots during the Ukraine–Russia conflict of 2014 had a vast arsenal of techniques at their disposal for eluding detection by traditional bot detection algorithms. Stukal et al. (2019) argue in a separate study that, contrary to popular belief, pro-Kremlin bots are not the only type of bots in the Russian political Twittersphere. They highlight the fact that anti-Kremlin and pro-Kiev bots maintain a significant presence on Russian Twitter.

B. Zhao et al. (2023), who studied the Twitter discussion of the Russia–Ukraine conflict, argued that social bots have distinct or even opposing effects on agenda-setting in national/regional discussions. Again, the authors relied solely on topic modeling to estimate this outcome. In addition, Bradshaw et al. (2021) documented the use of bots in over 80 countries, including authoritarian and democratic regimes. They cited authoritarian regimes, such as Kazakhstan, Egypt, Syria, and Bahrain, as examples. It was a descriptive report that documented instances of bot activism in those countries. Thus, it did not contribute significantly to our theoretical comprehension of bot activism in democratic and non-democratic countries. For India, they showed how Modi’s administration used a botnet to inflate the prime minister’s follower count and popularity indicators. Neyazi (2020) additionally showed how a minority of Twitter users polarized the Indian public sphere by creating a false impression of public opinion on critical national issues.

In spite of this expanding corpus of literature, our understanding of bot activism in non-democratic societies remains limited. In alignment with the majority of studies conducted in democratic countries, research in non-democratic societies prioritizes bots’ strategic behavior and quantitative measures but fails to advance our understanding of bots’ discursive activism, particularly in comparison to human users. Moreover, there are fewer studies on bot activism during the pandemic in authoritarian regimes than in democratic countries. Thus, we intend to resolve these deficiencies by focusing on a context that has been relatively understudied: Iran.

Politics, Twitter, and Bots in Iran

Bots pose a threat to democracy in all political systems, but their impact could be even more severe in restrictive contexts, such as Iran, where authoritarian regimes have employed various tactics to quell social media activism, including internet shutdowns (Gunitsky, 2015). Bots could potentially be used by such regimes to thwart citizens’ genuine efforts to oppose the regime, by spreading false information and redirecting dissident conversations. Conversely, in Western democracies, news media and other organizations are monitoring political parties’ activities, making it difficult for right-wing parties to use bots during elections without facing public scrutiny (Keller & Klinger, 2019). However, non-democratic regimes have no limits when it comes to suppressing democratic movements and may resort to any means necessary to achieve their goals.

The use of bots could significantly impede the efforts of Iranian citizens to establish a more democratic system in their country. Iran is a non-democratic nation characterized by an Islamic populist discourse, and the regime maintains strict control over media and political activities (Holliday, 2016). The regime seeks to silence any dissenting voices that do not align with its antagonistic, Islam-focused, and anti-Western discourse. Since the early days of blogging, Iranians have turned to social media to express their opinions, bypass government restrictions, share and access sensitive information, and challenge the regime’s dominant discourse (Kermani & Hooman, 2022). Twitter played a crucial role in the 2009 Green Movement by amplifying the protests and mobilizing citizens (Karimi, 2018). Although the regime initially blocked Twitter during the early stages of the demonstrations, this attempt to suppress the platform failed to deter Iranians from using it. The use of bots in such a context could have devastating consequences for citizens’ democratic aspirations.

While some studies have highlighted that Twitter has been utilized by anti-regime citizens to disseminate dissident content and sensitive information (Azadi & Mesgaran, 2021), the extent of bot activism in the Iranian Twittersphere (Persian Twitter) remains unclear. Investigating this subject is of significant interest as bots may not only impede Iranians’ democratic endeavors but also exacerbate the impact of health crises. Given that Twitter and other social media platforms are often the primary channels for disseminating reliable information in Iran, bots have the potential to disrupt the mechanisms of authentic news dissemination. For instance, during the Covid-19 crisis, Iran’s government was accused of concealing the true number of infections and casualties. Bots could have been used to aid the regime in this manipulation effort.

As discussed in the previous sections, research into bot activism on Persian Twitter is scarce and primarily focuses on topics similar to the conventional line of inquiry in this field. Thieltges et al. (2018) showed that a small portion of tweets in Iran-related debates on Twitter in 2017 were sent by bots. Running sentiment analysis, they determined that social bots used more negative words in their tweets. Nonetheless, this study, like a number of the aforementioned studies, relied exclusively on computational methods and provided only straightforward quantitative data. In a separate study, Honari and Alinejad (2022) examined the Twitter discourse related to women’s rights during the Iranian parliamentary election of 2016. They argued that the content of the tweets was frequently provocative and intended to elicit a response from other Twitter users.

Farzam et al. (2022) contended that opinion manipulation by fake accounts, or bots, was more prevalent in divisive political discussions on Persian Twitter than in non-divisive or apolitical discussions. They discovered that fake accounts were more likely to engage in coordinated behavior, such as content retweeting. Mohammadi et al. (2022) investigated bot activism on Persian Twitter during the 2021 presidential election in Iran. They argued that bots were among the most retweeted accounts in all clusters resulting in polarized Persian Twitter, reiterating the majority of the findings from other studies. In a recent study, Mazoochi et al. (2023) took a technical approach to the problem of bots on Persian Twitter, attempting to determine which feature is most important for bot detection.

As demonstrated above, these studies were primarily concerned with bots’ behavior, statistics, and quantitative measurements, as well as their negative impact on the network. Numerous studies in other democratic or authoritarian contexts share a similar emphasis. The gap discussed earlier exists here as well: our grasp of the more complex dynamics of discursive bot activism on Persian Twitter is limited. Furthermore, there are few studies on bot activism on Persian Twitter during the pandemic. Therefore, our research aims to investigate the discursive activism of bots versus humans during the pandemic in non-democratic regimes, specifically on Persian Twitter. We employ framing theory to analyze their discursive activism, which we discuss in the following section.

Networked Framing as Social Media Discursive Activism

Framing, as Entman (1993) defines it, involves “[selecting] some aspects of a perceived reality and [making] them more salient in a communicating text” (p. 52). Different types of frames, such as issue framing and emphasis framing, have been discussed by scholars (Lecheler & De Vreese, 2019). However, we adopt Gamson and Modigliani’s (1989) perspective that framing is a discursive practice. According to them, frames are the core of the “interpretive packages” that make up media discourses. By attributing meaning to an issue, frames can shape individual understanding and opinion by accentuating specific elements or features of the bigger picture (De Vreese, 2005). Consequently, frames are discursive packages individuals impose on their lived experiences to make sense of them (Gamson & Modigliani, 1989).

The emergence and widespread use of social media have led to the development of a new form of framing known as networked framing, a term coined by Meraz and Papacharissi (2013). Unlike the “passive” audiences of mass media, social media users can actively engage in producing and consuming iterative frames (Bennett & Pfetsch, 2018). Building upon traditional framing theory, Meraz and Papacharissi (2013) proposed that networked framing emerges from the interaction between elites and crowds in networked publics, which generates dominant frames that shape the form of news narratives. Unlike mass media frames, which tend to be static and permanent, networked frames are continuously revised, rearticulated, and redistributed by both the crowd and the elites.

Given the discursive nature of framing, as highlighted by Papacharissi and Easton (2013), and Kermani and Tafreshi (2022), we interpret networked framing as a form of discursive activism on social media. In addition, we draw on Bitzer’s (1968) ideas to suggest that users utilize rhetorical devices, such as metaphors, as discursive practices to shape frames as discursive packages. We define discursive practices as textual tactics used to produce, change, and negotiate meaning. Examining how users utilize rhetorical devices to shape dominant frames provides us with a more nuanced understanding of discursive activism on Twitter.

The following research questions guide this investigation:

RQ1: What were the primary communities on Persian Twitter during the Covid-19 pandemic?

RQ2: Who were the most influential users and bots in each community?

RQ3: Which frames became dominant among human users in each community? What discursive practices were used to articulate these frames?

RQ4: What frames became dominant among automated accounts (bots) in each community? What discursive practices were used to articulate these frames?

RQ5: To what degree did the dominant frames of human accounts differ from or overlap with the frames of bots in each community?

Methods

Data Collection and Preprocessing

The research data were collected using the Twitter REST API during the first wave of the coronavirus crisis in Iran. Data collection began on 27 January 2020 and continued for nearly 3 months, until 18 April 2020. All tweets containing related hashtags, including various Farsi variations 2 of #Corona, were collected, resulting in an original data set of 4,165,177 tweets. Because Corona is written identically in Farsi and Arabic, the data set was then filtered to include only tweets in the Persian language. Duplicates were removed, resulting in a final research data set of 1,986,625 tweets.
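The filtering and deduplication steps can be sketched roughly as follows. The article does not say how Persian tweets were separated from Arabic ones or what counted as a duplicate, so both choices here are assumptions made for illustration: a tweet is kept if it contains at least one letter used in Persian but not in standard Arabic, and duplicates are detected on whitespace-normalized text. The function names and the `id`/`text` fields are hypothetical; relying on the language tag returned with each tweet would be another option.

```python
import re

# Assumption: letters used in Persian but not in standard Arabic
# orthography (pe, che, zhe, gaf), written as escapes for clarity.
PERSIAN_ONLY = set("\u067e\u0686\u0698\u06af")


def looks_persian(text):
    """Heuristic language check; not the authors' documented method."""
    return any(ch in PERSIAN_ONLY for ch in text)


def normalize(text):
    """Collapse runs of whitespace so trivially different copies match."""
    return re.sub(r"\s+", " ", text).strip()


def filter_and_dedupe(tweets):
    """Keep the first Persian-looking tweet for each distinct text."""
    seen = set()
    kept = []
    for tweet in tweets:
        text = normalize(tweet["text"])
        if not looks_persian(text) or text in seen:
            continue
        seen.add(text)
        kept.append(tweet)
    return kept
```

A heuristic of this kind would miss short Persian tweets that happen to contain none of the four letters, so it is best read as a starting point rather than a complete filter.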

SNA

To perform SNA, the first step was to create the retweet (RT) network, which is a network that represents the sharing of information on Twitter (Bruns & Stieglitz, 2013). The RT network consisted of 172,672 nodes (i.e., users) and 1,506,172 edges (retweets). Next, we conducted a cluster analysis on the RT network using the Louvain method (Blondel et al., 2008). Our community detection algorithm identified 1,831 clusters. However, we focused only on the clusters that made up at least 4% of the network, resulting in a final analysis of five clusters. We initially used the random labels generated by Gephi 3 for these clusters, but we later replaced them with more meaningful labels after an extensive process of qualitative human-driven coding. We return to this point later.
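The network construction and the 4% cut-off can be illustrated with a short sketch. It assumes that each retweet record names the retweeting user and the original author (the field names `user` and `retweeted_user` are hypothetical), that community labels have already been produced by a Louvain run such as the one in Gephi, and that a cluster's share is measured in nodes; the article does not state whether nodes or edges were used.

```python
from collections import Counter


def build_rt_edges(tweets):
    """Return one (retweeter, original author) pair per retweet."""
    return [
        (t["user"], t["retweeted_user"])
        for t in tweets
        if t.get("retweeted_user")
    ]


def major_clusters(membership, min_share=0.04):
    """Keep clusters holding at least `min_share` of all nodes.

    membership: dict mapping each user to a community label,
    assumed to come from an external Louvain run.
    """
    sizes = Counter(membership.values())
    total = len(membership)
    return {
        label: size / total
        for label, size in sizes.items()
        if size / total >= min_share
    }
```

Applied to the reported network, a filter of this kind would reduce the 1,831 detected clusters to the five that were analyzed.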

To identify the most influential users in each cluster, we employed PageRank centrality (Easley & Kleinberg, 2010, p. 407). This technique identified the top users in each cluster, regardless of whether they were bots or human accounts. We then manually verified the results and separated out any bot accounts that appeared among the top accounts. We selected the top 20 human users in four clusters, resulting in a total of 80 accounts. However, one of the clusters consisted almost entirely of bots (which we later identified as the pro-regime botnet [PR-BN] cluster). As a result, it was both difficult and of limited relevance to collect 20 human accounts from this cluster. Instead, we collected only 15 non-bot accounts, bringing the total number of top users to 95. We then extracted all tweets from these users within the network (n = 32,576).
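For readers unfamiliar with PageRank, the ranking step can be sketched with a plain power iteration. This is an illustrative implementation, not the software used in the study; the damping factor of 0.85, the fixed number of iterations, and the edge direction (from retweeter to retweeted author, so that rank flows toward accounts that are retweeted) are all assumptions.

```python
def pagerank(edges, damping=0.85, iterations=100):
    """Power-iteration PageRank over (source, target) pairs.

    Repeated pairs act as edge weights, so an author retweeted
    many times by the same user receives a larger share.
    """
    nodes = sorted({node for edge in edges for node in edge})
    out_links = {node: [] for node in nodes}
    for src, dst in edges:
        out_links[src].append(dst)

    count = len(nodes)
    rank = {node: 1.0 / count for node in nodes}
    for _ in range(iterations):
        # Rank held by nodes without outgoing edges is spread evenly.
        dangling = sum(rank[n] for n in nodes if not out_links[n])
        base = (1.0 - damping) / count + damping * dangling / count
        new_rank = {node: base for node in nodes}
        for src in nodes:
            if out_links[src]:
                share = damping * rank[src] / len(out_links[src])
                for dst in out_links[src]:
                    new_rank[dst] += share
        rank = new_rank
    return rank


def top_users(rank, membership, cluster, k=20):
    """Return the k highest-ranked users belonging to one cluster."""
    members = [u for u in rank if membership.get(u) == cluster]
    return sorted(members, key=lambda u: rank[u], reverse=True)[:k]
```

The manual step described above, in which bots are separated from the ranked list before the top human users are chosen, would follow a call to `top_users` and is not automated here.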

To further interpret the data qualitatively, we randomly sampled a subset of this data set using Cochran’s formula for calculating the sample size of a finite population. The resulting sample included 3,690 tweets from non-automated accounts with a confidence level of 99%. It is important to note that we made some changes to this sample based on initial findings from qualitative coding, which will be discussed later. In the subsequent section, we describe our process for identifying bots and selecting their tweets for qualitative analysis.
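Cochran's formula with the finite population correction can be written out as a small function. The article reports only the confidence level (99%) and the population (n = 32,576); the margin of error of 2%, the assumed proportion p = 0.5, and the rounded z-value of 2.58 used below are assumptions chosen for illustration and are not stated in the source.

```python
import math


def cochran_sample_size(population, z=2.58, margin=0.02, p=0.5):
    """Cochran's sample size with finite population correction.

    n0 = z^2 * p * (1 - p) / e^2
    n  = n0 / (1 + (n0 - 1) / N)
    The result is rounded up to the next whole unit.
    """
    n0 = (z ** 2) * p * (1.0 - p) / margin ** 2
    return math.ceil(n0 / (1.0 + (n0 - 1.0) / population))
```

With these assumed inputs, `cochran_sample_size(32576)` evaluates to 3,690, which matches the reported sample size, although the parameters the authors actually used may have differed.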

Bot Detection

In recent years, scholars have developed several methods for detecting bots, including Heavy Automation and Social Fingerprinting techniques (Martini et al., 2021). However, there is no perfect technique available to date, as each method has its own advantages and limitations (Martini et al., 2021). For this study, we utilized Botometer, one of the most reliable and widely used bot detection methods (Ferrara et al., 2016). Nonetheless, Botometer also has its limitations. For example, it is based on machine learning and is partly black-boxed, meaning we know the basic categories of variables but not the details of how they are weighted or how the result scores are calculated (for additional criticisms, see Martini et al., 2021).

Botometer does not provide a binary classification of whether a user is a bot or not, but rather produces a probability estimate ranging from 0 to 1 (with 1 indicating the highest likelihood of being a bot, and 0 indicating the highest likelihood of being human; the scale can also be 0–5 as displayed on Botometer’s website). Therefore, researchers need to set a manual threshold to classify accounts as bots or not. The threshold is determined by the researcher’s discretion based on what seems reasonable to them. For instance, Keller and Klinger (2019) chose 0.76. In contrast, Zhang et al. (2019) used a relatively low threshold of 0.25 for the complete automation probability (CAP), which is also provided by Botometer.

Rather than using a binary threshold, we employed a probabilistic scale to categorize accounts based on their CAP. Accounts with a CAP higher than 0.8 were classified as highly probable bots. The other classes were probable bot (0.6–0.8), uncertain (0.4–0.6), improbable bot (0.2–0.4), and highly improbable bot (0.0–0.2). We contend that this approach is more appropriate as it acknowledges the uncertainty of bot detection and aligns with the Botometer algorithm. It also provides a more nuanced understanding of the probability of an account being a bot.
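The five-class scale amounts to a simple binning of the CAP score, sketched below. The article states that the top class requires a CAP higher than 0.8 but does not say how scores falling exactly on the other boundaries were handled; assigning a boundary value to the lower class is an assumption, and the function name is hypothetical.

```python
def cap_class(cap):
    """Map a CAP score in [0, 1] to one of five likelihood classes."""
    if not 0.0 <= cap <= 1.0:
        raise ValueError("CAP must lie between 0 and 1")
    if cap > 0.8:
        return "highly probable bot"
    if cap > 0.6:
        return "probable bot"
    if cap > 0.4:
        return "uncertain"
    if cap > 0.2:
        return "improbable bot"
    return "highly improbable bot"
```

For example, an account scored at 0.81 would fall into the highly probable class, while one scored at exactly 0.8 would, under the boundary assumption above, be labeled a probable bot.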

After computing the CAP score for all accounts, we chose the 50 accounts with the highest CAP in each community, all of which were in the highly probable class. Botometer’s results are uncertain and typically carry some level of error (Gallwitz & Kreil, 2022; B. Zhao et al., 2023). Thus, validating Botometer’s output is necessary. We did so in two steps. First, we manually checked the top accounts with the highest CAP scores and removed accounts that Botometer had mistakenly labeled as bots. We thereby ensured that all accounts chosen at this stage, on which all qualitative analyses were conducted, were bots. Second, two human coders coded a random sample of 1,000 accounts to identify whether they were bots. We then used Krippendorff’s alpha (Lombard et al., 2002) to measure the agreement between the human coders and Botometer. Although the alpha was low (0.64), we deemed it acceptable.

While Botometer’s results are uncertain and could introduce some bias, this does not significantly affect the findings of this study. As mentioned above, all qualitative and discursive analyses were conducted on the bots with the highest CAP scores, and we validated this sample manually. Thus, the qualitative sample should be free of misclassified accounts. Given the low agreement between the algorithm and human coders, there may be more concern about Botometer’s results for the whole data set, but our analyses did not focus on the whole sample. The only analysis run on all tweets was the hashtag analysis, as discussed below. Therefore, the potential adverse effect of Botometer’s results on the findings of this research is minimized.

In addition, it is not a primary aim of this study to discuss the strengths and weaknesses of Botometer. However, our analyses of the selected top bot accounts and the random sample show that Botometer works better in identifying spammers and fake followers on Persian Twitter. These two types show the lowest level of errors among all subtypes of bots analyzed by Botometer. 4 We emphasize that further study is required to investigate the causes of Botometer’s bot identification errors on Persian Twitter and to improve it.

It is important to note another constraint. Due to the time delay between data collection and analysis, certain accounts were removed or suspended, and Botometer was unable to calculate their CAP score. For this study, a total of 37,383 accounts out of 172,672 accounts were excluded since we did not have adequate data to evaluate their probability of being a bot.

We proceeded by retrieving all tweets from the selected 250 bot accounts (n = 1,422). As the sample size was not too large, we conducted a qualitative coding of all tweets from the bot accounts. In addition, we used automated text analysis to determine the top hashtags in each community for all bot and non-bot accounts. The findings from hashtag detection were employed to supplement the qualitative analyses.
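The hashtag step can be approximated with a regular expression and a counter per group. The article does not describe the tooling used, so this is only a sketch; the `community`, `is_bot`, and `text` field names are hypothetical, and counting every occurrence (rather than once per tweet) is an assumption.

```python
import re
from collections import Counter, defaultdict

# \w matches Unicode letters, so Persian hashtags are captured, but it
# does not match the zero-width non-joiner (U+200C), so hashtags that
# contain one would be cut short by this pattern.
HASHTAG = re.compile(r"#(\w+)")


def top_hashtags(tweets, k=10):
    """Return the k most frequent hashtags per (community, is_bot) group."""
    counts = defaultdict(Counter)
    for tweet in tweets:
        key = (tweet["community"], tweet["is_bot"])
        tags = HASHTAG.findall(tweet["text"])
        counts[key].update(tag.lower() for tag in tags)
    return {key: counter.most_common(k) for key, counter in counts.items()}
```

Keeping bot and non-bot counts in separate groups makes it straightforward to compare the two lists within each community.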

Qualitative Interpretations and Human-Driven Coding

During this phase, we coded tweet samples from both the bot and non-bot data sets, as well as the non-bot accounts themselves. Our approach to frame identification followed Van Gorp’s (2010) two-stage mixed inductive–deductive method. The initial phase involved the inductive coding of content using open coding, which allowed potential frames to surface. This was followed by a deductive stage in which the components of those frame packages were used to code frame prevalence in a controlled and replicable manner (Nicholls & Culpepper, 2020). To scrutinize the qualitative interpretation process, we added an extra stage to the first phase. The samples were thus coded in three steps, two inductive and one deductive.

The inductive steps followed Saldaña’s (2015) two-step approach. In the first step, three coders inductively developed a codesheet by closely examining the tweets. The codesheet for networked frames was broad and contained numerous open codes. After finishing open coding, the coders discussed their codesheets to produce a provisional codebook. Grouping the open codes into general themes yielded a total of 28 themes. The coders also wrote operational definitions of the themes and their subthemes. This codebook served as the foundation for the second round. In this stage, the coders coded the entire sample using pattern coding (Saldaña, 2015, p. 209) to uncover the core themes in the tweet samples. They were free to modify and revise the codesheet. As 30% of the codes changed, all coders coded the sample from the start once more. This process was repeated in five consecutive rounds, resulting in a final codesheet containing six networked frames: news and information (NI); solidarity, hope, and optimism (SHO); mismanagement and incapability (MI); the virus and health instructions (VHI); effects; and fun. We also created a codesheet for discursive practices using the same process. The final codesheet for discursive practices included sarcasm, metaphor, hate speech, and conspiracy theory. The complete list of codes is presented in Supplemental Appendix 1.

In the deductive step, we utilized the final codesheets to quantify the codes across all sample tweets, and the coders were not permitted to modify or alter the codebook. The output of this deductive step was used to determine the dominant themes (frames and rhetorical devices) in the sample. However, we did not solely rely on these numbers. We also utilized the qualitative notes and memos from the previous rounds to obtain a more in-depth understanding of grounded frames. In addition, each tweet could be coded for two frames, and both frames were regarded as equally important. As a result, neither one was given preference over the other.

In addition, we conducted coding of non-bot users to determine their type. To classify user types, we followed the approach of Kermani and Adham (2021). Consequently, we classified human users into seven categories, including media (legacy and digital-born), journalists, organizations (non-governmental organization [NGO] and state), politicians, celebrities, ordinary citizens (elite and ordinary users), and activists. The operational definitions of these categories are presented in Supplemental Appendix 1.

Furthermore, we calculated intercoder reliability using Krippendorff’s alpha (Lombard et al., 2002). The measures were 0.91, 0.95, and 0.83 for frames, discursive practices, and users, respectively, which were all satisfactory.
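The article reports the alpha values without describing the computation. As a rough illustration only, the following sketch computes Krippendorff’s alpha for nominal labels using the standard coincidence-matrix formulation. The function name and the toy labels are hypothetical and are not taken from the study’s data.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal data.

    `units` is a list of lists; each inner list holds the labels that the
    coders assigned to one tweet (None marks a missing judgment).
    """
    coincidences = Counter()
    for labels in units:
        values = [v for v in labels if v is not None]
        m = len(values)
        if m < 2:
            continue  # a unit coded by fewer than two coders cannot be paired
        # Every ordered pair of judgments from different coders counts 1/(m-1).
        for a, b in permutations(values, 2):
            coincidences[(a, b)] += 1.0 / (m - 1)

    totals = Counter()
    for (a, _b), weight in coincidences.items():
        totals[a] += weight
    n = sum(totals.values())
    if n <= 1:
        raise ValueError("need at least two pairable judgments")

    observed = sum(w for (a, b), w in coincidences.items() if a != b)
    expected = sum(totals[a] * totals[b]
                   for a in totals for b in totals if a != b)
    if expected == 0:
        return 1.0  # only one category was ever used, so no disagreement is possible
    return 1.0 - (n - 1) * observed / expected


# Toy example: three hypothetical coders labelling four tweets with frame codes.
toy_units = [
    ["MI", "MI", "MI"],
    ["NI", "NI", "NI"],
    ["SHO", "SHO", "MI"],
    ["fun", "fun", None],
]
alpha = krippendorff_alpha_nominal(toy_units)
```

Values close to 1 indicate near-perfect agreement, and values near 0 indicate agreement no better than chance.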

Initial Findings and Final Tweets Sample

Prior to presenting the final results, it is important to address modifications made to the sample of human users’ tweets in relation to the research objectives. Initial findings indicate that the majority of tweets were generated by media outlets and journalists, accounting for 65% of influential human users’ tweets and 66% of the sample chosen for this research. These accounts primarily shared NI, resulting in a biased data set toward NI. Although this is expected during the Covid-19 crisis, we aimed to examine the similarities and differences between humans and bots in framing the pandemic. Therefore, we excluded media and journalists from our qualitative analysis and added 2,000 random tweets to balance the sample. The final research sample consisted of 3,201 tweets from non-bot users after the removal of journalists and media. The main article’s qualitative analyses were conducted using this sample, while findings from all selected tweets, including media and journalists, were included in Supplemental Appendix 2. Our results confirm that even with the addition of 2,000 tweets from non-news accounts, NI framing by journalists remained the most dominant in the overall network.

Findings

This section outlines the findings that address our research questions. First, we will present the outcomes of our network analysis. We will provide details regarding the types and frequency of human users and bots in each community. Subsequently, we will present the most dominant frames in each cluster by both bots and non-bot accounts. Finally, we will examine the similarities and differences in framing the pandemic and the way that Twitter users rhetorically shaped those frames.

SNA

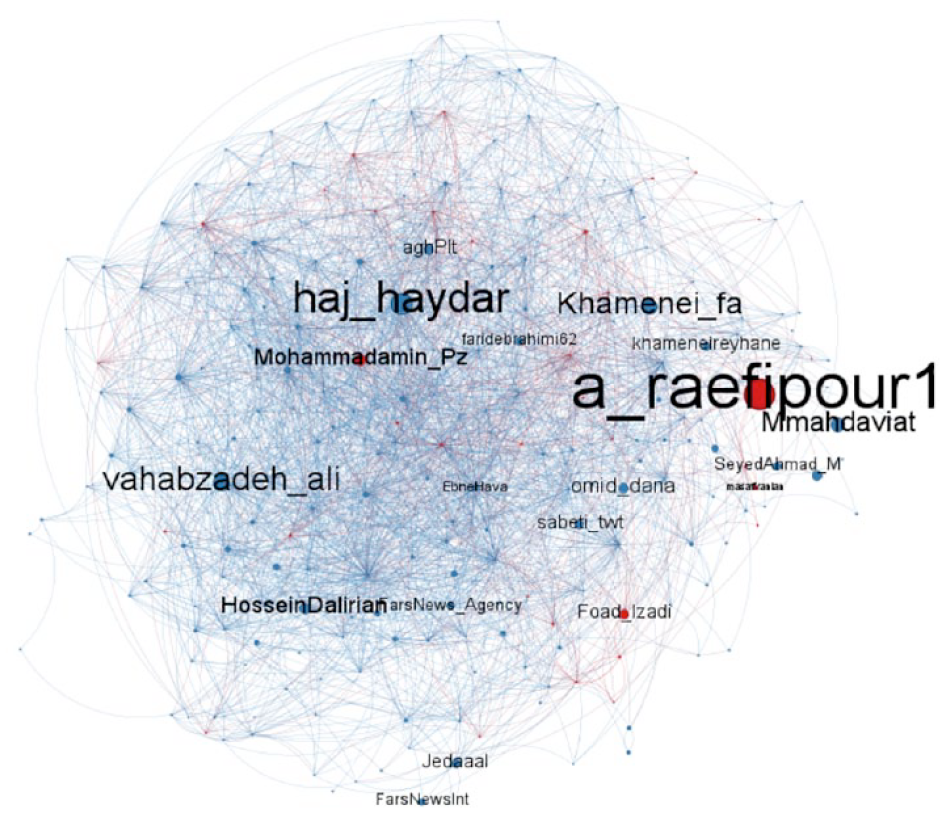

Our SNA revealed five primary clusters. As explained in the “Methods” section, we labeled these clusters after extensive rounds of qualitative interpretation: pro-regime botnet (PR-BN), pro-regime radical (PR-R), anti-regime everyday users (AR-EV), anti-regime moderate (AR-M), and anti-regime radical (AR-R). These clusters are illustrated in Figure 1.

RT network in Persian Twitter during the pandemic.

The complete graph, featuring all clusters, is presented in Supplemental Appendix 3. Figure 1 demonstrates that the network spans from far radical pro-regime communities to far radical anti-regime communities. Among the five primary clusters, two are pro-regime, while three are anti-regime communities. The graph indicates that Persian Twitter became more polarized during the initial months of the pandemic than during the 2013 or 2017 presidential elections. Studies of those elections (Kermani & Adham, 2021; Khazraee, 2019) demonstrated that Persian Twitter consisted of three primary communities: reformist, conservative, and diaspora. Of these three communities, the reformist and conservative clusters broadly represented political forces operating within the regime’s boundaries, with most actors living in Iran. The reformist cluster was critical of certain regime policies, but their primary objective was not regime change. The diaspora cluster was the sole radical community advocating regime change. This picture changed dramatically during the pandemic.

This shift is likely due to various events that occurred in Iran in the years leading up to February 2020. In 2013, Iranians elected ex-president Rouhani with the hope of more democratic reforms in the political system. However, by 2020, the majority of citizens were disappointed by the performance of Rouhani and other reformist figures, such as ex-president Mohammad Khatami. The violent suppression of nationwide protests in November 2019 and the subsequent shooting down of a passenger flight by the Islamic Revolutionary Guard Corps (IRGC, or Sepah in Farsi) on 8 January 2020, further infuriated and frustrated the people. These feelings of anger and disappointment with the lack of change in the system are reflected in Figure 1. As a result, Persian Twitter became divided between the regime’s supporters and opponents, with no community in the middle. As we will discuss later, certain figures attempted to bridge these opposing camps, but they were only located on the outskirts of these radical communities and could not form an independent community.

Bot Accounts

Within the network supportive of the regime, a specific cluster displays a notable prevalence of automated accounts. This observation aligns with the research of Azadi and Mesgaran (2021), who contend that numerous pro-regime users have joined Twitter in recent times, but their authenticity is doubtful. It should be noted, however, that bots exist in varying proportions across all clusters. Heatmap 1 depicts the percentage of bots within each cluster.

The percentage of bots within each cluster.

The highly probable class accounts constituted 0.24% of all accounts in general. Our classification of these accounts as bots indicates that the percentage of bots on Persian Twitter was higher compared to other contexts (Duan et al., 2022; Zhang et al., 2022). However, we observed that bots were not more active than human users. Specifically, the number of tweets from the top bots was considerably lower than that of the top human users.

Heatmap 1 reveals that pro-regime communities had a substantial number of bots. In the PR-BN cluster, a staggering 76.2% of users were highly automated accounts. Similarly, the PR-R had a significant population of highly probable automated accounts, comprising 47.9% of its users. In addition, 41% of the accounts in this cluster were likely bots. The anti-regime everyday (AR-EV) users and AR-R also had a large proportion of users with a high probability of being bots. While 43.6% of users in the AR-M cluster were likely bots, it was the community with the highest number of probably genuine users.
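Per-cluster percentages of this kind can be derived from account-level bot scores roughly as sketched below. The cut-offs of 0.8 and 0.5, the class names, and the toy scores are assumptions made for illustration. The thresholds the study applied to Botometer output are described in its “Methods” section and are not reproduced here.

```python
from collections import Counter, defaultdict

# Hypothetical cut-offs on a 0-1 bot score (NOT the study's actual thresholds).
HIGH_CUTOFF = 0.8
LIKELY_CUTOFF = 0.5


def bot_class(score):
    """Map a bot score to one of three illustrative classes."""
    if score >= HIGH_CUTOFF:
        return "highly probable bot"
    if score >= LIKELY_CUTOFF:
        return "likely bot"
    return "probably genuine"


def bot_share_by_cluster(accounts):
    """Return {cluster: {class: percentage of the cluster's accounts}}.

    `accounts` is an iterable of (cluster_label, bot_score) pairs.
    """
    counts = defaultdict(Counter)
    for cluster, score in accounts:
        counts[cluster][bot_class(score)] += 1
    shares = {}
    for cluster, class_counts in counts.items():
        size = sum(class_counts.values())
        shares[cluster] = {name: 100.0 * n / size
                           for name, n in class_counts.items()}
    return shares


# Toy input with made-up scores for two of the clusters.
toy_accounts = [
    ("PR-BN", 0.90), ("PR-BN", 0.95), ("PR-BN", 0.60), ("PR-BN", 0.10),
    ("AR-M", 0.20), ("AR-M", 0.55),
]
shares = bot_share_by_cluster(toy_accounts)
```

Each row of the resulting mapping corresponds to one row of a cluster-by-class heatmap.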

These results suggest that the regime is likely managing a significant number of automated accounts on Twitter. This finding is consistent with previous research suggesting that radical non-democratic groups attempt to dominate and redirect public discourse on social media through bots (Keller & Klinger, 2019). However, anti-regime forces are also using bots to amplify their messages. Despite this, as we explain below, genuine users are the primary actors in anti-regime communities. In contrast, the primary users in pro-regime clusters acted in a coordinated manner and were identified as bots by Botometer algorithms.

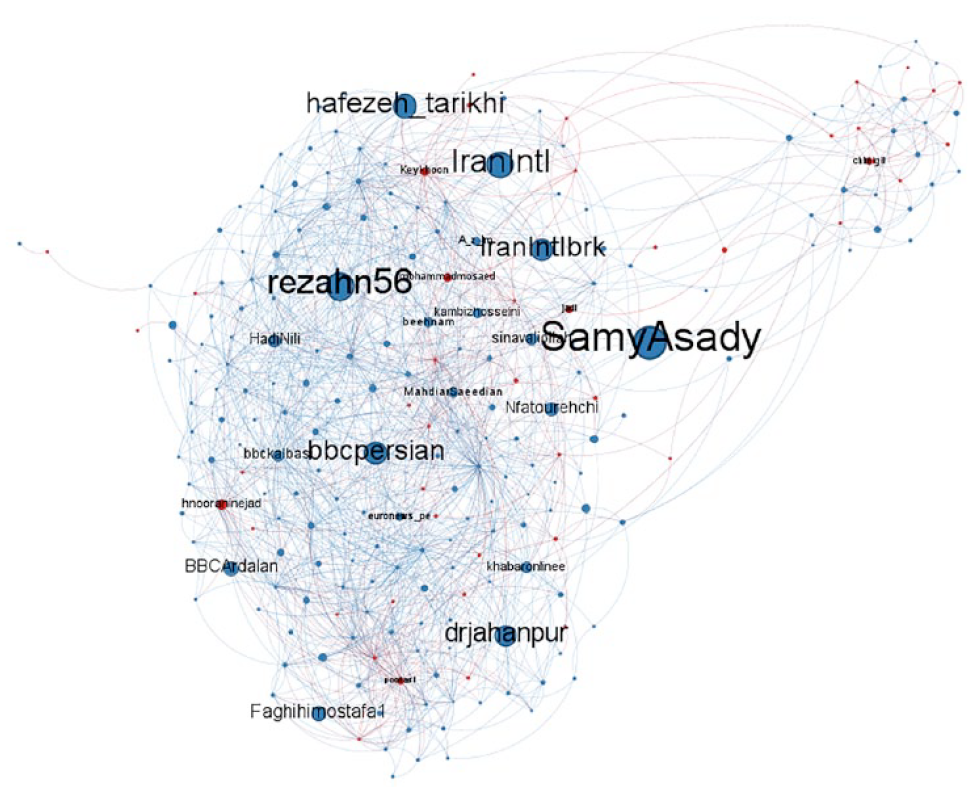

Figures 2 to 6 illustrate the graph of each cluster, depicting the intermingling of bots and non-bot accounts. Red nodes represent highly probable bot accounts, while blue nodes denote highly probable human accounts. In addition, the node size indicates the user’s PageRank. To improve readability, all graphs have been filtered to the top 10% of users (based on their PageRank) in each cluster. The most influential users are those whose account names are labeled in the graphs.
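The filtering step described above can be sketched as follows. This is a minimal illustration rather than the authors’ pipeline: the article does not say which software computed PageRank, so the power-iteration `pagerank` function, the damping factor of 0.85, and the `top_fraction` helper are all assumptions, as is treating each retweet as an edge from the retweeting account to the retweeted account.

```python
import math


def pagerank(edges, damping=0.85, iterations=100, tol=1e-10):
    """Plain power-iteration PageRank over directed (source, target) edges."""
    nodes = sorted({node for edge in edges for node in edge})
    out_links = {node: [] for node in nodes}
    for source, target in edges:
        out_links[source].append(target)

    n = len(nodes)
    rank = {node: 1.0 / n for node in nodes}
    for _ in range(iterations):
        # Rank held by nodes without outgoing edges is spread over all nodes.
        dangling = sum(rank[v] for v in nodes if not out_links[v])
        new_rank = {v: (1.0 - damping) / n + damping * dangling / n
                    for v in nodes}
        for v in nodes:
            if out_links[v]:
                share = damping * rank[v] / len(out_links[v])
                for target in out_links[v]:
                    new_rank[target] += share
        change = sum(abs(new_rank[v] - rank[v]) for v in nodes)
        rank = new_rank
        if change < tol:
            break
    return rank


def top_fraction(rank, fraction=0.10):
    """Return the highest-ranked accounts making up `fraction` of all accounts."""
    keep = max(1, math.ceil(len(rank) * fraction))
    return sorted(rank, key=rank.get, reverse=True)[:keep]


# Toy cluster: 19 hypothetical accounts that all retweet one account, "hub".
toy_edges = [(f"user{i}", "hub") for i in range(19)]
toy_rank = pagerank(toy_edges)
visible = top_fraction(toy_rank)  # the accounts that would remain in the plot
```

Applying `top_fraction` separately to each cluster’s accounts mirrors the per-cluster filtering that the text describes.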

Figure 2. The pro-regime botnet.

Figure 3. The pro-regime radical community.

Figure 4. The anti-regime everyday users.

Figure 5. The anti-regime moderate community.

Figure 6. The anti-regime radical community.

As shown in Figure 3, @a_raefipour1 is a user with a high PageRank who had a significant impact on the PR-R cluster. While Botometer classified this user as a bot, we know that it is not. This account belongs to Aliakbar Raefipour, a hardliner pro-regime figure who manages a state-affiliated institution called Massaf (“Conservaties Against Raefipour?” 2020). Massaf is dedicated to promoting regime propaganda on Twitter and in the offline world. Since we know this user is not a bot, we classified it as a non-bot account. However, it can be noted that Botometer was not entirely incorrect. @a_raefipour1 is situated on the border between the PR-R and PR-BN communities, indicating that this user has extensive connections with bot accounts. Furthermore, most pro-regime users are members of the regime’s cyber army, which is operated by Massaf and Raefipour. They are only present on Twitter to retweet and support one another, aiming to silence anti-regime users. They typically undertake this mission through coordinated activities. This is why Botometer identified them as bots. It should be noted that this finding suggests that most of the bot accounts on Persian Twitter are not operated by algorithms and automated programs. Instead, some humans are behind them, acting as bots.

Neyazi (2020) identifies a comparable pattern in the Indian Twittersphere. The increasing scrutiny of digital platforms by fact-checking organizations and platform companies themselves, according to him, has led India’s political parties to resort to cyborg technology, which combines automation with human agency, to avoid detection.

In the PR-R cluster, there are two notable figures situated at opposite ends of the spectrum. Media adviser @vahabzadeh_ali, who served under the former health minister, is in close proximity to the AR-M community. Conversely, @drjahanpur, the then spokesman for the Health Ministry, is located in close proximity to the PR-R cluster. These individuals hold positions of medical authority and are situated between PR-R supporters and AR-M users. This suggests that the Rouhani administration’s approach aimed to appease both groups (Kermani, 2022). Further into the AR-M community, there are users with more radical views against the regime, such as @BBCardalan and @Sinavaliollah. On the opposite border of this cluster, we can find some accounts that are strongly opposed to the regime, including @rezahn56 and @iranintl. These accounts connect the community to the AR-R cluster. In the AR-R community, many automated accounts can be observed. However, genuine users, such as the anti-regime journalists @masihalinejad and @Alighazizadeh, are the primary actors. The findings from Figure 6 support the numbers in Heatmap 1, as many bots are present. Nevertheless, the community is still primarily directed by non-bot users, and bots cannot form an independent cluster. It is, however, unsurprising to find more bots at the two extremes of the network.

Top User Types in Communities.

Human Accounts

Up to this point, our investigation has focused on the structure of Persian Twitter during the pandemic, as well as the prevalence of bots within the primary clusters. In addition to this, we will examine how both bot and non-bot accounts framed the pandemic. Before delving into that, we will first discuss the types of human users present in each community. To provide a comprehensive view of human users, our analyses were conducted on the full sample without excluding journalists/media. We only removed these users and their tweets after completing the analyses.

Table 1 displays the frequency of each user type within each community. As many cells in the table have small values, we filtered it to show only the top ones with values >3. The complete table is available in Supplemental Appendix 3.

Table 1 illustrates that the AR-M community was primarily led by journalists and legacy media, as can also be observed in Figure 5. This community was initially associated with the reformist camp in Iran but began to adopt more critical stances against the regime and align themselves with AR-Rs due to their dissatisfaction with the reformist government’s performance. While journalists also played a role in the AR-R and PR-R communities, media outlets, whether legacy or digital-born, did not have a significant impact on other clusters. This suggests that journalists, regardless of their political affiliation, had to some extent surpassed their affiliated media outlets in dominating public discourse on Persian Twitter. Furthermore, the high frequency of journalists across the three communities indicates that they still hold a prominent position in the Iranian political sphere. In contrast, other political forces, particularly politicians, have lost their standing in society.

In terms of popularity on Persian Twitter, the next user group after journalists and media outlets is ordinary citizens, including both ordinary and elite users. The AR-EV community was largely made up of ordinary citizens, with 18 of the top 20 users in this cluster falling into this category. In the PR-BN community, 9 of 15 users were also ordinary users. In both the AR-R and PR-R communities, elite users were successful in gaining prominence. These findings suggest that the struggle for dominance on Persian Twitter was primarily between journalists/media and ordinary citizens (ordinary/elite users). However, it is important to note that we excluded journalistic accounts due to their heavy news-sharing practices, which allows for a more comprehensive understanding of the activity on Persian Twitter during the pandemic.

In addition, the analysis of Table 1 shows that elite political forces, such as politicians or activists, did not feature prominently. However, it is important to note that other types of users, although less frequent, may have had an impact on the network. Therefore, we have expanded our discursive interpretations to include all of the top users, regardless of their frequency. As a result, we will refer to these top accounts throughout the article.

Networked Framing of the Pandemic

As previously discussed, our analysis identified six dominant frames: NI, SHO, MI, VHI, effects, and fun.

As observed in other countries during the Covid-19 crisis (Montiel et al., 2021), NI was a prominent theme on Persian Twitter during the pandemic. Users shared NI about the virus, infected individuals, and ongoing developments.

Another important theme on Persian Twitter was VHI, which emerged in direct response to the pandemic. This frame was mainly devoted to discussing the virus, its symptoms, and the health measures needed to avoid infection. Social media users disseminated information about virus symptoms and health instructions to help others avoid infection or know what to do in case of illness. Given the uncertainty caused by the first wave of Covid-19 globally, people were keen to receive detailed and accurate information to help them understand the situation. As a result, VHI was a central theme at that time.

In contrast, the two other frames were more interpretative. The first was SHO, which focused on good news and optimistic information to raise spirits and cheer others up. Iranian social media users actively shared stories of hope and solidarity to foster positivity during the pandemic.

The second frame was MI, which mainly revolved around questioning the policies and control measures taken by governments and other authorities. This frame focused on discussing who was responsible for the crisis and criticizing decisions and activities that exacerbated the situation. Criticisms ranged from the failure to quarantine cities in Iran to the role played by the United States, other Western countries, and China in worsening the crisis. In addition, users criticized the lack of medical supplies, such as face masks and sanitizing gels, and the inability of Iran’s government to manage the crisis effectively.

The effects frame emerged on Persian Twitter as users discussed the diverse impacts of Covid-19. One notable subframe was the effects of the pandemic on religious beliefs and behavior, where a discursive battle happened. Pro-regime users advocated for the importance of relying on spiritual and religious affairs to help people navigate the crisis, while anti-regime citizens criticized religion for its inability to provide effective solutions. These users prioritized science over religion, citing Covid-19 as an example.

The final frame identified was Fun, which consisted of humorous content related to the pandemic and other topics. Many users shared jokes and memes related to Covid-19, providing lighthearted relief amid the crisis.

While it is not feasible to discuss all subframes of the identified dominant frames here, we have included a complete list of these frames and their subframes in Supplemental Appendix 1. We may refer to specific subframes throughout the article as relevant to our analysis.

Framing the Pandemic by Non-Bot Accounts

The initial heatmap (Heatmap 2) displays the distribution of dominant frames among non-bot accounts in all five clusters according to their user type. It is noteworthy that subsequent heatmaps utilized in this article incorporate multiple threshold values to exclude unimportant cells. This screening process was indispensable because a majority of the cells held a zero value or a value close to zero. For Heatmap 2, a threshold of 0.2 was employed.
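The screening step can be pictured with the short sketch below. It assumes that each heatmap cell holds the percentage of all coded tweets falling into one combination of cluster, user type, and frame, and that the threshold of 0.2 is expressed in the same unit. Both points are assumptions, since the article does not specify them, and the function names and toy data are hypothetical.

```python
from collections import Counter


def cell_shares(coded_tweets):
    """Percentage of all coded tweets in each (cluster, user type, frame) cell."""
    counts = Counter(coded_tweets)
    total = sum(counts.values())
    return {cell: 100.0 * n / total for cell, n in counts.items()}


def apply_threshold(shares, threshold=0.2):
    """Keep only the cells whose share reaches the threshold."""
    return {cell: share for cell, share in shares.items() if share >= threshold}


# Toy data: one dominant cell, one small cell, and one near-empty cell.
toy_coded = (
    [("AR-R", "elite", "MI")] * 996
    + [("PR-R", "elite", "SHO")] * 3
    + [("AR-M", "journalist", "fun")] * 1
)
kept = apply_threshold(cell_shares(toy_coded))
```

Cells that fall below the threshold are simply left blank when the heatmap is drawn, which keeps the sparse grid readable.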

Prevalence of networked frames in each community among non-bot accounts.

Heatmap 3, like Heatmap 2, utilizes a threshold of 0.2 and visualizes the proportion of each subframe in all five clusters, categorized by user type for non-bot accounts.

Distribution of networked subframes in each community among non-bot accounts.

The combination of Heatmaps 2 and 3 clearly indicates that non-bot accounts, irrespective of their political inclinations, predominantly approached the pandemic from a political perspective. Covid-19 was viewed not just as a health crisis but as a political one, in which political decisions could significantly affect its severity. In addition, Iranian users connected Covid-19 to other political crises in the years preceding the pandemic. For instance, a user wrote: Some people feel that the solution to fighting #Corona, the protests over the shutdown of the Ukrainian plane, 2019 nationwide protests and the high price of fuel, the inflation crisis, and all other issues is only and only #internet_shutdown. This observation aligns with Spector’s (2020) contention that in a global pandemic, there are no crises per se. Across Persian Twitter, the most pervasive frame was one of mismanagement accompanied by a markedly negative outlook toward political identities. Conversely, frames that focused on health-related issues, such as VHI, were relatively unpopular.

Prior research (Azadi & Mesgaran, 2021; Kermani, 2020) has contended that Persian Twitter has traditionally been predominantly anti-regime. Heatmap 2 corroborates this assertion by highlighting that dissident citizens, including both elite and ordinary users, in the AR-EV and AR-R communities, were responsible for propagating the mismanagement frame by expressing their harsh criticisms of the regime’s performance. For example, a user tweeted: The regime is the only culprit in Covid-19 dissemination, by not quarantining the cities and allowing Mahan Airline to continue its travels to China. Moreover, Heatmap 3 clearly reveals anti-regime users’ conviction that the regime was culpable for the casualties and the dire state of affairs in the country, as evidenced by the prevalence of Iran’s state subtheme in the mismanagement class for both elite and ordinary users in AR-R and AR-EV. Notably, they drew a distinction between the regime and the government, a point we return to below: Heatmap 3 demonstrates that the proportion of tweets that blamed the government was considerably lower than the proportion blaming the regime.

They perceived the entirety of Iran’s state apparatus as being responsible for the dire situation, not just the government. While they were critical of the government’s role, they also refrained from attacking it as they did not wish to be perceived as playing into the conservative agenda. Notably, they employed irony and sarcasm as rhetorical devices to bolster their criticisms, which is evident from the prevalence of fun messages shared by anti-regime individuals in Heatmap 2, accounting for 6.35% of the total tweets and ranking second in the sample. Heatmaps 4 and 5 provide an overview of the percentage of discursive practices, including rhetorical devices and their subthemes, in each community.

Distribution of discursive practices employed by non-bot accounts in each community.

Percentage of subthemes of discursive practices utilized by non-bot accounts in each community.

According to Heatmap 4, a significant proportion of the tweets employing humorous discursive practices were sarcastic messages targeting the regime, as were tweets related to other discursive practices, such as mismanagement. For instance, an anti-regime user posted: I asked a doctor why Corona kills people above 60 y/o? It replied that it is the punishment for those who participated in the revolution. The user was referring to the 1979 Islamic revolution that overthrew the Pahlavi monarchy and established the Islamic regime in Iran. In this tweet, the user employed sarcasm to criticize the regime’s performance throughout its existence.

The AR-R elite users played a crucial role in promoting news related to Iran by selectively sharing information that portrayed the country in a negative light. These users acted as gatekeepers for any news that could be construed as critical of the Iranian government and regime, whether implicitly or explicitly. They specifically highlighted news that acknowledged Iran’s position as a regional hub for virus dissemination. For instance, a prominent user in this group posted the following message: Bahrain has confirmed that all six cases of coronavirus in the country were contracted by individuals who had recently traveled to Iran.

Regime supporters faced the challenging task of defending the regime in the face of the drastic situation while acknowledging its severity. To tackle this paradox, they developed a rhetorical strategy. They emphasized a narrative that compared the situation in Iran with that in Western governments, highlighting that despite the challenges, Iran fared better. In addition, they attributed the situation to the Iranian government rather than the regime. Regime supporters used war and revolutionary metaphors to appeal to the sacrifices and efforts of medical staff.

In contrast to anti-regime individuals who criticized the regime, pro-regime users framed mismanagement differently. They denounced both Western governments and Iran’s government for the crisis, positioning the West as the “discursive other” in Iran’s hegemonic discourse (Jahanbakhsh, 2003). The regime has a historical tradition of blaming the West for its shortcomings and adversities in the country. Pro-regime users utilized this antagonistic theme to justify the worse situation in Iran. They argued that Western countries were responsible for the situation in Iran, such as by imposing unfair sanctions, and that they failed to manage the crisis well themselves. Regime supporters shared many tweets pointing out the severe suffering of Western people due to a shortage of medical support and materials. Therefore, while acknowledging the challenges in Iran, pro-regime users concluded that the situation was worse in Western countries. They argued that as Western countries were often depicted as developed and powerful, Iranians should take pride in faring better than them and have no complaints. For example, a user tweeted: These days, anyone who wants to see the majesty of our Islamic revolution should compare the situation in Iran with the West. The so-called advanced Western countries are stuck in the swamp of the shortage of health materials while Iran is managing the crisis with power and strength.

In addition to blaming the West for the crisis, pro-regime individuals also held Iran’s government responsible for the situation. In Iran’s political system, the state encompasses the entirety of the regime, which is headed by Ali Khamenei, Iran’s leader. This includes all parts of the regime that are directly or indirectly appointed by Khamenei, such as the Guardian Council. As a metonymy, the state or “Nizam” in Farsi refers to Khamenei himself. The government operates within a limited electoral system where the president is elected from a pool of candidates approved by the Guardian Council. However, the government is not free and is constrained by various unelected forces managed by Khamenei. The political relationship between elected and unelected parts of Iran’s political system is dependent on the distance between the president at any given time and Khamenei, which can lead to political conflicts or alliances.

Despite this, the leader and his supporters aim to shift the responsibility for any shortcomings in the country onto the government while absolving the regime, specifically Khamenei. This strategy was evident during the pandemic, when pro-regime users attempted to deflect criticism by focusing on the government’s failings. They blamed the government for the shortage of sanitizing materials and other necessary resources. At the time, the government was led by Hassan Rouhani, who was considered to be more reformist than conservative. This presented an opportunity for regime supporters to attack the government and clear the regime’s name. A tweet from a pro-regime user depicts this strategy clearly: With the current performance by the government, there is no hope in controlling the Covid-19 crisis. The leader should intervene directly and stop the government from killing more people.

In addition to blaming the government, pro-regime individuals attempted to argue that Iran was a powerful country capable of handling the crisis, without necessarily crediting the government. They achieved this by using discursive framing to highlight the sacrifices made by medical staff. Furthermore, they emphasized that it was the Basij and Sepah, rather than the government, who were controlling the situation. Ordinary users in PR-BN took the lead in promoting this narrative. By focusing on the role of these organizations in managing the crisis, they attempted to demonstrate Iran’s strength and resilience in the face of adversity. For instance, a tweet asserts: The performance of Basij and Sepah in managing the pandemic and their support for poor people show relying on our revolutionary spirit could solve all the problems. The government should take a lesson and do not expect that the West will help us.

In the analyzed data set, supporters of the regime frequently used war rhetoric in reference to the conflict between Iran and Iraq, portraying medical professionals as soldiers fighting against the enemy. This language served to praise organizations like Basij and Sepah while legitimizing the regime’s actions. According to Heatmap 4, revolutionary and war metaphors were the most prevalent rhetorical devices in the entire sample. For instance, a user tweeted: Our medical staff, our Basij and Sepah are fighting against the pandemic like they did during the war with Iraq. They are our health soldiers in the front line!

Framing the Crisis by Bots

The following are Heatmaps 6 and 7, displaying the proportion of each frame/subframe produced by bots in each community.

Distribution of networked frames produced by bots in each community.

Distribution of networked subframes generated by bots in each community.

The results indicate that mismanagement was the most extensively discussed frame by bots, particularly in PR-BN and PR-R. In contrast, anti-regime bots in AR-EV and AR-R were unable to surpass pro-regime automated accounts. Even though anti-regime human users were actively criticizing the regime’s mismanagement, pro-regime bots were framing it differently. These bots were primarily focused on promoting SHO and emphasizing medical staff, Basij, and Sepah subframes. Heatmap 7 reveals that pro-regime bots in PR-BN dedicated significant effort to highlight subframes related to Western governments, Iran’s power, and medical staff. These findings are consistent with our earlier observations.

As previously mentioned, the network of human users was primarily influenced by the anti-regime narrative of the regime’s mismanagement and incapacity in managing the crisis. It is well-established that pro-regime users’ communities on Twitter have historically been smaller than their anti-regime counterparts (Kermani & Tafreshi, 2022; Khazraee, 2019). Therefore, regime supporters attempted to alter the anti-regime narrative by deploying bots, as also observed in Azadi and Mesgaran’s study (2021). In essence, bots were utilized to reinforce and amplify the existing narratives advocated by human users, rather than to produce new or alternative ones. This phenomenon was most prominent in the pro-regime camp, but anti-regime users also employed automated accounts for this purpose.

It is worth noting an intriguing discovery concerning the prevalence of rhetorical devices within pro-regime bot networks. Heatmaps 8 and 9 exhibit the proportion of rhetorical devices used in each community.

Percentage of rhetorical devices utilized by bots in each community.

Proportion of rhetorical devices (subthemes) used by bots in each community.

Based on Heatmap 8, it is evident that the most prevalent rhetorical device used by pro-regime bots in the sample was metaphor, particularly the revolutionary and war metaphors. While sarcasm was popular among anti-regime human users, bots from pro-regime communities relied heavily on such metaphors. Heatmaps 7 and 8 together reveal that pro-regime bots employed these metaphors primarily to reinforce the narratives developed by ordinary and elite users in PR-BN and PR-R. For example, a bot retweeted a tweet from a pro-regime elite user that read, “Everybody says we wish Covid-19 would have been halted in China, now you understand why Sepah stopped ISIS in Syria.” This tweet employs a war metaphor to justify Iran’s intervention in Syria.

Another metaphor that further reinforces this notion is that of the defenders of health (مدافعان سلامت). This metaphor is associated with another one that references the people who fought to protect the holy shrines of the Shiite Imams (the defenders of the Haram). This metaphor links the holy shrines with health, suggesting that the shrines should be protected from the enemy (i.e., the United States). Therefore, Iranian soldiers fighting in Syria are depicted as defenders of society’s health. The use of such metaphoric language serves to justify Iran’s hostile foreign policy, and pro-regime bots actively promoted it.

The above-mentioned tweet was also an attempt to rebrand Sepah. Ordinary/elite users in PR-BN and PR-R developed a narrative that praised Sepah for helping people while denouncing the government, as shown in Heatmap 3. A bot further promoted this narrative by retweeting a message that used a war metaphor: “I am pretty sure that Iran is the only country whose military forces come to the front line to combat Covid-19.” The bot also used the hashtags #strong_Iran and #the_illusion_of_the_west.

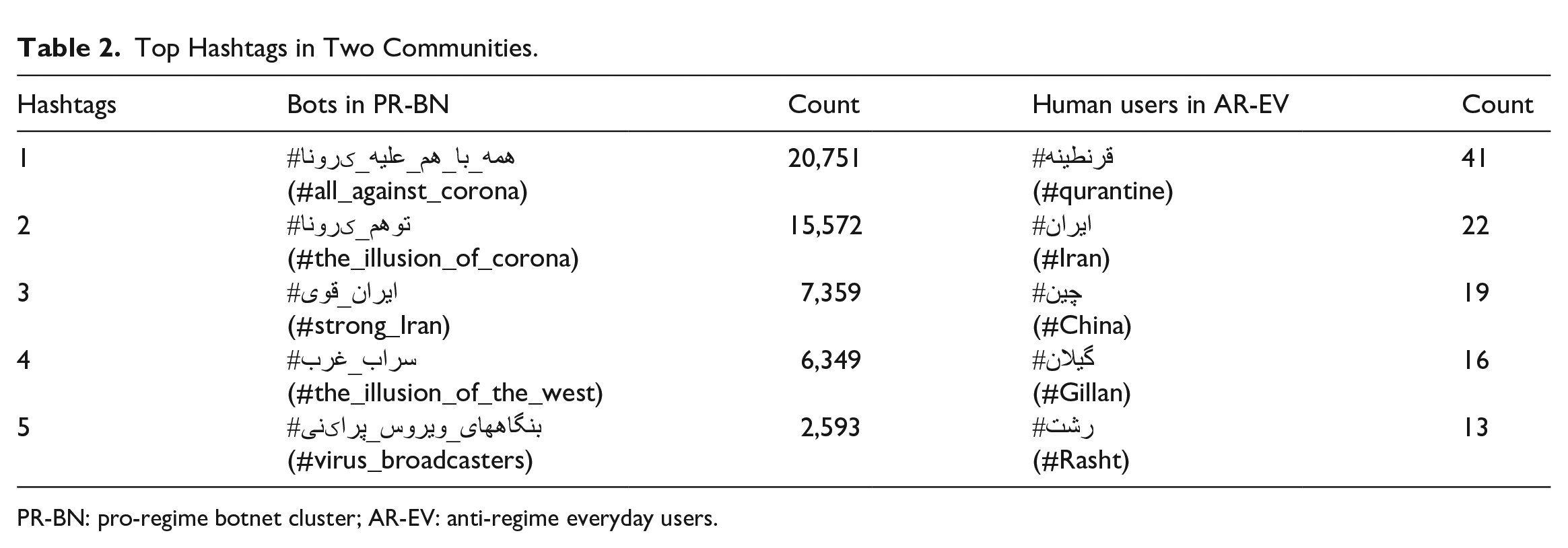

Based on the hashtag analysis, it was found that a primary objective of the pro-regime bots was to spread hashtags and infiltrate Twitter trends within Iran. To further illustrate this point, Table 2 displays a comparison of the five most commonly shared hashtags between bots in PR-BN and human users in AR-EV.

Top Hashtags in Two Communities.

PR-BN: pro-regime botnet cluster; AR-EV: anti-regime everyday users.

Table 2 provides a clear indication that there were significant quantitative and qualitative differences between the hashtags used by bots and those used by human users. Pro-regime bots in PR-BN primarily aimed to amplify hashtags that supported their narratives around Iran’s power and the West’s weakness in managing the Covid-19 crisis. In addition, they spread the notion that Covid-19 was an illusion and used #virus_broadcasters to criticize the Western media’s negative coverage of Iran. In contrast, human users in AR-EV used more conventional hashtags that provided information about the situation, including the names of Iran and China and two cities within Iran. The most frequently used hashtag was #quarantine, which reflected the public debate around this topic at the time. Table 2 also confirms that bots used hashtags on a much larger scale than human users.
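A comparison of this kind can be sketched in a few lines of Python. This is a minimal illustration rather than the procedure used in the study: the function name `top_hashtags`, the regular expression, and the lowercasing step are all assumptions introduced here. The pattern `#\w+` matches Unicode letters, digits, and underscores, so it would cut a Persian hashtag at a zero-width non-joiner, which a real pipeline would need to handle.

```python
import re
from collections import Counter

# Assumed hashtag pattern: '#' followed by word characters (Unicode-aware in Python 3).
HASHTAG_RE = re.compile(r"#\w+")


def top_hashtags(tweets, k=5):
    """Return the k most frequent hashtags in an iterable of tweet texts.

    Hashtags are lowercased before counting (an illustrative choice).
    """
    counts = Counter()
    for text in tweets:
        counts.update(tag.lower() for tag in HASHTAG_RE.findall(text))
    return counts.most_common(k)


def hashtags_per_tweet(tweets):
    """Average number of hashtags per tweet, a rough measure of hashtag intensity."""
    tweets = list(tweets)
    if not tweets:
        return 0.0
    return sum(len(HASHTAG_RE.findall(t)) for t in tweets) / len(tweets)
```

Applying `top_hashtags` separately to the tweets of each community would give the two columns of such a table, and `hashtags_per_tweet` offers one simple way to compare the scale of hashtag use between the groups.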

In addition, Heatmap 8 demonstrates that pro-regime bots were actively spreading conspiracy theories, which aligns with the activities of far-right bots in other contexts (Keller & Klinger, 2019). Furthermore, the heatmap reveals that there was no significant difference between pro-regime and anti-regime bots in their use of hate speech. Conversely, Heatmap 4 suggests that anti-regime human users were more likely to share hateful messages than pro-regime users. This could be due to their frustration and outrage toward the regime.

Conclusion

This article contributes to multiple strands of research on bot activism in contemporary societies. While our empirical analyses focus on Persian Twitter, the contribution of this study is not restricted to this specific context. Existing research on bot activism has primarily focused on political events, such as election campaigns, while the growing body of literature on bots’ behavior during the pandemic has remained at a descriptive level using computational methods without providing a deeper understanding of their discursive strategies. Moreover, most of these studies have been conducted in Western countries, limiting our understanding of bot communication in other contexts, particularly authoritarian regimes.

The existing literature on bot activism, in both democratic and non-democratic societies, has shown that bots negatively impact public discourse, predominantly retweet each other and other users in their camps, and frequently express negative and hateful sentiments (Duan et al., 2022; Keller & Klinger, 2019; Shao et al., 2018; Stukal et al., 2017). A few studies on Persian Twitter also confirm these findings (Farzam et al., 2022; Honari & Alinejad, 2022; Thieltges et al., 2018). Existing research in this field highlights the use of bots to discredit opposition politicians, manipulate popularity metrics, and amplify particular messages and narratives. Since research is conclusive on these points, whether in democratic societies (Howard & Kollanyi, 2016; Howard et al., 2018; Woolley & Howard, 2018), non-democratic societies (Alieva et al., 2022; Stukal et al., 2017, 2019; Vasilkova & Legostaeva, 2019), or more particularly in Iran (Farzam et al., 2022; Honari & Alinejad, 2022; Thieltges et al., 2018), our primary aim was not to replicate these findings by solely investigating bots’ behavior and quantitative measures. Instead, we sought to contribute to the growing body of literature in this field by investigating bots’ discursive activism and how it resembles and differs from human activism during the pandemic.

Considering that the majority of research in this field has been conducted using computational methods in various contexts, comparing the meaning-making practices of bots and humans is of the utmost importance. For instance, Farzam et al. (2022) and Thieltges et al. (2018) investigated bot activism in the Iranian Twittersphere. However, they relied primarily on basic computational methods, such as topic modeling and sentiment analysis, as opposed to more in-depth qualitative analyses. While there is little research on bot activism during the pandemic in Iran, a significant number of studies on this topic in other contexts are plagued by the same issue (Cai et al., 2023; Shi et al., 2020; Weng & Lin, 2022; Zhang et al., 2022). Our findings, as discussed below, are an attempt to fill these gaps to some extent.

This study also sheds more light on Persian Twitter as an understudied context. The results confirm previous studies that identified three main clusters on Persian Twitter (reformist, conservative, and diaspora communities; Kermani & Adham, 2021; Khazraee, 2019), as well as the work by Marchant et al. (2018), which discovered more diverse communities. However, we provided a more nuanced description of clusters on Persian Twitter. This is essential in light of the developments and changes in the Iranian political sphere and Persian Twitter as a result of numerous sociopolitical events in recent years, such as the shooting down of the Ukrainian passenger flight in 2020. Our findings suggest that Persian Twitter has become more polarized and that the reformist community that existed between pro- and anti-regime clusters has vanished. Now, Twitter in Persian is the battleground between pro- and anti-regime users with varying degrees of hostility.

Our findings reveal that the frequency and number of bots on Persian Twitter are not as high as in other non-democratic societies. In Russia, Stukal et al. (2017) discovered that the daily proportion of bots among actively tweeting Russian accounts reached as high as 85%. Jones (2019) observed that during the conflict between Qatar and Saudi Arabia, at least 71% of the active accounts on some hashtags were found to be bots. Neyazi (2020) likewise documented the high number of bots in the Indian Twittersphere. However, the number of bots we identified on Persian Twitter is higher than the numbers found by other scholars during the pandemic, which are around 9% (Duan et al., 2022; Shi et al., 2020; Zhang et al., 2022). Contrary to the findings of other research, the number of tweets disseminated by bots was substantially less than that of human users. Most studies indicate that Twitter bots share more messages than human accounts (Howard & Kollanyi, 2016; Jones, 2019; Neyazi, 2020; Stukal et al., 2022). Using the metric of 50 daily shared tweets as an index to identify an account as a bot, as suggested by Howard et al. (2018), no account in our data set could be identified as a bot. This result highlights the importance of considering the context when identifying bots based on their (hyper)activity and supports the criticism of setting a universal metric for identifying bots (Martini et al., 2021).
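To make the activity-based criterion concrete, the following Python sketch flags accounts whose mean number of tweets per active day reaches a threshold. It is an illustration under stated assumptions, not the detection procedure used in this study: the function names, the `(account_id, day)` input format, the choice to average over active days only, and the use of "greater than or equal to" are all hypothetical. Only the figure of 50 daily tweets comes from the text above.

```python
from collections import Counter
from datetime import date

# Threshold of 50 tweets per day, as attributed to Howard et al. (2018) in the text.
DAILY_TWEET_THRESHOLD = 50


def mean_daily_tweets(records):
    """Mean tweets per active day for each account.

    `records` is an iterable of (account_id, day) pairs, one pair per tweet.
    Averaging over active days only is an assumption made for this sketch.
    """
    per_day = Counter(records)  # (account_id, day) -> tweets on that day
    totals = Counter()          # account_id -> total tweets
    active_days = Counter()     # account_id -> number of days with any tweet
    for (account, _day), n in per_day.items():
        totals[account] += n
        active_days[account] += 1
    return {account: totals[account] / active_days[account] for account in totals}


def flag_hyperactive(records, threshold=DAILY_TWEET_THRESHOLD):
    """Return the set of accounts whose mean daily rate meets the threshold."""
    rates = mean_daily_tweets(records)
    return {account for account, rate in rates.items() if rate >= threshold}
```

With a rule of this kind, a data set in which every account stays below the cutoff yields an empty set. This shows how a fixed, context-independent threshold can miss accounts that other methods classify as bots.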

This study parallels previous research asserting that bots are not inherently tools of governments and non-democratic forces (Sanovich et al., 2018; Stukal et al., 2022). Both pro- and anti-regime communities contained bots. Nonetheless, the majority of bots were found in pro-regime communities, particularly PR-BN and PR-R. Bots were also present in the AR-R community to a large extent, but their share and activity were still higher in pro-regime communities. Middle communities, such as AR-M and AR-EV, hosted a smaller number of bots. This finding is consistent with previous research that has highlighted the significant role of bots in radical far-right parties in other contexts, particularly in Western democracies (Keller & Klinger, 2019) or non-democratic societies (Jones, 2019; Stukal et al., 2022; Vasilkova & Legostaeva, 2019). The study also confirms that pro-regime clusters were led by bots, while anti-regime communities, especially AR-R, hosted a notable number of bots but were dominated by human users.

Our study also revealed that, despite their lower number, bots shared more hashtags than human users, particularly pro-regime bots, which is consistent with previous research in other countries like Qatar and India (Jones, 2019; Neyazi, 2020). The coordinated nature of their activity suggests that they were likely managed by the Iranian regime, which has admitted to using cyber battalions to manipulate social media platforms (Tabnak, 2022). In addition, the Massaf institution employed individuals to share tweets and participate in social media communication. This finding challenges our conventional understanding of social bots and suggests that many of the accounts identified as bots in this study were likely not fully automated but rather operated by humans tasked with bot-like behavior. This could be an attempt to deceive human users and prevent them from quickly identifying the accounts as bots. This result confirms the findings of other researchers, such as Neyazi (2020) and Hegelich (2016).

Based on our findings, it appears that the Iranian regime likely utilized bots to compensate for its limited presence on Twitter. Our study demonstrates that the predominant narrative among human users centered on the regime’s mismanagement and incompetence, as expressed by AR-EV and other anti-regime groups. Conversely, the bots’ section of the network featured a narrative that emphasized Iran’s strength and the Western governments’ inability to handle the crisis, which was propagated by pro-regime human users and then amplified by bots. While we observed a similar pattern to a lesser degree in anti-regime communities, we conclude that the primary function of Persian Twitter bots is astroturfing. Specifically, pro-regime bots engaged in an astroturfing campaign aimed at replacing the anti-regime narrative with the regime’s perspective on the crisis. This pattern resembles findings from studies on non-democratic societies like Russia (Sanovich et al., 2018) and democratic societies like Germany (Keller & Klinger, 2019) and the United Kingdom (Bastos & Mercea, 2019), where governments and radical groups try to artificially inflate the indicators of their narratives.

The extensive use of bots by the Iranian regime during a health crisis is a matter of significant importance. Although the Covid-19 crisis had political implications, it was not a political crisis comparable to those faced by the regime in recent years. Hence, it is plausible to assume that the regime utilizes even more expansive bot communities to exert dominance over social media during political crises, such as the recent #MahsaAmini movement. Consequently, it is crucial to monitor the activities of the Iranian regime on Persian Twitter to identify potential threats to democratic efforts in Iran. Here, we should acknowledge that it is not entirely clear how far the findings of this study apply to Persian Twitter more generally. Not only are there few studies on bot activism on Persian Twitter, but there are also few studies on sociopolitical events and no research on the more profound meaning-making practices of automated accounts and humans. Therefore, we cannot determine whether our findings are applicable to other events and periods, especially during political upheavals.

A major contribution of this study is analyzing and comparing the discursive activism of bots and humans during the pandemic. We confirmed previous research arguing that NI dominated Twitter during the pandemic (Montiel et al., 2021; Shi et al., 2020). Moreover, we pointed out that anti-regime narratives were the most ubiquitous networked frames on Persian Twitter. This finding reflects prior research on Persian Twitter (Azadi & Mesgaran, 2021; Kermani & Hooman, 2022; Kermani & Tafreshi, 2022; Khazraee, 2019) and in other non-democratic societies (Nip & Fu, 2016; X. Zhao & Wang, 2023).

Our findings also reveal that both human and bot accounts utilized distinct rhetorical techniques to achieve their objectives. Anti-regime human users predominantly employed irony, sarcasm, and hate speech directed toward the regime and its leaders. Conversely, pro-regime bots relied on revolutionary and war metaphors to reinforce their messages. This finding highlights the fact that while anti-regime individuals were more straightforward in their opposition to the regime, pro-regime bots attempted to appeal to people’s religious and revolutionary emotions in more subtle ways. Nevertheless, this study indicates that both human and automated accounts on Persian Twitter, irrespective of their stance toward the regime, perceived the Covid-19 crisis primarily as an opportunity to advance their political objectives, with the health crisis being of secondary significance. In contrast to other contexts, such as those examined by Duan et al. (2022) and Ferrara (2020), Iranian users (bot or human) were less inclined to propagate conspiracy theories during this politically contentious period, choosing instead to channel their efforts into their political goals. Our results also echo other research in India (Neyazi, 2020) and Russia (Alieva et al., 2022; Stukal et al., 2017) showing that bots use more hashtags and disseminate particular hashtags to infiltrate the flow of information on Twitter.

It is essential to recognize the limitations of our research. We were unable to access certain parts of the data due to deletions or suspensions, which is an unavoidable aspect of big data research. In addition, we excluded news-leaning accounts, such as media outlets and journalists, from our data set to better understand how non-news accounts portrayed the crisis. This decision implies that the dominant atmosphere on Persian Twitter was news-oriented, which is not surprising given the unpredictable nature of the pandemic’s initial wave worldwide.

Despite these limitations, this research contributes to our understanding of how bot and non-bot accounts operate in an authoritarian regime during a pandemic. It not only advances our comprehension of framing practices on Persian Twitter but also adds to the growing body of knowledge on bot activism in our field. The study provides new perspectives on how human users and bots used discourse to frame a health crisis on Twitter, despite it being blocked in Iran. It also suggests avenues for future research. For example, we could explore Persian Twitter during other political events to determine if the regime employs more sophisticated strategies to counter anti-regime narratives. The recent #Mahsa_Amini movement could serve as a suitable case study for such research. In addition, more research is necessary to uncover discursive activism by automated accounts and those accounts that appear to be bots but are actually managed by humans.

Supplemental Material

sj-docx-1-sms-10.1177_20563051231216927 – Supplemental material for Bots Versus Humans: Discursive Activism During the Pandemic in the Iranian Twittersphere