Abstract

Given that political groups are dispersed across platforms, resulting in different discourses, there is a need for more studies comparing communication across platforms. In this study, we compared posts about #StopTheSteal from three social media platforms after the 2020 US Presidential election and preceding the January 6 Capitol Riot. To do so, we utilized Snow and Benford’s typology of social movement frames—diagnostic, prognostic, and motivational frames—in the context of far-right movements and an additional frame device: violence cues. This study focused on the following three social media platforms: Facebook, Twitter, and Parler. We built three corpora of social media data: 26,093 Facebook posts, 248,643 tweets, and 400,600 Parler posts. Using Bidirectional Encoder Representations from Transformers (BERT) classifiers, dictionary methods, and qualitative text analysis, we find that the use of these frames varies by platform, with users on the alt-tech platform Parler using violence cues such as “smash” and “combat,” suggesting a greater call to action relative to the mainstream platforms.

Keywords

Following the defeat of former President Donald Trump in the 2020 U.S. Presidential election, Trump and his supporters disputed Joe Biden’s victory, alleging that the Democrats engaged in voting fraud. Efforts to overturn this outcome, described as the “Stop the Steal” (StS) movement, culminated in the storming of Capitol Hill on 6 January 2021, endangering the democratic process. Among the many factors that contributed to the alternative right (alt-right) StS movement, this study highlights their use of different social media platforms to disseminate election-related disinformation, mobilize supporters, and reinforce their in-group members’ political identities and nationalism.

We compared discourse from three social media platforms about the StS movement after the 2020 U.S. Presidential election and leading up to the January 6 Capitol Riot. Specifically, we compared social media discourse related to the StS movement on two mainstream platforms (Facebook and Twitter) and an alt-tech platform (Parler).

To analyze this discourse, we gathered 675,336 social media posts published during a 5-month period (1 September 2020 to 1 February 2021) and applied a mixed-methods (computational and qualitative) content analysis to identify framing activities employed when discussing far-right social movements. Applying Snow and Benford’s (1988) three collective action frames (diagnostic framing, prognostic framing, and motivational framing), we first investigated the prevalence of three frames on Facebook, Twitter, and Parler. In doing so, we demonstrate the nature of discourse in online activism across various social media platforms. The findings will help us understand how various social media affordances and content moderation practices might have facilitated collective action in a social movement.

Although mobilizing discourse on social media has been studied with regard to disenfranchised populations (e.g., Enjolras et al., 2013), it is also worth considering the ugly side of mobilizing discourse on social media; that is, how extremist movements utilize mobilizing discourse to advocate for violent solutions (Koopmans & Olzak, 2004). Our analysis accounts for this by studying an additional prominent framing device in the StS discourse: grievance-driven, pro-violence language features, which we conceptualize as a frame cue (McLeod & Shah, 2015). We compared the prevalence of violence cues in the discourse among the three platforms, as well as the specific linguistic elements utilized by social media users to incite violence.

Literature Review

Political Discourse Across Different Social Media Platforms

The social media ecosystem is composed of many platforms, varying in modality (e.g., text, image, video), size, affordances, and moderating tools (Xu et al., 2022), and most US citizens use a myriad of platforms (Auxier & Anderson, 2021). For this reason, researchers have described social media consumption and production as “platformized” (Helmond, 2015), requiring social movements and political groups to construct messages that could be both homogeneous across platforms and tailored for a platform’s affordances. However, most social media research has overwhelmingly been single-platform analyses (Bode & Vraga, 2018). Addressing this gap with comparative analyses of platforms is critical to understand how discourses may vary across platform contexts (Matassi & Boczkowski, 2021).

One mechanism for contrasting social media is to consider whether they are distinct in their affordances. For example, users may use private or encrypted communication for talking to certain people but might opt for public-facing platforms to reach a wider audience. Different affordances of platforms can also contribute to a greater degree of disclosure, for example, when users feel that what they say will not be shared beyond that group (O’Leary et al., 2020). This can be beneficial, as platforms can specialize in different types of content and build both mass and niche user bases. However, this can also allow groups to organize malicious online and offline activities on private or less popular social media platforms.

Alternatively, content across platforms can share some similarity, particularly when strategically published together. This is common in the case of advertising, where one advertising campaign is expected to have a unified message that works across multiple platforms (Laurie & Mortimer, 2019). Drawing from these techniques, organized groups, including state-sponsored trolls, have also built multi-platform political campaigns (Kreiss et al., 2018; Wagner & Boatright, 2019), producing shared narratives and claims that reach different audiences across the platforms (Shrestha et al., 2020).

The multi-platform structure of the social media ecosystem raises questions about how content across different social media may vary as a consequence of the platform’s affordances and its user base. While social media platforms support a range of communities, both political and non-political, we focus this question on one particular group, US far-right movements (and specifically the “Stop the Steal” effort) that are especially successful at using social media to advance their agenda (Schroeder, 2019).

Far-Right Movements on Social Media

Scholars have used social movement theory to understand the nature and context of extremist groups, including White supremacists and neo-Nazis (Bhat & Klein, 2020; Caiani & Della Porta, 2018). One subgenre in this scholarship focuses on far-right movements, defined as ultra-nationalists that reject out-groups and advocate for radical, sometimes violent actions to achieve political, economic, social, or cultural goals (Crosset et al., 2019). Studies examining far-right groups on social media show how they mobilize support and coordinate action online (Castelli Gattinara & Pirro, 2019). To be clear, we are not saying that social media causes far-right extremist beliefs. 1 Rather, social media enables the communication between far-right activists and prospective supporters (Mamié et al., 2021).

As a result, far-right groups have proliferated online, both on traditional social media (Winter, 2019) and on smaller, niche platforms known as “alt-tech” platforms, since they collectively frame themselves as alternatives to larger platforms (Wilson & Starbird, 2021). Within alt-tech platforms, far-right movements organize and mobilize offline events (Ekman, 2018), discuss and reframe mainstream narratives (Peucker & Fisher, 2023), and facilitate more amenable spaces for far-right rhetoric (Urman & Katz, 2022).

These platforms can be distinguished from their mainstream counterparts in two ways. First, some alt-tech platforms, such as “Gab,” emphasize their encrypted services, facilitating more private conversations (Shehabat et al., 2017). Second, alt-tech platforms often brand themselves as “free speech” havens (Munn, 2021), inviting anti-democratic and sometimes pro-violence discourse that would otherwise be routinely removed from mainstream platforms (Dori-Hacohen et al., 2021). Both the encrypted structure of these platforms and their ideological stance allow extremist communities and hate groups to build more resilient social media spaces wherein extremist narratives and conspiracy theories proliferate (Wilson & Starbird, 2021).

The extent to which far-right discourse may be similar or different across these social media spaces is not clear. Literature on multi-platform social media dynamics broadly highlights the value of cross-platform coordination for genuine actors and disinformation agents (Kreiss et al., 2018). While we expect such groups to be somewhat aligned in their messaging (Mundt et al., 2018), their less formal hierarchical structure may produce disagreements about how a group manages its discourse (Gerbaudo, 2017). For example, members of a group may frame a topic using a unified critique; however, different factions of a group could advocate for different solutions across multiple platforms. This may be especially true if some users promote more heterodox actions (e.g., violence).

As mainstream social media platforms continue to suspend users posting or sharing far-right misinformation or calls to violence, particularly after the Capitol was attacked on 6 January (Aliapoulios et al., 2021), much of the far-right media ecosystem has relocated to alt-tech platforms (Zuckerman & Rajendra-Nicolucci, 2021). However, during the lead up to the 2020 election, far-right content proliferated on mainstream platforms like Twitter and Facebook (Muis et al., 2020). We focus on discourse about “Stop the Steal,” a far-right movement that promotes the false claim that the outcome of the 2020 US Presidential election was a result of electoral fraud (Homans & Peterson, 2022).

“Stop the Steal”

Building on the popularity of Trump’s claims of election fraud, which were further amplified across the right-wing media ecology (Bump, 2021), the “StS” movement is commonly understood as an extremist, far-right subset of Trump supporters, some of whom have also advocated for other Trump-endorsed conspiracy theories (MacFarquhar, 2021). However, it is worth noting that the false claims had reached wider support among conservatives: post-election polls suggested 64% of Republicans believed that the election results were unreliable (C. Kim, 2020).

Attempts to reinstate Trump or stop the official vote count reached an apex when, on 6 January 2021, Trump supporters gathered in Washington D.C. to participate in several loosely related rallies and marches to “Stop the Steal.” In a bombastic speech, Trump urged his supporters to “fight like hell” (Andersen, 2021). During and after his speech, over 2,000 attendees marched to the Capitol Building and broke into the building, breaching the police perimeter and resulting in the death of five people. 2 Most Americans viewed the storming of the Capitol Building as an attack on US democracy, though the public remains split as to whether Trump bears direct responsibility for the attacks (Quinnipiac University Poll, 2022).

As a result of these criticisms, many mainstream social media platforms suspended users espousing far-right beliefs. Facebook focused on removing StS content or groups espousing the election fraud conspiracy, whereas Twitter primarily suspended users affiliated with the false QAnon conspiracy (a related, but distinct, far-right conspiracy group, see Booker, 2021). Trump himself was suspended permanently from Twitter and Facebook (Delkic, 2022), although his Facebook suspension was later changed to a temporary ban.

A substantial amount of policy and media attention has focused on how the StS movement utilized social media platforms to organize the 6 January rallies and marches (Timberg et al., 2021). Internal documents suggest that, in the days after the 2020 election, platforms like Facebook struggled to detect and moderate false election fraud claims (Levine, 2021) and, frustratingly, had few guidelines regarding how to moderate this content. As a result, the StS movement presents a unique opportunity to study how discourse in far-right movements varied across mainstream and alt-tech platforms.

Our analysis will focus on StS discourse in three platforms: Facebook, Twitter, and Parler. The first two are large, mainstream platforms with active StS discourse in the lead up to the election. The third is an alt-tech platform used by far-right protesters to organize the 6 January rallies (Munn, 2021). To study the discourse across these three platforms, we will use the concept of collective action frames. To ground our work, we ask the following:

RQ1. To what extent do Facebook, Twitter, and Parler vary in the prevalence of different collective action frames in #StopTheSteal discourse?

Collective Action Frames within StS Discourse

While media framing theory can be traced back to sociology and psychology research about print and broadcast news (Lecheler & De Vreese, 2019), the study of frames—defined as elements within a message that encourage a specific perspective or understanding of an event or issue (Matthes & Kohring, 2008)—in social media is understandably newer. This work has found that, because frames can emerge bottom-up, social media users are able to spread their political beliefs with relatively few barriers to entry (J. Kim, 2017). Thus, frames from social media content can promote alternative viewpoints on a political issue (Hamdy & Gomaa, 2012). Owing to the ephemeral attention of online audiences, social media frames can create “ad hoc issue publics” (Meraz & Papacharissi, 2013, p. 144) of which members may have multiple, scenario-specific affiliations (Bennett & Segerberg, 2012).

Drawing from the scholarship that has applied framing theory to study social media discourse (including its relationship to news media, see Aslett et al., 2022), our analysis will focus on collective action frames, which have been traditionally used to study social movements. We draw from this scholarly tradition to define framing as “the signifying work or meaning construction engaged in by movement adherents [. . .] and other actors [. . .] relevant to the interests of movements and the challenges they mount in pursuit of those interests” (Snow, 2013).

We define collective action frames as, “action-oriented sets of beliefs and meanings that inspire and legitimize the activities and campaigns of a social movement organization” (Benford & Snow, 2000). Social movements use three different types of collective action frames (Snow & Benford, 1988): diagnostic, prognostic, and motivational frames. The diagnostic frame identifies social problems (or victims of social problems), and attributes blame and responsibility, often delineating an “us” and “them” boundary (Gamson, 1995). The prognostic frame suggests proposed solutions, plans, or strategies in response to the identified problem. The motivational frame refers to a motivational drive to participate, such as by calling to arms or providing a rationale for joining in ameliorative or corrective action (Snow & Benford, 1988).

Empirical studies have identified and analyzed various types of diagnostic, prognostic, and motivational frames in right-wing social movements. In explaining the rise of right-wing parties in Denmark, Rydgren (2018) found these parties tended to blame specific ethnic groups for social problems (diagnostic frame) and to suggest stricter immigration policies and more law and order as solutions (prognostic frame). When studying an anti-Islamic social movement in Norway, Berntzen and Sandberg (2014) found that the social movement organizations diagnostically framed Islam as an existential threat, identified the prognosis as mostly non-violent and democratic (e.g., advocating a complete halt to non-Western immigration), and used a slogan to manifest the motivational frame, “fight for what is yours” to encourage activism.

We anticipate that Parler, our alt-tech case, will likely be more amenable to far-right conspiratorial misinformation and pro-violence discourse (Wahlström et al., 2021). Thus, we expect to see that the diagnostic frame, focusing on election fraud as the problem; the prognostic frame, focusing on political action; and the motivational frame, focusing on right-wing populism (defined as political rhetoric and ideology that argues society is divided into the pure people and the corrupt elite; Mudde, 2004) 3 will be most prevalent on Parler. We hypothesize that

H1. The diagnostic (H1a), prognostic (H1b), and motivational (H1c) frames will be most prevalent on Parler.

Violence Frame Cues

As far-right groups are themselves not a monolith, it is worth considering the extent to which far-right users will advocate for violence. Notably, not all far-right social movements advocate for violence. However, pro-violence discourse and violent actions may be especially prevalent in communities with hate groups and far-right organizations (Gaudette et al., 2022). Given the use of violence as a response to perceived election fraud, both in the United States and internationally, we explore whether pro-violence frame cues will vary across platforms.

Unlike the larger, more extensive claims that ground the aforementioned collective action frames (diagnostic, prognostic, and motivational), we anticipate that calls for violence will manifest as cues that guide information processing (Igartua & Cheng, 2009). Cues and frames are two components of discourse that are said to shape individuals’ cognitive processing and social judgments of issues, groups, and figures (Cho et al., 2006). Whereas frames refer to the underlying principles that organize and structure discourse about a topic, cues are the labels and terms utilized to highlight aspects of that issue (Shah et al., 2002). As such, while frames may exhibit consistency across different issues, cues often vary from issue to issue. For example, it is conceivable to observe diagnostic or prognostic frames in the discourses of both the #MeToo and #StopTheSteal movements, while the cues employed in those discourses are distinct. Despite being small in linguistic size (often only a few choice words), cues can nevertheless affect people’s perception of political issues (Mondak, 1993).

We focus here on violence cues—cues that advocate for or talk about violence—because of far-right groups’ propensity to use more negative (Ceron & d’Adda, 2016), violent, dehumanizing (Wahlström et al., 2021), and exclusionary discourse (Krämer, 2017). The presence of far-right discourse across multiple platforms may contribute to the use of violence cues, albeit for different reasons. For example, alt-tech platforms may create discursive opportunities for extremists to advocate for pro-violent actions (Wahlström & Törnberg, 2019). In the context of the StS movement, we anticipate that violence cues may be especially prevalent in the alt-tech platform Parler, as there are fewer moderation policies that would limit or remove this content:

H2. The violence cues will be most prevalent on Parler.

However, violence cues may manifest differently across platforms. On mainstream platforms, with users across the political spectrum, online discursive arguments may contribute to a greater willingness to use violence offline (Gallacher et al., 2021). Thus, users on these platforms may use violence cues reactively, rather than consistently. In more heavily moderated social media platforms, users may resort to dog-whistling and subtle cues to signal their more extremist beliefs (Weimann & Am, 2020). We, therefore, look at the various ways in which users on different platforms have instigated violence:

RQ2. Which words or phrases did social media users on three platforms use to incite violence?

Method

Data Collection

We collected social media posts related to the StS movement from three social media platforms: Facebook, Twitter, and Parler. For each platform, we queried posts that contained the hashtag “#StoptheSteal.” We adopted a conservative keyword-searching strategy to reduce the noise introduced by using multiple keywords. For example, had we queried posts containing “Stop the Steal” or “sts,” we would have retrieved millions of posts that criticize, rather than promote, the movement. By contrast, posts that use the hashtag “#StoptheSteal” are often meant to amplify the movement, which makes our data collection more relevant for testing our hypotheses. In addition, we collected posts that were created from 1 September 2020 to 1 February 2021; this time frame allows us to look at the complete narrative of the #StoptheSteal discourse during the 2020 presidential election (Atlantic Council’s DFRLab, 2021).
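The collection rule described above can be sketched as a simple filter. This is a minimal illustration rather than the authors' pipeline: the `Post` record, its field names, and `matches_query` are hypothetical, and case-insensitive hashtag matching is an assumption, since the text does not say how each platform's search handled letter case.

```python
import re
from dataclasses import dataclass
from datetime import date

# Collection window reported in the text: 1 September 2020 to 1 February 2021.
WINDOW_START = date(2020, 9, 1)
WINDOW_END = date(2021, 2, 1)

# Match the hashtag only, not the bare phrase "stop the steal" or "sts".
# Case-insensitive matching is an assumption made for this sketch.
HASHTAG = re.compile(r"#stopthesteal\b", re.IGNORECASE)


@dataclass
class Post:  # hypothetical record layout
    platform: str
    created: date
    text: str


def matches_query(post: Post) -> bool:
    """Return True if the post uses the hashtag and falls inside the window."""
    in_window = WINDOW_START <= post.created <= WINDOW_END
    return in_window and HASHTAG.search(post.text) is not None


posts = [
    Post("twitter", date(2020, 11, 4), "Count every legal vote #StopTheSteal"),
    Post("facebook", date(2020, 11, 5), "The stop the steal claims are false"),
    Post("parler", date(2020, 8, 15), "#stopthesteal"),
]
kept = [p for p in posts if matches_query(p)]
```

In this toy input only the first post is kept: the second mentions the phrase without the hashtag, and the third falls before the collection window.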

To gather Facebook data, we used CrowdTangle, which archives public content on Facebook. CrowdTangle currently covers more than 7 million Facebook pages, groups, and verified profiles. 4

We used the Twitter 2.0 application programming interface (API) to collect tweets. We only collected original tweets (excluding retweets). To ensure consistency across the platforms, only English tweets were collected.

Parler

To gather the Parler data, we drew from a publicly available archive of Parler data (Aliapoulios et al., 2021). This dataset includes 183 million posts made by 4 million users between 1 August 2018 and 11 January 2021. It does not contain information after January 2021, when the Parler website lost support from Amazon Web Services (AWS).

This collection yielded 26,093 Facebook posts, 248,643 tweets, and 400,600 Parler posts.

Labeling Frames

We used a Bidirectional Encoder Representations from Transformers (BERT) model to train a classifier for coding the frames in each social media post. BERT is a deep learning technique that has been shown to outperform other classification algorithms and language models in common natural language processing (NLP) tasks such as text classification (Devlin et al., 2019). Because of its proven performance in classification and its adaptability to different domains, BERT has been increasingly used in communication research (e.g., Lu et al., 2021; Moffitt et al., 2021). In this study, we used the pre-trained “DistilBERT” model, a lighter version of the standard “bert-base-uncased” model that preserves over 95% of its performance, and then fine-tuned the model with human-annotated data to perform the labeling.

Two human coders (two of the three authors) produced a labeled dataset indicating whether a post contains each of the following collective action frames: diagnostic, prognostic, and motivational. We defined the diagnostic frame as focusing on the roots of the #StoptheSteal movement (e.g., “election fraud”). The prognostic frame referred to posts suggesting solutions and calling for actions, in other words, mobilizing collective action. Finally, the motivational frame referred to the use of motivational vocabularies such as “patriots,” “Americans,” “Republicans,” and “Trump supporters” to resonate with people and recruit participants for the #StoptheSteal movement. For each post, we coded 1 if it contained a given frame and 0 otherwise. A single post could contain multiple frames or none of the three frames. After two rounds of coding, the two coders reached sufficient inter-coder reliability, with an average Cohen’s kappa of 0.88 (diagnostic: 0.91, prognostic: 0.88, motivational: 0.86). In total, the coders labeled 1,500 randomly sampled posts.
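For readers unfamiliar with the reliability statistic reported above, the following is a minimal sketch of Cohen's kappa for two coders. The function name `cohen_kappa` and the toy labels are illustrative only and are not the study's annotations; the last line simply re-averages the three kappa values reported in the text.

```python
from collections import Counter


def cohen_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders' labels on the same items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of items")
    n = len(coder_a)
    # Observed agreement: share of items where both coders chose the same label.
    p_observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement, from each coder's marginal label frequencies.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both coders used one identical label throughout
    return (p_observed - p_expected) / (1 - p_expected)


# Toy labels (1 = frame present, 0 = absent); not the study's data.
coder_a = [1, 1, 0, 0, 1, 0, 1, 0, 0, 1]
coder_b = [1, 1, 0, 0, 1, 0, 0, 0, 0, 1]
kappa = cohen_kappa(coder_a, coder_b)

# Average of the per-frame kappas reported in the text.
mean_reported = round((0.91 + 0.88 + 0.86) / 3, 2)
```

Here the coders disagree on one of ten items, so observed agreement is 0.9 against a chance agreement of 0.5, and the per-frame values from the text average to the reported 0.88.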

Three BERT models were trained to perform the classification tasks on the three labels: diagnostic frame, prognostic frame, and motivational frame. Model parameters such as batch size and learning rate were adjusted to optimize the models’ performance. The operationalization for each frame and evaluation metrics of the three models are reported in Table 1.

Operationalization of Frame Classification and Metrics of BERT Models.

Note. BERT: bidirectional encoder representations from transformers.
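Because each frame is handled by its own binary classifier, each model is evaluated separately on held-out labels. The sketch below assumes precision, recall, and F1 as the metrics; the source does not list which metrics Table 1 reports, so this choice, the helper `binary_metrics`, and the toy labels are all assumptions.

```python
def binary_metrics(y_true, y_pred):
    """Precision, recall, and F1 for the positive class (1 = frame present)."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


# Toy held-out (true, predicted) labels, one pair per frame classifier.
held_out = {
    "diagnostic": ([1, 0, 1, 1, 0, 0], [1, 0, 1, 0, 0, 1]),
    "prognostic": ([0, 0, 1, 0, 1, 0], [0, 0, 1, 0, 1, 0]),
    "motivational": ([1, 1, 0, 1, 0, 0], [1, 1, 1, 1, 0, 0]),
}
report = {frame: binary_metrics(t, p) for frame, (t, p) in held_out.items()}
```

Scoring the three classifiers independently matches the coding scheme, in which a single post may carry several frames or none.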

Violence Cues

As frame cues can be as small as word choices (McLeod & Shah, 2015), we employed the Grievance Dictionary (van der Vegt et al., 2021) to detect violence cues. The Grievance Dictionary is a psycholinguistic dictionary that identifies 20,502 words signaling “grievance-fuelled violent language”; it includes 22 sub-categories, such as “threat,” “violence,” and “paranoia.” For the purpose of this study, we focused only on the “violence” category, which includes keywords such as “bloodshed,” “fight,” and “bullet” (van der Vegt et al., 2021). The Grievance Dictionary provides a more contextualized list of language for violence/threat assessment than other dictionaries, such as the Linguistic Inquiry and Word Count (LIWC; van der Vegt et al., 2021). 5 We applied the Grievance Dictionary to the Twitter, Facebook, and Parler datasets to calculate proportional scores for each and ran an analysis of variance (ANOVA) to examine whether the proportional differences are statistically significant. In addition, we conducted a qualitative analysis of social media posts to supplement our findings and gain a more nuanced understanding of the context in which violence frame cues were employed.
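The dictionary step can be illustrated with a small sketch that flags a post if it contains at least one violence cue word and then computes the share of flagged posts per platform. The three-word lexicon uses only the examples named in the text and stands in for the full “violence” category; the tokenizer, the function names, and the toy posts are assumptions.

```python
import re

# Three words the text gives as examples from the "violence" category;
# the real category is much larger, so this set is only a stand-in.
VIOLENCE_WORDS = {"bloodshed", "fight", "bullet"}

# Simple lowercase word tokenizer (an assumption for this sketch).
TOKEN = re.compile(r"[a-z']+")


def has_violence_cue(text, lexicon=VIOLENCE_WORDS):
    """True if any lowercased token of the post is in the lexicon."""
    return any(tok in lexicon for tok in TOKEN.findall(text.lower()))


def cue_proportion(posts, lexicon=VIOLENCE_WORDS):
    """Share of posts containing at least one violence cue word."""
    if not posts:
        return 0.0
    return sum(has_violence_cue(p, lexicon) for p in posts) / len(posts)


toy_corpora = {
    "facebook": ["Join the rally on Saturday", "We will fight in court"],
    "parler": ["Fight for Trump", "No bloodshed please", "Vote counted"],
}
proportions = {name: cue_proportion(p) for name, p in toy_corpora.items()}
```

Note that exact-token matching, as used here, cannot tell whether a word such as “fight” is meant literally or figuratively, which is one reason a dictionary count benefits from the qualitative reading described above.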

Results

Descriptive Results

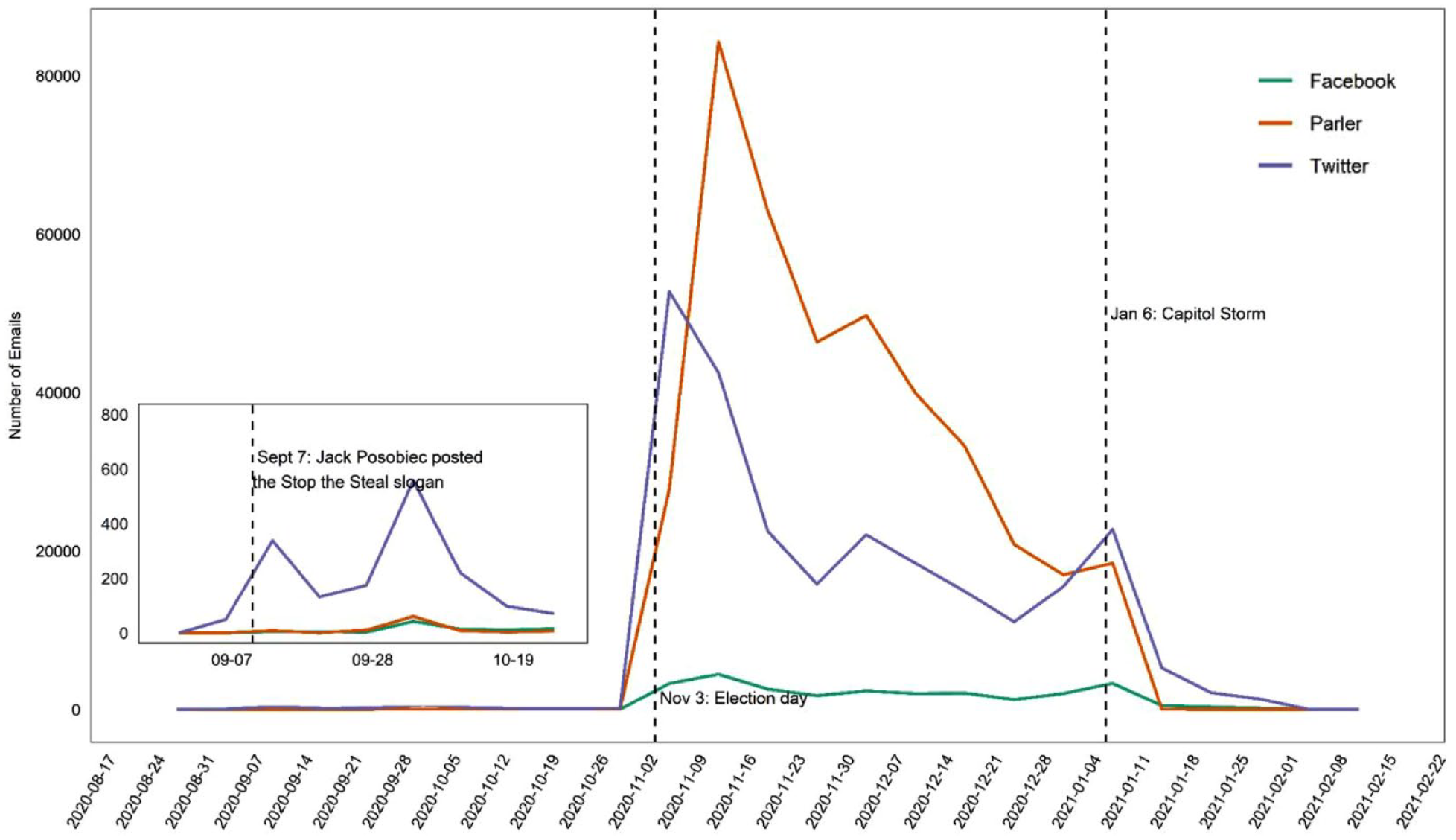

Figure 1 presents the weekly number of #StoptheSteal posts created between September 2020 and February 2021. The first wave of #StoptheSteal discourse was observed on Twitter around 7 September 2020, when far-right One America News correspondent Jack Posobiec tweeted, “#StoptheSteal 2020 is coming” (Atlantic Council’s DFRLab, 2021). The volume of #StoptheSteal discourse increased dramatically at the end of October 2020 and peaked around election day (3 November). Within the peak week, about 80,000 #StoptheSteal posts were generated on Parler and about 50,000 tweets were posted on Twitter. After election day, there was a steady decrease in #StoptheSteal posts until another peak around 6 January 2021, the day when the vote results were to be verified by Congress and the date of the Capitol Insurrection.

Weekly number of #StoptheSteal posts on Facebook, Twitter, and Parler.
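A weekly series like the one in Figure 1 can be produced by bucketing each post's date into the week that contains it. The sketch below assumes Monday-start weeks and a hypothetical input of `(platform, date)` pairs; the source does not state how weeks were delimited.

```python
from collections import Counter
from datetime import date, timedelta


def week_start(day):
    """Monday of the week containing `day` (Monday-start weeks are assumed)."""
    return day - timedelta(days=day.weekday())


def weekly_counts(records):
    """Count posts per (platform, week start) from (platform, date) pairs."""
    return Counter((platform, week_start(day)) for platform, day in records)


# Toy records; not the study's data.
records = [
    ("parler", date(2020, 11, 3)),
    ("parler", date(2020, 11, 5)),
    ("twitter", date(2020, 11, 4)),
    ("parler", date(2021, 1, 6)),
]
counts = weekly_counts(records)
```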

There are two noteworthy patterns. First, Twitter is the platform where the #StoptheSteal discourse gained its first wave of attention in early September 2020. Second, Parler generated the most #StoptheSteal posts between November 2020 and January 2021, followed by Twitter and Facebook. At its peak time, the number of #StoptheSteal posts on Parler was almost 1.5 times more than that on Twitter. The number of posts by frames and violence cues for each platform is presented in Appendix A. 6

Notably, the spike in content, seen most clearly in Parler and Twitter, occurred soon after the election, with Parler producing far more content at its peak compared to Twitter. Activity persisted until 6 January. Thus, the “time range” of activity for #StoptheSteal posts, as expected, would be from the end of the 2020 election day to 6 January 2021.

Comparing Frames Across Platforms

To answer RQ1, which asked to what extent Facebook, Twitter, and Parler vary in the prevalence of different collective action frames (diagnostic, prognostic, motivational), we calculated the percentage of posts with each frame for each platform; the results are summarized in Table 2. The results show that, on all three platforms, posts with a diagnostic or motivational frame were more prevalent, while posts with a prognostic frame were less prevalent (13% for Facebook, 21% for Parler, and 21% for Twitter). The most prevalent collective action frame on Twitter is diagnostic (48%), while on Parler the most prevalent frame is motivational (53%), as it is on Facebook (21%).

Proportions of Frames and Violence Cues across Facebook, Twitter, and Parler.

Note. Numbers above the bars are proportions of posts containing each of the three main collective action frames or violence cues. The sum of the proportions can exceed, or be under, 1 because one post may contain multiple frames or none. The results of proportion tests show that all pairwise comparisons are significant (p < .001) except differences between Twitter and Parler in prevalence of “prognostic frame” and “violence cues.”

To test H1a, H1b, and H1c, which hypothesized that the three types of collective action frames (diagnostic, prognostic, and motivational) would be more prevalent on Parler than on Facebook and Twitter, we conducted a series of pairwise proportion tests. The results show that the proportion of diagnostic-framed posts on Parler is significantly higher than that on Facebook (χ2 = 6,998.5, p < .001) and Twitter (χ2 = 61.35, p < .001). The proportion of prognostic frames on Parler is higher than on Facebook (χ2 = 968.17, p < .001), but the difference is not significant between Parler and Twitter (χ2 = 33.22, p = 1.00). The proportion of motivational frames on Parler is higher than on Facebook (χ2 = 7,909.1, p < .001) and Twitter (χ2 = 7,909.1, p < .001). Therefore, H1a and H1c were fully supported while H1b was partially supported.

To test H2, which hypothesized that the violence cues would be most prevalent on Parler, we compared the proportions of posts containing violence cues between three platforms. The results show that the posts containing violence cues are more prevalent on Parler than those on Facebook (χ2 = 959.03, p < .001). However, this difference in proportions is not significant between Parler and Twitter (χ2 = 18.9, p = 1.00). Therefore, H2 was not supported.
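The pairwise proportion tests used for H1 and H2 compare the share of posts with a given frame or cue on two platforms. A minimal sketch of such a test is given below as a Pearson chi-square with one degree of freedom. The source does not state whether a continuity correction or a one-sided alternative was applied, so this function is an assumption-laden illustration and is not expected to reproduce the reported statistics; the counts in the example are invented.

```python
import math


def two_proportion_chi2(hits_a, n_a, hits_b, n_b):
    """Pearson chi-square (1 df, no continuity correction) for two proportions."""
    pooled = (hits_a + hits_b) / (n_a + n_b)
    if pooled in (0.0, 1.0):
        raise ValueError("test undefined when every or no post has the feature")
    p_a, p_b = hits_a / n_a, hits_b / n_b
    # The squared z statistic for the difference in proportions equals the
    # Pearson chi-square of the corresponding 2x2 table.
    z = (p_a - p_b) / math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    chi2 = z * z
    # Two-sided p-value: survival function of chi-square with 1 df.
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value


# Invented counts: 60 of 200 posts on one platform vs. 30 of 200 on another.
chi2, p_value = two_proportion_chi2(60, 200, 30, 200)
```

With corpora as large as those in this study, even small differences in proportions yield very large chi-square values, so the size of the difference is worth reading alongside the test result.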

RQ2 asked which words or phrases social media users on the three platforms used to incite violence. As shown in Table 3, “fight,” “law,” “dead,” “war,” and “power” were some of the most commonly used words that signaled violence across the three platforms, according to the Grievance Dictionary. When comparing the most frequently used words on each platform, some words were more present on one social media platform than on others. For instance, Facebook users used the words “racist,” “stress,” and “violent” more frequently than Parler and Twitter users. Parler users frequently and uniquely used the words “smash” and “combat,” whereas Twitter users used “push” and “murder.” “Jail” and “death” were prevalent on Twitter and Parler but not on Facebook. Selected examples of violent posts with unique violence cues are presented in Appendix B. 7

The Top 15 Most Frequent Violence Cue Words Based on the Grievance Dictionary.

Note. Frequency number in the table shows the number of unique posts containing a violence cue word.
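The counting rule in the note, where a word's frequency is the number of posts that contain it rather than its total occurrences, can be sketched as follows. The tokenizer, the function name `top_cue_words`, the alphabetical tie-break, and the toy lexicon and posts are assumptions.

```python
import re
from collections import Counter

# Simple lowercase word tokenizer (an assumption for this sketch).
TOKEN = re.compile(r"[a-z']+")


def top_cue_words(posts, lexicon, k=15):
    """Rank lexicon words by the number of posts that contain them."""
    post_counts = Counter()
    for text in posts:
        # Using a set means a word repeated within one post counts once.
        present = set(TOKEN.findall(text.lower())) & lexicon
        post_counts.update(present)
    # Sort by descending count, then alphabetically for a stable order.
    ranked = sorted(post_counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return ranked[:k]


toy_lexicon = {"fight", "war", "law"}
toy_posts = [
    "Fight fight FIGHT for the law",
    "Fight the war",
    "We fight on",
]
ranking = top_cue_words(toy_posts, toy_lexicon)
```

In the toy input “fight” appears five times in total but is counted three times, once per post.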

Qualitative Analysis of Violence Content

To further explore RQ2, we conducted a qualitative discourse analysis of the violence frame cues to better understand how these cues were employed. In this analysis, we were especially interested in the semantic context of how cues were used and whether that varied across platforms. We used a grounded theory approach to observe the data, uncover patterns, and generate concepts (Glaser & Strauss, 2017). First, we compared the use of the top violence-related keywords on Twitter, Facebook, and Parler. Our findings reveal both similarities and differences. For example, across the three platforms, the word “fight” was used similarly, with social media users encouraging others to fight against election fraud and for Trump, justice, country, and democracy.

However, there were differences in how users utilized the term “law” on the three platforms. Twitter and Facebook users often used it as part of the noun phrase “law and order,” a common policing refrain among conservatives (Wozniak et al., 2019), which was used in this context to argue that policing was necessary to reverse perceived election fraud. The word “laws” was also used to refer to mail-in ballot laws that were passed in the eleventh hour. For example, one Facebook user posted, “@ABC @GStephanopoulos You need to look into North Carolina because the election laws were changed at the last minute in that state like they were in Wisconsin.” In this post, the user raised suspected cases of voter fraud and demanded an investigation by the news media. Owing to its user base, users on Twitter were also especially likely to tag Trump, Republican politicians, and journalists in their tweets, urging them to take legal action against alleged voter fraud.

When Parler users used the word “law,” by comparison, they were urging Trump to declare martial law to stop voter fraud. This suggests greater advocacy for military involvement. Parler users also highlighted the laws that gave people the right to keep and bear arms: “#realdonaldjtrump should declare #martiallaw and order military to hold a complete election for POTUS or invoke the #12amendment immediately! #stopthesteal.”

We also looked into keywords that appeared uniquely on each platform. On Facebook, alt-right communities used the term “racist” to accuse “leftists,” particularly Black Lives Matter (BLM) demonstrators, of being racist or to argue that conservatives and alt-right users were not racist. BLM was frequently used as a negative foil in the StS discourse. For example, one Facebook post lamented, “Big Tech is censoring this peaceful rally! When was the last time Big Tech censored a violent rally for Black Lives Matter?” In this example, the Facebook user frames BLM events as “violent,” in contrast to the “peaceful” #StoptheSteal “rally.” Furthermore, the user casts “Big Tech” as an ally of BLM. Similar narratives, placing blame on social media platforms or Democratic and liberal groups, were pervasive across platforms.

Similarly, on Twitter, violence cues were often used to frame opponents as aggressive. For example, the term “murder” was frequently used to cast blame on liberal protesters. One tweet claimed, “#BBC hack Katy Kay described Trump supporters as a Mob. Never described #BLM a mob while they were Burning Looting and Murdering #LiberalHandwringingVermin #STOPTHESTEAL.” In this example, the StS activist describes liberals as “vermin,” dehumanizing political opponents, and uses terms like “looting and murdering,” which have historically been used to denigrate Black Americans (Johnson et al., 2011). Again, violence cues are used to discuss not the activists' own actions, but the actions of BLM.

Compared to Twitter and Facebook, Parler discourse was far more aggressive. Parler users often used military metaphors to frame the Democratic party as enemies. This is especially evident when the term “combat” is used, as seen below:

Throw out any ballot not for Trump as it is ILLEGAL BY LAW!!! It’s time for Trump to take EMERGENCY action to #stopthesteal! REPOST THIS!! 1. Declare a NATIONAL EMERGENCY because of the treason from the Democrats. 2. Executive order to nullify the results of this FRAUD election! 3. Fire ANYONE who disagrees with this course of action, including in the military brass. Hire only those who will protect the President! 4. Declare the Democratic Party a TERRORIST organization—enemy combatants! All patriots who love liberty must defend President Trump and ensure at least another 4 years!!

In this example, the user goes so far as to describe the Democratic Party as a “terrorist organization—enemy combatants” and recommends military action to overturn the election.

While the overall prevalence of collective action frames and violence cues may not have varied greatly between Twitter and Parler, our qualitative analysis reveals a heightened level of absolutism and panic in posts from the alt-tech platform. Similarly, other violence cues such as “smash” and “jail” were used to advocate for “smashing” or “jailing” “the left” (encompassing Democratic politicians, Democratic voters, and BLM) or “Big Tech.” Parler users also frequently referenced being in “Twitter/Facebook jail” after their accounts or posts were taken down on other platforms. Despite criticizing these platforms for their suspensions, Parler users would simultaneously ask other users to share their posts on the mainstream platforms or would tag people they perceived as being able to influence their suspension: “This needs to get to @CodeMonkeyZ and I am in twitter jail! Please save and send!#MarchForTrump #stopthesteal.”

It is worth emphasizing that alt-tech platforms such as Parler make it easy for such discourses to proliferate. Unlike mainstream platforms that have some standards against hate speech, mis/disinformation, and other harmful content, Parler’s community guidelines (during and after 6 January) emphasized their desire to create a space “in the spirit of the First Amendment” (Parler, 2021), striving to minimize content moderation. This might explain why the discourse on Parler is substantively different from its mainstream counterparts, where such content would either be removed or flagged by users. As a consequence, while we see some similarity in the use of the collective action frames and violence frame cues, the level of extremity on Parler appears to be heightened, and perhaps more mobilizing.

Discussion

Social media platforms can be pivotal for groups to mobilize collective action, but different platforms may be more suited to different types of discourses. In this study, we focused on discourse about StS, examining how the movement and its claims were framed differently across three platforms. Specifically, we identified the use of diagnostic, prognostic, and motivational framing in this discourse, as well as violence cues. In studying these collective action frames across platforms in the context of alt-right communities, our results yield several notable findings. We find that, on the whole, Parler users evoke these collective action frames more than users on mainstream platforms. However, Twitter users appeared to use the prognostic frame as much as Parler users.

These findings can be interpreted through the lens of platform affordance, specifically in relation to content moderation and anonymity. Facebook and Twitter’s content moderation policies, for example, may have encouraged or forced users to find alternative platforms with fewer content moderation policies, such as Parler. In these alternative spaces, users could garner a sustainable audience within a smaller, closed network, leading to the circulation of disinformation and posts inciting violence. This suggests that barring or removing content on one platform is insufficient to curb online political extremism or hate speech (Zuckerman & Rajendra-Nicolucci, 2021).

The varying levels of anonymity provided by the three platforms can explain why Parler and Twitter exhibited more prognostic and violent content than Facebook. Unlike Facebook users, who use real names, many Twitter and Parler users used pseudonyms. Political extremists have been found to leverage this anonymity to spread hate and White supremacism while making the far-right community more cohesive (Bhat & Klein, 2020; Crosset et al., 2019).

These findings advance three areas of scholarship. Methodologically, we present a mixed-methods approach for comparing frames across multiple social media platforms. Our approach to building a classifier suggests that bespoke, platform-specific classifiers still yield the most consistent results. Furthermore, our qualitative analysis allows us to assess the rich contexts of these frames in use.

Our results suggest that different platforms serve different organizing roles for far-right extremists, particularly when we compare mainstream platforms such as Twitter and Facebook to alt-tech platforms such as Parler. More specifically, if far-right extremist discourse is systematically removed from mainstream platforms, alt-tech platforms not only facilitate the spread of this content; they can also help translate it into offline action.

Finally, our study contributes to the ongoing use of social movements literature to understand far-right movements. While far-right extremism has gained more scholarly attention, only a few scholars have applied the social movement framework to understand these groups (Castelli Gattinara & Pirro, 2019). Our work builds on this by exploring the discourse of far-right movements through the lens of collective action frames, revealing that prognostic, diagnostic, and motivational frames used by far-right movements are conceptually distinct from those used by leftist social movements. Furthermore, our findings suggest that the use of violence cues is not inherently pro-violence; rather, these cues can frame opponents as enemy combatants. Thus, StS activists do not use violence cues to frame their own actions, but rather to portray political opponents as the “enemy.” This aligns with previous literature on the use of fear to evoke action among far-right communities (Scrivens & Amarasingam, 2020) and shows how far-right activists can further prime such fears through collective action frames.

Of course, this study is not without limitations. First, the representativeness of the data is constrained by limitations in data collection. Facebook data were collected post hoc, and some posts may have been deleted after the January 6 Capitol attack and cannot be retrieved. We highlight the challenges in accessing data when studying content such as mis/disinformation, conspiratorial beliefs, hate speech, and calls for violence. It is also worth mentioning that mainstream platforms, such as Facebook or Twitter, have posts containing the hashtag #StoptheSteal that opposed the StS narrative; however, our analysis did not differentiate between posts that supported the StS discourse and those that presented counter-arguments. We suggest that future research examine how Stop the Steal narratives varied on mainstream platforms. In addition, while we do study multiple platforms, the social media ecology in the United States comprises many more platforms, catering to different communities and offering different affordances (including, but not limited to, multi-modal functionalities). Finally, our analysis is comparative. The goal of this work is not to study how content from one platform moves to another, but rather to compare and contrast frames across these platforms. Future research can build on these findings by studying temporal relationships or the mobilizing effectiveness of these framing devices. Cross-platform scholarship is nascent, and it is worth considering how descriptive analyses such as ours can lay the foundation for future causal work.

Nevertheless, we argue that our results have important implications for both understanding discourse about and from far-right group activity and for those interested in comparing alt-tech and mainstream social media platforms.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.