Abstract

In recent years, conspiracy theories have pervaded mainstream discourse. Social media, in particular, reinforce their visibility and propagation. However, most prior studies on the dissemination of conspiracy theories in digital environments have focused on individual cases or conspiracy theories as a generic phenomenon. Our research addresses this gap by comparing the 10 most prominent conspiracy theories on Twitter, the communities supporting them, and their main propagators. Drawing on a dataset of 106,807 tweets published over 6 weeks from 2018 to 2019, we combine large-scale network analysis and in-depth qualitative analysis of user profiles. Our findings illustrate which conspiracy theories are prevalent on Twitter, and how different conspiracy theories are separated or interconnected within communities. In addition, our study provides empirical support for previous assertions that extremist accounts are being “deplatformed” by leading social media companies. We also discuss how the implications of these findings elucidate the role of societal and political contexts in propagating conspiracy theories on social media.

Introduction

During the COVID-19 pandemic and the “infodemic” (World Health Organization, 2020) surrounding it, numerous conspiracy theories about the origin and scale of the virus have been spreading on social and news media. They claim, for instance, that the virus is a bioweapon developed by the Chinese government (Sardarizadeh & Robinson, 2020), or that Bill Gates is using the pandemic to force mass vaccination on the population (Huddleston, 2020).

Although conspiracy theories currently abound, and scholars, news media, and the public are devoting a great deal of attention to them, conspiracy theorizing is not a new phenomenon. In fact, conspiracy theories have existed throughout history (Knight, 2003)—often amplified during crises (van Prooijen, 2018)—and they are more diverse than might appear in the spotlight of the current pandemic.

Based on the widespread existence of conspiracy theories and the increased attention they have been receiving on social media, scholarly efforts to understand the phenomenon have gained momentum (for overviews, see Butter & Knight, 2017; Douglas et al., 2019). Numerous attempts have been made to conceptualize conspiracy theories (for overviews, see Thresher-Andrews, 2013; Walker, 2018), resulting in multiple, partly conflicting definitions. Drawing on the conceptual elements of definitions that seem most established in the field, we define conspiracy theories as proposed explanations for events or practices that refute established accounts and instead refer to secret machinations of influential people or institutions acting for their own benefit (e.g., Coady, 2003; Goertzel, 1994; Hofstadter, 1965; Keeley, 1999; Uscinski & Parent, 2014).

For a long time, conspiracy theories were considered to be a deviant social phenomenon (Hofstadter, 1965) and an individual and societal anomaly (Abalakina-Paap et al., 1999). In recent years, however, they have penetrated mainstream discourse and legacy media coverage (Waisbord, 2018), permeated popular culture (Uscinski, 2018) and political rhetoric (Mede & Schäfer, 2020), and have become increasingly “normalized, institutionalized and commercialized” (Aupers, 2012, p. 24). This increasing normalization of conspiracy theories is one of the factors driving a growing interest among researchers in how conspiracy theories are mediated and communicated (Stempel et al., 2007). Unsurprisingly, the role of new information and communication technologies is at the center of this emerging research field (Wood, 2013) as it explores how social media interplay with conspiracy theories (e.g., Bessi et al., 2015; Smith & Graham, 2019). In addition, recent research has focused on “deplatforming,” that is, permanently banning, extremist content on social media (e.g., Rogers, 2020).

Using a communication science perspective, our study contributes to this body of research by tackling a specific shortcoming of prior scholarship: While most existing studies have analyzed individual conspiracy theories or focused on conspiracy theories as a generic phenomenon, our research systematically maps communication about the most visible conspiracy theories to highlight the topical diversity of conspiracy theory discourses—before the coronavirus dominated different platforms and conspiracy talks.

The contribution of our article is threefold: By analyzing various conspiracy theories, we take a significant step toward understanding the diversity of conspiracy theories on social media; their communities, that is, users propagating the same conspiracy theories; and main propagators within these communities. These three foci provide an empirically grounded understanding of the dissemination of conspiracy theories on Twitter as one of the most widely used social media platforms (N. Newman et al., 2020) that contains a considerable amount of misinformation (Brennen et al., 2020). Combining large-scale social network and in-depth qualitative content analysis, we studied 106,807 tweets related to 10 conspiracy theories published over a 6-week timespan between 11 December 2018 and 23 January 2019.

Conceptual Framework

Conspiracy Theories and Social Media

When analyzing conspiracy theories, social psychologists and political scientists often seek to explain why individuals believe in conspiracy theories, while most communication researchers are concerned with how specific conspiracy theories are represented in legacy, online, and social media, that is, how prominent conspiracy theories are there, among whom, and what effects this may have. The respective scholarship has mostly analyzed conspiracy theories related to the Anti-Vaccination movement (e.g., Smith & Graham, 2019), 9/11 (e.g., Stempel et al., 2007), climate change (e.g., Gavin & Marshall, 2011) as well as, more recently, COVID-19 (e.g., Bruns et al., 2020).

On social media platforms like Facebook or Twitter, users can upload content, distribute their own and others’ content, endorse content by liking or commenting upon it, and therefore rapidly disseminate conspiracy theories and amplify their visibility. As shown in prior studies, conspiracy theories, along with other forms of misinformation, often spread to audiences faster than verified information. Vosoughi et al.’s (2018) study of misinformation on Twitter demonstrates that “falsity travels with greater velocity than the truth,” as they find that misinformation propagates faster, deeper, and wider online (p. 1149). Similarly, Friggeri and colleagues (2014) show that on Facebook, rumor cascades resulting from individuals’ resharing run deeper in the network than other forms of information.

Social media’s ability to disseminate content is critical to conspiracy theories. A hallmark of conspiracy theories is their heavy reliance on (alternative) evidence and the highly repetitive nature of their claims. Miller (2002) points out that social media have brought these characteristics “to a qualitatively different level” (p. 45). Online platforms, for instance, give conspiracy theorists the opportunity to cross-reference and mutually support their claims. Following the 2012 Sandy Hook shooting, conspiracy theorists in the United States published video “evidence,” claiming that the incident was staged by a group of “crisis actors”: individuals trained and recruited to portray disaster victims. Supporters of this conspiracy theory uploaded additional YouTube videos purportedly showing that the same “crisis actors” also appeared in other incidents such as the Boston Marathon bombing (Wood, 2013).

Alongside cross-referencing, the visibility of conspiracy theory content online also encourages more individuals to publicly share their beliefs. This “snowball effect” of conspiracy theory exposure is related to Kuran’s (1997) theory of preference falsification, according to which individuals who hold unpopular minority opinions hold back their genuine positions under perceived social pressure and only reveal their ideas when they encounter a critical mass of like-minded people. As DeWitt et al. (2018, p. 326) point out, Kuran’s theory of preference falsification explains why individuals are more likely to publicly endorse unpopular ideas like conspiracy theories when they perceive safety in numbers. Arguably, more than any other medium, social media serve as platforms where people can find such a sense of safety in numbers, because the visibility of conspiracy content online affords believers the opportunity to find and connect with like-minded people.

In this vein, focusing on communities propagating conspiracy theories on social media is crucial in three respects. First, like-minded individuals tend to interact with each other (Williams et al., 2015) to reinforce existing ideological identities or to strengthen group affiliations (Yardi & Boyd, 2010). In addition, research suggests the existence of highly polarized and homogeneous communities around conspiracy and scientific topics on Facebook and YouTube (Bessi et al., 2016; Cinelli et al., 2021). Second, conspiracy theories tend to remain confined to their communities (Sunstein & Vermeule, 2009) and not to spread haphazardly from person to person through social media (DeWitt et al., 2018). Third, research has shown that conspiracy beliefs tend to “stick together” (Douglas et al., 2019, p. 7); thus, people who believe in one conspiracy theory are likely to also turn to others (van Prooijen, 2018). Effectively counteracting the spread and impact of conspiracy theories requires an in-depth understanding of the propagators and communities who disseminate them.

Most earlier studies took one of two approaches to study conspiracy theories online. They either analyzed conspiracy theories as a general, abstract phenomenon (e.g., Bessi et al., 2015) or focused on specific conspiracy theories around vaccination (e.g., Broniatowski et al., 2018), 9/11 (e.g., Stempel et al., 2007), or climate change (e.g., Lewandowsky et al., 2013). While both approaches have merits, they fail to consider important parallels and distinctions between conspiracy theories—either because they analyze the phenomenon on a meta-level or because they only focus on one topic, which makes it difficult to draw comparisons across conspiracy theories. This is particularly problematic as accumulated evidence across different studies suggests that the emergence and dissemination of misinformation online varies considerably from topic to topic (Zhang et al., 2015; Zubiaga et al., 2016). Thus, we aim to deliver a more comprehensive picture by mapping the structure and networked properties of the most visible conspiracy theories on Twitter. Therefore, we ask the following research questions (RQs):

In addition, our analysis centers on the micro level of individuals. To date, little is known about the composition of communities around conspiracy theories, including the main propagators, who play an important role in the distribution network of conspiracy theory content. Addressing this gap, we ask:

Disseminating Content on Twitter: Types of Interaction and Influence

We chose Twitter for analysis. As an important component of today’s information ecosystem, it contains a considerable amount of misinformation (Brennen et al., 2020) and is comparatively easy to access for scientific research. In addition, due to its interactive and networked nature that facilitates the formation of communities, Twitter is a powerful platform to study the dissemination of misinformation and plays a significant role in propagating conspiracy theories (e.g., Broniatowski et al., 2018; Vosoughi et al., 2018).

On Twitter, people are directly connected through an underlying network (Boyd et al., 2010). Due to its technological affordances, it enables different types of interaction. According to Bruns and Moe (2014), these interaction types operate on micro-, meso-, and macro-layers of communication and information exchange, which in turn are interconnected in various ways. While hashtags, as indicators of topics, discourses, or events, are situated on the macro level, following other users as the most prevalent form of interaction is located on the meso level of exchange. Replying to other users’ tweets or mentioning them are types of interaction that are closely related to individuals and micro-level communication. Retweeting, that is, forwarding other users’ tweets, serves as a transversal interaction as messages are transferred from the macro level to the micro level and thus to the attention of a user’s own followers. Therefore, retweeting is a powerful way of disseminating content on Twitter more widely since the original message is transmitted to new individuals and communities (Boyd et al., 2010; Suh et al., 2010). Apart from that, retweeting can be understood as a way of validating content, participating in conversations, and engaging with others. Moreover, spreading others’ tweets suggests that the original message contains valuable information (Boyd et al., 2010).

Based on these forms of interaction, Cherepnalkoski and Mozetič (2016) differentiate three types of influence. The number of followers indicates a user’s in-degree influence. The number of mentions and replies a user receives indicates mention influence and thus the user’s ability to participate and engage with other people. The number of retweets a user receives, that is, retweet influence, signals that the user produces content that might be of interest to others and worth sharing.
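The three influence types can be illustrated with a minimal sketch that counts them from a handful of tweet records. The record fields (`author`, `retweet_of`, `mentions`), the account names, and all numbers are hypothetical and chosen for illustration only; they are not Twitter's API schema, and replies are treated here as mentions of the account being replied to.

```python
from collections import Counter

# Hypothetical follower counts (in-degree influence); all values are made up.
followers = {"alice": 120, "bob": 45, "carol": 300}

# Hypothetical tweet records: who posted, whom they retweeted, whom they mentioned.
tweets = [
    {"author": "bob", "retweet_of": "alice", "mentions": []},
    {"author": "carol", "retweet_of": "alice", "mentions": ["bob"]},
    {"author": "alice", "retweet_of": None, "mentions": ["carol", "bob"]},
    {"author": "bob", "retweet_of": "carol", "mentions": []},
]


def influence_table(tweets, followers):
    """Return {user: (in_degree, mention, retweet)} influence counts."""
    retweet_influence = Counter()
    mention_influence = Counter()
    for tweet in tweets:
        # A retweet credits the account whose tweet was forwarded.
        if tweet["retweet_of"] is not None:
            retweet_influence[tweet["retweet_of"]] += 1
        # A mention (or reply) credits the account that was addressed.
        for user in tweet["mentions"]:
            mention_influence[user] += 1
    return {
        user: (followers[user], mention_influence[user], retweet_influence[user])
        for user in followers
    }


table = influence_table(tweets, followers)
```

In this toy example, the account with the most followers is not the one retweeted most often, which is why the three measures are kept separate.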

Since we aim to provide a comparative picture of conspiracy theories on Twitter, we focus on hashtag-based networks on the macro level of communication, identifying the most visible conspiracy theories and mapping their diversity. In addition, we seek to shed new light on communities around conspiracy theories as well as on the main propagators who spread them. In this vein, we build on retweet networks, since research has shown that these serve as an appropriate analytical tool to reveal “influential” users and processes of information diffusion occurring on Twitter (Boyd et al., 2010; Cherepnalkoski & Mozetič, 2016; Suh et al., 2010).

Data and Method

We rely on two datasets: A first dataset (D1) containing 111,466 tweets published between 2011 and 2018 was used to answer RQ1. The second dataset (D2), which builds on D1 and comprises 106,807 tweets generated over a 6-week timespan between 11 December 2018 and 23 January 2019, served to answer RQ2 and RQ3.

Detecting Conspiracy Theories via Hashtag Co-Occurrence Network Analysis (RQ1)

We started with a purposive sample of English-language Twitter accounts that spread a range of conspiracy theories and provide insights into different conspiratorial movements. These accounts were collected through snowball sampling. We first targeted well-known conspiracy theory accounts on Twitter, like @AboveTopSecret and @davidicke. From these accounts, we expanded our list by including conspiracy theory accounts that were retweeted or “similar accounts” recommended by Twitter. Three criteria were used to select profiles in this step. First, we chose “general” conspiracy theory accounts that do not predominantly focus on one single conspiracy theory by verifying that the first five posts on the timeline covered at least two conspiracy theories. Second, we ensured that these profiles propagate different types of conspiracy theories, for instance, scientific (e.g., vaccination) and political (e.g., 9/11) ones. We compared our resulting list of conspiracy theories with scholarly typologies of conspiracy theories (e.g., Huneman & Vorms, 2018; Räikkä, 2009) and empirical studies on specific conspiracy theories to ensure our list was not biased by the selected accounts. Third, we limited our study to Twitter accounts with English-language content. Following this procedure, we identified 40 Twitter accounts. Using Twitter’s API (application programming interface), we collected up to 3,200 tweets from each account’s timeline. In total, 111,466 tweets published between 2011 and 2018 were included in Dataset D1.

To identify conspiracy theories on Twitter, we applied network analysis of co-occurring hashtags. Hashtag-based gathering approaches come with some limitations; for instance, users may modify hashtags to add new arguments on a topic, or leave hashtags out entirely when replying to a tweet (Burgess & Bruns, 2015). However, this approach seemed acceptable for this study, which aimed to identify specific rather than more amorphous and compound conspiracy theories. Similar to Burgess and Matamoros-Fernández (2016), we extracted all 5,242 unique hashtags appearing in D1 to identify “issue clusters” (see Supplementary Material, Figure A1, for the co-occurrence network of hashtags). In the network, each node represents a hashtag, and an edge is drawn between two hashtags when they appear in the same tweet. We used the Louvain modularity algorithm (Blondel et al., 2008) to detect clusters of conspiracy theories, using modularity classes as the node partition parameter. Within each modularity class that contained more than 5% of nodes, we used degree centrality to assess each hashtag’s visibility and selected only those hashtags with a degree above 50. In the following step, the selected hashtags were qualitatively assigned to 10 topic groups based on their thematic relationship. Through this process, we identified the 10 most visible conspiracy theories and the 43 hashtags most often associated with them (see Table 1).
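The construction of the co-occurrence network and the degree-based selection can be sketched as follows. This is an illustration under stated assumptions rather than the pipeline used in the study: degree is taken to be the unweighted number of distinct co-occurring hashtags, hashtags are lowercased, the cluster-detection step is omitted, and the toy data and the threshold of 1 (instead of 50) are made up. The function names are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def cooccurrence_network(tweet_hashtags):
    """Build an undirected hashtag graph: hashtags are nodes, and an edge
    links two hashtags that appear together in at least one tweet."""
    neighbors = defaultdict(set)
    for tags in tweet_hashtags:
        unique = sorted({t.lower() for t in tags})
        for tag in unique:
            neighbors[tag]  # register hashtags without co-occurrences as nodes too
        for a, b in combinations(unique, 2):
            neighbors[a].add(b)
            neighbors[b].add(a)
    return neighbors


def visible_hashtags(neighbors, min_degree):
    """Keep hashtags whose degree (number of distinct co-occurring hashtags)
    exceeds min_degree, sorted by degree, highest first."""
    degrees = {tag: len(adj) for tag, adj in neighbors.items()}
    kept = [t for t, d in degrees.items() if d > min_degree]
    return sorted(kept, key=lambda t: (-degrees[t], t))


# Toy input: one list of hashtags per tweet (made-up data).
sample = [
    ["chemtrails", "geoengineering", "illuminati"],
    ["chemtrails", "illuminati"],
    ["flatearth", "nasa"],
    ["chemtrails", "nasa"],
    ["vaccines"],
]
graph = cooccurrence_network(sample)
top = visible_hashtags(graph, min_degree=1)
```

In a fuller version, the degree filter would be applied separately within each detected cluster before the qualitative grouping into topics.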

Table 1. Conspiracy Theories and Related Hashtags.

It is notable that QAnon-related hashtags appeared in this network as well, but we decided not to include them for data collection, guided by two rationales. First, QAnon can be described as a “tent” conspiracy theory (Zuckerman, 2019, p. 3) or meta-conspiratorial narrative that interweaves a wide range of conspiracy theories. Second, QAnon-related hashtags are frequently used in ambiguous contexts. As prior literature indicates (e.g., Conover et al., 2011; Graham, 2016), hashtags affiliated with conservative politics (e.g., #TheGreatAwakening, #WWG1WGA, #tcot, #tgdn) are commonly used by their supporters for self-identification, regardless of the actual topic of the tweet. Our pilot data collection, which included QAnon-associated hashtags, supported this observation. As it turned out, less than 5% of these tweets referred to any specific conspiracy theory.

Analyzing Conspiracy Theory Communities via Retweet Network Analysis (RQ2)

To better understand which conspiracy theories are propagated by the same users, we conducted retweet network analysis to identify communities related to the 10 conspiracy theories listed above. For this purpose, we used the hashtag list mentioned in the previous section to retrieve tweets on a daily basis, employing Twitter’s search API. In total, we collected 106,807 tweets generated by 35,333 unique accounts between 11 December 2018 and 23 January 2019 in the second dataset (D2). This 6-week timespan was selected deliberately to ensure that samples for each conspiracy theory were large enough to identify communities while avoiding major events or anniversaries (like the anniversary of 9/11) which might have skewed our data toward one specific conspiracy theory (for a daily distribution of all tweets, see Supplementary Material, Figure A2).

To identify communities, we analyzed group partitions in the retweet network with the Louvain modularity algorithm (Blondel et al., 2008). Modularity is a scalar value that quantifies the quality of partitions by comparing the density of links from inside the module (e.g., community, cluster) with that in a random network structure (M. E. J. Newman et al., 2002). Blondel et al.’s (2008) algorithm serves to identify high modularity partitions, wherein nodes belonging to one module are densely interconnected but sparsely connected with nodes in different modules. A filter was used to keep major modularity classes that contain more than 5% of nodes.
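To make the notion of modularity concrete, the following sketch computes the modularity score of a given partition and applies a 5% size filter. It does not run the Louvain algorithm itself, which searches for a partition that maximizes this score. As simplifying assumptions, edges are treated as undirected and unweighted, with no duplicate edges or self-loops; the function names and the toy graph are hypothetical.

```python
from collections import Counter


def modularity(edges, community_of):
    """Newman-Girvan modularity of a partition of an undirected,
    unweighted graph: Q = sum over communities c of
    (edges inside c) / m - (total degree of c / (2 * m)) ** 2."""
    m = len(edges)
    inside = Counter()  # edges with both endpoints in the same community
    degree = Counter()  # summed node degrees per community
    for u, v in edges:
        cu, cv = community_of[u], community_of[v]
        degree[cu] += 1
        degree[cv] += 1
        if cu == cv:
            inside[cu] += 1
    return sum(inside[c] / m - (degree[c] / (2 * m)) ** 2 for c in degree)


def major_communities(community_of, min_share=0.05):
    """Return community labels holding more than min_share of all nodes."""
    sizes = Counter(community_of.values())
    total = len(community_of)
    return sorted(c for c, n in sizes.items() if n / total > min_share)


# Toy graph: two triangles joined by a single bridging edge.
edges = [("a", "b"), ("b", "c"), ("a", "c"),
         ("d", "e"), ("e", "f"), ("d", "f"),
         ("c", "d")]
partition = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
q = modularity(edges, partition)
```

Splitting the toy graph into its two triangles yields a positive score, whereas placing all nodes in a single community yields a score of zero, which matches the intuition that good partitions have dense links inside modules and sparse links between them.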

Examining the Main Conspiracy Theory Propagators via In-Depth Qualitative Analysis (RQ3)

Based on Dataset D2, we identified the main accounts contributing to the propagation of the 10 selected conspiracy theories by utilizing network metrics. As we are most interested in the dissemination of conspiracy theories, “influence” is understood as one account’s ability to widely spread information within the network they belong to, which in turn affects the actions of many other users in the network (Li et al., 2014; Riquelme & González-Cantergiani, 2016). In order to identify the main propagators of each community, we used the well-known PageRank algorithm (which was originally developed by Brin & Page, 1998, to measure the relevance and presence of web content through hyperlinks in Google’s search engine but has since been applied widely in social network analysis) as a measure of influence (Heidemann et al., 2010; Riquelme & González-Cantergiani, 2016). Unlike degree centrality, which measures influence by counting the number of links a node has, PageRank goes beyond the first-degree connections and attributes higher weight to nodes that rank higher themselves.
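The difference between degree centrality and PageRank can be shown with a small power-iteration sketch. It rests on assumptions that the text does not specify: edges point from the retweeting account to the retweeted account, the damping factor is set to the commonly used value of 0.85, and accounts without outgoing edges distribute their rank evenly. The account names and retweet pairs are made up.

```python
def pagerank(retweets, damping=0.85, iterations=100):
    """Power-iteration PageRank on a directed retweet graph.

    Each (retweeter, author) pair is an edge pointing from the retweeting
    account to the account whose tweet was forwarded, so rank flows toward
    retweeted accounts. Accounts with no outgoing edges spread their rank
    evenly over all nodes.
    """
    nodes = sorted({n for edge in retweets for n in edge})
    out_links = {n: [] for n in nodes}
    for retweeter, author in retweets:
        out_links[retweeter].append(author)
    rank = {n: 1.0 / len(nodes) for n in nodes}
    for _ in range(iterations):
        dangling = sum(rank[n] for n in nodes if not out_links[n])
        base = (1 - damping) / len(nodes) + damping * dangling / len(nodes)
        new_rank = {n: base for n in nodes}
        for n in nodes:
            for target in out_links[n]:
                new_rank[target] += damping * rank[n] / len(out_links[n])
        rank = new_rank
    return rank


# Toy data (made up): "hub" is retweeted by three accounts nobody retweets;
# "source" is retweeted only by "hub".
retweets = [("u1", "hub"), ("u2", "hub"), ("u3", "hub"), ("hub", "source")]
ranks = pagerank(retweets)
in_degree = {n: sum(1 for _, a in retweets if a == n) for n in ranks}
top_by_pagerank = max(ranks, key=ranks.get)
top_by_in_degree = max(in_degree, key=in_degree.get)
```

Here the account with the most retweets ("hub") leads on in-degree, while the account retweeted by that well-ranked hub ("source") leads on PageRank, because a single link from a highly ranked node can outweigh several links from peripheral ones.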

Based on PageRank, we selected the 10 most influential propagators of each community for further qualitative analysis (Mayring, 2015). This included an iterative process of interpreting, paraphrasing, and aggregating the material under investigation. To characterize and understand differences and similarities between the communities, we built detailed profiles of their main propagators by analyzing each one’s profile description, timeline, pinned posts, images or videos used, and references to external sources. We applied an integrated approach. First, we coded the type of actor (e.g., citizen, scientist or medical practitioner, journalist, conspiracy theory aggregator) for each user, which we deductively determined based on previous studies (e.g., Starbird, 2017). In a second step, information indicating users’ political leaning (e.g., conservative, QAnon supporter) was inductively derived from the data. For example, based on the profile description “God Fearing Family Man, Patriot, #MAGA #TRUMP2020,” we coded this user as a citizen (“God Fearing Family Man”) and Trump supporter (“#MAGA #TRUMP2020”). Furthermore, because recent research has shown that mainstream social media platforms like Twitter began to systematically crack down on conspiracy theory-related content and ban influential conspiratorial personalities (Rogers, 2020), we were interested in how many accounts have been suspended. Therefore, we coded the profile status of each account (e.g., suspended, deleted, still active). To enrich our qualitative description with quantitative information, we selected user metrics (e.g., followers, retweets, posts) for each account.

Results

Prevalent Conspiracy Theories on Twitter (RQ1)

Our network analysis of co-occurring hashtags revealed 10 prominent conspiracy theories circulating on Twitter.

(1) Agenda 21 refers to the United Nations’ (UN) non-binding action plan, signed in 1992, that encourages governments to pursue sustainable development. Related conspiracy theories claim that Agenda 21 is a disguised plot to strip nations of their sovereignty and eventually impose global communism.

(2) The Anti-Vaccination movement is opposed to the vaccination of children to protect them from contagious diseases. In particular, supporters of this movement reject mandatory vaccination, despite the overwhelming scientific consensus on the efficacy of vaccines. Moreover, they promote unproven links between vaccinations and a range of childhood illnesses and disorders, for instance, autism. Instead, they advocate “natural remedies” to treat childhood diseases.

(3) Supporters of the Chemtrail conspiracy theory argue that condensation trails left behind by high-flying aircraft contain various chemical or biological agents, deliberately released over the public for nefarious purposes such as weather modification, psychological manipulation, or human population control.

(4) Climate Change Denial, or global warming conspiracy theory, asserts that the scientific consensus of human-induced climate change is a plot based on manipulated data, concocted for political and ideological purposes. Those who subscribe to this theory believe that governmental agencies such as NASA deliberately engage in distorting information in order to mislead the population.

(5) The Directed Energy Weapons conspiracy theory emerged during the 2018 California wildfire season and postulates that the deadly wildfires were deliberately lit using “directed energy weapons”—experimental weapons that utilize a highly focused energy beam—for political purposes, including promoting the aforementioned climate change hoax.

(6) The Flat Earth movement is an expanding pseudoscientific group propagating the age-old claim that the Earth is, in fact, a flat disk bordered by a wall of ice. People who subscribe to this theory argue that governments and government agencies such as NASA have attempted to cover up this “truth” by staging fake space missions and generating fake imagery of the Earth as a sphere.

(7) Illuminati refers to the conspiracy that a selected group of individuals, “the Illuminati,” secretly controls and masterminds global affairs in order to gain political power and to establish a New World Order.

(8) Pizzagate relates to a debunked conspiracy that circulated during the 2016 United States Presidential Election, claiming that coded email messages from Hillary Clinton’s campaign manager, John Podesta, implicated a series of Democratic Party officials in an alleged human trafficking and child sex ring. A pizzeria in Washington, D.C., was allegedly involved.

(9) The Reptilians conspiracy theory, popularized by conspiracy theorist David Icke, claims that a race of shapeshifting reptilian aliens is attempting to manipulate societies by taking on human form. Several world leaders and popular celebrities are accused of being so-called Reptilians, including Queen Elizabeth II and Justin Bieber.

(10) The 9/11 conspiracy theory postulates that, rather than the work of Jihadist terrorists, the attacks on America on September 11, 2001, were, in fact, an “inside job,” orchestrated by the US government to justify their foreign policy agenda. It propagates pseudoscientific theories to prove, among other things, that the collapse of the Twin Towers was the result of a controlled demolition.

Communities Around Conspiracy Theories (RQ2)

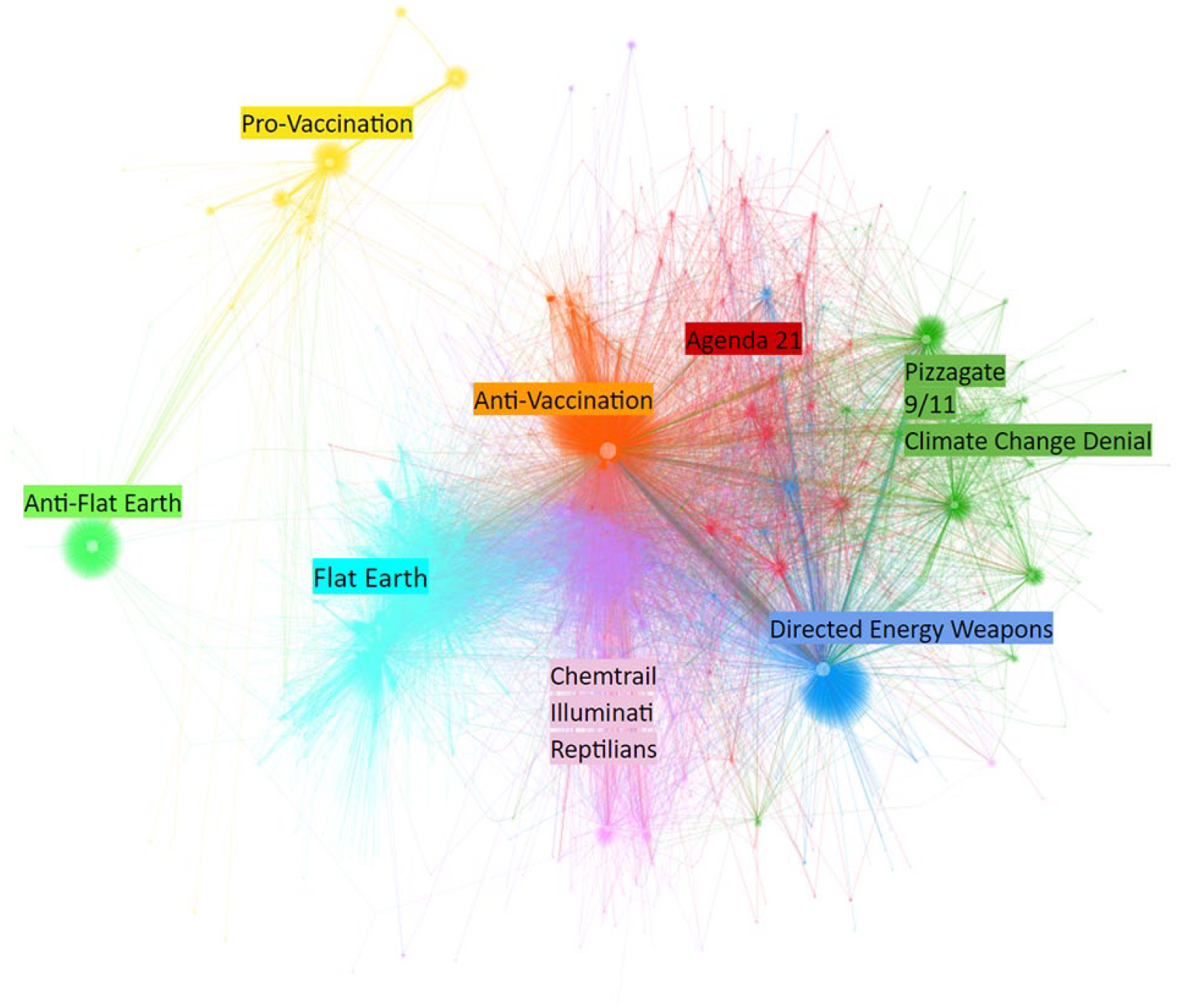

The retweet network of these 10 conspiracy theories consists of 31,368 nodes and 49,510 edges, and reveals eight major communities with a modularity score of 0.715. In Figure 1, nodes represent Twitter accounts and edges represent retweets; the size of a node corresponds to its in-degree, that is, the number of retweets a user receives. Community clusters were calculated and colored via Blondel et al.’s (2008) modularity algorithm, using a default modularity resolution of 1.0. Because we assigned values for each node to indicate each given conspiracy theory, we were able to label each community based on its most prevalent conspiracy theories.
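The labeling step described above can be sketched as a simple tally per community. This is a hedged illustration, not the procedure used in the study: it assumes that each account carries exactly one conspiracy theory value and that community assignments are already available from a partitioning step. The function name, account names, and data are hypothetical.

```python
from collections import Counter, defaultdict


def label_communities(community_of, theory_of, top_n=1):
    """Label each community with the conspiracy theories most common
    among its member accounts (ties broken alphabetically)."""
    counts = defaultdict(Counter)
    for account, community in community_of.items():
        counts[community][theory_of[account]] += 1
    return {
        community: [
            theory
            for theory, _ in sorted(tally.items(), key=lambda kv: (-kv[1], kv[0]))[:top_n]
        ]
        for community, tally in counts.items()
    }


# Made-up example: community ids as they might come from a partitioning step,
# plus one (hypothetical) dominant theory per account.
community_of = {"u1": 0, "u2": 0, "u3": 0, "u4": 1, "u5": 1}
theory_of = {"u1": "Flat Earth", "u2": "Flat Earth", "u3": "Chemtrails",
             "u4": "Agenda 21", "u5": "Agenda 21"}
labels = label_communities(community_of, theory_of)
```

Raising `top_n` would return several theories per community, which corresponds to communities that are labeled with more than one conspiracy theory.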

Figure 1. Communities around conspiracy theories.

As Figure 1 indicates, the retweet network consists of two loosely connected clusters of user communities. A closer reading of tweets shared within these communities reveals that the cluster on the left comprises two communities that do not spread conspiracy theories but actively oppose Flat Earth and Anti-Vaccination conspiracy theories. The other, bigger cluster contains proponents of the 10 conspiracy theories, which make up an additional six communities. Anti-Vaxxers and Flat Earthers are the biggest conspiracy theory communities, with 4,797 and 3,562 accounts, respectively (see Table 2 for an overview). Both anti-conspiracy theory communities contain significantly fewer accounts (Pro-Vaccination: 1,923, Anti-Flat Earth: 1,650). Conspiracy theories concerning Chemtrails, Illuminati, and Reptilians are often retweeted by the same community of Twitter users (N = 2,945). Likewise, the conspiracy theories Climate Change Denial, Pizzagate, and 9/11 are often shared by the same user community (N = 2,574). The Directed Energy Weapons community has 2,535 members; the Agenda 21 community includes 2,299 accounts.

Table 2. Overview of Conspiracy Theory Communities and Propagators.

Note. M = mean, SD = standard deviation.

Main Propagators Within Conspiracy Theory Communities (RQ3)

Our in-depth analysis of the top 10 propagators, that is, influential accounts with the greatest ability to spread information within each community, revealed that individuals like citizens, scientists, or medical practitioners, but also aggregator accounts, are most prevalent (see Table 2; for a mapping of the most influential propagators within each community, see Supplementary Material, Figure A3). However, the composition of propagators differs between communities, especially between the anti- and pro-conspiracy theory communities.

The top propagators within the Pro-Vaccination community include mostly science-related accounts. The vocal scientists and medical practitioners include Peter Hotez (@PeterHotez), Professor of Pediatrics and Molecular Virology & Microbiology, who has by far the highest influence in this community (13,612 followers, 876 retweets). Other examples of influential science-related propagators are Scott Gottlieb (@SGottliebFDA), physician and commissioner of the Food and Drug Administration; Daniel Kraft (@daniel_kraft), a physician-scientist with expertise in biomedical research and health care innovation; and Meghan May (@DrMay5), Professor of Microbiology and Infectious Diseases. Besides researchers and physicians, the scientific journal Microbes and Infection (@MicrobesInfect), a citizen, and an aggregating account called “TheReal Truther” (@thereal_truther), which exposes “lies, propaganda & hate of the anti-science movement,” actively spread information in favor of vaccination.

Similarly, top accounts among the Anti-Flat Earth community are often scientists, such as astronomer and science blogger Philip Plait, “The Bad Astronomer” (@BadAstronomer), who is the most influential actor in this community (612,527 followers, 1,653 retweets, 1,519 posts); George Claassen (@GeorgeClaassen), South African scientist and science journalist; or Jeff Ollerton (@JeffOllerton), Professor of Biodiversity. In addition, the community includes citizens; Rob Davis (@robwdavis), an investigative journalist at “The Oregonian”; and the author of the scientific blog “The Conquest of Space” (@conquestofspace), which aims to appeal to space enthusiasts.

In contrast to accounts advocating vaccination, the 10 most influential Anti-Vaccination propagators largely consist of self-described “enlightened” citizens “searching for truth” about the harm of vaccination. Like anti-conspiracy theory accounts, most of the main propagators within the Anti-Vaccination community do not convey their political leanings in their profile. A notable exception is one user who explicitly self-identifies as a “TRUMP SUPPORTER!!” in his profile, frequently using the hashtag “#MAGA,” referring to Donald Trump’s 2016 presidential slogan “Make America Great Again.” In addition, this user points out his support of QAnon, a meta-conspiracy theory claiming that Trump is battling a cabal of shadow groups including United States Democrats, business tycoons, and Hollywood celebrities who are secretly ruling the world (Zuckerman, 2019). Among the most influential propagators of Anti-Vaccination content are Mike Adams (@healthranger), the editor of “Natural News,” a conspiracy theory website known for promoting pseudoscientific claims and far-right extremism (138,560 followers, 5,605 retweets), and the organization “A Voice for Choice” (@avoiceforchoice) with 2,345 followers. According to its self-description, the organization speaks out “against the practices of the food, agriculture, and pharmaceutical industries that infringe on people’s rights to control what they put into their bodies” (A Voice for Choice Advocacy, 2020).

The most prominent accounts of the Flat Earth community are individual citizens. They admonish, for example, that one should not believe everything the “controllers” say, and encourage others to break free. In addition, conspiracy theory aggregators curating Flat Earth–related conspiracy theories are influential propagators within this community. One example of such an aggregator is “WeAreWakinUp” (@WeAreWakinUp) with 11,018 followers, 519 retweets, and 154 posts.

Conspiracy theories concerning Chemtrails, Illuminati, and Reptilians are also often disseminated by citizens and conspiracy theory aggregators. Important in this community is the aggregator “The Truth Community” (@chooselovetoday) with 16,500 followers, 513 retweets, and 169 posts. This account describes itself as a “member-based supported community UNITED in our belief #truth saves” and supports conspiracy theories like Chemtrails, Illuminati, Reptilians, and many others. Citizens in this community portray themselves either as “seekers of the truth” or as supporters of Donald Trump and the Second Amendment of the US Constitution, which protects the right to bear arms.

Main propagators among the three communities Directed Energy Weapons; Agenda 21; and Climate Change Denial, Pizzagate, and 9/11 can be described as citizens with similar political leanings: Trump supporters, QAnon followers, conservatives, patriots, and supporters of the Second Amendment. Typically, these accounts describe themselves as, for instance, “politically incorrect freethinker,” “Christian,” or “Patriot!” Within these three communities, some individuals are highly influential and visible with up to 102,328 followers.

In line with previous research on deplatforming on social media (e.g., Rogers, 2020), our results also show that Twitter has been suspending influential propagators within conspiracy theory communities and especially within communities dominated by Trump and QAnon supporters. Moreover, the profile descriptions of some users who are still active refer to their account on Gab—a microblogging service launched in 2016 that has attracted a large number of radical conservatives and conspiracy theorists—or Parler—a social networking service launched in 2018 that has brought together Trump supporters, right-wing extremists, and conspiracy theorists.

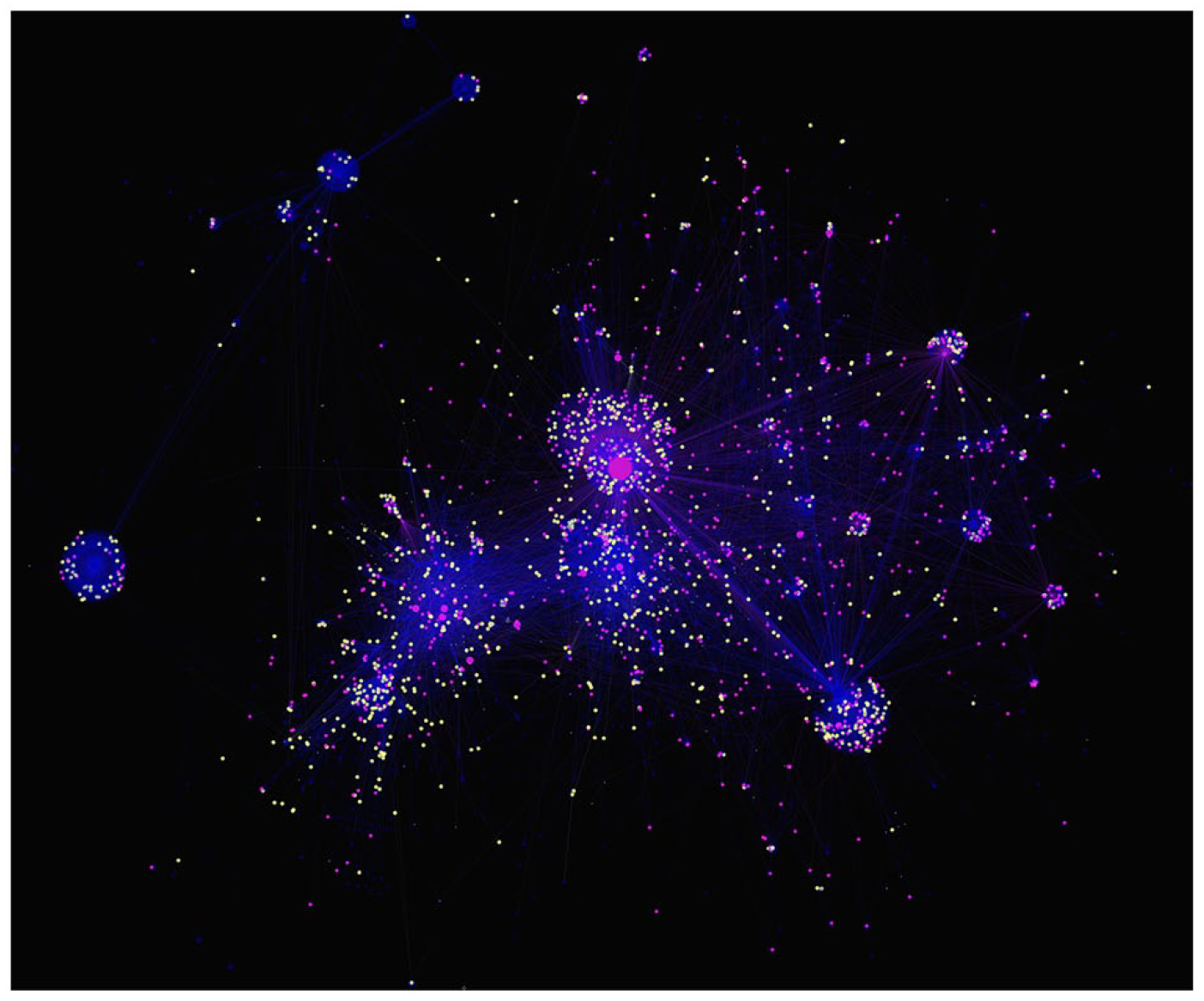

Shifting the focus from the micro level to the macro level, Figure 2 shows that the share of deplatformed accounts is higher in pro-conspiracy theory communities (895 accounts, 4.78%) than in anti-conspiracy theory communities (27 accounts, 0.76%). A chi-square test with Yates’ continuity correction indicates a significant difference between the two groups of communities: χ²(1) = 121.68, p < .001.

Figure 2. Deplatformed accounts within conspiracy theory communities.
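
The chi-square comparison of deplatformed accounts can be reproduced approximately from the counts given in the text. The following is a minimal sketch rather than the analysis script used for this study: the community totals are not reported here, so they are back-calculated from the rounded percentages (an assumption), and the function name `yates_chi_square` is hypothetical.

```python
import math


def yates_chi_square(a, b, c, d):
    """Chi-square test with Yates' continuity correction for a 2x2 table.

    Table layout (rows = groups, columns = deplatformed / still active):
        [[a, b],
         [c, d]]
    Returns (statistic, p_value) for 1 degree of freedom.
    """
    n = a + b + c + d
    row1, row2 = a + b, c + d
    col1, col2 = a + c, b + d
    # Shortcut formula for 2x2 tables; the correction is capped at zero so it
    # never overshoots when observed and expected counts almost coincide.
    diff = max(abs(a * d - b * c) - n / 2, 0.0)
    statistic = n * diff ** 2 / (row1 * row2 * col1 * col2)
    # With 1 degree of freedom, the chi-square survival function
    # equals erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(statistic / 2))
    return statistic, p_value


# Counts reported in the text: 895 deplatformed accounts (4.78%) in
# pro-conspiracy theory communities, 27 (0.76%) in anti-conspiracy ones.
pro_deplatformed, anti_deplatformed = 895, 27

# ASSUMPTION: totals are reconstructed from the rounded percentages and may
# differ slightly from the true community sizes.
pro_total = round(pro_deplatformed / 0.0478)
anti_total = round(anti_deplatformed / 0.0076)

statistic, p_value = yates_chi_square(
    pro_deplatformed, pro_total - pro_deplatformed,
    anti_deplatformed, anti_total - anti_deplatformed,
)
print(f"chi2(1) = {statistic:.2f}, p = {p_value:.3g}")
```

Because the totals are reconstructed from rounded percentages, the statistic should land near, but not exactly on, the reported value of 121.68; the conclusion (p < .001) does not depend on that rounding.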

Discussion

Conspiracy theories are a fast-changing phenomenon and highly responsive to external events. In light of the ongoing COVID-19 pandemic, a plethora of conspiracy theories abound online. Going beyond previous studies on either the general phenomenon of conspiracy theories (e.g., Del Vicario et al., 2016) or specific conspiracy theories (e.g., Broniatowski et al., 2018), our study provides an empirically informed comparison of the most visible conspiracy theories on Twitter by shedding light on the interplay of platform affordances and the dissemination of conspiracy theory content.

Regarding the diversity of conspiracy theories, our results reveal a variety of prevalent conspiratorial explanations circulating on Twitter: Agenda 21, Anti-Vaccination, Chemtrails, Climate Change Denial, Directed Energy Weapons, Flat Earth, Illuminati, Pizzagate, Reptilians, and 9/11. While most of these conspiracy theories are directed against the establishment and elite, referring to secret machinations of influential people or institutions acting for their own benefit (e.g., Agenda 21, Illuminati), others construct narratives challenging science, epistemic institutions, or scientists (e.g., Anti-Vaccination, Flat Earth).

Concerning communities evolving around conspiracy theories on Twitter as well as main propagators within these communities, our results reveal two loosely connected clusters of pro- and anti-conspiracy theories. Both anti-conspiracy theory communities, Anti-Flat Earth and Pro-Vaccination, are centered around scientists and medical practitioners. Their use of pro-conspiracy theory hashtags is likely an attempt to directly engage and confront users who disseminate conspiracy theories. Studies from social psychology have shown that cross-group communication can be an effective way to resolve misunderstandings, rumors, and misinformation (e.g., DiFonzo, 2013). By deliberately using pro-conspiracy hashtags, anti-conspiracy theory accounts inject their ideas into the conspiracists’ conversations. However, our study suggests that this visibility does not translate into cross-group communication, that is, into the two sides retweeting each other’s messages. This, in turn, indicates that debunking efforts hardly traverse the two clusters.

Finally, our study lends support to previous assertions that social media platforms are taking increasingly proactive measures to systematically crack down on accounts promoting conspiracy theories (e.g., Rogers, 2020). As our results show, users banned from Twitter are predominantly those who propagate conspiracy theories.

Alignments Between Conspiracy Theories and Their Communities

In line with recent research demonstrating that conspiracy beliefs tend to “stick together” (Douglas et al., 2019, p. 7; van Prooijen, 2018), our study reveals a general proximity between several conspiracy theories. We argue that three factors help us to explore these overlaps in more depth. First, the closely aligned conspiracy theories Climate Change Denial, Pizzagate, and 9/11 share structural and thematic features. They provide alternative rationales and explanations for national or international policies, political affairs, or events, challenging accounts from governments and official authorities (Huneman & Vorms, 2018; Räikkä, 2009). Other conspiracy theories such as Chemtrails, Reptilians, and Illuminati emphasize a conspiracy of powerful groups and non-human entities, claiming that, for instance, aliens or secret societies rule the world (Uscinski, 2018). In contrast to political conspiracy theories, these conspiracy theories mingle reality with fiction and are often closely tied to popular culture such as Dan Brown’s novel “The Da Vinci Code.” Second, factors such as ideological and geographic proximity further help explain alignments between conspiracy theories. A shared characteristic of several conspiracy theories is that they are disseminated by people with conservative political views who support Donald Trump and that they are mostly popular in the United States. For instance, conspiracy theories around Climate Change Denial, Agenda 21, and Directed Energy Weapons have their roots in anti-environmentalist and anti-globalist ideologies, both of which are aligned with conservative political ideology and values (Kirilenko & Stepchenkova, 2014) and popular among the political right and populists in the United States (Harris et al., 2017). 9/11 and Pizzagate conspiracy theories are also widely promoted by conservative and right-wing politicians in the United States and often supported by individuals who hold conservative beliefs (Stempel et al., 2007).

In contrast, believers in Anti-Vaccination, Flat Earth, Reptilians, Illuminati, and Chemtrails conspiracy theories can be found across the political spectrum, and their respective communities are less US-centric. For instance, Anti-Vaccination sentiment is on the rise around the globe and the movement finds supporters on both the political left and right (Holt, 2018). In addition, some of the most influential propagators of Illuminati and Reptilians conspiracy theories are based outside the United States (Robertson, 2013), which underlines the importance of societal and political contexts for understanding the propagation patterns of conspiracy theories.

Limitations and Future Research

Like all studies, ours has limitations. A general limitation resides in the way we built our sample. First, hashtag-based approaches to collecting tweets leave out ancillary discussions by participants who have chosen not to use these hashtags or any hashtags at all (Burgess & Bruns, 2015). Future studies should make use of alternative sampling methods, such as including specific user-defined keywords, utilizing topic-related dictionaries or classifiers, or examining the recent tweeting history and follower network information of participating accounts to capture further communication (Burgess & Bruns, 2015). Second, our analysis was restricted to a relatively small sample of English-language tweets, which limits the generalizability of our findings. As prior research suggests that conspiracy theories are communicated differently according to national and regional contexts (e.g., Gray, 2008), studies on other languages and linguistic regions are needed.

To further enhance our understanding of conspiracy theories in digital environments, future research should incorporate more cross-platform, cross-lingual, and cross-regional comparative perspectives in general. Furthermore, we argue that future research of online conspiracy theories should not be limited to mainstream platforms, such as Twitter, Facebook, or YouTube. These platforms, as indicated in both literature (e.g., Rogers, 2020) and our current study, have been systematically cracking down on accounts that promote conspiracy theories. As more and more conspiracy theorists and their followers migrate to “alternative” social media, such as Gab, BitChute, and Parler, more research will be required to investigate the impacts of this trend.

Supplemental Material

Supplemental material, sj-docx-1-sms-10.1177_20563051211017482 for From “Nasa Lies” to “Reptilian Eyes”: Mapping Communication About 10 Conspiracy Theories, Their Communities, and Main Propagators on Twitter by Daniela Mahl, Jing Zeng and Mike S. Schäfer in Social Media + Society

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Swiss National Science Foundation (SNSF).
