Abstract

This study extends the nudge principle with media effects and credibility evaluation perspectives to examine whether the effectiveness of fact-check alerts in deterring news sharing on social media is moderated by news source and whether this moderation is conditional upon users’ skepticism of mainstream media. Results from a 2 (nudge: fact-check alert vs. no alert) × 2 (news source: legacy mainstream vs. unfamiliar non-mainstream) experiment (N = 929) controlling for individual issue involvement, online news involvement, and news sharing experience revealed significant main and interaction effects from both factors. News sharing likelihood was lower overall for non-mainstream news than for mainstream news, but showed a greater decrease for mainstream news when nudged. No conditional moderation from media skepticism was found; instead, users’ skepticism of mainstream media amplified the nudge effect only for news from the legacy mainstream source and not for news from the unfamiliar non-mainstream source. Theoretical and practical implications for the use of fact-checking and mainstream news sources on social media are discussed.

Introduction

The unyielding transmission of erroneous news on social media, a platform that has outpaced print and broadcast as the source of news (Shearer, 2018), has become a cause for serious concern around the globe. When defined as “faulty information that appropriates the look and feel of real news” (Tandoc et al., 2018, p. 147), news misinformation constitutes the essence of “fake news,” and a timely understanding of how to curb its proliferation on social media is warranted. At the same time, the fact that fake news stories are disseminated through simple “one-click” actions by “short-attention-span social sharers” on social media (Rainie et al., 2017, p. 11) calls for countermeasures capable of disrupting the quick decision-making process. Exposing users to fact-check alerts that warn them of news misinformation is one such strategy.

Fact-check alerts are flagging features (e.g., pop-up banners, tags, and information panels) applied to break the news sharing momentum by helping users to quickly spot misinformation in online news and articles. Such alerts, however, merely caution users about information that has been disputed by third-party fact-checkers or news publishers, such as Snopes, PolitiFact, FactCheck.org, and Associated Press. They neither stop users from trusting the information nor prevent them from sharing it. By the same token, mistakes made by fact-checkers, debates about “who becomes the arbiter of truth” (Allcott & Gentzkow, 2017, p. 233), and the alleged activist agenda of fact-checkers (Haigh et al., 2018) cast aspersions on fact-checking intentions and raise doubts about the effectiveness of such measures. Despite the criticisms, fact-check alerts look set to grow and become a mainstay of social media. In early 2019, Facebook expanded its global partnership with third-party fact-checkers in 16 languages enabling them to directly flag misinformation on its News Feed while YouTube launched its fact-check panels to “pop-up” alongside videos beginning with its 250 million users in India.

To complicate matters, the prevalence of incidental news exposure on social media is increasingly exposing users to different news sources with identical news content (De Zúñiga et al., 2017; Karnowski et al., 2017; Yamamoto & Morey, 2019). It could be easier, intuitive even, for users to quickly decide not to share news flagged for misinformation from non-mainstream news producers that are less conventional (e.g., Huffington Post, Al Jazeera, and BuzzFeed) or from user-generated sources that are less familiar and non-professional (i.e., citizen journalism), as compared to those from more recognizable and reputable mainstream news organizations (e.g., BBC and The New York Times). On the contrary, users’ personal experience with and mistrust of what they perceive as a biased mainstream press could, instead, make them more likely to share news from independent, non-mainstream sources, whose credibility grew precisely “in response to the crisis of confidence between audiences and mainstream journalism” (Tsfati & Peri, 2006, p. 167).

That being said, a survey of the fact-checking literature is replete with evidence on the perceived reliability and consistency of fact-checking practices, methods, and services (see for review, Nieminen & Rapeli, 2019) but reveals little about the effects of such measures on users. While recent studies have evaluated the effectiveness of fact-check formats to correct audiences’ beliefs and misperceptions (Young et al., 2018) and motivate users to create and disseminate fact-check alerts (Amazeen et al., 2019), none have investigated the potential of fact-checks to deter users from sharing disputed news with others on social media. Having the knowledge that the presence of fact-check alerts would make untagged news stories seem accurate despite the stories being false (i.e., implied truth) (Pennycook & Rand, 2017), that countries such as Germany, France, Singapore, and Russia have passed versions of “fake news” laws to curb the spread of online misinformation (Reuters, 2019), and that we currently understand little on how to effectively stop users from sharing faulty news after a decade of online news sharing research (see for review, Kümpel et al., 2015) underscores the timeliness of a theoretical investigation on the effectiveness of fact-check alerts to curb the spread of news misinformation on social media.

Given the background, this study will investigate whether exposure to fact-check alerts flagging for inaccurate content will nudge users to not share the disputed news on social media in Singapore’s context—where 86% of the population consume their daily news from online sources, including social media, more than from print (38%) and television (51%) in 2019 (Newman et al., 2019). News content will be conceptually dissociated from the source, and the potential of news sources (legacy mainstream and unfamiliar non-mainstream) to moderate the nudge effect conditional upon users’ skepticism of mainstream media will be tested. Users’ personal issue involvement, online news involvement, and news sharing experience will also be measured and accounted for to obtain a more precise investigation. On top of expanding the theoretical premise of nudge with media effects and credibility evaluation perspectives, findings will also provide practical implications on the use of fact-check alerts in the prevailing multiple news source environment on social media.

Nudging via Fact-Check Alerts

Rooted in behavioral economics, to “nudge” is to design and implement choice situations via symbols and innovations with the intent to “alter people’s behavior in predictable ways without significantly changing incentives for conformity” or overt, punitive repercussions for non-conformity (Thaler & Sunstein, 2008, p. 6). Essentially, nudges work by triggering the least human resistance through indirect enablement (i.e., suggestion) and non-enforcement. Based on the psychological underpinnings in Tversky and Kahneman’s (1973) systems thinking, humans are inarguably “nudge-able” because they need to “cope in a complex world in which they cannot afford to think deeply about every choice they have to make” (p. 37)—requiring them to think quickly and take mental shortcuts (i.e., heuristics) to make the best decision possible (i.e., Automatic—system one thinking, cf. Reflective—system two thinking).

Nudges, therefore, operate on systematic biases or “rules of thumb” that guide decision-making by reconciling humans’ semiautonomous “planner” self (that works to “promote long-term welfare”) and “doer” self (that is tempted to react based on habits when making choices and taking actions) (Thaler & Sunstein, 2008, pp. 23, 42). For example, loss aversion bias, the tendency for people to avoid potential losses, “helps produce inertia” for action the same way that status quo bias produces a “yeah, whatever” heuristic that discourages people from taking actions that can potentially alter their relationships with others in the society (p. 35). Therefore, theoretically speaking, fact-check alerts warning users of misinformation in a piece of news would heuristically accentuate potential loss and nudge users toward intended directions—to not believe and share the potentially false news with others on social media to preserve personal reputation and credibility.

Nudge experiments have been done to study behavioral changes in many fields including health, marketing, business, environment, and politics. Extending the nudge principle to the digital context, a study in human–computer interaction reveals how interface metric cues that signal greater customer order numbers would effectively prod users into ordering the particular food on a snack-ordering website (Hou, 2017). Such cues induce the anchoring heuristic where quick decisions are made by comparing and adjusting to the prevalence of specific behaviors. In other studies, website interface cueing via prevalence estimates has similarly been found to predict music downloads (Salganik et al., 2006) and online ticket purchases regardless of review valences (i.e., positive vs negative) (Liu, 2006). Research on user behaviors on social media revealed that privacy nudges in the form of visual cues (i.e., audience profiles) and sentiment cues (i.e., statements such as “other people may perceive your post as negative”) inhibit message-posting behavior (Wang et al., 2013, p. 765). Such nudges indirectly produced intended outcomes via behavioral modification when users who had intended to post messages to their social networks decide otherwise, and those who had intended to use certain words chose to omit or substitute them—from “damn” to “dang,” for example (p. 767).

We can expect similar outcomes when it comes to sharing and commenting on news flagged for erroneous content in one’s social networks, with users choosing to exercise restraint out of concerns about “appearing ignorant,” “misrepresenting facts,” or “causing a misunderstanding” (Nekmat & Gonzenbach, 2013, p. 748). Moreover, by sharing news to befriend (Weeks & Holbert, 2013) and interact with others on social media (Ma et al., 2014), users may be viewed as important sources of information or even opinion leaders in their social networks (Oeldorf-Hirsch & Sundar, 2015; Turcotte et al., 2015), reaping status benefits and cultivating greater social capital (Dwyer & Martin, 2017). Having confidence that a piece of news is accurate before sharing it is thus essential to fully “enhance one’s status in the virtual community” (Kümpel et al., 2015; Lee & Ma, 2012, p. 337). Based on the discussion, it is posited that exposure to a fact-check alert will lower users’ likelihood of sharing the news on social media (H1).

Legacy Mainstream Versus Unfamiliar Non-Mainstream News Sources

Users are unlikely to react uniformly to fact-check alerts for news sources that have different levels of perceived familiarity as well as reputation and expertise in news production (Kohring & Matthes, 2007). In the digital news landscape, mainstream news sources tend to be large “economic (public or private) corporations representing the central national value system” that have a print (magazines or newspapers) legacy, and non-mainstream sources tend to be “online-only” or “digital-born” news producers that do not have a print legacy (Tsfati & Peri, 2006, p. 170). The former are accorded credibility due to the “halo effect” of users’ familiarity with their established brand names and prestige (e.g., The New York Times and Washington Post). The online news content they produce is deemed to undergo the same quality of expert editorial processes and reporting rigor as their print versions (Chung et al., 2012), which positively affects users’ perceptions of the quality of their informational sources and the credibility of their content (Metzger et al., 2010).

On the contrary, non-mainstream news publishers that are “born on the web” (Chung et al., 2012; Stocking, 2017) usually begin as unconventional, alternative sources of news offering greater content diversity (Carr et al., 2014) and are generally perceived to be less trustworthy due to their lack of established reputation, brand recognition, and structural and editorial features (Medders & Metzger, 2018). Unfamiliarity with a news source obfuscates users’ ability to discern source intention, reporting bias, and news authenticity, but there can be non-mainstream online sources, such as Huffington Post and BuzzFeed, that grow over time to gain recognition (Fletcher & Park, 2017). Considering this, the current study further conceptualizes digital non-mainstream news sources as those users would perceive to be unfamiliar to distinguish them from familiar sources and to better isolate source influence when users are nudged by fact-check alerts.

Fletcher and Park’s (2017) analysis of user engagement with online news involving 21,000 users across 11 countries revealed a greater level of credibility accorded to legacy news sources (e.g., BBC and The New York Times) as compared to non-mainstream sources (e.g., independent sites and news aggregators). Users’ greater approval of mainstream news strongly correlates with online news participation, which includes news sharing, commenting, and rating on online social networks. Online credibility studies comparing legacy news sources and less recognized ones confirm the consistently low user credibility rating of unknown news sources (e.g., OnlineNews.com) as compared to familiar, established news sites, such as www.CNN.com (Flanagin & Metzger, 2007). Non-professional news blogs rate lower on credibility as compared to large news organizations (e.g., www.nytimes.com) (Banning & Sweetser, 2007), as do independent news sites (e.g., www.drudgereport.com) when compared to established news sites (e.g., www.usatoday.com) (Chung et al., 2012). Mainstream news was also shown to be more widely shared on social media, particularly by opinion leaders because of its perceived informational utility (Bobkowski, 2015), and among users engaged in issue discussions and fact-checking activities (Stroud et al., 2016). It is thus posited that news from an unfamiliar non-mainstream source will be less likely to be shared than news from a legacy mainstream source (H2).

In line with the notion that nudges operate based on a person’s dependency on cognitive heuristics to guide decision-making, credibility evaluation studies similarly revealed humans’ dependency on source heuristics (e.g., “looks professional”) and cognitive biases to peripherally cue users’ credibility judgments in online environments (Metzger et al., 2015). From a credibility transfer perspective, content credibility evaluations are posited to be dependent on media-linked objects (Schweiger, 2000). For example, one’s evaluation of a parent news organization’s credibility translates to one’s perceived credibility of the news stories produced, as does the credibility of news read on air, which is conferred by the reputation of the radio broadcast station. Accordingly, when warned of misinformation in the news, we can expect users to cognitively dissociate news content from the source and scrutinize the veracity of the information provided in the news by linking it with their experience, knowledge, and beliefs associated with the source (Hilligoss & Rieh, 2008). In such a case, as shown by Thorson and associates (2010), violation of the credibility transfer process (i.e., faulty news from a credible news source) will discount the overall credibility evaluation of the perceivably credible news linked to a reputable mainstream news source. On the contrary, we can expect fact-check alerts to confirm, if not reinforce, users’ low credibility perception of news that is linked to a perceivably non-credible source (e.g., unfamiliar source with no reputational impression). As users would rather share news perceived to be credible in their social network, as earlier discussed, it is posited that users’ reluctance to share news when exposed to a fact-check alert will be greater when the news is from an unfamiliar non-mainstream source than when it is from a legacy mainstream source (H3).

Biased by Media Skepticism

Defined as the “subjective feeling of alienation and mistrust toward mainstream media” (Tsfati, 2003, p. 160), media skepticism is the degree to which readers discount the information in mainstream media as representations of reality (Cozzens & Contractor, 1987). As a trait-like variable habituated through individual experiences with the media’s portrayal of reality, media skepticism varies between audiences and represents one’s “mistrust of mainstream journalism” more than “non-mainstream” or “alternative” journalism (Tsfati & Cappella, 2003). Media skepticism, thus, constitutes another layer of subjective credibility evaluation on top of objective source traits (i.e., expertise and competence) (Cappella & Jamieson, 1997) or even a “flip side of [source] credibility” based on how journalistic standards and impartiality of mainstream news are compromised due to economic and policy pressures (Carr et al., 2014, p. 4).

Connecting to our earlier discussion that nudges operate on “systematic biases” and “heuristics” to affect decision-making, we can expect media skepticism to factor into users’ credibility evaluation of mainstream news flagged for informational inaccuracy (i.e., fact-check alerts) by triggering the judgment-heuristic based on a generalized mistrust of mainstream media (e.g., Arpan & Raney, 2003; Giner-Sorolla & Chaiken, 1994). In this evaluation, news produced by mainstream press as a “collective referent” is perceived to be biased, particularly against one’s own point of view (for a discussion of hostile media perception, see Gunther & Schmitt, 2004; Vallone et al., 1985). When utilized as a judgment-heuristic, media skepticism is shown to be a stronger explanation of media bias perceptions than individuals’ personal issue involvement with the topic in the news coverage (cf. attitude-heuristic in hostile media perception) (Choi et al., 2009). In addition, media skepticism as a negatively valenced, collective perception of “the biased press” influences readers’ mental evaluation more readily compared to positive impressions of a single news source due to accessibility bias (Daniller et al., 2017).

Mistrust of mainstream media, however, does not mean distrust of the information produced. More specifically, being skeptical indicates individuals’ unwillingness to be vulnerable based on negative expectations of the media’s behavior (Rousseau et al., 1998), with trust being likely to lead to cooperation, whereas mistrust decreases cooperation. In this line of reasoning, studies show that individuals who are skeptical of mainstream media would be less likely to regard issues reported in mainstream media as the most salient problem affecting society (i.e., agenda-setting) (Tsfati, 2003), tend to consume news from other countries in addition to local mainstream news (Tsfati & Peri, 2006), and seek more alternative (Tsfati & Cappella, 2003) as well as partisan news sources (Ladd, 2011).

Karlsson and associates (2017) found that users with less media trust are less tolerant of factual errors made in mainstream press, regardless of whether corrections were provided or whether the error was small (e.g., mistake in reporting numbers, “number of arrested people as 50, which eventually turned out to be 49”) or big (e.g., mistake in describing cause, “describes the police as using unprovoked violence although the disturbance really started with demonstrators”) (p. 155). From the hostile media perspective, research reveals individuals’ inclination to engage in corrective action for others by supporting censorship of the perceivably biased, wide-reaching mass media (Rojas, 2010). It is thus reasonable to expect media skepticism to intensify users’ disinclination to share news by mainstream sources flagged for informational inaccuracy.

There is, however, no clear evidence to show how media skepticism would affect users’ likelihood to share non-mainstream news with others online. A transverse interaction found in an experiment by Carr and colleagues (2014) revealed media skeptics’ propensity to rate mainstream news as less credible than non-mainstream news (i.e., citizen journalism) when inaccuracies in digital news sources were corrected. Non-skeptics, on the contrary, would perceive both news sources as equally credible, leading the authors to surmise that skeptics’ “mistrust of mainstream journalistic standards and motivations” has a stronger effect than non-skeptics’ belief of non-mainstream news sources as being more competent than mainstream journalists (Carr et al., 2014, p. 5). In other words, media skepticism as a function of individuals’ reaction to mainstream media is expected to negatively affect the evaluation of, and consequently the likelihood of sharing, news from mainstream sources more than non-mainstream sources when nudged by a fact-check alert. It is thus conceivable that when nudged about informational inaccuracy via fact-check alerts, users’ greater reluctance to share news from an unfamiliar non-mainstream source than from a legacy mainstream source will weaken at greater levels of media skepticism (H4). Figure 1 shows the hypothesized conditional moderation model.

Figure 1. Hypothesized conditional moderation model.

Method

A 2 (nudge factor: fact-check alert vs. no fact-check alert) × 2 (source factor: legacy mainstream vs. unfamiliar non-mainstream) experiment via stimuli-embedded online survey was carried out. Data collection was administered by an independent survey company, Je Ne Sais Quoi (JNSQ) Research Singapore. Participants were recruited from a survey panel comprising 45,000 unique individuals, with those owning a personal social media account that includes Facebook invited to participate in the study. The sample was drawn from the panel via simplex algorithm and quota sampling based on a nationally representative sample of Singapore residents (61.3% Citizens ~ 85% sample; 9.4% Permanent Residents ~ 15% sample) and age category distribution (24.2% Millennials, 18–34 years ~ 51.2% sample; 23.1% Gen X’ers and Boomers, 35–50 years ~ 48.8% sample). A triple opt-in process was carried out, comprising opting in to the panel, opting to provide personal particulars upon confirmation of interest, and opting to voluntarily participate in the study upon reading the study information page. A total of 1,100 responses were collected, with overall 85.6% response and 94.7% completion rates.

Procedure and Stimulus

All participants completed a pre-test questionnaire that measured their personal involvement in the news issue, involvement with online news, prior social media news sharing experience, and media skepticism. Participants were then randomly assigned to each of the two news source stimuli. Those exposed to a legacy mainstream source were shown a replication of the online news page belonging to The Straits Times. Being the oldest news organization in Singapore (since 1845) with the highest daily newspaper circulation and readership rates (600,000 in print, 597,000 in digital) (Singapore Press Holdings, 2018), The Straits Times is regarded as the most reputable news organization in the country and is the most suitable stimulus to represent a legacy mainstream news source for the study’s context. Participants assigned to an unfamiliar non-mainstream source were shown a news page belonging to a fictitious news source named Red Dot Review.

Participants were shown the same mock news report in both news sources entitled “Fall in HIV cases” and were told that they were given “less than 2 minutes to read the news.” Overall, participants spent an average of 79 s on the news page (SD = 3.86). A factual news report on a relatively unpopular topic was chosen to enhance the control of participants’ issue involvement in the experiment (see control checks in the following section). To manipulate a nudge, half of the participants in each of the news source groups were randomly exposed to a fact-check alert that popped up next to the news report to warn of the informational inaccuracy contained in the news report. The alert was similar to the banner used by Facebook when a piece of news or information is tagged as “fake or dubious” by third-party fact-checkers (Jenkins, 2017). A post-test questionnaire measuring participants’ likelihood to share the news was then administered. Stimuli used in the study are provided in the Appendix.
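The two-stage random assignment described above (first to a news source, then to alert or no alert) can be sketched as follows. This is a minimal illustration under stated assumptions, not the survey company's actual procedure: the function name `assign_conditions`, the cell labels, and the fixed seed are all hypothetical.

```python
import random
from collections import Counter

SOURCES = ("legacy_mainstream", "unfamiliar_non_mainstream")
NUDGES = ("fact_check_alert", "no_alert")


def assign_conditions(participant_ids, seed=0):
    """Randomly assign each participant to one cell of the 2 x 2 design."""
    rng = random.Random(seed)  # seeded only so the sketch is reproducible
    assignment = {}
    for pid in participant_ids:
        source = rng.choice(SOURCES)  # first randomization: news source
        nudge = rng.choice(NUDGES)    # second randomization: alert vs. no alert
        assignment[pid] = (source, nudge)
    return assignment


# 1,100 is the number of collected responses reported in the Method section.
assignment = assign_conditions(range(1100))
cell_sizes = Counter(assignment.values())
```

Simple randomization of this kind yields cells that are roughly, not exactly, equal in size; a blocked scheme would be needed to force exact halves.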

Control and Manipulation Checks

Multiple control and manipulation checks were carried out for the experimental manipulation and measurement of outcome. First, based on the assumptions that nudges operate via heuristics (Thaler & Sunstein, 2008) and that humans engage in heuristic-based message processing when their personal involvement with the issue is low (Petty & Cacioppo, 1986), data from participants who reported being somewhat involved to highly involved in the issue (mean personal issue involvement > 4.0; n = 57) were omitted from the analysis. Personal issue involvement is defined as how personally meaningful, relevant, and important an issue is to an individual (Y. M. Kim, 2009). To measure this, participants rated, on 7-point semantic differentials, how (1) Irrelevant–Relevant, (2) Unimportant–Important, (3) Boring–Interesting, (4) Worthless–Valuable, and (5) Insignificant–Significant an “HIV or AIDS-related issue” was to them personally. The items were composited with higher scores indicating higher personal involvement with the issue (α = .91, M = 2.03, SD = .82). No significant differences in participants’ personal issue involvement between the four experimental groups were found, F(3, 925) = .23, p = .87.

Second, to check for the manipulation of a recognized legacy mainstream news source and an unfamiliar non-mainstream one, participants responded “Yes,” “No,” or “Not sure” to three questions asking whether they (1) recognized the news source, (2) had heard about the news source, and (3) had previously read news from the source. Significant differences between the two news sources were shown for each of the three measurements, respectively: (1) χ²(2) = 606.59, p < .001; (2) χ²(2) = 673.17, p < .001; and (3) χ²(2) = 597.39, p < .001. Finally, to check that participants who indicated that they were likely to share the news on their social media (mean news sharing likelihood > 4) might actually carry out the action and that those who had reported being unlikely to share the news (mean news sharing likelihood < 4) might actually not do so (see measurement in following section), participants were told to “click the ‘Share’ button to post the news on your social media (Facebook)” after exposure to stimuli. Data from participants who had reported being likely to share the news but had not clicked the “Share” button (n = 46) and those who had reported being unlikely to share the news but had clicked “Share” (n = 23) were omitted from analysis. After omitting these cases and incomplete responses (n = 45), a total of 929 valid cases with the following group distributions were obtained for analysis: mainstream news source without fact-check alert (n = 233) and with fact-check alert (n = 239), and unfamiliar non-mainstream news source without fact-check alert (n = 220) and with fact-check alert (n = 237).
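The sample accounting in the two paragraphs above can be checked with a few lines of arithmetic. The sketch below uses only the counts reported in the text; the dictionary keys are illustrative labels, and reading the first count in each source group as the no-alert cell is an assumption.

```python
collected = 1100  # total responses collected

exclusions = {
    "high_issue_involvement": 57,    # personal issue involvement above 4.0
    "likely_but_did_not_share": 46,  # reported likely, did not click "Share"
    "unlikely_but_shared": 23,       # reported unlikely, clicked "Share"
    "incomplete_responses": 45,
}

# Assumption: the first n in each source group is the no-alert cell.
cells = {
    ("mainstream", "no_alert"): 233,
    ("mainstream", "alert"): 239,
    ("non_mainstream", "no_alert"): 220,
    ("non_mainstream", "alert"): 237,
}

valid = collected - sum(exclusions.values())
mainstream_total = sum(n for (src, _), n in cells.items() if src == "mainstream")
non_mainstream_total = sum(n for (src, _), n in cells.items() if src == "non_mainstream")
```

The exclusions leave 929 valid cases, which matches the sum of the four cells. The per-source totals (472 and 457) are also consistent with the residual degrees of freedom reported in the post hoc analysis (466 and 451), if each within-group model has five predictors plus an intercept.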

The final sample comprised participants aged between 18 and 50 years (M = 27.3, SD = 7.45, median = 26.0) with 25th percentile at 21 years, 50th percentile at 28 years, and 75th percentile at 37 years. There were slightly more women (n = 479, 51.6%) than men (n = 450, 48.4%), with most participants possessing the highest education level equivalent of a polytechnic or professional certification diploma (n = 353, 38.0%), followed by a university degree (n = 327, 35.2%), high school diploma (n = 124, 13.3%), post-graduate degree (n = 52, 5.6%), and middle school diploma (n = 46, 4.9%).

Measures

News Sharing

This indicates individuals’ likelihood to relay the news and/or pertinent information related to the news to others on social media. Adapting prior measures on news dissemination likelihood (e.g., Lee & Ma, 2012; Weeks & Holbert, 2013) which also involves recommending (Kümpel et al., 2015) and “commentary acts” (Dwyer & Martin, 2017, p. 1082), participants rated on a 7-point Likert-type scale (1 = very unlikely and 7 = very likely) how likely they were to (1) recommend the news; (2) share the news; and (3) share opinions together with the news—with others on social media (Facebook) (α = .90, M = 2.55, SD = .71).

Media Skepticism

Defined as the general feeling of mistrust toward mainstream media (Tsfati, 2003) and the disbelief of information in mainstream media as actual representation of reality (Cozzens & Contractor, 1987), this variable was measured, similar to prior studies (e.g., Tsfati & Cappella, 2003), by asking participants to rate their agreement (1 = strongly disagree and 7 = strongly agree) with the following: “Overall, mainstream news in Singapore, such as The Straits Times” – (1) is slanted in reporting issues affecting society, (2) leaves out important facts in reporting the issues affecting society, (3) is biased when reporting issues affecting society, (4) can be trusted to tell an accurate story about issues affecting society (reverse coded), and (5) has an agenda other than helping to solve the problems affecting society (α = .87, M = 3.55, SD = 1.16).
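To make the scoring of such a multi-item scale concrete, the sketch below reverse-codes the fourth (trust) item, averages the five items into a composite, and computes Cronbach's alpha. The response matrix is invented for illustration, the helper names are hypothetical, and treating the composite as the item mean (rather than the sum) is an assumption based on the reported scale mean lying within the 1 to 7 range.

```python
import numpy as np


def reverse_code(item, scale_min=1, scale_max=7):
    """Flip an item so that high scores point the same way as the other items."""
    return (scale_min + scale_max) - np.asarray(item, dtype=float)


def cronbach_alpha(items):
    """Cronbach's alpha for a (respondents x items) score matrix."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    sum_item_variances = items.var(axis=0, ddof=1).sum()
    total_variance = items.sum(axis=1).var(ddof=1)
    return (k / (k - 1)) * (1 - sum_item_variances / total_variance)


# Hypothetical responses from four participants to the five skepticism items.
raw = np.array([
    [6, 6, 7, 2, 6],
    [2, 3, 2, 6, 2],
    [4, 4, 5, 4, 4],
    [5, 6, 5, 3, 5],
])

scored = raw.astype(float)
scored[:, 3] = reverse_code(raw[:, 3])  # item 4 is the reverse-coded trust item
skepticism = scored.mean(axis=1)        # composite score per participant
alpha = cronbach_alpha(scored)
```

Without the reverse-coding step, the trust item would run against the other four items and depress both the composite and the reliability estimate.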

Control measures

To better isolate the experimental effects on users’ news sharing likelihood, aggregate control measures for individual predispositions—online news involvement and prior news sharing experience—were collected and statistically controlled in data analysis.

Online news involvement is defined as the perceived importance and relevance of online news to individuals’ lives and has been shown to enhance users’ confidence about current affairs and predict news sharing intentions as well as political engagement behaviors more than general use of social network sites (SNSs) (Y. Kim et al., 2013; Weeks & Holbert, 2013). To measure this, participants rated their agreement using a 7-point Likert-type scale (1 = strongly disagree and 7 = strongly agree) with the following: “In general, online news is” – (1) relevant to my daily routines, (2) important to me, (3) valuable to me, and (4) interesting to me (α = .93, M = 4.27, SD = .69).

Prior news sharing experience indicates the frequency with which users engage in news sharing as an online activity on social media (Kalogeropoulos et al., 2017; Kümpel et al., 2015) and would not only induce familiarity and confidence but also ritualize users to such activities (Lee & Ma, 2012). To measure this variable, participants reported on a 6-point scale how often, on average, they “share news with others on social networking platforms, such as on Facebook, Twitter, and Instagram” (1 = less than once per month, 2 = once per month, 3 = once per week, 4 = more than once per week, 5 = once per day, 6 = more than once per day) (M = 4.38, SD = .95).

Results

Data analyses were carried out in IBM SPSS, version 25. Regression-based conditional and moderated moderation model analyses to test H3 and H4, respectively, were computed using the PROCESS macro, version 3.2, installed in SPSS (Hayes, 2018). Multiple regression results revealed prior news sharing experience and online news involvement to be significantly related to news sharing likelihood on social media, F(2, 928) = 79.79, p < .001, VIF = 1.01, adjusted R² = .04, with prior news sharing experience positively related to news sharing likelihood (t = 6.02, β = .19, p < .001) and online news involvement negatively related to news sharing likelihood (t = –4.08, β = –.13, p < .05). The variables were thus statistically controlled as covariates in subsequent analyses that regressed news sharing likelihood.

Hypothesis 1 predicted that exposure to fact-check alerts would lead to lower news sharing likelihood, and H2 posited that news from an unfamiliar non-mainstream source would be less likely to be shared than news from a legacy mainstream source. Hypothesis 3 posited an interaction effect such that users’ reluctance to share the news when exposed to a fact-check alert would be greater when the news was from the non-mainstream source compared to a mainstream news source. Results from a moderated model PROCESS analysis (i.e., pre-specified PROCESS model 3) showed significant main effects from fact-check alerts (p < .001) and news sources (p < .001) on the likelihood to share information on social media. Participants were less likely to share the news when exposed to the fact-check alert (M = 2.19, SD = .67) compared to non-exposure (M = 2.77, SD = .72), and less likely to share the news from an unfamiliar non-mainstream news source (M = 2.21, SD = .70) compared to news from a familiar mainstream source (M = 3.03, SD = .86). Hypotheses 1 and 2 were supported.

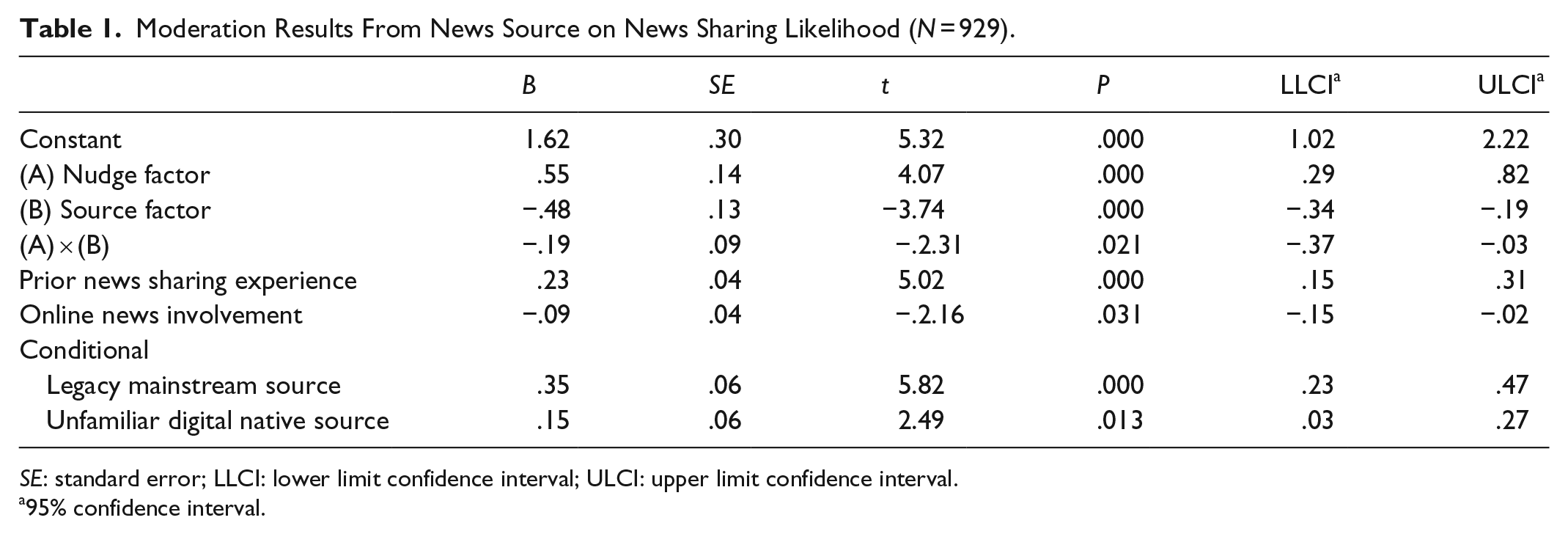

A significant interaction effect between exposure to fact-check alerts and news source was also found, F(5, 923) = 32.36, p < .001, MSE = .43, R2 = .15, 95% CI = [–.37, –.03]. However, news sharing likelihood did not decrease at a greater rate when participants were nudged on news misinformation coming from an unfamiliar non-mainstream news source (Figure 2). Contrary to H3, the likelihood to share the news decreased more for the mainstream news source. H3 was thus not supported. Table 1 shows the PROCESS output for the moderation effect at conditional values of the two news sources.

Figure 2. Interaction graph of fact-check alert nudge on news sharing likelihood as a function of news source.

Table 1. Moderation Results From News Source on News Sharing Likelihood (N = 929).

SE: standard error; LLCI: lower limit confidence interval; ULCI: upper limit confidence interval.

95% confidence interval.
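
As a rough illustration of the kind of moderation test summarized above, the sketch below fits an ordinary least squares model with a nudge × source product term and derives the conditional effect of the alert for each source. It is not the PROCESS macro and does not use the study's data: the variable names, the 0/1 coding, the simulated coefficients, and the two generic covariates are all assumptions made for illustration.

```python
import numpy as np

def fit_moderation(y, x, w, covariates):
    """OLS fit of y on x, w, the product x*w, and covariates.

    Returns coefficients ordered as:
    [intercept, x, w, x*w, covariate_1, covariate_2, ...].
    """
    n = len(y)
    design = np.column_stack([np.ones(n), x, w, x * w, covariates])
    coefs, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coefs

def conditional_effects(coefs):
    """Effect of x (alert) at each level of a 0/1 moderator w (source)."""
    b_x, b_xw = coefs[1], coefs[3]
    return {
        "alert | non-mainstream": b_x,        # w = 0
        "alert | mainstream": b_x + b_xw,     # w = 1
    }

# Synthetic data only; none of these numbers come from the study.
rng = np.random.default_rng(0)
n = 929
alert = rng.integers(0, 2, n)        # assumed coding: 1 = fact-check alert shown
mainstream = rng.integers(0, 2, n)   # assumed coding: 1 = legacy mainstream source
covs = rng.normal(size=(n, 2))       # two hypothetical standardized covariates

# Hypothetical data-generating coefficients chosen for the demonstration.
sharing = (2.5 - 0.3 * alert + 0.6 * mainstream - 0.4 * alert * mainstream
           + 0.1 * covs[:, 0] - 0.1 * covs[:, 1]
           + rng.normal(scale=0.3, size=n))

coefs = fit_moderation(sharing, alert, mainstream, covs)
effects = conditional_effects(coefs)
print(effects)
```

Under this assumed coding, a negative product-term coefficient means the alert lowers sharing more for the mainstream source than for the non-mainstream source, which is the pattern the text describes.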

Hypothesis 4 posited that the moderated effect in H3 is conditional on media skepticism, such that the users’ greater reluctance to share news from an unfamiliar non-mainstream source compared to a legacy mainstream source when exposed to fact-check alerts would be weakened at greater levels of media skepticism. PROCESS analysis results showed a significant moderated moderation model, F(9, 919) = 21.76, p < .001, MSE = .42, R2 = .18, but with no significant three-way effect: nudge factor × source factor × perceived media bias, F(1, 919) = .45, B = –.05, SE = .07, p = .49, 95% CI = [–.19, .09]. Table 2 shows the PROCESS conditional moderation test output. Hypothesis 4 was not supported.

Table 2. Conditional Moderation Results on News Sharing Likelihood (N = 929).

SE: standard error; LLCI: lower limit confidence interval; ULCI: upper limit confidence interval.

95% confidence interval.
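
For readers less familiar with moderated moderation, the generic regression form of such a model can be written as follows. The symbols are assumptions introduced here for illustration: $X$ for the fact-check alert, $W$ for news source, $Z$ for media skepticism, and $C_1$, $C_2$ for the two covariates retained from the preliminary regression. The coefficients are placeholders, not estimates from the study.

```latex
\begin{aligned}
Y &= b_0 + b_1 X + b_2 W + b_3 Z
   + b_4 XW + b_5 XZ + b_6 WZ + b_7 XWZ
   + c_1 C_1 + c_2 C_2 + e \\
\theta_{XW \mid Z} &= b_4 + b_7 Z
\end{aligned}
```

Under these assumptions the model has nine predictors, which is consistent with the numerator degrees of freedom in the reported F(9, 919). The three-way test corresponds to $b_7$: when $b_7$ is not distinguishable from zero, the nudge × source interaction $\theta_{XW \mid Z}$ does not vary reliably across levels of $Z$.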

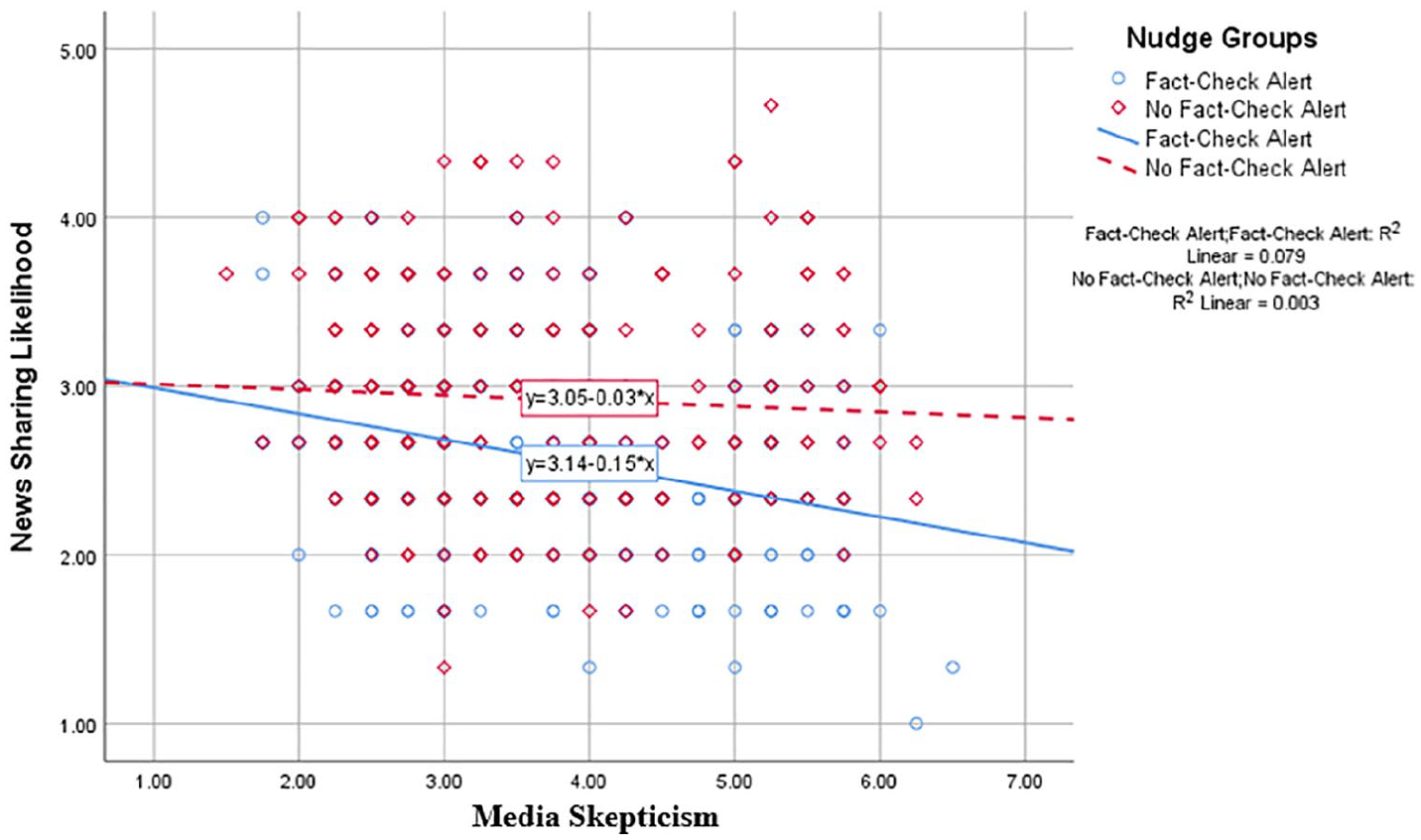

Post hoc Analysis

A plausible reason for the non-support for H4 is the non-variability of media skepticism between the two news sources, specifically for an unfamiliar non-mainstream source. In this case, users’ skepticism toward media might have only affected their overall evaluation of news from the mainstream news source. To examine this postulation, two separate within-group analyses were conducted in PROCESS to examine whether media skepticism would moderate the effect of fact-check alerts for the mainstream source group and the non-mainstream source group separately. For the mainstream news source, a significant moderation model was found, F(5, 466) = 15.23, p < .001, MSE = .39, R2 = .14, with significant conditional effects from media skepticism at values ±1 SD, F(1, 466) = 4.98, p < .05, 95% CI = [.01, .21]. No significant moderation effect was found for the unfamiliar non-mainstream source, F(1, 451) = 1.50, p = .22, 95% CI = [–.04, .17]. Media skepticism thus significantly moderated the effect of fact-check alerts when the news was from a mainstream source but not when it was from a non-mainstream source, with the likelihood to share the mainstream news significantly mitigated at greater (M = 5.25) than weaker (M = 2.50) levels of media skepticism. Table 3 shows the moderation analysis output for both news sources, and Figure 3 illustrates the interaction graph.

Table 3. Conditional Effect of Media Skepticism on News Sharing Likelihood within News Source Groups.

SE: standard error; LLCI: lower limit confidence interval; ULCI: upper limit confidence interval.

95% confidence interval.

Figure 3. Interaction of media skepticism and news sharing likelihood as function of fact-check alert nudge in legacy mainstream news source.
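
The post hoc probing of conditional effects at ±1 SD of the moderator can be sketched in the same spirit. The example below is a simplified stand-in for the within-group analysis, not a reproduction of it: it omits the covariates, uses simulated data, and the function name, scale range, and coefficients are hypothetical.

```python
import numpy as np

def simple_slopes(y, x, z):
    """Fit y = b0 + b1*x + b2*zc + b3*x*zc, where zc is mean-centered z,
    and return the effect of x at mean(z) - 1 SD, mean(z), and mean(z) + 1 SD."""
    zc = z - z.mean()
    design = np.column_stack([np.ones(len(y)), x, zc, x * zc])
    b, *_ = np.linalg.lstsq(design, y, rcond=None)
    sd = z.std(ddof=1)  # sample standard deviation of the moderator
    return {
        "-1 SD": b[1] - b[3] * sd,
        "mean": b[1],
        "+1 SD": b[1] + b[3] * sd,
    }

# Synthetic data only; none of these numbers come from the study.
rng = np.random.default_rng(1)
n = 472
alert = rng.integers(0, 2, n)          # assumed coding: 1 = alert shown
skepticism = rng.uniform(1, 7, n)      # hypothetical 7-point skepticism score
centered = skepticism - skepticism.mean()

# Hypothetical coefficients: the alert effect strengthens as skepticism rises.
sharing = (3.0 - 0.5 * alert + 0.1 * centered - 0.2 * alert * centered
           + rng.normal(scale=0.3, size=n))

print(simple_slopes(sharing, alert, skepticism))
```

Mean-centering the moderator does not change the conditional effects themselves; it only makes the coefficient on the alert interpretable as the effect at the average level of skepticism.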

Discussion

This study has demonstrated that fact-check alerts could, indeed, deter users from disseminating news misinformation to others on social media. This finding extends those from prior studies that showed how nudges in the form of informational cues can predict human action in making online purchases (Hou, 2017; Liu, 2006) and downloading music (Salganik et al., 2006), by revealing that nudges in the form of informational warning signs could produce human inaction. Akin to how visual and sentiment privacy nudges inhibit message-posting behaviors on Facebook (Wang et al., 2013), seeing a fact-check alert would, as theoretically postulated, invoke the loss aversion bias and accentuate potential loss to users when faced with the risk of sharing faulty news with people they know on social media. This restraint aligns with prior studies evincing users’ inclination to preserve their social status and approval in peer networks for sharing news and information on social media (Kümpel et al., 2015; Lee & Ma, 2012; Oeldorf-Hirsch & Sundar, 2015; Turcotte et al., 2015), and contributes to the literature on online news sharing by showing how a nudge on news misinformation can demotivate users from sharing news on social media.

The main effect found for news source aligns with prior evidence and confirms users’ greater reluctance to share news from non-mainstream sources as compared to mainstream sources on social media (Bobkowski, 2015; Fletcher & Park, 2017; Stroud et al., 2016). The current finding expands this knowledge by revealing that users were overall more reluctant to share news from an unfamiliar non-mainstream source that is flagged for misinformation. That being said, the decrease in users’ likelihood to share news flagged for misinformation when nudged by a fact-check alert was, instead, found to be stronger for news that had come from a legacy mainstream source.

The unanticipated interaction found between erroneous content and news source offers new insights on the violation of the credibility transfer process (Schweiger, 2000) in users’ cognitively expedient evaluation of news flagged for misinformation. Users’ propensity to discount the overall credibility of faulty content linked to reputable news sources (Thorson et al., 2010) would actually be stronger than their confirmation of the perceived non-credibility of content consistent with an unfamiliar non-mainstream source that is perceivably less reputable. This is, perhaps, unsurprising if we consider that audiences can be intolerant of factual errors made by mainstream press (Karlsson et al., 2017). Echoing prior suggestions (e.g., Amazeen et al., 2019), future research could examine how this intolerance can affect users’ confirmation of belief, and disbelief, when news, particularly that from established mainstream sources, is fact-checked to be inaccurate. Future studies should also interrogate whether such intolerance for misinformation by mainstream news would increase mistrust and diminish audience reliance on news media for public opinion for social indicators of opinion climates on social media, as research has begun to show (e.g., Nekmat, 2019; Neubaum & Krämer, 2017). Findings from such studies on online news misinformation in social media would bear profound theoretical implications on the current role of mass media to determine public concerns on salient issues affecting society (i.e., agenda-setting) and public perceptions of the dominant ideology in society (i.e., spiral of silence).

Findings also show that the interaction between a nudge signaling faulty news content and news source was not conditional upon users’ skepticism toward mainstream media. This might be explained by the “transverse interaction” between media skepticism and news source (Carr et al., 2014), suggesting media skeptics as being more prone to discredit news from mainstream sources as compared to non-skeptics’ tendency to discredit non-mainstream news. This finding could also imply that the judgment-heuristic triggered by users’ general mistrust of mainstream media (Chia et al., 2007; Giner-Sorolla & Chaiken, 1994) seemed to matter only when evaluative decisions are made pertaining to mainstream press as evidenced by the significant correlation between media skepticism and the sharing of mainstream news but not non-mainstream news (see results in Table 3). Based on the findings, future studies should consider that media skepticism—as a construct of users’ general mistrust of mainstream media (Cozzens & Contractor, 1987; Tsfati & Cappella, 2003)—does not directly translate to users’ trust of non-mainstream media, especially in their evaluation of fact-checked news.

Taken together, findings demonstrate the conditionality of fact-check alerts on news source and media skepticism to impact disputed news from legacy mainstream sources more than non-mainstream sources. It is, thus, essential for researchers and policymakers to seriously consider claims related to the misuse of fact-checking to “rebrand authentic information as ‘fake’ news” to discredit established news organizations (Levin, 2017) and examine the sustained impact of fact-check alerts on the perceived reputation and trust of legacy news media should their content be flagged as “fake.” Perhaps we can expect a different outcome should the disputed content come from more familiar and established independent non-mainstream news producers like Huffington Post, Al Jazeera, or BuzzFeed, which can be credible alternatives to mainstream news among online audiences (Tsfati & Peri, 2006). Nevertheless, knowing now that message source would bias the effect of fact-check alerts suggests that social media companies like Facebook should share more information on flagged content that would enable fact-checkers to include details about the source in fact-check alerts. Examples of such source-related information in the alerts could be the number of times that the particular source has been flagged for misinformation, the origin and background of the source, as well as editor and crowdsource ratings on the trustworthiness and familiarity of different online news sources.

We might also expect the influence of media skepticism on user evaluation and sharing of disputed news content that was fact-checked to be different in relatively freer press systems compared to this study’s context where the bias of mainstream media has been shown to be more apparent due to stricter press controls (Chia et al., 2007). Plausibly, the notion that freedom of press and credibility of mainstream media are mutually constitutive and affect each other inversely is at play. In South Korea, for example, users tend to trust online sources more than established media outlets with links to government party (D. Kim & Johnson, 2009). Nonetheless, the non-Western media perspective provided in this study fills the gap in fact-checking research that has been largely done in the United States (37 of 48 studies) (Nieminen & Rapeli, 2019). More significantly, the current finding bridges online news engagement research that has lacked empirical insights into “the mechanisms that connect media features and the social practices” (Mitchelstein & Boczkowski, 2010, p. 1094), particularly in the context of users’ social sharing of faulty news in a multiple source news environment.

Limitations

It is essential to note that the estimated marginal means of news sharing likelihood did not show that users were likely to share faulty news (MNewsSharingLikelihood > 4) in any of the experimental conditions but more precisely revealed the significant differences between users’ unlikelihood to share the news. This finding is more ecologically valid if we consider that users are typically more unlikely to share news received on social media to begin with due to the prevalence of incidental exposure to a wide spectrum of news on the platform (Karnowski et al., 2017; Y. Kim et al., 2013).

Findings were based on a single, objective news stimulus on an issue that affects a minority of the population (e.g., HIV statistics) instead of a more salient, hot-button issue that affects wider segments of society. While the relatively unpopular topic helps control for the influence of users’ issue attitudes and involvement on news sharing likelihood, it is necessary to recognize that the sharing of “fake news” on contentious and morally loaded issues, such as those on abortion and same-sex marriages, can be more rampant among members in ideologically polarized networks on social media. Furthermore, the fact-based news stimulus limits the current findings to fact-check alerts on faulty positive news information, as opposed to normative news reports such as editorials that demand subjective evaluation from users to ascertain accuracy and truthfulness in the information.

The study also did not account for the influence of political ideology on audiences’ skepticism toward mainstream news sources. Based on the hostile media effects literature, audiences would undergo selective categorization (i.e., selective attention, processing, and recall of information) based on their ideological leanings when evaluating mainstream news (Giner-Sorolla & Chaiken, 1994). The process inevitably results in audiences, particularly among those who are highly partisan, evaluating mainstream news coverage as being biased against their position and in favor of those in ideologically opposed positions (i.e., relative hostile effect) (Gunther et al., 2001) and consequently perceiving the news source as being more biased and untrustworthy (Arpan & Raney, 2003). It is thus reasonable to expect the current findings to vary in more binary political-media systems, such as that in the United States, where ideological polarizations exist between democrats and conservatives, “blue” media and “red” media, and so forth. In such systems, we can also expect the effect of fact-check alerts to differ should the faulty news be related to a political topic as compared to the health issue (HIV cases) used in this study.

While the current investigation on users’ likelihood to share news on Facebook fills a gap in online news sharing literature that has been “exclusively focused on Twitter” (Kümpel et al., 2015, p. 7), it is necessary to note that users can share news, fake or accurate, for many other reasons and with different online networks on different social media platforms. These could be for entertainment purposes, to satisfy social integrative needs, to engage in corrective action, or even to caution others about suspicious and faulty news. Future research could consider how the audience factor affects the sharing of news misinformation, especially for closed-network social networking platforms where users curate audience groups to suit different purposes (e.g., WhatsApp, WeChat, and Messenger) and where fake news is shown to proliferate (Newman, 2017). In addition, the text-based fact-check alert designed to simulate a nudge in this study could have been more effective had the format been a video or an image (Young et al., 2018), or had corrective information been provided within social media following the alert (Bode & Vraga, 2015). Users’ trust in the fact-checkers and “truth” verification could have also influenced their reaction to fact-check alerts (Nieminen & Rapeli, 2019).

Conclusion

Despite the limitations, this study has plausibly provided the first experimental evidence on the effectiveness of fact-check alerts to dampen user sharing of news misinformation from different sources on social media. Theoretically, this study produced a more intricate understanding of the heuristics-based nudge process that is cognitively biased by news source characteristics and users’ skepticism of the media to influence user sharing of disputed news on social media. The potential of fact-check alerts to impact legacy mainstream news media more than non-mainstream producers provides impetus for future studies to examine the sustained impact of fact-checking on the perceived reputation, trust, and dependability of legacy news organizations, especially when their online news content is flagged as erroneous.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.