Abstract

This study investigates fact-checking effectiveness in reducing belief in misinformation across various types of fact-check sources (i.e., professional fact-checkers, mainstream news outlets, social media platforms, artificial intelligence, and crowdsourcing). We examine fact-checker credibility perceptions as a mechanism to explain variance in fact-checking effectiveness across sources, while taking individual differences into account (i.e., analytic thinking and alignment with the fact-check verdict). An experiment with 859 participants revealed few differences in effectiveness across fact-checking sources but found that sources perceived as more credible are more effective. Indeed, the data show that perceived credibility of fact-check sources mediates the relationship between exposure to fact-checking messages and their effectiveness for some source types. Moreover, fact-checker credibility moderates the effect of alignment on effectiveness, while analytic thinking is unrelated to fact-checker credibility perceptions, alignment, and effectiveness. Other theoretical contributions include extending the scope of the credibility-persuasion association and the MAIN model to the fact-checking context, and empirically verifying a critical component of the two-step motivated reasoning model of misinformation correction.

Fact-checking has emerged as a key weapon to combat misinformation on topics such as health and politics. A considerable number of studies find support for the effectiveness of fact-checking messages in reducing misperceptions (Chung & Kim, 2021; Smith & Seitz, 2019; Vraga & Bode, 2017; Wang, 2021; York et al., 2020), altering attitudes (Wintersieck et al., 2021; Zhang et al., 2021), and decreasing intentions to share misinformation (Chung & Kim, 2021; Yaqub et al., 2020), at least ephemerally (Carey et al., 2022). Indeed, a recent meta-analysis of the misinformation corrections literature revealed a positive and significant effect size (

Yet fact-checks do not always decrease misperceptions and research has found that different sources of fact-checking vary in their ability to sway opinions (e.g., Banas et al., 2022; Yaqub et al., 2020). For example, expert sources tend to be more effective than non-expert sources in reducing beliefs in and sharing of health misinformation (Vraga & Bode, 2017; Walter et al., 2021; Zhang et al., 2021). This suggests that source credibility perceptions of fact-checkers likely help to explain why fact-checks from different sources are more or less effective in reducing belief in misinformation. Understanding how credibility plays into fact-checking effectiveness is especially urgent now because new forms of fact-checking are emerging, including AI-based and crowdsourcing methods, that might engender low(er) levels of trust or credibility.

The central aim of this study is thus to test whether perceptions of fact-checker credibility mediate the effectiveness of fact-checks in reducing belief in misinformation across sources. This aim is informed by persuasion research, the MAIN model (Sundar, 2008), and the two-step motivated reasoning model of how corrective messages are processed (Jennings & Stroud, 2023). This approach situates fact-checker credibility as the theoretical mechanism to account for differences in fact-checking effectiveness across sources, including professional fact-checkers, mainstream news organizations, artificial intelligence, social media platforms, and crowdsourcing. Our research thus extends the long-established association between source credibility and communication effectiveness (Hovland & Weiss, 1951) and expands the scope of the MAIN model to fact-check source credibility. It also offers the first test of a critical component of the two-step motivated reasoning model to better understand when fact-checks are more or less likely to work.

In addition to the source of a fact-check, individual differences also influence fact-checking effectiveness. For example, research finds that political partisanship can undermine fact-checking effectiveness by preventing convergence of opinion on the fact-checked topic and increasing the likelihood of rejecting corrective information (Jarman, 2016; Jennings & Stroud, 2023; Nyhan et al., 2013; Walter et al., 2020). The final goal of this study is thus to elucidate the boundary conditions for which fact-check messages may be more or less effective by testing two theoretically-derived individual-level variables: (1) alignment of an individual’s initial belief about the veracity of a claim with the fact-check’s verdict about that claim and (2) the level of analytic thinking by the fact-check recipient. There are mixed results showing that fact-checks are sometimes effective for people unaligned with the fact-check verdict (i.e., people who believed the misinformation) and sometimes not effective due to motivated reasoning (i.e., individuals process information in ways that support a preferred conclusion) (Kunda, 1990), which could be explained by perceived fact-checker credibility. Emerging research also finds that cognitive sophistication (i.e., analytic thinking) plays a role in belief in misinformation and may even magnify motivated reasoning during information processing under some circumstances (see Tappin et al., 2021). This suggests that analytic thinking might also impact the effectiveness of fact-checking messages.

The current study employs an experimental design to investigate the degree to which fact-checker source type, fact-checker credibility perceptions, alignment of fact-check verdict with initial beliefs about the veracity of a news story, and analytic thinking influence fact-checking effectiveness. This study broadens the scope of prior research on fact-checking by including the full array of contemporary fact-checking sources to compare their effectiveness in reducing belief in misinformation. It additionally extends existing theory to understand how and why different fact-checking sources could be perceived as more or less credible and investigates how those perceptions work in conjunction with individual-level variations in thinking style and prior attitudes, both of which can activate motivated reasoning, to affect fact-check effectiveness in reducing belief in misinformation. Finally, it examines the research questions using two different health topics.

Sources of Fact-Checking and Their Effectiveness

There are currently five main types of fact-checkers in use—professional fact-checking organizations, mainstream news outlets, social media platforms, AI, and crowdsourcing. Each has distinctive characteristics. For example, professional fact-checking organizations, such as

More recent sources of fact-checking include artificial intelligence (AI) and crowdsourcing. AI is being applied to fact-check information by services such as Logically.AI and FullFact. While algorithms are able to identify and label false claims quickly and in great volume, there are concerns about their accuracy given the brevity and inherent ambiguity of language in news reporting (Graves, 2018). The newest form of fact-checking is crowdsourcing. Twitter’s (now X)

To date, only a handful of studies have compared the effectiveness of different types of fact-checking sources. Researchers commonly operationalize fact-checking effectiveness in terms of reduction in misperceptions or changed attitudes toward a topic (e.g., Vraga & Bode, 2017; Zhang et al., 2021), and in some cases as reduced liking of and sharing intention toward the misinformation (e.g., Chung & Kim, 2021; Garrett & Poulsen, 2019; Yaqub et al., 2020).

Studies in the political realm typically compare professional fact-checkers to one other source. For example, Wintersieck et al. (2021) found that a fact-check from a professional fact-checker altered assessments of candidates’ advertisements more than a fact-check from a news organization. Jennings and Stroud (2023) found no differences on belief in misinformation among people who saw a fact-check from a professional fact-checker versus a news organization. Garrett and Poulsen (2019) found that flags of inaccurate political posts on social media from professional fact-checkers versus from peers made no difference in reducing beliefs in misinformation. The mixed evidence for the effect of source type on fact-checking effectiveness in these studies may be due to individuals’ bias toward the fact-checking source or message based on their political ideological identification. Conservatives are known to hold less favorable views of professional fact-checkers than do liberals (Nyhan & Reifler, 2015). As such, the effectiveness of fact-checks in political contexts cannot be fully understood without consideration of individuals’ partisanship.

Studies outside of political news have revealed varied effectiveness of different fact-checking sources in reducing belief in and sharing intention of misinformation. Corrective messages from experts (e.g., CDC, health institutions, universities) tend to be more effective than from non-experts (e.g., crowdsourcing) in reducing beliefs in health misinformation (Vraga & Bode, 2017; Walter et al., 2021; Wang, 2021). For example, Vraga and Bode found that the CDC was most effective in reducing Zika misperceptions, while a correction from another user led to no reduction at all. Although not about effectiveness per se, Zhang et al. (2021) showed that fact-checking labels attributed to universities and health institutions resulted in more positive attitudes toward vaccines than other sources (i.e., AI, news media, professional fact-checkers). Yaqub et al. (2020) also found that, among professional fact-checkers, major news outlets, the “public” (i.e., majority of Americans) and AI, professional fact-checkers were most effective in reducing people’s sharing intent of misinformation on social media across several topics (e.g., politics, science, entertainment, everyday events), followed by major news outlets, and AI reduced sharing intention the least.

Interestingly, no studies so far have directly compared the five main sources of fact-checking in use today in terms of reducing belief in misinformation. Yet there is reason to think they might differ in effectiveness, given that these fact-checking sources employ different methodology to vet information and/or are more or less established and familiar to users. At the same time, however, the directions of these differences are hard to predict for all types of fact-checking sources. As one example, AI-based fact-checkers might be more effective than news organizations because AI is seen as more objective, but also might be less effective due to suspicion about AI’s ability to judge nuanced information (Graves, 2018). In several cases, there is little guidance available in the literature to predict the comparative effectiveness of fact-checking sources, and in others competing predictions could be made. The first goal of this study is thus to advance research on fact-checking to understand if there are differences among the full array of existing fact-checking source types, especially amongst newer sources (e.g., AI and crowdsourcing) that have received less attention in the literature than legacy sources (e.g., professional fact-checkers) in terms of reducing belief in misinformation, and to examine the specific nature of any differences, by answering the following research question:

Perceived Fact-Checker Credibility and Fact-Checking Effectiveness

Source credibility is defined as the believability of a source of information, operationalized as a multidimensional concept consisting of dimensions such as expertise, trustworthiness, completeness, bias, objectivity, goodwill, and transparency (Curry & Stroud, 2021; Hovland et al., 1949; Rieh & Danielson, 2007). Credibility perceptions rest on information receivers’ objective and subjective judgments of the believability of a source. Although to our knowledge few studies have examined perceptions of fact-checker source credibility per se, it is reasonable to suspect that people hold such perceptions toward fact-checking sources, as well as that those perceptions likely influence the effectiveness of fact-checks in reducing belief in misinformation.

The influence of source credibility perceptions on communication effectiveness has long been documented. Research on the persuasiveness of sources has generally revealed a superiority of high-credibility over low-credibility sources in terms of their effectiveness in changing audience beliefs, attitudes, or behaviors (Albarracín & Vargas, 2010; Hovland & Weiss, 1951; Pornpitakpan, 2004; Stiff & Mongeau, 2016). This is also likely to hold true in the context of news and news correction, suggesting that an effective way to reduce belief in misinformation is to attribute the correction to a credible source. Indeed, Jennings and Stroud’s (2023) two-step motivated reasoning model of how misinformation corrections are processed holds that source trust is a key factor in the extent to which recipients adjust their beliefs as a result of a corrective message, although they did not actually measure trust in their study. Vraga and Bode (2017) advanced, but also did not test, a similar assumption. Therefore, our first hypothesis aims to verify this idea empirically:

It is also likely that different types of fact-checking sources are perceived as more or less credible than each other, which may function as a mechanism for explaining variance in their effectiveness in reducing belief in misinformation. The MAIN model (Sundar, 2008; Sundar et al., 2015) helps to theorize how various fact-check sources may be perceived differently in terms of their credibility. The MAIN model was developed to understand how people form credibility judgments based on the modality, agency, interactivity, and navigation affordances of a communication source or channel. It stipulates that people’s credibility assessments are guided by cues that are based on heuristics, which are cognitive rules of thumb that people invoke to help them evaluate sources or information. “Agency” refers to cues or affordances pertaining to the source of media content, and is thus relevant to the study of fact-check source credibility. Some heuristics associated with the agency affordance include the authority, bandwagon, and machine heuristics. The authority heuristic is the perception that expert sources can be trusted. The bandwagon heuristic is the notion that consensus implies correctness, so that if others find a source to be credible, one can assume it is credible. The machine heuristic refers to a belief that machines are more objective than humans (see Sundar, 2008 for more details). The remainder of this section applies the MAIN model to understand how various sources of fact-checks might be viewed differently in terms of credibility. 1

Sources of fact-checks that rely on professional (human) fact-checkers to evaluate information may trigger the authority heuristic. As noted earlier, professional fact-checking services and mainstream news organizations employ experienced experts to vet information and invest in resources to help them perform fact-checks. Due to these attributes, such entities may be seen as credible sources of fact-checking (Vraga & Bode, 2017; Wang, 2021). However, while these fact-checking sources are likely perceived as expert, they may not be perceived as fair. Many conservatives in the U.S. feel mainstream news organizations are biased against their views, and thus cannot produce objective fact-checks (Robertson et al., 2020; Walker & Gottfried, 2019). This perception also applies to professional fact-checking organizations (Brandtzaeg et al., 2018; Shin & Thorson, 2017; Wintersieck et al., 2021).

There is also reason to think newer sources of fact-checks might be perceived as more credible than legacy sources. For example, fact-checking sources that use crowdsourcing may evoke the bandwagon heuristic, which could lead users to assume verdicts rendered by such sources are objective because they involve consensus across a large number of independent reviewers. Crowdsourced fact-checking systems may be less prone to charges of bias, as judges with different political outlooks contribute to the verdicts. Similarly, tools that employ algorithms for fact-checking may trigger the machine heuristic, leading to perceptions that automated fact-checking services verify claims in a more objective manner compared to humans, because machines are less susceptible to personal feelings and political biases when fact-checking claims (Moon et al., 2023). Yet, as mentioned above, the inherent ambiguity of language poses significant challenges for automated fact-checking systems to interpret meaning in context (Graves, 2018). Users may doubt the ability of machines to adjudicate factual disputes and thus perceive AI-based fact-checkers as less credible than human-based fact-checking services.

Another factor that may impact fact-checker source credibility perceptions is how transparent sources are about how and why they arrived at their verdict, with greater transparency evoking higher credibility perceptions (Banas et al., 2022). Sundar et al. (2020) found evidence for a similar concept in the realm of privacy, finding that the more a website makes its privacy policy transparent to users, the more people trust and disclose personal information to that website. Legacy fact-checking sources tend to be more transparent in their methods than newer sources. For example, professional fact-checking organizations make their methodology public. By contrast, algorithmic decision-making is often considered an impenetrable “black box” (Burrell, 2016) and people’s relative unfamiliarity with tools for automated fact-checking may make it hard for users to infer how AI systems fact-check claims. Similarly, crowdsourced fact-checks provide no information about how much topical knowledge the volunteers doing the fact-checks have or how they make their decisions. Consequently, people may perceive fact-checking sources based on AI and crowdsourcing to be lower in credibility compared to the other sources.

In any case, given the positive connection found between source credibility and message effectiveness in the research literatures in communication and psychology, and that differences in credibility perceptions among various fact-checking sources likely exist, we propose that perceptions of fact-checker source credibility serve as a key theoretical mechanism to explain why fact-checks from different sources are more or less effective in reducing belief in misinformation. This proposition extends the long-established association between source credibility and communication effectiveness (Hovland & Weiss, 1951) to the fact-checking context and offers a test of the first step of Jennings and Stroud’s recently proposed two-step motivated reasoning model of how misinformation corrections are processed. Specifically, we seek to empirically validate that model’s assertion that source trust (which is closely related to, and a component of, credibility) impacts the extent to which a corrective message is effective in reducing belief in misinformation. Preliminary supporting evidence for our proposed mediating role of fact-checker credibility perceptions is provided by Zhang et al. (2021), who found that fact-checks provided by research universities and health institutions resulted in more positive attitudes toward vaccines than fact-checks provided by AI, news media, or professional fact-checking organizations through greater perceived expertise. We thus propose:

Alignment With Fact-Checking Message and Fact-Checking Effectiveness

Alignment refers to the congruence between the fact-check verdict (i.e., story is true/false) and a person’s initial belief about a piece of misinformation (i.e., story is true/false). Research shows that people evaluate misinformation and corrective messages in a biased way to confirm their preexisting beliefs (see Jennings & Stroud, 2023; Moon et al., 2023; Walter et al., 2020). For example, Wang (2021) found that those who held initial misbeliefs evaluated a misinformation claim as more credible and a fact-check of the claim as less credible compared with those without initial misbeliefs. Moreover, for people with initial misperceptions (“unaligned” individuals), fact-checks hardly reduced their belief in the misinformation, whereas fact-checks increased correct beliefs among people with accurate initial perceptions (“aligned” individuals). These findings can be explained by motivated reasoning and more specifically the confirmation bias in human information processing, which is the tendency to interpret and believe information that favors one’s prior attitudes (Kunda, 1990; Taber & Lodge, 2006).

Hameleers and van der Meer’s (2020) study, however, showed the opposite results. After exposure to a fact-check, participants altered their misbeliefs to a greater extent when they initially believed the misinformation than when they initially disbelieved the misinformation. By exposing participants to political news and a follow-up fact-check from a professional fact-checker debunking the information, the researchers found that the fact-check was more successful in reducing false beliefs among people who held initial misperceptions (“unaligned” individuals), while the fact-check had no corrective effect for those with accurate initial perceptions (“aligned” individuals).

It is thus likely that the effect of alignment on fact-checking effectiveness depends on a third variable. Perceived fact-checker credibility is one potential variable because it may hold the key to overcoming motivated reasoning. Although motivated reasoning exerts a powerful force on information processing, research finds that people will change their beliefs when confronted with strong contradictory evidence (Kunda, 1990). Therefore, while low credibility sources serve as a discounting cue that allows information processors to dismiss their messages (Hovland et al., 1949), messages from high credibility sources are not easily discounted and can lead to successful attitude change even for those under the influence of motivated reasoning (Albarracín & Vargas, 2010; Jamieson & Hardy, 2014). Indeed, Wang (2021) found that only corrections from an expert source rather than a peer source were effective in reducing belief in misinformation among people whose initial beliefs were unaligned with the fact-check message.

Thus, when a fact-checker is perceived as highly credible, people who initially believed the misinformation (unaligned individuals) may be influenced to overcome their motivated reasoning and reduce their belief in the misinformation after exposure to the fact-check, while the fact-check is not likely to alter beliefs of people who initially disbelieved the misinformation (aligned individuals), due to a floor effect. This may explain the findings from Hameleers and van der Meer’s (2020) study because professional fact-checkers may be perceived as more credible than other sources by most people, and so can counter the effect of motivated reasoning among unaligned individuals (i.e., people who initially believed the misinformation). On the other hand, when a fact-checker is perceived to have low credibility it will have no impact on either aligned or unaligned individuals. This is because it is unable to overcome motivated reasoning among unaligned individuals as they will likely discount the fact-check message, and a fact-check confirming the beliefs of aligned individuals (i.e., people who initially disbelieved the misinformation) would also not result in a change in their belief. Based on this reasoning, we hypothesize:

Analytic Thinking, Fact-Checker Source Credibility, and Effectiveness

Recent years have witnessed a growing interest in analytic thinking in misinformation research (e.g., Batailler et al., 2022; Bronstein et al., 2019; Nurse et al., 2021; Pennycook & Rand, 2019, 2020). Analytic thinking refers to a disposition to engage in effortful, deliberative thinking and inhibit intuitive, heuristic-driven information processing (Frederick, 2005). People who are high in analytic thinking are less likely to judge fake news as accurate and are better able to discern fake from real news (Pennycook & Rand, 2019; Ross et al., 2021). Given the documented relationship between analytic thinking and misinformation susceptibility, it is surprising that little attention has been paid to how analytic thinking influences individuals’ responses to fact-checkers and their messages.

Yet it is reasonable to expect that individual differences in analytic thinking shape preferences for logic-based messages, including fact-checks. According to cognitive-experiential self-theory (Epstein, 1994), there are two information processing systems: a rational system that operates based on effortful analytical thinking and logical inferences, and an experiential system that depends on automatic affective experience and rapid processing. While these two information processing systems can operate in a parallel and interactive manner, individuals differ in their preference for intuitive-experiential or analytical-rational thinking styles (Epstein et al., 1996). Scholars argue that individual differences in dominant thinking styles influence responses to messages such that rational messages can be more appealing to analytical thinkers compared to emotional messages (Epstein, 1994; Epstein & Pacini, 1999). Following this logic, analytic-rational thinkers may appreciate fact-checking messages, which typically feature logic and factual evidence, more than intuitive-experiential thinkers do. This, in turn, may translate into higher perceived credibility of fact-checkers and their messages for some individuals more than others. Relatedly, two recent studies found that people with a higher tendency toward analytic thinking were more likely to agree with corrective messages than those lower in analytic thinking (Allen et al., 2021; Martel et al., 2021). Thus, we hypothesize that:

While fact-checking aims to correct misinformation by providing rigorously-vetted information, not all users are willing to accept fact-checking messages and update their beliefs in the direction of the correction (Walter & Tukachinsky, 2020; Walter et al., 2020). As discussed earlier, people tend to process information through the lens of their prior attitudes such that they readily accept attitudinally-congruent information and may actively resist attitudinally-incongruent information to defend their prior beliefs (Kunda, 1990; Taber & Lodge, 2006). Some scholars recently theorized that systematic (i.e., analytic) thinking may further amplify the effects of prior beliefs on reasoning, as individuals who use more systematic thinking possess better reasoning capacities and are more capable of marshaling their cognitive resources to process information in a biased way (e.g., Kahan, 2017; Kahan, Peters et al., 2017; and see Tappin et al., 2021 for a full discussion of this point). Thus, when fact-checking messages contradict one’s prior beliefs, people who are higher in analytical thinking might be more likely to actively resist attitudinally-incongruent facts, which could undermine the effectiveness of fact-checking in reducing misperceptions. That said, the scant empirical research on whether analytic thinking amplifies motivated reasoning is mixed, at least when evaluating novel information. In light of this, and given that no studies have tested whether motivated reasoning amplifies resistance to counter-attitudinal corrective messages (which likely trigger especially high levels of motivated reasoning), the following research question is posed:

Method

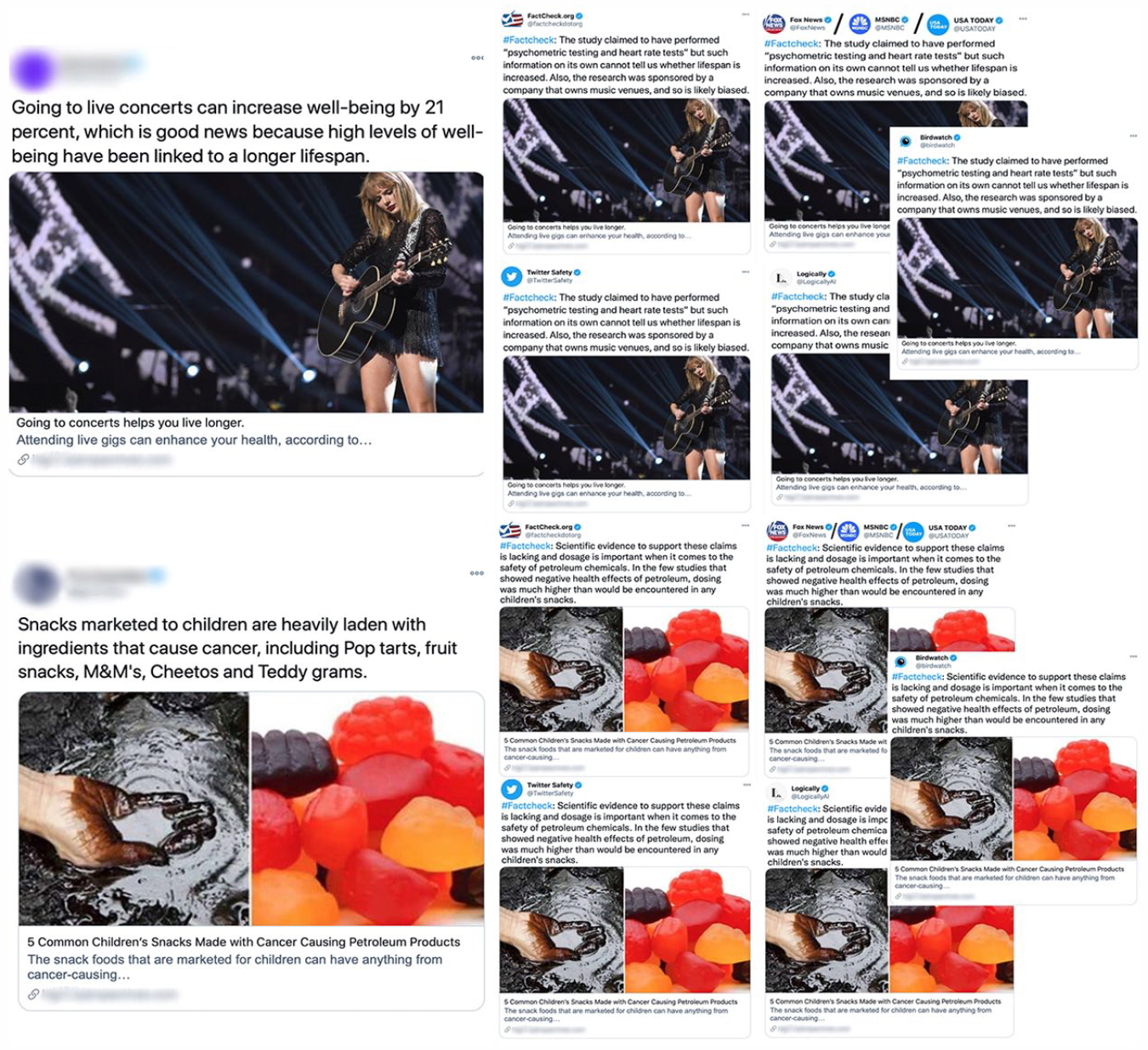

This study employed an online experiment hosted on Qualtrics. Upon IRB approval, participants were recruited from Amazon’s Mechanical Turk. 2 Participants viewed 10 social media posts in random order that contained health-related news stories across a wide variety of topics (e.g., benefits of drinking wine or attending live concerts, toxic chemicals in popular snacks, a cancer vaccine, the spread of bacteria via hand dryers, negative consequences of sleeping too much). Half of the stories were true and half were false. The stories were not created for this study; rather, they were true and “fake” news stories that had circulated online prior to this study. The stories were all formatted to look like Twitter (now X) posts, but the source of each news story was blurred to ensure that perceptions of the news source’s credibility did not affect judgments of a fact-check that was shown to participants subsequently (see Figure 1, left side). 3

Figure 1. Focal news story stimuli (left) and fact-check stimuli (right).

After viewing each news story, participants reported the perceived veracity and credibility of the story. Perceived veracity of the story was obtained by asking participants “Using your best guess, would you say this news story is: completely false (1), mostly false (2), mostly true (3), or completely true (4)?” This variable was used to derive the alignment measure as described later in this section. Our measure of perceived story credibility was based on recommendations for measuring message credibility (i.e., believability) by Appelman and Sundar (2016) and included four items. Participants indicated the extent to which they felt the information in the story they had just read was accurate, believable, authentic, and credible on a scale ranging from 1 = strongly disagree to 7 = strongly agree. The ratings of the four items were averaged to yield the perceived story credibility score. Cronbach’s alpha for the scale across all stories was .96. Scores on this scale were used to compute the dependent variable, fact-checking effectiveness, explained below.
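As an illustration of the scoring described above, the following minimal sketch averages four item ratings into a scale score and computes Cronbach's alpha using the standard formula. The ratings are hypothetical values invented for the example, the function names are our own, and nothing here is taken from the study's data or analysis scripts.

```python
from statistics import mean, variance


def scale_score(item_ratings):
    """Average one participant's item ratings (each 1-7) into a scale score."""
    return mean(item_ratings)


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of rows (one row of item ratings per participant).

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    """
    k = len(rows[0])                      # number of items in the scale
    items = list(zip(*rows))              # transpose: one tuple per item
    item_var_sum = sum(variance(col) for col in items)
    total_var = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - item_var_sum / total_var)


# Hypothetical ratings on the four items (accurate, believable, authentic, credible).
ratings = [
    [6, 6, 5, 6],
    [2, 3, 2, 2],
    [4, 4, 5, 4],
    [7, 6, 7, 7],
]

scores = [scale_score(r) for r in ratings]
alpha = cronbach_alpha(ratings)
print(scores, round(alpha, 2))
```

Sample variances are used for both the items and the total score, which is the usual convention; with highly consistent ratings such as these, alpha approaches 1.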

Participants next completed the cognitive reflection test (CRT) to measure analytic thinking. The CRT is commonly used for this purpose and consists of seven items (see Pennycook & Rand, 2020). Item wordings were changed slightly (e.g., the name “Mark” was changed to “Maria” and “sheep” was changed to “cows”) to reduce the chance that participants would look up answers to the CRT questions or to disguise the test in case they had seen the test before, although the CRT is robust to multiple exposures (Bialek & Pennycook, 2018). Answers were scored such that higher values reflect greater analytic thinking (

Participants were then presented with a fact-check of one of the 10 news stories that they had seen earlier (i.e., either a fake news story about the health effects of attending live concerts or about cancer-causing chemicals in popular snack foods). The fact-check was also formatted to appear as a Twitter post, and the source of the fact-check was manipulated. Specifically, participants were randomly assigned to view a fact-check of the story from one of seven sources: Twitter (social media platform), Logically.ai (artificial intelligence based fact-checking service), FactCheck.org (professional fact-checking organization), Fox (conservative news organization), USA Today (politically neutral news organization), MSNBC (liberal news organization), or Birdwatch (crowdsourcing). The fact-check message was identical across all conditions; only the source differed (see Figure 1, right side).

After reading the fact-check, participants again reported their perceived credibility of the original concert or snacks news story that was the subject of the fact-check on the same scale as before. The main dependent variable—fact-check effectiveness in terms of reduction of belief in misinformation—was therefore computed by deducting each participant’s story credibility rating before exposure to the fact-check (see above) from their story credibility rating after exposure to the fact-check. This pre-to-post story credibility (

Participants were next asked about their perceived credibility of the fact-checker. A six-item scale was used to measure fact-check source credibility perceptions, with items based on previously validated source credibility scales that included dimensions particularly relevant to fact-checker credibility. On a 7-point Likert scale, participants rated how credible, qualified, trustworthy, biased, and benevolent they felt the fact-checker was, and how easy it was to understand how it works. Cronbach’s alpha for this scale ranged from .98 to .99 across the different fact-checker source types. Finally, participants indicated if they had any previous exposure to the fact-checked story and to any fact-checks of the story (yes, no), as well as their frequency of reading news and using social media in terms of days per week and hours per day.
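As an illustration of the reliability statistic reported above, the following is a minimal sketch of how Cronbach's alpha can be computed for a multi-item scale. The item names and ratings are hypothetical, and reverse-coding the "biased" item is an assumption; the article does not state how that item was scored.

```python
from statistics import variance


def reverse_code(scores, low=1, high=7):
    """Reverse-code ratings on a low..high scale (assumed here to be 1..7)."""
    return [low + high - s for s in scores]


def cronbach_alpha(items):
    """Cronbach's alpha for k items.

    `items` is a list of k lists; each inner list holds one item's
    ratings, in the same respondent order.
    """
    k = len(items)
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    # Each respondent's total score across the k items.
    totals = [sum(ratings) for ratings in zip(*items)]
    item_variance_sum = sum(variance(item) for item in items)
    return (k / (k - 1)) * (1 - item_variance_sum / variance(totals))


# Hypothetical ratings from five respondents on three of the six items.
credible = [7, 5, 3, 6, 4]
trustworthy = [6, 5, 2, 6, 4]
biased = [1, 3, 5, 2, 4]  # assumed to be reverse-coded before scaling

alpha = cronbach_alpha([credible, trustworthy, reverse_code(biased)])
print(f"alpha = {alpha:.2f}")
```

Values close to 1, such as the .98 to .99 range reported here, indicate that the items move together almost perfectly across respondents.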

Participants’ alignment of the fact-check verdict with their initial belief was derived from the story veracity scores. After reading the initial news post, participants indicated if they believed the story was true or false. Participants who initially believed the story was false or mostly false were categorized as “aligned” because the fact-check message confirmed their prior belief. Participants who believed the original story was true or mostly true were categorized as “unaligned” because the fact-check conflicted with their initial belief about the story’s veracity.
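The alignment coding and the pre-to-post change score described in this section can be sketched as follows. This is only an illustration: the response labels are hypothetical stand-ins for the study's veracity options, and the change score follows the text literally (post minus pre), so negative values mean that belief in the story went down. The 1/0 codes follow the coding reported in the Results.

```python
# Hypothetical response labels; the article names four veracity options
# but does not give their exact wording in the questionnaire.
ALIGNED_ANSWERS = {"false", "mostly false"}
UNALIGNED_ANSWERS = {"true", "mostly true"}


def code_alignment(veracity_answer: str) -> int:
    """Return 1 if the fact-check confirms the prior belief, else 0."""
    answer = veracity_answer.strip().lower()
    if answer in ALIGNED_ANSWERS:
        return 1  # "aligned": participant already judged the story false
    if answer in UNALIGNED_ANSWERS:
        return 0  # "unaligned": participant initially judged the story true
    raise ValueError(f"unexpected veracity answer: {veracity_answer!r}")


def credibility_change(pre: float, post: float) -> float:
    """Pre-to-post change in story credibility (post minus pre).

    Negative values indicate that the story was rated as less credible
    after the fact-check, i.e., belief in the misinformation was reduced.
    """
    return post - pre


# Example: a participant who first rated the story 5 and "mostly true",
# then rated it 3 after reading the fact-check.
print(code_alignment("Mostly true"), credibility_change(pre=5, post=3))
```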

Demographic and control variables collected from the sample included age, education, race/ethnicity, sex, income, and political identification and partisanship. Participants were also asked if they have worked in the medical field, given the focus on health misinformation in this study. One item that asked participants “Who was the fact check that you saw today from?” served as a manipulation check. Finally, an attention check item (i.e., “Please answer ‘slightly disagree’ to this question”) and a question asking if participants looked up information pertaining to the story during the experiment were also included in order to remove participants who did not pay attention or who knew the stimulus story’s veracity by Googling the story.

The data were cleaned by removing participants who failed the attention check (

Participants’ average age was 42.61 years (

Results

To explore RQ1, a one-way analysis of covariance (i.e., ANCOVA) was conducted to examine differences in fact-check effectiveness in reducing belief in misinformation across the different types of fact-checkers (i.e., professional fact-checker, FOX, MSNBC, USA Today, social media platform, AI, and crowdsource), controlling for demographic variables.

The omnibus test only approached significance, suggesting that there was no difference in the effectiveness of the various fact-checkers in reducing belief in misinformation,

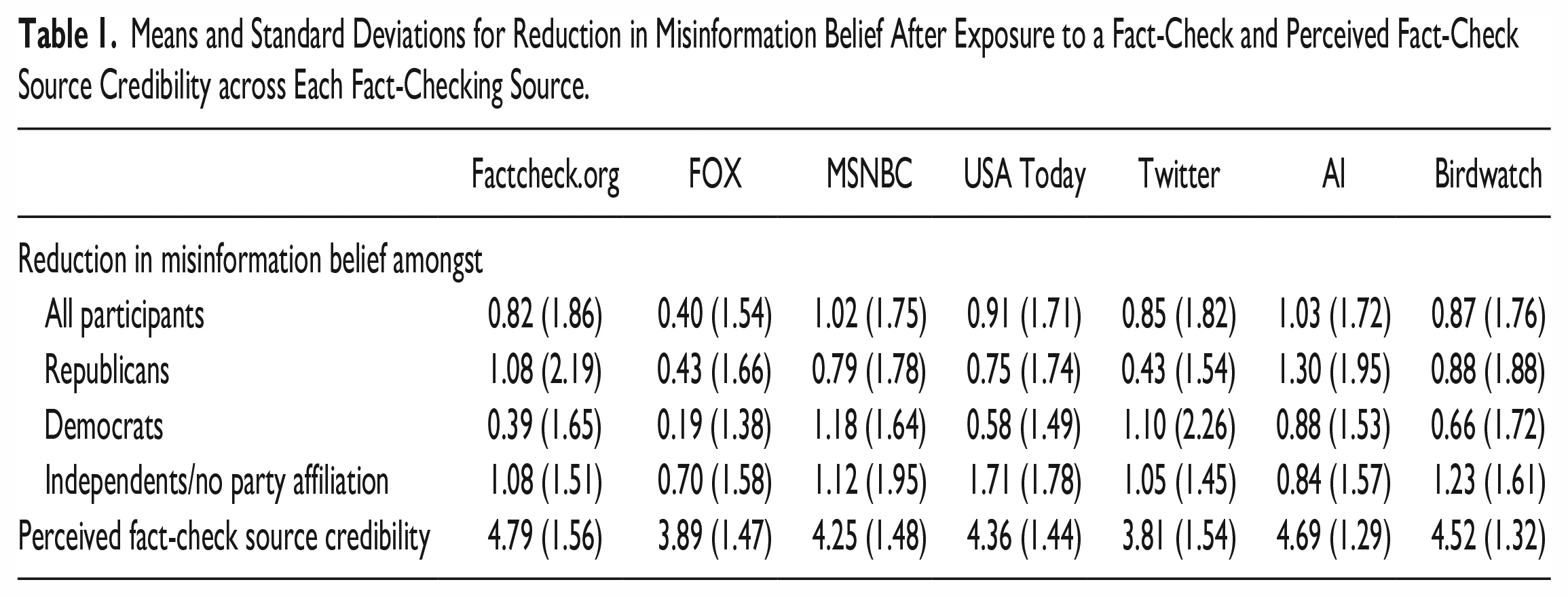

Means and Standard Deviations for Reduction in Misinformation Belief After Exposure to a Fact-Check and Perceived Fact-Check Source Credibility across Each Fact-Checking Source.

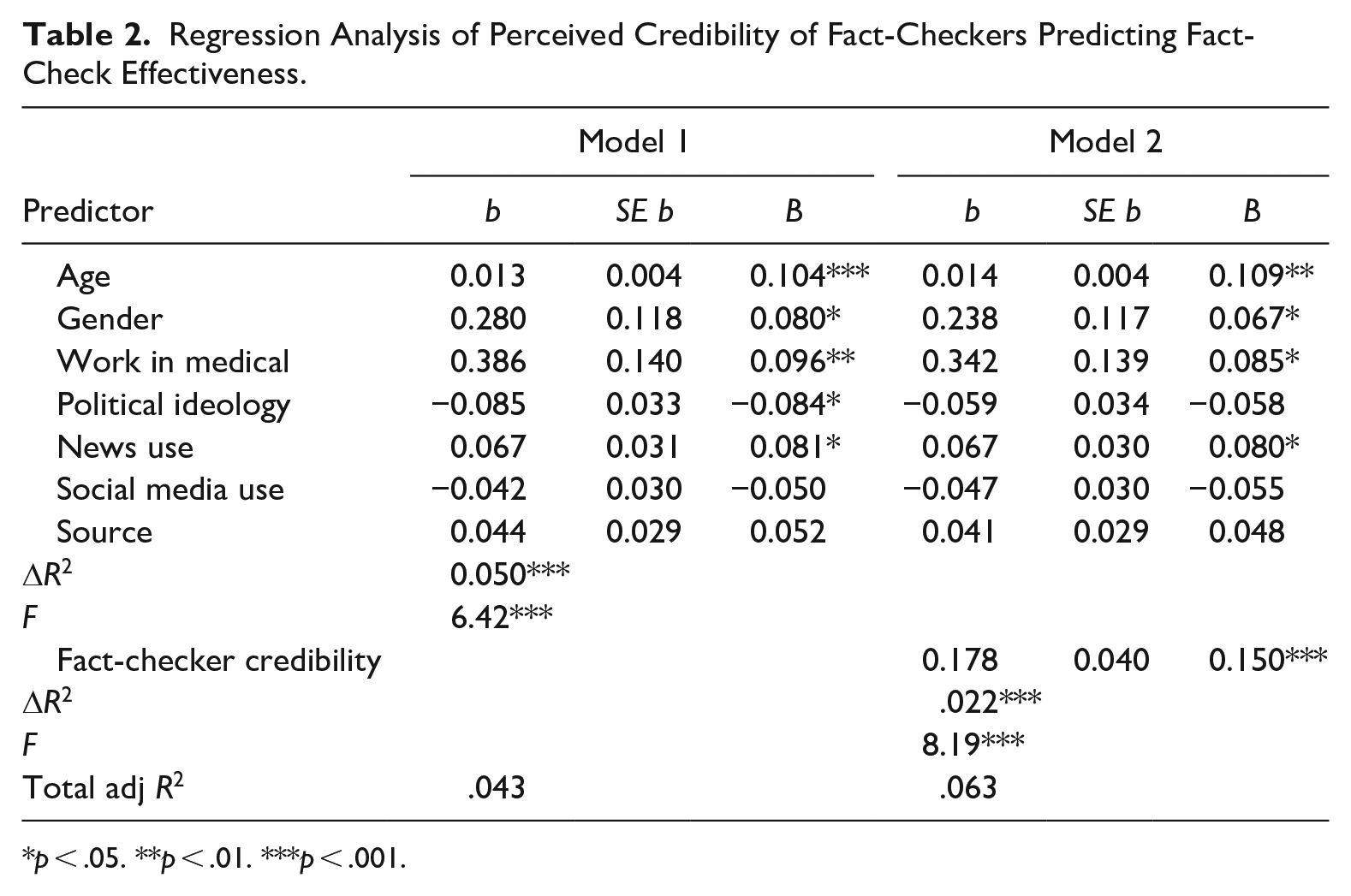

Hypothesis 1 predicted that perceived credibility of fact-checkers would be positively related to fact-check effectiveness. A hierarchical regression was performed in which demographic variables and fact-check source were entered in Model 1, and perceived credibility of fact-checkers across sources was entered in Model 2. Results showed that Model 1 was significant,

Regression Analysis of Perceived Credibility of Fact-Checkers Predicting Fact-Check Effectiveness.

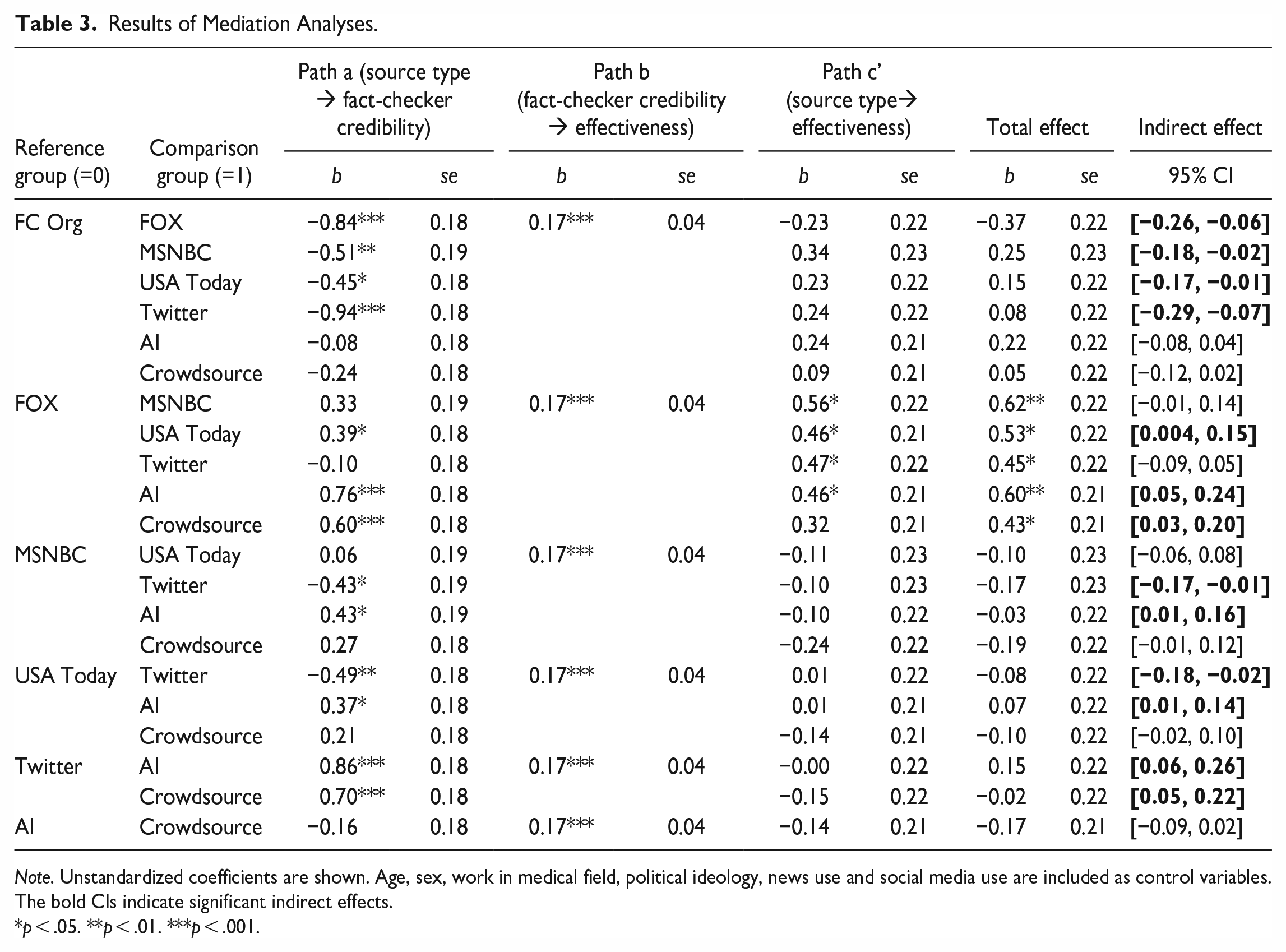

Hypothesis 2 predicted that perceived credibility of fact-checkers would mediate the relationship between exposure to fact-check messages from different sources and their effectiveness in reducing belief in misinformation. Table 1 shows the mean credibility rating for each source. Mediation analysis was conducted using PROCESS Model 4 with multicategorical antecedent variables controlling for demographics. Table 3 shows all statistics. Compared to professional fact-checkers, FOX, MSNBC, USA Today, and Twitter were perceived as less credible, indicating that participants judged professional fact-checkers as more credible than media sources (i.e., news organizations and social media platforms) for fact-checking, but this difference was not significant for the newer fact-checking sources (i.e., AI and crowdsource). Meanwhile, AI-based fact-checking was perceived as more credible than FOX, MSNBC, USA Today, and Twitter. Crowdsourced fact-checkers were also perceived as more credible than FOX and Twitter. Together, these results show that participants judged newer forms of fact-checking (e.g., AI and crowdsource) as more credible fact-checking sources than established news organizations and social media platforms. There was also a significant positive effect of perceived credibility of fact-checkers on fact-checking effectiveness (Path b) for all comparisons, which is also consistent with Hypothesis 1. The total and direct effects are also shown in Table 3.

Results of Mediation Analyses.

As shown in Table 3, the bootstrap confidence intervals (based on 5,000 samples) for the relative indirect effects did not include zero for a majority of the source comparisons (i.e., professional fact-checker vs. FOX/MSNBC/USA Today/Twitter, FOX vs. USA Today/AI/Crowdsource, MSNBC vs. Twitter/AI, USA Today vs. Twitter/AI, Twitter vs. AI/Crowdsource), and for all of the comparisons where Path a was significant. This suggests that perceived fact-checker credibility plays a mediating role in the effects of source type on fact-checking effectiveness. Most importantly, participants found professional fact-checkers and new types of fact-checkers (i.e., AI and crowdsource) more credible than news organizations and social media platforms for fact-checking, which, in turn, increased their fact-checking effectiveness. H2 was therefore supported for all of the comparisons in which the sources differed in perceived credibility.
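To make the logic of the bootstrap test concrete, the following is a simplified sketch of a percentile bootstrap confidence interval for a single indirect effect (Path a times Path b). It is not the PROCESS macro and does not reproduce the reported analysis: it assumes only two source conditions (coded 0/1), omits the demographic covariates, and runs on simulated data, and all function and variable names are hypothetical.

```python
import random
from statistics import fmean


def _cov(u, v):
    """Sample covariance of two equal-length lists."""
    mean_u, mean_v = fmean(u), fmean(v)
    return sum((a - mean_u) * (b - mean_v) for a, b in zip(u, v)) / (len(u) - 1)


def indirect_effect(x, m, y):
    """a*b, with a from M ~ X and b from Y ~ X + M (ordinary least squares)."""
    s_xx, s_mm = _cov(x, x), _cov(m, m)
    s_xm, s_xy, s_my = _cov(x, m), _cov(x, y), _cov(m, y)
    a = s_xm / s_xx  # Path a: source condition -> perceived credibility
    # Path b: credibility -> effectiveness, holding source condition constant
    b = (s_my * s_xx - s_xm * s_xy) / (s_mm * s_xx - s_xm ** 2)
    return a * b


def bootstrap_ci(x, m, y, n_boot=5000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for the indirect effect (resampling cases)."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        idx = rng.choices(range(n), k=n)  # sample respondents with replacement
        estimates.append(indirect_effect([x[i] for i in idx],
                                         [m[i] for i in idx],
                                         [y[i] for i in idx]))
    estimates.sort()
    lower = estimates[int((alpha / 2) * n_boot)]
    upper = estimates[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


# Simulated data only: x = source condition, m = credibility, y = effectiveness.
sim = random.Random(42)
x = [sim.randint(0, 1) for _ in range(400)]
m = [1.0 * xi + sim.gauss(0, 1) for xi in x]
y = [0.5 * mi + sim.gauss(0, 1) for mi in m]

point = indirect_effect(x, m, y)
ci_low, ci_high = bootstrap_ci(x, m, y, n_boot=1000)
print(f"indirect effect = {point:.2f}, 95% CI [{ci_low:.2f}, {ci_high:.2f}]")
```

When the interval excludes zero, as it does for the simulated data here, the indirect path through the mediator is treated as statistically reliable, which is the criterion applied to the source comparisons above.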

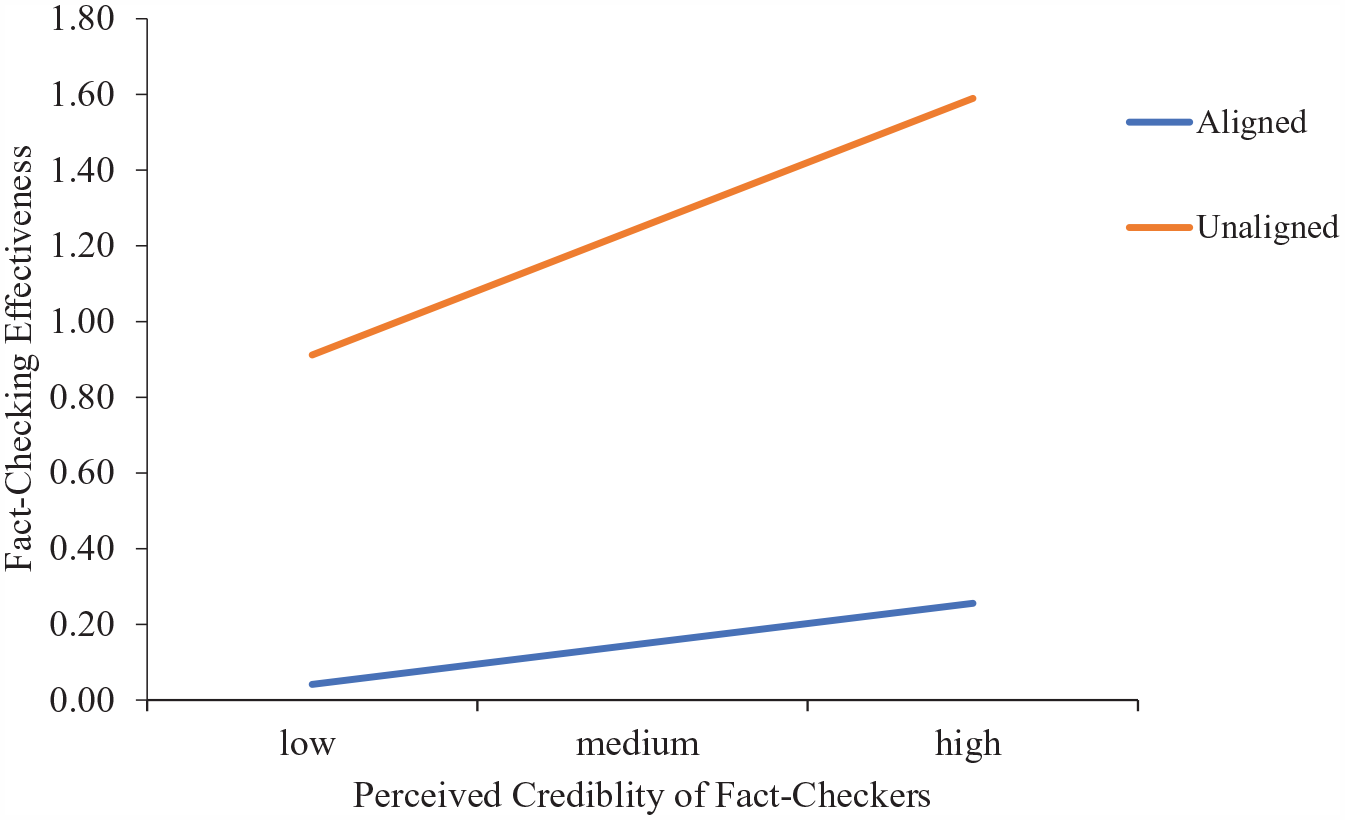

Hypothesis 3 predicted that perceived credibility of fact-checkers would play a moderating role in the effect that alignment with the fact-check verdict has on fact-checking effectiveness. Alignment was determined from the story veracity ratings such that if participants initially indicated that the original story was false, they were categorized as “aligned” and were assigned a value of 1 because the fact-check message confirmed their prior belief. Participants who believed the original story was true were categorized as “unaligned” and were assigned a value of 0 because the fact-check conflicted with their prior belief about the story’s veracity.

Moderation analyses showed a significant overall model,

Perceived credibility of fact-checkers moderating the effect of alignment with fact-check verdict on fact-check effectiveness.

Hypothesis 4 predicted that people who were higher in analytic thinking will perceive the fact-checker as more credible than people lower in analytic thinking. Hierarchical regression was performed in which fact-checking source and demographic variables were entered in Model 1, and analytic thinking was entered in Model 2. Results showed that Model 1 was significant,

H5 predicted a positive relationship between analytic thinking and fact-check effectiveness. Hierarchical regressions showed that analytic thinking significantly predicted fact-checking effectiveness after controlling for fact-check source and demographics (

RQ2 asked if analytic thinking moderates the effects of alignment with a fact-check verdict on fact-checking effectiveness, such that counter-attitudinal fact-checking messages are less effective in correcting misperceptions for people who are higher in analytic thinking compared to people lower in analytic thinking. Moderation analyses showed a significant overall model,

Discussion

The increasing prevalence of fact-checking efforts and the emergence of new types of fact-checkers highlight the importance of evaluating their effectiveness, as well as factors influencing their effectiveness, such as fact-check sources, perceived credibility of fact-checkers, and individual differences among receivers of fact-check messages. This study found that the effectiveness of fact-checkers varies little across different fact-checking sources and that effectiveness is positively associated with the perceived credibility of fact-checkers. Perceived credibility of fact-checkers also mediates the effect of fact-checker source type on effectiveness for some source comparisons, and moderates the effect of alignment on effectiveness. Analytic thinking, however, is not a significant predictor of perceived fact-checker credibility, nor a moderator of the relation between alignment and effectiveness. It is, nonetheless, positively related to fact-checking effectiveness. The theoretical and practical implications of these findings are discussed below.

Results for RQ1 indicate that the effectiveness of different fact-checkers does not vary much, which is somewhat surprising given that past research has often found professional fact-checkers to be superior. One potential explanation for the null result might be that the stimuli used in this study were about health rather than political news. Most past studies of misinformation that compare fact-checkers were done within a political context, where people may have felt more issue importance, personal relevance, or identity threat. By contrast, participants in our study may not have perceived the stimuli (i.e., the concert and snack stories) as personally relevant or as having a significant impact on their health. Fact-checks in non-political contexts with low topic salience may be less likely to be influenced by the source’s political stand than studies that use political misinformation stimuli. This is consistent with the Elaboration Likelihood Model, because when topic salience is low, participants may not carefully process or even care about the source of a fact-checking message. This explanation is also consistent with the few studies in the health context that have found evidence for varied effectiveness across correction sources, in that the topics used in those studies tend to be high-profile salient health issues (e.g., vaccine, disease, or epidemic) (Vraga & Bode, 2017; Zhang et al., 2021).

Overall, our data suggest that when the topic is less relevant or urgent, different fact-checkers are generally similarly effective. This is good news for newer fact-checking sources such as AI and crowdsourcing, as it means that these cost-effective and scalable means of fact-checking are likely as effective as more expensive options for misinformation correction. In any case, these findings underscore a need for future research on fact-checking effectiveness that includes not only the full array of fact-checking source types available, but also a wide range of topics to better understand the impact that fact-checks have on reducing belief in misinformation.

Another notable finding of this study is that there is a positive association between perceived credibility of fact-checkers and their effectiveness, even when controlling for source, political ideology, and other demographic and media use factors. This finding highlights the importance of taking source credibility into consideration in research on fact-checking and demonstrates the benefit of increasing source credibility for fact-checking services as an additional effort beyond simply ensuring content validity of their fact-checks. Our results also confirm the association between source credibility and communication effectiveness that has long been documented in other persuasion contexts (e.g., Hovland & Weiss, 1951), for all sources of fact-checking. This is especially important for novel fact-checking services (e.g., AI-based fact-check services) as they try to establish themselves in the field. A practical implication of this finding is thus for novel fact-checking sources to find ways to boost their credibility, which may be done, for example, by providing more transparency about their methodology for vetting information (see also Banas et al., 2022).

Perceived credibility of fact-checkers also appears to mediate the effect of fact-checker source type on effectiveness when sources differ in their perceived credibility. So although fact-check effectiveness did not differ across sources overall, people did reduce their belief in misinformation through perceived credibility of the fact-checker they saw, such that a source was more effective in reducing misinformation beliefs because of its higher perceived credibility. In other words, in circumstances where perceived fact-checker credibility significantly predicts effectiveness in all conditions, the difference in perceived credibility of different fact-checking sources determines if one source is more effective in reducing people’s belief in misinformation than another. This finding supports our hypothesized mediating role of fact-checker credibility perceptions, showing that perceived source credibility functions as an explanatory mechanism for varied fact-checking effectiveness across sources.

Specifically, our study revealed four “tiers” of fact-checking sources in terms of their effectiveness through perceived credibility: professional fact-checkers (first tier), AI and crowdsource (second tier), news outlets including USA Today, MSNBC and FOX (third tier), and social media platforms such as Twitter (fourth tier). Professional fact-checkers (first tier) are not different in effectiveness and credibility from AI and crowdsourcing (second tier), but are more effective than news sources (third tier) due to their higher perceived credibility. Meanwhile, AI and crowdsource sources are perceived as equally credible and effective as the three traditional news outlets, indicating that newer types of fact-checking sources might suffer a little from skepticism about their credibility, which diminishes their effectiveness to the level of traditional news outlets, which often bear the criticism of being politically biased. The three news outlets (third tier) are perceived as similarly credible and thus effective, although USA Today is more effective than MSNBC and FOX, likely because of its relative political neutrality. Finally, Twitter (fourth tier) is perceived as the least credible and less effective than all other sources (except FOX), possibly because in recent years social media platforms have been accused of lacking transparency, being politically biased, and facilitating the spread of misinformation (Andersen & Søe, 2020), which might explain their relatively low effectiveness in reducing belief in misinformation compared to most other sources.

Perceived credibility of fact-checkers also moderated the effect of alignment on effectiveness. As credibility increased, fact-checkers were more effective in reducing beliefs in misinformation among unaligned individuals than aligned individuals. This is consistent with our prediction that when a fact-checker is perceived as highly credible, people who initially believed the misinformation (“unaligned”) are more persuaded into changing their false beliefs than people who did not believe the misinformation initially (“aligned”), although interestingly, aligned people also increase their accurate beliefs after reading a fact-check, probably due to a reinforcement effect. We also found that when a fact-checker is perceived to have low credibility, a corrective effect still exists for unaligned people but not for aligned people, and the difference in reduction in misbeliefs between unaligned and aligned people is smaller than when the fact-checker is highly credible. In other words, as perceived credibility of a fact-checker increases, so does its fact-checking effectiveness regardless of people’s prior beliefs about the misinformation. This is encouraging news for fact-checkers because it shows that people’s belief in fake news can be corrected, and underscores the need for fact-checkers to put effort into increasing their perceived credibility among the public. It also offers an explanation for the mixed results, discussed earlier, in the literature on varied fact-checking effectiveness from different sources among individuals with different prior beliefs, and emphasizes the important role of credibility cues in examining the effect of individual factors on persuasion.

Last, this study contributes to our understanding of how analytic thinking influences credibility assessments in the context of fact-checking. Previous studies find that people who are higher in analytic thinking are less likely to perceive misinformation as accurate (Pennycook & Rand, 2019). We similarly find that fact-checks are more effective among people higher in analytic thinking. Interestingly, however, our data suggest the positive effects of analytic thinking do not translate into increased perceived credibility of fact-checkers. More analytical thinkers do not perceive fact-checkers as more credible than less analytical thinkers. Regarding the debate over whether analytic thinking magnifies motivated reasoning (see Hutmacher et al., 2022; Kahan, Peters et al., 2017; Pennycook & Rand, 2019; Tappin et al., 2021), our study suggests that analytic thinking does not amplify the effects of prior factual beliefs on reasoning. Instead of actively resisting counter-attitudinal fact-checks, people who are high in analytic thinking are more likely to update their beliefs in the direction of the fact-check.

While this study provides an evaluation of the effectiveness of fact-checkers across a comprehensive list of fact-checking sources, as well as investigates factors influencing their effectiveness in correcting misperceptions, its findings should be interpreted in light of several limitations. These include only being able to test a small number of misinformation messages on one topic (health) and in one culture (the U.S.). Unlike much prior research, our stimuli used non-politicized health news stories. It is possible that fact-checks could be more effective for non-politicized issues because people may be more willing to update their beliefs on issues they care less about or that are less emotionally arousing. Similarly, a fact-checker’s credibility might be more influential for non-politicized issues as people would likely be less entrenched in their beliefs about such issues, and thus the source of a corrective message could make more of a difference. Analytic thinking may also work differently for politicized versus non-politicized issues. In our study, analytic thinking was unrelated to perceptions of fact-checker credibility, but the results might differ if we had used politicized issues. All of this suggests that future studies using more diverse samples and story topics (e.g., political or controversial issues where motivated reasoning might be stronger or stories with high personal relevance) are needed.

Another limitation is that our findings apply only to people who found the misinformation at least somewhat credible, because we excluded those who completely disbelieved the misinformation (perceived pre-story credibility < 2). That said, our study still meaningfully highlights the effectiveness of fact-checks for those who are most vulnerable. Finally, the placement of the CRT between reading the news and seeing the fact-checks could have primed greater analytic thinking, and if it did, this would limit the generalizability of our findings on analytic thinking. Although there is no evidence that this happened, future research will need to vary the order of the measures to ensure earlier measures do not prime responses to later measures in systematically biased ways.

Despite these limitations, no studies so far have compared the five main sources of fact-checking in use today in terms of either their perceived credibility or their ability to reduce belief in misinformation. Our work thus advances existing research on fact-checking by investigating the full array of existing fact-checking sources, especially social media platforms and crowdsourcing that have been examined less in the literature to date, as well as AI that is currently garnering increased attention in the field. As such, our study provides a comprehensive picture of the effectiveness and perceived credibility of different fact-checking sources currently available to fight misinformation.

Indeed, surprisingly few studies have examined perceived credibility of fact-checkers at all. Rather, most research focuses on perceptions of the credibility of the misinformation content (i.e., a fake news story), the misinformation source, or the fact-check content. Our work instead draws attention to credibility of fact-checks vis-à-vis their source, helping to complete the picture of examining all aspects of source and message credibility in misinformation research. In addition, while studies of fact-checking effectiveness often compare effectiveness of different sources, they rarely offer a theoretical mechanism to explain why one fact-checking source may be more effective than another. By proposing source credibility perceptions as a mediator, our study theorizes and tests fact-checker credibility as the theoretical mechanism for differences in fact-checking effectiveness across sources. Our study also extends the scope of the MAIN model (Sundar, 2008) to the fact-checking context, though without empirical testing of the model and heuristics, which is the next step for future research. However, this study does offer the first test of a critical component of Jennings and Stroud’s (2023) recently proposed two-step motivated reasoning model, which says that people will evaluate whether to accept or reject an attempt to correct misinformation based on their impression (i.e., trust) of the fact-checking source. While Jennings and Stroud did not actually test their assertion, our research does so by examining fact-checker source credibility perceptions, and our results support Step 1 of their model.

Last, our finding that perceived fact-checker credibility acts as a moderator in the relationship between alignment and effectiveness offers an explanation for the mixed results in the literature, where fact-checks are sometimes effective for people with initial misbeliefs and sometimes not. Our data suggest that this is probably because corrective messages with strong source credibility cues can overcome motivated reasoning to persuade individuals who initially believed the misinformation to correct their beliefs, while a fact-checker with low credibility cannot be as effective. Similarly, existing empirical research on whether analytic thinking amplifies motivated reasoning is also mixed in the context of evaluating novel information. In light of this, and given that no studies have tested whether motivated reasoning amplifies resistance to counter-attitudinal corrective messages (which may trigger especially high levels of motivated reasoning), our research contributes to scholarship in these areas as well. In sum, our work advances understanding of conflicting findings in the corrections literature and emphasizes the important role credibility cues play in examining the effect of individual-level factors on persuasion effects, all within a context that has great social significance and urgency. Overall, this study heralds a promising future for fact-checkers in their continuing efforts to combat fake news, as well as for researchers in their further explorations of credibility perceptions and other individual factors as keys to understanding why and when fact-checks are likely to work.

Supplemental Material

Supplemental material, sj-docx-1-crx-10.1177_00936502231206419 for Checking the Fact-Checkers: The Role of Source Type, Perceived Credibility, and Individual Differences in Fact-Checking Effectiveness by Xingyu Liu, Li Qi, Laurent Wang and Miriam J. Metzger in Communication Research

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
