Abstract

Voters increasingly rely on social media for news and information about politics. But social media has also emerged as fertile soil for deliberately produced misinformation campaigns, conspiracy, and extremist alternative media. How does the sourcing of political news and information define contemporary political communication in different countries in Europe? To understand what users share in their political communication, we analyzed large volumes of political conversation on a major social media platform, in real time and in native languages, during the campaign periods of three major European elections. Rather than chasing a definition of what has come to be known as "fake news," we produce a grounded typology of what users actually shared and apply rigorous coding and content analysis to define the types of sources, compare them in context with known forms of political news and information, and contrast their circulation patterns in France, the United Kingdom, and Germany. Based on this analysis, we offer a definition of "junk news" that refers to deliberately produced misleading, deceptive, and incorrect propaganda purporting to be real news. In the first multilingual, cross-national comparison of junk news sourcing and consumption over social media, we analyze over 4 million tweets from three elections and find that (1) users across Europe shared substantial amounts of junk news in varying qualities and quantities, (2) amplifier accounts drive low to medium levels of traffic and news sharing, and (3) Europeans still share large amounts of professionally produced information from media outlets, but other traditional sources of political information, including political parties and government agencies, are in decline.

Introduction

Voters have access to an immense amount of information during elections, but it is not always clear that they are accessing quality information, in efficient ways, when they come to decide how to vote (Newman, Fletcher, Kalogeropoulos, Levy, & Nielsen, 2018; Woolley & Howard, 2017). Recently, the viral spread of misinformation has emerged as a central concern on the public agenda. Around the globe, a wide range of politically and economically motivated actors take advantage of social media platforms to inject public discourse with computational propaganda, conspiracy, and extremist rhetoric. In an effort to undermine the integrity of political processes, hostile foreign actors, radical groups at the fringes of the political spectrum, and profit-driven alternative outlets rely on a manipulative amalgam of deception, falsehoods, and misleading sensationalism. Amid calls to regulate social media platforms and to make them responsible for countering the spread of hyper-polarizing and hateful rhetoric, there is heightened concern about the effect of misinformation on democracy (Bradshaw, Neudert, & Howard, 2018; Persily, 2017; Tucker, Theocharis, Roberts, & Barberá, 2017; Wardle & Derakhshan, 2017).

Efforts to evaluate the quality of the political news and information shared during elections, especially over social media, face a number of methodological and epistemic challenges. A growing number of scholars have presented empirical evidence about how large volumes of incorrect information spread over social media. However, scholarly efforts to conceptualize the viral spread and weaponization of disinformation have remained contentious, and there is no scholarly consensus on definitions and nomenclature. In particular, "fake news" has proved difficult to operationalize: despite being common parlance, it is more of a political accusation than a concept for evaluating news quality.

Understanding what political news and information voters are sharing must be the starting point for any academic discussion, and any public conversation, about the impact of social media on public life. To address this shortcoming and to understand the salient qualities of political news and information on social media, this article puts forward a grounded typology of sources shared on social media. We collected 4.3 million tweets from three European elections in France, Germany, and the United Kingdom. Users in Europe posted links to 5,158 distinct domains, which informed our systematic analysis. Drawing from political communication theory, we developed a grounded typology of political news and information. We find that users across Europe shared substantial amounts of junk news in varying qualities and quantities, but that professionally produced news and information from media outlets dominated traffic on social media by a wide margin. However, other traditional sources of political news and information are in decline: political parties and governments generated relatively small amounts of news and information. Our analysis shows that traffic around the elections was predominantly organic, and amplifier accounts drove only low to medium levels of shares.

Our grounded typology categorized sources of political news and information at the domain level rather than assessing the veracity of individual stories. This approach allowed for a comprehensive analysis of each web source's reporting as a whole. Across the three elections, we consistently found a salient category of "junk news" purporting to be real political news and information, in varying amounts. Our methodology defines "junk news" as sources that meet at least three of the following five criteria: (1) professionalism, where sources fall short of the standards of professional journalistic practice, including information about authors, editors, and owners; (2) style, where inflammatory language, suggestion, ad hominem attacks, and misleading visuals are used; (3) credibility, where sources report unsubstantiated claims, rely on conspiratorial and dubious sources, and do not post corrections; (4) bias, where reporting is highly biased and ideologically skewed and opinion is presented as fact; and (5) counterfeit, where sources mimic established news publications in branding, design, and content presentation.

For scholarship on political communication to advance, it is crucial to form both an empirical and theoretical understanding of political news and information on social media during elections and how this content is shared. For each country in this comparative study, we have three research questions.

Which political parties dominated Twitter conversation during their national elections?

What are the salient quantities and qualities of sources of political news and information users shared over social media, and how much of this content was junk news?

To what extent was political conversation on social media driven by inauthentic amplifier accounts as opposed to organically shared human traffic?

First, we develop a distinct conceptual framework of "junk news." Second, we situate typology building as foundational for understanding new phenomena in the political communication literature. Third, we discuss our methodology for developing a grounded typology of the political news and information shared over Twitter during elections in France, the United Kingdom, and Germany. Fourth, we analyze patterns and trends in online public discourse across these three elections. To conclude, we discuss the implications of our findings for both democracy and political communication research.

Junk News in Contemporary Political Discourse

Disinformation, and specifically the spread of "fake news," has emerged as a prominent public issue in Europe (Marchal, Kollanyi, Neudert, & Howard, 2019). In the aftermath of the Brexit referendum and the 2016 presidential election in the United States, a growing number of scholars have become concerned with "fake news" phenomena. Political communication scholarship has increasingly examined the spread of fake news over online networks and messaging applications, its effects on human behavior and cognition, its relationship with digital content production, and its impact on public discourse and democracy (Del Vicario et al., 2016; Machado, Kira, Narayanan, Kollanyi, & Howard, 2019; Vicario et al., 2016; Vosoughi, Roy, & Aral, 2018). Despite a growing body of literature on the phenomenon, a consistent definition of fake news has yet to emerge. Current operationalizations of the term in political communication scholarship largely lack conceptual clarity and a comprehensive, grounded framework (Neudert & Marchal, 2019). In popular parlance, a wide range of phenomena, including malicious information operations, misleading headlines, alternative media outlets, and "click-bait," are referred to as fake news.

Given the diverse scope of the term, scholars have identified a range of epistemological challenges surrounding its definition, including its uncritical adoption in the popular vernacular and its appropriation by political actors (European Commission, 2018; Neudert, 2017). To address these shortcomings, we have developed a conceptual framework that is grounded in real-world data about what users are actually sharing on social media (on junk news conceptualization). Unlike other attempts to operationalize "fake news," our analysis is rooted in rigorous analysis of social media sharing behavior rather than in phenomenological evidence and hypothesizing. We analyzed 5,158 sources to develop a comprehensive definition of the new phenomenon. Junk news fulfills at least three of the following five criteria: (1) professionalism, (2) style, (3) credibility, (4) bias, and (5) counterfeit. Drawing on political communication literature, we identify an increasing prevalence of "junk news" that is distinct from other forms of problematic information online.

Our methodology for classifying sources distinguishes among the content of the domain as a whole (i.e., style, bias, credibility), information about its authors and organization (i.e., professionalism), and the layout and design of the domain itself (i.e., counterfeit). A source must exhibit at least three of these criteria to be labeled as junk news. For example, partisan news organizations that report with an ideological angle (bias) would not be considered junk news unless they also failed on at least two of the other criteria.
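The three-out-of-five rule can be stated as a small decision function. The sketch below assumes that coders record, for each domain, the set of criteria on which the source fails; the class name, function name, and example domains are hypothetical, and the judgments on each criterion remain manual.

```python
from dataclasses import dataclass

# The five criteria named in the text; a source "meets" a criterion when it fails that standard.
CRITERIA = ("professionalism", "style", "credibility", "bias", "counterfeit")
JUNK_THRESHOLD = 3  # at least three of the five criteria


@dataclass(frozen=True)
class SourceAssessment:
    domain: str
    failed: frozenset  # subset of CRITERIA recorded by the coders

    def __post_init__(self):
        unknown = set(self.failed) - set(CRITERIA)
        if unknown:
            raise ValueError(f"unknown criteria: {sorted(unknown)}")


def is_junk_news(assessment: SourceAssessment) -> bool:
    """Apply the three-out-of-five rule to one coded domain."""
    return len(assessment.failed) >= JUNK_THRESHOLD


# Hypothetical partisan outlet: fails only on bias, so it is not junk news.
partisan = SourceAssessment("partisan.example", frozenset({"bias"}))
# Hypothetical outlet that also fails on style and credibility: junk news.
junk = SourceAssessment("junk.example", frozenset({"bias", "style", "credibility"}))

print(is_junk_news(partisan))  # False
print(is_junk_news(junk))      # True
```

Recording the failed criteria as a set, rather than only the final label, keeps the reason for each classification available for later review.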

Professionalism

Junk news domains that fail on the professionalism criterion purposefully refrain from providing clear information about real authors, editors, publishers, and owners, and do not publish corrections on debunked information.

Counterfeit

Counterfeit sources mimic established news reporting, including its fonts, branding, and design. Junk news is stylistically disguised as professional news, with references to news wire services and credible sources, as well as headlines written in a news tone with date, time, and location stamps. In pronounced cases, outlets copy logos and use counterfeit domains.

Style

Style is concerned with the literary devices and language used in news reporting. Junk news employs propaganda techniques that appeal to emotions rather than cognition, systematically manipulating users for political purposes. These techniques include emotional language with emotive expressions and symbolism, ad hominem attacks, misleading headlines, exaggeration, inflammatory language, unwarranted generalizations, logical fallacies, moving images, and heavy use of pictures or mobilizing memes.

Bias

Junk news sources that fail on bias are highly biased and ideologically skewed in the selection and presentation of their reporting, and they publish opinion pieces as news. They exhibit systematic distortions in the mapping from facts to news reports, ranging from strikingly different accounts of what actually transpired to misrepresentation and selective reporting.

Credibility

Websites that fall short on credibility typically report unsubstantiated claims and cite conspiratorial and dubious sources to support their reporting. They do not vet their sources, fact-check, or consult multiple sources.

Typologies and New Modes of Political Communication

Content typologies are a foundational element of political research and are especially relevant for examining and explicating new phenomena, unanticipated problems, or rapid changes in social systems (Aronovitch, 2012; Howard & Hussain, 2013; Swedberg, 2018). Broad categories of political news and communication have remained largely persistent over the years and have been transported across national, cultural, and technological contexts, though a number of scholars have debated the applicability of traditional frameworks to new media (see Earl, Martin, McCarthy, & Soule, 2004; Karlsson & Sjøvaag, 2016). Recently, scholarly attention, especially among those working on political communication and social media, has shifted to the effects of the viral spread of disinformation and extremist alternative media across digital media ecosystems, prompting a debate about the models, standards, and values of content and the ideational impact of political news and information. New categories and definitions grounded in the content that users share over social media have been brought forward (Kalogeropoulos, Negredo, Picone, & Nielsen, 2017; Tandoc, Lim, & Ling, 2018). However, the current debate lacks a comparative framework of the types of information that circulate on social media that is grounded in methodically collected data on social media sharing behavior rather than in phenomenological evidence.

Certainly, propaganda, disinformation, and negative campaigning are not new phenomena of public life. But social media platforms offer new affordances for spreading political news and information at great speed and scale, and for using individuals' own data to target disinformation campaigns. Typologies in political communication have been useful for making sense of a diversity of variables and cases: they can accurately describe the features of new phenomena and identify concepts that transport across cases, particularly for real-world outcomes in which media accounts provide the primary record of important incidents (Althaus, Edy, & Phalen, 2001; Erickson & Howard, 2007). In particular, typology building allows for meaningful comparisons of the type and format of political news and information across countries (McMenamin, Flynn, O'Malley, & Rafter, 2013).

We advance this debate with a rigorously composed typology based on a focused, cross-country comparison of social media content during three elections in large consolidated democracies in Europe: France, the United Kingdom, and Germany. These elections offered a unique opportunity for comparing political news and information across countries. The close proximity of the campaign periods allows for a focused comparison that can both establish a content typology and bring into sharp relief the role political culture might play in explaining the relative amounts of different types of content circulating in public life.

Methods and Sampling

To understand the circulation of political news and information during sensitive political events, we performed a real-time analysis of political news and information shared on Twitter during three European elections in 2017. In all three countries, the elections generated large volumes of political debate and media coverage on Twitter. As critical moments of political life, elections spark heightened public interest in political events, which is also reflected in voters' behavior online (Howard, 2005). Boczkowski and Mitchelstein (2012) note that in times of heightened political activity, users share more information on public issues.

Drawing from our real-time data collections, we developed a grounded typology of political news and information that is persistent across the three cases. Our methodology evaluates sources of political news and information at the level of the domain. This approach allows for a highly contextual evaluation that considers the reporting, design, and practices of content production as a whole. Such a comprehensive evaluation is especially necessary because junk news sources occasionally mix in factual reporting and news wire reports to signal legitimacy.

We collected data via Twitter's public Streaming API, which comes with some known limitations. The Streaming API returns tweets matching a search query only up to a cap of 1% of all global public tweets at any given time, and Twitter has not released the precise sampling method. The representativeness of its samples has been questioned (Blank, 2017; Morstatter, Pfeffer, Liu, & Carley, 2013). Nonetheless, many scholars have found this approach highly useful for studying "ad hoc publics," especially around political events (Burgess & Bruns, 2012; Larsson & Moe, 2012). Conducting the analysis in real time has the advantage of capturing content before it is removed or before non-permanent URLs become invalid.

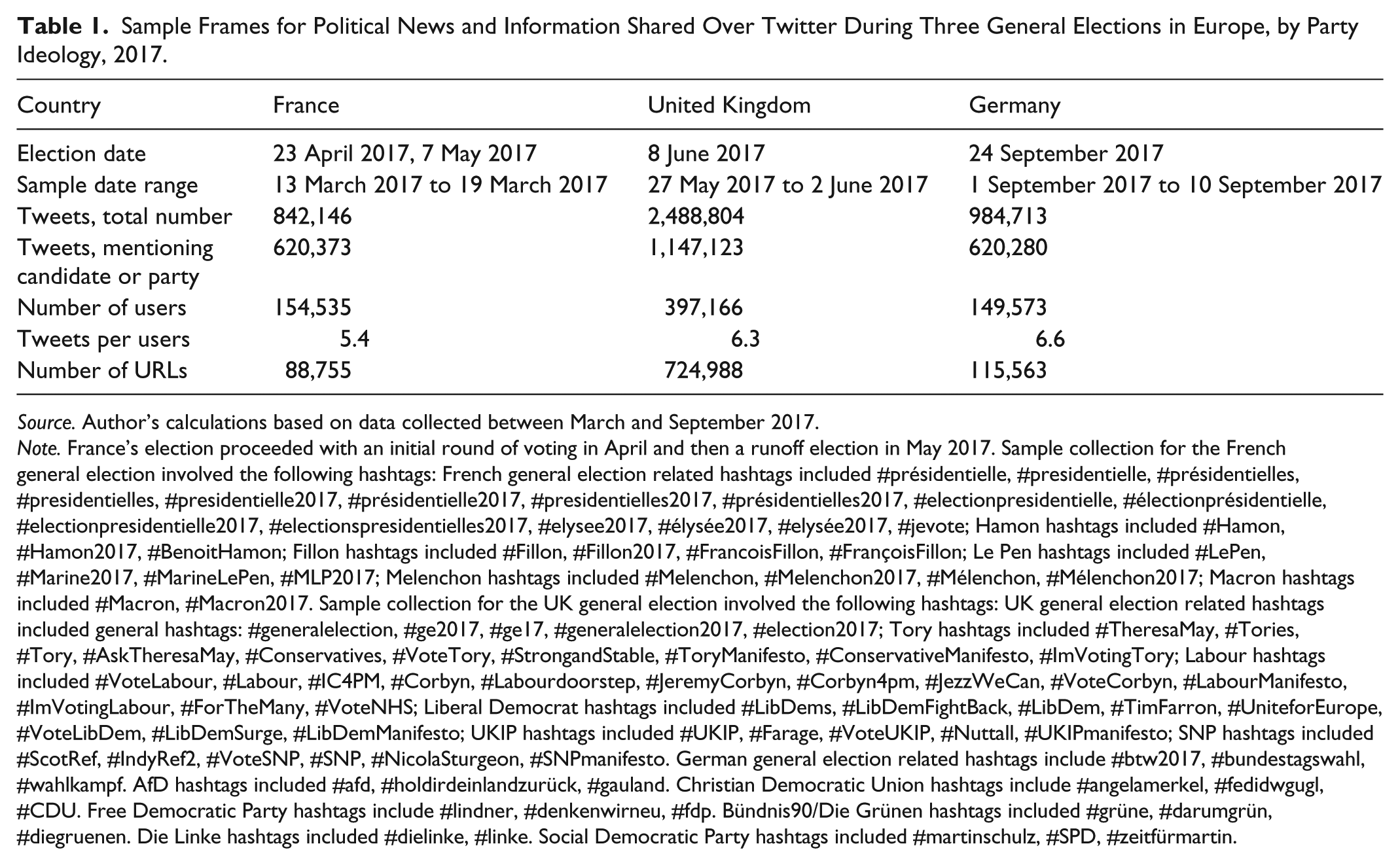

Our methodology followed four stages. First, we manually identified relevant hashtags about politics and the election for each country and language. These usually included the names of leading candidates, political parties, official campaign slogans, and salient election-specific issues. We pre-tested the manually identified hashtags for relevance in test data collections, which we ran for at least a week in the month before each election. From these test datasets, we identified additional salient hashtags based on co-use and frequency of use. For each country, our team reviewed a list of 200 to 300 candidate hashtags, along with their total number of shares, for relevance. This sampling method allows us to capture the salient political conversation about the elections on which this study focuses. Our approach excluded minor hashtags that refer to small or short-lived issues, and it does not capture conversation that users shared without using hashtags. A complete list of hashtags for each election can be found in Table 1.
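The co-use step can be illustrated with a simple co-occurrence count over a pre-test collection. This is a hypothetical sketch, assuming each tweet has already been reduced to its list of hashtags; it only produces a ranked candidate list, which in the study was then reviewed manually for relevance. The `top_n=300` default merely mirrors the upper end of the 200 to 300 hashtags reviewed per country.

```python
from collections import Counter


def candidate_hashtags(tweets, seeds, top_n=300):
    """Rank non-seed hashtags by how often they co-occur with seed hashtags.

    tweets: iterable of hashtag lists, one per tweet (assumed input format)
    seeds: manually identified hashtags
    Returns (hashtag, co-use count, total count) tuples, most co-used first.
    """
    seeds = {s.lower() for s in seeds}  # hashtags are case-insensitive on Twitter
    co_use, total = Counter(), Counter()
    for tags in tweets:
        tags = {t.lower() for t in tags}  # count each hashtag once per tweet
        total.update(tags)
        if tags & seeds:
            co_use.update(tags - seeds)
    ranked = sorted(co_use, key=lambda t: (-co_use[t], -total[t], t))
    return [(t, co_use[t], total[t]) for t in ranked[:top_n]]


# Toy pre-test data (invented for illustration)
sample = [
    ["GE2017", "VoteLabour"],
    ["ge2017", "ForTheMany", "VoteLabour"],
    ["StrongAndStable", "GE2017"],
    ["cats"],
]
for row in candidate_hashtags(sample, seeds={"GE2017"}):
    print(row)
```

Ties are broken by overall frequency and then alphabetically, so the output for a given collection is deterministic.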

Sample Frames for Political News and Information Shared Over Twitter During Three General Elections in Europe, by Party Ideology, 2017.

Source. Authors' calculations based on data collected between March and September 2017.

Note. France's election proceeded with an initial round of voting in April and then a runoff election in May 2017. Sample collection for the French election involved the following hashtags. General election-related hashtags included #présidentielle, #presidentielle, #présidentielles, #presidentielles, #presidentielle2017, #présidentielle2017, #presidentielles2017, #présidentielles2017, #electionpresidentielle, #électionprésidentielle, #electionpresidentielle2017, #electionspresidentielles2017, #elysee2017, #élysée2017, #elysée2017, #jevote; Hamon hashtags included #Hamon, #Hamon2017, #BenoitHamon; Fillon hashtags included #Fillon, #Fillon2017, #FrancoisFillon, #FrançoisFillon; Le Pen hashtags included #LePen, #Marine2017, #MarineLePen, #MLP2017; Mélenchon hashtags included #Melenchon, #Melenchon2017, #Mélenchon, #Mélenchon2017; Macron hashtags included #Macron, #Macron2017.

Sample collection for the UK general election involved the following hashtags. General election-related hashtags included #generalelection, #ge2017, #ge17, #generalelection2017, #election2017; Tory hashtags included #TheresaMay, #Tories, #Tory, #AskTheresaMay, #Conservatives, #VoteTory, #StrongandStable, #ToryManifesto, #ConservativeManifesto, #ImVotingTory; Labour hashtags included #VoteLabour, #Labour, #IC4PM, #Corbyn, #Labourdoorstep, #JeremyCorbyn, #Corbyn4pm, #JezzWeCan, #VoteCorbyn, #LabourManifesto, #ImVotingLabour, #ForTheMany, #VoteNHS; Liberal Democrat hashtags included #LibDems, #LibDemFightBack, #LibDem, #TimFarron, #UniteforEurope, #VoteLibDem, #LibDemSurge, #LibDemManifesto; UKIP hashtags included #UKIP, #Farage, #VoteUKIP, #Nuttall, #UKIPmanifesto; SNP hashtags included #ScotRef, #IndyRef2, #VoteSNP, #SNP, #NicolaSturgeon, #SNPmanifesto.

Sample collection for the German general election involved the following hashtags. General election-related hashtags included #btw2017, #bundestagswahl, #wahlkampf; AfD hashtags included #afd, #holdirdeinlandzurück, #gauland; Christian Democratic Union hashtags included #angelamerkel, #fedidwgugl, #CDU; Free Democratic Party hashtags included #lindner, #denkenwirneu, #fdp; Bündnis90/Die Grünen hashtags included #grüne, #darumgrün, #diegruenen; Die Linke hashtags included #dielinke, #linke; Social Democratic Party hashtags included #martinschulz, #SPD, #zeitfürmartin.

Second, based on these hashtags, we collected tweets that (1) contained the selected hashtags; (2) contained a URL to a web source, such as a news article, where the URL or the title of the web source included a selected hashtag; (3) were retweets that contained a message's original text, wherein a selected hashtag was used either in the retweet or in the original tweet; or (4) were quote tweets where the original text is not included but Twitter uses a URL to refer to the original tweet. Tweets with URLs that pointed toward another tweet were removed from our sample, as these URLs are generally generated automatically when someone quotes a tweet.
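Inclusion rules (1) through (3) can be sketched as a whole-word, case-insensitive hashtag match across the tweet text, the linked URL and its title, and the original text of a retweet. The flat `tweet` dict and its keys are a hypothetical simplification, not Twitter's actual payload, and quote-tweet handling (rule 4) is left out of this illustration.

```python
import re


def contains_tag(text, tag):
    """Case-insensitive whole-word match of a tag (given without '#') in text.

    The match must not be preceded or followed by a word character, so 'ge2017'
    matches '#GE2017' and '.../ge2017-live' but not '#ge20170'.
    """
    pattern = rf"(?<!\w){re.escape(tag)}(?!\w)"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def in_sample_frame(tweet, tags):
    """Return True if a simplified tweet record mentions any tracked tag.

    `tweet` is a hypothetical flat dict with optional keys: 'text' (the tweet
    itself), 'url' and 'url_title' (the linked web source), and
    'retweeted_text' (original text of a retweeted message).
    """
    fields = ("text", "url", "url_title", "retweeted_text")
    values = [tweet[f] for f in fields if tweet.get(f)]
    return any(contains_tag(value, tag) for value in values for tag in tags)


TAGS = {"ge2017", "votelabour"}
print(in_sample_frame({"text": "Polls open today #GE2017"}, TAGS))  # True
print(in_sample_frame({"text": "Nothing political"}, TAGS))         # False
```

Whole-word matching avoids pulling in unrelated hashtags that merely start with a tracked string; a production pipeline would more likely rely on the hashtag entities the API already extracts.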

In total, we collected 843,146 tweets in France; 2,488,804 in the United Kingdom; and 984,713 in Germany. We then extracted all URLs that Twitter users in our sample shared. We identified 88,755 URLs in France; 724,988 in the United Kingdom; and 115,563 in Germany. If Twitter users shared more than one URL in a tweet, only the first URL was analyzed. For each country, we prepared a random sample of 10% of tweets for coding. Coding decisions were later applied to the entire datasets.
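The first-URL rule and the 10% coding sample might look as follows. This is a minimal sketch: the set of Twitter hosts, the function names, and the fixed random seed are assumptions added to make the illustration reproducible, not details reported by the authors.

```python
import random
from urllib.parse import urlparse

# Hosts whose /status/ links point to another tweet (assumed, not exhaustive)
TWITTER_HOSTS = {"twitter.com", "www.twitter.com", "mobile.twitter.com"}


def points_to_tweet(url):
    """True for links of the form https://twitter.com/<user>/status/<id>."""
    parsed = urlparse(url)
    return (parsed.hostname or "") in TWITTER_HOSTS and "/status/" in parsed.path


def first_url(urls):
    """Return the first URL shared in a tweet, or None if the tweet has no URL
    or its first URL merely points to another tweet."""
    if not urls:
        return None
    return None if points_to_tweet(urls[0]) else urls[0]


def coding_sample(tweets, fraction=0.10, seed=2017):
    """Draw a reproducible simple random sample (default 10%) for manual coding."""
    tweets = list(tweets)
    k = round(len(tweets) * fraction)
    return random.Random(seed).sample(tweets, k)


print(first_url(["https://a.example/x", "https://b.example/y"]))  # https://a.example/x
print(len(coding_sample(range(1000))))                            # 100
```

Seeding a dedicated `random.Random` instance keeps the sample reproducible without altering global random state elsewhere in a pipeline.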

Third, we prepared our tweets for semi-automated analysis by compiling a simple spreadsheet that listed the base URL of each web source, along with random samples of full URLs and their frequency of shares. URLs that had been shortened with a link shortener (such as bit.ly) were unwrapped, where possible, with a script our team developed and added to the spreadsheet for analysis. We also unwrapped URLs to social media posts that were sharing URLs (e.g., a tweet about a public Facebook post linking to a news story).
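The team's unwrapping script is not described further. A minimal stand-in using only the Python standard library is sketched below: `base_url` reduces a link to the host used as the coding unit, and `unwrap` follows HTTP redirects, which `urllib.request.urlopen` does by default. The list of shortener domains and the choice to strip a leading `www.` are assumptions for illustration.

```python
import urllib.request
from urllib.parse import urlparse

# Illustrative, incomplete list of shortener domains (an assumption)
SHORTENERS = {"bit.ly", "ow.ly", "tinyurl.com", "goo.gl", "buff.ly"}


def base_url(url):
    """Reduce a full URL to its lower-cased host without a leading 'www.'
    (e.g. 'https://www.BBC.co.uk/news' -> 'bbc.co.uk')."""
    host = urlparse(url).hostname or ""  # .hostname is already lower-cased
    return host[4:] if host.startswith("www.") else host


def is_shortened(url):
    return base_url(url) in SHORTENERS


def unwrap(url, timeout=10.0):
    """Follow the redirects of a shortened link (via a GET request) and return
    the final URL. Returns the input unchanged if it is not a known shortener
    or if the request fails, e.g. because the link is dead."""
    if not is_shortened(url):
        return url
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.geturl()
    except (OSError, ValueError):  # URLError/HTTPError/timeouts are OSErrors
        return url
```

Falling back to the original link keeps dead or blocked short links in the spreadsheet, where they can still be coded manually or marked as not available.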

Finally, using an iterative coding process, our team developed a grounded typology, described in detail below, and manually categorized base URLs. Our team labeled a total of 5,158 individual web sources, of which 1,033 were shared in France; 2,579 in the United Kingdom; and 2,579 in Germany. Using a simple Python script, we applied the coding decisions for base URLs to the individual links that users shared across the entire dataset. Sources missing from our 10% sample were categorized in this step. Using this method, we were able to precisely classify 5,158 web sources. Following these consistent steps to sample, collect, clean, and code data allows for safer comparison and generalization across the country case studies. Table 1 summarizes the comparative sampling frames for this study.
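Propagating domain-level decisions to every shared link amounts to a dictionary lookup keyed by base URL. The sketch below is a hypothetical reconstruction of that idea, not the authors' script; the codebook entries and links are invented, and domains absent from the codebook are collected so they can be queued for manual coding.

```python
from collections import Counter
from urllib.parse import urlparse


def base_url(url):
    """Lower-cased host without a leading 'www.' (a simplifying assumption)."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def apply_codes(shared_urls, codebook):
    """Propagate domain-level coding decisions to every shared link.

    shared_urls: full URLs from the entire dataset (one per tweet)
    codebook: dict mapping base URL -> subcategory chosen by the coders
    Returns (links per subcategory, base URLs that still need manual coding).
    """
    coded, uncoded = Counter(), Counter()
    for url in shared_urls:
        domain = base_url(url)
        label = codebook.get(domain)
        if label is None:
            uncoded[domain] += 1  # not in the coded sample: queue for coders
        else:
            coded[label] += 1
    return coded, uncoded


# Hypothetical codebook entries and links, for illustration only
codebook = {"bbc.co.uk": "Major News Brands", "junk.example": "Junk News and Information"}
links = [
    "https://www.bbc.co.uk/news/a",
    "https://bbc.co.uk/news/b",
    "https://junk.example/story",
    "https://unseen.example/post",
]
coded, uncoded = apply_codes(links, codebook)
print(dict(coded), dict(uncoded))
```

Counting uncoded domains by frequency lets coders prioritize the most widely shared missing sources first.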

Typologizing Political News and Information on Social Media

The process of cataloging content involved several iterative stages, following best practices in both concept formation in typology building and content analysis (Collier, LaPorte, & Seawright, 2012; Earl et al., 2004). The crucial first stage of this process involves developing an initial training dataset, testing our assumptions and definitions on real data, which we then re-evaluated and refined over multiple rounds.

First, we developed a preliminary grounded typology of political news and information shared over social media using a test dataset. We began with a sample of URLs shared in the United States during that country's 2016 presidential election, an important moment in which the term "fake news" first emerged. The team elaborated four broad categories: (1) professional news outlets, (2) established political actors, (3) polarizing and conspiratorial content, and (4) other sources of political news and information (Howard, Kollanyi, Bradshaw, & Neudert, 2017).

Within those categories, we developed a system of subtypes of sources. Rather than transporting a US-centric typology onto the political cultures of France, the United Kingdom, and Germany, our coding procedure often involved full-team negotiations over subcategory labels, definitions, and coding decisions. This analytical flexibility allowed us to adapt as we met classification challenges, sometimes introducing new categories to account for differences among the three political cultures.

To examine junk news sources, coders browsed each domain for information about authors, owners, and funding sources, and corroborated this information with third-party sources such as Wikipedia. This helped inform the team's Professionalism and Counterfeit decisions. Coders also searched for assessments of the domain by international and national third-party fact-checkers, such as Full Fact, Correctiv, AFP Fact Check, and Media Bias/Fact Check, to improve the certainty of judgments on criteria such as credibility and bias. Style was evaluated based on visual and aesthetic cues, including the images, wording, and videos used. Overall, developing this comprehensive typology of sources involved over 1,500 hours of coding, biweekly review meetings, and six training sessions. The typology reflects 22 months of iterative coding procedures, during which it was also applied in other country contexts, including Mexico, the United States, Sweden, and Brazil (Glowacki et al., 2018; Hedman et al., 2018; Marchal, Neudert, Kollanyi, & Howard, 2018).

Subsequently, we developed a rigorous training system to train our team of language-specific experts in working with our typology. For this stage, a dedicated team of five coders worked on both US and European Union (EU) data in an iterative process. Coding and training on the shared English-language dataset from the United States achieved an intercoder reliability score of Krippendorff's alpha = .89 among our groups of language-specific experts, signaling good concept formation across national contexts and high proficiency with our method. We chose the English-language dataset because all of our team members were fluent in English, which allowed for a comparative cross-country evaluation and a meaningful assessment of the quality of coding decisions by country. To measure intercoder reliability, our team categorized web sources from our full list of base URLs in random sets of 50 sources. Individual cases in which the experts did not reach consensus were re-evaluated in face-to-face team meetings. We did not calculate intercoder reliability scores within the language-specific teams of two to three coders, but, as reported above, the coders achieved sufficiently high scores on the shared dataset.
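For readers unfamiliar with the reliability statistic, the sketch below computes Krippendorff's alpha for nominal codes from the coincidence matrix, as alpha = 1 - D_o/D_e. It is a generic implementation of the standard formula, not the authors' tooling; the toy labels are invented and do not reproduce the reported .89.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal data.

    units: list of lists; each inner list holds the labels that the coders
    assigned to one web source (missing codes are simply omitted).
    Units coded by fewer than two coders are not pairable and are skipped.
    """
    coincidences = Counter()  # o_ck, summed over ordered pairs of coders
    for labels in units:
        m = len(labels)
        if m < 2:
            continue
        for a, b in permutations(labels, 2):
            coincidences[(a, b)] += 1 / (m - 1)
    n_c = Counter()  # marginal totals per category
    for (a, _b), weight in coincidences.items():
        n_c[a] += weight
    n = sum(n_c.values())
    observed = sum(w for (a, b), w in coincidences.items() if a != b)
    expected = sum(n_c[a] * n_c[b] for a in n_c for b in n_c if a != b)
    if expected == 0:
        raise ValueError("alpha is undefined when only one category is used")
    # alpha = 1 - D_o / D_e with D_o = observed / n and D_e = expected / (n * (n - 1))
    return 1 - (n - 1) * observed / expected


# Two coders, four sources; they disagree only on the last one.
toy = [["A", "A"], ["A", "A"], ["B", "B"], ["A", "B"]]
print(round(krippendorff_alpha_nominal(toy), 3))  # 0.533, i.e. 8/15
```

Because missing codes are simply omitted per unit, the same function handles sets in which not every coder labeled every source.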

Our system of analysis brought to light valuable distinctions across major categories of political news and information, and important nuances in subcategories. Professional News and Information was defined largely by the professional reputation of the organization behind the source, with two subcategories. Major News Brands included large sources that adhere to the standards and best practices of professional journalism, with known fact-checking operations and credible standards of production, including clear information about real authors, editors, publishers, and owners. This content came from significant, branded news organizations, including any locally affiliated broadcasters. It also included links to tabloids, unless they failed on at least three of the five criteria for junk news. This assessment is in line with recent political communication research, which finds that tabloids can exhibit "elective affinities" with disinformation and junk news (Chadwick, Vaccari, & O'Loughlin, 2018). New Media and Start-Ups were digitally native outlets or start-ups that display evidence of organization, resources, and professionalized output that distinguishes between fact-checked news and commentary.

Professional Political Sources are produced by traditional political actors whose bulletins, working papers, websites, and reports provide citizens with information that is relevant to political processes. The subcategory Political Parties or Candidates was defined as official content produced by a political party or campaign. The Government subcategory covered links to government websites and reports from public agencies. Finally, content from Experts took the form of white papers, policy papers, or scholarship from researchers based at universities, think tanks, or other research organizations.

The third category, Divisive and Conspiracy Sources, included various forms of unreliable and misleading reporting. The most important subcategory here was Junk News and Information. A source was labeled as junk news when it fulfilled at least three of our five criteria (professionalism, style, credibility, bias, and counterfeit). The subcategory Russia consisted exclusively of state-funded Russian sources, such as Russia Today and Sputnik, owing to their known slanted reporting and application of propaganda techniques (Helmus, 2018).

The fourth category included a host of Other Political News and Information sources. These sources included many kinds of political actors who typically generate political news and information during an election. The subcategory Citizen, Civic, and Civil Society was used to label links to content produced by independent citizens, civic groups, or civil society organizations; watchdogs; and interest and lobby groups. It included blogs and websites dedicated to citizen journalism, citizen-generated petitions, personal activism, and other forms of civic engagement. We sparingly used a subcategory of Other Political Content to capture myriad other kinds of political content, including portals like AOL and Yahoo! that do not themselves have editorial policies or news content, survey providers, and political documentary movies. Humor and Entertainment was used to label sources that produce political jokes, sketch comedy, and political art.

Large numbers of URLs simply referred to content on other social media platforms or were themselves spam, so we needed a major Other category. Links labeled as Social Media Platforms simply referred to other social media platforms, such as Facebook or Instagram. If the content linked to on social media platforms could be attributed to another source of information (e.g., when users posted links to news articles shared on Facebook), it was coded as that source. Other Non-Political covered sites that did not appear to provide political information even though they were shared in tweets using election-related hashtags. Spam was also included in this category.

We also created a separate Language subcategory for links to content that the coding team could not interpret. An additional category, Not Available, included subcategories of links that were inaccessible after repeated attempts. Where possible, we used the Internet Archive's Wayback Machine to code such sources.
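The text does not say how the Wayback Machine was queried. One option is the Internet Archive's public availability endpoint, which returns the archived snapshot closest to a requested date as JSON. The sketch below assumes that endpoint and its `archived_snapshots.closest` response shape; the helper names are hypothetical, and only `find_snapshot` performs a network request.

```python
import json
import urllib.request
from urllib.parse import urlencode

AVAILABILITY_ENDPOINT = "https://archive.org/wayback/available"


def availability_query(url, timestamp=None):
    """Build an availability query, optionally asking for a snapshot near a
    date given as YYYYMMDD (e.g. the day a link was shared)."""
    params = {"url": url}
    if timestamp:
        params["timestamp"] = timestamp
    return f"{AVAILABILITY_ENDPOINT}?{urlencode(params)}"


def closest_snapshot(payload):
    """Extract the closest archived copy's URL from a parsed response, or None."""
    closest = payload.get("archived_snapshots", {}).get("closest")
    if closest and closest.get("available"):
        return closest.get("url")
    return None


def find_snapshot(url, timestamp=None, timeout=10.0):
    """Query the endpoint and return the closest snapshot URL, if any."""
    with urllib.request.urlopen(availability_query(url, timestamp), timeout=timeout) as response:
        return closest_snapshot(json.load(response))
```

Asking for a snapshot near the sharing date makes it more likely that coders see the page as it appeared during the campaign rather than a later version.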

There were three subcategories that were relevant in the training data but not in these three European cases. Links to unverified WikiLeaks content, political merchandise, and religious content were found in the US training data but rarely or not at all present in the case study data. If we found individual instances of such content, it was cataloged as Other Political.
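The category and subcategory labels described above can be captured as a simple lookup table, so that link counts per subcategory roll up to the major categories. This is a sketch for illustration: the exact label of the Government subcategory, the placement of Language under Other, and the single placeholder subcategory for Not Available are assumptions, since the text does not name them precisely.

```python
from collections import Counter

# Categories and subcategories as described in the text (some labels assumed).
TYPOLOGY = {
    "Professional News and Information": ("Major News Brands", "New Media and Start-Ups"),
    "Professional Political Sources": ("Political Parties or Candidates", "Government", "Experts"),
    "Divisive and Conspiracy Sources": ("Junk News and Information", "Russia"),
    "Other Political News and Information": (
        "Citizen, Civic, and Civil Society",
        "Other Political Content",
        "Humor and Entertainment",
    ),
    "Other": ("Social Media Platforms", "Other Non-Political", "Language"),
    "Not Available": ("Not Available",),
}

# Reverse lookup from subcategory to category; fails loudly on reused names.
SUBCATEGORY_TO_CATEGORY = {}
for category, subcategories in TYPOLOGY.items():
    for sub in subcategories:
        if sub in SUBCATEGORY_TO_CATEGORY:
            raise ValueError(f"duplicate subcategory: {sub}")
        SUBCATEGORY_TO_CATEGORY[sub] = category


def rollup(subcategory_counts):
    """Aggregate link counts per subcategory into counts per major category."""
    totals = Counter()
    for sub, count in subcategory_counts.items():
        totals[SUBCATEGORY_TO_CATEGORY[sub]] += count
    return totals


print(dict(rollup({"Major News Brands": 3, "New Media and Start-Ups": 2, "Russia": 1})))
```

An unknown subcategory raises a `KeyError` in `rollup`, which surfaces coding typos instead of silently dropping links.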

Cross-Case Comparison

For our analysis of political news and information and of automation, we collected data on Twitter for elections in France, the United Kingdom, and Germany. Tables 1 and 2 provide details about the data collections, including hashtags, dates, and candidates across countries. Twitter does not provide official information on active users by country, but according to the Reuters Digital News Report 2018, Twitter ranks among the top two social media platforms in the United Kingdom and among the top four in France and Germany, with growing importance for news consumption, except in France, where its importance is stagnating (Newman et al., 2018).

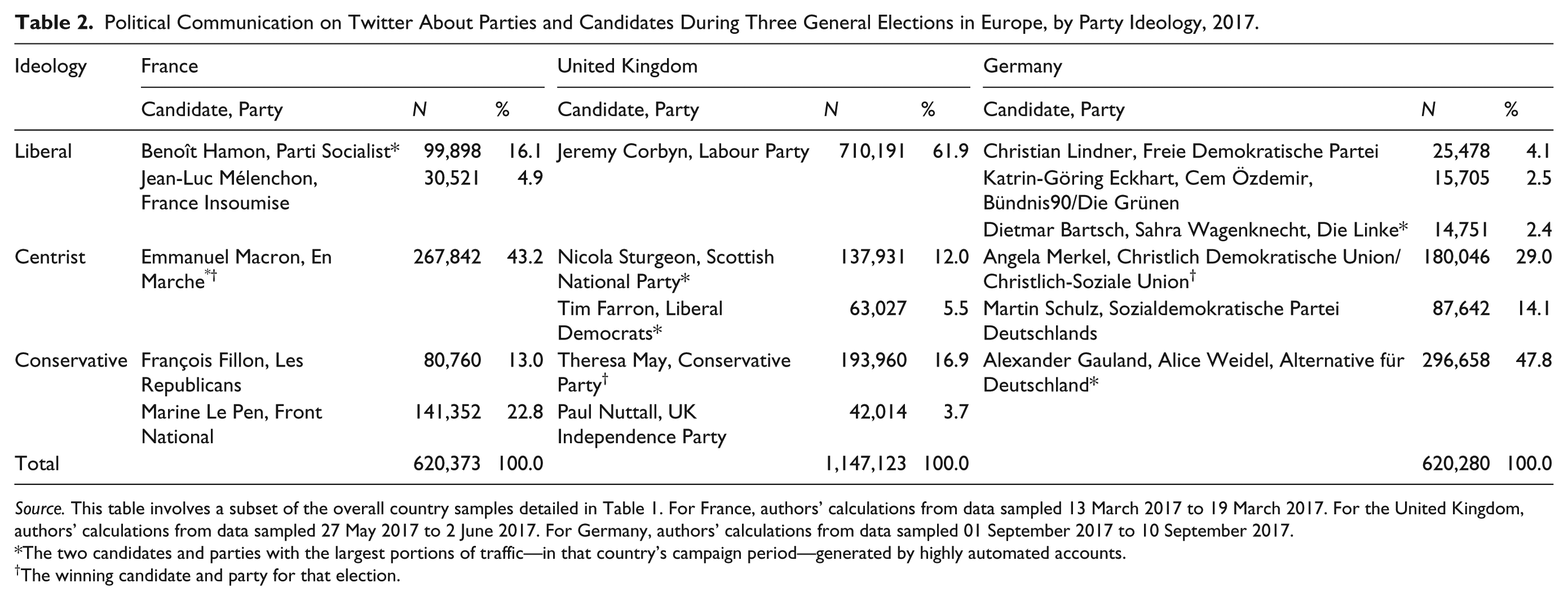

Political Communication on Twitter About Parties and Candidates During Three General Elections in Europe, by Party Ideology, 2017.

Source. This table involves a subset of the overall country samples detailed in Table 1. For France, authors’ calculations from data sampled 13 March 2017 to 19 March 2017. For the United Kingdom, authors’ calculations from data sampled 27 May 2017 to 2 June 2017. For Germany, authors’ calculations from data sampled 1 September 2017 to 10 September 2017.

* The two candidates or parties in each country’s campaign period with the largest portions of traffic generated by highly automated accounts.

The winning candidate and party for that election.

In France, the first round of the presidential election was held on 23 April 2017. Marine Le Pen of the conservative, nationalist Front National and François Fillon of Les Républicains led in early polls (Lemarié & Goar, 2017). Emmanuel Macron ran for the centrist, social-liberal En Marche!, which he founded in April 2016. Benoît Hamon was the candidate of the ruling Parti Socialiste. As no candidate won a majority in the first round, a run-off between the top two candidates, Macron and Le Pen, was held on 7 May 2017, which Macron won by a decisive margin. It was the first time since 2002 that a Front National candidate had reached the final round, which some experts attributed to a new populist movement following several terrorist attacks (McAuley, 2017). In the lead-up to the French election, several far-right conspiracy theories and falsehoods with xenophobic, anti-Islam, and anti-Semitic references spread over social media (BBC News, 2017). Shortly before the run-off, Macron was targeted with a fictitious email leak that was promoted by highly automated accounts under the hashtag #Macronleaks (Ferrara, 2017).

The UK general election took place on 8 June 2017. Following the Brexit referendum in 2016, Prime Minister Theresa May had called the snap election just 2 months earlier (Birrell, 2017). May ran for the Conservative Party, and Jeremy Corbyn was Labour’s candidate. The governing Conservative Party was confirmed as the single largest party in the House of Commons but lost its majority, which resulted in the formation of a minority government (Hunt, 2016). Targeted propaganda and the spread of misinformation had emerged as critical concerns on the public agenda in response to the Brexit referendum. In January 2017, the Culture, Media and Sport Committee launched an inquiry into “fake news” (House of Commons Select Committee, 2017). During the Brexit referendum campaign, highly automated social media accounts were active for both the Leave and Remain campaigns, generating large amounts of traffic (Howard & Kollanyi, 2016).

The German federal election was held on 24 September 2017 and returned Angela Merkel as chancellor for a fourth term. Merkel was the lead candidate of the Christlich Demokratische Union (CDU) and its sister party, the Christlich-Soziale Union (CSU). Martin Schulz was the candidate of the Sozialdemokratische Partei Deutschlands (SPD). While Merkel’s CDU won the vote, the party suffered substantial losses. Although Schulz had led the polls by a clear margin for months, the SPD lost its lead and achieved its lowest postwar result (Frank, 2018). Entering the Bundestag for the first time, the ultra-conservative Alternative für Deutschland (AfD) became the third-largest party. Concerns over computational propaganda had emerged as a central political issue in the lead-up to the election (Neudert, 2017). All of the major German parties committed to refrain from using automation for campaigning, and German lawmakers introduced a stringent law, the Network Enforcement Act (NetzDG), to counter hate speech and illegal misinformation (Stern, 2017).

Comparative Findings and Analysis

Candidate and Party Coverage on Social Media in Three Elections

Which political parties dominated Twitter conversation during their national elections? A number of political communication scholars have examined the central role of political parties for setting the agenda and influencing online discourse over social media (Howard, 2006; Kreiss, 2016; Nielsen & Vaccari, 2013). Our first point of analytical comparison shows patterns in how often each candidate and their respective parties were discussed on Twitter. This analysis reveals some of the salient quantities and qualities of the public conversation during the time of the national elections. Based on this evaluation, we can offer some basic context about which political parties were most relevant to the public conversation. Our method counted tweets with the selected hashtags in a straightforward manner. Each tweet was coded and counted if it contained one of the specific hashtags that were being followed. If a tweet contained more than one selected hashtag, it was credited to all the relevant hashtag categories.
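The counting rule above can be sketched in Python. This is a minimal illustration, not the study’s pipeline: the hashtag-to-category mapping is hypothetical, hashtags are assumed to be extracted from raw tweet text with a simple regular expression, and shares are assumed to be computed over total credits so that they sum to 100% even when one tweet is credited to several categories.

```python
import re
from collections import Counter

# Hypothetical mapping from tracked hashtags (lowercased) to candidate/party
# categories; the study's actual hashtag lists are given in its tables.
HASHTAG_TO_CATEGORY = {
    "#macron": "Macron / En Marche!",
    "#enmarche": "Macron / En Marche!",
    "#lepen": "Le Pen / Front National",
    "#hamon": "Hamon / Parti Socialiste",
}

HASHTAG_PATTERN = re.compile(r"#\w+")


def count_category_mentions(tweets, hashtag_map):
    """Credit each tweet once to every category whose tracked hashtag it contains."""
    counts = Counter()
    for text in tweets:
        tags = {tag.lower() for tag in HASHTAG_PATTERN.findall(text)}
        # A set, so two hashtags for the same category still count as one credit.
        categories = {hashtag_map[tag] for tag in tags if tag in hashtag_map}
        counts.update(categories)
    return counts


def category_shares(counts):
    """Percentage share of each category, computed over all credits (an assumption)."""
    total = sum(counts.values())
    return {category: 100 * n / total for category, n in counts.items()}


tweets = [
    "Vote #Macron #EnMarche",
    "#LePen vs #Macron tonight",
    "Meeting with #Hamon",
    "No tracked hashtag here",
]
counts = count_category_mentions(tweets, HASHTAG_TO_CATEGORY)
print(counts["Macron / En Marche!"])  # 2
print(category_shares(counts)["Le Pen / Front National"])  # 25.0
```

The second tweet is credited to both Macron and Le Pen, as the text describes. Whether the published percentages divide by credits or by matched tweets is not stated here; dividing by matched tweets instead would let the shares sum to more than 100%.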

Table 2 identifies the proportions of Twitter traffic. It places parties on a traditional liberal, centrist, and conservative ideological spectrum. Some of these parties are also considered populist, on the left or the right, or are essentially single-issue, nationalist, or regionally specific parties. The craft of comparison involves selecting a parsimonious set of comparative attributes that takes advantage of the cases in a limited case comparison without creating points of contrast that have no real-world instances. Although coarse, these three ideological categories provide the analytical contrast needed to make some rough associations across several political cultures.

In France, hashtags about Emmanuel Macron appeared most often—43.2% of the candidate-specific tweets during the week as a whole. For the most part, traffic about Marine Le Pen was about half that of Macron’s. Yet, day to day, there were moments when Benoît Hamon drove the most traffic over Twitter, even though only 16.1% of the content overall related to him. In our follow-up of the run-off, Macron continued to dominate the Twittersphere with 42.3% of all traffic.

In the UK sample, hashtags about the Labour Party appeared most often, representing 61.9% of the party-specific tweets during the week as a whole. The Conservative Party generated a much lower proportion of the conversation, 16.9%, and Labour consistently attracted more conversation than the Conservatives. At 12.0%, the Scottish National Party generated a disproportionately high share of the conversation, especially given the size of the party.

In Germany, conversations about the controversial AfD and its candidates dominated Twitter during the election, accounting for 47.8% of all traffic. Traffic relating to the CDU accounted for 29.0% of all political traffic. By comparison, traffic related to the Social Democratic Party and its candidate Martin Schulz accounted for only 14.1% of total Twitter traffic. Traffic peaked notably on 3 September 2017, the day of the televised debate between Angela Merkel and Martin Schulz.

Table 2 provides yet another reminder of the distance between the volume of Twitter conversation about parties or candidates and their popularity among voters. Only in France did the ultimate victor in the election also dominate political communication on Twitter. In the United Kingdom, Jeremy Corbyn and the Labour Party dominated social media activity, while in Germany, the ultra-conservative AfD had the largest block of content. Yet neither of these parties won its election.

Amplified Political Communication in Three Elections

How did amplification contribute to the volume of political communication about particular parties on Twitter? We analyzed the activity of amplifier accounts across the three elections. We use the term amplified traffic to refer to traffic from accounts that deliberately seek to increase the number of voices or the attention paid to particular messages (McKelvey & Dubois, 2017). These accounts include automated, semi-automated, and highly active human-curated accounts on social media.

We define amplifier accounts as those that post 50 times a day or more on one of the selected hashtags during our sampling period. This detection method does not capture amplifier accounts that tweet at lower frequencies. However, despite the simplicity of our metric, more complex machine-learning methods yield comparable numbers of false positives and remain contested in computational social science. Moreover, we have identified very few human users who tweet more than 49.5 times per day on average on the identified political hashtags, and our simple threshold for identifying accounts that use some kind of amplification was chosen to reflect this observation. Table 2 identifies the proportions of amplified traffic by candidate, party, and ideology. Automation trends are most sensibly compared between political parties within a country competing in the same election. An asterisk (*) identifies the two candidates or parties in each electoral contest that had the largest portions of traffic generated by highly automated accounts.
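A minimal sketch of the 50-tweets-per-day threshold follows. It assumes the input is a list of account IDs, one entry per tweet carrying a selected hashtag, and that each account’s daily rate is averaged over the inclusive sampling window; the function names and toy data are hypothetical and not taken from the study.

```python
from collections import Counter
from datetime import date

AMPLIFIER_THRESHOLD = 50  # tweets per day on the selected hashtags


def sampling_days(start, end):
    """Number of days in an inclusive sampling window."""
    return (end - start).days + 1


def find_amplifiers(tweet_authors, start, end, threshold=AMPLIFIER_THRESHOLD):
    """Return accounts whose average daily tweet count meets the threshold."""
    days = sampling_days(start, end)
    per_account = Counter(tweet_authors)
    return {account for account, n in per_account.items() if n / days >= threshold}


def amplified_share(tweet_authors, amplifiers):
    """Percentage of tweets posted by flagged amplifier accounts."""
    if not tweet_authors:
        return 0.0
    amplified = sum(1 for account in tweet_authors if account in amplifiers)
    return 100 * amplified / len(tweet_authors)


# Toy example over a 7-day window (13 to 19 March 2017, inclusive).
start, end = date(2017, 3, 13), date(2017, 3, 19)
authors = ["acct_a"] * 350 + ["acct_b"] * 343 + ["acct_c"] * 20
amplifiers = find_amplifiers(authors, start, end)
print(sorted(amplifiers))  # ['acct_a'] (350 / 7 = 50.0 per day; acct_b averages 49.0)
print(round(amplified_share(authors, amplifiers), 1))  # 49.1
```

Averaging over the whole window mirrors the definition in the text; a per-day rule that flags an account on any single day it crosses 50 would be a different design and would flag at least as many accounts.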

For the French election, a relatively low share of the overall traffic about the candidates appears to have been driven by amplifier accounts. At the high end, 11.4% of the Twitter traffic about Hamon was driven by highly automated accounts. At the low end, amplifiers generated 4.6% of the traffic about Mélenchon. The level of amplification remained fairly consistent over the entire sampling period, averaging 7.2%. During the run-off between Le Pen and Macron, 16.4% of traffic was generated by amplifier accounts, with amplifiers driving 14.0% and 19.5% of the traffic about each candidate, respectively.

In the United Kingdom, the parties and their respective candidates appear to have had a medium level of amplified traffic, with fairly consistent levels across parties. On average, 16.5% of traffic about UK politics was generated by highly automated accounts that we were able to track. In our earlier analysis from the beginning of May 2017, soon after the election was announced, we measured 12.3% amplified traffic, so activity rose closer to the election.

The share of amplified traffic during the German election was modest. We identified 92 such accounts, which generated 7.4% of the total traffic. The share of traffic generated by amplifier accounts for the CDU, the Freie Demokratische Partei (FDP), and the SPD ranged between 7.3% and 9.4%. For AfD-related hashtags, 15% of the traffic came from amplifier accounts.

Three points are notable across our cross-country evaluation. First, in all three countries, we find low to moderate levels of amplified traffic, which suggests limited effects on aggregate social media sharing outcomes in Europe overall. Second, the level of amplification in France and the United Kingdom grew substantially closer to the elections. This suggests that amplification may have been coordinated to distort political debate surrounding the elections. Third, we cannot establish who manages these accounts, and we do not analyze the content or emotional valence of particular tweets. Hence, this information alone is insufficient to determine whether the accounts are run by campaigns or candidates.

Sources of Political News and Information

What are the salient quantities and qualities of sources of political news and information users shared over social media? To understand what voters were sharing, we analyzed the web sources that users in France, the United Kingdom, and Germany shared links to. Table 3 breaks down the typological proportions of political news and information.

Political News and Information Shared Over Twitter During Three Elections in Europe, by Source Type, 2017.

French Twitter users shared content from Professional News Sources extensively, only occasionally shared content from major Professional Political Sources, and rarely relied on Divisive and Conspiracy Sources or other forms of political news and information. The largest proportion of content shared by Twitter users interested in French politics, 46.7% of all sources used, came from Major and Minor News Brands. Information from Political Parties or Candidates, Government, and other Experts was shared somewhat less often. Among the other forms of political news and information, however, citizen-generated content made up the largest proportion.

The largest proportion of content shared by Twitter users interested in UK politics came from Professional News Sources, which accounted for 48.8% of all content. In comparison, Junk News and Information accounted for 10.3% of all content shared. A high percentage of the other political content shared came from Citizen, Civic, and Civil Society sources. Russian news sources, such as Russia Today or Sputnik, did not feature prominently in the sample.

In Germany, 40.2% of the political news and information shared by Twitter users discussing the German election came from Professional News Sources. Content from Divisive and Conspiracy Sources accounted for 10.2% of shares. Links to content produced by Government, Political Parties or Candidates, or Experts added up to just 10.5% of all shares.

There are three main findings across categories. First, our analysis demonstrates that in France, for every one link to Junk News Sources, there were seven links to content produced by professional news organizations. In the United Kingdom, the ratio was 5 to 1, and in Germany, it was 4 to 1. The low proportion of junk news in France may be related to a national political communication culture that traditionally emphasizes political debate in everyday life and social systems. Second, only a small number of the domains shared were operated by known Russian sources during the elections, even though mainstream Russian outlets and their national offshoots had a substantial following in France, the United Kingdom, and Germany. Third, in every national election, Professional News Sources were the largest category of sources shared, at least roughly double the size of the second-largest category. Although many have suggested we are in a “post-truth” era with declining levels of trust in the media, this finding suggests that social media users in Europe still rely widely on traditional news media to inform their political decision making.
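These ratios follow from simple division of category shares. The quick check below uses the percentages reported above for the United Kingdom (48.8% Professional News Sources, 10.3% Junk News and Information) and Germany (40.2% Professional News Sources, 10.2% Divisive and Conspiracy Sources). France is omitted because its junk news share is not stated in this section, and treating these UK and German categories as the inputs to the published ratios is an assumption.

```python
# Shares (% of all links) reported in the text; treating these as the inputs
# to the published ratios is an assumption.
REPORTED_SHARES = {
    "United Kingdom": {"professional_news": 48.8, "junk_news": 10.3},
    "Germany": {"professional_news": 40.2, "junk_news": 10.2},
}


def links_per_junk_link(professional_pct, junk_pct):
    """Links to professional news for every one link to junk news."""
    if junk_pct <= 0:
        raise ValueError("junk news share must be positive")
    return professional_pct / junk_pct


for country, shares in REPORTED_SHARES.items():
    ratio = links_per_junk_link(shares["professional_news"], shares["junk_news"])
    print(f"{country}: {ratio:.1f} to 1, or roughly {round(ratio)} to 1")
# United Kingdom: 4.7 to 1, or roughly 5 to 1
# Germany: 3.9 to 1, or roughly 4 to 1
```

Rounded to whole numbers, both results match the 5 to 1 and 4 to 1 ratios stated in the text.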

Conclusion

In each of these elections, social media had an important role both in providing an additional platform for public conversation and as a kind of news bulletin for political news and information about elections, candidates, and campaigns. Social media certainly has transformed the modes of political communication affording highly scalable, real-time debate and deliberation that is fueled by algorithms and personal data. In increasingly digital media ecosystems, the nature of political news and information has changed over time. Yet, current political communication theory lacks a comprehensive, grounded, and transportable typology of the types of sources that social media users share as a fundamental framework for understanding the impact of such content on political systems.

In this article, we developed a grounded typology of sources of political news and information drawing from real-time social media data from three advanced democracies in Europe. Based on our comparative analysis, we put forward the concept of “junk news” which we use to refer to sources that publish or aggregate deceptive, conspiratorial, or incorrect information purporting to be real news and political information. This is the first multilingual, cross-national comparison of the production, consumption, and sourcing of political news and information over social media.

First, we find that political parties drive traffic to varying degrees. In France, the winning party led the public conversation, whereas in the United Kingdom and Germany, parties that challenged the current government, often controversially, dominated traffic. Second, amplification intervenes in political communication at low to moderate levels, but activity rises closer to the elections. Third, the importance of Divisive and Conspiracy Sources to political conversation varies from country to country: in France, for every one link to junk news, there were seven links to content produced by professional news organizations, whereas the ratio was 5 to 1 in the United Kingdom and 4 to 1 in Germany.

However, the most concerning trend for public life is not the waxing and waning of professionally produced news as a source of political information. The real casualty appears to be political parties, government agencies, and experts. Trust in these sources of information has been declining for a while, but in this comparative analysis, we are able to identify some sharp contrasts in how such sources are valued across political cultures in three advanced democracies. In France, for every one link to Divisive and Conspiracy Sources, social media users shared more than 2.4 links to information coming directly from political parties, government agencies, or acknowledged experts. One might consider that ratio already too low. But in the United Kingdom, the balance was reversed: for each link to Divisive and Conspiracy Sources, there were only 0.8 links to sources from traditional political actors. In Germany, the two kinds of sources were shared at roughly a one-to-one ratio.

Assessing what information users shared on social media, and whether “fake news” even holds as a concept, was a project of immense analytical scale. We find “fake news” to be a vague concept that cannot be operationalized, but in analyzing data on what was shared in recent elections, we offer an alternative typology that captures the poor, polarizing qualities of these sources of news and information. Grounded in what users actually referenced during these three elections, we find that the bulk of political news and information does not come from traditional sources of political and technical expertise. Rather than chasing a granular definition of the popular “fake news” term, we focus our inquiry on an exhaustive analysis of various forms of political news and information and set it in the context of aggregate social media sharing patterns.

Comparing our data from France, the United Kingdom, and Germany, we find that French users shared much higher-quality information than many US users did, and slightly higher-quality news and information than German and UK users. While we have yet to test this, we anticipate that extending the typology to countries in the Global South and to non-democratic countries with less lively media ecosystems would require substantial changes, such as introducing categories for pro-government state-funded media and for anti-regime blogs that are subject to suppression and censorship.

Many of the patterns in sourcing political news and information over social media, and the forms of content themselves, are new features of contemporary political communication. Researchers need to be flexible in applying the traditional definitions we have for what counts as political content while interpreting the new types of sources and content. In this article, we develop and analyze a carefully constructed, holistic typology of how news production and consumption are evolving over social media.

Grounded typology building is a complex analytical exercise. But the political trends and events of the last few years, paired with new modes of public discourse, underscore that political communication scholars need to retool and adapt their frameworks. Typology building is a central exercise if researchers want to answer questions on the impact of problematic information on political processes, or critically interrogate how political discourse and news consumption are evolving over time. Most importantly, rigorous grounded typologies of content are fundamental for putting forward policy recommendations to inform platform design and content moderation in ways that uphold democratic ideals of free speech and deliberation.


Acknowledgements

The authors are grateful to Gillian Bolsover, Samantha Bradshaw, Clementine Desigaud, John Gallacher, and Monica Kaminska for their research assistance.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the European Research Council through the grant “Computational Propaganda: Investigating the Impact of Algorithms and Bots on Political Discourse in Europe,” Proposal 648311, 2015-2020, Philip N. Howard, Principal Investigator. For supporting our Election Observatory and our research in Europe, we are grateful to the Adessium Foundation, Microsoft Research, and Omidyar Network. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the funders.