Abstract

Background

Short-form videos are an increasingly important source of health information for individuals with type 1 diabetes mellitus (T1DM), yet their quality is unverified.

Objective

This study aimed to evaluate and compare the quality, reliability, and engagement of T1DM-related videos on Bilibili and TikTok.

Methods

We conducted a cross-sectional analysis of the top 100 T1DM-related videos from Bilibili and TikTok (N=200). Videos were systematically evaluated using four validated instruments: the Global Quality Scale (GQS), Journal of the American Medical Association (JAMA) criteria, Video Information and Quality Index (VIQI), and modified DISCERN (mDISCERN). Engagement metrics were extracted, and Spearman correlations and a multivariable negative binomial regression were performed to identify predictors of video ‘likes’. A comprehensive sensitivity analysis, including Principal Component Analysis (PCA), was conducted to ensure robustness.

Results

TikTok videos achieved significantly higher user engagement than those on Bilibili (median views: 88,089 vs. 3,418). In terms of quality, TikTok scored higher on the VIQI (median: 12.0 vs. 9.0, P < 0.001), while Bilibili scored higher on the JAMA criteria (median: 2.0 vs. 0.0, P < 0.001). No significant platform differences were found for GQS or mDISCERN. In the adjusted regression model, VIQI score was a strong positive predictor of likes (RR=1.66, 95% CI 1.32-2.13), whereas a higher GQS score was a negative predictor (RR=0.24, 95% CI 0.13-0.45). These findings were robust across all sensitivity analyses.

Conclusions

T1DM-related short videos on Bilibili and TikTok exhibit substantial variability in quality and reliability. TikTok demonstrates stronger audiovisual quality, whereas Bilibili shows better transparency (JAMA). Engagement was driven more by production quality than informational accuracy. These findings suggest that optimizing content strategies and strengthening professional participation may be beneficial for digital diabetes education.

1. Introduction

Type 1 diabetes mellitus (T1DM) is a chronic autoimmune condition characterized by the immune-mediated destruction of pancreatic β-cells, resulting in absolute insulin deficiency and lifelong dependence on exogenous insulin therapy. 1 Unlike many chronic diseases diagnosed later in life, T1DM is commonly diagnosed in childhood or adolescence. This distinct patient demographic faces heterogeneous clinical trajectories and diverse therapeutic challenges.2,3 Furthermore, the management of T1DM requires continuous medical decision-making, including glucose monitoring, insulin dose adjustment, and the prevention of acute complications such as diabetic ketoacidosis.4,5

With the evolution of digital ecosystems, individuals with T1DM increasingly seek health information through social media and short-video platforms, reflecting broader shifts in online health-seeking behaviors observed in chronic disease communities.6,7 In China, Bilibili and TikTok (Douyin) have emerged as two of the most influential platforms for health-related short videos. They represent two distinct dominant models within the Chinese digital landscape: community-driven versus algorithm-driven content distribution.8,9 Thus, evaluating these two platforms offers a representative assessment of the broader digital health ecosystem.

However, the growing popularity of digital health education on short-video platforms may present substantial challenges. Prior studies examining short-video health content have raised concerns about accuracy, completeness, and misleading health claims. Evaluations of videos on thyroid nodules, liver cancer, COVID-19, and metabolic diseases have consistently shown wide variability in information reliability and quality.8–10 These findings are consistent with international evidence showing that user-generated health videos frequently omit essential clinical information, lack source attribution, or emphasize entertainment over education.11,12

For T1DM specifically, the patient demographic aligns closely with the primary user base of short-video platforms. Adolescents and young adults, who constitute a large proportion of individuals with T1DM, are highly active consumers of short-form content and often rely on peer-shared experiences for emotional support and disease literacy.13–15 However, studies also caution that online diabetes information sometimes contains inaccuracies, inconsistent guidance on insulin administration, oversimplified explanations of hypoglycemia or ketoacidosis, or commercially biased narratives.16,17 These concerns underscore the need for systematic evaluations of Chinese short-video platforms, which remain understudied compared with YouTube, Instagram Reels, or TikTok in Western contexts.

To assess the quality and reliability of online health information, prior research frequently employs validated instruments such as the Global Quality Scale (GQS), the Journal of the American Medical Association (JAMA) benchmark criteria, the modified DISCERN (mDISCERN), and the Video Information and Quality Index (VIQI). These tools have been widely applied in video-evaluation studies on endocrine disorders, chronic illnesses, surgical care, and infectious diseases.8,9,18 Despite their popularity, to our knowledge, no study has simultaneously deployed all four instruments to evaluate T1DM-related short videos on Chinese platforms.

Moreover, the relationship between content quality and user engagement remains poorly understood. Some evidence suggests that high-quality videos do not necessarily attract more likes, comments, or shares,11 whereas other studies find modest positive associations.19 It is unclear whether engagement is primarily driven by audiovisual quality, informativeness, uploader characteristics, or platform-specific algorithms. Understanding this relationship is essential for informing responsible content promotion strategies.

To address these gaps, this study systematically evaluated 200 T1DM-related short videos from Bilibili and TikTok. The primary aim of this study was to evaluate the overall adequacy, defined as quality and reliability, of T1DM information available to individuals within the short-video ecosystem. A secondary aim was to compare Bilibili and TikTok to understand how different algorithmic environments influence information quality. By integrating four validated quality instruments and multiple analytic strategies, this study provides a comprehensive evaluation intended to support the development of evidence-based digital diabetes-education ecosystems in China.

2. Materials and methods

2.1. Study design

This cross-sectional study evaluated the quality, reliability, and engagement patterns of short videos related to T1DM on Bilibili and TikTok. The methodological principles followed established approaches in digital health communication research, particularly prior analyses of Chinese short-video platforms that assessed quality and engagement of disease-related content.8,9 Because all data were publicly accessible and contained no personal identifiers, institutional review board approval was not required.

2.2. Video search and eligibility

All videos were retrieved on September 25, 2025. The search term “1型糖尿病” (“Type 1 diabetes mellitus”) was entered manually into new browser sessions with no login or prior watch history to minimize personalization biases intrinsic to platform recommendation algorithms, as recommended in short-video research methodologies.8 For each platform, videos were screened in the order returned by the native algorithm until 100 eligible videos were identified, following the convention that short-video studies analyze top-ranked content to replicate typical user experiences.9

When a video served multiple purposes (e.g., entertainment combined with education), the “primary purpose” was determined based on the uploader-defined category tags and the content type that occupied more than 50% of the total video duration. Although our inclusion criteria did not restrict video language, the top 200 videos meeting all eligibility criteria were incidentally all in Mandarin Chinese. This naturally reflects the primary user demographics and the regional content distribution algorithms of these two platforms. Exclusion criteria included advertisements, duplicated content, non–T1DM content, and videos exceeding 10 minutes in duration to maintain the focus on short-form content. Additionally, videos rendered inaccessible by uploader deletion or platform removal prior to data extraction were excluded. Ultimately, 200 videos met inclusion criteria, with 100 from Bilibili and 100 from TikTok.

2.3. Data extraction

Two trained reviewers independently extracted video-level metadata, including duration in seconds, days elapsed since upload, uploader characteristics, and engagement indicators such as views, likes, comments, saves, and shares. “Video duration” refers to the total length of the content file. Due to platform privacy policies, granular data on user retention or actual watch time is unavailable to public researchers. Uploader type was categorized as professional or non-professional based on institutional affiliation or platform-verified medical credentials, consistent with classification schemes in Chinese digital health communication studies.6,8–10,20–22 Each video was assigned to one predefined content theme. These themes—Basic Disease Knowledge, Daily Management and Technical Procedures, Lifestyle Guidance, Medical Advances and News, Myth Debunking and Public Guidance, Psychological Support and Experience Sharing, and Others—were adapted from established T1DM education and psychosocial communication frameworks.15,16 Reviewer discrepancies were resolved through consensus.

2.4. Quality and reliability assessment

Video quality and reliability were evaluated using four validated instruments widely applied in online health information research.

First, the GQS score provided an integrative measure of educational quality. 9 The scoring criteria are defined as follows: a score of 1 indicates “poor quality, poor flow, most information missing”; a score of 2 indicates “generally poor quality and flow”; a score of 3 indicates “moderate quality, suboptimal flow”; a score of 4 indicates “good quality and generally good flow”; and a score of 5 indicates “excellent quality and excellent flow, very useful for patients”.

Second, to assess transparency, the JAMA Benchmark Criteria were employed.13 This instrument assigns a total score from 0 to 4 by evaluating four specific components, each scored 1 point if met: (1) Authorship (author credentials and affiliations are provided); (2) Attribution (references and sources are clearly listed); (3) Currency (initial date of posting and subsequent updates are provided); and (4) Disclosure (conflicts of interest, funding, sponsorship, or video ownership are fully disclosed).

Third, the VIQI score was utilized to capture structural and audiovisual dimensions,11 consisting of four sub-items each scored from 1 to 5 (total range: 4–20). These sub-items are defined as: VIQI-1 (Information Flow), which was quantified in this study using user “likes” to standardize subjectivity (1 point for <10 likes, 2 for 10–99, 3 for 100–999, 4 for 1,000–9,999, and 5 for ≥10,000 likes); VIQI-2 (Information Accuracy); VIQI-3 (Video Quality), calculated by assigning 1 point for each production element present (images, animations, interviews, video captions, and summaries); and VIQI-4 (Precision), assessing the level of coherence between the video title and content.

Finally, the mDISCERN index was applied to evaluate the reliability of treatment information. 14 This tool comprises five binary questions (scored 0 for “no” and 1 for “yes”): (1) Are the aims clear and achieved? (2) Are reliable sources of information used? (3) Is the information presented balanced and unbiased? (4) Are additional sources of information listed for individual reference? and (5) Are areas of uncertainty mentioned?
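The rule-based parts of the scoring described above can be sketched in a few lines. This is an illustrative Python sketch only: the function names and the element labels are hypothetical, and the subjective items (GQS, VIQI-2, VIQI-4) are not covered because they depend on reviewer judgment.

```python
from typing import Iterable

# Hypothetical labels for the five production elements named for VIQI-3.
PRODUCTION_ELEMENTS = ("images", "animations", "interviews", "captions", "summaries")


def viqi1_from_likes(likes: int) -> int:
    """Map a like count to VIQI-1 using the thresholds stated in the text."""
    if likes < 10:
        return 1
    if likes < 100:
        return 2
    if likes < 1_000:
        return 3
    if likes < 10_000:
        return 4
    return 5


def viqi3_from_elements(present: Iterable[str]) -> int:
    """Count the production elements present (1 point each).

    The text does not say how a video with no elements is scored,
    so this sketch simply returns the raw count.
    """
    return len(set(present) & set(PRODUCTION_ELEMENTS))


def jama_score(authorship: bool, attribution: bool, currency: bool, disclosure: bool) -> int:
    """Sum the four JAMA benchmark criteria (0 to 4)."""
    return sum([authorship, attribution, currency, disclosure])


def mdiscern_score(answers: Iterable[bool]) -> int:
    """Sum five yes/no mDISCERN answers (0 to 5)."""
    answers = list(answers)
    if len(answers) != 5:
        raise ValueError("mDISCERN expects exactly five answers")
    return sum(answers)
```

For example, a video with 991 likes would receive a VIQI-1 score of 3 under these thresholds, and a video that states only its authorship and posting date would receive a JAMA score of 2.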

Two independent reviewers scored all videos. Inter-rater reliability was quantified using Cohen’s κ for categorical indicators and intraclass correlation coefficients for continuous scales, both demonstrating acceptable agreement consistent with reliability standards for digital health content evaluation. 13
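As a reminder of what Cohen's κ measures, the following minimal Python sketch computes it for two raters' categorical labels. It is a generic textbook implementation, not the authors' code, and it does not cover the intraclass correlation coefficients used for the continuous scales.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Cohen's kappa: agreement between two raters, corrected for chance."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed proportion of items on which the raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's marginal frequencies.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        # Both raters used a single identical category throughout.
        return 1.0
    return (observed - expected) / (1.0 - expected)
```

A value of 1 indicates perfect agreement and 0 indicates agreement no better than chance.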

2.5. Statistical analysis

All analyses were performed using R version 4.5.1. Continuous variables were summarized as medians with interquartile ranges, and categorical variables as frequencies and percentages. Differences between Bilibili and TikTok videos were tested using Wilcoxon rank-sum tests for continuous variables and chi-square tests for categorical variables. Spearman correlation coefficients assessed associations between video quality indicators and engagement metrics, with P-values adjusted using the Benjamini–Hochberg method to account for multiple comparisons.
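The Benjamini–Hochberg adjustment mentioned above can be illustrated with a short Python sketch of the standard step-up procedure. The analyses in this study were run in R, so this is a generic illustration of the method rather than the code used; the function name is hypothetical.

```python
from typing import List, Sequence


def benjamini_hochberg(p_values: Sequence[float]) -> List[float]:
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    m = len(p_values)
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted
```

Each raw P-value is scaled by the number of tests divided by its rank, and the running minimum keeps the adjusted values monotone, which controls the false discovery rate across the family of correlations.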

2.6. Regression modeling

The number of likes served as the primary engagement outcome because it represents a stable behavioral indicator of audience preference across short-video ecosystems. Visual inspection and dispersion indices confirmed overdispersion, supporting the use of negative binomial regression. Independent variables included platform, video duration, days since upload, follower count, uploader category, content theme, and all four quality metrics (GQS score, JAMA, VIQI total score, and mDISCERN total score). Rate ratios (RRs) and 95% confidence intervals (CIs) were calculated to estimate the strength and direction of associations.
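Two quantities in this paragraph lend themselves to a small worked sketch: the dispersion check and the conversion of a log-scale regression coefficient into a rate ratio. The Python below is a hedged illustration. The variance-to-mean ratio is one common dispersion index, and the Wald-type interval is an assumption, since the text does not state which index or interval method was used.

```python
import math
from statistics import mean, variance
from typing import Sequence, Tuple


def dispersion_index(counts: Sequence[float]) -> float:
    """Variance-to-mean ratio; values well above 1 suggest overdispersion."""
    return variance(counts) / mean(counts)


def rate_ratio(beta: float, se: float, z: float = 1.96) -> Tuple[float, float, float]:
    """Convert a log-link coefficient and its standard error to (RR, lower, upper).

    Assumes a Wald-type 95% interval on the log scale.
    """
    return math.exp(beta), math.exp(beta - z * se), math.exp(beta + z * se)
```

A rate ratio above 1 means that a one-unit increase in the predictor is associated with proportionally more likes, holding the other covariates fixed, and a ratio below 1 means proportionally fewer.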

2.7. Sensitivity analyses

To ensure the robustness of our findings, a comprehensive sensitivity analysis framework was applied. First, multicollinearity was assessed using Variance Inflation Factors (VIFs) to detect redundancy among thematic variables and quality metrics. Second, to address potential collinearity, Principal Component Analysis (PCA) was conducted on the four quality scores (GQS, JAMA, VIQI, mDISCERN) to extract a unified quality construct (PC1). We then fitted a secondary regression model substituting PC1 for the individual quality metrics. Third, alternative model specifications were tested to verify the stability of the engagement predictors. These included: (1) a log-linear model using log-transformed likes; and (2) a normalized model evaluating likes per 1,000 followers to account for uploader reach. A zero-inflated negative binomial model was evaluated but deemed unnecessary given the negligible proportion of zero-like videos (0.5%). Detailed methodological procedures, including specific R packages used and full diagnostic tables (Tables S1–S9), are provided in the Supplementary Materials.
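The two alternative outcome definitions can be sketched as follows. This Python fragment is illustrative only: the use of log(1 + likes) to accommodate zero-like videos and the handling of uploaders with zero followers are assumptions, as neither detail is specified in the text.

```python
import math
from typing import Optional


def log_likes(likes: int) -> float:
    """Log-transformed likes; log(1 + likes) is assumed so that zero is defined."""
    return math.log1p(likes)


def likes_per_1000_followers(likes: int, followers: int) -> Optional[float]:
    """Likes normalized by uploader reach.

    Returns None when the follower count is not positive, because the
    ratio is undefined and the text does not say how such cases were treated.
    """
    if followers <= 0:
        return None
    return likes * 1000 / followers
```

For scale, 0.5% of the 200 included videos corresponds to a single video with zero likes.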

3. Results

3.1. Characteristics of included videos

To obtain the final sample of 200 videos (100 per platform), we screened the top 263 search results. A total of 63 videos were excluded based on the eligibility criteria. Specifically, 2 videos were excluded due to inaccessibility, such as removal by the uploader. The remaining exclusions comprised advertisements, duplicates, unrelated content, and videos exceeding the duration limit. Figure 1(a) and (b) illustrate the distribution of uploader categories and content themes, and Table 1 summarizes all descriptive characteristics. The median duration across all videos was 112 seconds (IQR 83–184). Bilibili videos were significantly longer than those on TikTok (122.0 vs 105.0 seconds; P = 0.002; Table 1). No significant difference was detected in days since upload (P = 0.792).

Figure 1. Characteristics of included videos.

Table 1. Descriptive characteristics of included videos stratified by platform. Data are presented as median [Q1, Q3] for continuous variables and n (%) for categorical variables. P-values were calculated using the Wilcoxon rank-sum test for continuous variables and Pearson’s chi-squared test for categorical variables. All included videos (N=200) were in Mandarin Chinese.

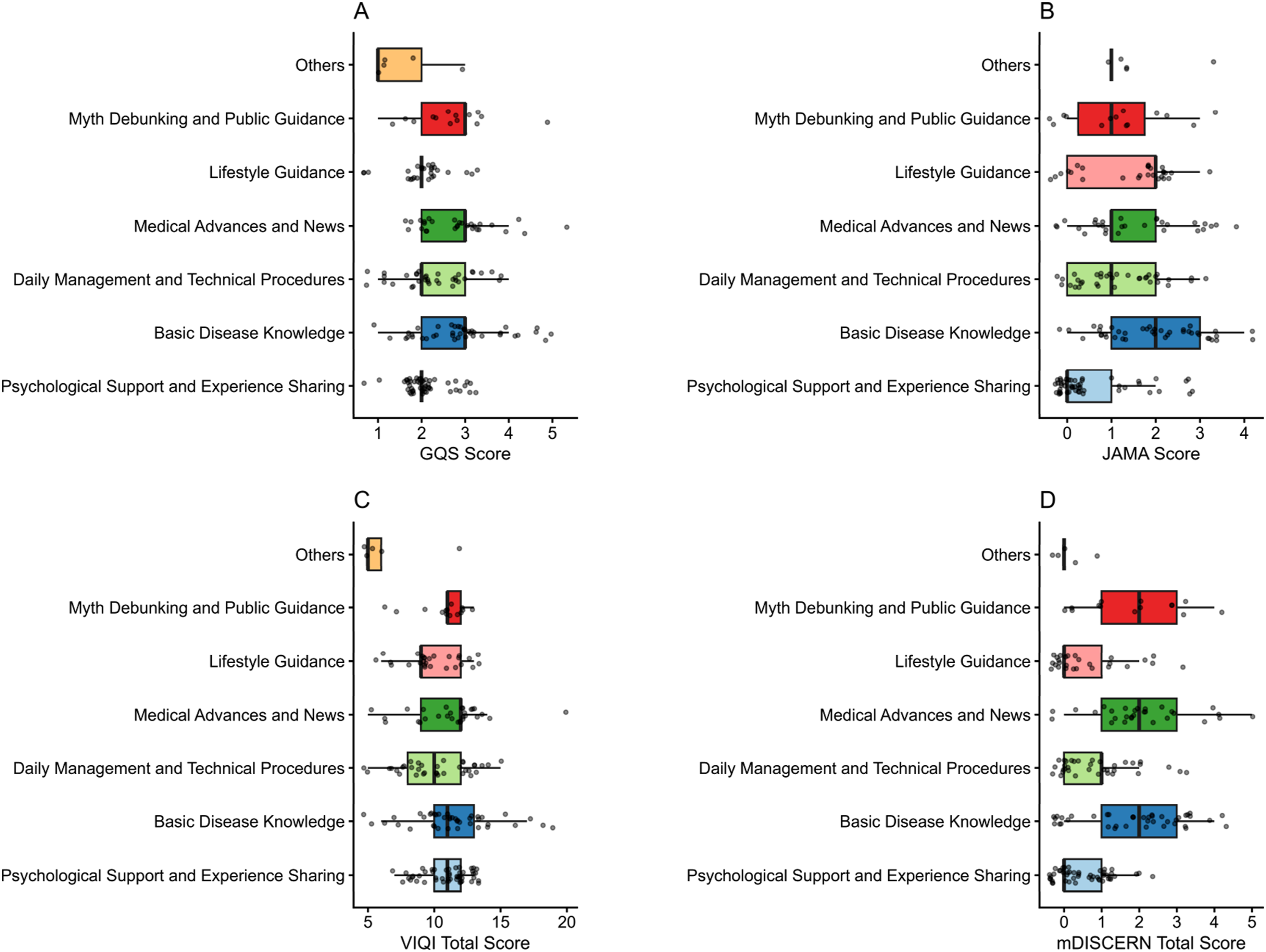

Large platform-level disparities in engagement were observed. TikTok videos garnered markedly higher views (median 88,089.0 vs 3,418.0; P < 0.001), comments (565.0 vs 2.0; P < 0.001), saves (199.0 vs 23.5; P < 0.001), likes (991.0 vs 90.5; P < 0.001), and shares (247.5 vs 9.0; P < 0.001), as summarized in Table 1. Professional uploaders contributed 48 videos (24.0%), with no statistically significant platform difference. Content theme distributions differed sharply (P < 0.001), with TikTok hosting a higher proportion of psychological-support–oriented content and Bilibili concentrating more on core disease knowledge (Figure 1(b)). Differences in quality and reliability scores across themes are presented in subsequent analyses (Figure 2).

Figure 2. Content-theme differences in the four video quality and reliability scores.

3.2. Quality and reliability scores

Quality and reliability varied substantially across platforms and themes. As shown in Figure 3(a), platform-stratified distributions for the four instruments reveal that TikTok videos had significantly higher VIQI total scores (median 12.0, IQR 11.0–13.0) than Bilibili (median 9.0, IQR 8.0–11.5; P < 0.001). In contrast, Bilibili achieved markedly higher JAMA scores (median 2.0 vs 0.0; P < 0.001). No statistically significant platform differences were observed in GQS (P = 0.30) or mDISCERN total (P = 0.09), although subcomponent comparisons revealed nuanced distinctions captured in Table 1.

Figure 3. Score distributions by platform and uploader category.

Differences across uploader categories are illustrated in Figure 3(b): professional uploaders produced videos with significantly higher scores across all four quality instruments (P < 0.001 for all). As Figure 2 demonstrates, all four quality metrics varied significantly across content themes (P < 0.05 for all). For instance, “Basic Disease Knowledge” and “Medical Advances and News” consistently ranked among the highest in median scores for GQS, JAMA, and mDISCERN (Figure 2(a), (b) and (d)), whereas themes like “Psychological Support and Experience Sharing” often scored lower. Detailed VIQI subitem and mDISCERN subitem comparisons by platform (stacked proportions and radar plots) are presented in Figure 4.

Figure 4. VIQI and mDISCERN subitem comparisons by platform (stacked bars and radar plots).

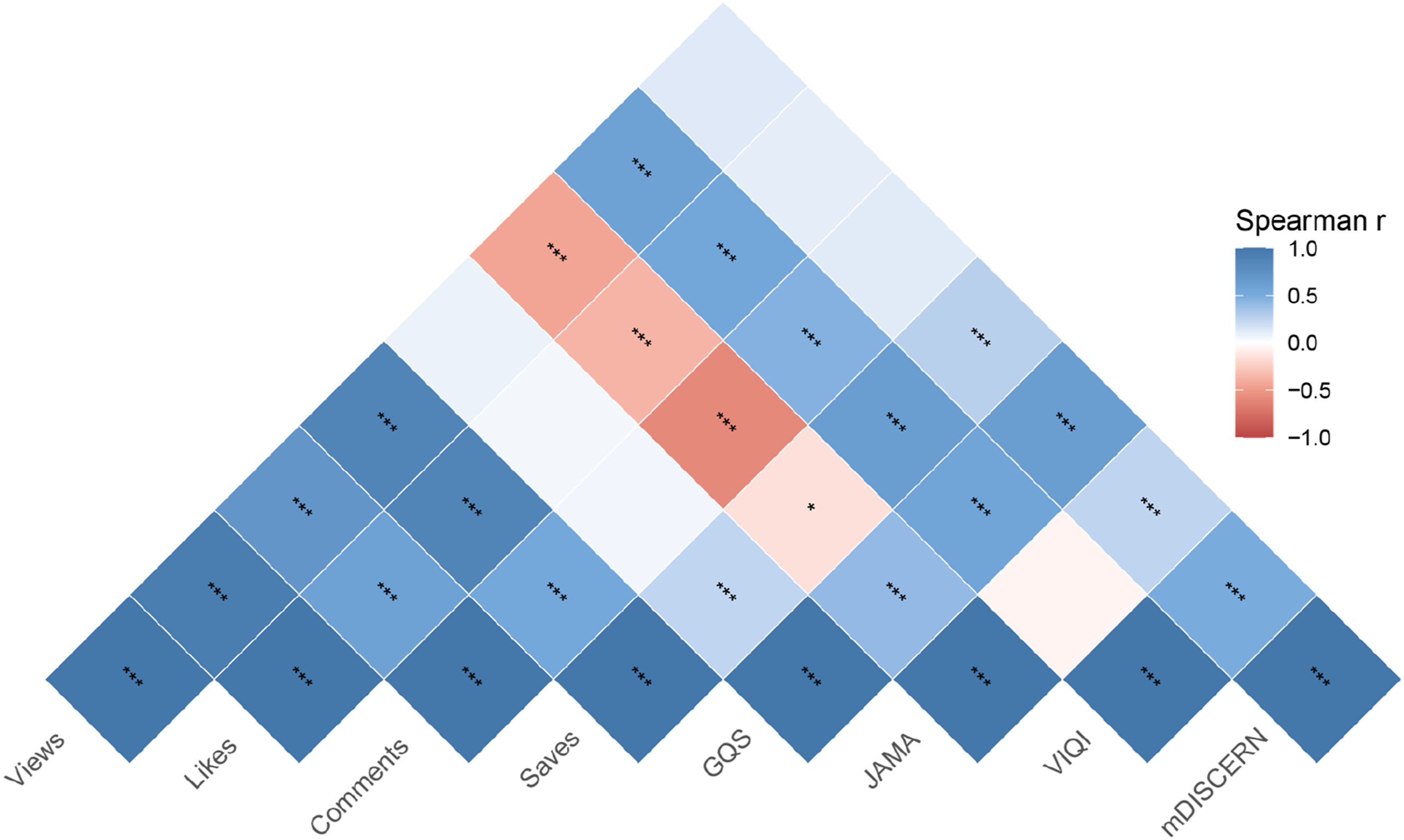

3.3. Correlation between quality and engagement

The interrelationship between video quality and user engagement was assessed via Spearman correlation (Figure 5). GQS, VIQI, and mDISCERN showed significant positive correlations with multiple engagement metrics, including Views, Likes, Saves, and Comments (all P < 0.001). In stark contrast, JAMA score exhibited a weak negative or null correlation with engagement, only reaching statistical significance with Saves (P < 0.05).

Figure 5. Spearman correlation heatmap between quality indicators and engagement metrics.

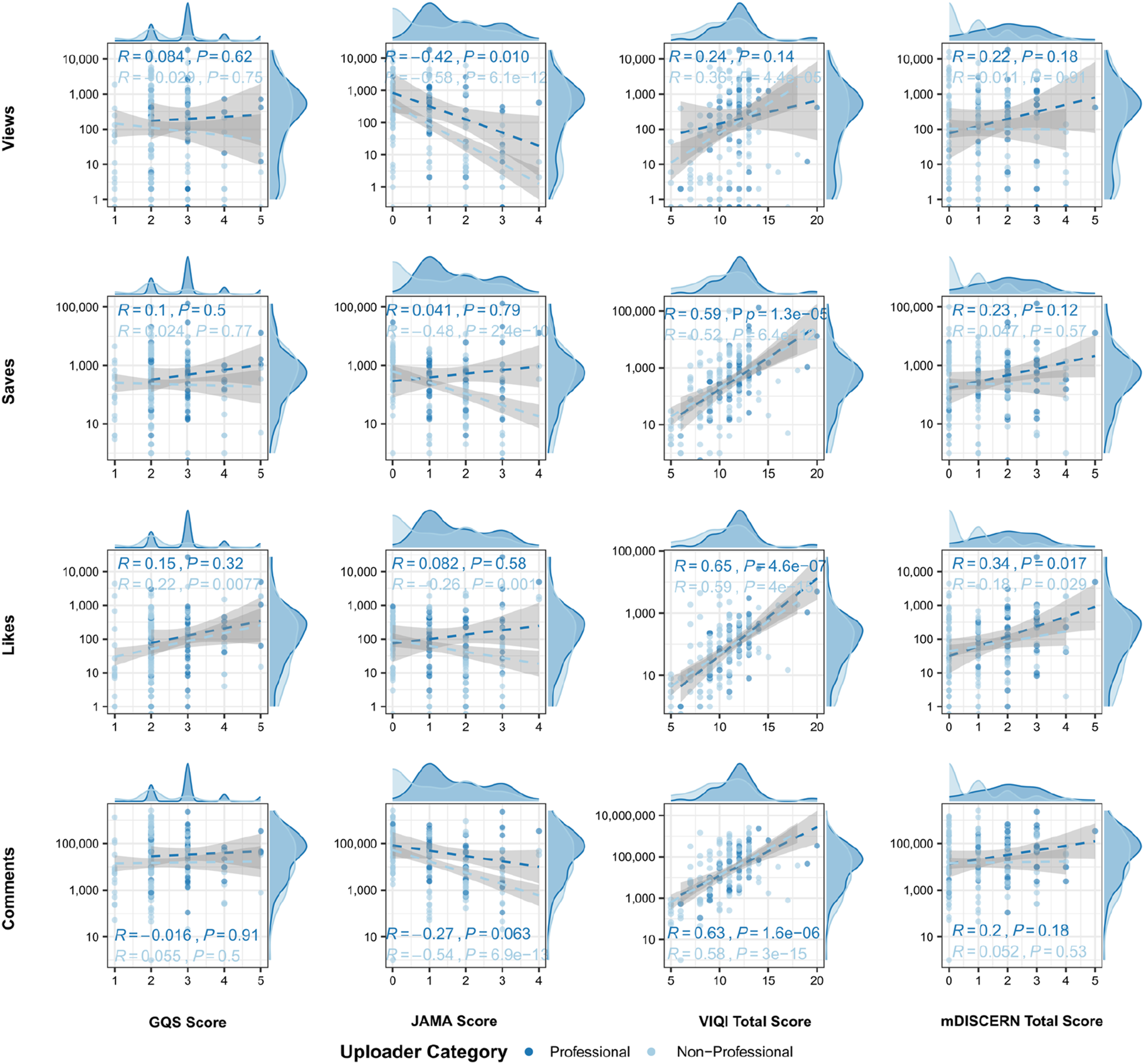

Platform-stratified analyses revealed crucial nuances (Figure 6). For VIQI Total, the positive correlation with Likes was substantially stronger on Bilibili (R = 0.61, P < 0.001) than on TikTok (R = 0.33, P < 0.001). A similar, though less pronounced, platform-dependent pattern was observed for GQS, which was moderately correlated with Likes on Bilibili (R = 0.37, P < 0.001) but showed no relationship on TikTok (R = -0.0057, P = 0.96).

Figure 6. Platform-stratified associations between quality metrics and engagement parameters.

Stratifying by uploader category (Figure 7) highlighted that for non-professional creators, videos with higher JAMA scores were paradoxically associated with significantly fewer Likes (R = -0.26, P = 0.001), a relationship not observed for professionals (R = 0.082, P = 0.58).

Figure 7. Uploader-stratified associations between quality metrics and engagement parameters.

3.4. Regression analysis

Multivariable negative binomial regression analysis of factors associated with video likes.

Note. Videos classified as “Others” (n = 5, 2.5%) were excluded from stratified analyses due to insufficient sample size.

Abbreviations: RR, Rate Ratio; CI, Confidence Interval.

Figure 8. Multivariable regression forest plot.

Among covariates, follower count (P = 0.001) and days since upload (P = 0.023) were independent predictors of higher engagement. Platform, uploader type, and most content themes did not demonstrate statistically significant effects after adjustment. Figure 8 illustrates the full set of rate ratios on a log scale.

3.5. Sensitivity analyses

To ensure the robustness of the primary regression model, a suite of sensitivity analyses was conducted. First, multicollinearity was assessed using Variance Inflation Factors (VIFs), which ranged from 2.74 to 13.82 (Supplementary Table S1), indicating moderate to high collinearity among some predictors, particularly the quality instruments.
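For orientation, the reported VIF range can be translated into the share of each predictor's variance that is explained by the other predictors. The relation below is the standard VIF definition. Whether the authors used it or a generalized VIF for multi-level categorical terms is not stated, so the derived values are an illustrative reading rather than figures from the paper.

```latex
\begin{align*}
\mathrm{VIF}_j &= \frac{1}{1 - R_j^2}
  \quad\Longrightarrow\quad
  R_j^2 = 1 - \frac{1}{\mathrm{VIF}_j} \\
\mathrm{VIF} = 2.74 &\;\Rightarrow\; R^2 = 1 - \tfrac{1}{2.74} \approx 0.635 \\
\mathrm{VIF} = 13.82 &\;\Rightarrow\; R^2 = 1 - \tfrac{1}{13.82} \approx 0.928
\end{align*}
```

Under this reading, the most collinear predictor shares roughly 93% of its variance with the remaining predictors, which is the motivation for collapsing the quality scores into a single component.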

Consequently, a PCA was performed on the four quality scores. PC1 captured 59.9% of the total variance and was strongly loaded by GQS (0.916), mDISCERN (0.838), and VIQI (0.758), thus representing a composite measure of overall quality. A secondary regression model substituting PC1 for the individual quality metrics yielded highly stable coefficients for all other variables, confirming the robustness of the primary model’s findings (Supplementary Figure S2).

Further, alternative model specifications, including a log-linear model and a model with a normalized engagement outcome (likes per 1,000 followers), produced consistent effect directions and significance levels. A zero-inflated model was considered but deemed unnecessary given the low proportion of zero-like videos (0.5%). The primary negative binomial model was supported by Akaike Information Criterion (AIC) comparisons (Supplementary Table S3).

4. Discussion

This study provides a systematic evaluation of the quality, reliability, and audience engagement of T1DM-related short videos across Bilibili and TikTok, two major Chinese platforms whose algorithmic formats shape public health communication in increasingly distinct ways.9,20,21 Consistent with the growing recognition that short-video ecosystems have become dominant sources of health information for young and middle-aged populations in China,21,23,24 our findings underscore substantial variability in both informational quality and engagement patterns. The observation that TikTok videos generated significantly higher levels of views, likes, comments, and shares compared with Bilibili aligns with recent platform-comparative analyses showing that TikTok’s recommendation architecture prioritizes emotionally salient and visually dynamic content, leading to broader reach but not necessarily higher educational quality.20,22,23 By contrast, Bilibili’s historically community-centered structure and longer average watch durations have been associated with more didactic or instructional scientific communication.21,25

A specific socio-medical context in China further underscores the significance of these findings. Although the absolute number of individuals living with T1DM is substantial due to the country’s large population base, the incidence rate remains relatively low compared with many Western countries and with type 2 diabetes. Population-based registry data from China have reported an overall T1DM incidence of approximately 1.0 per 100,000 person-years across all age groups, 26 with regional pediatric estimates ranging from 3.1 to 5.5 per 100,000 person-years in urban centers such as Beijing. 27 This epidemiologic pattern has been associated with constrained availability of specialized resources for T1DM education and care in many regions. National surveys and reviews of pediatric diabetes services in China have documented a shortage of full-time diabetes educators and limited access to multidisciplinary diabetes care teams, particularly outside major urban centers. 28 As a consequence, individuals with T1DM and their families may increasingly rely on free and easily accessible digital health information, including short-video platforms, to supplement professional medical guidance. Within this context, the accuracy, balance, and reliability of online health content assume heightened clinical and educational importance, as misinformation may directly influence daily self-management decisions in a population already facing limited access to specialized T1DM care.

In our study, quality assessment revealed that VIQI total scores were consistently higher on TikTok, suggesting that technical audiovisual characteristics and narrative structure—key drivers of user retention in algorithmic feeds—were better optimized on TikTok than on Bilibili.11,22,23 However, GQS, JAMA, and mDISCERN scores remained modest across both platforms, echoing long-standing concerns that short-video–based medical information tends to emphasize visually appealing presentation rather than comprehensive, transparent, or evidence-based health education.13,14,29 The particularly low JAMA scores observed in our sample reflect limited disclosure of authorship, references, and currency—issues repeatedly documented in Chinese short-video health research.9,20,21

The content-theme patterns further highlight the uneven landscape of online T1DM education. Videos focusing on lifestyle guidance and psychological support were more common on Bilibili, whereas TikTok featured more content related to disease basics and public guidance. Prior studies similarly reported that TikTok’s algorithm tends to amplify simplified health narratives and “myth-debunking” formats that align with rapid consumption behaviors.22,23 Yet psychological and self-management–oriented content, which is critically important for chronic diseases such as T1DM, often receives lower visibility in high-velocity short-video environments.30–32 These trends reinforce broader concerns within digital diabetes education that algorithmic selection may inadvertently deprioritize content addressing emotional well-being, coping strategies, or long-term disease management.33–35 For T1DM specifically, the algorithmic prioritization of brevity and entertainment creates a fundamental conflict with the disease’s management complexity. Therapeutic decisions, such as insulin dose adjustment and ketoacidosis prevention, rely on nuanced physiological context that cannot be safely condensed into fragmented, 15-second soundbites without losing critical accuracy.16,17 Consequently, reliance on such oversimplified narratives poses a tangible clinical risk of mismanagement. Furthermore, the prevalence of idealized, filter-enhanced depictions of life with diabetes may induce unrealistic expectations, exacerbating diabetes distress and burnout among individuals struggling with the unglamorous daily realities of the condition.33–35

Correlation analyses demonstrated that VIQI total score displayed the strongest positive associations with multiple engagement metrics, particularly views and likes, consistent with evidence that structural clarity, visual appeal, and audiovisual quality are primary determinants of user engagement in short-form video settings.11,36 By contrast, both GQS and mDISCERN exhibited weak or inverse correlations with engagement, a pattern echoed in previous studies showing that high-quality or evidence-based content often fails to achieve high engagement on short-video platforms.8,13,37–39 The absence of substantial correlations for JAMA criteria parallels similar findings in other chronic disease domains, where disclosure-related indicators are not major determinants of audience response.9,14

Regression models provided additional nuance. Higher VIQI total scores remained a significant positive predictor of likes, whereas GQS and mDISCERN tended to show negative associations. These results highlight an inverse association between quality and engagement in digital health communication. High GQS and mDISCERN scores typically require comprehensive, evidence-based medical explanations. These cognitively demanding elements may increase the viewer’s cognitive load, potentially reducing “watchability” in the fast-paced short-video environment. These results mirror prior research showing that depth, completeness, and didactic scientific accuracy—while essential for health literacy—may reduce audience retention in fast-paced video environments.13,23,29 In contrast, high VIQI scores, reflecting superior audiovisual quality, align more closely with algorithmic preferences for rapid gratification and retention. JAMA scores were not independently associated with engagement, consistent with the notion that transparency and attribution indicators have limited visibility to users and minimal effect on interaction behaviors.8,10 The positive association between followers and engagement aligns with social amplification literature, which highlights the strong role of network size and parasocial dynamics in shaping video performance.25,40 Content themes, however, showed inconsistent associations after adjustment, suggesting that format and quality may outweigh topical category in influencing TikTok and Bilibili user behavior.22,41

Sensitivity analyses strengthened the robustness of these interpretations. The high variance inflation factors observed among certain quality indicators are consistent with prior methodological reports noting conceptual overlap between GQS, VIQI, and mDISCERN.11,42 PCA findings further corroborated this, with PC1 representing a general video quality factor characterized by strong loadings from GQS, VIQI, and mDISCERN, while JAMA formed an orthogonal axis reflecting formal disclosure practices.14,43 Regression models substituting PC1 for individual metrics produced similar patterns, reinforcing the centrality of audiovisual and structural quality in predicting engagement. Alternative outcome specifications, including log-transformed likes and likes per 1,000 followers, also supported the consistency of these findings, aligning with methodological recommendations for digital health engagement modeling. 40 The negligible proportion of zero-like videos matches expectations for algorithmically distributed health content, further confirming model fit appropriateness.9,23

The findings of this study have direct implications for clinical practice and health policy. First, for clinicians and diabetes educators, the variability in content quality suggests that simply advising individuals to “search online” is insufficient. Instead, practitioners should actively curate and “prescribe” specific, high-quality accounts (digital prescriptions) to counteract algorithmic bias toward entertainment. Second, for policymakers and platforms, the observed disconnect between high engagement and low educational quality highlights the need for refined recommendation algorithms that upweight content from verified medical professionals. Finally, regarding digital health communication strategies, content creators should aim to balance rigorous medical evidence with high audiovisual production value to improve the dissemination of accurate health information.

Despite these contributions, several limitations should be acknowledged. The reliance on platform-generated top-ranked videos may introduce bias related to algorithmic curation, as documented extensively in Chinese short-video platform research. Measurements of uploader professionalism rely on publicly available verification systems, which may incompletely capture real-world clinical expertise. 44 Although four validated instruments were used, each metric has known limitations, and their application to short videos, rather than long-form educational materials, has been debated. Moreover, the sample included only Chinese-language content and may not generalize to international video ecosystems. Critically, this study was limited to a content analysis of video metrics; we did not measure actual individual learning outcomes, comprehension levels, or subsequent behavioral changes in self-management. Finally, engagement metrics were based on observable indicators and may not reflect deeper cognitive or behavioral learning outcomes, a limitation widely recognized in digital health communication research.

5. Conclusion

This study demonstrates that short-video platforms disseminating T1DM-related content exhibit substantial variability in quality, reliability, and audience engagement. TikTok videos achieved greater reach and higher technical quality but did not necessarily provide more comprehensive or transparent educational content than Bilibili videos. Audiovisual and structural presentation, captured primarily by VIQI, emerged as the strongest and most consistent predictor of engagement, whereas more rigorous educational quality indicators, including GQS and mDISCERN, did not translate into higher user interaction. These findings suggest a critical tension in digital diabetes communication within these specific algorithmic environments: the types of content that attract user engagement are not always those that best support evidence-based individual education. Addressing this gap may benefit from collaboration among clinicians, digital media creators, and platform designers to promote content that is simultaneously accurate, engaging, and accessible. Future research may benefit from examining longitudinal engagement trajectories, evaluating the impact of creator training interventions, and developing quality assessment tools specifically tailored to the affordances of short-video platforms such as Bilibili and TikTok.

Supplemental material

Supplemental material - Quality and reliability of type 1 diabetes mellitus–related short videos on Bilibili and TikTok: A cross-sectional assessment study

Supplemental material for Quality and reliability of type 1 diabetes mellitus–related short videos on Bilibili and TikTok: A cross-sectional assessment study by Yunwen Tao, Ruoyu Zhang, Ye Zhao, Sicheng Li, Heming Guo, Yun Huang and Chen Fang in Digital Health.

Footnotes

Acknowledgements

The authors used ChatGPT to improve the readability and language of the manuscript. After using this tool, the authors reviewed and edited the content as needed and take full responsibility for the content of the publication.

Ethical considerations

This study was conducted using publicly available data from the social media platforms Bilibili and TikTok. All data were anonymized at the point of collection, and no direct interaction with any platform users occurred. As the research did not involve human participants, private data, or personally identifiable information, institutional review board approval was not required, in accordance with local legislation and institutional guidelines. The study was performed in compliance with the platforms’ terms of service.

Author contributions

YT was responsible for the overall research design, primary data analysis, and manuscript drafting. RZ and YZ contributed to data collection and figure preparation. RZ, SL, and HG were responsible for table preparation and manuscript layout. YH revised the manuscript. CF provided general research guidance and financial support. All authors read and approved the final version of the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Scientific Research Project of Suzhou Sports Bureau (TY2024-402), the Youth Project of “Science and Education Strategies for Health” of Suzhou Municipal Health Commission (QNXM2025055), and the Youth Science and Technology Talent Lifting Project of Suzhou Association for Science and Technology (to Ruoyu Zhang).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The raw dataset generated and analyzed during the current study is not publicly available due to its large size and the dynamic nature of social media data, but it is available from the corresponding author on reasonable request.

Guarantor

Chen Fang, Ph.D. takes full responsibility for the work as a whole, including the study design, access to data, and the decision to submit and publish. E-mail:

Supplemental material

Supplemental material for this article is available online.
