Abstract

Background

Digital therapeutics (DTx) allow real-world interventions to be deployed on a broad scale at a lower cost. However, using digital modalities for both intervention delivery and evaluation can become a concern if the two unintentionally influence each other or behavioral adherence. A formative test of a DTx to enhance affective response during physical activity (PA) examined intercorrelations between engagement with digital intervention strategies and compliance with digital assessment procedures. We also examined whether digital engagement and compliance were associated with behavioral adherence.

Methods

Adults (N = 37; mean age = 46.5 years, range: 25–75 years) participated in a 14-day DTx. Fitbit smartwatches assessed intervention mediators (e.g., affective response) and outcomes (e.g., PA) through ecological momentary assessment (EMA) and accelerometers, respectively. Digital compliance (e.g., EMA response rates, smartwatch wear time), digital engagement (e.g., completing weekly PA scheduling sessions, completing morning PA planning/goal sessions), and behavioral adherence (e.g., performing planned PA sessions, achieving PA goals) were assessed. Partial correlations controlling for age and body mass index examined person-level associations among compliance, engagement, and adherence variables.

Results

One component of digital engagement showed a moderate association with the random EMA component of digital compliance (r = .37). Some engagement components showed medium to strong associations with behavioral adherence (rs = .46–.84). Digital compliance was unrelated to behavioral adherence.

Conclusion

Digital engagement was largely unrelated to digital compliance. Digital engagement (but not digital compliance) was associated with behavioral adherence. Using similar digital modalities for intervention delivery and evaluation does not appear to pose serious methodological concerns for DTx to promote PA.

The study was registered on ClinicalTrials.gov under the title eMOTION Formative Study (TRN: NCT06125964) on 26 October 2023.

Introduction

As mobile devices continue to evolve rapidly, scientists are increasingly incorporating digital technologies to improve public health and health care. The use of technology in these fields has improved access and reduced time and cost. 1 One example is the shift toward using digital technologies to deliver health behavior interventions to large groups of people, at a lower cost, and in the natural environment. 2 These technological innovations, known as digital therapeutics (DTx), use mobile and wearable sensor technologies to deliver behavior change strategies across a range of areas, including mental health, drug use, and physical activity (PA).2,3

Although DTx have many potential advantages, a number of challenges, including lack of human support, technical complications, and competition with events in participants’ daily lives, could attenuate intervention efficacy and implementation.2,4 In particular, DTx may require more familiarity, comfort, and patience with technology than participants possess. Additionally, DTx often require participants to respond to frequent notifications from a smartphone or smartwatch, which can lead to annoyance, boredom, frustration, and feelings of burden.2,4

A unique characteristic of DTx interventions is that both the delivery of intervention components and the evaluation of their effects on intended outcomes occur through digital interactions. 5 For example, a DTx may send push notifications through smartphone messaging that remind participants to periodically break up sedentary behavior. The extent to which a participant responds to these notifications through digital interactions on a mobile device (e.g., tapping the screen, completing digital tasks) is referred to as digital intervention engagement.6,7 The number of digital interactions, together with their depth (i.e., cognitive and emotional processing of the interaction), can represent the intervention “dose,” giving insight into the fidelity of the intervention.6,8 At the same time, a study may evaluate the effects of breaking up sedentary behavior on specific outcomes, such as perceived stress, through self-reported ecological momentary assessment (EMA) on mobile devices, prompted by a notification system similar to the one described above. Responding to EMA to evaluate study outcomes may be part of the protocol, in which case participants are instructed to complete the EMA prompts. The rate at which a participant responds to intervention assessment procedures, such as answering EMA prompts or wearing sensor devices that measure the effects of the intervention on targeted outcomes, is referred to as compliance.6,8

In DTx, engagement with intervention strategies and compliance with intervention assessments often both occur through digital notifications and interactions, whereas in nondigital interventions the delivery channel (e.g., face-to-face) typically differs from the evaluation modality (e.g., paper questionnaires). 9 Using digital modalities for both intervention delivery and evaluation can become a concern if compliance and engagement unintentionally influence each other. For example, poor digital engagement with the intervention strategies (due to any of a range of technological or user burden-related issues) could lead to lower compliance with the digital assessment procedures. Additionally, researchers may provide incentives (e.g., monetary compensation) for compliance based on meeting minimum thresholds for the digital assessment procedures (e.g., frequency of responding to EMA prompts) or device wear time. This becomes a significant threat to the validity of the intervention when participants cannot distinguish digital notifications to comply with assessment procedures (compliance) from digital notifications to engage with intervention strategies (engagement). If participants mistakenly believe that they are being compensated for the latter, they may provide more meaningless responses (e.g., randomly clicking through tasks to get paid), compromising the validity of the intervention and its underlying theory. 10 Moreover, when engagement with intervention delivery strategies is too highly correlated with compliance with intervention assessments, there may be more missing evaluation data among participants with lower engagement. This can produce differences in the quantity and generalizability of available data between intervention and control groups and make it difficult to determine whether there are dose–response effects on hypothesized outcomes. 11

Digital engagement with intervention delivery and digital compliance with intervention assessments may also influence adherence to the nondigital strategies of a DTx (i.e., behavioral adherence). In addition to engaging with digital intervention strategies (e.g., goal-setting, planning, and self-monitoring through digital interactions on a mobile device), a DTx may promote the execution of nondigital behavioral tasks, such as performing planned behaviors and achieving behavioral goals. 12 These elements of behavioral adherence differ from downstream intervention outcomes, which may involve clinically relevant behavioral and health metrics such as daily minutes of moderate-to-vigorous physical activity (MVPA), body mass index (BMI), or cardiometabolic indicators (e.g., cholesterol, insulin, blood sugar).13–15 Thus, behavioral adherence to nondigital intervention strategies offers an intermediate indicator of whether a DTx is working to improve health behaviors.

The current study involved a 14-day formative test of a DTx to enhance affective response (i.e., how one feels) during PA among physically inactive adults with overweight or obesity. In the USA, the majority of adults (i.e., ≥18 years) do not meet the national PA recommendations, which increases their risk for various chronic conditions. 16 Affective response to PA is associated with future activity engagement, because individuals are more likely to repeat activities they find enjoyable. 17 However, a positive affective response may not always translate into following through with PA plans or sustained engagement.18,19 In recent years, the use of digital technologies in PA interventions has grown rapidly, as they provide a platform for long-term, repeated support for these behaviors in real-world environments.13–15 Many DTx interventions for PA have improved step counts, activity levels, and other health-related metrics (e.g., heart rate, sleep duration, sleep onset).13–15

The current study examined levels of engagement with digital intervention strategies, compliance with digital assessment procedures, and behavioral adherence to nondigital intervention strategies to assess the functionality of the intervention protocol and identify potential confounds. We sought to determine whether engagement with digital intervention strategies was associated with compliance with digital assessment procedures. We also examined whether digital engagement and compliance were associated with behavioral adherence to the nondigital strategies of the intervention.

Method

Participants and procedures

Adults were recruited through ResearchMatch, a national health volunteer registry created by academic institutions and supported by the NIH as part of the Clinical and Translational Science Award (CTSA) program. Interested adults completed an online screening survey via REDCap to assess eligibility and medical history using the 2020 Physical Activity Readiness Questionnaire (PAR-Q).20,21 Inclusion criteria were as follows: (1) ≥18 years of age, (2) residing in the USA, (3) BMI ≥25 kg/m², (4) currently engaging in ≤60 min of structured PA per week, (5) owning a personal smartphone, (6) having Internet or Wi-Fi connectivity, and (7) being able to speak and read English. Exclusion criteria were (1) cognitive disabilities that prevent the ability to provide informed consent, (2) physical disabilities or medical issues that limit PA, (3) inability to wear a smartwatch, or (4) current pregnancy. All study procedures were approved by the University of Southern California Institutional Review Board (ID # UP-22-00332), and data were collected remotely between September 2023 and March 2024. The study was registered on ClinicalTrials.gov under the title eMOTION Formative Study (TRN: NCT06125964) on 26 October 2023.

Eligible participants were contacted to schedule a videoconference consent session, where research assistants reviewed the informed consent form with participants and answered their questions. After the informed consent form was signed, participants completed an online questionnaire assessing a range of psychological constructs and demographics. Participants then completed a 1- to 3-day trial period, which included two brief SMS messages daily. Participants who completed both messages within a single day “passed” the trial period and were mailed a Fitbit Versa 3 smartwatch and a link to schedule a 60-min online orientation session.

Prior to the orientation session, participants (N = 37) were randomized by LH to one of four study conditions: (1) intensity-based daily goals (n = 9); (2) affect-based daily goals with an enhancement that recommended PA types and contexts to make PA more enjoyable (n = 10); (3) affect-based daily goals with an enhancement involving savoring positive experiences during PA (n = 9); or (4) affect-based daily goals with both enhancements (n = 9). Because PA intensity is highly correlated with affective experiences during PA, an intensity-based goal condition was used as the control.22,23 Randomization was stratified to ensure approximately equal representation of age (<45 vs. ≥45 years), sex (male vs. female), and BMI (overweight vs. obese) across groups. Participants and research staff (MH and RL) were given condition codes so that they remained blinded to study condition. Orientation sessions included setting up the smartwatch, providing a PA guidebook and prescription (developed by the research team using information from the CDC), and an overview of intervention procedures. The intervention began on the first Sunday after the orientation session with a 7-day run-in period, followed by 14 days of intervention SMS messaging via REDCap. At the same time, participants wore a Fitbit Versa 3 smartwatch, which administered EMA and measured heart rate. Many health behavior EMA studies last 7 to 14 days. The 14-day intervention duration allowed us to test and refine the behavior change techniques and measures used in the study protocol and to assess feasibility, while obtaining enough data to reliably assess within-person patterns.24,25 After the intervention, participants returned the smartwatch via prepaid envelope, completed a second online questionnaire, and received a link to schedule a virtual exit interview.
Participants received up to $75 for their participation based on compliance with digital assessment procedures (i.e., smartwatch wear time and EMA response rate). They were not compensated for engagement with digital intervention strategies or behavioral adherence to nondigital intervention strategies.
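The stratified randomization described above can be illustrated with permuted blocks within strata. The paper does not describe its block procedure, so this is a minimal sketch under stated assumptions: strata are the cross of age (<45 vs. ≥45 years), sex, and BMI (overweight vs. obese, split at 30 kg/m²); each stratum draws shuffled blocks of the four conditions; and the condition labels and record fields (`id`, `age`, `sex`, `bmi`) are hypothetical.

```python
import random
from collections import defaultdict

# Hypothetical short labels for the four study conditions.
CONDITIONS = ["intensity", "affect+type/context", "affect+savoring", "affect+both"]


def stratum(person):
    """Stratum key: age group (>=45), sex, and obesity (BMI >= 30 kg/m^2)."""
    return (person["age"] >= 45, person["sex"], person["bmi"] >= 30)


def stratified_block_randomize(people, conditions=CONDITIONS, seed=None):
    """Assign each person a condition using permuted blocks within each stratum.

    Within a stratum, every consecutive run of len(conditions) participants
    receives each condition exactly once, so condition counts inside a
    stratum never differ by more than one.
    """
    rng = random.Random(seed)
    open_blocks = defaultdict(list)  # stratum -> conditions left in its current block
    assignment = {}
    for person in people:
        key = stratum(person)
        if not open_blocks[key]:
            block = list(conditions)
            rng.shuffle(block)
            open_blocks[key] = block
        assignment[person["id"]] = open_blocks[key].pop()
    return assignment
```

Because partially filled blocks in different strata do not cancel out exactly, overall group sizes need not be identical, which is compatible with the slightly uneven group sizes reported above.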

Behavior change techniques

PA scheduling

Weekly scheduling notifications were sent via REDCap SMS on Sundays at 5:00 pm, with up to three hourly reminders. Completed weekly scheduling sessions were recorded in REDCap as completed (1) or not completed (0). Participants were asked to select the day(s) of the upcoming week on which they intended to perform PA and were introduced to the focus of their PA sessions (i.e., heart rate or enjoyment). Additionally, during the evening self-monitoring session on days with scheduled PA (based on the weekly scheduling session), participants could schedule additional PA for the following day by answering “Yes” or “Not Sure” to “Do you plan on doing a PA session that lasts at least 15 min tomorrow?” The number of scheduled days could therefore vary across participants. Each scheduled PA session was coded as 1, and these were summed to calculate the total number of scheduled PA sessions. Participants who did not complete the weekly scheduling sessions were not considered dropouts.
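The scheduling count can be sketched as a set of calendar days. This is an illustration built on assumptions the paper does not state: each day holds at most one scheduled session, an evening “Yes” or “Not Sure” schedules the following day, and an evening addition for a day that was already scheduled does not add a second session. The function name, argument names, and example dates are hypothetical, not REDCap fields.

```python
from datetime import date, timedelta


def scheduled_pa_days(weekly_selected, evening_answers):
    """Return the set of days with scheduled PA.

    weekly_selected: iterable of dates chosen in the Sunday scheduling session.
    evening_answers: dict mapping an evening's date to the answer given to
        "Do you plan on doing a PA session that lasts at least 15 min tomorrow?"
    """
    days = set(weekly_selected)
    for evening, answer in evening_answers.items():
        if answer in ("Yes", "Not Sure"):
            days.add(evening + timedelta(days=1))  # schedules the next day
    return days


week = {date(2023, 10, 2), date(2023, 10, 4)}
evenings = {date(2023, 10, 2): "Yes", date(2023, 10, 4): "No"}
total_scheduled = len(scheduled_pa_days(week, evenings))  # 3: Oct 2, 3, and 4
```

Using a set makes the “count each scheduled day once” assumption explicit; a protocol that allowed several sessions per day would need a list of sessions instead.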

PA goal and self-monitoring sessions

REDCap SMS notifications to engage in goal/planning and self-monitoring sessions, based on the mHealth intervention MyDayPlan, 26 were sent in the morning and evening, respectively, of days on which participants scheduled PA. Notifications for morning goal sessions were sent at 6:00 am with up to four hourly reminders. Completed morning goal sessions were recorded in REDCap as completed (1) or not completed (0) and summed to calculate the total number of planned PA sessions. The morning session provided a daily PA goal, followed by action plan and coping plan modules. The daily goal for the intensity-based condition was worded as follows: “Today, your goal is to maintain a heart rate of XX bpm during your physical activity session,” where the heart rate (HR) target was 55% (Week 1) or 60% (Week 2) of age-predicted maximal HR (HRmax), calculated using the Gellish formula. 27 The affect-based condition received daily goals worded as follows: “Today, your goal is to try [a TYPE of physical activity, physical activity in a PLACE, physical activity with SOMEONE, physical activity while LISTENING TO SOMETHING] that makes the experience more enjoyable while you are doing it.” During the action plan module, participants planned a PA session for that day, including domain, type, time, location, social setting, and audio, using drop-down or free-response answer choices. The coping plan module that followed allowed participants to identify potential barriers and solutions from drop-down or free-response answer choices.
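As a worked example of the intensity-based goal, the Gellish formula predicts HRmax as 207 − 0.7 × age, and the daily target is 55% (Week 1) or 60% (Week 2) of that value. For a 50-year-old, HRmax = 207 − 35 = 172 bpm, giving targets of about 95 bpm and 103 bpm. The sketch below assumes targets were rounded to whole beats per minute, which the protocol does not state; the function names are hypothetical.

```python
def gellish_hr_max(age_years: float) -> float:
    """Age-predicted maximal heart rate (bpm) from the Gellish formula."""
    return 207.0 - 0.7 * age_years


# Fraction of HRmax used as the daily goal in each intervention week.
WEEKLY_TARGET_FRACTION = {1: 0.55, 2: 0.60}


def daily_hr_goal(age_years: float, week: int) -> int:
    """Daily HR goal in bpm, rounded to a whole number (rounding is an assumption)."""
    return round(WEEKLY_TARGET_FRACTION[week] * gellish_hr_max(age_years))


def intensity_goal_message(age_years: float, week: int) -> str:
    """Fill the XX placeholder of the intensity-based goal message."""
    bpm = daily_hr_goal(age_years, week)
    return (f"Today, your goal is to maintain a heart rate of {bpm} bpm "
            "during your physical activity session")


print(intensity_goal_message(50, 1))
```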

Notifications for the evening self-monitoring sessions were sent via REDCap SMS starting at 7:00 pm, with up to three hourly reminders, asking participants whether they had completed a PA session of at least 15 min that day. Completed evening self-monitoring sessions were recorded in REDCap as completed (1) or not completed (0) and summed; each reported PA session was also coded as 1, and these were summed to calculate the total number of PA sessions performed. If participants answered yes, they completed a self-monitoring module asking whether they had met their intensity- or affect-based PA goal. Participants in the intensity-based goal condition were asked, “Did you maintain an average heart rate greater than XX bpm during that PA session?”, with “Yes” (1), “No” (0), and “I don’t know” (1) as answer choices. Participants in the affect-based goal condition were asked, “Did you try a type/location/social setting/audio that you thought would make the experience more enjoyable?”, with “Yes” (1) and “No” (0) as answer choices. The “I don’t know” option was included in the intensity-based goal condition for participants whose activity was not tracked in the Fitbit app, because the study used a different HR formula from Fitbit's. Responses indicating that the PA goal was met were summed to calculate the number of times the PA goal was achieved.
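The scoring rules above can be written as two lookup tables; note that, as stated, “I don’t know” is scored 1 in the intensity-based condition. The percentage helper is a sketch that assumes the denominator is the number of goal responses on days PA was performed, which the paper does not specify, and the function names are hypothetical.

```python
from typing import List, Optional

# Numeric codes taken from the answer choices described in the protocol.
INTENSITY_CODES = {"Yes": 1, "No": 0, "I don't know": 1}
AFFECT_CODES = {"Yes": 1, "No": 0}


def goals_achieved(responses: List[str], condition: str) -> int:
    """Sum of coded goal-achievement responses for one participant."""
    codes = INTENSITY_CODES if condition == "intensity" else AFFECT_CODES
    return sum(codes[answer] for answer in responses)


def goal_achievement_pct(responses: List[str], condition: str) -> Optional[float]:
    """Percent of answered sessions coded as goal achieved (None if no sessions)."""
    if not responses:
        return None
    return 100.0 * goals_achieved(responses, condition) / len(responses)
```

Because an unknown outcome counts as success under this coding, the intensity-based achievement rate is an upper bound whenever “I don’t know” responses occur.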

Savoring enhancement

After completing the morning goal session, participants in the savoring group received a REDCap SMS notification to engage in a savoring exercise once their PA session was complete. The savoring exercises aimed to enhance and prolong positive experiences during PA. Some examples of savoring exercises were, “What were some positive things you experienced during your last physical activity session?” and “What about your last physical activity session are you grateful for?” If not completed immediately after their PA session, participants could complete the savoring exercise during the evening self-monitoring session. Completed savoring exercises (immediately after a PA session or during evening self-monitoring sessions) were coded as 1 and summed to calculate the total number of completed savoring exercises.

Recommended PA type and context enhancement

For participants randomized to receive type/context recommendations, an individually tailored algorithm recommended activity types and contexts likely to satisfy their psychological needs, based on their baseline ratings of the perceived importance of 13 domains of psychological needs, while accounting for personal constraints (e.g., ability and access to facilities and equipment). 28 The algorithm, which informs the behavior change techniques, selected from 49 activity types and 30 contexts using ratings from 1,558 adults on Amazon Mechanical Turk, a crowdsourcing platform. Recommendations were presented with the daily affect goal during the morning goal session to improve affective response to PA. Activity type, physical context, social context, and audio context recommendations each appeared on 25% of days with scheduled PA. Participants received the message: “Based on your profile, we recommend [backpacking/at home outdoors/in a live class/listening to your surroundings] today because it will satisfy your unique needs.” During the evening self-monitoring session, participants were asked, “Based on your profile, it was recommended that you try [backpacking/at home outdoors/in a live class/listening to your surroundings] today because it will satisfy your unique needs. Did you follow this recommendation?” with “Yes” (1) and “No” (0) as answer choices. These responses were summed to calculate the number of PA type or context recommendations followed.
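The paper states only that each recommendation category appeared on 25% of days with scheduled PA; it does not say how days were allocated. One simple way to achieve that balance, shown here as a hypothetical sketch, is to shuffle the four categories into blocks and deal them out across scheduled days, so every run of four days covers each category once. Choosing the specific activity or context within a category (the needs-based algorithm itself) is not modeled.

```python
import random
from typing import List, Optional

CATEGORIES = ["activity type", "physical context", "social context", "audio context"]


def recommendation_categories(n_scheduled_days: int, seed: Optional[int] = None) -> List[str]:
    """Return one recommendation category per scheduled PA day, in balanced shuffled blocks."""
    rng = random.Random(seed)
    plan: List[str] = []
    while len(plan) < n_scheduled_days:
        block = CATEGORIES[:]  # copy so the module-level list is never reordered
        rng.shuffle(block)
        plan.extend(block)
    return plan[:n_scheduled_days]
```

With eight scheduled days each category appears exactly twice (25%); when the number of days is not a multiple of four, category shares can differ by one day.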

Measures

Fitbit Versa

Fitbit smartwatches are common wearable devices in the research literature.29–31 A Fitbit Versa 3 smartwatch was loaned to participants to be worn day and night (except while charging) on their nondominant wrist for the study duration to measure heart rate (HR) and deliver EMA. Fitabase, a third-party platform that facilitates the use of Fitbit technology in research for measurement, tracking, and intervention, was used to collect day-, hour-, and minute-level data and to deliver smartwatch-based EMA prompts through Fitabase Engage. The Fitbit Versa 3 was chosen because of its compatibility with the Fitabase Engage EMA tool and its water resistance, which allowed the watch to be worn during water-based PA (e.g., swimming). HR was measured through photoplethysmography and used to determine smartwatch wear time. Bipolar affective EMA prompts (e.g., Feeling GOOD or BAD?; Feeling PROUD or EMBARRASSED?) were sent to participants via Fitabase at four random times per day between 9:00 am and 9:00 pm, and whenever the 10-min rolling average of their HR reached 60% of HRmax based on the Gellish formula (i.e., event-contingent). 27 A 10-min rolling average threshold of 60% HRmax was selected to limit the number of event-contingent prompts triggered by daily activities (e.g., brushing teeth, washing dishes). Participants who did not reach the event-contingent threshold were not prompted with event-contingent EMA. Random and event-contingent EMA prompts were coded as completed (1) or not completed (0), and these values were summed separately to calculate the number of completed random and event-contingent EMA prompts.
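The event-contingent rule and the HR-based wear-time measure can be sketched on minute-level HR data. This is an illustrative approximation, not Fitabase's implementation: it assumes a missing HR minute means the watch was off the wrist, restarts the 10-minute window after any gap, and fires one prompt when the rolling mean first crosses the threshold, so a sustained bout yields a single prompt. In the study, the threshold would be 0.60 × (207 − 0.7 × age); the function names are hypothetical.

```python
from collections import deque
from typing import List, Optional, Sequence


def event_prompt_minutes(hr: Sequence[Optional[float]], threshold_bpm: float,
                         window: int = 10) -> List[int]:
    """Minute indices at which the trailing `window`-minute mean HR crosses the threshold.

    None marks a minute without an HR reading; it empties the window.
    A prompt fires only on an upward crossing (assumed de-duplication rule).
    """
    recent = deque(maxlen=window)
    prompts: List[int] = []
    above = False
    for minute, value in enumerate(hr):
        if value is None:
            recent.clear()
            above = False
            continue
        recent.append(value)
        if len(recent) < window:
            continue  # not enough data for a full rolling mean yet
        now_above = sum(recent) / window >= threshold_bpm
        if now_above and not above:
            prompts.append(minute)
        above = now_above
    return prompts


def wear_time_pct(hr: Sequence[Optional[float]]) -> float:
    """Percent of minutes with an HR reading, used as a proxy for wear time."""
    worn = sum(value is not None for value in hr)
    return 100.0 * worn / len(hr)


# Threshold for a 50-year-old: 0.60 * (207 - 0.7 * 50) = 103.2 bpm.
minutes = [80] * 10 + [120] * 15 + [80] * 10 + [120] * 15
print(event_prompt_minutes(minutes, 103.2))  # [15, 40]
```

The rolling mean delays the prompt by several minutes after HR rises, which is the intended filter against brief spikes during everyday tasks.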

Demographic characteristics

Demographic characteristics, including age (<45 (0) / ≥45 (1) years), BMI (overweight (0) / obese (1)), sex (male (0)/female (1)), gender identity, race, ethnicity, and annual household income, were assessed.

Engagement, compliance, and behavioral adherence indicators

Descriptions and definitions of digital engagement with intervention strategies, digital compliance with intervention assessment procedures, and behavioral adherence to nondigital intervention strategies are shown in Table 1.

Measures and operationalization of digital engagement, digital compliance, and behavioral adherence variables.

Note. PA: physical activity; EMA: ecological momentary assessment.

Analytic plan

Analyses were conducted in SPSS Version 29.0.2.0. Descriptive statistics were calculated for digital engagement with intervention strategies, digital compliance with intervention assessment procedures, and behavioral adherence to nondigital intervention strategies. Independent-samples t-tests examined differences in all variables by age (<45/≥45 years), sex (male/female), and BMI (overweight/obese), and paired-samples t-tests examined differences by intervention week (Week 1/Week 2). Partial correlations with pairwise deletion examined person-level associations within and between engagement, compliance, and behavioral adherence measures, while controlling for significant demographic characteristics (i.e., age and BMI). Effect sizes were categorized as small (r ≤ .10), medium (r = .20–.30), and large (r ≥ .40). 32 Significance for all analyses was set at p < .05.
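The analyses were run in SPSS; the following is only a NumPy re-expression of the same idea, not the authors' code. A partial correlation between x and y controlling for age and BMI equals the Pearson correlation between the residuals of x and of y after each is regressed (with an intercept) on the covariates. Pairwise deletion is mimicked by dropping, separately for each pair of variables, any participant missing x, y, or a covariate; with k covariates the test has n − 2 − k degrees of freedom.

```python
import numpy as np


def partial_corr(x, y, covariates):
    """Partial Pearson r between x and y, controlling for covariates.

    x, y: 1-D arrays with np.nan marking missing values.
    covariates: array of shape (n,) or (n, k), e.g. columns for age and BMI.
    Returns (r, n_used, df), where df = n_used - 2 - k.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    z = np.asarray(covariates, dtype=float)
    if z.ndim == 1:
        z = z[:, np.newaxis]
    # Pairwise deletion: keep rows complete for this pair and the covariates only.
    keep = ~(np.isnan(x) | np.isnan(y) | np.isnan(z).any(axis=1))
    x, y, z = x[keep], y[keep], z[keep]
    design = np.column_stack([np.ones(len(x)), z])  # intercept + covariates
    # Residualize x and y on the covariates with ordinary least squares.
    resid_x = x - design @ np.linalg.lstsq(design, x, rcond=None)[0]
    resid_y = y - design @ np.linalg.lstsq(design, y, rcond=None)[0]
    r = float(np.corrcoef(resid_x, resid_y)[0, 1])
    n_used = int(keep.sum())
    return r, n_used, n_used - 2 - z.shape[1]
```

A two-sided p value follows from t = r·√(df / (1 − r²)) with df degrees of freedom, for example as `2 * scipy.stats.t.sf(abs(t), df)`. Because each pair keeps its own complete cases, different cells of a correlation table can rest on different sample sizes.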

Results

Descriptives

Demographic characteristics

The sample included 37 individuals (51.4% female; 13.5% Hispanic/Latino) between the ages of 25 and 75 years with an average age of 46.5 years (SD: 13.4) and BMI of 33.4 kg/m2 (SD: 5.4). Approximately a quarter of the sample had an annual household income less than $55,000 and a quarter more than $150,000.

Digital engagement with intervention strategies

Participants completed 89.2% (range: 0.0–100.0%) of the weekly scheduling sessions, and 81.1% of participants completed both (i.e., Weeks 1 and 2) scheduling sessions. During the weekly scheduling sessions, participants scheduled PA on an average of 3.4 (SD: 1.7) days per week. Participants also scheduled PA on an average of 0.78 (SD: 0.89) additional days per week using the opportunity for additional scheduling in the evening self-monitoring sessions. Participants completed an average of 82.9% (range: 33.0–100.0%) and 83.8% (range: 0.0–100.0%) of prompted morning goal and evening self-monitoring sessions, respectively. Among participants randomized to receive the savoring enhancement, each person completed on average 84.0% (range: 11.0–100.0%) of the prompted savoring exercises. In total, 1.9% of savoring exercises were completed before PA, indicating that they were not completed as instructed. About 45.3% of savoring exercises were completed at midday, and the rest were completed during the evening self-monitoring session. Older participants (≥45 years) completed a higher percentage of prompted savoring exercises than those younger than 45 years (t(9.6) = −2.5, p = .03). No differences in any engagement metric were found by week, sex, or BMI.

Digital compliance with intervention assessment procedures

Participants wore the Fitbit Versa 3 smartwatch an average of 93.0% (range: 51.2–99.6%) of the time, or 22.3 (SD: 2.6) hours per day, throughout the study period. On average, participants responded to 63.8% (range: 0.0–98.3%) of random and 47.8% (range: 0.0–100.0%) of event-contingent EMA prompts. No differences were found in compliance metrics by week, age, sex, or BMI.

Behavioral adherence to non-digital intervention strategies

Participants performed, on average, 64.2% (range: 0.0–100.0%) of scheduled PA sessions. On average, participants performed a PA session on 63.1% (range: 0.0–100.0%) of the days on which PA was planned in the morning goal session. No differences were found in behavioral adherence metrics by week, age, sex, or BMI. Participants randomized to receive the tailored PA type and context recommendations followed these recommendations an average of 43.0% (range: 0.0–92.0%) of the time, with context recommendations (mean = 61.6%; range: 0.0–100.0%) followed more often than type recommendations (mean = 22.2%; range: 0.0–100.0%). Based on self-report data, when PA was planned and performed, participants who received intensity-based goals achieved their daily HR goal an average of 84.3% (range: 25.0–100.0%) of the time, and reported being unsure whether they had achieved it 11 out of 50 times. Participants who received affect-based goals achieved their daily goal an average of 68.7% (range: 22.0–100.0%) of the time. Participants with obesity (vs. overweight) achieved their intensity-based PA goals a greater percentage of the time (t(7) = −3.8, p = .01). No differences were found by week, age, or sex.

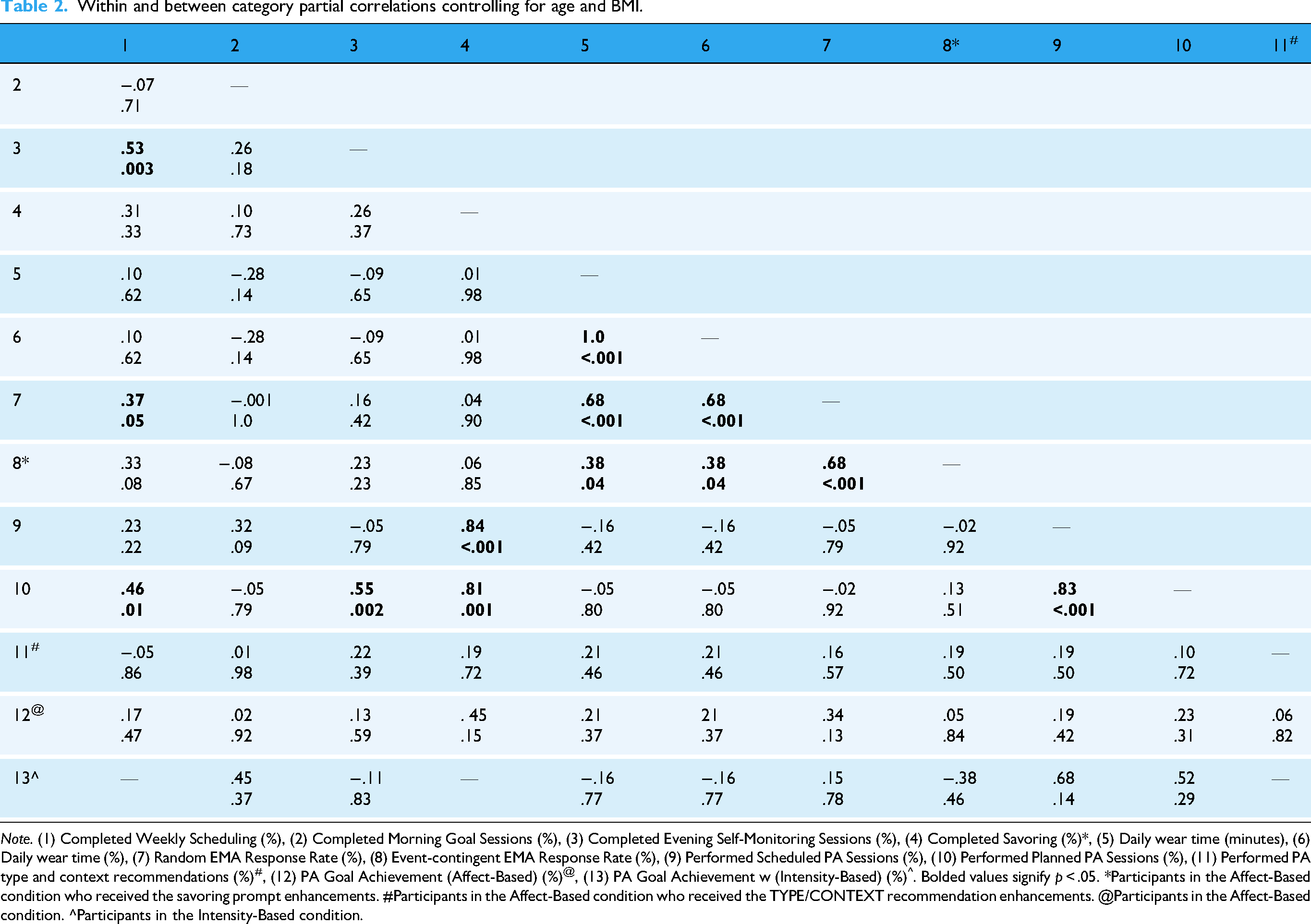

Associations within and between digital engagement and digital compliance

Person-level partial correlations examined associations within and between engagement and compliance (see Table 2). Moderate to strong, significant positive correlations were found among all measures within the compliance category (rs = .38–1.00). Among engagement measures, the percentage of completed weekly scheduling sessions was positively associated with the percentage of completed evening self-monitoring sessions. No other significant correlations were found within the engagement category.

Within and between category partial correlations controlling for age and BMI.

Note. (1) Completed Weekly Scheduling (%), (2) Completed Morning Goal Sessions (%), (3) Completed Evening Self-Monitoring Sessions (%), (4) Completed Savoring (%)*, (5) Daily wear time (minutes), (6) Daily wear time (%), (7) Random EMA Response Rate (%), (8) Event-contingent EMA Response Rate (%), (9) Performed Scheduled PA Sessions (%), (10) Performed Planned PA Sessions (%), (11) Performed PA type and context recommendations (%)#, (12) PA Goal Achievement (Affect-Based) (%)@, (13) PA Goal Achievement (Intensity-Based) (%)^. Bolded values signify p < .05. *Participants in the Affect-Based condition who received the savoring prompt enhancements. #Participants in the Affect-Based condition who received the TYPE/CONTEXT recommendation enhancements. @Participants in the Affect-Based condition. ^Participants in the Intensity-Based condition.

Between engagement and compliance categories, the rate of completing weekly PA scheduling sessions showed a moderate association with the EMA response rate for random prompts (r = .37). No other significant correlations were found between engagement and compliance categories.

Associations of digital engagement and digital compliance with behavioral adherence

As shown in Table 2, among behavioral adherence measures, the percentage of scheduled PA sessions performed showed a strong positive association with the percentage of planned PA sessions performed (r = .83). No other significant correlations were found within the behavioral adherence category.

Between categories, some digital engagement components (i.e., completed weekly PA scheduling and completed evening self-monitoring) showed moderate to strong associations with one aspect of behavioral adherence (i.e., performing planned PA sessions) (rs = .46–.84). Completing savoring exercises was also positively associated with performing scheduled PA sessions (r = .83). Digital compliance was unrelated to the intervention's behavioral adherence variables.

Discussion

Rates of digital engagement and compliance provide insight into the dose–response and scalability of a DTx.6,8,33 Knowing these rates can help identify parts of an intervention protocol that may need to be changed or refined to improve its effectiveness. Overall, in a formative test of a 14-day DTx intervention to increase PA, we found reasonable levels (i.e., greater than 65.0–70.0%) of digital engagement and digital compliance. Digital engagement with intervention delivery strategies was largely unrelated to digital compliance with intervention assessments, whereas digital engagement (but not digital compliance) was associated with behavioral adherence. These results suggest a well-formulated protocol for a DTx to promote PA that uses multiple digital modalities.

Although generally acceptable, some areas of engagement, compliance, and behavioral adherence could improve in future study iterations. For example, random and event-contingent EMA response rates were below the 70–75% level typically viewed as desirable. 34 In qualitative exit interviews, participants reported that event-contingent EMA prompts came too frequently and were interruptive. To improve EMA response rates in future study iterations, the random EMA prompts were removed and the HR formula for event-contingent prompts was changed to the Karvonen training zone formula to reduce the number of EMA prompts.35,36 Additionally, behavioral adherence to the tailored PA type and context recommendations was somewhat low. Participants noted the difficulty of organizing a PA session that incorporated the recommendations on such short notice (in the morning). Therefore, future study iterations will introduce the recommendations during the weekly scheduling session and remind participants of them during the morning goal sessions. This adjustment will increase exposure to the recommendations and the lead time between their introduction and PA planning, which is expected to improve behavioral adherence.37,38
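A short calculation shows why the Karvonen (heart-rate-reserve) formula raises the event-contingent threshold and should therefore reduce prompting. At the same intensity fraction f, the Karvonen target HRrest + f × (HRmax − HRrest) exceeds the percent-of-HRmax target f × HRmax by exactly (1 − f) × HRrest. The sketch assumes the revised protocol keeps the Gellish HRmax and a 60% intensity, and uses a resting HR of 70 bpm purely for illustration; none of these values is specified for the revised protocol.

```python
def gellish_hr_max(age_years: float) -> float:
    """Age-predicted maximal heart rate (bpm): 207 - 0.7 * age."""
    return 207.0 - 0.7 * age_years


def pct_hr_max_threshold(age_years: float, fraction: float) -> float:
    """Threshold used in this study: a fraction of age-predicted HRmax."""
    return fraction * gellish_hr_max(age_years)


def karvonen_threshold(age_years: float, resting_hr: float, fraction: float) -> float:
    """Karvonen target: resting HR plus a fraction of heart-rate reserve."""
    hr_max = gellish_hr_max(age_years)
    return resting_hr + fraction * (hr_max - resting_hr)


# Illustrative participant: 50 years old, assumed resting HR of 70 bpm, 60% intensity.
old = pct_hr_max_threshold(50, 0.60)    # about 103.2 bpm
new = karvonen_threshold(50, 70, 0.60)  # about 131.2 bpm
print(f"%HRmax: {old:.1f} bpm, Karvonen: {new:.1f} bpm, gap: {new - old:.1f} bpm")
```

For this example the threshold rises by 0.4 × 70 = 28 bpm, so everyday activity that used to cross 103 bpm would no longer trigger a prompt.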

Furthermore, results indicated that digital engagement with intervention strategies did not necessarily influence compliance with intervention assessment procedures. In other words, although similar modalities were used, individuals who were less engaged with the digital intervention strategies (for various reasons, including interest in or willingness to perform PA) were not necessarily less compliant with digital assessment procedures. Within the compliance category, findings suggest that individuals who are less compliant with one form of assessment are also less likely to comply with other forms, leading to correlated patterns of missingness among digital compliance indicators. 39 In the current study, it is not surprising that participants with lower smartwatch wear time also responded to fewer EMA prompts on that device, given that it is difficult to respond to prompts on a device that is not being worn. The lack of confounding between digital engagement and compliance may be because they occurred on separate devices (i.e., the smartphone for engagement with the intervention and the smartwatch for evaluation of the intervention). Additionally, during onboarding, special attention was given to explaining that incentives were linked to wearing the smartwatch and completing its digital prompts, not to the digital tasks requested on the smartphone or the amount of PA completed. The minimal associations between digital engagement with intervention strategies and compliance with assessment procedures suggest that methodological weaknesses and confounding factors (e.g., incentives) were limited, supporting the DTx protocol.

Behavioral adherence to non-digital intervention strategies (i.e., completing scheduled and planned PA sessions) was associated with engagement with digital intervention strategies, but not with digital compliance with intervention assessment procedures. These associations suggest that the intervention strategies are helping to promote positive health behaviors, which may be sustained after the intervention concludes. Because engagement determines how much data are available, it can readily influence evaluations of the intervention's fidelity.40,41 However, high engagement with digital intervention strategies does not by itself make a behavior change intervention effective. The moderate-to-strong associations between engagement and behavioral adherence components suggest that participants saw value in, and were motivated by, the intervention's behavior change techniques.2 Additionally, the weekly scheduling and evening self-monitoring sessions were associated with completing planned PA sessions. These associations may reflect a depth of interaction with the intervention strategies that could translate into long-term, real-world PA behavior change (i.e., thoughtful planning leading to PA engagement).42

The absence of significant associations between digital compliance and behavioral adherence is consistent with how incentives were explained to participants. Incentives were linked to wearing the smartwatch and completing its digital prompts, not to digital engagement or behavioral adherence. In our study, compliance with intervention assessment procedures was relatively passive (i.e., wearing the smartwatch) and convenient (i.e., answering EMA prompts on the wrist-worn smartwatch). Behavioral adherence to non-digital intervention strategies, by contrast, required more intentional planning and motivation (e.g., following through with PA intentions and plans).4 Compliance and behavioral adherence also face distinct barriers: clarity and burden for compliance, and time and motivation for behavioral adherence.4 Separating digital compliance from behavioral adherence suggests that the intervention protocol is sound. It also suggests that participants used the behavior change techniques in varied ways, potentially because of real-world barriers. Overall, disentangling digital engagement, digital compliance, and behavioral adherence allowed us to identify areas for improvement and reduce potential methodological issues. This is a critical step toward ensuring that intent-to-treat and dose–response effects can be adequately evaluated in DTx interventions.

Strengths and limitations

Strengths of this study include the automatic and passive collection of a range of metrics quantifying interactions with digital technologies. A second strength is stratified sampling to balance participant numbers across age, BMI, and sex groups. Together, these allowed us to examine separately the influence of digital engagement with intervention strategies, digital compliance with assessment procedures, and behavioral adherence to non-digital intervention strategies. They also allowed us to examine the overall efficiency and effectiveness of the behavior change intervention. However, this study was a formative evaluation of a DTx, intended to identify protocol and design improvements for a full-scale randomized controlled trial. Therefore, the sample size and study duration were limited. The sample was just above 30, a common rule-of-thumb minimum, based on the Central Limit Theorem, for drawing inferences about larger populations. These limits may reduce external validity and the ability to detect small effect sizes. They also preclude examining how associations change over longer periods.43
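To make the point about small effect sizes concrete, the sketch below approximates the smallest correlation detectable at a given sample size. It uses the Fisher z approximation, in which a partial correlation controlling for k covariates has standard error 1/sqrt(n − 3 − k). The two-sided alpha of .05, the 80% power target, and the function name `min_detectable_r` are assumptions for illustration. This is not a power analysis reported by the study.

```python
import math
from statistics import NormalDist


def min_detectable_r(n: int, n_covariates: int = 0,
                     alpha: float = 0.05, power: float = 0.80) -> float:
    """Smallest (partial) correlation detectable with a two-sided test,
    using the Fisher z approximation with standard error
    1 / sqrt(n - 3 - n_covariates)."""
    dof = n - 3 - n_covariates
    if dof <= 0:
        raise ValueError("sample too small for the number of covariates")
    std_normal = NormalDist()
    z_alpha = std_normal.inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = std_normal.inv_cdf(power)           # quantile for desired power
    # Invert Fisher's z = atanh(r) to return to the correlation scale.
    return math.tanh((z_alpha + z_beta) / math.sqrt(dof))


if __name__ == "__main__":
    # N = 37, partial correlations controlling for age and BMI (2 covariates).
    print(f"{min_detectable_r(37, n_covariates=2):.2f}")  # 0.46
    print(f"{min_detectable_r(37):.2f}")                  # 0.45
```

Under these assumptions, only correlations of roughly .46 or larger would be detected with 80% power at N = 37. Small-to-moderate associations could therefore have gone undetected.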

Conclusion

In this formative study, levels of digital engagement with intervention strategies, digital compliance with intervention assessment procedures, and behavioral adherence to non-digital intervention strategies were sufficient. Digital compliance was largely independent of both digital engagement and behavioral adherence, supporting the validity of the intervention. Although highly preliminary, this study highlights the importance of evaluating engagement, compliance, and adherence in this specific DTx intervention. Doing so identifies potential methodological concerns and sources of confounding (e.g., incentives) that may affect similar interventions. Understanding the interplay between engagement, compliance, and behavioral adherence is therefore critical to protecting the rigor and integrity of DTx interventions. Together, these components enhance the interpretability of results, guide optimization, and provide the evidence needed for the scalability and sustainability of DTx behavior change interventions.

Footnotes

Acknowledgments

We would like to thank our participants for their contributions.

Ethical considerations

Approval was obtained from the University of Southern California Institutional Review Board (ID # UP-22-00332). The procedures used in this study adhere to the tenets of the Declaration of Helsinki.

Consent to participate

Informed consent was obtained from all individual participants included in the study.

Consent for publication

Participants signed informed consent for the publication of their data.

Authors’ contributions

Conceptualization: Genevieve F. Dunton, Jimi Huh, and Delfien Van Dyck. Methodology: Genevieve F. Dunton, Lori A. Hatzinger, Rachel Crosley-Lyons, Micaela Hewus, and Wei-Lin Wang. Data curation: Lori A. Hatzinger, Rachel Crosley-Lyons, and Micaela Hewus. Formal analysis: Lori A. Hatzinger. Investigation: Genevieve F. Dunton, Lori A. Hatzinger, Rachel Crosley-Lyons, and Micaela Hewus. Project administration: Micaela Hewus. Software: Lori A. Hatzinger. Resources: Genevieve F. Dunton. Supervision: Genevieve F. Dunton, Jimi Huh, and Delfien Van Dyck. Writing—original draft: Lori A. Hatzinger. Writing—review and editing: Genevieve F. Dunton, Lori A. Hatzinger, Rachel Crosley-Lyons, Micaela Hewus, Wei-Lin Wang, Jimi Huh, and Delfien Van Dyck. Funding acquisition: Genevieve F. Dunton and Rachel Crosley-Lyons.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by the NIH R01CA272933 awarded to Genevieve F. Dunton. Rachel Crosley-Lyons’ effort on this paper was supported by NIH F31HL176165.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

Data and materials will be made available upon request.